Abstract

According to the simulation hypothesis, mental imagery can be explained in terms of predictive chains of simulated perceptions and actions, i.e., perceptions and actions are reactivated internally by our nervous system to be used in mental imagery and other cognitive phenomena. Our previous research shows that it is possible, but not trivial, to develop simulations in robots based on the simulation hypothesis. While there are several previous approaches to modelling mental imagery and related cognitive abilities, the origin of such internal simulations has hardly been addressed. The inception of simulation (InSim) hypothesis suggests that dreaming has a function in the development of simulations by forming associations between experienced, non-experienced but realistic, and even unrealistic perceptions. Here, we therefore develop an experimental set-up based on a simple simulated robot to test whether such dream-like mechanisms can be used to inform research into the development of simulations and mental imagery-like abilities. Specifically, the hypothesis is that ‘dreams’ informing the construction of simulations lead to faster development of good simulations during waking behaviour. The paper presents initial results in favour of the hypothesis.

1 Introduction

Mental imagery can be described as the maintenance and manipulation of perceptions and actions of a covert sort, i.e., it arises not as a consequence of environmental interaction but is created internally by our nervous system. Hesslow’s simulation hypothesis (1994, 2002, 2012) views mental imagery as simulations of perceptions and actions: the nervous system reactivates neural structures used for perception and action in an offline fashion where the reactivated perceptions and actions only give rise to mental images rather than actual interaction with the external world.

This type of offline mental imagery is also a central part of the phenomenology of dreams as has sometimes been noted by previous dream research (Domhoff, 2011). The inception of simulation (InSim) hypothesis (Thill & Svensson, 2011) postulated that one function of dreams during infancy and childhood is the development of simulations. Here, we suggest that the InSim hypothesis can be used to inspire new techniques for modelling and developing imagery-like abilities in artificial cognitive systems.

A number of robot experiments have investigated the possibility of using simulations as specified by the simulation hypothesis to guide behaviour (Stening, Jakobsson, & Ziemke, 2005; Svensson, Morse, & Ziemke, 2009b; Ziemke, Jirenhed, & Hesslow, 2005), as well as other similar theories grounding cognition in sensorimotor processes (Gross, Heinze, Seiler, & Stephan, 1999; Hoffmann, 2007; Hoffmann & Möller, 2004; Wintermute, 2012). However, the task is far from trivial, and the InSim hypothesis might be useful for constructing more robust simulations. Another aspect that remains relatively unexplored concerns the origin of simulations. Part of the explanation involves the evolutionary development of perception and action (Hesslow, 2002), for instance, neural mechanisms for learning predictions (Svensson, Morse, & Ziemke, 2009a), but the development of the simulations used in mental imagery is not fully understood (Thill & Svensson, 2011). We argue that seeking answers to the question of what initially guides the formation of the predictive associations between motor and sensory areas of the brain (resulting in mental imagery simulations) is a worthwhile endeavour that assists the development of mental imagery-like abilities in robots. It further contributes to the ongoing discussion about whether models of embodied cognition can also explain the grounding of higher-level cognitive abilities in sensorimotor mechanisms (e.g., Downing, 2007; Pezzulo et al., 2011).

The basic story and structure of the paper are as follows. The next section outlines the simulation hypothesis and summarizes the available empirical evidence that simulations play a major role in mental imagery. The following section, entitled ‘The inception of simulations’, describes evidence suggesting that dreaming plays a major developmental role from birth and that there are a number of significant similarities between dreams and mental imagery at both the phenomenological and the neural level. It further argues that simulations contribute to the similarities found between dreams and mental images in waking. The final parts of the paper present a proof-of-concept experiment illustrating how the InSim hypothesis can be applied to the development of mental imagery in robots.

2 The simulation hypothesis

Several so-called simulation or emulation theories (all addressing, to some degree, the grounding of higher-level cognition in sensorimotor structures) suggest that sensorimotor structures are reused (cf. Anderson, 2010) in different cognitive phenomena, e.g., perception (Möller, 1999), mental imagery (Jeannerod, 1994; Moulton & Kosslyn, 2009; Thomas, 1999), mental representation (Grush, 2004), concepts (Barsalou, 1999) or language (Zwaan, 2004). Although these theories share a common ground, they differ in the details of how simulations are thought to be implemented in biological or artificial neural networks and in the epistemological assumptions made. We do not discuss these differences here, but it is necessary to point out that we focus mainly on one particular simulation theory, namely Hesslow’s (1994, 2002, 2012) simulation hypothesis.

2.1 Core concepts

Hesslow (2002) postulated three different components that are the core of the simulation hypothesis.

(1) Simulation of actions: we can activate motor structures of the brain in a way that resembles activity during a normal action but does not cause any overt movement.
(2) Simulation of perception: imagining perceiving something is essentially the same as actually perceiving it, only the perceptual activity is generated by the brain itself rather than by external stimuli.
(3) Anticipation: there exist associative mechanisms that enable both behavioral and perceptual activity to elicit perceptual activity in the sensory areas of the brain (Hesslow, 2002, p. 242).
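How the three components interlock can be illustrated with a toy loop. This is a minimal sketch under stated assumptions, not Hesslow's own formulation: percepts and actions are reduced to single numbers, and the hypothetical functions `approach_until_close` (standing in for the link from percept to action) and `predict_distance` (standing in for anticipation) are placeholders. Each predicted percept serves as the stimulus for the next simulated action, and nothing is ever sent to an actuator.

```python
from typing import Callable, List, Tuple


def simulate_chain(
    percept: float,
    select_action: Callable[[float], float],
    predict_percept: Callable[[float, float], float],
    steps: int,
) -> List[Tuple[float, float]]:
    """Covert chain: simulated actions elicit simulated percepts, which in turn
    act as stimuli for the next simulated action. No overt movement occurs."""
    trace = []
    for _ in range(steps):
        action = select_action(percept)             # (1) simulated action
        trace.append((percept, action))
        percept = predict_percept(percept, action)  # (3) anticipation yields (2) a simulated percept
    return trace


# Hypothetical toy world: the percept is the distance to a wall.
def approach_until_close(distance: float) -> float:
    return 1.0 if distance > 2.0 else 0.0


def predict_distance(distance: float, step: float) -> float:
    return distance - step


trace = simulate_chain(5.0, approach_until_close, predict_distance, steps=4)
print(trace)  # [(5.0, 1.0), (4.0, 1.0), (3.0, 1.0), (2.0, 0.0)]
```

The agent "walks" to the wall only in imagination; replacing `predict_distance` with a learnt predictor is where the anticipatory mechanisms discussed below would come in.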

Hesslow (2002, 2012) argued that simulation can be interpreted within a behaviourist framework and does not imply cognitivist concepts such as mental representations or internal models. We agree that traditional notions of ‘representation’ as depicting some kind of objective reality are highly problematic. However, as von Glasersfeld (1990, 1995) pointed out, using the term representation in the sense of the German term Vorstellung as used by Kant and Schopenhauer, i.e., representation as subjective expectation/imagination, or in the Piagetian sense of representation as mere re-presentation of previous experiences projected towards the future, is much less problematic. This is also very much in line with Bickhard’s notion of interactive representation as indications of interaction outcomes (e.g., Bickhard, 1998; cf. Svensson & Ziemke, 2005).

With this in mind, we now briefly review evidence showing that it is possible to reactivate perceptual brain areas internally so that they can serve as stimuli for both simulated and overt actions (Hesslow, 2012; Svensson, Lindblom, & Ziemke, 2007).

2.1.1 Behavioural evidence

Early mental chronometry studies, such as mental rotation experiments (Shepard & Metzler, 1971) and mental scanning experiments (Kosslyn, Ball, & Reiser, 1978), showed that the time needed to perform these tasks mentally is a linear function of the angle of rotation or the distance scanned (cf. Finke, 1989). Motor imagery research, in which subjects imagine themselves performing an action from a first-person perspective, has similarly demonstrated correspondences between the timing of overt and covert actions (Jeannerod & Frak, 1999; Papaxanthis, Schieppati, Gentili, & Pozzo, 2002). For example, Decety, Jeannerod and Prablanc (1989) compared the durations of walking blindfolded towards targets placed at different distances with the durations of mentally imagining walking to the same targets, and found the two to be closely matched. Furthermore, Decety and Jeannerod (1996) found that Fitts’ law (movement time increases with task difficulty) also holds for motor imagery.
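Fitts’ law is commonly written MT = a + b · log2(2D/W), where D is the distance to the target, W the target width, and a and b are constants fitted to the data; this is Fitts’ original formulation, and later variants such as log2(D/W + 1) also exist. The sketch below only computes the law. The constants are hypothetical, and the code does not reproduce the data of Decety and Jeannerod (1996); their reported finding is simply that imagined movement times follow the same relation.

```python
import math


def index_of_difficulty(distance: float, width: float) -> float:
    """Fitts' original index of difficulty in bits: ID = log2(2D / W)."""
    return math.log2(2.0 * distance / width)


def movement_time(distance: float, width: float, a: float, b: float) -> float:
    """Fitts' law: MT = a + b * ID, with a and b fitted per task and subject."""
    return a + b * index_of_difficulty(distance, width)


# Hypothetical constants (seconds, and seconds per bit), for illustration only.
A, B = 0.1, 0.2
for d, w in [(8.0, 4.0), (16.0, 4.0), (16.0, 2.0)]:
    print(f"D={d}, W={w}: ID={index_of_difficulty(d, w):.1f} bits, "
          f"MT={movement_time(d, w, A, B):.2f} s")
```

Doubling the distance or halving the target width adds one bit of difficulty and, under the law, a constant increment b to the movement time; the claim in the text is that imagined movements show the same pattern.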

2.1.2 Neuroscientific evidence

Neuroimaging studies suggest that mental images are accurately described as reactivated perceptions and actions. Extensive research has demonstrated similarities between visual imagery and visual perception (Kosslyn, 1994; Kosslyn & Thompson, 2000). The correspondence between visual imagery and visual perception is not, however, complete or uniform across experiments: activation of the primary visual cortex during imagery, for instance, has been observed in only some experiments. These discrepancies can be explained by individual differences, the type of imagery and the resolution of the mental images (Kosslyn, Ganis, & Thompson, 2001; Thompson, Kosslyn, Sukel, & Alpert, 2001).

A similar pattern of results emerges for the motor modality. Following the first study to describe correspondences between motor imagery and areas used for planning actions (Ingvar & Philipson, 1977), several neuroimaging experiments have confirmed these initial results. Together with results from studies on neurological disorders (Jeannerod, 1995), these experiments have, albeit with some discrepancies, found motor imagery to involve structures primarily associated with the execution of actions, such as primary motor cortex, premotor cortex, supplementary motor area, lateral cerebellum and the basal ganglia. Further, motor imagery also involves areas primarily associated with action planning, such as the dorso-lateral prefrontal cortex, inferior frontal cortex and posterior parietal cortex (for reviews and references to particular experiments see e.g., Grèzes & Decety, 2001; Jeannerod, 2001). As was the case with visual mental images, the overlap is not total; nor was it expected to be, since mental actions do not necessarily mimic every aspect of external actions. For example, subjects in mental chronometry experiments tend to over- or underestimate durations of actions in some conditions (Guillot & Collet, 2005) and activation of the primary motor cortex is not found in every neuroimaging study on motor imagery (Dechent, Merboldt, & Frahm, 2004; Grèzes & Decety, 2001).

On the other hand, mental motor imagery has been observed to cause autonomic effects similar to those of physical exercise (Decety, Jeannerod, Durozard, & Baverel, 1993). Some evidence also suggests that motor imagery activates the spinal cord and organs of proprioception (Lethin, 2005). Lethin (2005) describes the evidence as follows:
There is evidence that the specifications for covert action include changes in the body itself and not just in the brain … The covert efferent arm [signal, pathway] to the body may include stimulation of the gamma as well as the alpha motoneurons. There is evidence that an ascending pathway from the muscle spindles to the brain carries such feedback during simulation and probably during preparation. (pp. 111–112)

To conclude, the behavioural and neuroscientific evidence suggests that in many respects there is a close correspondence between covert and overt perceptions and actions. As noted above, the reactivations of neural areas and mechanisms are not exact replicas, and differences in the degree of activation can be explained by, for example, the type of information imagined. Indeed, a total reactivation might not be advantageous, as it could make it difficult to distinguish externally from internally generated perceptions, or to prevent a simulated action from being executed. The next section describes some of the mechanisms that help generate the close correspondences described in this section.

2.2 Different forms of anticipatory mechanisms in simulations

According to the simulation hypothesis, predictive associations between simulated perceptions and actions are formed to create simulations. They allow the cognitive agent to form an inner world in which the agent can explore actions and, to use a phrase from Karl Popper, ‘permit our hypotheses to die in our stead’ (Dennett, 1996). Svensson, Morse and Ziemke (2009a) described three different forms of predictions involved in the formation of an inner world: implicit, bodily and environmental anticipations. We briefly summarize these here.

2.2.1 Implicit anticipation

Studies in neuroscience have identified neural mechanisms that can predictively map sensory contexts onto motor actions, for example the basal ganglia (Downing, 2009; Humphries & Gurney, 2002; Prescott, Gurney, & Redgrave, 2002) and the cerebellum (Downing, 2009; Yeo & Hesslow, 1998). These stimulus-response associations are predictive in the sense that they have been formed by reinforcement and supervised learning mechanisms that ensure that the associated actions lead to favourable outcomes. The anticipations they establish are implicit in the sense that they concern only the action and do not involve a prediction of the sensory feedback that the action would produce; there is only a stimulus-response association. A creature endowed with only implicit anticipations could therefore not construct a simulation, since it would lack the mechanisms for mapping a covert action onto a future sensory context. However, implicit anticipations can play a role in simulations, since they could assist in one of their central aspects: the process by which a simulated perception acts as the stimulus for an overt or covert action (Hesslow, 2002, 2012). A possible function of implicit anticipations in simulations could be to direct and constrain the course of simulations by selecting some actions over others, while at the same time preventing them from causing overt movements (cf. Cotterill, 2001). In other words, just as these mechanisms support action selection, they might also select the action content of our thoughts. They thereby make simulations effective, in the sense that they constrain the simulated actions to a subset of the actions possible in a situation. The neural mechanisms involved in implicit anticipation are further elaborated by Svensson et al. (2009a).
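What ‘implicit’ means here can be seen in a reward-driven stimulus-response table. This is a minimal sketch that uses a simple incremental action-value update as a stand-in for the reinforcement mechanisms mentioned above; the class `ImplicitAnticipator` and the context labels are hypothetical. Note what is absent: the agent stores how good an action is in a context, but nothing about what it will perceive after acting, so it cannot chain predictions into a simulation.

```python
from collections import defaultdict


class ImplicitAnticipator:
    """Stimulus-response associations shaped by reward alone (hypothetical sketch)."""

    def __init__(self, actions, learning_rate=0.1):
        self.actions = list(actions)
        self.learning_rate = learning_rate
        self.value = defaultdict(float)  # (stimulus, action) -> expected reward

    def select(self, stimulus):
        """Pick the action with the highest learnt value in this context."""
        return max(self.actions, key=lambda action: self.value[(stimulus, action)])

    def reinforce(self, stimulus, action, reward):
        """Move the value estimate a step towards the obtained reward."""
        key = (stimulus, action)
        self.value[key] += self.learning_rate * (reward - self.value[key])


agent = ImplicitAnticipator(["avoid", "approach"])
for _ in range(20):
    agent.reinforce("food_ahead", "approach", reward=1.0)
    agent.reinforce("food_ahead", "avoid", reward=0.0)

print(agent.select("food_ahead"))  # approach
# There is no method returning the percept that follows an action:
# the association predicts what to do, not what will be sensed.
```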

2.2.2 Bodily anticipation

Most people find it very difficult to tickle themselves. It might, however, be possible with the help of a feather, or better yet through the mediation of someone else (using a feather). This is explained by the existence of predictive models that relate our own actions to the proprioceptive and other (proximate) sensory signals that normally result from those actions. Blakemore, Wolpert and Frith (2000) argued that the neural mechanisms producing this phenomenon are based on efference copies fed to the cerebellum. The cerebellum predicts the sensory consequences of the action and compares them with the actual sensory feedback from touch receptors; if there is no discrepancy, activity in the somatosensory cortex is attenuated. In other words, the cerebellum implements a forward model of the world (Wolpert, Miall, & Kawato, 1998). It should be noted that it has been difficult to determine whether the cerebellum computes sensory consequences, only motor responses (as in implicit anticipation), or both (e.g., Bastian, 2006; cf. also Hesslow, 2012).
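The attenuation account can be sketched as a comparison between a forward-model prediction, computed from the efference copy, and the actual tactile signal. This is a deliberately simplified sketch: the linear forward model with a single hypothetical `gain`, the subtraction, and the clamping at zero are assumptions made for illustration, not details of Blakemore, Wolpert and Frith’s (2000) model.

```python
from typing import Optional


def predict_touch(efference_copy: float, gain: float = 1.0) -> float:
    """Hypothetical forward model: expected tactile intensity for a motor command."""
    return gain * efference_copy


def perceived_touch(actual: float, efference_copy: Optional[float]) -> float:
    """Attenuate the tactile signal by whatever the forward model predicted.
    Externally caused touch (no efference copy) passes through unattenuated."""
    predicted = 0.0 if efference_copy is None else predict_touch(efference_copy)
    return max(actual - predicted, 0.0)


print(perceived_touch(1.0, efference_copy=1.0))   # self-produced, fully predicted: 0.0
print(perceived_touch(1.0, efference_copy=None))  # produced by someone else: 1.0
print(perceived_touch(1.0, efference_copy=0.4))   # poorly predicted (e.g., via a feather): partly felt
```

Only the unpredicted part of the signal is felt, which is why self-produced touch tickles least when the forward model’s prediction is accurate.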

Imagery based on bodily anticipations is likely to be closely tied to details of the execution and proximal consequences of bodily movement (cf. Johnson, 2000). Hence, the sensory predictions relate to proprioceptive signals and the proximal senses of touch (and perhaps taste). As mentioned previously, motor imagery sometimes elicits proprioceptive signals, which could, although this is highly speculative, provide a learning signal for the offline training of cerebellar forward models (cf. Downing, 2009). Bodily anticipation is similar to the environmental anticipation described in the next section in that both involve more or less explicit predictions, but it differs with regard to the content of the predictions and their neural substrate (Svensson et al., 2009a). A similar distinction between bodily and environmental anticipation can be made in the context of mental imagery: Tomasino, Toraldo, and Rumiati (2003) found a double dissociation between mentally rotating body parts and mentally rotating external objects. Patients with left-hemisphere lesions were unable to complete a task involving mental rotation of the hand, while patients with right-hemisphere lesions had trouble with mental rotation of external objects. The prediction of states of the body has also been shown to be a useful capability in robots (Bongard, Zykov, & Lipson, 2006), and it has been suggested that this should be generalized to internal models of the environment (Adami, 2006).

2.2.3 Environmental anticipation in mental imagery

The function of environmental anticipation is to generate predictions of future sensory states relating to objects and situations in the world external to the animal’s body. This task is often more difficult than predicting the state of the body, because the animal has less control over the external world, which may also change on a much faster time scale.

Environmental predictions can take the form of associations between two neural states, each of which corresponds to an external object or event. For example, if a particular perception, say the shadow of a hawk, is associated in a prey animal with the ‘image’ of the hawk, then the prey might have a better chance of escaping its predator. This type of sensory-sensory association is certainly present in simulations (Hesslow, 2002). However, motor patterns should also be involved, eliciting the sensory consequences associated with the execution of the corresponding action (Cotterill, 2001; Hesslow, 2002). This type of environmental prediction might be crucial for implementing longer and more specific or goal-directed simulations. Studies have found evidence of behavioural response-effect associations in the form of covert perceptions, as the simulation hypothesis would suggest (Kunde, Elsner, & Kiesel, 2007).
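The two kinds of environmental prediction, sensory-sensory (shadow to hawk) and sensorimotor (percept plus action to next percept), can be sketched with co-occurrence counts. This is a deliberately crude stand-in for associative learning; the class `EnvironmentalAnticipator`, the labels and the counting rule are hypothetical and not taken from the cited studies.

```python
from collections import Counter, defaultdict
from typing import Hashable, Optional


class EnvironmentalAnticipator:
    """Toy associative memory for environmental predictions (hypothetical)."""

    def __init__(self) -> None:
        self.sensory = defaultdict(Counter)       # percept -> following percepts
        self.sensorimotor = defaultdict(Counter)  # (percept, action) -> following percepts

    def observe(self, percept: Hashable, next_percept: Hashable,
                action: Optional[Hashable] = None) -> None:
        """Strengthen the associations for one experienced transition."""
        self.sensory[percept][next_percept] += 1
        if action is not None:
            self.sensorimotor[(percept, action)][next_percept] += 1

    def anticipate(self, percept: Hashable, action: Optional[Hashable] = None):
        """Return the most strongly associated percept, or None if nothing was learnt."""
        if action is None:
            counts = self.sensory.get(percept)
        else:
            counts = self.sensorimotor.get((percept, action))
        if not counts:
            return None
        return counts.most_common(1)[0][0]


prey = EnvironmentalAnticipator()
for _ in range(3):
    prey.observe("hawk_shadow", "hawk")
prey.observe("hawk_shadow", "cloud")
prey.observe("at_burrow", "inside_burrow", action="enter")

print(prey.anticipate("hawk_shadow"))          # hawk (sensory-sensory)
print(prey.anticipate("at_burrow", "enter"))   # inside_burrow (sensorimotor)
print(prey.anticipate("open_field", "enter"))  # None (no experience yet)
```

Only the action-conditioned table can be driven by a simulated action, which is why the text singles out this form as important for longer, goal-directed simulations.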

Although evidence shows that motor imagery is highly dependent on covert activation of the motor system, motor imagery may, as described above, also involve simulated sensations and perceptions (Gibbs & Berg, 2002; Grush, 2004). Conversely, visual imagery might be accompanied by covert actions. It seems, however, that humans can to some extent choose between a strategy in which they imagine rotating the object themselves, which activates motor areas, and a strategy in which the object is imagined as being moved by an external force (Kosslyn et al., 2001). This can also explain the disparate findings regarding motor involvement in mental rotation of shapes (Lamm, Windischberger, Leodolter, Moser, & Bauer, 2001) and tools (Vingerhoets, de Lange, Vandemaele, Deblaere, & Achten, 2002). The important point here is that simulations contain predictive mechanisms that contribute both to eliciting simulated perceptions from simulated actions and to eliciting simulated actions from simulated perceptions.

3 The inception of simulations

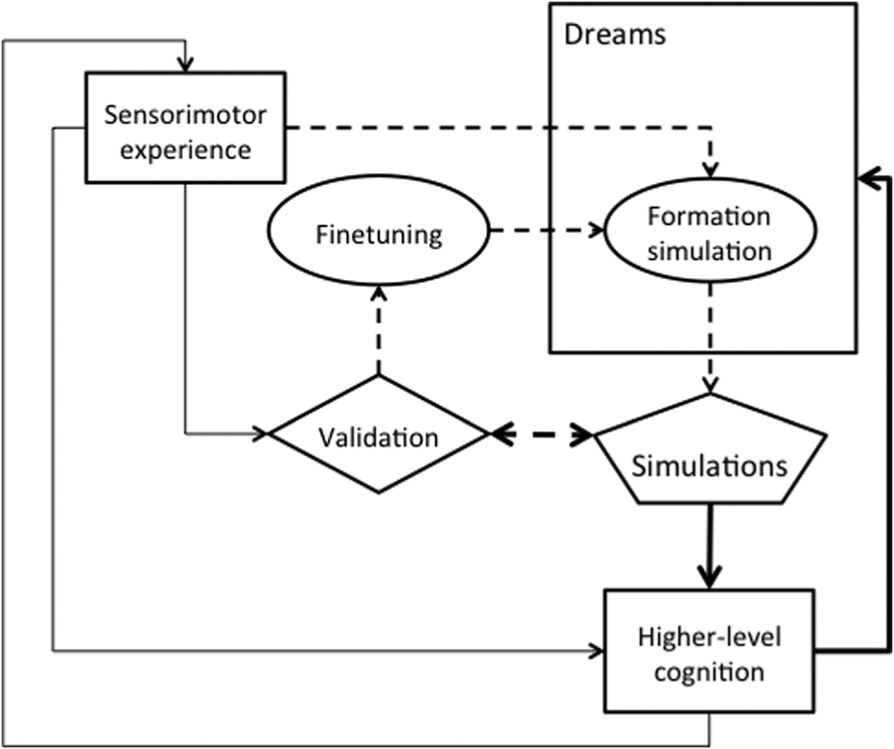

As seen above, there is comprehensive research into what kind of information is involved in simulations of perceptions and actions and into their neural substrate. The precise origin and development of these simulations, however, remain less well understood. Hesslow (2002) emphasized that simulation theory explains cognitive functions in terms of phylogenetically older brain functions, i.e., functions that evolved to allow mammals to eat, move and reproduce. Part of the explanation might therefore relate to the evolution and development of perception and action processes themselves. However, while some of the basic neural substrates for developing simulations, such as the neural mechanisms underlying the three types of anticipation described in the previous section (Svensson et al., 2009a), are likely to have evolutionary origins, it is quite clear that simulations have to be learnt during the lifetime of an individual. Not only does the world change on various time scales, but our body also grows and changes, sometimes in unexpected ways. An explanation is thus needed of what initially guides the formation of the neural connectivity between motor and sensory areas of the brain that underlies simulations. Although some accounts of simulation have touched upon this issue (e.g., Grush, 2004), an account of the origin of simulation is still partially missing. Thill and Svensson (2011) developed the inception of simulation (InSim) hypothesis, which states that dreams during infancy and childhood (the inception phase) may play a fundamental role in the inception of simulations, while the relationship is reversed as dreams reach an adult level of complexity. In this mature phase, simulations instead support and guide dreams, as they do for mental imagery in waking. Figure 1 illustrates the different functions postulated by the inception of simulation hypothesis.

Figure 1. Schematic illustration of the functions involved in the InSim hypothesis. Dashed arrows indicate functions active in the inception phase, whereas thick arrows indicate functions associated with the mature phase. Early in the inception phase, the re-enactment of experienced sensorimotor perceptions within dreams shapes internal simulations of the world the infant is living in (circle ‘Formation simulation’). Based on these simulations, the infant or young child generates predictions, which are then validated while awake (arrow from ‘Simulation’ to ‘Validation’), leading to a fine-tuning of the simulation (circle ‘fine-tuning’). As the child grows older, the ‘Formation simulation’ mechanism ceases to play an important role and dreams simply use existing simulations (the route from ‘Simulation’ via ‘Higher-level cognition’).
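The cycle in the figure can be caricatured as offline replay followed by online correction. The sketch below is only a toy under explicit assumptions and is not the robot experiment presented later in the paper: the ‘world’ is one-dimensional, the forward model has a single hypothetical parameter `k`, ‘dreaming’ is reduced to replaying stored experiences without acting, and ‘validation’ is reduced to comparing predictions with outcomes while acting and updating on the error.

```python
class ForwardModel:
    """Hypothetical 1-D forward model: next_state ≈ state + k * action."""

    def __init__(self, k: float = 0.0, lr: float = 0.1):
        self.k = k
        self.lr = lr

    def predict(self, state: float, action: float) -> float:
        return state + self.k * action

    def update(self, state: float, action: float, next_state: float) -> float:
        """One gradient step on the squared prediction error; returns the error."""
        error = next_state - self.predict(state, action)
        self.k += self.lr * error * action
        return error


def dream_phase(model, replay_buffer, epochs):
    """Offline re-enactment: replay stored experiences without acting."""
    for _ in range(epochs):
        for state, action, next_state in replay_buffer:
            model.update(state, action, next_state)


def wake_phase(model, world_step, state, actions):
    """Online validation: act, compare prediction with outcome, fine-tune."""
    errors = []
    for action in actions:
        next_state = world_step(state, action)
        errors.append(abs(model.update(state, action, next_state)))
        state = next_state
    return errors


def world_step(state, action):
    return state + 0.5 * action  # hypothetical "true" dynamics


experiences = [(0.0, 1.0, 0.5), (0.5, 1.0, 1.0), (1.0, -1.0, 0.5)]
dreamer, naive = ForwardModel(), ForwardModel()
dream_phase(dreamer, experiences, epochs=20)

plan = [1.0, 1.0, -1.0]
dream_errors = wake_phase(dreamer, world_step, 0.0, plan)
naive_errors = wake_phase(naive, world_step, 0.0, plan)
print(dream_errors)
print(naive_errors)
```

In this toy, the model that has ‘dreamt’ makes smaller prediction errors on its first waking steps than one starting from scratch. That is only the intuition behind the hypothesis; whether it holds for richer simulations is what an actual experiment has to establish.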

3.1 The inception phase

In infants and young children, sensorimotor experiences are hypothesized to be re-enacted within dreams, which leads to the formation of associative structures underlying simulations. This is the inception phase. Since it takes place in young infants, it is hard to know what the phenomenological experience of this phase is like; its existence is therefore somewhat of an assumption (the inception of simulations could instead be a subconscious process). It seems likely, however, that this experience initially consists of a more or less direct repetition of the impressions of recent sensorimotor experiences. Once a simulation is active, dreams begin to use it, at which stage the phenomenological experience begins to increase in complexity, including the generation of event sequences that were not directly experienced but are rather predictions of what is possible.

The child can then test these predictions while awake, which provides a validation mechanism that in turn is used to fine-tune the existing simulations. Simulations that depend on sensorimotor experiences are clearly constrained by the infant’s abilities, which themselves continue to develop throughout childhood. The first decade of life is therefore likely to involve considerable refinement of the simulations. It is worth pointing out that other models based on simulated actions and perceptions propose similar mechanisms. For instance, in his emulation theory of representation, Grush (2004) proposed a model based on Kalman filters (from control theory) in which the predictions produced by simulations (or emulations, in his terms) are also used to update the emulations themselves by comparing them with the corresponding actual input.
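For readers unfamiliar with Kalman filters, a scalar version shows the two steps this kind of account relies on: a prediction driven by the motor command, and a correction weighted by how reliable the prediction is relative to the actual input. This is a minimal sketch, not Grush’s model: the state is a single number, the motor command is assumed to add directly to the state, and the noise variances are hypothetical. When no observation is available, as in offline imagery, only `predict` runs, so the emulator produces expectations without correction.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalmanEmulator:
    estimate: float       # emulator's current estimate of the state
    variance: float       # uncertainty of that estimate
    process_noise: float  # Q: how unreliable the motor-to-state model is
    sensor_noise: float   # R: how noisy the actual input is

    def predict(self, motor_command: float) -> float:
        """Forward step driven by an efference copy (assumed additive)."""
        self.estimate += motor_command
        self.variance += self.process_noise
        return self.estimate

    def correct(self, observation: float) -> float:
        """Compare the prediction with actual input and update; returns the gain."""
        gain = self.variance / (self.variance + self.sensor_noise)
        self.estimate += gain * (observation - self.estimate)
        self.variance *= 1.0 - gain
        return gain


emulator = ScalarKalmanEmulator(estimate=0.0, variance=1.0,
                                process_noise=0.0, sensor_noise=1.0)
emulator.predict(1.0)         # expected state after the command: 1.0
gain = emulator.correct(2.0)  # actual input differs from the prediction
print(gain, emulator.estimate, emulator.variance)  # 0.5 1.5 0.5
```

The gain shrinks as the emulator’s variance falls, so a well-tuned emulation is corrected less and less by actual input, which parallels the reduced need for fine-tuning described for the mature phase.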

3.2 The mature phase

At the same time, as simulations become more complex, the predictions they generate during dreams can be used in other aspects of higher-level cognition, such as mental imagery and episodic memory. This complexity can be characterized both phenomenologically, in terms of the number of objects and situations and the relations between them, and at the neural level, in terms of the ability to reactivate the neural substrate for actions and perceptions without causing overt actions or conflicts with externally generated perceptions. We do not attempt to characterize the function of simulations in dreams in detail here, but it would include, for instance, functions such as those proposed by Revonsuo’s (2000) Threat Simulation Theory. In other words, as the need for fine-tuning decreases, the function of dreams as support for higher-level cognitive mechanisms increases. In this mature phase, higher-level cognition defines the narratives of dreams (thick arrows in Figure 1), which increase in complexity as simulations become more complete.

The hypothesis is both consistent with available evidence and compatible with theories related to the functions of dreams in adults as well as the position taken by Domhoff (2001, 2011). The next section briefly outlines the main arguments for the InSim hypothesis, but see Thill and Svensson (2011) for additional details.

3.3 Dreams and simulations

The InSim hypothesis suggests that research into dreams and research into simulations may benefit each other. Domhoff (2011) recently argued just that: ‘Recent research on mind wandering, daydreaming, and simulation provides a new avenue for those dream researchers who begin with the premise that systematic studies of waking cognitive processes and their neural correlates are the best starting point for developing a neurocognitive theory of dreaming and dream content’ (p. 1169). We would add that this is not a one-way street: dream research can also lead to new discoveries and theories regarding waking cognitive processes. The following subsections illustrate the basic motivation for involving dream research in simulation theories, and more specifically why dreams should be considered to play a role in the initial development of the ability to simulate.

3.3.1 Developmental aspects

The basic claim that dreams in young children can play a crucial role in the inception and refinement of simulations is motivated in part by the ontogenetic hypothesis (Blumberg, 2010; Roffwarg, Muzio, & Dement, 1966) of the function of children’s dreams, namely that the large amount of REM sleep at the beginning of life can be explained by a need for endogenous stimulation of the nervous system, especially higher cortical areas, which ‘may be useful in assisting neuronal differentiation, maturation, and myelinization’ (Roffwarg et al., 1966, p. 616) of these areas. The ontogenetic hypothesis thus focuses on the neurophysiological aspects of brainstem-induced cortical and muscle spindle activity, rather than on a possible function of the conscious experiential aspect. However, the activation of higher cortical areas during dreams is necessary for establishing associations between perceptual and motor areas of the brain that can be further validated and refined during waking behaviour.

Even though children’s dreams are described as ‘impoverished’ (Domhoff, 2001) compared with those of adults, infants spend around 14 hours per day sleeping, compared with 8–9 hours for 16-year-olds (Iglowstein, Jenni, Molinari, & Largo, 2003). About half of that time is spent in REM sleep, a proportion that drops to 30% to 40% (while approaching adult levels in quality) in infants aged between 1 month and 1 year (Finn Davis, Parker, & Montgomery, 2004). Although it is difficult to determine whether any phenomenological experience is associated with these sleeping patterns in infants, we do know that this is the case in young children (Domhoff, 2001). A recent study of preterm babies showed that the brain is more fully developed at birth than previously thought (Doria et al., 2010), which could favour a more inclusive view of the abilities present at birth.

There is also a correlation between the phenomenological quality of dreams and mental imagery ability in children. Domhoff (2011) concluded, based on dream report data, that ‘the ability to produce a coherent and animated dream narrative parallels the development of waking cognitive capabilities, with the ability to generate mental imagery as the most important correlate’ (p. 1165). A reasonable assumption is that this correlation can be explained by the development of more complex simulations. The influence of simulations on dreams increases once the accuracy and complexity of simulations have advanced sufficiently, marking the transition to the mature phase. For example, children then begin to simulate and prepare for threats, as suggested by the Threat Simulation Theory (Revonsuo, 2000). This is at least indirectly supported by the fact that the studies that do show evidence for the Threat Simulation Theory rely on data from children aged on average about 12 years, an age at which dreams are already adult-like in their complexity and narrative (Domhoff, 2001, 2011). The following subsection further outlines the phenomenological aspects pertaining to simulations and dreams.

3.3.2 Phenomenal aspects

There are a number of phenomenological properties that can play a role in both the inception and the mature phase. Firstly, dreams are somewhat less constrained than other thought processes: dream imagery (during REM sleep) is sometimes more bizarre than waking thought, self-reflection is absent in dreams, and dreams lack orientational stability (Hobson, Pace-Schott, & Stickgold, 2000). Dreaming thus allows the emergence of paths of simulated actions and perceptions that would not have been thought of while awake. Secondly, dreams might be useful for creating longer and more stable simulations, as they contain several organizing aspects: dreams integrate several different elements into a coherent story; they involve intensified emotions, which also seem to guide the dream narrative; and instinctual programmes such as fight-flight mechanisms also guide them (Hobson et al., 2000). Although dreams are sometimes bizarre and very unlike the waking state, studies have shown that the majority of dreams are rather closely tied to past, present and likely future situations, even though there is a preference for threatening and dramatic situations (Domhoff, 2011). This suggests that, at least in their final adult form, dreams construct an internal replica of the outer world in which behavioural strategies can be tried out.

3.3.3 Sharing of neural mechanisms

Although the understanding of the neural substrate has advanced with the development of neuroscience, there are different views about which mechanisms are actually causing dreams to appear (e.g., Blumberg, 2010; Hobson, 2009; Hobson & Pace-Schott, 2002; Solms, 2000). It is also uncertain whether dreams in different stages of sleep share the same neural substrate. For example, Domhoff (2011) argued for a default network underlying dreams in general, which is also functional in waking activities, such as mind wandering and simulating past and future situations, while Hobson (2009) emphasized the role of REM-sleep and the associated changes in terms of variations in neurotransmitters that dominate brain activity in different points in time. However, drawing on evidence from dream research, it is possible to explicate a few similarities between the neural substrate implicated in dreams and those previously cited for simulations in, for example, mental imagery experiments (cf. section ‘The simulation hypothesis’). Actions in dreams are thought to be neurophysiologically similar to real actions except for not being executed (Revonsuo, 2000). In the language of the simulation hypothesis, actions in dreams are therefore simulated actions. Hobson (1999, cited in Revonsuo, 2000, p. 889) argued that ‘the experience of movement in dreams is created with the help of the efferent copying mechanism, which sends copies of all cortical motor commands to the sensory system’. Again, these insights are highly relevant to the simulation hypothesis as the use of efferent copies and input from all levels of the motor hierarchy (Fuster, 2004; for a comprehensive review see Thill, Caligiore, Borghi, Ziemke, & Baldassarre, 2013) has also been proposed as a possible neural substrate for establishing simulations generally (Cotterill, 2001; Hesslow, 2002, 2012). 
The anterior cingulate cortex has been suggested as an important area for dreams (Domhoff, 2011), as well as a way station for efference copies from the premotor areas to the sensory cortex (Cotterill, 2001). Furthermore, the spontaneous production of motor activity during REM sleep, including proprioceptive signals caused by limb twitching (Blumberg, 2010), could play a significant role in triggering simulated sensations and perceptions. Proprioceptive signals originating in limb muscles have also been observed in motor imagery (Lethin, 2005). Even though the evidence at the neural level cited here is not conclusive, it points to some interesting overlaps that need further investigation.

Additionally, since simulations and the resulting internal models of the world can be seen as a form of memory (of the functioning of the world, cf. Hesslow, 2012), the same mechanisms that were used in early life to create these simulations could later be used to consolidate or process memories during dreams, as hypothesized for instance by Hobson (1994) or Crick and Mitchison (1995), even if this function of dreaming is not related to the phenomenological experience.

Finally, dreaming is a state in which the brain is largely decoupled from the environment, i.e., a number of hardwired mechanisms cause sensory inputs to be dampened and motor output to be blocked (e.g., Hobson & Pace-Schott, 2002). This is a crucial aspect of dreams for their potential role in the development of simulations, since it means that simulations can be formed without meeting the architectural requirements of the waking state, in which imagined and real perceptions, on the one hand, and overt and covert actions, on the other, must be able to coexist. To illustrate just one possible advantage: the simulation hypothesis suggests that, in neural terms, a simulated action is an action except for not being executed. We therefore need to explain why simulated actions in thought processes do not cause overt actions. One possibility is that the neural activations in simulations do not reach the threshold for overt movement (Cotterill, 2001). A possible advantage of dreams would be that sensory and motor areas could be strongly activated (because of the induced muscle atonia), such that associative learning mechanisms can function in similar ways as in the waking state (cf. Downing, 2009), before the mechanisms that allow simulations to occur in the waking state (without overt execution of the simulated actions) have developed.

To summarize, we take the empirical evidence to indicate that, in the adult brain, simulations are likely present in both dreams and mental imagery. Further, there appears to be a progression during the child’s development from very impoverished, rudimentary simulations in dreams to more complex dreams featuring longer narratives and greater resemblance to situations in waking life.

4 Dream-like mechanisms for robust simulations in robots

It might sound somewhat contradictory to argue for dreaming robots, as robots are very much developed for acting (Brooks, 1991), but dreaming has some advantages. Adami (2006, p. 1094) argued that a robot could ‘… spend the day exploring part of the landscape, and perhaps be stymied by an obstacle. At night, the robot would replay its actions and infer a model of the environment. Armed with this model, it could think of – that is, synthesize – actions that would allow it to overcome the obstacle, perhaps trying out those in particular that would best allow it to understand the nature of the obstacle. Informally, then, the robot would dream up strategies for success …’

Adami (2006) did not elaborate further on the implementation of dreaming, but Conduit (2007), commenting on Adami’s idea, suggested that it might be a route for further investigation of dreams, by implementing ‘some form of “sleep” algorithm that randomizes memory and combines it in unique arrays’ (p. 1219). In this section, we further motivate the InSim approach for developing mental-imagery-like abilities in robots. In the remainder of the paper, we use the term ‘robodreams’ to refer to the implementation of dreams in robots. Note that we do not claim to have achieved dreaming robots; we only model some aspects of dreaming. The following section briefly reviews previous attempts to model imagery-like abilities in robots. There is not enough space here for a comprehensive review of previous work on implementing simulations in robots, but Marques and Holland (2009), for example, provide a good overview of the requirements of and possible architectures for ‘functional imagination’ in robots.

4.1 Robots with mental imagery

Mel’s (1991) robot arm, Murphy, and Chrisley’s (1990) connectionist navigational map are early examples of computational agents that use artificial neural networks to construct a cognitive map through a kind of mental imagery based on sensory predictions. Since then, there have been advances in a number of different areas using at least one of the three types of predictions specified earlier (see section ‘Different forms of anticipatory mechanisms in simulations’), for example, agents that:

learn the topological structure of a maze-like environment, and navigate it physically or mentally (Hoffmann & Möller, 2004; Jirenhed, Hesslow, & Ziemke, 2001; Nolfi & Tani, 1999; Tani & Nolfi, 1999; Ziemke et al., 2005);

perform goal-directed navigation, where the robots are given a goal-configuration and should find the goal by using the cognitive map as internal/planning resource (Baldassarre, 2002; Hoffmann & Möller, 2004; Tani, 1996);

perform obstacle avoidance (Gross et al., 1999); and

develop allocentric spatial knowledge (Hiraki, Sachima, & Philips, 1998).

Although different tasks have been investigated, this work addresses three aspects of central concern for simulation theory in general and mental-imagery-like processes in particular: prediction, abstraction and planning. Abstraction concerns the question of how concepts are formed and grounded in sensorimotor states (and simulations) (Holland & Goodman, 2003; Stening et al., 2005; Wintermute, 2012). Planning concerns how simulations can be used to plan, for example, an (optimal) route between places (Baldassarre, 2001). Since we focus on prediction, it is described in more detail below.

4.1.1 Prediction

A crucial aspect for models of mental imagery based on simulations of actions and perceptions is whether the robots can develop accurate predictions, i.e., capture the relationship between actions and their effects, given the context. The models investigating prediction ability have generally used the following set-up: (1) the current (sensory) state and the motor state (or context) are used as input, and the output is the sensory state at the next time step; and (2) the predictions are learnt by comparing the expected sensory state with the actual sensory state. The assumption is that if good one-step predictions (forward models) can be acquired, they can be used to generate simulations by allowing the predicted sensory state to generate a new covert motor state/action, which generates a new predicted sensory state, and so on. Indeed, these models have shown both that they are able to produce accurate predictions and that they outperform purely reactive architectures (Gross et al., 1999). However, it should be noted that other approaches exist. Ziemke et al. (2005) evolved a model whose internal states during mental walks were quite different from the internal states observed during perception-driven interactions.
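The chaining scheme just described can be illustrated with a minimal Python sketch. It assumes a generic one-step forward model `predict(sensors, motor)` and a reactive controller `policy(sensors)`; both, and the toy versions used here, are hypothetical stand-ins rather than any of the cited models.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def simulate_chain(
    predict: Callable[[Sequence[float], Sequence[float]], Vector],
    policy: Callable[[Sequence[float]], Vector],
    initial_sensors: Sequence[float],
    steps: int,
) -> List[Vector]:
    """Iterate a one-step forward model into a covert simulation.

    At every step the controller turns the current (predicted) sensory state
    into a covert motor command, and the forward model maps state + command
    to the predicted sensory state at the next time step. Only the first
    state comes from the real sensors.
    """
    sensors = list(initial_sensors)
    trajectory = [sensors]
    for _ in range(steps):
        motor = policy(sensors)            # covert action, never executed
        sensors = predict(sensors, motor)  # predicted next sensory state
        trajectory.append(sensors)
    return trajectory


# Hypothetical toy models, for illustration only.
def toy_predict(sensors: Sequence[float], motor: Sequence[float]) -> Vector:
    # Each sensor value moves halfway towards its motor command.
    return [0.5 * s + 0.5 * m for s, m in zip(sensors, motor)]


def toy_policy(sensors: Sequence[float]) -> Vector:
    # Reactive rule: command the complement of each sensor value.
    return [1.0 - s for s in sensors]


chain = simulate_chain(toy_predict, toy_policy, [0.0, 1.0], steps=3)
print(chain)  # [[0.0, 1.0], [0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]
```

Because each prediction becomes the next input, any error in `predict` is carried forward through the whole chain.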

Although the above-cited models contribute to the understanding of the particular cognitive ability in question, getting a robot or animat to simulate previous or future encounters with the world is far from trivial. Jirenhed et al. (2001) found that their robot was able to achieve one-step prediction of some sensors but failed to predict those that were seldom active. As it turned out, those sensors nevertheless played an important role in turning behaviours and therefore also caused problems for the simulations. Ziemke et al. (2005) failed to develop simulations based on the short-range proximity sensors of a realistic simulation of a Khepera robot and had to resort to a long-range rod sensor, which simplified the detection of corners/turns, and a less complex environment. Hoffmann and Möller (2004) used a Pioneer robot with an omni-directional camera but found that the camera image was of too high a dimensionality for their training scheme to develop good predictions, and the image had to be further processed and simplified.

Furthermore, all models of simulations committed to the idea that sensory predictions should correspond to states of the environment have to deal with noise. Simulations are argued to be able to function on much longer time scales than perception-driven behaviour, which means that they are not corrected by proprioceptive and sensory feedback. If noise accumulates, the predictions will drift and become increasingly imprecise. This is a problem if the simulation processes are to be used for planning future actions or for some other task that depends on a close correspondence between the simulated perceptions and actions and the original ones. Gross et al.’s (1999) experiments showed that their model system had some trouble with very noisy data, but when noise was reduced their model was successful at prediction. Furthermore, Hoffmann and Möller (2004) found that error accumulates as the chains of predictions increase in length.
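The accumulation of error in free-running prediction chains can be made concrete with a deliberately simple Python sketch. The ‘world’ moves a single sensor value by a fixed amount per step, and the learned model is assumed to be slightly biased (and optionally noisy); all numbers are illustrative assumptions, not values from the cited studies.

```python
import random
from typing import List


def rollout_errors(
    steps: int,
    true_step: float = 0.1,
    model_step: float = 0.09,
    noise_std: float = 0.0,
    seed: int = 0,
) -> List[float]:
    """Absolute error of a free-running model rollout after each step.

    The prediction is fed back as the next input (no sensory correction),
    so the per-step model error is never reset and accumulates.
    """
    rng = random.Random(seed)
    true_state = 0.0
    predicted = 0.0
    errors = []
    for _ in range(steps):
        true_state += true_step
        predicted += model_step + rng.gauss(0.0, noise_std)
        errors.append(abs(true_state - predicted))
    return errors


def one_step_errors(
    steps: int, true_step: float = 0.1, model_step: float = 0.09
) -> List[float]:
    """With sensory feedback, each prediction starts from the true state,
    so the error stays at the single-step bias instead of growing."""
    return [abs(true_step - model_step)] * steps


print([round(e, 3) for e in rollout_errors(5)])   # [0.01, 0.02, 0.03, 0.04, 0.05]
print([round(e, 3) for e in one_step_errors(5)])  # [0.01, 0.01, 0.01, 0.01, 0.01]
```

The contrast between the two functions mirrors the difference between perception-driven prediction, which is re-anchored at every step, and offline simulation, which is not.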

4.2 Implementation of robodreams

The purpose of implementing robodreams is twofold. On the one hand, it may further refine the InSim hypothesis; on the other, it can lead to new and better ways of developing mental-imagery-like abilities in robots, i.e., it can increase the robots’ ability to form internal worlds. In the present paper, we model dreams only at a functional level, drawing inspiration from the phenomenological nature of dreams, especially REM-sleep dreams, which are most common early in development.

In particular, we focus on the fact that dreams produce sensory experiences that are sometimes similar to previous waking situations, distortions of waking situations, or non-experienced situations (cf., e.g., Domhoff, 2011; Hobson, 2009; Revonsuo, 2000). Thus, robodreams should incorporate non-experienced events as well as distorted versions of previously experienced events that can later be refined during waking encounters with the environment. Although there is little previous evidence from robotic research that this could benefit the development of simulations, it is possible, for instance, that robodreams sometimes produce sensory perceptions of non-experienced situations that are similar, if not identical, to a future environmental state, leading to faster acquisition of accurate predictions in the waking state. We are therefore interested in investigating whether the inception phase may establish associations that produce sensory inputs that improve the quality of simulations by pushing the simulated paths toward the optimal solution. Robodreams may also reduce the number of training examples necessary to develop simulations of a particular environment. The next section describes the details of the experimental set-up used to provide a proof of concept of the utility of robodreams.

4.3 Proof-of-concept experimental set-up

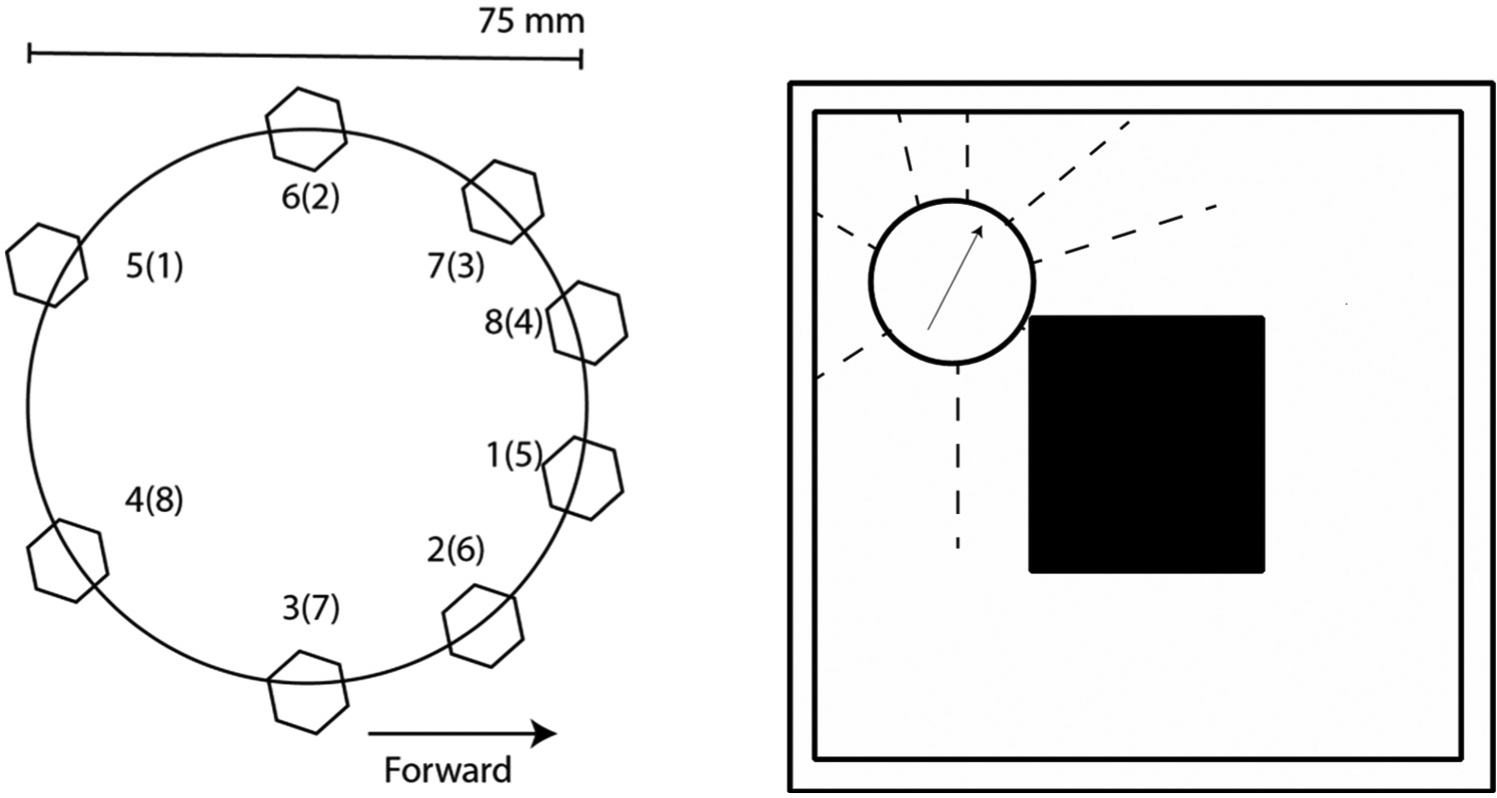

The absence of previous work on how to model dreams in robots, at least on the phenomenological level, makes this an exploratory experiment: we therefore investigate different ways of implementing phenomenological aspects of dreams in robots. Firstly, we use two different ways of modelling the nature of the sensory impressions during dreams: randomization and recombination (Table 1). Randomization alters previous sensory impressions randomly such that another sensory impression is created. As discussed earlier, distortions of different kinds are common in dreams (Hobson et al., 2000); randomization also follows Conduit’s (2007) suggestion of randomizing memories. In recombination, the actual sensory inputs change place rather than become distorted. Figure 3 (left) illustrates how this is done. This aspect somewhat resembles dreams’ lack of orientational stability and frequent shifts of attention (Hobson et al., 2000). Secondly, we implemented two different ways of timing the occurrence of robodreams, both following a short (5%) waking period: robodreaming at the beginning of each run, and intermittent robodreaming, cycling between waking and robodreaming. Although the development of the amount of dreaming from infancy to adulthood is known to some extent (Roffwarg et al., 1966), this knowledge does not directly inform the current experiments, except for the general insight that dreams may have a larger impact on simulation-forming mechanisms during infancy, as the InSim hypothesis states. With regard to the simulation hypothesis, the current experiments are limited to the ‘critical assumption of the theory that perceptual simulations can function as perceptual stimuli’ (Hesslow, 2012, p. 73), i.e., that the self-generated sensory activations are sufficiently similar to those caused by the environment.
Thus, unlike earlier experiments (e.g., Svensson et al., 2009b), we do not test the ability of simulated actions to generate the sensory predictions.

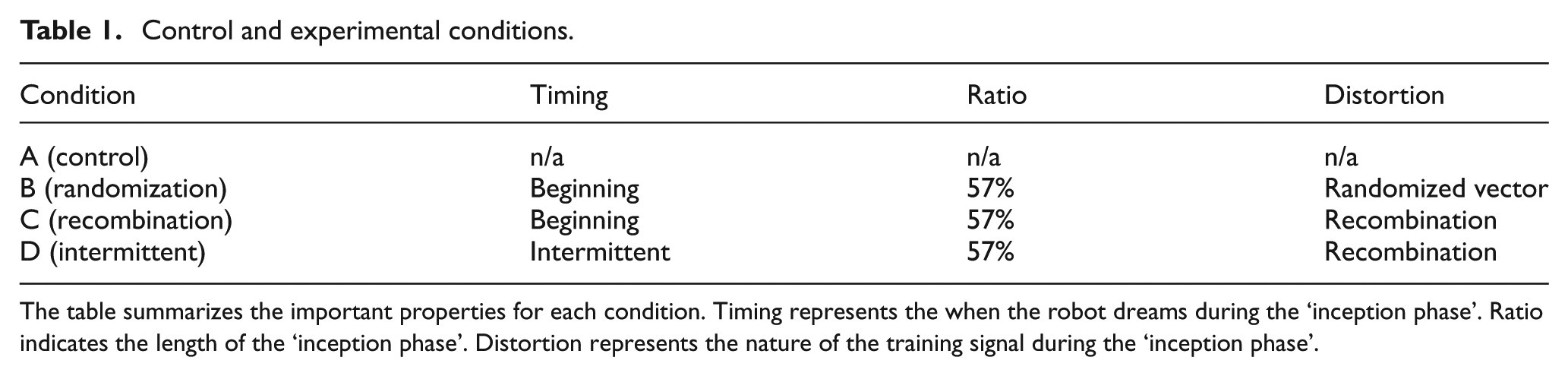

Control and experimental conditions.

The table summarizes the important properties of each condition. Timing represents when the robot dreams during the ‘inception phase’. Ratio indicates the length of the ‘inception phase’. Distortion represents the nature of the training signal during the ‘inception phase’.

In this initial proof-of-concept experiment, we focus on investigating the effects of robodreams on the rate of acquisition of simulations in waking states. According to the InSim hypothesis, waking periods are used during the inception phase to fine-tune the simulations. Thus, after a period of robodreaming, simulations should be acquired faster than through waking experience alone. The details of the experiment are described in the following.

4.3.1 Robot and task

The experiment is based on a simulated E-puck robot (www.e-puck.org) running on a robot simulation platform (Webots 6.4.1). The e-puck has a circular body with a diameter of 75 mm and is equipped with eight infrared proximity sensors around its body, with a range of approximately 6 cm. It has two motors, which independently control the two wheels, one on each side. The task of the robot is to develop simulations that reactivate the sensory experiences that would occur if the robot were to navigate through a square-shaped environment (Figure 3, right), similar to the worlds previously used to investigate the simulation hypothesis (Svensson et al., 2009b). The robot is controlled (during both learning and testing) by a simple behaviour program (cf. Braitenberg, 1986) that moves the robot clockwise along the corridors but never influences the simulations directly.

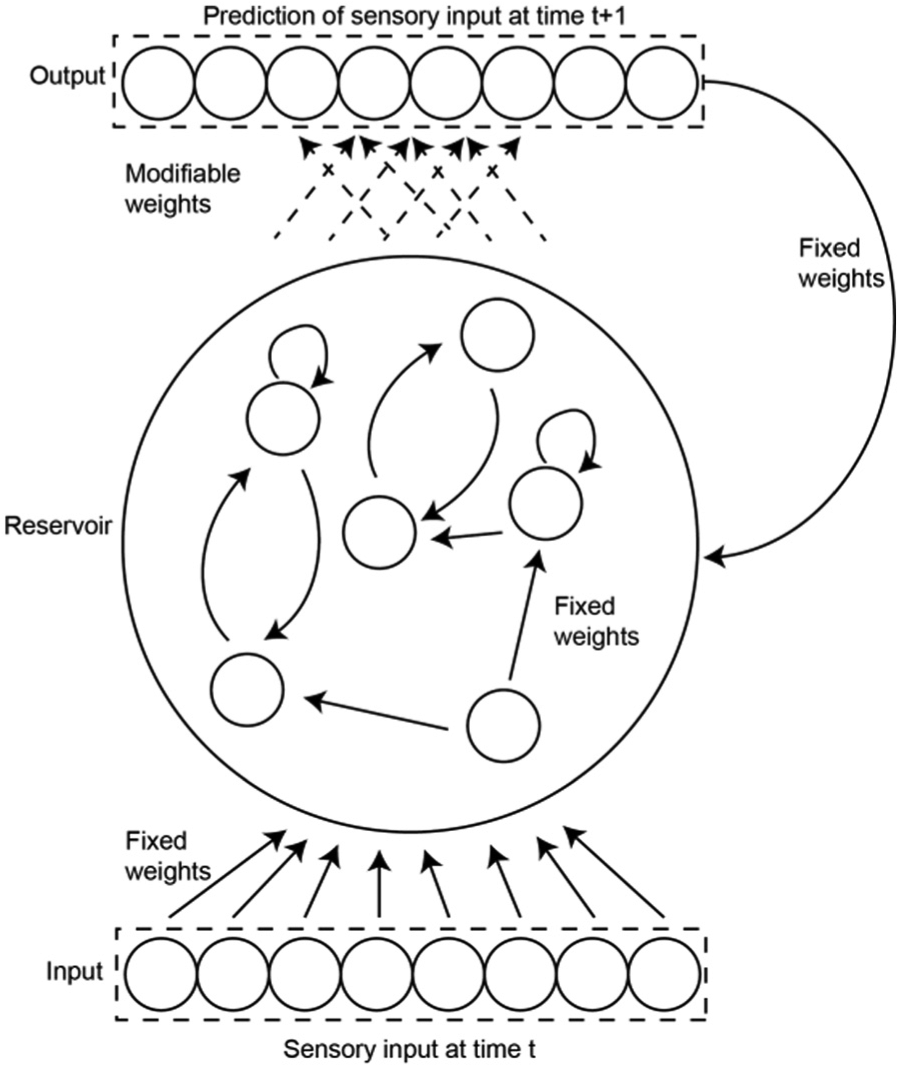

4.3.2 Simulation architecture

The architecture that models the simulation mechanisms is based on a so-called echo state network (ESN). The motivation for using an ESN is twofold: firstly, it provides interesting computational features, such as acting as a fading memory (Jaeger, 2001; Maass, Natschläger, & Markram, 2002); and secondly, albeit at an abstract level, it provides a somewhat biologically plausible model of one way in which the brain implements environmental predictions via cortical columns (Svensson et al., 2009a, 2009b).

The reservoirs of the ESNs are derived from random weight matrices with randomly chosen connectivity, scaled to have a spectral radius below, but close to, 1 (which is sometimes considered a necessary requirement for the network to function properly; Jaeger, 2002). The ESN reservoir is updated according to standard discrete-time recurrent neural network (DTRNN) equations: $a_i = \sum_j y_j w_{ij} + i_i$, where $a_i$ is the activation of node $i$, $y_j$ is the output of node $j$ at the previous time step, $w_{ij}$ is the value of the weight between nodes $j$ and $i$, and $i_i$ is the external input to node $i$. The output is computed by $y_i = \tanh(a_i)$. The information from the eight infrared sensors is propagated to the reservoir via an input layer of eight nodes, which scales the sensory input to values between 0 and 1. The input weight layer is randomly generated, sparsely connected, and fixed (i.e., not trained). The nodes within the reservoir are connected via a modifiable (randomly initialized) layer of weights to a layer of eight output nodes with sigmoid activation functions. There is also a fixed and sparsely connected feedback layer of weights from the output neurons that, at every time step, projects back to the reservoir. Figure 2 illustrates the flow of information in the architecture and the nature of the weight layers. For the experiments, we created 145 ESNs with different reservoirs and input/output weight matrices, and each condition (Table 1) was tested on all 145 ESNs.
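A minimal NumPy sketch of the reservoir described above may help make the update concrete. The reservoir size, connection density, weight ranges, the spectral radius of 0.95 and the raw sensor ceiling `sensor_max` are assumptions chosen for illustration, not the values used in the experiments; the term $i_i$ is taken here to collect both the input-layer and the feedback-layer drive to node $i$.

```python
import numpy as np


def make_esn(n_in=8, n_res=100, n_out=8, density=0.1, spectral_radius=0.95, seed=0):
    """Random ESN weights: sparse reservoir scaled to a spectral radius < 1,
    fixed sparse input and feedback layers, and a trainable readout."""
    rng = np.random.default_rng(seed)

    def sparse(rows, cols):
        # Uniform weights in [-1, 1], keeping roughly `density` of them.
        return rng.uniform(-1.0, 1.0, (rows, cols)) * (rng.random((rows, cols)) < density)

    w_res = sparse(n_res, n_res)
    w_res *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w_res)))
    w_in = sparse(n_res, n_in)     # fixed, never trained
    w_fb = sparse(n_res, n_out)    # fixed output-to-reservoir feedback
    w_out = rng.uniform(-0.1, 0.1, (n_out, n_res))  # modifiable readout
    return w_in, w_res, w_fb, w_out


def esn_step(y_prev, raw_sensors, out_prev, weights, sensor_max):
    """One DTRNN update: a_i = sum_j y_j w_ij + i_i, y_i = tanh(a_i),
    followed by a sigmoid readout of the eight predicted sensor values."""
    w_in, w_res, w_fb, w_out = weights
    # Input layer: scale raw readings (assumed range 0..sensor_max) to [0, 1].
    u = np.clip(np.asarray(raw_sensors, dtype=float) / sensor_max, 0.0, 1.0)
    a = w_res @ y_prev + w_in @ u + w_fb @ out_prev
    y = np.tanh(a)
    out = 1.0 / (1.0 + np.exp(-(w_out @ y)))
    return y, out


weights = make_esn()
y, out = np.zeros(100), np.zeros(8)
rng = np.random.default_rng(1)
for _ in range(10):
    y, out = esn_step(y, rng.uniform(0, 4095, 8), out, weights, sensor_max=4095.0)
print(out.shape)  # (8,)
```

Rescaling by the largest eigenvalue magnitude is one common way to meet the spectral-radius condition; other initialization schemes are possible.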

Schematic illustration of the basic ESN structure used to implement simulations. The architecture consists of four layers of weights represented by arrows in the figure (input, output, feedback and reservoir). Continuous arrows represent fixed weights, while dashed arrows represent modifiable weights.

The output layer is trained online with a simple supervised learning scheme using a perceptron learning rule: $\Delta w_i = \alpha (t_p - a_p)\, x_i^p$, where $\Delta w_i$ is the weight change, $\alpha$ is the learning rate, $t_p$ is the target (or training) signal, $a_p$ is the activity of output node $p$, and $x_i^p$ is the activation of input node $i$ (the reservoir node feeding output node $p$). After the weight matrices have been initialized, the robot always navigates the environment for 200 time steps without any learning, to eliminate unwanted effects of the random initialization of the ESN (Jaeger, 2002).
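The readout rule can be sketched in NumPy as follows. Learning is applied to every output node at once with an outer product, and the control-condition pairing (output at time t trained towards the sensors at time t+1, after the 200-step washout) is included for completeness. The function names, the learning rate and the use of pre-recorded reservoir states (which sidesteps the output feedback loop) are simplifying assumptions.

```python
import numpy as np

WASHOUT_STEPS = 200  # initial steps without learning, as in the text


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def readout_update(w_out, x, a, t, alpha=0.01):
    """Delta w_ip = alpha * (t_p - a_p) * x_i for all outputs p and inputs i.

    w_out: (n_out, n_res) readout weights; x: (n_res,) reservoir activations;
    a: (n_out,) current outputs; t: (n_out,) target (training) signal.
    """
    return w_out + alpha * np.outer(np.asarray(t) - np.asarray(a), x)


def train_online(states, sensors, w_out, alpha=0.01, washout=WASHOUT_STEPS):
    """Train the output at time t to predict the scaled sensors at t + 1."""
    for step in range(washout, len(states) - 1):
        a = sigmoid(w_out @ states[step])
        w_out = readout_update(w_out, states[step], a, sensors[step + 1], alpha)
    return w_out


# Toy check: a fixed input pattern and target converge under repeated updates.
w = np.zeros((1, 2))
x = np.array([1.0, 0.5])
for _ in range(2000):
    w = readout_update(w, x, sigmoid(w @ x), np.array([0.8]), alpha=0.1)
print(round(float(sigmoid(w @ x)[0]), 3))  # 0.8
```

Note that, as in the text, the update uses the raw error without the sigmoid derivative; the sign of the error still drives the output towards the target.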

4.3.3 Conditions

We have investigated four conditions as described in the section ‘Proof-of-concept experimental set-up’ (Table 1). In the control condition, the predictions at time t−1 are trained with the sensory input at time t using online learning, without any form of robodreaming. In the experimental conditions (see B–D in Table 1), the same learning method is used but the content of the training signal (the nature of the robodreams) and timing (when robodreams occur) are altered as follows. In condition B, a random string is generated at each time step and subtracted from the actual sensory input. In condition C, the training signal is altered by shifting the sensory inputs as described in Figure 3. In condition D, the training signal switches between robodream input and waking input every 150th time step.
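The three manipulations of the training signal can be sketched in plain Python. The range of the random values in condition B, the sensor permutation in condition C (the actual mapping is given in Figure 3 and is not reproduced here) and the choice to start condition D in a dream phase are hypothetical placeholders.

```python
import random
from typing import Callable, List, Sequence

# HYPOTHETICAL recombination: swap neighbouring sensors. The mapping actually
# used in condition C is the one shown in Figure 3 (left).
HYPOTHETICAL_PERMUTATION = [1, 0, 3, 2, 5, 4, 7, 6]


def randomized_dream(sensors: Sequence[float], rng: random.Random,
                     max_noise: float = 0.5) -> List[float]:
    """Condition B: subtract a freshly drawn random vector from the input."""
    return [s - rng.uniform(0.0, max_noise) for s in sensors]


def recombined_dream(sensors: Sequence[float],
                     permutation: Sequence[int] = HYPOTHETICAL_PERMUTATION) -> List[float]:
    """Condition C: sensor values change place instead of being distorted."""
    return [sensors[j] for j in permutation]


def intermittent_signal(t: int, sensors: Sequence[float],
                        dream: Callable[[Sequence[float]], List[float]],
                        period: int = 150) -> List[float]:
    """Condition D: switch between dream and waking input every `period` steps
    (assumed here to start with a dream phase)."""
    dreaming = (t // period) % 2 == 0
    return dream(sensors) if dreaming else list(sensors)


rng = random.Random(0)
reading = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7]
noisy = randomized_dream(reading, rng)
print(recombined_dream(reading))  # [0.1, 0.0, 0.3, 0.2, 0.5, 0.4, 0.7, 0.6]
print(intermittent_signal(160, reading, recombined_dream) == reading)  # True
```

In all three cases the learning rule itself is unchanged; only the target fed to the readout differs.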

Schematic representation of the e-puck robot and the environment. (Left) The hexagons represent the placement of the infrared proximity sensors. The sensors are numbered from 1–8 and the numbers in parentheses represent how the sensor inputs were recombined in condition C. (Right) A somewhat simplified schematic depiction of the world used in the current set-up. The arrow represents the robot’s direction and the dashed arrows represent the infrared sensors.

4.3.4 Evaluating simulations

The robot’s ability to simulate is evaluated every time the root mean squared error (rmse) of its sensory predictions for the next time step drops below a threshold (which decreases incrementally). This testing phase corresponds to ‘blindfolding’ the robot (i.e., preventing it from receiving sensory input) and letting it predict what its next sensory impressions would have been had it continued to receive sensory input from the environment. This is implemented by replacing the input from the robot’s infrared sensors with the activation values of the eight output nodes, which are copied directly to the input layer (not represented in Figure 2).
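The blindfolded test can be sketched in plain Python. `step` is a hypothetical wrapper around one network update that returns the eight predicted sensor values, and rmseTotal is interpreted here as the per-step rmse across the eight sensors summed over the evaluation window (100 steps in the experiments); the exact aggregation is an assumption.

```python
import math
from typing import Callable, List, Sequence


def rmse(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Root mean squared error across sensors at one time step."""
    return math.sqrt(sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual))


def blindfolded_rmse_total(
    step: Callable[[List[float]], List[float]],
    first_input: Sequence[float],
    actual_sensors: Sequence[Sequence[float]],
) -> float:
    """Run the network closed-loop and sum the per-step rmse.

    actual_sensors[k] is what the real sensors would have read at step k + 1.
    After the first step the real sensors are ignored: the outputs are
    copied back to the input layer ('blindfolding').
    """
    inputs = list(first_input)
    total = 0.0
    for actual in actual_sensors:
        outputs = step(inputs)
        total += rmse(outputs, actual)
        inputs = list(outputs)  # feed predictions back instead of real input
    return total


# Hypothetical network that halves every input value.
def halve(inputs: List[float]) -> List[float]:
    return [0.5 * v for v in inputs]


print(blindfolded_rmse_total(halve, [1.0] * 8, [[0.5] * 8, [0.25] * 8]))  # 0.0
print(blindfolded_rmse_total(halve, [1.0] * 8, [[1.0] * 8]))              # 0.5
```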

5 Results

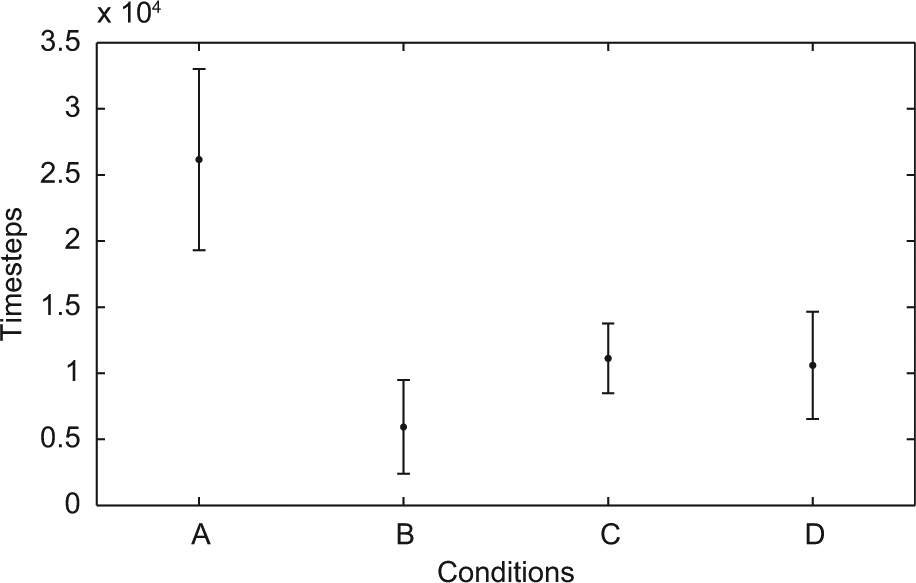

We first compared the results of all 145 ESNs across the different conditions (cf. Table 1). In particular, in the testing phase we measured the rmse of all sensors summed over a period of 100 time steps of simulation performance (termed rmseTotal), and we also recorded the number of time steps of waking behaviour, i.e., time steps without robodreaming (termed Wakestep), needed to reach a good simulation according to the method described in the previous subsection. A Jarque–Bera test showed that the samples were not normally distributed (p<.05). A Kruskal–Wallis test (using Matlab’s function for the test) showed that the data contained significant differences (df=3, χ²=147.9571, p<.01). Matlab’s multiple comparison procedure was then used to analyze the differences between conditions.
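For readers unfamiliar with the test, the Kruskal–Wallis H statistic can be computed from pooled ranks as in the plain-Python sketch below (with the usual correction for ties); H is compared with a χ² distribution with k − 1 degrees of freedom, hence df=3 for four conditions. This is a generic illustration, not the Matlab routine used in the study; SciPy users can obtain the same statistic and a p-value from `scipy.stats.kruskal`.

```python
from collections import Counter
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """Ranks 1..N, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        i = j + 1
    return ranks


def kruskal_wallis_h(*groups: Sequence[float]) -> Tuple[float, int]:
    """Return (H, degrees of freedom) for two or more independent samples."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted = 0.0
    start = 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        weighted += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * weighted - 3.0 * (n + 1)
    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n).
    ties = sum(c ** 3 - c for c in Counter(pooled).values())
    if ties == n ** 3 - n:
        raise ValueError("all values are identical")
    return h / (1.0 - ties / (n ** 3 - n)), len(groups) - 1


h, df = kruskal_wallis_h([1, 2, 3], [4, 5, 6], [7, 8, 9])
print(round(h, 6), df)  # 7.2 2
```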

The main finding was that waking learning preceded by phases of robodreaming led to faster acquisition of good simulations (cf. Figure 4). The means of all experimental conditions were significantly lower than that of the control condition. Furthermore, condition B was significantly faster than conditions C and D, which did not differ from each other.

Timesteps until simulation threshold (145 ESNs). The x-axis represents the different conditions and the y-axis the number of time steps of waking behaviour (Wakestep). The mean (dot) and standard deviation (error bars) are plotted for each condition.

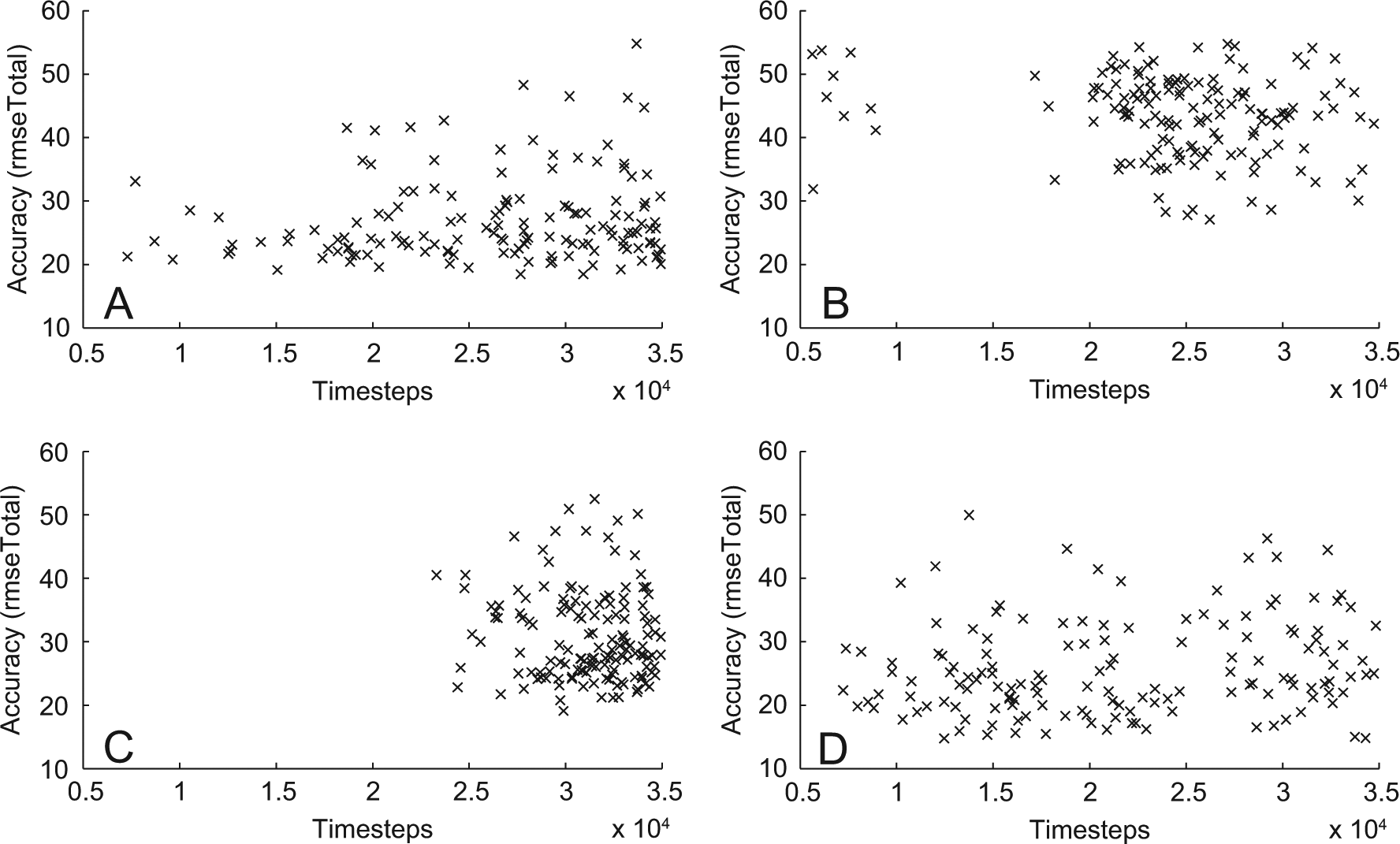

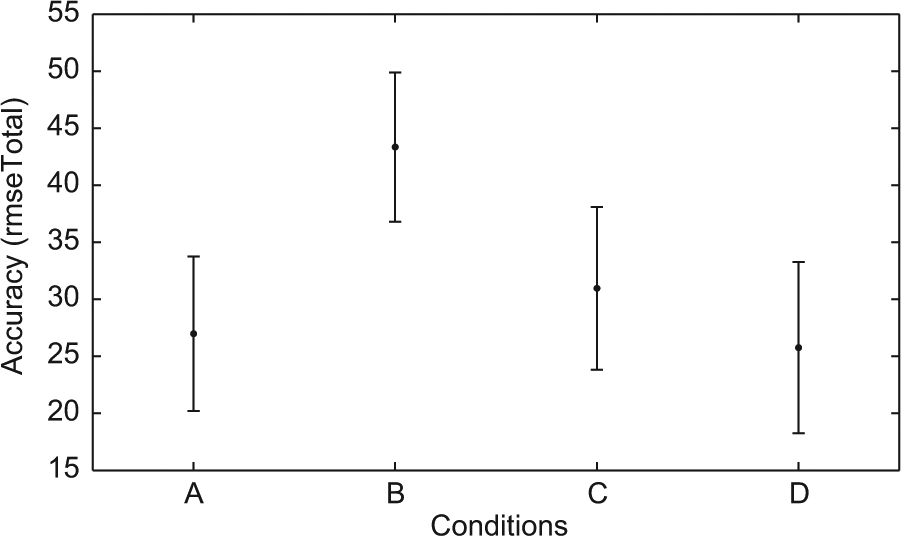

One might expect that more training would yield better results, i.e., that the speed at which simulations emerge is related to their accuracy. However, as the scatter plots in Figure 5 show, there is no clear correlation between the number of time steps and accuracy (we found only a small but significant negative correlation of −0.25, p<.05, in condition C and a small positive correlation in condition D). We therefore need to take this into consideration when evaluating the speed of acquiring simulations (Kruskal–Wallis: df=3, χ²=269.42, p<.01). In terms of overall accuracy (rmseTotal), the control condition and condition D were significantly better than conditions B and C.
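The text does not state which correlation coefficient was used for the scatter plots; given the non-normal data, a rank correlation such as Spearman’s ρ is one plausible choice. A plain-Python sketch, assuming the Wakestep and rmseTotal values of one condition are given as two equal-length lists (the values below are hypothetical):

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Ranks 1..N, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rank correlation: Pearson's r computed on the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


wakesteps = [120, 340, 560, 800]        # hypothetical values
rmse_totals = [30.0, 28.5, 33.0, 27.0]  # hypothetical values
print(round(spearman_rho(wakesteps, rmse_totals), 3))  # -0.4
```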

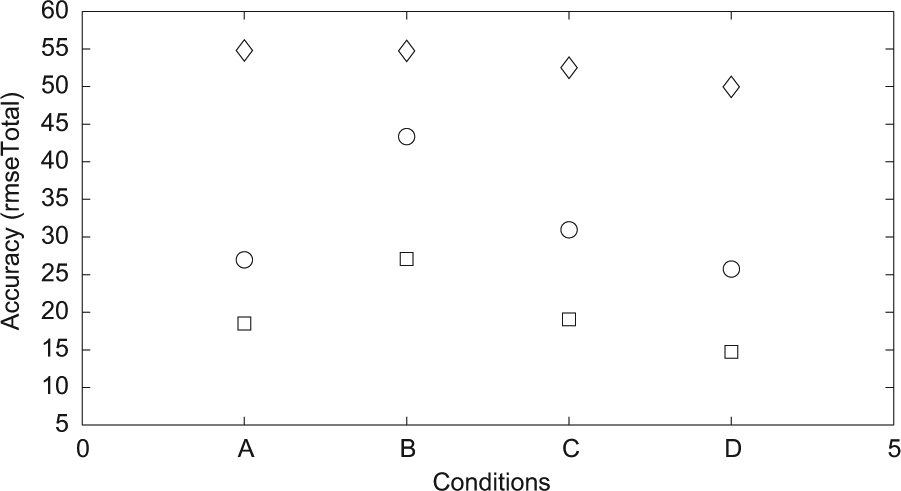

Accuracy versus timesteps. The scatterplot shows the total number of timesteps of each ESN plotted against its accuracy for all different conditions (A-D).

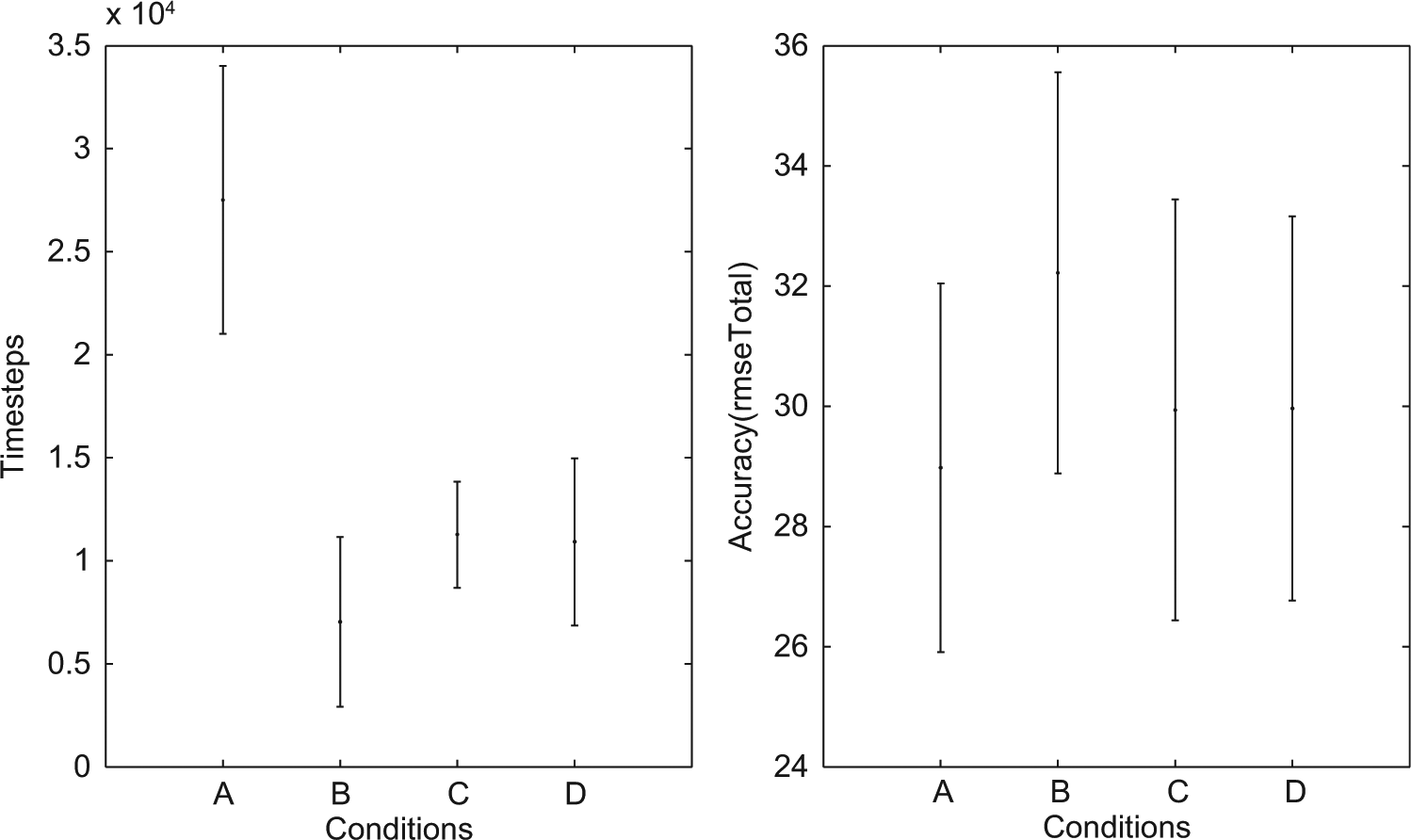

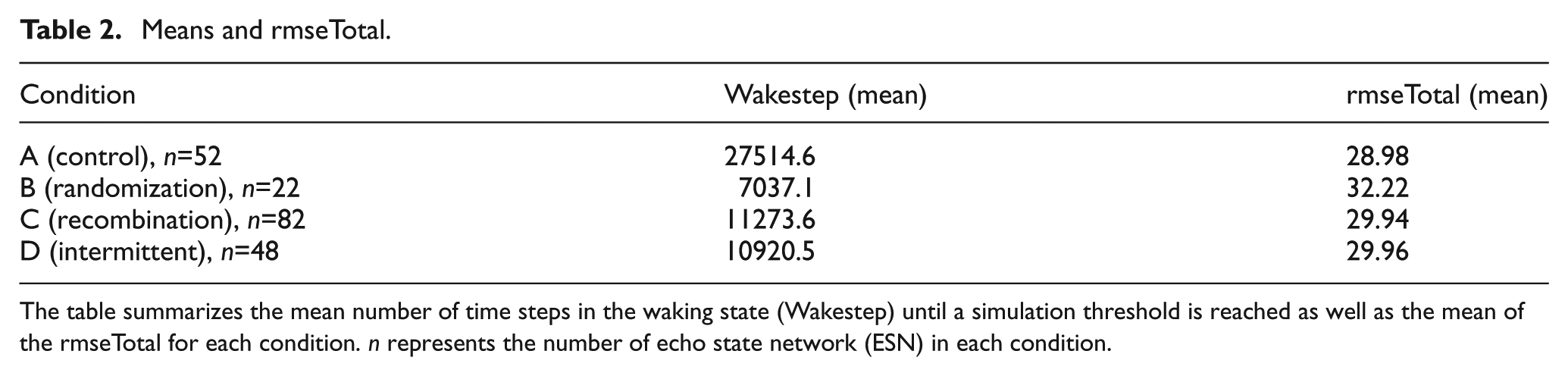

However, the comparison of all 145 ESNs is somewhat inconclusive as far as the quality of the simulations is concerned, since it includes ESNs with a relatively high rmseTotal (cf. Figures 6 and 8). Such networks do not simulate at all, or only for a few time steps. Instead, by qualitative inspection of the sensor and simulated sensor values, we determined that an rmseTotal of 36 forms a threshold below which good simulations, i.e., simulations in which the simulated input follows the fluctuations in actual sensor input from the environment during the entire evaluation phase, can reliably be found. We therefore compare networks with an rmseTotal between 25 and 36, summarized in Table 2. Since the sample sizes of the different conditions differ, we used the Wilcoxon rank sum test (in Matlab) to analyze the differences between conditions. In terms of waking time steps until a simulation was formed, this subset of ESNs produced results (summarized in Figure 7) comparable with those of all 145 ESNs. Since we chose networks based on their rmseTotal score, all conditions now have more similar means with regard to accuracy (rmseTotal). However, condition B was found to differ from the other conditions (p<.01).
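The subset selection and the rank sum comparison can be sketched in plain Python. Inclusive bounds of 25 and 36 are assumed, and the rank sum W is converted to a z-score with the tie-corrected normal approximation but without a continuity correction; Matlab’s `ranksum` may differ in these details, so this is an illustration rather than a reproduction of the analysis.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple


def select_subset(wakesteps: Sequence[float], rmse_totals: Sequence[float],
                  low: float = 25.0, high: float = 36.0) -> List[float]:
    """Wakestep values of the ESNs whose rmseTotal lies within [low, high]."""
    return [w for w, r in zip(wakesteps, rmse_totals) if low <= r <= high]


def average_ranks(values: Sequence[float]) -> List[float]:
    """Ranks 1..N, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def rank_sum_z(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Wilcoxon rank sum W of sample `a` and its normal-approximation z-score."""
    pooled = list(a) + list(b)
    ranks = average_ranks(pooled)
    n1, n2 = len(a), len(b)
    n = n1 + n2
    w = sum(ranks[:n1])
    mean_w = n1 * (n + 1) / 2
    ties = sum(c ** 3 - c for c in Counter(pooled).values())
    var_w = n1 * n2 / 12 * ((n + 1) - ties / (n * (n - 1)))
    return w, (w - mean_w) / math.sqrt(var_w)


print(select_subset([100, 200, 300], [24.0, 30.0, 36.0]))  # [200, 300]
w, z = rank_sum_z([1, 2, 3], [4, 5, 6])
print(w, round(z, 3))  # 6.0 -1.964
```

Unlike the Kruskal–Wallis test, the rank sum test compares two conditions at a time, which suits pairwise comparisons of subsets of unequal size.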

Accuracy of simulations for all 145 ESNs. The x-axis represents the different conditions and the y-axis the rmseTotal. The mean (dot) and standard deviation (error bars) are plotted for each condition.

Timesteps and accuracy for a subset of ESNs. The mean (dot) and standard deviation (error bars) are plotted for each condition. (Left) The diagram represents the number of waking timesteps (Wakestep) until a simulation threshold was reached for the ESNs with an rmseTotal between 25 and 36. (Right) The diagram represents the accuracy of simulations for the ESNs with an rmseTotal between 25 and 36.

Max and min values. Diamonds represent max values, circles means and squares min values for each condition.

Means and rmseTotal.

The table summarizes the mean number of time steps in the waking state (Wakestep) until a simulation threshold is reached, as well as the mean rmseTotal for each condition. n represents the number of echo state networks (ESNs) in each condition.

Thus, condition B is faster but less accurate, while conditions C and D produce similar results both in terms of accuracy and rate of acquisition. It is, however, questionable whether condition B is faster in an absolute sense. Analysis of the simulations seen in condition B (with rmseTotals ranging between 25 and 36) showed that the ESNs only produced simulations of five or six of the eight sensors. Thus, it is likely that this was the cause of the faster development of simulations with higher rmseTotal scores.

Furthermore, we performed an additional trial with robodreams consisting of random values unrelated to previous sensor input, but this did not produce any simulations, indicating that the effects are not due to introducing noise or randomness per se. Also, as seen in Figure 8, which shows the maximum and minimum rmseTotal scores for the different conditions, condition D produced the best simulations. Thus, intermittent robodreaming might have a further advantage in terms of generating good simulations.

6 Discussion

Although this is only a first proof-of-concept study, we have shown that dream-like mechanisms do have an effect on the robot’s ability to simulate. The results suggest that periods of dream-like sensations make learning based on real sensory input more efficient. Although dreams may be seen as a somewhat random replay of previous interactions, the results suggest that the way in which sensory impressions are replayed is important and affects the performance of waking learning, which indirectly supports dream theories that attribute roles to phenomenological states, such as Revonsuo’s (2000) threat simulation theory. However, future work should model different aspects of the phenomenological content of dreams more closely. For example, the recombination condition could be extended to model more closely the different types of attentional shifts and orientational instabilities observed in dream reports.

The InSim hypothesis is developmental in the sense that the function of the model evolves over time, shifting the focus from one phase to the other: from using dreams primarily as a way of refining simulations to using dreams as a way to generate simulations that can be used in other aspects of cognition. The focus here was on the inception phase, but a further question, not addressed in the current set-up, is the interdependence of the two phases, as they are likely to overlap and, to some extent, coexist. Another aspect of developing mental imagery in robots is switching between simulated and experienced states during online interaction, which would allow the robot to go beyond offline ‘day dreaming’ and use mental imagery in real-time problem solving. As mentioned earlier, the current set-up focused on one part of the simulation hypothesis, i.e., that self-generated sensory activations are sufficiently similar to those caused by the environment, but further experiments could also explicitly test how robodreams affect the ability of self-generated simulated actions to generate sensory predictions. This may also allow further tests of how robodreams can contribute to adaptive behaviour in, for example, novel situations.

Our results further show that reservoir systems can be used to model simulations and robodreams. However, it should be noted that a fraction of the ESNs used produced no simulations or only very poor ones. This could have been caused by the memory capacity or dynamics of the ESNs, but further analysis is needed of whether any properties of the ESNs systematically contribute to the development of simulations. Such analysis, together with further optimization of the training procedure, could enable robodreams in more complex environments and tasks.

In sum, we have shown how insights from the simulation hypothesis and dream research can be beneficial in the context of mental imagery in robots. Whether or not our robots dreamt of electric sheep, we couldn’t say.

Acknowledgements

We thank the anonymous reviewers for their helpful comments.

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

About the Authors