The Rise of Generative Artificial Intelligence

Unless you have been deliberately living under a rock, I assume that most of you are familiar with ChatGPT: the artificial intelligence chatbot developed by OpenAI (https://openai.com/chatgpt). ChatGPT has become the poster child of generative artificial intelligence (AI), a category of AI systems designed to generate new output based on data the systems are trained on (Gozalo-Brizuela & Garrido-Merchan, 2023). Since the release of its research preview in late 2022, ChatGPT has captivated the attention of many (who have not been deliberately living under a rock) with its ability to write up information about pretty much anything, from baking cakes to burying bodies. According to OpenAI, around 80% of the U.S. workforce could have at least 10% of their work tasks affected by the introduction of generative AI systems such as ChatGPT. More importantly, with the implementation of generative AI, about 15% of all work tasks in the U.S. can be completed significantly faster at the same level of quality (Eloundou et al., 2023). As research conducted in the U.S. is readily generalizable to the rest of the world (yes, this is sarcasm), the rise of generative AI thus presents a compelling challenge for global work practices and policies (Dwivedi et al., 2023; Hacker et al., 2023). While studies have begun to uncover the beneficial impact of generative AI systems on productivity (Brynjolfsson et al., 2023; Noy & Zhang, 2023), the impact of this change on employability remains to be seen. Thus, as one of the individuals whose future employability will likely be influenced by the implementation of ChatGPT and other generative AI systems, I felt this was as good a time as any to briefly reflect on the implications of generative AI for sustainable employability.

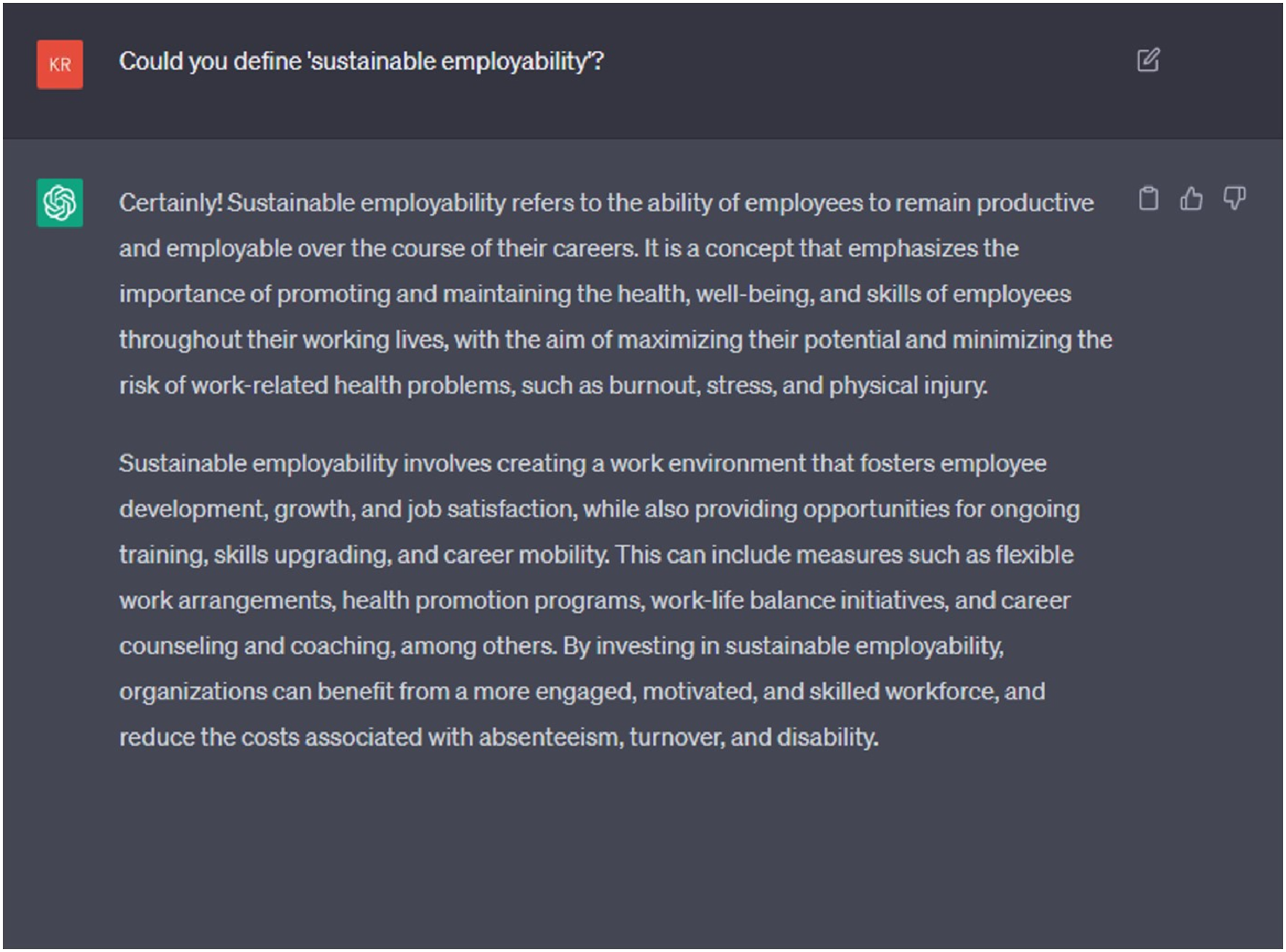

Before I begin, I should mention that I am by no means an expert in sustainable employability. Thus, to make sure we are all on the same page, I present the following ChatGPT-generated definition of sustainable employability (Figure 1), which is “the ability of employees to remain productive and employable over the course of their careers.” I understand that this definition may not be the most comprehensive (though, supposedly, none of the existing definitions are), but for the purpose of this GOMusing, it will do.

Figure 1. Sustainable employability as defined by ChatGPT. The above definition seems to have been synthesized from several existing conceptualizations, including the capability approach (van der Klink et al., 2016) and the vitality scan approach (Brouwers et al., 2015).

Developing Digital Literacy

If access to generative AI can help employees complete their tasks faster without compromising quality, I imagine that most employers will want to capitalize on the productivity gains offered by generative AI. As such, for employees looking to stay sustainably employed in such a landscape, it will be beneficial to develop, first and foremost, skills that enable effective interaction with generative AI. I have decided to tentatively lump these skills together under the term ‘digital literacy’, and as much as I would have liked to simply define the term as ‘experience with ChatGPT’ (which has already popped up as a requirement in several job advertisements online), I believe that good digital literacy in the age of generative AI means developing the following three skills, at the very least:

Prompt Engineering (i.e., the Evolution of Googling)

Unlike ‘conventional’ AI systems that are meant to recognize patterns and make predictions (e.g., your email spam filter, your personalized Netflix recommendations, etc.), generative AI systems are meant to output new content in whatever format, be it text, audio, or images. However, much like with these conventional AI systems, the quality of a generative AI’s output heavily depends on the quality of its input (i.e., prompts). This concept is more colloquially known as ‘garbage in, garbage out.’ In other words, the quality of a prompt will determine the accuracy, reliability, and usefulness of an AI system’s generated output (Figure 2).

Figure 2. One of the images generated by DALL-E, an AI system designed to create realistic images and art from text-based descriptions (https://openai.com/product/dall-e-2). The prompt I used to get this image was “A person typing in a manuscript on a small laptop, in the style of van Gogh”. Imagine what you could do with a prompt that is less garbage than mine!

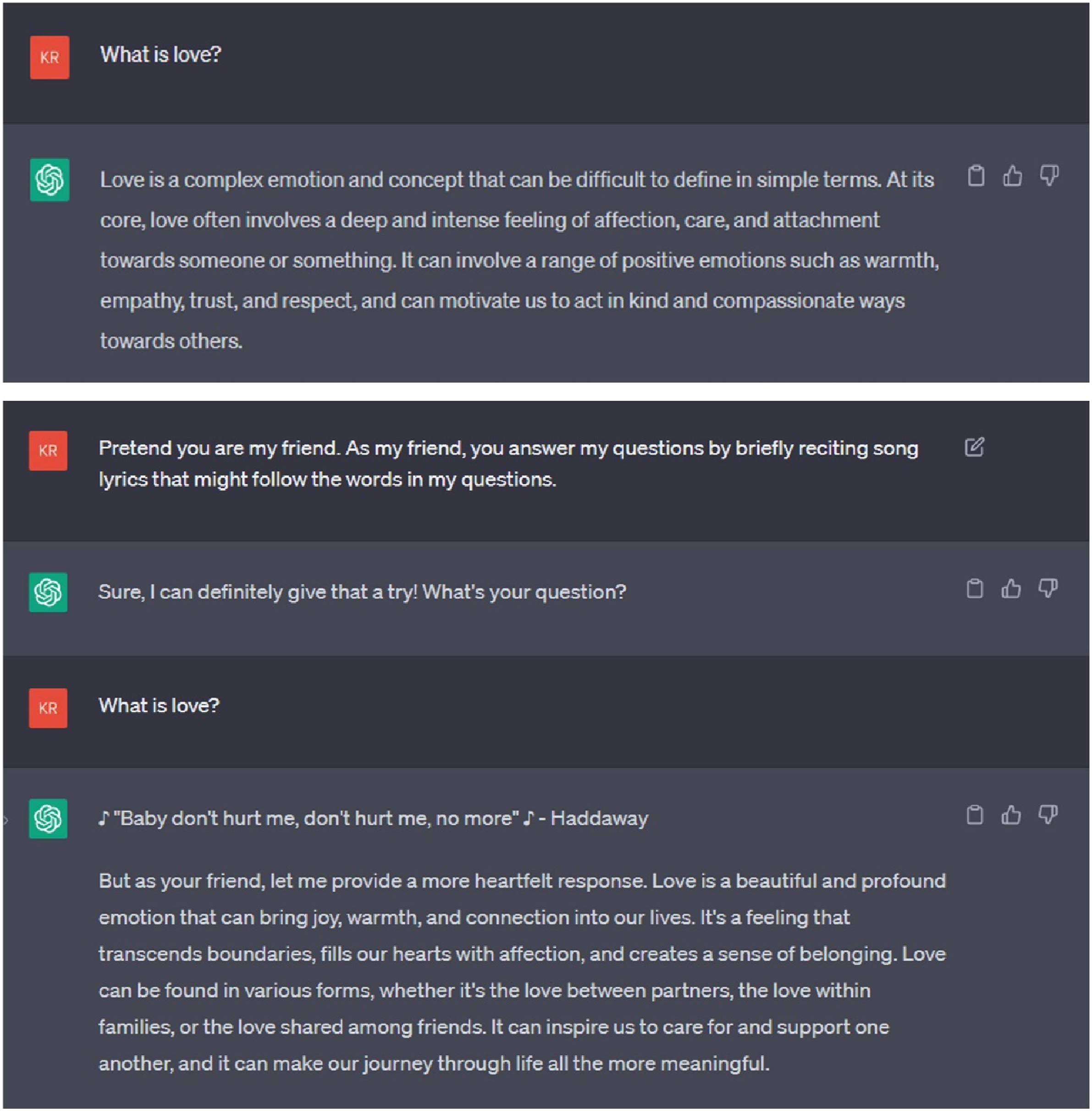

This highlights the importance of ‘prompt engineering’ – a process of designing and refining prompts to get generative AI systems to output what you want them to output (Figure 3). Prompt engineering is a bit like woodworking in that it requires (a) a clear idea of what the end product should look like, (b) a clear overview of the materials and components required (i.e., the data or information an AI system needs to generate the end product), and (c) the correct steps to transform your slab of lumber into an avant-garde pallet crate (i.e., the sequence of prompts that guide the AI system’s interaction with the given components). More importantly, exactly like woodworking, prompt engineering is a skill best learned through trial and plenty of error.

Figure 3. A basic example of prompt engineering. (top) ChatGPT’s initial response to “What is love?” was comprehensive, but not exactly what I was looking for. (bottom) After some arguably wonky prompt engineering, I was able to get ChatGPT to provide a more suitable response to my “What is love?” question (and become friends in the process).
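For readers who prefer code to carpentry, the three ingredients of the woodworking analogy can be sketched in a few lines of Python. This is only a minimal illustration under my own assumptions, not a prescribed method: the names `PromptSpec` and `build_prompt` are hypothetical, invented for this example, and the snippet merely assembles prompt text without calling ChatGPT or any other AI system.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the three ingredients of a prompt."""
    goal: str                                          # (a) what the end product should look like
    context: list[str] = field(default_factory=list)   # (b) materials the AI system needs
    steps: list[str] = field(default_factory=list)     # (c) ordered instructions to follow


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a plain-text prompt from a PromptSpec (illustrative only)."""
    lines = [f"Goal: {spec.goal}"]
    if spec.context:
        lines.append("Context:")
        lines.extend(f"- {item}" for item in spec.context)
    if spec.steps:
        lines.append("Steps:")
        lines.extend(f"{i}. {step}" for i, step in enumerate(spec.steps, start=1))
    return "\n".join(lines)


if __name__ == "__main__":
    # A bare question versus a refined prompt with context and steps.
    naive = PromptSpec(goal="What is love?")
    refined = PromptSpec(
        goal="Explain what love is in two sentences",
        context=["The reader is a psychology student"],
        steps=["Give a definition", "Add one everyday example"],
    )
    print(build_prompt(naive))
    print()
    print(build_prompt(refined))
```

Refining a prompt then amounts to editing the goal, context, or steps and trying again, which is the trial-and-error loop described above.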

Resourcefulness

While tasks such as creative writing, financial analysis, survey research, data analysis, web design, and mathematics may be made more efficient with the implementation of AI systems (Eloundou et al., 2023; Frey & Osborne, 2017), other use cases that are not immediately obvious may exist. For instance, ChatGPT’s ability to generate code snippets may be used as a stepping stone to the completion of much larger and more complex projects, such as programming research surveys, the deployment of e-commerce websites, or the development of different functionalities within a smartphone app. To remain sustainably employed in the age of generative AI, it is thus important for employees to cultivate resourcefulness – the ability to find creative and effective ways to approach work-related challenges, even when resources are limited and situations are less than ideal. Think of resourceful employees as those with a ‘generative AI radar’ (a ‘gAI-dar’, if you will), which allows them to more easily (a) recognize opportunities that can benefit from the implementation of generative AI systems, and (b) notice occasions in which use of generative AI systems may be less appropriate. Cultivating gAI-dars is a bit like learning to play the guitar in that it takes practice and time with the instrument: just because you know how to play ‘Wonderwall’ does not mean you have to play ‘Wonderwall’ – there is a place and time for it. Likewise, it will take practice and time for employees to more fluently recognize when, and when not, to use generative AI. For instance, imagine an Instagram-based promotional campaign where all content is automatically created by ChatGPT and DALL-E. Would that work?

Non-debilitating Perfectionism (a.k.a. Attention to Detail + Critical Thinking)

Coming back to the concept of ‘garbage in, garbage out’, it is also important for employees to be able to recognize when an input or output is actually garbage. Although generative AI systems can produce an almost limitless amount of text, images, or audio, consider – when is it all up to par? An important limitation of generative AI systems is the data these systems are trained on (and this is something that responsible AI companies will point out to users). For instance, if you have ever tried translating something using an AI system, the more grammatically savvy among you may have noticed that translation results can sometimes be a bit sketchy. The results may not always follow the most appropriate grammar, and they may also assign the wrong meaning to certain words in specific contexts. This is because the AI system attempting the translation was likely given limited amounts of appropriate translation data for that language, and it was thus not yet able to learn how to ‘correctly’ translate things as, say, a bilingual speaker would. This is where non-debilitating perfectionism, rooted in an employee’s topic literacy and specialized knowledge, comes in. Non-debilitating perfectionism is a skill that involves being critical, thorough, and accurate in work-related tasks, paying close attention to details or nuances that others with less specialized knowledge may overlook. Through critical thinking, employees with good, non-debilitating perfectionism will be able to recognize whether AI-generated output is truly at the same level of quality as human-generated output. In the absence of AI systems that identify whether something is generated by AI (potentially culminating in the hilarious future where AI systems are constantly trying to one-up each other), employees with non-debilitating perfectionism may also be among the first to instinctively suspect that something was generated by AI. Admittedly, mileage may vary: like other scientists, I doubt I would immediately recognize whether or not something was written by ChatGPT (Else, 2023).

The Value of Human Contribution

In a way, developing digital literacy underscores the value of human contributions in the implementation of generative AI. To effectively implement prompt engineering and non-debilitating perfectionism, employees may benefit from specialized knowledge that allows them to (a) clearly outline how a particular task should be completed with the assistance of AI, (b) determine the correct prompts to complete said task, and (c) determine whether the result of the task is of sufficient quality. For instance, anyone can ask ChatGPT to analyze data. However, experienced data analysts can more readily suggest which type of analysis to conduct (I hope), walk ChatGPT through the correct analytical steps (by asking the right questions and providing the most efficient prompts), identify potential mistakes in the generated code, and effectively follow up on the output produced by ChatGPT. Studies also suggest that human contributions in tasks that involve creativity, ethical decision-making, interpersonal skills, and empathy may be more resistant to substitution by AI (Agrawal et al., 2019; Choudhury et al., 2020; Yilmaz et al., 2023). As such, while the nature of certain work-related tasks may change due to the implementation of generative AI, there is merit in recognizing the importance of human contributions across different tasks and sectors.

The Time for Diplomacy is Nigh

For employers looking to capitalize on the benefits of generative AI, maintaining sustainable employability will involve a reasonable amount of effort. Generative AI is akin to a double-edged sword in that its biggest promise is also its biggest challenge: if an increasing proportion of worker tasks can be completed faster and at the same level of quality as without generative AI (Brynjolfsson et al., 2023; Eloundou et al., 2023), what then? Suppose that an employer has decided to invest in training their employees’ digital literacy. How will the resulting increase in productivity influence employees’ work, health, and well-being? Will employees be given more tasks to complete in the same amount of time, or will employees be given more free time instead? In a similar vein, if employer profit increases due to higher productivity, will employees be compensated with higher wages, or will employees be allowed to work multiple full-time jobs (Mollman, 2023)?

The implementation of generative AI also presents unique challenges across different fields. In academia, researchers are debating, among other topics, which tasks should be outsourced to generative AI, which skills remain essential to researchers, and which steps in an AI-assisted research process should be verified by humans (Cacciamani et al., 2023; Van Dis et al., 2023), particularly because several scientific papers have already been published listing ChatGPT as co-author (Stokel-Walker, 2023). In tech, given that generative AI systems can dramatically assist with the completion of difficult programming tasks, discussions have focused on the potential job displacements that may follow the implementation of generative AI (Tabrizi & Pahlavan, 2023). In education, various institutions have adopted the use of generative AI systems, but must balance the merits of teaching the more traditional competencies that education is meant to provide (memorizing, cramming, etc.) with those of more contemporary skills that may give students’ future employability a leg up in a constantly changing landscape of work (Bowles & Kruger, 2023; Cardon et al., 2023).

There is still little empirical evidence for how generative AI will influence our usual organizational psychology constructs, such as job satisfaction, work-life balance, and, well, sustainable employability. I am sure you can agree that it is still difficult to come up with recommendations that make sense. However, despite the different challenges unique to each field, I imagine that fostering sustainable employability in the age of generative AI will require a collaborative effort between industry and academia. As stakeholders in industry continue to integrate generative AI systems into their workflow, they offer a valuable starting point from which researchers in academia may begin investigating which skills are most relevant for employees to thrive amidst the implementation and continuous development of generative AI (including, but not limited to, digital literacy). In turn, researchers can offer insight into the different organizational and psychological consequences of generative AI systems, along with whether and how effective interaction with generative AI can be taught. As evidence accumulates for the beneficial impact of generative AI at work (Brynjolfsson et al., 2023; Noy & Zhang, 2023), it becomes important for employers to ensure equal access to these systems for all employees. On the other hand, should detrimental effects exist, collaborative policy-making efforts between industry and academia could be initiated to ensure that employees remain healthy and productive amid the rise of generative AI. In short, the discussion about how generative AI will impact the workplace must be made more prominent if both employees and employers wish to maintain sustainable employability.

It is important to note that governmental stakeholders have begun to recognize the potential impact of generative AI on society, advancing legislation that may ultimately influence how organizations implement generative AI. For example, the European Union has agreed to an AI Act which establishes different obligations for both providers and users of AI. Depending on the level of risk that an AI system may pose to society, organizations may be required to register their AI systems in an EU database, employ measures which prevent the generation of illegal content, and publish summaries of copyrighted training data, while users may be required to disclose whether content was generated using AI (EU AI Act, 2023).

An Open Invitation

We are now at a proverbial fork in the road for the role of generative AI at work. We can assume that generative AI systems are only relevant due to ‘hype’, and that their relevance will eventually die down (cryptocurrencies, anyone?). After all, it is supposedly costing OpenAI an extraordinary amount of capital just to run ChatGPT’s research preview (Mok, 2023). Alternatively, we can accept that generative AI will have an important, transformative role in the workplace, and encourage employers to openly discuss the implications of generative AI with employees whose work is likely to be affected by it.

Acknowledgments

The author would like to thank Liz Beekman (

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Associate Editor: René Schalk and Joannes Kraak