Abstract

This article, together with a companion video, provides a synthesized summary of a Showcase Symposium held at the 2016 Academy of Management Annual Meeting in which prominent scholars—Denny Gioia, Kathy Eisenhardt, Ann Langley, and Kevin Corley—discussed different approaches to theory building with qualitative research. Our goal for the symposium was to increase management scholars’ sensitivity to the importance of theory–method “fit” in qualitative research. We have integrated the panelists’ prepared remarks and interactive discussion into three sections: an introduction by each scholar, who articulates her or his own approach to qualitative research; their personal reflections on the similarities and differences between approaches to qualitative research; and answers to general questions posed by the audience during the symposium. We conclude by summarizing insights gleaned from the symposium about important distinctions among these three qualitative research approaches and their appropriate usages.

Management scholars now widely accept qualitative research, with as many qualitative papers published in the decade between 2000 and 2010 as in the prior two decades (Bluhm, Harman, Lee, & Mitchell, 2011). Qualitative research has not only grown in quantity but also has produced a substantial impact on the field by generating new theories that have shaped scholars’ understanding of core theoretical constructs (e.g., Bartunek, Rynes, & Ireland, 2006). However, qualitative research cannot be described as a singular approach: Rather, it encompasses a heterogeneous set of approaches. As a result, although qualitative research methods provide researchers with diverse philosophies and toolkits for studying and theorizing the actions of organizations, their members, and their influence on the world, as these tools and methods proliferate, there is an opportunity for enhanced awareness of and sensitivity to the unique assumptions associated with different qualitative methodologies (Langley & Abdallah, 2011; Sandberg & Alvesson, 2011; Smith, 2015). Notably, different approaches to qualitative research often presume distinct ontologies and epistemologies, resulting in different assumptions about the nature of theory and the relationship between theory and method (Morse et al., 2009; Sandberg & Alvesson, 2011).

As qualitative research has proliferated, we have observed a tendency for qualitative papers to invoke a mashup of different qualitative citations. For instance, looking at the methods sections from a sample of qualitative papers we recently reviewed for journals such as Academy of Management Journal (AMJ), Administrative Science Quarterly, Journal of Business Venturing, Journal of Management Studies, and Organization Science, several contained citations to Eisenhardt (e.g., Eisenhardt, 1989a; Eisenhardt, Graebner, & Sonenshein, 2016), Gioia (e.g., Gioia, Corley, & Hamilton, 2013), and Langley (1999)—all in the same paper! Other papers we reviewed contained citations to some or all of these same three authors, together with others such as Yin (2009), Strauss and Corbin (1998), Patton (2002), Denzin and Lincoln (2005), Lincoln and Guba (1985), van Maanen (1979), Golden-Biddle and Locke (2007), Miles and Huberman (1994), and Garud and Rappa (1994). Although these different methodological citations may be relevant on their own and in various combinations, more often it seems that such diverse methods are cited without attending to their different and potentially incommensurable assumptions.

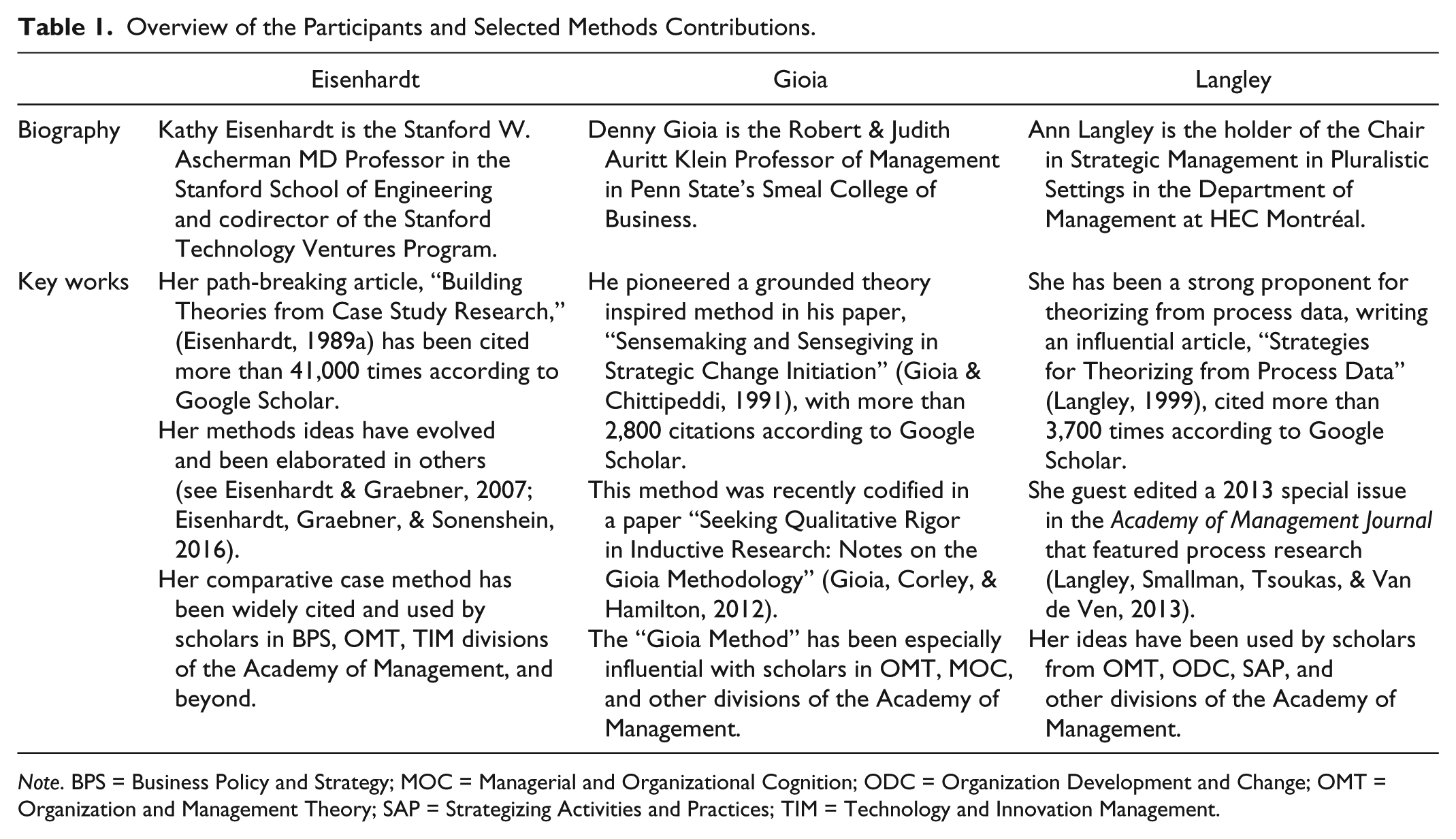

Inspired by such experiences, we organized a symposium to help frame our thinking about how to use qualitative methods (i.e., the tools in our toolbox) in a more disciplined way. Our basic intuition is that methods are tools; some tools are good for certain purposes, whereas other tools are good for other purposes. Specifically, at the 2016 Academy of Management Annual Meeting in Anahiem, California, we brought together three scholars who have been particularly influential in shaping how we conduct qualitative research in our field: Denny Gioia, Kathy Eisenhardt, and Ann Langley. Although Denny was unable to attend in person, he recorded his remarks via video, and Kevin Corley, a longtime collaborator, kindly participated in the questions and answer session on Denny’s behalf. Table 1 provides an overview of the three key participants and some of their methodological contributions.

Overview of the Participants and Selected Methods Contributions.

Note. BPS = Business Policy and Strategy; MOC = Managerial and Organizational Cognition; ODC = Organization Development and Change; OMT = Organization and Management Theory; SAP = Strategizing Activities and Practices; TIM = Technology and Innovation Management.

By organizing this symposium, we aspired to provide a forum for these influential scholars to present their perspectives on qualitative research, and engage in an interactive discussion with each other and the audience about their methodological similarities and differences. Although the approaches espoused by these scholars are commonly utilized by management scholars, by no means do they exhaust the ways that we might engage in theory building through qualitative research. Rather, these three scholars are notable exemplars and collectively provide a sense of the range of approaches available to qualitative researchers. We had three specific goals for the symposium: First, we wanted to provide academy members an opportunity to hear three leading scholars describe their personal approaches to qualitative research. Second, we hoped to foreground some important similarities and differences among these three approaches—thereby fostering greater sensitivity to critical methodological issues among researchers. Finally, we aimed to generate discussion and debate about appropriate combinations of qualitative methods, research designs, research questions, and theoretical insights.

We have written this paper to accompany the video of the symposium. In doing so, we have synthesized the discussion to increase management scholars’ sensitivity to the importance of theory–method fit in qualitative research. Based on transcripts from the symposium and the panelists’ presentation materials, we have integrated the panelists’ prepared remarks and interactive discussion into three sections: an introduction by each scholar to her or his own approach to qualitative research; their personal reflections on the similarities and differences between these approaches, and answers to questions posed by the audience during the symposium. We conclude by summarizing insights gleaned from the symposium about important distinctions among these three qualitative research approaches and their appropriate applications.

An Introduction to Three Qualitative Methods

Denny Gioia

Overview

Here’s the opening passage from my recent methods piece with Kevin Corley and Aimee Hamilton in Organizational Research Methods (ORM): What does it take to imbue an inductive study with “qualitative rigor,” while still retaining the creative, revelatory potential for generating new concepts and ideas for which such studies are best known? How can inductive researchers apply systematic conceptual and analytical discipline that leads to credible interpretations of data and also helps to convince readers that the conclusions are plausible and defensible? (Gioia et al., 2013, p. 15)

For the past 25 years, I’ve been working to design and develop an approach to conducting grounded-theory-based interpretive research to accomplish just these aims. My main focus has been on the processes by which organizing and organization unfold, tipping my hat to my old friend Ann Langley (1999) who articulated the processual view so very well. My approach revolves around what I consider to be perhaps the single most profound recognition in social and organizational study: That much of the world with which we deal is socially constructed (Berger & Luckmann, 1967; Schutz, 1967; Weick, 1979). This recognition means that studying this world requires an approach that captures the organizational experience in terms that are adequate at the levels of (a) meaning for the people living that experience and (b) social scientific theorizing about that experience.

Quite honestly, I was also motivated to devise a systematic methodology for inductive research because too many nonqualitative scholars simply don’t believe that inductive approaches are rigorous enough to demonstrate scientific advancement (see Bryman, 1988; Campbell, 1975; Popper, 1959). When I started out on this project, I dare say that most researchers (Kathy Eisenhardt notably excepted) saw qualitative research as a way to report impressions and cherry-pick quotes that supported those impressions, a variation in the old theme of “My mind is made up, do not confuse me with the facts.” My assumptions and stances led me to devise an approach that allows for a systematic presentation of both first-order analysis, derived from informant-centric terms or codes, and second-order analysis, derived from researcher-centric concepts, themes, and dimensions (see van Maanen, 1979, for the inspiration for the first-order/second-order terminology).

Some basic steps

As the research progresses, I start looking for similarities and differences among emerging categories. I bend over backward to give those categories labels that retain informants’ terms, if at all possible. I then consider the constellation of first-order codes. Is there some deeper structure or process here that I can understand at a second-order theoretical level?

When all the first-order codes and second-order themes and dimensions have been assembled, I then have the basis for building a data structure. This is perhaps the most pivotal step in the entire research approach, because it shows the progression from raw data to first-order codes to second-order theoretical themes and dimensions, which is an important part of demonstrating rigor in qualitative research. To me, a data structure is indispensable for this style of work. I kind of have a guiding mantra for the data structure that I express colloquially, which goes like this: “You got no data structure, you got nothing.’” I know the statement is over the top, but it keeps me focused on obtaining evidence for my conclusions.

As important as the data structure might be, it’s nonetheless only a static photograph of an inevitably dynamic phenomenon. It allows insight into the content of my informants’ worlds, the “boxes” in a boxes-and-arrows diagram, if you will. You can’t understand a process unless you can articulate the “arrows”; thus, that photograph needs to be converted into a movie (Nag, Corley, & Gioia, 2007) that sets the concepts in motion and constitutes the “holy grail”—the grounded theory itself. The grounded theory is generated by showing the dynamic relationships among the emerging concepts. Properly done, the translation from data structure to grounded theory clearly illustrates the data-to-theory connections that reviewers so badly want to see these days.

Of course, there’s an opportunity for inspiration in this process, too, of what I like to call the “Grand Shazzam!” (see Gioia, 2004), some flash of insight about how the revealed processes explain how or why some phenomenon plays out. I sometimes use a biological metaphor to describe the transformation from a data structure to a grounded theory model. If you think of the data structure as the anatomy of the grounded theory, then the grounded model becomes the physiology of that theory. Writing the grounded theory section then amounts to explaining the relationship between the anatomy and physiology that yields a systematically derived, dynamic, inductive theoretical model that describes or explains the processes and phenomena under investigation. This model chases not only the “deep structure” of the concepts as Chomsky (1965) so famously put it but also the “deep processes” (Gioia, Price, Hamilton, & Thomas, 2010) in their interrelationships.

Exemplar studies

I recently summarized my philosophy of qualitative research in an Organizational Research Methods article with Kevin Corley and Aimee Hamilton (2013) and an autobiographical essay in the Routledge Companion to Qualitative Research (Gioia, in press). Some of the studies that exemplify this research approach include Gioia and Chittipeddi (1991), a “precursor study” that set the stage; Gioia, Thomas, Clark, and Chittipeddi (1994), the first study to articulate the methodology in print; Gioia and Thomas (1996); Corley and Gioia (2004); Nag et al. (2007); Gioia et al. (2010); Clark, Gioia, Ketchen, and Thomas (2010); Nag and Gioia (2012); and Patvardhan, Gioia, and Hamilton (2015).

Kathy Eisenhardt

Overview

For me, the goal of the “theory building from cases” method is theory—plain and simple. The method conceptualizes theory building and theory testing as closely related. They’re two sides of the same coin: The former goes from data to theory and the latter from theory to data. Theory building from cases is centered on theory that is testable, generalizable, logically coherent, and empirically valid. It’s particularly useful for answering “how” questions, may be either normative or descriptive, and either process (i.e., focused on similarity) or variance based. Sometimes, the goal is to create a fundamentally new theory, while at other times the goal is to elaborate an existing theory. Regardless of the specifics, the goal is always theory building. Within this method, theory is a combination of constructs, propositions that link together those constructs, and the underlying theoretical arguments for why these propositions can explain a general phenomenon. And again, the goal is strong theory (i.e., theory that is parsimonious, testable, logically coherent, and empirically accurate).

Theory building from case studies (Eisenhardt, 1989a; Eisenhardt & Graebner, 2007) really stems from a combination of two traditions. On one hand, theory building from cases relies on inductive grounded theory building—very much rooted in the tradition of Glaser and Strauss (1967), where researchers walk in the door and don’t have a preconception of what relationships they’re going to see. They may have a guess about the constructs, but are fundamentally going in open-minded, if you will. I think Denny [Gioia] described that very well. That’s exactly the way I see it as well. On the other hand, theory building from cases fundamentally depends on a case study. Here, I’m drawing on Robert Yin (e.g., Yin, 1994, 2009): A case study is a rich empirical instance of some phenomenon, typically using multiple data sources. A case can be about a group or an organization. There can also be cases within cases, so one can imagine a single organization with multiple cases or a single process with multiple temporal phases. That said, not all qualitative research is theory building from case studies. Likewise, not all case study research is theory building—sometimes it is deductive.

A case study focuses on the dynamics present in a single setting. A case study can have multiple levels of analysis (i.e., embedded design). Central to case studies is the notion of replication logic in which each case is analyzed on its own, rather than pooled with other cases into summary statistics such as means. That is, each case is analyzed as a stand-alone entity, and emergent theory is “tested” in each case on its own. Case studies can include qualitative and quantitative data. Moreover, data can be collected from the field, surveys, and other sources. Practitioners of the method often use multiple cases because the generated theory is more likely to be parsimonious, accurate, and generalizable. In contrast, single cases tend to lead to theory that is more idiosyncratic to the case, is often overly complex, and may miss key relationships or the appropriate level of construct abstraction.

Theory building from cases is appropriate in several different research situations. First, and most typically, case study is appropriate for building theory in situations where there’s either no theory or a problematic one. For example, Melissa Graebner did work on acquisitions (Graebner, 2004, 2009; Graebner & Eisenhardt, 2004). If you know the acquisition literature at all, you know that 95% or more of studies are from the point of view of the buyer, but she took the point of view of the seller. My work with Pinar Ozcan on networks serves as another example (Ozcan & Eisenhardt, 2009). If you know network theory, you know that it’s focused on how the “rich get richer”—that is, if you have a tie, then you can get another tie, and so forth. We wanted to look at a situation where the focal actors didn’t have any ties and study how they built their networks from scratch.

Second, this method is also appropriate for building theory related to complex processes; for example, situations where there are likely to be configurations of variables, where there are multiple paths in the data, or equifinality (e.g., see Battilana & Dorado, 2010; Davis & Eisenhardt, 2011; Hallen & Eisenhardt, 2012). Third, theory building from cases also works well in situations with “hard to measure” constructs. For example, I think identity is a very hard construct to measure reliably using surveys (see Powell & Baker, 2014). I think Denny [Gioia] has also been particularly strong in dealing with “hard to measure” constructs. Another example is Wendy Smith (2014), who deals with paradox, another construct that’s hard to measure. Fourth and finally, theory building from cases is also useful when there is a unique exemplar. For example, Mary Tripsas and Giovanni Gavetti examined Polaroid Corporation, a company that looked like it had everything going for it and yet couldn’t change (Tripsas & Gavetti, 2000). Unique exemplars might be a bit more where Ann [Langley] often plays. In general, I think all of us are united by process questions—“How do things happen” questions—as opposed to “what” and “how much” questions.

Some basic steps

I believe in knowing the literature, and then looking for a problem or question where there’s truly no known answer. It’s almost impossible to find those problems without knowing the literature. I also think that research should at least start with a research question. It may not be the question of the study in the end or the only question, but I think it’s “crazy” to start with no question.

The next two steps, research design and theoretical sampling, are particularly important, regardless of the kind of inductive work, but especially in multicase research. They might be less important in single-case research, where people are a bit more drawn to an exemplar or maybe a case that’s particularly convenient. However, in theory building from cases, the researcher is trying to, on one hand, control the extraneous variation, and on the other hand, focus attention on the variation of interest. For example, one research design is what I call the “racing design.” This is a design where the researcher starts with, let’s say, five firms at a particular point in time in a particular market and lets them “race” to an outcome. For example, in my work with Pinar Ozcan in the mobile gaming industry (Ozcan & Eisenhardt, 2009), we began with five firms with matched characteristics at a particular point in time, and then we observed what happened over time. Some died, some did well, and some were in the middle. My work with Doug Hannah on ventures in the U.S. residential solar ecosystem (Hannah & Eisenhardt, 2016) and with Rory McDonald on ventures in the social investing sector (McDonald & Eisenhardt, 2017) also relies on this design. Another design is “polar types” (e.g., good and bad; see Eisenhardt, 1989b; Martin & Eisenhardt, 2010). Another design is focused on controlling antecedents. For example, I did some work with Jason Davis on understanding effective R&D alliances between major incumbents (Davis & Eisenhardt, 2011). Jason read the alliance literature. He then knew what the antecedent conditions were for effective alliances (e.g., partners before; experience; good resources). Next, he then selected cases with those antecedent conditions and so, effectively removed alliances that might fail simply because the antecedent conditions were poor. This control let us focus on uncovering novel process insights. Sam Garg and I took a similar approach in choosing cases for studying how CEOs engage in strategy making with their boards (Garg & Eisenhardt, 2016). Research design and the related theoretical sampling, I think, are critical, particularly in multicase research. And they are particularly difficult for the deductive researchers, the ones reviewing our papers, because they expect random sampling.

The next step is data collection. Here, I think what unites us all is deep immersion in the setting. Perhaps I and some other researchers use more varied data sources than say Denny [Gioia] who prefers interviews. For example, ethnography techniques can be very exciting for questions where informants are not all that helpful—they may not know or even if they do know, they won’t tell you their thoughts. Other data collection techniques include observation, interviews (obviously important for most studies), archival surveys, Twitter feeds, and so on. Recently, Melissa Graebner and I did a survey of what people think “qualitative research” means. While no one was able to articulate a comprehensive definition, the most common definition was as follows: Qualitative research is based on deep immersion in multiple kinds of data. I think that’s a fundamental characteristic. Some of us may prefer one data type over others but the inherent feature of “qualitative research” is multiple types of data that help reveal the focal phenomenon.

The next step is around grounded theory building. When I started, I called what I did “grounded theory building.” Then, there was an interpretivist “beat down” of anybody who used the grounded theory building term but didn’t exactly follow Strauss and Corbin (1998). What Walsh and several coauthors including Glaser (see Walsh et al., 2015) are now confirming is that grounded theory building is a “big tent”—that is, building a theory from data. It almost invariably involves collecting data, breaking it up into what Denny [Gioia] calls first-order and second-order themes, or what I call “measures” and “constructs,” and then abstracting at a higher level. Regardless of the terms, this process is at the heart of what most theory-building qualitative researchers are doing.

In theory building from cases, we typically explore multiple cases. The analysis begins with a longitudinal history of each case or maybe cases within cases. We then do cross-case pattern recognition. We try to develop measures from the data while we are thinking about emergent theory. As the theory advances, we incorporate other literature, from both our field and other fields. For example, because my work with Chris Bingham is on learning (Bingham & Eisenhardt, 2011), we often considered work from cognitive science, outside our base disciplines. Then, we iterate among the literature, data and emergent theory to come up with logical explanations that we term “the whys” for the underlying logic of the emergent relationships among constructs.

Finally, there’s writing. There is a rough formula. I think people who follow what I do or do similar research have one as does Denny [Gioia]. The typical components of my formula: overarching diagram, presentation of our findings, themes, propositions, or whatever you want to call the theoretical framework, and weaving that presentation with case examples to explain the emergent theory and its underlying theoretical logic. I’m a “proposition person” if that’s what my reviewers want. I don’t actually care either way . . . If my reviewer says “include propositions,” I’m good. If not, they’re gone. But presentation of the underlying theoretical arguments (i.e., the “why’s”) is very important.

Exemplar studies

I initially articulated my thoughts on the “theory building from cases” method in the Academy of Management Review (Eisenhardt, 1989a), and extended these thoughts in the AMJ (Eisenhardt & Graebner, 2007) and again more recently in AMJ (Eisenhardt et al., 2016). Some exemplars have been referenced in my talk, and include Ozcan and Eisenhardt (2009), Battilana and Dorado (2010), Martin and Eisenhardt (2010), Bingham and Eisenhardt (2011), Davis and Eisenhardt (2011), Hallen and Eisenhardt (2012), Pache and Santos (2013), and Powell and Baker (2014).

Ann Langley

Overview

I do not have a specific method. I also believe that trying to reduce our options to a single methodology is really not a good idea. However, I do have a position about research, and it is about the importance of looking at processes. I am interested in any kinds of methods that can help us understand them. I originally wrote my 1999 paper about process research methods (Langley, 1999) because I was puzzling over how on earth to analyze complex data dealing with temporally evolving processes that might be persuasive and theoretically insightful. The starting point for that paper was that there are two different kinds of thinking that underlie most of our research: variance thinking and process thinking. Variance thinking is what most of us actually do as social scientists, which is looking at the relationships between variables. However, I am interested in a different kind of understanding of the world where we think about how things evolve over time. This form of understanding is very much based on flows of activities and events. It turns out that variables and events are really quite different entities, so you do very often need quite different methods to deal with them. For example, you might explain innovation in two different ways: either by looking at the factors that might be correlated with it (the variance approach) or by asking what are the activities you actually have to engage in over time to produce it (the process approach). A fascinating example of how these two forms of thinking might apply to the same qualitative data on innovation is illustrated by two papers by Alan Meyer and colleagues from the 1980s (Meyer, 1984—a process study; Meyer & Goes, 1988—a variance study).

Why is studying processes over time important? First of all, it is important because time is the only thing we cannot escape. Time is a very central part of the world we live in, and it is very surprising that a lot of our research still does not take it seriously into account. A second reason is that process is extremely important from the perspective of practitioners. We may know, for example, that bigger organizations tend to have economies of scale, and because of that they may be able to be more profitable, generally speaking. But if you are a small organization, that does not tell you what to do. You cannot get bigger instantaneously. Using a variance understanding (i.e., A is better than B) does not capture the movement over time to move from A to B. The process of becoming bigger can make all the difference, and it is this that an organization will need to understand if it wants to grow. A third reason for studying processes is that we often forget the huge amount of work and activity that is required to stay in the same place. The world has to sustain itself, and so the process (i.e., the activities and effort involved) is very important.

A final reason why process thinking is important is concerned with the multiple and flowing nature of outcomes. The usual variance study has a single outcome: Usually, this is organizational performance, but that is a static one-time thing. Yet, we all know that everything we do has multiple rippling consequences that spread out over time. There are short-term effects and there are long-term effects. One of the studies that I did with Jean-Louis Denis and Lise Lamothe on organizational change (Denis, Lamothe, & Langley, 2001) brought this home to me rather starkly. We identified cases where CEOs and their management teams were very successful in achieving change in the shorter term. However, the things that they did in the process upset so many people that the top management teams broke down and people were forced to leave and the organizations involved had to start all over again. Process research resists stopping the clock to focus on unique outcomes. Time and process always go on. In fact, one of the questions that Joel [Gehman] and Vern [Glaser] asked us to address in this symposium is, “When do you stop collecting data?” I find that a difficult question because I know that any stopping point is arbitrary. Classic variance studies seem to overlook this.

Some basic steps

There is no one best way to perform process research, and I think that this is an important message that I want to convey here. In my 1999 paper (Langley, 1999), I described several approaches to data collection and analysis that can be used to study processes. Moreover, these approaches are not necessarily better or worse than each other; they just produce different though often equally interesting ways of understanding of the world. I believe that it is important to know about some of the options that are available.

That said, I do have a few principles and suggestions about how one might try to generate convincing and theoretically insightful process studies. These are based on my own research and also on that of others. Notably, if you are interested in process research, I suggest reading the recent AMJ Special Forum on Process Studies of Change in Organization and Management I coedited with Clive Smallman, Hari Tsoukas, and Andy Van de Ven, which came out in 2013 (Langley, Smallman, Tsoukas, & Van de Ven, 2013). This is a really nice collection of 13 articles that illustrate different facets of process research (e.g., Bruns, 2013; Gehman, Treviño, & Garud, 2013; Howard-Grenville, Metzger, & Meyer, 2013; Jay, 2013; Lok & de Rond, 2013; Monin, Noorderhaven, Vaara, & Kroon, 2013; Wright & Zammuto, 2013).

One of the first principles of process research is that you have to actually study things over time. This is a prerequisite, and it requires rich longitudinal data. Interviews and observations are typical sources for qualitative data, but other kinds of data can be used as well. There is, for example, a lovely paper by April Wright and Ray Zammuto (Wright & Zammuto, 2013) in that special issue which is based on temporally embedded archival data; specifically the minutes of the meetings of the Marylebone Cricket Club which provide in enormous detail a record of how the rules of cricket actually changed over time and the discussions that led to that. Many papers in the special issue are based on rich ethnographies (e.g., Bruns, 2013; Jay, 2013; Lok & de Rond, 2013), and others are based on mixed archival and real-time methods (e.g., Gehman et al., 2013; Howard-Grenville et al., 2013). The Monin et al. (2013) paper was based on more than 600 interviews describing the integration processes following a mega-merger over several years.

What is important is that the data fit with the time span of the processes that you are studying. You can actually do a process study of something that does not last very long (e.g., a meeting or this symposium), as long as you have longitudinal moment by moment data to capture it in sufficient detail to derive interesting insights about process. If you are going to be using interviews, you may wish to interview people about specific factual events that happened in the past (as Kathy often does in her research). However, if you are interested in people’s interpretations or cognitions and how those evolved (as Denny likes to do), you probably need to carry out interviews in real time as processes are evolving because people cannot realistically remember what their cognitions were 3 years ago. The data must fit the needs of the project.

In the 1999 paper, I came up with seven ways of analyzing those data once you have them: narrative, quantification, alternate templates, grounded theory, visual mapping, temporal bracketing, and comparative cases. I think that all these methods are valuable. However, I also think that there are probably many other approaches worth considering that I did not include in that paper. I also think that one point was perhaps not sufficiently emphasized when I wrote it (although it is there if you read carefully): The fact that these methods can be mixed and matched in various different ways. They are not completely distinct.

In terms of relating these ideas to the methodologies favored by my colleagues, the grounded theory method or the way I described it in the 1999 paper is very much what Denny is proposing. Denny’s work clearly represents one approach to doing process research. I also included Kathy’s comparative case approach in that original article. For me, this may be another way of doing process research, although I believe that Kathy’s approach has usually (though not always) tended to move from original process-based data toward variance theorizing. I have great admiration for these two approaches. I think that both Kathy and Denny have helped make qualitative research legitimate for all of us, a major advance that we need to thank them for.

However, there are two other approaches that I like very much, and which I think are extremely useful for process analysis: visual mapping and temporal bracketing. Both of these are particularly valuable for examining temporal sequences. A visual mapping strategy is able to show how events are connected over time, emphasizing, for example, ordered sequences—events, activities, choices, entities which we tend to forget about when we are focusing on categories and variables. Temporal bracketing enables us to simplify temporal flows over time. The problem with temporality is that new stuff is happening every second. I have found that it is a useful approximation to try to decompose processes into phases. These phases are not necessarily theoretically relevant in and of themselves; they are just continuous episodes separated by discontinuities. They can become units of analysis for comparison over time. This is a different form of replication that I have also labeled longitudinal replication. Through this technique, it is possible to explore the recurrence of process phenomena over time (e.g., see Denis, Dompierre, Langley, & Rouleau, 2011; Howard-Grenville et al., 2013; Wright & Zammuto, 2013).

Exemplar studies

I articulated some initial thoughts on process theorizing in the 1999 AMR article (Langley, 1999), and extended this thinking in a piece in Strategic Organization (Langley, 2007). In a paper with Chahrazad Abdallah (Langley & Abdallah, 2011), we contrast Kathy [Eisenhardt] and Denny’s [Gioia] templates for qualitative research and introduce two “turns” in qualitative research: the practice turn and the discursive turn. I referred to many excellent studies in this talk, and would recommend using the AMJ special issue on process studies as a source of inspiration for qualitative methods and theorizing (Langley et al., 2013).

Comparing and Contrasting the Three Approaches to Qualitative Research

To highlight the similarities and differences between the three approaches to qualitative research, we asked each of the senior scholars to reflect on three issues: What constitutes theory, what do they see as the similarities and differences between the three approaches, and what are their “pet peeves”?

What Constitutes Theory?

Gioia

My methodology is specifically designed to generate grounded theory, so the emergent theory rooted in the data constitutes the theory. I have a simple, general view of theory. As Kevin Corley and I put it, “Theory is a statement of concepts and their interrelationships that shows how and/or why a phenomenon occurs” (Corley & Gioia, 2011, p. 12). Relatedly, theoretical contributions arise from the generation of new concepts and/or the relationships among the concepts that help us understand phenomena. The concepts and relationships developed from inductive, grounded theorizing should reflect principles that are portable or transferable to other domains and settings.

Eisenhardt

Theory is a combination of constructs, relationships between constructs, and the underlying logic linking those constructs that is focused on explaining some phenomenon in a general way. Assume we have Construct A and Construct B (or second-order code). The underlying logic for why A might lead to B is extremely important, that’s “the whys.” What are the one, two, three logical reasons why A and B might be related? The reason could be a logical argument. It could draw on prior research in our field or elsewhere, or on what the informants say. Or it might draw on all of these sources. Let’s say you studied a bunch of companies and observed that CEOs with blue eyes did better. If you can’t come up with an underlying reason why blue-eyed CEOs perform better, then you don’t have a theory. You just have a correlation. This is a really important point.

Langley

Depending on which analytic strategies you use, the kind of theory that you will produce will be different. If you’re using a narrative strategy and using the grounded theory strategy of the type that Denny [Gioia] and Kevin [Corley] are talking about, you are going to be developing an interpretive theory. You are going to be focusing on the sense given by participants to a phenomenon. If you are using a comparative strategy or a quantitative strategy, you are going to be talking about a different kind of theory more focused on prediction. I think that this is what Kathy [Eisenhardt] is talking about. She is interested in identifying causes and relationships between variables which are demonstrated empirically in the data and which also have a theoretical explanation attached to them that can be generalized and tested.

Another kind of theoretical product is a pattern. When you identify similarity in sequences of events for a phenomenon across different organizations, you have a surface pattern. Visual mapping may be very good for deriving such patterns, but this has other problems because it may not provide you with an understanding of why those patterns are there. Another kind of theorizing focuses on mechanisms; that is, the set of driving forces that underlie and produce the patterns that we see empirically. I particularly like Andy Van de Ven’s (1992) analysis of different kinds of theoretical mechanisms underlying processes of change and development, although I do not think that the mechanisms he proposes necessarily exhaust all possibilities.

Methodological Similarities and Differences

Gioia

Ann Langley is the purest among us. She does pure process research and it is beautiful. I consider myself a pure interpretivist, but sometimes I think Ann thinks I’ve gone astray with my focus on systematic techniques for studying process. My work is much different from Kathy Eisenhardt’s, as her work is usually based on multicase study comparisons and focused in some way on, what I might term, hypothesis assessment.

Beyond a basic assumption that the organizational world is essentially socially constructed, my methodological approach is predicated on another critical assumption that my informants are “knowledgeable agents.” I know that term is a classic grandiose example of academese, but all it means is that people at work know what they are trying to do and that they can explain to us quite knowledgeably what their thoughts, emotions, intentions, and actions are. They get it. They’re not even close to Garfinkel’s (1967) rich notion of cultural dopes, so I always, always, always foreground the informants’ interpretations.

Above all, I’m not so presumptuous that I impose prior concepts, constructs, or theories on the informants to understand or explain their understandings of their experiences. I go out of my way to give voice to the informants. Anyway, my opening stance is one of well-intended ignorance. I really don’t pretend to know what my informants are experiencing, and I don’t presume to have some silver-bullet theory that might explain their experience. I adopt an approach of willful suspension of belief concerning previous theorizing.

Here’s a quick example of why it’s important to suspend prior theory. Twenty-five years ago, I was researching strategic change in academia. At the time, the received wisdom was that strategic managers thought about issues as either threats or opportunities. I just wasn’t sure that was true in academia, so in my interviews of university upper-echelons executives, I pointedly did not use those terms. Perhaps surprisingly, in 3 months of interviews, not once did any of them refer to issues in threat-opportunity terms. They saw issues as either “strategic” or “political.” When the study was over, I asked about it. One of the informants said to me, “Oh, I can use those terms if you like, but that’s just not the way we think about the issues around here.”

Of course, I’m never completely uninformed about prior work. I’m not a dope or a dummy either, but I try not to let my existing knowledge get in the way. I assume that I’m a fairly knowledgeable agent, too. I’ve worked in responsible positions in organizations. I understand the organizational context from an on-the-ground, gotta-make-a-decision-now point of view, not merely from an abstract theoretical perspective.

The implications of these assumptions are, however, pretty profound. Perhaps most importantly, it puts me, the researcher, in the role of glorified reporter of the informants’ experiences and their interpretations of those experiences. I’m not at all insulted by this subordinate role. I guess I get a little jealous of other forms of qualitative research that give people what I call a license to be brilliant, whereas I am bound by my oath to be faithful to my informants’ constructions of reality. I’ve discovered over the years that my self-imposed restraint gives me a different kind of creative license, actually.

Eisenhardt

Initially, I’d like to observe that there are more similarities than differences among the approaches to qualitative research represented here. That being said, when qualitative researchers are theory building, whether it’s myself or Denny [Gioia] or Ann [Langley], there are other people who are theory building too, and they’re using formal models, or they might be armchair theorizing. As a group, we contrast with those other methods. I like to use the analogy that just as math keeps formal theory honest, it’s data and being true to the data that keeps our theory building honest—which is why we’re not just reporting what we feel like saying.

To further elaborate, I am a big believer that a lot of us who are doing theory-building research are basically all doing the same thing and on the same team. We’re all using diverse data sources with deep immersion in the phenomenon. We’re all doing theoretical sampling, not random sampling. And, we’re all doing grounded theory building, whether we’re following the bible of grounded theory building or the spirit of grounded theory building by going from data to theory. I think that’s what unites the panel, and what unites much of qualitative research. Although there are qualitative researchers who have other aims, the people who see themselves as theory builders are all doing these. When I read over the article that Kevin [Corley], Denny [Gioia], and Aimee [Hamilton] wrote (Gioia et al., 2013), I’m mostly agreeing: “I know this. I believe this. This is where I’m coming from too.”

I think we’re probably all in agreement that rigor is about a strong theory that’s logical, that’s parsimonious, that’s accurate. We have concepts or second-order themes. We know what they are—They’re defined, distinct, well-measured, and well-grounded. And we’re coming up with theory that is insightful. I think regardless of who you are in this room—whether you’re an ethnographer, an interpretivist, a multicase person or a process person, whoever you might be—at the end of the day, if you’re a theory builder, then you must ask yourself: Is my theory a strong theory in the traditional sense?

Now, to discuss some of the differences between my approach to theory building and Denny’s or Ann’s approach. For me, theory building from cases is an inductive approach that is closely related to deductive theory testing. They are two sides of the same coin. In comparison with interpretivist and ethnographic approaches, the goal is generalizable and testable theory. As such, it is not solely focused on descriptions of particular situations or privileging the subjective perspective of participants. I used to call myself a positivist. I don’t do that much anymore—it’s a loaded term. But I also don’t cringe at positivism. Finally, my approach and theory building from cases broadly are not locked into an epistemological or an ontological point of view, but it is often locked into a 40-page limit. A multiple-case study author has a much different writing challenge than a single-case author.

Regarding page limits, a criticism of my work and the work of other multicase authors from some reviewers is, “We don’t see enough description.” My response is, “How are we going to fix that in 40 pages?” We can’t, and so we can’t take the same approach to writing as single-case authors. There’s really quite a difference, I think, in the writing challenge that we have. So while some readers are looking for stories, multiple-case papers are necessarily written in terms of theory with case examples and not as a single-narrative story.

Beyond writing differences, the analytic techniques and presentation of data are distinct. In theory building from cases, researchers use a variety of techniques for cross-case analysis techniques as they iterate across cases and at later stages, with the extant literature. There is also openness with regard to how data are coded and displayed. This stems from the belief that different data, research questions, and even researchers may call for distinctive approaches to the specifics of coding and display.

One final specific difference to observe: Denny [Gioia] said, “I couldn’t live without a data structure.” While theory building from cases has measures and constructs that constitute a data structure, I don’t want to present a “data structure” in my papers. A data structure has no data in it, and so takes up precious journal space that is already tight. Instead, I show the reader the data structure in a series of construct tables that tie particular measures of the construct to specific cases. So, don’t make me do a data structure! Likewise, I don’t want a “data and themes” table. There are two problems in multiple cases. First of all, you have to fit all the cases into the table. Then second, you have to show that the data for Case 1 are fitting (or not) with Case 2, Case 3, Case 4, and so on. If you use a data and themes table, you can’t show the systematic grounding of each construct in each case because you are showing only a piece here and a piece there. So the replication logic across cases is obscured. Replication logic requires systematically observing constructs and relationships in each case—Case 1, Case 2, Case 3. If multicase research is forced into a data structure table and especially a data and themes table, it’s deeply problematic—certainly for the kind of work I do and, I think, for other people conducting multiple-case studies.

Langley

I think my key point here is that I am not proposing a single method or template for doing qualitative research. However, I am arguing for the need to consider phenomena processually and for finding suitable ways of doing this. Process researchers seek to understand and explain the world in terms of interlinked events, activity, temporality, and flow (Langley et al., 2013) rather than in terms of variance and relationships among independent and dependent variables. There are a variety of qualitative designs and analytic strategies that one can adopt to capture and theorize processes, each having advantages and disadvantages in terms of what can be revealed and understood. It might be reassuring for some to have a clear-cut template for doing successful work of this nature (and I personally see Denny’s and Kathy’s approaches as fairly template like although they might deny it). In contrast, I am not proposing a single approach, and indeed, I believe that any specific template is bound to have blind spots—and that it is better to welcome diversity.

There are, however, a few common elements that I think are important for qualitative process research. First, as process research is about evolution, activity, and flow over time, this needs to be reflected in the data. Process studies are longitudinal, and data need to be collected over a long enough period to capture the rhythm of the process studied. In addition, while process researchers often use retrospective interviews as part of their databases, real-time observation or time-stamped archival data and repeated interviews are generally important to capture processes as they occur, rather than merely their retrospective reconstruction. Second, the analysis process itself needs to focus on temporal relations among events in sequence to develop process theory.

It is also important to recognize that the analytic approaches to sensemaking that we adopt quite clearly influence the theoretical forms and types of contributions that we are able to make. For example, interpretations based on a narrative strategy or grounded theory provide a sense of participants’ lived experiences (as in Denny’s approach); predictions based on a comparative or quantitative strategy provide a sense of causal laws (more like Kathy’s approach); patterns based on visual mapping provide a sense of surface structure; and mechanisms based on a narrative strategy, alternate templates, or temporal composition provide a sense of driving forces. Above all, it is important to remember that there is still room for creativity! I would hate that a symposium like this might imply that there are only three approaches to seeing the world qualitatively. There are many approaches, some perhaps remaining to be invented. There are however some substantive differences between the different approaches to qualitative research, and I have outlined some detailed thoughts on this in a recent article (Langley & Abdallah, 2011).

Pet Peeves

Gioia

There are a number of issues that I would like to address about the way the methodology I’ve been developing has been implemented over the years by others (see also Gioia, in press). The first is that the first-order or second-order terminology seems to have become increasingly prevalent in recent years. As my friend, Royston Greenwood, put it in a good-natured ribbing not long ago, “Is that it, then? Are we all going to talk only in terms of first- and second-order findings in our research reporting now? Is that a good thing?” My answer is, “Oh, good grief! I hope not.” No, it’s not a good thing. I’m a big tent kind of guy. I have no desire to see the particular systematic approach that I’ve developed become the template for qualitative research.

Another colleague said that the approach is creating a kind of arms race where each study has to outdo the other on demonstrating its qualitative rigor. Lord, I hope that’s not true either, especially when it gets the point of feeling that we need to include coding reliability statistics in our reporting. That sort of outcome will play directly into the hands of critics who see the methodology as an example of creeping positivism, a statement that gives me the heebie-jeebies.

I developed this approach mainly because I’m also an evidence-based guy. I just believe that the presentation of evidence matters. I’ve become my own victim too. One of my recent reviewers said, “This Gioia-methodology approach is just becoming too common,” and asked if I couldn’t please figure out some other approach. Oh, the benefits of blind review!—Gioia being asked not to use the Gioia methodology! I love it! If I were a bigger, more understanding guy, I should probably be receptive to the request. Yet, I’m not sure reviewers would ask people not to use multiple regression, for instance, if it were appropriate to answer the research question posed.

Finally, I’m concerned that so many scholars seem to be treating the methodology mainly as a presentational tactic, which offends my sensibilities. I designed this thing as a systematic way of thinking about designing, executing, and writing up qualitative research—the “full Monty.” The approach is meant to systematize your thinking while providing the wherewithal to discover revelatory stuff. It galls me to think that people are using it as just a formulaic presentational technique. Remember, it’s a methodology not just a method or set of cookbook techniques.

Eisenhardt

In a new AMJ paper (Eisenhardt et al., 2016), we write about rigor and rigor mortis. What’s rigor mortis? It’s requiring specific formats like a data structure. I understand why it works for Denny [Gioia] but I don’t think it works for everybody. Data and themes tables don’t work well for everybody or in multicase research either. And, they don’t work well outside of interview data, or with time-varying data. Second, rigor mortis involves following rigid analysis steps as if there’s a bible—for example, turning grounded theory building into a religion, not a technique. My third pet peeve, related to rigor mortis, is excessive transparency. What matters is the sampling and the data. I don’t need to know every step of the journey. I don’t even want to know every step of the journey. Instead, I want to get to the findings. In collecting data for our article (Eisenhardt et al., 2016), we surveyed about 30 qualitative researchers—not just researchers like me but all kinds. Most everybody writes their Methods section as linearized: “I did Step 1, Step 2, Step 3, Step 4.” But this is the equivalent of “kabuki theater” for most people. We all use a much more creative process that can’t accurately be turned into a linear, mindless, step-by-step description. That just isn’t what we do.

I also have a couple of idiosyncratic preferences. I like multiple cases better than single, although I recognize that there are unique exemplars, and sometimes data challenges. I also think that some single-case studies are actually multicase because the authors actually do break up the case and compare. I will say, however, that I’ve never seen (in my own studies) a single case that told me nearly as much as two, three, four cases told me. A single case is just too idiosyncratic and leads to an overdetermined theory in the mathematical sense.

The second thing I prefer is theory, that is, explicit and generalizable theory. So I’m interested in why A and B go together, not just that A and B do go together. I’m also actually happy to engage with deductive research and with its concepts like controls and measures because (at the end of the day) we theory build and deductive researchers theory test. I say, “We rule.” They do our work. Seriously, I think that we should connect to deductive researchers.

Langley

I am not sure that I would call these pet peeves, but when we edited the special issue of AMJ (Langley et al., 2013), we did come across some examples of process research that somehow failed in their mission to capture processes insightfully, even though they involved studying processes empirically over time. Most of these papers were rejected on the grounds that they made “no theoretical contribution.” So what does this mean exactly? Let me elaborate on some of the patterns we noticed.

A first problem is simply generating a narrative without any obvious theorization. For example, one reviewer noted, “The case is interesting and well written. It could be useful in a strategic management course.” That will not get you published. A second problem I have noticed is what I call antitheorizing: This involves pitting your case against a “received view,” which is usually a very rational kind of theorizing, and saying, “Well, actually it’s not like that.” This approach to attempting to make a contribution may have worked in the past, but that is no longer the case. Saying that “things are messy” is simply not enough. A third problem is what I call “illustrative theorizing.” This is what happens when you start with a theory and apply it to your qualitative process data. This is tempting but is not particularly convincing. The author is simply labeling things that happened according to a preconceived theory. As one reviewer of a paper submitted to the special issue noted, “The analysis is a form of labeling: here’s something that happened and here is what it would be called in our theoretical framework. This is not a test of the framework, but a mapping exercise.” The fourth approach that does not seem to work all that well is finding regularities but not really explaining them—I call this “pattern theorizing” and mentioned it above. An interesting example I always give for this is based on a very nice piece of process research by Connie Gersick (1988), which is about how groups with deadlines make decisions. She found with eight different groups that, bang in the middle, they shift the way they are thinking and working. Is that really a theory? As such, I do not think it is. It is just an empirical pattern. One of the things that Connie has mentioned when writing about this study in a later publication (Gersick, 1992) is that the lack of an obvious theoretical explanation was what gave her trouble in publishing the paper, despite the clear empirical pattern. She did in fact eventually find a theoretical explanation and wrote another paper supporting this, developing an interesting analogy between her findings and other phenomena that have a punctuated equilibrium structure (Gersick, 1991). Finally, another form of problematic process theorizing I call patchwork theorizing (or bricolage), in which authors just take a few ideas from here, a few ideas from there, a little bit from elsewhere, and stick the whole thing together in a kind of mashup. Unfortunately, readers will not usually see this as a contribution, as it lacks coherence and integration.

As a counterpoint to these problematic issues, I would also like to point to examples of the kinds of theorizing that can make a theoretical contribution and that were successful in the special issue of AMJ. For instance, Philippe Monin and colleagues examined how dialectics and contradiction constitute a process motor (Monin et al., 2013) explaining sensemaking and sensegiving patterns over time during a complex merger. Joel [Gehman] and colleagues have a very nice paper on multilevel interaction between microprocesses and macroprocesses, and how one grew out of the other (Gehman et al., 2013). A third kind of contribution is focused on the dynamics of stability, that is, the work you need to do to stay in the same place (Lok & de Rond, 2013). In fact, a final point I would like to make is that what makes a theoretical contribution in process research is itself a moving target (or a processual phenomenon). The kinds of theoretical framings that appeared insightful in earlier decades no longer have the same attraction today. Part of the common challenge of doing qualitative research (and I think Denny and Kathy would agree with me here) is in fact the continual push for novelty.

Themes From the Interactive Discussion

On Controlling Variance

Corley (substituting for Gioia)

Something that is very important in Kathy’s method is controlling variance, and then really focusing on the specific variance you’re interested in studying. In contrast, one of the things that comes out of an interpretivist perspective is this notion that variability in people’s experiences—and their understanding of that experience—is really interesting. As a grounded theorist trying to understand the phenomenon from the experience of those living that phenomenon, I want to gather as many varied perspectives on the phenomenon as possible. I think that this leads partly to the need or desire at some point to begin to try to structure the data, because as an interpretive theorist I’m out collecting a lot of data and I’m trying to make sense of it and figure out how this helps me understand the phenomenon better. Then, I have to pivot a little bit and say, “How can I help my reader understand this phenomenon, because they don’t have the benefit of being absorbed in all these varied data.”

So interestingly, interpretivists have a rather different way of thinking about variance; we’re much less interested in controlling variance and more interested in capturing variability and trying to understand why that variability exists. This leads to the need to find a way to structure the data, so that our readers can understand it better.

Eisenhardt

One of the reasons why controlling variance comes up in my world is multiple cases. I think that this is actually the huge difference. If Denny or Ann were doing an identity study at a major university and they wanted to do a multicase study, would they control the variance by looking at another major university or would they try to create variance by looking at a corporation or government? I think the big difference is that, in a multicase study, once we specify the focal phenomenon and research question, we then think carefully about where to control versus create variance in the research design.

Langley

Obviously, process approaches do not emphasize the explanation of variance. I can see that when you want to explain variance and you only have a small sample, you really need to control for everything except the central elements that you are interested in. What I see as one of the differences between Denny [Gioia]’s and Kathy [Eisenhardt]’s approach is in what the final theoretical product looks like and what kind of generalization might be conceivable from that? Those who follow Kathy’s approach develop constructs from a series of cases that enable them to explain differences. In doing so, they abstract out all of the richness of the particular stories to focus on those specific things that make the difference. That is a very important thing to do. To do it well, you need to control for extraneous variance on things you are not focusing on. Whereas in interpretive research such as that favored by Denny and Kevin, you might want all that messiness to be present and visible, because interpretivists have a different conception of what generality is. Rather than talking about generalizability, they would talk about transferability. To achieve this, you need to include as much richness as possible in your account, so that the readers themselves can see to what degree the story you are telling finds resonance. For me, that is an entirely different approach to theorizing. One is not better than the other; they both contribute to our understanding in different ways. However, you do need to know which of these you want to do when you’re developing a study.

Eisenhardt

First, I think that my cases are probably as rich as Denny’s—although maybe not quite. But as I was trying to say before, it is not possible to write about five cases with the same richness as one case when there is a 40-page or so limit. It’s not possible.

Second, my coauthors and I have also lately been told by some reviewers that we can’t have a process study and a variance study in the same study. I think that this is also not true. The confusion arises from the multiple meanings of “process.” Process can refer to events over time as Ann notes. Most of us doing qualitative research take this kind of longitudinal perspective. But process can also mean similarity which contrasts with variance. In theory building from cases, a researcher can be looking at two or three companies and see a given process like socialization occurring in different ways (variance). In fact, Anne-Claire Pache and Filipe Santos (Pache & Santos, 2013) have a very nice paper on social aid organizations where the administrative processes are different—that is, a variance study of process phenomena. Finally, an update on Ann’s diagram may be that the diagram has a particular view of variance studies that implies static antecedents (not time-varying processes) and outcomes.

Langley

I think you can mix process and variance, but it is hard to put all of that in one paper. I have tried that, but reviewers tend to push you to either drop cases to provide more richness or develop comparisons with clearly distinct outcomes. I also think that in a process study, multiple-case studies can serve a different kind of role from the one that Kathy is suggesting by showing how similar processes occur in different contexts, rather than emphasizing variance (see, for example, Abdallah, Denis, & Langley, 2011; Bucher & Langley, 2016; Denis et al., 2001). This is a very powerful way to show that the process that you were describing actually has some generality. It is not just something that you found in one particular context, but rather similar sorts of dynamics are occurring in very different places.

Eisenhardt

That’s also something we theory building from cases researchers think about too. We’re trying to figure out where we want the variation, how we want to handle generalizability, where we want to control for the variation that we don’t care about. In designing our research, we’re balancing all of them—that is, variation, control, and generalizability. In the ideal multicase world, Denny might replicate his university-based study of identity in a corporation, and then see what parts of the process in the university are the same in the corporation, what parts are different, and why.

On the Creative Process

Eisenhardt

I read Ann Langley’s work and get great ideas about the creative process. I don’t think Denny and Kevin have quite articulated theirs (and I haven’t articulated mine), but I suspect we’re all doing pretty similar things because we’re trying to see what the data are saying. We’re trying to figure out different ways to look at our data to see fresh insights. For example, I might mix and match: Let’s compare Cases A and B or let’s compare Periods 1 and 2.

Corley

I think another thing that pops out to me is that part of this process is really getting lost in your data. From an interpretivist’s perspective, that means I need to go out and collect a lot of data and struggle my way through it and really try to understand what’s going on. I know Joel [Gehman] and Vern [Glaser] are interested in this notion of theoretical sampling and at these key points looking at your data going: “Okay. What do I not understand? And where could I go in my context to get data that would help me understand that?” That process of gathering a lot of data and getting lost in it and then finding your way through it so that when you come out you have, for me, a plausible explanation of what’s going on, is a really key part of the creative process. Not that it’s necessarily different, but it’s something that I think you don’t pick up in a lot of methodology texts and how-to type of articles. It’s that messiness that is the creative process.

Langley

On this topic, I recently published paper with Malvina Klag in International Journal of Management Reviews titled “Approaching the Conceptual Leap” (Klag & Langley, 2013). It confirms what Kathy and Kevin have been saying, but includes another idea which is that there is a kind of dialectic process occurring here. For example, being very, very familiar with your data—being inside your data, your data being inside you—is extremely important. Yet however, it is also so important to detach yourself from it at some point, because otherwise you just get completely crushed by it.

For example, there is nothing like coming to the Academy of Management meeting and being forced to do a PowerPoint presentation that you are not ready to do for making a creative leap, provided the data are inside you. If not, you could probably still make a creative leap, but it might not have anything to do with the data, which would not be good. That dialectic between being immersed in the data and separating yourself from it is important. Other kinds of dialectics are important as well, such as being able to talk to a lot of other people without being too influenced by them and being able to draw insights from the literature not only in your field but in other disciplines as well.

However, accepting the role of chance is also very important in the creative process. Our paper (Klag & Langley, 2013) really talks about these different dialectics and the importance of combining the systematic disciplined side of research with the free imaginative side. Karl Weick (1989, 1999), if you remember, talked about theorizing as “disciplined imagination,” so essentially what we are saying is a reflection of that tension between the systematic discipline part and the freeing up part. You must have both. I think if you stay too close to the data, you end up with something that’s very mechanical, but if you’re just freewheeling, you finish up with something that has no relation to anything that’s actually grounded. Both are needed to develop strong and valuable theoretical insight.

On the Replicability of Findings

Corley

I think if you read what interpretivists believe and understand the philosophical underpinnings of interpretivism, you wouldn’t expect two different people walking in with the same research question to find the same explanation for the same phenomenon. I think perhaps this explains why it’s difficult for a lot of our colleagues who, having been trained in much more positivistic quantitative methods, struggle with what we do, because we’re not making truth claims about what we find. What we are doing is providing some deep insights into phenomena that we couldn’t obtain without engaging the people who experienced it. Determining whether these insights are “true” (according to some consensual criterion) is the next step in the process. We must test these theoretical insights in lots of different contexts. Our job as interpretivists is to go out there and gain new insights into a phenomenon from the people who are living it. So, I would not expect someone who had been at my research site asking the same questions I did to come up with the same grounded model that I did, because they’re not me. They didn’t interact with my informants in the same way.

Eisenhardt

I have an alternative view. I think if you had asked my research questions in my cases, you would get pretty much the same answer that I got. What I do think would be different is the questions that would be asked. Ann might choose a different question or Kevin might choose another different question that was interpretivist. But I think that if you used my question, you would see what I saw. So I differ on this point.

On Induction Versus Deduction

Eisenhardt

In connecting with our deductive friends, I do think that theoretical sampling is mind blowing, and so one does have to explain that concept. But I also think that there are many similarities between the two approaches. So if we’re actually doing the same thing as deductive researchers like measuring constructs, then we should use the same terms. That’s why I use “measures” and “constructs” not the terms “first” and “second order codes.” I don’t think that inventing more terms adds value. If we’re actually doing something genuinely different, then we should call it something else like theoretical sampling and replication logic. Finally, my deductive editors often like propositions, and if so, I usually provide them.

Corley

I tend to push back when they ask for propositions because propositions are not always the best output of inductive research. I agree that propositions can be a useful way of transitioning from inductive insights to deductive testing, but some inductive efforts produce deeply meaningful insights that can’t be easily reduced to proposition-type language.

Langley

I personally think that we overemphasize the idea of induction, that we are completely theory free. I actually think that what we are doing is abduction rather than induction. Induction for me implies that you are generalizing from empirical observation, and that there is not really any a priori theory there, which is illusory. I think that to develop a richer understanding of the world, we do need to connect to prior theory.

In most of my studies, we go into a site with some vague idea about the kinds of concepts and ideas that we are interested in. We collect some data that make us think about some other angles that might be interesting, and then we go to the literature and search for theories that would be relevant. Usually, when we do that, we can see how theories that are relevant can take us part, but not all, of the way to an enhanced understanding, and it is the remaining piece that we contribute. Thus, both deduction and induction are present in a kind of cycle. The word for that is abduction, which means connecting what you see in the empirical world with theoretical ideas, which are also out there and can be further developed.

Of course, you do have to have something over and above what is already expressed in theories. That’s why I said that the labeling approach to theorizing does not work. A typical example I give is actor-network theory. Actor-network theory, unfortunately, is so wonderful in that you can explain everything with it if you just label things the correct way. However, you will not make a contribution to actor-network theory by doing that because it will stay the same. It has not moved; you have not added to it. You do need to be able to extend theory. Quite often, my studies have a section called theoretical framework where I say, “Well, this is what the theory says but this is what we don’t know.” That gives me enough to move forward.

Conclusion

This symposium led to several major insights. Overall, the panelists agreed that there is some commonality between the different qualitative approaches. For instance, Kathy Eisenhardt concluded, “Let’s get past those minor points. Let’s focus on doing great research and let’s remember that 90 percent of the academy is composed of deductive researchers, so let’s play on the same team.” Although this is certainly something to be celebrated, this does not necessarily mean that anything goes. Within the “big tent” of qualitative research, there are different pockets or niches of scholars with their own toolkits and methodologies that should be engaged or leveraged thoughtfully. In our concluding thoughts, we highlight three takeaways for scholars using qualitative research: (a) in determining what qualitative approach to use, it is important to have a clear theoretical goal and objective for your research—this theoretical purpose animates the decisions made about research design; (b) every qualitative theory–method package, while potentially providing some degree of template or exemplar, nonetheless needs to be customized for a particular research context; (c) it is important to create a theory–method package “fit,” in which the methodological tools and their particular configuration are suited to the research question and theoretical aims of the project.

First, the purpose of a research study is very important. The scholars in this presentation explicitly or subtly described several different potential purposes that research seeks to theorize or explain. For example, do you want to understand what characteristics of a firm are associated with superior performance, perhaps using extant constructs? Are you attempting to understand how organizational actors in a social setting understand their circumstances or surroundings? Are you attempting to understand processual relationships among events? Different purposes of research result in the need to use and to discover different types of concepts and relationships among concepts. One takeaway from this session: If you want to generate a theory that can be tested deductively, the Eisenhardt method may be the place to start; if you want to understand the lived experiences of informants, the Gioia method may be the place to start; and if you want to understand temporal or practice dynamics in organizational life, Langley’s approach may be a source of inspiration. By the same token, there seem to be rather limited circumstances when a single paper would appropriately draw on many of the specifics of all three approaches.

Second, it is important to customize the method for your research context. Research situations are different, and require the use of tools and techniques in different ways. On one hand, some tools and techniques might be used in multiple approaches to qualitative research. For example, a general technique such as the constant comparative method for coding (i.e., Strauss & Corbin, 1998) might be used across multiple approaches to qualitative research. On the other hand, techniques such as visual mapping might be generally applied but will need to be customized for particular studies. That said, given the different onto-epistemological assumptions embedded in these methods packages, seemingly common concepts are likely to have different meanings and implications as you move from one method to another. For example, a concept such as replication differs quite a bit among the approaches. In Eisenhardt’s approach, replication is central: Without replication across cases, the researcher is left with just a particular story. In Langley’s approach, the logic of replication is temporal (e.g., see Denis et al., 2011). In Gioia’s approach, replication functions at the level of codes. So qualitative researchers can look to techniques that are shared across approaches, but the needs and idiosyncrasies of every research project will require customization. To sum up, Denny, Kathy, and Ann each agree that their method should be used flexibly. A methodology is not a cookbook; rather, it provides scholars with orienting principles and tools that always need to be modified and customized.