Abstract

Early intervention (EI) is federally mandated under Part C of the Individuals with Disabilities Education Act. It is a critical system supporting young children with developmental delays and their families; however, the use of evidence-based practices (EBPs) remains under-implemented across EI systems. Implementation climate is a key driver of successful EBP implementation, yet no validated tools exist to assess this within EI settings. This study adapted and validated the Implementation Climate Scale for Early Intervention (ICS-EI) using data from 183 EI providers across 33 states and Washington, D.C. The data indicated excellent internal consistency, and exploratory and confirmatory factor analyses supported a seven-factor structure with excellent model fit. The ICS-EI offers a psychometrically strong, contextually appropriate tool for assessing implementation climate in EI. Findings provide preliminary support for the ICS-EI as a tool to assess implementation climate in EI settings, which may inform future efforts to enhance EBP use.

Early intervention (EI) is a federally mandated system that is available in every state in the United States to support families of young children who may benefit from developmental support, such as young autistic children. EI is covered under Part C of the Individuals with Disabilities Education Act (IDEA, 2004), a federal special education law that is housed under the U.S. Department of Education and provides services that can shape long-term developmental outcomes for young children as well as foster positive outcomes for their caregivers (Guralnick, 2011; Landa, 2018). As EI is an IDEA mandate, it is required to provide services based on scientifically valid research and through the implementation of evidence-based practices (EBPs).

EBPs serve as the foundation of high-quality EI, ensuring that services are grounded in empirically supported methods known to improve child and family outcomes. The IDEA explicitly emphasizes the use of scientifically validated practices as part of its mandate to provide effective and equitable developmental support. In EI, EBPs encompass a range of practices that are well established through research and professional consensus. These include family-centered and routine-based approaches, caregiver coaching and capacity-building strategies, naturalistic developmental behavioral interventions, and other evidence-informed practices that promote children’s participation in everyday activities (Dunst & Trivette, 2009; ECTA Center, 2025; Friedman et al., 2012; Odom et al., 2010). The consistent implementation of these EBPs is essential to improving both developmental outcomes for children and empowerment outcomes for caregivers.

Unfortunately, as in other public systems, reports consistently suggest that EBPs are not being implemented optimally in the EI system (Bishop-Fitzpatrick & Kind, 2017; Peterson-Katz et al., 2023; Pickard et al., 2024). Numerous factors contribute to the under-implementation of EBPs in public systems of care at the levels of individuals, organizations, and larger systems. Among these, organizational factors and characteristics, including implementation climate, have emerged as primary determinants of EBP implementation (Aarons et al., 2011).

Implementation Climate in EI

Implementation climate refers to the shared perceptions among organizational members of the extent to which their use of EBPs is expected, supported, and rewarded within their organization (Ehrhart et al., 2014). It conceptually represents a focused form of organizational climate that is both strategic and innovation-specific, rather than a general description of organizational culture or context. A strong implementation climate indicates high consensus that the organization values and reinforces the consistent use of EBPs, which in turn predicts greater implementation effectiveness (Weiner et al., 2011). As an organizational-level construct, implementation climate represents the shared perceptions of providers within a defined work unit or agency. It is also considered an important factor for incentivizing and improving the use of EBPs among providers in various systems of care (Powell et al., 2021). Therefore, assessing implementation climate within EI can provide critical insight into how organizational support, leadership, and expectations influence providers’ ability to deliver EBPs with fidelity. Measuring this construct makes it possible to identify contextual barriers and strengths, target implementation strategies accordingly, and monitor progress over time (Ehrhart et al., 2014; Weiner et al., 2011).

Measurement of Implementation Climate

Implementation climate is often assessed through the aggregated perceptions of agency staff using validated measures, such as the Implementation Climate Scale (ICS; Ehrhart et al., 2014). The ICS was developed to assess the organizational context for implementation in order to support strategies that advance effective implementation of EBPs. Since its development, the ICS has been validated and widely used to assess implementation climate in settings such as health care (Ehrhart et al., 2014), child welfare organizations (Ehrhart et al., 2016), and schools (Melgarejo et al., 2020; Thayer et al., 2022; Williams et al., 2022). Several measures have been developed to assess other organizational contexts and implementation determinants in health and mental health settings, including the Organizational Readiness for Implementing Change (Shea et al., 2014) and the Implementation Leadership Scale (Aarons, Ehrhart, & Farahnak, 2014). However, these measures may not adequately capture the climate of the multidisciplinary, family-centered, and policy-driven context of EI services.

Similar to other systems of care, EI faces a multitude of complexities. Despite the fact that it is federally mandated, individual states are responsible for funding these services independently. As such, EI services in each state operate uniquely. For example, in some states, independent contractors provide EI services through state contracts, and not necessarily through an organization (Pickard et al., 2024). While this system provides great flexibility, it increases the complexity in terms of professional development opportunities for supporting and promoting the use of EBPs in their practice. In other states, EI is provided through agencies that have contracts through the state. Regardless of the service format, however, the extent to which EBPs are utilized can be quite variable, with many EI providers and agencies reporting less use of EBPs among low-resourced families (Aranbarri et al., 2021) and most states reporting an overall shortage of providers (New America, 2020). There is also system-level variability that contributes to this issue, with states having significant latitude regarding how services are administered and funded, resulting in substantial variability across states in terms of fidelity, quality, and types of services available. Unlike school or mental health settings, EI systems often operate through decentralized structures, with providers functioning as independent contractors or through varied state-level contracts. These organizational and funding structures create unique conditions that can affect the implementation climate. For example, EI systems often emphasize interdisciplinary collaboration and family-centered service delivery, which necessitate context-specific measurement tools.

Given these complexities, it is crucial to understand how organizations may better support providers in the use of EBPs (Lee, Terol et al., 2024). While the ICS has been applied in various sectors, no studies to date have validated its use specifically within EI settings. Thus, the purpose of this study is to address this gap and inform targeted strategies for increasing the equitable and consistent use of EBPs. Toward this end, we conducted the current study with EI providers to assess implementation climate specifically within EI agencies, guided by the following research questions: (a) What are the internal consistency reliability and internal structure of the ICS-EI subscales? (b) What is the factor structure of the ICS-EI? (c) What are the descriptive characteristics of implementation climate in EI settings? and (d) To what extent are practitioner and program characteristics associated with implementation climate in EI settings?

Method

Scale Adaptation

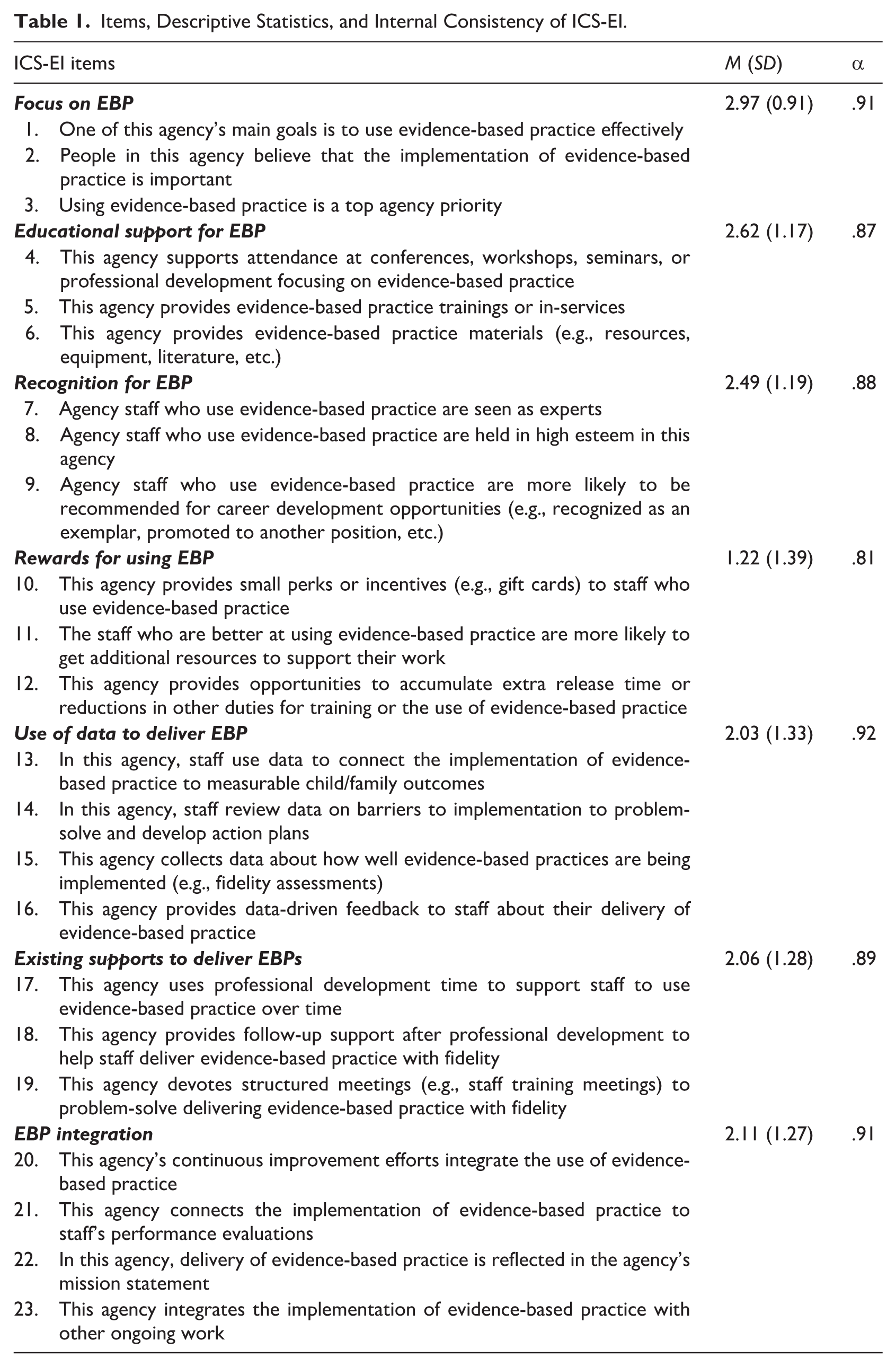

The original ICS, which comprises 18 items across six dimensions of implementation climate, was developed for mental health service organizations, which differ from EI systems in important ways. The ICS was subsequently adapted for educational settings as the School Implementation Climate Scale (SICS; Thayer et al., 2022), which expanded the instrument to 23 items organized into seven subscales that reflect the educational context (see Table 1 for the subscales). In developing the ICS-EI, we began with the SICS as our base because its structure already reflected the realities of service systems serving young children. All 23 SICS items were reviewed for relevance to EI and family-centered service delivery. The adaptations included (a) modifying language to reflect the operational structures, (b) revising the items to reflect unique contexts of EI service delivery models, (c) modifying language to represent roles that are unique to EI settings (e.g., referring to ‘providers’ rather than ‘teachers’), and (d) retaining the two items that were deleted in the original validation of the SICS because of their conceptual relevance (Items 13 and 22). The adaptation was led by the first author in consultation with implementation science experts familiar with the ICS framework, who provided feedback on clarity and contextual fit. Given that the SICS has already been established and validated, the primary goal of adaptation was to ensure the scale accurately captured key aspects of EBP implementation among EI agencies, such as interdisciplinary team dynamics or family-centered practices. To ensure content validity, we reviewed the adapted items in an iterative process that included making minor revisions to improve clarity and relevance. See Table 1 for a list of items in the ICS-EI.

Table 1. Items, Descriptive Statistics, and Internal Consistency of ICS-EI.

Participants and Recruitment

The study sample was a subsample of a larger study (Lee et al., 2026) and included 183 EI providers representing 33 states and Washington, D.C. Upon receiving approval from an institutional review board, participants were recruited online, primarily through professional associations (e.g., newsletter through the Division for Early Childhood), statewide networks, and flyers on social media (e.g., Facebook) to ensure a diverse representation of EI settings, including urban, suburban, and rural agencies. EI providers were eligible to participate if they (a) provided EI services of any kind to young children between the ages of 0 and 5 and their families, and (b) were able to complete the survey in English. Participants were instructed to rate the organization through which they primarily deliver EI services, which could include public agencies, private clinics, or independent contracts with the state. Each participant was automatically entered into a raffle for a $25 electronic gift card with a 10% chance of winning. All participants provided informed consent before responding to the survey. Data collection started in April 2023 and ended in April 2024 after a total of 183 participants completed the survey on REDCap. For the purposes of this study, EBPs were conceptualized as interventions and approaches supported by peer-reviewed research demonstrating positive developmental or behavioral outcomes among young children. No standardized definition was provided to participants in the survey.

Given the online nature of the study, we implemented procedures to detect and remove likely bot responses following Storozuk et al.’s (2020) recommendations. Specifically, responses were flagged for deletion if they exhibited indicators of inauthenticity, such as (a) implausibly short completion times (e.g., less than 1 min for the entire survey), (b) multiple entries submitted at the exact same timestamp, (c) illogical open-ended write-in responses, and (d) email addresses having patterns such as nonsensical combinations of letters and numbers. All flagged cases were reviewed manually prior to removal to ensure accuracy in data cleaning.
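The screening rules above can be sketched in code. The following is a minimal illustration only, not the authors' procedure: the `Response` fields, the `flag_responses` and `looks_random` helpers, and the email heuristic are all hypothetical, and criterion (c), illogical write-in responses, is left to manual review because it does not reduce to a simple rule. Only the 1-minute threshold and the duplicate-timestamp check come directly from the text.

```python
import re
from collections import Counter
from dataclasses import dataclass

MIN_DURATION_SECONDS = 60  # criterion (a): under 1 min for the entire survey


@dataclass
class Response:
    # Hypothetical field names; a real survey export will differ.
    response_id: str
    duration_seconds: float
    submitted_at: str  # timestamp string as exported
    email: str


def looks_random(email: str) -> bool:
    """Assumed heuristic for criterion (d): several separate digit runs,
    or a long run of letters containing no vowel, in the local part."""
    local = email.split("@", 1)[0].lower()
    digit_runs = re.findall(r"\d+", local)
    letters = re.sub(r"[^a-z]", "", local)
    no_vowels = len(letters) >= 6 and not any(c in "aeiou" for c in letters)
    return len(digit_runs) >= 2 or no_vowels


def flag_responses(responses):
    """Return {response_id: [reasons]} for cases needing manual review."""
    stamp_counts = Counter(r.submitted_at for r in responses)
    flags = {}
    for r in responses:
        reasons = []
        if r.duration_seconds < MIN_DURATION_SECONDS:
            reasons.append("short_duration")
        if stamp_counts[r.submitted_at] > 1:  # criterion (b)
            reasons.append("duplicate_timestamp")
        if looks_random(r.email):
            reasons.append("suspicious_email")
        if reasons:
            flags[r.response_id] = reasons
    return flags


# Made-up example records, for illustration only.
responses = [
    Response("r1", 45, "2023-05-01T10:00:00", "jane.doe@example.org"),
    Response("r2", 600, "2023-05-01T10:05:00", "x7k2q9zt4@example.org"),
    Response("r3", 540, "2023-05-02T09:00:00", "sam@example.org"),
    Response("r4", 500, "2023-05-03T09:00:00", "a@example.org"),
    Response("r5", 480, "2023-05-03T09:00:00", "b@example.org"),
]
flags = flag_responses(responses)
for response_id, reasons in flags.items():
    print(response_id, reasons)
```

The function only flags cases and never deletes them, which mirrors the manual review step described above.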

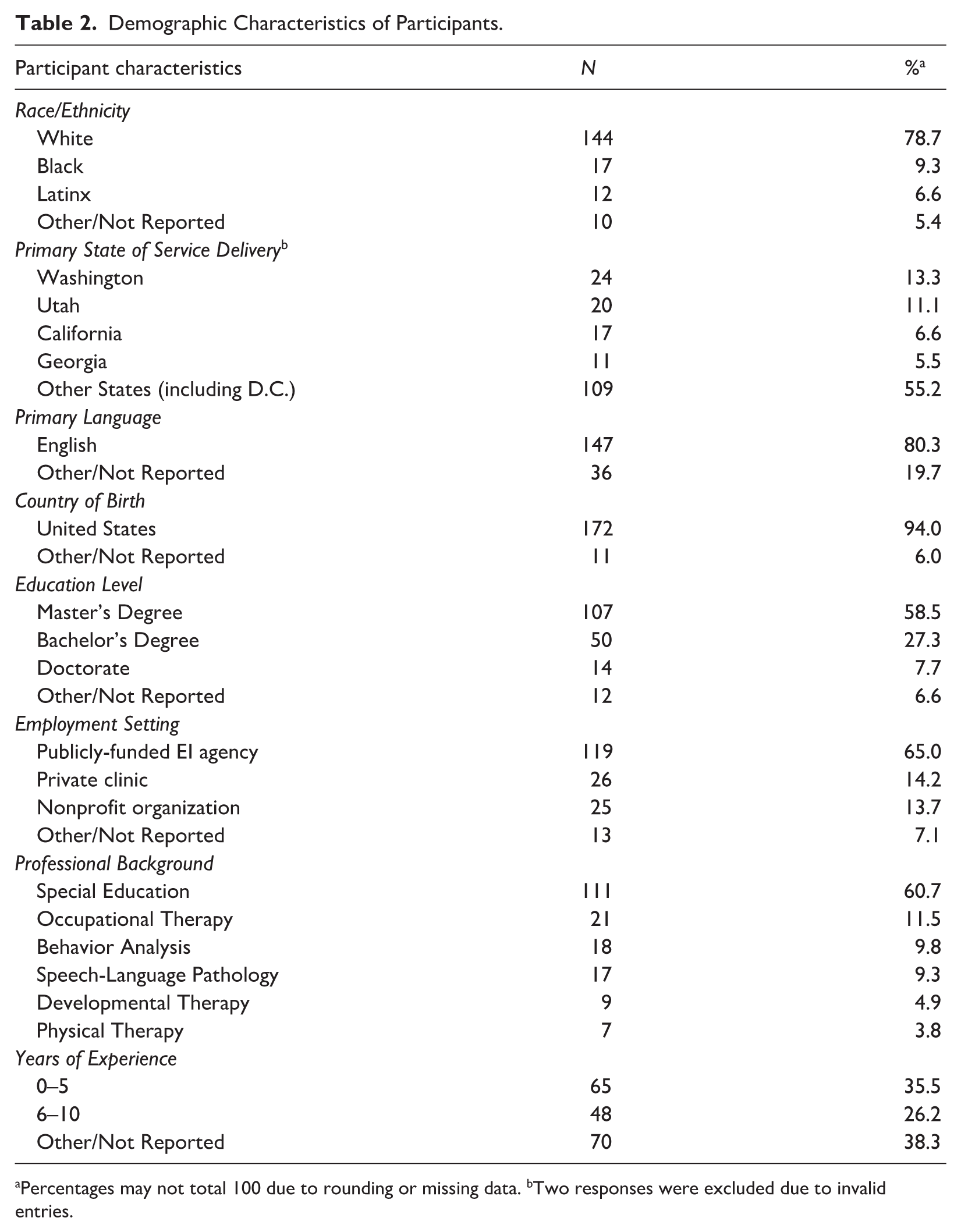

Participants completed a demographic and professional background questionnaire that assessed characteristics relevant to EI practice, including professional role, years of experience in EI, service setting, and prior training related to EBPs. These variables were selected based on prior implementation research suggesting that practitioner and organizational characteristics may be associated with perceptions of implementation climate. Notably, this sample was predominantly White and U.S.-born, with most participants reporting English as their primary language. The majority of participants held at least a master’s degree and worked in publicly funded Part C EI agencies. See Table 2 for detailed demographic information.

Table 2. Demographic Characteristics of Participants.

Note. Percentages may not total 100 due to rounding or missing data. ᵇTwo responses were excluded due to invalid entries.

Data Analysis

We conducted a series of analyses to evaluate the psychometric properties of the ICS-EI, starting with descriptive statistics and internal consistency using Cronbach’s alpha. We then conducted an exploratory factor analysis (EFA) to preliminarily identify the factor structure, followed by a confirmatory factor analysis (CFA) to validate this structure. For the EFA, we used oblimin rotation and the ordinary least squares (OLS) method to find the minimum residual (MR) solutions. We also used a polychoric correlation matrix because the participants’ responses were ordinal (i.e., a 0–4 Likert-type scale). We fitted Model 1 (one-factor model) through Model 8 (eight-factor model) and compared the proportions of explained variance and the factor loading patterns. Consistent with common EFA guidelines, factor loadings of .30 or greater were considered the minimal criterion for factor interpretation (Stevens, 2002; Tabachnick et al., 2007). For the CFA, we estimated the model using the weighted least squares mean and variance adjusted (WLSMV) method to take ordinal responses into account. McDonald’s omega coefficients were further computed to examine the reliability of the scale. Furthermore, we computed correlations among the subscales of the ICS-EI. Finally, a multiple linear regression analysis was conducted to identify practitioner and program characteristics associated with total ICS-EI scores. The predictor variables were participants’ employment settings, education levels, experience levels, professions, training, and experiences of family coaching. Participants’ employment settings were modeled using dummy coding (urban, suburban, and rural) because of their nominal measurement level. All other predictors were treated as ordinal variables and entered as single predictors. All predictor variables were included in the regression model simultaneously.
The assumptions of linear regression were evaluated through visual inspection of scatter plots, QQ plots, and residual plots (residuals vs. fitted), together with variance inflation factors (VIFs), and were determined to be acceptable. All analyses were performed in R using the psych (Revelle & Revelle, 2015) and lavaan (Rosseel, 2012) packages.
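As a concrete illustration of the internal consistency step, the sketch below computes Cronbach's alpha from raw item scores using the standard formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores). It is a minimal Python sketch under stated assumptions, not the authors' code (the analyses were run in R): the function name `cronbach_alpha` and the toy data are hypothetical, and the data are not taken from the study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a respondents-by-items table of scores.

    rows: list of respondents, each a list of k item scores.
    Uses sample variances (n - 1 in the denominator).
    """
    if len(rows) < 2:
        raise ValueError("need at least two respondents")
    k = len(rows[0])
    if k < 2 or any(len(row) != k for row in rows):
        raise ValueError("need at least two items and equal-length rows")
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Toy data on a 0-4 scale: five respondents, three items (made up).
toy_scores = [
    [4, 3, 4],
    [2, 2, 3],
    [3, 3, 3],
    [1, 0, 1],
    [0, 1, 0],
]
alpha = cronbach_alpha(toy_scores)
print(round(alpha, 3))
```

Alpha rises when items covary strongly relative to their individual variances, which is why a long scale with highly correlated subscales can produce a very high total-score alpha.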

Results

Data from a total of 183 participants were included in the analysis. The average subscale scores for the ICS-EI varied, reflecting differences across the perceived dimensions of implementation climate. On a scale ranging from 0 to 4, the highest average score was observed for focus (M = 2.97; SD = .97), followed by educational support (M = 2.62; SD = 1.17) and recognition (M = 2.49; SD = 1.19). In contrast, rewards had the lowest average score (M = 1.22; SD = 1.39), indicating that participants perceived minimal reward for implementation efforts. Other subscales, including use of data (M = 2.03; SD = 1.33), existing supports (M = 2.06; SD = 1.28), and EBP integration (M = 2.11; SD = 1.27), showed low to moderate levels of endorsement.

Factor Structure of ICS-EI

The EFA yielded a well-defined loading pattern in which items exhibited primary loadings on their intended factors and minimal cross-loadings on non-target factors. The resulting seven-factor solution was readily interpretable based on the theoretical framework and the original scale. In addition, the seven-factor model accounted for 82% of the total variance, which indicates that the extracted factors captured a substantial proportion of the variance in the item set. Overall, the EFA results provide empirical support for the proposed seven-factor structure and suggest that the scale reflects distinct yet related dimensions of the construct. Next, the seven-factor CFA provided further evidence of the scale’s validity, revealing good model fit with a comparative fit index (CFI) of .960, a Tucker–Lewis index (TLI) of .952, a root mean square error of approximation (RMSEA) of .046, and a standardized root mean square residual (SRMR) of .039. Based on the Hu and Bentler (1999) criteria, the model fit indices were acceptable (i.e., RMSEA < .05, CFI and TLI > .95, and SRMR < .08). The omega coefficients also showed high reliability: .91 (Focus), .91 (Use of data), .91 (EBP integration), .89 (Existing supports), .88 (Recognition), .87 (Educational support), and .80 (Rewards). Together, these indices indicate that the seven-factor structure of the ICS-EI is well supported by the data, complementing the findings from the EFA and supporting the scale’s use for measuring the intended constructs.
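The comparison of the reported fit indices with the cutoffs stated above can be written out explicitly. This is a small illustrative check in Python, not part of the authors' analysis; the values and cutoffs are copied from the text, while the `check_fit` helper and the dictionary layout are assumptions made for the example.

```python
import operator

# Fit indices reported in the text for the seven-factor CFA.
reported = {"CFI": 0.960, "TLI": 0.952, "RMSEA": 0.046, "SRMR": 0.039}

# Cutoffs as stated in the text (attributed there to Hu & Bentler, 1999).
cutoffs = {
    "CFI": (operator.gt, 0.95),
    "TLI": (operator.gt, 0.95),
    "RMSEA": (operator.lt, 0.05),
    "SRMR": (operator.lt, 0.08),
}


def check_fit(values, rules):
    """Return {index_name: True/False} for each cutoff rule."""
    return {
        name: compare(values[name], threshold)
        for name, (compare, threshold) in rules.items()
    }


fit_ok = check_fit(reported, cutoffs)
for name, ok in fit_ok.items():
    status = "meets" if ok else "does not meet"
    print(f"{name} = {reported[name]:.3f} {status} the stated cutoff")
```

Note that the TLI of .952 clears its cutoff only narrowly, so the conclusion of good fit depends on the exact thresholds adopted.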

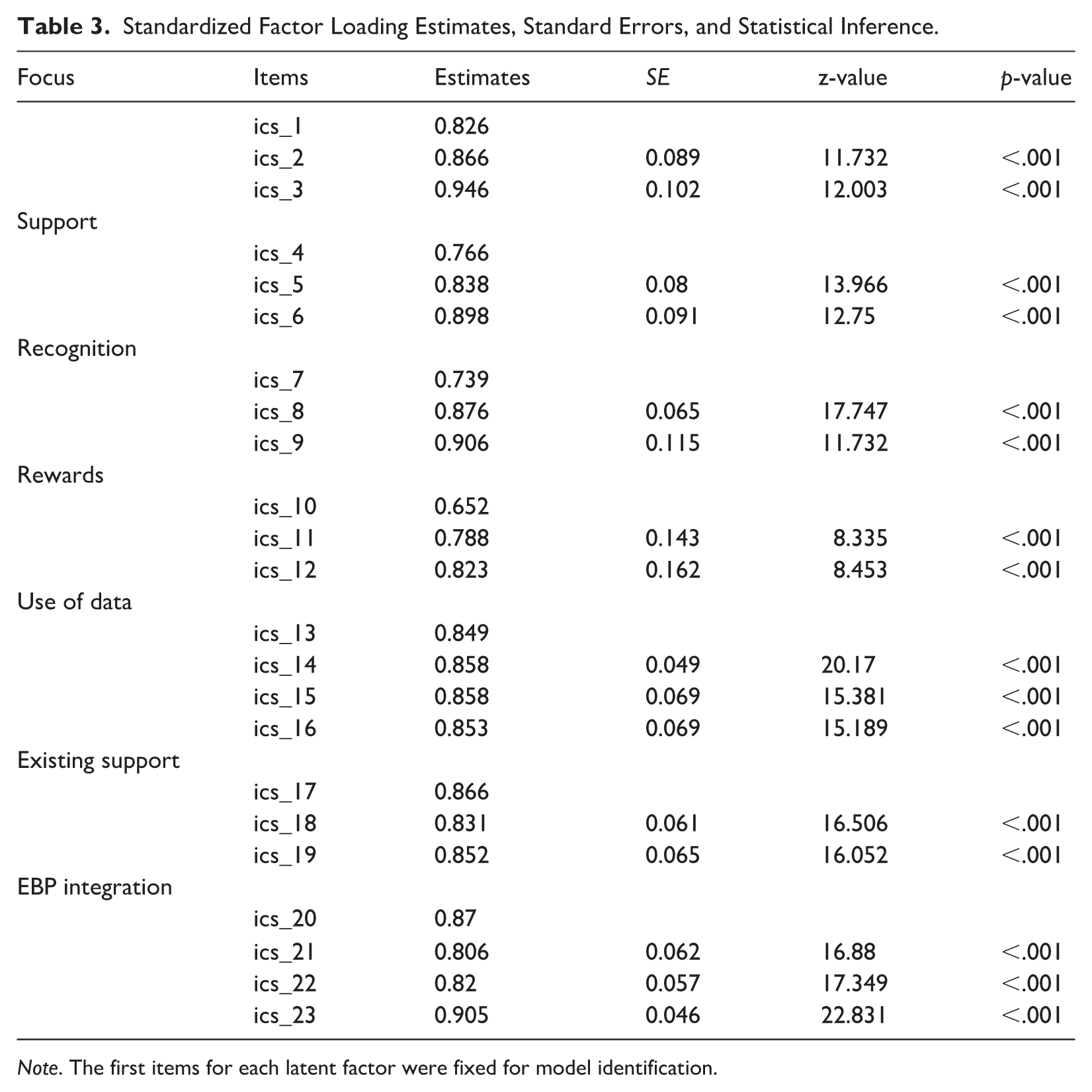

Table 3 shows the standardized factor loading estimates from the seven-factor CFA model. Overall, the standardized factor loadings were high, ranging from .65 to .95 with an average of .84, indicating that individual items were strongly related to their latent factors and have strong measurement properties. The standard errors of the factor loadings were also acceptable, ranging from .05 to .16 with an average of .08, implying that the factor loadings were estimated with reasonable precision.

Table 3. Standardized Factor Loading Estimates, Standard Errors, and Statistical Inference.

Note. The first items for each latent factor were fixed for model identification.
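To show how standardized loadings relate to the omega coefficients reported earlier, the sketch below uses the common congeneric-model formula with uncorrelated errors, omega = (Σλ)² / ((Σλ)² + Σ(1 − λ²)). This is an assumption-laden illustration in Python: the loadings are hypothetical rather than taken from Table 3, the function name `mcdonald_omega` is invented for the example, and omega estimated for ordinal items in R may be computed differently and give different values.

```python
def mcdonald_omega(loadings):
    """Omega for one factor from standardized loadings.

    Assumes a congeneric model with uncorrelated errors, so each
    item's unique variance is 1 - loading**2.
    """
    if not loadings:
        raise ValueError("at least one loading is required")
    if any(abs(value) >= 1 for value in loadings):
        raise ValueError("standardized loadings must lie strictly between -1 and 1")
    common = sum(loadings) ** 2
    unique = sum(1 - value ** 2 for value in loadings)
    return common / (common + unique)


# Hypothetical three-item subscale; not values from the study.
hypothetical_loadings = [0.80, 0.80, 0.80]
omega = mcdonald_omega(hypothetical_loadings)
print(round(omega, 3))
```

Under this formula, omega increases with both the size of the loadings and the number of items, which is one reason shorter subscales tend to show lower coefficients.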

Internal Consistency Reliability and Internal Structure

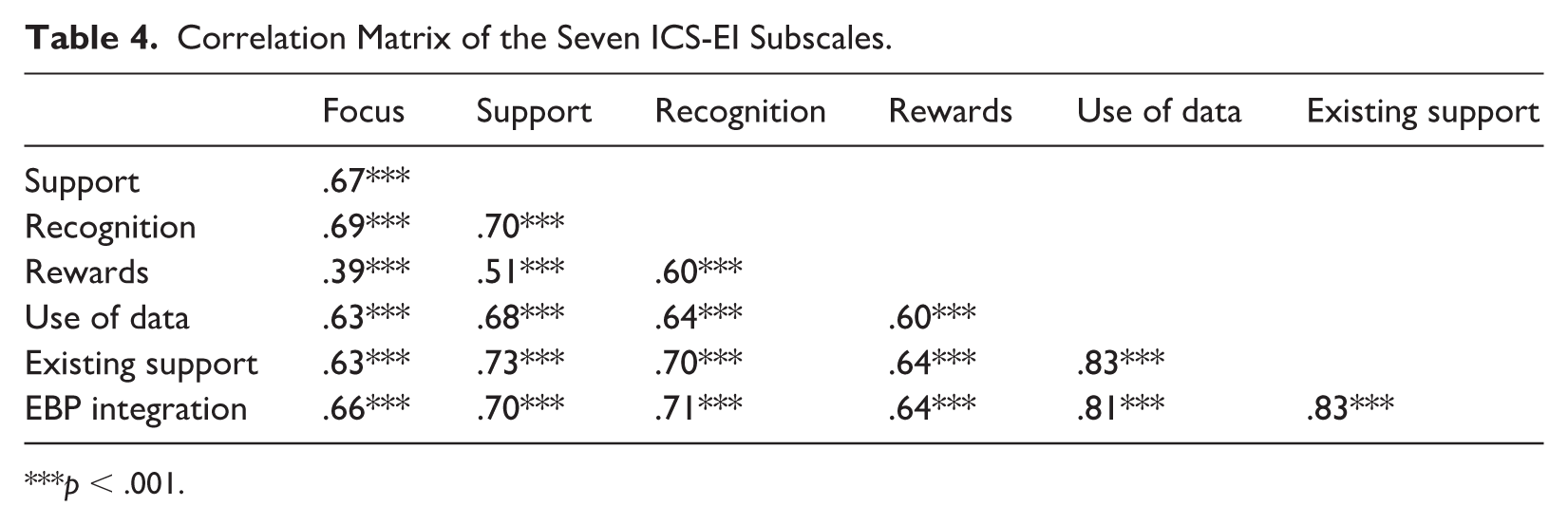

In terms of psychometric properties, the ICS-EI exhibited excellent internal consistency for total scores in this sample (α = .97), indicating that the items in the scale consistently measure the construct of implementation climate. To evaluate construct validity, correlations among the ICS-EI subscales were analyzed. Table 4 shows the results. All correlations were positive and significant, ranging from .39 (between the Focus and Rewards subscales) to .83 (between the Existing supports and EBP integration subscales). The average correlation was .67, indicating that the subscales are strongly related to one another.

Table 4. Correlation Matrix of the Seven ICS-EI Subscales.

p < .001.

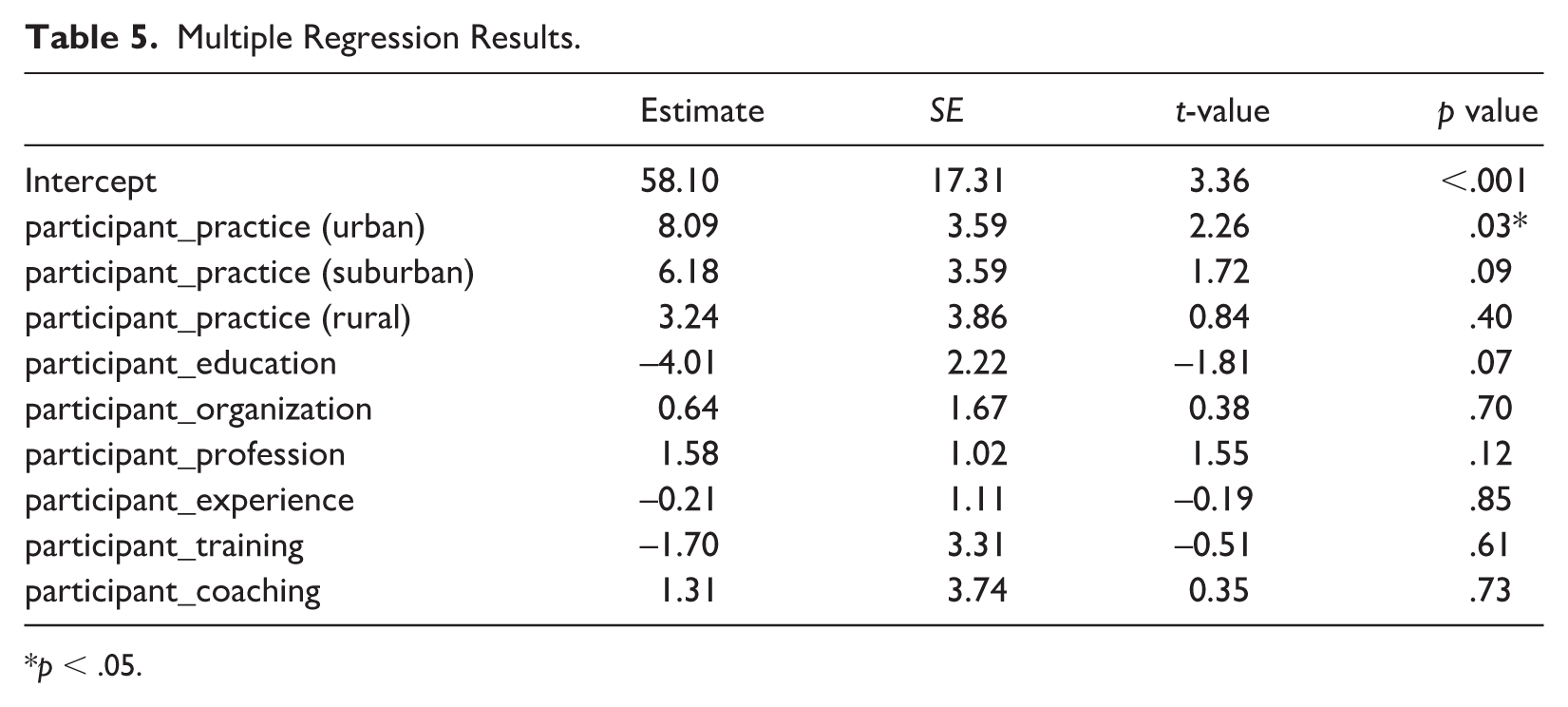

Regression Analysis

A multiple regression analysis was conducted to examine the relations between demographic variables and total ICS-EI scores. Table 5 shows the multiple regression coefficients. Only one predictor, practicing in an urban area, significantly predicted ICS-EI scores: participants who practiced in urban settings reported significantly higher ICS-EI scores than those who practiced in suburban and rural areas (p < .05).

Table 5. Multiple Regression Results.

p < .05.

Discussion

The purpose of this study was to examine the psychometric properties of the ICS-EI. Using data from 183 EI providers across 33 states and Washington, D.C., we found that the ICS-EI demonstrated high internal consistency, significant intercorrelations among its subscales, and evidence of a multidimensional structure. A CFA supported a seven-factor model with robust fit indices, highlighting the distinct yet related components of implementation climate measured by the ICS-EI. In addition, the total ICS-EI score was significantly predicted by one practice characteristic (practicing in an urban setting), which provides some additional support for the scale’s validity. The results of this study are consistent with prior studies that examined the ICS (e.g., Ehrhart et al., 2014; Thayer et al., 2022) in different contexts and populations, supporting its use in evaluating implementation climate within EI settings.

The descriptive analysis revealed notable variations in subscale scores, with the highest ratings observed for ‘focus on EBP’ and ‘educational support’. Conversely, participants rated the ‘rewards’ subscale lowest, which is consistent with prior studies that reported rewards do not necessarily align with the cultures in educational settings, and that education professionals are not often incentivized financially (Locke et al., 2019). Given these findings, EI agencies may benefit from considering explicit reward structures to acknowledge and externally reinforce staff engagement with EBPs. This may be particularly important yet complicated within EI systems using independent contracting, where there are fewer incentives, and even disincentives, tied to the use of EBPs. Furthermore, correlations among the constructs indicated robust associations between factors. Specifically, the ‘support’ and ‘recognition’ subscales demonstrated strong correlations, suggesting that these two constructs are closely related within the implementation climate context.

This newly validated ICS-EI can be a tool that is used flexibly, depending on the specific needs and contextual factors of an EI agency. For example, an EI agency that primarily utilizes independent contractors might focus more on subscales related to ‘educational support’ or ‘existing supports’ to identify gaps in professional development opportunities. Conversely, agencies facing workforce shortages or high turnover rates could emphasize the ‘recognition’ and ‘rewards’ subscales to strategically develop incentives and retention strategies. In addition, given the variability across states and differing implementation structures, agencies can selectively target specific dimensions, such as ‘use of data’ or ‘EBP integration’, to prioritize areas of greatest need or align their improvement efforts with state-level mandates or policies. This can be achieved by conducting internal implementation climate assessments using tools like the ICS-EI to identify subscale-level weaknesses. Based on these findings, agencies can then collaborate with local or state-level implementation teams, professional development providers, or technical assistance networks to design focused supports. These findings suggest that while there is a generally stronger focus on educational support, areas such as rewards and data-driven decision-making may require further attention to enhance the overall implementation climate.

Improving the implementation climate is crucial for enhancing the delivery and sustainment of EBPs in publicly funded systems of care. A positive implementation climate can directly support providers in adopting and consistently utilizing EBPs by clearly communicating organizational expectations, providing targeted resources and supports, and reinforcing effective practices through recognition and rewards (Lyon et al., 2018). Given that publicly funded systems often experience resource constraints, workforce shortages, and variability in service delivery (Pickard et al., 2024), fostering a strong implementation climate can mitigate these challenges, ultimately leading to improved service quality and better outcomes for children and families receiving EI services. It is important to note that the way in which implementation climate is enhanced may differ across EI systems with varying structures (e.g., those that use independent contracting versus those contracting with agencies that employ providers). Furthermore, an improved climate can help reduce disparities in developmental outcomes for children and families who need these services the most, particularly during this time of heightened systemic and sociopolitical uncertainty that severely threatens service access for all families. Thus, the ICS-EI will also be important to characterize how aspects of implementation climate are overlapping or distinct across varying EI system structures.

Specifically, the ICS-EI can be used in pre- and post-assessments to measure changes in organizational climate following a targeted intervention, such as coaching, leadership engagement, or data feedback systems, to improve EBP implementation. This makes it a valuable tool for researchers and administrators aiming to understand how implementation strategies influence provider experiences and EBP uptake within EI agencies. Furthermore, special education legislation and the relevant mandate under the IDEA require that services be grounded in scientifically validated practices. The ICS-EI can play a critical role in ensuring that agencies meet these legal and ethical obligations by supporting the development of infrastructure that facilitates sustainable and equitable use of EBPs across diverse EI contexts.

Implications for Research and Practice

In the various public health contexts in which the ICS has been used, it has served as a practical decision-making tool, helping organizations to (a) assess their readiness to implement EBPs, (b) identify strengths and areas for growth in their implementation climate, and (c) inform targeted strategies to improve service delivery. For example, schools serving students with disabilities may use the ICS to guide professional development planning (e.g., Williams et al., 2022), and community-based mental health agencies can leverage their ICS data to align organizational practices with EBP adoption goals for enhanced clinical outcomes (e.g., Woodard et al., 2021). The ICS-EI can serve as a pre–post measure to evaluate changes in implementation climate following specific implementation strategies within EI systems. By assessing climate at baseline and again after targeted implementation strategies, such as coaching, professional development, or leadership engagement, researchers and administrators can determine the effectiveness of these strategies and understand how shifts in climate may mediate the adoption and sustainment of EBPs over time.

Further consideration is warranted to ensure optimal implementation of EBPs in EI settings, which have unique determinants. For example, EI providers have varying degrees of expertise and come from different professional backgrounds, such as speech-language pathology, occupational therapy, and special education. Therefore, in practice, it would be important to consider how to assess and improve the implementation climate given the multidisciplinary nature of the EI workforce. Given how underfunded EI systems are, it would also be important to consider nonfinancial incentives to effect change. Some providers may also contract directly with the state, in which case opportunities for continuing education depend largely on their own motivation or the minimal requirements set by the state or other regulatory bodies.

As noted, the SICS was adapted from the original ICS, partly by revising and adding three subscales, including Existing Supports to Deliver EBPs, Use of Data to Support EBPs, and Integration of EBPs (Thayer et al., 2022). Consistent with this change, the ICS-EI retained the same seven-factor structure as the SICS while contextualizing item language to EI systems. Compared with other organizational constructs such as implementation readiness (Shea et al., 2014) or leadership for implementation (Aarons, Ehrhart, Farahnak, & Hurlburt, 2014), the ICS-EI focuses specifically on the shared perceptions of expectations, supports, and rewards for EBP use, which makes it conceptually narrower but complementary to these broader contextual measures.

Limitations

This is the first article to adapt the ICS for EI settings, and the findings of this study should be interpreted with some limitations in mind. First, although we implemented several safeguards against inauthentic responses and provided detailed survey prompts, we cannot fully rule out the possibility of response bias or inaccurate reporting, particularly given the online nature of data collection. Second, we may not have fully captured the wide range of EI organizational structures, particularly among underrepresented regions in the United States. Relatedly, despite our efforts to recruit a diverse sample, the majority of participants identified as White and held advanced degrees, which is consistent with the EI field’s demographic composition. In addition, because the study did not collect organizational identifiers or examine inter-rater agreement, we were unable to assess whether perceptions were shared within organizations. Thus, the results should be interpreted as individual-level perceptions of implementation climate within participants’ respective organizations. Next, we did not include a standardized definition of EBP so that responses would reflect participants’ natural understanding and contextual use of the term, but we acknowledge that participants may therefore have interpreted the term differently. In addition, not all participants worked within public EI systems. Some participants served children up to age 5, and some reported contracting with the state to provide services under Part C of IDEA while working in for-profit clinics or university medical centers. Finally, although the ICS-EI was conceptually derived from the ICS and SICS, the current study did not include a formal content validity assessment (e.g., expert rating) to confirm that all items adequately capture the construct of implementation climate within EI contexts. As such, it remains possible that some items may not fully represent the unique features of EI systems.
Future research should include systematic expert review and stakeholder feedback to further establish the content validity and contextual fit of the ICS-EI.

Conclusion

This study provides strong initial evidence supporting the reliability and validity of the ICS-EI for assessing implementation climate in EI settings. This tool offers a nuanced understanding of the organizational factors that influence EBP uptake. As the field continues to prioritize the equitable delivery of high-quality services, tools like the ICS-EI can guide both research and practice in identifying and addressing structural barriers to implementation. Ultimately, strengthening the implementation climate within EI systems is a critical step toward improving provider practices and reducing disparities in outcomes for young children and families.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by the NCATS KL2 Multidisciplinary Clinical Research Career Development Award (KL2TR002317) awarded to Dr. James Lee.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.