Abstract

High-leverage practices (HLPs) are foundational in special education teacher preparation, yet programs lack systematic approaches for teaching and assessing them. This article presents a five-step design process for creating HLP rubrics that serve dual instructional and assessment purposes. The process was developed by analyzing lessons learned during the creation of the High-Leverage Practice Rubric of Assessment (HLPR-A) from 2019 to 2021. Using HLP 5 (interpreting and communicating assessment information) as an example, the methodology includes reviewing course objectives and identifying aligned HLPs, choosing authentic tasks, identifying task components, defining observable behaviors, and developing the final rubric. Teaching examples demonstrate how the HLPR-A functions as both an instructional tool and an assessment measure using Grossman’s practice-based framework. This evidence-based methodology provides faculty with a systematic approach for creating effective HLP rubrics.

Keywords

High-leverage practices (HLPs) are practice-based, foundational teaching practices that all beginning special education teachers should be able to implement effectively. Developed in the field of special education (McLeskey et al., 2017), HLPs are core practices essential for instructing students with disabilities across grade levels, schools, and content areas (Billingsley et al., 2019; McLeskey et al., 2017). Special education teachers face unique challenges in their classrooms, including managing challenging behaviors, individualizing instruction for students with diverse learning needs, and collaborating with families and other professionals (Billingsley et al., 2019). Given the importance of HLPs, many teacher preparation programs have adopted them as a framework for coursework (McLeskey et al., 2017), and there is growing evidence that HLPs help preservice teachers build foundational teaching skills (Nelson et al., 2022).

However, effectively preparing special education teachers to use HLPs presents two challenges: teaching HLPs and assessing preservice teachers' proficiency with them. Despite their inclusion in many teacher preparation programs, HLPs are often not systematically taught or assessed (McLeskey et al., 2018). Several barriers hinder the practice-based implementation of HLPs, including curricula rooted in theoretical instruction, limited financial resources, and faculty instructional preferences (Cohen & Wyckoff, 2016; Wiseman, 2012). Teaching HLPs requires faculty expertise in practice-based approaches. It also requires opportunities for preservice teachers to move beyond theoretical knowledge and apply their learning in practical contexts (McLeskey et al., 2018).

Assessing HLPs presents its own challenges because it requires authentic practice experiences and authentic tools that measure preservice teachers' performance in realistic teaching contexts. While rubrics are widely used in higher education, faculty often create them without systematic design processes or validation studies, leading to tools with questionable reliability and validity (Jonsson, 2014). These problems of unsystematic design and uncertain validity and reliability extend to HLP assessments. Special education teacher preparation needs valid and reliable rubrics that can both teach and assess the HLPs; however, systematic approaches for developing such tools remain limited (McLeskey et al., 2017).

The five-step design process presented here was developed by systematically analyzing the lessons learned during the creation and implementation of the High-Leverage Practice Rubric of Assessment (HLPR-A) from 2019 to 2021. This work began with embedding virtual simulation for parent–teacher conference practice (Luke & Vaughn, 2021), which led to the development of the HLPR-A. Following validation studies that confirmed the rubric’s reliability and validity (Ford et al., 2022), the development and implementation process was analyzed to identify generalizable design principles. The following methodology presents these evidence-based insights, providing faculty with a systematic five-step approach for creating their own HLP rubrics and using them as both instructional and assessment tools.

High-Leverage Practices in Special Education

Introduced initially as foundational practices to be included in teacher preparation programs (Billingsley et al., 2019; McLeskey et al., 2017), HLPs have since been reorganized into four domains: collaboration, data-driven planning, instruction in behavior and academics, and intensifying and intervening as needed. This article focuses on HLP 5: interpreting and communicating assessment information with stakeholders to collaboratively design and implement educational programs. HLP 5 is particularly critical in special education because teachers must regularly interpret formal and informal assessment data, communicate results to families who may have limited understanding of technical terminology, and collaborate to make high-stakes educational decisions that affect students' services and placement (McLeskey et al., 2017).

Despite the recognized importance of HLPs in special education teacher preparation (Billingsley et al., 2019), programs still need structured approaches for teaching and assessing them. Researchers have conducted empirical studies involving HLPs and performance-based assessment, with some establishing rubric reliability (e.g., Luke et al., 2024) and others not using validated rubrics (e.g., Driver et al., 2024). While these efforts demonstrate that reliable HLP rubrics can be developed, the field still lacks systematic design methodologies that faculty can use to create validated assessment tools across different HLP contexts. Developing both instructional approaches and corresponding validated assessment tools is essential for maximizing the impact of HLPs in special education teacher preparation. Systematic HLP implementation would be strengthened by the creation of valid and reliable HLP rubrics that can be used confidently across programs to support preservice teachers’ learning.

Teaching HLPs Through Practice-Based Approaches

Since HLPs are inherently practice-based, rubrics designed to teach and assess them must also be grounded in practice-based principles. Practice-based teacher education emphasizes that novices learn professional practices most effectively when they can see examples of the practice, understand its component parts, and have opportunities to rehearse it in controlled settings before applying it with real students (Grossman et al., 2009). This article draws on the framework proposed by Grossman and colleagues, which supports meaningful, practice-based teacher education through three interrelated components: representations of practice (making invisible work visible), decompositions of practice (breaking complex tasks into teachable parts), and approximations of practice (providing realistic rehearsal opportunities). For example, when teaching HLP 5, representations might include watching videos of parent–teacher conferences; decompositions might involve breaking the conference into discrete skills, such as describing assessments and interpreting scores; and approximations might include practicing conferences with a simulated parent of a student with a disability in low-stakes environments (e.g., role-play or simulation). This framework provides the theoretical foundation for both designing and implementing HLP rubrics that serve dual instructional and assessment purposes.

Assessing HLPs Through Authentic Assessment and Rubrics

Teaching HLPs using practice-based approaches naturally aligns with authentic assessment. Authentic assessments engage learners in meaningful, real-world tasks and help preservice teachers make connections to professional practice (Butakor & Ceasar, 2021; Darling-Hammond & Snyder, 2000). Unlike traditional assessments that focus on knowledge recall and understanding, authentic assessments require preservice teachers to demonstrate their knowledge through the performance of skills. In special education teacher preparation, many programs require preservice teachers to demonstrate proficiency in HLPs in contexts that approximate real teaching environments, such as simulated parent–teacher conferences. One of the most practical tools for teaching and evaluating performance on authentic tasks is the rubric, which clarifies expectations, supports learning, and assesses student performance (Montgomery, 2000). Analytic rubrics are especially helpful for preservice teachers because they identify specific skills and describe what successful performance looks like. They also support metacognition by helping learners track their progress over time.

However, despite the frequent use of rubrics in education, research examining their validity and reliability remains limited (Jonsson, 2014). Faculty often create rubrics based on personal preferences or course norms, which can lead to vague descriptions or inconsistent application. In HLP contexts, this practice can produce rubrics that present practice prescriptively rather than illustratively. Such rubrics are often developed by faculty working in isolation, without the benefit of cross-program collaboration and feedback. While some researchers have created valid and reliable rubrics to support HLP use (Ford et al., 2022; Johnson et al., 2019), the field still lacks systematic approaches for developing such tools. This gap is particularly problematic for special education faculty who need reliable tools to assess complex practices like HLP 5, where performance quality can significantly affect family engagement and educational decision-making.

In light of the need for valid and reliable HLP rubrics, the HLPR-A was developed and validated as both an instructional and an assessment tool for preservice teachers' performance of HLP 5: interpreting and communicating assessment results (Ford et al., 2022). Drawing on the iterative development and validation of the HLPR-A, the following five-step design process provides faculty with an evidence-based framework for creating rubrics that systematically teach and assess HLPs.

The High-Leverage Practice Rubric for Assessment (HLPR-A)

Five-Step Rubric Design Process

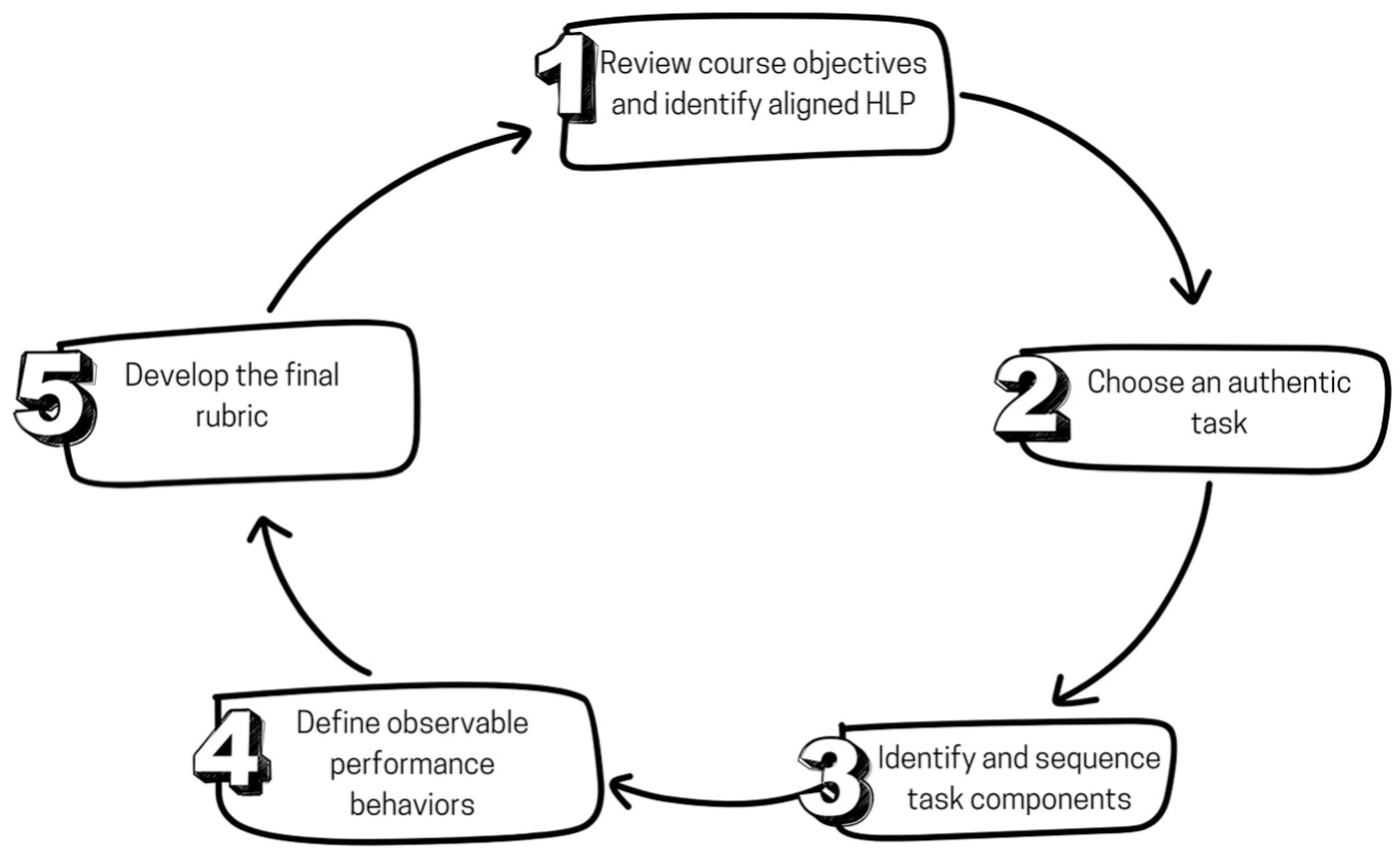

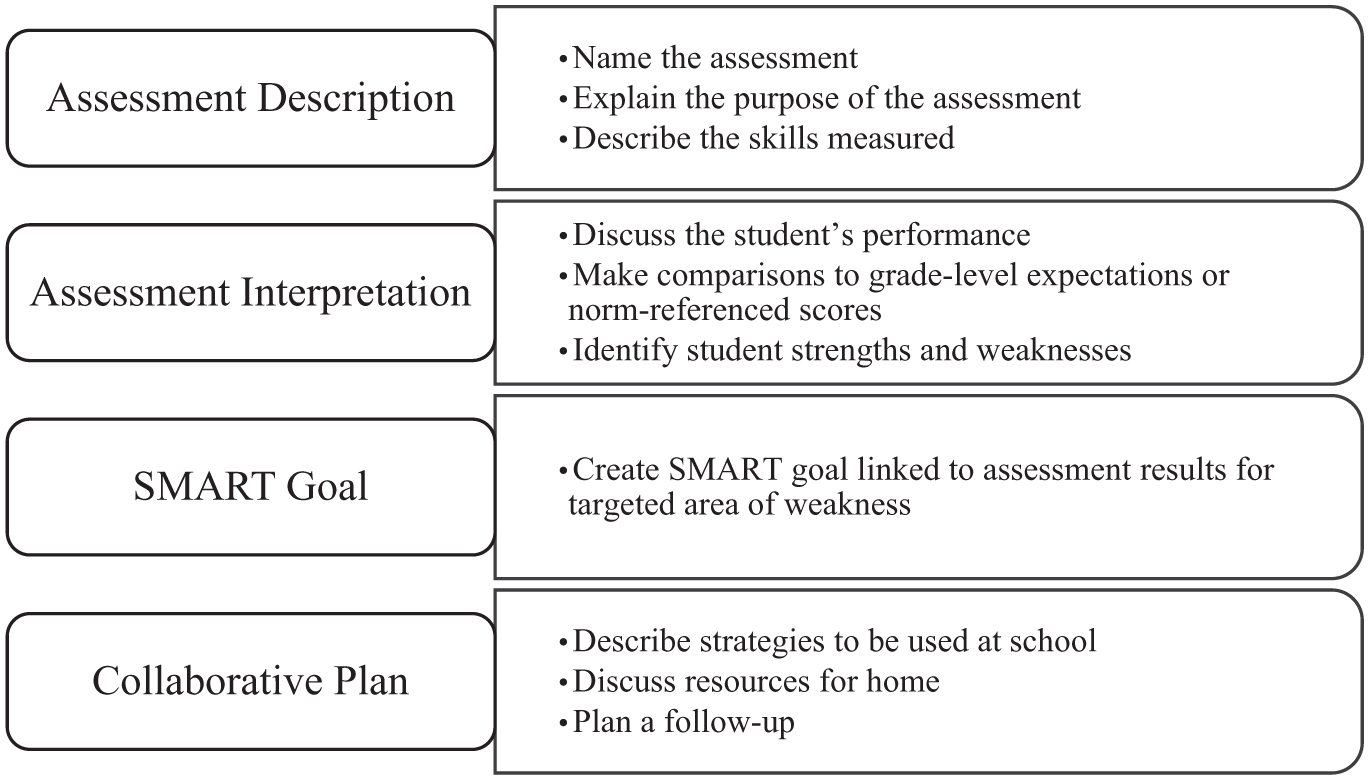

Faculty who want to create rubrics for teaching and assessing HLPs can follow a structured, five-step process (see Figure 1). The HLPR-A is an analytic rubric developed to teach and evaluate special education preservice teachers' performance of HLP 5 (interpreting and communicating assessment information) during the authentic task of a parent–teacher conference. Because the five-step process was developed and refined through the iterative creation of the HLPR-A from 2019 to 2021, the HLPR-A serves as the running example for each step, drawing on lessons learned during its creation.

Figure 1. Five-Step Rubric Design Process for Teaching and Assessing High-Leverage Practices.

Step 1: Review Course Objectives and Identify Aligned HLP

The first step in the rubric design process is to review the course objectives and organize them from lower-order to higher-order thinking. Next, review the HLPs and identify those that align with the course content area. Looking at the HLP domains may help narrow the selection. For example, a special education methods course may lead faculty to review instruction-focused HLPs, while a course centered on interpreting data may point to assessment HLPs. When selecting an HLP, choose the one(s) that best match both the cognitive level and the content focus of the course objectives. Eliminate HLPs that address different aspects of the broader domain, and prioritize those that can be authentically practiced within the course. Finally, faculty may confirm that the selected HLP aligns with relevant field standards. Because most course objectives are already aligned with those standards, this step serves as a double-check.

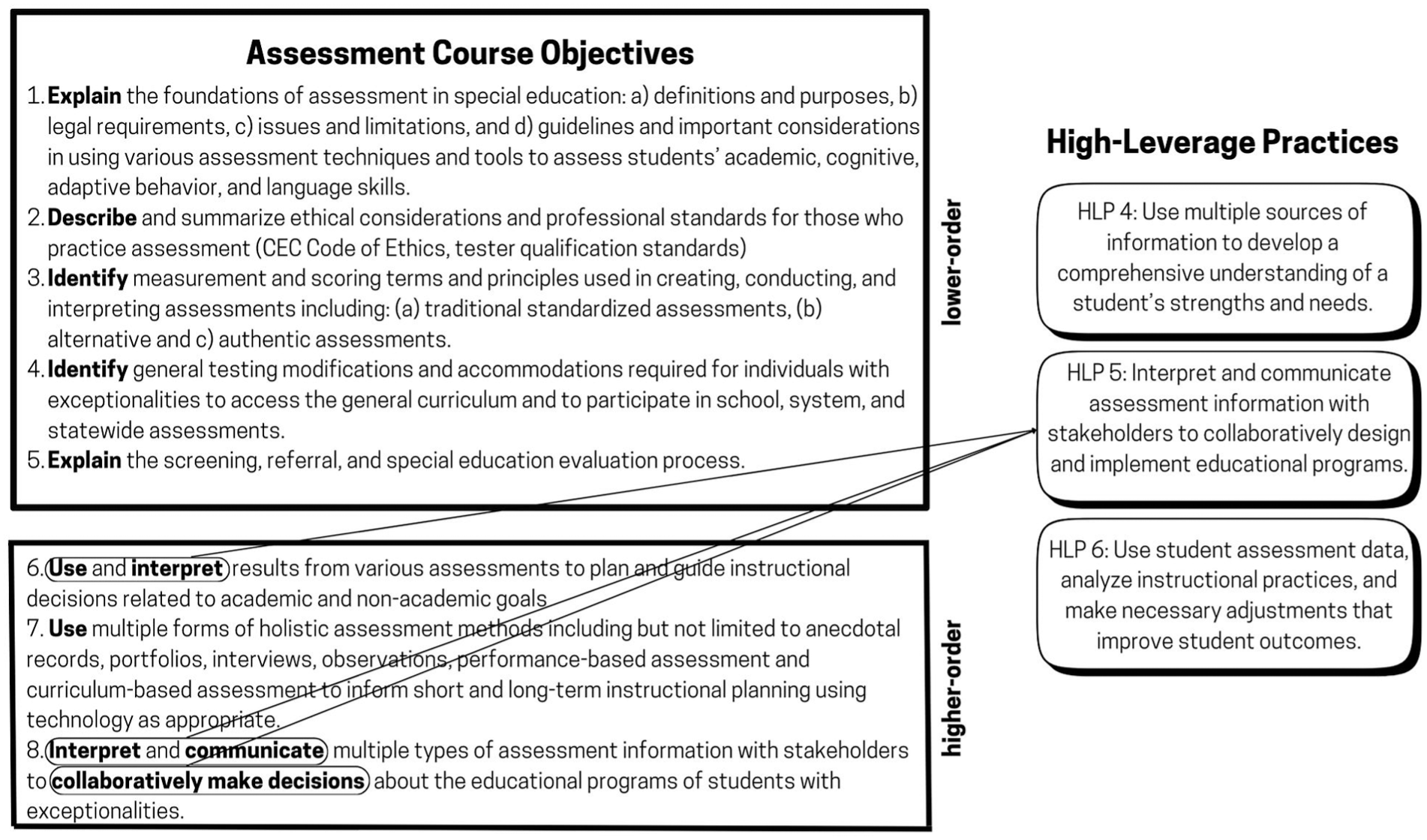

For example, when designing the HLPR-A rubric, the course objectives for the undergraduate special education assessment course were organized into lower-order thinking objectives (i.e., describe, identify, and explain) and higher-order thinking objectives (i.e., interpret, use, and communicate). The course objectives emphasized interpreting and communicating assessment results, which prompted a review of the assessment-related HLPs. After considering several options, HLP 5, interpreting and communicating assessment information with stakeholders, was selected because it aligned most closely with the course objectives that emphasized higher-order cognitive skills. HLP 4 and HLP 6 were also reviewed, but they focused more on gathering data or adjusting instruction. HLP 5 directly addressed the course’s instructional goals and the essential skill of sharing assessment results with families and professionals.

Once HLP 5 was selected, it was aligned with the Council for Exceptional Children (CEC) Professional Practice Standard 4: Assessment. Beginning with the course objectives created a clear connection between what preservice teachers were expected to learn, the targeted HLP, and relevant field standards (see Figure 2). This alignment ensured that the rubric targeted appropriate, measurable performance outcomes. It also served as the foundation for identifying the rubric's criteria and observable performance behaviors. HLP 5 was particularly well suited for instruction because communicating assessment results is a critical responsibility for new special education teachers in the K-5 setting, especially during parent–teacher conferences and IEP meetings.

Figure 2. Alignment of Course Objectives and High-Leverage Practice 5.

Step 2: Choose an Authentic Task

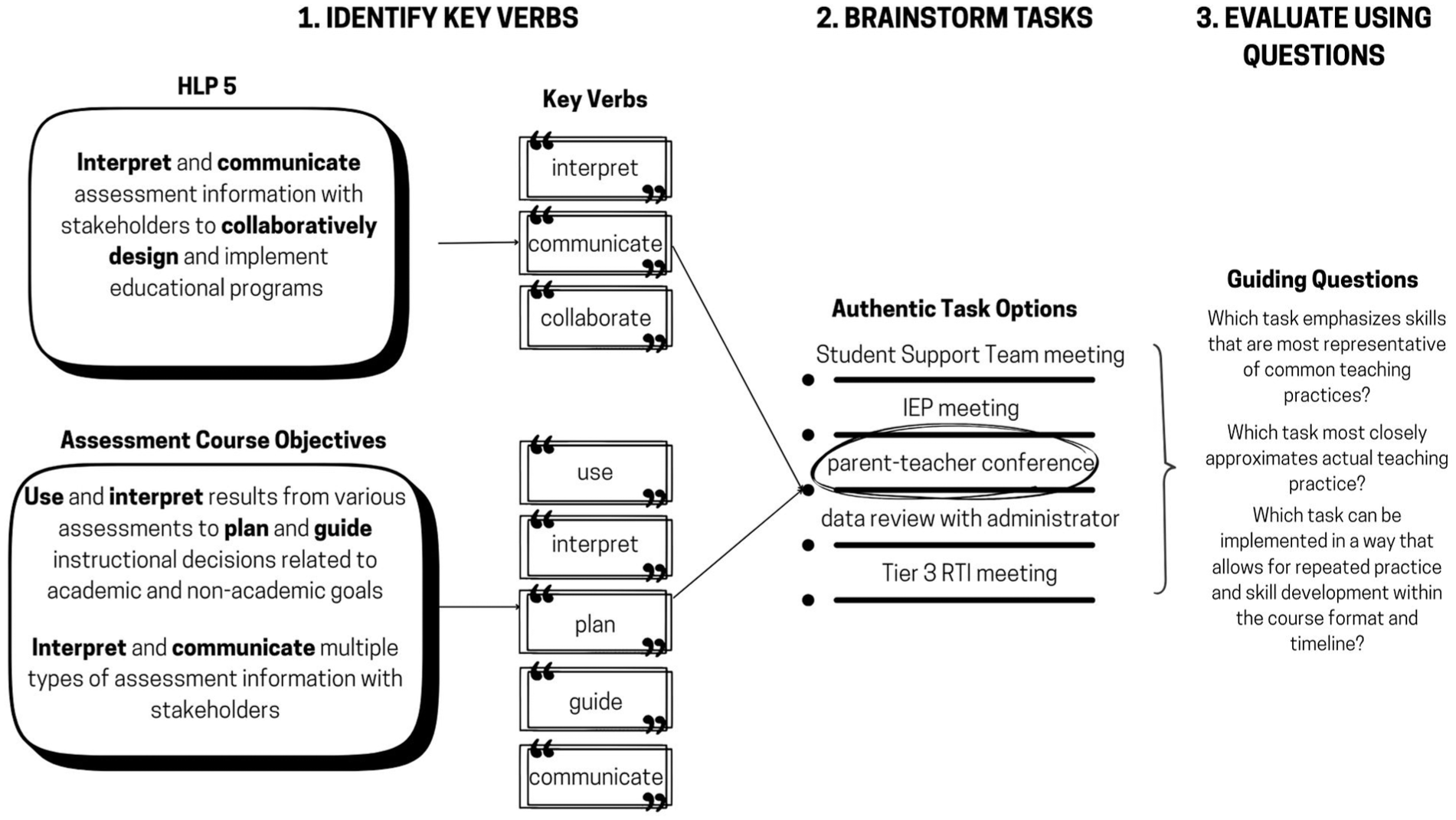

Once the HLP is chosen, the second step is to select an authentic practice task. The task must be one that preservice teachers can authentically practice, and receive feedback on, within the course. First, identify the key verbs embedded in the standard, the HLP, and the course objectives. These action words signal the types of tasks preservice teachers need to perform and provide a productive starting point for brainstorming. Second, brainstorm potential practice tasks that preservice teachers could rehearse in the course. There will be many options. A good place to start is a list of practical, useful tasks drawn from the faculty member's knowledge of the course objectives and professional experience. Third, evaluate each candidate task using three guiding questions: (a) Are the skills emphasized in the task generalizable to common teaching experiences? (b) Does the task meaningfully approximate actual professional practice? (c) Can the task be implemented in a way that allows for repeated practice and skill development within the course format and timeline? See Figure 3 for an illustration of the process for choosing the authentic task.

Figure 3. Process for Choosing Authentic Task.

For the HLPR-A, the key verbs were drawn from HLP 5 and the assessment course objectives. HLP 5 focused specifically on the verbs interpret, communicate, and collaborate, and the course objectives included use, interpret, plan, guide, and communicate. In applying the guiding questions, faculty judged that practicing a parent–teacher conference was likely to be more relevant across both general and special education settings than facilitating a Tier 3 response-to-intervention (RTI) meeting. A basic peer role-play may be helpful, but adding complexity through a faculty role-player or a virtual simulation makes the task more realistic. Because the course was offered online over 8 weeks, the task also had to be feasible and flexible enough to be practiced multiple times, which a conference was. Based on these considerations, a parent–teacher conference was selected as the authentic practice task. Conferences may also address behavior, attendance, or general progress, so to focus specifically on HLP 5, this conference centered on assessment information. Preservice teachers were required to interpret and communicate both formal and informal assessment data for a fictional struggling student.

Step 3: Identify and Sequence Task Components Using Key Verbs

First, break the authentic practice task into smaller, teachable components that preservice teachers can observe (representation), examine as discrete parts (decomposition), and rehearse (approximation). To identify these components, conduct a literature review using key terms related to the task. Focus on practitioner literature that outlines established skills or procedures for performing the task; this is especially useful when no standardized procedure exists. Use professional judgment to select the most practical article for identifying skills. In addition to the literature review, faculty may interview or observe a practicing teacher to identify the specific steps of the task.

Second, list the skills described in the selected article. Then examine the key verbs from the HLP and the course objectives alongside these essential practices, and develop the task components. Third, organize the components by mentally walking through the task sequence, then intentionally remove components that are not directly tied to the course objectives and the HLP. This process involves professional judgment, and different faculty may have valid reasons for including or excluding specific components based on their course context and student needs.

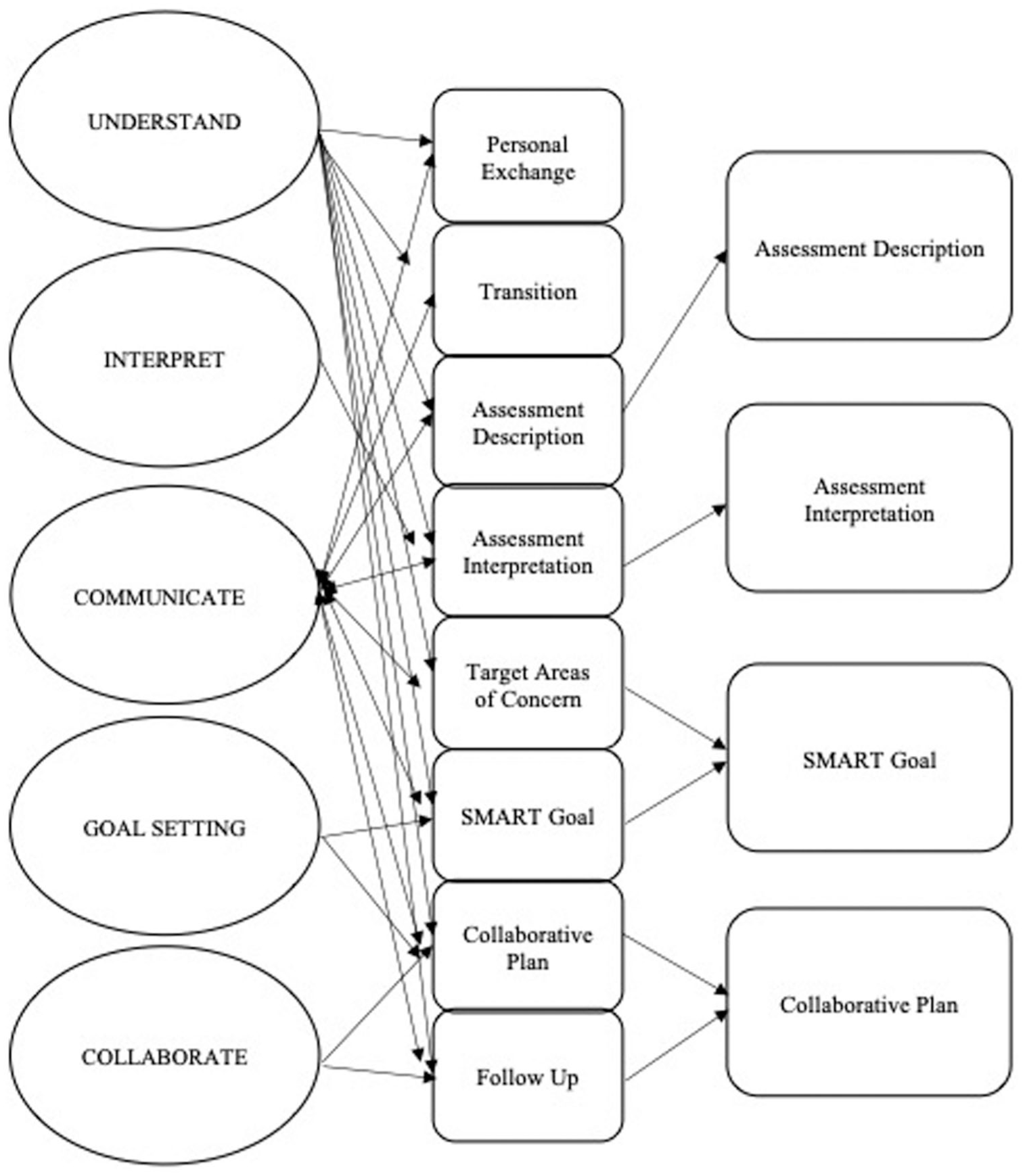

For the HLPR-A project, a brief literature review was conducted using key terms such as parent–teacher conference and parent–teacher collaboration. It focused on practitioner literature rather than research studies in order to identify applied practices. After four or five articles were reviewed, Staples and Diliberto (2010) was selected as the most practical source for identifying parent–teacher conference skills, a professional judgment informed by 15 years of teaching experience. The skills listed in their article (building rapport, preparing communication systems, sharing agendas, action planning, and inviting meaningful parent participation) were compared with the key verbs from HLP 5. Components not directly tied to interpreting and communicating assessment information were intentionally removed. For example, personal exchange and transition components were excluded because they focused on social conversation rather than assessment-related communication. Figure 4 shows how each key verb was mapped to task components that could be practiced during the parent–teacher conference.

Figure 4. Key Verbs Mapped to Parent–Teacher Conference Task Components.

Step 4: Define Observable Performance Behaviors

After mapping the authentic practice task and identifying key components, Step 4 is to define what performance looks like. First, faculty should review the aligned key verbs alongside the structured components of the authentic practice task. Second, write specific, observable performance behaviors for each component to reflect the expected actions preservice teachers should demonstrate when performing the task. Observable performance behaviors are essential for both teaching and assessment because they clarify exactly what preservice teachers should say or do during the task and enable instructors to provide specific feedback on individual components of the task. Third, ensure each behavior is written in specific, concrete terms so it can be taught, observed, practiced, and assessed.

For the HLPR-A project, faculty reviewed the aligned key verbs alongside the structured components of the parent–teacher conference. Observable performance behaviors were written for each component of the conference (e.g., assessment description, assessment interpretation, SMART goal, and collaborative plan) to reflect the expected actions preservice teachers should demonstrate when performing the conference. These performance behaviors became the rubric criteria in the HLPR-A. They provided a shared understanding for both instructors and preservice teachers, making the rubric both an instructional and assessment tool. Figure 5 illustrates the observable performance behaviors developed for each parent–teacher conference component. For example, the assessment description component included specific behaviors, such as naming the assessment, explaining its purpose, and describing the skills it measures.

Figure 5. Structured Components of Parent–Teacher Conference Aligned to Observable Performance Behaviors.

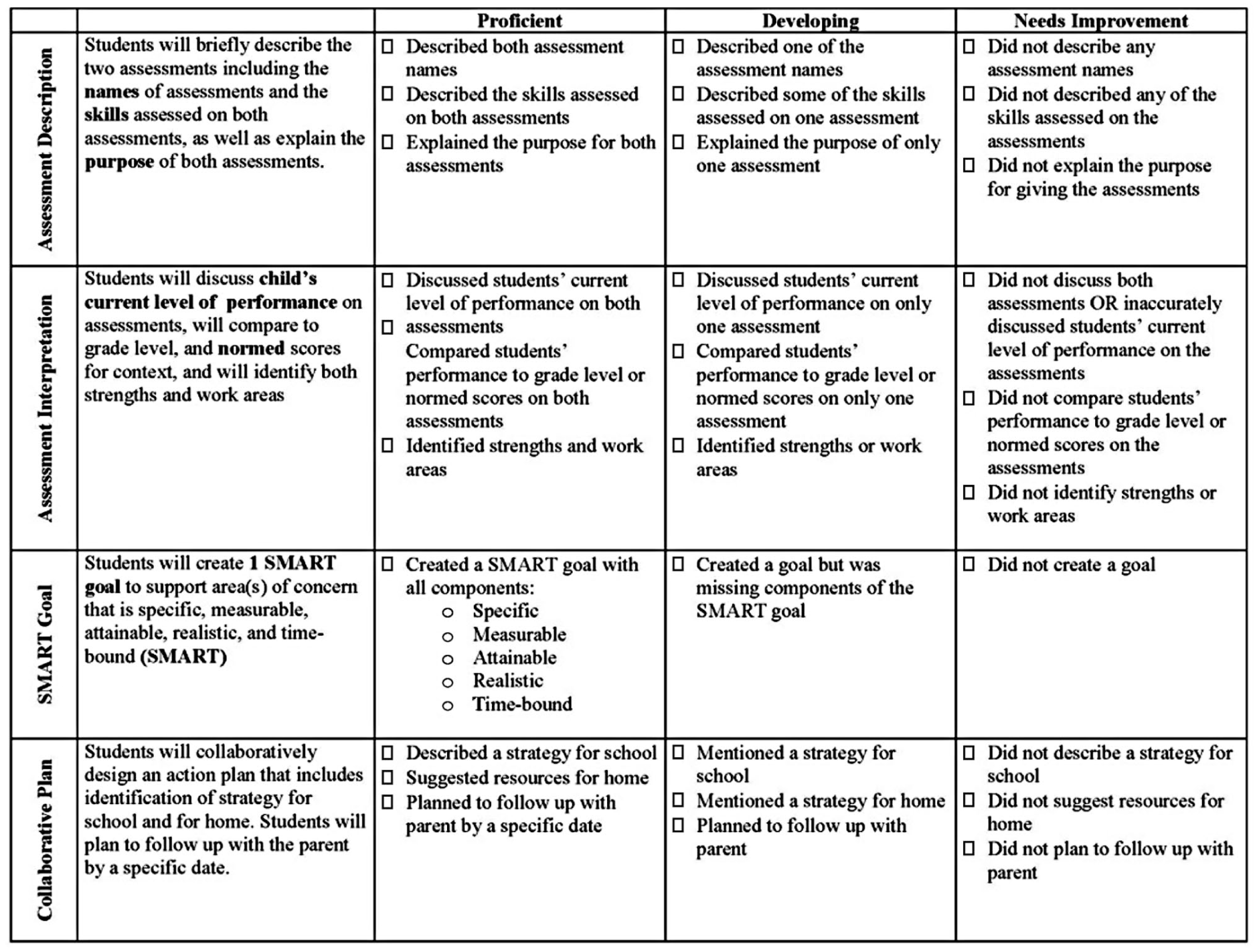

Step 5: Develop the Final Rubric

After identifying the authentic practice task components and defining the performance behaviors, the final step is to develop the rubric for both instruction and assessment. Creating the rubric includes choosing a rubric format, organizing it by components, and selecting and defining performance levels.

Choose an Appropriate Rubric Format

Start by selecting a rubric format that suits the HLP(s) in the course. A holistic rubric yields a single overall judgment of performance. An analytic rubric scores each criterion separately, which makes it especially well suited to HLPs: faculty can evaluate discrete skills and provide targeted feedback on each one.

Organize the Authentic Practice Task by Components

Next, structure the rubric so that each row corresponds to a task component of the authentic practice task. Listing each authentic practice task component in its own row helps preservice teachers clearly identify specific skills they need to develop and allows faculty to provide focused feedback. Avoid combining multiple performance behaviors into a single row, as this creates visual clutter and makes the assessment less precise. Include a brief description of the observable performance behaviors (from Step 4) and use formatting tools like bold or underline to emphasize key elements.

Select and Define Performance Levels

Faculty should select the number of performance levels, label them, and write performance-level descriptions specific enough that other raters can use them to score preservice teachers consistently. Begin by selecting how many performance levels the rubric will include, such as three (e.g., proficient, developing, and needs improvement) or four (e.g., exceeding, proficient, developing, and needs improvement). The number of levels sets expectations for what preservice teachers should achieve. Faculty should consider the learners' experience and the complexity of the skill being assessed. For example, undergraduate preservice teachers with no prior teaching experience may benefit from a three-level rubric (proficient, developing, needs improvement) that focuses on building foundational competence rather than exceeding expectations. Alternatively, graduate students already working in the classroom might effectively use a four-level rubric that includes an exceeding category, because they may already have some of the targeted prerequisite skills and need to focus on refining them. Ultimately, there are no fixed rules for choosing the number of levels, and faculty should consider their goals for their learners when deciding how many levels to include.

After selecting the number of levels for the rubric, choose level labels that clearly communicate expectations. Labels like "proficient" or "meets expectations," "developing" or "growing toward expectations," and "needs improvement" help preservice teachers understand their current performance and what skills need further development. Use explicit, observable descriptions of behaviors so that different raters can score performances consistently. Beginning each description with a verb can help faculty stay focused on operationalizing actions that preservice teachers can demonstrate. Avoid vague qualitative terms like "adequately," "poorly," or "good," and instead use specific, quantitative criteria whenever possible (e.g., two, both). For example, rather than stating "describes assessments adequately," specify "names both assessments, identifies the skills on both assessments, and explains the purpose of both assessments." When quantification is not possible, describe exactly what the preservice teacher says or does at each level:

Proficient level: Include all behaviors identified in Step 4.

Developing level: Specify which essential behaviors are present and which are missing or incomplete.

Needs Improvement level: Identify which critical behaviors are absent or incorrectly performed.

The specificity of performance-level descriptions is crucial from an instructional perspective because preservice teachers use the rubric to plan their performance of the authentic practice task and then self-assess afterward to determine whether they have met expectations. The more specific the level descriptions, the more feedback preservice teachers receive from the rubric itself, reducing reliance on instructor interpretation and making expectations transparent.

Use the Rubric Formatively and Summatively

Plan to use the rubric throughout the course for both formative and summative purposes. Early in instruction, introduce the rubric as a teaching tool to model the performance behaviors for each authentic practice task component and to give specific feedback on preservice teachers’ practice. Later, use the same rubric to evaluate performance, providing consistency across preservice teachers’ learning and assessment. By building the rubric around clearly defined task components and performance behaviors, faculty can create a tool to teach and assess HLPs.

For the HLPR-A project, an analytic rubric was chosen because a holistic rubric was not specific enough to break down each component of the task. Each component of the parent–teacher conference was placed in a row, with the performance behaviors added (see Figure 6). For example, the first component is the assessment description, in which preservice teachers had to briefly describe the two assessments, including the names, skills, and purposes of each. Under proficient, the expected behavior was that preservice teachers could name both assessments, identify the skills each assessed, and explain, in one sentence, the purpose of each assessment. This structure was applied across all four authentic practice task components (i.e., assessment description, assessment interpretation, SMART goal, and collaborative plan), creating a tool for both teaching and assessment.

Figure 6. High-Leverage Practice Analytic Rubric for Assessment-Focused Parent–Teacher Conference.

To implement the HLPR-A, the instructor introduced the rubric at the very beginning of the course to familiarize preservice teachers with parent–teacher conferences and the skills needed to conduct one focused on assessment. Throughout the course, the instructor modeled each task component and had preservice teachers practice it. The instructor then returned a copy of the rubric with their performance marked as formative feedback. At the end of the course, the instructor assessed their final performance in a virtual simulation and returned a scored copy of the rubric.

Teaching With the HLPR-A Rubric

Given that HLPs are grounded in practice-based teacher education, the HLPR-A implementation followed Grossman's practice-based framework for representing, decomposing, and approximating the practice of a parent–teacher conference on assessment. The HLPR-A made the invisible practices of parent–teacher conferencing about assessment explicit in three ways. It represented the skills within a parent–teacher conference, broke those skills into smaller steps through decomposition, and supported preservice teachers as they practiced the skills in conference approximations that were as realistic as possible.

Representations of Practice

The HLPR-A represented an assessment-focused parent–teacher conference by identifying its core skills: describing the assessment, interpreting the assessment, creating goals, and making a plan. Preservice teachers who had only read about parent–teacher conferences would have encountered a single representation, but the HLPR-A provided an explicit model of what such a conference looks like. Preservice teachers used the HLPR-A to identify the components demonstrated when they observed a teacher during field experiences or reflected on a past parent–teacher conference. The HLPR-A was used alongside observing conferences in field placements or on video and reading about them. Together, these gave preservice teachers a clearer understanding of what a conference could look like and how it could be organized. Using the HLPR-A to model the major skills of an assessment-focused conference helped preservice teachers develop a more specific understanding of what such a conference involves. For example, preservice teachers saw that the HLPR-A treated describing an assessment as a separate skill from interpreting its scores. The HLPR-A's specific criteria made this distinction explicit for preservice teachers who might otherwise struggle to differentiate between these two conference components.

Decompositions of Practice

The HLPR-A made the invisible practices of a parent–teacher conference explicit by decomposing the conference into four components: the assessment description, assessment interpretation, SMART goal, and collaborative plan. For each component, it gave preservice teachers concrete steps to accomplish. This decomposition allowed preservice teachers to practice smaller skills and build toward larger conferencing skills, making the task less daunting and more approachable for novices. Planning templates aligned with the HLPR-A further supported the decomposition by helping preservice teachers plan their conferences around the specific performance behaviors identified in the HLPR-A. For example, the planning template guided preservice teachers to prepare for each conference component on the HLPR-A. They wrote a one-sentence description of each assessment, listed the assessment scores for interpretation, drafted a SMART goal based on the fictional student's weaknesses, and identified specific strategies for home and school to support the student and their family. The performance behaviors and the HLPR-A performance levels (e.g., proficient and developing) helped preservice teachers improve their practice by clarifying what each level represented and allowing them to set personal goals for their practice conferences.

Approximations of Practice

Preservice teachers engaged in approximations of practice through self-rehearsals, peer role-plays, and virtual simulations, with the HLPR-A serving as the instructional foundation for practice and feedback. In pairs or small groups, preservice teachers took turns leading mock parent–teacher conferences using a fictional data set and a role-play guide, reinforcing the expectations established by the HLPR-A. These sessions served as low-stakes rehearsals, allowing preservice teachers to build confidence and refine their communication before the final virtual simulation.

During these approximations, the HLPR-A supported systematic observation and feedback. Preservice teachers used the HLPR-A to observe and score video recordings of their own conferences and then applied the same criteria to evaluate their peers' conferences. They were required to justify each rating with observable evidence, a requirement that helped them internalize the rubric content. This process allowed preservice teachers to work through the complexity of parent–teacher conferences while setting personal goals for improvement based on targeted peer and instructor feedback tied to the HLPR-A's specific criteria. The HLPR-A served as a roadmap throughout the instructional process, not just as an assessment tool at the end. As a result, preservice teachers approached the final simulation with a clear understanding of what high-quality performance of HLP 5 in a parent–teacher conference looked like in practice.

Conclusion

This article presented a five-step design process that faculty can use to systematically create analytic rubrics for teaching and assessing any HLP through authentic tasks. Developed and refined through the iterative creation of the HLPR-A from 2019 to 2021, the design process addresses the critical lack of systematic tools for both teaching and assessing HLPs. While HLPs are recognized as foundational practices, many teacher preparation programs struggle to move beyond theoretical instruction and provide preservice teachers with effective opportunities to practice foundational teaching skills and receive targeted feedback on them.

The five-step design process provides faculty with a replicable, adaptable framework across HLP domains, course contexts, and authentic practice tasks. By grounding rubric development in course objectives, key verbs, and observable performance behaviors, faculty can create tools like the HLPR-A that serve as both instructional resources and assessment measures. The HLPR-A demonstrates how this methodology provides preservice teachers with an explicit guide to improving their teaching practices while giving faculty both a teaching tool and a reliable means to assess competency in HLPs.

Footnotes

Authors’ Note

This article builds on previous work published as Luke, S. E., & Vaughn, S. (2021). Using virtual simulations to prepare preservice teachers for parent-teacher conferences. Intervention in School and Clinic, 57(3), 182–188.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.