Abstract

In a world fraught with Grand Challenges, there is a renewed urgency in innovating in both what and how we teach. Teaching innovations (TI) are a vital aspect of our scholarly impact. In this paper, we explore how the maturation of the field and the calls for increased rigor have influenced TI articles. First, we examined the evolution of JME’s editorial policy regarding TI. We find that two major requirements for TI articles have been gradually emphasized: a stronger grounding in the literature, and more robust evidence of effectiveness. Second, we explored how current TI articles have responded to these requirements, by analyzing the TI articles published in JME from 2014 to 2023. While some attempts to meet these new requirements were observed, our findings indicate that the literature could be more fully leveraged and that the evidence of effectiveness presented remains weak. We argue that JME should explicitly affirm its identity as a research journal, which would require that TI articles adopt a research mindset. We outline the overall characteristics of this research mindset, along with specific requirements as they apply to TI articles. Implications for the community, TI articles, and JME are discussed.

In a world fraught with Grand Challenges, there is greater scrutiny than ever before on what and how management students should be taught (Mailhot & Lachapelle, 2024; Shantz et al., 2023). In this challenging context, teaching innovations (TI) are a crucial pathway for achieving a positive impact as management educators (Mason et al., 2024). We must innovate not only in our teaching objectives, but also in the content designed to achieve these objectives, the methods and activities employed to facilitate learning, and the approaches used for assessing learning outcomes (Bajada et al., 2019; Mason et al., 2024). Sharing, disseminating, improving, assessing, and critically questioning these teaching innovations are vital aspects of our scholarly endeavors (Weimer, 2006). Over the past 50 years, by consistently publishing teaching innovations across various realms of business and management education, JME has significantly contributed to the advancement of the field in this regard. TI articles in JME present “cutting-edge, experientially-oriented teaching, and learning approaches,” described “with sufficient detail and evidence of effectiveness for readers to implement the activities in their own environments” (MOBTS, 2024a).

As the field of management education matured and moved forward in its “long march to legitimacy” (Arbaugh, 2008; Rynes & Brown, 2011), calls for more rigor in management education research have been relentless (Arbaugh, 2008; Bacon, 2016a, 2024; Hamdani et al., 2023; Köhler, 2016; Köhler et al., 2017; Schmidt-Wilk & Fukami, 2010). In comparison to educational research (research conducted by faculty in education), management education research (carried out by business and management faculty) has been accused of falling short of scientific standards, standards typically adopted when these same researchers conduct research in their core management discipline (Canning & Masika, 2022; Weimer, 2006). These calls for greater rigor have extended to teaching innovations (Hamdani et al., 2023; Kember, 2003; Weimer, 2006). The way management education scholars design, deploy, and assess teaching innovations has significantly evolved, as knowledge about teaching quality and effectiveness, along with knowledge about learning assessment, increased (Kember, 2003; Shaw et al., 1999).

In this paper, we investigate how the maturation of the management education field has influenced our practices in developing and publishing teaching innovations. Drawing on JME and its rich 50-year history of publishing teaching innovations, we proceed through a structured two-phase process. First, we examined the evolution of JME's editorial policy regarding teaching innovations since its establishment 50 years ago. This analysis revealed two key requirements for TI articles: a stronger grounding in the literature to establish the TI’s relevance, and more substantial and more robust evidence of its effectiveness. Second, we explored how TI articles published in JME have responded to the call for heightened rigor. We evaluated the 42 TI articles published in JME from 2014 to 2023 across these two key dimensions: their use of the relevant literature and their approach to showcasing the TI’s effectiveness.

Our findings indicate significant progress compared to earlier assessments of the quality and rigor of TI articles published in JME or other SoTL (Scholarship of Teaching and Learning) journals (e.g., Shaw et al., 1999; Schmidt-Wilk & Fukami, 2010; Weimer, 2006). However, the literature was too often ignored or not leveraged to its full potential, and the evidence of effectiveness presented remains weak. More importantly, our analysis highlights the frequent absence of a research mindset in the TI articles studied. This has a detrimental impact on how a TI is designed, how its effectiveness is evaluated, and how the results are communicated. We argue that JME should explicitly affirm its identity as a research journal, which would require that TI articles adopt a research mindset. The overall characteristics of this research mindset, along with specific requirements as they apply to TI articles, are presented. Implications for the management education community, TI articles, and JME are discussed.

The paper is organized as follows. The first section presents Phase 1 of the research, starting with the method used for selecting and analyzing JME editorials, and then discussing the findings on the evolution of JME’s editorial policy related to TI articles. In the second section, we highlight key insights from the literature on learning assessment to contextualize the editors’ messages and inform our analysis of the evidence of effectiveness. We then proceed to Phase 2 of the research, beginning with the method used to extract and code the 42 TI articles published in JME between 2014 and 2023, followed by a presentation of the results. The Discussion section explains why adopting a research mindset is necessary, how it would specifically apply to TI articles, and what the practical implications for JME are.

Teaching Innovations Across JME’s 50-Year Journey

To explore how JME’s approach toward TI has evolved over the 50 years of its existence, we analyzed the journal’s editorials since its inception. Editorials represent a platform for editors to directly communicate with their readers and authors and “shape a unique character of the journal” (Plakhotnik, 2024, p. 89). They represent a “potential to influence” (Hulme et al., 2018), which editors seize to convey key messages about their field and journal. Editorials have been studied to understand the evolution of thinking in a particular field, or to explore how a specific issue has been dealt with over the years (Hulme et al., 2018; Nestel, 2017; Plakhotnik, 2024), including in management (Hensel, 2019).

Method: Phase 1

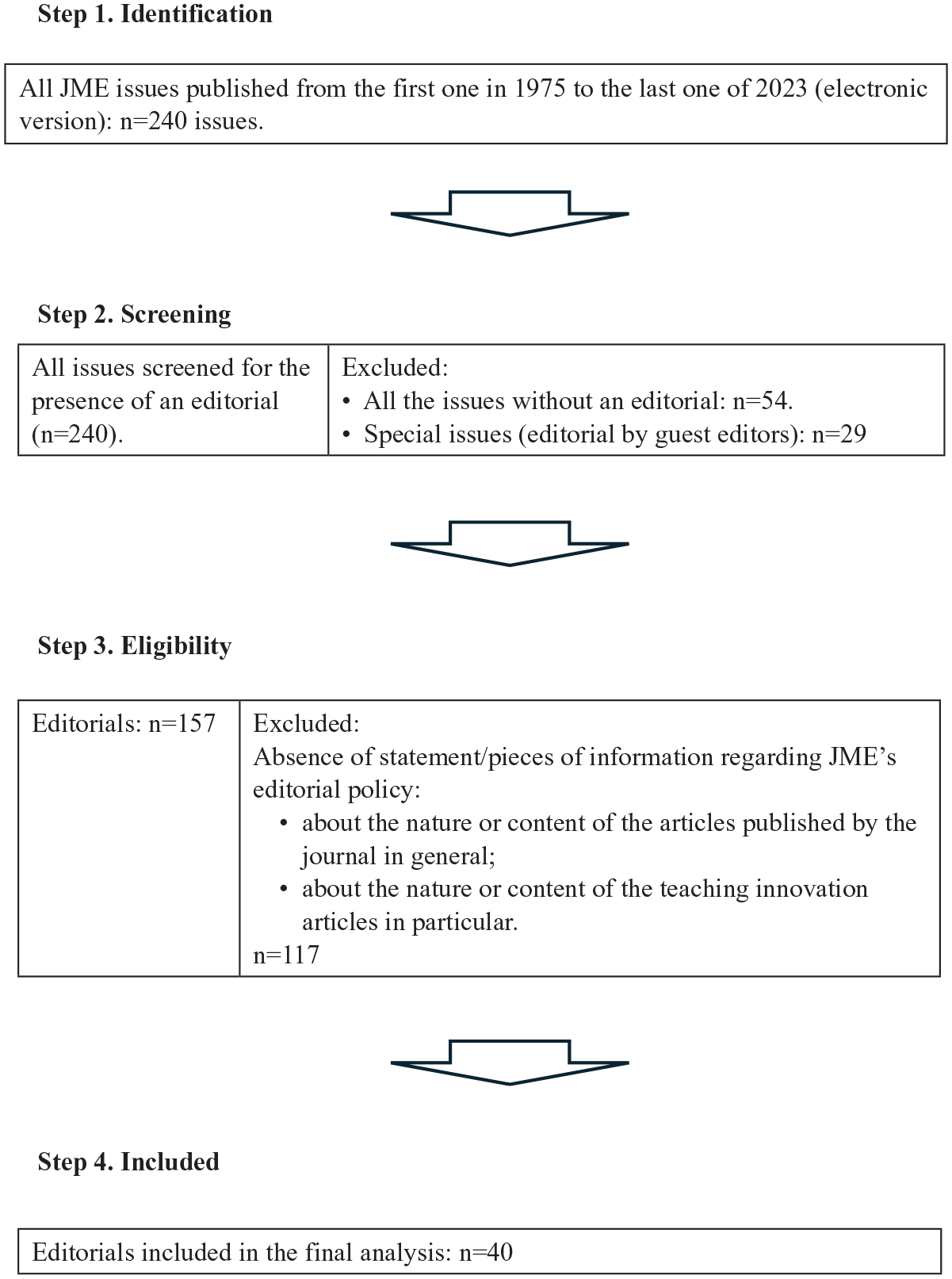

Following Paré et al.’s (2015) typology, we conducted a descriptive systematic review with the goal of identifying the key messages about TI communicated through JME editorials since its first publication in 1975. The selection and analysis of editorials was conducted using a step-by-step approach for systematic literature reviews based on Moher et al.’s (2009) guidelines (see Figure 1).

Figure 1. Phase 1 Method: Flow Diagram.

All JME issues were considered from the first one in 1975 to the last one of 2023. This led us to the identification of 240 issues (Step 1). We went through the electronic version of each issue to extract the “editorial” (Step 2). In many cases, the editorial was clearly labeled as such with titles such as “Editor’s Corner”; in other cases, we considered as an editorial the first article (generally less than five pages) in an issue signed by the Editor. From 2005 onwards, all issues have an editorial; before 2005, the presence of editorials is very sporadic. We excluded the editorials in Special issues signed by guest editors since they aim at presenting a particular issue (mostly about content) rather than shaping the journal’s editorial policy. At the end of this screening step, 157 editorials were detected and subsequently analyzed (Step 3). The two authors split the reading of the 157 editorials between them. An editorial was to be included based on whether it contained statements or pieces of information that concerned JME’s editorial policy either (1) regarding the nature or content of all articles published in the journal (thus including TI); or (2) regarding the nature or content of TI articles specifically. If there was any doubt about the eligibility of an editorial, it was initially included. At the end of this step, the two authors discussed the few editorials with uncertainties and made a final decision on their eligibility. This investigation led to the final selection of 40 editorials (see Appendix A) that contained statements regarding one or both themes (Step 4).

We conducted a content analysis of the 40 editorials (152 pages in total). Each author analyzed the 40 editorials independently, extracting the key messages of each editorial and chronologically organizing them in three categories: (1) the factors in the journal’s environment mentioned by the editors that explain or motivate orientations or changes in editorial policy (e.g., “This change is the emergence and increasingly important role that journal rankings are playing in people’s perceptions of research quality” [Billsberry, 2012, p. 608]); (2) JME’s mission and vision and their implications for the nature and content of JME articles (e.g., “JME does not consider itself a theory-building publication” [Lund Dean & Forray, 2018, p. 699]); and (3) the place of TI within JME’s mission and their nature and content (e.g., “instructional innovation articles also provide convincing evidence that students learn from the activity” [Robinson & Leigh, 2023, p. 558]). We then pooled our analyses. Despite very minor differences, we rapidly agreed on the key messages communicated over the years, which are presented below.

Results: Phase 1

From Craft to Science

The narrative thread used by editors over the years referred to a journal initially based on craft, which gradually evolved into science, while seeking not to lose its identity in the process. At its inception in 1975, the journal (then named The Teaching of Organizational Behavior) emphasized good management teaching as a craft, while exhibiting “a possible bias against research” (Schmidt-Wilk & Fukami, 2010, p. 137) and some skepticism toward science: “Our goal is to keep this journal as uncomplicated as possible and devote it to teaching techniques and theory rather than making it another research publication” (Bradford, 1975, p. 2). From the 1990s onwards, editors repeatedly stressed that good management teaching was “simultaneously art, craft, and science” (Gallos, 1995, p. 303), but that JME now had to focus more on science (Gallos, 1995, p. 304).

From the 2000s onwards, a “new agenda for management education scholarship” was suggested, based on “rigorous exploration and inquiry” (Bilimoria, 2000, p. 704). Editors emphasized that this more scientific approach to teaching and learning was reflected in the 2003 AACSB standards, which placed SoTL research publications on par with discipline-based research (Schmidt-Wilk, 2007, p. 441). Editors also emphasized the “increasingly important role that journal rankings are playing in people’s perceptions of research quality” (Billsberry, 2012, p. 608), which implied that JME urgently needed “an impact factor, strong citation rates, and elevated positions in ranking lists to justify its reputation” (Billsberry, 2012, p. 609). Editors’ goal was now to raise “the rigor of contributions without sacrificing [JME’s] reputation for publishing scholarly work relevant to its readership” (Schmidt-Wilk, 2009, p. 411). Lack of rigor was no longer acceptable: “as management education is maturing as a discipline, its ‘Wild West’ character is a thing of the past. The rigor expected in other domains was now also expected in management education” (Billsberry, 2014, p. 7).

Editors focused on two key elements which were emphasized with stronger force in the 2010s and 2020s. First, they wished for a more intensive and systematic use of the rapidly increasing literature in management education. All types of articles had to demonstrate a solid grounding in the literature (Billsberry, 2014, p. 4). It was now expected that articles were “to contain increasing numbers of references to previous work” (Billsberry, 2012, p. 610). This was in stark contrast to JME’s first editor’s half-joking suggestion, 37 years before, that “any article with (. . .) a reference list longer than six items should be rejected” (Bradford, 1975, p. 2). A literature review was now considered as a “distinctive component of all JME articles” (Lund Dean & Forray, 2018). Second, editors reaffirmed the need to rely more strongly on “empirical knowledge” (as opposed to “craft knowledge”; Lund Dean & Forray, 2019, p. 480). JME articles had become “more empirical” (Schmidt-Wilk, 2007, p. 440) and authors “were increasingly expected to provide evidence of the effectiveness of their teaching, usually in terms of student learning outcomes” (Schmidt-Wilk, 2007, p. 440). These expectations applied to “articles of any type” (Lund Dean & Forray, 2019, p. 480), including TI articles, to which we now turn.

Teaching Innovations and the “Evidence of Effectiveness” Challenge

JME's ambition has always been to be “an important source of innovative experiential materials of the highest quality and widest applicability (. . .), encouraging readers to experiment with these new materials in their teaching” (Bowen, 1995, p. 268). From 1995 onwards, the transition from craft to science involved formalizing the nature of, and requirements for, TI articles, which resulted in integrating the two key elements required for all types of JME articles: a review of the literature and a focus on empirical knowledge (Bowen, 1995). Requirements grew regarding the anchoring in the literature and regarding empirical knowledge about the TI’s effectiveness, while those regarding implementation of the TI were maintained. TI articles thus now had to: “(a) bring out the pedagogical value and contributions of the activity; (b) ground the activity in previous literature; (c) clarify the contingencies influencing the effective application and implementation of the activity; (d) describe the activity in detail (. . .); (e) provide suggestions for effective use and debriefing (. . .), and (f) summarize data on student learning and reactions to the activity” (Bilimoria, 1999, pp. 335–336).

In line with AACSB standards about Assurance of Learning (Martell, 2007), from the 2000s onwards, authors were asked to provide evidence of their TI’s effectiveness, “usually in terms of student learning outcomes” (Schmidt-Wilk, 2007, p. 440). Four strategies were suggested for producing strong evidence of effectiveness: “(1) develop and test activities through multiple classroom iterations; (2) collect evidence from multiple sources, such as students and outside observers; (3) collect evidence using multiple methods; and (4) tie evidence to learning objectives” (Schmidt-Wilk, 2010, pp. 196–197). Reports about “student reactions” to the innovation were no longer enough; direct measures of student learning were to be a priority (Schmidt-Wilk, 2010, p. 195). In JME’s early days, the instructor’s perspective was emphasized: “Saying why we like an approach or how that approach affects us (as well as affects students) is valuable information. Rather than such an approach threatening the objectivity of what we write, doesn’t it just recognize the subjectivity in all our words?” (Bradford, 1980, p. 2). Thirty years later, editors stressed, in contrast, that since the instructor has a vested interest in the TI’s success, his or her perspective is potentially biased and cannot serve as robust evidence of the TI’s effectiveness (Schmidt-Wilk, 2010, p. 196).

Questioning the Move Toward Science

By privileging science over art and craft, JME editors have been mindful of both gains and losses (Lund Dean & Forray, 2019, p. 481). While advocating for robust evidence of effectiveness, they recognized at the same time the immense difficulty of proving the effectiveness of any pedagogical innovation: “The problem is that it is incredibly difficult to demonstrate that your new way of teaching a subject is: (a) effective, (b) more effective than alternative ways of teaching the subject, and (c) replicable in other classrooms with other teachers” (Billsberry, 2014, p. 7). Ultimately, they acknowledged that the impact of teaching practices on students remained elusive: “We want what we teach to shape how our students think; we want something to stick (. . .). We assume that we actually can do that, but we will never know, even if the students test high on some impact-relevant instrument or come to us with stories of how what we taught changed something real in their lives.” (Lund Dean & Forray, 2015, p. 547). In the end, “not knowing ha[d] to be okay, too” (Lund Dean & Forray, 2015, p. 547).

Recently, editors acknowledged that the gradual move toward “science” implied that there was “little institutionally rewarded space any longer for the wise but empirically unexamined knowledge resulting from one’s years of experience running a particular activity or exercise” (Lund Dean & Forray, 2019, p. 481). They stressed, however, that the Management Teaching Review (MTR) launched in 2015 by MOBTS, the society behind JME, was a journal based on craft and practice (Lund Dean & Forray, 2019, p. 481).

In sum, the requirements regarding the nature and content of TI articles now appear to be higher, since the articles must now be firmly anchored in the literature and provide robust evidence of the TI’s effectiveness. Authors are left to decide for themselves what constitutes robust evidence of effectiveness depending on their epistemological allegiance and on the kind of learning and learning objectives they aim for. These complex challenges are widely acknowledged in the learning assessment literature, which can provide us with recommendations on how best to tackle them.

Assessment of Learning: Key Conceptual Points

Learning is a process that implies “acquiring knowledge and skills and having them readily available from memory so you can make sense of future problems and opportunities” (Brown et al., 2014, p. 2). Assessment of learning has mostly entailed trying to measure learning as an outcome—students’ level of knowledge or skills at a given moment, in relation to specific learning objectives. Depending on the nature of educational objectives being pursued, the assessment of learning will vary in complexity.

Learning that has been rigorously measured is referred to as “actual learning” (Bacon, 2016b) and should be distinguished from “perceived learning.” Unless students have developed the metacognitive skills needed to judge their own learning accurately, their perception of that learning is not a very good proxy for actual learning (Armstrong & Fukami, 2010; Bacon, 2016b; Benbunan-Fich, 2010; Brown et al., 2014; Dunning, 2011; Sitzmann et al., 2010). When assessing learning, instructors are thus urged to use direct measures of actual learning (AACSB, 2023; Bacon, 2011; Martell, 2007), which imply “scoring a student’s task performance or demonstration as it relates to the achievement of a specific learning goal” (Elbeck & Bacon, 2015, p. 282). To relate this score to a particular teaching practice or activity, it is recommended to adopt a pre-post strategy (Bacon, 2016b; Cook & Campbell, 1979; Shaw et al., 1999), although pretest scores might be unreliable when assessing cognitive outcomes and may be reliable only “when assessing an ability which exists in all students to some degree at the start of the experiment such as cultural intelligence” (Bacon, 2024, p. 6). Authors also need to take appropriate measures to control as far as possible for variables other than the TI (instructor’s personality, class size, discipline, etc.) that could explain students’ task performance (Bacon, 2024; Hamdani et al., 2023; Kember, 2003; Weimer, 2006), while recognizing that a convincing quasi-experimental approach is extremely difficult to implement and, in any case, not a panacea (Becker, 2006; Kember, 2003). Establishing that the performance task (exam, case analysis, test, internship, or presentation) adequately captures the learning goal (validity issue) is a complex endeavor, and so is ensuring that the scoring itself is reliable. The scoring can be done by the students themselves (self-assessment), by their peers (peer-assessment), by the instructor, or by another party.

Indirect measures of learning involve using indicators other than direct performance measurements, which are taken as proxies for learning, that is, they are assumed to covary with learning (Elbeck & Bacon, 2015, p. 279). The more strongly an indirect measure covaries with learning, the more valid it is (and vice versa). Student motivation and engagement during an activity are necessary but not sufficient conditions for learning (Dostaler et al., 2017; Pintrich, 2003). Student satisfaction is not a sufficient condition either, and consequently neither are student evaluations of teaching (SET; Armstrong & Fukami, 2010; Clayson, 2009; Elbeck & Bacon, 2015; Shaw et al., 1999; Wiers-Jenssen et al., 2002). Other measures that have only a weakly established link with actual learning are sometimes used, such as course grades, placement rates, starting salaries, or career success (Bacon, 2017; Elbeck & Bacon, 2015). All measures of learning can be taken at different points in time, most often immediately after the teaching activity or practice, but also at a later date, in order to assess knowledge retention, learning decay, and long-term learning outcomes (Bacon, 2024; Bacon & Stewart, 2006). As no single source or method for collecting evidence can establish effectiveness convincingly, using multiple sources is recommended (Armstrong & Fukami, 2010; Kember, 2003; Weimer, 2006). As Phase 1 of the research showed, most of these points from the literature about learning assessment were put forward by JME editors over the past 20 years. We now turn to Phase 2 of the research about the TI articles themselves and the way they responded to editors’ calls for increased rigor.

Teaching Innovation Articles: Exploring Today’s Standards of Rigor

In this second phase of the research, we turn our attention to the TI articles published in JME in the past 10 years to explore how authors have responded to editors’ calls and instructions regarding increased rigor and stronger evidence of effectiveness. We first present the method and the coding scheme used, followed by the results of our analysis.

Method: Phase 2

Similar to Phase 1, and following Paré et al.’s (2015) typology, Phase 2 entailed conducting a descriptive systematic literature review with the specific goal of extracting characteristics from the articles and calculating frequencies to depict the current state of the field; in this specific case, to evaluate the rigor of today’s TI articles.

Article Selection

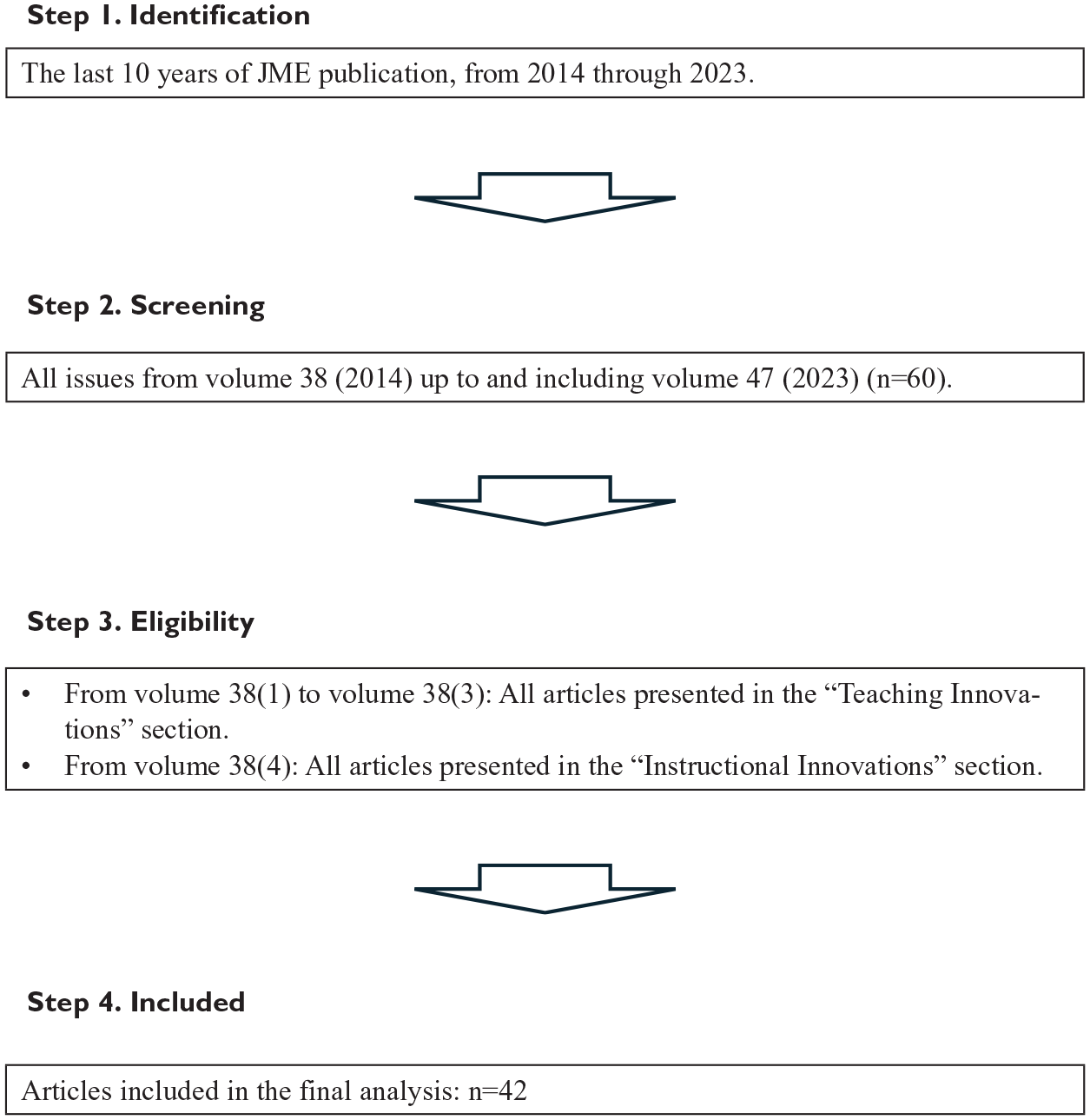

As in Phase 1, a step-by-step approach was adopted (see Figure 2). With the goal of evaluating the current state of TI articles, we chose to include the last 10 years of JME issues in our analysis, spanning volume 38 (2014) to volume 47 (2023; Step 1). From the 60 issues of volumes 38 through 47 (Step 2), we included the TI articles, initially classified under the Teaching Innovations section, which was subsequently renamed (beginning in volume 38, issue 4) the Instructional Innovations section (Step 3). The final dataset contains 42 articles (Step 4), which are listed in Appendix B.

Figure 2. Phase 2 Method: Flow Diagram.

Coding Scheme

A coding scheme was developed to analyze two distinct aspects of the 42 TI articles: literature utilization and evidence of effectiveness.

Literature Utilization

As we showed in Phase 1, JME editors have repeatedly emphasized that articles should draw upon the appropriate scientific literature. We assessed TI articles’ utilization of the literature along two dimensions. First, we looked at the number of references cited by each article. While not a perfect indicator, it should reflect the extent of the paper’s connection to relevant literature. Second, we explored how the literature had been used. We defined five key roles the literature is likely to play when presenting a TI: (1) to support the TI’s relevance or general objective, (2) to show the TI’s originality—how it is different from what is currently available, (3) to identify specific learning objectives, (4) to help design the TI, and (5) to help design a strategy for assessing the TI’s effectiveness. These were assessed using a binary yes/no scale.
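The five yes/no role codes lend themselves to a simple tally across articles. The sketch below is illustrative only: the short role labels, the `role_shares` helper, and the four toy articles are assumptions introduced for the example, not the authors' instrument or data.

```python
from collections import Counter

# Hypothetical short labels for the five roles of the literature listed above.
ROLES = (
    "relevance",
    "originality",
    "learning_objectives",
    "design",
    "assessment_strategy",
)


def role_shares(coded_articles):
    """Return, for each role, the rounded percentage of articles coded 'yes'."""
    if not coded_articles:
        raise ValueError("at least one coded article is required")
    counts = Counter()
    for codes in coded_articles.values():
        for role in ROLES:
            # A missing role is treated as 'no' on the binary scale.
            if codes.get(role, False):
                counts[role] += 1
    n = len(coded_articles)
    return {role: round(100 * counts[role] / n) for role in ROLES}


# Toy codes invented for illustration; they are NOT taken from the 42 articles.
toy_codes = {
    "article_a": {"relevance": True, "originality": True, "design": True},
    "article_b": {"relevance": True, "learning_objectives": True},
    "article_c": {"relevance": True, "design": True, "assessment_strategy": True},
    "article_d": {"relevance": True},
}

shares = role_shares(toy_codes)
for role, share in shares.items():
    print(f"{role}: {share}%")
```

Because each role is coded independently, the shares need not sum to 100; an article can use the literature in several roles at once.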

Evidence of Effectiveness

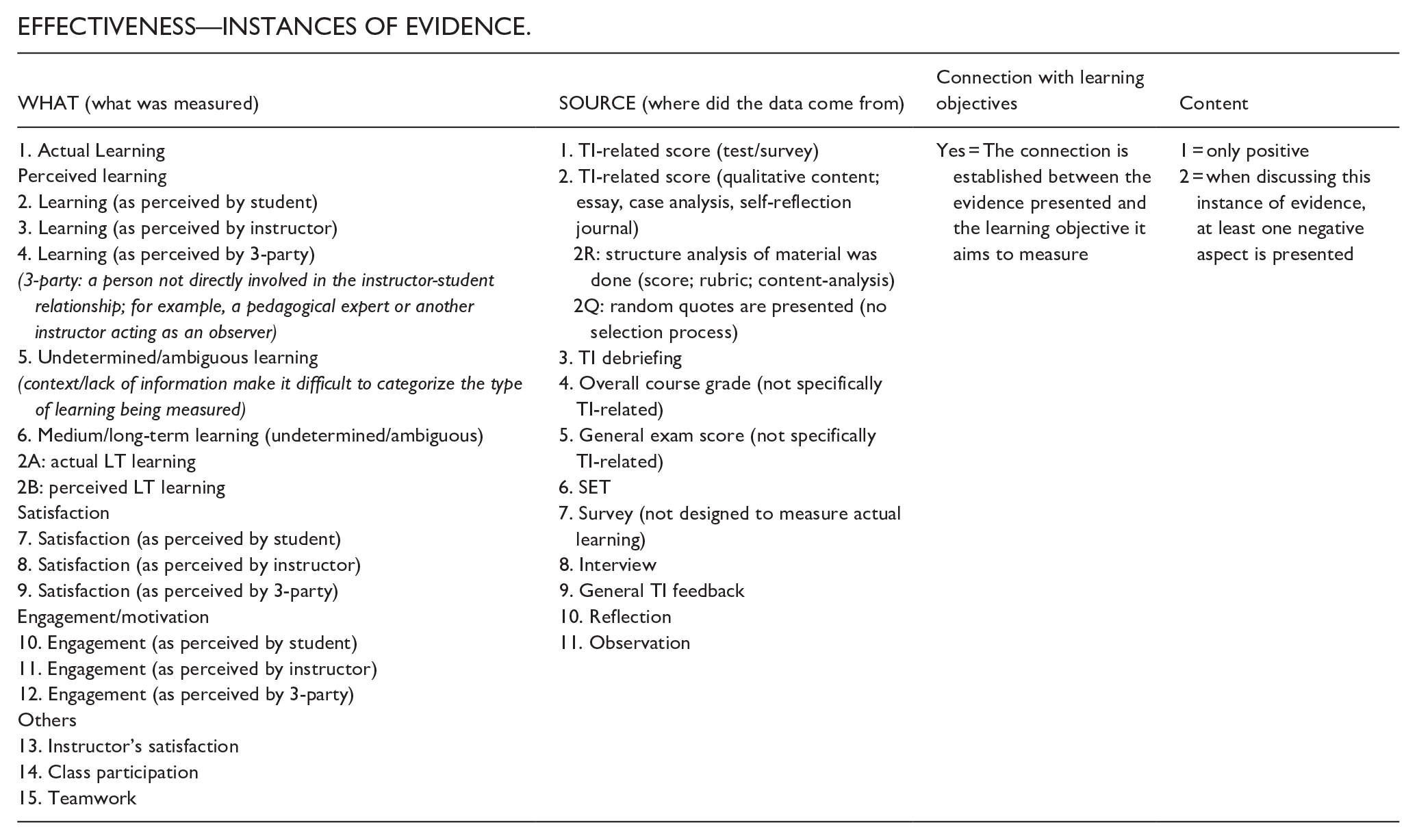

Studying the evidence of effectiveness encompasses two distinct aspects: (1) evaluating the characteristics of the overall strategy adopted to gather evidence and (2) examining the evidence gathered, including what was evaluated (variable) and where the data come from (source). Regarding the first aspect, strategy, we coded whether the article presents at least one piece of evidence of effectiveness. If so, we examined the strategy used to present this evidence: we coded the presence of a strategy, the research design, the use of a method to enhance robustness (validity and causality), and the presence of a discussion of at least one limitation of the measures used to provide evidence of effectiveness. In addition, we coded for the presence of reflective indicators. Specifically, we coded whether the article acknowledged at least one challenge other instructors are likely to face when using the TI and at least one limitation of the TI itself. These aspects were coded using various scales: some with a binary yes/no scale and others with a multiple-category scale.

Regarding the evidence gathered, drawing on the key points from the literature on learning assessment, we coded the instances of evidence of effectiveness presented in each article. Each instance of evidence is made up of four components: (1) the variable being measured (actual learning, perceived learning by students, long-term learning, satisfaction, and motivation); (2) the data source (where the data presented as a measure of this variable came from: test, journal, SET, and observation); (3) the connection (whether a link is clearly established between the instance of evidence and the learning objective it aims to measure; yes/no scale); and (4) the content (whether the evidence presented portrays only positive aspects or both positive and negative aspects). In the 36 articles presenting a strategy to provide evidence of effectiveness, we identified 102 instances of evidence. The instance is the basic unit of analysis and can be aggregated at the article level when required.
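To make the unit of analysis concrete, the four components of an instance of evidence, and its aggregation to the article level, can be sketched as a small data structure. This is a minimal illustration under stated assumptions: the `EvidenceInstance` class, its field names, the `variables_by_article` helper, and the toy records are hypothetical and do not reproduce the coding scheme in Appendix C.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceInstance:
    """One coded instance of evidence (field names are illustrative assumptions)."""
    article_id: str            # hypothetical identifier of the TI article
    variable: str              # e.g., "actual learning", "satisfaction"
    source: str                # e.g., "test", "journal", "SET", "observation"
    linked_to_objective: bool  # is the link to a learning objective clearly established?
    content: str               # "positive only" or "positive and negative"


def variables_by_article(instances):
    """Aggregate instances to the article level: the set of variables each article measures."""
    per_article = defaultdict(set)
    for instance in instances:
        per_article[instance.article_id].add(instance.variable)
    return dict(per_article)


# Toy records invented for illustration; they are NOT among the 102 coded instances.
toy_instances = [
    EvidenceInstance("article_a", "actual learning", "test", True, "positive and negative"),
    EvidenceInstance("article_a", "satisfaction", "SET", False, "positive only"),
    EvidenceInstance("article_b", "perceived learning", "journal", True, "positive only"),
]

aggregated = variables_by_article(toy_instances)
for article_id, variables in sorted(aggregated.items()):
    print(article_id, sorted(variables))
```

Keeping the instance as the stored record and deriving article-level summaries on demand mirrors the statement that the instance is the basic unit of analysis.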

The detailed coding scheme, including the definition and possible values assigned to each variable, can be found in Appendix C.

A Word of Caution

The results presented in Phase 2 should be interpreted in light of the following considerations. As a general principle, when coding, we sided with the authors. Any indication of an item in the content of the article, even if modest, was coded as present, without inferring the authors’ intentions. All the articles were coded by one of the authors, who also identified all the ambiguous cases (about 15 articles). The other author also analyzed these cases, and notes were then compared. These discussions led to the identification of fundamental conceptual and methodological issues, as well as the refinement of the coding scheme (such as the addition of an “undetermined/ambiguous” code for certain elements; see Appendix C). The issues identified and the reasons why an article received an “undetermined/ambiguous” code are explained in the next section.

A limitation of the coding process is that we did not measure the extent or quality of the items coded as present. For many items, a binary yes/no code was used. As will be discussed later, the lack of information and detail in many articles when presenting evidence of effectiveness made it challenging to establish even the basic presence of an element. When presence itself was not easily established, it was very difficult to conduct a more in-depth analysis of the extent to which the element was used or of whether recognized criteria of excellence were followed (quality).

Results: Phase 2

The results are presented in two separate sections. The first section focuses on the utilization of the literature, while the second examines how TI articles provide evidence of effectiveness.

Literature Utilization

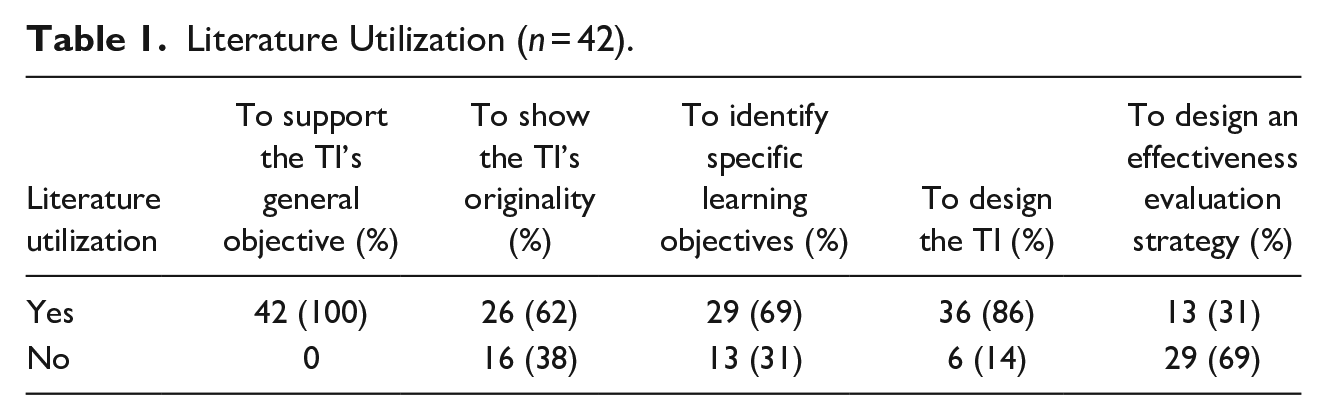

The reference count shows that each article cited on average 46 papers (ranging from 12 to 113). As shown in Table 1, all articles use the literature to present their general objective. This is generally done by highlighting a complex management issue and demonstrating how innovative pedagogical approaches need to be developed to enhance the skills necessary to tackle this complexity. In many cases (69%), this prompts authors to clearly establish a connection between this literature and the specific learning objectives they aim to achieve. Similarly, 62% of all articles also discuss why a new approach is needed. This is achieved by identifying gaps in what is available, or by comparing the presented TI with others and emphasizing its merits. In the vast majority of articles (86%), the literature clearly influenced one or several aspects of the TI’s design. It is frequently used to determine the concepts/dimensions that need to be integrated into the TI (e.g., the use of network theory to shed light on an often overlooked aspect of network characteristics that becomes the focus of the TI), or to provide guidance on how it should be operationalized to achieve a specific objective (e.g., the role students must play during a simulation or the nature of the treatments in an experiment). Finally, even with a rather generous coding approach (such as considering the use of existing scales to measure, for example, assertiveness and risk propensity as a form of literature utilization), fewer than a third of all articles (31%) use the literature, even if only modestly, to design their strategy for evaluating effectiveness.

Table 1. Literature Utilization (n = 42).

In sum, in response to JME editors’ calls for heightened rigor of TI articles, our results show that, regarding literature utilization, authors now routinely use the relevant literature to support the TI’s relevance, learning objectives, and design. However, the existing literature is considerably less frequently used to design an effectiveness evaluation strategy.

Evidence of Effectiveness

The results related to evidence of effectiveness are organized into three sections: strategy, method, and measures of effectiveness.

Strategy

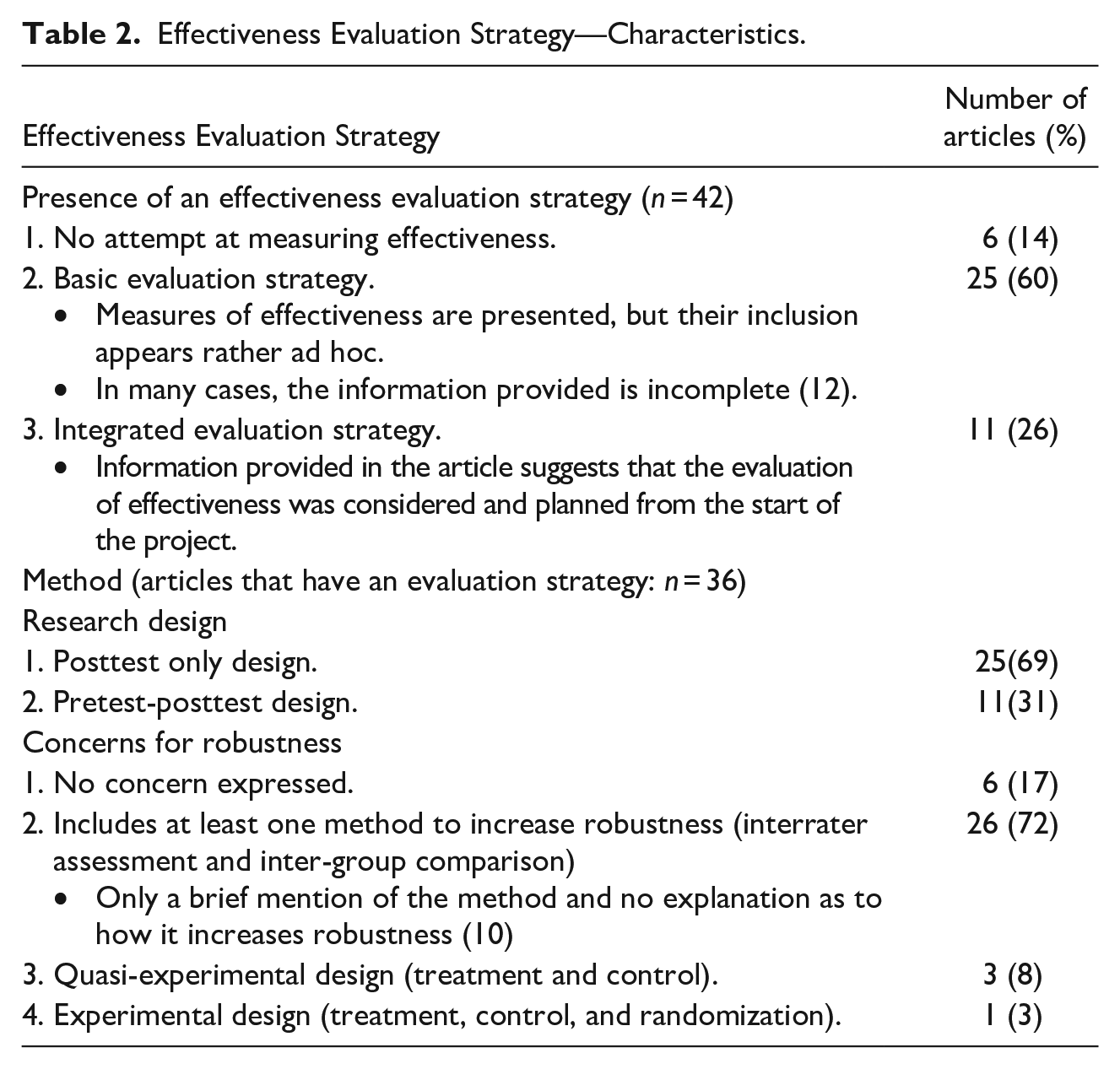

We first explore the broader characteristics of the strategy adopted by the authors to gather evidence of effectiveness (see Table 2). Six articles (14%) made no attempt at measuring effectiveness, 25 articles (60%) used a basic evaluation strategy (although 12 of them [48%] provided too little information to assess the robustness of the evidence), while the remaining 11 articles (26%) provided indications that the effectiveness strategy was planned from the start and integrated into the TI development.

Effectiveness Evaluation Strategy—Characteristics.

Method

The analysis proceeds with the 36 articles that included an evaluation strategy. Table 2 indicates that these TI articles most frequently used a posttest research design (69%), while 31% adopted a pre- and posttest design. A small number of articles (17%) did not express concerns about the issue of methodological robustness, specifically for ensuring that the TI was responsible for the observed outcomes. In line with what the editorial considered a minimal requirement, 26 articles (72%) did mention testing the TI across multiple instances (groups, semesters, and/or instructors). In this group, however, 10 articles (38%) did not discuss how their methodological choices contributed to increasing the robustness of their findings. In contrast, the other 16 articles of this group (62%) actually compared outcomes between groups/semesters and/or used multiple raters, thereby providing stronger support for the validity of their findings. Finally, three articles (8%) used a quasi-experimental design, and one article (3%) used an experimental design.

Measures of Effectiveness

Out of the 36 articles that presented an effectiveness evaluation strategy, 35 (97%) aimed to measure some form of learning: 15 articles (42%) presented at least one measure of actual learning, while 13 articles (36%) presented only measures of perceived learning as assessed by students, by the instructor, or by a third party. The type of learning remained undetermined in seven articles (19%). Three articles (8%) offered evidence of medium/long-term actual learning, and two (6%) remained undetermined as to the type of medium/long-term learning that was measured. Besides learning, we also found that 22 articles (61%) presented what are considered indirect measures of learning (motivation, engagement, and satisfaction), despite significant doubts in the literature about their actual connection to student learning. Further investigation shows that six articles (17%) included other variables of interest, such as class participation, teamwork quality, or instructor satisfaction. Overall, 29 articles (81%) presented at least two sources of evidence, while 7 articles (19%) relied on only one source.

Determining what was measured and, in the case of learning (or medium/long-term learning), whether the measures captured actual or perceived learning was fraught with challenges in many instances. Reasons include: (1) the objectives were not always centered on learning; some articles aimed to answer research questions (e.g., one article aims to study the most effective aspects of a global competition), introduce a new program (design and implementation of an innovative MBA program), or focused solely on measuring other variables (e.g., how a TI was effective for career awareness and preparedness); (2) when the objectives did concern learning, very few articles directly addressed the type of learning measured (actual or perceived), which was challenging to determine from the information provided (this determination may be even more critical and deserving of attention when affective learning objectives related to raising awareness are involved), and even where the issue was mentioned, the treatment was usually very brief; (3) lack of information: what data were used, the specific instructions given to students, and how the data were collected were unclear; this is especially true for measures of learning derived from students’ reflections, where some articles provide the reader with the instructions and list of questions distributed to students, while others merely mention the idea of student reflection as a course requirement and as a basis for assessing learning, then proceed to offer a limited amount of content (e.g., a few positive quotes); and (4) no mention of proper learning objectives: the article may contain only a pedagogical intention (e.g., use an esthetic workspace to prepare students for the dynamic and turbulent settings managers evolve in), or, if learning objectives were presented, they were so general or so muddled that it was difficult to understand what had actually been measured (e.g., students should be able to identify ways of, and feel confident about, using and continuing to develop a skill in the future).

In greater depth, Table 3 presents the instances of variables and sources used as evidence of effectiveness. Almost two-thirds of the evidence presented included a measure of learning (53%) or medium- to long-term learning (6%). Actual learning is typically assessed through specific TI-related content (such as tests, essays, or the content of reflection journals). For certain learning objectives, self-reflection journals can be a valuable source of evidence of actual learning if instructions to students are clear (for an example, see Kuechler & Stedham, 2018). Surveys can prove to be a mixed bag depending on the type of questions asked (about the TI or about learning); in one case, a survey was used to measure a very broad learning objective, such as one pertaining to general awareness, but this is more the exception than the rule. Surveys more generally measure perceived learning. Learning as perceived by students was typically measured through SET or a survey. In all articles where evidence of effectiveness was presented, learning as perceived by the instructor or by a third party was never presented as the only source of evidence of effectiveness.

Instances of Evidence of Effectiveness Presented in the 36 Articles (n = 102).

Note. The total is greater than 36 because some articles used more than one measure to show evidence of effectiveness.

Quotes from students (from surveys, SET, essays, or journals) presented as evidence of effectiveness deserve particular attention since they can refer to either actual or perceived learning and because, as qualitative data, they need to be properly collected and analyzed. When these quotes were used as a measure of actual learning, only 30% of the articles made an effort to establish a link between the outcomes and the learning objective being measured. In addition, of the 10 articles that used qualitative data from essays, cases, or journals as evidence of effectiveness, 4 used a structured way to analyze this data (using rubrics, systematic content analysis, and multiple evaluators), while the other 6 presented quotes without communicating their selection process. Combined with the fact that articles tended not to present a balanced evaluation of their TI (only 15 of the 36 articles feature excerpts or data that express resistance or opposing opinions from students) and that fewer than half of the articles (42%) make a deliberate effort to highlight the limitations of their TI measures (with vastly varying depth, ranging from a few words to a more comprehensive analysis), this raises serious questions about the robustness of the evidence of effectiveness presented.

In terms of likely challenges during implementation, our results show that 64% of all articles address this topic, mainly discussing possible pitfalls and how to manage them. Many authors, for example, discuss how using their TI may be very labor-intensive and explain the optimal context for its use (size of class and number of assignments). Regarding the TI limitations, only half of the articles address this topic, though some provide very useful insights. An article, for example, ends with a word of caution to potential adopters, explaining the changes to the pricing model of the software provider supporting the TI and how these changes could impact the cost for students.

In sum, regarding evidence of effectiveness, our analysis reveals mixed results. Compared to previous accounts of TI articles’ rigor in JME, progress is undeniable. In 1999, Shaw et al. drew the following conclusion from their analysis of the TI published in JME between 1990 and 1999: “With a few exceptions, evidence for the effectiveness (. . .) has been similarly impressionistic and anecdotal. (. . .) Criteria other than student reactions are seldom obtained, and innovative methods are rarely explicitly compared to more standard methods.” (p. 510). Twenty-five years later, our findings indicate that many TI authors now adopt a more structured approach regarding evidence of effectiveness. They present learning objectives, link sources of evidence to the learning objectives, and make more efforts at measuring actual learning rather than relying solely on perceived learning or student satisfaction.

Notwithstanding this progress, substantial limitations persist in many articles: no attempt at measuring effectiveness; incomplete information that makes evidence of effectiveness ambiguous or unconvincing; very limited attempts to support the validity of the causal link between TI and learning outcomes; no attempt at measuring actual learning rather than perceived learning; and absence of discussion of the limitations of the evidence of effectiveness offered. Several of these issues are methodological in nature, but they also stem from learning objectives that are too general, poorly formulated, insufficiently ambitious, or centered on “raising awareness,” whose assessment can rely only on students’ perceptions, as there are no observable behaviors that can demonstrate achievement (with the associated desirability bias, which is rarely discussed).

Discussion

In contrast to other management education journals such as AMLE or ML, JME has consistently emphasized articles devoted to relevant, creative, and cutting-edge teaching innovations, presented “with sufficient detail and evidence of effectiveness for readers to implement (. . .) in their own environments” (MOBTS, 2024a). The TI articles published in JME are not classified as research articles (“instructional innovations” are classified separately from “research articles” on the Journal’s website; see, e.g., MOBTS, 2024b). Over time, however, as observed in Phase 1 of this research, JME editors have progressively introduced research requirements for TI articles, particularly regarding the use of scientific literature and the presentation of evidence of effectiveness. In Phase 2, we analyzed how the TI articles published over the past decade have implemented these requirements. Our findings elicited two opposing reactions. As instructors, we were enthusiastic about many of the TI and appreciated the effort their authors invested in describing them in an inspiring way, prompting us to consider trying them out. However, as researchers, we were often puzzled and even disappointed by the lack of rigor and transparency evident in many articles, both in the mindset adopted for developing the TI and writing the article, and in the way evidence of effectiveness was collected and analyzed.

In our view, there is a significant risk associated with continuing to publish in a research journal the type of TI articles that we studied. This risk is the potential loss of credibility for management education research in the eyes of two important stakeholders: management educators who are both passionate instructors and active researchers in their management disciplines and who approach any article published in a research journal with high research standards; and the modest but growing number of management educators who conduct rigorous research on teaching and learning issues and who are striving for this research to be recognized as equally valuable as their disciplinary research. More generally, the risk extends to threatening the field’s still-fragile legitimacy by reinforcing the view that research on teaching and learning “from the disciplines” (such as management) is a “watered-down version of research” (Canning & Masika, 2022, p. 1088) compared to research conducted by education researchers.

To mitigate this risk, we suggest that TI articles published in research journals like JME be research articles in their own right. It goes beyond adding “rigor” and research-inspired criteria to an approach that isn't inherently a research approach (which appears to have been the case with many of the JME's TI articles we analyzed). Rather, it's about considering the design and development of a TI as a research project from the outset. In the following sections, we clarify what it means to develop a TI with a research mindset, along with the principles that could support the implementation of this mindset, while respecting the epistemological and methodological diversity that characterizes our field.

Developing TI With a Research Mindset

The very definition of what constitutes research is far from universally accepted (Johnson & Duberley, 2000), and it is not the aim of this article to contribute to this debate. More modestly, our objective is to highlight a few key characteristics of a research-driven approach to the development of TI, which appear to be lacking in many of the articles we have analyzed. These characteristics, we believe, remain relevant across a wide range of epistemological paradigms and are therefore relatively agnostic in this regard. Developing TI with a research mindset implies that:

Research begins upstream, at the TI design and development stages. Our detailed reading of the TI articles suggests that many authors first developed and tested their TI in a relatively unstructured manner, only later attempting to comply with the journal’s requirements related to literature utilization and evidence of effectiveness. This approach often led to weaknesses and inconsistencies in the implementation of these requirements.

The goal is to contribute to knowledge about teaching and learning rather than to “sell” the TI to possible adopters. Many TI articles seemed to adopt a biased approach by presenting only positive data on the TI’s effectiveness or by omitting any mention of limitations or doubts regarding its merits compared to other activities or methods for achieving the same learning objectives. As observed in our findings, selectively presenting a few “celebratory” quotes from students was too often considered acceptable evidence of effectiveness.

Claims about the TI’s effectiveness must be based on the rigorous deployment of a clearly identified methodology, and sufficient information must be provided to enable the reader to make an informed judgment about the robustness of the methodology. As mentioned earlier, our main challenge in analyzing the TI articles stemmed from omissions, inconsistencies, or lack of information, which made it difficult to follow the conceptual foundations supporting the TI and the chain of evidence regarding its effectiveness. It is the authors' responsibility to specify their research tradition, present the associated criteria of excellence, and demonstrate how they have competently met these criteria.

The TI is situated within a body of knowledge that must be both utilized and contributed to. Authors need to be familiar with and build upon previous research findings in management education and/or educational research, so as not to reinvent the wheel or overlook important insights regarding the TI’s design, implementation, and assessment. Our findings suggest that while the literature was often used to support the general relevance of the theme addressed by the TI in the articles under study, and in some cases to formulate the learning objectives, efforts were insufficient in other areas where the literature should have been leveraged: to demonstrate the novelty of the TI (what it adds to our current portfolio of pedagogical tools), to ground the TI in appropriate theoretical frameworks, to identify the relevant dependent variable of interest considering the TI objectives, and to design a methodological strategy for assessing the TI’s effectiveness.

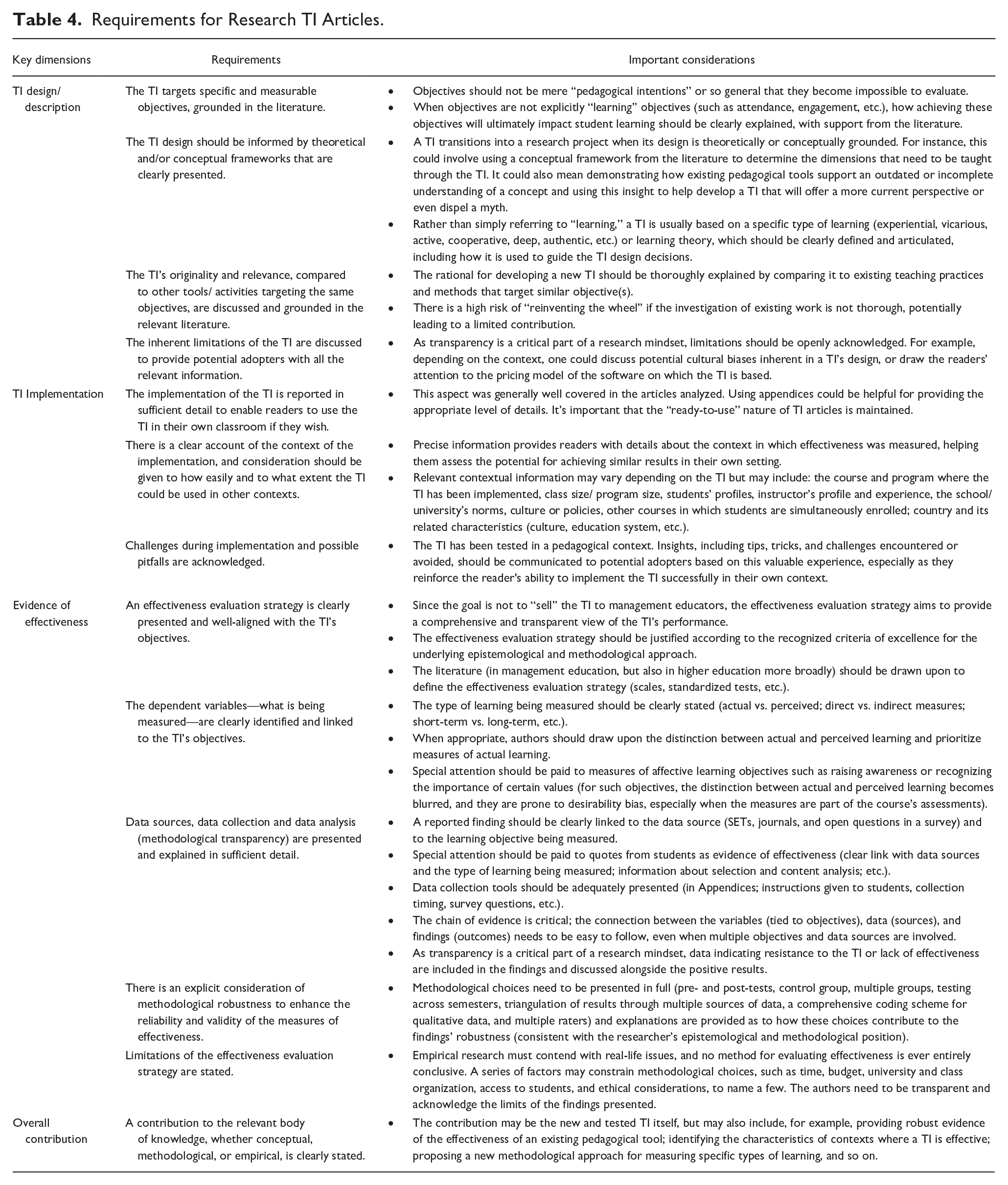

Table 4 presents how the above characteristics of a research mindset can be translated into concrete requirements for research-based TI articles. While criteria for high-quality education research already exist (e.g., Evans et al., 2021), we believe that research articles on TI deserve specific consideration. These requirements span four key dimensions: TI design/description, TI implementation, evidence of effectiveness, and overall contribution. For each dimension, we highlight important considerations emerging from our findings. Our goal, however modest, is to contribute—based on the results of Phase 2—to the ongoing reflection of JME editors who, as demonstrated in Phase 1, have worked over the years to clarify the expectations for TI articles.

Requirements for Research TI Articles.

Practical Recommendations for JME

Over the past two decades, SoTL has evolved into an umbrella concept that includes a wide spectrum of practices, such as a culture of continuous improvement in teaching; “reflective practice”—where faculty engage in and publicly share their local concerns and successes; and rigorous research conducted by instructors/researchers on teaching and learning issues within their own disciplines (Bilimoria & Fukami, 2002; Canning & Masika, 2022; Cotton et al., 2018; Kanuka, 2011; Tierney, 2020). While journals like AMLE or ML have clearly established themselves as research journals in management education, JME’s positioning as “a leading voice in the SoTL in management” (MOBTS, 2024c) sends a more ambiguous message regarding the types of contributions it values.

In our view, a pedagogical innovation lends itself to two main types of project and their related publications: (1) research-based articles that aim to explore and demonstrate the relevance and effectiveness of an innovation based on well-identified learning concepts and theories, a robust methodology, and a strong understanding of the literature on existing practices and pedagogical approaches that facilitate similar learning objectives; (2) practice-based articles that aim to present and disseminate the innovation to management educators, inspiring and enabling them to implement this innovation in their own contexts. The first type refers to research projects that must be conducted by researchers, specifically management educators who are also researchers and have the field knowledge and skills to carry out this type of study. We presented above the characteristics of these research-based TI articles. Beyond the TI itself, these articles have a research goal: for example, the applied test of a theory, developing measurement scales, or comparing the effectiveness of two competing TIs. The second type refers to publications produced by educators (who may or may not be researchers) who embody all the qualities of reflective and innovative practitioners. We argue that these practice-based TI articles don’t need to mimic the characteristics of research articles; their interest and value lie in giving voice to the reflexive practitioner’s experience, with the sole goal of providing an innovation’s clear description to encourage other educators to experiment with it themselves.

We strongly believe that JME should more clearly affirm its commitment to being a research journal. JME having a dedicated category for TI (or “Instructional Innovations” as they are currently called) sets this journal apart and contributes to its uniqueness (Beatty & Leigh, 2010). This must be preserved while also contributing to the ongoing development of a credible research tradition in management education. Thus, we suggest that the TI articles published in JME should clearly be research-based, as defined and characterized in the previous section.

This research positioning would have two major implications. First, it would help establish JME as a leading research journal in a field where a “still-fragile” research tradition (Canning & Masika, 2022) is slowly emerging. As a field, we need to take decisive steps to solidify management education as a legitimate research domain alongside disciplinary research. Unfortunately, our analysis suggests that a disciplinary researcher reading some of the TI articles we analyzed might reach the opposite conclusion. Second, the current positioning may confuse authors, leading some to attempt, unsuccessfully, to fit practice-based papers to the journal’s research-inspired criteria (as revealed by our Phase 2 analysis). Addressing this misalignment imposes a heavy burden on the editorial team. By communicating a clear research positioning, JME would signal that all submissions must be produced by proficient researchers and commit to having such articles reviewed by qualified researchers. In this context, the work of the editor and reviewers becomes comparable to that of any other research journal: very demanding, but manageable. With its continued emphasis on enhancing teaching and learning and ensuring the practical value of research findings to educational practice, JME would remain a valuable resource for practitioners. These readers would be empowered to make informed decisions on which innovations to adopt and implement in their classrooms.

As JME editors testified over the years, the quest for scientific legitimacy and the choice to value science over art and craft imply both gains and losses (Lund Dean & Forray, 2019). While we distinctly call for a shift toward a more scientific approach for TI articles, we do so with full acknowledgement and respect for all other types of contributions to the broad field of management education. It seems to us that this must have been the very goal underlying the creation in 2015 of Management Teaching Review (MTR), JME’s companion journal, created by MOBTS, which oversees both journals. MTR publishes “short, topically-targeted, and immediately useful resources for teaching and learning practice” (MOBTS, 2024d), for which empirical evidence of effectiveness is not required. The combination of JME and MTR appears to us to be a major contribution to the field, provided the distinction between the two genres is respected: JME would no longer publish practice-based articles or attempt to “dress them up” as research. These submissions should simply be systematically transferred to MTR. With a clear focus, each journal could then concentrate on the metrics with high visibility in its own field: JME on the primary metric for research journals (citations), while MTR could develop metrics related to the diffusion and use of the innovations it publishes (similar to those for teaching cases on platforms like HBR or The Case Centre). Both types of contributions can coexist and be valuable and inspiring in their own right. In our own research institution, publishing a TI in MTR is a valued addition to a faculty member’s teaching portfolio; however, publishing a research article in JME and having it count toward one’s research publication portfolio still presents a challenge. This challenge could be reduced as JME strengthens its status as a research journal and devotes energy to securing a place in the SSCI index in the JCR. While it may not please everyone, this is how the game is set today in many higher education institutions, and it is necessary for the field to gain legitimacy and for JME to attain the status of a legitimate research outlet.

Conclusion

JME started its journey by publishing practice-based articles, at a time when research-based articles virtually didn’t exist. Over the years, as the field of management learning and education matured, scientific research took an increasingly important place. Management education scholars now approach TI in the light of scientific knowledge about learning and assessment of learning. We are less naïve, more rigorous, and more circumspect. We want to be able to evaluate effectiveness claims based on empirical knowledge grounded in solid conceptual and methodological foundations. Rather than clearly choosing between these two paths (research and reflective practice), JME has tried “not to take sides” and to honor both approaches, respecting its practice-based origins and tradition of practice-based publications while embracing and encouraging the advancement of research. Our study and reflections are an invitation to reconsider this position, so that JME can continue its important contribution to the legitimacy of the management education and learning field. The “golden age” of management education research (Arbaugh & Hwang, 2015, p. 171) is yet to come, and research-driven articles about teaching innovations can contribute to achieving the scientific legitimacy we strive for (Mason et al., 2024).

Appendix

EFFECTIVENESS—INSTANCES OF EVIDENCE.

| WHAT (what was measured) | SOURCE (where did the data come from) | Connection with learning objectives | Content |
|---|---|---|---|
| 1. Actual learning<br>Perceived learning:<br>2. Learning (as perceived by student)<br>3. Learning (as perceived by instructor)<br>4. Learning (as perceived by 3-party) (3-party: a person not directly involved in the instructor-student relationship; for example, a pedagogical expert or another instructor acting as an observer)<br>5. Undetermined/ambiguous learning (context/lack of information make it difficult to categorize the type of learning being measured)<br>6. Medium/long-term learning (undetermined/ambiguous)<br>2A: actual LT learning<br>2B: perceived LT learning<br>Satisfaction:<br>7. Satisfaction (as perceived by student)<br>8. Satisfaction (as perceived by instructor)<br>9. Satisfaction (as perceived by 3-party)<br>Engagement/motivation:<br>10. Engagement (as perceived by student)<br>11. Engagement (as perceived by instructor)<br>12. Engagement (as perceived by 3-party)<br>Others:<br>13. Instructor’s satisfaction<br>14. Class participation<br>15. Teamwork | 1. TI-related score (test/survey)<br>2. TI-related score (qualitative content; essay, case analysis, self-reflection journal)<br>2R: structured analysis of material was done (score; rubric; content-analysis)<br>2Q: random quotes are presented (no selection process)<br>3. TI debriefing<br>4. Overall course grade (not specifically TI-related)<br>5. General exam score (not specifically TI-related)<br>6. SET<br>7. Survey (not designed to measure actual learning)<br>8. Interview<br>9. General TI feedback<br>10. Reflection<br>11. Observation | Yes = The connection is established between the evidence presented and the learning objective it aims to measure | 1 = only positive<br>2 = when discussing this instance of evidence, at least one negative aspect is presented |

Acknowledgements

The authors extend their heartfelt gratitude to the anonymous reviewers, whose exceptional insight, open-mindedness, and feedback were instrumental in shaping both our thinking and the article itself in subtle and significant ways. We also wish to thank special issue guest co-editor Diana Bilimoria and JME co-editor Jennifer Leigh for their invaluable support in navigating the process.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.