Abstract

Discussions about the status of scholarly journals in management have tended to revolve around impact factor and standing on relevant lists—cues whose validity has been oft-debated. We introduce a new metric for gauging the status of scholarly journals. Syllabus share represents the proportion of doctoral seminar syllabi comprised of articles from a given journal. We introduce this metric by drawing on a content analysis of 179 management doctoral syllabi (90 micro seminars, 89 macro seminars) from 53 business schools in North America, Europe, and Asia. Our results showed that syllabus share was distinct from impact factor and standing on relevant ranking and advisory lists. Moreover, syllabus share was correlated with perceptions of journal status on the part of both junior and senior scholars. Syllabus share provides a more continuous view of journal status (in contrast to lists) while allowing results to be contextualized (in contrast to impact factor). Our discussion focuses on the value of syllabus share for a more pluralistic conceptualization of journal status and its contributions to the scholarship of management education.

One of the most oft-debated topics by management scholars concerns the status of the field’s journals—how they should be perceived, how they should be viewed relative to one another, and how such judgments should affect relevant decisions. Debates about the status of the field’s journals are particularly intense among doctoral students because they are currently undergoing socialization with respect to their field and departments. Socialization is the process through which people become a part of a collective’s pattern of activities (Ashforth, Sluss, & Harrison, 2007) and involves acquiring knowledge about those activities and the context in which they occur (Ashforth, Sluss, & Saks, 2007).

Unfortunately, doctoral students possess few cues for making informed judgments about the status of journals. They may be aware of annual updates to journal impact factors: the number of citations a journal receives in a given year to articles it published in the 2 prior years, divided by the number of articles it published in those 2 prior years (Archambault & Larivière, 2009). Their newness to the field may, however, prevent them from putting a given number in a broader context. They may also be aware of journal lists associated with relevant rankings or particular advisory bodies. Again, though, their inexperience may prevent them from having an accurate view of the origins and limitations of such lists.

The purpose of this paper is to introduce a new metric for gauging the status of journals in management—one that should be particularly salient to doctoral students. That metric is syllabus share—the proportion of doctoral seminar syllabi comprised of articles from a given journal. Doctoral students can only be given so many articles to read in a given week, making syllabi collections that must be carefully curated (Ashford, 2013; Rasheed & Priem, 2020). Journals whose articles appear more frequently in syllabi will be higher in syllabus share—providing a more positive cue for gauging journal status. Journals whose articles appear less frequently in syllabi will be lower in syllabus share—providing a less positive cue for gauging journal status.

Our introduction of syllabus share draws on 179 management doctoral syllabi (90 micro seminars, 89 macro seminars) from 53 business schools in North America, Europe, and Asia. We are not aware of any prior content analysis of doctoral syllabi in management, though a similar method was used in social psychology to explore gender bias in doctoral education (Skitka et al., 2021). Importantly, we also begin to construct a nomological network for syllabus share. Specifically, we show that syllabus share is distinct from impact factor and standing on relevant ranking and advisory lists. We also show that syllabus share is correlated with perceptions of journal status on the part of junior and senior scholars. Such results provide discriminant and convergent validation evidence for the new status indicator.

Our work offers important contributions to the literature on journal status. A continuing call in that literature is for the emergence of a more pluralistic conceptualization of journal status (Aguinis et al., 2020; Anderson et al., 2021; Chapman, 2012; Haley et al., 2017; Kacmar & Whitfield, 2000; McWilliams et al., 2005; Podsakoff et al., 2005). Syllabus share represents a new status cue—and one that may be quite distinct from existing cues. In addition, the literature has often bemoaned the artificial dichotomization of journal status created by the “an A is an A” audit culture (Aguinis et al., 2020; Anderson et al., 2021; Bartunek, 2020). Syllabus share provides one response to that criticism because it is a continuous metric that allows for nuanced distinctions among journals.

Aside from its role in gauging journal status, syllabus share provides a suggestive look at which journals encapsulate the foundational works and recent advancements that mark the field of management. After all, syllabi represent one of the central ways of documenting the scholarship of both teaching and learning (Brown et al., 2013). In reflecting on his scholarly career, Whetten (2007) noted that the title of “professor” can be earned when one realizes what one wants to profess. For his part, he argued that one of the most impactful forms of professing was the choice of reading material. Scholars who disproportionally choose reading material from a given journal are—implicitly or explicitly—elevating that journal as especially foundational to the field. Regardless of whether such choices are driven by the importance of the contribution, the quality of the theorizing, or the rigor of the empirics, it clearly means something if some journals are more represented on syllabi than others. Thus, syllabus share can also serve as an index of how valuable journals have been to the field.

Our manuscript unfolds as follows. First, we review the existing metrics that are currently available for gauging journal status. Then we describe how syllabus share could complement those existing metrics. That section puts forth specific research questions about how journals will rank on syllabus share, how distinct syllabus share will be from existing status metrics, whether syllabus share will correlate with scholar perceptions of journal status, and how syllabus share will differ across the micro and macro sides of the field. Our Method section then describes the sampling and coding strategies used to operationalize syllabus share. We also describe our gathering of other status cues and scholar perceptions of journal status. Finally, our Results section provides syllabus share rankings and empirical analyses of our specific research questions. To help unpack our findings, we also describe a qualitative study of how the instructors attached to our syllabi actually went about choosing the readings for their seminars.

Existing Metrics for Gauging Journal Status

Status is a central construct in management. Magee and Galinsky (2008) define status as the degree to which someone or something is respected or admired. Washington and Zajac (2005) define it as a socially constructed and accepted ranking of targets in some larger system. Status is one member of a larger class of social hierarchy constructs—alongside constructs like power, reputation, and legitimacy. What makes status unique is its emphasis on relative standing within some system (Bitektine et al., 2020; Djurdjevic et al., 2017).

That emphasis on relative standing explains why journals are often described in status terms. After all, scholars in the field of management can only submit to one outlet at a time. If a given manuscript fits the mission of multiple journals—and the conversation it is contributing to is occurring in multiple journals (Huff, 2009)—then socially constructed standing becomes a salient factor in deciding where to submit. That emphasis on relative standing is less endemic to other terms sometimes used to evaluate journals, such as influence (Podsakoff et al., 2005) or productivity (McWilliams et al., 2005).

If journals are often discussed in status terms, how do scholars who are new to the field begin to learn about journal status? The literature on newcomer socialization emphasizes the role of discourse—being a part of discussions that provide literal and symbolic cues to learn about the organization (Ashforth, Sluss, & Harrison, 2007). Doctoral students are exposed to discourse in a number of settings, from hallways to lunch tables to classrooms to email threads. At some point, such discourse is likely to reference three indicators commonly used to judge journal status: impact factor, ranking lists, and advisory lists. We describe these indicators of status below, to help illustrate how syllabus share could be complementary to them.

Impact Factor

Impact factors came into being as a tool to guide librarians’ decisions about which journals to include on their shelves (Archambault & Larivière, 2009). They attained more salience when the Institute for Scientific Information (ISI) created its Journal Citation Report (JCR), complete with a formula that divides the citations a journal receives in a given year to articles from the prior 2 years by the number of articles published in those prior 2 years. At the time of this writing, Clarivate provides the JCR for 12,856 journals indexed into 238 categories. The categories most relevant to management include behavioral sciences, business, communication, economics, ethics, industrial relations and labor, management, operations research and management science, political science, psychology-applied, psychology-experimental, psychology-social, and sociology.

On the one hand, impact factors have value because they provide an objective complement to more subjective ratings of journals by subject matter experts. On the other hand, impact factors have limitations that inject noise to go along with their signal. For example, impact factors vary significantly across fields, as a function of field size, typical article length, and citation and reference norms (Adam, 2002; Amin & Mabe, 2000; Archambault & Larivière, 2009; Seglen, 1997). As another example, review articles garner significantly more citations, providing an advantage for journals that specialize in (or partially include) such pieces (Adam, 2002; Archambault & Larivière, 2009; Falagas & Alexiou, 2008; Seglen, 1997). In addition, although the numerator of the impact factor counts citations to any type of article, the denominator excludes editorials, comments, letters, and news items (Adam, 2002; Amin & Mabe, 2000; Archambault & Larivière, 2009; Falagas & Alexiou, 2008; Moed & Van Leeuwen, 1995; Seglen, 1997)—pieces which can garner citations.

Ranking Lists

Ranking lists represent journal lists that feed into annual rankings of business schools and management departments. The most venerable ranking list belongs to the Financial Times, which has used it to generate its Research Rank since 1999 (https://www.ft.com/content/3405a512-5cbb-11e1-8f1f-00144feabdc0). The Research Rank combines the absolute number of publications by a business school’s faculty in 50 journals over a 3-year period, weighted by the number of full-time faculty. The Research Rank then represents 10% of the total score used to generate the FT Global MBA Ranking—one of the most salient rankings of business schools. The list of 50 journals includes 21 that would typically be considered management outlets.

The University of Texas at Dallas (UTD) created its own ranking list in 2005, with some similarities to the Financial Times Research Rank (https://jsom.utdallas.edu/the-utd-top-100-business-school-research-rankings/). The ranking combines the absolute number of publications by a business school’s faculty in 24 business journals over a 5-year period. The list of 24 journals includes seven considered management outlets: Academy of Management Journal, Academy of Management Review, Administrative Science Quarterly, Journal of International Business Studies, Management Science, Organization Science, and Strategic Management Journal.

A third ranking list is focused solely on management departments, as opposed to broader business schools. Appearing in its original form in 2002, the TAMUGA Rankings tally the absolute number of publications by management faculty in eight journals in a given year and over a 5-year period (https://www.tamugarankings.com/methodology/). The eight journals are Academy of Management Journal, Academy of Management Review, Administrative Science Quarterly, Journal of Applied Psychology, Organizational Behavior and Human Decision Processes, Organization Science, Personnel Psychology, and Strategic Management Journal.

Advisory Lists

Advisory lists are compiled by associations or councils to provide journal status cues to relevant members and stakeholders. Although Harzing (2020) summarizes a number of advisory lists, two have gained particular mindshare in the field. One is the Academic Journal Guide (AJG) which is published by the Chartered Association of Business Schools (https://charteredabs.org/academic-journal-guide-2015/). The AJG first appeared in 2004 and utilizes judgments from a committee of experts while being informed by impact factor. The AJG ranks journals with one of five ratings: 1, 2, 3, 4, or 4*. A journal that receives a 4* is recognized as a journal with distinction in that particular field.

The other is the ABDC Journal Quality List (ABDC) which is published by the Australian Business Deans Council (https://abdc.edu.au/abdc-journal-quality-list/). The ABDC first appeared in 2008 and utilizes judgments from a panel of researchers and member-institutions in Australia and New Zealand. The ABDC ranks journals with one of four ratings: C, B, A, or A*. A journal that receives an A* is from the highest quality category—representing the top journals in the field.

Taken together, advisory lists and ranking lists provide both signal and noise when gauging journal status. Beginning with the signal, they provide some external validation about the journals likely to be weighed most heavily in promotion and tenure decisions. Turning to the noise, the criteria by which journals are chosen or retained for the lists are often unclear (Adler & Harzing, 2009). Of the five lists, FT, AJG, and ABDC are revised periodically, with UTD and TAMUGA remaining consistent since their inception. In addition, the FT, UTD, and TAMUGA lists create an artificial dichotomization of “counts” versus “doesn’t count” whereas journal status is more continuous (Aguinis et al., 2020; Anderson et al., 2021; Rasheed & Priem, 2020). Although the AJG and ABDC lists offer more interval-style ratings, there is still variation among the outlets that receive the same rating. Finally, three of the five lists—FT, UTD, and TAMUGA—are focused primarily on business outlets. They are therefore more silent on the status of basic disciplinary outlets in psychology, sociology, or economics.

Syllabus Share as a Metric for Status

Given that the space on doctoral syllabi is valuable terrain (Ashford, 2013; Rasheed & Priem, 2020), it means something when some journals regularly appear on syllabi across institutions. Likewise, it means something when journals rarely appear on syllabi across institutions. The introduction of syllabus share as a metric for journal status answers calls for an increased emphasis on knowledge transfer in management education. In their discussion of the impact of lists, Anderson et al. (2021) argued that more attention should be paid to knowledge transfer—to whether certain sources are used in teaching. Aguinis et al. (2019) provided one example of capturing knowledge transfer, by gauging the degree to which journals are referenced in undergraduate textbooks.

Our work therefore begins by providing a “proof of concept” for syllabus share—illustrating what it would look like using doctoral seminar syllabi. However, we also wanted to begin constructing a nomological network for this new status cue. Specifically, it is important to explore the distinctiveness of syllabus share from impact factor and standing on ranking and advisory lists. If instructors begin their syllabi curations by solely pulling from high impact outlets, then syllabus share would be largely redundant with impact factor. Similarly, if instructors only draw from journals on their favored ranking list—or that earn the top rating in AJG or ABDC—then syllabus share would be largely redundant with those status cues. Thus, we were interested in the degree to which our new status cue showed evidence of discriminant validation from existing cues.

Research Question 1: How do the various journals associated with the field of management rank on syllabus share, based on doctoral syllabi from management PhD programs?

Research Question 2: As a metric for gauging journal status, is syllabus share distinct from impact factor and standing on ranking and advisory lists?

Of course, the notion of knowledge transfer lies at the core of socialization (Ashforth, Sluss, & Harrison, 2007; Ashforth, Sluss, & Saks, 2007; Cooper-Thomas & Anderson, 2006; Wang et al., 2015), and there are reasons to expect syllabus share to be particularly salient to doctoral students as they grapple with the status of journals. Seminars are likely to be a conduit for the discourse experienced by doctoral students as they learn who the key figures are, how their work has contributed, and where it was published. Syllabi themselves could act as a discursive practice (Ashforth, Sluss, & Harrison, 2007), with additional discourse occurring as papers are discussed formally or during unplanned tangents, breaks during classes, and meals after classes.

In addition, doctoral syllabi could be viewed as a form of institutionalized socialization—a purposeful attempt to integrate newcomers into an orthodoxy about the way departments and fields function (Jones, 1986; Van Maanen & Schein, 1979). As Wang et al.’s (2015) model of socialization illustrates, formal organizational practices—like doctoral curricula—can be key drivers of institutionalized learning. That contention echoes the importance of written materials during socialization (Filstad, 2004), or what Cooper-Thomas and Anderson (2006) describe as “the organizational literature” (p. 499).

Doctoral syllabi are more than just literature, however, as they also provide insights into the faculty who curated them. Theorizing in the socialization literature is clear about the importance of mentors and supervisors who act as the agents of socialization (Ashforth, Sluss, & Harrison, 2007; Wang et al., 2015). Mentors and supervisors play the role of insiders who possess the knowledge that newcomers lack (Cooper-Thomas & Anderson, 2006). In addition, they become role models who illustrate how seasoned veterans think and behave (Filstad, 2004). The instructor of the seminar should be viewed in just this fashion, especially given that doctoral courses are typically taught by tenured faculty.

We therefore sought to extend our nomological network for syllabus share by seeing whether it converged with perceptions of journal status on the part of two distinct groups. One group was recent graduates of the doctoral programs in the 53 business schools we sampled—graduates who took seminars like those our coding will describe and are now junior scholars in other business schools. A correlation between syllabus share and junior scholar perceptions would be consistent with the socialization logic described above. Syllabi could have been a form of institutional socialization that shaped discourse and encouraged role modeling of more senior insiders. The other group was full professors in the 53 business schools we sampled—senior scholars who have been part of the same units that produced the syllabi that will be described in our coding. A correlation between syllabus share and senior scholar perceptions would likely indicate a causal flow opposite to the junior scholar perceptions. Here the senior scholars would have helped maintain or reinforce the norms that might have shaped the instructor’s curation decisions. Regardless, associations between syllabus share and junior and senior faculty perceptions of journal status would provide convergent validation evidence for our new status indicator.

Research Question 3: Is syllabus share correlated with junior and senior scholar perceptions of journal status?

An additional drawback to some existing indicators of journal status is that they offer limited contextualization. Management is a particularly eclectic field given differences in levels of analysis, research design, and disciplinary underpinnings. The Academy of Management represents that eclectic nature through its various divisions. Wiseman and Skilton (1999) examined patterns in those division memberships, identifying higher-order clusters created from members belonging to two or more divisions. The micro cluster was largely indicated by belonging to the organizational behavior and human resources divisions. The macro cluster was largely indicated by belonging to the strategic management and organization and management theory divisions.

That eclectic nature results in two different forms that the departments that sponsor doctoral programs might take. One form is a “big tent” department—often labeled “Management”—that includes micro and macro faculty and doctoral students. Another form is a more specialized standalone unit—often labeled in the same fashion as the Academy’s divisions (e.g., “OBHR” or “Strategy”). Consider the faculty and doctoral students in a more specialized micro group. They might wonder about the degree to which basic disciplinary journals in psychology are cited in the management field. Unfortunately, impact factor does not provide such contextualization as it is based on total citations—regardless of where they originate. The faculty and students in our example might also wish that relevant ranking lists were more nuanced (or comprehensive) with respect to micro outlets.

We therefore sought to provide some contextualization for syllabus share. Specifically—in addition to the overall results relevant to Research Question 1—we provide separate results for micro and macro seminars. The micro results provide status information rooted in that side of the field, including which “big tent” journals are more salient, how more specialized journals compare to them, and which basic psychology journals are most visible. Likewise, the macro results illustrate which “big tent” journals are more salient in that side of the field, how more specialized journals compare to them, and which basic sociology and economics journals are most visible.

Research Question 4: How do syllabus share rankings vary across micro and macro seminars?

Method

Sampling of Syllabi

In order to introduce syllabus share as a metric for gauging journal status, we needed a sample of syllabi that had three qualities. First, the syllabi needed to come from doctoral programs at research-productive business schools—schools whose scholarly profiles would provide a face-valid foundation for the syllabus share benchmark. Second, the syllabi needed to be representative of the worldwide nature of the management field. Third, the syllabi needed to provide a representative sample of both micro and macro seminars.

We therefore rooted our sampling in two rankings of business school research productivity. The first was the UTD Worldwide Top 100, which shows the 100 most productive business schools based on 5-year totals of publications in the 24 UTD journals. Our sampling occurred in the spring of 2017, so the Top 100 was based on research productivity from 2013 to 2017. The second was the FT Global MBA Ranking, which highlights 100 business schools based, in part, on the FT Research Rank—a 3-year total of publications in the 50 FT journals. In 2017, the Research Rank component was based on productivity from 2015 to 2017.

After considering the two lists in tandem—and after removing schools that did not have doctoral programs—we wound up with a total of 113 business schools. We then built a database of contact people that included the email addresses of PhD coordinators and department chairs. We reached out to those contact people, requesting all syllabi that were taught within the doctoral program by the department’s faculty. Notably, the emails we sent to request syllabi did not reference the creation of a metric for gauging journal status. Rather, the emails only mentioned a research study focused on doctoral curricula. The request for syllabi asked for “any PhD content seminar that would fall under the broad heading of Management. Those may include more general seminars on Organizational Behavior, Strategic Management, Human Resource Management, and Organization Theory, or more specific seminars on subtopics of those areas.”

The email to request syllabi was sent near the end of the 2016 to 2017 academic year, with follow-up reminders sent over the summer and into the fall. Of the 113 schools contacted, 53 schools responded to our request and sent syllabi, for a response rate of 47%. We gauged potential response bias by comparing the 53 schools that responded to our email with the 60 schools that did not. Using a two-tailed t test, we found no statistically significant differences between the two groups on their UTD Worldwide Top 100 ranking (t = 1.49, p = .14), their FT Global MBA ranking (t = 1.41, p = .16), their total university enrollment (t = 1.55, p = .12), or whether the school was in a majority-English-speaking country (t = 1.78, p = .08). The 53 schools in our sample are shown in Appendix A. We received 179 codeable syllabi: 90 from micro seminars and 89 from macro seminars. That sample size compares favorably to Skitka et al.’s (2021) coding of social and personality psychology syllabi, which involved 72 syllabi. Appendix B provides representative course names for the syllabi we received.
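The response-bias comparison above can be sketched in a few lines. The text reports only a "two-tailed t test," so the pooled-variance (Student's) form used here is an assumption, and the ranking values are hypothetical illustrations rather than the study's data. The function name `pooled_t_statistic` is likewise introduced only for this sketch.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(group_a, group_b):
    """Student's two-sample t statistic with a pooled variance estimate."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    standard_error = sqrt(pooled_variance * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / standard_error


# Response rate reported in the text: 53 of the 113 schools contacted.
response_rate = 53 / 113

# Hypothetical ranking positions, for illustration only (not the study's data).
responders = [10, 20, 30, 40]
nonresponders = [20, 30, 40, 50]
t_value = pooled_t_statistic(responders, nonresponders)

print(f"response rate: {response_rate:.0%}")
print(f"t = {t_value:.2f}, df = {len(responders) + len(nonresponders) - 2}")
```

Turning the statistic into a two-tailed p value requires the t distribution, which a statistics library would normally supply (for example, `scipy.stats.ttest_ind`).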

Coding of Syllabi

We created a coding spreadsheet that would allow us to identify and count the different journals used in each seminar. The authors met in person with a team of research assistants to review the coding sheet and practice the procedures. Because each team member was allowed to code independently, we created a Slack channel to manage group messaging. Coders were encouraged to post excerpts of any syllabus details they were unsure about, with the authors able to clarify coding for the benefit of the entire team. In some cases, syllabi included optional readings in addition to required readings. We coded those optional readings as well, given that students typically still read them, whether in preparation for class or when studying for comprehensive exams. The average time to code each syllabus was 30 minutes.

Calculation of Syllabus Share

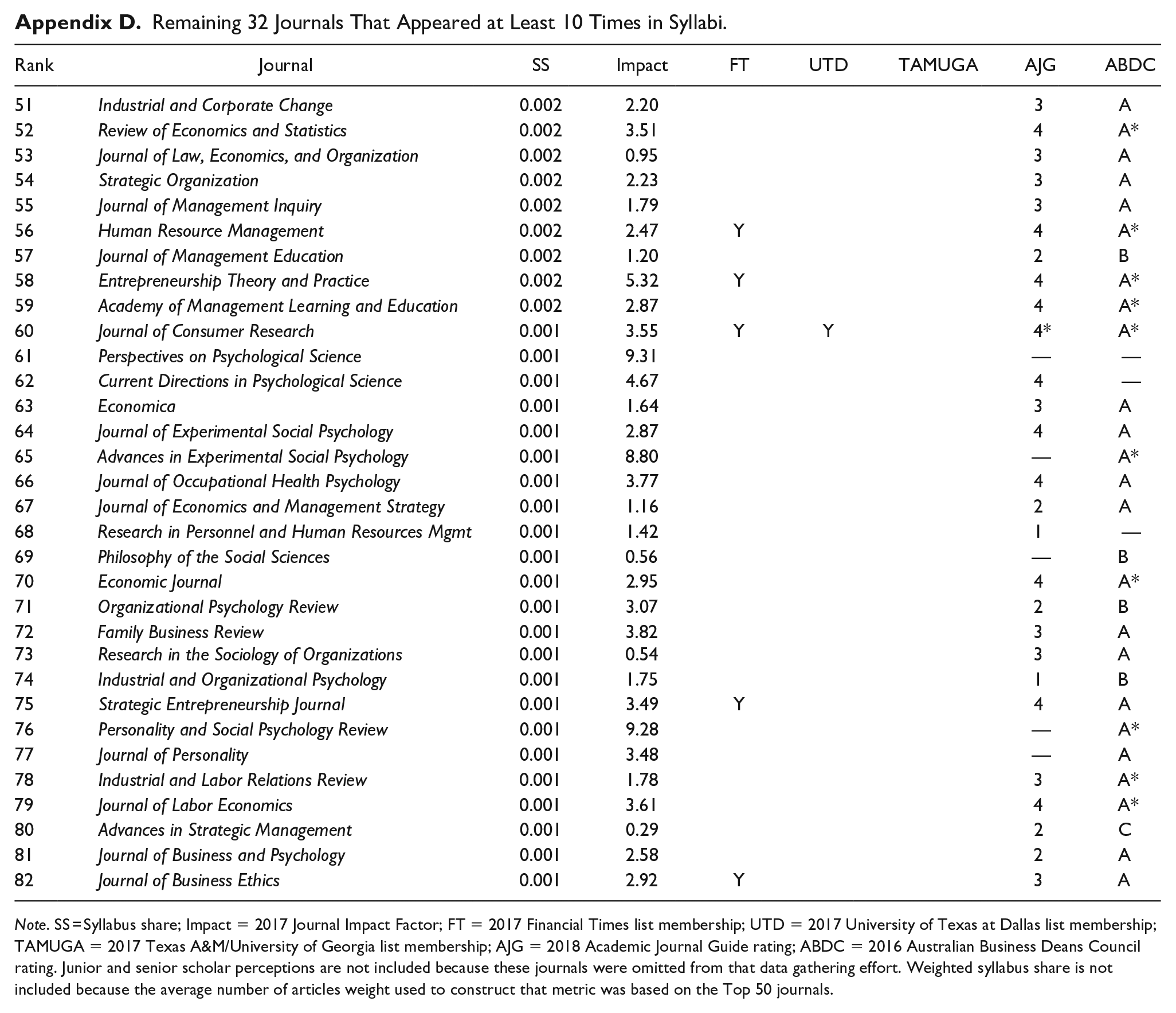

There were a total of 16,740 readings across the 179 syllabi, meaning that each syllabus contained an average of 94 readings. Of those, 2,616 were either books or book chapters that were outside the scope of our study. That left 14,124 journal articles across the 179 syllabi—79 journal articles per syllabus. That total sample size of 14,124 journal articles compares favorably to Skitka et al. (2021), where the sample size was 3,415 journal articles. In all, 376 journals were represented in our sample, with 82 of those appearing 10 or more times across the 179 syllabi. We focused our analyses on the Top 50 of those journals because our gathering of scholar perceptions of journal status required participants to rate journals one-by-one. We felt that limiting our set to 50 would retain a comprehensive sample without overwhelming those participants. Appendix C lists those Top 50 journals. Appendix D lists the remaining 32 journals that appeared 10 or more times in our syllabi but were excluded from the gathering of scholar perceptions of journal status.

To calculate syllabus share, we first divided the number of times a journal appeared on a given syllabus by that syllabus’s total number of journal articles. So, if Administrative Science Quarterly appeared six times on a given syllabus, and that syllabus included 62 total journal articles, Administrative Science Quarterly earned a quotient of 0.097 (6/62) for that syllabus. The syllabus share for Administrative Science Quarterly was then the average of those 179 quotients across the 179 syllabi. The metric is therefore interpreted as follows: on average, what proportion of a syllabus’s journal articles come from a given journal?
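The calculation just described can be illustrated with a minimal sketch. The function name `syllabus_share`, the input layout (one list of journal names per syllabus), and the toy data are all assumptions made for illustration. The sketch also assumes that a journal absent from a syllabus contributes a quotient of 0 for that syllabus, and that every syllabus contains at least one journal article.

```python
from collections import Counter


def syllabus_share(syllabi):
    """Average, across syllabi, of each journal's proportion of a syllabus's journal articles.

    `syllabi` is a list of lists; each inner list holds the journal name of every
    journal article on one syllabus (books and book chapters already removed).
    """
    quotient_sums = Counter()
    for articles in syllabi:
        for journal, count in Counter(articles).items():
            # Per-syllabus quotient, e.g. 6 / 62 = 0.097 in the text's example.
            quotient_sums[journal] += count / len(articles)
    # Syllabi on which a journal never appears add 0 to its sum,
    # so the average is taken over all syllabi.
    return {journal: total / len(syllabi) for journal, total in quotient_sums.items()}


# Hypothetical toy data: two syllabi instead of 179.
toy_syllabi = [
    ["Administrative Science Quarterly"] * 6 + ["Other"] * 56,  # 6 of 62 articles
    ["Administrative Science Quarterly"] * 2 + ["Other"] * 18,  # 2 of 20 articles
]
shares = syllabus_share(toy_syllabi)
print(round(shares["Administrative Science Quarterly"], 3))  # 0.098
```

Because each syllabus's quotients sum to 1, the resulting shares also sum to 1 across all journals, which is what allows the metric to be read as a proportion.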

Although the version of syllabus share described above is straightforward, it fails to consider the fact that some journals publish more articles than others (Wiseman & Skilton, 1999). We therefore also calculated what we term weighted syllabus share, which multiplies syllabus share by a weight that captures whether a journal publishes more or less than the average number of articles annually. We created a weight for each journal in the following way. First, we coded the publication year for the journal articles in our sample in order to see the distribution of those years. The mean publication year was 2002, with a standard deviation of 11 years. Thus, the majority of articles in our sample were published between 1991 and 2013 (within one standard deviation of the mean). We then entered that date range into a search of Web of Science for the Top 50 journals, to see how many articles they published during that date range. Mirroring the way the denominator of impact factor is calculated (Archambault & Larivière, 2009), we limited that search to what Web of Science classifies as articles or review articles, omitting book reviews, editorial materials, proceedings papers, corrections, biographical items, additions, and news items. We then divided that total number of articles by 22 to get the average number per year for the 1991 to 2013 span.

Across the Top 50 journals in our sample, the average number of articles published per year from 1991 to 2013 was 73. We then created the weight for weighted syllabus share by dividing the Top 50 average of 73 by a journal’s own annual average. The weight therefore enhances syllabus share for journals that publish fewer than the average number of articles, provides little change for journals that publish around the average number of articles, and diminishes syllabus share for journals that publish more than the average number of articles.
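As a rough illustration of the weighting, the sketch below applies the weight (the Top 50 annual average of 73 divided by a journal's own annual average) to a syllabus share value. The function names and the example journals' publication volumes are hypothetical and chosen only to show the direction of the adjustment.

```python
TOP_50_ANNUAL_AVERAGE = 73  # average articles per year across the Top 50 journals (from the text)


def publication_weight(journal_annual_average, field_annual_average=TOP_50_ANNUAL_AVERAGE):
    """Weight above 1 for low-volume journals, below 1 for high-volume journals."""
    return field_annual_average / journal_annual_average


def weighted_syllabus_share(share, journal_annual_average):
    """Syllabus share multiplied by the journal's publication weight."""
    return share * publication_weight(journal_annual_average)


# Hypothetical journals that all have the same unweighted syllabus share of 0.05.
low_volume = weighted_syllabus_share(0.05, journal_annual_average=36.5)   # weight 2.0
average_volume = weighted_syllabus_share(0.05, journal_annual_average=73)  # weight 1.0
high_volume = weighted_syllabus_share(0.05, journal_annual_average=146)   # weight 0.5

print(low_volume, average_volume, high_volume)  # 0.1 0.05 0.025
```

The three hypothetical journals start from the same share, so any difference in the output comes entirely from how many articles each publishes per year.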

Other Indicators of Journal Status

We also captured other indicators of journal status in order to explore Research Questions 2 and 3—and to begin constructing a nomological network for syllabus share.

Impact Factor

We accessed the 2017 impact factor for the journals in our syllabi, taken from Clarivate’s JCR. The 2017 impact factor for a given journal reflects citations in 2017 to articles published in 2015 and 2016, divided by the total number of articles published in 2015 and 2016.
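For readers less familiar with the metric, the two-year impact factor can be sketched as a simple ratio. The function name and the citation and article counts below are hypothetical; actual values come from Clarivate's JCR.

```python
def two_year_impact_factor(citations_in_year, articles_prior_two_years):
    """Citations received in a year to the prior two years' articles, per article."""
    if articles_prior_two_years <= 0:
        raise ValueError("The number of articles must be positive.")
    return citations_in_year / articles_prior_two_years


# Hypothetical journal: 600 citations in 2017 to its 2015 and 2016 articles,
# with 80 articles published in 2015 and 70 in 2016.
impact_factor_2017 = two_year_impact_factor(600, 80 + 70)
print(impact_factor_2017)  # 4.0
```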

Standing on Lists

We created three dummy variables corresponding to membership in the FT, UTD, and TAMUGA lists as of 2017. Journals received a 0 if they were not on a particular list and 1 if they were. We used the 2018 edition of the AJG because it was closer to our 2017 time frame than the 2015 edition. We treated the 1 to 4* scale as interval, including the distinction between 4 and 4*. None of the journals in the Top 50 were rated 1 or 2. Thus, we coded 3, 4, and 4* as 0, 1, and 2, respectively. We used the 2016 edition of the ABDC because it was closer to our 2017 time frame than the 2019 edition. We treated the C to A* scale as interval, including the distinction between A and A*. None of the journals in the Top 50 were rated C or B. Thus, we coded A and A* as 1 and 2, respectively.
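The coding scheme above can be summarized in a short sketch. The dictionary and function names are assumptions made for illustration, and the example journal is fictional. Ratings outside the ranges observed in the Top 50 (AJG 1 or 2, ABDC C or B) are deliberately left unmapped because the text does not assign them codes.

```python
# Codes taken from the text: AJG 3, 4, 4* -> 0, 1, 2; ABDC A, A* -> 1, 2.
AJG_CODES = {"3": 0, "4": 1, "4*": 2}
ABDC_CODES = {"A": 1, "A*": 2}


def code_list_standing(on_ft, on_utd, on_tamuga, ajg_rating, abdc_rating):
    """Return the five list-standing variables for one journal."""
    return {
        "ft": int(on_ft),          # dummy: 1 if on the FT list, else 0
        "utd": int(on_utd),        # dummy: 1 if on the UTD list, else 0
        "tamuga": int(on_tamuga),  # dummy: 1 if on the TAMUGA list, else 0
        "ajg": AJG_CODES[ajg_rating],
        "abdc": ABDC_CODES[abdc_rating],
    }


# Fictional journal, for illustration only.
example = code_list_standing(
    on_ft=True, on_utd=False, on_tamuga=False, ajg_rating="4", abdc_rating="A*"
)
print(example)  # {'ft': 1, 'utd': 0, 'tamuga': 0, 'ajg': 1, 'abdc': 2}
```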

Junior Scholar Status Perceptions

We identified recent graduates of the 53 doctoral programs that provided syllabi by scanning program websites and LinkedIn profiles. Given that our syllabi were gathered in the 2016 to 2017 academic year, we looked for graduates in the 2019 to 2022 time frame. We identified 144 recent graduates from the 53 programs. We asked them to “please choose the letter grade that corresponds to how you perceive the overall status of each journal listed below,” with the list being our Top 50 journals. The letter grades provided were A, A-, B+, B, B-, C+, and C. Their letter grades were then translated to the typical GPA numbers: 4.0 for A, 3.7 for A-, 3.3 for B+, 3.0 for B, 2.7 for B-, 2.3 for C+, and 2.0 for C. They were given a $10 Amazon eGift Card for their participation. We received surveys from 45 of the 144 recent graduates, for a response rate of 31%. We gauged potential response bias by comparing the 45 junior scholars who responded to our email with the 99 junior scholars who did not. Using a two-tailed t test, we found no statistically significant differences between the two groups on their school’s UTD Worldwide Top 100 ranking (t = 1.51, p = .14), FT Global MBA ranking (t = 0.96, p = .34), total university enrollment (t = 1.51, p = .14), or presence in an English majority speaking country (t = 0.59, p = .56). We averaged across the 45 ratings for each journal to provide a GPA-style rating. Those ratings were shown to be reliable, with an ICC(1) of 0.48 and an ICC(2) of 0.98.
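The translation from letter grades to a GPA-style rating can be sketched as follows. The grade-to-number mapping is the one given in the text; the function name and the four example grades are hypothetical.

```python
from statistics import mean
from typing import Iterable

# Letter grade to GPA points, as listed in the text.
GPA_POINTS = {
    "A": 4.0, "A-": 3.7, "B+": 3.3, "B": 3.0,
    "B-": 2.7, "C+": 2.3, "C": 2.0,
}


def journal_status_rating(letter_grades: Iterable[str]) -> float:
    """Average the GPA points across all respondents' grades for one journal."""
    return mean(GPA_POINTS[grade] for grade in letter_grades)


# Hypothetical grades from four respondents for a single journal.
example_rating = journal_status_rating(["A", "A-", "B+", "A"])
```

In the study itself, the average for each journal was taken across all 45 responding junior scholars rather than four.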

Senior Scholar Status Perceptions

We identified full professors at the 53 departments that provided our syllabi by scanning departmental websites. We omitted any instructors who had provided syllabi from that group, so that senior scholar status perceptions would be independent of the curation decisions that resulted in our syllabus share results. We identified 402 full professors at the 53 departments. We followed the same procedure used for junior scholar perceptions—asking them to rate 50 journals in exchange for a $10 Amazon eGift Card. We received surveys from 88 of the 402 full professors, for a response rate of 22%. We gauged potential response bias by comparing the 88 senior scholars who responded to our email with the 314 senior scholars who did not. Using a two-tailed t test, we found no statistically significant differences between the two groups on their school’s UTD Worldwide Top 100 ranking (t = 0.78, p = .44), FT Global MBA ranking (t = 0.20, p = .84), or presence in an English majority speaking country (t = 1.21, p = .23). Those who responded did come from slightly larger universities, however (t = 2.15, p = .04). We averaged across the 88 ratings for each journal to provide a GPA-style rating. Those ratings were shown to be reliable, with an ICC(1) of 0.46 and an ICC(2) of 0.99.
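The relationship between the two reliability estimates can be checked with the standard Spearman-Brown step-up relation, in which ICC(2) is the reliability of the mean of k raters implied by a single-rater ICC(1). The text does not state which formula was used, so the sketch below is an assumption; it also assumes that every journal was rated by all k respondents. The function name is hypothetical.

```python
def icc2_from_icc1(icc1: float, k: int) -> float:
    """Spearman-Brown step-up: reliability of the mean of k raters."""
    return (k * icc1) / (1 + (k - 1) * icc1)


# Values reported in the text: ICC(1) and number of respondents.
junior_icc2 = icc2_from_icc1(0.48, 45)  # junior scholars
senior_icc2 = icc2_from_icc1(0.46, 88)  # senior scholars
```

Under these assumptions the results round to 0.98 and 0.99, in line with the ICC(2) values reported for the junior and senior samples.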

Results

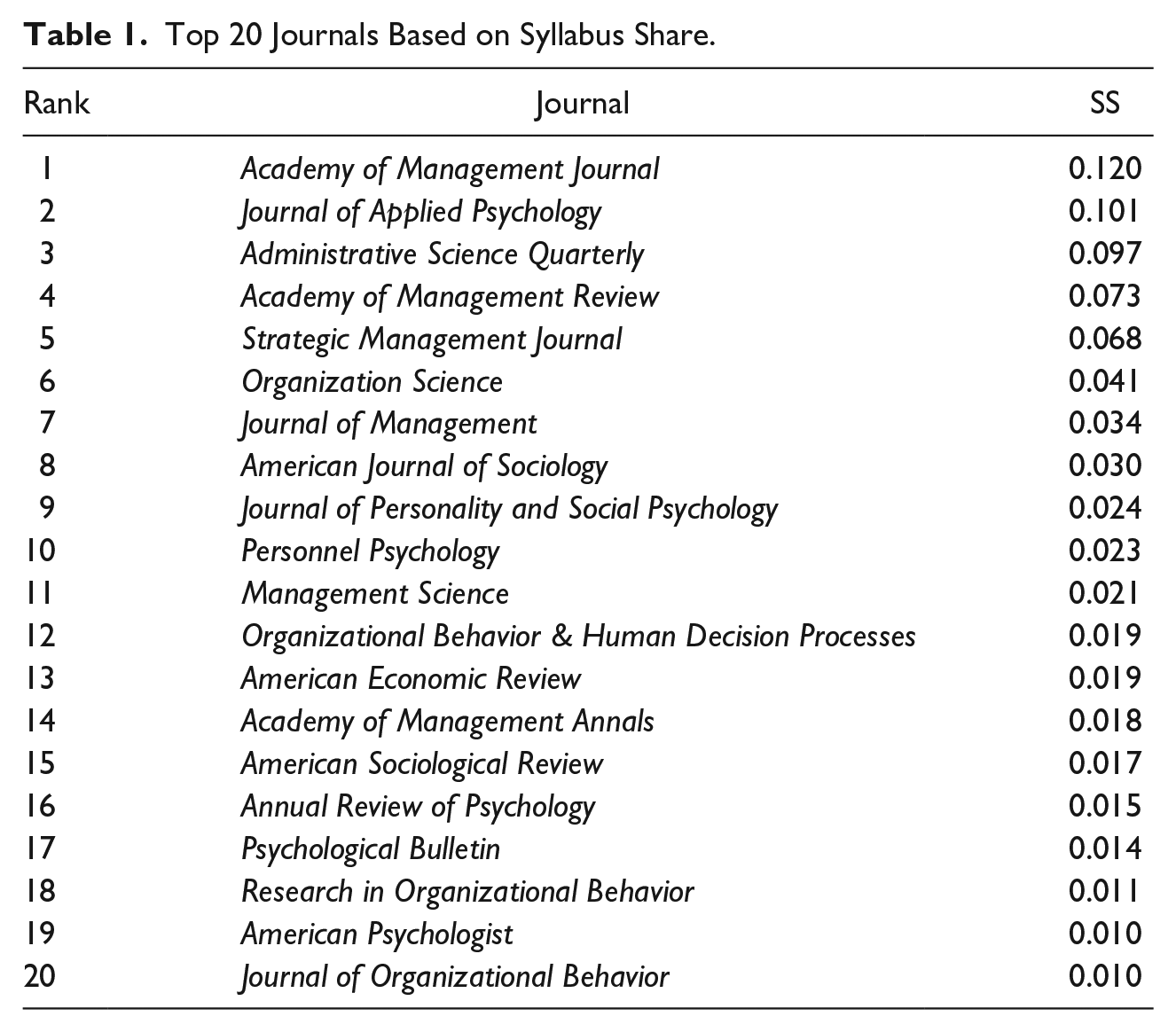

Research Question 1 asked how the journals associated with the field of management rank on syllabus share, based on doctoral syllabi from management PhD programs. Table 1 lists the Top 20 journals for syllabus share. The top-ranked journal was Academy of Management Journal, with its 0.120 share illustrating that—on average—12.0% of the journal articles in management syllabi come from that journal. Rounding out the Top 5 were Journal of Applied Psychology (0.101), Administrative Science Quarterly (0.097), Academy of Management Review (0.073), and Strategic Management Journal (0.068).

Top 20 Journals Based on Syllabus Share.

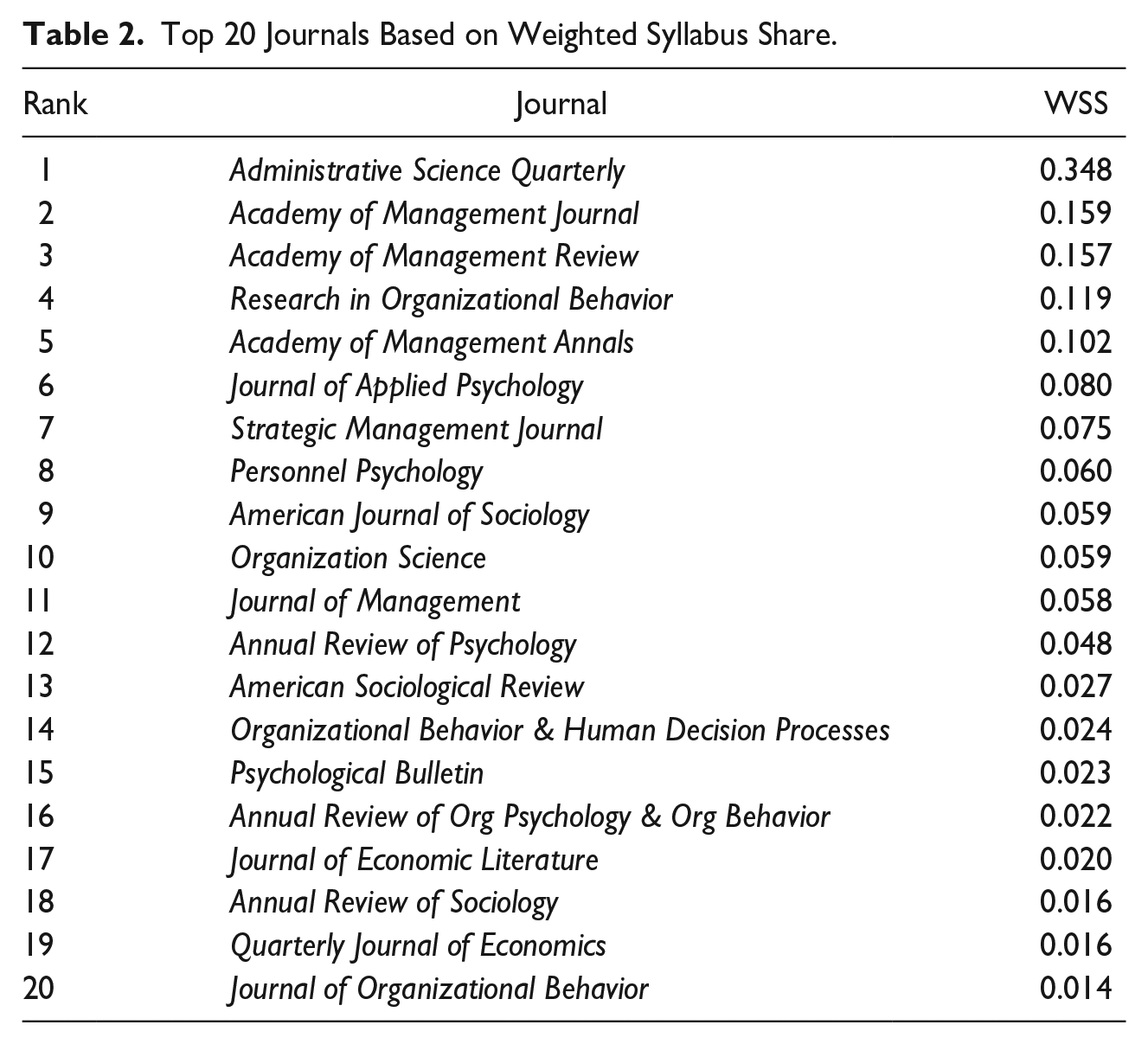

Table 2 lists the Top 20 journals for weighted syllabus share, where the weight enhances syllabus share for journals that publish fewer articles than average and diminishes syllabus share for journals that publish more articles than average. The top ranked journal was Administrative Science Quarterly, with its 0.348 share illustrating that—on average, and if differences in number of published articles are taken into account—34.8% of the journal articles in management syllabi would appear to come from that outlet. What is clear in Table 2 is that Administrative Science Quarterly stands alone in syllabi presence once the number of articles published is considered. Its weighted share is more than double that of second-place Academy of Management Journal and third-place Academy of Management Review.

Top 20 Journals Based on Weighted Syllabus Share.

Other than the separation by Administrative Science Quarterly, a few things stick out when comparing Table 2 to Table 1. First, journals that publish quarterly (Academy of Management Review, Personnel Psychology) or annually (Academy of Management Annals, Annual Review of Psychology, Research in Organizational Behavior) rise as their more limited number of articles is taken into account. This adjustment winds up placing more of a premium on review pieces. Second, journals that publish monthly (Journal of Applied Psychology, Strategic Management Journal, Journal of Personality and Social Psychology, Management Science, and American Economic Review) fall in the weighted rankings, with the latter three falling out of the Top 20 altogether.

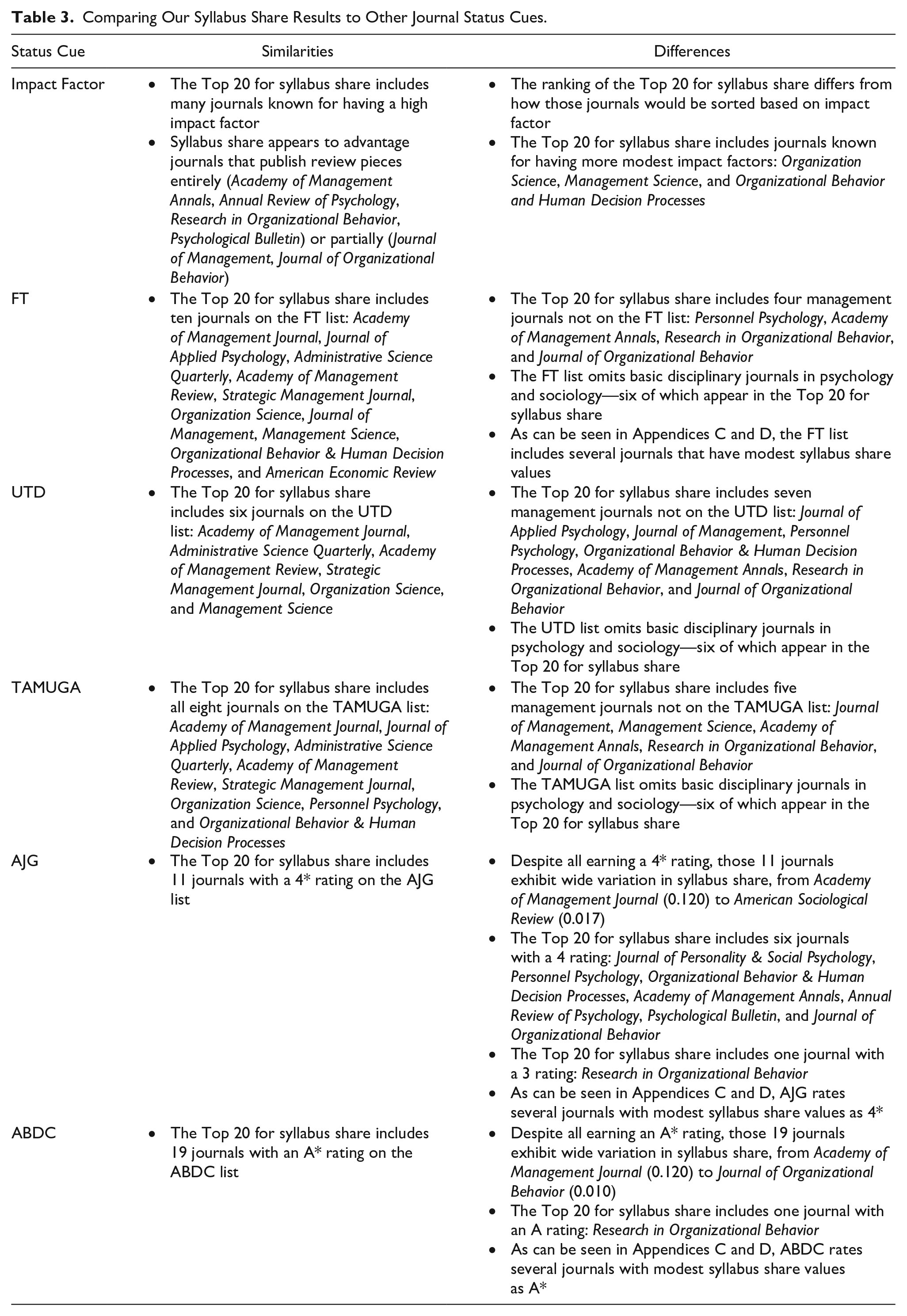

One way to gauge the value of syllabus share is to consider whether it tells a similar or different story when compared to existing indicators of journal status. Table 3 summarizes comparisons to other status cues using the regular syllabus share metric, though the same kinds of comparisons could be made with the weighted version. The table illustrates that there are some similarities between the status takeaways with syllabus share and the status takeaways from impact factor, membership in ranking lists, and ratings in advisory lists. But—with every status cue shown in Table 3—there are important differences as well. Those differences support the value of syllabus share as a distinct metric for gauging journal status.

Comparing Our Syllabus Share Results to Other Journal Status Cues.

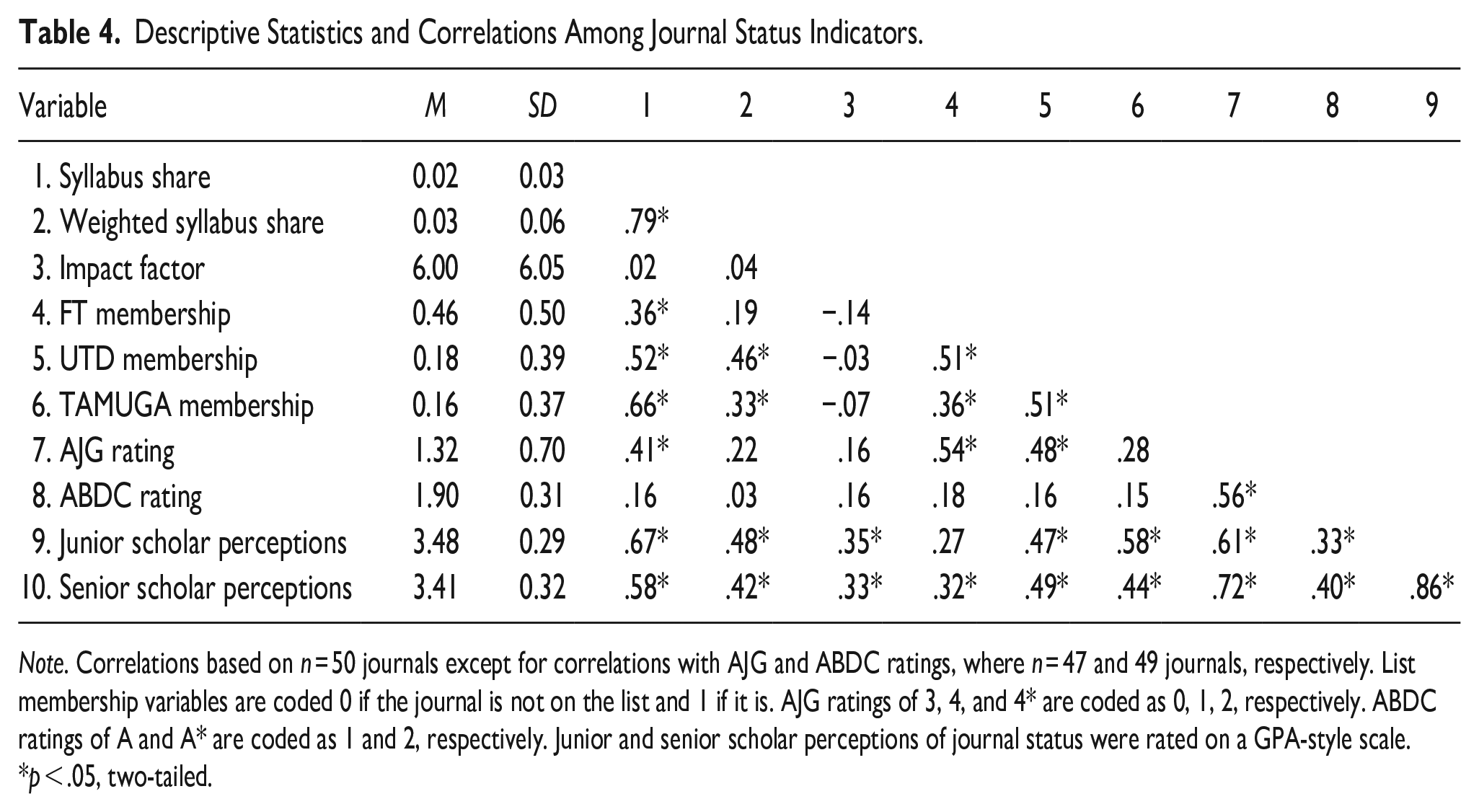

Understanding Syllabus Share’s Nomological Net

Research Question 2 asked how highly correlated syllabus share is with existing metrics of journal status, like impact factor and standing on ranking and advisory lists. Table 4 provides descriptive statistics and correlations for those variables. The syllabus share metrics were only weakly correlated with impact factor. Their correlations with membership in ranking lists ranged from weak to strong, depending on the specific syllabus share metric and the particular list being used. Their correlations with ratings in advisory lists ranged from weak to moderate, again depending on the specific syllabus share metric and the particular list being used. In general, these results again illustrate that syllabus share is not redundant with existing indicators of journal status—providing some evidence for discriminant validation.

Descriptive Statistics and Correlations Among Journal Status Indicators.

Note. Correlations based on n = 50 journals except for correlations with AJG and ABDC ratings, where n = 47 and 49 journals, respectively. List membership variables are coded 0 if the journal is not on the list and 1 if it is. AJG ratings of 3, 4, and 4* are coded as 0, 1, 2, respectively. ABDC ratings of A and A* are coded as 1 and 2, respectively. Junior and senior scholar perceptions of journal status were rated on a GPA-style scale.

p < .05, two-tailed.

Research Question 3 asked how highly correlated syllabus share is with junior and senior scholar perceptions of journal status. Table 4 illustrates moderate to strong correlations between the syllabus share metrics and both junior and senior scholar status perceptions. These results therefore provide convergent validation evidence for syllabus share as an indicator of journal status.

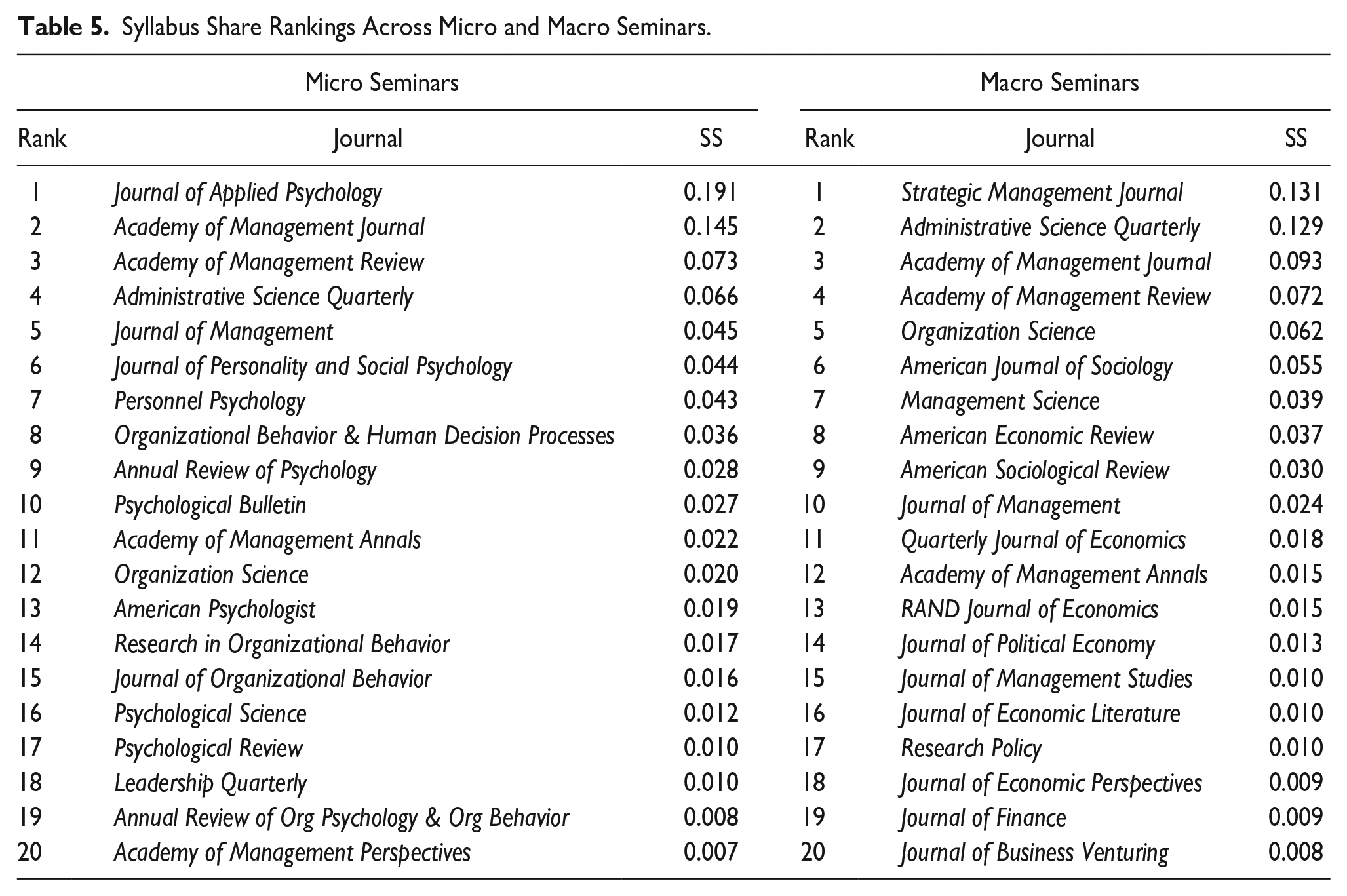

Contextualizing Syllabus Share

Research Question 4 asked how syllabus share rankings varied across micro and macro seminars. Table 5 provides a comparison of the Top 20 for those two types of seminars. As would likely be expected, Journal of Applied Psychology takes the top spot in micro seminars with Strategic Management Journal assuming that position in macro seminars. In addition, basic disciplinary journals in psychology are prevalent in micro seminars (with six such titles) whereas basic sociology and economics journals are prevalent in macro seminars (with seven such titles, not counting Journal of Political Economy and Journal of Finance). With respect to more “big tent” journals, Administrative Science Quarterly, Academy of Management Journal, and Academy of Management Review remain in the top four across the micro-macro divide. Three “big tent” journals do evidence some differences, however, with Organization Science seven spots higher for macro seminars, Management Science going unranked with micro seminars, and Journal of Management being five spots higher for micro seminars. Table 5 also reveals differences in literature- or subfield-based journals, with Leadership Quarterly appearing for micro seminars and Journal of Business Venturing appearing for macro seminars.

Syllabus Share Rankings Across Micro and Macro Seminars.

Qualitative Study

We conducted a qualitative study to examine the thought process instructors use as they curate a syllabus. This qualitative study allowed us to understand the curation process that may have given rise to our syllabus share results. It also allowed us to gauge the degree to which the socialization dynamics described in our manuscript are explicit or implicit. That is, are some instructors consciously attempting to socialize doctoral students with their syllabus curation? Or is any socializing impact of their syllabus only implicit? Taken together, such insights could help us unpack the status dynamics that surround syllabus curation decisions—clarifying syllabus share’s value as an indicator of journal status.

To gather the qualitative data, we reached out to the 152 instructors who taught the 179 seminars in our sample. We asked them to complete a Qualtrics survey that used a combination of open-ended questions—completed by writing responses in text boxes—and quantitative prompts. The survey began by asking instructors two open-ended questions about how they choose a given article for their syllabus. The first question read, “Please consider the decision to incorporate a particular journal article into your PhD seminar syllabus. What is the thought process that leads to you to decide to include a particular journal article? Put differently, when you include a journal article, what exactly goes into deciding that it’s ‘made the cut?’” The second question read, “Different professors bring with them different priorities when constructing a PhD seminar syllabus. What are some of the priorities you bring to the experience? Put differently, what are some personal values, principles, or priorities that shape the journal articles you include in a syllabus?” Note that neither question explicitly asked about issues of journal status.

Our next two questions asked about the socialization dynamics at play in syllabus curation decisions. Both questions began with a quantitative prompt that asked whether instructors feel they send signals with their syllabi, with an open-ended follow-up asking what signals they might send. The first question read, “Some professors might use a PhD seminar syllabus to send signals to PhD students as they begin their scholarly journeys. That is, they might use their syllabus as a means to socialize new scholars as they learn the terrain of the field. Do you feel like you use the journal articles in your syllabus to send any signals to PhD students about the field?” with anchors of (1 = Definitely not; 2 = Probably not; 3 = Maybe not; 4 = Not sure; 5 = Maybe; 6 = Probably; and 7 = Definitely). Its follow-up asked, “If so, what signals do you try to send with your chosen journal articles?” Although this question also avoided asking about journal status, our next one did broach that topic. It read, “The previous question asked about the possibility of using the journal articles in your syllabus as a means of socializing new scholars. On a more specific note, do you use the particular journals that appear more often in your syllabus (and less often in your syllabus) as a signal to PhD students about the field?” with anchors of (1 = Definitely not; 2 = Probably not; 3 = Maybe not; 4 = Not sure; 5 = Maybe; 6 = Probably; and 7 = Definitely). Its follow-up asked, “If so, what signals do you try to send with the particular journals you include more often (vs. less often)?” The instructors were offered a $20 Amazon eGift Card in exchange for their participation.

We received completed surveys from 55 of the 152 instructors, for a response rate of 36%. We gauged potential response bias by comparing the 55 instructors who responded to our email with the 97 instructors who did not. Using a two-tailed t test, we found no statistically significant differences between the two groups on their school’s UTD Worldwide Top 100 ranking (t = 1.66, p = .11), FT Global MBA ranking (t = 0.08, p = .94), total university enrollment (t = 0.50, p = .62), or presence in an English majority speaking country (t = 0.62, p = .54). We analyzed the open-ended comments following typical guidelines for the thematic analysis of qualitative data (Charmaz, 2014; Glaser, 2001; Glaser & Strauss, 1967; Strauss & Corbin, 1998). We began by importing our data into NVivo Release 1.6.2 by QSR International. We then created open (i.e., initial) codes that capture the lived experiences and interpretations of the instructors. That process resulted in the creation of 34 open codes that incorporated 266 passages of text. Next, we generated focused (i.e., parent) codes that are more abstract, appeared frequently in the data, and seemed to have particular significance. Sometimes those focused codes were created by grouping multiple open codes; other times they represented an elaboration of one open code. That process resulted in the creation of 12 focused codes.

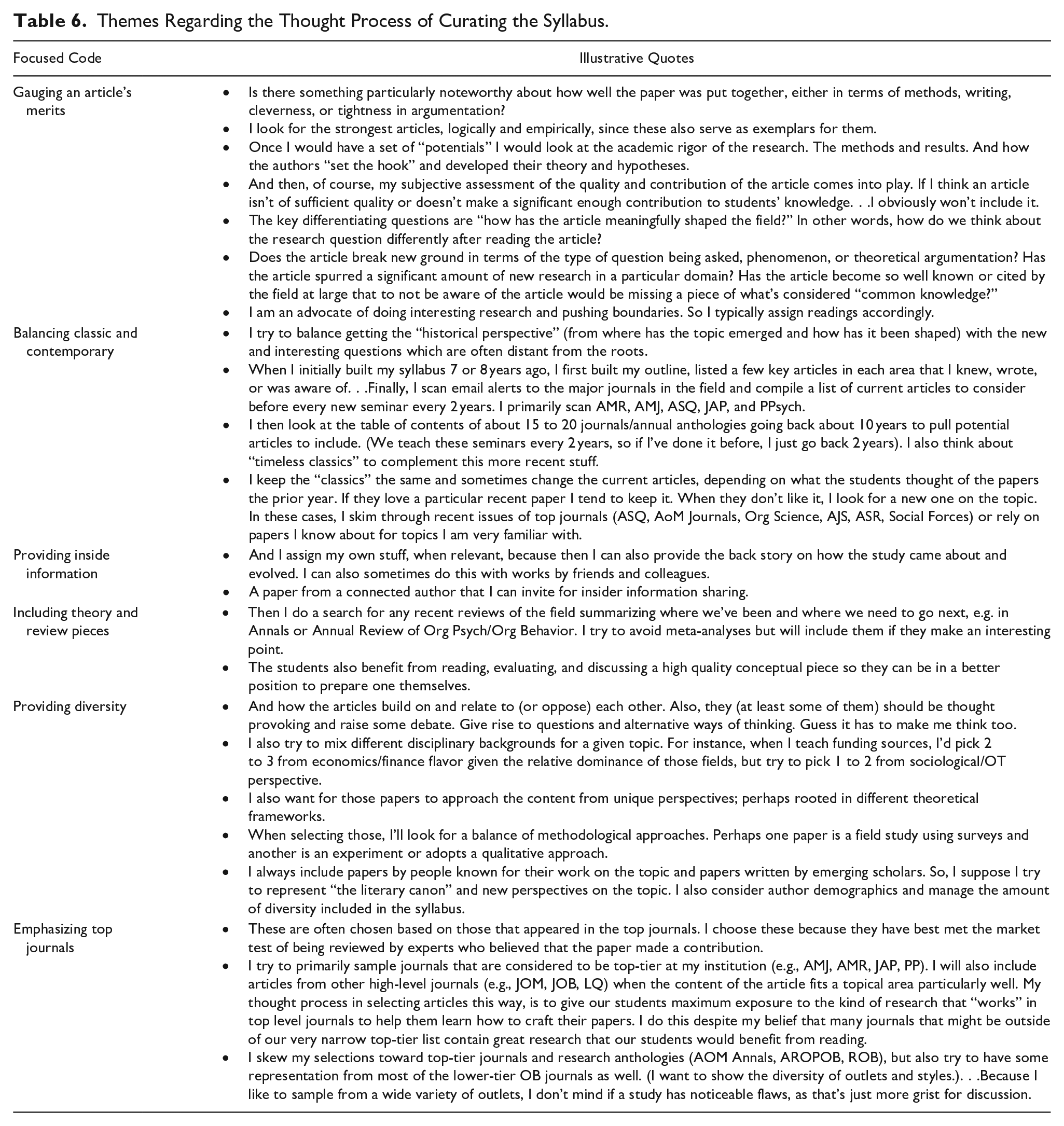

Table 6 reveals six themes that capture the thought process used to curate the readings on a syllabus—many of which support the logic of syllabus share as an indicator of status. Although some instructors did indicate that they occasionally included “flawed” articles to drive discussion, gauging an article’s merits was a dominant theme. And the criteria instructors used are the same criteria that many high status journals emphasize in their mission statements and reviewer scoring forms: theoretical logic, methodological rigor, magnitude of contribution, and interestingness. The desire to balance classic and contemporary pieces also wound up advantaging high status journals, as many instructors pointed to a process of scanning recent issues of top journals to add current pieces. Other themes pointed to a broader picture of status, however. For example, instructors pointed to a desire for narrative reviews, to go along with theory pieces. And diversity was valued for its own sake—including diversity of takes on a topic, disciplinary underpinnings, theoretical frameworks, methodological approaches, and author demographics. That diversity was somewhat less emphatic when it came to journals, however, though that takeaway differs somewhat in the section on socialization signals.

Themes Regarding the Thought Process of Curating the Syllabus.

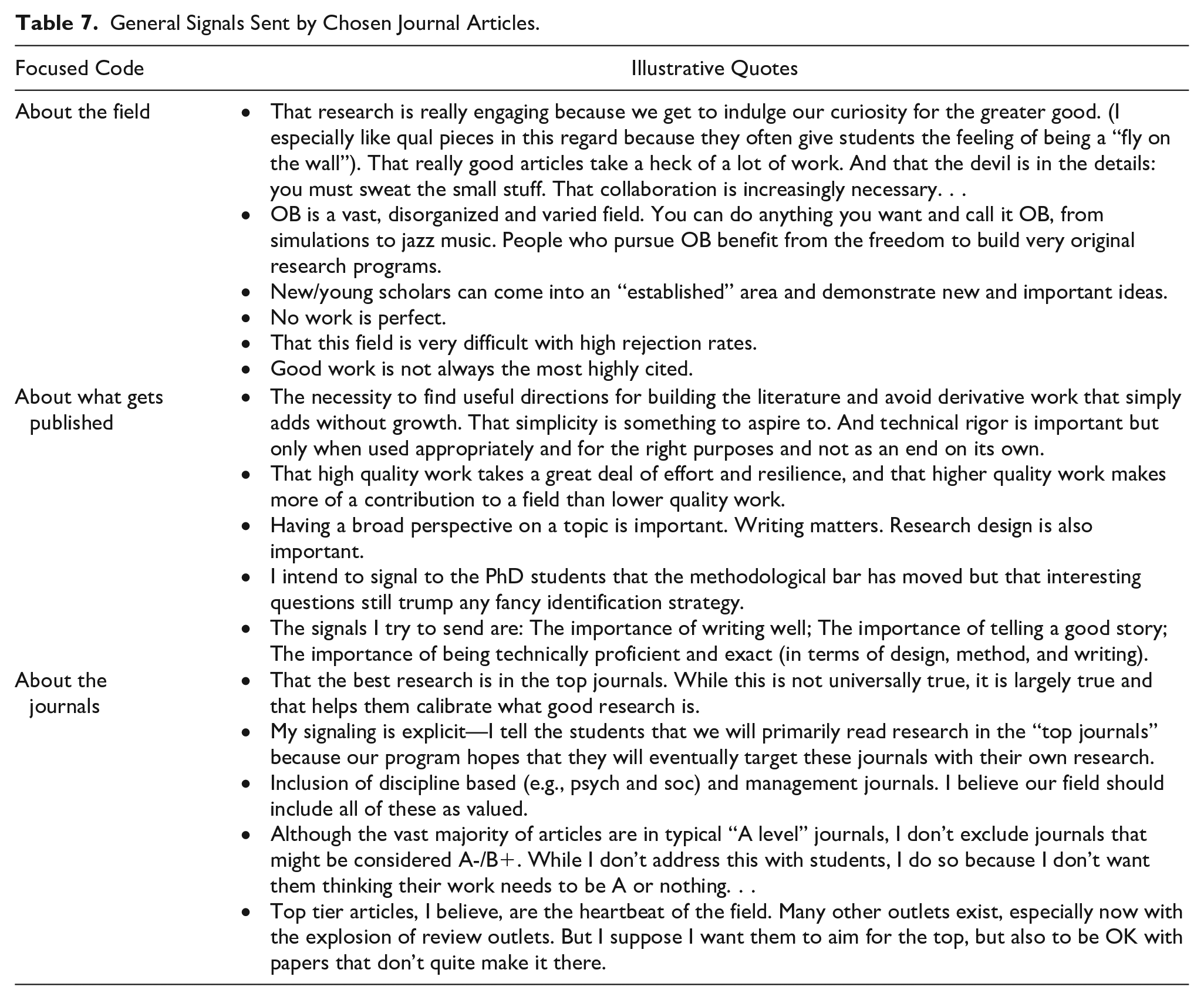

With respect to the quantitative prompt about general signals sent by journal article choices, 92% of instructors indicated that they used the journal articles in their syllabi to send signals to doctoral students, with 22% indicating that they maybe did so, 22% indicating that they probably did so, and 48% indicating that they definitely did so. Table 7 illustrates the themes that encapsulated the majority of those signals. Instructors sent signals about the positives and negatives associated with the field and about the kind of work that tends to get published. The theme of journals comes up again, this time with some instructors noting that they signal an emphasis on high status journals but others noting that they signal a more diverse view. That theme also includes some emphasis of the importance of basic disciplinary outlets.

General Signals Sent by Chosen Journal Articles.

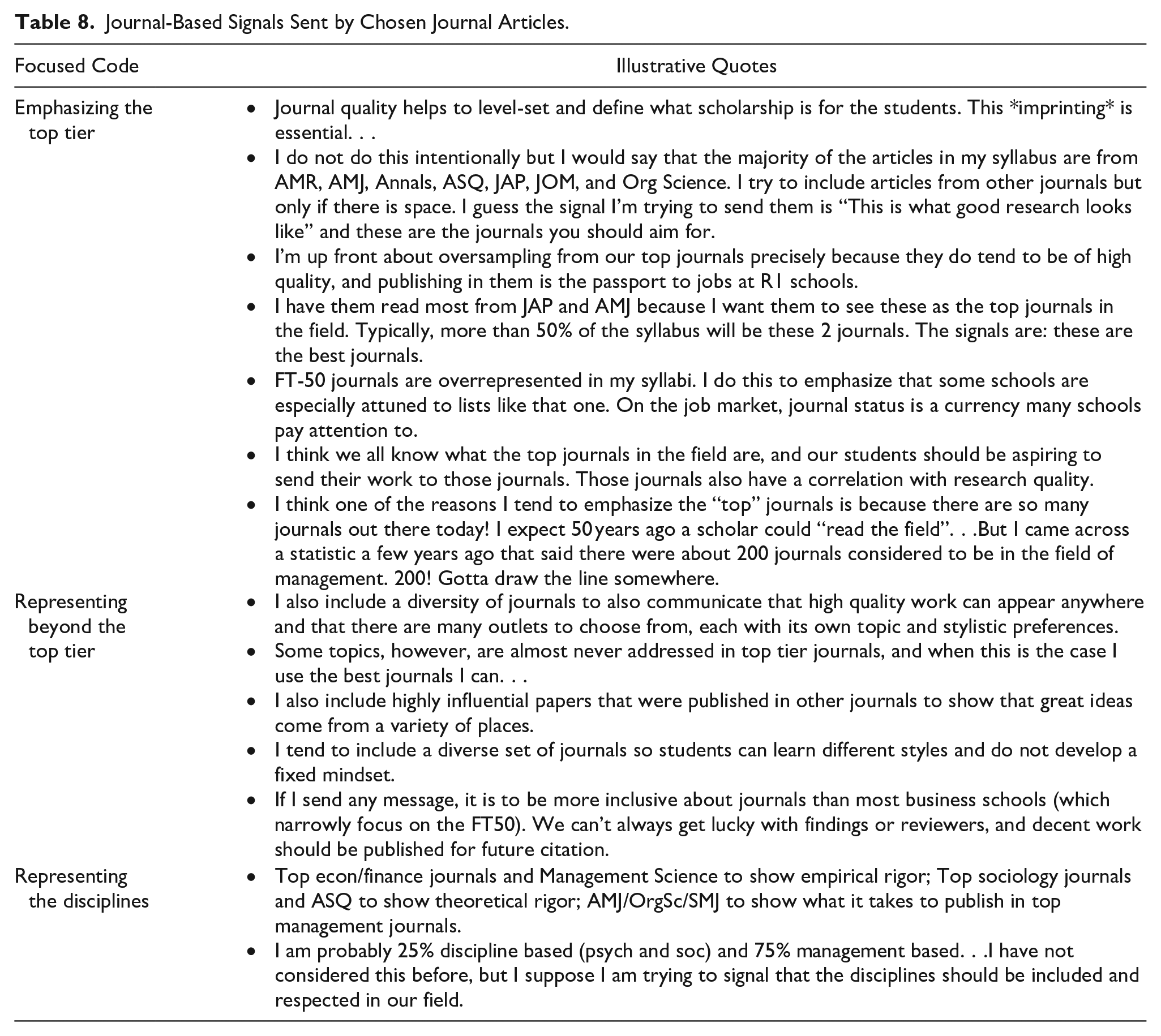

The quantitative prompt about journal-specific signals was the first time the survey explicitly mentioned issues of journal status. Here 78% of instructors indicated that they used the journal articles in their syllabi to send journal-based signals, with 15% indicating that they maybe did so, 24% indicating that they probably did so, and 39% indicating that they definitely did so. In terms of the signals actually sent, Table 8 illustrates some of the same signals that emerged in Table 7. Instructors did send the signal that high status journals should be emphasized—for imprinting purposes, job market purposes, aspirational purposes, and practical purposes. But there was also an explicit desire to represent a broader swath of the field’s outlets—and to showcase basic disciplinary journals.

Journal-Based Signals Sent by Chosen Journal Articles.

Discussion

Consider a doctoral student who is pursuing a lead-authored project. That new scholar is immediately faced with the question of which outlet to target. That decision will be based, in large part, on the missions of potential outlets and where the scholarly conversation that the project is contributing to is occurring (Huff, 2009). Sometimes that conversation may be occurring in multiple outlets, however, and often a manuscript will be rejected from the outlet that seems the best fit. Thus, in navigating submission decisions, journal status often becomes a relevant consideration.

Unfortunately, existing metrics of journal status typically provide incomplete guidance. Impact factors possess signal and noise and may ebb and flow over time (Adam, 2002; Archambault & Larivière, 2009; Falagas & Alexiou, 2008; Seglen, 1997). Ranking lists only highlight a small fraction of the journals relevant to the broad field of management. They also draw no distinctions between the journals that are (or are not) included. Similarly, advisory lists draw no distinctions between journals that receive the same rating, whether AJG’s 4* or ABDC’s A*. In addition, those existing metrics are not contextualized. Our doctoral student might make different choices if housed in a “big tent” department or a group more specialized in micro or macro domains.

We believe that syllabus share represents a useful metric for this student—and for other scholars in management. Importantly, our results showed that syllabus share was mostly distinct from impact factor and standing on relevant lists. Moreover, our qualitative results showed that—although cues like impact factor, ranking lists, and advisory lists were sometimes considered—curation decisions were made in a more thoughtful and considered fashion. Specifically, instructors gauged the merits of included articles, paid attention to article mix, and sent signals about what the field values. Supporting our case for syllabus share as a status cue, they also explicitly emphasized their own views of what the top journals are—to provide imprinting and to prepare students for the rigors of the job market.

Importantly, our results showed that syllabus share was correlated with junior and senior scholar perceptions of journal status. That junior scholar result provides some support for the notion that syllabi can be sources of socialization, given that the junior scholars were recent graduates of the programs that provided our syllabi. It may be that syllabi represented an input into the discourse they experienced during their program (Ashforth, Sluss, & Harrison, 2007), or comprised a tool for institutionalized socialization (Cooper-Thomas & Anderson, 2006; Filstad, 2004; Wang et al., 2015). It may also be that syllabi provided a cue about the views of a respected insider—or the norms of the faculty as a whole (Ashforth, Sluss, & Harrison, 2007; Cooper-Thomas & Anderson, 2006; Filstad, 2004; Wang et al., 2015). Indeed, our qualitative study showed that 78% of instructors used the articles in their syllabi to send signals about the field’s journals.

Aside from its usefulness as a status cue, syllabus share can provide insights into which journals instructors use to profess. Whetten (2007) pointed to the critical role played by the choice of reading material in the educational mission. The journals that sit atop our syllabus share rankings have “passed the tests” described by the instructors in our qualitative study. One can infer that instructors view those journals as encapsulating the foundational works and important recent contributions in management. Consider the Top 5 journals in syllabus share: Academy of Management Journal, Journal of Applied Psychology, Administrative Science Quarterly, Academy of Management Review, and Strategic Management Journal. The mission statements of those journals note that they are looking for work that is important, interesting, insightful, fresh, bold, cutting-edge, elegant, and rigorous. Our syllabus share results—together with the insights into the curation decisions offered by our instructors—suggest that those journals are delivering on their missions. And—because their inclusion in syllabi may be driven by a desire to imprint on new scholars—those journals are showing new scholars what their very best work could look like.

Theoretical and Practical Implications

Our work has a number of theoretical and practical implications for conversations occurring in Journal of Management Education. For example, our examination of how doctoral students are socialized adds to existing work focused on the doctoral student experience. The 5 years spent inside a doctoral program are stressful times (McCauley & Hinojosa, 2020), as students navigate seminars, comprehensive exams, research workload, and dissertations—while also teaching (Bonner et al., 2020) and finding their own “scholarly voice” (Ismail et al., 2020). Indeed, those years can be marked by anxiety and feelings of “imposter syndrome” (Pervez et al., 2021). Our work lends insight to one aspect of doctoral students’ “becoming” process (Trank & Brink, 2020): learning the terrain of the field’s journals. Importantly, learning that terrain is helpful to navigating some of the stressors experienced in doctoral life. Hollenbeck and Mannor (2007) encouraged scholars to combat the ambiguities of the research enterprise by being “resilient”—by submitting rejected manuscripts to still more outlets. We suspect our syllabus share results can inform such resilience.

Although our work does have implications for conversations occurring in Journal of Management Education, we would be remiss if we did not speak to the low syllabus share observed for journals that specialize in the scholarship of teaching and learning (SOTL). As shown in Appendix D, Journal of Management Education ranked 57th in syllabus share, with Academy of Management Learning and Education ranking 59th. Those rankings belie the facts that (a) SOTL journal articles are often in conversation with constructs and theories that are core to management, (b) the Management Education and Development division boasts significant membership in the Academy of Management, and (c) teaching is a signature aspect of the life of a professor. We would therefore encourage instructors—when they are engaging in the decision processes observed in our qualitative study—to review SOTL journals as well.

As another example, our work contributes to conversations about how administrators should handle the challenges of evaluating scholarly work. Administrative roles within business schools can be challenging—with decisions involving a number of quantifiable metrics of varying utility (Balkin & Mello, 2012; Dean & Forray, 2019; Stark, 2019). When it comes to evaluating research, decisions often revolve around ranking and advisory lists that are partially anchored by impact factor (Chapman, 2012; Mu & Hatch, 2021). How should such status cues be utilized by administrators? Scholars in business ethics have argued that the various ethical principles that exist should act as a prism for making decisions in a given situation (Crane & Matten, 2007). It would be helpful if the status cues that exist for journals could act in a similar fashion.

Unfortunately, a prism needs more than two sides. Standing on relevant lists and impact factor are not enough in many decisions, as many scholars publish in outlets that would fall outside of “in good standing on the list favored by the school” and “having a high impact factor.” Syllabus share could represent an additional side to the prism—one that is intuitive and straightforward. Taken together, Appendices C and D provide syllabus share results for 82 different journals—many more titles than the three ranking lists. And syllabus share does not represent a status cue originally created by librarians—like impact factor—or particular media outlets or universities—like ranking lists. Instead, it is rooted in the essence of management education—training new scholars to give them a foundation for their own research activities. An administrator could use our results in a number of ways, including drawing distinctions among journals with similar impact factors, comparing journals not on any ranking lists, contrasting journals that receive the same rating on advisory lists, and making sense of basic disciplinary outlets that may be unfamiliar to them.

Future Directions

Moving forward, it would be useful to repeat this kind of analysis every few years to be able to detect trends in syllabus share. The Academy of Management could potentially play a role in such an effort, with division office holders soliciting and storing syllabi to provide teaching assistance to members who cover doctoral seminars. From there, computer-aided content analysis could be used, in lieu of our more labor-intensive coding. Such an infrastructure would allow for the calculation of reliability estimates for syllabus share. It would also allow for more nuanced examinations that went beyond our analyses.

For example, scholars in the subfield of entrepreneurship might be interested in the journals most represented in entrepreneurship syllabi. Although our analysis included some syllabi with such content, our solicitation focused on the organizational behavior, strategic management, human resource management, and organization theory domains, specifically. Examining entrepreneurship syllabi specifically could provide a more fine-grained examination of subfield journals alongside more field-wide journals. A similar analysis could be undertaken for a subfield like international business, or for specific literatures like leadership, business ethics, and corporate governance that sometimes have standalone seminars. In addition, many PhD programs possess a “how to teach” seminar that is required before doctoral students enter the classroom. An analysis of those syllabi could illustrate the visibility of SOTL journals as a key tool for aiding those preparation efforts.

Limitations

Our work has some limitations that should be noted. Given our focus on indicators of journal status, we excluded books and book chapters from our coding. As Adler and Harzing (2009) noted, books and book chapters can sometimes be as impactful as journal articles in shaping the scholarly discourse. Our coding did include sources that were once thought of as book chapters but have more recently been treated like journals, such as Research in Organizational Behavior. But that still left thousands of readings across our syllabi that were outside our scope. Those sources are also likely to be agents of socialization for doctoral students.

Also, the application of syllabus share is subject to many of the same limitations as other indicators of journal status. Among those is the common practice of assuming that the status cues for a journal can be applied to any specific article published in that journal (Ramani et al., 2022; Singh et al., 2007). As Starbuck (2005) described, the review process is imperfect at all scholarly outlets. The best journals sometimes publish articles that are conceptually unambitious, methodologically flawed, or otherwise limited in their contributions to science. At the same time, unusually insightful, important, and groundbreaking work sometimes gets rejected from the most high-status outlets. Evaluations of specific articles are best made by a careful examination of the work by relevant experts—especially those who are well-versed in the relevant content and methods terrain.

In addition, some of the instructors who completed our qualitative survey noted that they occasionally included flawed articles in their syllabi as “grist for discussion.” Such articles would be picked up by our metric, despite the fact that instructors may view the articles as having lower status. This is a limitation shared by impact factor, where citations are picked up even when a cited article is being criticized. Another shared limitation with impact factor concerns review articles. Some of the instructors in our sample noted that they explicitly looked for review articles for their syllabi, and journals that entirely (or partially) publish reviews were well-represented in Tables 1 and 2. Likewise, critiques of impact factor have noted that it advantages journals that specialize in these pieces (Adam, 2002; Archambault & Larivière, 2009; Falagas & Alexiou, 2008; Seglen, 1997). Finally, as with impact factor, there are no statistical tests that can be used to contrast any two journals in our ranking. Is, for example, Strategic Management Journal’s 0.068 syllabus share really different from Organization Science’s 0.041 syllabus share? Answering that question would require repeated snapshots of syllabus share in order to be able to calculate a reliability—and, thus, a standard error of the measure.

Conclusion

Few topics are more debated in academic hallways, offices, conference rooms, and lunches than journal status. And few topics are more important for new scholars to understand—as they begin to prepare and submit manuscripts of their own. What new scholars—and more seasoned administrators—need are additional cues for helping to approach such debates. Syllabus share provides one such cue by answering the question “to what extent is this journal used to train new scholars?” Syllabus share is distinct from impact factor, ranking list membership, and advisory list ratings while also correlating with status perceptions held by both junior and senior scholars. And its use can be contextualized in a way that adds nuance for the micro and macro sides of the field. We therefore believe that syllabus share provides a useful “additional surface” for the prism used to gauge journal status.

Appendix

Remaining 32 Journals That Appeared at Least 10 Times in Syllabi.

| Rank | Journal | SS | Impact | FT | UTD | TAMUGA | AJG | ABDC |
|---|---|---|---|---|---|---|---|---|
| 51 | Industrial and Corporate Change | 0.002 | 2.20 | | | | 3 | A |
| 52 | Review of Economics and Statistics | 0.002 | 3.51 | | | | 4 | A* |
| 53 | Journal of Law, Economics, and Organization | 0.002 | 0.95 | | | | 3 | A |
| 54 | Strategic Organization | 0.002 | 2.23 | | | | 3 | A |
| 55 | Journal of Management Inquiry | 0.002 | 1.79 | | | | 3 | A |
| 56 | Human Resource Management | 0.002 | 2.47 | Y | | | 4 | A* |
| 57 | Journal of Management Education | 0.002 | 1.20 | | | | 2 | B |
| 58 | Entrepreneurship Theory and Practice | 0.002 | 5.32 | Y | | | 4 | A* |
| 59 | Academy of Management Learning and Education | 0.002 | 2.87 | | | | 4 | A* |
| 60 | Journal of Consumer Research | 0.001 | 3.55 | Y | Y | | 4* | A* |
| 61 | Perspectives on Psychological Science | 0.001 | 9.31 | | | | — | — |
| 62 | Current Directions in Psychological Science | 0.001 | 4.67 | | | | 4 | — |
| 63 | Economica | 0.001 | 1.64 | | | | 3 | A |
| 64 | Journal of Experimental Social Psychology | 0.001 | 2.87 | | | | 4 | A |
| 65 | Advances in Experimental Social Psychology | 0.001 | 8.80 | | | | — | A* |
| 66 | Journal of Occupational Health Psychology | 0.001 | 3.77 | | | | 4 | A |
| 67 | Journal of Economics and Management Strategy | 0.001 | 1.16 | | | | 2 | A |
| 68 | Research in Personnel and Human Resources Mgmt | 0.001 | 1.42 | | | | 1 | — |
| 69 | Philosophy of the Social Sciences | 0.001 | 0.56 | | | | — | B |
| 70 | Economic Journal | 0.001 | 2.95 | | | | 4 | A* |
| 71 | Organizational Psychology Review | 0.001 | 3.07 | | | | 2 | B |
| 72 | Family Business Review | 0.001 | 3.82 | | | | 3 | A |
| 73 | Research in the Sociology of Organizations | 0.001 | 0.54 | | | | 3 | A |
| 74 | Industrial and Organizational Psychology | 0.001 | 1.75 | | | | 1 | B |
| 75 | Strategic Entrepreneurship Journal | 0.001 | 3.49 | Y | | | 4 | A |
| 76 | Personality and Social Psychology Review | 0.001 | 9.28 | | | | — | A* |
| 77 | Journal of Personality | 0.001 | 3.48 | | | | — | A |
| 78 | Industrial and Labor Relations Review | 0.001 | 1.78 | | | | 3 | A* |
| 79 | Journal of Labor Economics | 0.001 | 3.61 | | | | 4 | A* |
| 80 | Advances in Strategic Management | 0.001 | 0.29 | | | | 2 | C |
| 81 | Journal of Business and Psychology | 0.001 | 2.58 | | | | 2 | A |
| 82 | Journal of Business Ethics | 0.001 | 2.92 | Y | | | 3 | A |

Note. SS = Syllabus share; Impact = 2017 Journal Impact Factor; FT = 2017 Financial Times list membership; UTD = 2017 University of Texas at Dallas list membership; TAMUGA = 2017 Texas A&M/University of Georgia list membership; AJG = 2018 Academic Journal Guide rating; ABDC = 2016 Australian Business Deans Council rating. Junior and senior scholar perceptions are not included because these journals were omitted from that data gathering effort. Weighted syllabus share is not included because the average-number-of-articles weight used to construct that metric was based on the Top 50 journals.

Acknowledgements

The authors thank the following for their valuable assistance in coding for this study: Belle Long, Alexandra (Alecia) Scott, Kevin Clark, Tyler Dixon, Alexa Bowe, Madhavi Kapadia, Sana Lall-Trail, Ryan McCluskey, and Alexis Kim.

Jason A. Colquitt previously served as Editor-in-Chief of Academy of Management Journal and Coordinator of the TAMUGA Rankings. Both are mentioned in this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.