Abstract

Online training to improve problem-solving skills has become increasingly important in management learning. In online environments, learners take a more active role, which can lead to stressful situations and decreased motivation. Gamification can be applied to support learner motivation and emotionally boost engagement by using game-like elements in a non-game context. However, using gamification does not necessarily result in positive learning outcomes. Our analysis sheds light on these aspects and evaluates the effects of points and badges on engagement and problem-solving outcomes. We used an experimental approach with a fully randomized pre-test/post-test design of a gamified online management training program with 68 participants. The results demonstrate that points and badges do not directly improve problem-solving skills; instead, their positive influence on problem-solving skills is mediated by emotional engagement. Additionally, satisfaction with the gamified learning process positively relates to emotional engagement. Thus, when creating online training programs, it is essential to consider how to engage students and to think about the design of the learning environment. By identifying the limitations of gamification elements, the study’s results can provide educators with information about the design implications of online training programs for management learning.

Keywords

Introduction

Online learning has become increasingly important over the past two decades, which has created new challenges for learners (Bughin et al., 2018). In online learning, management students take a more active role in the learning process and have more responsibilities (Wan et al., 2012), possibly resulting in stressful situations that can lead to decreased motivation and higher drop-out rates (Nawrot & Doucet, 2014; Omar et al., 2009). Moreover, complex online learning environments pose challenges related to motivation and engagement; learners often disengage when confronted with more open-ended tasks (Aparicio et al., 2019; Hanus & Fox, 2015).

Training for problem-solving skills can be particularly challenging in management education. Problem-solving outcomes are especially important for management students because they require that students learn how to resolve unstructured, complex problems (Bigelow, 2004; Janson et al., 2020; Peterson, 2004). Problem-solving skills are defined as “situated, deliberate, learner-directed, activity-oriented efforts to seek divergent solutions to authentic problems through multiple interactions amongst problem solvers, tools, and other resources” (Kim & Hannafin, 2011, p. 405) and they are considered to be essential in an ever-changing business world.

Because it can be difficult to teach problem-solving skills to management students, especially online, learners require some assistance. Gamification is a concept that can help students improve their problem-solving skills and enhance the outcomes of their tasks. Gamification entails deploying elements familiar to online gaming contexts within a non-entertainment context (Deterding et al., 2011; Hamari et al., 2014) to assist learners in staying engaged with and focused on their activities, such as problem-solving tasks. Using gamification elements can have positive effects on learning outcomes—especially concepts that support lower level learning outcomes such as multiple choice quizzes (Dias, 2017). However, the impact of gamification elements on problem-solving outcomes has been questioned because their effects can be positive or negative, creating a divide between researchers and calling their benefit into question (Kalogiannakis et al., 2021). It has been proposed that gamification can emotionally support learner engagement, ending in better outcomes (Hammedi et al., 2021). Although gamification has been intensively discussed in the education literature (Schöbel et al., 2021), analysis of the theoretical and empirical issues related to the overall gamification context remains incomplete (Koivisto & Hamari, 2019). Researchers ask for more experimental studies that analyze how engagement relates to other gamification and learning related variables to better explain its effect and meaning (Hamari et al., 2016; Schöbel et al., 2020). Therefore, the study discussed in this paper focused on the following research question:

RQ: How does gamification relate to emotional engagement and problem solving in management education?

To answer this research question, we conducted a randomized pre-test/post-test experiment in a management course and, for this purpose, we developed a gamified online training program to teach problem-solving skills. Our results demonstrate that although the direct effect of gamification on problem-solving skills was not significant, gamification operates through emotional engagement to influence problem-solving skills. Thus, our study contributes to management learning literature by establishing emotional engagement as an important mediator in supporting the effects of gamification on problem-solving. By highlighting emotional engagement as the mechanism through which gamification influences problem-solving skills we are able to explain how gamified online training, points, and badges can be used to support emotional engagement and ultimately problem-solving skills. In addition to emotional engagement, we establish learning process satisfaction as an important construct that can better support problem-solving skills through its effect on emotional engagement when using gamification in online learning. From a practical perspective, we present how badges and points that are integrated in online trainings should be designed to effectively support learning by reinforcing emotional engagement in and satisfaction with the learning process.

Theoretical Foundations

In the following, we start by defining and explaining gamification compared to other relevant terms such as simulations. Next, we present the elements that can be used to gamify online trainings. Finally, we clarify which elements we used for this study and why.

Gamification in Online Trainings

In management education, we have three different approaches to make learning more engaging with the concepts of games: simulations, serious games, and gamification. A simulation is a synthetic or artificial environment that supports individuals in experiencing reality. It can be used during business development to mimic a business situation (Salas et al., 2009). In a simulation, we can use specific game elements to engage learners in interacting in a business situation. Alternatively, serious games are described as a mental contest, often played with a computer in accordance with specific rules. Serious games use entertainment to further government and corporate training, as well as education, health, public policy, and strategic communication objectives (Sicart, 2014; Zyda, 2005). In serious games, we have a virtual world that is close to those that we have in online games, and elements of games are placed within the virtual world that learners are operating in. However, we can also use elements of a game without a virtual simulation or a virtual world. If we use only elements of a game without embedding them in a virtual world or simulation, we use the concept of gamification. In other words, gamification is the use of game-like elements in non-gaming contexts (Deterding et al., 2011).

Gamification has been used in several different contexts, such as finance, health, education, sustainability, and productivity (Deterding et al., 2011; Fernandes et al., 2012). In the realm of learning, gamification has the following four general purposes: supporting a process, enabling a behavior, supporting the processing of information, and creating business value (Treiblmaier et al., 2018). Gamification supports the learning process by making something ordinary more enjoyable. In other words, gamification can create the joy of application use and can foster mastery and autonomy. Another purpose of gamification is to support the processing of information (Treiblmaier et al., 2018). Information processing involves solving problems, learning, or changing learning-related behaviors and attitudes (Treiblmaier et al., 2018). From a business perspective, gamification can assist in making products or services more engaging, thus resulting in greater value (Treiblmaier et al., 2018).

Compared to other concepts, gamification has several advantages. In online learning, learners have to organize their learning process, which makes it necessary for them to understand the reason behind what they are doing (Leemkuil & de Jong, 2012). Training management students to solve daily business problems requires keeping them motivated and engaged in the learning process (Swain et al., 2020). Gamification can make learning more entertaining, helping students to control their actions in a digital learning environment and bring pieces of a puzzle together (Liu et al., 2011). At the same time, from a teacher’s or manager’s perspective, gamification is an effective concept that is easy to implement and inexpensive (Sailer & Homner, 2020).

Elements of Gamification

The elements are an essential part of gamification and can be described as its building blocks (Blohm & Leimeister, 2013), which the designer selects, adapts, and implements in a learning application (Schöbel et al., 2020). Existing gamification approaches are often criticized because of poor planning of the gamified environment, such as the ad hoc use of gamification elements (Kalogiannakis et al., 2021). Moreover, gamification designers have often been criticized for using certain pre-existing patterns of design elements with presumed motivational effects, regardless of the contexts of use (Schöbel et al., 2019a, 2019b). It is commonly agreed that contextual factors are critical for designing gamified systems that support learners’ actual needs (Dichev et al., 2020). Therefore, we describe in the following which gamification elements we selected for this study and how to adapt them to the context of digital learning.

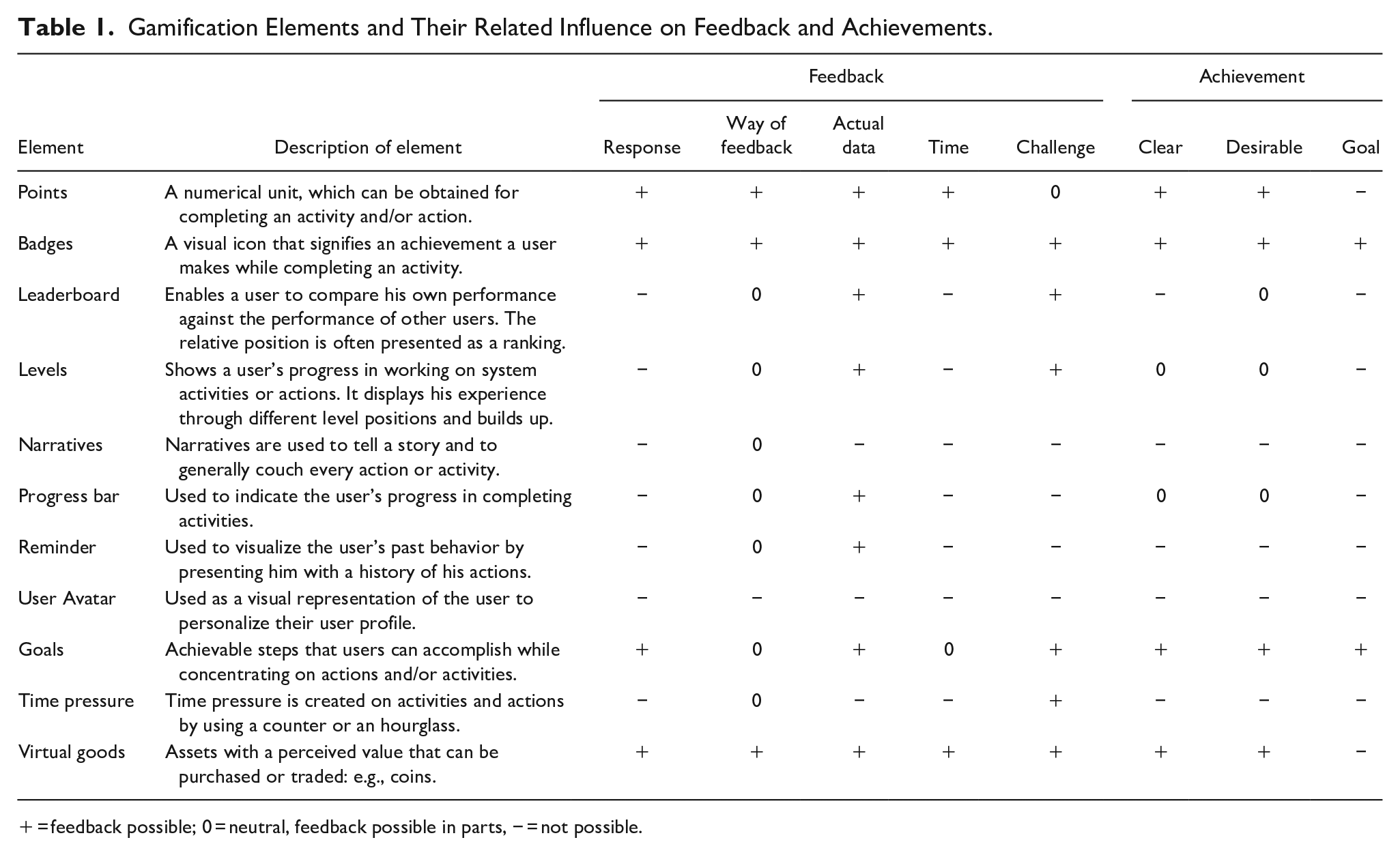

Interaction is typically necessary in open-ended and problem-based learning scenarios to keep learners motivated to continue with their learning processes (Hrbackova & Suchankova, 2016). When considering gamification as an intervention, interaction is possible by considering aspects of feedback. Feedback can be briefly described as a process through which learners make sense of information from different sources (such as peers or a computer-based system) to enhance their work or learning strategies (Carless & Boud, 2018). Feedback helps learners to regulate their learning process because it assists them in combining knowledge to solve complex problems as well as interpreting information (Leemkuil & de Jong, 2012; Wood et al., 1976). Achievement, on the other hand, provides guidance to learners, which is important because they act on their own in online learning environments (Eom & Ashill, 2016; Kuo et al., 2012). We created Table 1 to present the various elements of gamification and explain how each influences feedback and achievement. A “+” indicates that an element can act as a feedback element, whereas a “0” indicates that changes are necessary to support an element’s function of giving feedback. A “−” indicates that giving feedback is not possible with that element.

Gamification Elements and Their Related Influence on Feedback and Achievements.

+ = feedback possible; 0 = neutral, feedback possible in parts; − = not possible.

Achievement is described with three characteristics: goal, clear, and desirable (Thiebes et al., 2014). Looking at the elements in Table 1, we can see that badges and goals typically satisfy the achievement characteristic of goal. Four elements—points, badges, goals, and virtual goods—satisfy the achievement characteristics of clear and desirable. Feedback has the following five characteristics that support social constructivism and meta-cognitivism in learning (Thurlings et al., 2013): response, way of feedback, actual data, time, and challenge. Knowledge of response refers to informing learners when they have done well. It can be provided by using points, goals, badges, or goods. For example, a user can be rewarded with a point for giving the correct answer (De-Marcos et al., 2014).

The way of giving feedback to learners is usually either positive or neutral (Thurlings et al., 2013). Most elements provide neutral feedback by showing users their progress, such as a progress bar (Silpasuwanchai et al., 2016). Other elements, such as points or badges, can be positively experienced by rewarding users for being successful when completing a learning activity (Davis & Singh, 2015). Actual data refers to the element’s ability to present the actual data related to a learner, which most elements fulfill. Time refers to the timing of giving feedback to a learner, which can be either formative or summative (feedback during a learning process versus consolidated feedback at the end of a learning process). Points, badges, and goods can be offered to learners in both ways by giving a point to learners for each time they have completed a test question or by giving a badge to learners for giving the correct answer to a series of questions (Davis & Singh, 2015; De-Marcos et al., 2014). Finally, feedback is connected to challenges (Thurlings et al., 2013) and an element’s ability to support learners in surpassing their prior achievements. This holds for almost all elements.

For this study, we consider points and badges for the following reasons. Having an overall point score signals how well learners have performed in completing a given learning process. Such signaling is important in online learning because learners have a more active role and need to be supported in developing competencies on their own, which can be partly done by the use of gamification elements (Eom & Ashill, 2016). In line with this, badges support learners in completing achievements, and they provide them with specific feedback.

Hypotheses Development

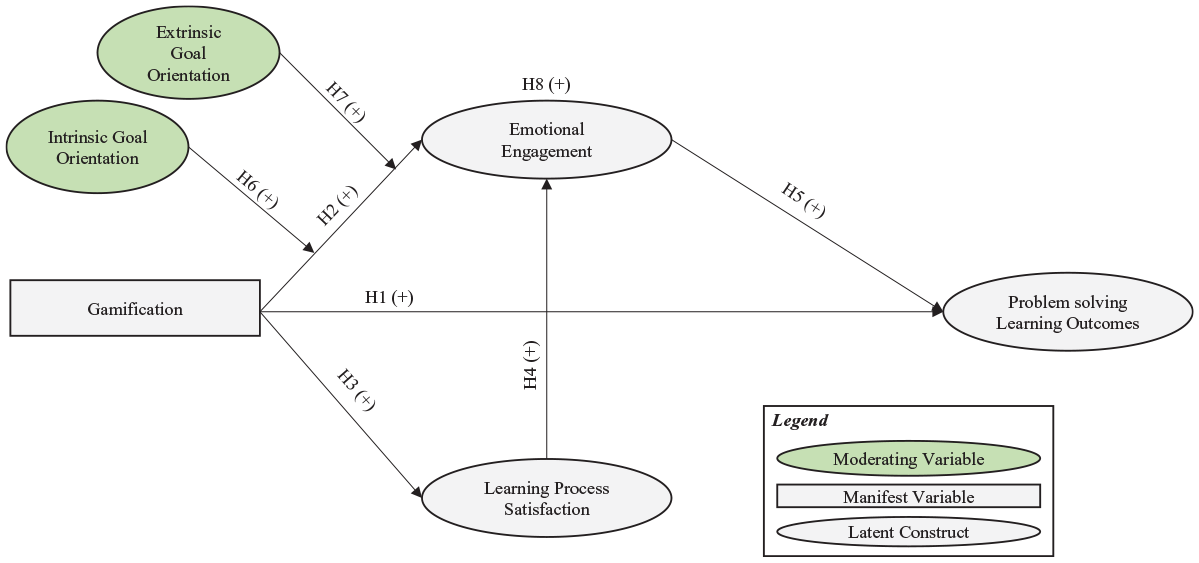

In this section, we discuss our research model (shown in Figure 1). This model shows our theoretical constructs related to gamification (satisfaction with the learning process, emotional engagement, problem-solving outcomes) and our moderators (intrinsic goal orientation and extrinsic goal orientation).

Theoretical model.

Gamification to Support Problem-solving Outcomes and Emotional Engagement

Problem-solving skills are important for the careers of business students; however, to date, students often seem to deal poorly with unstructured problems (Bigelow, 2004). Learning to solve complex problems is especially needed in management education (Grossman et al., 2013) to prepare up-and-coming managers for complex work environments (Bowman, 2019). In online environments, learners have to organize their learning process and they are required to manage complex problems on their own (Peterson, 2004). Gamification can be used to support the learning of problem-solving skills by signaling continuous achievements and progress and activating self-regulation (Hanus & Fox, 2015; Jang, 2008; Newmann, 1992). Gamification can make it easier to learn about complex problems by reducing the difficulty of the learning materials and making learning more fun (Buil et al., 2020; Leemkuil & de Jong, 2012). For example, points can be used to simultaneously regulate and reward learning progress. A regulated learning process can result in better problem-solving abilities (Eom & Ashill, 2016; Kim & Hannafin, 2011) by enabling well-coordinated learning processes. Therefore, gamification can help learners better focus on their learning material by providing feedback that supports them in understanding their progress, which signals achievement (Davis & Singh, 2015; Leemkuil & de Jong, 2012). Thus (and as shown in Figure 1), we hypothesize:

H1: Gamification in online learning has a positive effect on problem-solving outcomes.

While learning is not always enjoyable, it can be (Shernoff, 2013). Creating a gamification concept for digital learning environments can increase emotional engagement (Özhan & Kocadere, 2020), which refers to one’s feeling of enjoyment with a learning activity based on it being considered fun and entertaining (Hamari et al., 2014). Thus, emotion plays a valuable role in experiential learning (Taylor & Statler, 2014). Gamification concepts facilitate a feeling of having fun to assist students in achieving a higher level of concentration, which keeps them emotionally engaged (Khan et al., 2017; Newmann, 1992). By emotionally involving learners in a learning process, such as by rewarding them, we can better support them in engaging in an activity for its own sake (Guzzo, 1979). Based on this, we hypothesize (as shown in Figure 1):

H2: Gamification in online learning has a positive effect on emotional engagement.

Learning Process Satisfaction with Gamification

Learning involves statements of opinions and beliefs or an assessment of worth (Pintrich & De Groot, 1990) known as affective learning outcomes (Gupta & Bostrom, 2013). An affective outcome describes the extent to which learners believe the learning and teaching system they are using meets their expectations (Eom, 2014). Satisfaction is typically outcome oriented; for example, satisfaction could be the result of the completion of learning goals, which can be either positive or negative. Learning process satisfaction describes the favorable and unfavorable responses of learners with regard to different characteristics of the training. A satisfying learning process refers to a learning environment where learners perceive the training as being efficient, coordinated, clear, and fair. Gamification can lead to satisfaction with the learning process by highlighting and rewarding learners’ progress in online training to stimulate positive feelings about the training (Shute et al., 2015). With gamification, instructors can create a feeling of being satisfied by informing learners about their performance throughout the learning process and by making the learning experience more efficient (Ferguson & DeFelice, 2010; Fisher, 2003). Thus (as shown in Figure 1), we hypothesize:

H3: Gamification in online training has a positive effect on satisfaction with the learning process.

Being satisfied with a learning process is the psychological state of being able to achieve success and having positive feelings about that success and the ability to act most appropriately (Hui et al., 2007; Keller, 1987; Özhan & Kocadere, 2020). In turn, this will make learners want to continue their learning process, which can be observed as greater emotional involvement (Ferguson & DeFelice, 2010; Lee et al., 2011; Özhan & Kocadere, 2020). Satisfied learners can better interpret information, judge their progress, and determine the steps they need to take to achieve a specific learning goal (LG) (Eom & Ashill, 2016; Leemkuil & de Jong, 2012). Overall, learners do best when they are satisfied with a learning process; thus, they enjoy what they are doing and experience greater engagement with the process. This engagement can be determined by learning process satisfaction and the feeling of a coordinated and understandable learning environment (Watson & Sutton, 2012). Therefore (and as shown in Figure 1), we hypothesize:

H4: Learning process satisfaction in online training has a positive effect on emotional engagement.

Emotional Engagement to Support Problem-solving Outcomes

If learners are emotionally engaged in an activity, they are more likely to be engrossed in the process (Parent & Lovelace, 2015). Thus, learners who invest emotional energy in their learning can enhance their learning outcomes (Rich et al., 2010). Related work on emotional engagement highlights that learners engaged in the learning environment display a higher level of success and a lower prevalence of dropping out (Carini et al., 2006; Özhan & Kocadere, 2020). A person who is emotionally engaged is better able to focus on a task, and is better able to solve complex problems. By being able to continuously focus on the most important content and keep learning without giving up or being distracted, learners can understand the relationship between the different concepts they are learning (Ding et al., 2017). Being emotionally engaged can be key to accomplishing complex problems and persevering, even though the learning materials might be complex or difficult to understand, because students enjoy what they are doing (Arbaugh, 2000; Eseryel et al., 2014). Moreover, learners who enjoy being in a digital environment feel happy and pay attention to the given task; therefore, they are more proactively engaged in solving problems (Özhan & Kocadere, 2020). Thus (as shown in Figure 1), we hypothesize:

H5: Emotional engagement in online learning has a positive effect on problem-solving learning outcomes.

The Mediating Effect of Emotional Engagement

Generally, emotional engagement enables learners to focus on their learning process (Kuo & Chuang, 2016). We hypothesize that emotional engagement is also an important construct that mediates the effects of gamification on problem-solving learning outcomes. Being emotionally engaged in learning can better explain the relationship between gamification, its elements, and problem-solving outcomes by signaling the achievements that learners can attain and by showing them the progress they have made in an environment in which they play a more active role and are more responsible for their actions (Kim & Hannafin, 2011). We posit that, with a guiding and emotionally supportive design, gamification elements can facilitate learners’ emotional engagement, simultaneously making learning more entertaining and more efficient. Consequently, learners are supported in better regulating their learning process and in better discovering knowledge on their own (Eom & Ashill, 2016). Thus (as shown in our model in Figure 1), we hypothesize:

H6: Emotional engagement mediates the effect of gamification on problem-solving outcomes.

The Moderating Effects of Intrinsic Goal Orientation and Extrinsic Goal Orientation

We expect that intrinsic goal orientation and extrinsic goal orientation moderate the relationship between gamification and emotional engagement. Research supports the idea that the goal orientation of learners can influence the strength of the relationship between gamification and emotional engagement (Super et al., 2019). Intrinsic goal orientation reflects the learners’ perceptions of why they are engaging in an activity (Pintrich et al., 1991). Learners with a strong intrinsic goal orientation have strong willpower to participate in an activity (Kwak et al., 2021). Such strong willpower can strengthen the relationship between gamification and emotional engagement. In other words, learners with a strong intrinsic goal orientation will be more emotionally engaged in gamification training because they have a stronger desire to learn. Extrinsic goal orientation is about participating in a task to be rewarded (Pintrich et al., 1991). Learners with a strong extrinsic goal orientation have a strong need to get rewarded for their activities and thus will be more emotionally engaged in the gamification learning process because of the opportunity to earn badges and points (Kwak et al., 2021; Özhan & Kocadere, 2020). Therefore, in our research model, we aim to shed light on the boundary conditions of learner goal orientation (intrinsic or extrinsic) between gamification and emotional engagement. Consequently (as depicted in Figure 1), we hypothesize:

H7: Extrinsic goal orientation is expected to positively moderate the relationship between gamification and emotional engagement.

H8: Intrinsic goal orientation is expected to positively moderate the relationship between gamification and emotional engagement.
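A moderation hypothesis such as H7 or H8 is commonly expressed as an interaction term between the treatment and the moderator. The sketch below illustrates this with a plain least-squares fit. It is an assumption-laden illustration rather than the PLS procedure used in the study, and all data and function names are hypothetical.

```python
def solve_linear(a, b):
    """Solve a @ coef = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    coef = [0.0] * n
    for r in range(n - 1, -1, -1):
        rest = sum(aug[r][c] * coef[c] for c in range(r + 1, n))
        coef[r] = (aug[r][n] - rest) / aug[r][r]
    return coef


def fit_moderation(group, orientation, engagement):
    """OLS fit of engagement ~ 1 + group + orientation + group * orientation."""
    rows = [[1.0, g, z, g * z] for g, z in zip(group, orientation)]
    k = 4
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, engagement)) for i in range(k)]
    return solve_linear(xtx, xty)


# Hypothetical, noise-free toy data (NOT the study's data).
group = [0, 0, 0, 0, 1, 1, 1, 1]                        # 1 = gamified
orientation = [1.0, 2.0, 3.0, 4.0, 1.0, 2.0, 3.0, 4.0]  # e.g., extrinsic goal orientation
engagement = [1.0 + 0.5 * g + 0.2 * z + 0.3 * g * z
              for g, z in zip(group, orientation)]

intercept, b_group, b_orientation, b_interaction = fit_moderation(
    group, orientation, engagement
)
print(f"interaction coefficient: {b_interaction:.3f}")
```

A positive interaction coefficient means that the effect of gamification on emotional engagement grows with the learner's goal orientation, which is the pattern H7 and H8 predict. With real data, the moderator is often mean-centered before the product term is formed.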

Research Design and Method

Study Context and Participants

We evaluated our model by conducting a fully randomized, pre-test/post-test, between-subjects experiment with management students at a western European university. We developed an online training program concerning the value proposition canvas (VPC) concept (Osterwalder et al., 2014). A VPC is a tool that can help ensure that a product or service is positioned around what the customer values and needs. A VPC considers the value proposition of a company and assists in creating a customer profile. From a company’s perspective, gain creators, pain relievers, and product and service assets are specified. Pains, gains and the customer’s job are specified in the customer profile. The training was embedded in the course “Business and Information Systems Engineering,” typically attended by 80 to 150 freshman undergraduate students. Participation was voluntary but embedded in the mandatory tutorial sessions on campus. To avoid common method variance (CMV; variance that is attributable to the measurement method rather than to the constructs the measures represent), we did not reveal the goal of our study to our participants but instead provided a cover story and other ex-ante remedies according to Podsakoff et al. (2003). A Harman’s single factor test ex-post showed that CMV should not be a problem in our study.

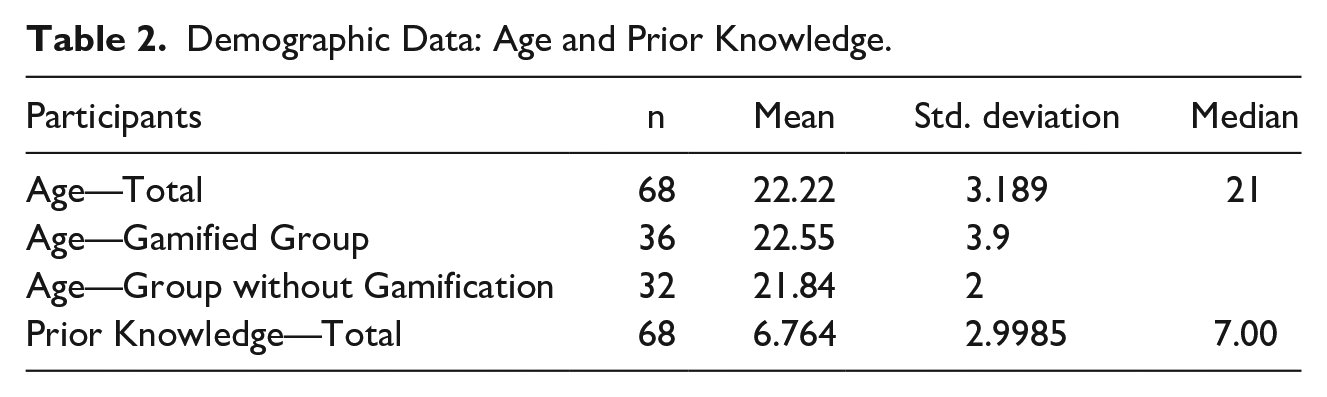

We collected 68 valid datasets, indicating a response rate of 91.89%. Our non-gamified group consisted of 32 students, and our gamified group consisted of 36 students. In terms of majors, 48 students (70.60%) studied business and economics, 15 (22.10%) studied business law, 3 (4.40%) studied pedagogy, 1 student studied industrial engineering, and another one studied an unlisted course. Our sample consisted of 39 female (57.35%) and 29 (42.65%) male students. Their average age was 22.22 years (see Table 2).
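The reported sample shares can be checked with simple arithmetic, as in the sketch below. The number of invited students (74) is an assumption inferred from the stated response rate and is not given in the text. The shares for majors appear to be reported after rounding to one decimal place.

```python
n_valid = 68
n_invited = 74  # assumption: inferred from the 91.89% response rate, not stated in the text

groups = {"non-gamified": 32, "gamified": 36}
majors = {
    "business and economics": 48,
    "business law": 15,
    "pedagogy": 3,
    "industrial engineering": 1,
    "unlisted": 1,
}
gender = {"female": 39, "male": 29}


def share(count, total):
    """Percentage share rounded to two decimals."""
    return round(100 * count / total, 2)


response_rate = share(n_valid, n_invited)
gender_shares = {k: share(v, n_valid) for k, v in gender.items()}
major_shares = {k: share(v, n_valid) for k, v in majors.items()}

print(response_rate, gender_shares, major_shares)
```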

Demographic Data: Age and Prior Knowledge.

Online Training and Learning Goals

To analyze our model (Figure 1), we developed an online training program. We began by developing LGs for our online training. Because the instructional environment must represent the learner’s context and learning environment (Gibbons et al., 2000), we decided to guide their cognitive processes, such as analyzing, evaluating, and creating (Anderson & Krathwohl, 2016; Jonassen, 2000).

We focused on three different kinds of problem solving (Jonassen, 2000). Troubleshooting problem solving refers to participants’ abilities to solve case problems by, for example, finding a mistake in a described situation or context. Decision-making problem solving requires the participants to select a single option from a set of alternatives by, for example, identifying the weaknesses and strengths of an existing VPC. And finally, case analysis problem solving refers to using alternative solutions for a given problem that goes beyond using learned methods and techniques by, for example, suggesting alternative canvases to make a business model more valuable for a company.

In accordance with Anderson and Krathwohl (2016) and their updated version of Bloom et al.’s (1956) LG taxonomy, we formulated three overarching LGs concerning type of knowledge and problem-solving skills, as follows:

We then developed the training in three steps. First, we started with a rough concept of our online training. Second, we developed a storyboard. Third, we transferred our storyboard to our online training program using Adobe Captivate as the development tool. In online training, background information should always be available to learners to support them in achieving better learning outcomes (Leemkuil & de Jong, 2012) by, for example, providing additional material in a given learning process. Constructing online training requires concentrating on conveying the message clearly to get the ideas across (Gibbons, 2003), which is why we decided to split each learning unit into the following three parts: absorb, do, and connect (Horton, 2011). Absorb concerns a passive activity, such as reading a text; do concerns using what was learned during the absorb process to solve a task; and connect concerns combining the learned aspects with the participants’ own experiences.

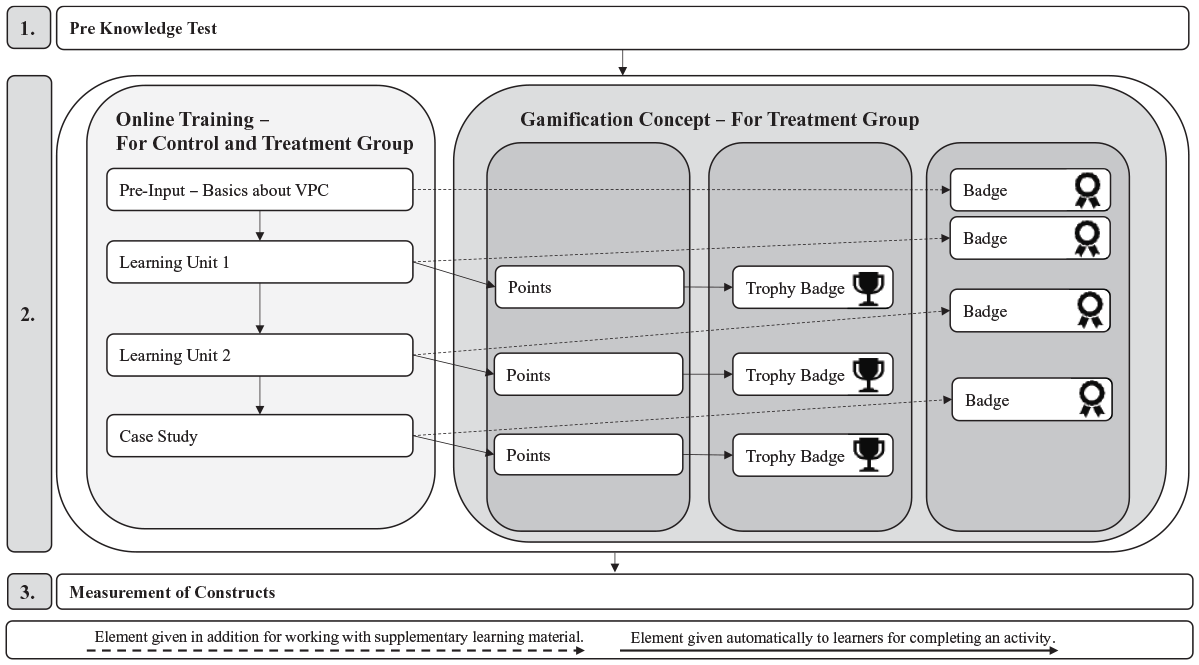

Experimental Manipulation

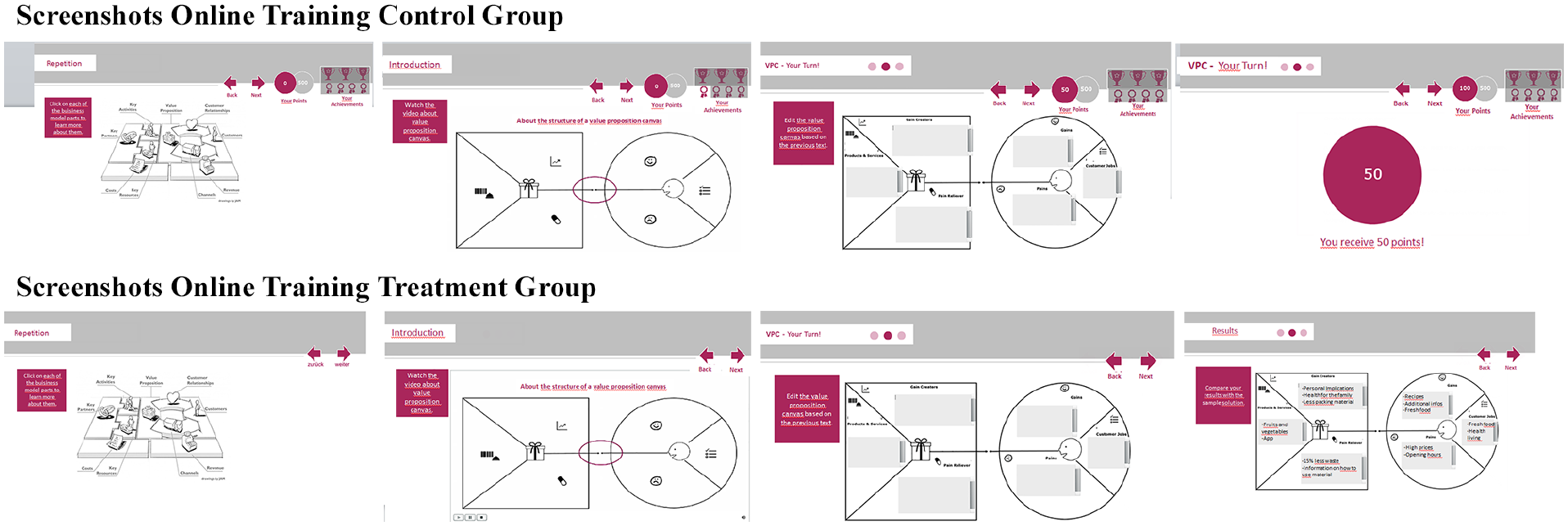

Points were given to participants to reward their progress in the training, rather than for giving correct answers on knowledge tests, to avoid demotivation (Santhanam et al., 2016). We also gave out the following two different types of badges: trophy badges were given to participants after completing each unit, and regular badges were given only to participants who viewed supplementary material that was not necessary to finish the online training but was suggested for successful completion. The participants were informed about the option of collecting additional rewards at the beginning of the training.
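The reward rules described above can be sketched as a small state object. This is a hypothetical illustration, not the Adobe Captivate implementation used in the study: the point value per step, the class and method names, and the rule that each badge is awarded only once are all assumptions.

```python
from dataclasses import dataclass, field

POINTS_PER_STEP = 10  # hypothetical value; the text does not state point amounts


@dataclass
class RewardState:
    points: int = 0
    trophy_badges: list = field(default_factory=list)
    regular_badges: list = field(default_factory=list)

    def complete_step(self):
        """Reward progress through the training, independent of answer correctness."""
        self.points += POINTS_PER_STEP

    def complete_unit(self, unit):
        """Award a trophy badge once a unit is finished (assumed: once per unit)."""
        if unit not in self.trophy_badges:
            self.trophy_badges.append(unit)

    def view_supplementary(self, material):
        """Award a regular badge for viewing optional supplementary material."""
        if material not in self.regular_badges:
            self.regular_badges.append(material)


state = RewardState()
for _ in range(3):
    state.complete_step()
state.complete_unit("unit 1")
state.view_supplementary("background reading")
state.view_supplementary("background reading")  # second view adds no further badge

print(state)
```

Keeping points tied to progress rather than correctness mirrors the design rationale given in the text, namely to avoid demotivating participants who answer knowledge questions incorrectly.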

In online training environments, it becomes more important to provide feedback to learners about their performance (Leemkuil & de Jong, 2012). We included audible feedback to highlight that participants had received rewards in the form of points or badges (Li et al., 2012). The collected points and badges were visible to the participants from the first moment they were gained. Points were summarized and displayed as an overall score (Hiltbrand & Burke, 2011). To analyze the effects of gamification, we used two groups: a gamified group with points and badges and a non-gamified group without any gamification elements. Participants were randomly assigned to one of the two groups. The structure of our online training and the reward concept are presented in Figures 2 and 3.
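Random assignment to two conditions can be sketched as follows. The text does not describe the exact randomization procedure, so this example assumes simple per-participant randomization, which naturally produces unequal group sizes such as the 36 and 32 reported. The participant identifiers, function name, and seed are hypothetical.

```python
import random

CONDITIONS = ("gamified", "non_gamified")


def assign_groups(participant_ids, seed=None):
    """Assign each participant independently and at random to one condition."""
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    return {pid: rng.choice(CONDITIONS) for pid in participant_ids}


participants = [f"P{i:02d}" for i in range(1, 69)]  # 68 hypothetical IDs
assignment = assign_groups(participants, seed=42)
counts = {c: sum(1 for v in assignment.values() if v == c) for c in CONDITIONS}

print(counts)
```

If equal group sizes were required, a shuffled list split in half (block randomization) would be an alternative design.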

Experimental procedure.

Screenshots of training.

Our experiment entailed three parts. The first part consisted of a pretest to determine the prior knowledge and demographics of our participants. The second part was the actual training, which consisted of a repetition unit to learn the basics of the VPC, two learning units, and a case study to measure our learning outcomes. In the third part, the participants had to fill out a questionnaire that was used for the analysis of our hypotheses. The training lasted between 90 and 100 minutes.

Study Measures

To measure problem-solving learning outcomes, we constructed tasks that participants had to solve after completing the second learning unit. For the tasks, we developed a case description by presenting different companies and their VPCs to the participants along with additional information such as company news and other relevant information concerning the cases.

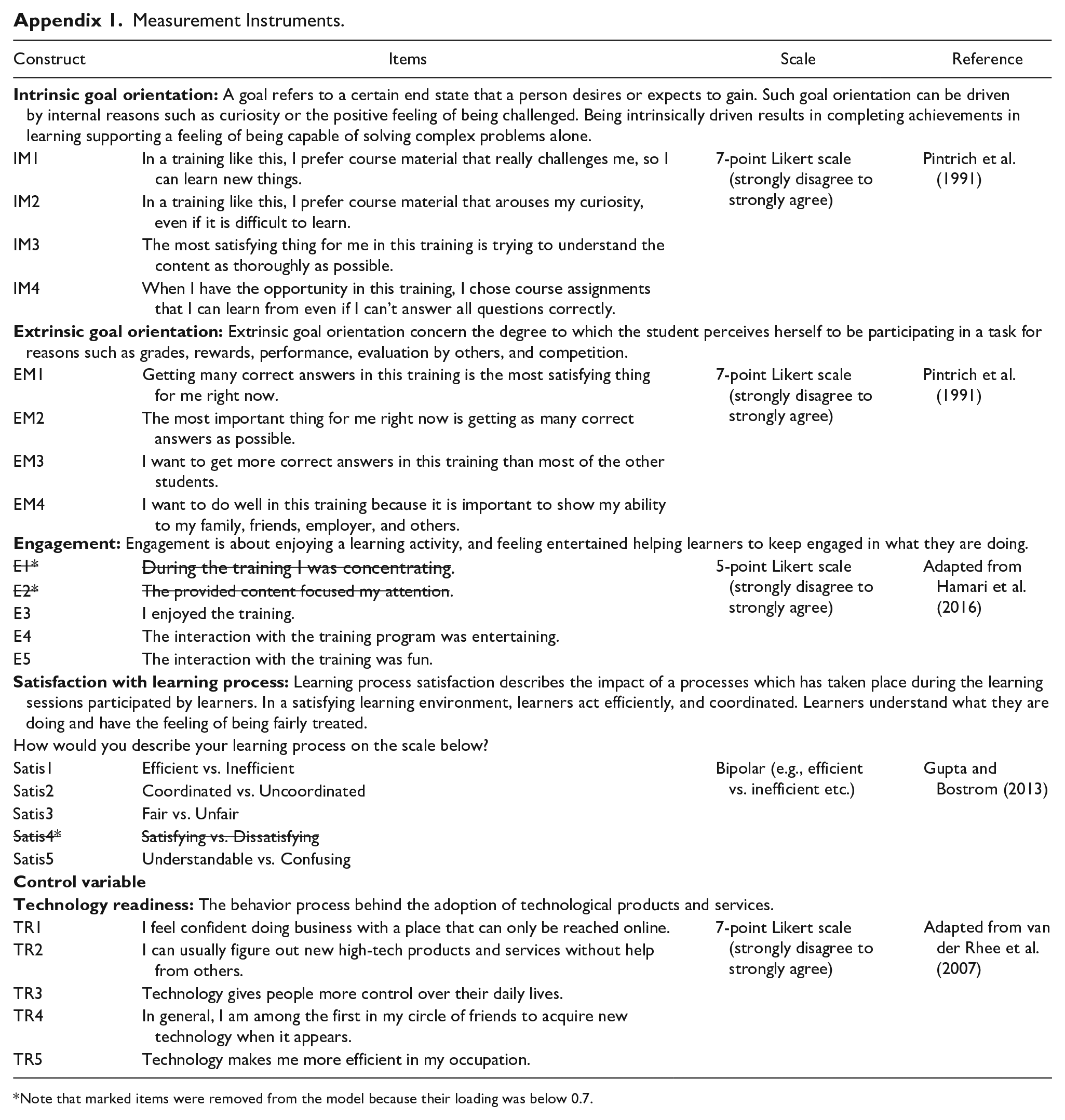

We measured all other dependent variables with established scales and, where necessary, adapted the scales to our research context. We measured intrinsic and extrinsic goal orientation by using the scale developed by Pintrich et al. (1991), with four items for each construct. Emotional engagement was measured with three items provided by Hamari et al. (2014). Two items from the original scale of five items were removed because their loadings were below 0.7. We used a bipolar scale from Gupta and Bostrom (2013) to measure the learners’ learning process satisfaction concerned with the use of internet technology (IT) (Chin, 1998) instead of relying on typical measures for affective outcomes and reactions of learners (Brown, 2005). We used four out of five items to measure satisfaction with the learning process. Appendix 1 summarizes our measurement instruments.

In addition, we measured control variables that relate to individual differences in learning behavior, which may influence the outcome of our results. We measured technology readiness by using scales from van der Rhee et al. (2007). We measured participants’ prior knowledge with four test questions about the VPC with a maximum of 10 points that could be collected.

Data Analysis

To evaluate the research model of this study, we used the variance-based partial least squares (PLS) approach. PLS analysis is a multivariate statistical method that allows comparison between multiple response variables (such as problem-solving outcomes in our model) and multiple explanatory variables, both of which are also called constructs. PLS is one of several statistical methods that are often referred to as structural equation modeling, and it was created to deal with multiple regression. In other words, PLS is a regression method that identifies underlying factors, known as latent variables, which are linear combinations of the explanatory variables and which best model the response.

We decided to work with PLS because it is more suitable than covariance-based methods for three main reasons (Hair et al., 2010). First, PLS is better suited for evaluating data sets with smaller sample sizes, such as the one imposed by our experimental setting in the field. Our sample size (n = 68) is sufficient for the PLS approach according to a power analysis (Hair et al., 2016). With two constructs pointing at our dependent variable, we would need 33 observations to achieve a statistical power of 80% for detecting R² values of at least 0.25, with a 5% probability of error (Hair et al., 2016). Second, we want to provide explorative evidence on how to better support learners in online learning by referring to elements of gamification in management education. Third, model identification issues of covariance-based approaches can arise when using single-item measures, such as our learning outcome scores.

We developed a coding scheme to analyze the problem-solving learning outcomes of our tasks. Because the tasks were based on different LGs concerning the application of knowledge as well as analysis and evaluation to solve a problem, the tasks required an increasing degree of problem-solving skill, and the scoring was adapted accordingly: 20 points for the first task and 70 for the second. Two raters were involved in analyzing the learning outcomes. The raters were trained on the coding scheme by the first two authors. Our analysis showed that the raters had a high interrater reliability (IRR) (weighted Cohen's kappa = 0.706, p < .05; Pearson correlation coefficient: r = .772, n = 68, p < .001). Due to the complexity of rating the problem-solving outcomes, the raters resolved any ambiguities on their own by discussing each case until they agreed on a single consensus score, which we then used for further analysis.¹
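To illustrate the interrater reliability statistic reported above, the following is a minimal sketch of a weighted Cohen's kappa for two raters. It is not the procedure used in the study: the function name `weighted_kappa`, the linear weighting default, and the example scores are assumptions made for illustration only.

```python
from collections import Counter


def weighted_kappa(rater_a, rater_b, categories, weight="linear"):
    """Weighted Cohen's kappa for two raters on an ordered scale (illustrative)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    if len(categories) < 2:
        raise ValueError("at least two ordered categories are required")

    index = {category: i for i, category in enumerate(categories)}
    n = len(rater_a)
    span = len(categories) - 1

    def penalty(i, j):
        # Disagreement weight: 0 for identical categories, 1 for the extremes.
        distance = abs(i - j) / span
        return distance if weight == "linear" else distance ** 2

    joint = Counter((index[a], index[b]) for a, b in zip(rater_a, rater_b))
    marginal_a = Counter(index[a] for a in rater_a)
    marginal_b = Counter(index[b] for b in rater_b)

    # Observed weighted disagreement across all rated items.
    observed = sum(penalty(i, j) * count / n for (i, j), count in joint.items())
    # Weighted disagreement expected by chance from the raters' marginals.
    expected = sum(
        penalty(i, j) * marginal_a[i] * marginal_b[j] / (n * n)
        for i in marginal_a
        for j in marginal_b
    )
    if expected == 0:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return 1.0 - observed / expected


if __name__ == "__main__":
    # Hypothetical scores on a 0-3 scale, not data from the study.
    scores_a = [0, 1, 2, 2, 3, 1]
    scores_b = [0, 1, 1, 2, 3, 2]
    print(weighted_kappa(scores_a, scores_b, categories=[0, 1, 2, 3]))
```

Weighted kappa gives partial credit when two raters are close on an ordered scale, which suits point-based scoring better than unweighted kappa would.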

Results

Control Variables and Manipulation Check

Our control variable technology readiness had no significant influence on problem-solving outcomes (t-value = 1.304; p > .10). Additionally, we evaluated the participants’ prior knowledge. The average prior knowledge was 6.46 in the gamified group and 7.08 in the non-gamified group, showing that prior knowledge did not significantly differ between the two groups (p > .10).

Because we conducted an experiment, we wanted to check whether the gamification elements we used were recognized. For this check, we asked our participants three questions (marked on a 5-point Likert scale from “1 = Totally Disagree” to “5 = Agree”). We explicitly asked them whether they recognized the gamification elements of points, trophy badges, and regular badges. We also put these questions to our control group. The results of a t-test for independent samples revealed that all three manipulations were recognized by the participants of our gamified group, whereas the participants of our non-gamified group did not recognize any of the elements (for all three manipulation checks p < .001; mean points: gamified group: 4.66, non-gamified group: 1.37; mean badges: gamified group: 4.50, non-gamified group: 1.10; mean trophy badges: gamified group: 4.71, non-gamified group: 1.24).

Research Model Evaluation

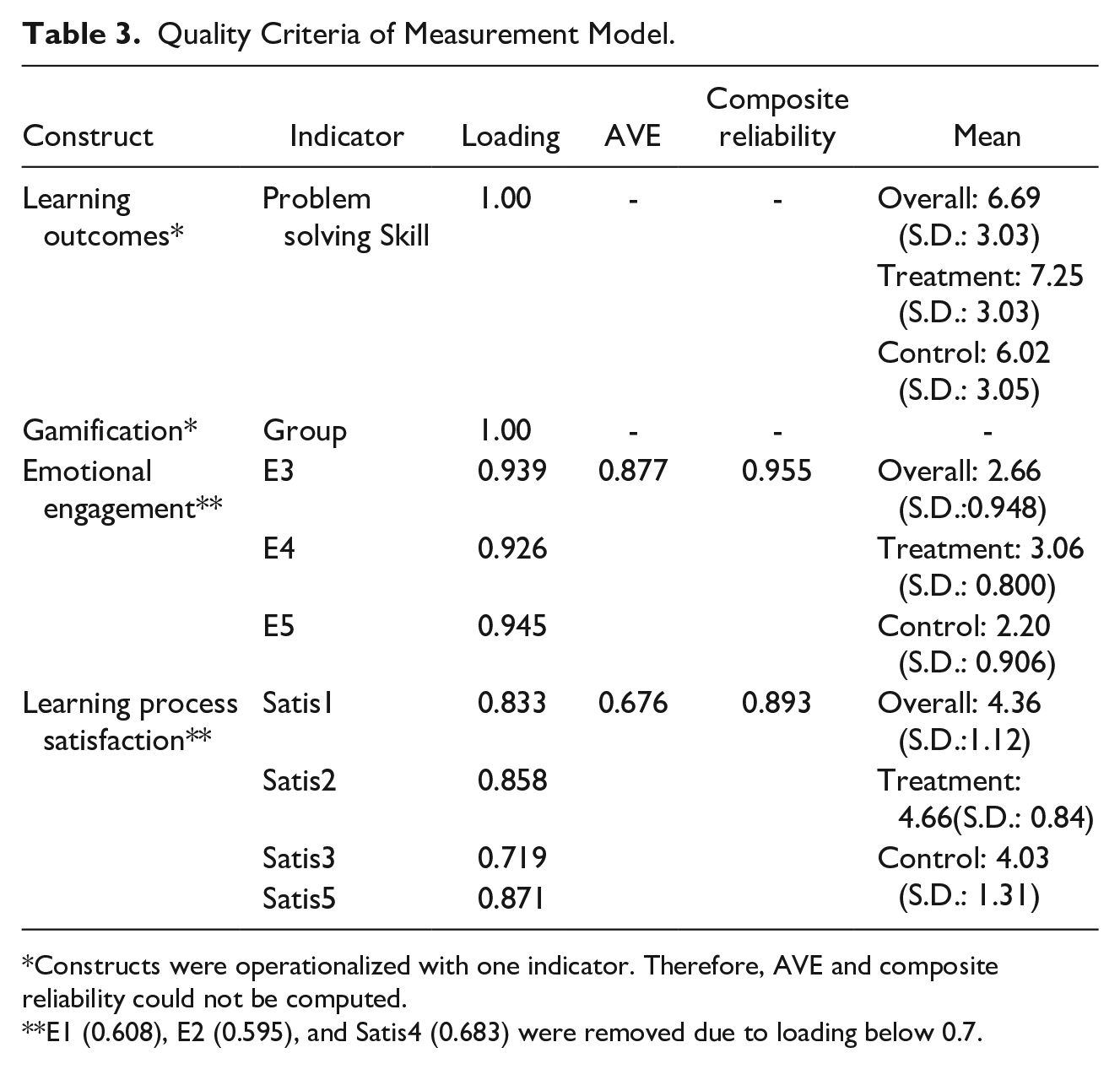

To evaluate our model and to test our hypotheses, we analyzed the outer model and continued with the inner model (Hair et al., 2010). The inner model is the part of the model that describes the relationships among the latent variables that determine the model (Hair et al., 2014). The outer model is the part of the model that highlights the relationships among the latent variables and their indicators (Hair et al., 2014). A part of this involves an analysis of reliability and validity. We evaluated the reliability and validity of the outer model using quality criteria (Table 3).

Quality Criteria of Measurement Model.

Constructs were operationalized with one indicator. Therefore, AVE and composite reliability could not be computed.

E1 (0.608), E2 (0.595), and Satis4 (0.683) were removed due to loading below 0.7.

Indicator reliability was analyzed with indicator loadings. We used indicator reliability to analyze the proportion of indicator variance that is explained by our latent variables, which can range from 0 to 1 (Hair et al., 2014). The indicator loadings should be above the minimum value of 0.7 (Hulland, 1999). We measured internal consistency by means of construct reliability; values should be above 0.70 to indicate acceptable construct reliability (Bagozzi & Yi, 1988), and ours were.

Furthermore, we measured convergent validity using the average variance extracted (AVE). Generally, convergent validity is a subtype of construct validity and assisted us in evaluating the degree to which two measures that should be related according to theory are in fact related (Hair et al., 2014). The value should be above the minimum of 0.50 so that at least half the variance of the constructs is explained by the measured indicators (Bagozzi & Yi, 1988). All of our constructs have AVEs above 0.50.
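As a brief illustration of the two quality criteria discussed above, the sketch below computes AVE and composite reliability from standardized indicator loadings using the commonly cited formulas. The function names and the example loadings are hypothetical; the study's actual loadings are those reported in Table 3.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one construct."""
    if not loadings:
        raise ValueError("at least one loading is required")
    return sum(value ** 2 for value in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability from standardized loadings of one construct."""
    if not loadings:
        raise ValueError("at least one loading is required")
    squared_sum = sum(loadings) ** 2
    # Error variance of a standardized indicator is 1 minus its squared loading.
    error_variance = sum(1.0 - value ** 2 for value in loadings)
    return squared_sum / (squared_sum + error_variance)


if __name__ == "__main__":
    # Hypothetical loadings for a three-item construct, not values from the study.
    example = [0.82, 0.88, 0.91]
    print(average_variance_extracted(example) > 0.50)  # convergent validity threshold
    print(composite_reliability(example) > 0.70)       # reliability threshold
```

Under these formulas, a construct whose indicators all load above 0.7 has an AVE of at least 0.49, which is why the 0.7 loading rule and the 0.50 AVE rule tend to agree.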

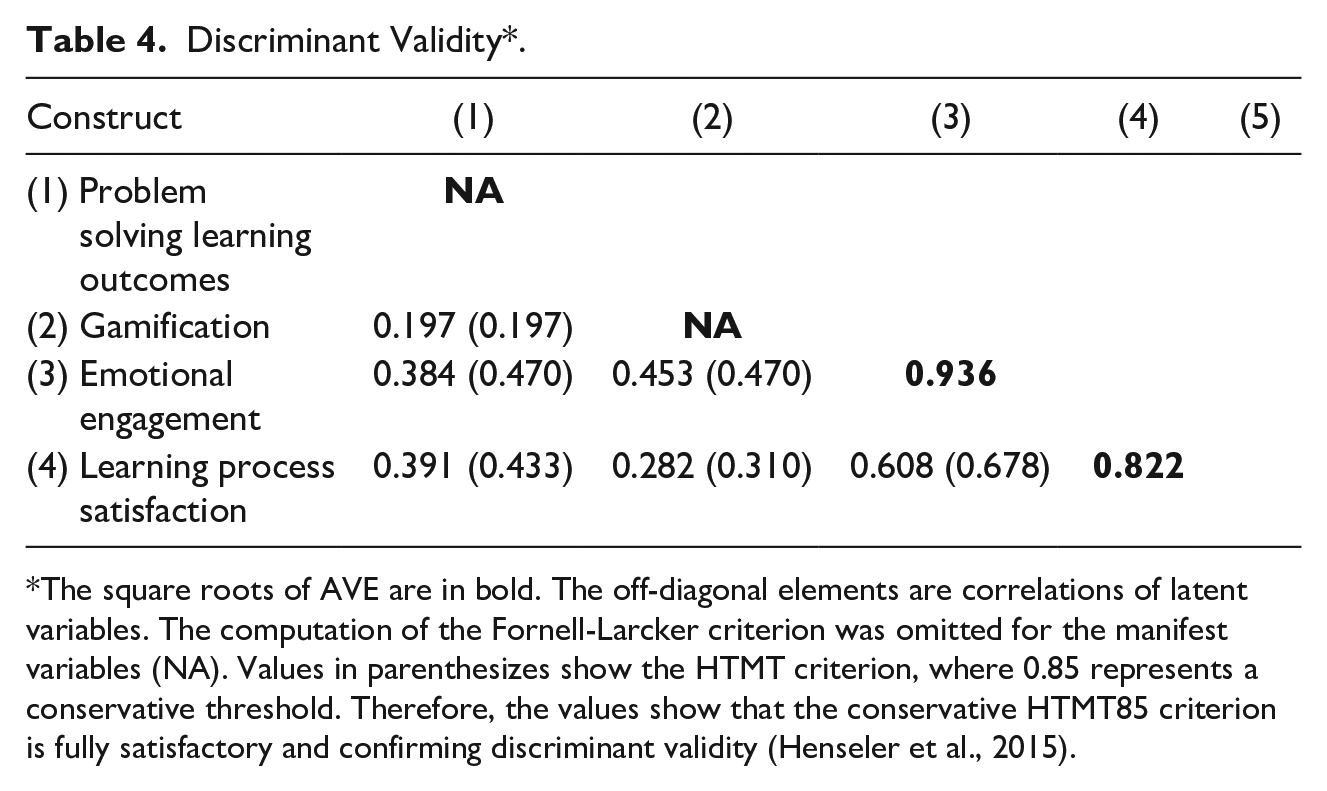

We then used AVE to measure discriminant validity (see Table 4). We used discriminant validity to test the degree to which our measure diverges from another measure. This can be assessed with the Fornell-Larcker criterion, which states that the square root of the AVE of a construct should be higher than the correlation of the latent construct with other constructs of the measurement and indicates whether a construct shares more variance with its indicators than with other constructs (Fornell & Larcker, 1981). In another step, we assessed the heterotrait-monotrait (HTMT) ratio and the HTMT inference criterion (HTMTinference). The HTMT ratio is a measure of similarity between our latent variables (Hair et al., 2014). Discriminant validity was established through consideration of the Fornell-Larcker criterion and the conservative HTMT85 measure (indicated by all HTMT values being under 0.85) (see Table 4). The HTMTinference values are all significantly below the threshold of 1.
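The Fornell-Larcker comparison described above can be sketched as follows. This is an illustrative check only: the function name, the construct labels, and the numeric values are assumptions and do not reproduce Table 4.

```python
import math


def fornell_larcker_violations(ave, correlations):
    """Return (construct, other, r) triples where sqrt(AVE) does not exceed |r|.

    ave:          dict mapping construct name -> AVE
    correlations: dict mapping (construct_a, construct_b) -> latent correlation
    """
    violations = []
    for (first, second), r in correlations.items():
        for construct, other in ((first, second), (second, first)):
            if math.sqrt(ave[construct]) <= abs(r):
                violations.append((construct, other, r))
    return violations


if __name__ == "__main__":
    # Hypothetical AVEs and correlation, not values from the study.
    ave = {"engagement": 0.70, "satisfaction": 0.60}
    correlations = {("engagement", "satisfaction"): 0.50}
    # An empty list means the criterion holds for every pair of constructs.
    print(fornell_larcker_violations(ave, correlations))
```

The HTMT ratio is not covered by this sketch because it requires the raw indicator correlations rather than construct-level summaries.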

Discriminant Validity*.

The square roots of AVE are in bold. The off-diagonal elements are correlations of latent variables. The computation of the Fornell-Larcker criterion was omitted for the manifest variables (NA). Values in parentheses show the HTMT criterion, where 0.85 represents a conservative threshold. The values show that the conservative HTMT85 criterion is fully satisfied, confirming discriminant validity (Henseler et al., 2015).

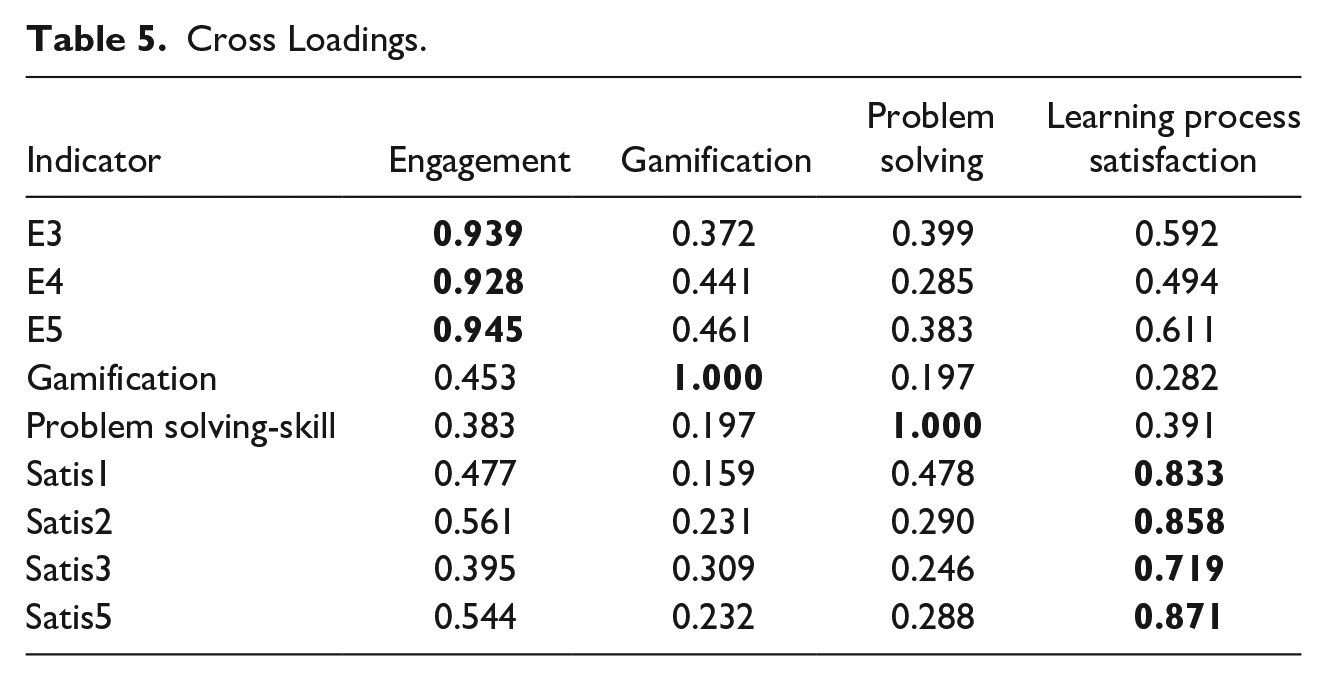

Lastly, to complete the analysis of our outer model, we analyzed cross-loadings (Chin, 1998), which show that all indicators load highest on their own constructs (see loadings in bold in Table 5) (Hair et al., 2014). Put simply, this assures us that our indicators can be clearly assigned to their own latent variables and not to the others.

Cross Loadings.

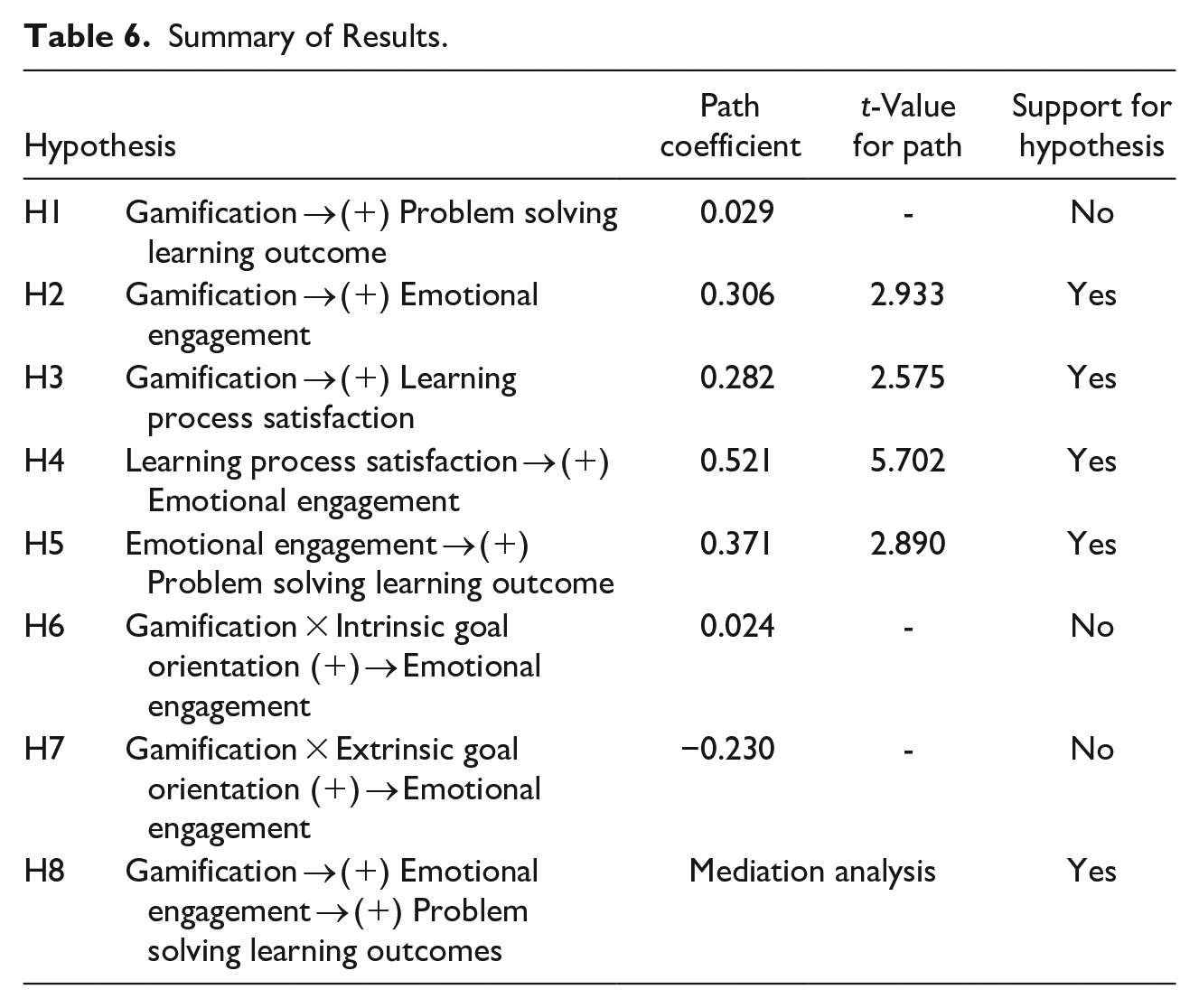

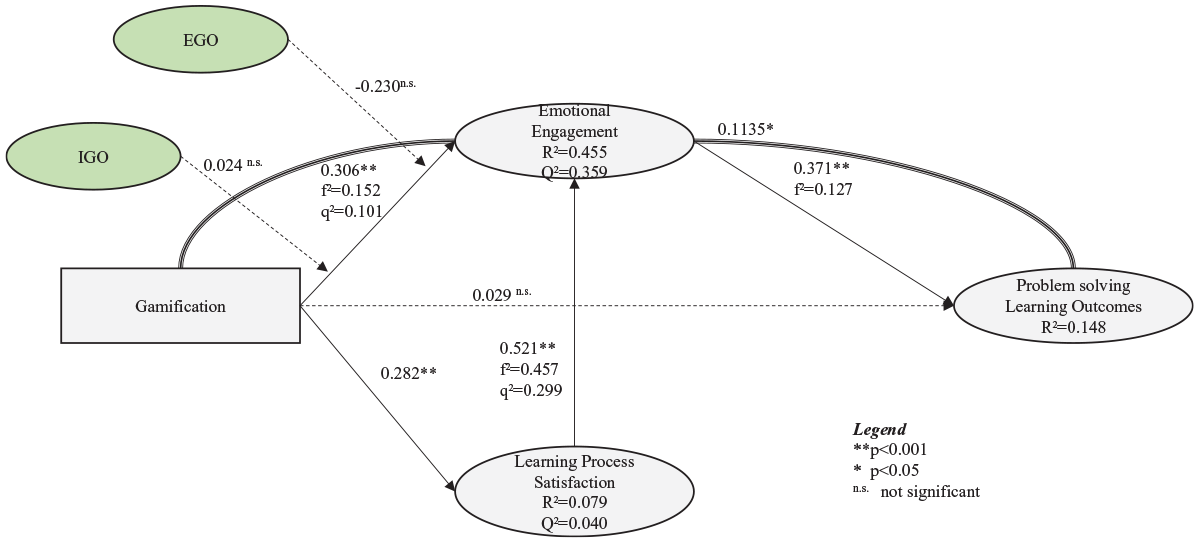

After we evaluated the outer model, we evaluated the inner model to determine which of our hypotheses can be supported and which we need to reject. The results of the structural model can be seen in Figure 3. Hypotheses with a p-value less than or equal to .05 were accepted. Those with a p-value greater than .05 were rejected. In the remainder of this section we detail how we analyzed our inner model to establish the path coefficients, R², significance levels, effect sizes, and predictive relevance (Hair et al., 2016). Table 6 summarizes our results, stating each hypothesis and whether it was supported.

Summary of Results.

For Hypotheses 1 to 7, we report the path coefficients and their p-values for the direct effects along with their significance level. The results can be found in Figure 4. Three of our hypothesized relationships are not significant (their p-value is above .05). There is no significant relationship between gamification and problem-solving learning outcomes (β = .030, p > .05) (H1). Moreover, our PLS analysis also highlights that the moderating effects of intrinsic goal orientation and extrinsic goal orientation on the relationship between gamification and emotional engagement are not significant (β = .024, p > .05; β = −.230, p > .05) (H6 and H7, respectively).

Results of structural model.

All other hypotheses are supported and are reported in order from largest path coefficient to smallest path coefficient. Learning process satisfaction positively relates to emotional engagement (β = .521, p < .01) (H4). Emotional engagement positively relates to problem-solving outcomes (β = .371, p < .01) (H5). Gamification positively relates to emotional engagement (β = .306, p < .01) (H2) and to learning process satisfaction (β = .282, p < .01) (H3).

To analyze the mediation hypothesis (H8), we followed the recommendation of Nitzl et al. (2016) to evaluate the indirect effect of gamification on problem-solving learning outcomes. We used a bootstrapping procedure, which estimates properties of an estimator such as its variance. The bootstrapped sampling distribution shows that the indirect effect is significant (p = .043 < .05) (H8). This means that emotional engagement does indeed mediate the effect of gamification on problem-solving outcomes. This represents an indirect-only full mediation because the direct effect from gamification to problem-solving outcomes is not significant (as reported above).
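The logic of bootstrapping an indirect effect can be sketched in a few lines. The example below is a simplification and not the PLS-SEM procedure used in the study: it estimates the two paths with ordinary least squares on observed scores and builds a percentile confidence interval. All function names, parameters, and the synthetic data are assumptions for illustration.

```python
import random


def _cov(u, v):
    """Sample covariance of two equally long sequences."""
    mean_u, mean_v = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """Product a*b: a is the slope of m on x, b the slope of y on m controlling for x."""
    var_x, var_m, cov_xm = _cov(x, x), _cov(m, m), _cov(x, m)
    a = cov_xm / var_x
    b = (_cov(m, y) * var_x - _cov(x, y) * cov_xm) / (var_x * var_m - cov_xm ** 2)
    return a * b


def bootstrap_indirect(x, m, y, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate and percentile bootstrap interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        # Resample cases with replacement, keeping each case's x, m, y together.
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[int(alpha / 2 * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return indirect_effect(x, m, y), (lower, upper)


if __name__ == "__main__":
    # Synthetic data: x = group (0/1), m = mediator, y = outcome. Not study data.
    rng = random.Random(1)
    x = [0.0] * 34 + [1.0] * 34
    m = [xi + rng.gauss(0, 0.3) for xi in x]
    y = [mi + rng.gauss(0, 0.3) for mi in m]
    print(bootstrap_indirect(x, m, y, n_boot=500))
```

If the interval excludes zero, the indirect effect is treated as significant at the chosen level, which mirrors the decision rule applied to H8.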

To analyze the general quality and power of our model, the explained variance R² and its impact can be used (Hair et al., 2014). The explained variance of the main construct of problem solving can be described as weak (R² < 0.25) (Hair et al., 2010), which is typical for explaining problem-solving outcomes in experimental research on management education and online training (Janson et al., 2020). By contrast, for emotional engagement, more than 45% of the variance is explained (R² = 0.455).

Effect sizes tell us the strength of a relationship and assist in evaluating the inner model quality (Hair et al., 2014). Effect sizes were calculated for the determinants of problem solving and emotional engagement. As the direct relationship between gamification and problem solving is not significant (H1), we analyzed the relationship between emotional engagement and problem solving (H5). The effect size f² captures the influence of an exogenous construct on an endogenous construct by considering the change in the coefficient of determination R² (Cohen, 1988), where values above 0.02, 0.15, and 0.35 indicate a low, moderate, and high effect, respectively, on the structural level (Henseler et al., 2009). Our results indicate that the effect of emotional engagement on problem solving (H5) can be considered low (f² = 0.127). The effect of learning process satisfaction on emotional engagement (H4) can be considered moderate (f² = 0.299), as can the effect of gamification on emotional engagement (f² = 0.152) (H2).
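For readers less familiar with f², the sketch below shows the usual computation from the R² of the endogenous construct with and without the predictor in question, together with the thresholds cited above. The function names are hypothetical, and the R² pair in the first call is made up for illustration; only the three f² values passed to `classify_effect` come from the text.

```python
def f_squared(r2_included, r2_excluded):
    """Cohen's f²: change in R² when a predictor is dropped, relative to unexplained variance."""
    if not 0.0 <= r2_excluded <= r2_included < 1.0:
        raise ValueError("expected 0 <= r2_excluded <= r2_included < 1")
    return (r2_included - r2_excluded) / (1.0 - r2_included)


def classify_effect(value):
    """Label an effect size using the 0.02 / 0.15 / 0.35 thresholds."""
    if value > 0.35:
        return "high"
    if value > 0.15:
        return "moderate"
    if value > 0.02:
        return "low"
    return "negligible"


if __name__ == "__main__":
    # Hypothetical R² values, chosen only to show the arithmetic.
    print(f_squared(r2_included=0.50, r2_excluded=0.40))
    # f² values reported in the text for H5, H4, and H2.
    for reported in (0.127, 0.299, 0.152):
        print(reported, classify_effect(reported))
```

The same ratio, with Q² in place of R², yields the q² measure of relative predictive relevance.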

In the last step, we evaluated the predictive relevance as a conclusive assessment of the structural model (Chin, 1998) by using the blindfolding procedure proposed by Stone (1974) and Geisser (1975). Blindfolding is a sample re-use technique that systematically omits one part of the data and uses the resulting estimates to predict the omitted part (Hair et al., 2014). We chose d = 7 as the omission distance, so that the number of analyzed cases (n = 68) is not an integer multiple of the omission distance (Hair et al., 2014). We assessed Q² as the cross-validated redundancy measure, which uses the estimates of both the structural model and the measurement models for the data prediction (Hair et al., 2014). The blindfolding procedure is applied to endogenous reflective constructs (Hair et al., 2014). If the value of Q² is larger than 0 for a particular construct, its explanatory variables have predictive relevance (Henseler et al., 2009). This is the case for both of our constructs, emotional engagement (Q² = 0.359) and learning process satisfaction (Q² = 0.040).
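The role of the omission distance can be made concrete with a small sketch. It is simplified to a single column of scores, whereas blindfolding in PLS-SEM operates on the indicator data of the endogenous construct, and the function names are assumptions. `omission_rounds` shows which cases are left out in each of the d rounds, and `q_squared` applies the Stone-Geisser idea of comparing model prediction errors with the errors of a trivial prediction.

```python
def omission_rounds(n_cases, distance):
    """Indices omitted in each blindfolding round: every distance-th case per round."""
    if distance < 2 or distance >= n_cases:
        raise ValueError("distance must be at least 2 and smaller than n_cases")
    if n_cases % distance == 0:
        raise ValueError("n_cases must not be an integer multiple of the omission distance")
    return [list(range(start, n_cases, distance)) for start in range(distance)]


def q_squared(observed, predicted, trivial):
    """Q² = 1 - SSE / SSO, with SSO based on a trivial (e.g. mean) prediction."""
    sse = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    sso = sum((o - t) ** 2 for o, t in zip(observed, trivial))
    if sso == 0:
        raise ValueError("Q² is undefined when the trivial prediction is already exact")
    return 1.0 - sse / sso


if __name__ == "__main__":
    rounds = omission_rounds(n_cases=68, distance=7)
    # Each case is omitted exactly once across the seven rounds.
    print([len(r) for r in rounds])
```

With n = 68 and d = 7, the rounds have unequal sizes, which reflects the requirement that the number of cases not be an integer multiple of the omission distance.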

The relative impact of the predictive relevance can be evaluated by the measure q²: values above 0.02, 0.15, and 0.35 respectively indicate a small, medium, or large predictive relevance of constructs, explaining the endogenous construct that is evaluated (Henseler et al., 2009). Our results reflect a medium effect for the relationship between gamification and emotional engagement (H2; q² = 0.152) and the relationship between learning process satisfaction and emotional engagement (H4; q² = 0.299).

Discussion and Implications

Discussion of the Results

In our study, we aimed to understand how the gamification elements of points and badges can support emotional engagement to train students to improve problem-solving outcomes in management education (RQ). In general, the impact of gamification on learning outcomes has often been questioned (Kalogiannakis et al., 2021), resulting in mixed findings about its effectiveness (Bai et al., 2020). To better understand if and how gamification elements can impact specific kinds of outcomes, Koivisto and Hamari (2019) conducted an extensive literature review among all disciplines in gamification research. Out of the 273 empirical studies that were identified, only 12 analyzed engagement and only 8 analyzed satisfaction; moreover, only a few studies used an experimental approach. Thus, scholars have called for the need to conduct more experimental research to analyze gamification (Hammedi et al., 2021), especially by adding control groups to the experiments (Koivisto & Hamari, 2019). Our experimental analysis has shed some light on this research gap.

In our study, we found no positive direct effects of gamification on problem-solving outcomes (H1). Although this hypothesis was rejected, this might be one of the most interesting findings of our study. Many studies have evaluated the positive effects of gamification on learners’ outcomes (De-Marcos et al., 2014; Hew et al., 2016). Consistent with other research that fails to identify the direct effects on learning or behavior outcomes (Super et al., 2019), our study results demonstrate that including points and badges in online training is not a guarantee that management students will be better supported in improving their problem-solving skills. Based on these findings, we suggest that perhaps rather than thinking of gamification as having a direct effect on problem-solving outcomes it is time to think of it as a distal antecedent of problem solving. Thus, we should be focusing on identifying the more proximal explanatory mechanisms that account for why gamification can and should influence problem-solving outcomes.

In this research, we demonstrate that emotional engagement is one such driver of positive problem-solving outcomes from gamification training (H8). When creating online training programs, it is essential to consider how to engage students and to think about the design of the learning environment to increase emotional engagement. We identify that one way to increase emotional engagement is to ensure that training is fair, efficient, clear, and coordinated, such that students are satisfied with the learning process. Thus, based on our results, gamification is successful at developing learner problem-solving outcomes because the learner is satisfied with the process and in turn finds the training enjoyable and useful. Focusing our analyses on emotional engagement enables us to understand why points and badges may result in stronger problem-solving skills for management students.

With our analysis, we aimed to obtain a better understanding of how the relationship between gamification and emotional engagement is moderated by intrinsic and extrinsic goal orientations. In our model, we did not detect any moderating effects of intrinsic goal orientation and extrinsic goal orientation, and had to reject H6 and H7. However, given its negative path coefficient, it is possible that extrinsic goal orientation may weaken the positive relationship between gamification and emotional engagement in some scenarios. From this perspective, striving to obtain, for example, monetary rewards can weaken the power of gamification to support emotional engagement, because gamification is largely designed to support the inner needs of users such as autonomy (Ryan & Deci, 2006). Our gamification concept is grounded in rewarding learning progress, not by connecting it to correct answers given on knowledge-based tests but by supporting learners to continue their learning process. Our reward concept leads to a positive relationship between gamification and emotional engagement. For learners with a strong extrinsic goal orientation, this positive relationship can be weakened, making gamification less effective at supporting emotional engagement. For learners with a strong intrinsic goal orientation, it is possible that in some situations the relationship between gamification and emotional engagement would be enhanced. However, further analyses are needed to establish whether these effects are significant. It could be interesting to observe whether, with a reward-based gamification approach, a strong extrinsic goal orientation could positively support the relationship between gamification and emotional engagement. Such an approach could be useful for lengthier management courses, as learners could get exhausted more easily.

Implications for Management Education

A challenge in online management learning is to prepare management students to be capable of solving complex business problems once they have graduated. Learning about complex management problems requires educators to create guided and feedback-oriented learning processes (Bigelow, 2004). Regarding management training, educators and managers should consider gamification as a tool to support learners’ emotional engagement and satisfaction, not by relying on elements such as points or badges but by constructing and designing a meaningful learning experience that learners enjoy and that simultaneously signals their achievements to better support them in regulating their online learning experience. A fun and engaging learning experience is also important because, in online learning, it is difficult to provide learners with appropriate feedback about their learning success (Kearns, 2012; Kim et al., 2005). By using elements such as badges or points that signal learning progress (e.g., by highlighting how much learners have completed so far), educators can assist learners in improving their learning process by supporting them in continuing with their online training and by rewarding them based on their progress, instead of rewarding or punishing their success or failure according to the results of knowledge-based tests, which has negative effects on learning outcomes (Denny, 2013; Haaranen et al., 2014).

To make gamification meaningful, management educators should carefully consider the needs and interests of their management students and the specific contexts instead of randomly selecting and combining elements. For example, research suggests analyzing the conditions under which gamification works best and avoiding pre-existing patterns and designs of the elements (Bai et al., 2020; Dichev et al., 2020). Therefore, educators wishing to support their students in online trainings should carefully consider the elements they select for their training programs; in turn, this can determine the success of a gamification concept (Fogel, 2015; Liu et al., 2017). Educators should also try to determine whether progress or achievements need to be supported. In line with this, creators of online training programs should develop their gamification concepts for training higher-level LGs and problem-solving skills by selecting gamification elements that will better support learners in engaging in their learning processes. Lastly, it is recommended that the factors that support emotional engagement be considered in order to assist learners in better regulating their learning experience.

Implications for Future Research

Following recommendations provided by Brutus et al. (2013), we discussed the internal validity, external validity, construct validity, and statistical conclusion validity for both research implications and future research.

To support internal validity, future studies could investigate different dynamics to analyze how effective they are for the constructs of our research model. In addition to points and badges, other elements, such as rankings or virtual goods, could be considered. Each gamification element enables a different dynamic; for example, ranking stirs competition whereas virtual goods encourage collaborative behavior in learning. Research should also analyze behavioral engagement and cognitive engagement to measure whether there are any effects of gamification. Both kinds of dynamics could be analyzed in future research to better understand the effects of gamification elements that support competition or collaboration (Santhanam et al., 2016).

External validity can be supported by considering different management-related topics and learners with different educational backgrounds, such as managers attending MBA courses online (Arbaugh, 2005). To support construct validity, and to better understand how constructs such as engagement, goal orientation, and satisfaction work with the learning process, future research could consider a more detailed and focused analysis of those constructs. Experiments can be used to analyze each construct in more detail. For example, regarding goal orientation, research should try to consider which kind of goal orientation supports learning performance and which designs of gamification concepts are more suitable to address intrinsic goal orientation and extrinsic goal orientation. This could also elicit research about tailored gamification concepts, such as supporting specific kinds of goal orientation (Klock et al., 2020). In terms of the underlying theory, (digital) nudging from behavioral economics (Thaler & Sunstein, 2009; Weinmann et al., 2016) could provide further grounding for inducing behavioral change related to learning through soft-paternalistic mechanisms. This is also true when considering the embedding of gamification into the scaffolding of learning processes to foster learning outcomes in a systematic way (Janson et al., 2020). Lastly, in support of statistical conclusion validity, we suggest that future research undertake a longitudinal analysis with a larger sample size to better understand the long-term effects of gamification on management education.

Limitations

Our study has some limitations. In terms of internal validity, we cannot rule out the possibility of the long-term effects of the gamification elements used in online learning environments. Our results highlight that emotional engagement mediates the relationship between gamification and problem-solving outcomes. However, these results can change over time, leading to findings other than those we saw in our one-time analysis. The fact that our study only examines how management students react toward gamification in the context of online education is likely to affect the generalizability of our results, limiting them to a subset of the target group. With our one-time measurement of emotional engagement and satisfaction for problem-solving outcomes, we cannot interpret the long-term effects of gamification on problem-solving outcomes. Lastly, our statistical measures resulted in three rejected hypotheses. The fact that our study only included a limited number of participants implies that the non-significant effects can change with a larger sample size.

Conclusion

Supporting management students in training their problem-solving skills still needs to be explored in more detail. Companies often lament that new management employees lack the skills to solve the complex problems of daily business. With our study, we demonstrate how teachers and trainers can construct gamified online training that supports management trainees in engaging emotionally with their learning and thereby strengthening their problem-solving skills. The results of our study demonstrate the importance of emotional engagement in online learning. In the present study, we demonstrate that relying on points and badges is not enough to support the training of problem-solving skills. We can demonstrate that emotional engagement is an important construct to support a positive impact on problem-solving outcomes. We support practitioners in deciding how to design their online teaching courses by using points and badges as one mechanism (among others) to bolster their students’ emotional engagement and satisfaction with the learning process.

Footnotes

Appendix

Measurement Instruments.

| Item | Item wording | Scale | Reference |
|---|---|---|---|
| IM1 | In a training like this, I prefer course material that really challenges me, so I can learn new things. | 7-point Likert scale (strongly disagree to strongly agree) | Pintrich et al. (1991) |
| IM2 | In a training like this, I prefer course material that arouses my curiosity, even if it is difficult to learn. | | |
| IM3 | The most satisfying thing for me in this training is trying to understand the content as thoroughly as possible. | | |
| IM4 | When I have the opportunity in this training, I choose course assignments that I can learn from even if I can’t answer all questions correctly. | | |
| EM1 | Getting many correct answers in this training is the most satisfying thing for me right now. | 7-point Likert scale (strongly disagree to strongly agree) | Pintrich et al. (1991) |
| EM2 | The most important thing for me right now is getting as many correct answers as possible. | | |
| EM3 | I want to get more correct answers in this training than most of the other students. | | |
| EM4 | I want to do well in this training because it is important to show my ability to my family, friends, employer, and others. | | |
| E1* | | 5-point Likert scale (strongly disagree to strongly agree) | Adapted from Hamari et al. (2016) |
| E2* | | | |
| E3 | I enjoyed the training. | | |
| E4 | The interaction with the training program was entertaining. | | |
| E5 | The interaction with the training was fun. | | |
| | How would you describe your learning process on the scale below? | | |
| Satis1 | Efficient vs. Inefficient | Bipolar (e.g., efficient vs. inefficient) | Gupta and Bostrom (2013) |
| Satis2 | Coordinated vs. Uncoordinated | | |
| Satis3 | Fair vs. Unfair | | |
| Satis4* | | | |
| Satis5 | Understandable vs. Confusing | | |
| TR1 | I feel confident doing business with a place that can only be reached online. | 7-point Likert scale (strongly disagree to strongly agree) | Adapted from van der Rhee et al. (2007) |
| TR2 | I can usually figure out new high-tech products and services without help from others. | | |
| TR3 | Technology gives people more control over their daily lives. | | |
| TR4 | In general, I am among the first in my circle of friends to acquire new technology when it appears. | | |
| TR5 | Technology makes me more efficient in my occupation. | | |

Note that items marked with an asterisk (E1, E2, and Satis4) were removed from the model because their loading was below 0.7.

Acknowledgements

We express our gratitude to the students of the University of Kassel who took part in this study. We would also like to thank Jennifer Hopp for her support in the development of the training and data collection. Furthermore, this research builds on a paper that was presented at the Academy of Management Annual Meeting 2019 in Boston (Schöbel et al., 2019a). The paper was also presented at the Management Education and Learning Writers Workshop at the same meeting. We thank the reviewers and attendees as well as the mentors of the Management Education and Learning Writers Workshop for their valuable feedback that helped us to improve our research and to write this paper. Last but not least, we thank our Associate Editor for her guidance as well as the anonymous reviewers for their constructive feedback and openness during the review process.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The second author acknowledges support from the basic research fund of the University of St. Gallen. This research received funding from the Swiss National Science Foundation (100013_192718).