Abstract

Recruitment for qualitative research can have an added level of complexity when working with vulnerable populations, as stigma and confidentiality may play an important role in participants’ willingness to engage. For example, people with HIV and other stigmatizing conditions may prefer to use a pseudonym to maintain confidentiality and may be discouraged from participation if asked to produce proof of diagnosis with a stigmatized condition. However, efforts to respect participant privacy and minimize burden while providing compensation for their time may leave studies vulnerable to infiltration by ineligible individuals who are motivated by the compensation. This article describes how efforts to respect the unique concerns of a vulnerable and stigmatized population, people who considered breastfeeding while living with HIV, resulted in the enrollment of ineligible individuals in an in-depth interview study. We describe how the breach was discovered and the subsequent actions taken to balance study integrity with the privacy needs of the target population. This example illuminates how scientific validity can be threatened by the enrollment of individuals misrepresenting themselves as members of a target population, and it underscores the importance of developing approaches that reach vulnerable target populations while respecting their privacy needs.

Keywords

Introduction

Qualitative research plays a crucial role in understanding the nuanced experiences of health in diverse populations. However, among vulnerable populations, stigma and privacy concerns can deter individuals from disclosing their experiences (Mendez et al., 2021; Schmid et al., 2024; Webber-Ritchey et al., 2021). Recruitment strategies such as community engagement, online recruitment, and options for anonymity can help participants feel comfortable taking part in research (Oudat & Bakas, 2023; Schmid et al., 2024). Online recruitment in particular has grown since the COVID-19 pandemic (Lei, 2024). Despite its increasing prevalence, there is still a notable gap in research on adapting these methods for vulnerable and stigmatized individuals (Mendez et al., 2021; Webber-Ritchey et al., 2021). Persons living with HIV (PLHIV) are one group for whom stigma and confidentiality concerns are common barriers to participation in clinical research. Without careful attention to the unique needs and concerns of stigmatized populations, studies may face challenges such as poor retention, ethical violations, or fraudulent enrollment (Mendez et al., 2021; Rivera-Goba et al., 2011). While online recruitment methods may reduce some of this group’s psychological and physical barriers to participation, they also present unique challenges, particularly in verifying participant identities while maintaining confidentiality. Existing literature has focused on general strategies for balancing anonymity and verification across various study populations, but few studies have adapted these frameworks to the specific needs of stigmatized groups (Mistry et al., 2024; Serrano et al., 2025). This gap highlights the need for innovative and ethical approaches to recruitment that protect participants while ensuring data integrity and the enrollment of eligible individuals (Serrano et al., 2025).

In this article, we describe our experience with fraudulent enrollment in a study targeting PLHIV using virtual recruitment for remote in-depth interviews. Fraudulent enrollees are those who intentionally misrepresent themselves in an effort to enroll in a study for which they are ineligible; this group is distinct from those who attempt to enroll in good faith and are accurately identified as ineligible. Here, we describe how fraudulent enrollments were discovered and confirmed, and how we subsequently adapted study procedures to integrate participant verification measures alongside trust-based recruitment methods to protect data integrity while continuing to prioritize participant accessibility and safety. Drawing from this experience, we offer generalizable guidance for navigating the ethical and methodological complexities of remote research recruitment with vulnerable and stigmatized populations—guidance that is increasingly critical as digital platforms become integral to health research.

Materials and Methods

Prior to 2023, Department of Health and Human Services (DHHS) guidelines recommended against breastfeeding in the context of maternal HIV due to the risk of HIV transmission to the infant through breastmilk. The guidelines were revised in January 2023 to encourage collaborative decision-making between patient and provider and to include breastfeeding as an acceptable infant feeding choice (Department of Health and Human Services, 2023). Given the lack of research on breastfeeding among PLHIV in the United States (U.S.), this qualitative study sought to comprehensively describe the infant feeding decision-making process of PLHIV and their preferences for provider communication. Eligible participants were 18 years or older, pregnant or within three years postpartum, living with HIV in the U.S. during their last pregnancy, and fluent in any language supported by the institution’s language services program. The U.S. residence requirement was essential because the study was designed to inform implementation of the change in U.S. infant feeding guidelines for perinatally HIV-exposed infants. Individuals were excluded if there was documented in utero transmission to the infant, since the relevant guidelines address decision-making that balances the benefits of breastfeeding against the potential risk of HIV transmission to an infant born exposed to HIV in utero but uninfected. We aimed to interview a total of 20–30 participants, or the number needed to reach thematic saturation, the point at which no new concepts were expected to emerge in interviews.

Recruitment and enrollment materials, including the screening script and informed consent summary, were developed by the study team. The materials were adapted based on feedback from the institution’s Center for AIDS Research (CFAR) Community Advisory Board (CAB) to improve inclusivity and accessibility. The CAB also provided guidance on the appropriate compensation amount and methods of compensation delivery. Participants were offered compensation in the form of a $50 ClinCard (Greenphire) prepaid debit card and could choose to receive either a physical card or a virtual card sent to their email address. Careful consideration was given to both the amount and format of compensation, with the goal of ensuring equal access to research across socioeconomic groups and respecting the time and stories that participants shared (Bierer et al., 2021). The $50 amount was chosen based on accepted standards that account for potential lost wages, costs of participating in research (e.g., childcare), and risks of participation (e.g., loss of confidentiality, discomfort in sharing personal and potentially upsetting experiences) while minimizing undue influence (Bierer et al., 2021; Gelinas et al., 2018). A prepaid debit card offers flexibility for participants, without requiring access to banking services (as compensation via check would) or requiring them to use the funds at a specific location (as a gift card to a specific vendor would). Although ClinCard generally requires that a Social Security number be entered when registering new participants, we requested and were granted a waiver from this requirement given the confidentiality concerns of our participants. Finally, although ClinCard requires a U.S. address for participant registration, we allowed participants to register using the team’s office address if they were uncomfortable providing their home address.

A screening questionnaire consisting of yes/no questions was developed in REDCap; potential participants completed it to indicate interest in the study (Harris et al., 2009, 2019). The questionnaire was programmed to notify respondents automatically if they were not eligible based on their responses. Those deemed potentially eligible were asked to provide a name or pseudonym and a phone number or email address at which a member of the study team could reach them. To protect participant privacy and address confidentiality concerns, participants were able to provide only as much personal information as they were comfortable sharing. The study team was particularly mindful of potential participants’ desire for confidentiality given the study topic and the history of punitive action taken against PLHIV in the U.S. who chose to breastfeed (Symington et al., 2022). The yes/no format was intended to minimize participant burden in completing screening, eliminate the need for additional identifying information (e.g., medical record number), and allow automated eligibility determination via REDCap. Participants who passed pre-screening were then contacted by a member of the study team.

Recruitment occurred through multiple channels, including direct referrals from providers, electronic distribution of recruitment materials, and referrals from participants. The study team aimed to enroll participants both locally and nationally so that a diverse range of experiences would be captured during interviews. Therefore, both in-person and virtual (via Zoom™) participation were offered. Study flyers were created with a QR code that led directly to the pre-screening form. These flyers were distributed through a listserv dedicated to parents living with HIV and their providers, connecting the study with a pre-curated population aligned with our target audience. The flyers were also posted on the social media accounts of a partner HIV advocacy organization, allowing broader distribution of recruitment materials to a population that was otherwise not engaged in discussions of infant feeding (The Well Project, n.d.). Enrolled participants were asked to share the screening link with others who might be interested.

Individuals who were determined to be eligible and were willing to participate then completed the informed consent process. During scheduling, participants were asked whether they would like to receive a copy of the informed consent form via email to read in advance of the informed consent discussion. Informed consent was obtained by the study interviewer before any study activities took place. Participants were given unlimited time to read the informed consent form and ask questions during the informed consent discussion. If the individual was still interested in participating, they were asked to electronically sign the consent form using the REDCap eConsent framework (Lawrence et al., 2020). At the time of signing, participants who had previously provided a pseudonym were asked for their name. The signed informed consent form was the only place where true participant names were required. All signed forms were stored within REDCap, allowing additional restrictions on access to this sensitive identifying information.

After providing informed consent, participants were interviewed by a member of the team and asked to fill out a demographic questionnaire. Although interviews could be conducted in person for local participants, all participants preferred to participate remotely over Zoom. As an additional measure for participant privacy and confidentiality, participants were not required to appear on camera during any part of the interview. This study was approved by the University of Pennsylvania’s Institutional Review Board (IRB) and the Children’s Hospital of Philadelphia’s IRB under a reliance agreement.

Results

Initial recruitment through professional networks and the listserv proceeded at a rate of one participant enrolled every 1–2 weeks. Most participants required several days to schedule an interview, often making time during lunch breaks or when someone was available to watch their children. The majority were interviewed with their cameras on (five of the first seven interviews), and the same proportion spoke by phone with a member of the study team before scheduling an interview. Interviews averaged 53 minutes (range 30–76 minutes). After seven interviews had been conducted over 12 weeks, the study flyer was posted on the social media accounts of an HIV advocacy organization. Six individuals completed the screening questionnaire the day the advertisement was posted, and an additional 19 responses were submitted over the next 10 days, some of which reported referral by other potential participants.

The participants who enrolled immediately after the social media posting preferred to communicate exclusively by email and were eager to schedule interviews as soon as possible. With multiple members of the study team conducting interviews, 10 interviews were completed over the course of one week. During a study team debrief, unusual patterns were identified that raised concern about the validity of the recent interviews. All of the recently enrolled participants interviewed with their cameras off. These interviews were generally, although not always, shorter than prior interviews (average of 39 minutes, range 23–50 minutes), and despite interviewer probing, the shorter interviews lacked detail about interactions with healthcare providers and experiences giving birth. Most of the new participants seemed unfamiliar with the U.S. healthcare system, including the recent guideline changes, free formula programs such as the Special Supplemental Nutrition Program for Women, Infants, and Children (WIC) (U.S. Department of Agriculture, Food and Nutrition Service, n.d.), and human milk banks. Interviewers also identified discrepancies in the personal information participants provided on the pre-screening, eligibility, and demographic questionnaires, such as number of children, current location, and age. While only one of the first seven participants had declined to provide her address for ClinCard registration, six of the ten new participants did not provide an address. Finally, multiple participants contacted the team about problems accessing their online compensation because they could not recall the U.S. addresses or dates of birth they had provided during registration.

Taken together, these issues raised concern that some enrollees were misrepresenting themselves. The REDCap eConsent framework had collected the IP addresses of all signatories during the informed consent process (Lawrence et al., 2020). Because an IP address can be mapped to an approximate geographic location, the team was able to determine that, of the ten newly enrolled participants, six had IP addresses based in Nigeria and three had used a virtual private network (VPN) that masked their location. The remaining participant was located in the U.S. Although the study team could not verify the location of individuals who had completed only the screening form and had not yet consented, many reported having been referred by individuals with Nigerian IP addresses, raising concerns about their eligibility as well. Because of concern that ineligible participants were enrolling in the study, the remaining scheduled interviews were postponed and recruitment was put on hold. The study team consulted with the IRB, sought advice from fellow researchers who had experienced fraudulent enrollment, and consulted further with PLHIV to better understand how to balance the need for eligibility verification with this vulnerable population’s heightened need to maintain privacy.

Several revisions were then made to the initial recruitment methods. The most substantial change was to the content of the initial screening questionnaire: the yes/no questions were replaced with open-ended questions, such as the infant’s place of birth and the participant’s current zip code. Potential participants were also asked to provide both an email address and a phone number rather than one or the other. Provision of a phone number allowed the study team to check U.S. residency via carrier lookup if needed, while participants could still maintain their privacy by declining permission for the study team to contact them by phone. We adjusted REDCap settings to automatically collect the individual’s IP address at the time of screening rather than at consent, providing an additional and earlier means of verifying location. Potentially eligible participants were then invited to speak with a researcher in a pre-consent conversation intended both to build rapport before the interview and to verify eligibility.

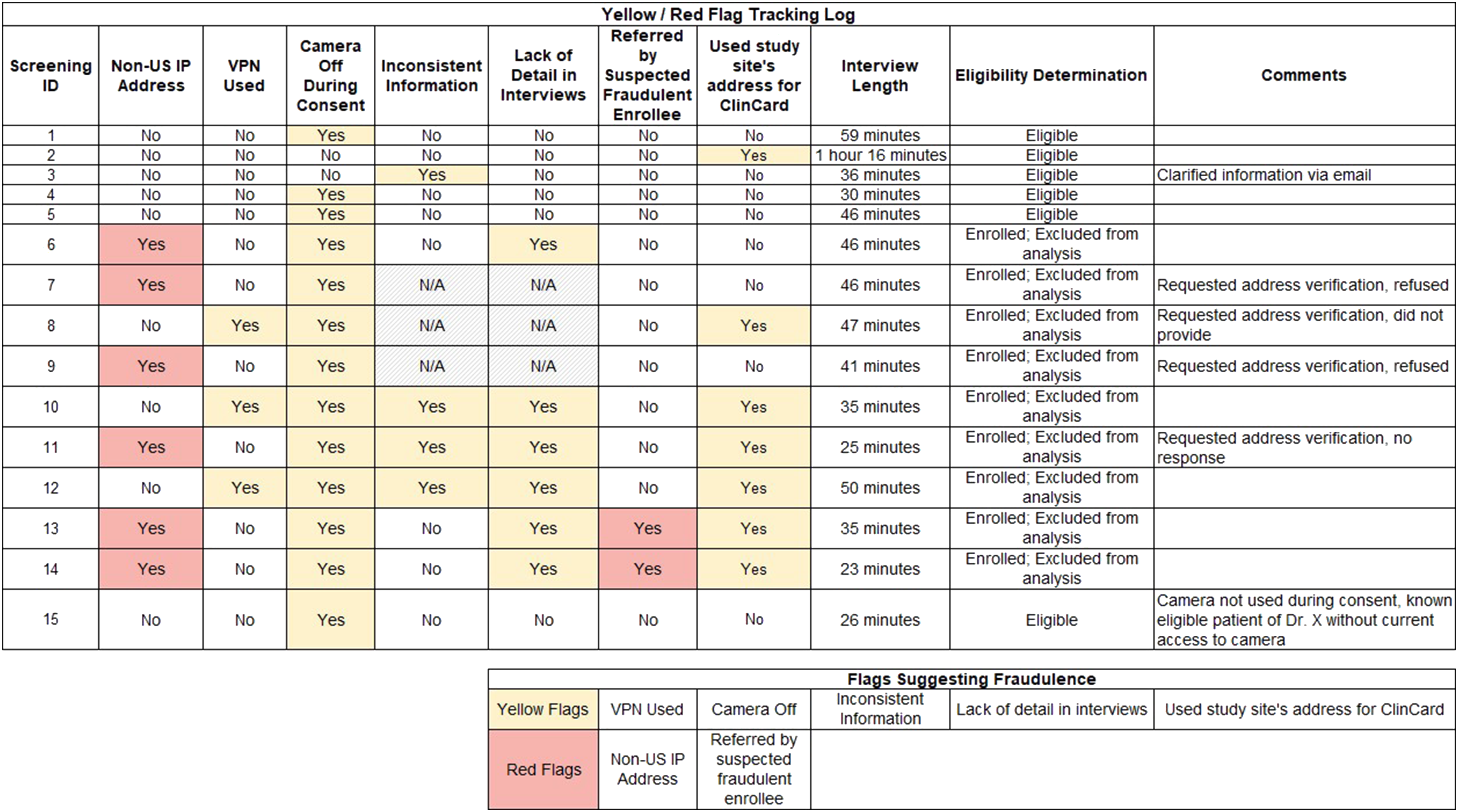

To ensure consistency between team members in how we approached confirmation of eligibility, we developed a “flag” system through which all members evaluated potential future participants. Some factors were considered immediately disqualifying, signaling a “red flag” (e.g., a non-U.S. IP address), and others indicated a need for additional verification, signaling a “yellow flag” (e.g., use of a VPN). Revised enrollment procedures included the ability to request additional information, such as proof of U.S. address and confirmation of HIV or parental status for participants who raised “yellow flags.” Other “yellow flags” included apparent inconsistencies between answers given on the screening questionnaire and answers given to similar questions during informal conversation with the interviewer. This system allowed us to streamline enrollment of individuals who were considered “green flags,” such as women referred directly from known HIV providers, while increasing confidence in the eligibility of other potential participants.
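To make the triage logic concrete, the following is a minimal Python sketch of one way the flag tiers described above could be encoded, for example in a shared screening log. It is an illustration, not the study team’s procedure: in the study, flags were reviewed by team members rather than scored automatically, and final eligibility rested on the team’s judgment. The sign names, the `Candidate` and `triage` names, and the assignment of each sign to a tier are hypothetical assumptions; only the examples of a non-U.S. IP address (red), VPN use (yellow), and inconsistent answers (yellow) come directly from the text.

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    GREEN = "green"    # streamline enrollment
    YELLOW = "yellow"  # request additional verification before enrolling
    RED = "red"        # immediately disqualifying


# Hypothetical sign names; the tier for each is an assumption for illustration.
RED_SIGNS = {"non_us_ip"}
YELLOW_SIGNS = {
    "vpn_used",
    "inconsistent_information",
    "referred_by_suspected_enrollee",
    "used_study_site_address",
}
# Assumption: context-only signs are recorded for the team debrief but never
# change the tier on their own (e.g., camera off may reflect self-protection).
CONTEXT_SIGNS = {"camera_off", "lack_of_detail", "short_interview"}


@dataclass
class Candidate:
    candidate_id: str
    signs: set[str] = field(default_factory=set)
    verification_passed: bool = False  # e.g., proof of U.S. address provided


def triage(c: Candidate) -> tuple[Tier, list[str]]:
    """Return a suggested tier plus context notes for human review.

    The tier is a prompt for the team's discussion, not a final decision.
    """
    unknown = c.signs - RED_SIGNS - YELLOW_SIGNS - CONTEXT_SIGNS
    if unknown:
        raise ValueError(f"unrecognized signs: {sorted(unknown)}")

    notes = sorted(c.signs & CONTEXT_SIGNS)
    if c.signs & RED_SIGNS:
        # Additional verification never clears a red sign.
        return Tier.RED, notes
    if c.signs & YELLOW_SIGNS and not c.verification_passed:
        return Tier.YELLOW, notes
    return Tier.GREEN, notes


if __name__ == "__main__":
    tier, notes = triage(Candidate("P1", {"vpn_used", "camera_off"}))
    print(tier.value, notes)  # yellow ['camera_off']
```

The design choice worth noting is the separation of context-only signs: mirroring the caution that lack of detail or a short interview should not justify exclusion without other flags, these signs are surfaced as notes rather than escalating the tier, and successful verification can resolve a yellow tier but never a red one.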

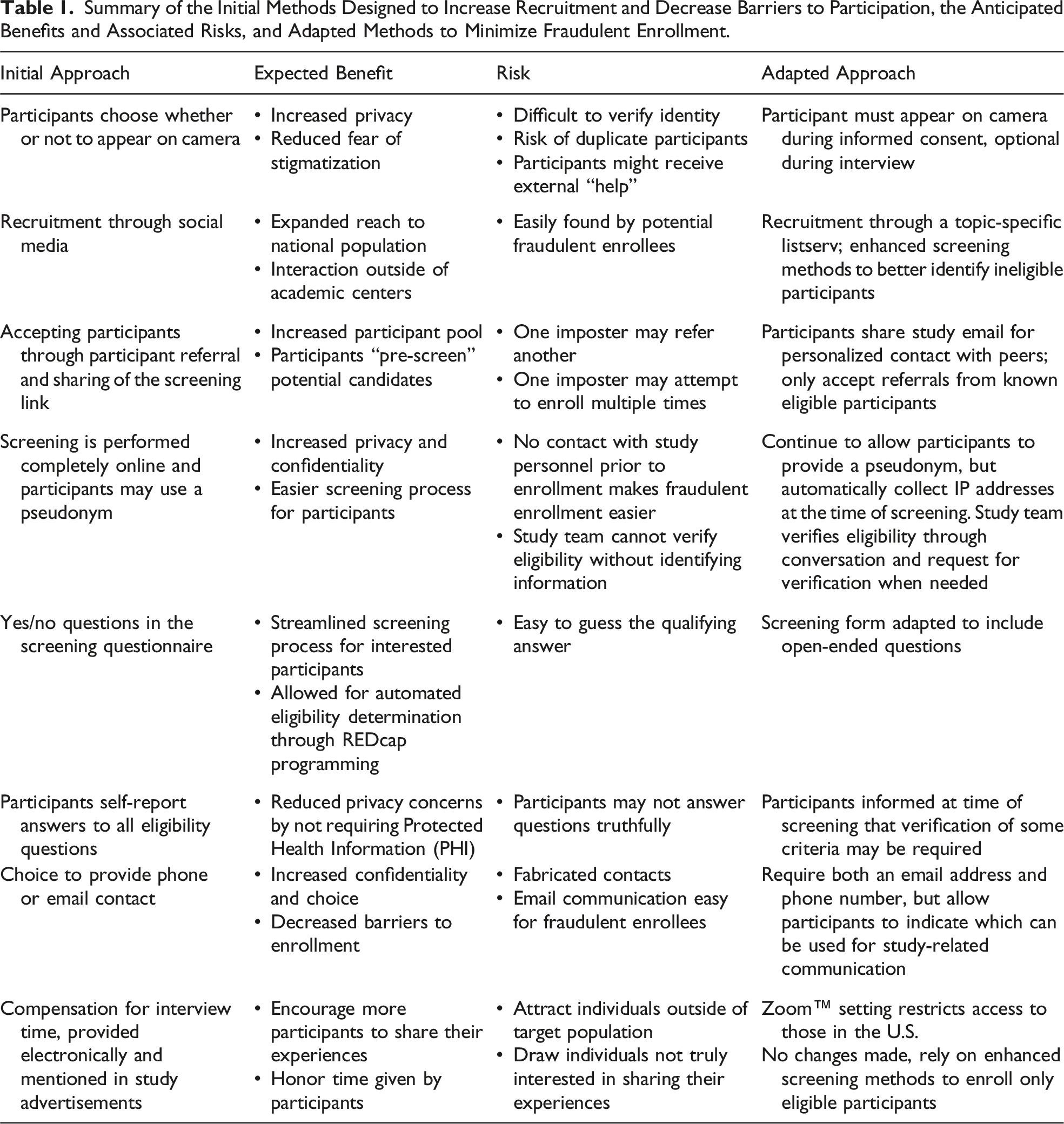

Summary of the Initial Methods Designed to Increase Recruitment and Decrease Barriers to Participation, the Anticipated Benefits and Associated Risks, and Adapted Methods to Minimize Fraudulent Enrollment.

Following the modifications to the screening and enrollment processes, previously screened individuals who had not yet enrolled were asked to rescreen and were told that rescreening was necessary because ineligible individuals had been found to have enrolled previously. Of the five individuals whose scheduled interviews had been put on hold and the eight individuals who had screened but had not yet been scheduled for interviews, none were willing to rescreen, and all were therefore considered ineligible. Only one of the 10 participants enrolled after the study advertisement was posted to social media was deemed truly eligible. Those who had completed interviews from foreign IP addresses were excluded from the final analysis, as were those who completed interviews from a VPN and whose eligibility could not be further confirmed. Further recruitment relied on professional networks to identify known women living with HIV who had considered breastfeeding, a process that was relatively time-consuming but that helped ensure the integrity of the data. Recruitment lasted 11 months in total, including a two-month pause to address fraudulent enrollment and six months of recruitment following the modifications to the screening process. In total, 28 interviews were conducted, 19 of which were included in data analysis and nine of which were excluded due to concerns about fraudulence. Figure 1 shows the flag tracking system used by the study team and includes all participants who were enrolled and subsequently excluded from analysis due to suspected fraud, as well as participants who were included in analysis despite the presence of a yellow flag.
Flag tracking system used by the study team. It includes all participants who were enrolled and subsequently deemed ineligible and excluded from analysis, and all participants who were included in analysis despite the presence of a yellow flag. Flags were generated based on patterns or behaviors that may indicate ineligibility or potential fraud. These include:

- Non-U.S. IP Address: A non-U.S. internet protocol (IP) address indicates possible residence outside the U.S., which violates study inclusion criteria.
- VPN Used: A virtual private network (VPN) masks true location and may reflect privacy concerns, institutional settings, or a non-U.S. residence.
- Camera Off: May reflect privacy preferences or an effort to conceal identity (e.g., repeated enrollments or searching for scripted answers).
- Inconsistent Information: Discrepancies in responses across the screening form, demographic questionnaire, and interview (e.g., age and number of children).
- Lack of Detail: Interviewer-identified flag for vague or implausible descriptions of key experiences (e.g., interactions with providers, in-hospital birthing experiences, and postnatal medication regimens). This may also be a sign of participant discomfort or mistrust and should not be used to exclude data without the presence of other flags.
- Referred by Suspected Fraudulent Enrollee: Snowball recruitment from a flagged participant raises concern about others in their network.
- Used Study Site’s Address for ClinCard: Because a U.S. address was a required field in the ClinCard registration system, participants were given the option of using the study site’s address. This may signal lack of U.S. residence, an intent to obscure personal information, or a privacy concern.
- Interview Length: Not a flag on its own; brevity may indicate limited relevant experience, but an individual should not be excluded based on interview length alone.
- Eligibility Determination: Final assessment based on an accumulation of red and yellow flags across all phases of enrollment.

Discussion

Screening and enrollment procedures for this project were intended to minimize stigma and decrease barriers to study participation. Unfortunately, these strategies created opportunities for ineligible participants to fraudulently enroll in the study, risking its scientific validity and decreasing opportunities for individuals in our target population to share their stories and experiences. Consequently, revisions to the screening and enrollment procedures needed to balance potential privacy and confidentiality concerns of PLHIV while still maintaining the integrity of the project. Through a combination of revised screening procedures and a careful retrospective consideration of “flags” that might indicate ineligibility of potential participants, the study team was able to successfully enroll the intended study population.

As researchers increasingly rely on electronic and remote methods, study teams must develop recruitment strategies that effectively identify eligible participants while remaining vigilant against fraudulent enrollees (Lei, 2024; Mendez et al., 2021; Oudat & Bakas, 2023). One approach is to standardize warning signs (what we refer to as “red flags” and “yellow flags”), an approach that is best employed before recruitment begins but that can still prove valuable later in the process. In the present project, warning signs were not identified until a substantial number of ineligible participants had already completed the study, costing the project both financial resources (compensation) and staff effort. However, once a standardized list of red and yellow flags was developed, it proved a valuable tool for screening subsequent potential participants.

Published accounts differ in when signs of fraudulent enrollment were first identified, ranging from early in an individual encounter to after multiple completed encounters revealed a suspicious pattern (Kumarasamy et al., 2024; Sharma et al., 2024). However, the nature of these signals is broadly similar across accounts. For instance, several researchers have identified a sudden and rapid surge of interest in their study as one of the first warning signs of fraudulent enrollment (Martino et al., 2024; Sefcik et al., 2023). Snowball recruitment, while potentially valuable, may magnify the risk of fraudulent enrollment, as one ineligible participant can refer and coach other ineligible participants (Sefcik et al., 2023). Additionally, fraudulent enrollees have tended to provide contradictory information across screening forms, video chat display names, and email communication (Martino et al., 2024; Sefcik et al., 2023; Sharma et al., 2024). These common recruitment pitfalls can serve as a starting point for study teams developing a list of warning signs but should be expanded based on the specific study design and target population.

While remaining alert to signs of fraudulent enrollment, researchers must also remain non-judgmental of legitimate participants, especially when working with stigmatized and vulnerable populations. Prior studies have emphasized the importance of building trust and minimizing intrusiveness when engaging stigmatized groups in research, particularly when breaches of confidentiality could reinforce or exacerbate existing harms (Mendez et al., 2021; Schmid et al., 2024). In the present study, recruitment efforts for PLHIV initially relied heavily on self-reported information to reduce barriers to participation. We intentionally avoided requesting documentation of a stigmatized condition (HIV) or other sensitive personal information such as medical records or home addresses, recognizing that such requests could raise concerns about privacy and safety. However, the reliance on self-reported data also introduced the risk of fraudulent enrollment, as this trust-based approach made it easier for ineligible individuals to claim eligibility and more difficult for the research team to verify the authenticity of participant information. This issue highlights the tension between respecting privacy concerns and safeguarding the validity of study data. Discomfort with interviewers is also common among vulnerable populations, as sensitive questions can evoke uncomfortable memories or past traumas for the participants (Webber-Ritchey et al., 2021). Furthermore, long-standing histories of mistrust of the research process have been cited as additional barriers to recruiting vulnerable populations (Ellard-Gray et al., 2015). These dynamics are particularly relevant for PLHIV, who often face intersecting stigmas related to race, ethnicity, gender identity, sexual orientation, and socioeconomic status (U.S. Statistics, 2025). These overlapping vulnerabilities likely deepen mistrust and intensify concerns about confidentiality and exploitation. 

Monetary compensation, while important for respecting participants’ time and labor, presents additional complexities. For populations historically excluded from research benefits, compensation is a means of ensuring fair participation and overcoming financial barriers to participation (Bierer et al., 2021). However, it can also inadvertently attract individuals outside the target population who may enroll solely for financial gain, posing a potential threat to the study’s validity. Moreover, while a discussion of fair compensation versus financial coercion is outside the scope of this paper and has been covered elsewhere, it is important to note that even seemingly small financial incentives may unduly influence vulnerable populations to participate in research when they otherwise would choose not to (Bierer et al., 2021; Gelinas et al., 2018). To effectively balance study validity and the unique concerns of the study population, study teams should consult with community stakeholders and individuals with lived experience as early in study design as possible and create thoughtful recruitment and enrollment plans targeted to address these concerns (Beames et al., 2021; Randolph et al., 2025; Serrano et al., 2025).

While there is no one-size-fits-all approach, our experience yielded strategies for detecting fraudulent enrollment that respect the needs of the target population and may be transferable to other qualitative research with vulnerable populations. To develop effective and respectful screening and enrollment methods, researchers must learn from their target population and tailor strategies to meet its needs. Approaches such as consulting people with lived experience, troubleshooting verification methods, creating a standard system of warning signs (e.g., red and yellow flags), and communicating frequently as a team helped ensure that our study maintained its scientific rigor while protecting vulnerable participants. Striking a balance between data integrity and respect for the unique needs of vulnerable and stigmatized populations is a necessary challenge, and one that researchers should carefully consider before starting a project.

Though other studies have described challenges with fraudulent enrollment and suggested strategies to avoid it, to our knowledge, ours is the first to highlight the ways in which such strategies must be adapted when working with vulnerable and stigmatized populations. For example, researchers who have previously dealt with fraudulent recruitment have suggested prioritizing closed recruitment systems (e.g., care centers, listservs, and charities) while exercising caution in using open systems (e.g., social media and snowball recruitment) (Giles et al., 2025; Mistry et al., 2024). For marginalized communities, however, disenfranchisement and stigma within the healthcare setting itself can be barriers to entering closed recruitment systems. Therefore, relying on such systems may result in unsuccessful recruitment or further marginalization of the study population (Boyd et al., 2023). Similarly, while Mistry et al. (2024) recommend requiring participants to remain on camera throughout interviews or focus groups to deter fraudulent enrollment, this approach may be misaligned with some study populations; prior research suggests that reluctance to appear on camera can be a form of self-protection rather than deception, particularly among individuals navigating stigma (Self, 2021). As such, we limited the on-camera requirement to the informed consent process to prioritize participant comfort and autonomy during the interview.

Ultimately, the infiltration of fraudulent participants need not prevent the sharing of research findings; rather, it should be included in the study narrative as part of a realistic account of the data collection process, allowing readers to judge the trustworthiness of the final product. In this study, several individuals who were later determined to be ineligible were able to enroll in the study and complete the interview process, producing data that could not be used to address the research question. This posed an additional challenge, as excluding data from analysis has the potential to introduce, or appear to introduce, bias. Therefore, if qualitative data are excluded from analysis, it is imperative that the researchers have a sound justification that can be clearly articulated in resulting manuscripts. This is doubly important when working with marginalized and stigmatized populations, as choosing to exclude an individual’s data could reinforce that marginalization. While existing reporting standards include categories under which an issue like this could fall, to our knowledge none specifically address fraudulent enrollment or the exclusion of data from analysis after collection. For example, the Standards for Reporting Qualitative Research includes the category of “data processing,” which is described as “methods for processing data prior to and during analysis, including transcription, data entry, data management and security, verification of data integrity, data coding, and anonymization/de-identification of excerpts” (O’Brien et al., 2014). The challenge of fraudulent enrollment could be conceived as a data integrity problem and reported as such in manuscripts. However, the reporting of future qualitative work would be strengthened if reporting standards specifically called for reporting the results of data validity checks, including whether and why any data were ultimately excluded from analysis.

Conclusion

This study highlights the complexities of working with a vulnerable population in an era where the remote conduct of clinical research is increasingly common. The widespread use of remote enrollment provides significant benefits to study teams, while also making it easier than ever for ineligible individuals to attempt to participate. To address these challenges, we adapted our initial approaches to include more stringent screening while still remaining cognizant of participants’ privacy and confidentiality concerns.

Given the privacy concerns often associated with vulnerable populations and the increasing risk of fraudulent study participants, it is vital that future studies employing online methods adopt strategies tailored to their target group, giving careful consideration early on to the balance between ensuring scientific integrity and respecting the unique concerns of vulnerable populations. Without appropriate measures, particularly during the screening and enrollment stages, studies risk compromising data integrity. Moreover, as online platforms play a growing role in research, it is imperative to address these challenges proactively so that they do not undermine the integrity of research in the field.

Footnotes

Acknowledgments

We thank the study participants for their contributions to this work and colleagues who provided advice and guidance based on their own experiences after we discovered the enrollment of individuals who were not eligible for study participation.

Ethical Considerations

This study was approved by the University of Pennsylvania Institutional Review Board (UPenn IRB) (study number 854293). The Children’s Hospital of Philadelphia Institutional Review Board operated under a reliance agreement with the UPenn IRB. All participants provided written informed consent.

Author Contributions

Noelle Burwell: conceptualization, methodology, and writing—original draft; Chelsea Mbakop: conceptualization, methodology, and writing—original draft; Kaleb Branch: conceptualization and writing—original draft; Elizabeth D. Lowenthal: Conceptualization, methodology, supervision, and writing—review and editing; Rebecca Clark: conceptualization, methodology, funding acquisition, supervision, and writing—review and editing; Kira J. Nightingale: conceptualization, methodology, project administration, supervision, and writing—review and editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a pilot grant from the Penn Center for AIDS Research (CFAR), an NIH-funded program (grant number: P30 AI 045008).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.