Abstract

While health services are expected to have public involvement in service (re)design, there is a dearth of evaluation of outcomes to inform policy and practice. There are major gaps in understanding why outcome evaluation is under-utilised. The aims of this interpretive descriptive study were to explore researcher participants’ experiences of and/or attitudes towards evaluating health service outcomes from public involvement in health service design in high-income countries. Additionally, the aims were to explore barriers and enablers of evaluation, and reasons for the use of evaluation tools or frameworks. Semi-structured interviews (n = 13) were conducted with researchers of published studies where the public was involved in designing health services. Using framework analysis, four themes were developed that captured participants’ experiences: Public involvement is hard – evaluation is harder; power, a diversity of agendas, and the invisible public; practical and methodological challenges; and genuineness and authenticity matter. Evaluation is driven by the requirements of stakeholders, including decision-makers, funding bodies, researchers, and academics, and evaluation tools are rarely used. The public is largely absent from the outcome evaluation agenda. There is a lack of commitment and clarity of purpose of public involvement and its evaluation. Outcome evaluation must be multi-layered and localised, reflect the purpose of public involvement and what constitutes success (and to whom), and use the most appropriate methods. Multi-level supports should include increased resources, such as funding, time, and expertise. Without improved evaluation, outcomes of investment in public involvement in health service design/redesign remain unknown.

Introduction

This study explores researchers’ experiences of and/or attitudes towards evaluating health service outcomes from public involvement in health service design in high-income countries. Barriers and enablers of evaluation and reasons for the use of evaluation tools or frameworks are investigated. Public involvement in health service design/redesign is expected, and in some countries mandated (Kenny et al., 2013). This has resulted in what Williams et al. (2020, p. 1) described as ‘cobiquity’: a range of ‘co’ words such as co-production and co-design. In this study, public involvement refers to any interaction between the public and employees of a health service or research institution to design or redesign health services.

Public involvement in health service design is enacted in a multitude of ways, from ad hoc client feedback to health professionals, to academic research projects. Public involvement occurs in a wide range of disciplines and settings, emerging from disparate social movements, policies, and contexts. Multiple models and typologies of public involvement exist, with frameworks or hierarchies outlining levels of public involvement, the activity of focus, and/or the degree of power held by participants. For example, the IAP2 spectrum proposed by the International Association for Public Participation (2017) uses a continuum of levels of engagement, emphasising the goals and commitment to the public required at each level. From lowest to highest, the levels of engagement are inform, consult, involve, collaborate, and empower.

There is a strong drive for public involvement in health service design for democratic and technocratic reasons (Edelman & Barron, 2016; Staley, 2015). Democratic arguments centre on people’s right to participate in decisions that affect them and the intrinsic value of public involvement (Abelson & Gauvin, 2006; Conklin et al., 2015). From a technocratic perspective, designing health services with members of the public draws on lived experience expertise to inform decision-making. Potential benefits include new or improved health services (Bombard et al., 2018; Mockford et al., 2012) that may be more accessible, acceptable, and effective (George et al., 2015), while increasing health service accountability (Fredriksson & Tritter, 2017; Madden & Speed, 2017). Public participants may experience personal and social growth by gaining new knowledge and skills and establishing friendships and networks (Attree et al., 2011; Kenny et al., 2013). For health services and researchers, there may be improved understanding of the needs and perspectives of health service users to enhance work satisfaction and wellbeing (Clarke et al., 2017).

Despite the potential benefits, public involvement activities are resource intensive for health services and public participants. Both want to see their efforts make a valuable difference that justifies the investment (Crocker et al., 2017). However, evaluation of public involvement typically focuses on the process of public involvement rather than outcomes (Boivin et al., 2018). There is a major gap in outcome evaluation, with little evidence of the tangible difference public involvement makes or of approaches to evaluation for different situations. In our recent systematic review (Lloyd et al., 2021), a range of outcomes from public involvement were documented. However, evaluations of health service outcomes were reported in fewer than half of the studies. Study quality, design, and reporting were poor, with a paucity of studies evaluating longer-term health service outcomes.

Authors speculate that evaluating outcomes from public involvement is too difficult or that public involvement is so intrinsically valuable that evidence of outcomes is not required (Snape et al., 2014b). However, this is highly problematic given significant investments in public involvement at personal, organisational, and fiscal levels. In health research, key stakeholders recognise the feasibility of outcome evaluation (Barber et al., 2011) and that it is needed (Crocker et al., 2017; Snape et al., 2014a), but the lack of evidence on health service outcomes from public involvement remains concerning. This study directly addresses a critical gap in knowledge on the evaluation of outcomes from public involvement in health service design. The focus on high-income countries is due to differences in health service design and delivery between low-, middle-, and high-income countries. The aims of this study were to explore researchers’ experiences of and/or attitudes towards evaluating health service outcomes from public involvement in health service design in high-income countries. Additionally, the aims were to explore barriers and enablers of evaluation, and reasons for the use of evaluation tools or frameworks.

Materials and Methods

Study Design

An interpretive description study design was employed (Thorne et al., 2004) within a pragmatist philosophical paradigm (Morgan, 2014). Interpretive description supports the interpretation of a phenomenon from the perspectives of those who experience it (Thorne et al., 1997). It is particularly suited to health research where knowledge should move beyond theory towards practical, real-world outcomes. Complexities are highlighted, with data analysis oriented towards these complexities to produce understandings useful to the discipline (Thorne et al., 2004). The knowledge generated from this study was intended to fill a major knowledge gap and assist participants, policymakers, researchers, and health service staff in improving the evaluation of health service outcomes from public involvement.

Sampling Procedure

Researchers who were corresponding authors of studies published between 2015 and 2019 on public involvement in high-income countries were identified from our recent systematic review (Lloyd et al., 2021) and were invited to participate in the study by email (n = 51). Recruitment was purposive (Bryman, 2012) to ensure prospective participants had in-depth knowledge and experience in research which involved members of the public in designing or redesigning health services. A total of 13 participants were recruited, who were not known to us prior to the study.

Data Collection

An interview guide (Supplementary material) was developed from the systematic review (Lloyd et al., 2021) and refined after piloting with a researcher with relevant experience who was not part of the study. Semi-structured interviews lasted between 34 and 79 minutes (mean of 47 minutes) and were conducted by one author (NL) via Zoom videoconferencing between October 2020 and January 2021. Field notes were made after each interview. As data were collected, elements of data saturation (Saunders et al., 2018) and meaning saturation (Hennink et al., 2017) informed when sufficient data had been obtained. The team considered the data from the 13 initial participants and agreed that the perspectives and understandings captured provided deep and meaningful insights into the research question, so recruitment ceased (Thorne, 2020).

Interviews were audio-recorded with consent and transcribed verbatim by one author (NL). Participants were emailed de-identified transcripts and were asked to edit, retract, clarify, or add information to improve the quality and credibility of data and potentially add further depth and meaning (Birt et al., 2016; Hagens et al., 2009). Not all participants responded, and some only confirmed their approval of the transcript. Several made minor corrections to typographical or grammatical errors. No data were retracted. One participant clarified and added further detail about how the findings of their study were shared with the public. Others provided suggestions to further de-identify data.

Data Analysis

Data were analysed using the framework method, a form of thematic analysis (Gale et al., 2013), supported by NVivo 12 for Mac software (QSR International Pty Ltd., 2021). The five stages of the Framework Method described by Ritchie and Spencer (1994) were followed: familiarisation, identifying an initial framework, indexing, charting data into the framework matrix, and mapping and interpretation. The analytical process was not linear, and we moved back and forth between stages (Smith & Firth, 2011) with reflexive team discussions until there was clarity and agreement on the final themes.

Ethics

Ethics approval for this study was given by the La Trobe University Human Research Ethics Committee, approval number HEC20367. Participants completed a Participant Information Statement and Consent Form, and verbal consent (including to audio-record) was confirmed at interview commencement.

Findings

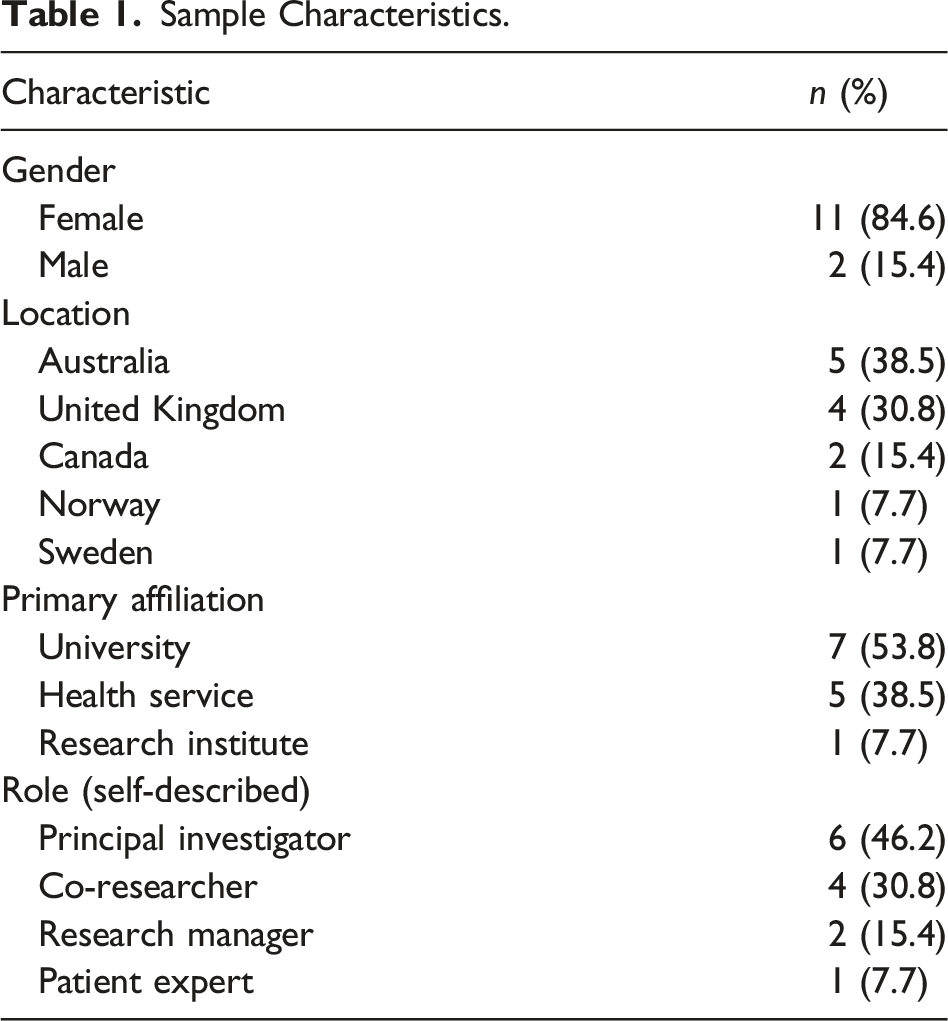

Sample Characteristics.

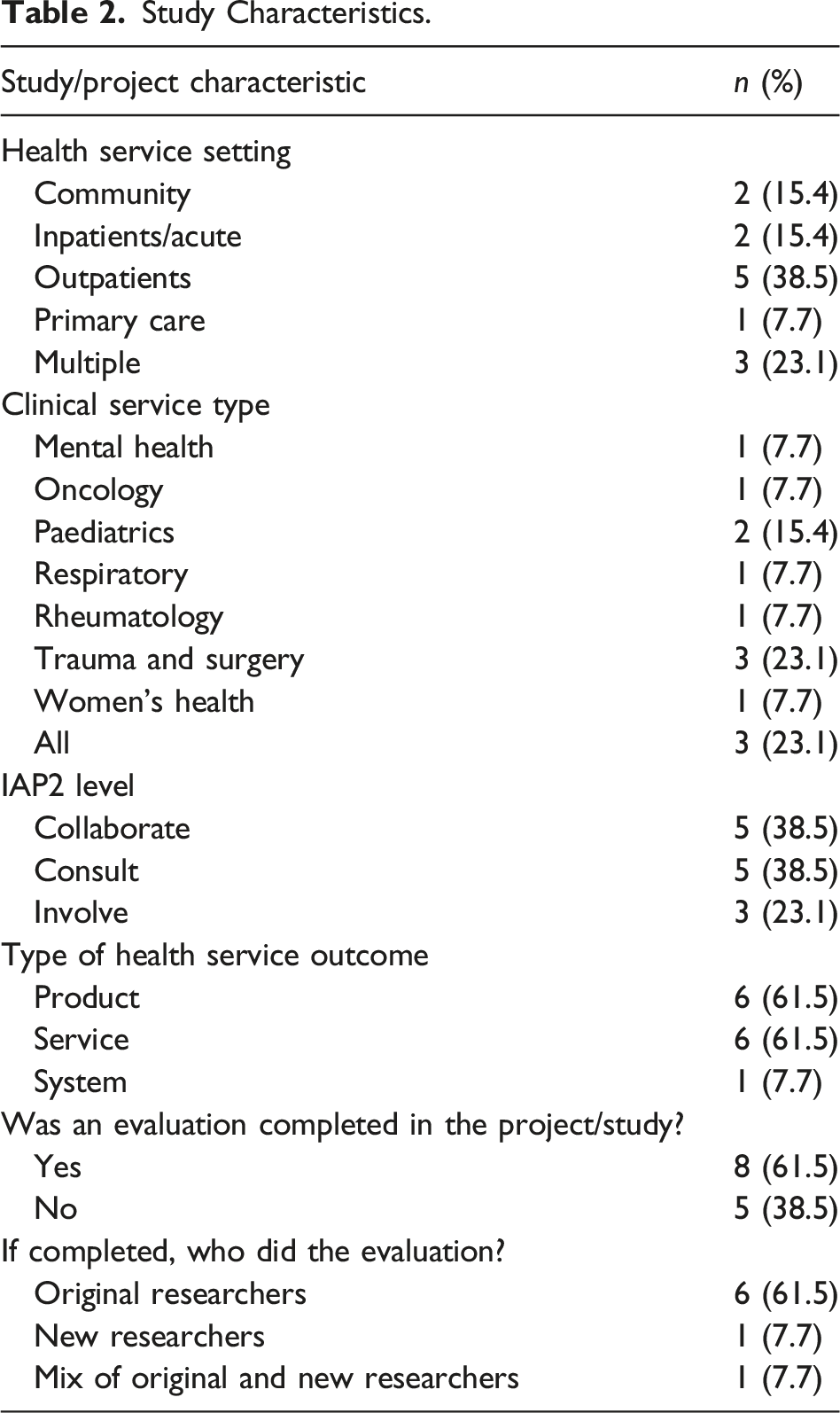

Study Characteristics.

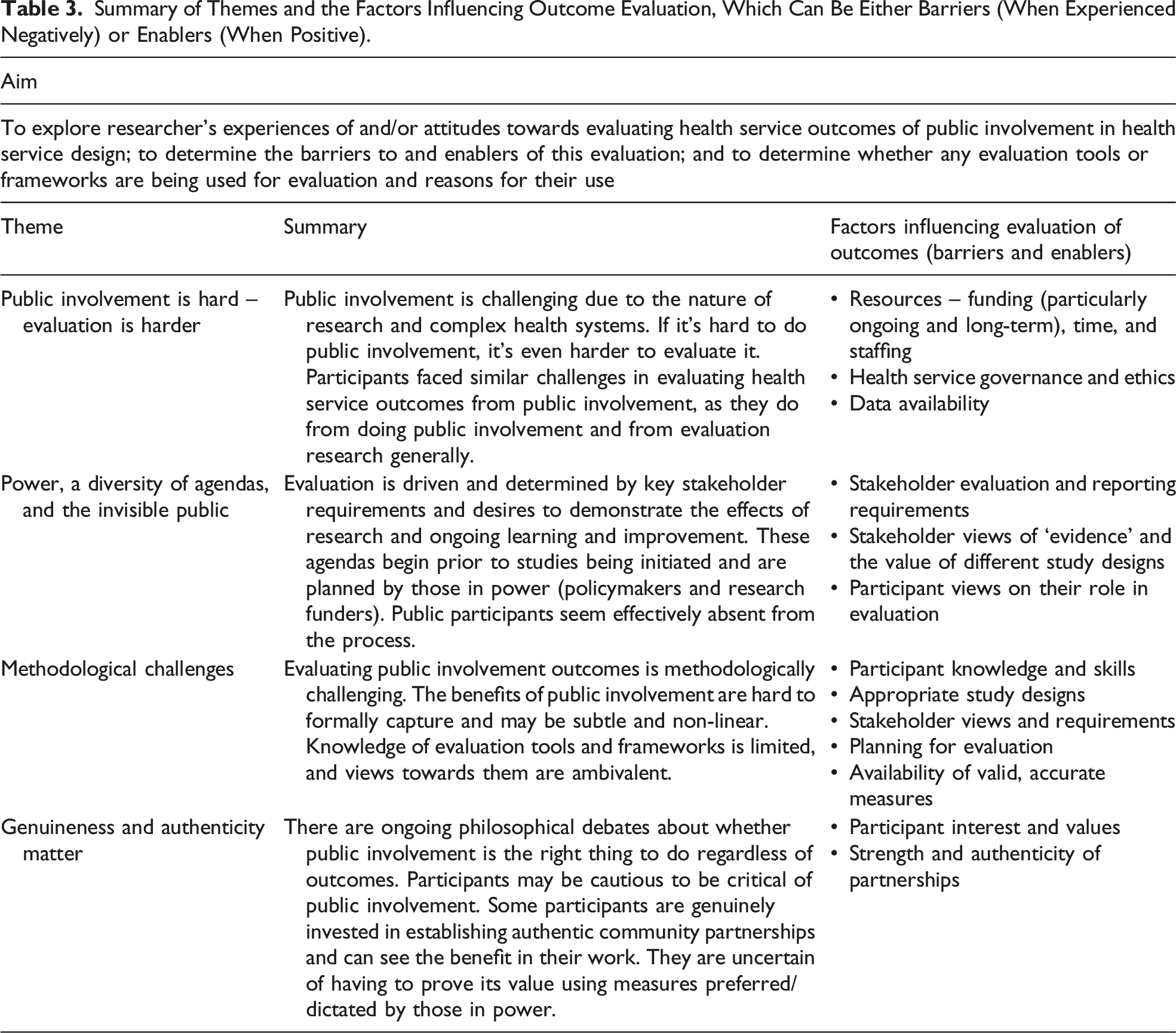

Summary of Themes and the Factors Influencing Outcome Evaluation, Which Can Be Either Barriers (When Experienced Negatively) or Enablers (When Positive).

Public Involvement Is Hard – Evaluation Is Harder

The challenges of evaluating outcomes of public involvement reflected the challenges of doing public involvement, research, and evaluation more generally. Participants described how public involvement in healthcare settings is difficult and while they acknowledged the value of evaluation, they identified that leadership, resourcing, governance, ethics, and data availability were inherent challenges of health systems that had a major impact on their decisions to conduct outcome evaluation.

Leadership from health service managers was considered highly influential in the availability of resources and overcoming obstacles. Sandra recalled, ‘certainly we had a complete lack of interest from management, which was really unhelpful for this project. … if you haven’t got management on board, it is an uphill battle’. Conversely, supportive leaders enabled evaluation: ‘once you get somebody, kind of on-side, who is quite influential like that, then funding and resources tend to follow’ (Lauren).

Participants described difficulties in securing funding, which was often short term and secured in stages and from multiple sources. Rhiannon described it as ‘working on the smell of an oily rag’. Ashley explained the necessity to demonstrate success in multiple small projects as ‘stepping stones … to get the big money’. Several participants described running out of funding, meaning no resources could be directed towards evaluation.

Many participants reported inadequate time to determine what public involvement achieved, especially for longer-term benefits such as improved research culture and staff attitudes. One participant spoke about seeing changes several years after their one-year project had finished which they couldn’t formally capture in an evaluation: ‘it takes so long for these things to filter down, probably not much would have improved by the end’, but they noted, ‘the attitudes have changed amongst a lot of the clinicians towards patient involvement’ (Sandra). The time required for public involvement and outcome evaluation was not always acknowledged or valued by health services or academic institutions.

Participants reported that staff turnover created delays and sometimes changed the focus of projects. New staff didn’t yet have community or health service connections, which made completing and reporting evaluation findings more challenging. Renee described the challenges of sharing evaluation findings with the community: ‘There are a few barriers like, [Researcher] getting sick, and [Researcher] retiring, and me moving away! You know just those human factors you know, that get in the way of really having a full focus on those kind of processes’.

Staff turnover limited what Isabel described as the ‘sustainability of corporate knowledge’. Newer staff had not realised that services had public involvement in their design and the outcomes were harder to appreciate or formally evaluate.

Participants described health services as complex systems which are constantly changing yet, paradoxically, are difficult to enact change within. This complexity created barriers in evaluating public involvement. Some participants described delays in managerial approval of resources for evaluation. Slow or non-existent progress could result in reduced engagement from public members over time, before projects reached the evaluation stage, as Sandra experienced: ‘[patient group] attendance reduced because they were being demoralized’.

Ethics approval processes for public involvement projects and evaluation were arduous and time-consuming, and for Sarah, they did not genuinely seem to be about the risk to community members: ‘A lot of the things that would make or break your relationship with community are not something that an ethical review board knows about or cares about … [it] just takes so long! Like you have to get a person in accounts at a hospital to sign off on something – this doesn’t even involve like money, or your time!’

Access to data within health services influenced evaluation. Evaluation was often based on existing health service data, such as clinic wait times: ‘we evaluated what we could in terms of what data we were already collecting’ (Sandra). This enabled before and after comparisons. Other data which might have been useful for evaluation was either too challenging or too time-consuming to collect or wasn’t thought of at the research design stage: ‘We didn’t think of that as a thing to evaluate’ (Sandra).

Power, a Diversity of Agendas, and the Invisible Public

What evaluation was completed for projects was driven by several factors: stakeholder requirements, the need to demonstrate the effects of the research, and/or the desire to learn whether something worked (and why, or why not). Participants identified key stakeholders as policymakers, funding bodies, academics, and researchers. These stakeholders drove the evaluation agenda, determining what was evaluated and whether the required resources were available. However, stakeholders had different priorities, and this created tension and barriers around what was evaluated and how evaluations were conducted. Ashley explained: ‘And from whose perspective, right? … what outcomes do we need to influence who? Am I doing this to … convince the physicians, or do I need to do it to convince the [patient population group], or the hospital administrators to keep these programs and put them in place …?’

Policymakers, governments, and other decision-makers (in addition to funders) were seen as the holders of power and often directed the type of evaluation. Participants indicated quantification or measurement of outcomes and cost-effectiveness (i.e. saving money) were most valued by decision-makers, so they attempted to include these in evaluations despite some reservations about the value: ‘Politicians are mostly focused on numbers, not the quality of the numbers’ (Jonas). In the Australian context, several participants spoke of the requirement to demonstrate benefits from public involvement as evidence towards meeting the mandated National Safety and Quality Health Service Standards (Australian Commission on Safety and Quality in Health Care, 2017) for accreditation. There were concerns about whether evaluation and reporting requirements set by governments were worthwhile. Rhiannon commented, ‘often you ended up writing white papers that go to [Health Department] and they sit there, and nobody ever uses them or reads them and then we just go and do something else’.

Funder requirements were targeted when designing studies and applying for funding. Adam explained, ‘if I’m writing it for a bid at the moment, they would probably expect some sort of evaluation, so I’m trying to shoe-horn that in, to keep funders happy’. Some participants included specific clinical indicators for funders, even if at odds with what they considered important to the communities they were working with. Renee explained: ‘The funding bodies would say “we want you to do a project on diabetes and we’ll see whether waist circumference changes after a couple of years” or whatever, and of course, on that indicator they maybe wouldn’t make a change in that amount of time, but there may be a change in something that the community thought was important, like social connectedness, or maybe something like, people did start socialising in groups that went for walks and exercising, and that even though it didn’t result in the physiological kind of outcome right away, was something that was really valued, and obviously could be seen as a stepping stone’.

The academic community, often referred to by participants as ‘the scientists’, are powerful stakeholders in determining what constitutes evidence. They were perceived to regard quantitative data more highly than qualitative, as Sandra remarked about qualitative data, ‘the scientific community don’t sort of tend to count that as proper evidence … they don’t believe it … they don’t pay attention to it’. However, many participants considered evaluation research questions were better answered by qualitative methods: ‘[it’s not] quantifiable, and traditional metrics for evaluating the impact of something don’t lend themselves that well’ (Lauren). Qualitative research was perceived as less likely to be published, but if there was ‘appetite in journals’ (Samantha), this would support evaluating outcomes from public involvement which may make it easier to include in the research program.

Participants held different opinions on evaluation, which led to different evaluation agendas. Some considered outcome evaluation to be distinct and separate from the evaluation of the developed intervention and thus ‘outside the scope of what I do … [it’s] research about research … which is not really what I do’ (Samantha). Conversely, participants’ interest in this area was considered an enabler of evaluation.

Public participants were notably absent from the discussion of key stakeholders and drivers of evaluation. An exception was a project with First Nations peoples, where participants had invested time understanding local priorities and what data collection was needed to evaluate whether priorities were met. In one study, there were challenges when public participants wanted to change the primary outcomes that were initially approved. Samantha explained, ‘we were fortunate that our funder was open to us changing our outcome after … it was hard work on the PI’s [Principal Investigator] behalf to convince the funder to do that’. There was one patient expert who participated in the current study. They had suggested collecting baseline measures they considered important to determine whether service improvements were successful, but clinicians were ‘too busy’ to do it and lacked support from their managers.

Methodological Challenges

Evaluating health service outcomes from public involvement was considered ‘really, really hard’ (Rhiannon), with several barriers relating to methodological issues.

All research participants described positive benefits of public involvement for health services, but outcomes were difficult to formally capture and reported impacts were often anecdotal. As Sandra noted, ‘the evaluation is sort of more like what people felt had improved because of it’. Isabel commented that it is ‘very complex, it’s not linear, and it’s multi-layered’, while others described the methodological challenges in evaluating outcomes that might be covert, indirect, and not immediately visible: ‘Some of these things I think are quite subtle … for instance, if patients say something and it changes someone’s way of thinking, you know, and it changes the direction of the project … sometimes you wouldn’t even know about it because unless someone tells you “Oh, I’ve changed my idea ’cause a patient said that”’ (Sandra).

Broader community impacts were observed by participants, such as community empowerment, improved cultural identity, enhanced trust in health services, and new or strengthened partnerships, but these were hard to formally capture or measure. These outcomes were particularly evident for participants who had completed public involvement research projects with First Nations populations. Participants were frustrated that meaningful outcomes for communities remained elusive to formal evaluation, either because they didn’t consider this type of evaluation or didn’t know how to do it. As Sarah explained: ‘So [people from target population group] who maybe were a bit isolated from community, for a variety of different reasons, found connections and support, and I guess love from the [First Nations] Elders that would come to community days, and all that sort of stuff, and they weren’t our outcomes in the research. We kick ourselves that we couldn’t include it, something like that, to share that story … but I don’t know how you can quantify that sort of stuff … Yeah you just can’t capture all that stuff and that’s what’s important to community, and so part of our research agenda going forward is … how can we start to capture that stuff’.

There were tensions regarding the selection of the most appropriate evaluation design. Randomised controlled trials were seen as ideal but too difficult in the setting. Mixed methods were commonly suggested because, as Rhiannon explained, they were often ‘pulling lots of bits of information together to triangulate it into some sort of story that makes sense’ and ‘to tell the story as well as provide the scientists with the numbers’. Several participants suggested needing team agreement on the definition of success and how it should be measured. As Isabel explained: ‘We can debate for hours about how to define impact, how to measure impact, but if there was some agreement to say, well this is what as a team, or as a health service, or whoever, we would deem x or y as being an impact by having consumers involved … then you can set up how to measure it’.

Some participants described concerns about the accuracy of measures, such as patient satisfaction, commonly used to determine whether health services improved due to public involvement. For example, one participant compared patient satisfaction survey scores between old and new models of care and found they were similar, even though they considered the new model to be superior. Sarah felt this was an issue with the survey method: ‘people in the two different services actually had very similar answers even though their experience, we knew, was very different. But part of it was that they didn’t necessarily know what they didn’t have access to’.

Participants identified that knowledge and skills were barriers to outcome evaluation, with some participants lacking awareness of this type of evaluation. Many participants reported spending inadequate time considering the expected impact of public involvement and how to capture it. While economic analysis was viewed as important for decision-makers, participants were ambivalent about its value and indicated this evaluation would be deferred to others because of lack of expertise: ‘maybe a health economist could do some calculations’ (Eva). Amanda summed this up by saying ‘don’t ask me how I’d do it ’cause I don’t have a clue!’.

There was some knowledge of evaluation frameworks but limited awareness and experience of evaluation tools, with participants ambivalent about their potential value. Only one participant had knowledge of a specific tool, the Public and Patient Engagement Evaluation Tool (McMaster University, 2021), which they were trialling in an upcoming project. Several participants knew of the GRIPP2 reporting guidelines (Staniszewska et al., 2017), which they were either planning to use or had found useful as a guide to planning evaluation. Participants had varying views on evaluation tools and frameworks. While they might be ‘useful. So you can get comparable data and collect it systematically’ (Renee) and ‘helpful to begin to articulate things’ (Lauren), others were uncertain about the utility of evaluation tools: ‘I think that it would probably get used’ (Rhiannon). Time was a barrier to finding and using evaluation tools. Isabel explained: ‘It takes time, one to find a tool that’s going to work, there may not even be an appropriate tool in the area that you’re wanting to measure, so knowing what a good validated tool is, and being able to access it, and then to have it filled in a robust way, you know, we’re all very time poor’.

Michelle lamented, ‘I could spend my life looking for tools!’. Many participants remarked that if a suitable evaluation tool existed, it would be worth using. However, participants generally thought it was unlikely and ‘naïve’ that a ‘one-size-fits-all’ evaluation tool could be designed to encompass the evaluation needs of all public involvement projects (Isabel).

Genuineness and Authenticity Matter

An important factor for evaluation was whether participants seemed genuinely invested in public involvement and in establishing authentic relationships with the public.

Several participants supported the democratic argument that involvement is expected by the public and ‘the right thing to do’. It has become integrated into research practices and the culture of their society, and it would be considered unethical not to do it: ‘my country, people – we are a democracy and people are used to having their say’ (Eva). Participants experienced notable tension in balancing this philosophy of public involvement with the question of whether to evaluate the outcomes it achieves, particularly in discussions about economic analysis. They could appreciate why cost and benefits might be considered, especially when healthcare resources are limited. Ashley wondered what it would mean if public involvement wasn’t financially viable: ‘I guess the question that then comes, is if there’s a … net economic cost, and you do the big analysis and work that out, is it still the right thing to do? Like, I guess, you’d have to ask that question, but – and I would say, probably, well yes. That not everything has to make a profit!’

Some participants were wary of evaluating or scrutinising public involvement because of fears of what others would think, due to the democratic argument or the increasing requirement for public involvement. They indicated public involvement would be part of the research agenda regardless of what evaluation showed, and it was hard to challenge the status quo about public involvement regardless of their own personal views or experiences. As Michelle noted, ‘people are quite cautious about being critical of it as a process’. Another participant had a negative experience at a conference presenting an outcome evaluation framework they developed: ‘[I] had quite a backlash from people that it wasn’t something that I should be trying to evaluate or trying to articulate, that it should just be accepted, that it should just be done because it’s the right thing to do’ (Lauren).

Justifying public involvement was an important reason to evaluate outcomes for many participants who were genuinely invested in public involvement. Some participants targeted those in power, using evaluation to garner support for public involvement, because as Isabel remarked, ‘… some of those decision-makers will need to see data to believe it’. Others wanted to convince academics who were sceptical of public involvement, noting evaluation often has multiple agendas. Sarah explained, ‘One is getting a better service for the [target population group], right. And the other one is justifying, in a way, all our work, so that we’ll be taken seriously by the scientific community’. There was hope that evidence of positive outcomes could strengthen the argument for reimbursement for public participants.

Participants considered the level of public involvement used in their projects as a hierarchy. Some participants felt lower levels of engagement, such as informing and consulting, risked being tokenistic, or less genuine in practice, and there was speculation that level of involvement may be linked to magnitude of impact. One participant wondered what outcomes would be found when ‘box-ticking’, lower-level public involvement was evaluated. They questioned whether evaluation findings could be used as evidence against public involvement, rather than recognising it hadn’t been done well, or acknowledging ‘the purpose of involving and the transparency around that’ (Amanda): ‘Certainly in my experience, when you talk to health providers, and I did some research on this a long time ago, was that consultation with the public is actually more about, “well look we know what we’re going to do, we’re just going to go and tell the public, but we’ll consult with them”, as opposed to “well we really want to hear what the public has to say”, and whether that will affect how we subsequently impact, you know, subsequently change how we plan to do things’ (Amanda).

For those genuinely invested in public involvement, evaluation of outcomes was seen as critical for ongoing learning and improvement of public involvement practices: ‘Evaluation’s always important, if you want to learn from what you’re doing and be able to do it better’ (Renee). However, it was equally important to understand if and why an activity wasn’t successful. Amanda explained, ‘We need to know if something’s not working, we need to work out why isn’t working. So that we’re building on this knowledge base that can really help better practice …’. Evaluation created opportunities for sharing experiences and learnings. Sarah described how publications enabled knowledge sharing ‘… with other people outside the service, so if other communities or other hospitals or whatever, want to do this, what are the things they need to know, or that could help them not make the same mistakes we did’.

Discussion

This study addressed a major problem: outcomes from public involvement in health service design/redesign are rarely reported and there are major gaps in understanding why outcome evaluation is under-utilised (Lloyd et al., 2021). Despite increasing calls for evaluating the outcomes from public involvement in health service design (Boivin et al., 2018), there is a dearth of research seeking to capture these outcomes, and little is known about the challenges and enablers of evaluation.

Our study findings suggest that evaluation is important in demonstrating the value in investing in public involvement; however, challenges included under-resourcing (time, funding, and staffing), inadequate planning, lack of support from those in power (leaders, policymakers, and funders), participants’ attitudes, knowledge, and experience, lack of evaluation tools and measures, and competing stakeholder priorities on what evidence is most valued. As has been reported elsewhere (Snape et al., 2014b), some evaluation factors could be either enablers or barriers. Examples included resourcing, leadership and stakeholder support, and researchers’ attitudes and knowledge. Barriers and enablers to evaluation were similar to those for doing public involvement, both in this study and the literature (Ayton et al., 2022; Clarke et al., 2017; Ocloo et al., 2021; Snape et al., 2014b; Staniszewska et al., 2018). Complexity in enacting public involvement has been identified by others (Pagatpatan & Ward, 2017). If public involvement is complex to ‘do’, it is not surprising that its outcomes are complex to evaluate.

It is not clear how best to evaluate health service outcomes from public involvement in health service design, despite general agreement from participants in this study that it is important. A one-size-fits-all approach to evaluation will not be the solution, but there are some planning and design options suggested in this study, and in the literature, which offer potential. The need to ensure clarity about the purpose and aims of public involvement a priori and consequently evaluate whether these were met has been raised in the literature (Esmail et al., 2015). Our study confirmed that defining what constitutes ‘success’ and what is a meaningful outcome (and to whom) is crucial, an idea supported by others (Harris et al., 2018; Pagatpatan & Ward, 2017). Mixed methods designs might be more practical and valuable than quantitative designs. Evaluating and reporting any negative outcomes must be considered. Participants in this study spoke about personal sacrifices required for their research, and some authors suggest that negative impacts have received little attention to date (Russell et al., 2020).

Capturing, sharing, and disseminating learnings to build on the collective experience are critical. Several authors have agreed that the ‘narrative’ of public involvement can, as suggested in this study, provide valuable knowledge about what works to take forward in future work (Smith et al., 2022; Staley & Barron, 2019). Historically, many project evaluations are shared within health organisations or at conferences, rather than in academic publications (Donetto et al., 2014). Effort must be made to disseminate findings in ways that are readily locatable and utilised across organisations (Boylan et al., 2019).

Participants were generally less experienced and less certain about the role of evaluation frameworks and tools, and economic analyses. A range of tools and frameworks exists to evaluate outcomes from public involvement in health service design, and their number has grown in the past decade (Boivin et al., 2018). However, they tend to be used mainly by those who developed them (Greenhalgh et al., 2019), and only one participant in this study had knowledge of a specific evaluation framework. This raises questions about whether there is an issue with awareness of tools, or with their utility and adaptability. Greenhalgh et al. (2019, p. 785) concluded that a locally co-designed ‘menu of evidence-based resources’ might be more successful than an off-the-shelf framework to aid researchers in the process.

There have been increasing calls for economic analyses of public involvement activities (Clarke et al., 2017). Participants in this study generally agreed economic evaluations were highly valued by decision-makers and should be considered. However, they had limited experience and felt this type of evaluation required expertise outside their scope. This reinforces the need for additional resources, skills, and planning for economic evaluations to be incorporated in public involvement projects.

Further research is required to determine how best to overcome the multi-level barriers limiting the evaluation of health service outcomes from public involvement. Most of the barriers to evaluation raised in this study will not be overcome by individuals, but rather require a concerted effort across multiple levels of the system (Ocloo et al., 2021), including micro (individual, program, and service), meso (organisation), and macro (health system) levels. Drawing on theories to understand the health system as a complex adaptive system may aid this process.

In this study, participants were supportive of evaluating outcomes from public involvement in health service design, but challenges arose from working in a complex system with competing priorities and resource limitations. Participants strongly advocated for adequate organisational support and resourcing for public involvement and outcome evaluation. Health service and policy leaders and research funders have a role to play in driving the agenda and in providing resources. Structural barriers to overcome include the challenges created by the positivist-oriented research community, who are perceived to value specific types of research (quantitative designs, particularly the randomised controlled trial). Focusing only on these methods risks failing to capture other potential outcomes from public involvement, such as effects on power relations, empowerment of individuals and communities, and influences on research culture and agenda (Russell et al., 2020; Staley & Barron, 2019). Participants in this study shared views similar to those reported elsewhere (Smith et al., 2022) that academic journals should adopt processes which support the reporting of public involvement.

There are some synergies between studies which have explored barriers to conducting evaluations in health settings and this study, which may provide opportunities to extrapolate learnings. As an example, Huckel Schneider et al. (2016) explored barriers and facilitators in evaluating health policies and programs in Australia. The authors recommended that overcoming macro political and system-level factors may require a combination of what they refer to as carrots (incentives to build staff evaluation capacity), sticks (mandating evaluation requirements in specific circumstances), and sermons (evaluation expectations). They suggested these will flow down to meso (organisational) and micro (individual behaviour) levels by creating a strong evaluation culture. Improved organisational culture means conducting evaluation and sharing evaluation learnings as part of standard practice. Huckel Schneider et al. (2016) recommended that staff training to increase skills may improve evaluation culture, but also recognised that access to staff with highly specific evaluation expertise is necessary for complex evaluations. The authors (Huckel Schneider et al., 2016) recommended the use of evaluation tools, checklists, and cheat-sheets to assist in initial scoping and design of evaluations, and having high level champions and leaders to drive evaluation thinking and practice. These recommendations require further exploration to determine their applicability in evaluations of public involvement in health service design.

Our study has limitations. Participants were all from high-income countries. The state of knowledge of barriers and enablers to evaluation in low- and middle-income countries is unknown. Different findings may have resulted had participants come from other areas, and this is an opportunity for future research. Participants were authors identified through a systematic review. While participants had in-depth perspectives on the topic, the sampling approach resulted in a relatively narrow sample. Some participants were academic researchers and others were health service staff. While there was one patient expert participant, we did not specifically recruit public participants, nor did we include those responsible for commissioning projects, studies, and evaluations. A broader array of stakeholders may have different views if asked about this topic. Seeking their views is an important area for future research to more fully understand the challenges in evaluating public involvement in health service design.

Conclusion

Determining what difference public involvement in health service design makes is challenging. This study fills a major knowledge gap by exploring experienced individuals’ perceptions of the barriers to evaluation. There is a lack of commitment and clarity of purpose in evaluating outcomes of public involvement, differing and competing stakeholder agendas, insufficient resourcing, and practical and methodological challenges of evaluation. Multi-level and individualised project and study designs which acknowledge the complexity of the health service setting are necessary. Enhancing evaluation will require more than individual-level knowledge and skills; it will need organisational, policy, and academic community commitment.

Supplemental Material

Supplemental Material for Barriers and Enablers to Evaluating Outcomes From Public Involvement in Health Service Design: An Interpretive Description by Nicola Lloyd, Nerida Hyett, and Amanda Kenny in Qualitative Health Research

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical Statement

The study was approved by the La Trobe University Human Research Ethics Committee (approval number HEC20367). All participants provided written informed consent prior to enrolment in the study.

References
