Abstract

Background

A challenge to implementation is managing the adaptation-fidelity dilemma, that is, the balance between adopting an intervention with fidelity and assuring fit when it is transferred between contexts. A prior meta-analysis found that adapted interventions produce larger effects than novel and adopted interventions. This study attempts to replicate and expand previous findings.

Methods

Meta-analysis was used to compare effects across a whole population of Swedish outcome studies. Main categories and subcategories were explored.

Results

The 523 studies included adapted (22%), adopted (33%), and novel (45%) interventions. The largest effect was found for adapted followed by novel and adopted interventions. Interventions in the mental health setting showed the highest effects, followed by somatic healthcare and social services.

Conclusions

These results replicate and expand earlier findings. Results were stable across settings with the exception of social services. Consistent with a growing body of evidence, the results suggest that context is important when transferring interventions across settings.

The science of social intervention (Sundell & Olsson, 2017) is concerned with the systematic development and testing of intentional change strategies to promote health, prevent ill health, or maintain healthy developmental trajectories. The ultimate goal of providing social interventions is to impact societal health and wellbeing (Eichas et al., 2019; O’Connell et al., 2009) by targeting diverse populations across prevention level (i.e., universal, selective, indicated) and setting (e.g., social services, mental health). To bring the science of social intervention to scale for widespread public health gain, however, interventions first need to be developed, tested for their efficacy and effectiveness, and finally assessed for their readiness for broad dissemination and implementation, where a stakeholder (e.g., policy-maker, practitioner, researcher) interested in using an evidence-based intervention (EBI) makes the decision to put it to use in practice (i.e., scale-up; Aarons et al., 2017). Although several, somewhat varying, guidelines are available and in use for evaluating claims of intervention effectiveness (e.g., California Department of Social Services, 2006; Gottfredson et al., 2015), there is widespread support for the understanding that for an intervention to be deemed evidence-based, it must first be assessed for its effectiveness according to an accepted standard. Bringing the science of social intervention to scale through the dissemination and implementation of EBIs is, however, challenging (Dymnicki et al., 2017).

Underlying the idea that EBIs should be disseminated to achieve widespread public health impact is an expectation that the positive effects found in efficacy and effectiveness trials will be maintained when interventions are moved from their original setting to new settings. A consistent challenge to the transfer and implementation of interventions is the management of the adaptation and fidelity dilemma (von Thiele Schwarz et al., 2019). This refers to the balance between adopting and delivering an intervention with fidelity while also assuring the intervention fits the local context which may necessitate a certain level of adaptation to the intervention under consideration. The current literature acknowledges that adaptations can be needed when using an EBI while it also highlights the importance of implementing interventions with fidelity (Baumann et al., 2017; Rabin et al., 2018).

Fidelity refers to the degree to which a given intervention is implemented as intended by developers and in relation to previous intervention trials when interventions are transferred to new settings (Carroll et al., 2007; Hasson, 2010). A common argument is that positive effects can only be maintained if interventions are implemented with fidelity in their new setting (Carroll et al., 2007; Gottfredson et al., 2015). However, documented challenges exist to the maintenance of fidelity when transferring interventions across settings (Bonell et al., 2021) and there is a growing literature supporting the idea that planned adaptation is necessary when transferring interventions across settings to maintain effectiveness (Ferrer-Wreder et al., 2012; Moore et al., 2021). For a given stakeholder interested in using a given EBI the dilemma of adaptation versus fidelity needs to be resolved: adapt the EBI to the local context by making systematic changes in specific ways (e.g., add content, remove content, change delivery method) or adopt the EBI as described previously with fidelity.

When the focus of implementation of an EBI is to maintain fidelity (i.e., adoption), stakeholders are expected to deliver the EBI without diverging from instructions on how the intervention is to be delivered. This includes delivering the intervention in the same way (e.g., 30-min sessions), using the same delivery format and channel (e.g., group or individual setting), and with the same content as implemented in a prior setting (von Thiele Schwarz et al., 2019). What fidelity looks like may then differ depending on whether the EBI is manual based, where the delivery guidance is explicit and generally highly specified, or is a general practice or a broad standardized approach (e.g., Responsible Beverage Service training), where there may be general guidance or material available but no manual.

When an EBI is to be adapted to a new setting, the adaptations can vary widely across dose, length, content, format, setting, and target population (Escoffery et al., 2018; Moore et al., 2013). Target population adaptations are adaptations made when an EBI is implemented with a population for which it was not originally designed (Lee et al., 2008). For example, Ahmad et al. (2007) took a manualized eye movement desensitization and reprocessing treatment for adults and adapted the material to suit children. Format adaptations are adaptations made to the delivery of an EBI. For example, Carlbring et al. (2007) took a published self-help book and implemented the material in an online program. Pragmatic adaptations are adaptations to the session or material length. For example, Livheim et al. (2015) adapted a manualized ACT protocol to be given in 6 weeks rather than 8 weeks. Cultural adaptations are those where the EBI is adapted to fit the cultural norms of the target setting or population (Barrera et al., 2017; Resnicow et al., 2000). For example, Kling et al. (2010) developed a parent management training program in Sweden based on components in programs from the United States. However, they culturally adapted the program by removing time-outs, as this was not an accepted disciplinary approach in Sweden. Planned adaptation, however, should not be conflated with deviations from fidelity. When interventions undergo an adaptation process, they still need to be implemented with fidelity in order to achieve desired results.

Many social interventions in use today are locally developed (Bergström et al., 2022; Sundell et al., 2015). Thus, stakeholders have a third option available to them when approaching the provision of a social intervention: develop a novel intervention. Ultimately, the decision regarding which strategy to choose will rest on opportunities and barriers present within a given practice setting. An increased understanding of how these approaches impact client outcomes may help increase our understanding of how to transfer interventions across settings to achieve the widespread goal of impacting societal health and wellbeing. However, the available evidence supporting the approach to scale-up and spread—adapt an existing EBI to fit the local context, adopt an existing EBI with fidelity or develop a novel intervention—is limited.

To address the knowledge gap concerning the impact of these approaches to intervention delivery, we have previously conducted a meta-analysis of social interventions delivered in Sweden and Germany (Sundell et al., 2015). The findings across samples suggested that adapted, adopted, and novel interventions were all effective. However, results from the Swedish sample showed that adapted interventions were the most effective but made up only a small proportion of the total number of included studies (approx. 10%). In addition, compared to adapted interventions and novel interventions, the group of interventions adopted without any adaptation had the lowest average effect size. This result was stable when controlling for variations in study design and sample size. However, the Swedish sample in the prior study included only 139 studies, and the heterogeneity of interventions (e.g., intervention type, prevention level) limited the ability to draw conclusions about the subcategories of approaches (e.g., cultural adaptation, pragmatic adaptation). In addition, social interventions are used in different settings (e.g., social work, mental health, somatic health). The discourse on evidence and what constitutes an evidence-based practice differs between settings, as does the relationship between research and practice, potentially impacting how the choice and delivery of interventions are approached (Liedgren & Kullberg, 2022). Although interventions from different settings and prevention levels were included in the prior meta-analysis, the limited sample size did not allow further investigation of these aspects.

The aim of the current study, therefore, is to build on the study described above by expanding and focusing on the Swedish findings by including a larger population of studies conducted in Sweden. We (a) further explore the effectiveness of social interventions by replicating an earlier meta-analysis comparing interventions imported from other contexts with adaptation, interventions adopted from other contexts without adaptation, and novel interventions that have been evaluated in controlled research and (b) compare the effectiveness of these different types of interventions in various types of settings. The following questions are asked:

What are the frequencies and characteristics of adapted, adopted, and novel interventions found in the population of controlled intervention research undertaken in Sweden and published in peer-reviewed journals between 1990 and 2019?

To what extent are approaches to adaptation, adoption, and the development of novel interventions related to intervention outcomes?

To what extent are adapted, adopted, and novel interventions implemented in different settings related to intervention outcomes?

Method

Design and Setting

We used meta-analytic techniques on the population of controlled research conducted in Sweden and published during the investigation period.

Eligibility Criteria

Inclusion Criteria

The publication reported on a study which evaluated a behavioral, psychological, or social intervention.

The publication reported on a study which was undertaken in Sweden and the principal investigator was employed by a Swedish university or organization.

The publication was from a scientific journal and was subjected to peer review prior to publication.

The study was published between 1990 and 2019.

The study design reported in the publication was a randomized or non-randomized controlled design.

The study included a behavioral, psychological, or social outcome measure.

Exclusion Criteria

The study reported did not include an outcome measure at the client, patient, or user level (e.g., only included measures of professional behavior change).

The study reported on an intervention designed to impact somatic health without including at least one behavioral, psychological, or social component.

The study reported on an intervention designed to impact pedagogical or didactical outcomes only (e.g., methods to teach children math skills).

Information Sources

This study is a retrospective analysis and based on information provided in the published literature.

Search Strategy

We conducted a whole-population study and used systematic methods to find all publications meeting our inclusion criteria. A previous study (Sundell & Åhsberg, 2016) demonstrated that “Sweden” or “Scandinavia” is rarely (approximately 10% of cases) used in the title or among key words in published articles on Swedish intervention research. Despite this, we have attempted to follow PRISMA reporting standards as closely as possible (Supplementary information 1) and have supplemented these with additional efforts aimed at identifying the entire population of studies meeting our inclusion criteria. The starting point for the current study was the 139 published articles reporting findings from controlled research and included in Sundell and Åhsberg (2016). From this initial pool of studies, the search was carried out in five steps. First, all 191 researchers that were previously identified as having published at least one controlled study during the period 1990–2014 (Sundell & Åhsberg, 2016) were contacted directly. Second, bibliographic searches were conducted to identify research produced by these researchers during the period 2015–2019. Third, the databases of the six largest Swedish research funders, the Swedish Research Council for Health, Working Life and Welfare (Forte), the Swedish Research Council for Sustainable Development (Formas), the European Research Council (ERC), the Swedish Research Council (VR), the Swedish Innovation Agency (Vinnova), and the Swedish Crime Victim Authority (BRÅ), were broadly searched for grants awarded to conduct controlled studies during the period 2015–2019 (search terms: evaluation, randomized). Fourth, we conducted a broad search of planned controlled studies registered at clinicaltrials.com (search terms: Swed*, random*, effect*, evaluat*, RCT) as well as studies registered with ISRCTN at www.isrctn.com (search terms: Swed*, mental and behavioral disorder). Fifth, the researchers and studies identified in the searches above were included in searches in the unified index EBSCO Discovery (Stockholm University library; search terms: Swed*, random*, effect*, evaluat*, RCT). Details regarding the search strategy have been published previously (Sundell & Åhsberg, 2016).

The studies identified were then pooled and duplicates removed. If individual study results were reported in several publications, the first publication was used as the source for data extraction and coding.

Data Items and Data Extraction

Study Characteristics

Extracted study characteristics include date of publication, study setting (e.g., social services), design (e.g., randomized control), type of control condition (e.g., wait-list control), study population (e.g., adults), follow-up period, prevention level (e.g., indicated), study sample size (excluding dropouts), and effect size estimate. If a study characteristic (e.g., setting, prevention level) was not explicitly stated in the publication, a determination was made based on the information provided. If we were unable to determine a characteristic from the information provided, the data were coded as missing. Data were extracted by two authors with very good (83%) to perfect (100%) agreement on coding of individual items in a random sample of 40 studies (Cohen's kappa 0.63–1.0; Sundell & Olsson, 2021).
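The two agreement statistics mentioned above, percent agreement and Cohen's kappa, can be illustrated with a minimal sketch. The coded values below are hypothetical and are not data from this study; the function names are likewise illustrative.

```python
from collections import Counter

def percent_agreement(rater_a, rater_b):
    """Share of items on which two raters assigned the same code."""
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(rater_a)
    p_observed = percent_agreement(rater_a, rater_b)
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions per category.
    p_expected = sum(
        (counts_a[c] / n) * (counts_b[c] / n)
        for c in set(counts_a) | set(counts_b)
    )
    if p_expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical design codes for ten studies (assumption, for illustration only).
coder_1 = ["RCT", "RCT", "non-RCT", "RCT", "RCT",
           "non-RCT", "RCT", "RCT", "non-RCT", "RCT"]
coder_2 = ["RCT", "RCT", "non-RCT", "RCT", "non-RCT",
           "non-RCT", "RCT", "RCT", "RCT", "RCT"]

agreement = percent_agreement(coder_1, coder_2)
kappa = cohens_kappa(coder_1, coder_2)
```

For these made-up codes, agreement is 80% while kappa is about 0.52, which shows why a high percent agreement can coexist with a more modest kappa when one category dominates.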

Included Intervention

If the study included more than two arms (i.e., experimental, control), the group that was highlighted as the primary focus of the study was selected. If there was no clear information about the priority of the groups, then the first described group was selected and included in this study.

Intervention Type

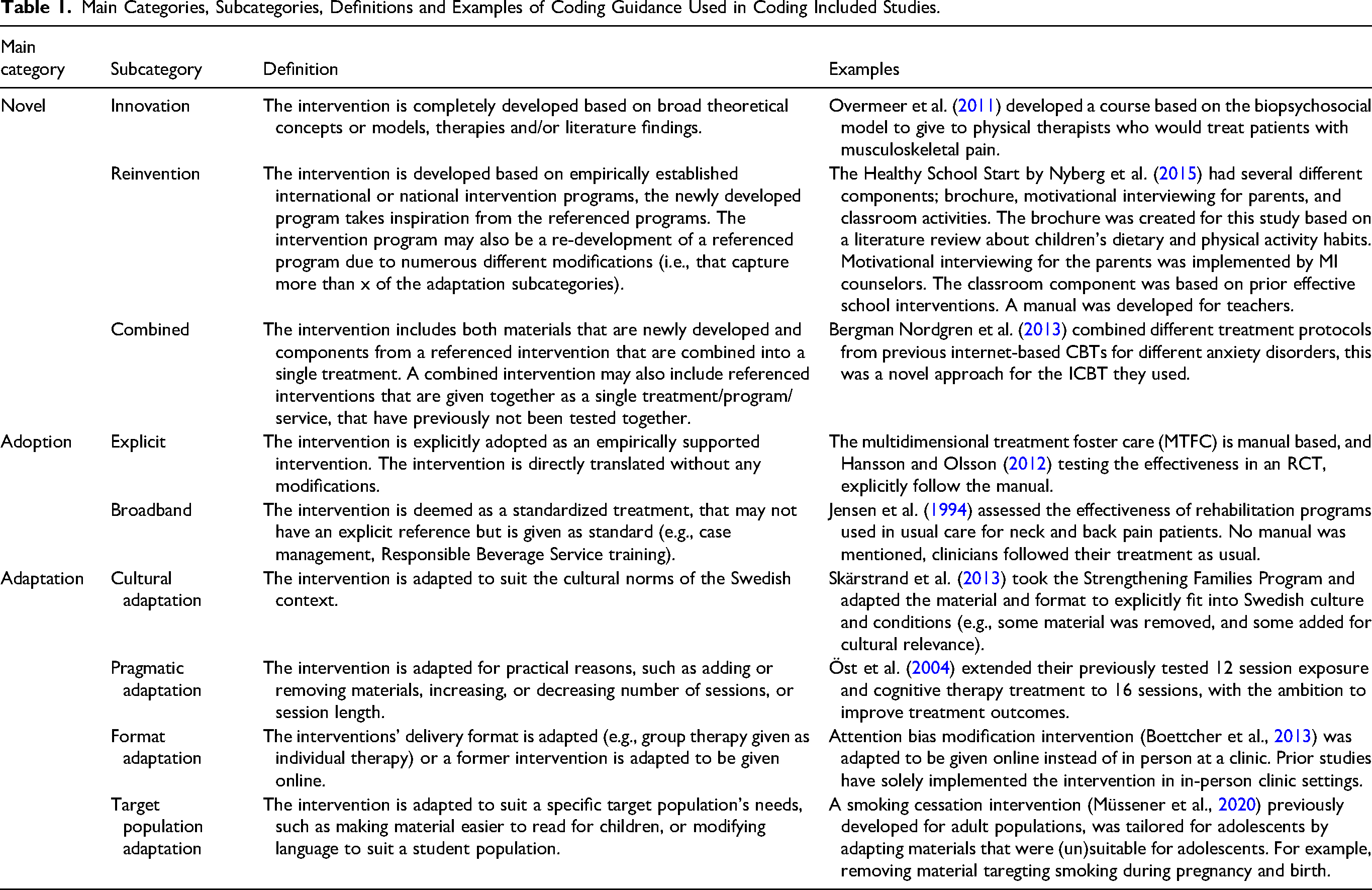

Main intervention type (i.e., adapted, adopted, novel) was further defined in terms of nine mutually exclusive subcategories (Table 1). Determination of intervention type was based on the information provided in the study. If authors referred to their own previous studies in their intervention descriptions, these studies were referred to for additional information. No additional attempts were made to obtain information outside of that published in the sources described above. The five authors independently coded intervention type, by using a coding manual (available from authors upon request). The coding manual was developed and tested iteratively by the authors. Any disagreements in coding and interpretation of the coding manual were discussed prior to final coding. Interrater reliability on coding of type of intervention was assessed as very good on a random sample of 40 studies (κ = .80 main; κ = .88 subcategories).

Main Categories, Subcategories, Definitions and Examples of Coding Guidance Used in Coding Included Studies.

Outcome Measures

The primary outcome measure in each study was used and when no such information was provided, the first measure listed in the method section of the publication was used in the analysis.

Methodological Quality of Included Studies

We assess differences in risk of bias (Higgins et al., 2023) by comparing reported methodological quality across our three categories of intervention (adapted, adopted, novel). Data from each included study was extracted in relation to adherence to reporting standards as described in CONSORT (Moher et al., 2010), TREND (Des Jarlais et al., 2004), and Prevention Science (Flay et al., 2005). Items are reported individually across groups and in a combined index consisting of 16 items (for more information about assessment of methodological quality see Olsson & Sundell, 2023).

Statistical Procedures

Effect sizes were calculated according to Lipsey and Wilson (2000). When outcomes in a publication were reported to be statistically significant (p ≤ .05) but no additional information on effect size was offered (n = 4), the effect size was set to the average effect for the remaining sample (d = .48). When studies stated non-significant effects without reporting the actual effect size (n = 25), the effect size was set to 0. Since extreme effect sizes may have a disproportionate influence on conclusions drawn from statistical analyses, we checked for outliers using Cook's distance (Cook, 1977; Walfish, 2006). Three effect sizes were identified as outliers. To reduce their impact, their values were replaced with the highest effect that fell within the normal range (d = 2.3). This had a negligible impact on study results when compared to analyses using the original data. Comprehensive Meta-Analysis software was used to synthesize data. As the sample exhibited a large proportion of random variance both in the total population (Q(522) = 2489.15, p < .0001) and in the reduced sample (Q(295) = 1589.91, p < .0001), analyses were interpreted from the random effects models. Publication bias and study heterogeneity in the population of studies included here have been investigated and reported in a prior study (Olsson & Sundell, 2023).
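The steps described above can be sketched in a few lines. This is an illustration under stated assumptions, not the software routine used in the study: the pooled-SD formula for d, its large-sample variance, and the DerSimonian-Laird estimator are standard textbook choices assumed here, and the outlier step only shows the substitution (detection with Cook's distance is not reproduced). All function names are hypothetical.

```python
import math

def cohens_d(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / (n_t + n_c - 2)
    return (mean_t - mean_c) / math.sqrt(pooled_var)

def d_variance(d, n_t, n_c):
    """Large-sample sampling variance of d."""
    return (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))

def cap_effects(effects, cap):
    """Replace effects above `cap` with `cap` (substitution step only)."""
    return [min(d, cap) for d in effects]

def random_effects_pool(effects, variances):
    """DerSimonian-Laird pooling; needs at least two studies.

    Returns (pooled effect, Q statistic, between-study variance tau^2).
    """
    weights = [1 / v for v in variances]
    sum_w = sum(weights)
    fixed_mean = sum(w * d for w, d in zip(weights, effects)) / sum_w
    # Q: weighted squared deviations from the fixed-effect mean (df = k - 1).
    q = sum(w * (d - fixed_mean) ** 2 for w, d in zip(weights, effects))
    c = sum_w - sum(w**2 for w in weights) / sum_w
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)
    # Random-effects weights add tau^2 to each study's sampling variance.
    re_weights = [1 / (v + tau2) for v in variances]
    pooled = sum(w * d for w, d in zip(re_weights, effects)) / sum(re_weights)
    return pooled, q, tau2

# Hypothetical inputs (assumption): two studies with equal precision.
example_effects = cap_effects([0.0, 1.0], cap=2.3)
pooled, q, tau2 = random_effects_pool(example_effects, [0.1, 0.1])
```

A Q value well above its degrees of freedom, as reported for both the full and reduced samples, yields a positive tau squared, which is the situation in which a random effects model is typically preferred.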

Sensitivity Analysis

As design and sample size have been shown to have significant effects on outcomes, the previous meta-analysis conducted analyses on the entire sample and on a reduced sample in which studies with a non-randomized experimental design and studies with sample sizes of less than 50 were removed (Table 3). We replicate these analyses to test the robustness of our results.
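As a minimal sketch, the reduced sample amounts to a filter on two study characteristics. The `Study` record, its field names, and the example studies are hypothetical; only the two exclusion rules (non-randomized design, fewer than 50 participants) come from the text.

```python
from dataclasses import dataclass

@dataclass
class Study:
    study_id: str
    randomized: bool
    sample_size: int
    effect_size: float

def reduced_sample(studies, min_n=50):
    """Keep randomized studies with at least `min_n` participants."""
    return [s for s in studies if s.randomized and s.sample_size >= min_n]

# Hypothetical studies (assumption, for illustration only).
studies = [
    Study("A", randomized=True, sample_size=120, effect_size=0.45),
    Study("B", randomized=False, sample_size=300, effect_size=0.30),  # non-randomized
    Study("C", randomized=True, sample_size=40, effect_size=0.90),    # n < 50
]
kept = reduced_sample(studies)
```

The same pooling and subgroup comparisons are then rerun on `kept` to check whether conclusions change.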

Results

General Description of the Included Studies

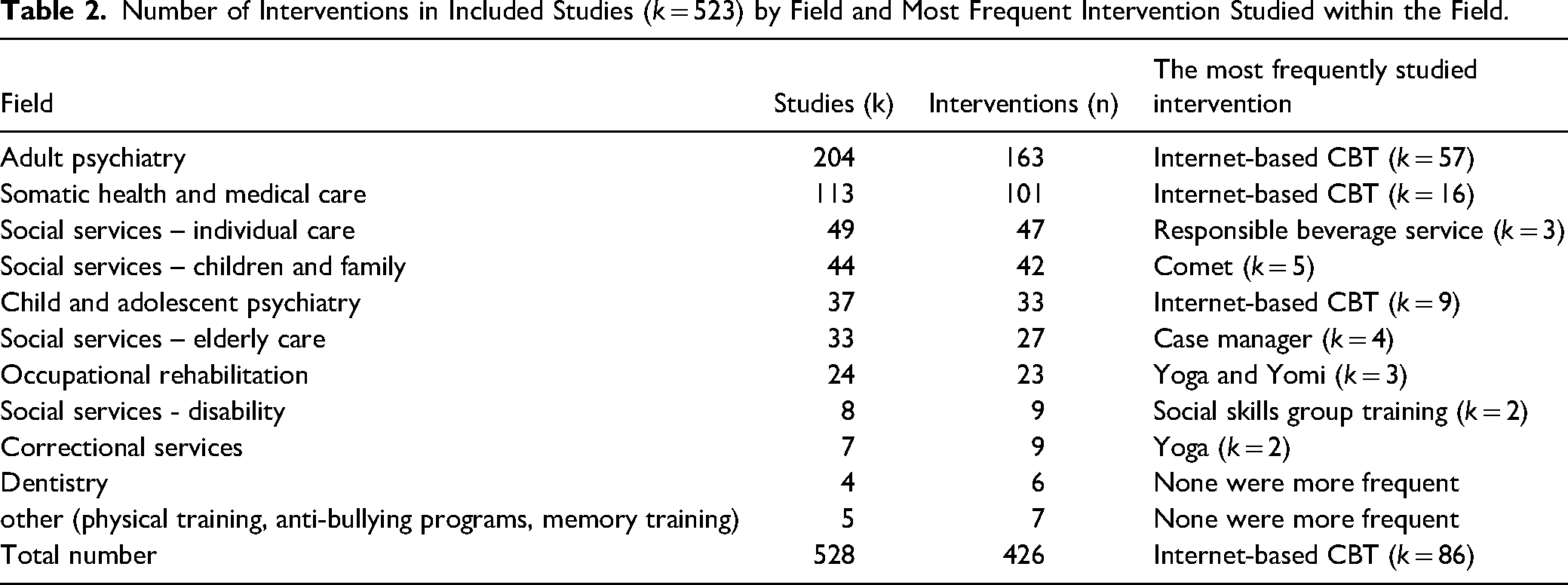

We found 523 unique studies fulfilling our inclusion criteria that included enough information for data extraction. In the 523 studies, 426 unique interventions were studied (Table 2). Table 2 provides an overview of the number of interventions evaluated within specific fields of practice as well as the intervention most often studied within each respective field.

Number of Interventions in Included Studies (k = 523) by Field and Most Frequent Intervention Studied within the Field.

The included interventions aimed to target 52 unique problem areas within 14 ICF categories. The most common areas included mental functions (n = 289; e.g., treatment of substance abuse); activities and participation in major areas of life (n = 63; e.g., treatment of chronic pain); activities and participation related to general tasks and demands (n = 50; e.g., exercise programs for the elderly); interventions designed to impact body functions (n = 21, e.g., internet-based treatment for stress-related incontinence, and n = 23, e.g., exercise program in order to regain entrance to the labor market); and activities and participation related to interpersonal interactions and relationships (n = 38; e.g., programs to prevent criminal behavior among youth).

In all, the included studies used 296 different primary outcome measures. Of these, 72 were used in two or more studies. Examples include the Alcohol Use Disorders Identification Test (AUDIT; 14 studies), the Insomnia Severity Index (ISI; 12 studies), and the Activities of Daily Living (ADL) Staircase (8 studies).

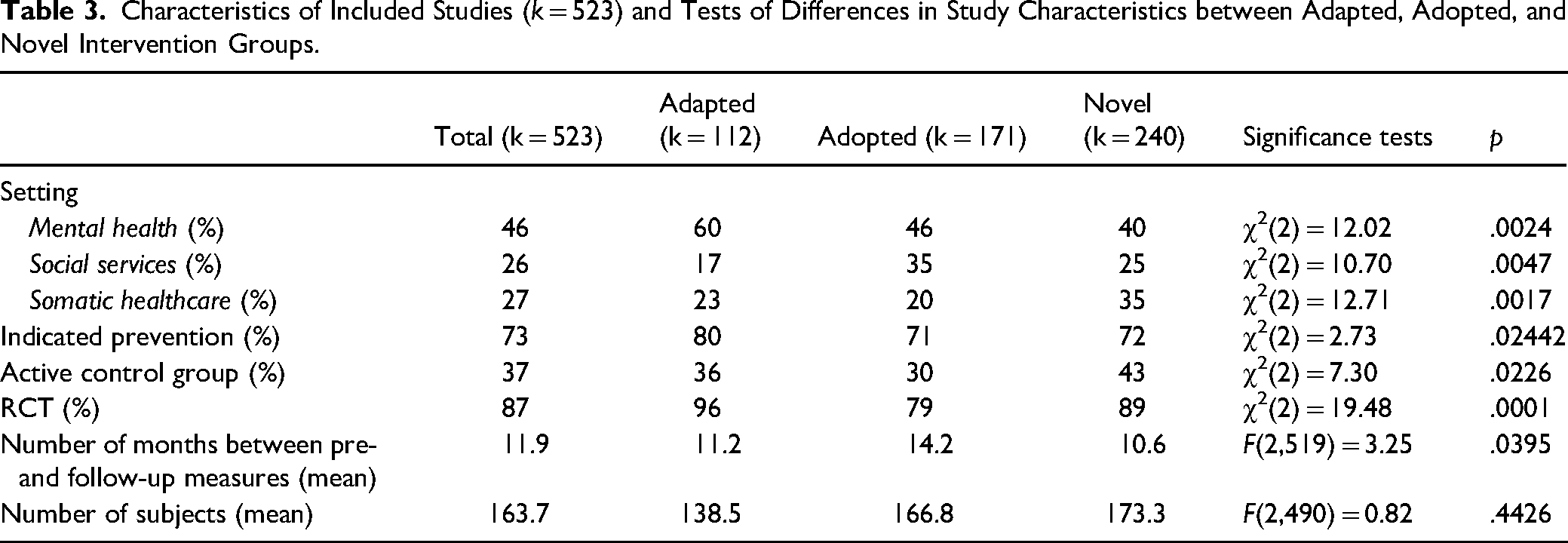

Frequencies and Characteristics of Adapted, Adopted, and Novel Interventions

Of the 523 studies, 21% (k = 111) described adapted interventions, 33% (k = 170) adopted interventions, and 46% (k = 239) novel interventions (Table 3). Table 3 provides a summary of study characteristics. The most common setting for the studies was mental health (46%, k = 240). The majority (87%, k = 454) of the studies were RCTs. Most control group participants (63%) received another intervention (e.g., a competing intervention), and the remainder (37%) received no intervention or were on a waiting list. Most studies (55%) had a follow-up period of 12 months or more after baseline (M = 11.9 months, SD = 14.6), and studies included on average 164 study subjects. Significant differences between adapted, adopted, and novel intervention studies were found across a range of study characteristics (Table 3).

Characteristics of Included Studies (k = 523) and Tests of Differences in Study Characteristics between Adapted, Adopted, and Novel Intervention Groups.

Effect Sizes for Adapted, Adopted and Novel Interventions

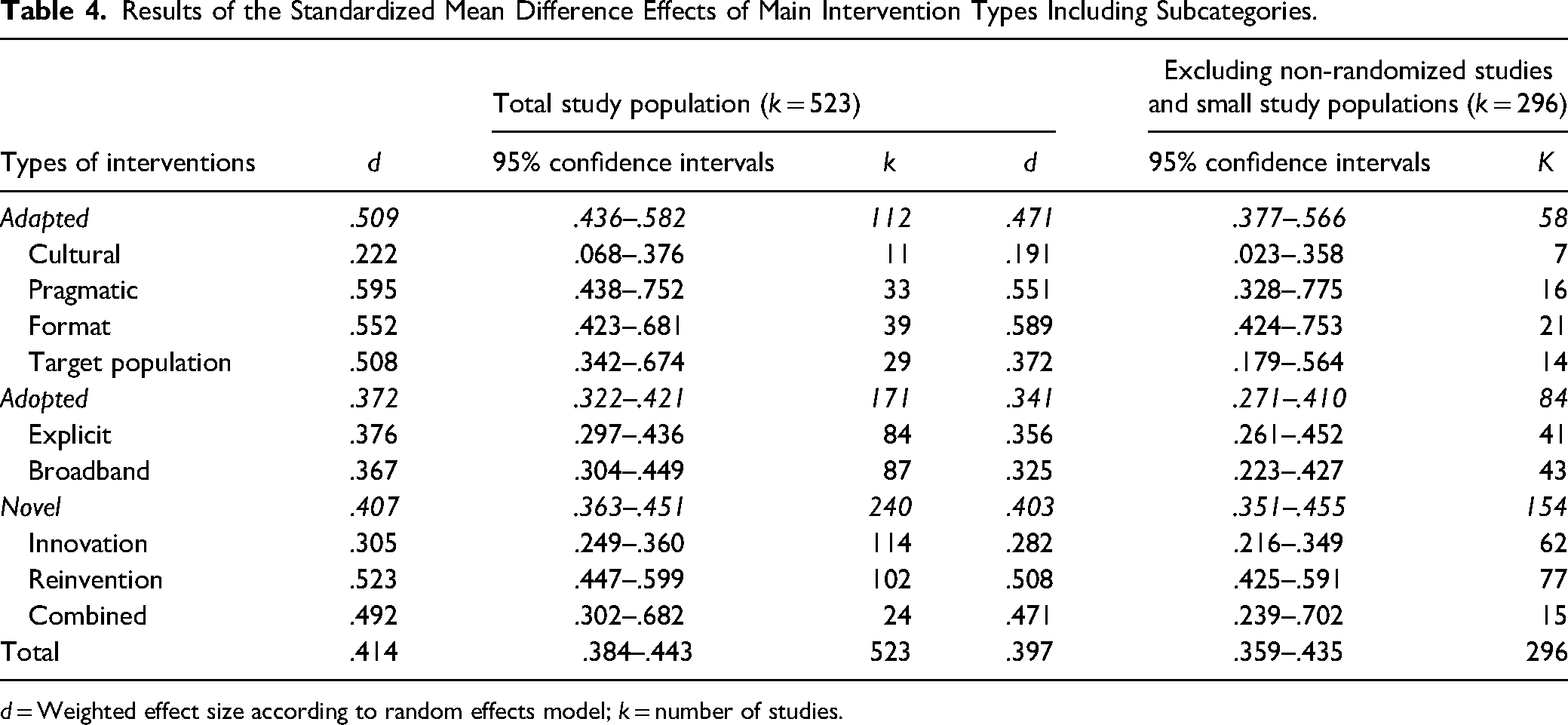

Adapted interventions were found to have the highest standard mean effect size (d = .509), followed by novel interventions (d = .407), and adopted interventions (d = .372; Table 3). There were significant differences between adapted and adopted interventions, Q(1) = 9.35, p < .002, and between novel and adopted interventions, Q(1) = 5.97, p < .05. No significant difference was found between adapted and novel interventions, Q(1) = 1.08, p > .05.

The result for the reduced sample was similar to that of the full sample (Table 4). The effect size was significantly higher in studies of adapted interventions compared to adopted interventions, Q(1) = 4.74, p < .05. There were no significant differences between adopted and novel interventions, Q(1) = 1.974, p > .05, or between adapted and novel interventions, Q(1) = 1.53, p > .05.
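The pairwise Q(1) statistics above are between-group heterogeneity tests. A minimal sketch of such a test follows, assuming each group's pooled effect and standard error are already available; whether the software used in the study computes the statistic in exactly this way (for example, with pooled or separate between-study variances) is not stated in the text, and the input values below are hypothetical.

```python
import math

def q_between(group_means, group_ses):
    """Between-group Q: weighted squared deviations of the group means
    from their inverse-variance weighted grand mean (df = groups - 1)."""
    weights = [1 / se**2 for se in group_ses]
    grand_mean = sum(w * m for w, m in zip(weights, group_means)) / sum(weights)
    return sum(w * (m - grand_mean) ** 2 for w, m in zip(weights, group_means))

def p_value_df1(q):
    """Upper-tail p-value of a chi-square statistic with 1 degree of freedom."""
    return math.erfc(math.sqrt(q / 2))

# Hypothetical pooled effects and standard errors for two groups (assumption).
q_example = q_between([0.5, 0.3], [0.1, 0.1])
p_example = p_value_df1(q_example)
```

With two groups the statistic reduces to the squared difference in means divided by the sum of the squared standard errors, which is why each pairwise comparison has one degree of freedom.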

Results of the Standardized Mean Difference Effects of Main Intervention Types Including Subcategories.

d = Weighted effect size according to random effects model; k = number of studies.

Effect Sizes for Adapted, Adopted and Novel Intervention by Subcategory

Adapted Interventions

For adapted interventions (Q(3) = 14.18, p < .003), pragmatic adaptations had the highest standard mean effect (d = .595), followed by format adaptations (d = .552), target population adaptations (d = .508), and finally cultural adaptations (d = .222) (Table 3). In the analysis of the reduced sample (Q(3) = 12.83, p < .005), the pattern of results was not maintained. Here, format adaptations were found to have a higher standard mean effect (d = .589) than pragmatic adaptations (d = .551).

Adopted Interventions

There were no significant differences between the two subcategories of adopted interventions in the full sample, Q(1) = 0.35, p > .05, or in the reduced sample, Q(1) = 1.96, p > .05 (Table 3).

Novel Interventions

Out of the three subcategories of novel interventions (Q(2) = 21.96, p < .0001), reinterventions had the highest standard mean effect (d = .523), followed by the combined (d = .492), and finally innovations (d = .305). This pattern of results was maintained in the reduced sample (Q(2) = 17.95, p < .0001; Table 4).

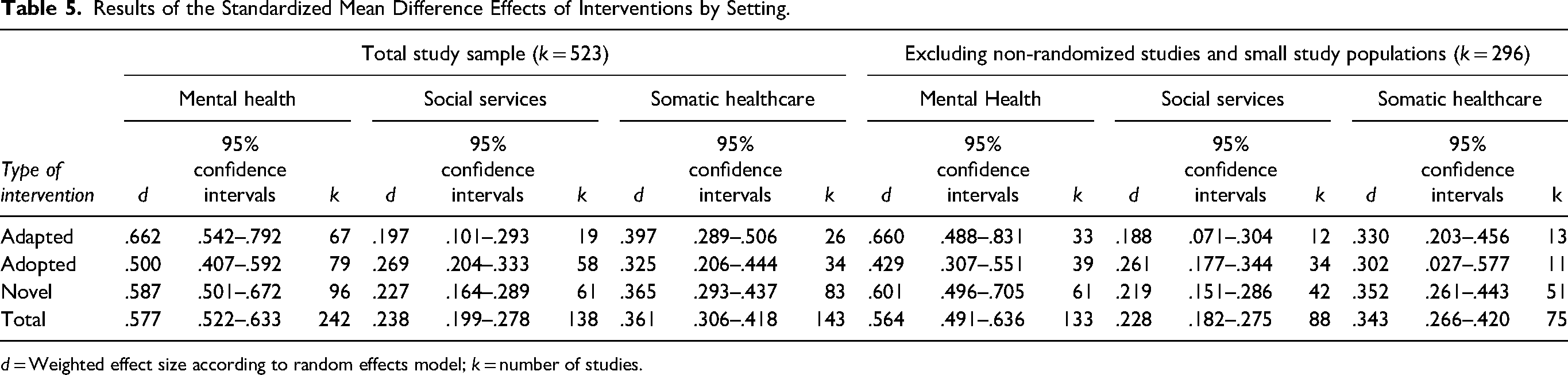

Effect Sizes by Setting

Interventions provided in the mental health setting showed the highest standard mean difference (d = .577), followed by somatic healthcare (d = .361) and, last, social services (d = .238), Q(2) = 95.12, p < .0001 (see Table 5).

Results of the Standardized Mean Difference Effects of Interventions by Setting.

d = Weighted effect size according to random effects model; k = number of studies.

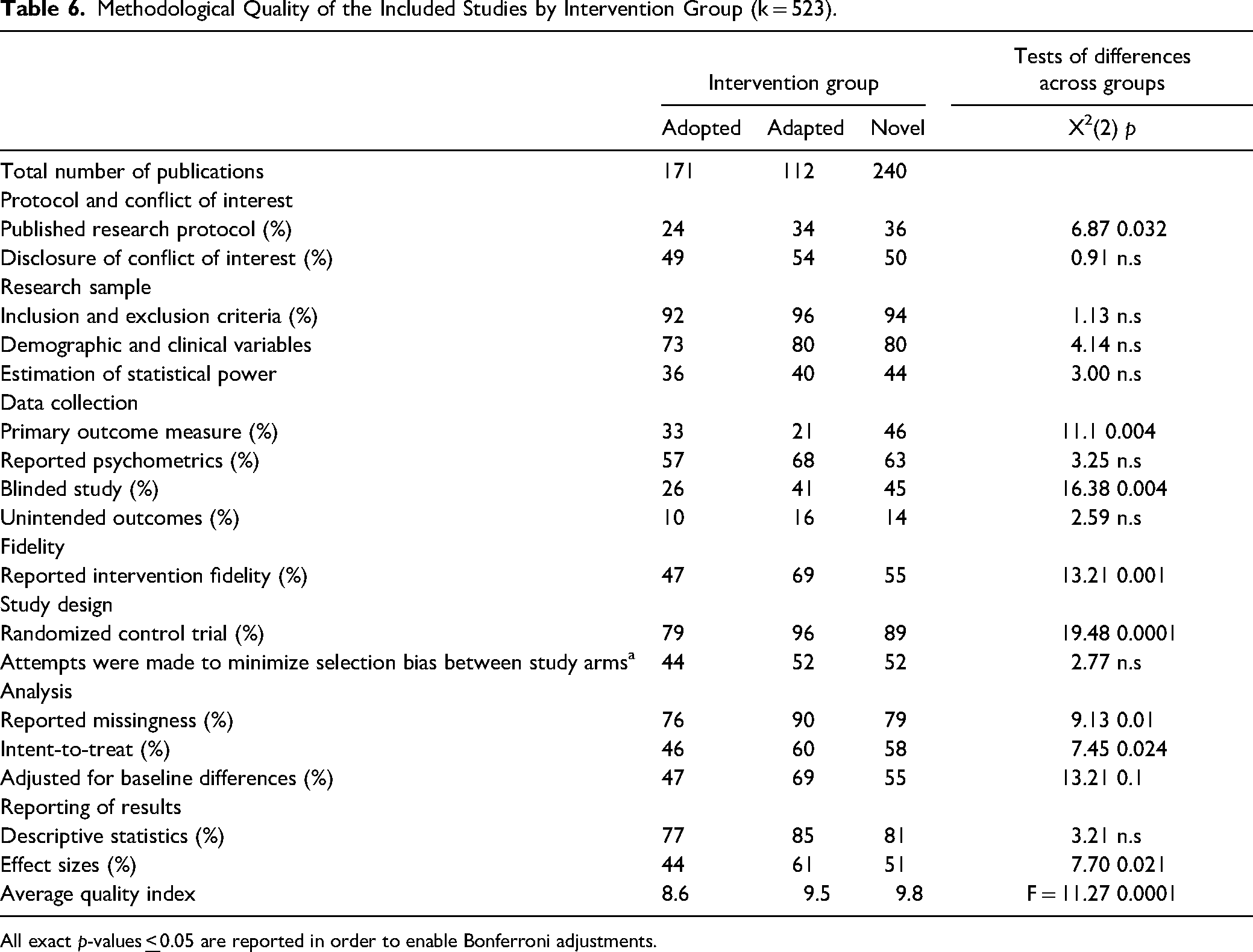

Methodological Quality of Included Studies

Methodological quality across groups of adapted, adopted, and novel interventions can be found in Table 6. Adopted interventions (M = 8.6) had significantly lower methodological quality than both adapted (M = 9.5) and novel (M = 9.8) interventions (p < 0.001). Studies of adapted and novel interventions more often used blinding, reported intervention fidelity, used randomization (RCT), conducted intent-to-treat analyses, and adjusted for baseline differences (adapted 41%, 69%, 96%, 60%, 69%; novel 45%, 55%, 89%, 58%, 55%) than studies of adopted interventions (26%, 47%, 79%, 46%, 47%; 0.001 ≤ p ≤ 0.024).

Methodological Quality of the Included Studies by Intervention Group (k = 523).

All exact p-values

Discussion and Applications to Practice

The purpose of this study was to explore the effectiveness of social interventions by replicating and expanding on an earlier meta-analysis comparing novel, adapted, and adopted interventions. Our results largely replicate our earlier findings. As in the earlier study, we find that novel interventions make up the largest proportion of interventions studied, followed by adopted and adapted interventions. Novel interventions are undoubtedly developed to meet a perceived need in society and this option for intervention delivery is likely preferred when access, awareness, or knowledge of EBIs for specific populations, targeting specific outcomes is scarce. Indeed, although many systematic reviews have identified interventions with strong empirical support for their effectiveness, systematic reviews also consistently find few to no clearly effective interventions across several populations and/or outcomes (e.g., Olsson et al., 2021; Petersen et al., 2022).

We aimed to investigate the extent to which approaches to adaptation, adoption, and the development of novel interventions were related to intervention outcome. We find that adapted interventions produced the largest standard mean effect size, followed by novel interventions and adopted interventions. This is in line with a body of research reporting successful adaptations to EBIs in more homogeneous samples (Gladstone et al., 2021; Rajabiun et al., 2021; Stahmer et al., 2019). These findings were stable when we controlled for study design and sample size. In addition, the absolute standard mean effect sizes across intervention type found in the current study are higher than those reported in the prior meta-analysis. Despite our finding of differences in standard mean effect sizes across adapted, adopted, and novel categories, the standard mean effect sizes in all categories fell within the medium range and were significantly different from zero, which means that, on average, adapted, adopted, and novel interventions all produce effects in controlled research. It should be noted that uncertainty around the point estimate was large for all categories of interventions. In addition, adopted interventions as a group had the lowest methodological quality and, as such, the highest risk of bias across groups of intervention. It is, however, unclear how this may have affected results.

Although statistically significant, the measured difference among the three types of interventions is rather low (d's ranging from .37-.51). Whether this is a meaningful difference is an important question as the mean effect differs by just more than a d of .13 between adapted and adopted interventions. Another way to describe this difference is by converting d to number needed to treat, with the result of 26.08. Or put differently, of one hundred treated individuals four more are successful in adapted interventions compared to adopted interventions. Ethically, one could argue that this difference is not negligible, especially when extrapolated to an entire population, but a more definite conclusion would require an economic evaluation of the included interventions, which was not possible in this study.
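The arithmetic linking the figures in this paragraph can be checked directly. The sketch below uses only values reported in the text; the formula used to convert d to number needed to treat is not stated in the text and is therefore not reproduced. The variable names are illustrative.

```python
# Values reported in the text.
d_adapted = 0.509
d_adopted = 0.372
nnt = 26.08  # number needed to treat, as reported

# Difference in standard mean effect between adapted and adopted interventions.
d_difference = d_adapted - d_adopted

# An NNT of x means one additional success per x treated,
# that is, 100 / x additional successes per hundred treated.
extra_successes_per_100 = 100 / nnt

print(f"difference in d: {d_difference:.3f}")
print(f"additional successes per 100 treated: {extra_successes_per_100:.1f}")
```

Rounded to whole persons, this corresponds to the four additional successful individuals per hundred mentioned above.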

Additionally, we aimed to expand the prior meta-analysis by exploring the extent to which the setting within which adapted, adopted, and novel interventions were implemented was related to intervention outcome. We found the same pattern of results in the standard mean effects in mental health and somatic healthcare settings. Although these results were fairly stable when controlling for study design and sample size within mental health, they were not stable within somatic healthcare. This, however, may be due to the small number of studies within the adapted and adopted categories in these analyses. Studies conducted within the social services showed a different pattern of results. In the social services setting, adopted interventions showed the highest standard mean effect, followed by novel interventions. Adapted interventions had the smallest mean effect within the social services setting. These results were stable when we controlled for study design and sample size. This result may be due to the relatively low number of adapted interventions within the social services setting found in this study. It may, however, be related to specific characteristics of the interventions, delivery, or research environment within the social services context (Olsson & Sundell, 2016; Sundell & Olsson, 2021). The extent to which there are any specific characteristics of social service interventions or the social services setting that might impact intervention effectiveness needs to be further investigated.

As in the prior meta-analysis, we investigated subgroups of adapted, adopted, and novel interventions. We have also refined and expanded the range of subgroups in this study. Within adapted interventions, cultural adaptations (i.e., adaptations made to the intervention to suit cultural norms) had the fewest studies within and across subgroups as well as the smallest standard mean effect size within and across subgroups. This is interesting as this area of adaptation is arguably the most prominent within the literature on intervention adaptation (Barrera et al., 2017; Gonzales, 2017; Mejia et al., 2017). It should be noted, however, that much of the extant literature on cultural adaptation addresses cultural adaptations to EBIs for use with minority populations within the same setting and/or context within which the EBIs were developed, as opposed to cross-cultural adaptations (i.e., when EBIs are transported to new cultural contexts such as countries), which is what we report in the current study. This type of transfer introduces not only changes in the cultural norms of target populations but can also mean changes in broad societal norms and how services are delivered. Similarly, within the novel subgroup, combined interventions (see Table 1) were relatively few and innovative interventions exhibited the lowest standard mean effect size within the novel subgroup. As these subgroups represent a refinement and expansion of coding definitions, we are unable to compare these results to the prior meta-analysis. However, across subgroups, the standard mean effect sizes in the current study are higher than those of the prior meta-analysis and show more variability.

Although this study has several strengths, there are also four main limitations that should be highlighted. First, although intensive work was done to develop and agree upon the coding manual, the boundaries between the subcategories were not always clear-cut. This not only introduces a methodological challenge but also reflects the complexity of intervention development, evaluation, and spread. The ability to conduct valid and reliable meta-analyses is constrained by the information contained in reports of original research (for a short discussion see e.g., Olsson et al., 2022); however, the intervention development and/or adaptation process may not always be clearly described in publications describing research on interventions. It is a common finding, for example, that despite methodological guidance regarding the inclusion of clear descriptions of interventions assessed in evaluative research (Schulz et al., 2010), investigations and reviews on the effectiveness of interventions consistently point to shortcomings in the extent to which interventions are developed and described (Glasziou et al., 2008; Maden et al., 2017; Olsson et al., 2023). In this study, it is therefore likely that we have categorized some interventions as novel when they are in fact adapted interventions. There are at least two reasons for this. First, it is difficult to determine when an intervention is in fact novel as opposed to inspired by prior research on existing interventions. In addition, in reporting intervention studies, authors may limit their descriptions of the intervention development process in order to focus on the reported research findings. Interviews with intervention developers have uncovered, for example, that the transparent reporting of adaptations in scientific journals involved a conflict between transparency and practical concerns such as word count (von Thiele Schwarz et al., 2018). This in turn may mean that the category “novel interventions” in this study is inflated. Despite this, interrater reliability was high.

Second, we did not assess the extent to which within-study fidelity was tracked or maintained. Although our analysis includes adapted interventions, it should be stressed that these represent intentional a priori efforts to adapt EBIs prior to implementation (as opposed to therapist or program drift). Thus, the findings do not provide information about the impact of adaptations made at other time points, for example, while the intervention is being used in practice. We are likewise unable to determine whether the adapted, adopted, and novel interventions included were implemented with fidelity within each study.

A third challenge is that there are several possible confounders related to method and design present in this study. For instance, the review included different subsets of psychosocial interventions, where some aspects of the intervention itself might be related to the degree and types of adaptation. In the present analysis, we controlled for variation in methodological quality in the included studies by re-analyzing the data after excluding articles that reported non-randomized trial designs or small sample sizes. This did not change the main results, which indicates that the findings are robust. However, the impact of other possible confounders remains to be investigated in future research.
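The logic of the robustness check described above can be illustrated with a minimal sketch. It is not the analysis code used in this study: the DerSimonian-Laird random-effects estimator, the field names (`d`, `var`, `n`, `randomized`), the sample-size cutoff `min_n`, and the example values are all assumptions chosen for illustration. The sketch pools standardized mean effects once for all studies and once after excluding non-randomized or small studies, so that the two estimates can be compared.

```python
import math


def pooled_effect(studies):
    """Random-effects pooled estimate (DerSimonian-Laird; an assumed estimator).

    Each study is a dict with 'd' (standardized mean effect)
    and 'var' (sampling variance of d). Returns (estimate, standard error).
    """
    effects = [s["d"] for s in studies]
    weights = [1.0 / s["var"] for s in studies]
    sum_w = sum(weights)

    # Fixed-effect estimate, needed to compute the heterogeneity statistic Q.
    fixed = sum(w * d for w, d in zip(weights, effects)) / sum_w
    q = sum(w * (d - fixed) ** 2 for w, d in zip(weights, effects))

    # Between-study variance tau^2, truncated at zero.
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    k = len(studies)
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0

    # Re-weight each study by total variance (within + between).
    re_weights = [1.0 / (s["var"] + tau2) for s in studies]
    estimate = sum(w * d for w, d in zip(re_weights, effects)) / sum(re_weights)
    std_error = math.sqrt(1.0 / sum(re_weights))
    return estimate, std_error


def keep_robust(studies, min_n=50):
    """Keep randomized studies with at least min_n participants.

    min_n is a hypothetical cutoff; the source does not state one.
    """
    return [s for s in studies if s["randomized"] and s["n"] >= min_n]


# Hypothetical example data, not taken from the study.
EXAMPLE_STUDIES = [
    {"d": 0.45, "var": 0.02, "n": 200, "randomized": True},
    {"d": 0.60, "var": 0.09, "n": 40, "randomized": True},
    {"d": 0.30, "var": 0.03, "n": 150, "randomized": False},
    {"d": 0.50, "var": 0.04, "n": 120, "randomized": True},
]

if __name__ == "__main__":
    full_est, full_se = pooled_effect(EXAMPLE_STUDIES)
    robust_est, robust_se = pooled_effect(keep_robust(EXAMPLE_STUDIES))
    print(f"all studies:    {full_est:.3f} (SE {full_se:.3f})")
    print(f"robust subset:  {robust_est:.3f} (SE {robust_se:.3f})")
```

If the pooled estimate and the ordering of categories are similar in both runs, the result would be considered stable in the sense used here. In practice, such a comparison would be repeated separately for the adapted, adopted, and novel categories.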

Finally, it should be noted that our analysis includes research conducted in Sweden only. The extent to which these findings are generalizable to other contexts and settings is unknown. In addition, we were unable to investigate the reasons why stakeholders choose one approach over another. Stakeholders may choose to develop a novel intervention when solid evidence on existing interventions is missing, or when they lack the ability to search for research on existing interventions and their effectiveness. Cost may also influence the choice between intervention development, adaptation, and adoption. We were, however, unable to investigate this in the current study.

The research-to-practice pathway is based on the idea that interventions are developed, tested, and used in the way they were designed. The results reported here are in line with a growing body of evidence suggesting that there is a need to take the fit between the EBI and the context into account when implementing interventions, as shown in the superiority of adapted EBIs and interventions specifically developed for a certain context. This complicates the accumulation of knowledge across studies, with implications for how primary studies as well as reviews of intervention studies are conducted. Primary studies that illuminate what works for whom and how, that is, studies that measure the moderators and mediators of intervention effects in ways that can be compared across studies, will be vital for making an accumulation of knowledge across studies possible. This type of measurement is also important for increasing our understanding of the core components of interventions. Review methods that can take the variation between cases into account are called for. In conclusion, we find that standardized mean effects for interventions across categories are significantly different from zero, indicating that adapted, adopted, and novel programs all provide benefit to service users. Standardized mean effects were, however, largest for adapted interventions, followed by novel programs, and smallest for adopted programs.

Footnotes

Author Contributions

Authors have contributed to this manuscript in the following ways:

Tina M Olsson: Investigation, data curation, writing original draft, review, and editing, funding acquisition.

Ulrica von Thiele Schwarz: Writing original draft, review, editing and funding acquisition.

Henna Hasson: Writing original draft, review, editing and funding acquisition.

Emily G Vira: Formal analysis, writing original draft, review, and editing.

Knut Sundell: Conceptualization, methodology, formal analysis, investigation, data curation, writing original draft, review, and editing, funding acquisition. All authors read and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for this research has been provided by two grants from the Swedish Research Council for Health, Working Life and Welfare (Forte; Dnr: 2019-01737 and 2018-01315) as well as a grant from the Swedish Research Council (Dnr: 2016-01261).

The datasets used and/or analyzed in the current study are available from the corresponding author on reasonable request.