Abstract

Purpose

This systematic review aimed to summarize the components of existing school-based child sexual abuse (CSA) prevention programs and to identify predictors of program effectiveness.

Method

Building upon the most comprehensive systematic review on this topic, we conducted systematic searches of English-language databases from September 2014 to October 2020 and of Chinese-language databases from inception to October 2020. Meta-regressions were performed to identify predictors of program effectiveness.

Results

Thirty-one studies were included with a total sample size of 9049 participants. Results from meta-analyses suggested that interventions are effective in increasing participants’ CSA knowledge as assessed via questionnaires (g = 0.72, 95% CI [0.52–0.93]) and vignette-based measures (g = 0.55, 95% CI [0.35–0.74]). Results from meta-regression suggested that interventions with more than three sessions are more effective than interventions with fewer sessions. Interventions also appear to be more effective with children aged 8 years and older than with younger children.

Discussion

CSA is a global issue that has significant negative effects on victims’ physical, psychological, and sexual well-being. Our findings also provide recommendations for future research, particularly in terms of optimizing the effectiveness of school-based CSA prevention programs, and the better reporting of intervention components as well as participant characteristics.

Introduction

Child sexual abuse (CSA) refers to “the involvement of a child in sexual activity that he or she does not fully comprehend, is unable to give informed consent to, or for which the child is not developmentally prepared and cannot give consent, or that violates the laws or social taboos of society” (WHO, 2006, p.62). CSA is a serious problem for children worldwide. The most recent meta-analysis of 55 studies from 24 countries showed that the prevalence of CSA (defined as non-contact abuse, contact abuse, forced intercourse, and mixed sexual abuse) ranged from 8% to 31% for girls and 3% to 17% for boys (Barth, Bermetz, Heim, Trelle, & Tonia, 2013). CSA is associated with a range of adverse psychological, sexual, and economic consequences. For example, one meta-analysis found a significant relationship (d = .32 to d = .67) between CSA and psychological distress (e.g., anger, anxiety, and depression) as well as dysfunction in adult women (e.g., revictimization, self-mutilation, sexual problems, substance abuse, and suicidality; Manglio, 2009). The global costs related to physical, psychological, and sexual violence against children are estimated to be between 3% and 8% of the global gross domestic product (GDP) (Pereznieto, Montes, Routier, & Langston, 2014). As home to the world’s second-largest child population (UNICEF, 2014), China experiences a significant burden in terms of CSA. Findings from East Asian and Pacific regions indicate that the economic costs of CSA are substantial, with one study estimating the value of Disability-Adjusted Life Years (DALYs) lost due to CSA at $18,378.3 million which accounts for 0.39% of the Gross Domestic Product (GDP) in China alone (Fang et al., 2015).

School-based universal interventions for preventing CSA are variously designed to provide students with skills that can be used to recognize, react, and report abuse (Finkelhor, 2009) and thereby reduce the occurrence and re-occurrence of sexual abuse in children and adolescents (National Institute of Justice, 2012). Most school-based personal safety training programs share common goals, including teaching children about body integrity, body safety, appropriate and inappropriate touching, gender roles, and private body parts (Bronson, 2019). In acknowledgment of the limited power that children have themselves to prevent experiencing CSA, these programs were developed to empower children by enhancing their knowledge about CSA and personal safety and developing their competence in self-protective behaviors, thereby helping them adopt strategies to avoid potentially unsafe situations and disclose their experiences to protective adults (Kim & Kang, 2017). In general, these interventions can be classified into two categories (Kopp & Miltenberger, 2009): (a) information-based trainings (IBT) in which information is delivered to children by an instructor in class using materials such as video tapes, plays, or activity books; and (b) behavioral skills trainings (BST) in which similar information is delivered but is taught using modeling, active rehearsals, and application of self-protection knowledge and skills to problem-based (i.e.,“what if…”) scenarios.

Reviews of the literature over almost three decades consistently show that children who have received school-based CSA interventions show greater gains in knowledge about CSA prevention concepts than children who have not received such programs (e.g., Kenny, Capri, Ryan, & Runyon, 2008; MacMillan, MacMillan, Offord, Griffith, & MacMillan, 1994; Walsh, Zwi, Woolfenden, & Shlonsky, 2015). One gold standard systematic review of 24 studies with 5802 participants in primary (elementary) and secondary (high) schools in Canada, China, Germany, Spain, Taiwan, Turkey, and the United States found that school-based CSA interventions were effective in increasing participants’ knowledge of CSA and self-protective skills and did so without increasing or decreasing their fear or anxiety (Walsh et al., 2015).

To develop and deliver effective school-based CSA interventions, program developers, practitioners, school systems, and policymakers need to understand what works, for whom, and how intervention effects can be optimized. By identifying and integrating components associated with greater effectiveness, interventions can be strengthened and resource wastage associated with implementation of sub-optimal programs can be reduced (Kaminski, Valle, Filene, & Boyle, 2008). This is particularly important when delivering such interventions in low and middle-income countries (LMICs) facing economic hardship, severe resource restrictions, and service gaps. To date, however, despite a growing body of research on school-based CSA interventions, there has been little attempt to systematically identify the content and structural intervention components which may contribute to the effectiveness of these programs.

To address this research gap, this systematic review, for the first time in this field, will use Intervention Component Analysis (ICA; Sutcliffe, Thomas, Stokes, Hinds, & Bangpan, 2015) to systematically identify intervention components in existing school-based CSA interventions and establish which component(s) are associated with improved outcomes for children who participated. To this end, advancing on the substantial work undertaken by Walsh et al. (2015), the aims of this review are to: (a) map the intervention components of school-based CSA interventions that are aimed at improving children’s knowledge and behaviors in relation to CSA prevention; and (b) identify which intervention component(s) appear(s) to contribute to the most significant improvements in knowledge and behavior outcomes for participating children.

Method

To meet the above aims, the following research methods were employed: (a) a systematic review and meta-analysis of overall program effectiveness to update the most recent and comprehensive review of school-based CSA intervention programs (Walsh et al., 2015) by identifying studies published between September 2014 and October 2020, and widening the inclusion criteria to include studies published in the Chinese-language databases from inception to October 2020; (b) an intervention component analysis to classify and synthesize intervention components in existing school-based CSA interventions; and (c) a meta-regression to identify the intervention components contributing to program effectiveness.

Data Sources, Inclusion Criteria, and Study Identification

New evidence should be added to existing systematic reviews to ensure their currency, and updating existing systematic reviews is more efficient than developing a new protocol to address the same research question (Garner et al., 2016). For example, the Cochrane Review policy recommends that systematic reviews be updated within 2 years to keep them current. This also avoids potential resource wastage or misleading conclusions (Garner et al., 2016). Elliott et al. (2017) introduced the concept of “living systematic reviews, which are a novel approach to continually updating the reviews and incorporating new evidence when it becomes available” (p.24). To this end, given the continually evolving evidence relating to school-based CSA prevention programs, and in order to keep the evidence as up-to-date as possible, we updated and advanced on the substantial work undertaken by Walsh et al. (2015), and replicated the literature search strategy and screening methods used.

Following Walsh et al.’s (2015) inclusion and exclusion criteria, this review included only studies that had utilized the most rigorous evaluation designs, randomized controlled trials (RCTs), cluster-RCTs, or quasi-RCTs in which participants were allocated to an intervention or control group using explicit methods such as using day of the week, alphabetical order, or other sequential allocation such as by class or school (Walsh et al., 2015). The review included only studies of school-based CSA intervention programs that focused on improving knowledge of CSA and CSA prevention concepts, or skill acquisition in self-protective behaviors. Included studies were undertaken only with students aged five to 18 years, who were enrolled in primary or secondary schools.

A clear limitation of Walsh et al.’s (2015) review was that it included mostly papers published in English for the period up to and including September 2014. The search strategy for the current review attempted to also capture studies from some of the world’s most populous regions by searching for both English-language and Chinese-language papers because there is a developing body of research on CSA prevention in China and the language skills were available to the review team. English-language databases were searched for the period September 2014 to October 2020. Chinese-language databases were searched from inception to October 2020. In all, twenty-three electronic databases were searched to identify studies meeting the pre-defined inclusion criteria.

Eighteen English-language databases were searched: Applied Social Sciences Index and Abstracts, British Education Index, Campbell Collaboration Library, Cochrane Central Register of Controlled Trials (CENTRAL), Cochrane Library, CINAHL, ClinicalTrials.gov, DARE, ERIC, EMBASE, Global Health, ICTRP: apps.who.int/trialsearch/, Medline, ProQuest Dissertation, PsycINFO, Social Sciences Citation Index, Social Services Abstracts (ProQuest), and Sociological Abstracts. Five Chinese-language databases were searched: CNKI Academic Journals Full-text Database, China Doctoral Dissertations Full-text Database, China Masters’ Theses Full-text Database, Taiwan Electronic Periodical Services, and Wanfang Database. Customized search strategies were developed for each database as search terminology varied across databases. The search strategies are available upon request. We also consulted with a subject librarian in the searching process. Reference lists of all included studies and previous systematic reviews were hand-searched for eligible studies not identified in database searches. Thirteen authors of included studies were contacted via email to identify further ongoing or unpublished trials, of which nine replied with information resulting in the identification of one additional study.

To reduce the potential for missed studies, it is recommended that two or more reviewers undertake the screening process (Stoll et al., 2019). As such, the titles and abstracts of records identified in the database searches were screened against inclusion and exclusion criteria by the first author (ML) and double checked by a second reviewer (YW), both of whom are native Mandarin Chinese speakers and fluent in English. Those studies considered to be not relevant were excluded. Disagreements in judgments between the two reviewers were resolved by discussion, aiming for consensus. The Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Moher, Liberati, Tetzlaff, & Altman, 2009) were followed in all stages of the review process. The systematic review protocol was registered with PROSPERO (ID: CRD42019140564).

Data Extraction

Data were extracted to align with the three study methods as noted above: (a) meta-analysis to update evidence on overall program effectiveness; (b) intervention component analysis to classify intervention components; and (c) meta-regression to identify intervention components contributing to program effectiveness.

Two reviewers (ML and YW), working independently, extracted all data by coding each paper line-by-line to ensure that all available information was captured. Where more than one publication referred to the same study or dataset, we used the relevant paper with the most comprehensive and clear description of methods and outcome reports from a peer-reviewed journal as the primary reference in accordance with systematic review guidelines (Higgins et al., 2011).

We extracted characteristics of the included studies including authors, study design, country, intervention outcome measures, and participant age. We extracted intervention outcome data for the following outcomes: knowledge of CSA and/or CSA prevention concepts, typically measured via questionnaires or vignettes. Consistent with the Walsh et al. (2015) review, we extracted questionnaire and vignette measures as separate knowledge outcomes because these required different methods of administration and measured different domains of children’s knowledge: factual knowledge and applied knowledge (Walsh et al., 2015).

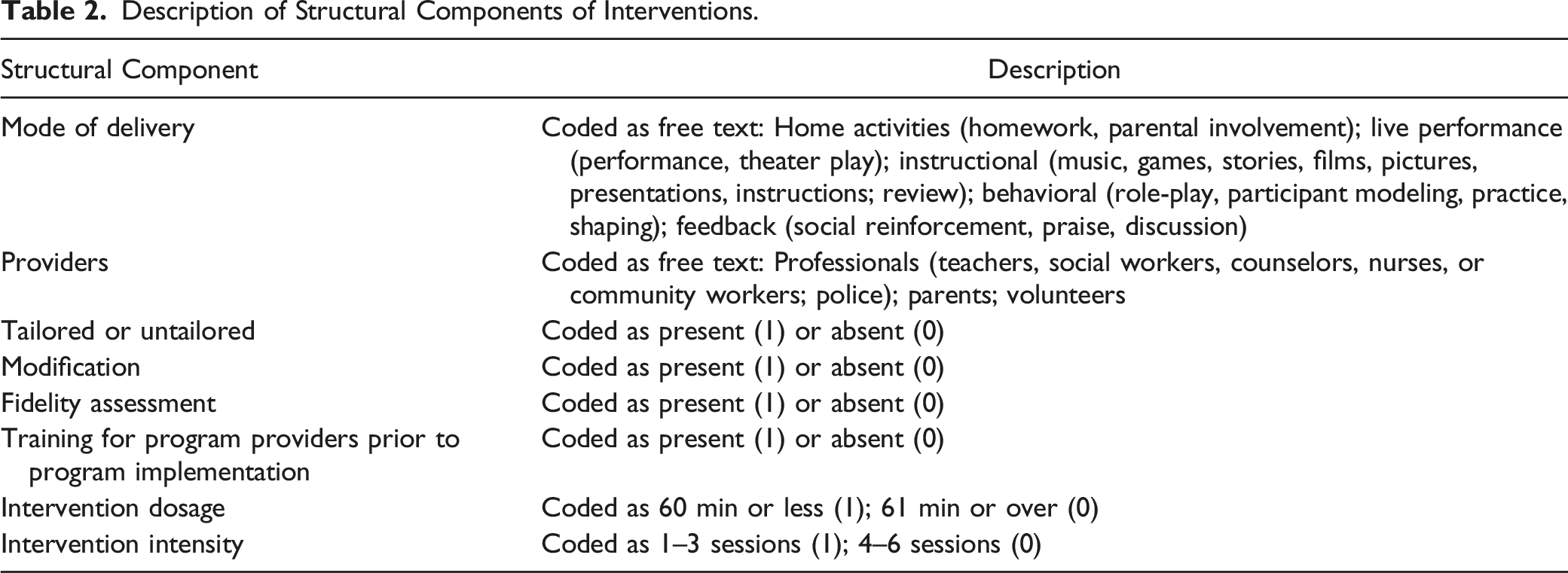

We extracted two categories of intervention components: structural components (e.g., mode of delivery, location, and provider) and content components (e.g., the information delivered during sessions). For structural components, we adopted the Template for Intervention Description and Replication (TIDieR) checklist (Hoffmann et al., 2014). The purpose of the TIDieR checklist is to ensure that interventions are described in sufficient detail to enable replication (Hoffmann et al., 2014). The TIDieR checklist is an official extension of the CONSORT (Item 5; Moher et al., 2010) and SPIRIT statements (Item 11; Moher & Chan, 2014). We extracted the following TIDieR checklist intervention characteristics from study reports: why (rationale of the intervention), what (materials used in the intervention), who (intervention providers), how (modes of delivery), where (location of intervention), when and how much (duration and number of sessions), tailoring (the adaptation of the intervention during the study), modification (the modification of the intervention during the study), and how well (fidelity assessment of the intervention during the study). For content components, we identified and inductively coded the themes or concepts addressed in the programs (Sutcliffe et al., 2015) and then summarized and categorized these components into ten dimensions. We contacted one study author for further clarification of the key information (Bustamante et al., 2019). We entered the extracted data into an Excel spreadsheet and imported it into RStudio (RStudio Team, 2015).

Data Analysis

We followed a three-stage data analysis process. To update evidence of overall program effectiveness, the first stage comprised an effectiveness synthesis, which aimed to identify statistically significant differences in the outcomes reported in the study results and to understand the differences between interventions in terms of the effectiveness of the component parts (Sutcliffe et al., 2015). To calculate the overall effect size for the primary outcome of interest (CSA knowledge gains), a random-effects model was used for the meta-analysis because the true effect was expected to vary with differences in interventions, teaching methods, and sample characteristics between studies. A random-effects model is useful for providing an overall effect estimate and characterizing the heterogeneity of effects across studies (DerSimonian & Laird, 2015).
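
The pooling step can be illustrated with a minimal Python sketch. It assumes the DerSimonian-Laird estimator of between-study variance and a normal-approximation 95% confidence interval; it is not the review's own analysis code, and the function name `random_effects_pool` and any inputs passed to it are hypothetical.

```python
import math


def random_effects_pool(effects, variances):
    """Pool study effect sizes under a random-effects model.

    Assumes the DerSimonian-Laird estimator for the between-study
    variance (tau^2). `effects` are per-study effect sizes (e.g. Hedges' g)
    and `variances` are their sampling variances.
    """
    k = len(effects)
    if k < 2:
        raise ValueError("at least two studies are needed to estimate tau^2")

    # Fixed-effect (inverse-variance) weights and pooled estimate.
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w

    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))

    # DerSimonian-Laird between-study variance, truncated at zero.
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c)

    # Random-effects weights add tau^2 to each study's sampling variance.
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))

    return {
        "pooled": pooled,
        "se": se,
        "ci": (pooled - 1.96 * se, pooled + 1.96 * se),
        "tau2": tau2,
        "q": q,
    }
```

When the studies disagree more than sampling error alone would predict, `tau2` becomes positive, the weights become more equal across studies, and the confidence interval widens.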

We estimated the amount of residual heterogeneity and the ratio of true to total variance, I². The I² statistic is interpreted as the proportion of the observed variability in a set of effect sizes that reflects real differences among true effects (Borenstein, Cooper, Hedges, & Valentine, 2009). A value of 0% indicates no observed heterogeneity, and I² values of 25%, 50%, and 75% are considered as indicating low, moderate, and high heterogeneity, respectively (Higgins, Thompson, Deeks, & Altman, 2003).
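
For readers who want to see how this statistic is obtained, the sketch below computes it from Cochran's Q using the standard definition of Higgins et al. (2003): the excess of Q over its degrees of freedom, as a percentage of Q, truncated at zero. The function name `i_squared` is hypothetical and the code is an illustration only, not the review's analysis script.

```python
def i_squared(effects, variances):
    """Return the heterogeneity statistic as a percentage (0 to 100).

    Computed as max(0, (Q - df) / Q) * 100, where Q is Cochran's Q
    around the fixed-effect pooled estimate and df = k - 1.
    """
    w = [1.0 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1

    # When Q does not exceed its expected value under homogeneity,
    # no excess variability is observed and the statistic is zero.
    if q <= df:
        return 0.0
    return (q - df) / q * 100.0
```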

Using methods not previously used in examining the effectiveness of school-based CSA interventions, to identify intervention components, and to understand variation in participant outcomes that may be attributed to characteristics of interventions, in the second stage we deductively coded, categorized, and described intervention structural components using the TIDieR checklist items as detailed above, and further explained below. We inductively coded, categorized, and described intervention content components that appeared in narrative text of the study reports. Once content components had been identified, we then mapped each study report against these newly-devised categories.

As an advance on previous reviews, using meta-regression we also explored intervention components as moderator variables, an approach that has not previously been possible owing to insufficient information provided in study reports (Davis & Gidycz, 2000; Walsh et al., 2015). Meta-regression allows for the evaluation of one or more covariates simultaneously (Baker et al., 2009). To identify the components that contribute to program effectiveness, in the third stage, with structural and content components clearly identified through the TIDieR checklist and ICA coding, we ran meta-regression to explore sources of heterogeneity among studies by testing whether effect size estimates varied significantly by intervention structural and content components. These components included: (a) modes of delivery; (b) providers; (c) the level of adaptation of the intervention (tailored or untailored); (d) the level of modification of the intervention; (e) fidelity assessment; (f) training for program providers prior to the program implementation; (g) intervention dosage; and (h) intervention intensity. We also examined whether participants’ characteristics contributed to program effectiveness. To further examine whether combinations of intervention components and study characteristics contributed to program effectiveness, we explored the association between effect sizes and intervention content components as well as study characteristics using multiple meta-regression (Harrer, Cuijpers, Furukawa, & Ebert, 2019).
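
The moderator test can be sketched in simplified form. The Python example below fits a meta-regression with a single covariate by weighted least squares, using inverse-variance weights and treating the between-study variance `tau2` as already estimated and supplied by the caller. These are simplifying assumptions for illustration: the function name `meta_regression` is hypothetical, and the multiple meta-regression described above would need a matrix-based fit with several covariates.

```python
import math


def meta_regression(effects, variances, moderator, tau2=0.0):
    """Weighted least-squares meta-regression with one moderator.

    Each study is weighted by 1 / (sampling variance + tau2). The slope
    estimates how the effect size changes per unit of the moderator
    (for a 0/1 moderator, the difference between the two subgroups).
    """
    w = [1.0 / (v + tau2) for v in variances]
    sum_w = sum(w)

    # Weighted means of the moderator and the effect sizes.
    x_bar = sum(wi * xi for wi, xi in zip(w, moderator)) / sum_w
    y_bar = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w

    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, moderator))
    if sxx == 0:
        raise ValueError("moderator must vary across studies")
    sxy = sum(
        wi * (xi - x_bar) * (yi - y_bar)
        for wi, xi, yi in zip(w, moderator, effects)
    )

    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    se_slope = math.sqrt(1.0 / sxx)  # valid when weights are inverse variances

    return {
        "intercept": intercept,
        "slope": slope,
        "se_slope": se_slope,
        "z": slope / se_slope,
    }
```

A moderator such as intervention dosage could be coded as 1 for programs with more than three sessions and 0 otherwise; the slope is then the estimated difference in effect size between the two groups of studies.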

Calculation of Effect Sizes

We calculated standardized effect sizes for all outcomes of interest. When possible, Hedges’ g was calculated for between-group effects for all continuous outcome measures. Where studies reported outcome data at mid-intervention, post-intervention, and/or at follow-up periods, the immediate post-intervention timepoint was used. Where multiple studies reported the outcomes for one trial, the study that reported the outcome of most relevance to the current review was used, namely, CSA knowledge gains or self-protective skills. In addition, some trials had multiple treatment arms (i.e., these studies did not conform to the standard two-arm “intervention group” vs. “control group” design and included two or more intervention groups). For the purpose of including the results of these studies in the meta-analysis, we followed the formulae for combining groups recommended by Higgins and Green (2011) and synthesized the results of the intervention arms to obtain one single comparison with the control group.
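
Both calculations can be sketched briefly in Python. The first function computes Hedges' g from group means, standard deviations, and sample sizes, using the usual small-sample correction factor; the second merges two intervention arms into one group using the combining-groups formulae from the Cochrane Handbook. The function names are hypothetical and the code illustrates the standard formulae only; it is not the review's analysis script.

```python
import math


def hedges_g(m1, sd1, n1, m2, sd2, n2):
    """Return (g, variance of g) for intervention (1) vs. control (2)."""
    df = n1 + n2 - 2
    # Pooled within-group standard deviation.
    sd_pooled = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (m1 - m2) / sd_pooled
    # Small-sample correction factor (approximate form).
    j = 1.0 - 3.0 / (4.0 * df - 1.0)
    var_d = (n1 + n2) / (n1 * n2) + d ** 2 / (2.0 * (n1 + n2))
    return j * d, j ** 2 * var_d


def combine_groups(n1, m1, sd1, n2, m2, sd2):
    """Merge two arms into one group; returns (n, mean, sd)."""
    n = n1 + n2
    mean = (n1 * m1 + n2 * m2) / n
    # Within-arm variability plus the spread between the two arm means.
    between = n1 * n2 / n * (m1 - m2) ** 2
    sd = math.sqrt(
        ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2 + between) / (n - 1)
    )
    return n, mean, sd
```

For a trial with two intervention arms, `combine_groups` would be applied to the two arms first, and the merged group would then be compared with the control group via `hedges_g`.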

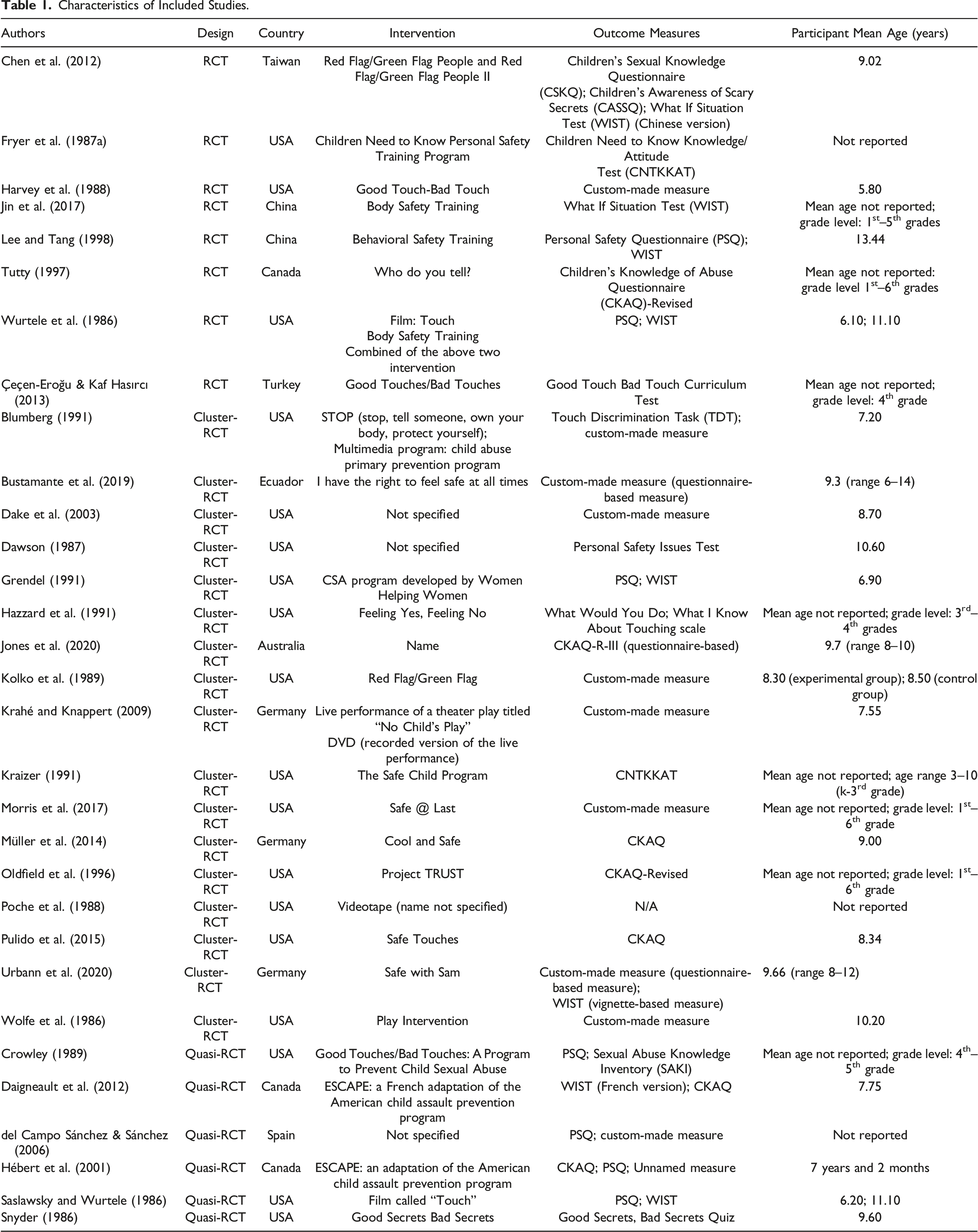

Assessment of Risk of Bias in Included Studies

The methodological quality of the included studies was assessed using the Cochrane Risk of Bias Tool (v1.0; Higgins et al., 2011), which includes criteria to assess the risk of bias across six different domains: random sequence generation, allocation concealment, blinding of participants and personnel, blinding of outcome assessment, incomplete outcome data, and selective reporting. Each domain was assigned a judgment of “low,” “unclear,” or “high” risk of bias.

Sensitivity Analysis

Where appropriate, we performed sensitivity analyses to examine whether outliers affected the magnitude and significance of effect sizes. Outliers were defined as studies whose confidence intervals did not overlap with the confidence interval of the pooled effect (Harrer et al., 2019).
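
This outlier rule is simple to express in code. The Python sketch below flags any study whose confidence interval lies entirely above or entirely below the pooled interval; the function name `find_outliers` and the study labels in the usage example are hypothetical.

```python
def find_outliers(study_cis, pooled_ci):
    """Return the names of studies whose CI does not overlap the pooled CI.

    `study_cis` maps a study label to a (lower, upper) tuple;
    `pooled_ci` is the (lower, upper) interval of the pooled effect.
    """
    pooled_lower, pooled_upper = pooled_ci
    return [
        name
        for name, (lower, upper) in study_cis.items()
        # No overlap: the study interval ends before the pooled interval
        # starts, or starts after the pooled interval ends.
        if upper < pooled_lower or lower > pooled_upper
    ]


# Hypothetical study intervals checked against a pooled CI of [0.52, 0.93].
example = {
    "Study A": (0.10, 0.40),
    "Study B": (0.60, 1.10),
    "Study C": (1.00, 1.50),
}
flagged = find_outliers(example, (0.52, 0.93))
```

A sensitivity analysis would then re-run the pooled estimate without the flagged studies and compare the two results.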

Results

Results of the Search

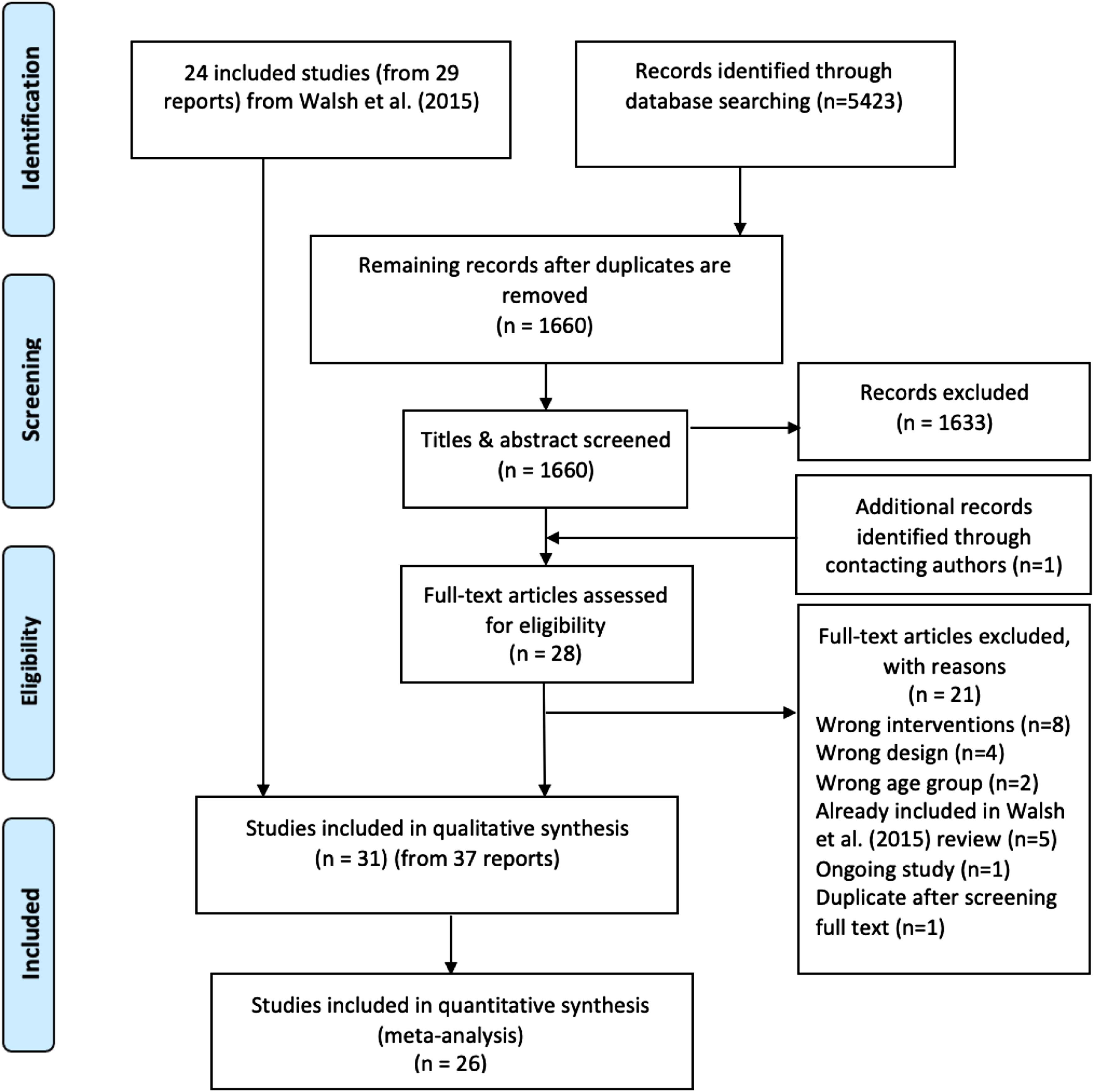

The study flow diagram (PRISMA flowchart) is presented in Figure 1. Database searches yielded 5,423 records, which were then imported into the reference management software Mendeley (version 1.19.3; Elsevier, 2018). After duplicates were removed, 1,660 records remained for title and abstract screening. One additional record was identified through contacting authors. Two papers (Holloway & Pulido, 2018; Pulido et al., 2015) reported the same trial, and we selected the earlier report (Pulido et al., 2015) because it reported both pre- and post-test data.

Figure 1. PRISMA flowchart.

After title and abstract screening against the inclusion and exclusion criteria, twenty-eight records progressed to full-text review alongside 29 records from the Walsh et al. (2015) review, which used identical inclusion and exclusion criteria to assess eligibility. Full-text reports of the 28 newly-identified records were assessed, including four studies published in Chinese-language journals. Twenty-one papers were excluded: five because they were already accounted for in the Walsh et al. (2015) review, and sixteen for reasons documented in the PRISMA flowchart, including the four Chinese-language studies, which were excluded on the study design criteria. One newly-identified study (Jin, Chen, Jiang, & Yu, 2017) was conducted in China and published in an English-language journal. A total of seven new studies (from eight reports) were identified. Together, the new searches (seven studies from eight reports) and the Walsh et al. (2015) searches (24 studies from 29 reports) resulted in 31 included studies (from 37 reports).

Study Characteristics

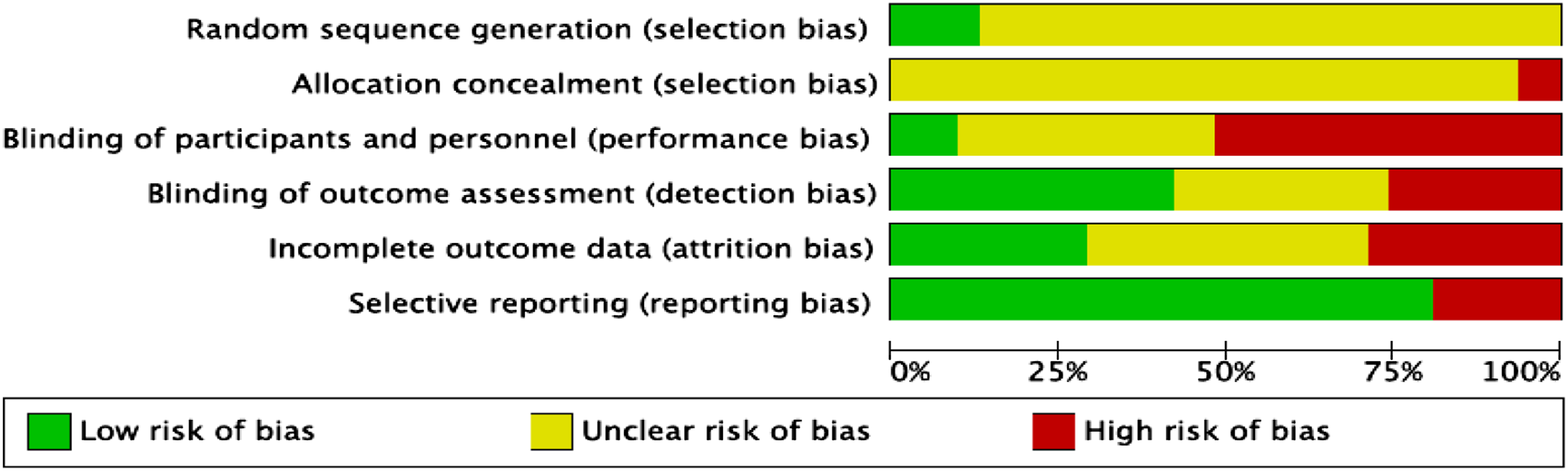

Characteristics of Included Studies.

Designs

Of the 31 studies, seven were RCTs, eighteen were cluster-RCTs, and six were quasi-RCTs. The unit of randomization in twenty-one studies was groups of children (e.g., classes, schools, or administrative collectives such as districts). Of these, eighteen studies were cluster-RCTs (as above) and three were quasi-RCTs. The unit of randomization in the remaining ten trials was individual school students. Of these, seven studies were RCTs and three were quasi-RCTs.

Participants

A total of 9049 participants were included across the 31 studies (5802 participants from Walsh et al.’s review and 3247 from the newly-included studies). Sample sizes in cluster-RCTs ranged from 74 (Poche et al., 1988) to 1269 (Oldfield et al., 1996). For the standard RCTs, the sample sizes ranged from 46 (Chen et al., 2012) to 565 (Jin et al., 2017).

The studies were conducted in several countries: the majority (n = 18) were from the United States, three were conducted in Canada and Germany, two were conducted in China, and one apiece in Ecuador, Spain, Taiwan, and Turkey.

The mean age of participants could be identified in 21 studies. Authors of 10 studies did not report the mean age of participants. Where identified, participant mean age ranged from 5.8 years (Harvey et al., 1988) to 13.44 years (Lee & Tang, 1998). The proportion of females in the included studies ranged from 44.20% (Pulido et al., 2015) to 56.10% (Jones et al., 2020). As with Walsh et al.’s (2015) review, only one study included female-only participants (Lee & Tang, 1998). Gender data for participants were not reported in five studies. Of note, gender data were reported in all newly-included studies.

Outcome Measures

Questionnaire-based measures used in the studies included in the meta-analysis comprised the Personal Safety Questionnaire (PSQ); the Children’s Knowledge of Abuse Questionnaire and versions thereof (CKAQ, CKAQ-R, and CKAQ-III-R); the Children Need to Know Knowledge/Attitude Test; the What I Know About Touching Scale; the Good Touch Bad Touch Curriculum Test; Children’s Awareness of Scary Secrets; the Children’s Sexual Knowledge Questionnaire (CSKQ); the Good Secrets Bad Secrets Quiz; the Personal Safety Issues Test; and the Sexual Abuse Knowledge Inventory (SAKI). Other custom-designed and unnamed measures were also used. Vignette-based measures included the What If Situations Test (WIST), the Touch Discrimination Task (TDT), and What Would You Do? (WWYD); other unnamed measures were also used.

Risk of Bias in Included Studies

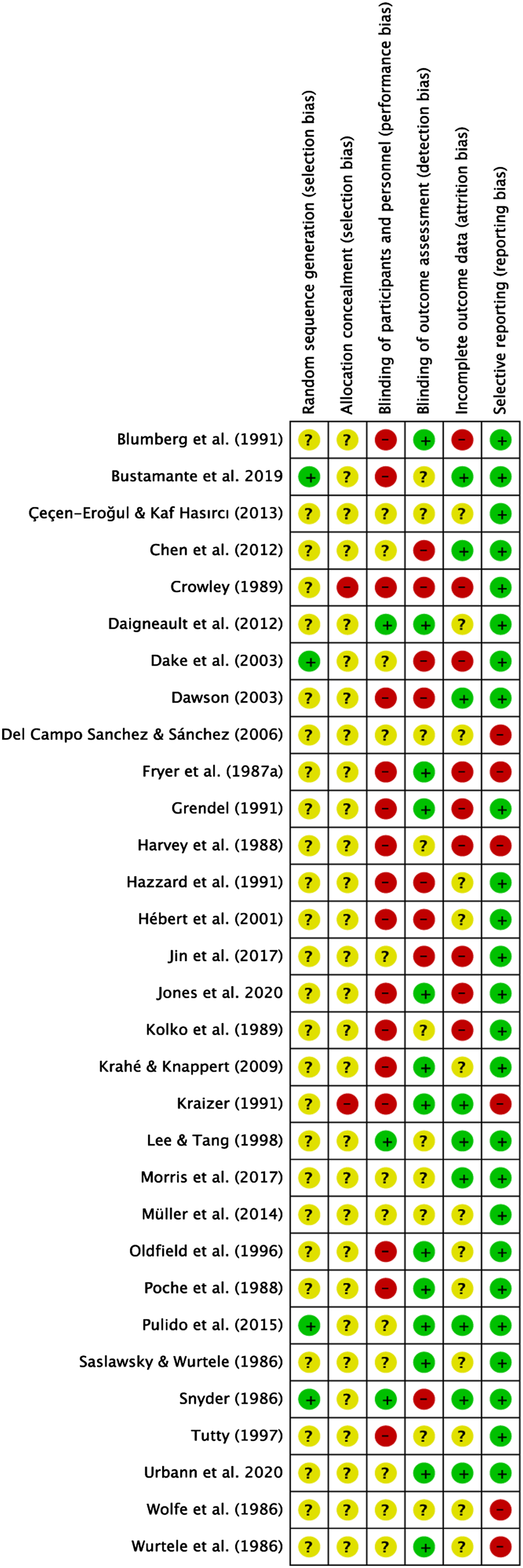

Random Sequence Generation (Selection Bias)

Figure 2 (summary for individual studies) and Figure 3 (summary across all studies) provide details on the quality of the included studies. Notably, only four studies reported successful randomization, two of which were identified in the new search. The remaining 27 studies did not explicitly state the randomization process used and were therefore rated as unclear risk.

Figure 2. Risk of bias assessment of included studies.

Figure 3. Review authors’ judgments about each risk of bias item across all included studies.

Allocation Concealment (Allocation Bias)

Twenty-nine of the included studies did not report the strategies used for allocation concealment. We therefore classified these studies as unclear risk of bias. Further to this, two studies were rated as high risk of bias by Walsh et al. (2015) due to failure of randomization and the involvement of school staff in the randomization process. None of the seven studies identified in the new search reported allocation concealment procedures, and these were also rated as unclear risk of bias.

Blinding of Participants and Personnel (Performance Bias)

Only three trials reported blinding of participants and personnel; we therefore classified these studies as low risk of bias. Sixteen studies (two of which were newly-included studies) were rated as high risk of bias due to the potential bias resulting from knowledge about the study group. Eleven studies did not report on the blinding of participants and personnel and were therefore rated as unclear risk of bias. Five of the seven newly-included studies did not clearly report blinding of participants and personnel and were likewise classified as unclear risk of bias.

Blinding of Outcome Assessment (Detection Bias)

Blinding of outcome assessment was not reported in ten studies; therefore, we rated them as unclear risk of bias. Thirteen studies (including six studies identified in our new search) were classified as low risk of bias because authors reported using strategies to minimize outcome assessment bias. Eight studies were rated as high risk of bias as no strategies were in place to blind outcome assessors to group membership or to ensure children completed the assessment independently.

Incomplete Outcome Data (Attrition Bias)

Consistent with Walsh et al.’s (2015) criteria for attrition bias, we classified studies as low risk of bias when attrition rates were less than 10% and high risk of bias when attrition rates were more than 10%. Following these criteria, nine studies were rated as low risk of bias because they reported no attrition or loss to follow-up, or reported attrition rates lower than 10%. Nine trials reported attrition rates higher than 10%; we therefore rated them as high risk of attrition bias. Thirteen studies did not report attrition rates; we classified these studies as unclear risk of bias.

Selective Reporting (Reporting Bias)

Six studies were rated as high risk of reporting bias because data for outcomes were not reported completely in the results section. The remaining 25 studies (including seven studies identified in our new search) were rated as low risk of bias.

Meta-analysis of the Overall Effectiveness of School-based CSA Interventions

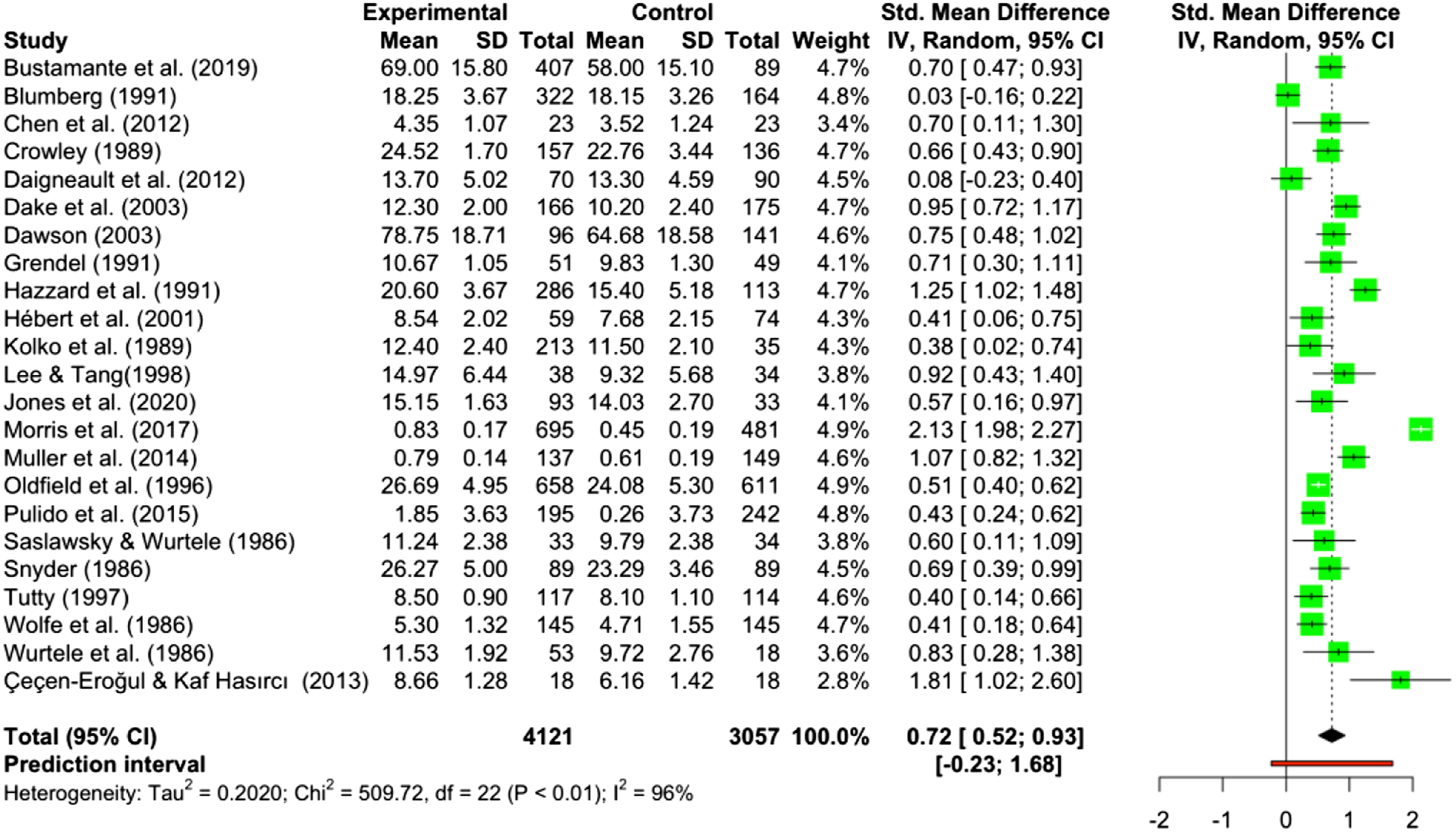

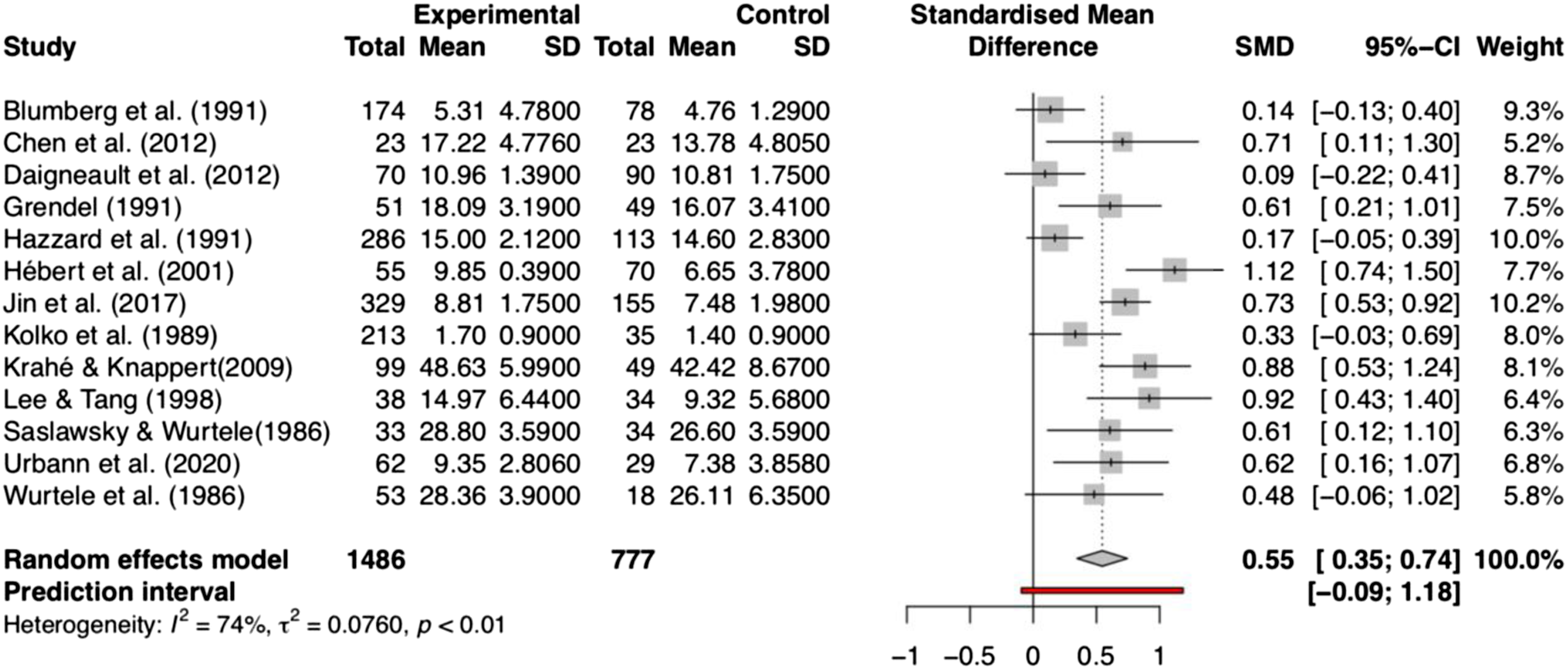

Twenty-five studies were included in the quantitative synthesis (see Figure 1). Two meta-analyses were conducted. A total of 23 studies with 7191 participants were included in the meta-analysis of participant knowledge outcomes assessed using questionnaire-based measures (i.e., assessment of factual knowledge), and a total of 13 studies (2264 participants) were included in the meta-analysis of knowledge outcomes assessed using vignette-based measures (i.e., assessment of applied knowledge).

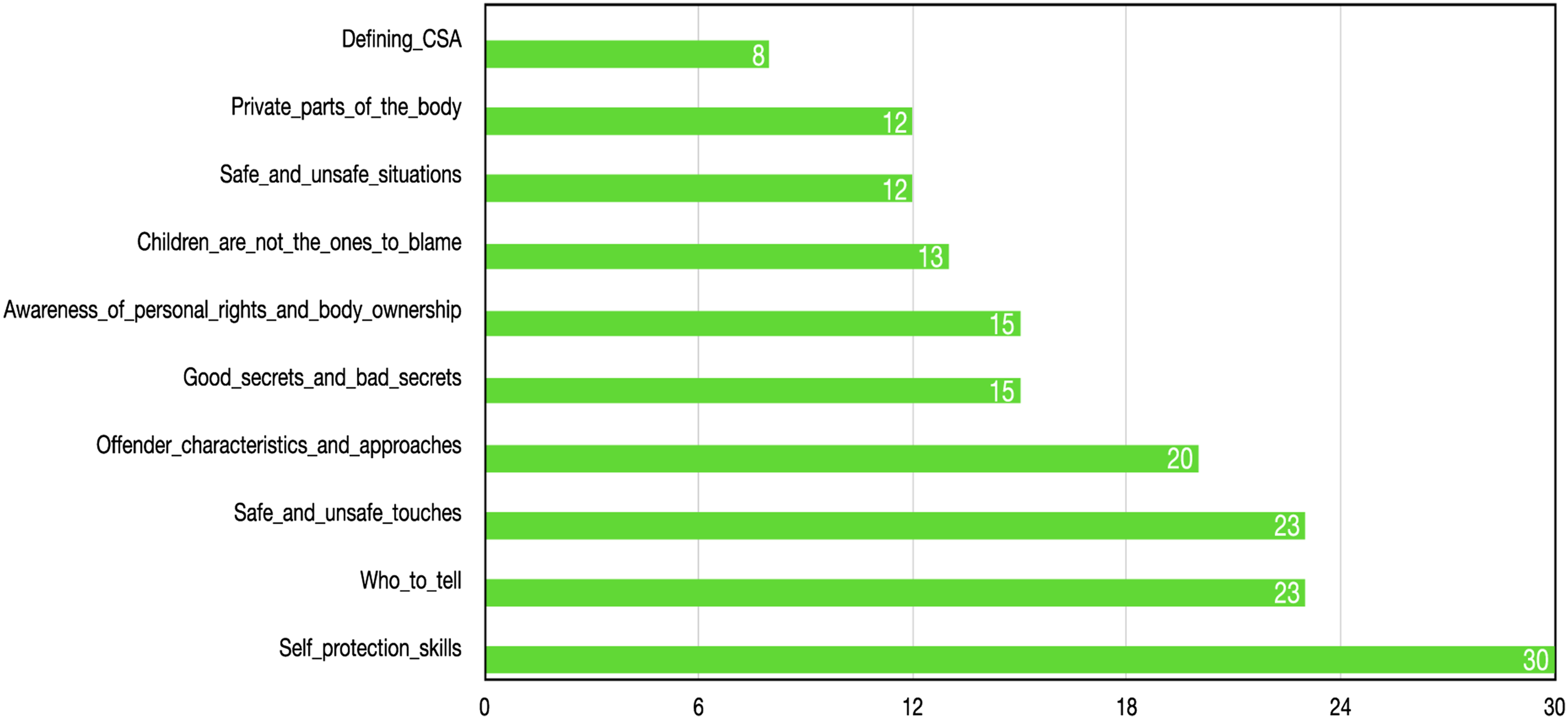

The results of the two meta-analyses are presented in Figures 4 and 5. The random effects meta-analysis showed a significant medium to large effect size (Hedges’ g) of 0.72 (95% CI [0.52, 0.93]) and 0.55 (95% CI [0.35, 0.74]) for factual knowledge with questionnaire-based measures and applied knowledge with vignette-based measures, respectively. This indicates that students who have participated in CSA interventions showed higher levels of factual and applied CSA knowledge than participants who did not receive a CSA intervention program. As expected, there was significant heterogeneity in effect sizes across studies using both measures. These results suggest the importance of examining moderating variables and the value of conducting meta-regression.

Figure 4. Questionnaire-based CSA knowledge gains.

Figure 5. Vignette-based CSA knowledge gains.

Content components in interventions evaluated in the included studies (n = 31).

Intervention Components Analysis

Structural Components

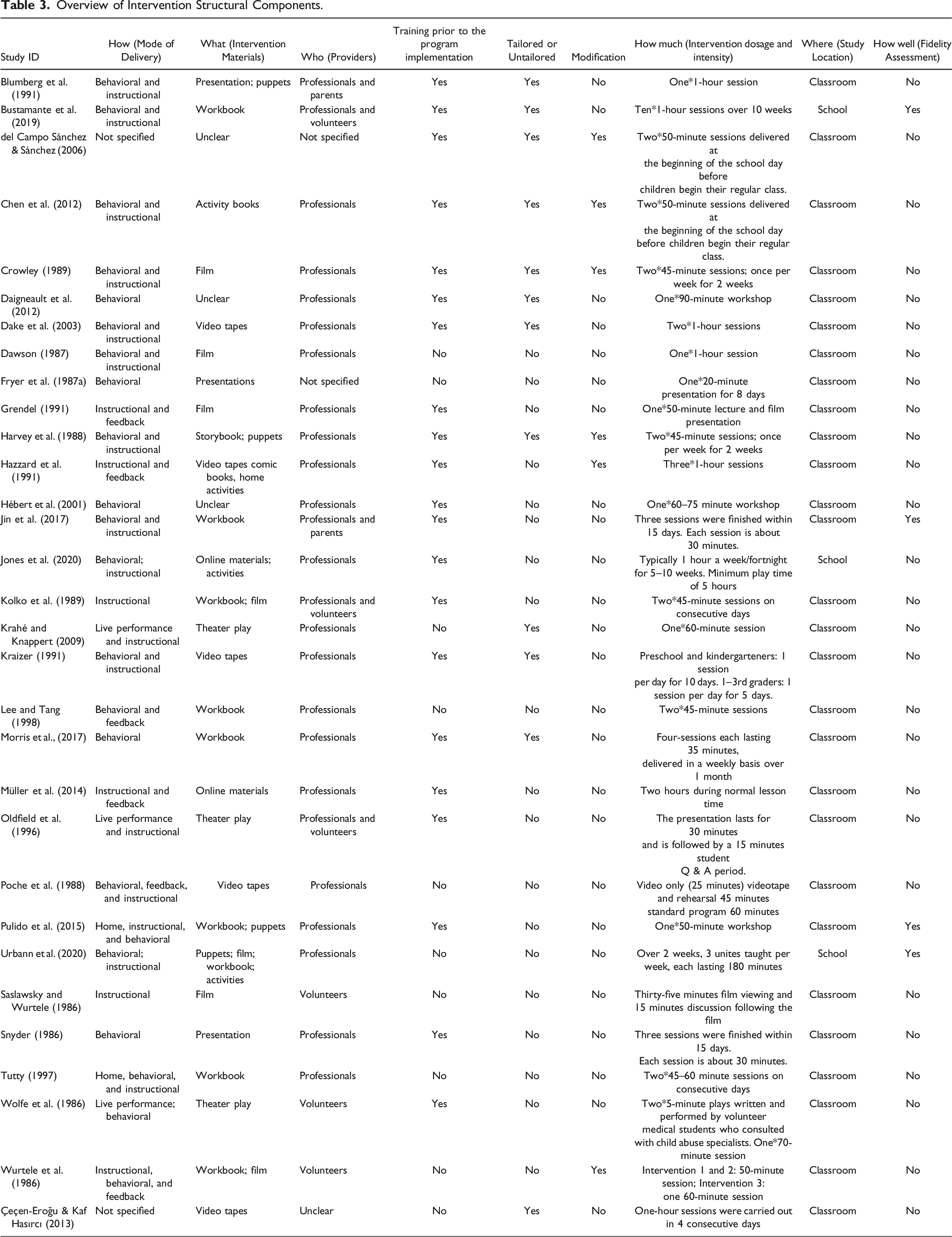

Description of Structural Components of Interventions.

Overview of Intervention Structural Components.

Modes of Delivery

Twenty-one studies incorporated role-play teaching strategies to achieve the intervention goals. Eighteen studies included discussion as a teaching strategy in the program to enhance participants’ understanding of CSA. Seventeen studies employed the use of video recordings or films and six studies incorporated parental engagement or home activities outside the classroom as modalities. Two studies used live performance or theater plays to achieve intervention goals.

Intervention Dosage and Intensity

The duration of interventions ranged from a single 50-minute session (Pulido et al., 2015) to ten one-hour sessions delivered over a 10-week period (Bustamante et al., 2019); both of these studies were among the seven newly-included studies. One study (Daigneault et al., 2012) included three types of additional booster sessions beyond the initial program sessions: a complete version of the original program (ESPACE), a brief version of the original program (for third and fourth graders), or a program called “Confidence, Solidarity, Respect” (90 minutes), which revisited ESPACE concepts and addressed gender-based violence and respect (for fifth and sixth graders). These boosters were delivered 2 years after the initial intervention.

Provider of the Intervention

The intervention providers fell into three categories: professionals, parents, and volunteers (including student volunteers). In twenty-three studies, the intervention was delivered by professionals such as teachers, guidance counselors, social workers, mental health professionals, and police. In one study, it was delivered by both parents and professionals (Jin et al., 2017). In five studies, it was delivered by volunteers, including students. Three studies did not provide details about the intervention provider.

Training Prior to Program Implementation

Twenty studies reported providing training sessions for parents and/or teachers prior to program implementation.

Program Tailoring

Twenty-two studies did not report whether or not the program was tailored or adapted. The remaining nine studies reported tailored components. Tailored components included using different teaching strategies to accommodate children’s developmental levels and interests (e.g., teaching younger children songs about safe and unsafe touches and using less complex role plays with younger children), and using culturally adapted names for characters in practical scenarios and examples.

Program Modification

Five studies reported modifying the program during the implementation process. Modifications included using a shorter version of the program to keep the length of the entire program compatible with school conditions, modifying program length for students in different grade or year levels, and adjusting the volume of content presented to the students in a given session.

Fidelity Assessment

Four included studies reported fidelity assessment. All were newly included studies. Bustamante et al. (2019) included volunteers to assist program implementation to maintain a degree of standardization between classrooms. Pulido et al. (2015) and Urbann et al. (2020) utilized a fidelity checklist/questionnaire to track whether the program was delivered according to the study protocol. In Jin et al.’s (2017) study, each intervention session was monitored by one of the researchers and fidelity checklists were also used to ensure that program providers covered key concepts.

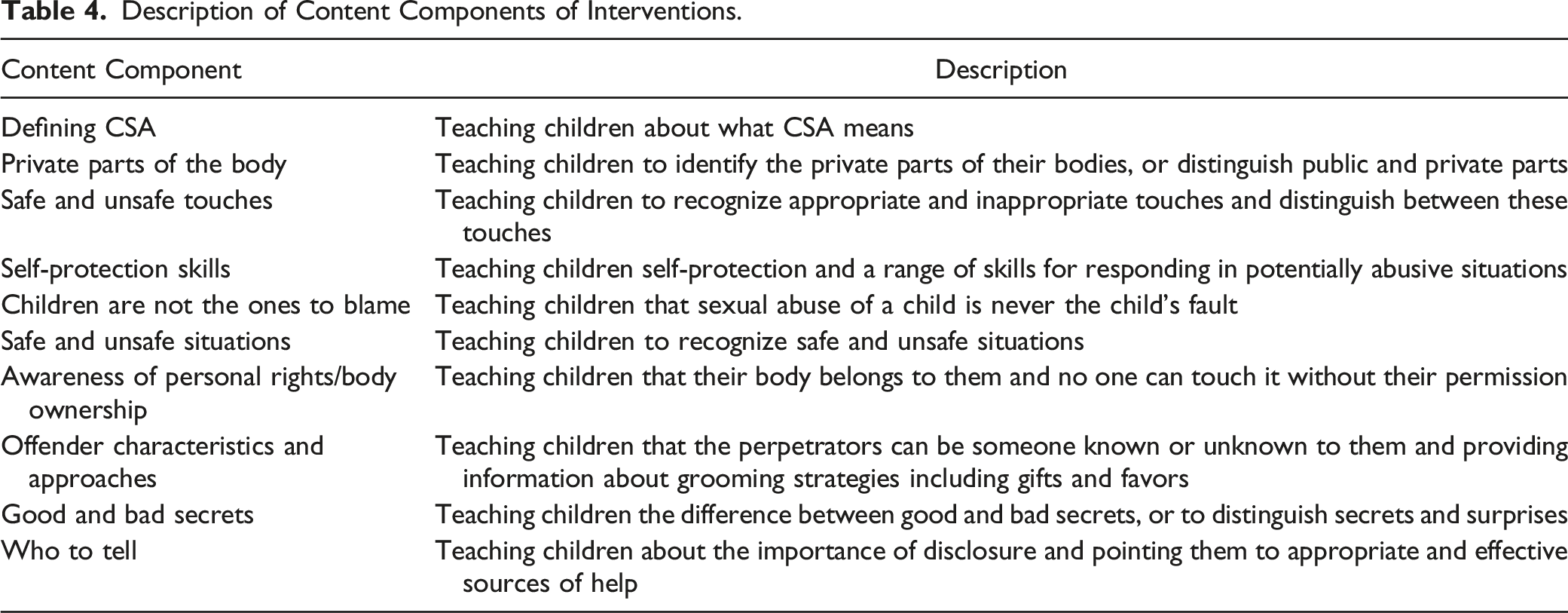

Content Components

The first author and the last author (ML and YW) inductively coded, categorized, and systematically described intervention content components that appeared in the narrative text of the study reports. Any discrepancies between the two reviewers were resolved by discussion or consultation with a third reviewer. Cohen’s kappa was calculated to measure the inter-rater agreement between the two reviewers; the result (κ = 0.72) indicated substantial agreement between reviewers regarding the coded components (McHugh, 2012). The details of the coding process are available upon request.
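For readers unfamiliar with the statistic, Cohen’s kappa compares the observed proportion of agreement between two coders with the agreement expected by chance from each coder’s marginal frequencies. The sketch below shows the calculation for a single binary code (component present or absent); the function name and the two coding vectors are hypothetical and do not reproduce the coding data from this review.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning one categorical code per item."""
    n = len(rater_a)
    # Observed agreement: share of items given the same code by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over codes.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical codes for ten study reports: 1 = component present, 0 = absent.
coder_1 = [1, 1, 0, 1, 0, 0, 1, 1, 0, 1]
coder_2 = [1, 1, 0, 0, 0, 0, 1, 1, 1, 1]
kappa = cohens_kappa(coder_1, coder_2)
print(round(kappa, 2))
```

In this toy example the coders agree on 8 of 10 items, but because chance agreement is 0.52, kappa is noticeably lower than the raw 80% agreement.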

Description of Content Components of Interventions.

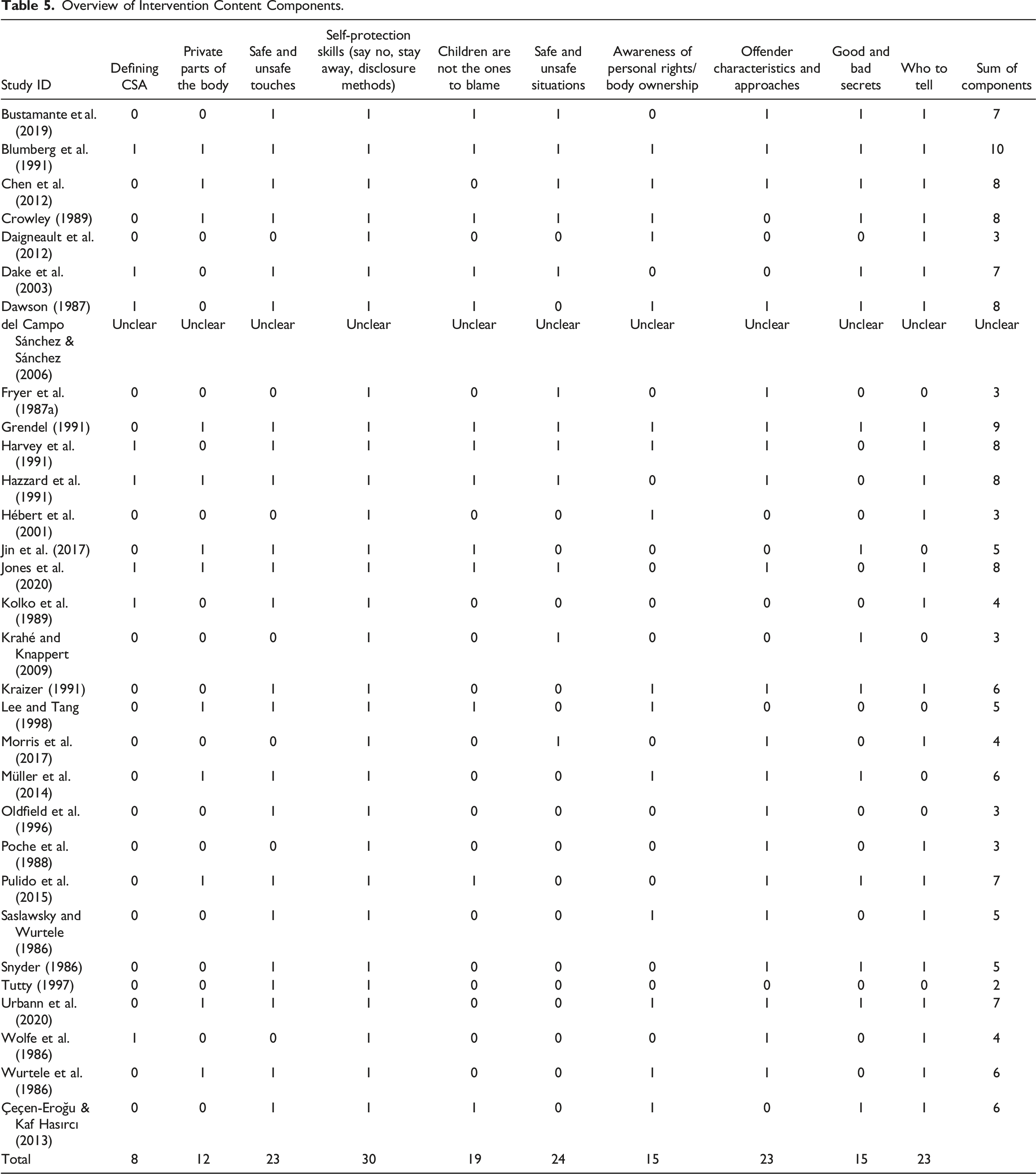

Overview of Intervention Content Components.

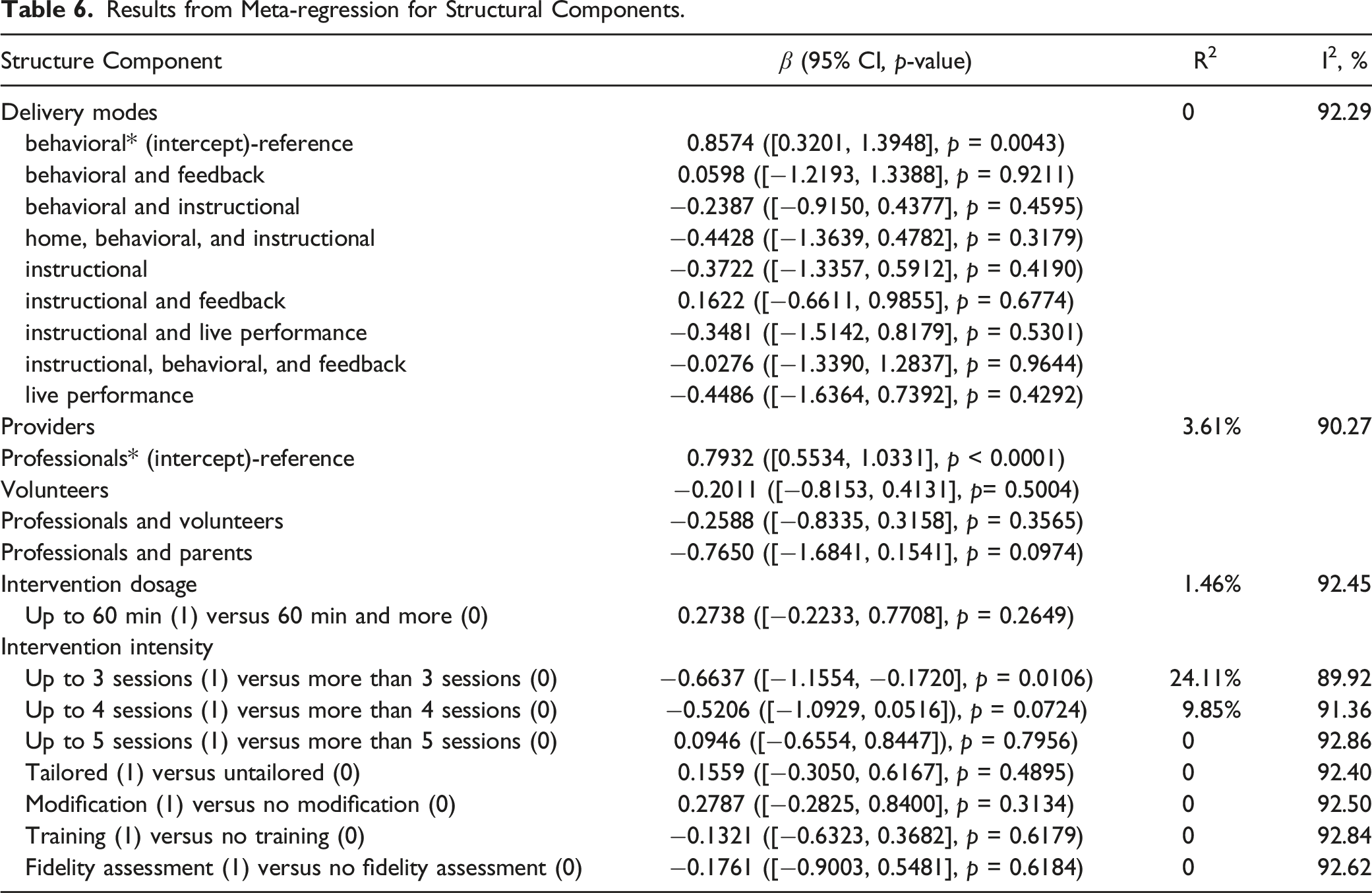

Results from Meta-regression for Structural Components.

Results from Meta-regression Analyses

Meta-regression of Intervention Structural Components

The results of the meta-regression for structural components showed that only one of the eight components was significantly associated with program effects, as shown in Table 6. To explore how many intervention sessions are associated with greater program effectiveness, we dichotomized the total number of intervention sessions at three alternative cut-offs: (a) up to 3 sessions (1) versus more than 3 sessions (0); (b) up to 4 sessions (1) versus more than 4 sessions (0); and (c) up to 5 sessions (1) versus more than 5 sessions (0). Although the coefficients for both the three-session and four-session cut-offs were negative, only the three-session cut-off showed a statistically significant relationship with effect size (β = −0.6637, 95% CI [−1.1554, −0.1720], p = 0.0106). Because programs with up to three sessions were coded 1, this negative coefficient indicates that interventions with more than three sessions tended to be more effective than interventions with three or fewer sessions. We did not observe a statistically significant relationship between other structural components and effect size.
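The direction of a coefficient from a dummy-coded moderator can be easy to misread, so a small sketch may help. It assumes the coding described above (programs with up to the cut-off number of sessions coded 1, longer programs coded 0) and fits a weighted least-squares meta-regression with a single moderator. The function names, the optional `tau2` argument, and all data values are hypothetical, and this simplified model is not necessarily the exact model used in the review.

```python
import math

def dummy_code(sessions, cutoff):
    """Return 1 for programs with up to `cutoff` sessions, 0 for longer programs."""
    return [1 if s <= cutoff else 0 for s in sessions]

def meta_regression(effects, variances, moderator, tau2=0.0):
    """Weighted least-squares meta-regression of effect size on one moderator."""
    w = [1.0 / (v + tau2) for v in variances]          # inverse-variance weights
    sw = sum(w)
    x_bar = sum(wi * x for wi, x in zip(w, moderator)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, effects)) / sw
    sxx = sum(wi * (x - x_bar) ** 2 for wi, x in zip(w, moderator))
    sxy = sum(wi * (x - x_bar) * (y - y_bar)
              for wi, x, y in zip(w, moderator, effects))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    se = math.sqrt(1.0 / sxx)                          # standard error of the slope
    return intercept, slope, (slope - 1.96 * se, slope + 1.96 * se)

# Hypothetical studies: number of sessions, Hedges' g, and sampling variance.
sessions = [1, 2, 3, 4, 6, 10]
effects = [0.30, 0.40, 0.35, 0.90, 1.00, 1.10]
variances = [0.02] * 6

moderator = dummy_code(sessions, cutoff=3)
intercept, slope, ci = meta_regression(effects, variances, moderator)
print(moderator, round(intercept, 2), round(slope, 2))
```

With this coding, the intercept is the weighted mean effect for programs with more than three sessions, and the slope is the difference for shorter programs, so a negative slope means that shorter programs had smaller effects.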

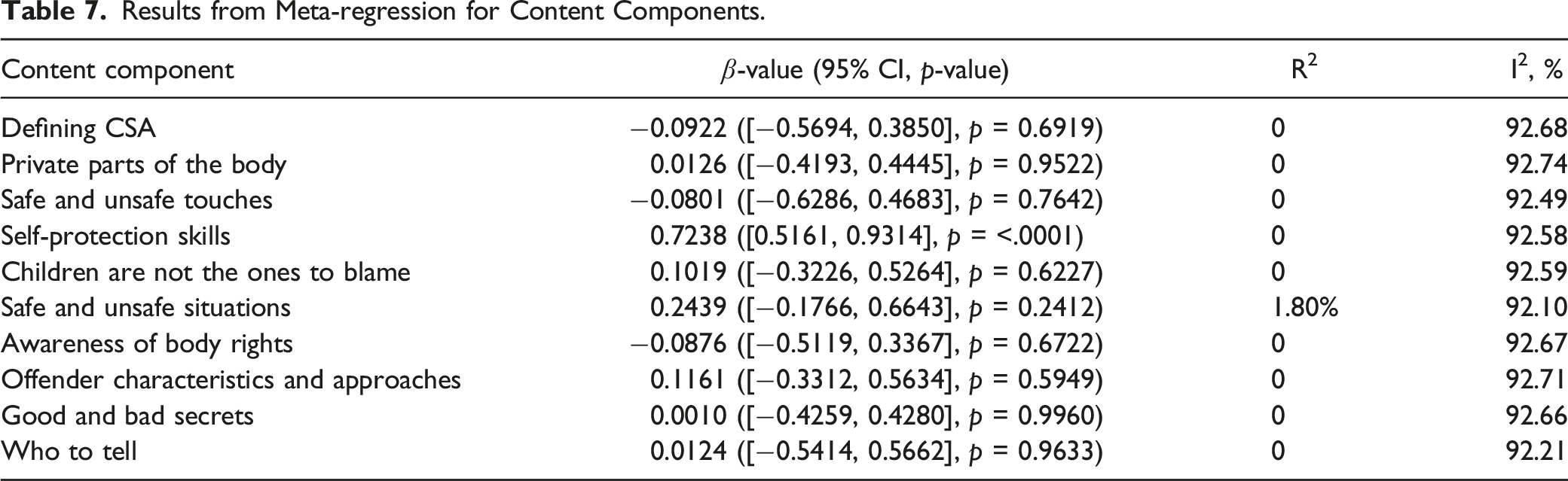

Meta-regression of Intervention Content Components

Results from Meta-regression for Content Components.

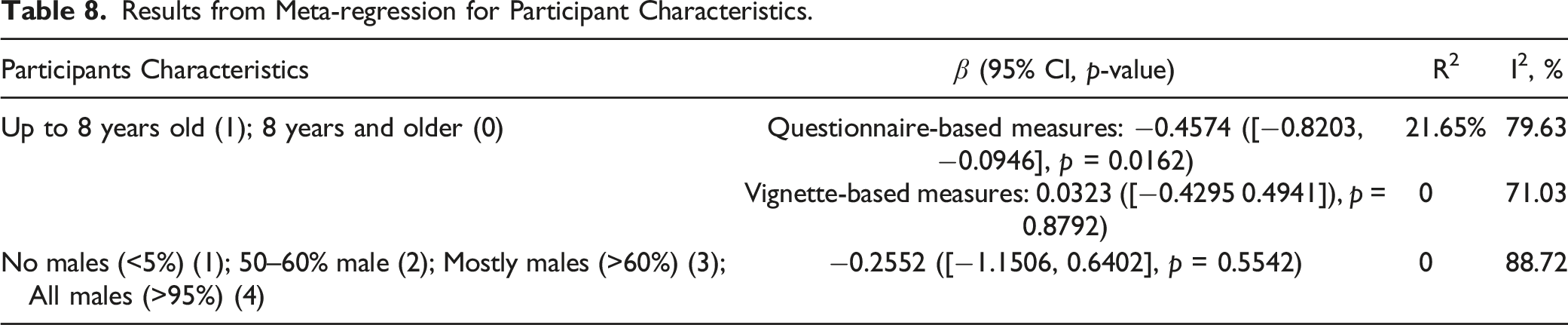

Meta-regression Results of Participant Characteristics

Demographic characteristics such as participant age, gender, ethnicity, socioeconomic position, and ability level are potential effect moderators and might explain existing heterogeneity (Walsh et al., 2015). However, participant demographic information was poorly reported in the included studies, and we were able to extract data only for participant age and gender.

We conducted meta-regression to explore the effects of participants’ age on knowledge outcomes measured using different instruments (questionnaire-based measures vs. vignette-based measures). We treated eight years of age as the cut-off for the meta-regression based on the cut-off used in previous studies/reviews (Davis & Gidycz, 2000; Tutty, 1997) or used corresponding school grades as the cut-off when ages were not provided (e.g., kindergarten to third grade and fourth grade and above). The selection of this cut-off is educationally relevant as it is consistent with the distinction between the early years of school and middle school. For studies using questionnaire-based measures, this categorization resulted in two groups of studies: (a) four studies with participants younger than eight years old; (b) sixteen studies including participants aged eight years and older. The results of meta-regression suggest that when testing factual knowledge utilizing questionnaire-based measures immediately after receiving the program, older children (eight years and older) show greater knowledge gains than children who are younger than eight years old (β = −0.4574, 95% CI [−0.8203, −0.0946], p = 0.0162).

For studies using vignette-based measures, four studies included participants younger than eight years old. Eight studies included participants aged eight years and older. The results of the meta-regression show that when testing applied knowledge using vignette-based measures immediately after the program, we did not observe any association between participants’ age and program effectiveness (β = 0.0323, 95% CI [−0.4295, 0.4941], p = 0.8792).

Results from Meta-regression for Participant Characteristics.

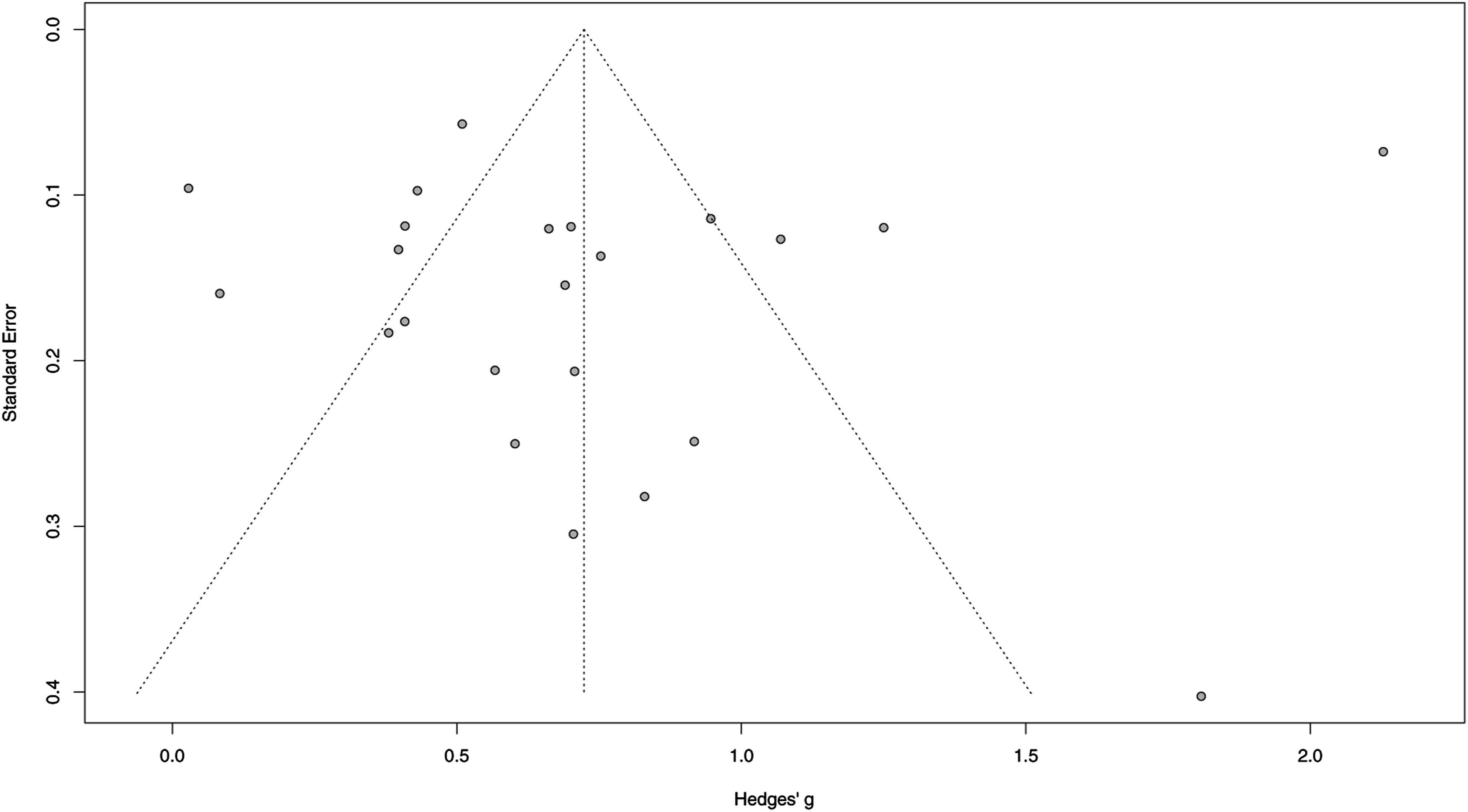

Sensitivity Analysis and Publication Bias

We conducted sensitivity analyses to examine whether study quality was associated with the magnitude and significance of effect sizes. Five studies (Blumberg et al., 1991; Daigneault et al., 2012; Hazzard et al., 1991; Morris et al., 2017; Çeçen-Eroğu & Kaf Hasırcı, 2013) were identified in which the effect size confidence intervals did not overlap with the confidence interval of the pooled effect (Harrer et al., 2019). The results of the sensitivity analysis in which these five studies were removed showed an overall effect size of 0.6360 (95% CI [0.5302, 0.7417], p < 0.0001), which was lower than the original effect size of 0.7234 (95% CI [0.5159, 0.9310], p < 0.0001) but was still in the range for a medium effect. The I² value was reduced from 95.7% to 58.8%, indicating that even with studies at higher risk of bias removed, this remains a heterogeneous set of studies.
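The rule used above to flag studies (a study’s confidence interval lies entirely outside the confidence interval of the pooled effect) can be expressed in a few lines. The sketch below uses the pooled interval reported in this section, but the study labels and study-level intervals are hypothetical placeholders, not the intervals of the five studies named above.

```python
def find_outliers(study_cis, pooled_ci):
    """Flag studies whose confidence interval does not overlap the pooled interval."""
    pooled_low, pooled_high = pooled_ci
    return [name for name, (low, high) in study_cis.items()
            if high < pooled_low or low > pooled_high]

# Pooled 95% CI as reported for the questionnaire-based measures.
pooled_interval = (0.5159, 0.9310)

# Hypothetical study-level 95% CIs (illustration only).
study_intervals = {
    "Study A": (0.10, 0.45),   # entirely below the pooled interval
    "Study B": (0.40, 0.80),   # overlaps
    "Study C": (1.00, 1.60),   # entirely above the pooled interval
    "Study D": (0.60, 1.10),   # overlaps
}

outliers = find_outliers(study_intervals, pooled_interval)
print(outliers)
```

After flagged studies are removed, the pooled effect and I² would be re-estimated on the remaining studies and compared with the original values.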

Finally, we assessed potential publication bias for the 23 studies included in the meta-analysis. Egger’s test provided no evidence of publication bias (intercept: −1.286; 95% CI [−6.03, 3.46]; p = 0.60). Although this result did not reach statistical significance, we cannot fully rule out publication bias because subjective visual assessment of the funnel plot (Figure 7) suggested that there were fewer studies at the bottom left and bottom right on both sides of the center, indicating the potential for missing studies.

Funnel plot used to explore publication bias.
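Egger’s test is usually described as a regression of each study’s standardized effect (effect divided by its standard error) on its precision (the inverse of the standard error); an intercept far from zero suggests funnel plot asymmetry. The following is a minimal sketch of that classical formulation using ordinary least squares. The function name and input values are hypothetical, and the p-value is left out because it requires a t distribution that the Python standard library does not provide.

```python
import math

def eggers_test(effects, std_errors):
    """Egger's regression: standardized effect regressed on precision (OLS)."""
    y = [g / se for g, se in zip(effects, std_errors)]   # standardized effects
    x = [1.0 / se for se in std_errors]                  # precision
    n = len(y)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    # Residual variance with n - 2 degrees of freedom.
    resid_var = sum((yi - intercept - slope * xi) ** 2
                    for xi, yi in zip(x, y)) / (n - 2)
    se_intercept = math.sqrt(resid_var * (1.0 / n + x_bar ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept, n - 2

# Hypothetical data in which less precise studies report larger effects.
example_effects = [0.50, 0.55, 0.70, 0.80, 0.95]
example_ses = [0.10, 0.15, 0.20, 0.25, 0.30]
intercept, se_intercept, t_stat, df = eggers_test(example_effects, example_ses)
print(intercept, se_intercept, t_stat, df)
```

The returned t statistic would be compared against a t distribution with `df` degrees of freedom; as the paragraph above notes, a non-significant intercept does not by itself rule out publication bias.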

Discussion

This systematic review and meta-analysis advances on the substantive work undertaken in previous systematic reviews in several ways. First, by replicating searches using identical search and screening strategies and by additionally searching Chinese-language databases, this review captured studies from one of the world’s most populous regions. Second, this systematic review and meta-analysis is the first to catalogue CSA prevention programs’ structural and content components in a way that enables systematic assessment of factors associated with program effectiveness. By systematically mapping out intervention components using inductive thematic analysis, the findings from this review advance the field’s understanding of intervention components in existing school-based CSA prevention programs. This innovation can, in turn, serve several purposes, including providing criteria for program development and the potential to develop an auditing tool for program quality assessment. Third, we provided a robust analysis of the predictors of greater program effectiveness, which are of particular importance for contexts such as LMICs, where resources are limited and the impetus to avoid resource wastage and provide only effective interventions is high.

The majority of included studies (n = 26) were conducted in high-income countries (HICs); only five studies were conducted in LMICs and upper-middle income countries. It should be noted that programs used in these evaluation studies (e.g., Red Flag/Green Flag People, Body Safety Training, Behavioral Safety Training, and Good Touches/Bad Touches) were all originally developed in HICs and the majority of the studies did not report any cultural adaptation undertaken during the implementation process. Therefore, findings from our systematic review indicate an urgent need to develop context-specific school-based CSA interventions that have been adapted to address different sociocultural contexts and gender norms, and to evaluate the effectiveness of these culturally adapted programs. Further to this, having screened all the studies from database searches, we excluded four Chinese studies that did not meet our study design criterion (i.e., RCT), suggesting the need for rigorous evaluation studies such as RCTs to be conducted in China, and more broadly, in LMICs, to deepen our understanding of factors that influence the effectiveness of programs in these settings.

Structural Components and Participant Characteristics

Findings from our analyses add weight to the argument that one-off, single-session personal safety programs delivered in large school assemblies are unlikely to be effective. Furthermore, previous literature suggested that dividing programs into several sessions allows participants to maintain their attention for the entire period of the intervention and increases the opportunity for repeated presentations of key concepts, which reinforces earlier learning (Sanderson, 2004). Davis and Gidycz (2000) proposed that prevention education has a cumulative effect, with children’s knowledge and skills continuing to improve with further exposure to program content. Although repeated exposure is known to be an effective instructional strategy (Hattie & Yates, 2013), it is yet to be tested empirically with this specific category of educational interventions.

As for participants’ characteristics, results from the meta-regression are consistent with Walsh et al.’s (2015) findings, which indicated that when using questionnaire-based measures, older children (8 years and older) demonstrate greater knowledge gains than younger children (less than 8 years) exposed to the same programs. Several reasons might explain the results. First, younger children’s cognitive, social and emotional, and behavioral development may account for some of the challenges they face in learning CSA prevention concepts. Second, it may also be that programs evaluated in studies in this review were not ideal in terms of developmental appropriateness for children in the early childhood years. The ways in which these concepts are taught may benefit from greater tailoring to suit children in this age range, for example, through the use of interactive toys, games, and multimedia.

Third, as the findings from the meta-regression showed, the way in which program outcomes are measured appears to matter. Further to this, the measures used to assess factual and applied CSA knowledge have typically been independently completed, self-report, paper-and-pencil tests. These may favor children with higher reading and comprehension skills and, indeed, more experience with test taking. With the ubiquitous presence and use of digital technologies in schools, study assessors may now begin to take up opportunities for diverse and more inclusive methods of data collection with young children, meaning that in the future there will be less possibility for testing methods to interfere with the assessment of program effects.

Finally, we did not observe a significant association between program providers and effect size, which is consistent with the findings of a previous meta-analysis (Davis & Gidycz, 2000). Fidelity assessments that record which components were used in the actual program implementation process would be useful in examining the degree to which the intervention was delivered as intended. We were not able to conduct such an analysis due to limited reporting, with only four studies reporting on fidelity assessment (Bustamante et al., 2019; Jin et al., 2017; Pulido et al., 2015; Urbann et al., 2020). It is noteworthy that these were more recent studies, and this may indicate that the quality of study reporting on this criterion is starting to improve.

Intervention Content Components

Although we did not observe significant relationships between any of the individual program content components and the overall effectiveness of the intervention, information about “safe and unsafe situations” tended to be relatively more effective than other components in improving participants’ understanding of CSA knowledge and concepts. The reason for the lack of effect for other content components remains unclear. One possible explanation is that the focus on program components often fails to consider other features of interventions that might contribute to differences in effect sizes, such as component sequencing, coordination, and guiding supervision infrastructure (Chorpita & Daleiden, 2007). Alternatively, the number of included trials was small and the data were strongly heterogeneous, which limited the ability of statistical analysis to examine associations.

Limitations

This review has a number of limitations. First, we are aware that many CSA interventions could show changes in children’s CSA knowledge and behaviors over a longer time frame. However, we included only immediate post-test outcome assessments in this review, which does not allow for the identification of whether these results are maintained over time, or for the possibility of sleeper effects that might have been identified with longer-term follow-up. It should be highlighted that improvements in self-protection skills and CSA knowledge do not imply that children will implement the skills and knowledge obtained from these programs in real-life situations, and there is no evidence that these school-based CSA prevention interventions reduce actual instances of sexual abuse. Second, the intervention components were coded based on a relatively small number of studies, which might have resulted in spurious associations or masked true differences in effectiveness related to other intervention components that were not examined in our meta-regression. Moreover, our content components analysis relied solely on the narrative text of study reports. We did not seek out the actual programs to determine whether or not the study reports included a comprehensive description of program contents. The publication of reporting guidelines such as the TIDieR checklist (Hoffmann et al., 2014) makes it incumbent upon study authors to ensure that they have fully explained the programs they are evaluating.

In terms of the limitations of the included studies, we were not able to conduct moderator analyses with several variables such as fidelity assessment and the program’s theory of change due to poor reporting of the characteristics of interventions in many study reports. We were also not able to conduct exploratory multiple meta-regression to investigate the association between different combinations of intervention content components due to the high heterogeneity across studies. Furthermore, the results of the meta-regression should be seen as exploratory because intervention components were coded based on limited information regarding the theoretical basis of the CSA intervention components in study reports. In addition, the relationship explained by meta-regression analysis is an observational association across trials and it does not indicate causation (Thompson & Higgins, 2002). Finally, it should also be noted that meta-regression will always be subject to the risk of ecological fallacy because it attempts to make inferences about individuals using study-level information (Higgins & Green, 2011).

Implications for Research and Practice

Despite the limitations, our findings have important research, practice, and policy implications. First, clearer reporting of implementation fidelity and intervention components is needed in order to rigorously control for potential sources of confounding. Second, it is important to investigate the program’s theory of change as a moderator of effect size. Future studies should clearly report program theory and further explore the heterogeneity across interventions. Third, researchers need to identify additional moderators and intervention components that could explain the heterogeneity identified across studies, particularly the factors associated with greater effectiveness of school-based CSA prevention programs. It is also important to include longer-term follow-up assessments in future studies, as immediate post-test outcomes might over- or underestimate intervention effectiveness. Finally, the majority of included studies in this review were conducted in Western countries and only a limited number of studies were conducted in LMICs and upper-middle income countries such as China. In total, we found seven new studies (from eight reports, one of which was conducted in China). Specifically, in terms of the two studies conducted in China (Jin et al., 2017; Lee & Tang, 1998), both had imported programs from the United States (Body Safety Training and Behavioral Safety Training), indicating the need for the development of programs that are appropriately designed for, rather than adapted to, Chinese cultural contexts. Furthermore, more evaluation studies in LMICs and upper-middle income countries such as China are needed in the future to strengthen context-specific evidence of school-based CSA intervention effectiveness.

In terms of optimizing the delivery of school-based CSA interventions, professionals and policy makers should focus on ensuring that programs being offered include components that appear to be associated with greater effectiveness, such as “safe and unsafe situations.” Program developers could self-audit their programs against these components to ensure they are delivering programs that have the best chance of success.

Conclusion

CSA is a global issue that has significant negative effects on victims’ physical, psychological, and sexual well-being. The findings of the review suggest that school-based CSA interventions are effective in increasing participants’ CSA knowledge as assessed by both questionnaire-based and vignette-based measures. This review provides the CSA prevention field with a comprehensive overview of the components in school-based CSA prevention programs that are associated with better outcomes. According to our findings, an effective CSA intervention should include more than three sessions and cover the content component “safe and unsafe situations.” Program developers should take participants’ age into consideration when designing the interventions and use age-appropriate outcome measures to evaluate children’s performance. Our findings also provide recommendations for future research, particularly in terms of optimizing the effectiveness of school-based CSA prevention programs, and the better reporting of intervention components as well as participant characteristics.

Footnotes

Author’s Note

This review was registered with PROSPERO (ID: CRD 42019140564).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: FM was supported by an Economic and Social Research Council (ESRC) Future Research Leader Award [ES/N017447/1] and the UKRI Global Challenges Research Fund (GCRF) [ES/S008101/1].