Abstract

A Systematic Review

Background

Good communication is central to social work practice and underpins the success of a wide range of social work activities (Koprowska, 2020; Lishman, 2009). People in receipt of social work services value social workers who are warm, empathic, respectful, good at listening and demonstrate understanding and compassion (Beresford, Croft & Adshead, 2008; Department of Health, 2002; Ingram, 2013; Kam, 2020; Munford & Sanders, 2016; Social Care Institute for Excellence, 2000; Tanner, 2019). Even in diverse and challenging circumstances, effective communication is thought to build constructive working relationships and to enhance social work outcomes (Healy, 2018).

Communication, sometimes referred to as interpersonal communication, ‘involves two (or more) people interacting to exchange information and views’ (Beesley, Watts & Harrison, 2018, p. 2). Hargie (2017) suggests interpersonal communication is driven and directed by the desire to achieve particular goals and is underpinned by perceptual, cognitive, affective and behavioural operations. In social work practice and education, the values of the profession and the specific social, cultural, political and ideological contexts in which social workers operate, influence the nature of interpersonal communication (Harms, 2015; Koprowska, 2020; Thompson, 2003).

The impact of failing to communicate effectively has been well documented, particularly through reports into incidents of child deaths (Laming, 2003; 2009; Munro, 2011). Consequently, the importance of teaching communication skills to social work students as a means of enabling them to communicate effectively has long been recognised (Smith, 2002). More recently, there have been calls for the expansion and/or improvement of this training (Luckock, Lefevre, Orr, Jones, Marchant & Tanner, 2006; Narey, 2014). Considerable time, effort and money have been spent on achieving this aim, leading to a wide range of communication skills training courses becoming embedded in social work programmes across the globe. Communication generally, and some communication skills specifically, feature in the educational standards of different countries, including the Australian Social Work Education and Accreditation Standards, the Professional Capabilities Framework in the UK and the Educational Policy and Accreditation Standards in the US (AASW, 2020; BASW, 2018; CSWE, 2015). One of the consequences of the coronavirus pandemic is increasing diversification in the delivery of teaching and learning in Higher Education. However, the impact of online or blended learning on the development of student social workers’ communication skills remains to be seen.

Communication skills training (CST) can be defined as ‘any form of structured didactic, e-learning and experiential (e.g. using simulation and role-play) training used to develop communicative abilities’ (Papageorgiou et al., 2017, p. 6). In social work education, ‘communication skills training’ is more commonly referred to as the ‘teaching and learning of communication skills’; a trend reflected in the titles of various knowledge and practice reviews. Given that purpose, role and context have a significant impact on communication in social work practice, conceptualisations which integrate knowledge, values and skills, for example, the knowing, being and doing domains developed by Lefevre, Tanner, and Luckock (2008) have become increasingly popular (Ayling, 2012; Woodcock Ross, 2016). In social work education, the intervention includes not only communication processes, but also an understanding of the broader contextual issues in which social work interactions occur. This views communication in social work as both an art and a science (Healy, 2018). Variation in terminology is significant due to the wide knowledge base from which social work draws. The term ‘communication skills’ is not applied uniformly in the social work literature – microskills, interpersonal skills and interviewing skills are frequently used alternatives.

Core communication skills for social work include non-verbal communication such as making eye contact and nodding, alongside a range of verbal techniques including clarifying, reflecting, paraphrasing, summarising and asking open questions. They are described in detail in a plethora of social work textbooks (Beesley et al., 2018; Cournoyer, 2016; Healy, 2018; Sidell & Smiley, 2008). These skills form part of the content of a number of communication skills courses and preparation for practice modules. They feature in the educational standards, competency and capability frameworks of various countries (AASW, 2020; BASW, 2018; CSWE, 2015). Microskills help social workers and social work students ‘establish and maintain empathy, communicate non-verbally and verbally in effective ways, establish the context and purpose of the work, open an interview, actively listen, establish the story or the nature of the problem, ask questions, intervene and respond appropriately’ (Harms, 2015, p. 22). Microskills are thought to be transferable across client groups and settings.

The pedagogic practices used to teach communication skills to social work students include a wide range of affective, cognitive and behavioural components. Educational interventions comprise taught input including theory, rehearsal, role-play and simulation, modelling, observation, feedback, video playback and critical reflection. A safe learning environment encourages students to make effective use of experiential activities. Attention may also be devoted to specific areas of communication such as communicating with children, communicating with people who have hearing impairments and inter-professional communication. No specific blueprint for communication skills training in social work exists. Minimum requirements, dosage and delivery methods are not prescribed, leading to considerable heterogeneity of educational interventions used in practice.

Rigorous, high-quality evaluation of outcomes in social work education is still in the early stages of development (Carpenter, 2011). A number of empirical studies have sought to evaluate the teaching of communication skills among social work students, or to investigate the impact of particular components of an intervention (Koprowska, 2010; Lefevre, 2010; Tompsett, Henderson, Gaskell Mew, Mathew Byrne & Tompsett, 2017). Questions concerning whether the teaching of communication skills to social work students is effective and produces positive outcomes remain unanswered. To address this issue, we conducted a rigorous and systematic review of the quantitative evidence to establish the effectiveness of communication skills training for social work students. The findings will support educators and policymakers to make evidence-based decisions in social work education, practice and policy.

The objective of this systematic review was to critically evaluate studies which have investigated the effectiveness of communication skills training programmes for social work students. The PICO (Population, Intervention, Comparator, Outcomes) framework informed the development of the research question. Student social workers constituted the population; communication skills training was the intervention under investigation; comparators were the absence of communication skills training, a course unrelated to communication skills training, or a different mode of delivery; and the outcomes of interest consisted of attitudes, knowledge, confidence and behavioural changes. Stakeholders (academics, practitioners, students and people with lived experience) agreed that neither the comparator nor the outcomes should be specified within the research question itself, on the grounds that researchers and academics were unlikely to have specified these components in the primary studies. The research question which the review posed is ‘What is the effectiveness of communication skills training for improving the communicative abilities of social work students?’

Method

Originally produced for the Campbell Collaboration Library, where the review is registered and the accompanying protocol can be found (Reith-Hall & Montgomery, 2019), this systematic review followed the Cochrane Handbook of Systematic Reviews for Interventions (Higgins, Thomas, Chandler, Cumpston, Page & Welch, 2021) and the PRISMA reporting guidelines (Page, McKenzie, Bossuyt, Boutron, Hoffmann, Mulrow et al., 2021).

Eligibility Criteria

The PICO framework was used to establish eligibility criteria, as follows. The population comprised all social work students taught communication skills on a generic qualifying social work course in a university setting and thus included both undergraduate and postgraduate students. Social work courses designed for a specific client group were excluded, as were students on post-qualifying courses. For the intervention, any underpinning theoretical model and any mode of teaching (taught input, videotape recording, role-play with peers and simulated interviews with people with lived experience) were considered acceptable. The comparator comprised an absence of an intervention or an intervention unrelated to communication skills training; in trials comparing effects, two different interventions to improve communication skills were permitted. Outcomes included changes in knowledge, attitudes, self-efficacy and behaviours. In keeping with the literature on outcomes in social work education, student satisfaction alone was not an accepted outcome measure in this review (Carpenter, 2005; 2011). To ensure appropriate counterfactuals were employed, eligible studies included randomised trials, non-randomised trials, controlled before-after studies, repeated measures studies and interrupted time series studies.

Search Methods

We conducted a search for published and unpublished studies using a comprehensive search strategy that included multiple electronic databases, research registers, grey literature sources, and reference lists of prior reviews and relevant studies. Prominent authors were contacted to identify additional studies. In keeping with the Cochrane Handbook of Systematic Reviews for Interventions (Higgins, Thomas, Chandler, Cumpston, Page & Welch, 2021), study selection was not restricted by geography, language, publication date or publication status. The original search took place in September 2019 and was updated in June 2021.

The databases and research registers searched were as follows:

a) Education Abstracts
b) ERIC
c) MEDLINE
d) PsycINFO
e) Web of Science/Knowledge Database Social Science Citation Index
f) Social Services Abstracts
g) ASSIA - Applied Social Sciences Index and Abstracts
i) Database of Abstracts of Reviews of Effectiveness
j) The Campbell Library
k) Cochrane Collaboration Library
l) Evidence for Policy Practice Information and Coordinating Centre
m) Google Scholar – using a series of searches, the first 2 pages of results for each search were screened
n) ProQuest Dissertations and Theses

In addition, a manual search of the most recent issues of key journals was conducted. To do this, the five top-ranked journals that provided included studies were identified and checked.

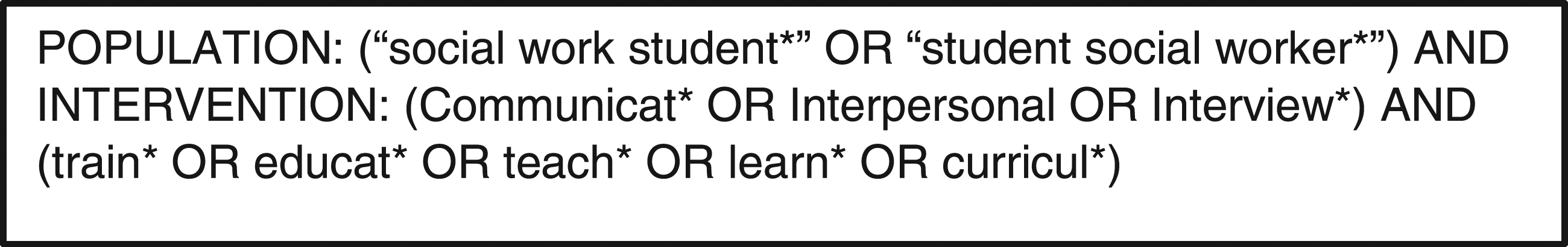

The search string shown in Figure 1 was modified for each database. Exact phrases or proximity searching were not required, and no filters were applied.

Figure 1. Search string.

One reviewer conducted the database searches, removing duplicates and irrelevant records. To enhance reliability, both reviewers independently read the titles and abstracts of the remaining records and screened any studies deemed potentially eligible. No automation tools were used in the process. There were no disagreements; hence, discussions with an arbitrator were not required and consensus was reached in all cases. Standardised data collection forms were piloted and then used to identify core programme components including duration, intensity, use of stakeholders and theoretical frameworks to develop a coding frame and overarching typology. Descriptive information, including population and study characteristics, was also extracted and coded. Quantitative data were extracted to allow for calculation of effect sizes (such as mean change scores and standard error or pre and post means and standard deviations). Data were extracted for the intervention and control groups on the relevant outcomes measured to assess the intervention effects. Assessment of methodological quality and potential for bias was conducted using the ROB-2 tool for randomised studies (Higgins, Savović, Page & Sterne, 2019) and the ROBINS-I tool for non-randomised studies (Sterne, Hernán, Reeves, Savović, Berkman, Viswanathan et al., 2016).
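The kind of effect size calculation that the extracted data were intended to support can be illustrated with a minimal sketch. It assumes post-test means and standard deviations for two independent groups and uses Hedges' g, a widely used standardised mean difference. The review does not state which estimator was planned, so the choice of estimator, the function name `hedges_g` and the example numbers are illustrative assumptions, not the authors' method or data.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardised mean difference (Hedges' g) for two independent groups.

    Hypothetical helper for illustration; not taken from the review.
    """
    # Pooled standard deviation across intervention and control groups
    pooled_sd = math.sqrt(
        ((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / (n_t + n_c - 2)
    )
    # Cohen's d: raw mean difference in pooled-SD units
    d = (mean_t - mean_c) / pooled_sd
    # Approximate small-sample correction factor, J = 1 - 3 / (4 * df - 1)
    j = 1 - 3 / (4 * (n_t + n_c) - 9)
    return j * d


# Made-up numbers: intervention mean 10 (SD 2, n = 10) vs control mean 8 (SD 2, n = 10)
g = hedges_g(10.0, 2.0, 10, 8.0, 2.0, 10)
print(round(g, 3))  # 0.958
```

With samples as small as those in several of the included studies, the correction factor noticeably shrinks the uncorrected estimate, which is one reason a corrected estimator is often preferred in this setting.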

Results

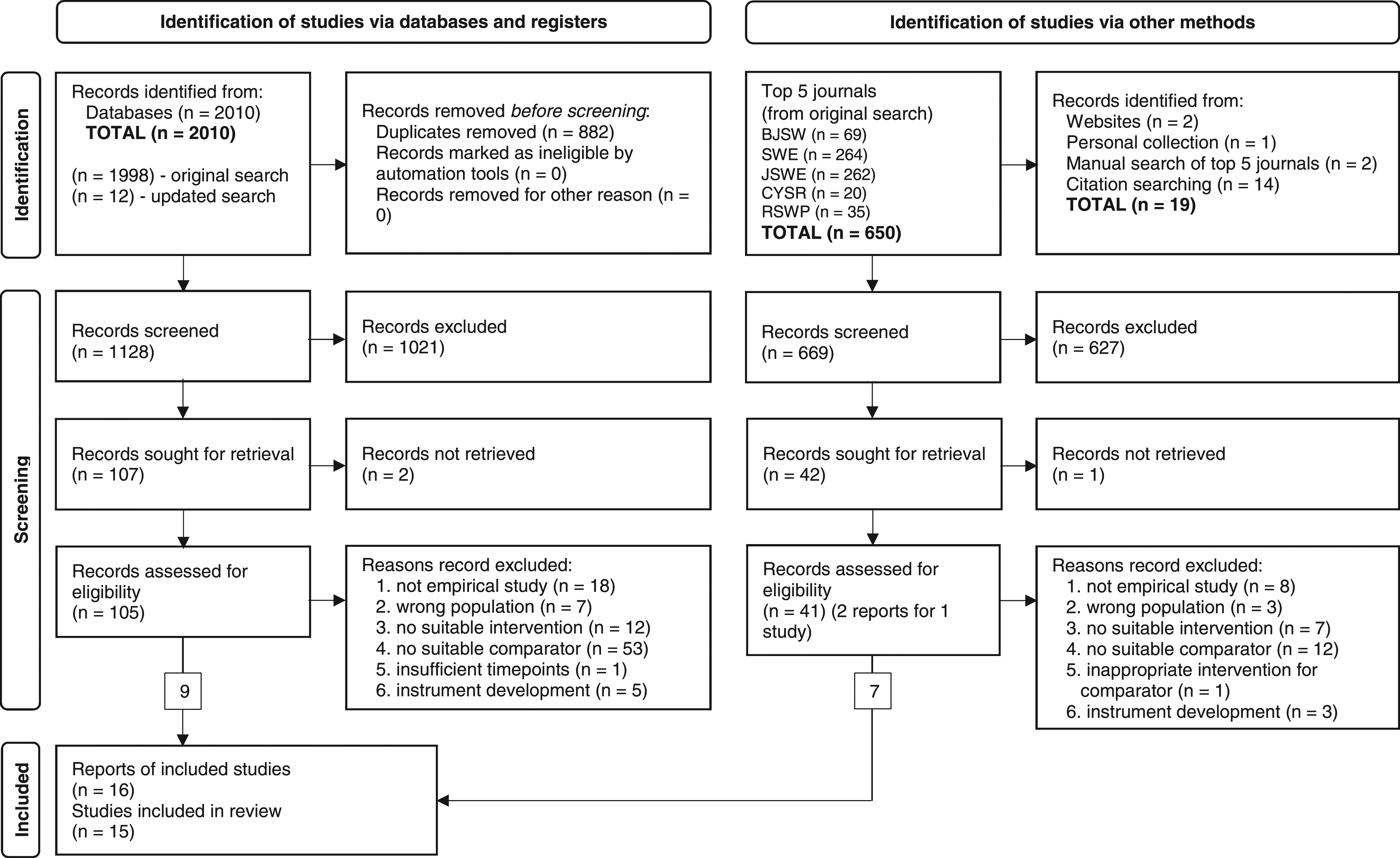

The main bibliographic database and registers search, completed in September 2019, returned 1998 records with an additional 12 added after the search was updated in June 2021. After 882 duplicate records were removed, 1128 were subjected to initial screening by title, and abstract if necessary, following which a further 1021 records were removed because they were not relevant to the topic. Of the 107 remaining records, 2 could not be retrieved; therefore, 105 records were fully screened for eligibility, 9 of which met the inclusion criteria.

Another 650 studies were identified through recent editions of the 5 journals most frequently identified through the database search. A further 19 studies were identified through other methods including citation searching within the included studies. Of the 669 studies subjected to initial screening, 627 were removed because they were not relevant to the topic. One record could not be retrieved resulting in 41 records being fully screened for eligibility, of which 34 records were excluded, and 7 records (reporting 6 studies) were included.
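The record flow reported in the two preceding paragraphs can be checked with simple arithmetic. The sketch below recomputes each stage from the counts given in the text; the function name `prisma_flow` and the dictionary keys are hypothetical, and the final total assumes that the 9 eligible database records correspond to 9 distinct studies.

```python
def prisma_flow():
    """Recompute the screening flow from the counts reported in the text."""
    # Databases and registers
    db_identified = 1998 + 12           # original search plus June 2021 update
    db_screened = db_identified - 882   # duplicates removed
    db_sought = db_screened - 1021      # records not relevant to the topic
    db_assessed = db_sought - 2         # two records could not be retrieved
    db_included_studies = 9             # assumption: 9 records = 9 studies

    # Journal hand search and other methods
    other_identified = 650 + 19
    other_sought = other_identified - 627       # not relevant to the topic
    other_assessed = other_sought - 1           # one record not retrieved
    other_included_records = other_assessed - 34
    other_included_studies = 6                  # 7 records reporting 6 studies

    return {
        "db_screened": db_screened,
        "db_sought": db_sought,
        "db_assessed": db_assessed,
        "other_identified": other_identified,
        "other_assessed": other_assessed,
        "other_included_records": other_included_records,
        "total_studies": db_included_studies + other_included_studies,
    }


flow = prisma_flow()
for stage, count in flow.items():
    print(f"{stage}: {count}")
```

Each recomputed stage matches the figure stated in the text (1128 screened, 107 sought, 105 and 41 assessed in full, 7 records from other sources), and the two streams together give the 15 included studies.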

Of the 15 included studies, two experiments are reported in a single paper (Barber, 1988), one study is reported in two papers (Greeno, Ting, Pecukonis, Hodorowicz & Wade, 2017; Pecukonis, Greeno, Hodorowicz, Park, Ting, Moyers et al., 2016) – with both authors contributing to the write-up of each, and another study (Larsen & Hepworth, 1978) is also written up as the first author’s PhD thesis (Larsen, 1975). The combined search results are shown in the PRISMA diagram in Figure 2.

Figure 2. PRISMA diagram.

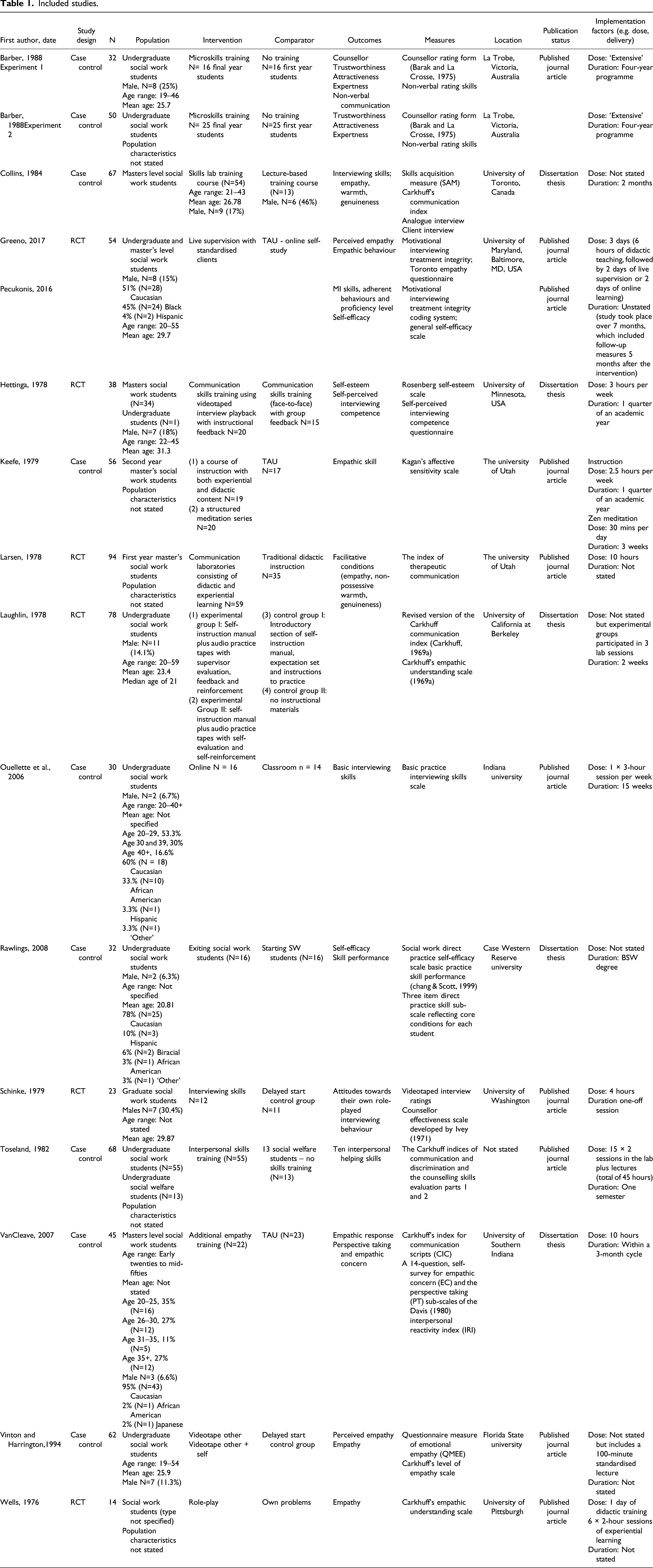

Included studies.

Nine studies employed a case-controlled design, whereby students were not randomly allocated to different groups. These studies suffer from weak internal validity, with confounders such as maturation, the Hawthorne effect, testing effects and pre-existing differences between the intervention and control groups. Such issues are common in educational research. Six studies were randomised controlled trials; however, small sample sizes contributed to two studies being underpowered.

Widespread heterogeneity within the included studies rendered our anticipated measures of treatment effect non-viable; thus, a meta-analysis was not appropriate, nor was it possible to implement some methods outlined in the protocol, such as sensitivity and subgroup analyses. Informal methods were used to assess heterogeneity, with tables ordered by hypothesised modifiers based on study design (a methodological characteristic) and on population characteristics (including sex and age). Similarly, we were unable to use the GRADE Guidance to summarise the overall certainty of evidence relating to the primary outcomes. All 15 studies were included in the narrative synthesis.

The review found that experiential learning was the dominant underpinning theoretical orientation of the intervention under investigation. Experiential learning involves learning by experience, in which the learner takes on an active role, followed by reflection and analysis of that experience, which they use to further develop their learning. Conceptualisations from psychotherapy were informed by the microskills counselling approach developed by Ivey, Normington, Miller, Weston & Haase (1968) and Ivey & Authier (1971) and the Human Relations training model developed by Carkhuff and Truax (1965) and Carkhuff (1969c). Conceptualisations deriving from a constructivist view of education drew on the experiential learning approach deriving from Kolb’s (1984) experiential learning cycle and Schön’s (1987) concept of reflective practice, both of which have informed learning on professional courses. Bandura’s work is also visible. Social learning theory (Bandura, 1971), ideas about self-reinforcement (Bandura, 1976), the role of self-efficacy (Bandura, 1997) and outcome expectations (Bandura, 1982) for skill development are referred to within the body of evidence. Irrespective of which conceptualisation is used, experiential learning is the main theoretical orientation underpinning the teaching and learning of communication skills in social work education.

The role of experiential learning is evident in the delivery format and teaching methods under investigation. Two of the earlier studies (Collins, 1984; Larsen & Hepworth, 1978; n = 161) identified practice-based experiential learning as superior to a traditional didactic lecture-based approach. Conversely, in Keefe’s (1979) study (n = 56), the experiential group did not make the expected gains, unless they also participated in structured meditation. The more recent studies focussed on classroom-based teaching versus online delivery. One study found no significant differences between the two (Ouellette, Westhuis, Marshall & Chang, 2006; n = 30). However, another study found that live supervision with standardised clients compared favourably with the treatment as usual, which was online self-study (Greeno et al., 2017; Pecukonis et al., 2016; n = 54).

Other studies compared specific intervention components. The role of active learning for students was important, whether that included participation in role-play with peers or with simulated clients. A study comparing role-play with the use of participants’ own problems found neither one to be preferential; it was the active experimentation of students that was key to their interpersonal skills development (Wells, 1976; n = 14). The role of the instructor was also an issue of interest in three studies (Hettinga, 1978; Laughlin, 1978; Greeno et al., 2017 and Pecukonis et al., 2016; n = 170). Although there are not enough studies comparing like for like to draw any firm conclusions, the current body of research suggests that the rehearsal of skills through role-play or simulation, accompanied by opportunities for observation, feedback and reflection, offers benefits for systematic communication skills training, facilitating small gains – on skill-based outcome measures at least.

Considerable variation in terms of dose and duration is evident across the included studies. The briefest intervention was a single 4-hour training session whilst the longest intervention, described only as ‘extensive’, appears to be interspersed throughout a 4-year degree course (Schinke et al., 1979; n = 23; Barber, 1988; n = 82). Literature has documented the ability to teach empathy at a minimally facilitative level in as few as 10 hours (Carkhuff, 1969c; Carkhuff & Berenson, 1976; Truax & Carkhuff, 1967). Indeed, Larsen & Hepworth (1978) found positive change occurred from a 10-hour intervention, but ‘estimated that 20 hours, preferably 2 hours per week for 10 weeks, would be ample’ (p. 79). However, Toseland and Spielberg (1982) suggested that the course under investigation in their study, which lasted approximately 45 hours (30 hours of which were experiential learning in a laboratory), may not be sufficient to increase students' skill to the level of competence expected of a professional worker. A number of studies did not report details regarding dosage and duration of the intervention, and some provided rather vague or imprecise details, rendering comparisons of dosage and duration across studies futile.

The issue of selection bias was addressed through rigorous and transparent inclusion criteria. We found no evidence of publication bias and took steps to minimise the risks including a wide reaching and extensive search (excluding outcomes) and contacting subject experts to identify any publications we might have missed through our search strategy. Strategies typically used to assess publication bias, such as funnel plots, were not feasible due to the small size and number of the included studies and their lack of power.

Effects of the Intervention

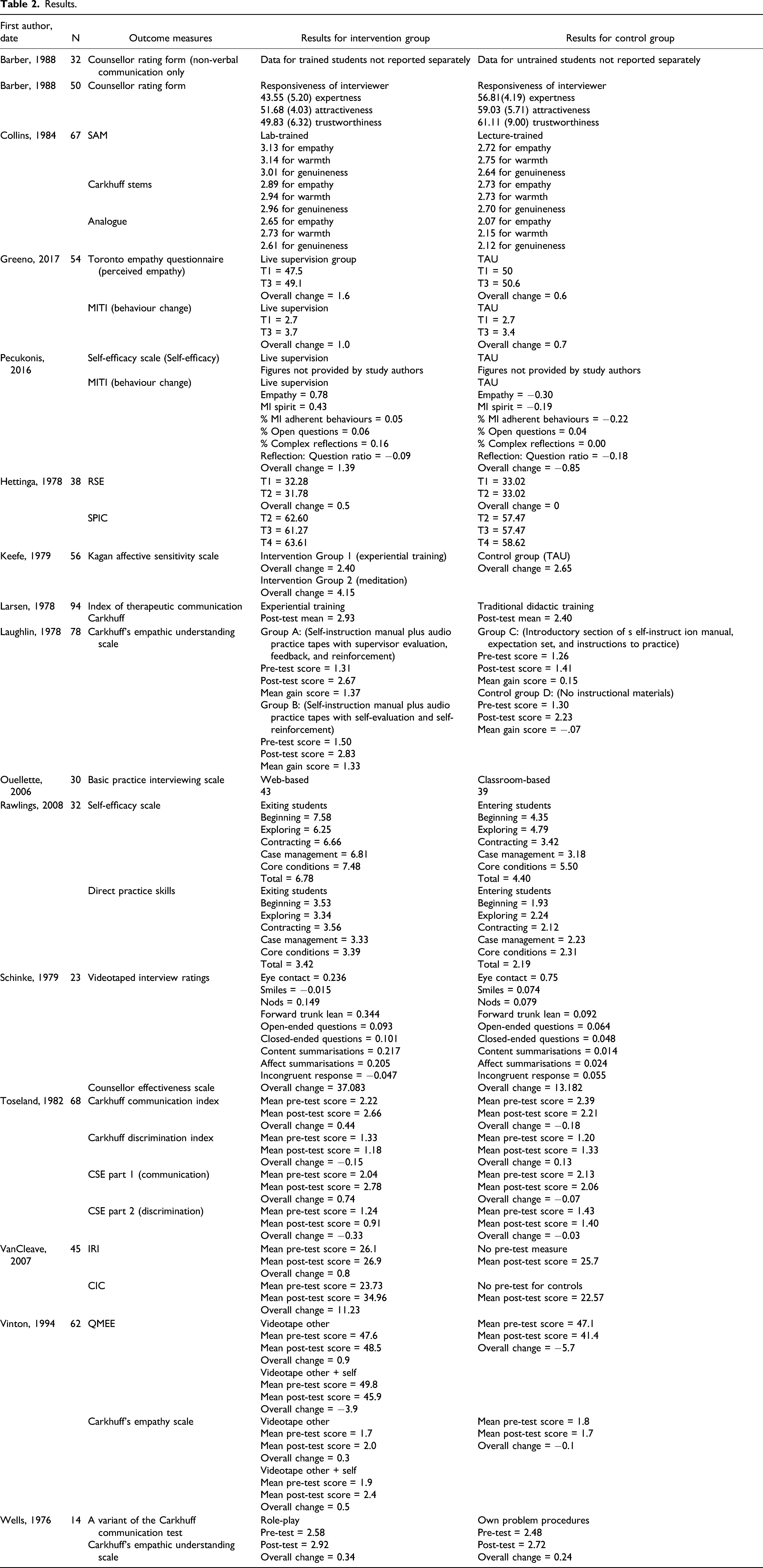

Results.

Level 1

Level 1 learner reactions include students’ satisfaction with the training and their views about the learning experience. Two of the included studies (Laughlin, 1978; Ouellette et al., 2006; n = 108) gathered quantitative data on learner reactions but found no significant correlation between the variables of satisfaction or enjoyment and improvement in students’ communication skills.

Level 2

Empathy and Understanding: Level 2a outcomes relate to changes in attitudes or perceptions towards service users and carers, their problems and needs, circumstances, care and treatment (Carpenter, 2005; 2011). Four of the included studies (Keefe, 1979; Vinton & Harrington, 1994; VanCleave, 2007; Greeno et al., 2017; n = 217) looked at empathic understanding, yet no statistically significant changes in students' empathic understanding were identified, irrespective of the type of self-report measure used. However, in an extension of his original design, Keefe (1979) found the combined effects of experiential training and meditation produced mean empathy levels beyond those attained by master’s and doctoral students. The heterogeneity of these empathy scores may perhaps be explained by the inclusion of meditation in Keefe’s study, which was not present in the other three studies.

The role of self-esteem and self-efficacy in communication skills attainment was tested in three studies (Hettinga, 1978; Pecukonis et al., 2016; Rawlings, 2008; N = 124), none of which found a difference with practice skills ratings.

Possible risk of harm: Level 2b, which includes the acquisition of procedural knowledge ‘used in the performance of a task’ (Carpenter, 2011, 126), featured as an outcome in two reports. Contrary to expectations, and the findings of the other studies in this review, Barber’s (1988) report of two experiments including 82 participants found that the reactions of students who had received microskills training were less accurate than the reactions of untrained students. Barber (1988) acknowledges that artificiality in the first experiment might have led to trained students being more critical than their non-trained counterparts. In the second experiment, Barber (1988) perceived similar ratings between untrained students and clients as evidence that the trained students were underperforming. However, it is possible that the trained students were looking out for different responses than the untrained students and clients. Barber speculated that training reduced students’ capacity to empathise with the client; however, the outcomes that students were asked to rate (trustworthiness, attractiveness and expertness) do not measure empathy, and hence, the face validity of this measurement is questionable. Design limitations are also apparent, with Barber acknowledging that the first year and final year student groups may have differed from each other on variables other than the training.

Barber’s (1988) experiments are important, because the findings that social work students appeared less able to judge responsive and unresponsive interviewing behaviour after training in microskills than counterparts who had yet to receive the training would suggest this teaching intervention could have an adverse, undesirable or harmful effect. However, other studies, including Toseland and Spielberg (1982), in which students were matched on factors such as demographic variables and pre-course experience produced more positive results. It may be that Barber’s paper is an exception to the rule, such that his findings should be interpreted cautiously, with due consideration of the measurement and design issues evident within both experiments. The evidence for this outcome was inconclusive, because too few studies contributed data despite this being an easy outcome to measure.

Skills: Thirteen reports (Collins, 1984; Greeno et al., 2017; Pecukonis et al., 2016; Hettinga, 1978; Larsen & Hepworth, 1978; Laughlin, 1978; Ouellette et al., 2006; Rawlings, 2008; Schinke et al., 1979; Toseland & Spielberg, 1982; VanCleave, 2007; Vinton & Harrington, 1994; Wells, 1976; n = 605) covering 12 studies investigated communication skills. Results are modest yet promising. Skills can be divided into initial skills and compilation skills.

Initial skills: Acquisition of initial skills was an outcome reported in seven studies (n=428) (Collins, 1984; Larsen & Hepworth, 1978; Laughlin, 1978; Toseland and Spielberg, 1982; VanCleave, 2007; Vinton and Harrington, 1994; Wells, 1976). Skills are often practised individually, in response to short statements or vignettes.

Larsen and Hepworth (1978) assessed students' skill levels in providing empathic responses to ‘written messages’. The experimental groups surpassed the control groups on achieved levels of performance. Toseland and Spielberg (1982) sought to replicate and expand on Larsen & Hepworth's (1978) study by developing and evaluating a training programme. Students in receipt of the training increased their ability to communicate effectively using 10 core helping skills including genuineness, warmth and empathy.

Laughlin (1978) sought to test self-instructional methods in an interviewing skills course. One experimental condition relied on self-reinforcement whilst the other received external reinforcement and feedback from an instructor. Both experimental groups produced greater learning gains after training than either of the two control groups, although there was no significant difference between the gain scores of the two experimental groups. Laughlin (1978, 65) suggests that ‘self-managed behaviour change can, under certain circumstances, prove to be as efficacious as externally controlled systems of behaviour change’. However, students in the self-reinforcement group rated their own empathic responses, whereas the supervisor rated the responses of students receiving the other experimental condition. As Laughlin (1978, 68) acknowledged, ‘the self-instruction group may be considered a product of inaccuracy in the self-evaluation process’.

Vinton and Harrington (1994) were also interested in the role of the self in student learning. Only students in the intervention group ‘videotape other and self’, who videoed themselves role-playing client to social work interactions, made gains that reached statistical significance. A slight decline occurred in the control group between pre-test and post-test. Wells (1976) compared the effects of role-play and using participants’ own problems for developing empathic communication skills through facilitative training. Although no preferential effect between role-play and own problem procedures was identified, Wells (1976) suggested the active experimentation of students in both treatment arms was the reason for the modest outcome gains seen in both groups.

Collins (1984) used two written skills measures to capture initial skills. Mean scores at post-test for empathy, warmth and genuineness were slightly higher for lab-trained students than lecture-trained students. However, statistical significance was only reached for empathy. Collins (1984) suggests this might be because lecture and lab training prepare students for training on the relatively straightforward measure of producing written statements as responses to short client vignettes. Warmth and genuineness might be easier to demonstrate than empathy; hence, lecture-based students could manage them satisfactorily. Similar, but slightly higher findings were demonstrated through the Skills Acquisition Measure (SAM), wherein students were asked to respond in writing to a series of vignettes. The lab group showed a positive and significant improvement at the end of the lab training compared to their skills at the start, and their post-test scores compared favourably with lecture-trained students. Collins (1984) concluded that the findings provide evidence that lab-based training is effective for teaching interpersonal interviewing skills for social work students.

VanCleave (2007) noted that making an advanced verbal empathic response is arguably more challenging than producing written statements. In her study, expert raters evaluated the videotaped verbal responses of students to actors. The intervention group received a higher mean score at post-test than they did at pre-test and the overall change score was statistically significant for empathy response training. The post-test score of the intervention group was also significantly higher than that of the control group.

Skill compilation: Carpenter (2005, 12) defines skills compilation as ‘the grouping of skills into fluid behaviour’. Skill compilation was investigated in seven reports covering six studies (N = 244) (Collins, 1984; Greeno et al., 2017; Pecukonis et al., 2016; Hettinga, 1978; Ouellette et al., 2006; Schinke et al., 1979; Rawlings, 2008).

Students’ self-rating of their own competencies is one method of measuring skill compilation (Carpenter, 2011). Three studies (N = 91) (Hettinga, 1978; Ouellette et al., 2006; Schinke et al., 1979) utilised this method. After completing videoed role-plays, students in Schinke et al.’s (1979) study rated their own interviewing skills; the mean change score was significantly higher for the intervention group than it was for the control group. Using a similar approach, Hettinga (1978) also found significantly higher scores for the intervention group, suggesting that students’ self-perceived interviewing competence was positively impacted by videotaped interview playback with instructional feedback. In Ouellette et al.’s (2006) study, although there were few statistical differences between the groups, classroom-based students responded more favourably towards pedagogical activities than their peers taught online.

Six reports covering five studies (N = 206) (Collins, 1984; Greeno et al., 2017; Pecukonis et al., 2016; Ouellette et al., 2006; Rawlings, 2008; Schinke et al., 1979) measured skill compilation using observer ratings of students’ communication skills in ten-minute role-plays or simulated interviews. Three studies compared the skills of students who had received training with those who had not. Collins (1984) found significant and modest improvements occurred in analogue interviews, whereby students played the client and social worker roles. Lab-trained students also demonstrated more skill than the lecture-trained group. In Schinke et al.’s (1979) study, expert raters found the intervention group demonstrated more improvement in a range of verbal and non-verbal communication skills, receiving higher scores for forward trunk lean, open-ended questions, content and affect summarisations. They also displayed fewer incongruent responses than controls. Similarly, Rawlings (2008) found exiting students scored higher than entering students on each practice skill set, which included beginning, exploring, contracting, case management skills, and the core conditions of genuineness, warmth and empathy.

Two studies sought to compare the effects of different approaches. Ouellette et al. (2006) evaluated the acquisition of interviewing skills between students taught in a traditional face-to-face class and students using a Web-based instructional format with no direct contact with an instructor. Significant differences were identified for only two of the 21 interviewing skills measured (attentiveness and being relaxed), whereby the online students were slightly more proficient than their peers in the traditional class. Ouellette et al. (2006) concluded that the interviewing skills of an online class versus those taught in a traditional face-to-face classroom setting were ‘approximately equal’ on completion (p. 68). Students in the study reported by Greeno et al. (2017) and Pecukonis et al. (2016) received live supervision with simulated clients or treatment as usual (TAU), delivered online. Improvements in skills were evident for both groups; however, the overall change for empathic behaviours was statistically significantly higher for the intervention group compared to controls. Although the effect size reported was small, Greeno et al. (2017) express cautious optimism for the intervention. Pecukonis et al. (2016) also observed that the live supervision group received Motivational Interviewing spirit scores which were comparatively higher than the TAU group and maintained their proficiency at 5 months post-intervention.

In summary, the included studies measuring initial skills or the compilation of skills demonstrated modest yet promising gains in students’ communicative abilities, including general social work interviewing skills and expressed empathy, following training.

Level 3 is the implementation of learning into practice. Collins (1984) found students did not transfer their learning from the laboratory into practice, which he suggests was because of measurement anxiety, problems with the measures and the fundamental differences between lab and fieldwork settings.

None of the included studies addressed outcomes from Level 4a – changes in organisational practice or 4b – benefits to users and carers.

Overall, the review found that systematic training does produce modest, yet identifiable improvements in students’ communicative abilities, including empathy. This finding is in keeping with reviews about communication skills training (Aspegren, 1999) and empathy training (Batt-Rawden, Chisolm, Anton & Flickinger, 2013) for medical students and nursing students (Brunero, Lamont & Coates, 2010). One outlier (Barber, 1988) found trained students placed less value on responsive and unresponsive interviewing behaviour and were less accurate in their ability to predict clients’ reactions than their untrained counterparts. However, there was no convincing evidence to suggest that the teaching and learning of communication skills in social work education causes adverse or harmful effects.

Discussion and Applications to Practice

The purpose of this review was to determine the effectiveness of teaching communication skills to social work students. Whilst there was overall consistency in the direction of mean change for the development of communication skills of social work students following training, we must acknowledge that the body of evidence is small, in terms of both the number of eligible studies and the numbers of participants within them, and that most of the studies are rather dated. Significant gaps in the evidence base remain and the picture provided by the extant body of evidence is incomplete – it does not reflect the involvement of people with lived experience, or the newer innovations or technological advances used in social work education.

The findings of this systematic review broadly agree with the knowledge reviews about communication skills produced for the Social Care Institute for Excellence (Trevithick et al., 2004; Luckock et al., 2006). The knowledge reviews highlight that despite a lack of evidence, weak study designs, and a low level of rigour, study findings for the teaching and learning of communication skills in social work education are promising. Reviews of communication skills and empathy training in medical education, where RCTs and validated outcome measures prevail, also suggest that communication skills training leads to demonstrable improvements for students. Our review identified the same gaps as those found in the UK-based social work knowledge and practice reviews for social work education. Trevithick et al. (2004) suggest that interventions are under-theorised and that the issue of whether students transfer their skills from the classroom to the workplace is unclear. This review echoes these findings. Diggins (2004) and Dinham (2006) identified the existence of far greater expertise and more examples of good practice than that reflected in the literature. Regrettably, our review suggests little has changed in almost 20 years. In the USA, methodological design flaws were identified by Sowers-Hoag and Thyer (1985) almost 40 years ago. The requirement for social work educators and researchers to increase their use of experimental research studies remains.

The quality of evidence remains a cause for concern. For the non-randomised studies, the risk of bias was assessed as moderate to serious or incomplete. For the randomised trials, only one study received an overall low risk of bias rating, with an additional two studies receiving a low risk of bias rating for one outcome measure but not the other. For the other randomised trials, the overall risk of bias ratings were high.

Given the range and extent of bias identified within this body of evidence, caution should be exercised in judging the efficacy of the interventions for improving the communicative abilities of social work students.

Rigour across the body of evidence was poor, with the review revealing a number of methodological challenges. First, there is considerable variation in the way the study authors define and conceptualise key constructs, particularly in relation to empathy. The construct of empathy lacks clarity and consensus (Gerdes, Segal & Lietz, 2010) and conceptualisations have changed over time. The issue is not unique to social work. Referring to a health context, Robieux et al. (2018, p. 59) suggest that ‘research faces a challenge to find a shared, adequate and scientific definition of empathy’.

Second, the included studies identified challenges regarding outcome measures. Some included studies used validated scales, whereas others developed their own measures. However, even with validated scales, measurement problems were encountered by the study authors. Self-reports including self-efficacy scales have been adapted for research into the teaching and learning of communication skills of social work students specifically (e.g. Koprowska, 2010; Lefevre, 2010; Tompsett et al., 2017). However, the limitations of using self-efficacy as an outcome measure are widely acknowledged (Drisko, 2014). Response-shift bias can mask the positive effects of an intervention, which may explain why no change was identified by Pecukonis et al. (2016). The subjectivity of self-efficacy scales has been identified as another area of concern. Students’ self-efficacy scores do not necessarily correlate with externally rated direct practice scores. As Rawlings (2008) cautions, ‘measures of social work self-efficacy are limited to student beliefs or perception regarding skill and do not measure actual performance’ (pp. 7–8).

Although self-report instruments are still the most common way to measure empathy (Ilgunaite, Giromini & Di Girolamo, 2017; Segal et al., 2017), the challenges associated with this outcome measure (Lietz, Gerdes, Sun, Geiger, Wagaman & Segal, 2011; Robieux et al., 2018) were clearly demonstrated in this review. Study authors anticipated that students’ perceived empathy levels would increase following training, but this expectation did not come to fruition in at least three studies, despite the study authors using different self-report measures. High and perhaps inflated ratings caused by social desirability at pre-test might have masked the improvements researchers anticipated. Concerns regarding the validity of self-report questionnaires are well rehearsed. The finding that self-report scores did not significantly correlate with other measures that were used alongside them lends support to the claim that empathic attitudes are not ‘a proxy for actions’ (Lietz et al., 2011, p. 104). It is possible that skills training has more impact on students’ behaviours than their attitudes, a point made by Barber (1988) and Greeno et al. (2017). Regardless of the varying explanations, self-report measures of empathy tell us very little about empathic accuracy (Gerdes et al., 2010, p. 2334).

Observer ratings, conducted by independent raters, are often considered to be more valid and reliable measures of communication skills than subjective self-report measures. Observation measures were the primary instrument employed by the researchers of the included studies and produced the clearest demonstration of the effects of communication skills training. However, observation measures also posed some challenges for the studies included in this review. For example, the repeated use of scales in training and assessment creates the problem of test–retest artefacts (Nerdrum & Lundquist, 1995). The validity of the Carkhuff (1969a and 1969b) scales, a measurement instrument used in several of the included studies, has also been questioned. Whilst Carkhuff maintains that ‘both written and verbal responses to help stimulus expressions are valid indexes of assessments of the counsellor in the actual helping role’ (1969a, p. 108), the study authors disagreed. Collins (1984) found ‘students were significantly better at writing minimally facilitative skill responses than demonstrating them orally as measured in a role-play interview’ (p. 124). This lack of equivalence between modes of responding was also acknowledged by Schinke et al. (1979) and VanCleave (2007).

The research designs used to investigate the effectiveness of interventions in social work education lack rigour, with few adhering to the key features of a true experimental design. As Carpenter (2005, p. 4) suggests, ‘the poor quality of research design of many studies, together with the limited information provided in the published accounts are major problems in establishing an evidence base for social work education’. Identifying a dearth of writing which addressed the challenging issues of evaluating the learning and teaching of communication skills in social work education, Trevithick, Richards, Ruch, Moss, Lines & Manor (2004, p. 28), in a UK-based review, point out that ‘without robust evaluative strategies and studies the risks of fragmented and context restricted learning are heightened’. Similar issues arise in educational research more generally.

Another concern is that the study authors are predominantly social work academics conducting research within their own institutions. Therefore, the credibility of these studies is potentially threatened by researcher allegiance, positionality and confirmation bias (Montgomery & Weisman, 2021). The studies included in this review are not large multi-team trials; rather, the study authors are working in small groups or alone, which limits the resources available to them to mitigate bias in data collection and analysis procedures. Using an independent statistician to facilitate the blinding of outcome measures would have enabled study authors to overcome the inability to blind the participants or the experimenters.

Because communication skills and empathy can be developed through structured teaching, some implications for practice can be identified. Findings suggest that the teaching and learning of communication skills in social work education should provide opportunities for students to practise skills in simulated and real environments. Wilt (2012) argued that simulation fosters more in-depth learning than discussions, case studies and role-plays, due to the location of the student in the role of the worker and real-time decision-making that includes ethical considerations. Opportunities to ‘observe’ practice examples through audiotapes, videotapes and playback were deemed to have a facilitative quality, a point recognised by the study authors who drew on Bandura’s work (Hettinga, 1978; Laughlin, 1978; Vinton and Harrington, 1994). The active engagement with the evaluation and feedback process appears to be an important underlying mechanism for change. However, our understanding of how these skills are learnt remains limited. Interactions in social work practice and education are inherently relational, and whilst instructors are mentioned, the roles of peers and people with lived experience are barely acknowledged by the studies in the review. In the UK, where service user and carer involvement is mandatory, social work educators are very aware of the value that people with lived experience bring to the educational context, but our understanding of how and why stakeholder collaboration is so fundamental to teaching and learning needs to be further theorised and researched (Reith-Hall, 2020).

With the global pandemic accelerating the growth of online teaching, it is also imperative that we develop pedagogic practices for web-based instruction in social work education and test them rigorously. Educators need to adopt a more nuanced perspective than the received wisdom that face-to-face teaching is better than online delivery for skills practice. This review identified significant heterogeneity of populations and interventions, low methodological rigour and high risk of bias within the included studies alongside challenges relating to defining key concepts, measuring outcomes and employing robust research designs. Therefore, caution should be exercised when interpreting the findings for practice and policy.

These limitations indicate that outcome studies in social work education generally, and for the teaching and learning of communication skills in social work education specifically, must be improved. Robust study designs that support causal inferences through random allocation to intervention and control groups are a necessity. Steps to reduce threats to the internal validity of case-controlled studies should also be taken. The development of validated and objective measures which can be used consistently across future studies would make comparisons easier and future synthesis more meaningful. Methodological triangulation should also be considered. Follow-up studies to determine whether training benefits endure after the end of training and a thorough assessment of students’ ability to transfer skills into practice are urgently required. The inclusion of qualitative data in researching the teaching and learning of communication skills in social work education would facilitate exploration and explanation of the quantitative outcomes and enable the voices of the intended beneficiaries of the interventions under investigation and the experiences of stakeholders to be heard and acted upon. Finally, the theory of change appears to be assumed rather than clearly defined. Research that identifies the relevant substantive theories on which the teaching and learning of communication skills is based, and that develops our understanding of how and why interventions work, would also be helpful. To complement this systematic review, a realist synthesis would support the theoretical development of the teaching and learning of communication skills in social work education, unearthing the influence and impact that key stakeholders have on the teaching and learning process.

Footnotes

Acknowledgements

Thank you to my supervisory team and the stakeholders who contributed their views on this research project.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is supported by ESRC DTP funding [Grant number: ES/P000711/1].