Abstract

Objectives:

This study validated a 36-item Subjective Outcome Evaluation Scale (SOES) and examined the effectiveness of a positive youth development (PYD) program entitled “Tin Ka Ping Positive Adolescent Training through Holistic Social Programs” (TKP P.A.T.H.S. Project), which was implemented in mainland China in the 2016–2017 and 2017–2018 academic years.

Methods:

We collected data from 20,840 students in 30 secondary schools in mainland China on their views of program quality, implementer quality, and program benefits using the SOES.

Results:

The SOES showed good factorial, convergent, discriminant, and concurrent validity as well as internal consistency. In addition, the student respondents were generally satisfied with the program quality, implementer quality, and program benefits. Consistent with our hypothesis, junior grade students had more positive perceptions than did senior grade students.

Conclusion:

Utilizing the client satisfaction approach, this study validated the SOES and highlighted the value of PYD programs exemplified by the TKP P.A.T.H.S. Project in a non-Western context.

Adolescent well-being is an emerging global issue deserving greater attention. According to Bruha et al. (2018), around 15% of children and adolescents showed mental health problems. In view of the rising prevalence of mental health problems in adolescents, one key question is how to prevent adolescent mental health issues and reduce morbidity. The traditional focus of psychiatry and clinical psychology was on adolescent weaknesses leading to “storm and stress.” However, with the emergence of positive psychology, research under the positive youth development (PYD) framework has sought to understand and promote the competences and potentials of young people (Shek, Dou, et al., 2019). One common assertion of different PYD models is that by building up external assets (such as family bonding and community support) and/or internal assets (such as skills and values), young people will thrive with positive developmental outcomes (Shek, Dou, et al., 2019; Shek, Lin, et al., 2020).

Review studies found that PYD programs in different formats (e.g., camp programs, arts programs, and school- and curriculum-based programs) positively influenced youth development in a wide range of areas such as academic achievement, self-esteem, social relationships, emotional skills, resilience, and thinking capacity (Durlak et al., 2011; Waid & Uhrich, 2020). For instance, Durlak et al.’s (2011) review involving 270,034 students reported that school-based PYD programs promoted students’ positive development and reduced their problem behavior. In another review based on 97,406 students who participated in socioemotional interventions in the school context, Taylor et al. (2017) highlighted that the positive outcomes of PYD programs included not only immediate educational benefits but also improved developmental trajectories over time, such as graduation and safe sexual behaviors in the future. Clearly, these curriculum-based programs are important resources for social work with children and youth.

Nevertheless, effective PYD programs have been identified mainly in Western contexts, especially among U.S. adolescent samples. Despite a recent growing interest in PYD research in non-Western contexts such as South Africa, Brazil, and Hong Kong (Adams et al., 2019; Schwartz et al., 2017; Zhu & Shek, 2020), validated PYD programs in non-Western contexts are few. With specific reference to mainland China, Shek and Yu (2011) concluded, after a review of PYD programs in Asian countries, that the number of youth prevention programs was highly inadequate. This situation has not improved much after a decade. As Wiium and Dimitrova (2019) remarked, “PYD research has mainly been conducted within the US context” (p. 2).

In addition to the lack of PYD programs and related studies in mainland China, there are three other reasons why we should develop PYD programs and conduct PYD studies in China. The first reason is the generalizability of PYD programs and research findings across different populations. In 2018, the adolescent population (aged 10–19) in mainland China was 166.86 million, roughly 13.5% of the world’s adolescent population in the same age range (UNICEF, 2019). As such, it is essential to investigate whether existing claims about the effectiveness of PYD programs and the validity of PYD research findings also apply to Chinese adolescents. This point is essential as far as cultural sensitivity in social work is concerned.

The second reason is the generalizability across different cultures. While Western adolescents develop in societies with a strong emphasis on individual rights and personal autonomy, Chinese adolescents grow up under traditional Chinese socialization, which focuses on collectivistic interests and interdependence, as well as under Western influences that have emerged in recent decades along with rapid industrialization and globalization. Researchers have pointed out that parental control, social harmony, the expectation of obedience from children, and filial piety are intrinsic to Chinese parenting (Leung & Shek, 2020). As China has a unique history and culture of more than 5,000 years, it is important to ask whether Western PYD theories and programs rooted in individualistic ideologies are applicable to Chinese adolescents.

The third reason is that with rapid urbanization and industrialization in the past four decades, risk factors impairing holistic adolescent development have accumulated, and adolescent developmental problems have intensified in mainland China. For example, Jiao et al. (2016) remarked that “the psychological health problems of children and adolescents have been more and more serious recently” in China (p. 15). Yang et al. (2015) reviewed studies on suicidal behavior in Chinese young people and reported that the lifetime prevalence of suicidal attempts was 2.7%. Tang et al. (2020) reviewed 51 studies (

Tin Ka Ping Positive Adolescent Training Through Holistic Social Programs (TKP P.A.T.H.S.) Project in Mainland China

A notable PYD program in the Chinese context is the P.A.T.H.S. Project, which was initially implemented in Hong Kong to promote holistic development among local youths. This project incorporated 15 PYD attributes identified in effective PYD programs by Catalano et al. (2004), such as “bonding,” “emotional competence,” “resilience,” “self-efficacy,” “positive identity,” and “spirituality,” in its 120 curriculum-based junior secondary teaching units. Student participants took 40 units in each of the 3 years of junior secondary school, with each unit involving 30 minutes of teaching. Based on different evaluation strategies such as randomized group trial, one-group pretest–posttest design, subjective outcome evaluation based on a client satisfaction approach, and qualitative evaluation (e.g., student diaries and focus groups), findings have supported the effectiveness of the P.A.T.H.S. Project since its commencement in 2005 (Ma & Shek, 2019; Ma, Shek, & Leung, 2019; Shek, 2019; Shek & Zhu, 2020).

As the P.A.T.H.S. Project was very successful in Hong Kong and there was an urgent call for solid PYD programs in mainland China, the P.A.T.H.S. Project was introduced to mainland China with financial support from the Tin Ka Ping (TKP) Foundation. From the 2011–2012 academic year to the 2013–2014 academic year, the TKP P.A.T.H.S. Project was initially implemented in four secondary schools in four cities in East China: Shanghai, Yangzhou, Changzhou, and Suzhou. As the evaluation findings were very positive (Shek, Han, et al., 2014; Shek, Yu, et al., 2014), the project was further implemented in 30 schools in China from the 2015–2016 to the 2017–2018 academic years (i.e., the full implementation), after one year of intensive training was provided to over 450 school teachers as potential program implementers in the 2014–2015 school year. The junior secondary school curricula used in Hong Kong were adapted for local use by adopting simplified Chinese characters, Mandarin expressions, and teaching materials (e.g., examples and cases) that are conceptually similar but better fit the sociocultural features of mainland China. In addition, the research team developed senior secondary school curricula, and the project was extended to senior secondary school students.

To understand the program impacts in the full implementation stage, we conducted objective outcome evaluation, subjective outcome evaluation, and qualitative evaluation. As far as objective outcome evaluation is concerned, we conducted a quasi-experimental study with an experimental group (

Subjective Outcome Evaluation

In addition to the above two forms of evaluation, subjective outcome evaluation using a validated client satisfaction scale serves as an effective evaluation method. Subjective outcome evaluation assesses service recipients’ perceptions of the service received, the worker(s) providing the service, and the impacts of the service. Such an evaluation approach based on client satisfaction has a very long history (Gutek, 1978) and has been commonly used in many domains of human services including education, psychology, social work, counseling, clinical services, and nursing (Fraser & Wu, 2016; Sozer et al., 2019). For example, in the field of higher education, validated measures of course evaluation have been developed (e.g., Chen et al., 2015; Spooren et al., 2007). The client satisfaction approach has been widely used in the social work field (Fraser & Wu, 2016; Hsieh, 2012; Posmontier et al., 2019) as well as in the high school context (Kallestrup, 2018; Pavasajjanant, 2010).

There are several advantages of conducting subjective outcome evaluation using the client satisfaction approach. First, it is cost-effective and convenient to use after completing a program. Clients can complete the questionnaire within a short period, and service providers can get first-hand information about clients’ experiences in a timely manner. Second, as subjective outcome evaluation is not time-consuming and data collection does not involve high technical requirements, frontline professionals, such as teachers and social workers, can easily use this method to evaluate the service they have provided. Third, through the accumulation of client satisfaction data over time, the findings can reveal the perceived quality and effectiveness of a program under consideration (Shek & Sun, 2014; Shek, Yang, et al., 2020).

Despite these advantages and the extensive adoption of subjective outcome evaluation in different fields, the use of the client satisfaction approach in program evaluation has been challenged for its subjective nature. For example, participants may assess the service based on their interpersonal relationships with program implementers rather than on service quality, or give positive evaluations to show their cooperation and appreciation (Shek, 2014). The most critical issue is that positive subjective outcome evaluation findings do not necessarily indicate program effectiveness. As Weinbach (2005) commented, “the major problem of using client-satisfaction surveys as indicators of intervention effectiveness, or of quality of a service, is that satisfaction with services and successful intervention are not the same” (p. 38).

In view of these criticisms, it is essential to enhance the objectivity of subjective outcome evaluation in data collection and analysis by using validated and reliable instruments. In fact, if validated and reliable assessment tools are used, subjective outcome evaluation can yield insightful findings that are well correlated with objective outcome evaluation findings (Shek, 2014; Sun & Richardson, 2016). However, validated tools for subjective outcome evaluation are almost nonexistent in mainland China, especially in the secondary school sector. This major issue is closely related to the fact that published scientific papers based on subjective outcome evaluation are rare in the Chinese context, although there are ample studies in Western societies. Using “client satisfaction” as a search term, a search in PsycINFO on September 14, 2020, returned 7,271 citations. When “Chinese OR China” was added, only 148 citations were returned. In addition, a search using “subjective outcome evaluation” and “Chinese OR China” returned 87 pieces of work, most of which were carried out in Hong Kong. The few exceptions employed the client satisfaction approach to evaluate service effectiveness on different aspects (e.g., client satisfaction with services and service providers) in mainland China (Munro & Duckett, 2016; Xu & Du, 2018); however, the psychometric properties of the instruments were unknown.

Considering the above deficiencies in the existing literature, it is necessary to promote the client satisfaction approach in program evaluation in mainland China by using validated tools. To achieve this goal, this study attempted to examine the psychometric properties of the 36-item Subjective Outcome Evaluation Scale (SOES). We also used this scale for evaluating the TKP P.A.T.H.S. Project. Based on the conceptual framework widely used in the assessment of subjective outcomes in higher education and social services (Fraser & Wu, 2016; Shek et al., 2016; Shek, Yang, et al., 2020), the SOES used in this study had three dimensions including students’ perceptions on program quality such as the design of the curriculum (10 items), instructor performance such as their attitude and mastery of teaching skills (10 items), and program benefits such as the promotion of self-confidence and resilience (16 items).

Regarding the psychometric properties of the SOES, we examined the following research question:

For factorial validity, we expected that there would be support for the invariance of the three-factor structure of SOES (Hypothesis 1a). We also expected that there would be support for convergent validity (Hypothesis 1b) and discriminant validity (Hypothesis 1c). For concurrent validity, it was hypothesized that ratings in the SOES would be significantly associated with three external criteria, including whether the participants would recommend the program to others (Hypothesis 1d), join similar programs again in the future (Hypothesis 1e), and overall satisfaction (Hypothesis 1f). For reliability, we expected that the total scale and subscales would have adequate reliability (Hypothesis 1g).

With reference to the results of subjective outcome evaluation, we addressed the following two research questions:

Based on previous findings (Shek & Law, 2014; Shek, Zhu, et al., 2019; Zhu & Shek, 2020), it was expected that the majority of the respondents would have positive evaluations (Hypothesis 2).

As previous findings suggest that students at lower grades tended to have more positive views toward PYD programs than did students at the senior grades (Shek & Law, 2014), we hypothesized that junior high school students would have relatively more positive perceptions of program quality, program implementer quality, and program impacts (Hypotheses 3a, 3b, and 3c).

Method

To evaluate student participants’ perceptions of the TKP P.A.T.H.S. Project in the full implementation stage from 2015–2016 to 2017–2018 academic years, students responded to the SOES upon completion of the program each year. As the data collected in the 2015–2016 year have been analyzed and reported elsewhere (Shek, Lee, & Ma, 2018; Shek, Wu, & Law, 2018), this study utilized data collected in the 2016–2017 and 2017–2018 years.

Participants and Procedures

At the end of the second semester each year, each project school was asked to randomly select one or more classes of students who had joined the project to complete a questionnaire examining their subjective evaluations of the project. Students responded to the questionnaire voluntarily in their classrooms in the presence of a class teacher who explained the study purposes, voluntary participation, and the principles of anonymity and confidentiality. All the invited students agreed to complete the questionnaire. As this was part of the subject (i.e., collection of feedback at the end of the course), we sought consent from the students. In addition, the parents agreed that their children would join the activities (including the evaluation) of the project. In total, 20,840 completed questionnaires (10,977 in the 2016–2017 year and 9,863 in the 2017–2018 year) were collected by the project schools. Among these questionnaires, 14,829 were completed by students at junior grades (Grades 7–9), and 6,011 were completed by students at senior grades (Grades 10–12).

Measures

Modeling after existing measures on program participants’ subjective evaluations on program implementation (Shek et al., 2016; Shek & Ma, 2014), we developed a 36-item “SOES” to assess student participants’ views toward the project. The first 10 items measure perceived program quality, such as objectives and design of the program, on a 6-point scale (1 =

In addition to the SOES, three additional items were included to assess (1) whether the participants would recommend the program to others (1 =

Data Analysis

The primary psychometric property under study is the factorial validity of the SOES. We first examined the skewness and kurtosis of all items in the SOES to check normality. According to Curran et al. (1996), if requirements of normality are met (i.e., absolute values of skewness and kurtosis below 2 and 7, respectively), maximum likelihood (ML) estimation could be used in confirmatory factor analyses (CFA) with limited bias. Otherwise, ML with bootstrapping technique (2,000 resampling) can be used to reduce possible bias caused by normality violation (Arifin et al., 2012; Byrne, 2016).
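The screening rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' actual procedure: the function names `skewness_kurtosis` and `choose_estimator` are hypothetical, the moment-based estimators may differ slightly from the bias-corrected statistics reported by statistical packages, and the response data are invented.

```python
from statistics import fmean


def skewness_kurtosis(values):
    """Return (skewness, excess kurtosis) from population moments.

    Assumes the values are not all identical (variance must be nonzero).
    """
    n = len(values)
    mean = fmean(values)
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def choose_estimator(items, skew_limit=2.0, kurt_limit=7.0):
    """Apply the |skewness| < 2 and |kurtosis| < 7 rule to every item."""
    for responses in items.values():
        skew, kurt = skewness_kurtosis(responses)
        if abs(skew) >= skew_limit or abs(kurt) >= kurt_limit:
            return "ML with bootstrapping"
    return "ML"


# Invented responses on a 6-point scale (hypothetical item names).
symmetric_item = [1, 2, 3, 4, 5, 6]
ceiling_item = [6] * 95 + [1] * 5  # strongly left-skewed

estimator = choose_estimator({"q1": symmetric_item, "q2": ceiling_item})
```

Because one invented item piles up at the top of the scale, the rule selects bootstrapped ML here, which mirrors the logic applied to the implementer quality items.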

In the second step, we performed CFA to test the a priori three-factor structure (i.e., perceived program quality, implementer quality, and benefits) using the whole sample and different subsamples (e.g., junior secondary students and senior secondary students).

In the third step, multigroup CFA were conducted to test measurement invariance across grade level (i.e., junior vs. senior) as well as subsamples grouped based on case numbers (odd vs. even). We examined several forms of factorial invariance sequentially, including (1) configural invariance (baseline model with no constraints across subsamples), (2) weak factorial invariance (factor loadings were set to be equal across subsamples), (3) strong factorial invariance (item intercepts were further constrained to be equal), (4) structural covariance invariance (factorial variance and covariance were further set to be equal), and (5) strict factorial invariance (equality of item residuals was further imposed).

Several indices were employed to evaluate the overall model fit, including the “comparative fit index” (CFI), “Bentler-Bonett nonnormed fit index” (NNFI), “standardized root mean square residual” (SRMR), and “root mean square error of approximation” (RMSEA). A general rule is that values of CFI and NNFI ≥ .90 and values of SRMR and RMSEA ≤ .08 indicate adequate model fit (Kline, 2015). As the χ² difference test is biased when the sample size is large, we used a more practical criterion (change in CFI, or ΔCFI, equal to or below .01) to determine measurement invariance (Cheung & Rensvold, 2002).
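The two decision rules above (the fit cutoffs and the ΔCFI criterion) can be expressed as a short Python sketch. The helper names `adequate_fit` and `invariance_steps` are hypothetical, and the CFI values in the nested sequence are invented for illustration; they are not results from this study.

```python
def adequate_fit(cfi, nnfi, srmr, rmsea):
    """CFI and NNFI of at least .90, SRMR and RMSEA of at most .08."""
    return cfi >= 0.90 and nnfi >= 0.90 and srmr <= 0.08 and rmsea <= 0.08


def invariance_steps(cfi_by_model, max_change=0.01):
    """Compare each nested model with the less constrained model before it.

    Returns (model name, |delta CFI|, invariance supported) per step.
    Deltas are rounded to three decimals to avoid floating-point noise
    at the .01 boundary.
    """
    results = []
    for (_, prev_cfi), (name, cfi) in zip(cfi_by_model, cfi_by_model[1:]):
        delta = round(abs(cfi - prev_cfi), 3)
        results.append((name, delta, delta <= max_change))
    return results


# Invented CFI values for a nested sequence of models.
models = [
    ("configural", 0.950),
    ("weak", 0.950),
    ("strong", 0.948),
    ("strict", 0.930),
]
steps = invariance_steps(models)
```

In this invented sequence the weak and strong models pass the ΔCFI criterion, whereas the strict model (ΔCFI = .018) would not.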

The second aspect of the psychometric properties concerns the convergent and discriminant validity of the SOES. Convergent validity was assessed by the “average variance extracted” (AVE), where a value equal to or higher than .50 (i.e., the respective latent factor accounts for at least half of the variance in its observed items, with the remainder attributable to residuals) indicates good convergent validity (Fornell & Larcker, 1981; Hair et al., 2006). Discriminant validity was examined by comparing the AVEs of a pair of factors with the two factors’ shared variance, indexed by their squared correlation. If the AVEs of both factors are higher than their shared variance, there is support for the discriminant validity of the factors (e.g., Arifin et al., 2012).
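As a minimal sketch of these two checks, AVE can be computed as the mean of the squared standardized loadings of a factor, and the discriminant validity comparison as a test of each AVE against the squared interfactor correlation. The function names and the example loadings and correlation are hypothetical and are not taken from the study's tables.

```python
def average_variance_extracted(loadings):
    """Mean of the squared standardized factor loadings of one factor."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


def discriminant_validity_supported(ave_a, ave_b, correlation):
    """True when both AVEs exceed the shared variance (r squared)."""
    shared_variance = correlation ** 2
    return ave_a > shared_variance and ave_b > shared_variance


# Invented standardized loadings for a 10-item factor.
example_loadings = [0.80] * 10
example_ave = average_variance_extracted(example_loadings)

# Invented interfactor correlation of .70 (shared variance = .49).
supported = discriminant_validity_supported(0.63, 0.71, 0.70)
```

With ten loadings of .80, the AVE is about .64, above the .50 benchmark for convergent validity.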

Third, for concurrent validity, we examined the associations between participants’ evaluations toward the three facets in the SOES and their ratings on the additional three items using structural equation modeling (SEM) with bootstrapping technique (2,000 resampling). In the SEM model, three dimensions of the SOES were latent independent variables indicated by respective items, and the three satisfaction ratings were observed dependent variables.

Finally, we examined the reliability of the scale and subscales. Based on the whole sample, we examined “composite reliability” (CR) for each subscale by assessing factor loadings of the items included. According to Fornell and Larcker (1981), a value of .70 or higher indicates good reliability. Besides, internal consistency in terms of Cronbach’s αs and mean interitem correlations were calculated for each factor based on the whole sample to further check subscales’ reliability.
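The two reliability indices can be sketched as follows. Composite reliability is written here in its usual form for standardized loadings, (Σλ)² / [(Σλ)² + Σ(1 − λ²)], and Cronbach's α is computed from item and total-score variances. The function names and all data are hypothetical.

```python
from statistics import variance


def composite_reliability(loadings):
    """CR from standardized loadings, with error variance 1 - loading**2."""
    total = sum(loadings)
    error = sum(1 - loading ** 2 for loading in loadings)
    return total ** 2 / (total ** 2 + error)


def cronbach_alpha(items):
    """Cronbach's alpha; `items` holds one list of scores per item,
    with respondents in the same order in every list."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Invented values: ten loadings of .80, and three items answered
# by four respondents.
example_cr = composite_reliability([0.80] * 10)
example_alpha = cronbach_alpha([[1, 2, 3, 4], [2, 3, 4, 5], [1, 2, 3, 4]])
```

In this invented case the CR is about .95, and because the three items move in lockstep, α equals 1.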

After clarifying the psychometric properties of the SOES, profiles of participants’ responses in SOES were also analyzed through descriptive statistics. Finally, a one-way multivariate analysis of variance was conducted to test whether junior high school and senior high school students differed in their perceptions of the program, workers, and benefits.
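For readers unfamiliar with the multivariate test statistic, the sketch below computes Wilks' Λ for a one-way design as the ratio det(W) / det(T), where W is the pooled within-group sum of squares and cross-products (SSCP) matrix and T is the total SSCP matrix. It is a pure-Python illustration with invented two-variable data and hypothetical function names; a real analysis would rely on a statistical package and would also report the associated F test.

```python
def mean_vector(rows):
    """Column means of a list of equal-length rows."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def sscp(rows, center):
    """Sum of squares and cross-products of rows around `center`."""
    p = len(center)
    m = [[0.0] * p for _ in range(p)]
    for row in rows:
        d = [x - c for x, c in zip(row, center)]
        for i in range(p):
            for j in range(p):
                m[i][j] += d[i] * d[j]
    return m


def det(matrix):
    """Determinant by Gaussian elimination with partial pivoting."""
    a = [row[:] for row in matrix]
    n = len(a)
    result = 1.0
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(a[r][c]))
        if a[pivot][c] == 0:
            return 0.0
        if pivot != c:
            a[c], a[pivot] = a[pivot], a[c]
            result = -result
        result *= a[c][c]
        for r in range(c + 1, n):
            factor = a[r][c] / a[c][c]
            for k in range(c, n):
                a[r][k] -= factor * a[c][k]
    return result


def wilks_lambda(groups):
    """Wilks' lambda = det(within SSCP) / det(total SSCP)."""
    all_rows = [row for group in groups for row in group]
    p = len(all_rows[0])
    total = sscp(all_rows, mean_vector(all_rows))
    within = [[0.0] * p for _ in range(p)]
    for group in groups:
        w = sscp(group, mean_vector(group))
        for i in range(p):
            for j in range(p):
                within[i][j] += w[i][j]
    return det(within) / det(total)


# Invented scores on two outcome variables for two groups.
junior = [[1, 2], [2, 1], [3, 3], [2, 4]]
senior = [[11, 12], [12, 11], [13, 13], [12, 14]]
example_lambda = wilks_lambda([junior, senior])
```

Λ ranges from 0 to 1, with smaller values indicating greater separation between group mean vectors; the well-separated invented groups above give a value close to 0.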

Results

Normality Tests

For items in the subscales of perceived program quality and benefits, absolute values of skewness and kurtosis were below 2 and 7, respectively, suggesting that these items can be regarded as following a normal distribution (Curran et al., 1996). However, absolute skewness values for the 10 items in the subscale of implementer quality were between 2.11 and 2.46, and kurtosis values of three items in this subscale were between 7.09 and 7.77. Thus, items in this subscale had a moderately nonnormal distribution. Given that the normality assumption was violated, ML estimation with the bootstrapping technique (2,000 resampling) was used in CFA to reduce bias (Arifin et al., 2012).

Psychometric Properties of the SOES

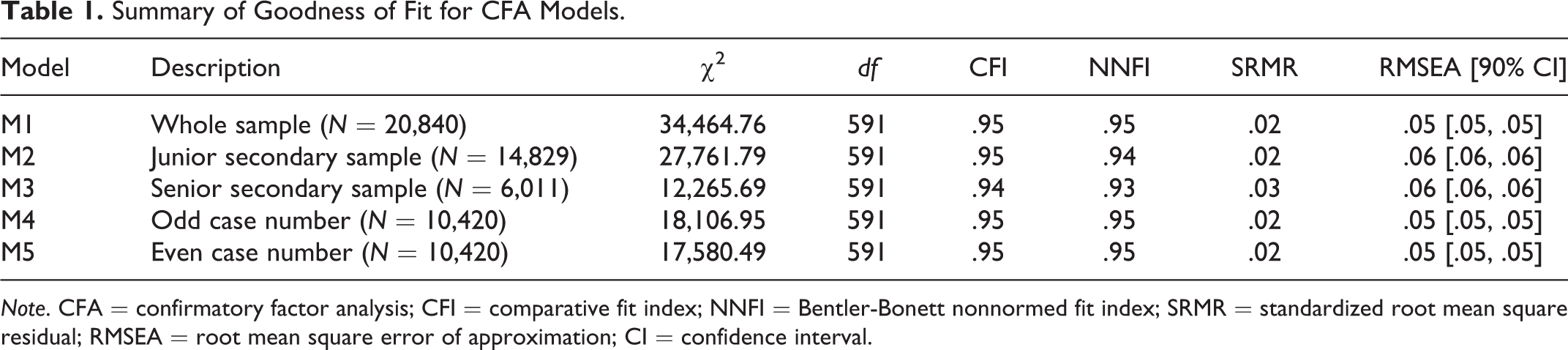

As shown in Table 1, the three-factor structure model fitted the data well (CFI ≥ .94; NNFI ≥ .93; SRMR ≤ .02, and RMSEA ≤ .06), no matter which sample (the whole sample or different subsamples) was used. Therefore, the three-factor structure of the SOES was well-established.

Table 1. Summary of Goodness of Fit for CFA Models.

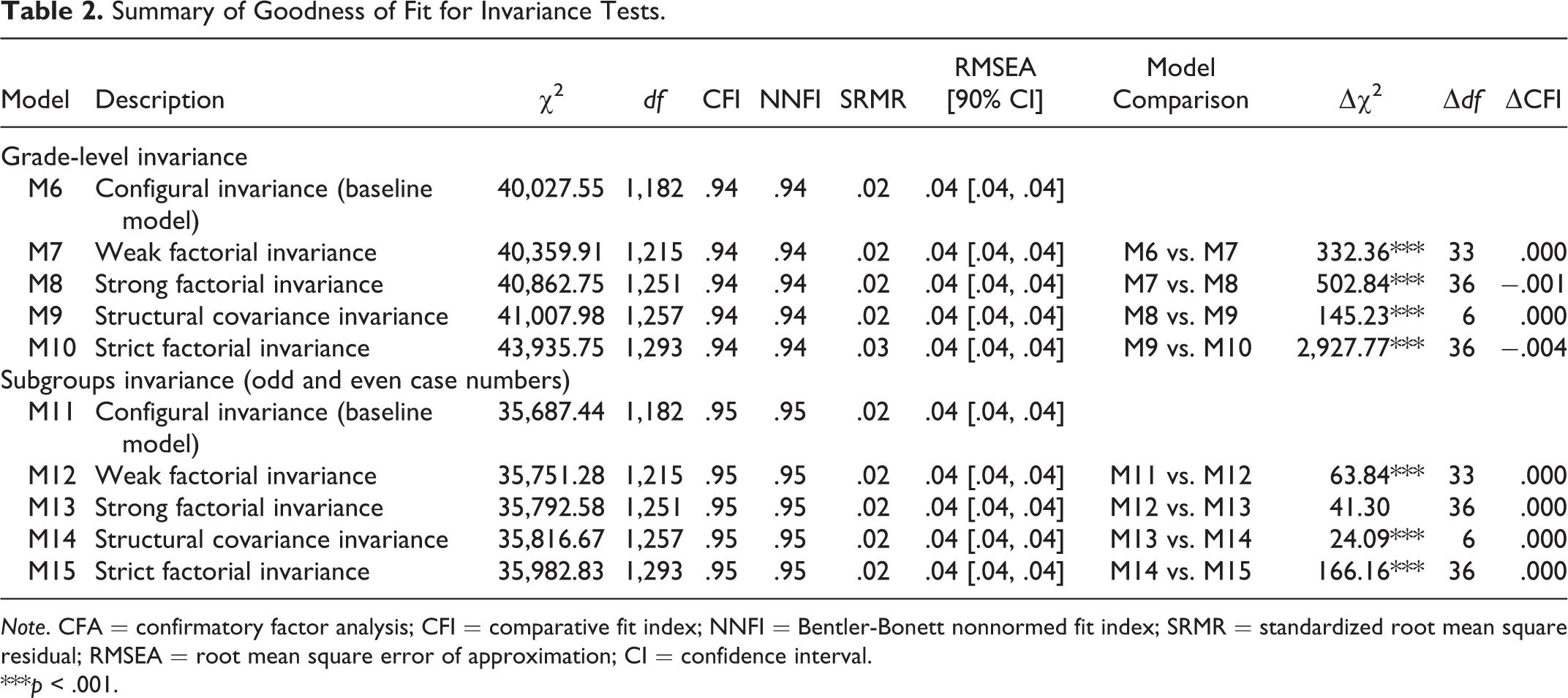

We further tested measurement invariance across the grade levels by adding equality constraints step by step in a series of nested models (see Table 2). First, Model 6 without equality constraints well fitted the data (

Table 2. Summary of Goodness of Fit for Invariance Tests.

Using identical procedures, measurement invariance was tested between two subsamples formed based on case numbers (“odd” or “even”). Results are also presented in Table 2. All models showed adequate goodness of model fit (CFI = .95, NNFI = .95, SRMR = .02, RMSEA = .04). Most importantly, CFI remained unchanged (ΔCFI = .000) when equality constraints were sequentially imposed, suggesting invariant factor loadings, item intercepts, structural covariance, and item residuals between the “odd” and “even” subsamples. In summary, the findings of the invariance tests supported factorial invariance of the three-factor structure of SOES. Thus, Hypothesis 1a was supported.

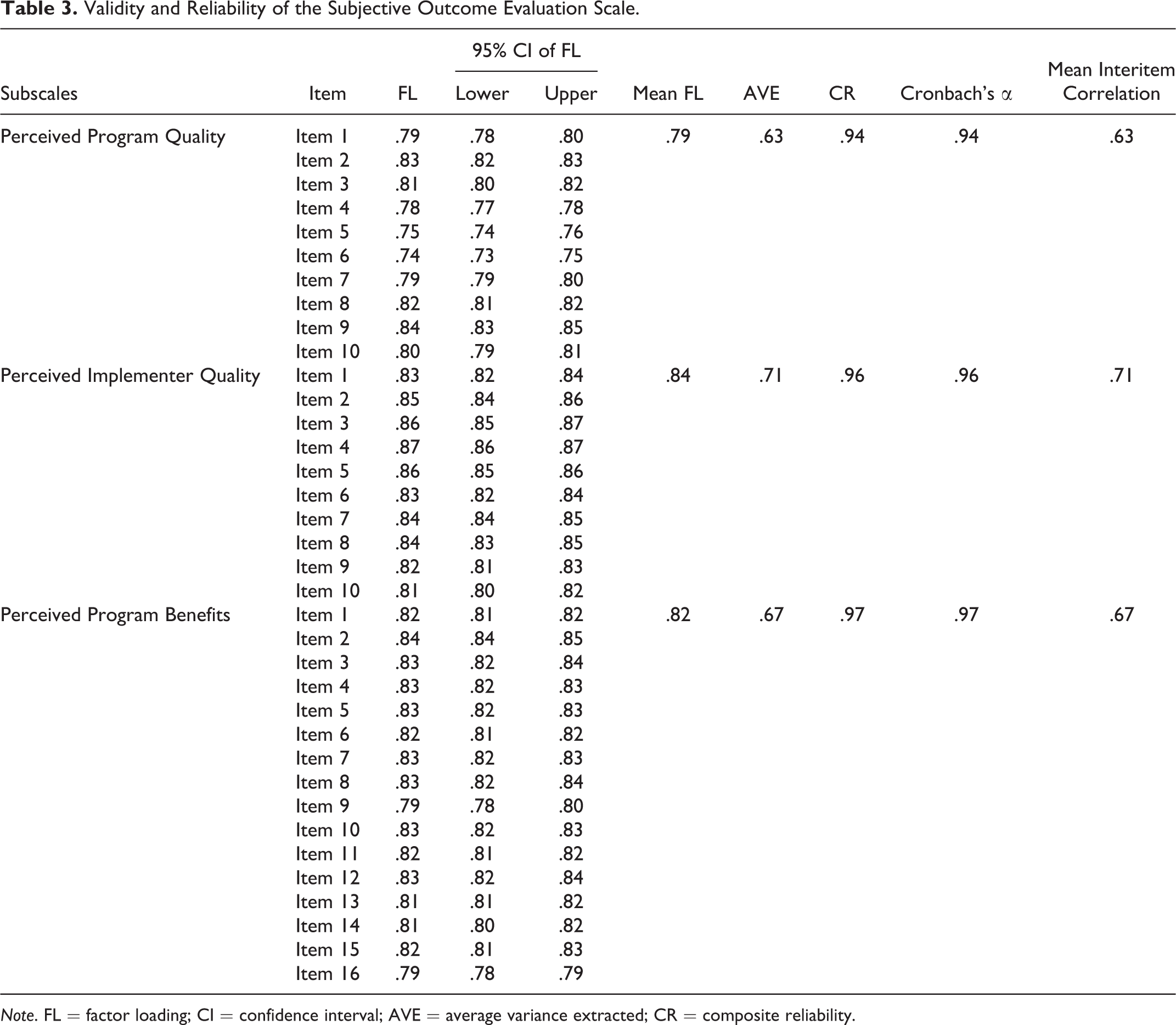

Convergent validity indicators were calculated based on CFA using the whole sample. As shown in Table 3, the “average variance extracted” (AVE) calculated based on factor loadings (all ≥ .70) was .63, .71, and .67 for the three factors, respectively, indicating that over 60% of the variance in the observed items can be accounted for by the respective latent factor rather than by residuals. In addition, there were significant correlations between perceived program quality and implementer quality (

Table 3. Validity and Reliability of the Subjective Outcome Evaluation Scale.

Discriminant validity was checked by comparing the AVEs of any pair of factors with the shared variance between them, as indicated by the squared correlation coefficient. If the AVEs are larger than the shared variance, each latent construct explains more variance in its own items than it shares with the other construct, suggesting adequate discriminant validity (Arifin et al., 2012; Fornell & Larcker, 1981). In this study, the aforementioned correlation coefficients (

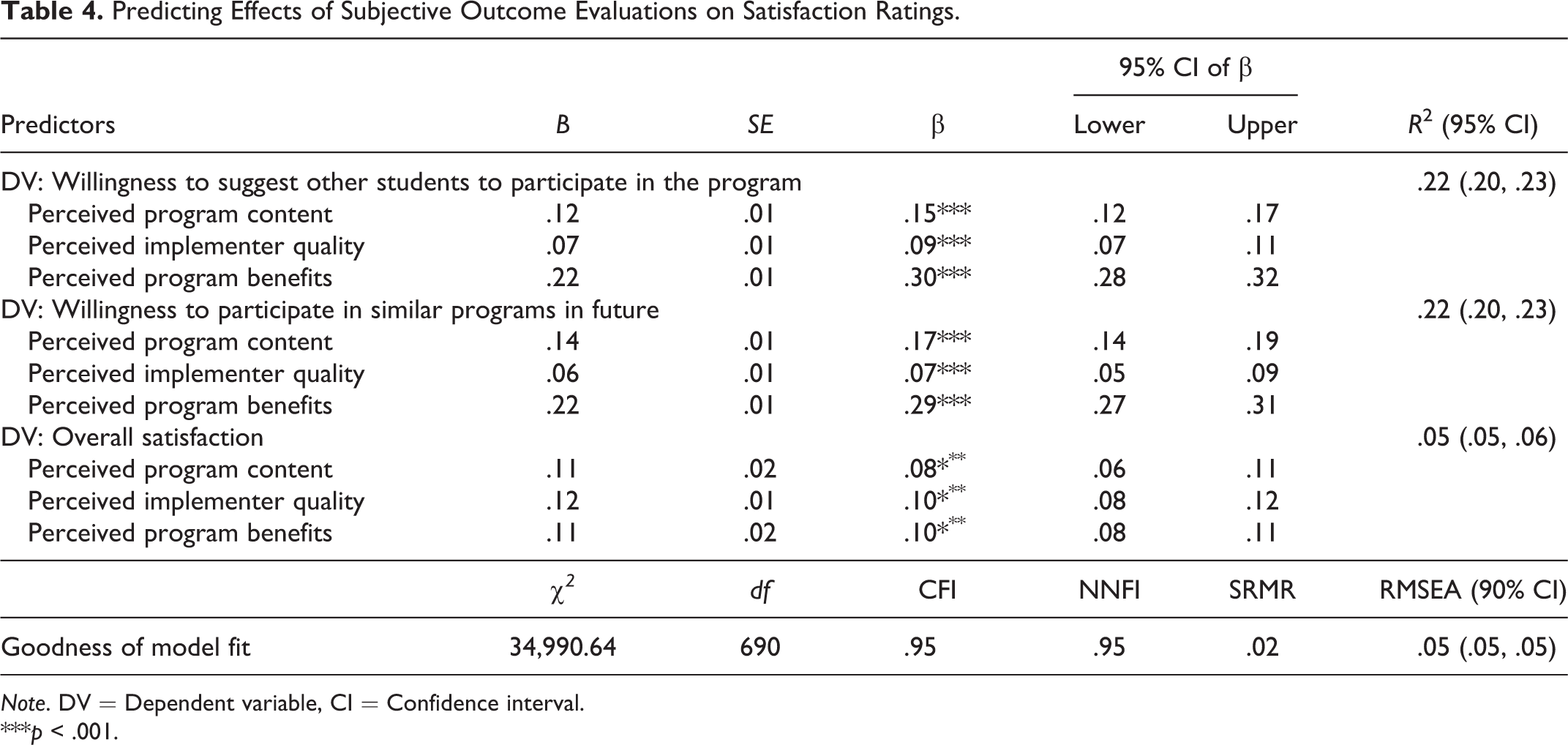

For the concurrent validity of the scale, we performed SEM to understand how the SOES measures predicted the three criterion measures (i.e., recommending the program to others, joining similar programs, and overall satisfaction). As shown in Table 4, the model showed adequate goodness of fit, with CFI and NNFI equal to .95 and SRMR and RMSEA below .06. Results indicated significant predictive effects of the three latent variables (i.e., “perceived program quality,” “perceived implementer quality,” and “perceived program benefits”) on “participants’ willingness to suggest other students to participate in the program” (

Table 4. Predicting Effects of Subjective Outcome Evaluations on Satisfaction Ratings.

For the reliability of the SOES measures, the CR calculated based on factor loadings was .94, .96, and .97 for the three factors, respectively, all of which exceeded the rule-of-thumb value of .70, thereby indicating good reliability of the scale (Fornell & Larcker, 1981). Finally, good internal consistency was indicated by Cronbach’s αs (≥ .94) and mean interitem correlations (.63–.71; see Table 3). Thus, Hypothesis 1g was supported.

Profiles of Participants’ Subjective Evaluation

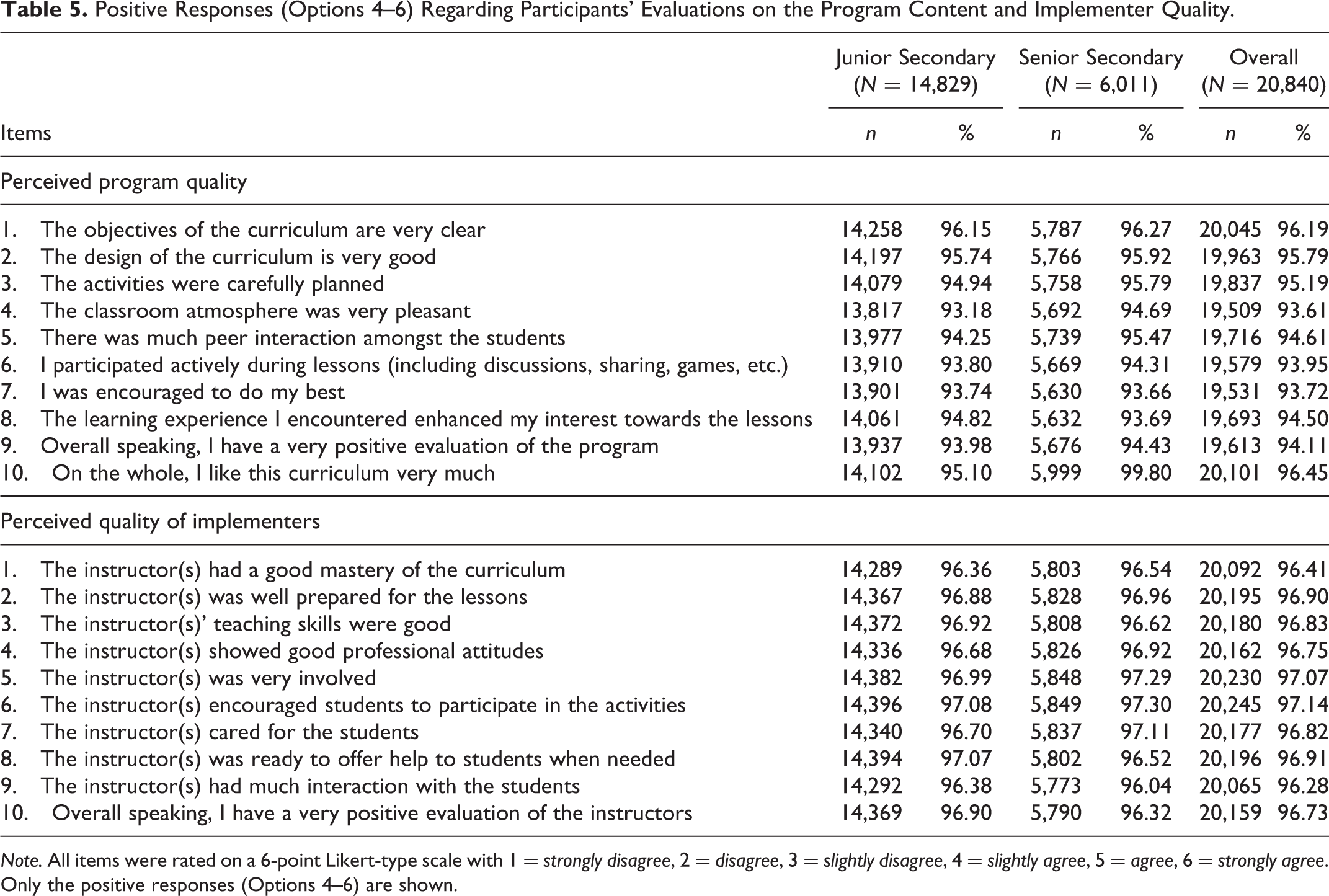

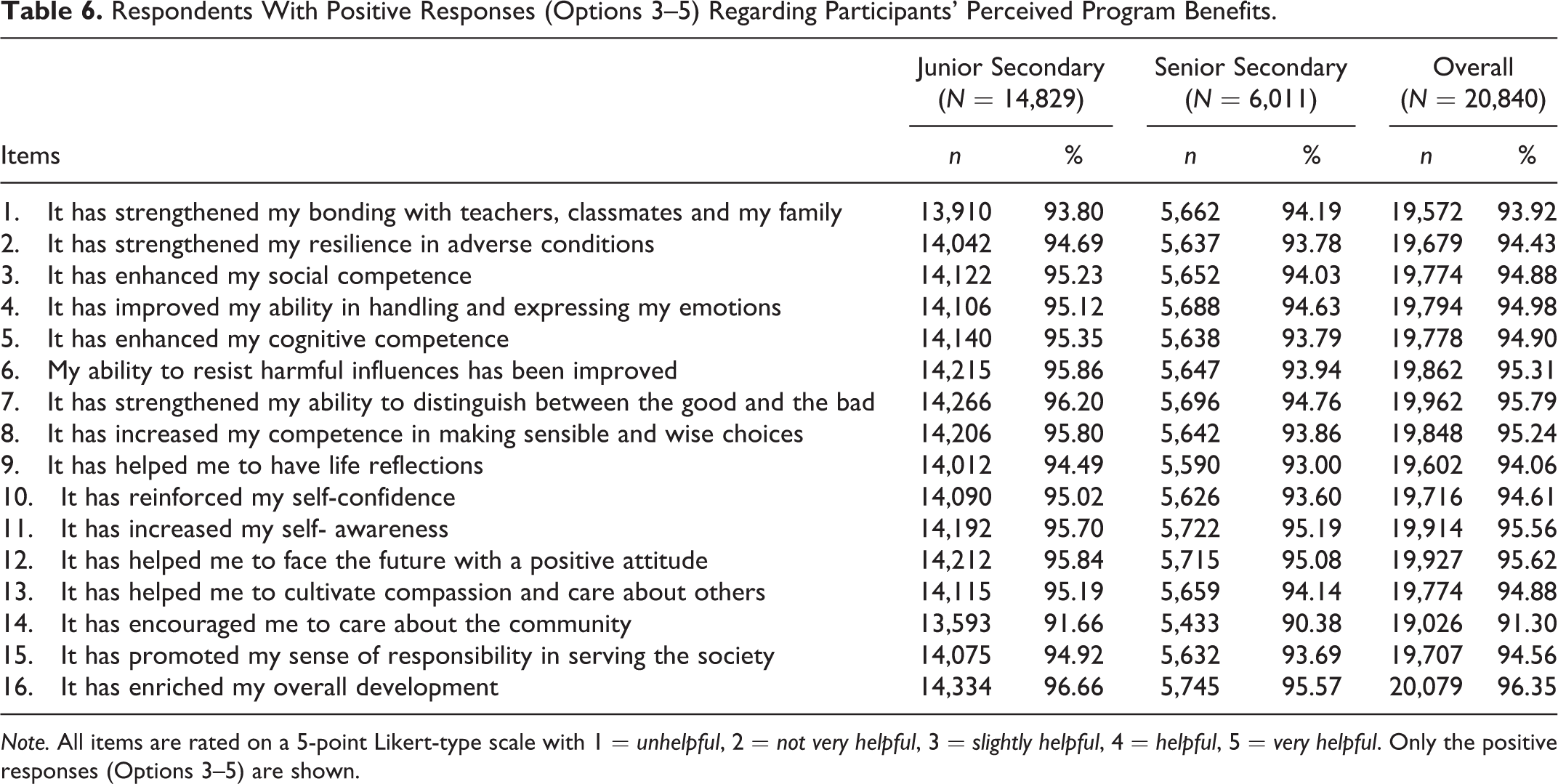

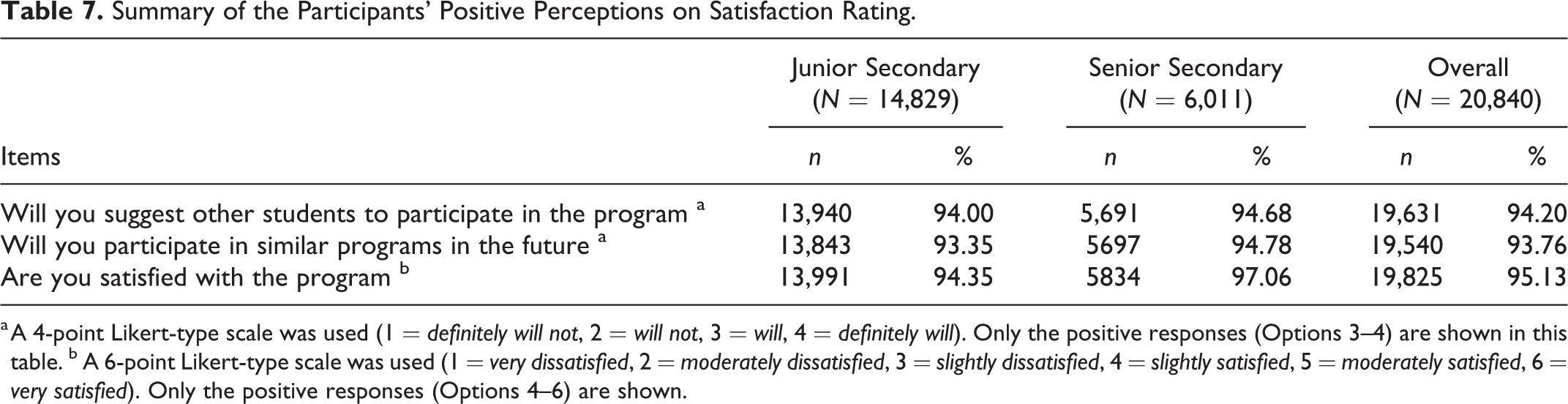

Participants’ positive responses toward the three aspects in the SOES and their satisfaction ratings by grade level are summarized in Tables 5–7. Over 90% of the participating students evaluated the project content positively. For instance, more than 95% of them indicated that the curriculum design was very good and that they liked the curriculum very much. Similarly, a very high percentage of respondents held positive perceptions of the program implementers. For instance, more than 96% of them gave positive evaluations of all the different aspects of implementer quality (e.g., preparation, teaching skills, attitudes, and involvement). As for the perceived benefits, the feedback was also predominantly positive. Over 90% of respondents agreed that they had benefited from program participation in all the listed aspects (e.g., resilience, self-confidence, and compassion). Student participants (over 93%) also gave very favorable ratings on the three items related to their overall satisfaction with the program. These positive evaluations supported Hypothesis 2.
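The percentages reported above are shares of respondents choosing a positive option. A minimal sketch of that tabulation is given below, assuming that options 4–6 count as positive on a 6-point item, as indicated in the table caption for program content and implementer quality. The function name and the responses are hypothetical.

```python
def percent_positive(responses, positive_options):
    """Percentage of responses that fall in the positive options."""
    positive = sum(1 for response in responses if response in positive_options)
    return 100.0 * positive / len(responses)


# Invented responses to one 6-point item.
example_responses = [6, 5, 4, 3, 2, 1]
example_share = percent_positive(example_responses, {4, 5, 6})
```

For items scored on a different scale, only the set of positive options passed to the function would change.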

Table 5. Positive Responses (Options 4–6) Regarding Participants’ Evaluations on the Program Content and Implementer Quality.

Table 6. Respondents With Positive Responses (Options 3–5) Regarding Participants’ Perceived Program Benefits.

Table 7. Summary of the Participants’ Positive Perceptions on Satisfaction Rating.

a A 4-point Likert-type scale was used (1 =

Differences in Subjective Ratings Across Grade Level

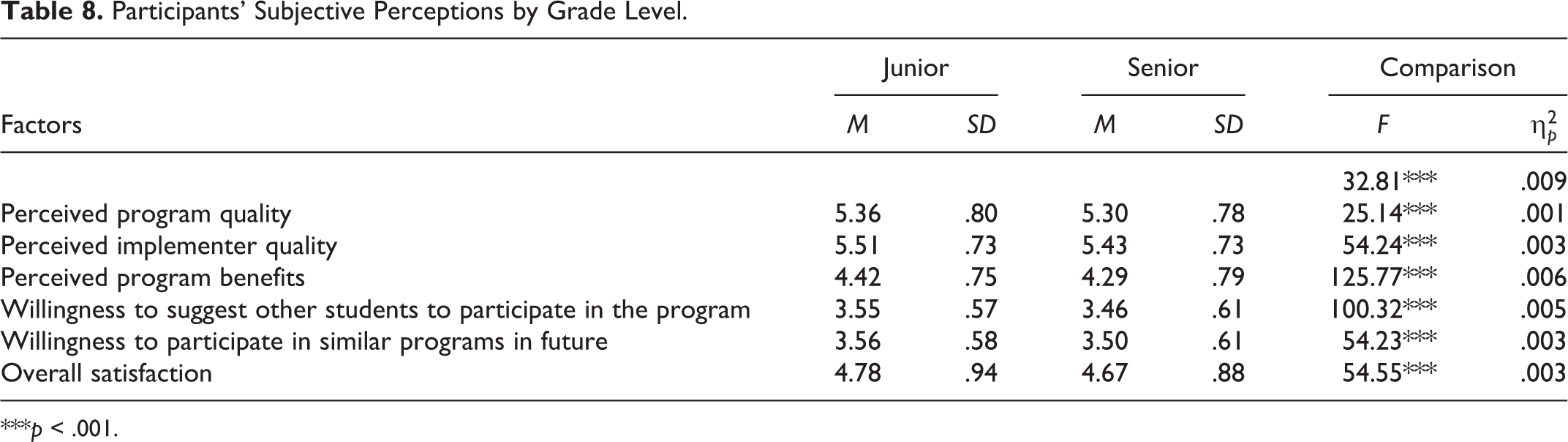

As shown in Table 8, subjective perceptions were significantly different between students at junior and senior grades (Wilk’s λ = 32.81,

Table 8. Participants’ Subjective Perceptions by Grade Level.

Discussion and Applications to Practice

Based on a review of PYD programs in Asian countries, Shek and Yu (2011) concluded that there is a severe lack of validated youth prevention and PYD programs in mainland China. Catalano et al. (2012) similarly pointed out that evidence-based adolescent prevention programs are sparse in non-Western contexts. Hence, the TKP P.A.T.H.S. Project represents a timely response and a pioneering program aiming to promote the holistic development of adolescents in mainland China. There is a need to evaluate the effectiveness of the program because the presence of the program does not mean that it is effective. While earlier studies support the effectiveness of the program (Shek, Han, et al., 2014; Shek, Zhu, et al., 2019), the related samples are not large. Thus, this study provides additional robust support for the effectiveness of the TKP P.A.T.H.S. Project by analyzing the subjective perceptions of a large sample of student participants. With particular reference to subjective outcome evaluation, it is noteworthy that there is no validated measure in the field in mainland China. Hence, another contribution of this study is the validation of a well-articulated SOES that can be used by social work practitioners and researchers to assess client satisfaction in the future.

Regarding the validation of the SOES, findings underscore good psychometric properties of the scale. First, the findings strongly support the three-factor conceptual framework with good factorial invariance across different subsamples. Second, the scale showed good convergent and discriminant validity. Third, the concurrent validity of the SOES was supported by close associations between the three factors in the SOES and the three criteria. Finally, the SOES and its subscales were internally consistent. Furthermore, the large sample of students from different grades enhanced the generalizability of the findings. These observations strongly suggest that researchers and practitioners can use the SOES objectively to assess the effectiveness of PYD programs. This is important because rigorous program evaluation using validated assessment tools is a crucial element of evidence-based practice in the field of PYD, which is grossly lacking in mainland China (Shek & Yu, 2011; Weersing, 2005).

In this study, the effectiveness of the TKP P.A.T.H.S. Project was evidenced by the student participants’ overwhelmingly positive perceptions of program quality, implementer quality, and benefits of the project. These findings are consistent with previous studies, which also showed that the project was well received by the students (Shek, Han, et al., 2014; Shek, Wu, & Law, 2018; Shek, Zhu, et al., 2019). Several factors may account for these observations. First, as the topics (such as emotional management and social competence) were relevant to adolescent developmental needs, students were interested in the program content. Second, as we emphasized the application of the knowledge and experience gained in class, students saw the practical relevance of the program in their lives. Third, as the program emphasized experiential learning, reflective learning, and active interaction among the students and teachers, the student-centered pedagogies significantly increased students’ intrinsic motivation to actively participate in the project activities. Fourth, with solid training (e.g., Shek, Leung, & Wu, 2017; Shek, Zhu, & Leung, 2017), the teachers were able to utilize interactive teaching styles, such as self-disclosure and encouragement of sharing among the students, which further promoted students’ interest and engagement in the program. These conjectures are supported by students’ sharing in their diaries that they enjoyed and appreciated the learning experience in this project because the project was educative and practical and the teaching was creative and stimulating (Shek, Zhu, et al., 2019).

Although both junior and senior secondary school students showed very positive perceptions of program quality, implementer quality, and program benefits, junior grade students showed significantly more positive evaluations than did senior grade students. This finding is consistent with previous evaluation results for the P.A.T.H.S. Project (e.g., Shek & Law, 2014). Indeed, Western studies also suggest that the effect sizes of youth intervention programs (e.g., social and emotional learning) were negatively correlated with participants’ age (Durlak et al., 2011). January et al. (2011) also noted that early interventions were more effective than interventions with older students. There are several possible explanations for the findings. First, younger students may be more likely to regard the experiential and interactive learning activities as novel and interesting, which would increase their positive evaluation. Second, with cognitive maturation, senior grade students may favor more intellectual forms of learning, such as debates and projects. It might also be more difficult for older adolescents to engage in collaborative and reflective learning. Nevertheless, some studies show that PYD intervention effects were not dependent on participants’ age (Ciocanel et al., 2017). Given the inconclusive picture, future research efforts are warranted to understand how students from different grades perceive different forms of pedagogies and whether they evaluate PYD programs in different ways.

The present findings have several important implications. First, the positive evaluations suggest that curriculum-based PYD interventions are as applicable to mainland Chinese adolescents as they are to adolescents in the West (Ciocanel et al., 2017; Durlak et al., 2011). Thus, educators and youth workers in mainland China, such as teachers and school social workers, are encouraged to design PYD curricula and build them into routine school education. This implication is meaningful because it is essential to cultivate life skills and inner strengths among adolescents (Shek, Lin, et al., 2020), and findings have suggested the contribution of PYD attributes to positive adolescent development in mainland China (e.g., Zhou, Shek, & Zhu, 2020; Zhou, Shek, Zhu, & Dou, 2020).

Second, this study also reinforces the value of subjective outcome evaluation in assessing program impacts. Primarily, this study provides a validated assessment tool with three facets that fit the conceptual framework commonly adopted in the client satisfaction approach (Fraser & Wu, 2016; Shek, Yang, et al., 2020). Although the present SOES was validated in a school educational context, we argue that the PYD program can be generalized to other youth service contexts, such as community youth service and the social work field, as long as sufficient training is provided for prospective program implementers. In this case, the SOES can also be adapted (e.g., by changing wording that was tailored to the school context) and utilized in these other youth service contexts. In fact, the P.A.T.H.S. program in Hong Kong has been used in community contexts by social workers (Ma & Shek, 2019; Ma, Shek, & Chen, 2019; Ma, Shek, & Leung, 2019). Besides, subjective outcome evaluation gives program participants opportunities to express their first-hand experiences and voice their views and, at the same time, gives program designers and implementers timely evaluation data from different stakeholders (Shek, 2013). Most importantly, this evaluation method is relatively easy and convenient to apply in the routine practice of the implementers. As it is economical and easy to administer, subjective outcome evaluation can be regarded as an initial step, to be followed by randomized group trials, which are costly and complicated.

Third, our findings suggest that the beneficial impacts of PYD programs may be dependent on participant characteristics such as their grade level. It is possible that younger students enjoy more benefits from their participation in PYD programs. Obviously, more studies need to be carried out to further address this issue, and educators, as well as youth workers, should pay attention to targeting students’ developmental needs and features in developing and implementing PYD programs. In future studies, additional demographic and contextual factors, such as student gender, family economic status, and school climate, should be considered to further investigate the differences in responses and the contexts in which the PYD program is more or less likely to be effective.

Despite the pioneering nature of the study in mainland China, it has several limitations. First, although the sample is large, it was drawn from 30 project schools. Therefore, there is a need to implement the project and collect data from more schools in different parts of China to make the findings more generalizable. Second, although the use of three measures can be regarded as adequate, it would be helpful to use more measures to assess the criterion-related validity of the SOES. In particular, it would be theoretically and practically enlightening to examine how SOES scores predict changes in objective outcomes (such as mental health) over time. Establishing the relationship between subjective outcome evaluation findings and objective evaluation findings (e.g., pre- and posttest comparison) would further confirm that the client satisfaction approach can serve as a reliable evaluation strategy in different fields. In addition, future research could examine the test–retest reliability of the scale. Third, the study only collected subjective outcome evaluation data from student participants. A more complete picture would emerge if data from other stakeholders, such as program implementers, were also collected. Additionally, qualitative data could shed light on the subjective experiences underlying the quantitative statistics, such as why junior grade students gave more positive ratings than did senior grade students.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was conducted with ethics approval from the Human Subjects Ethics Subcommittee of The Hong Kong Polytechnic University. This research and the P.A.T.H.S. Project in mainland China are financially supported by the Tin Ka Ping Foundation.