Abstract

Background

Learning from feedback is an important part of skill improvement. A common assumption is that failure offers superior learning opportunities when compared to success. However, this assumption remains underexplored in natural time-constrained settings.

Objective

To examine the impact of self-analysis on performance improvement in online speed chess, with a focus on whether analyzing wins versus losses differentially predicts gains in player ratings.

Methods

Using observational data from roughly 2 million online speed chess games, we studied the association between analysis and performance using regression.

Results

Analyzing game outcomes was positively associated with rating improvement, with stronger effects observed when players analyzed wins rather than losses. The total number of games played showed only a weak association with improvement, indicating that repetition of an activity alone is insufficient without reflective practice.

Conclusions

The findings challenge the notion that failure is inherently more instructive than success, suggesting instead that success-based analysis may also offer opportunities for learning improvement. While based on observational data, this study contributes to understanding how feedback mechanisms support learning and performance, particularly in domains requiring rapid, repeated decisions under time constraints.

Background

People can benefit from information about past performances in a variety of activities, but there is evidence that some people overlook it. The ‘ostrich effect’ describes how people avoid low- or no-cost feedback (Webb et al., 2013); for example, in financial matters, people are less likely to consult information during market downturns (Olafsson & Pagel, 2017; Sicherman et al., 2016). People also avoid negative health information related to diet and alcohol consumption when it may affect their sense of identity or make them feel uncomfortable (Barbour et al., 2012). However, much of the literature focuses on information about goal progress, and on the observation that avoiding information is primarily motivated by a desire to preserve a sense of self-worth (Webb et al., 2013) or to avoid information that causes stress and discomfort (Karlsson et al., 2009).

It is widely assumed that people can learn from failure, and that the ostrich effect is a hindrance to improvement and skill development because it prevents us from learning from mistakes (Metcalfe, 2017). While this may be true in the abstract, experimental evidence suggests that people may learn less from feedback about failure than previously assumed (Eskreis-Winkler & Fishbach, 2019). The explanations for this seem to be both cognitive and emotional. Cognitively, failures can be more difficult to process in a way that lends itself to future success, because turning a failure into useful information often requires more effort. Emotionally, failure can undermine self-esteem and, as a result, can be harder to process and retain (Eskreis-Winkler & Fishbach, 2022).

Early in the development of a skill, just ‘doing’ may efficiently advance learning and skill acquisition, but over time a greater investment in deliberative practice is necessary to continue the learning process (Ericsson et al., 1993). At the same time, research on deliberative practice suggests that there may be limits to what can be gained through studied reflection on performance (Macnamara et al., 2014). Understanding skill acquisition therefore seems to require understanding the balance between two processes: the improvement that comes from simply performing an activity, and the further learning that comes from careful deliberation on that activity (and the form that deliberation takes).

In this exploratory study, we investigate learning from information in the context of playing a game, specifically online speed chess. In recent years chess has seen considerable growth in online play (Keener, 2022). Online games typically have short time controls (for example, both players may have 5 minutes to complete all their moves in a game), and chess is an example of a sequential and cognitively demanding decision-making activity. On most online chess platforms it is possible for players to analyze their games after they play them. This can involve reviewing each move, typically supported by a chess engine that identifies the impact of moves on the game state. For example, a chess engine can identify a significant mistake (‘blunder’) that the player can then learn from and use as a guide for future play. Using a subset of data from an online chess platform, we ask two questions: 1) do analyses of wins have the same impact on future performance as analyses of losses? And 2) does playing games (just ‘doing’) have a greater positive impact on improvement than analyzing games (‘deliberative practice’)?

Methods

Data Source

We used data from Lichess.org through their application programming interface (API). Lichess is an open-source online chess platform that allows people to play chess without cost. It has been used previously to study decision-making style (McIlroy-Young et al., 2021) and the impact of social status on decision making (Tay, 2023), and to compare expert and non-expert behaviour (Chowdhary et al., 2023).

The first step involved downloading an entire month of games (May 2023). We then filtered for blitz games (3-5 minutes per player). We also restricted our search to games involving players with a chess rating between 1600 and 1800 on the Glicko rating scale (Glickman, 1999). This rating is calculated based only on games played within Lichess. We chose this range because these players are stronger than beginners but still have considerable room to improve their game. This resulted in 35,815 unique players.

We then used the Lichess API to request details about all games of these players between May 1 2023 and May 31 2024. In order to avoid rate limiting restrictions on using the API, instead of using all 35,815 players, we used a two-stage cluster sampling approach. First, we randomly sampled 6,000 players. We then sampled up to 90 games per month for each player. We also restricted our analysis to players who were active over the entire period, including only those who played at least 1 blitz game in every month of the period. The final step involved cleaning the data by excluding games tagged as cheating and games that did not include any moves (likely due to a dropped internet connection). In order to avoid data loss through rate limiting, we added a small delay of 30 seconds between the extraction of each player’s data. The end result was 2,137 players and 1,955,774 games. Downloading the games using the API took roughly 46 hours.
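The two sampling stages and the activity filter described above can be sketched as follows. This is a minimal illustration on in-memory data, not the code used in the study: the function names, the `games_by_player` structure, and the `date` field (an ISO date string) are assumptions made for the example, and the actual retrieval through the Lichess API is not shown.

```python
import random
from collections import defaultdict


def two_stage_sample(games_by_player, n_players, max_games_per_month, seed=0):
    """Stage 1: sample players. Stage 2: sample a capped number of games per player-month.

    games_by_player is a hypothetical dict mapping a player id to a list of
    game dicts, each with a 'date' string such as '2023-05-14'.
    """
    rng = random.Random(seed)
    # Stage 1: simple random sample of players (the clusters).
    player_ids = sorted(games_by_player)
    chosen = rng.sample(player_ids, min(n_players, len(player_ids)))

    sample = {}
    for pid in chosen:
        # Group this player's games by calendar month, e.g. '2023-05'.
        by_month = defaultdict(list)
        for game in games_by_player[pid]:
            by_month[game["date"][:7]].append(game)
        # Stage 2: keep at most max_games_per_month games from each month.
        kept = []
        for month in sorted(by_month):
            month_games = by_month[month]
            k = min(max_games_per_month, len(month_games))
            kept.extend(rng.sample(month_games, k))
        sample[pid] = kept
    return sample


def played_every_month(games, required_months):
    """True if the player has at least one game in every required month."""
    months = {g["date"][:7] for g in games}
    return all(m in months for m in required_months)
```

With the values reported above, the call would use `n_players=6000` and `max_games_per_month=90`, followed by `played_every_month` over the 13 months from `'2023-05'` to `'2024-05'`.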

We extracted the Glicko rating of both players in each game, the date of play, how the game ended, who won, and whether or not the game was evaluated with the assistance of a chess computer. This latter measure is used to determine whether a player analyzed their game. This is a very blunt measure of analysis. First, it does not capture analyses done without computer assistance; a player who analyzes their game without computer assistance will be mischaracterized as a non-analyzer. Second, it does not indicate which of the two players in a game performed the analysis, only that it was analyzed by someone. As such, some players will be characterized as having analyzed their game without having done so. Importantly, both of these issues bias our results toward the null; that is, whatever effect we see is likely an underestimate of the true effect. This is because misclassification in either direction adds statistical noise to the analysis variable, which weakens associations between analysis and performance.
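The attenuation argument can be illustrated with a small simulation. This is a sketch under stated assumptions, not a reanalysis of the study data: all parameter values (the true effect, the share of analyzers, and the false-negative and false-positive rates) are invented for illustration, and misclassification is assumed to be unrelated to the outcome.

```python
import random
from statistics import mean


def group_difference(flags, outcomes):
    """Mean outcome among flagged cases minus mean outcome among unflagged cases."""
    flagged = [y for f, y in zip(flags, outcomes) if f]
    unflagged = [y for f, y in zip(flags, outcomes) if not f]
    return mean(flagged) - mean(unflagged)


def simulate(n=20000, true_effect=10.0, p_analyze=0.3,
             false_neg=0.4, false_pos=0.1, seed=42):
    """Return (difference using the true flag, difference using the noisy flag)."""
    rng = random.Random(seed)
    truth, observed, outcome = [], [], []
    for _ in range(n):
        analyzed = rng.random() < p_analyze
        if analyzed:
            # False negative: e.g. analysis done without a computer.
            flag = rng.random() >= false_neg
        else:
            # False positive: e.g. only the opponent requested the analysis.
            flag = rng.random() < false_pos
        truth.append(analyzed)
        observed.append(flag)
        # Outcome depends only on the true behaviour, plus noise.
        outcome.append(true_effect * analyzed + rng.gauss(0.0, 5.0))
    return group_difference(truth, outcome), group_difference(observed, outcome)
```

Under these assumed rates, the difference estimated from the noisy flag is expected to be a little over half of the difference estimated from the true flag, which is the sense in which misclassification biases the estimate toward the null.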

Statistical Analysis

We first explore the games as a time series observing changes in rating over the series for each player. In addition we predict the change in chess rating (delta rating) from their first to last game in the series as a function of their rating at the beginning of the series, the total number of games they played, the number of wins analyzed, the number of losses analyzed and the percentage of games that are won. This last variable is important to control for the potential confounding influence of success, which could correlate with both rating change and analysis behaviour. We include all main effects in the models but include interaction and polynomial terms only if they are statistically significant at the α=0.05 level.
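The outcome and predictors listed above can be derived from a player's game records as in the sketch below. The record layout (`date`, `rating`, `result`, `analyzed`) and the function name are hypothetical and chosen only for this example; they are not the field names returned by the Lichess API.

```python
def player_features(games):
    """Build one row of model inputs from a single player's games.

    Each game is a dict with hypothetical keys:
      'date'     ISO date string, used to order the series
      'rating'   the player's rating for that game
      'result'   one of 'win', 'loss', 'draw'
      'analyzed' True if the game was flagged as computer-analyzed
    """
    ordered = sorted(games, key=lambda g: g["date"])
    n_games = len(ordered)
    n_wins = sum(g["result"] == "win" for g in ordered)
    return {
        "start_rating": ordered[0]["rating"],
        # Outcome: rating change from first to last game in the series.
        "delta_rating": ordered[-1]["rating"] - ordered[0]["rating"],
        "n_games": n_games,
        "wins_analyzed": sum(
            g["analyzed"] and g["result"] == "win" for g in ordered
        ),
        "losses_analyzed": sum(
            g["analyzed"] and g["result"] == "loss" for g in ordered
        ),
        # Control for overall success rate.
        "pct_won": 100.0 * n_wins / n_games,
    }
```

One such row per player would then be passed to a regression routine, with `delta_rating` as the response and the remaining fields as predictors.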

Results

Players played a median of 982 games, with a minimum of 140 and a maximum of 1,170. Of the 1,955,774 games played, 119,398 (6.105%) were flagged as analyzed with a computer (henceforth referred to as ‘analyzed’). On the chess server, games can end in a draw or stalemate (both representing tied games), or in a checkmate, loss on time, or resignation, in which one player wins and one player loses. Losses on time occur when a player runs out of time on their clock. Analysis occurred in 5.286% of stalemates and draws, 6.483% of losses and 5.793% of wins.

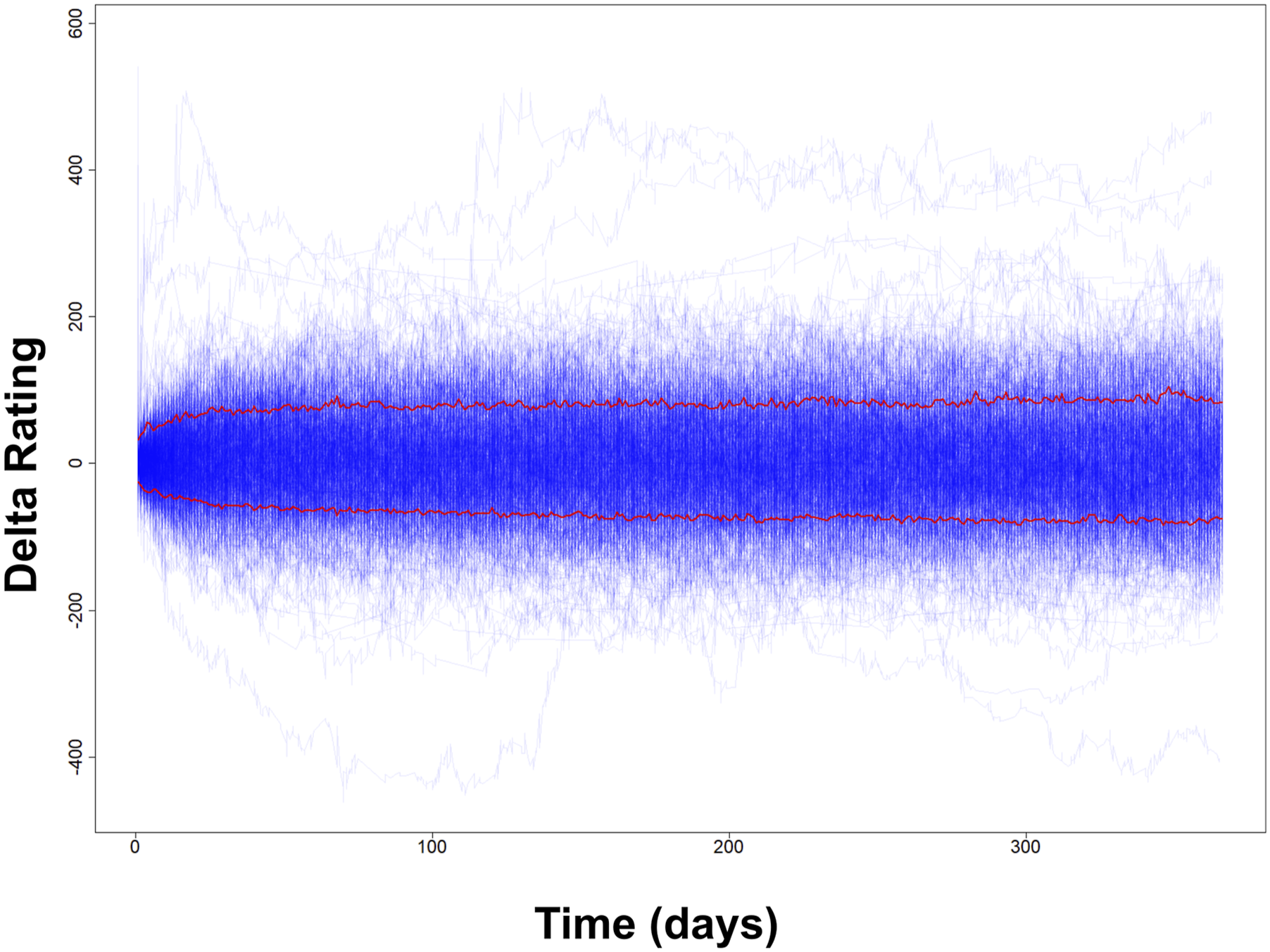

On average, players gained 3.992 rating points over the 13 months, with a maximum gain of 569 rating points, and a maximum loss of 407 rating points. Each player’s performance can be represented as a time series over the 13 months in which data were extracted. Figure 1 shows this pattern over this period for a random sample of 1000 players, with each thin line corresponding to the daily rating for a player. The thicker horizontal lines represent +/- one standard deviation calculated for each day in the series and show that, other than the first few weeks in the series, most players stay within a narrow rating band, and over the course of a year, most player improvement is modest. However, there are exceptions, with some players seeing rapid rises or falls over time.

The relationship between rating change and the number of games played

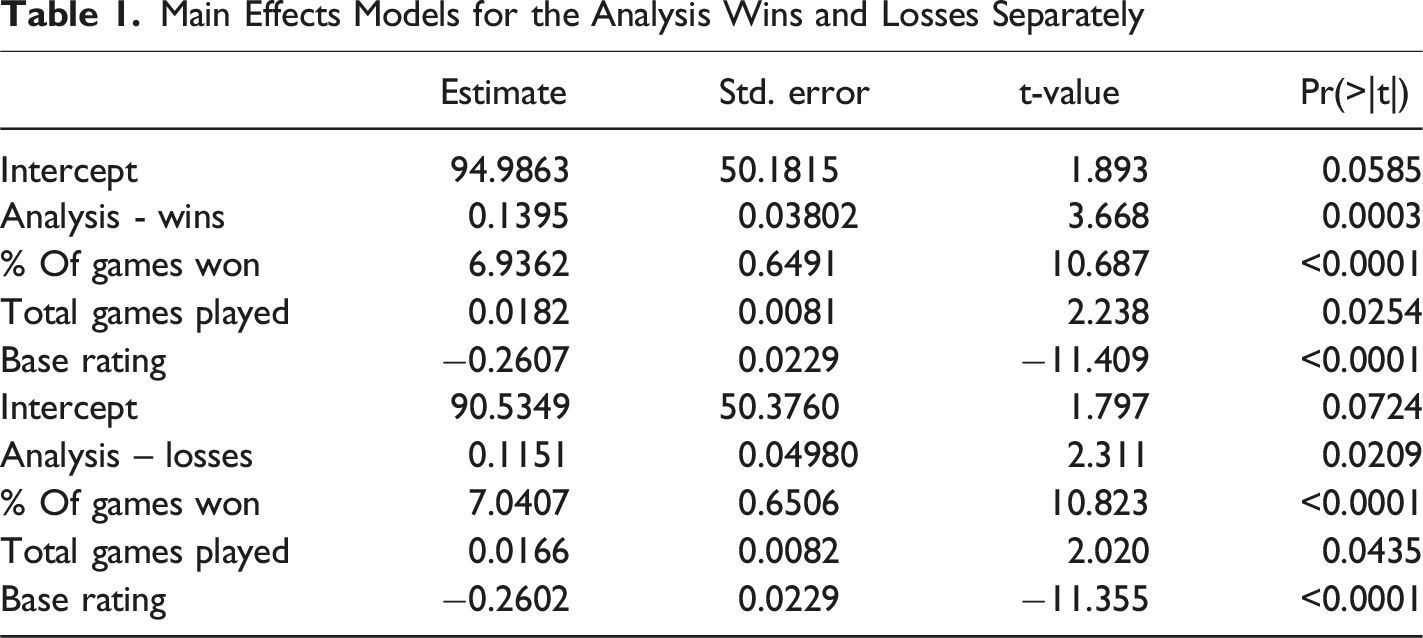

Main Effects Models for the Analysis of Wins and Losses Separately

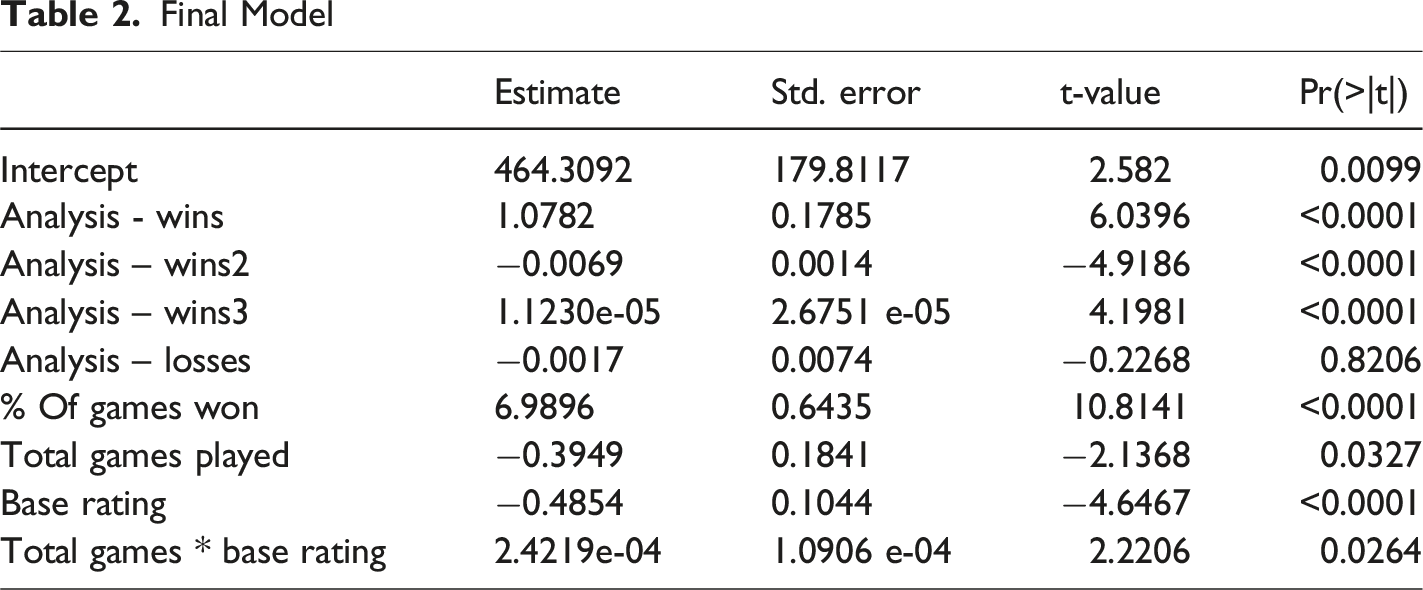

Final Model

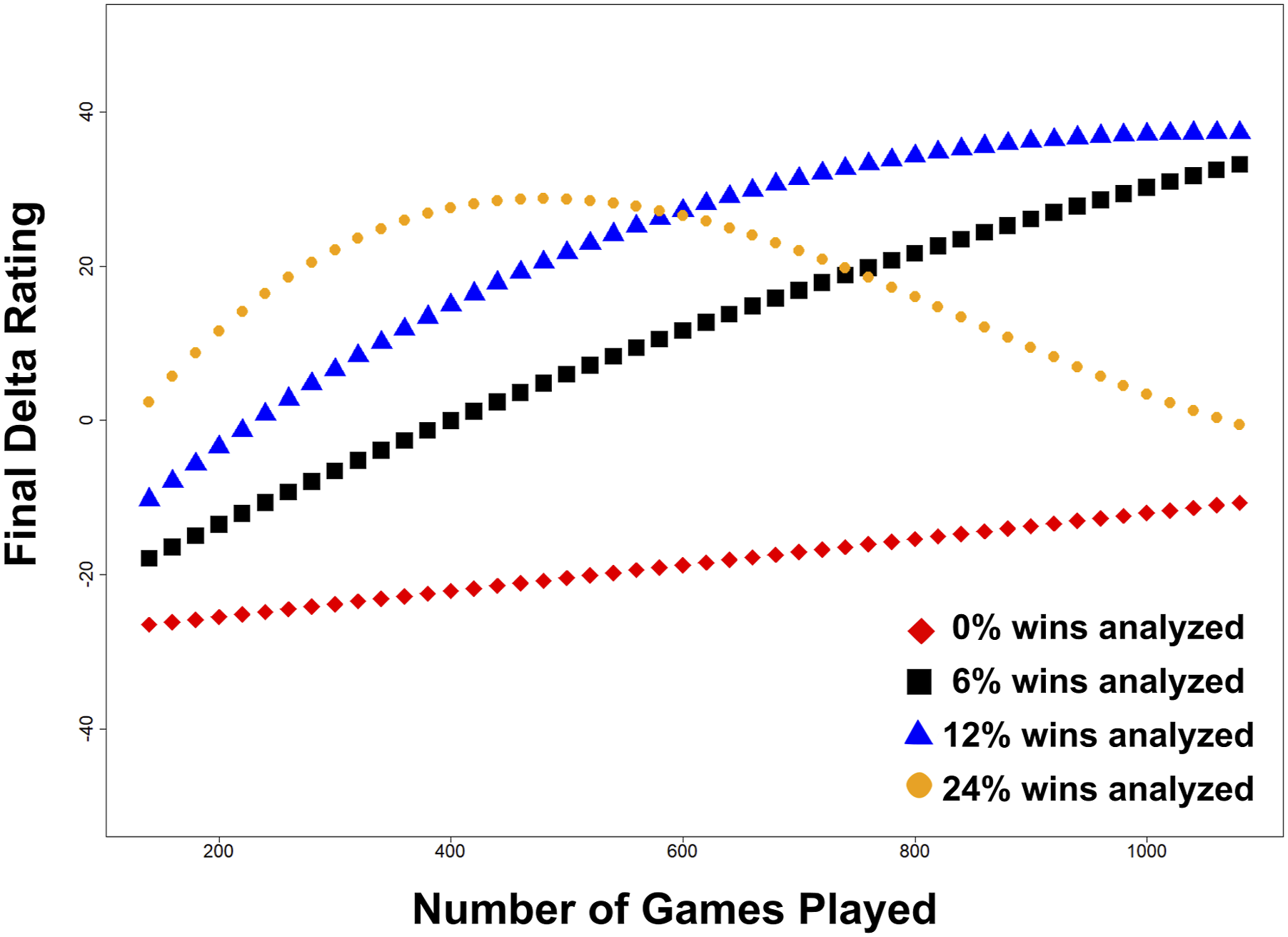

Model predicted rating by games played and % of games analyzed

Discussion

Online speed chess is a game composed of dozens of quick sequential decisions that have an impact on whether a game result will be favourable (win) or unfavourable (loss). If analysis benefits player performance, it would be expected to do so by gradually improving the decisions players make over time or by speeding up the rate at which they make these decisions. In this study we have examined the impact of both 1) deliberative practice (as analysis of wins and losses) and 2) playing games on chess rating improvement over time. The findings may speak more broadly to how self-analysis could be used to improve decision-making and skill development more generally, but this is largely speculative. The overall effects we observed are modest, and much of the variation in performance over the year seems to be explained by factors exogenous to our analysis, including playing other games, mentorship, the use of chess coaches, reading chess books and, perhaps most significantly, the vagaries of online game playing. For this reason, the results should not be seen as a guide to improving chess play.

Concerning research question 1, our results are consistent with the findings of Eskreis-Winkler and Fishbach (2019), which suggest that people may learn less from feedback on failures than from feedback on successes. In speed chess, the effect does not appear to be linear; reviewing more than 20% or so of one’s games may provide little additional benefit to rating. This is also consistent with previous work suggesting that there are limits to the benefits of deliberative practice (Macnamara et al., 2014). Concerning research question 2, our results suggest that playing chess may contribute less to the improvement of a player’s rating than analyzing chess games. Indeed, the different magnitude of effects is noteworthy; based on the models in Table 1, for every 10 games played, a player can expect a 1 to 2 unit increase in rating points. In contrast, for every 10 games analyzed, a player could expect an 11 to 13 unit increase in rating points. This may be a self-selection effect; players who choose to analyze games may be more generally interested in improving than players with no expectation of improvement.

Our work is based on secondary observational rather than experimental data, but the effects we have observed here are probably an underestimate of the true effect size, since there is very likely considerable variation in the degree to which players actually review their games. As noted earlier, the analysis data we obtained does not indicate which of the two players in a game did the analysis, or the care with which either player may have analyzed their game, merely that the analysis was flagged by one of the two players. This means that we are very likely over-estimating the frequency of deliberative practice. Moreover, we do not know how rigorous the analysis is; on average, it may be that players spend merely seconds reviewing their game, looking at major mistakes or the overall trend rather than deeply diving into their games. As such, our analysis would only measure the effects of cursory analysis, while the effects of deeper analysis could be even more impactful on improvement.

Our results add to the existing literature on how self-analysis can contribute to improved decision making, particularly in time-restricted and sequential decision-making contexts. Outside of chess, our results best generalize to domains in which fast sequential decisions are required. In the real world, rapid sequential decision making is required of many professionals, including airplane pilots, air traffic controllers, emergency service personnel, financiers, military personnel and medical professionals. Training in these professions often includes simulation and then reflection on case studies and scenarios to help learners understand the impact of right and wrong decisions on outcomes (Mavin et al., 2018; McKenna et al., 2015). Our results suggest that it may be especially important for learners to reflect on their successful decision sequences, and that such reflections may help reinforce good patterns that advance their learning. This does not suggest they cannot learn from failures, but that learning from successes could be an untapped opportunity for improvement.

Chess has outcomes that are easy to evaluate. A chess learner can see which moves lead to clear positional weaknesses and tactical opportunities by looking at computer evaluations and game results. In the real world, many activities do not come with objectively measurable success and failure, especially in the short term. This affects the way that success and failure can be analyzed; for a medical student training to be a family physician, there are often no simple metrics that can provide clear and immediate feedback on every decision. As such, it may be that our results apply more to learning activities in which success and failure can be measured objectively and quickly. For example, learning from success may be more useful to explore for an investment manager, whose success can be quantified in the performance of their portfolio, or for an athlete, who can see how their decisions affect the outcome of a game.

Limitations and Suggestions for Future Research

As noted above, the measure of analysis we use does not precisely capture the level of self-analysis. This very likely biases our results towards the null since our measurement is contaminated by misclassification. However, we cannot rule out other systematic biases that affect our results. Because this is an observational study, there is also a risk of omitted variable confounding. It may be that analyzing games is confounded with a trait that explains one’s proficiency at improving at chess, rather than reflecting the actual effect of analysis itself. This would mean that the effect we are observing is not a measure of the effect of self-analysis on improvement, but a measure of the level of this trait; people with more of this latent proficiency analyze more games, and improve more over time. Another limitation is that ratings do not give a complete picture of improvement. Players in this rating range are playing players with similar ratings, and the rating points gained by one player are lost by another. Indeed, all players may be improving over time by some unknown absolute measure, but this cannot be determined by a rating system which is based on performance against other players. This helps to explain the time series pattern that shows little net change for the players on average.
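The point that points gained by one player are lost by another can be illustrated with a simplified Elo-style update. This is an assumption made for illustration only: Lichess uses a Glicko-family rating system, in which rating changes also depend on each player's rating uncertainty, so the exchange there is only approximately symmetric. The function names and the `k` value are hypothetical.

```python
def expected_score(rating_a, rating_b):
    """Expected score of player A against player B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_update(rating_a, rating_b, score_a, k=20.0):
    """Return updated ratings after one game.

    score_a is 1.0 for a win by A, 0.5 for a draw, 0.0 for a loss.
    Whatever A gains, B loses, so the sum of the two ratings is unchanged.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta
```

Because each game only moves points between the two opponents, the average rating of a closed pool of players stays constant under this simplified model even if every player's absolute skill is rising.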

Future work should explore the causes of the observed effects in an experimental form; for example, whether reflecting on successes increases confidence in a useful way, or whether successes are easier to process cognitively. Future work should also drill into the specifics of analysis in more detail, ideally identifying the depth of analysis a person uses when reflecting on their past decisions.

Conclusion

The results here suggest that reflecting on positive feedback may be a useful strategy for improvement in activities that require sequential decisions under time pressure. For activities that involve rapid sequential decision making, it may be useful to develop more tools that help learners study their successes. Our results also show that by itself, playing speed chess does not lead to timely improvement in player rating. This is consistent with literature suggesting that deliberative practice is an important part of learning and skill improvement.

Footnotes

Acknowledgement

We would like to thank the anonymous reviewers for their helpful suggestions on the manuscript.

Informed Consent

There is no informed consent. The research used open data available online, and did not collect primary data.

Ethical Considerations

No ethics approval was required or sought in this research.

Funding

No funding supported this research.

Declaration of Conflicting Interests

I confirm that this manuscript has not been published elsewhere and is not under consideration by another journal. The work is original, and as the sole author, I have approved the manuscript and its submission. There are no conflicts of interest to declare.