Abstract

Background

The addition of biofeedback and artificial intelligence (AI) in simulation training and serious games has shown promising results in improving the effectiveness of training and can lead to increased engagement, motivation, and retention of information. This systematic literature review explores the integration of biofeedback and artificial intelligence into eXtended reality (XR) training scenarios and is the first review to provide a consolidated overview of applied biofeedback and AI technologies in this area.

Method

This review was conducted using keywords related to biofeedback, AI, XR, and training and included papers that: contained the use of biofeedback and AI in XR training scenarios; reported on at least one outcome related to training effectiveness; were published in English; were peer-reviewed; and were published between 1 January 2016 and 7 February 2022.

Results

The results indicate that many studies collect two or more biosignals using a single biosensing device. This is particularly relevant in applied settings, where ease of use and minimal interference with training/education activities are desired. The results also show that light, portable devices such as wrist bands, wireless straps, or headbands are preferred. Additionally, eye tracking, electrodermal activity (EDA), and photoplethysmograms (PPG) present as particularly useful biomarkers of stress and/or cognitive load in XR training contexts. A wide variety of machine learning (ML) approaches were used to support biofeedback systems in XR environments. However, only a limited number of studies employed real-time analysis of biosignals (four of the 48 studies, approximately 8%), which indicates current challenges in implementing such systems.

Conclusion

The majority of papers meeting the selection criteria were from the fields of education and healthcare. Further research in other domains, such as defense and general industry, is needed to gain a comprehensive understanding of the potential for biofeedback and AI integration in XR training scenarios used in these domains.

Introduction

The use of artificial intelligence (AI) and biofeedback or physiological measurement in eXtended Reality (XR) training applications is seeing increasing use (Marín-Morales et al., 2021; Suhaimi et al., 2021). Studies have found that the integration of biofeedback and AI in simulation training or games can lead to increased engagement (Houzangbe et al., 2020), motivation (Ciolacu et al., 2020), and retention of information (Leiker et al., 2016). It can also help to reduce stress and anxiety during training, leading to better performance (Sharma et al., 2022). Further, research has been conducted in various fields such as education, healthcare, and defense, showing the potential benefits of using biofeedback and AI in simulation training (Ciolacu & Svasta, 2021).

Use of biofeedback in these contexts often aims to promote emotion regulation in trainees (Jerčić & Sundstedt, 2019), with biosignals aiding the understanding of training (Habibnezhad et al., 2021) and/or providing measures of training performance (Jerčić & Sundstedt, 2019). In emotion-regulation contexts, trainees can be presented with a real-time visual indicator of their physiological state during tasks, enabling them to learn and/or practice self-regulation techniques (Parnandi & Gutierrez-Osuna, 2017).
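As a rough illustration of such a real-time visual indicator, the sketch below maps a single physiological reading to a three-level gauge by expressing it as a z-score against the trainee's own resting baseline. The three levels, the z thresholds (1 and 2), and the heart-rate values are illustrative assumptions, not parameters reported by the cited studies.

```python
from enum import Enum


class Indicator(Enum):
    """Three display states for a hypothetical on-screen biofeedback gauge."""
    CALM = "green"
    ELEVATED = "amber"
    HIGH = "red"


def indicator_for(value: float, baseline_mean: float, baseline_sd: float,
                  elevated_z: float = 1.0, high_z: float = 2.0) -> Indicator:
    """Map a physiological reading to a gauge state.

    The reading is expressed as a z-score relative to the trainee's own
    resting baseline, so the same gauge can be used across individuals.
    The z thresholds are illustrative, not validated cut-offs.
    """
    if baseline_sd <= 0:
        raise ValueError("baseline_sd must be positive")
    z = (value - baseline_mean) / baseline_sd
    if z >= high_z:
        return Indicator.HIGH
    if z >= elevated_z:
        return Indicator.ELEVATED
    return Indicator.CALM


# Hypothetical heart rate of 88 bpm against a resting baseline of 72 +/- 6 bpm.
print(indicator_for(88, 72, 6).name)  # HIGH
```

Normalising against a personal baseline, rather than using fixed absolute cut-offs, is one simple way to account for individual differences in resting physiology.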

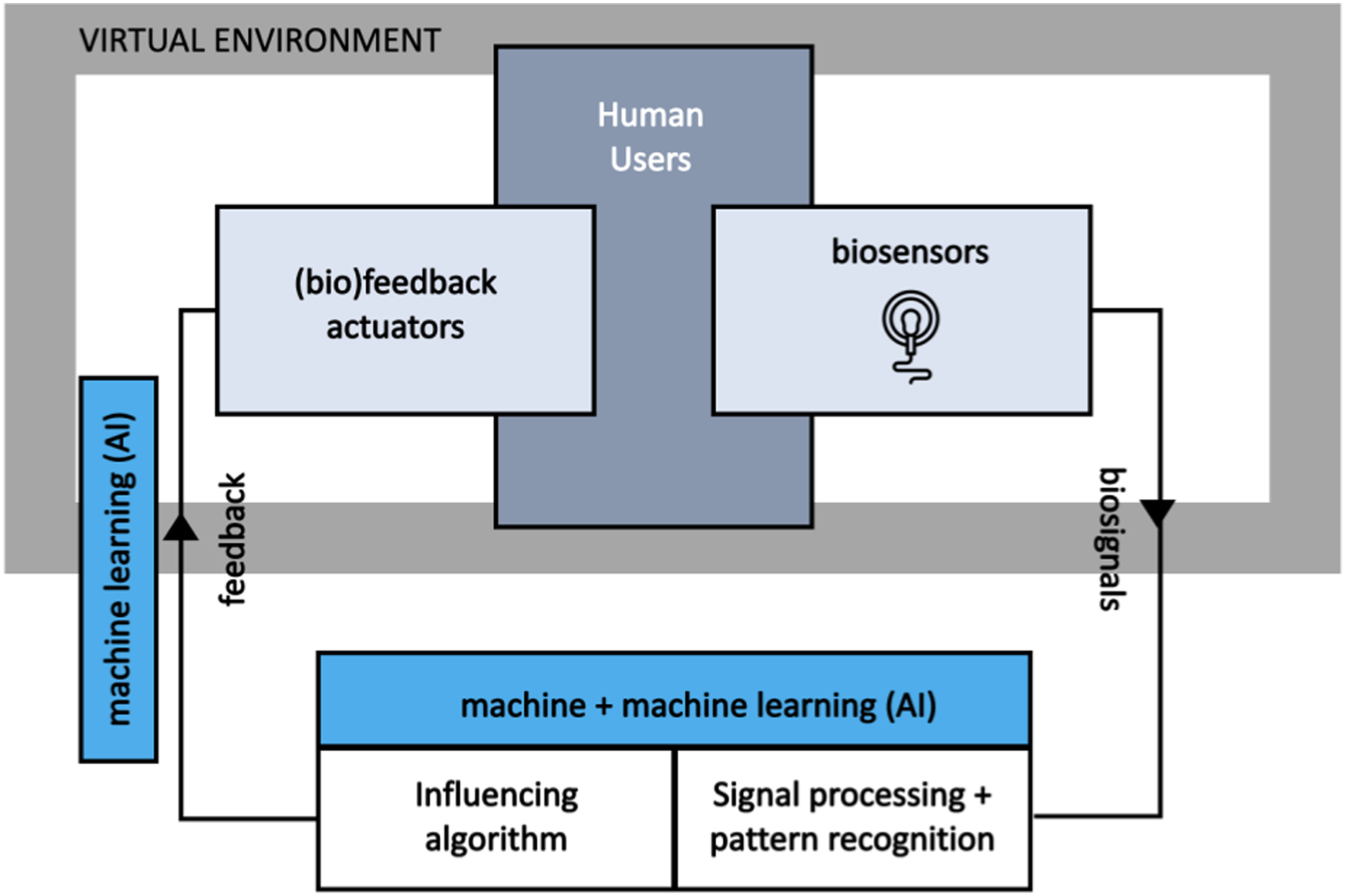

The capture of biosignals can also provide insight into training affect, or the degree to which a trainee is affected by the virtual training task and environment (Kim, Kim, & Ahn, 2021). This insight is useful for broader considerations of training fidelity in stressful and/or emotionally charged contexts. Also, the change in measured physiological signals over the training continuum may indicate training effectiveness, where trainees, through practice, are able to undertake training tasks with increasing resilience (Gamble et al., 2018). In these areas, AI is typically associated with the processing of biosignals (signal processing, pattern recognition) and may also be applied to the actuation of biofeedback in virtual environments (see Figure 1).

Biofeedback following a general closed loop model. Within virtual environments using XR technologies, the use of AI may focus on processing of biosignals (signal processing and pattern recognition) and/or the actuation of dynamic feedback. Decisions regarding biosensors and choice of biofeedback actuation approaches will also be impacted by technology constraints (adapted from van den Broek & Westerink, 2012).
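The closed loop described above (sense, process, actuate) can be sketched in a few lines. In this minimal sketch, assuming a single scalar biosignal and a scenario "intensity" as the actuated variable, processing is a plain moving average and actuation is an on/off rule; the class name, threshold, window size, and intensity range are all hypothetical, and in the reviewed systems the processing step is where AI techniques would typically sit.

```python
from collections import deque
from typing import Callable, Deque, Iterable, List


class ClosedLoopBiofeedback:
    """Minimal sense -> process -> actuate cycle (all parts hypothetical).

    Sensing: raw samples are passed in one at a time.
    Processing: a moving average over the last `window` samples.
    Actuation: a callback receives -1 (ease off) or +1 (step up).
    """

    def __init__(self, threshold: float, window: int,
                 actuate: Callable[[int], None]) -> None:
        self.threshold = threshold
        self.buffer: Deque[float] = deque(maxlen=window)
        self.actuate = actuate

    def step(self, sample: float) -> float:
        self.buffer.append(sample)
        level = sum(self.buffer) / len(self.buffer)
        # Simple on/off policy: reduce scenario intensity while the processed
        # signal is above the threshold, otherwise allow it to increase.
        self.actuate(-1 if level > self.threshold else 1)
        return level

    def run(self, samples: Iterable[float]) -> List[float]:
        return [self.step(s) for s in samples]


# Hypothetical actuator: a scenario intensity clamped to the range 0..10.
scenario = {"intensity": 5}


def adjust(delta: int) -> None:
    scenario["intensity"] = max(0, min(10, scenario["intensity"] + delta))


loop = ClosedLoopBiofeedback(threshold=0.5, window=3, actuate=adjust)
levels = loop.run([0.2, 0.3, 0.9, 1.0, 0.8])
print(scenario["intensity"])  # 6
```

Keeping the actuator as a callback separates the feedback policy from whatever the virtual environment actually changes (visual cues, difficulty, pacing).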

However, XR practitioners seeking to add biosignal collection and/or biofeedback to their training environments face a number of challenges. In addition to determining what might be a useful measure, for example cognitive load, heart rate, or temperature, there is a plethora of different technologies available to collect this data. This is further complicated by some technologies supporting the collection of a single biosignal and others supporting the collection of multiple different biosignals. There are also physical constraints on how different technologies are applied that may limit their compatibility; for example, using multiple head-worn devices to collect electroencephalogram (EEG), eye movement, and forehead temperature data may not be practical depending on the XR hardware used. In training and learning contexts, the use of biosensors that capture real-time physiological and/or emotional states is an important step toward achieving adaptive synthetic training environments (Seyderhelm et al., 2019).

In addition to the physical challenges, each biosignal device collects data at a different granularity and with different levels of noise that will need to be removed (Stangl et al., 2023). For real-time systems, this must be done on the fly, and for post-session data analysis, the volume of data collected can be significant. The use of AI techniques therefore becomes attractive, both to clean noisy data (Saganowski, 2022) and to support any downstream data use, for example feature classification (Delvigne et al., 2020; Sakib et al., 2020). Again, for the XR practitioner seeking to add AI support to biofeedback-enabled XR experiences, determining the appropriate technologies and approaches can be a significant challenge.
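To make the two data-preparation problems above concrete (differing granularities and noise), the sketch below applies a running median to suppress an isolated spike and then linearly interpolates the cleaned stream onto a shared time grid so it can be aligned with other devices. This is a classical, non-AI baseline chosen for illustration; the window width, sampling rates, and EDA values are assumptions, and the reviewed studies used a variety of (often ML-based) cleaning methods.

```python
from bisect import bisect_right
from statistics import median
from typing import List, Sequence


def median_filter(x: Sequence[float], k: int = 3) -> List[float]:
    """Suppress isolated spikes (e.g. a movement artefact) with a centred
    running median of odd width k; windows are truncated at the edges."""
    if k < 1 or k % 2 == 0:
        raise ValueError("k must be a positive odd integer")
    h = k // 2
    return [median(x[max(0, i - h): i + h + 1]) for i in range(len(x))]


def resample(t: Sequence[float], x: Sequence[float],
             grid: Sequence[float]) -> List[float]:
    """Linearly interpolate samples (t, x) onto a shared time grid so that
    streams recorded at different rates can be aligned. t must be strictly
    increasing; grid points outside [t[0], t[-1]] take the nearest end value."""
    if len(t) != len(x) or not t:
        raise ValueError("t and x must be non-empty and of equal length")
    out: List[float] = []
    for g in grid:
        if g <= t[0]:
            out.append(x[0])
        elif g >= t[-1]:
            out.append(x[-1])
        else:
            j = bisect_right(t, g)  # t[j - 1] <= g < t[j]
            w = (g - t[j - 1]) / (t[j] - t[j - 1])
            out.append(x[j - 1] + w * (x[j] - x[j - 1]))
    return out


# Hypothetical data: a 4 Hz EDA trace with one spike, aligned to a 2 Hz grid.
eda_t = [0.0, 0.25, 0.5, 0.75, 1.0]
eda = [2.0, 2.1, 9.0, 2.2, 2.3]
clean = median_filter(eda)
aligned = resample(eda_t, clean, [0.0, 0.5, 1.0])
print(clean, aligned)
```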

This paper aims to support XR practitioners by summarizing the features of recent use cases from the research literature involving the integration of biofeedback and AI into XR training systems. This paper presents results across several core aspects including biosignal types, measurement categories, biofeedback and AI technology types and frequency of use. Thus, the research overview presented here provides a resource for XR practitioners looking for examples of current practice and analogous exemplars to support their own custom biofeedback and AI integration needs.

Method

A systematic literature review seeks to take a “snapshot” of the current state of the art within the academic literature in a specific area using a repeatable search and review approach. To establish appropriate search terms to guide the identification of literature, a research question for this study was defined as: What biofeedback technologies and approaches are being used within AI-enabled XR systems for training and/or educational applications?

PRISMA guidelines were used as the basis of this systematic literature review (Moher et al., 2015). PRISMA guidelines provide specific methodology details relating to eligibility criteria, information sources, search strategy, and study records (including data management, selection process, and collection process). This section provides specific details on how these guidelines were applied for the review.

An initial scoping review of the existing research revealed many theoretical concepts and approaches that had not been tested on humans. From this scoping review, the key inclusion criteria for this literature review were defined. A central requirement was that the biofeedback-enabled systems described in the included articles had been validated with real human participants, making them exemplars of real use cases with actual experimental results.

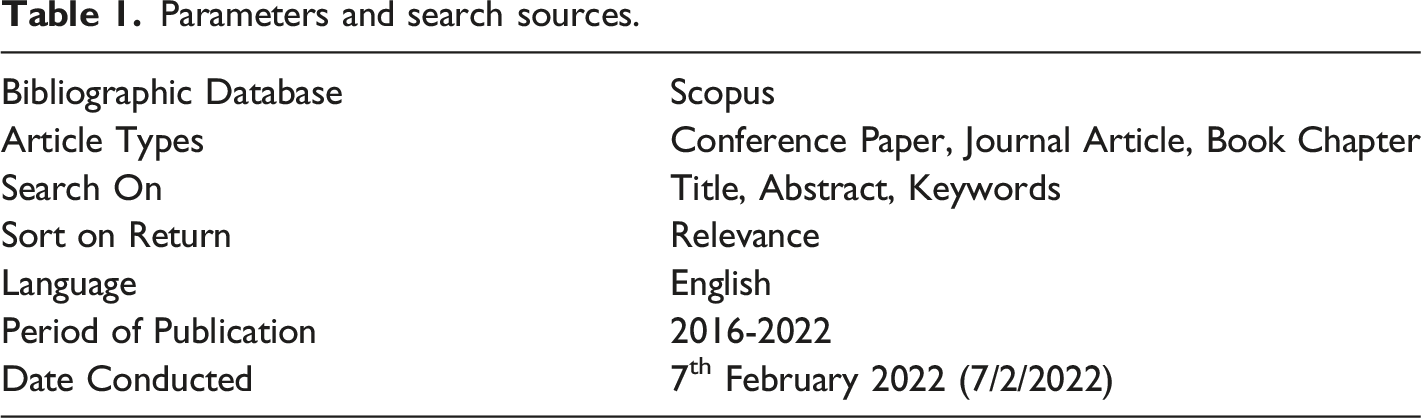

Parameters and search sources.

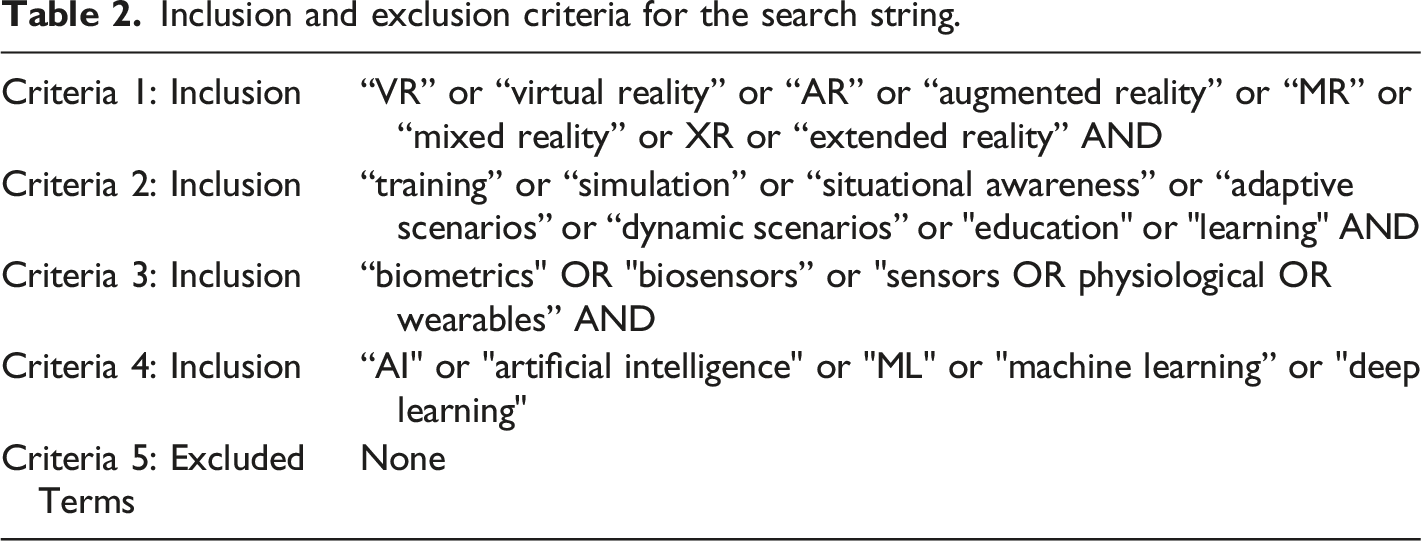

Inclusion and exclusion criteria for the search string.

The inclusion and exclusion criteria for the search string were developed to maximize the number of relevant studies returned (see Table 2). Similar terms were grouped into specific criteria; for example, Criterion 1 searches for virtual reality related terms such as VR, virtual reality, XR, or extended reality. Criterion 2 searches specifically for training, simulation, or scenario keywords in studies where end-users engage with various educational domains. Criterion 3 returns studies that have used biosensors, sensors, or physiological measurement tools as part of their investigation. Criterion 4 relates to publications that discuss AI and machine learning aspects. No terms were specifically excluded from the search string.

These criteria were combined into the following Boolean search string, which was run in Scopus to gather the most relevant literature:

TITLE-ABS-KEY( ( "VR" OR "virtual reality" OR "AR" OR "augmented reality" OR "MR" OR "mixed reality" OR "XR" OR "extended reality" ) AND ( "training" OR "simulation" OR "situational awareness" OR "adaptive scenarios" OR "dynamic scenarios" OR "education" OR "learning" ) AND ( "biometrics" OR "biosensors" OR "sensors" OR "physiological" OR "wearables" ) AND ( "AI" OR "artificial intelligence" OR ml OR "machine learning" OR "deep learning" ) ) AND ( LIMIT-TO ( PUBYEAR , 2022 ) OR LIMIT-TO ( PUBYEAR , 2021 ) OR LIMIT-TO ( PUBYEAR , 2020 ) OR LIMIT-TO ( PUBYEAR , 2019 ) OR LIMIT-TO ( PUBYEAR , 2018 ) OR LIMIT-TO ( PUBYEAR , 2017 ) OR LIMIT-TO ( PUBYEAR , 2016 ) ) AND ( LIMIT-TO ( DOCTYPE , "cp" ) OR LIMIT-TO ( DOCTYPE , "ar" ) OR LIMIT-TO ( DOCTYPE , "ch" ) ) AND ( LIMIT-TO ( LANGUAGE , "English" ) )
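For practitioners who want to rerun or adapt this search, the string can be generated from the four criterion groups rather than edited by hand, which keeps the OR-within-criterion and AND-across-criteria structure consistent. The sketch below is a hypothetical helper (`build_query` is not part of any Scopus tool); it reproduces the structure of the string above, except that it quotes every term, including `ml`, which the original leaves unquoted.

```python
from typing import Sequence


def or_group(terms: Sequence[str]) -> str:
    """OR together one criterion's synonyms, quoting each term."""
    return "( " + " OR ".join(f'"{t}"' for t in terms) + " )"


def build_query(criteria: Sequence[Sequence[str]], years: Sequence[int],
                doctypes: Sequence[str], language: str) -> str:
    """AND the criterion groups inside TITLE-ABS-KEY, then append
    LIMIT-TO filters for publication year, document type and language."""
    core = "TITLE-ABS-KEY( " + " AND ".join(or_group(c) for c in criteria) + " )"
    year_f = " OR ".join(f"LIMIT-TO ( PUBYEAR , {y} )"
                         for y in sorted(years, reverse=True))
    doc_f = " OR ".join(f'LIMIT-TO ( DOCTYPE , "{d}" )' for d in doctypes)
    lang_f = f'LIMIT-TO ( LANGUAGE , "{language}" )'
    return f"{core} AND ( {year_f} ) AND ( {doc_f} ) AND ( {lang_f} )"


CRITERIA = [
    ["VR", "virtual reality", "AR", "augmented reality",
     "MR", "mixed reality", "XR", "extended reality"],
    ["training", "simulation", "situational awareness", "adaptive scenarios",
     "dynamic scenarios", "education", "learning"],
    ["biometrics", "biosensors", "sensors", "physiological", "wearables"],
    ["AI", "artificial intelligence", "ml", "machine learning", "deep learning"],
]
query = build_query(CRITERIA, range(2016, 2023), ["cp", "ar", "ch"], "English")
print(query)
```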

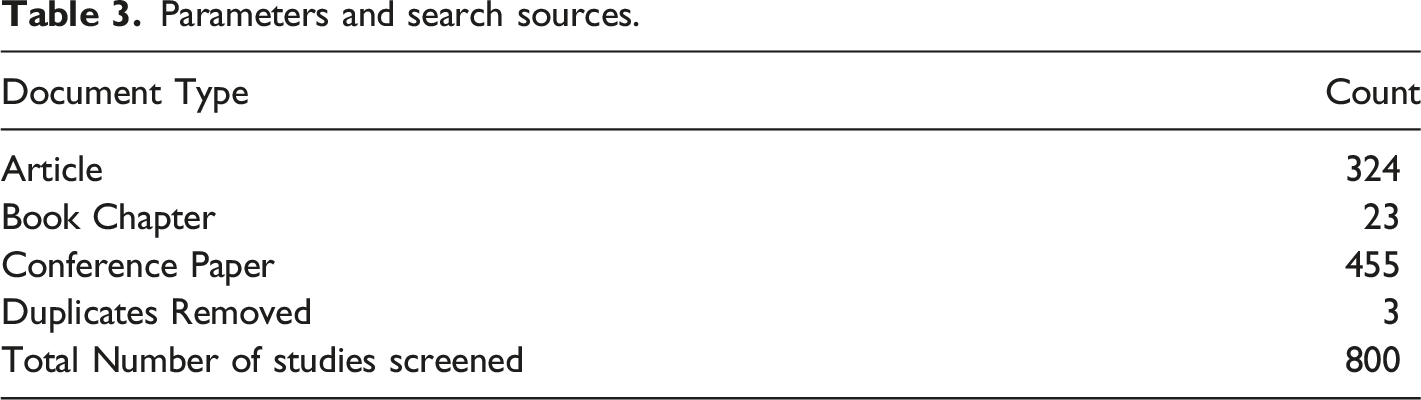

Parameters and search sources.

Inclusion and exclusion criteria for the studies.

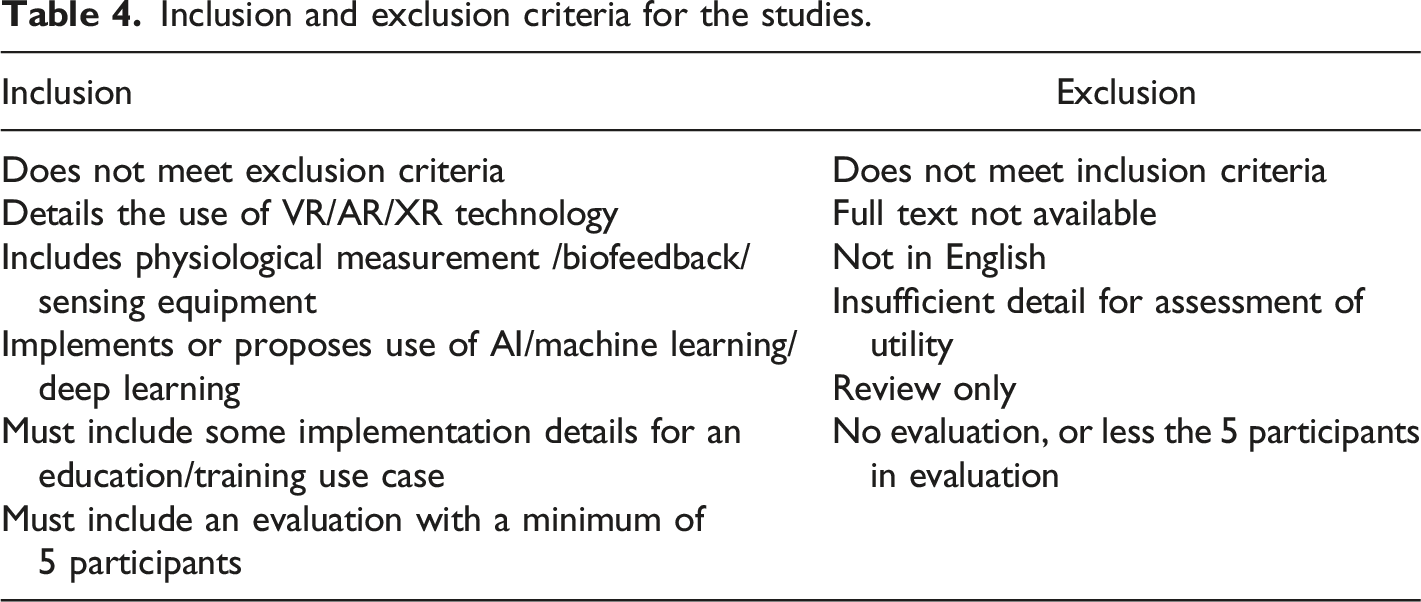

To be included in the results and analysis, a study must have included details of an XR technology. The study must also have included the capture of some form of physiological measurement in addition to using AI or machine learning methods in the data processing and/or analysis. Systems must have been implemented, and some details of a use case in an education/training context must have been provided. These studies must also have included a minimum of 5 participants in an evaluation and must not meet any of the exclusion criteria in Table 4.
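The inclusion criteria above act as a conjunction of checks applied to each candidate study, which can be encoded directly when screening is tracked in a spreadsheet or script. The sketch below is a hypothetical coding-sheet representation (the `StudyRecord` fields and example titles are invented); it also includes the full-text and English-language requirement described in the following paragraph, but does not model the exclusion criteria listed in Table 4.

```python
from dataclasses import dataclass
from typing import List

MIN_PARTICIPANTS = 5  # minimum evaluation sample stated in the criteria


@dataclass
class StudyRecord:
    """Hypothetical coding-sheet row for one screened study."""
    title: str
    uses_xr: bool
    captures_physiology: bool
    uses_ai_or_ml: bool
    system_implemented: bool
    training_use_case: bool
    participants: int
    full_text_in_english: bool = True


def meets_inclusion(s: StudyRecord) -> bool:
    """True only if every inclusion criterion holds.
    The exclusion criteria of Table 4 are not modelled here."""
    return (s.uses_xr and s.captures_physiology and s.uses_ai_or_ml
            and s.system_implemented and s.training_use_case
            and s.participants >= MIN_PARTICIPANTS
            and s.full_text_in_english)


def screen(records: List[StudyRecord]) -> List[StudyRecord]:
    return [r for r in records if meets_inclusion(r)]


candidates = [
    StudyRecord("Pilot study", True, True, True, True, True, participants=4),
    StudyRecord("VR stress trainer", True, True, True, True, True, participants=20),
    StudyRecord("Concept paper", True, True, True, False, True, participants=0),
]
included = screen(candidates)
print([r.title for r in included])
```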

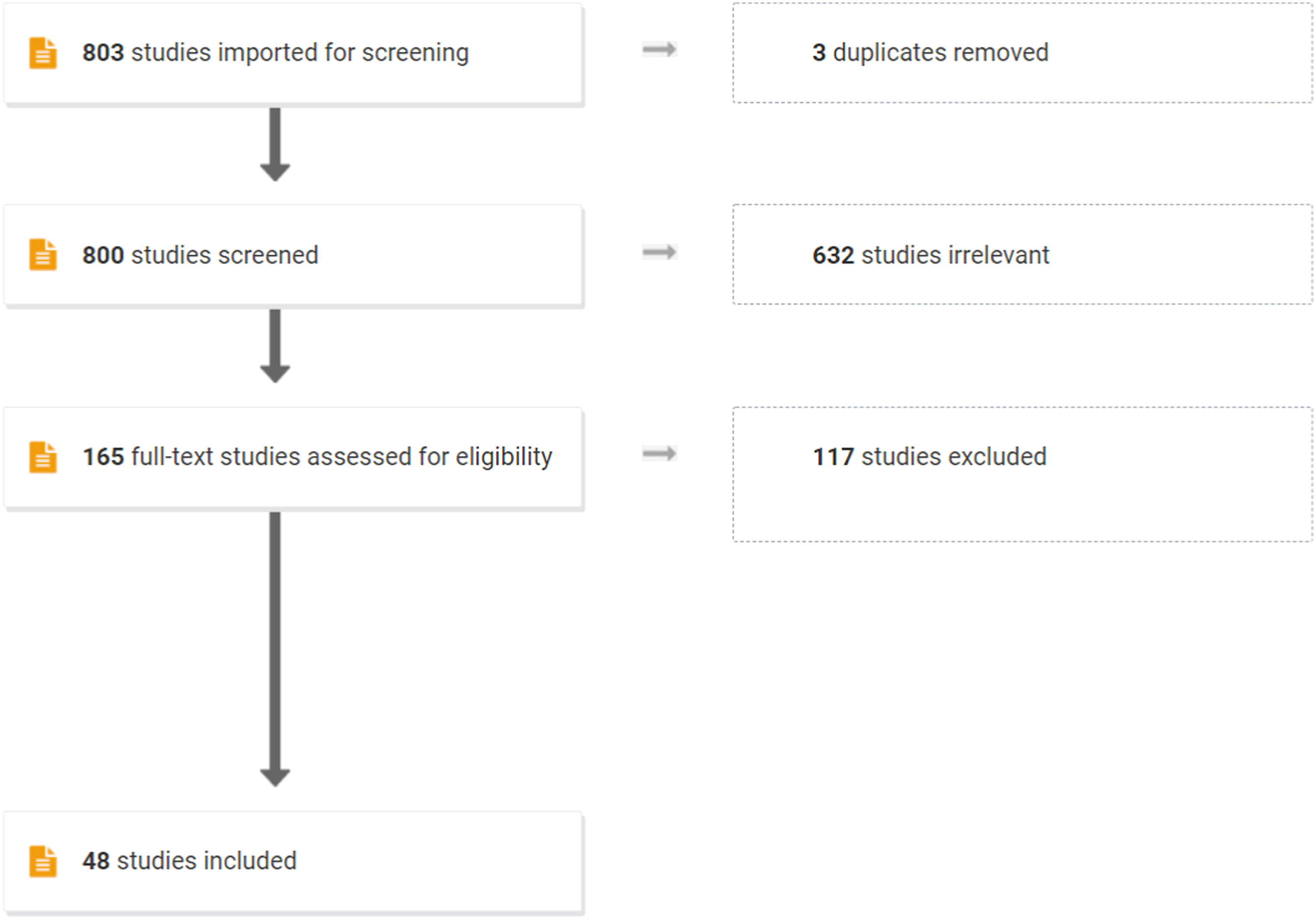

Additionally, for a study to be included in the results, its full text had to be available online in English. If a paper provided insufficient detail on the assessment of its utility, the study was excluded. Reviews and evaluations were also excluded, as they would not meet the inclusion criterion of examining a use case with at least 5 participants. Once the documents had been screened using a double-blind review process (see Figure 2), data from the remaining articles (n = 48) were extracted for analysis.

PRISMA flow for the selection of relevant literature.

Biosignal Results

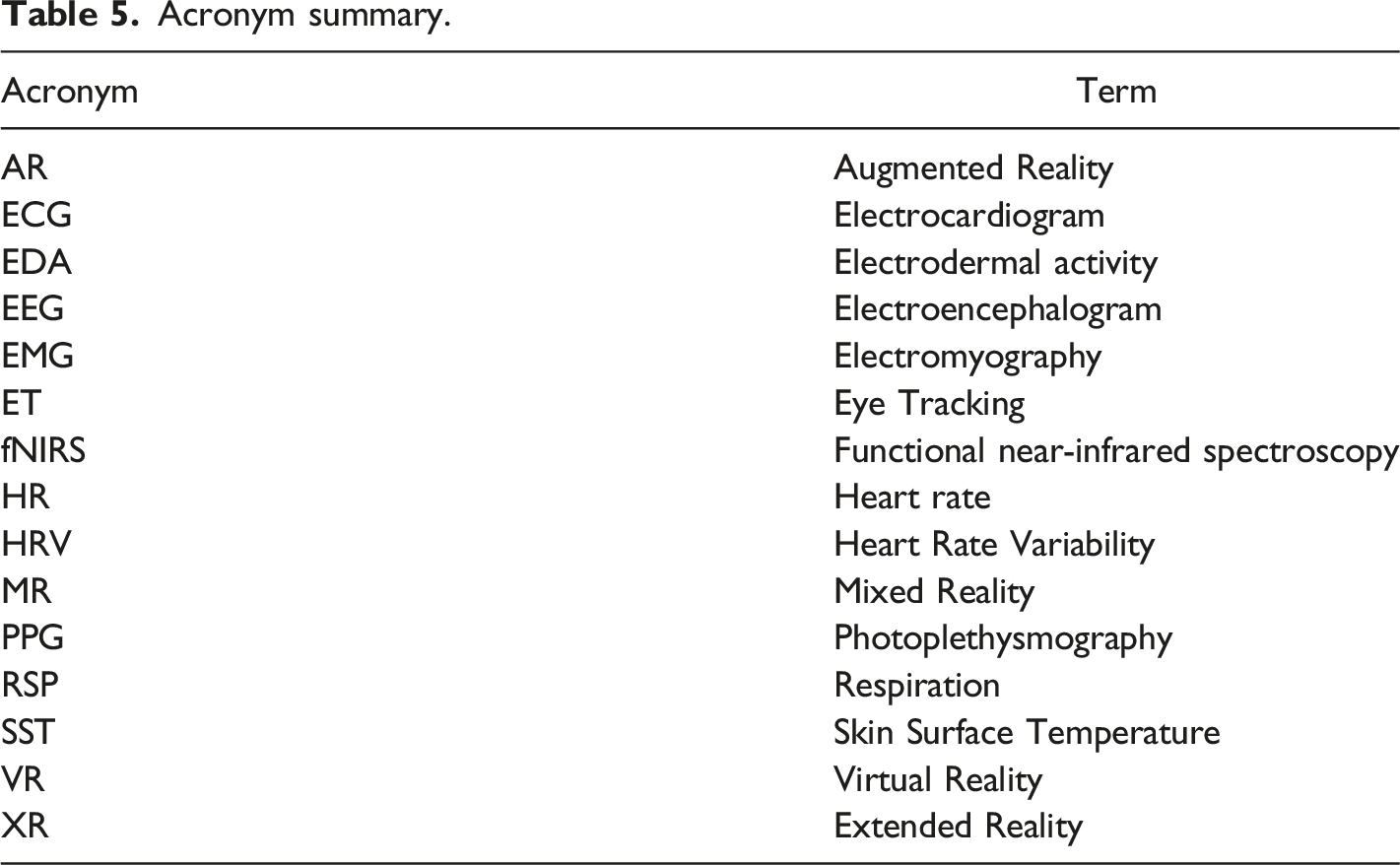

Acronym summary.

Biosignal Collection Summary and Primary Measurement Categories

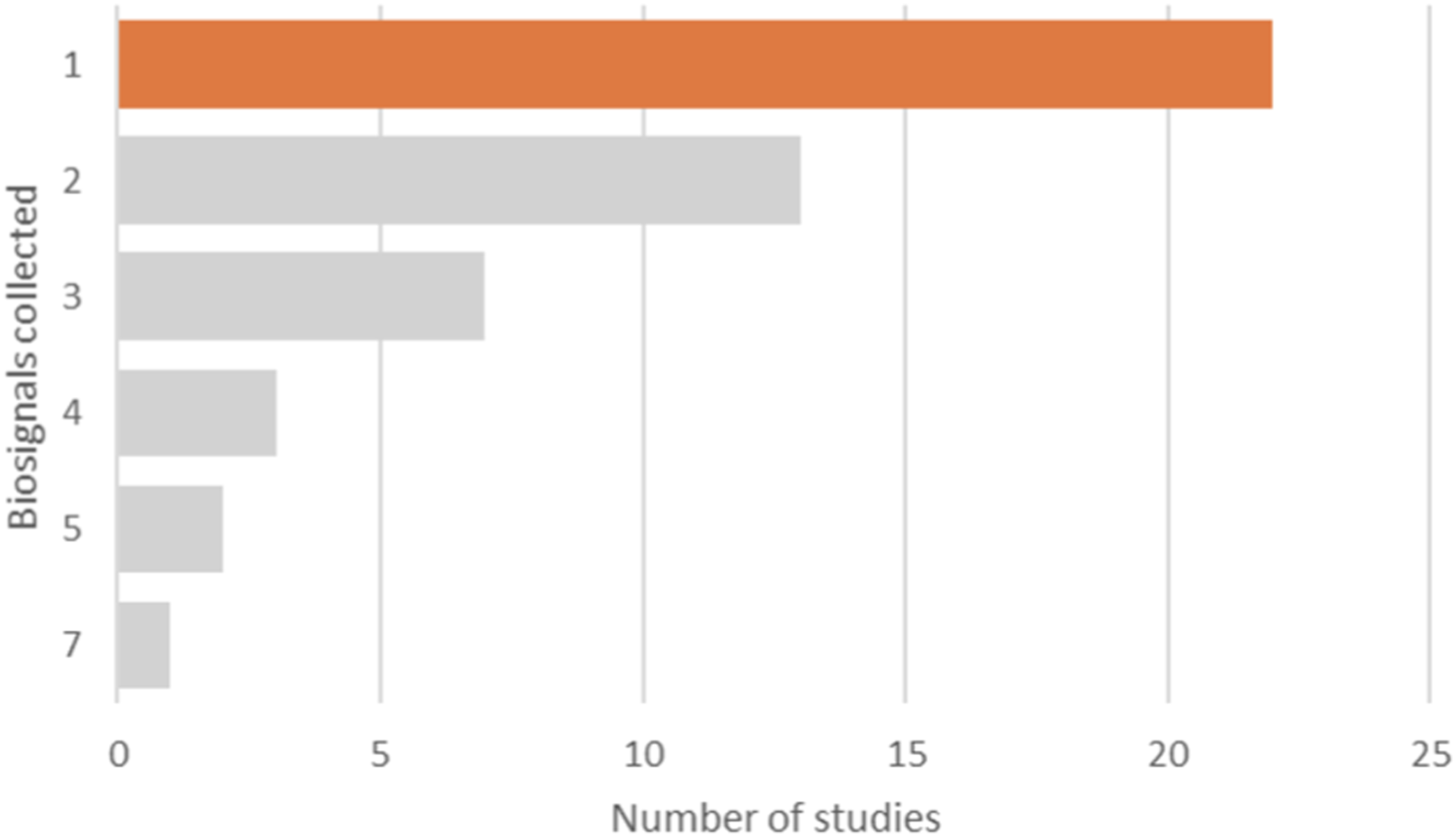

Within each of the final 48 studies, between one (1) and seven (7) biosignals were obtained, with the collection of a single biosignal the most commonly occurring (n = 22). However, most studies (n = 26) collected two or more biosignals (see Figure 3). The maximum number of individual sensing devices used was three (3), with most studies using only one (1) (n = 31, 65%) or two (2) (n = 16, 33%) sensing devices.

Frequency of the number of biosignals captured in studies.

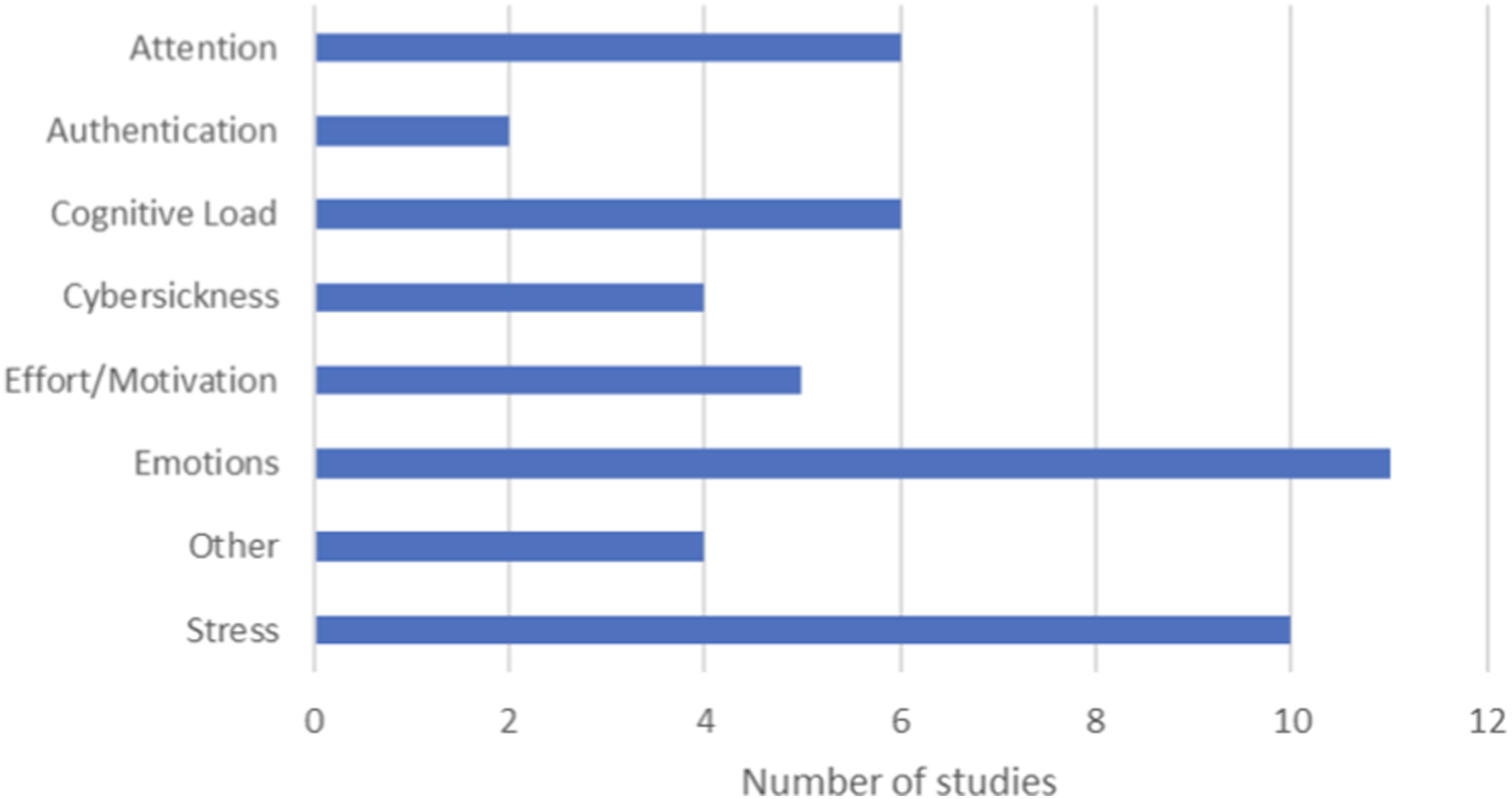

The use of biofeedback in the research papers evaluated in this review addressed a range of primary measures (see Figure 4). Emotion recognition was the most frequent primary measure, followed by measures of stress and attention. Three of the studies categorized here as “stress” considered anxiety as the primary measure; however, given that the biosignal was captured while the stimulus (stressor) was present, these studies effectively also capture elements of stress and were therefore included in the single “stress” category.

Primary measures by number of studies.
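The coding rule above (anxiety measured in the presence of a stressor is counted as stress) is easy to apply inconsistently when tallying by hand. A minimal sketch of applying it programmatically is shown below; the `MERGE_RULES` mapping encodes only that one stated rule, and the example labels are invented for illustration, not the review's data.

```python
from collections import Counter
from typing import Dict, Iterable

# Coding rule described in the text: anxiety captured while the stressor is
# present is reported under the single "stress" category.
MERGE_RULES: Dict[str, str] = {"anxiety": "stress"}


def tally_primary_measures(labels: Iterable[str]) -> Counter:
    """Normalise free-text labels and count studies per primary measure."""
    normalised = (label.strip().lower() for label in labels)
    return Counter(MERGE_RULES.get(label, label) for label in normalised)


# Invented labels for illustration only (not the review's data).
counts = tally_primary_measures(
    ["Emotion", "stress", "Anxiety ", "attention", "emotion"])
print(counts.most_common())
```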

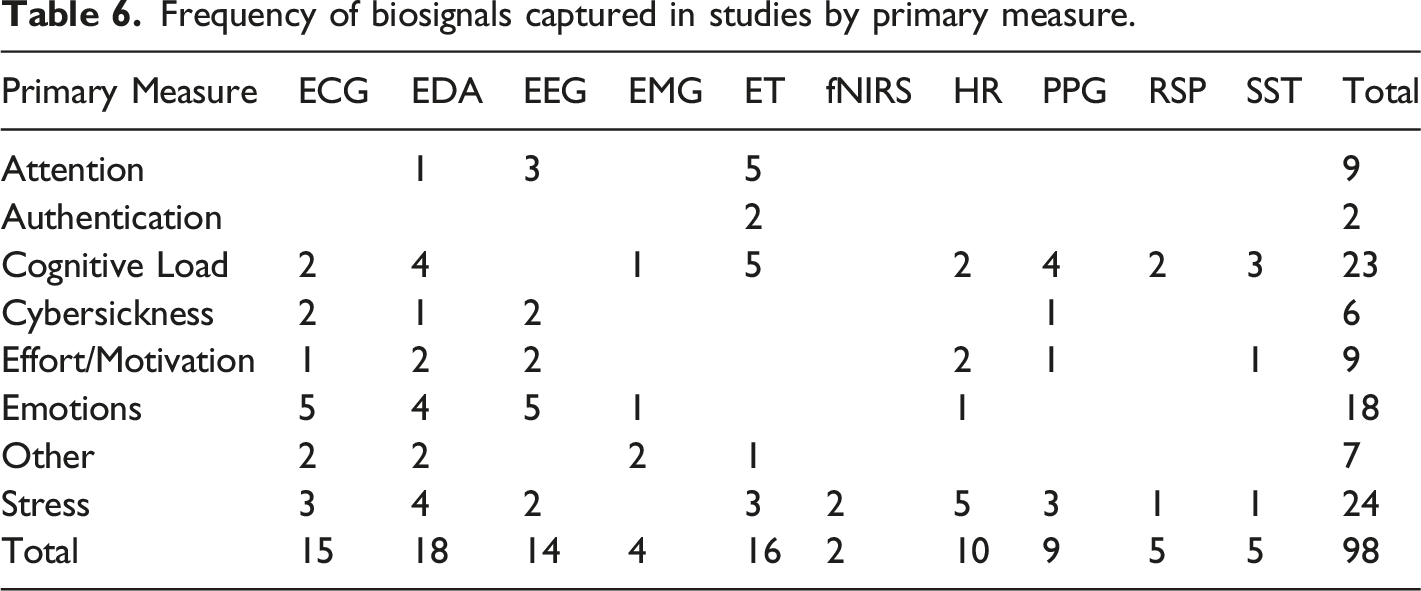

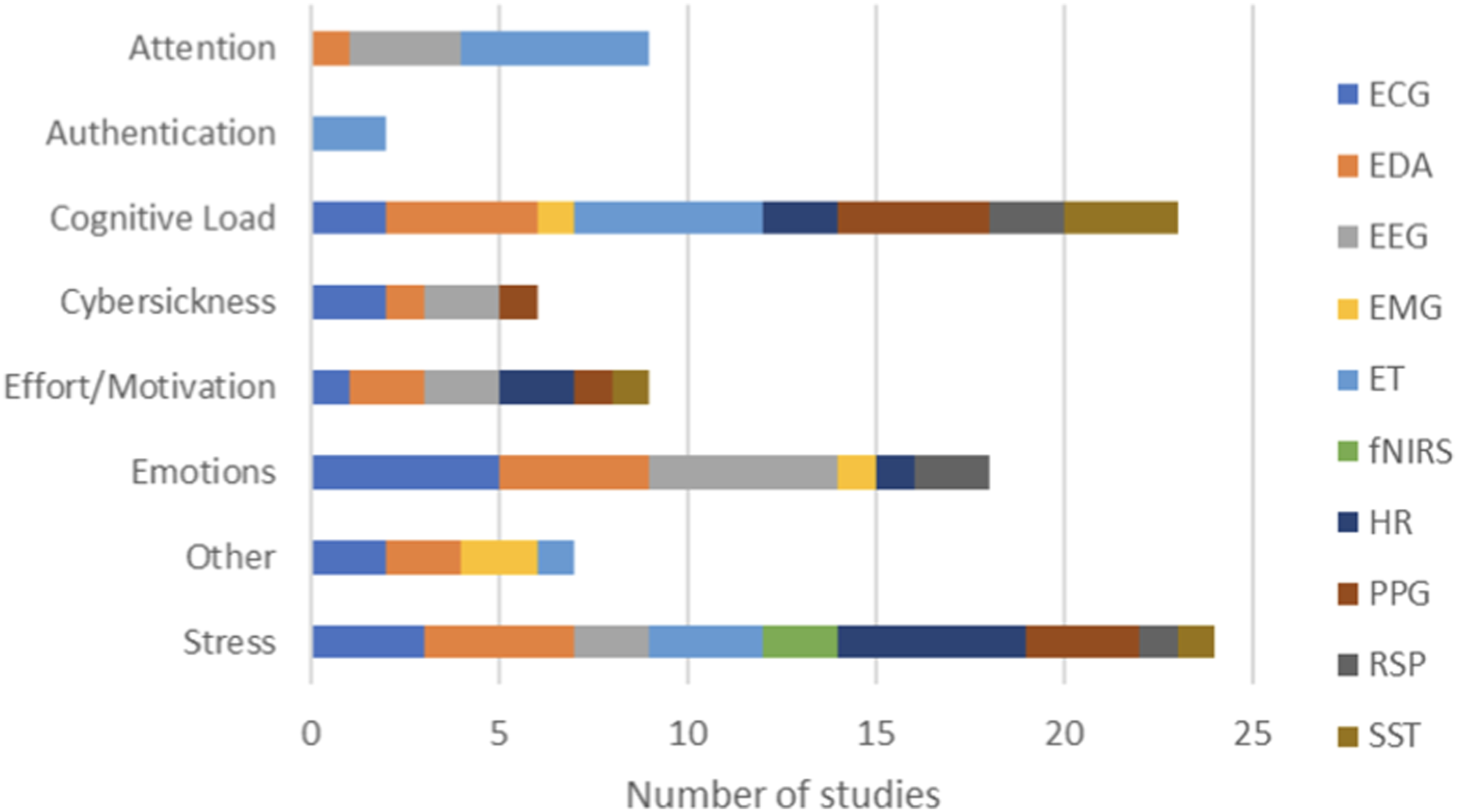

Frequency of biosignals captured in studies by primary measure.

Primary measures by biosignals captured across the number of studies.

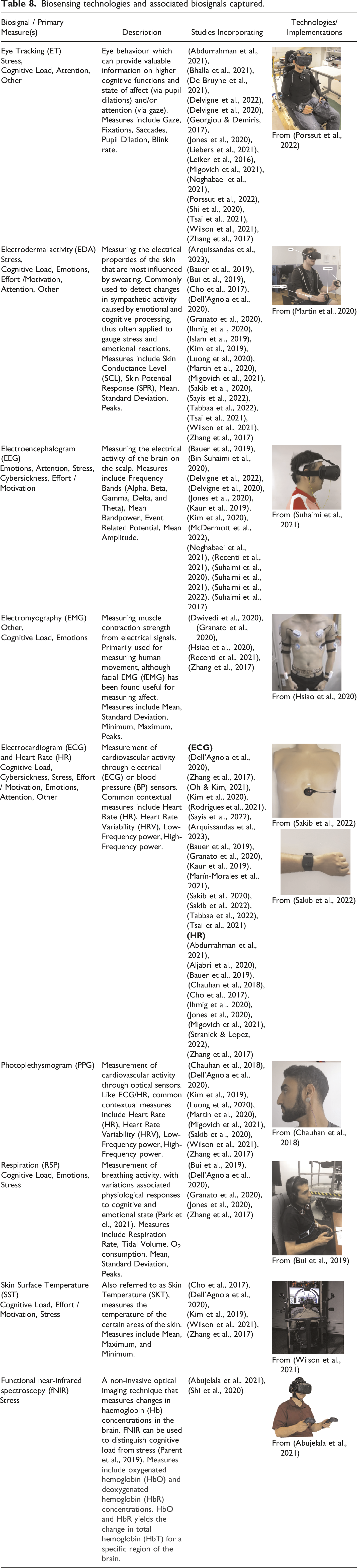

Electrodermal activity (EDA), also referred to as galvanic skin response (GSR), was the most widely used biosignal, applied mostly to the measurement of stress, cognitive load, and emotions. Eye tracking was also frequently used to measure stress, cognitive load, and attention. The relevance of these usage patterns is discussed in the summary of biosignals in Table 6.

Technologies and Equipment Used for Biosignal Collection

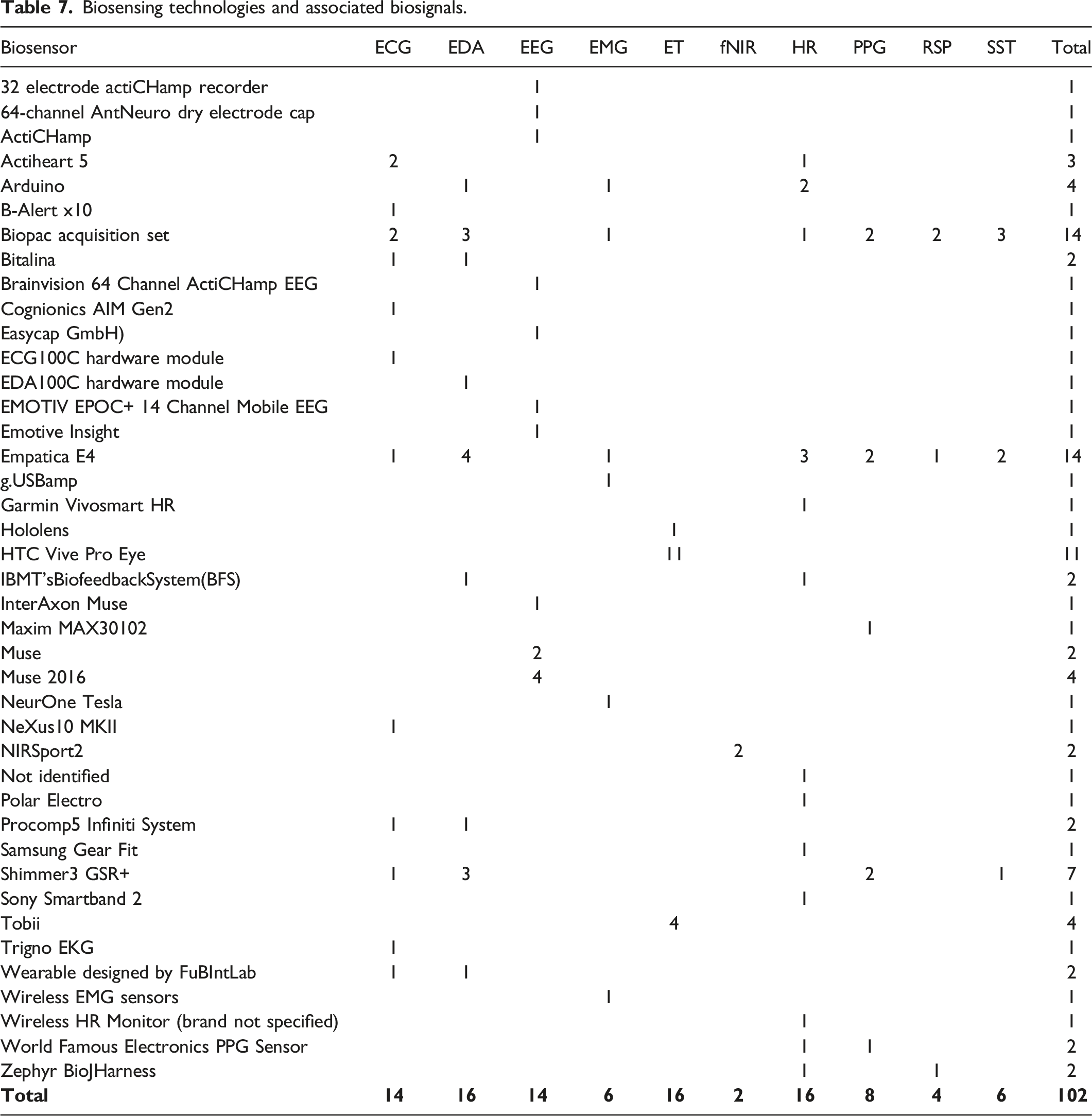

Biosensing technologies and associated biosignals.

Biosensing technologies and associated biosignals captured.

Details of the specific software environments used for the capture and analysis of the biosignal data are not discussed in this paper. Approaches vary from custom-built software solutions and the use of existing software tools and libraries to complete off-the-shelf proprietary software supplied with some biosensing technologies. A summary of tools for the recording, synchronization, and processing of physiological data can be found in Halbig and Latoschik (2021).

AI/ML Results

Each of the included papers (n = 48) was evaluated to determine the type of machine learning approach implemented, whether single or multiple approaches were used, and whether these were applied in real time. Following this, the specific uses of these implementations were considered, together with definitions of all approaches used and references to the specific studies from the review in which they were used.

AI/ML Approach

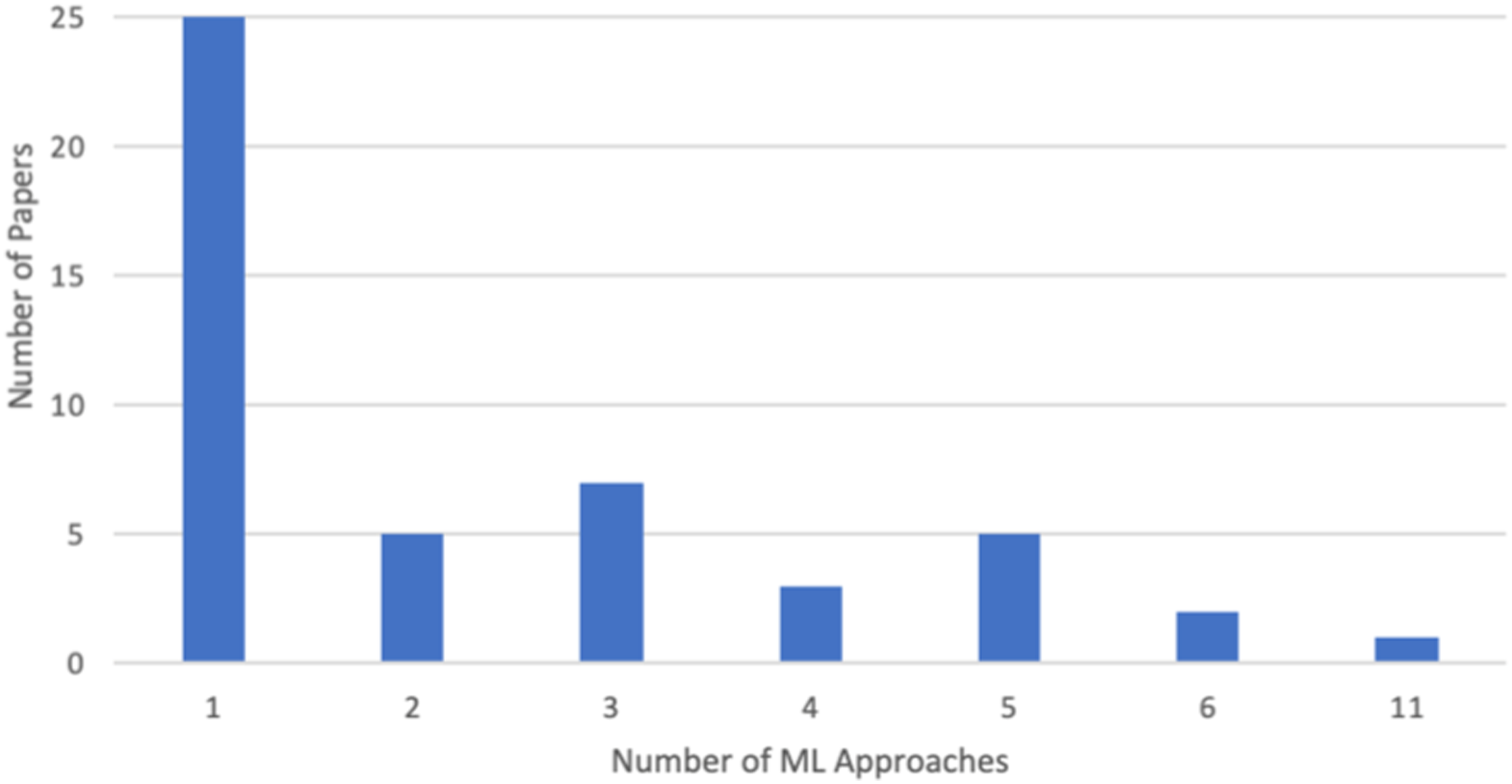

Within each of the final 48 studies, between one (1) and eleven (11) machine learning (ML) approaches were used, with the use of a single ML approach the most commonly occurring (n = 25; Figure 6).

Number of ML approaches used per paper.

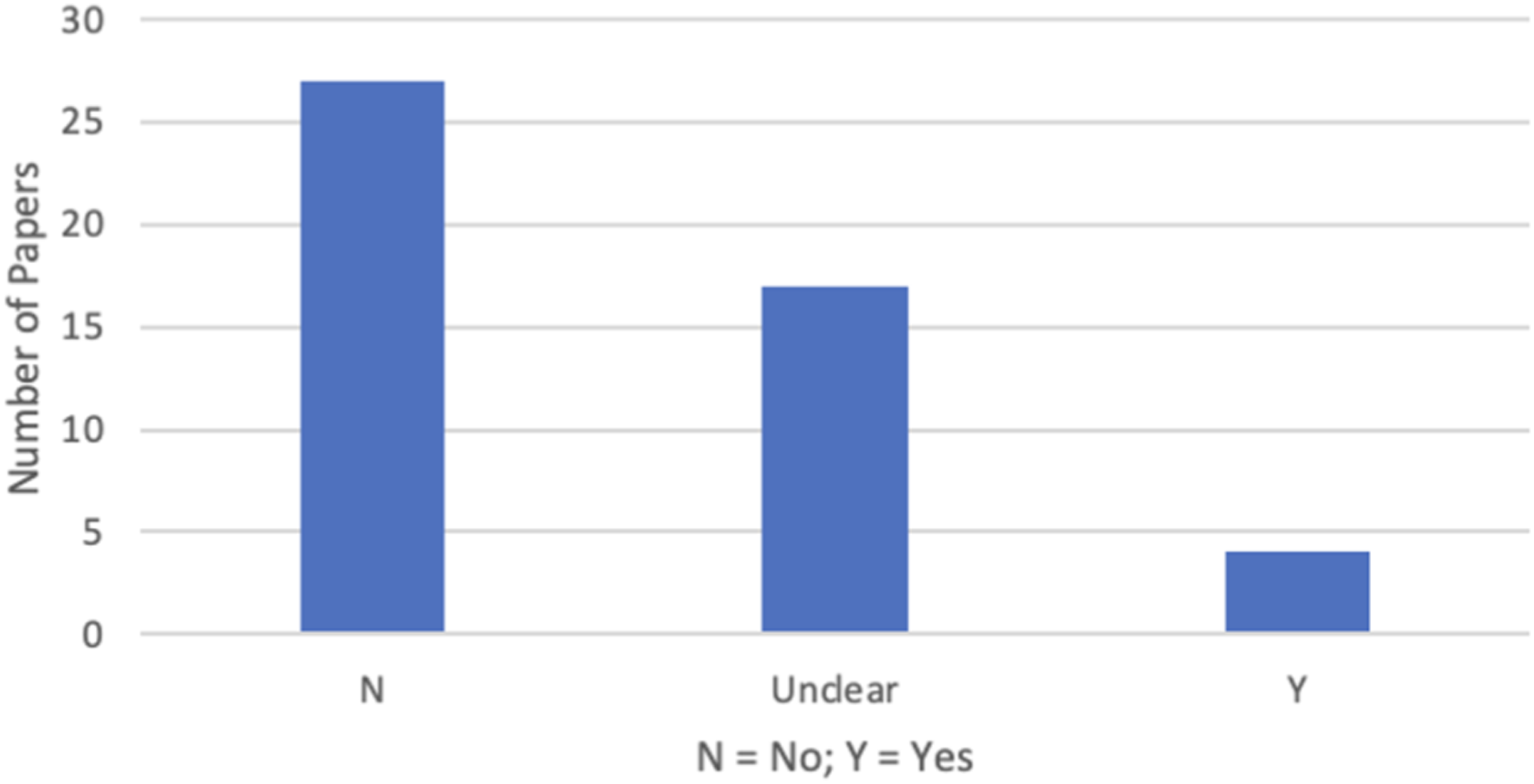

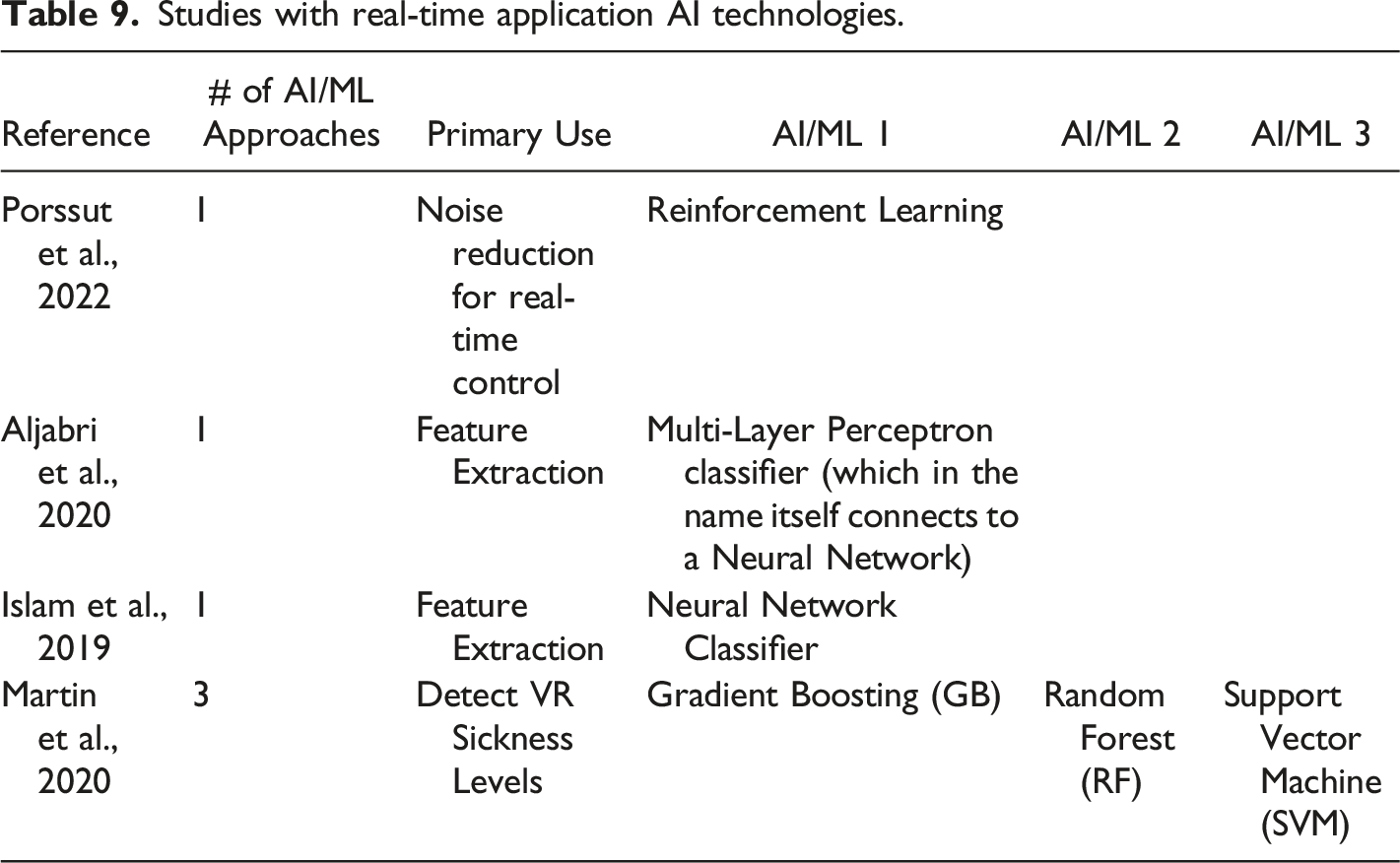

On investigation, only four articles (approximately 8% of the 48) implemented real-time AI in their solutions (Figure 7 and Table 9). In an additional 17 studies (approximately 35%), it was unclear whether the implementation resulted in real-time application of AI.

Number of papers that used real-time approaches.

Studies with real-time application of AI technologies.

AI/ML Uses and Definitions of Approaches

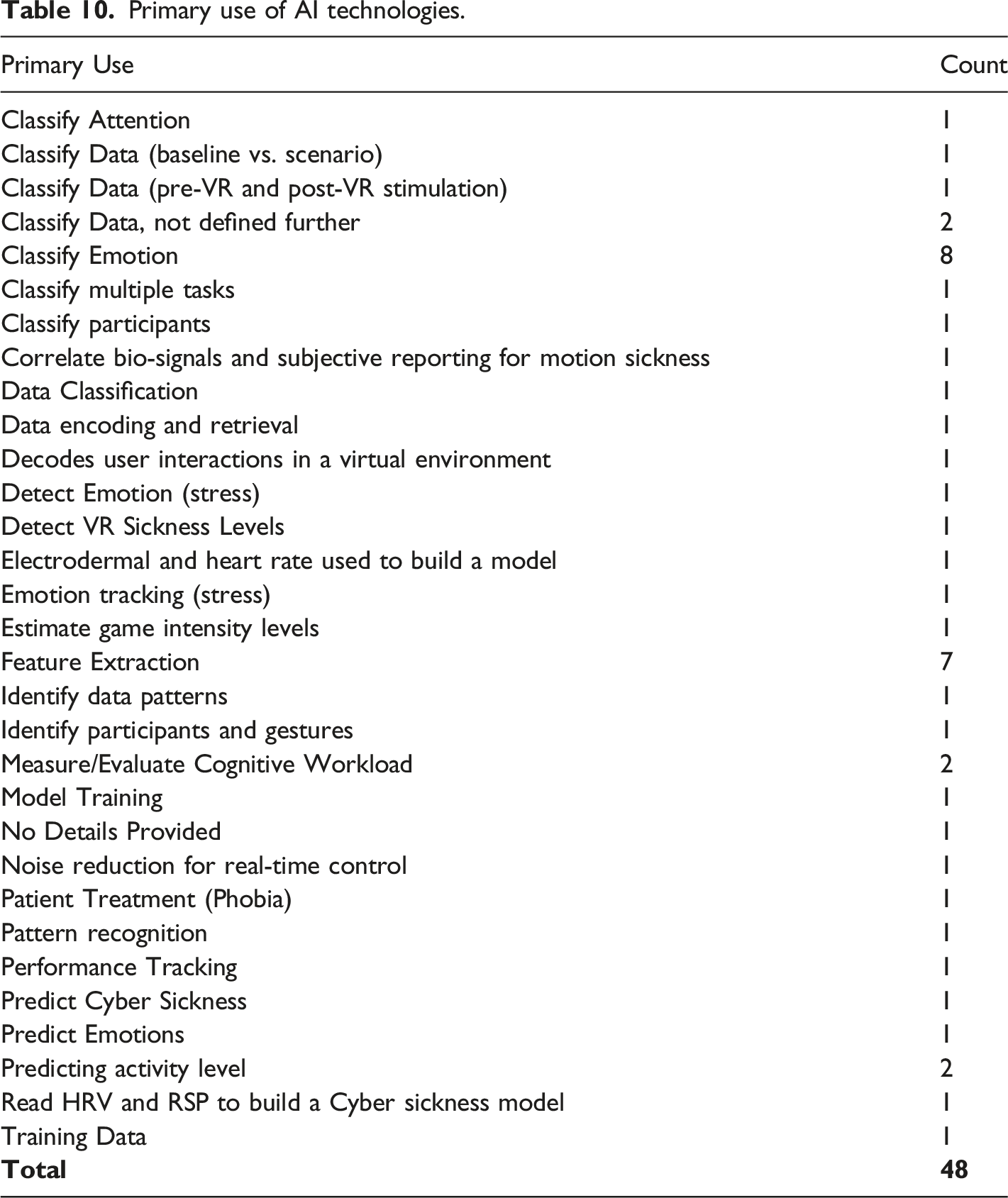

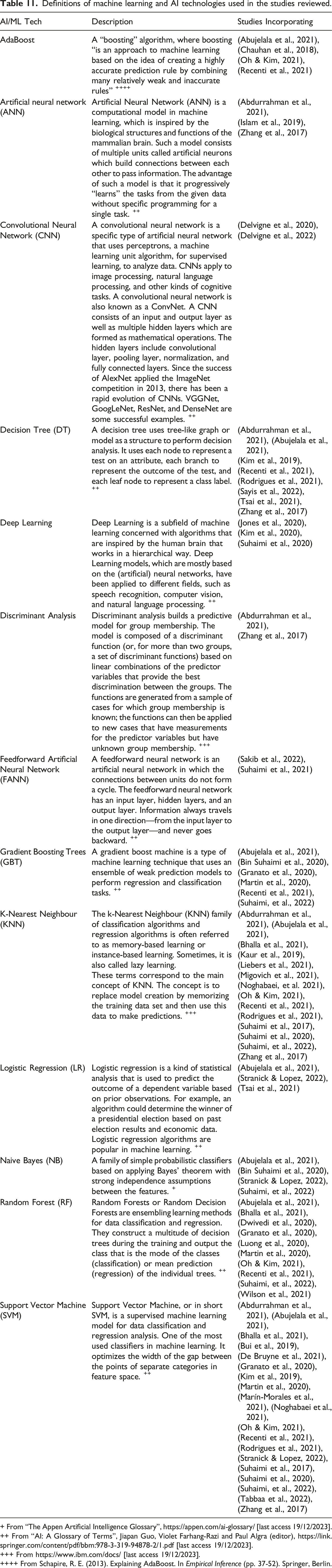

Primary use of AI technologies.

Definitions of machine learning and AI technologies used in the studies reviewed.

+ From “The Appen Artificial Intelligence Glossary”, https://appen.com/ai-glossary/ [last access 19/12/2023].

++ From “AI: A Glossary of Terms”, Jiapan Guo, Violet Farhang-Razi and Paul Algra (editor), https://link.springer.com/content/pdf/bbm:978-3-319-94878-2/1.pdf [last access 19/12/2023].

+++ From https://www.ibm.com/docs/ [last access 19/12/2023].

++++ From Schapire, R. E. (2013). Explaining AdaBoost. In Empirical Inference (pp. 37-52). Springer, Berlin.

Discussion

The results of the systematic literature review have highlighted several trends in the integration of biofeedback and artificial intelligence into XR training scenarios. The identification of these trends provides a useful starting point for the types of decisions that XR practitioners will need to make when considering using these technologies. In the following sections we highlight the implications of the results found, firstly in the context of biosignals/biofeedback and secondly when considering the use of AI technologies.

Many studies reviewed collected two or more biosignals using a single biosensing device. This is particularly relevant in applied settings, where ease of use and minimal interference with training/education activities are desired (Sakib et al., 2020). For example, Luong et al. (2020) note, in terms of cumbersomeness, that there are advantages to integrating multiple physiological sensors into a VR HMD to monitor a user’s psychological state, rather than having to fit and maintain multiple sensors. Granato et al. (2020) observe that almost all the electrodes used in their experiments were located near the hands and face of the users. There are therefore opportunities to integrate multiple sensor technologies directly into the hardware being used, in their case a gamepad and a VR headset. With the increasing use of off-the-shelf technologies, provision for multiple biosignal capture in such technologies will be vital when moving from controlled laboratory/hospital environments to more widespread usage (Tabba et al., 2022).

Light, portable devices such as wrist bands, wireless straps, or headbands are preferred for the collection of biosignals (Migovich et al., 2021). Suhaimi et al. (2021) used a wearable EEG headset device that was made to be “portable and easy to set up without the need of any adhesive material to attach the electrodes onto the skin of any participant, the device also removes the need for a trained medical professional to operate the device”. Also, Wilson et al. (2021) observed that many applications can get “good-enough” results using only the sensors from the Empatica E4 wrist-band. Therefore, being light and unobtrusive while still providing the required biosignal support is an important consideration when selecting biofeedback technology.

In terms of biosignals, eye tracking, electrodermal activity (EDA), and photoplethysmograms (PPG) present as particularly useful biomarkers of stress and/or cognitive load in XR training contexts. All three can be captured with minimal interference to end users and have robust research supporting their use (Cho et al., 2017; Chauhan et al., 2018; Dell’Agnola et al., 2020; Kim et al., 2019; Luong et al., 2020). In addition to being used in primary data analysis, these biosignals are useful as a secondary source to support machine learning approaches (Cho et al., 2017; Jones et al., 2020; Martin et al., 2020). The generation and distribution of relevant datasets will be important for growth in the use of these approaches.
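To show what "biomarker" features from these signals can look like in practice, the sketch below computes mean heart rate and RMSSD (a common short-term heart-rate-variability measure) from PPG-derived inter-beat intervals, and counts skin-conductance responses in an EDA trace using a simplified peak rule. These particular features, the 0.05 µS threshold, and the example values are assumptions for illustration; they are not extracted from the reviewed studies, which used a range of feature sets.

```python
from math import sqrt
from typing import Sequence


def heart_rate_bpm(ibi_ms: Sequence[float]) -> float:
    """Mean heart rate from inter-beat intervals (ms), e.g. PPG-derived."""
    if not ibi_ms:
        raise ValueError("need at least one inter-beat interval")
    return 60000.0 / (sum(ibi_ms) / len(ibi_ms))


def rmssd_ms(ibi_ms: Sequence[float]) -> float:
    """Root mean square of successive IBI differences (ms), a common
    short-term heart-rate-variability feature."""
    if len(ibi_ms) < 2:
        raise ValueError("need at least two inter-beat intervals")
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    return sqrt(sum(d * d for d in diffs) / len(diffs))


def count_scr_peaks(eda_us: Sequence[float], min_rise: float = 0.05) -> int:
    """Count skin-conductance responses: local maxima that rise at least
    `min_rise` microsiemens above the lowest value since the last counted
    response. A deliberately simplified rule; the threshold is illustrative."""
    count = 0
    trough = eda_us[0]
    for i in range(1, len(eda_us) - 1):
        prev, cur, nxt = eda_us[i - 1], eda_us[i], eda_us[i + 1]
        trough = min(trough, cur)
        if prev < cur >= nxt and cur - trough >= min_rise:
            count += 1
            trough = cur  # the next response must rise from a new trough
    return count


# Hypothetical inputs: four inter-beat intervals and a short EDA trace.
ibi = [800, 810, 790, 800]
eda = [2.00, 2.01, 2.10, 2.08, 2.05, 2.06, 2.20, 2.15]
print(heart_rate_bpm(ibi), rmssd_ms(ibi), count_scr_peaks(eda))
```

Compact per-window features of this kind are also what would typically be passed to a classifier when these signals serve as inputs to machine learning approaches.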

Of the studies using off-the-shelf biosensing equipment, the Empatica E4 emerged as the most common. Of note is the device’s capacity to capture multiple biosignals, although this is a feature shared by similar devices on the market. A “plug and play” approach (Aljabri et al., 2020) to sensing technology is also recommended, allowing sensing technologies to be updated/upgraded over time while the fundamental benefits of the biofeedback implementation are retained.
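One common way to realise a "plug and play" approach in software is to have the biofeedback logic depend only on a device-agnostic interface, with one small adapter per physical sensor. The sketch below illustrates that pattern under stated assumptions: `BiosensorAdapter`, `Sample`, and `SimulatedWristband` are hypothetical names, and the simulated device emits fixed fake values rather than wrapping any vendor SDK.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Sample:
    signal: str          # e.g. "EDA" or "PPG"
    timestamp_s: float
    value: float


class BiosensorAdapter(ABC):
    """Device-agnostic interface the XR application depends on. Supporting a
    new or upgraded wearable means writing one new adapter, not changing
    the biofeedback logic."""

    @abstractmethod
    def signals(self) -> List[str]:
        """Names of the biosignals this device provides."""

    @abstractmethod
    def read(self) -> List[Sample]:
        """Return the samples that arrived since the previous call."""


class SimulatedWristband(BiosensorAdapter):
    """Stand-in for a multi-signal wrist-worn device; emits fixed fake values."""

    def __init__(self) -> None:
        self._t = 0.0

    def signals(self) -> List[str]:
        return ["EDA", "PPG"]

    def read(self) -> List[Sample]:
        self._t += 0.25
        return [Sample("EDA", self._t, 2.0), Sample("PPG", self._t, 0.5)]


def latest_values(device: BiosensorAdapter) -> Dict[str, float]:
    """Application-side code written only against the interface."""
    return {s.signal: s.value for s in device.read()}


print(latest_values(SimulatedWristband()))
```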

As such, the integration of biosensing technologies with synthetic environment development tools (i.e., game engines) should be a focus of development approaches. The Unity game engine was used in several studies (including De Bruyne et al., 2021; Delvigne et al., 2022; Dwivedi et al., 2020; Marín-Morales et al., 2021; Porssut et al., 2022; Rodrigues et al., 2021; Stranick & Lopez, 2022; Tsai et al., 2021), whereas Unreal Engine 4 was used in only one of the 48 included studies (McDermott et al., 2022). Hardware and software integration is important for system development, and Unity currently dominates this “lock-in”, in which platforms are made dependent on and interoperable with each other to assure market viability (Foxman, 2019). It remains to be seen whether this current domination will extend from gaming into future XR application development.

A wide variety of machine learning (ML) approaches were applied to the task of implementing biofeedback systems in XR environments. Although there is significant promise, the limited number of studies in this review employing real-time analysis of biosignals (four of the 48 studies, approximately 8%) points to some of the challenges in implementing such systems. Biosignal data is commonly “noisy”, containing a variety of data artefacts from sources such as human movement (Zhang et al., 2017) and sensor connection issues. As such, biosignal data typically requires cleaning, processing, and/or filtering prior to analysis (Sakib et al., 2020). This is further compounded by individual variations in human biosignals (e.g., heart-rate variations (Marín-Morales et al., 2021)), which can add a transformation or baselining step to the pre-analysis process. Undertaking this preparatory work in real time without introducing time lags that affect the feedback component of the biofeedback loop, or the immersive experience, may not be feasible. Working with reduced datasets or sources, applied to a pre-trained ML system, is thus preferable (Sakib et al., 2020).
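A baselining step does not have to add much latency if it is computed causally and incrementally. The sketch below, assuming a short resting recording is available before the task, derives per-participant baseline statistics once and then processes each new sample in constant time with a running-sum moving average expressed as a z-score. The class name, window size, and heart-rate values are hypothetical; in a full system the resulting values might be passed to a pre-trained classifier rather than used directly.

```python
from collections import deque
from statistics import mean, stdev
from typing import Deque, Sequence


class StreamingBaselineScorer:
    """Causal, per-participant normalisation suitable for real-time use.

    Baseline statistics come from a short resting recording taken before the
    task. Each new sample then costs O(1): a running sum over the most recent
    `window` samples yields a smoothed value, expressed as a z-score against
    that participant's own baseline.
    """

    def __init__(self, baseline: Sequence[float], window: int) -> None:
        if len(baseline) < 2 or window < 1:
            raise ValueError("need >= 2 baseline samples and window >= 1")
        self.mu = mean(baseline)
        self.sd = stdev(baseline)
        if self.sd == 0:
            raise ValueError("baseline has zero variance")
        self.window = window
        self.buf: Deque[float] = deque()
        self.total = 0.0

    def update(self, x: float) -> float:
        self.buf.append(x)
        self.total += x
        if len(self.buf) > self.window:
            # Drop the oldest sample. (Over very long sessions the running
            # sum can accumulate floating-point error; recompute periodically.)
            self.total -= self.buf.popleft()
        smoothed = self.total / len(self.buf)
        return (smoothed - self.mu) / self.sd


# Hypothetical heart-rate stream (bpm) against a resting baseline of 70-74 bpm.
scorer = StreamingBaselineScorer(baseline=[70, 72, 74], window=2)
z_scores = [scorer.update(hr) for hr in [76, 80, 72]]
print(z_scores)
```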

An additional challenge in identifying ML implementations of use in XR training systems incorporating biofeedback lies in the level of explanatory detail provided in some studies. Of the studies reviewed, 17 (approximately 35%) did not include sufficient detail to determine whether the implementation was in real time or applied to pre-recorded data. The reasons for this lack of detail are not clear, although the cross-disciplinary nature of the work might offer some explanation. For example, teams involved in these studies may include members from the application domain (e.g., healthcare professionals) together with members from the technical and/or computer science areas. Where articles are published in the application domain, fewer technical details may be included to improve the relevance of the work to the intended audience. Irrespective of this, care should be taken in inferring the appropriateness of approaches with limited technical detail on any implementation.

Despite these issues, the results of the review identified several ML approaches relevant to biofeedback enabled XR training/education solutions. Classification approaches dominate the results, followed by feature extraction. From the studies, 13 different ML algorithms (or algorithmic families) were identified, with K-Nearest Neighbour (KNN), decision trees, and artificial neural networks (ANN) featuring heavily. However, the implementation of ML approaches should be guided by current best practices, and we have produced three basic recommendations:

• Application of data reduction techniques to select features (variables) with the highest prediction/classification fit should be performed as part of the machine learning pipeline.
• Implementations should benchmark machine learning approaches and algorithms to identify optimum fit to the specific data and application/use in terms of accuracy, precision, and speed.
• Consideration of the representativeness of data used for training machine learning algorithms is important. Overfitting and lack of generalizability are well-known issues that can be alleviated through the inclusion of sufficiently diverse training data representative of the full data space.
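The three recommendations can be illustrated together on a toy problem. The sketch below, using invented two-feature data from three hypothetical participants, ranks features with a Fisher score (a simple filter-style data reduction), benchmarks two KNN settings by accuracy and wall-clock time, and evaluates with leave-one-subject-out splitting so that no participant contributes to both training and test data. This addresses only one aspect of representativeness, and precision or other metrics could be added in the same loop; none of the names or values come from the reviewed studies.

```python
import time
from collections import Counter
from math import dist
from statistics import mean, pvariance
from typing import Callable, List, Sequence, Tuple

Row = Tuple[str, List[float], int]  # (participant id, feature vector, label)


def fisher_scores(rows: Sequence[Row]) -> List[float]:
    """Score each feature by between-class / within-class variance; higher
    scores mark better-separating features (a simple filter for data reduction)."""
    labels = sorted({r[2] for r in rows})
    scores = []
    for j in range(len(rows[0][1])):
        overall = mean(r[1][j] for r in rows)
        between = within = 0.0
        for c in labels:
            vals = [r[1][j] for r in rows if r[2] == c]
            between += len(vals) * (mean(vals) - overall) ** 2
            within += len(vals) * pvariance(vals)
        scores.append(between / within if within > 0 else float("inf"))
    return scores


def knn_predict(train: Sequence[Row], x: Sequence[float], k: int = 3) -> int:
    """Majority label among the k training rows closest to x (Euclidean)."""
    nearest = sorted(train, key=lambda r: dist(r[1], x))[:k]
    return Counter(r[2] for r in nearest).most_common(1)[0][0]


def leave_one_subject_out(
        rows: Sequence[Row],
        predict: Callable[[Sequence[Row], Sequence[float]], int],
) -> Tuple[float, float]:
    """Hold out each participant in turn so nobody appears in both the
    training and test data; return (accuracy, elapsed seconds)."""
    start = time.perf_counter()
    correct = 0
    for subject in sorted({r[0] for r in rows}):
        train = [r for r in rows if r[0] != subject]
        correct += sum(predict(train, r[1]) == r[2]
                       for r in rows if r[0] == subject)
    return correct / len(rows), time.perf_counter() - start


# Invented toy data: feature 0 separates the classes, feature 1 does not.
rows: List[Row] = [
    ("A", [0.1, 0.5], 0), ("A", [0.9, 0.4], 1),
    ("B", [0.2, 0.6], 0), ("B", [1.1, 0.5], 1),
    ("C", [0.0, 0.4], 0), ("C", [1.0, 0.6], 1),
]
print(fisher_scores(rows))
for k in (1, 3):
    acc, secs = leave_one_subject_out(rows, lambda tr, x, k=k: knn_predict(tr, x, k))
    print(f"k={k}: accuracy={acc:.2f}, time={secs * 1000:.2f} ms")
```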

There are two main limitations to the review presented in this article. Firstly, the literature was sourced from a single repository, namely Scopus. However, Meho and Rogers (2008) compared Scopus and Web of Science and found that Scopus provided a more comprehensive listing of research, specifically due to its inclusion of conference publications, notably the ACM and IEEE peer-reviewed conference proceedings and the Springer Lecture Notes in Computer Science series. For computing research, conference publications form a significant volume of current research, and given the computing and human-computer interaction base of the biosignal/XR domains, Scopus is an appropriate choice. Together with the formalism of the PRISMA guidelines used as the basis of this systematic literature review, this gives us confidence that our snapshot of the literature is comprehensive.

The second limitation is the snapshot nature of the literature review. Our review was conducted in February 2022 and covered the period from 1 January 2016 to 7 February 2022. As with any technology-based review, new publications can outdate a review’s results. Nevertheless, our review snapshot provides insight across a period of just over six years and has identified several interesting trends in both the use and integration of the technologies considered. Given the pace of journal article publication, our review is strengthened by the focus on the Scopus repository and the inclusion of peer-reviewed conference articles, which are often published in a more timely manner. Thus, this review serves as a benchmark across working technologies rather than a state-of-the-art review. As is often the case, tried and tested exemplars are the most useful for practitioners, and practitioners are the target audience for this review.

Conclusion

This review has highlighted recent research literature implementing XR technologies in combination with biofeedback and AI approaches, with a focus on the specific biofeedback sensors used in simulation training or education contexts.

The results indicated a number of trends, including the efficiency of collecting multiple biosignals with a single biosensing device; the need for practical considerations, such as the use of light and portable devices; and that some biosignals, such as eye tracking, electrodermal activity (EDA), and photoplethysmograms (PPG), present as useful biomarkers of stress and/or cognitive load, which are likely to be of particular interest in XR training contexts. Although a wide variety of machine learning (ML) approaches were identified, only a limited number of studies employed real-time analysis of biosignals (four of the 48 studies, approximately 8%), which indicates current challenges in implementing such systems and the need for more work in this area.

Papers that met the selection criteria were predominantly from the fields of education and healthcare, indicating the increasing use of biofeedback and AI technologies in these domains. However, other domains, such as defense and general industry, are expanding the use of XR technologies for training, so there is significant scope for applying the lessons learnt from the studies documented in this review. Thus, this review serves as a valuable resource for researchers, practitioners, and policymakers interested in the use of XR, biofeedback, and AI in training and skill development.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by a Real Response Pty Ltd Research Grant.

Author Biographies