Abstract

Introduction

The role of reflection in experience-based learning is widely discussed. This paper examines the effects of a reflection assignment, added as part of debriefing, in five simulation game-based seminars using qualitative and quantitative methods.

Research Design and Methods

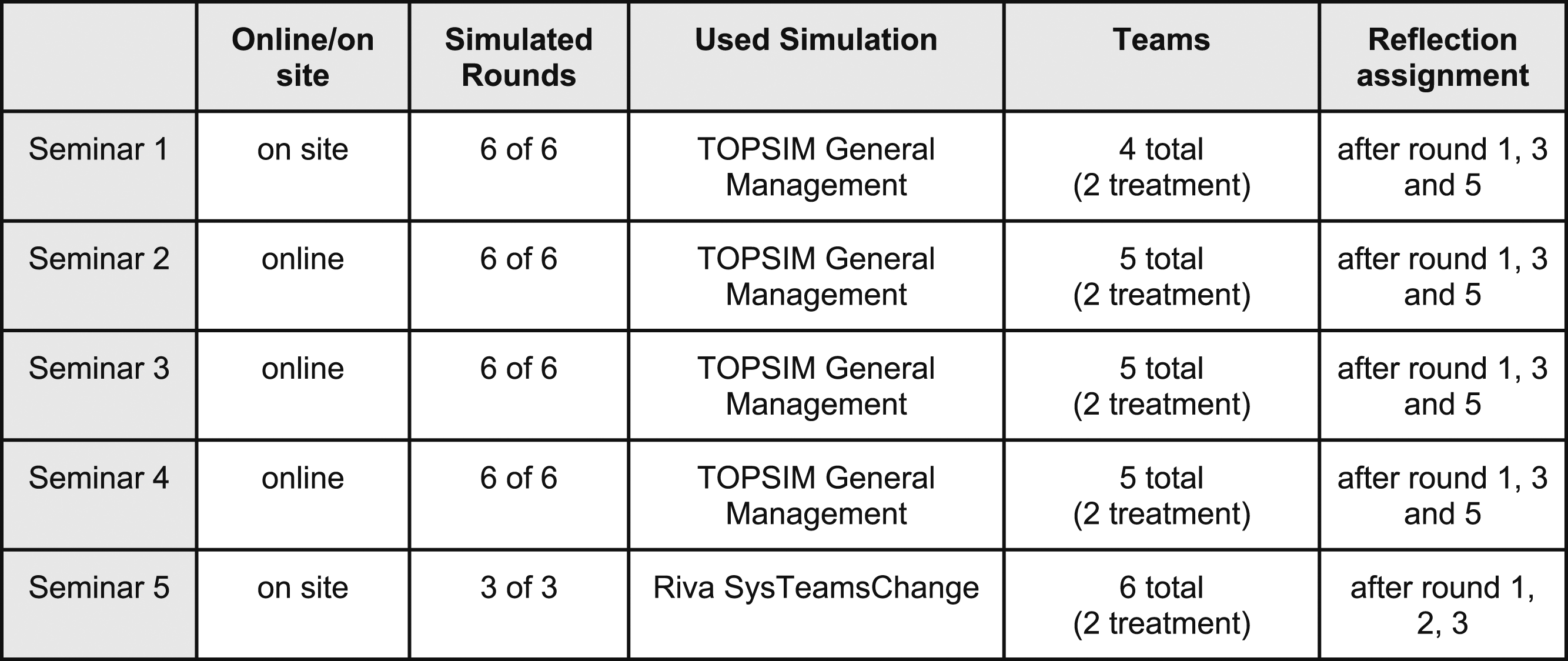

The intervention (reflection assignment) used in this study consists of three reflective questions that are discussed in groups. We aim to find out the effects of adding this intervention to the regular in-between-debriefings (three times during the whole seminar). The methodological setup is quasi-experimental and therefore consists of a treatment group and a control group. Two different types of simulation games were used: a general management game and a change management game. The data used in this study were gathered from three different sources. First, we used in-game performance data from both games. Second, we used two questionnaires to evaluate game experience and self-reported learning (MEEGA+ and the ZMS inventory). Third, the reflection notes from the treatment teams were used for qualitative analysis.

Results

Significant differences between treatment and non-treatment students were found in several dimensions (game success, game experience and self-reported learning). Qualitative data show a deep level of reflection in the treatment teams, which differs between the two simulation games evaluated.

Discussion

Supported by the qualitative reflection data, we assume that the better results of treatment students are rooted in the repeated reflection. The recurring circular reflection helps treatment students to understand the games better and gain deeper insights.

Keywords

Introduction

In this research the effects of a formative assessment method consisting of three reflective questions are evaluated using a mixed-method research design. In five simulation game (sg) based seminars, treatment groups received an additional reflection assignment while non-treatment groups had “only” the normal debriefing. Differences between treatment and non-treatment groups are evaluated in various ways, and qualitative analysis shows how reflection adds value for learning. The sgs used in this study are TOPSIM General Management (GM) and Riva Sys Teams Change (STC). To introduce the theory behind our research, we draw the connection between reflection and learning and describe why this connection is relevant for learning with sgs. After introducing the theory on levels of learning, we outline the research design and the detailed research questions (RQ) for this study. We then describe in detail how each RQ is analyzed (methods). After presenting the results of this study per RQ, we discuss our findings and name limitations.

Introduction to the role of reflection in learning

The idea of sgs as an experiential learning method is “to provide an enhanced learning experience” (Pasin & Giroux, 2011, p. 1243). A simulated learning environment gives students the chance to experience, reflect and learn. Usually, sgs follow the structure of briefing, experience and debriefing (Duke, 2014; Geurts, Duke, & Vermeulen, 2007; Klabbers, 2009; Stoppelenburg, Caluwé, & Geurts, 2012). Briefing serves to introduce students to the simulation and prepare them with what they need to know about the sg (scenario, rules, roles and resources etc.) to start playing it. Part of briefing can be establishing goals for learning which can contribute to reflection later on. While the sg is played students act in their roles and gather experience, which then serves as input for reflection and debriefing both during and after the sg (Schwägele, Zürn, Lukosch, & Freese, 2021).

In literature sgs are associated with experience- and action based learning theories (Kolb & Kolb, 2009; Taylor, Backlund, & Niklasson, 2012), for example Kolb’s Experiential Learning Cycle (Alklind Taylor, 2014; Geithner & Menzel, 2016). It describes learning as a continuing cycle of concrete experience, reflection, abstract conceptualization and active experimentation (Kolb & Kolb, 2009). Learning can occur when experience is reflected and conceptualized. The new concepts are transferred to action, which leads to new experiences. Argyris and Schön developed another systemic oriented experiential learning theory and identified the concept of first and second order learning (1974) which is described in detail below. Schön adds the concept of reflection-in-action and reflection-on-action (1983). He elaborates how professionals handle (challenging) situations based on their experience by immediate reflection during their action (reflection-in-action) and reflecting on it afterwards (reflection-on-action). Relating to the concept of Schön, Greenwood adds the idea of reflection-before-action, meaning to anticipate a situation beforehand (1993).

Many authors emphasize the relationship between experience and reflection in experiential learning theories (Alklind Taylor, 2014; Hilzensauer, 2008; Klabbers, 2009; Kolb & Kolb, 2009; Nakamura, 2022). In sum, sgs offer opportunities to learn from experience through reflection. In the game play phases students aim to solve simulated challenges and thereby gather experience as an input for reflection/debriefing.

Contemporary research on reflection in simulation games

Reflection has a role in the learning processes within sgs (Baker, Jensen, & Kolb, 1997; J. Y. Lee, Donkers, Jarodzka, Sellenraad, & van Merriënboer, 2020; Nakamura, 2022). Adding reflection assignments during gameplay is a form of formative assessment which enables participants to apply what they learn from reflection and to use the sg to the full. Reflecting only in the debriefing after gameplay would leave out many opportunities for learning, whereas adding reflection triggers and opportunities during an sg session can serve as leverage points for learning. Specific research on the different forms of reflection and their effects in sgs is currently scarce. Nakamura (2022) researched the impact of adding pre-structured questions before gameplay and in reflections and found a significant positive effect on learning. Lee et al. (2020) performed a medical simulation study in which participants could take time-outs, resulting in higher experienced agency but not in an increased learning outcome. The authors explain this result by noting that participants did not receive specific instructions or prepared questions on how to use the reflection time effectively. Husebø et al. (2013) researched the effects of debriefing questions on reflection during debriefing after gameplay with participants in medical sgs. They found a positive (qualitative) result and suggest that further research is necessary on the types of questions asked and their effects on learning. We do not know whether these results generalize to sgs in general, since medical sgs often have specific contexts such as a procedural orientation with given norms on what behavior is correct according to pre-specified procedures. However, there are also medical sgs with more open characteristics, for instance when they are aimed at more tacit knowledge and competencies such as situational awareness.

These findings indicate that structured questions could add value to (in-between-) debriefing and increase learning; however, the number of studies so far is limited. This study aims to contribute to research on the effects of structured reflection assignments on learning with sgs.

Theoretical background on levels of learning

Argyris and Schön identified the concept of first and second order learning (1974) which has been used and referenced widely in management literature (Bartunek, 2014; Crossan, 2003; Friedman, Torbert, Nielsen, Silverman, & Bradbury, 2014). The basis of systemic oriented learning is seen in reflecting on and responding to deviations that happen in a given system. The type of response determines the type of learning involved. Theory development from the founding father of systems learning Chris Argyris is applicable here. First order learning can be defined as learning to apply predetermined rules and procedures in a given system. It leads to successful actions within the system. Second order learning requires a reflection on the norms behind the decisions and assumptions so processes can be adapted in such a way they fit the requirements of the situation. These process interventions are directed at checking if the norms applied are still relevant and adequate for the challenges the people in the organization are faced with (Argyris, 1977; Greenwood, 1988; Tosey, Visser, & Saunders, 2012). Greenwood (1988) emphasizes that especially second order learning is based on reflection because it criticizes the underlying structures and norms behind them. The concept of third order learning was introduced based on the work of Argyris as an extension of his theory (Tosey et al., 2012; Visser, 2007; Visser, Chiva, & Tosey, 2018). Third order learning means to reflect on how actors take on their roles in the system and if this is adding value to their personal values/needs and the values/needs of the system. In addition, third order learning is about learning to learn and remain adaptive. In this process also less tangible issues regarding virtues of wisdom and justice can be involved (Reynolds, 2014). 
Applying these levels of learning to the qualitative part of this study (especially research question 3, see the next section) enables us to draw conclusions on which type of learning was most prevalent and whether this was in line with the aim of the specific case.

Aim of research and research questions

The aim of this research is to evaluate the impact of a structured reflection assignment that is repeated three times during the sg-based seminars as part of the round-by-round in-between-debriefings. Therefore, treatment groups participated in a reflection assignment while non-treatment groups did not. The groups are then compared in various aspects using quantitative and qualitative methods. In detail, the following research questions (RQ) are addressed in this study:

- RQ1: How does the reflection intervention affect the sg results and perceived learning in the treatment groups?
- RQ2: How does the reflection intervention affect gameplay variables (assessment of in-between-debriefing and debriefing, student engagement, teamwork, assessment of simulation)?
- RQ3: What levels of learning/reflection do we see in the notes on the flips, and can interpretations be made about the proportions in which they occur?
- RQ4: What learning process do we see in the teams from round to round?

Research design and methods

Overview research design

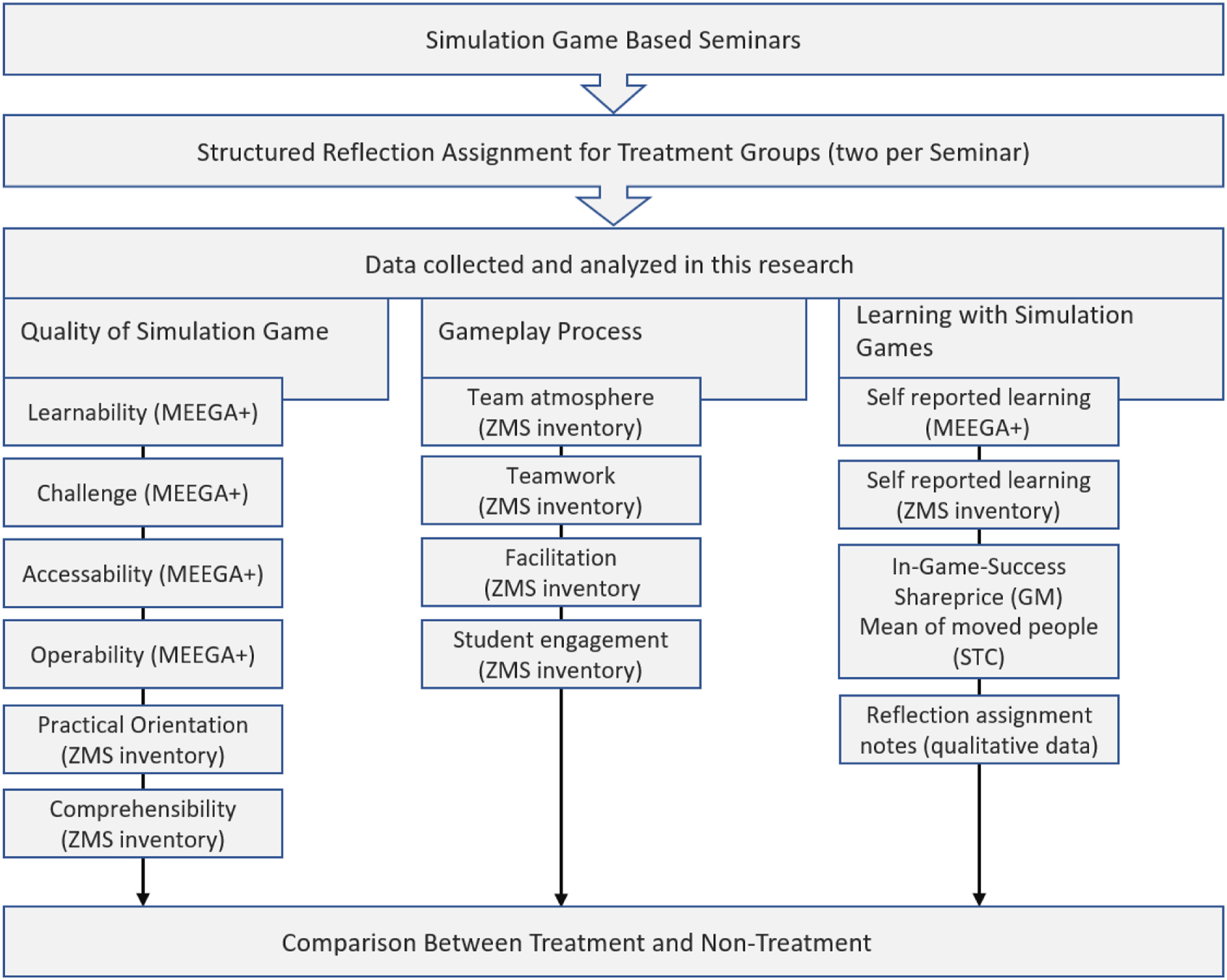

To address the formulated RQs a mixed-method setup was used (see Figure 1). Quantitative evaluation was applied with two post-game questionnaires, and qualitative analysis was applied to the reflection notes gathered. Five sg-based seminars were evaluated with a quasi-experimental design. Altogether 110 students participated in 25 teams. In each seminar two groups received a special reflection assignment with pre-structured reflection questions (treatment groups) that was repeated three times during gameplay (beginning, middle, end). The other two to four groups (depending on how many students attended the seminar) did not receive the reflection assignment. In the treatment groups the assignment became part of the in-between-debriefing and was added after the discussion of results in the plenary. For non-treatment groups the in-between-debriefing ended after the plenary discussion, so these groups had a little more time (about 10 to 15 minutes) to read, calculate and discuss their next round's decisions within their teams. The treatment was implemented three times during the sg-based seminars: at the start (after round one), in the middle of gameplay (round 3 for GM and round 2 for STC) and towards the end of the game (round 5 for GM, round 3 for STC). The procedure differed slightly because GM consists of six rounds and STC of three. There was no interaction between the groups at the reflection moments. The reflection assignment consists of three pre-structured questions that guide the teams to reflect on their decisions and behavior during the sg:

1. What went well?
2. What went not so well?
3. What do I need, what do we need to reach our goals?

Overview research design.

These pre structured questions were open and quite general to allow participants the opportunity for personalized input on what they found relevant. Previous studies (J. Y. Lee et al., 2020; Nakamura, 2022) indicate that pre-structured questions support learning via reflection and also preparation with goal setting for the next game round.

In onsite seminars the teams received a prepared flipchart and were invited to discuss the questions written on it and to write their answers on the chart. In other case studies (Wijse-van Heeswijk et al., in press) we noticed that participants were less inclined to answer the questions if they were not pre-written on the flipchart. In online seminars the teams received a prepared PowerPoint sheet with the same questions and were invited to discuss answers and make notes while screen-sharing. Afterwards the results were presented briefly to the professor and the other team.

At the start of the seminars students were informed that the seminar was evaluated in the context of a research project on the role of learning in sgs. Participation in the study was voluntary and data was gathered anonymously using Evasys Version 8.2.

Quantitative analysis approach (RQ1 and RQ2)

RQ1 focuses on the output of the seminar (learning and game results) while RQ2 focuses on the gameplay process (teamwork, engagement, assessment of simulation). To address these RQs, students were asked to answer a longer questionnaire at the end of the seminars. Its questions were aimed at reflecting on the seminar from start to end. The questionnaire consists of two inventories that are described below. Both inventories consider self-reported learning results (RQ1) as well as gameplay-process variables that are addressed in RQ2 (see Figure 1 for an overview).

The first part of the questionnaire is the ZMS inventory, which was developed at the Center for Management Simulation. It measures learning satisfaction and relevant influencing factors on learning with simulation games (Trautwein & Alf, 2022) and consists of 27 Likert-scaled items that can be combined into seven scales. Two scales measure the quality of the simulation game regarding comprehension of the game and the realism of the game. One scale of five items measures how the simulation is facilitated. Two scales measure different aspects of teamwork (task orientation and atmosphere). Another scale measures student engagement during the seminar, and the final scale covers overall satisfaction and learning. Exploratory and confirmatory factor analysis confirmed the seven scales used in this research, and alpha values between .783 and .943 are reported for the scales (Trautwein & Alf, 2022).

The second part is the MEEGA+ instrument developed by Petri et al. (2017) to evaluate educational games. The MEEGA+ questionnaire is a validated instrument and was originally developed for games with digital interfaces. Therefore, some questions were adapted to the games used in this study. For example, the original items used to evaluate the accessibility of games ask about fonts and colors. In the context of this study we instead asked “The game interface is clearly arranged.” and “The user interface is simple and well structured.” The social interaction scale of MEEGA+ asks whether there was the possibility of interaction, which is a given for the sgs used in this research. We therefore changed the question to ask whether the simulation promotes cooperation with other students.

For quantitative analysis, treatment students and non-treatment students are compared (for some analyses treatment groups and non-treatment groups are compared using group means). Since the evaluation data on group level have n < 30, Mann-Whitney U tests are calculated as test statistics. U tests are appropriate for smaller samples, which we have on group level, and do not require normally distributed data (Bortz & Schuster, 2010; Eid, Gollwitzer, & Schmitt, 2011, p. 322).
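The group-level comparison described above can be illustrated in code. The following is a minimal sketch, not the authors' analysis script: it computes the Mann-Whitney U statistic with midranks for ties, a two-sided p-value from the tie-corrected normal approximation (without continuity correction), and the effect size r = |z| / sqrt(N). The function names and the example group means are hypothetical. For samples as small as those at group level, exact tables or a statistics package would be preferable to the normal approximation.

```python
import math
from collections import Counter


def midranks(values):
    """Return 1-based ranks; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney_u(x, y):
    """Return (U, z, two-sided p, effect size r) for two independent samples."""
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both samples must be non-empty")
    pooled = list(x) + list(y)
    n = n1 + n2
    ranks = midranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2  # U for the first sample
    u2 = n1 * n2 - u1
    # Tie-corrected standard deviation of U under the null hypothesis.
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    sigma = math.sqrt(n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1))))
    if sigma == 0:  # all observations identical
        return min(u1, u2), 0.0, 1.0, 0.0
    z = (u1 - n1 * n2 / 2) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))
    r = abs(z) / math.sqrt(n)
    return min(u1, u2), z, p, r


# Hypothetical group means on a Likert-based scale (not data from the study).
treatment_means = [4.4, 4.1, 4.6, 3.9, 4.3]
control_means = [3.8, 4.0, 3.5, 4.2, 3.7, 3.6]
u_stat, z_value, p_value, effect_r = mann_whitney_u(treatment_means, control_means)
```

The effect size r is one common way to express "small" or "medium" effects for rank-based tests; whether the study used this exact measure is not stated in the text.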

To address RQ1, success indicators from the sg are also used, knowing that their use is controversially discussed (Brazhkin & Zimmerman, 2019; Pasin & Giroux, 2011). The critique of game success indicators is that in some games groups could perform well by luck instead of learning. We find this very unlikely for the sgs used in this study because these simulations have a high level of complexity. For GM, students have to make many interdependent decisions in six consecutive rounds, which means that they have to bring their decisions into a coherent overall concept. Also, there is not one right solution; multiple strategies are possible, and each has to be carried out coherently to achieve game success. For STC, students have to play 42 action cards in a logical order according to the simulated change management process. Many action cards require selecting the proper game characters to play them, which has a certain level of difficulty. Since many different characters in the game have to be approached, it is highly unlikely that one would select the right candidates by luck for each intervention every time. In both simulations it is therefore very unlikely that successful decisions can be taken consistently by chance. If a team performs well in the game, this indicates at least that it has learned to a certain extent how to play the sg in line with the learning goals of the sg.

Since RQ2 addresses process variables such as the quality of in-between-debriefings, a short questionnaire was administered after each in-between-debriefing. Students were asked to rate the perceived quality of the debriefing using three items that were combined into a scale. Treatment groups filled it out after their reflection assignment, control groups after the joint debriefing discussion. This procedure makes it possible to compare the treatment groups with the non-treatment groups on the perceived quality of the in-between-debriefing.
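Combining items into a scale can be sketched as follows. This is an illustration under assumptions: the scale score is taken as the mean of a respondent's item ratings, and Cronbach's alpha is shown as the kind of reliability coefficient reported for the ZMS scales. The text does not state how the debriefing items were aggregated or whether alpha was computed for them, and the function names and ratings below are hypothetical.

```python
from statistics import mean, variance


def scale_scores(responses):
    """Mean item rating per respondent (one inner list per respondent)."""
    return [mean(items) for items in responses]


def cronbach_alpha(responses):
    """Cronbach's alpha from sample variances of the items and of the sum score."""
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    item_vars = [variance([r[i] for r in responses]) for i in range(k)]
    total_var = variance([sum(r) for r in responses])
    if total_var == 0:
        raise ValueError("sum scores have zero variance")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical ratings of three debriefing items on a 1-5 scale.
ratings = [
    [4, 5, 4],
    [3, 3, 4],
    [5, 5, 5],
    [2, 3, 2],
]
scores = scale_scores(ratings)
alpha = cronbach_alpha(ratings)
```

The per-respondent scores (or their group means) would then be the input for the comparison between treatment and non-treatment groups.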

Qualitative analysis approach (RQ3 and RQ4)

In addition to quantitative data from questionnaires and game performance data, the research design also provides qualitative data from the treatment groups. The notes from the reflection assignments were collected, documented, and used for qualitative analysis. This may grant insight into the level of group reflection and, by comparing consecutive reflections of groups, into learning processes. With RQ3 we look at the data on a micro level, analyzing the single answers given by groups. With RQ4 we take a broader view, comparing the notes in chronological order.

With regard to RQ3, we transferred the text from the flips to an Excel file and assigned codes according to the coding scheme in the appendix (Argyris, 1977; Visser et al., 2018): Code 1 for first order learning, Code 2 for second order learning and Code 3 for third order learning. Using these codes, we analyze the reflective responses to find out which teams were involved with which learning levels during the seminar (these could either be actual learnings or reflections on certain learning levels). As described above, treatment teams participated in three reflection interventions during gameplay, answering three open questions each time (after round one, in the middle of the game and towards the end of the game).
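Once each statement carries a code, the share of each learning level per game follows from a simple tally. A minimal sketch, assuming the coded statements are available as (game, code) pairs; the function name and the sample rows are hypothetical and do not reproduce the study's data.

```python
from collections import Counter, defaultdict

# Codes as described in the text: 1, 2 and 3 for first, second and third order learning.
LEVELS = {1: "first order", 2: "second order", 3: "third order"}


def level_shares(coded_statements):
    """Return {game: {code: percentage}} for an iterable of (game, code) pairs."""
    per_game = defaultdict(Counter)
    for game, code in coded_statements:
        if code not in LEVELS:
            raise ValueError(f"unknown code: {code!r}")
        per_game[game][code] += 1
    shares = {}
    for game, counter in per_game.items():
        total = sum(counter.values())
        shares[game] = {code: 100 * counter[code] / total for code in LEVELS}
    return shares


# Hypothetical coded statements (game label, learning-level code).
sample = [
    ("GM", 1), ("GM", 1), ("GM", 1), ("GM", 2),
    ("STC", 1), ("STC", 2), ("STC", 2), ("STC", 3),
]
shares = level_shares(sample)
```

Reporting percentages per game rather than raw counts keeps the two games comparable even though the number of coded statements differs between them.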

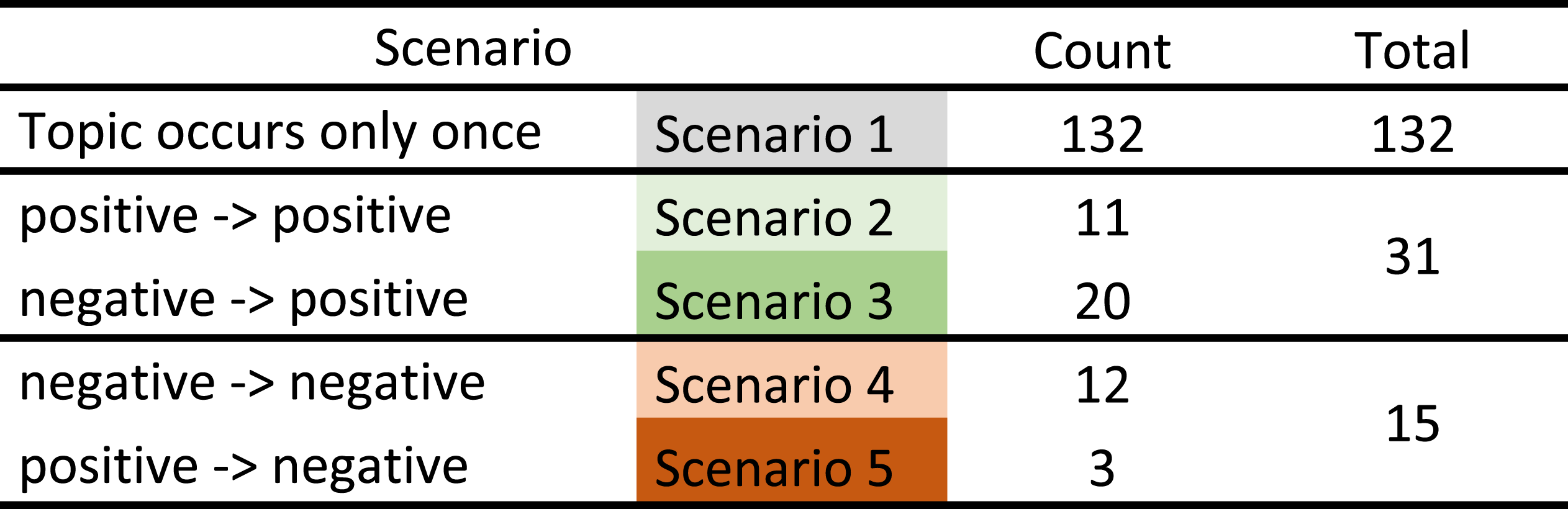

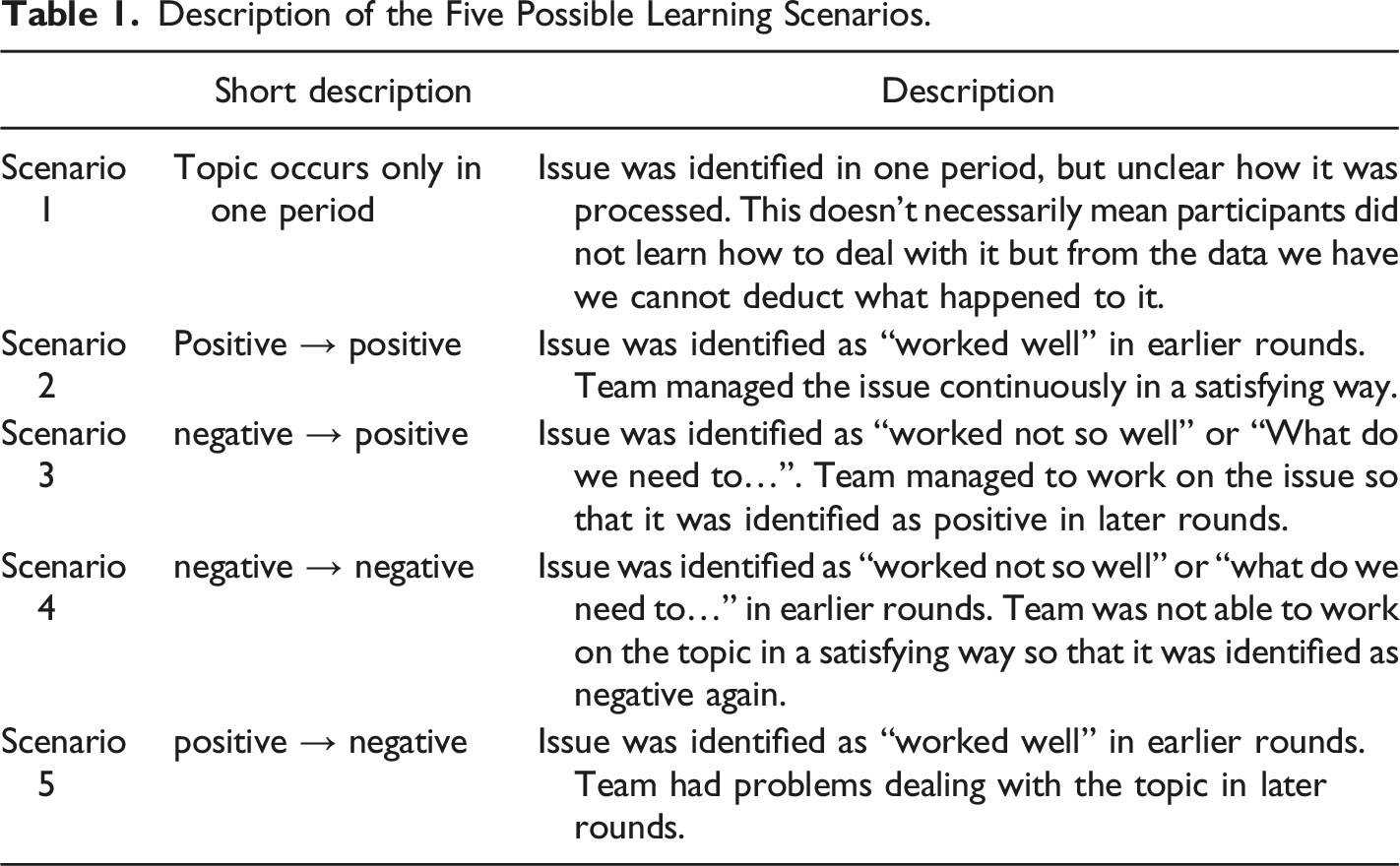

Description of the Five Possible Learning Scenarios.

Using the described scenarios, we see which topics were discussed at which point in the seminar and how the topics developed during the seminar. For example, scenario 3 (negative -> positive) shows that teams identified an issue as relevant but had not yet managed to work on it in a satisfying way (“went not so well” or “what do we need…”). In later rounds the topic is identified as “worked well”, indicating that teams found solutions to manage the issue properly. Scenario 3 in particular can be interpreted as an indication of learning: by working on the issue, teams turned it from negative to positive, indicating that students learned how to deal with the problem.
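The scenario logic can be expressed as a small classification rule. The sketch below is an assumption-laden reading of the text, since the scenario table itself is only available as a figure: scenario 1 is a topic mentioned once, scenario 2 a topic rated positive repeatedly, scenario 3 negative then positive, scenario 4 negative repeatedly, and scenario 5 positive then negative. Question 1 counts as positive and questions 2 and 3 as negative, following the example above. Deciding mixed sequences by first and last mention only is a simplification introduced here, and all names and sample topics are hypothetical.

```python
from collections import Counter

POSITIVE, NEGATIVE = "+", "-"

# Reflection question number -> valence of a topic noted under that question.
QUESTION_VALENCE = {1: POSITIVE, 2: NEGATIVE, 3: NEGATIVE}


def classify_scenario(valences):
    """Map a topic's chronological valences to scenario 1-5.

    Simplification: topics mentioned more than once are classified by their
    first and last mention only.
    """
    if not valences:
        raise ValueError("a topic needs at least one mention")
    if len(valences) == 1:
        return 1
    first, last = valences[0], valences[-1]
    if first == POSITIVE and last == POSITIVE:
        return 2
    if first == NEGATIVE and last == POSITIVE:
        return 3
    if first == NEGATIVE and last == NEGATIVE:
        return 4
    return 5  # positive first, negative last


def scenario_counts(topics):
    """topics: {topic: [question numbers in chronological order of mention]}."""
    return Counter(
        classify_scenario([QUESTION_VALENCE[q] for q in questions])
        for questions in topics.values()
    )


# Hypothetical team notes; the question numbers are illustrative assumptions.
team_topics = {
    "sales volume estimate": [2, 2, 1],
    "share price": [1, 2],
    "machine investment": [1],
    "staff utilization": [2, 3],
}
counts = scenario_counts(team_topics)
```

Applying the same rule to every treatment team and summing the counters gives the kind of overview used for the round-to-round analysis.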

Case study and context description

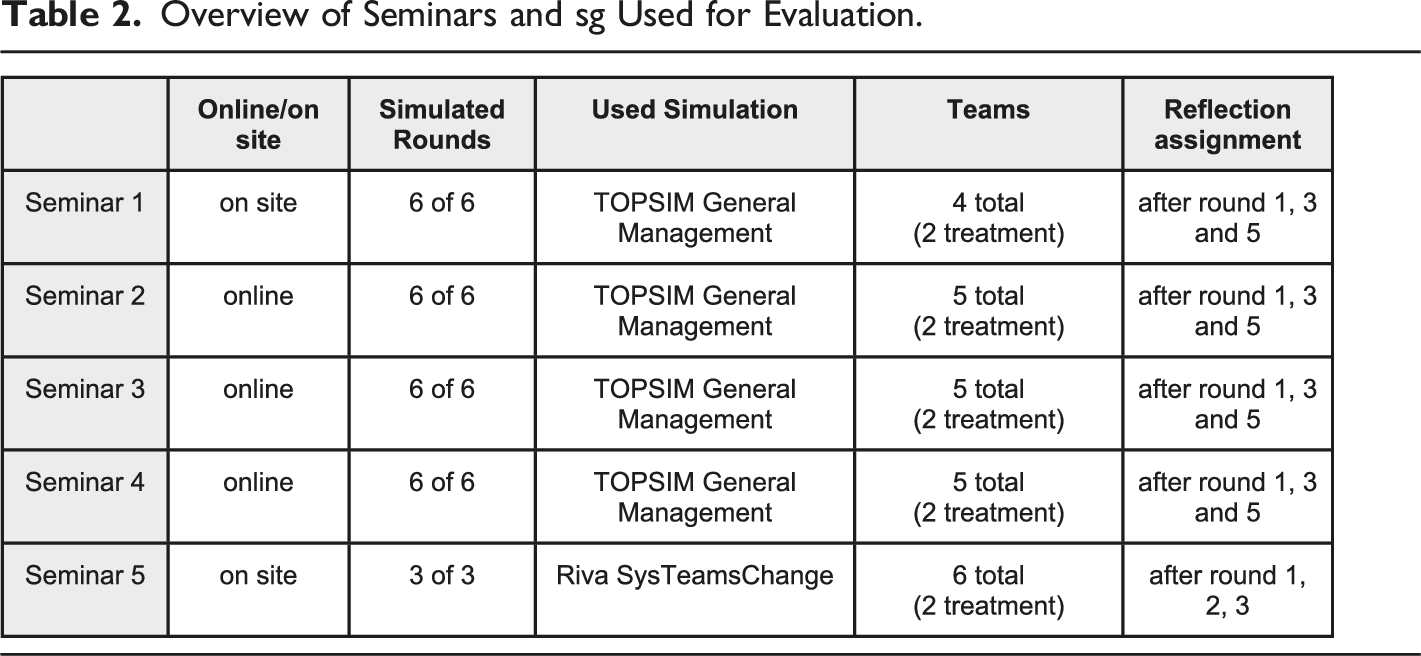

Overview of Seminars and sg Used for Evaluation.

Results/findings from both games

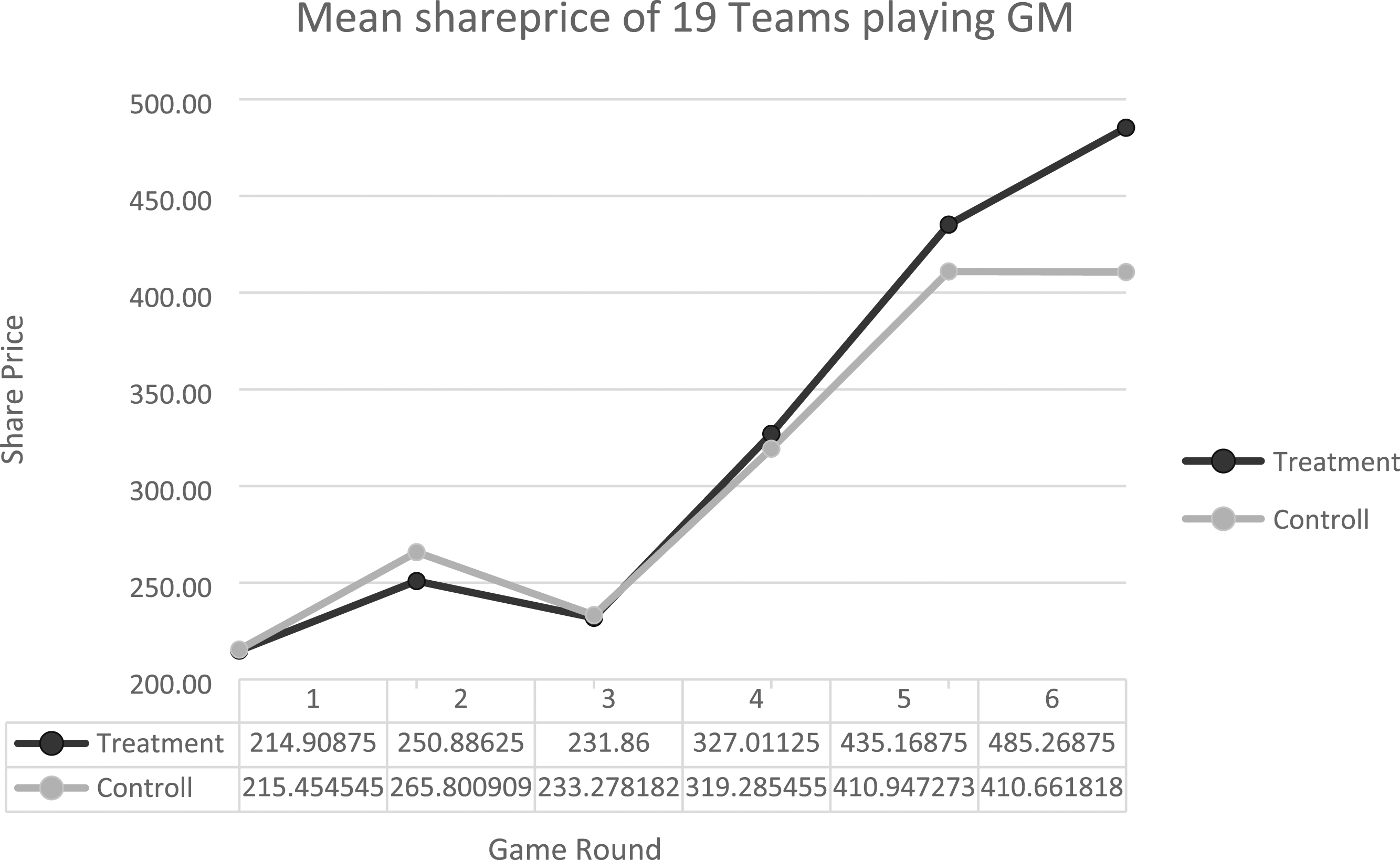

In this chapter the results of our research are described. In the text we follow the structure of the RQ raised above.

Game performance of treatment and control teams for GM.

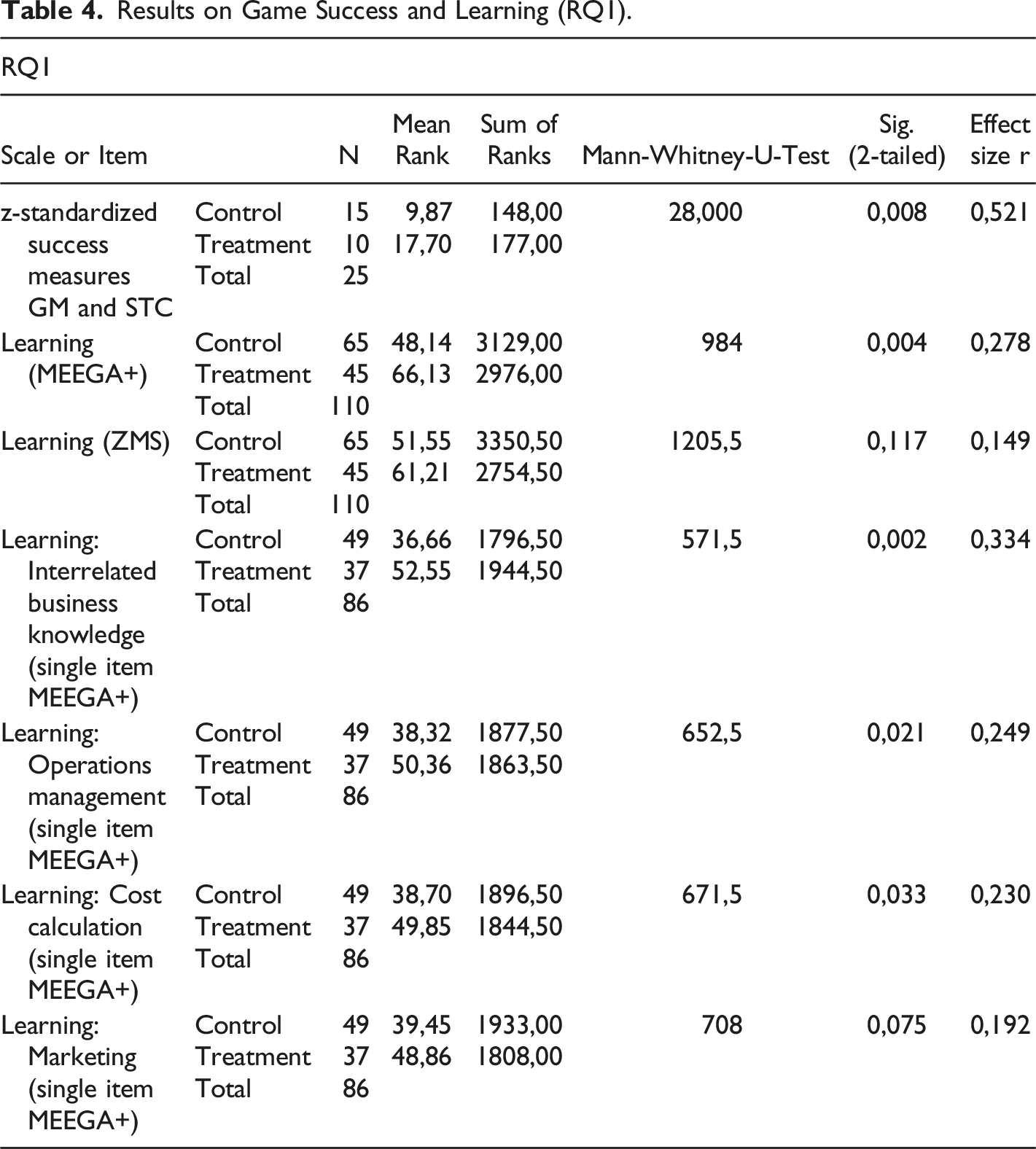

RQ1: Effects on performance in the game and perceived learning

Comparing treatment teams and control teams for GM (n=19), we see basically no effects in the first rounds. Starting from round 5, treatment teams have better game results (Figure 2). Results in round six show a mean share price of 410 for non-treatment teams and a mean share price of 485 for treatment teams.

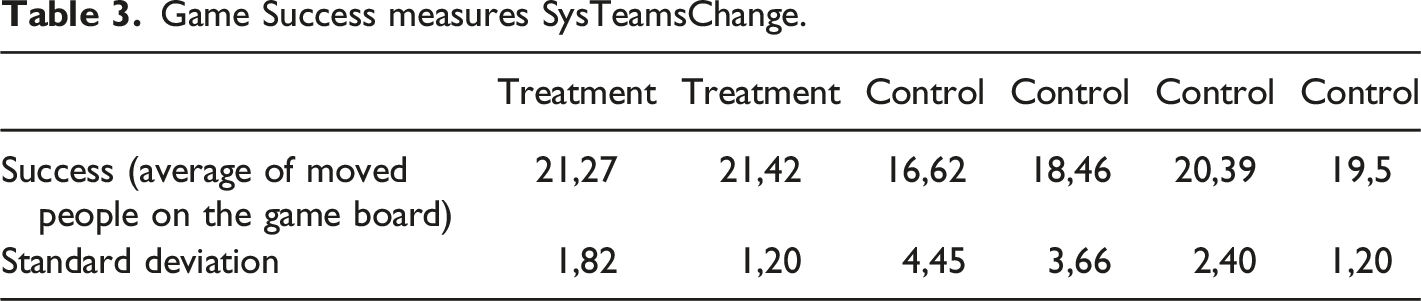

Game Success measures SysTeamsChange.

Results on Game Success and Learning (RQ1).

Looking at students' perceived learning variables, a highly significant result is found on the MEEGA+ learning scale but not on the ZMS learning scale (Table 4). In detail, three out of the four MEEGA+ learning single items show significant results: treatment students assessed their learning better regarding cost calculation, operations management and interrelated business knowledge (Table 4). The conflicting results between learning on MEEGA+ and the ZMS inventory may be due to the fact that the ZMS scale is formulated very generally and consists of 6 items measuring learning and satisfaction on a general level (e.g. “I have learned a lot in the simulation game” or “How satisfied are you with the course overall?”), whereas the MEEGA+ variables are formulated very specifically and ask students to assess their learning with regard to a very specific topic. From the results it can be deduced that treatment groups and non-treatment groups did not assess the sg differently concerning general learning and satisfaction (ZMS inventory), but did differ when they were asked about detailed learning goals.

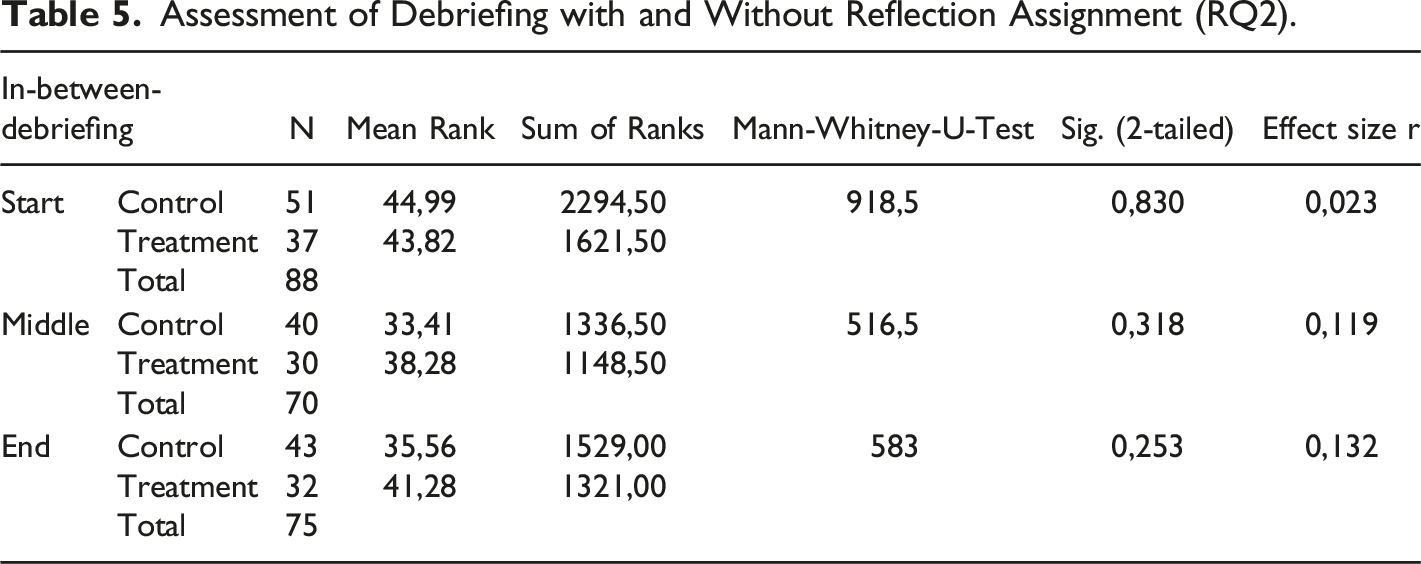

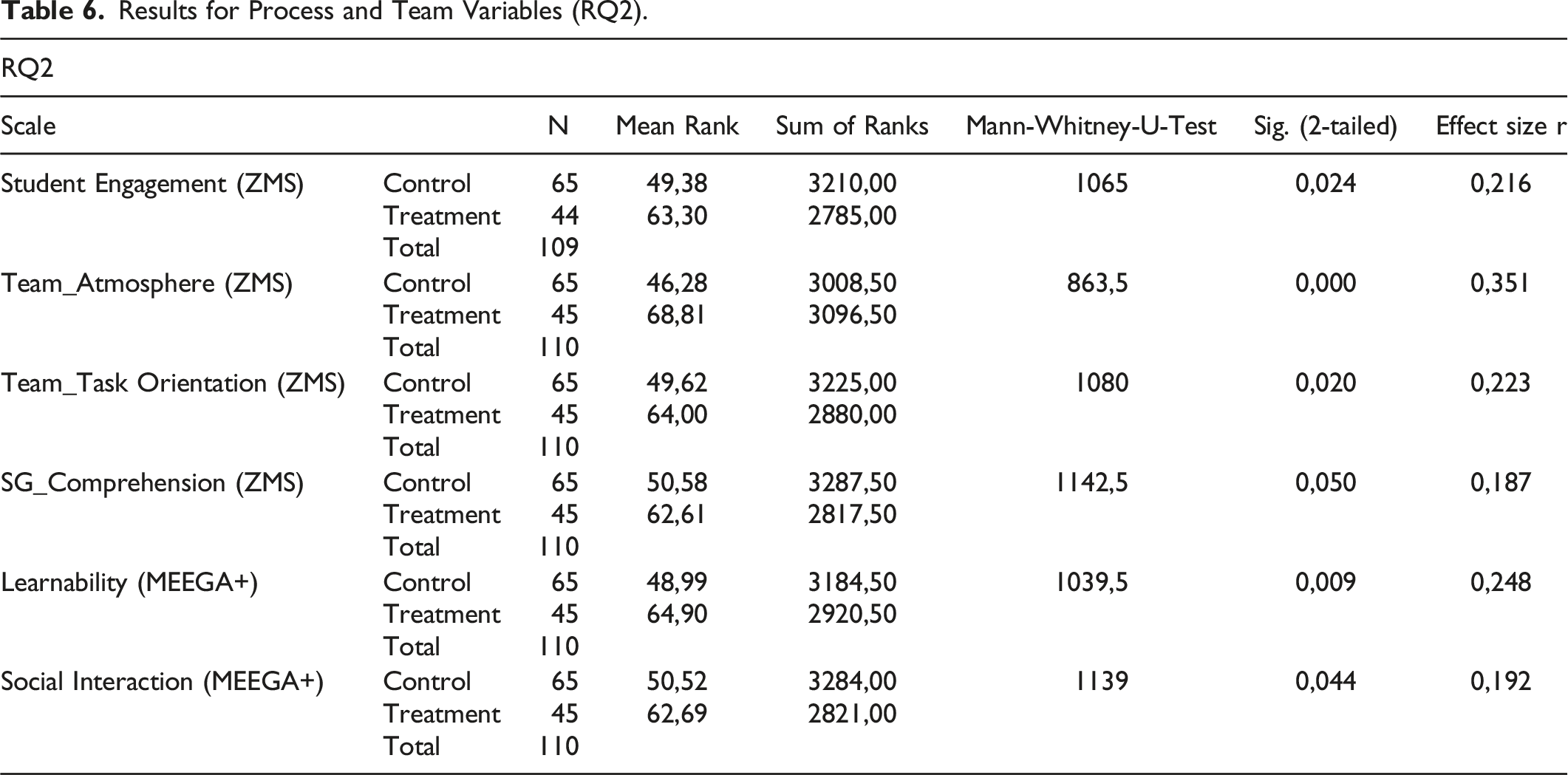

RQ2: Effects on process and team variables

Assessment of Debriefing with and Without Reflection Assignment (RQ2).

Results for Process and Team Variables (RQ2).

Looking at the MEEGA+ scales, the results are in line with what is seen in the ZMS inventory. Among others, we find significant differences between treatment and non-treatment students on the learnability scale and on the social interaction scale. Learnability here means learning how to use and play the game (Petri, Gresse von Wangenheim, & Borgatto, 2018) and so overlaps with the simulation variables from the ZMS inventory that ask about comprehension of the game. The structured reflection assignment likely leads to an improved understanding of the sg. In the discussion of our qualitative results we will go deeper into this explanation. The significant difference on the social interaction scale confirms the results from the ZMS inventory regarding teamwork. Both inventories suggest that the structured reflection assignments lead to an improved social experience as a group.

We conclude that the intervention did not influence the perceived quality of the round-by-round in-between-debriefing. However, it has small to medium effects in three areas: treatment students are more engaged, they rate themselves as having a better understanding of the sg, and they report an improved experience as a team. These three differences may contribute to the better results and the higher assessment of learning that were presented for RQ1.

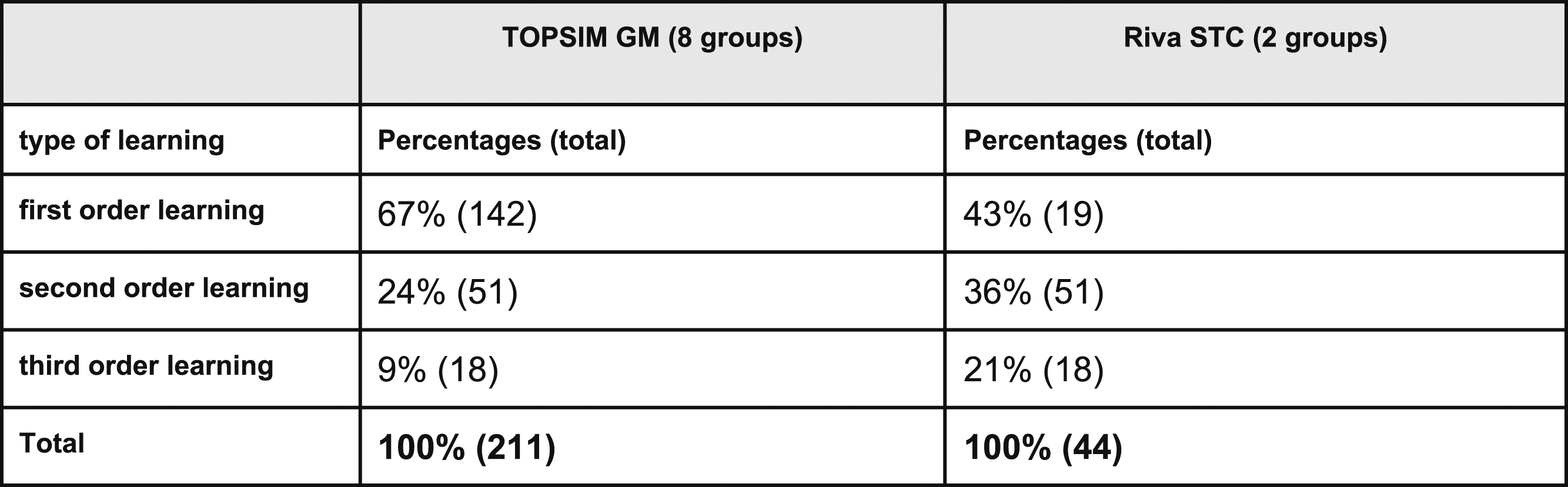

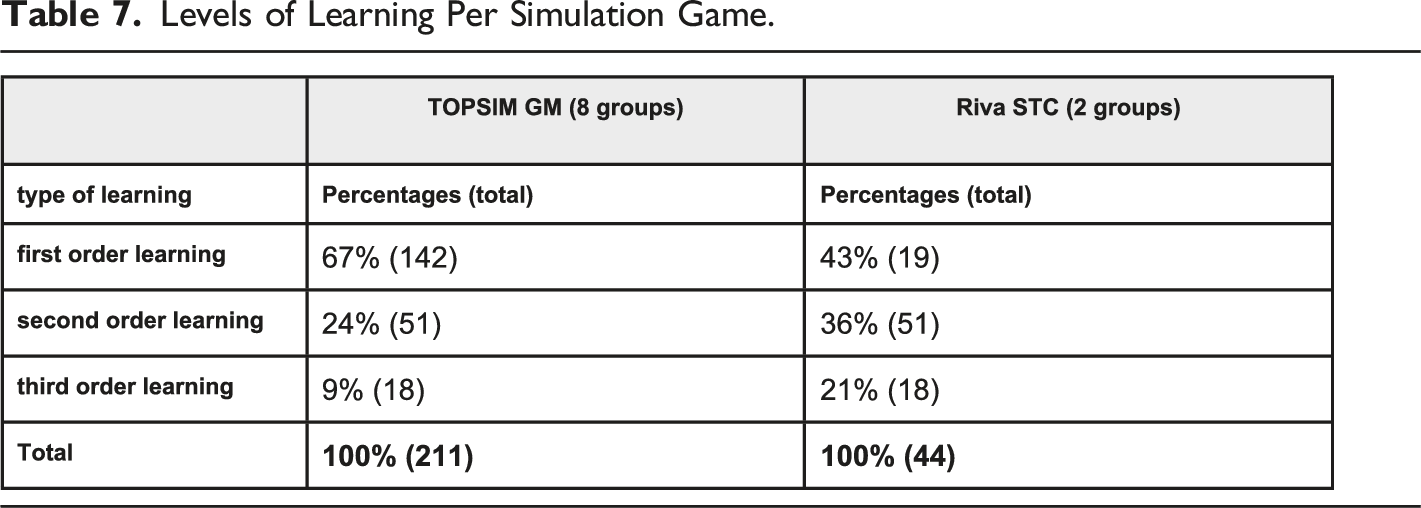

RQ3: What levels of learning/reflection do we see in the notes on the flips?

In the following we present the results of our qualitative analysis. From the answers gathered on the reflection questions we can deduce that students discussed a wide variety of topics. Potential learnings are diverse and evolve during gameplay over the different game rounds. The teams made clear points on what they thought went well, what they considered not to be going so well and how they thought they could improve this.

It is regularly visible that goals were achieved and that new goals or topics were set every round, or that previous goals were further specified. Both are indicators of learning, because it is often visible that a goal set earlier is achieved in the next round or otherwise on the third and final flipchart. A further specification of a goal can be seen as a deepening of insight (Ries, Schaap, van Loon, Kral, & Meijer, 2022). Sometimes it is not visible that a goal has been achieved because no reference is made to it; however, we do find that participants set new goals/topics that could not have been set if they had not solved the other issues. The learning aim of the simulation games used in this study is to manage, as a team, a large variety of indicators via sharing of information, planning and interpretation of results. Therefore, it makes sense that the teams continuously add new topics, especially given that the complexity of the game increases with more information and indicators as the game rounds proceed.

Levels of Learning Per Simulation Game.

For the change management game, first order learning accounts for the largest share at 43% (Table 7), but not for a clear majority. This can be explained by the goal of this game, which placed less emphasis on applying pre-determined learned norms and rules, because the players had to find out for themselves how to influence the game and develop an effective approach. Therefore, the process component (second order learning) logically had a more prominent role in this game and accounted for 36% of the cases. Third order learning accounted for the remaining 21%, which makes sense because, with the stronger emphasis on the process, the participants also needed to reflect more on their role in the process and their added value.

A remark has to be made on the sample size, which was considerably smaller for STC than for the general management game (eight teams in GM, two teams in STC). However, facilitators experienced with this game confirmed that the sessions were representative of other sessions.

What does this mean in relation to the goal of the game? In GM the learning goal for the students was to run a profitable, sustainable company. In STC the students' learning goal was to move organizational members through the phases of organizational change. The type of learning goal, combined with the characteristics of the sgs and the facilitation interventions, has implications for the type of learning taking place, but how these actually relate and what the exact implications are is unknown. We state this cautiously because learning as intended does not necessarily deliver learning as happened (Leigh, 2003; Wijse-van Heeswijk et al., in press).

For both games, first order statements are the most frequent on the reflection notes (e.g., “investment in new machines” or “not reached our sales target”). This shows that teams are involved in understanding the content of the simulations and finding good decisions. It is in line with the ideas of the games: they are designed and used to apply knowledge and experience the interdependencies of systems. The considerable number of second and even third order statements emphasizes that students learning with simulation games are active participants in simulated social organizations. They are not only confronted with content but must also make organizational decisions. In the third GM seminar one team reflected on the exchange of information within the team. This second order statement can be seen as an example of the organizational decisions teams had to make. It can be assumed that decisions on different levels (first, second, third order) interact with each other. How the exchange of information within a team is organized may have a great impact on the facts that are available for decision making. Not having enough facts for decision making (first order) could lead to the idea of changing the organization within the team (second order).

RQ4: What learning process do we see in the teams from round to round?

The reflection-notes on the flips document the group discussion in a chronological manner. So, the notes provide insight into a process of discussion and learning the treatment groups went through. As an example, two out of ten group reflections are elaborated in detail here. For these two examples all data can be found in the appendix (more data are available with the researchers). Next to the examples a summary containing data from all groups is given.

GM1 Team A (see appendix): For this group most of the topics occur only once (24 times, scenario 1). It remains unclear why the group lost track of these topics. Possibly students learned immediately how to deal with a topic or found it irrelevant in later rounds; for scenario 1 this cannot be determined. We count three issues that were discussed as problematic in reflection one and two (“Calculation of cost of goods sold for copy budget” (1), “Staff utilization too high” (2), “Sales volume estimated too high” (3)) but were discussed as “went well” in reflection 3, which is labeled as scenario 3. In reflection one and two the topic of appropriate staff utilization was discussed as problematic (scenario 4). Only one topic was discussed as satisfying in the beginning (“good share price”) but as problematic in later rounds, and is therefore labeled as scenario 5.

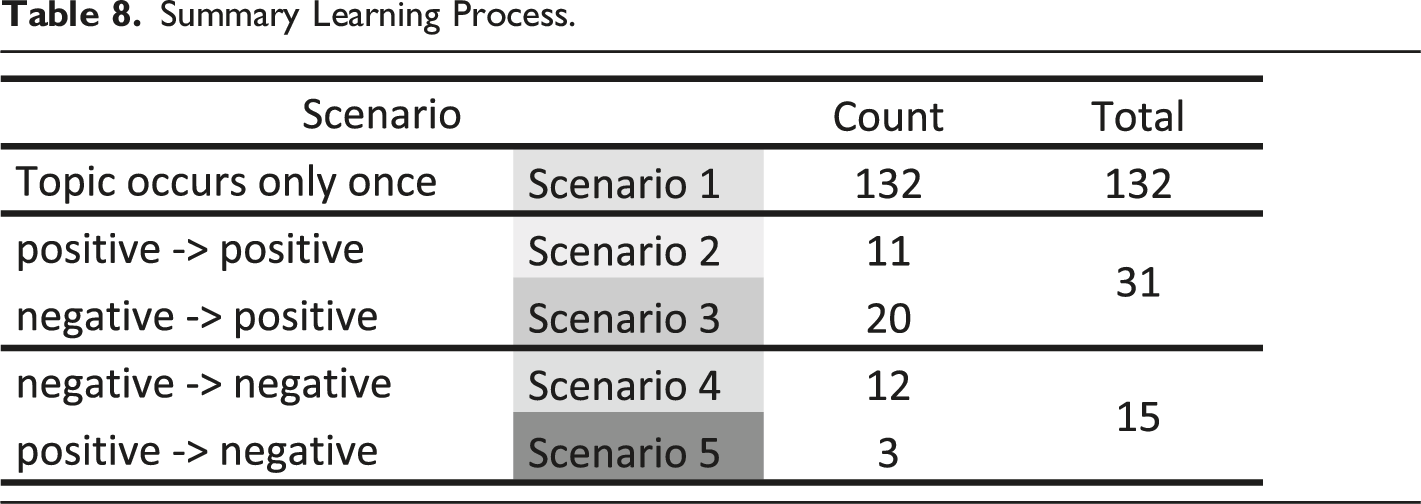

STC Team blue (see appendix): For this team we see seven examples of scenario 1. Scenarios 2 to 4 are found once each and no example of scenario 5 is seen. After round one the team stated that “a plan” was needed. Indeed, it is essential for the simulation to bring the 42 action cards into a structured and continuous order. After identifying their lack of a plan, the team evidently found a functional pattern (a plan), because it was mentioned as positive in all later reflections. After round one the team identified a major problem and was able to work on it in the next rounds.

These two examples lead to an inductive hypothesis explaining why we see a better game performance for treatment groups and higher values for self-reported learning: the structured reflection assignment helps students to identify and formulate relevant problems to work on. Formulating issues may reduce complexity and help teams to focus on relevant problems. This hypothesis is confirmed by summarizing the qualitative data. As already seen in the described examples, the first scenario is found most often across all ten groups (Table 5). Given the open reflection questions, it is logical to see topics that occur only once or are only relevant for one round. Learners proceed in learning, and things that have been learned (or do not matter anymore) can disappear from the reflection list. In 20 instances we see teams identifying an issue as problematic but finding ways to deal with it positively in later rounds (scenario three). Identifying an issue as positive in more than one round (scenario two) is found 11 times. Altogether 15 times we see teams naming topics as problematic in more than one reflection round, indicating that teams were challenged by the topic and had not yet found satisfying solutions (scenario four). In only three cases we see issues “falling” from positive in earlier rounds to problematic in later rounds.

Looking only at the four scenarios (two to five) that require a topic to be mentioned in at least two reflections, we can state that scenario three appears most frequently (Table 8 following). This confirms the inductive hypothesis that the consecutive reflection assignment helps groups to identify relevant topics and to work on them successfully.
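The relative weight of scenarios two to five can be checked with the counts reported in the text (11, 20, 15 and 3 instances). The sketch below only restates that arithmetic; the variable names are illustrative, and scenario one is left out because its total is not given in the running text.

```python
# Counts of repeated-topic scenarios as reported in the text.
scenario_counts = {
    2: 11,  # positive in more than one round
    3: 20,  # problematic first, handled positively later
    4: 15,  # problematic in more than one round
    5: 3,   # positive first, problematic later
}

total = sum(scenario_counts.values())
shares = {scenario: count / total for scenario, count in scenario_counts.items()}
most_frequent = max(scenario_counts, key=scenario_counts.get)

for scenario, share in sorted(shares.items()):
    print(f"scenario {scenario}: {scenario_counts[scenario]} of {total} ({share:.1%})")
```

Of the 49 topics mentioned in at least two reflections, scenario three accounts for 20, or roughly 41 percent.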

Summary Learning Process.

Conclusion and Discussions

With a quasi-experimental research design, the role of pre-structured consecutive reflection questions for learning with sg was researched. In five sg-based seminars, treatment groups participated in three reflection interventions (start, middle, end) with three pre-structured open questions, while non-treatment groups did not. Treatment groups performed better in the games and rated their self-reported learning higher when asked about concrete learning goals (RQ1). Differences between treatment and non-treatment students are also seen in the assessment of the gameplay process (RQ2). Effect sizes for sg results and learning (RQ1) and for engagement, teamwork and sg comprehension (RQ2) were small to medium. Given the limited scope of the intervention, this is still a remarkable result: in block seminars lasting two to three days, three reflection sessions of fifteen minutes each made a difference. Treatment students see themselves as more engaged in the game and have higher values for teamwork (ZMS inventory) and social interaction (MEEGA+). The learnability (MEEGA+) and the comprehension (ZMS inventory) of the game were also rated higher by treatment students, indicating that they had a better understanding of the sgs. Analyzing the reflection notes of treatment groups with qualitative methods, we mainly find examples of first-order learning and a tendency towards more second- and third-order learning in the change management simulation. It can be assumed that the simulations address different levels of learning (RQ3). Looking at the reflection notes in chronological order, we find that reflection groups identified relevant problems and managed them successfully (RQ4).

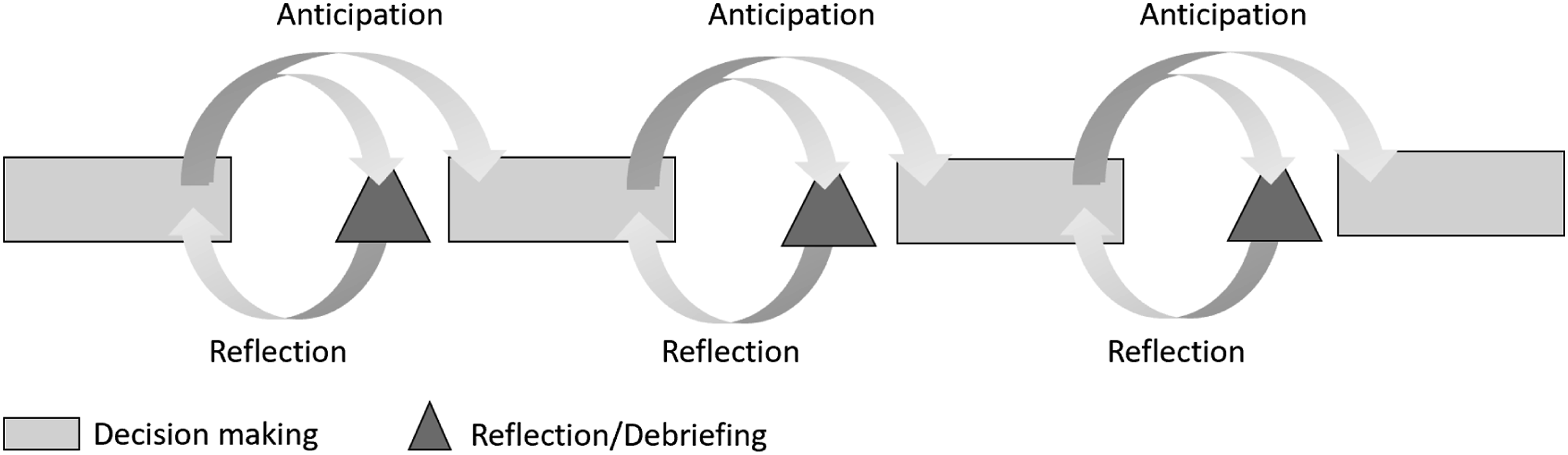

These results provide a congruent picture. The reflection intervention required group discussion, and the results show that treatment students rated their group experience and social interaction higher than non-treatment students. Treatment students rated themselves as having a slightly better understanding of the simulation games, which is in line with better self-reported learning and even better success values by the end of the simulation games. Structured group discussion with open reflection questions (What went well?, What went not so well? and What do we need to improve?) may have led to a better understanding of the problems relevant to the simulation, which in turn leads to better results. In our qualitative analysis we see further indications explaining why treatment teams have better results and might have profited more from the seminars. The repeated reflection assignment helped teams to identify relevant problems, and in many cases the teams were able to work on them properly (RQ4). For GM we reported round-by-round results (for STC round-by-round results are not available), and it is apparent that treatment and non-treatment teams diverged only in the last rounds. This matches our qualitative results.

The reflection assignment serves as a circular learning mechanism. It takes repetition: identifying issues or goals, working on them, reflecting on them again and then restarting the circle. This is in line with, and confirms, the theory on experiential learning. Experience and reflection complement each other in a circular relationship (Hilzensauer, 2008; Kolb & Kolb, 2009). Interrupting the gameplay with reflection assignments gives students the chance to look back on their decision making and to identify relevant issues, as shown in Graphic 1. Through this process treatment teams gained focus in a complex and confusing environment. The relevant issues found are not only reflected on but also anticipated for the next round of decision making. This leads to clearer ideas for action in the next rounds and provides players with agency. We think it is mainly this permanent circle of reflection and anticipation that helped treatment teams to understand the games better and therefore to achieve better results.

Process of decision making and reflection.

The overall conclusion of this study is that adding structured reflection questions to (in-between) debriefings during sg sessions adds value to learning.

Limits and further recommendations

Even though the results are congruent and plausible, they should be confirmed with further research and more participants and groups. The students participating in this study were all business students from one university in Stuttgart, Germany. To confirm and generalize these results, more research is needed in different contexts, cultures and professions.

We were able to use two different simulation games in this study. Further research should evaluate whether the presented results are confirmed when the reflection assignment is applied to other simulation games.

The discussion notes used for qualitative analysis represent only the results of the discussions and give only a narrow insight into the discussions themselves. To understand the reflection process better, further qualitative data on group discussions and gameplay (voice, video) could be collected and analyzed. In particular, the reflection notes of one group were almost the same from round to round, and the researchers were not sure how seriously the group took the discussion.

Supplemental Material

Supplemental Material for The Role of Reflection in Learning with Simulation Games – A Multi-Method Quasi Experimental Research by Tobias Alf, Marieke de Wijse, and Friedrich Trautwein in Simulation & Gaming.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
