Abstract

Background

Clinical simulations are complex educational interventions characterized by several design features, which have the potential to influence cognitive load, that is, the mental effort required to assimilate new information and learn. This systematic review and meta-analysis explored the associations between simulation design features and cognitive load in novice healthcare professionals.

Methods

Based on the Joanna Briggs Institute methodology, a search was performed in five databases for quantitative studies in which the cognitive load of novice healthcare professionals was measured during or after a simulation activity. Each clinical simulation was coded to describe its design features. Univariate and multivariate mixed model analyses were performed to explore the associations between simulation design features and cognitive load.

Results

From 962 unique records, 45 studies were included and 27 provided enough data on subjective cognitive load (i.e., Paas Scale and NASA-Task Load Index scores) to be meta-analyzed. In the multivariate analysis for the NASA-Task Load Index scores, each repetition of a simulation using the same scenario resulted in a linear decrease in cognitive load. In contrast, technology-based instruction before or during a simulation activity was associated with higher cognitive load. In the univariate analyses, other features such as feedback and instructor presence were also statistically associated with cognitive load. Regarding the univariate analyses of the Paas Scale scores, simulator type, briefing, debriefing, and repetitive practice were statistically associated with cognitive load.

Conclusion

This is the first meta-analysis exploring the relationship between clinical simulation design features and novice healthcare professionals’ cognitive load. Although the findings show that several design features can potentially increase or decrease cognitive load, several gaps and inconsistencies in the current literature make it difficult to appreciate how such reciprocity influences novice healthcare professionals’ learning. These limitations are discussed and avenues for educators and further research are suggested.

Background

Clinical simulations are often used in healthcare education to replicate real-world patient scenarios in an environment safe for education and experimentation purposes (Cook et al., 2013). According to simulation standards, a clinical simulation should be composed of three phases: (1) briefing, (2) simulation, and (3) debriefing (INACSL Standards Committee, Watts, et al., 2021). Specifically, the briefing is the time allocated before the simulation to prepare participants for the simulated clinical experience (INACSL Standards Committee, McDermott, et al., 2021). It can be used to identify participants’ learning expectations, build integrity, trust, and respect among them, or provide orientation to the simulation environment. The simulation phase is when participants undergo the simulated clinical experience according to specific learning objectives (INACSL Standards Committee, Watts, et al., 2021). During this phase, a facilitator (generally a person skilled and trained in simulation) guides the simulated experience. The facilitator must adjust the course of the simulation to the learners and provide appropriate feedback during or after the simulation (INACSL Standards Committee, Watts, et al., 2021). The debriefing usually takes place after the simulation and is when professionals come together to reflect on and discuss their experience for learning and improvement (INACSL Standards Committee, Decker, et al., 2021). Although widely used in healthcare professional education, clinical simulations remain complex interventions composed of several design features, that is, the active ingredients that should ensure the simulation’s effectiveness depending on the context (Cook et al., 2013). Indeed, each simulated clinical experience can also vary in its degree of realism (high, medium, low fidelity), complexity (easy, moderate, hard), composition (individual, group), duration, and many other features. 
However, the impact of these different design features on the cognitive learning process of healthcare professionals is still poorly understood.

Embedded in neurocognitive principles, recent neuroimaging studies have shown that clinical case scenarios with various complexity (easy/hard) elicited differential activation of regions of the human prefrontal cortex associated with working memory depending on the level of expertise of healthcare professionals, more so for healthcare professionals in training than experts (Hruska et al., 2016). This suggests that there could be an important relationship between clinical simulation design features and working memory, particularly for novice healthcare professionals (i.e., healthcare professionals in training or their early stage of clinical practice; less than five years). Thus, this idea can be further refined through the lens of the cognitive load (CL) theory (Paas & Van Merriënboer, 1994). CL theory is based on the understanding of human “cognitive architecture” or how we process information (Paas & Van Merriënboer, 1994). CL theory proposes that individuals have limited mental resources to deal with and integrate new information into long-term memory (Paas & Van Merriënboer, 1994). Specifically, there are two main types of CL: intrinsic load and extraneous load. In the context of clinical simulations, intrinsic load deals with elements of the simulations that are relevant to the learning task at hand, such as the learning objectives and theoretical content. Extraneous load is dedicated to the management of information that has the potential to distract healthcare professionals involved in the clinical simulations, such as noise or unclear directions (Leppink et al., 2014). Both loads are additive and may lead to cognitive overload or underload if the educational activity is not properly adapted to healthcare professionals’ level of expertise and information processing capacity.

The fundamental tenets of CL theory suggest that novice healthcare professionals cannot draw on as much knowledge and experience as experts when faced with the same clinical situation since they have not experienced the situation enough times to have stored the information in their long-term memory (Fraser et al., 2015; Szulewski et al., 2021). In addition, novices do not have the experts’ ability to disregard redundant or irrelevant stimuli from the environment and, therefore, could be more easily distracted in a simulation activity that contains elements that are superfluous to the learning objectives, for example, an infusion pump that is constantly ringing (Szulewski et al., 2021). Some studies also suggest that clinical simulations can generate emotions, stress, and uncertainty among novice professionals, especially students, which would increase CL (mainly extraneous load) and interfere with their learning processes (Fraser & McLaughlin, 2019; Schlairet et al., 2015; Szulewski et al., 2021). Accordingly, studies have shown that keeping CL low at the beginning of a simulation training curriculum and increasing it steadily, by providing more support to novice healthcare professionals at first and gradually withdrawing it (the concept of scaffolding), can be beneficial for learning (Fraser et al., 2015; Josephsen, 2015). However, this is complex, as the different simulation design features that interact with one another can each influence novice healthcare professionals’ CL to a greater or lesser extent. In fact, we have little information on the association between simulation design features and novice healthcare professionals’ CL, which means that we know little about the levers that can be used to adjust the learning task and meet this subgroup’s specific cognitive needs. 
We believe this exercise is essential for novice professionals as they are increasingly trained in their academic programs and early professional careers through clinical simulations (Aebersold, 2018; Datta et al., 2012).

To date, two literature reviews have synthesized the evidence on healthcare professionals’ CL in clinical simulations, and both identified several simulation design features that appear to increase or decrease CL (Rogers & Franklin, 2021; Sewell et al., 2019). However, results were presented narratively and included healthcare professionals from all levels of expertise, increasing the risk of bias in their interpretation. Moreover, the reviews combined the results of primary studies using subjective (e.g., self-reported rating scales) and objective (e.g., pupil fixation, heart rate) measures. Although these are all valid measures of CL, it can be argued that they are not equivalent conceptually. Indeed, subjective measures reflect CL as perceived by the learner at a given point in time (usually after the clinical simulation). In contrast, objective measures are based on observable behaviors or physiological parameters typically measured throughout the simulation (Brünken et al., 2010).

The present study aimed to perform the first meta-analysis of the relationship between clinical simulation design features and novice healthcare professionals’ CL while considering types of CL measures. This aim responds to the need to better understand the complex and not entirely clear relationships between simulation design features and CL (Fraser et al., 2015). We believe that this is an essential first step to better understanding the mediating effect of CL on learning or performance in simulation. This could help identify what influences novice healthcare professionals’ cognitive processing so that it can be further studied.

Methods

This systematic review was based on the Joanna Briggs Institute (JBI) methodology for systematic reviews of effectiveness (Tufanaru et al., 2017) and is reported according to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA; Page et al., 2021). The review protocol was published (Lapierre et al., 2021) and registered prospectively in PROSPERO (CRD42020187723). Here, we describe changes made since the publication of the protocol and present an abridged version of the method.

Summary of amendments made to the original protocol since its publication

Initially, we aimed to evaluate the effect of clinical simulations and their design features on CL in healthcare professionals and students. Due to a lack of studies comparing simulations with other interventions or comparing the design features of similar simulations, we could not answer this question. For this reason, we decided to focus on the associations between simulation design features and the CL of healthcare professionals. In addition, since we found that most of the studies in this area were conducted with novice healthcare professionals and based on our understanding of CL theory, we decided to concentrate on this specific population. The main research question that guided the present review and meta-analytic work was as follows: what are the associations between simulation design features and novice healthcare professionals’ CL?

Search strategy and data sources

The search strategy was based on the Preferred Reporting Items for Systematic reviews and Meta-Analyses literature search extension (PRISMA-S) (Rethlefsen et al., 2021). We searched five databases in May 2020 for studies published in English or French in peer-reviewed journals: Cumulative Index to Nursing and Allied Health Literature (CINAHL; EBSCOhost), Excerpta Medica dataBASE (EMBASE; Ovid), Education Resources Information Center (ERIC; ProQuest), MEDLINE (Ovid), PsycINFO (APA PsycNET), and Web of Science—Science Citation Index (SCI) and Social Sciences Citation Index (SSCI; Institute for Scientific Information [ISI]—Thomson Scientific). Our search strategy combined thesaurus terms and text words related to 1) healthcare professionals and students; 2) simulation activities; and 3) CL (see Table S1). We also scanned the reference lists of eligible studies to identify additional relevant studies.

Eligibility criteria

Studies that met the criteria listed below were eligible for inclusion:

Population: Novice healthcare professionals from any discipline (e.g., medicine, nursing, paramedic, pharmacy). More specifically, we included all novice professionals currently in training and not graduated, that is, students and medical residents, and also graduated professionals with less than five years of experience. Studies involving students/residents/novice professionals and more expert clinicians could be included only if results were stratified according to participants’ training status. In addition, novice healthcare professionals had to engage actively in simulation activities for their own learning (i.e., not as observers, actors, or educators).

Intervention: Any non-digital simulation modality used to reproduce a patient encounter (e.g., procedural simulation with part-task trainers; simulated clinical immersion with low- to high-fidelity manikins; simulated or standardized patients) (Chiniara et al., 2013).

Comparison: Any other educational intervention or simulation activity, or no comparator.

Outcome: Self-reported or objective CL measures during or after a simulation activity. Self-reported questionnaires were the primary outcome of this review, as they represent the most common method to measure CL in clinical simulation (Naismith & Cavalcanti, 2015). Objective indicators of CL were also considered, including 1) secondary task performance (e.g., reaction to a vibration stimulus); 2) physiological indices (e.g., heart, eye, or brain activity); and 3) observer ratings (e.g., changes in behaviors or speech).

Design: Experimental, quasi-experimental, or pre-experimental design (i.e., randomized controlled trials, non-randomized controlled trials, before and after studies, interrupted time-series studies, post-test only studies).

Context: Any healthcare or educational setting.

Exclusion criteria

Digital simulations (e.g., virtual simulation or computer-based simulation) were excluded to reduce heterogeneity, as the CL of healthcare professionals in a digital environment may be affected by specific digital factors (e.g., computer literacy levels, digital device features).

Study selection

We worked independently and in duplicate to screen all titles and abstracts using Covidence (Veritas Health Innovation, Melbourne, Australia). We resolved disagreements by consensus or with the involvement of a third reviewer. We then assessed full texts for eligibility using the same method.

Data extraction

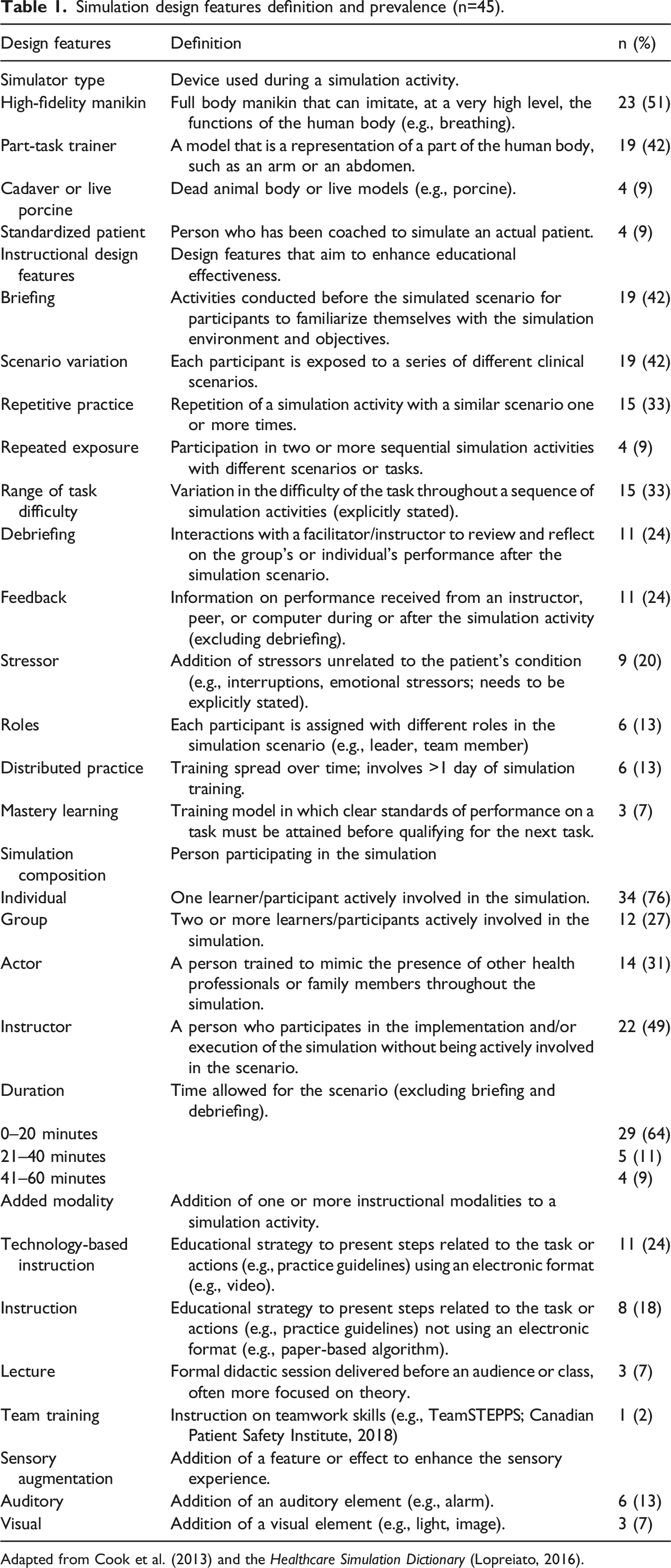

Simulation design features definition and prevalence (n=45).

Adapted from Cook et al. (2013) and the Healthcare Simulation Dictionary (Lopreiato, 2016).

To gain feedback on our codification, we e-mailed all corresponding authors of included studies on two occasions to ask if it was representative of what occurred in their simulation activities. Furthermore, we asked them to provide missing outcome data, if applicable. In total, 14/45 authors (31%) responded.

Quality assessment

Two reviewers assessed study quality using standardized critical appraisal instruments from the JBI (Tufanaru et al., 2017). Based on a previous review (Roberts & Cooper, 2019), quality scores of 10/13 or above were considered high, 7 to 9 as moderate, and anything lower than 7 as low for randomized controlled trials; whereas quality scores of 8 or 9/9 were considered as high, 6 or 7 as moderate, and any scores lower than 6 as low for quasi-experimental studies.
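The cut-offs above can be written as a small lookup. The following Python sketch is illustrative only: the function name `classify_quality` and the design labels `"rct"` and `"quasi"` are assumptions introduced here and are not part of the JBI instruments.

```python
def classify_quality(score: int, design: str) -> str:
    """Map a JBI appraisal score to a quality band using the cut-offs in the text.

    design is a hypothetical label: "rct" (scored out of 13)
    or "quasi" (quasi-experimental, scored out of 9).
    """
    # (minimum for "high", minimum for "moderate", maximum possible score)
    cutoffs = {"rct": (10, 7, 13), "quasi": (8, 6, 9)}
    if design not in cutoffs:
        raise ValueError(f"unknown design: {design!r}")
    high_min, moderate_min, max_score = cutoffs[design]
    if not 0 <= score <= max_score:
        raise ValueError(f"score must be between 0 and {max_score}")
    if score >= high_min:
        return "high"
    if score >= moderate_min:
        return "moderate"
    return "low"


# Example: a randomized controlled trial scoring 9/13 falls in the moderate band.
print(classify_quality(9, "rct"))
```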

Data analysis

In collaboration with a statistician, we used univariate and multivariate linear mixed models to describe the associations between simulation design features and novice healthcare professionals’ CL. To account for varying sample sizes, the results of studies were weighted using the methods of meta-analyses (i.e., the inverse of the variance). Moreover, since some participants were included in more than one simulation activity (i.e., more than one observation), the dependence of observations was modeled using a compound-symmetry covariance matrix. The dependent variables related to CL were treated as continuous. NASA-Task Load Index (NASA-TLX) scores were rescaled to a 0–100 scale when authors used a 0–120 scale. Most of the design features were treated as categorical (presence or absence), except for three features treated as continuous (i.e., repetitive practice, repeated exposure to simulations, and time allotted to the simulation activity). To build the multivariate linear mixed models, all independent variables that were significant in the univariate models were entered into the equation. Those that were found nonsignificant in the multivariate models were then removed one at a time until all remaining variables were statistically significant (Kellar & Kelvin, 2013).
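Two of the data-preparation steps described above, rescaling NASA-TLX scores and weighting by the inverse of the variance, can be illustrated with a minimal Python sketch. It is not the authors' SPSS mixed-model procedure: it computes only a simple weighted mean, it ignores the compound-symmetry covariance structure, and all function names and numbers are hypothetical.

```python
def rescale_nasa_tlx(score: float, old_max: float = 120.0, new_max: float = 100.0) -> float:
    """Linearly rescale a score reported on a 0-old_max scale to a 0-new_max scale."""
    return score * new_max / old_max


def inverse_variance_weights(standard_errors: list[float]) -> list[float]:
    """Return weights proportional to 1 / SE^2, normalized to sum to 1."""
    raw = [1.0 / se ** 2 for se in standard_errors]
    total = sum(raw)
    return [w / total for w in raw]


def weighted_mean(values: list[float], weights: list[float]) -> float:
    """Weighted average of the observed mean scores."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Hypothetical observations: two mean NASA-TLX scores with their standard errors.
means = [rescale_nasa_tlx(72.0), 50.0]   # first study reported 72 on a 0-120 scale
standard_errors = [2.0, 4.0]             # assumed already on the 0-100 scale
weights = inverse_variance_weights(standard_errors)
print(weights, weighted_mean(means, weights))
```

Note that a linear rescaling multiplies standard deviations and standard errors by the same factor as the means, so both would need converting before the weights are computed.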

All analyses were performed using IBM SPSS Statistics for Macintosh, Version 26.0 (IBM Corp., Armonk, NY). Results are expressed using unstandardized regression coefficients (β) with 95% confidence intervals. P values ≤ 0.05 were considered significant. Analyses were performed when five or more simulation activities (i.e., observations) were available for the same design feature and outcome, using the same measurement tool. Although ten observations are generally recommended (Higgins & Thomas, 2019), we decided to reduce to five observations because results were often poorly reported, not allowing us to meta-analyze as many studies as expected. Finally, as assessed by inspection of a boxplot for values greater than 1.5 box-lengths, we found no outliers in the data.
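The boxplot criterion mentioned above (values lying more than 1.5 box-lengths beyond the box) can be sketched in Python as follows. This is an approximation under stated assumptions: the quartile convention used here (`method="inclusive"` in the standard library) may differ from the hinges that SPSS uses to draw boxplots, and the function name and data are hypothetical.

```python
import statistics


def boxplot_outliers(values: list[float], k: float = 1.5) -> list[float]:
    """Return the values lying more than k box-lengths (IQRs) outside the quartiles."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    box_length = q3 - q1
    lower, upper = q1 - k * box_length, q3 + k * box_length
    return [v for v in values if v < lower or v > upper]


# Hypothetical scores: the value 50 lies far above the upper fence and is flagged.
print(boxplot_outliers([1, 2, 3, 4, 5, 6, 7, 8, 9, 50]))
```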

Results

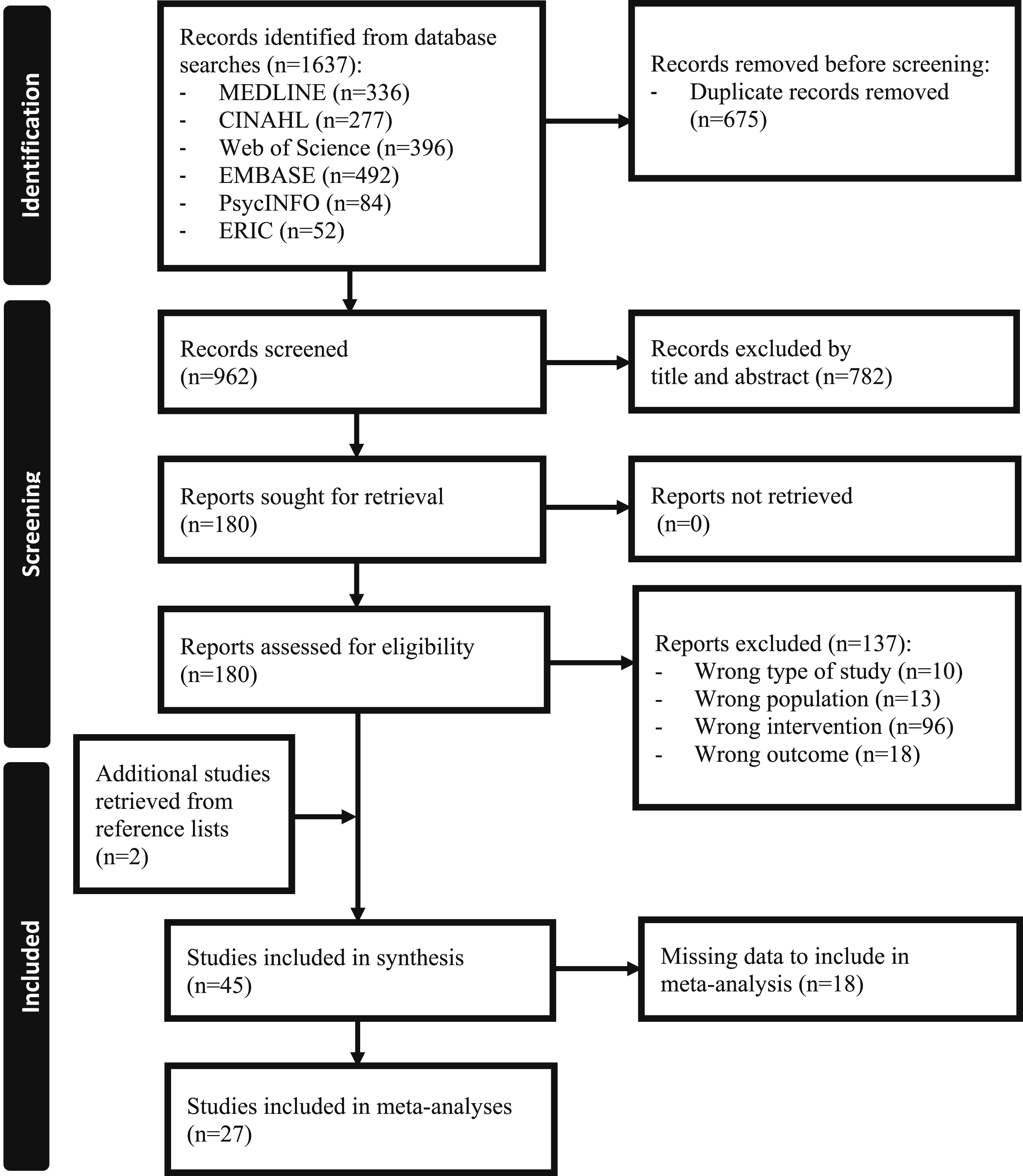

The initial search yielded 962 unique records (Figure 1). After screening the titles and abstracts, the full text of 180 potentially eligible studies was screened, and 45 studies were included in this review.

Flow diagram of study selection.

Characteristics of the studies and participants

Most studies took place in North America (n = 29, 64%) between 2011 and 2021 (n = 40, 89%) with participants from medicine (n = 36, 80%), nursing (n = 5, 11%), pharmacy (n = 2, 4%), and pre-hospital services (n = 1, 2%). Only 1 study (2%) included participants from multiple disciplines. Most studies were conducted with students (n = 28, 62%) or residents (n = 19, 42%); only 1 study (2%) was conducted with novice graduated professionals (i.e., less than 5 years of clinical experience). The median sample size of the included studies was 30 (range 8–299).

Most studies were randomized controlled trials with parallel groups (n = 14, 31%) or crossover designs (n = 9, 20%). Thirteen studies (29%) were single-group repeated measures, 5 (11%) were single-group post-test only, and 4 studies (9%) were non-randomized controlled trials. Most studies (n = 37, 82%) used self-reported questionnaires such as the NASA-TLX (n = 20, 45%; Hart & Staveland, 1988), the Paas Scale (n = 13, 29%; Paas, 1992), the Surgery-Task Load Index [SURG-TLX] (n = 2, 4%; Wilson et al., 2011), the Cognitive Load Questionnaire (n = 3, 7%; Leppink et al., 2013), or a questionnaire specifically developed for the study (n = 1, 2%). Secondary task analyses (n = 10, 22%) were also used, including reaction time (n = 6, 13%), detection rate (n = 2, 4%), number of chart errors (n = 2, 4%), and math calculation (n = 1, 2%). Physiologic measures (n = 7, 16%) such as eye tracking (n = 6, 13%), heart rate (n = 4, 9%), and electrodermal activity (n = 1, 2%) were less frequent. Only 1 study (2%) used behaviors as a measure of CL. Nine studies (20%) used multiple CL measures. Characteristics of the studies can be found in Table S2.

Methodological quality assessment

Regarding randomized controlled trials (n = 22), 6 (26%), 10 (43%), and 6 (26%) were rated as high, moderate, and low quality, respectively. Regarding quasi-experimental studies (n = 23), 6 (26%), 12 (52%), and 5 (22%) were of high, moderate, and low quality, respectively (see Tables S3 and S4).

Association between simulation design features and CL

Table 1 presents the definition of the simulation design features and their prevalence in the 45 studies according to our codification. The most common simulation design features were the simulator type, specifically high-fidelity manikin (n = 23, 51%) and part-task trainer (n = 19, 42%), the presence of briefing (n = 19, 42%), the variation in clinical scenarios (n = 19, 42%), and the simulation composition, which mainly was individual (n = 34, 76%).

Twenty-seven studies provided enough data on the NASA-TLX (n = 15; 49 unique observations) and the Paas Scale (n = 13; 46 unique observations) to be meta-analyzed; one study used both instruments. The other studies could not be meta-analyzed due to a lack of data from the same measurement methods.

NASA-TLX

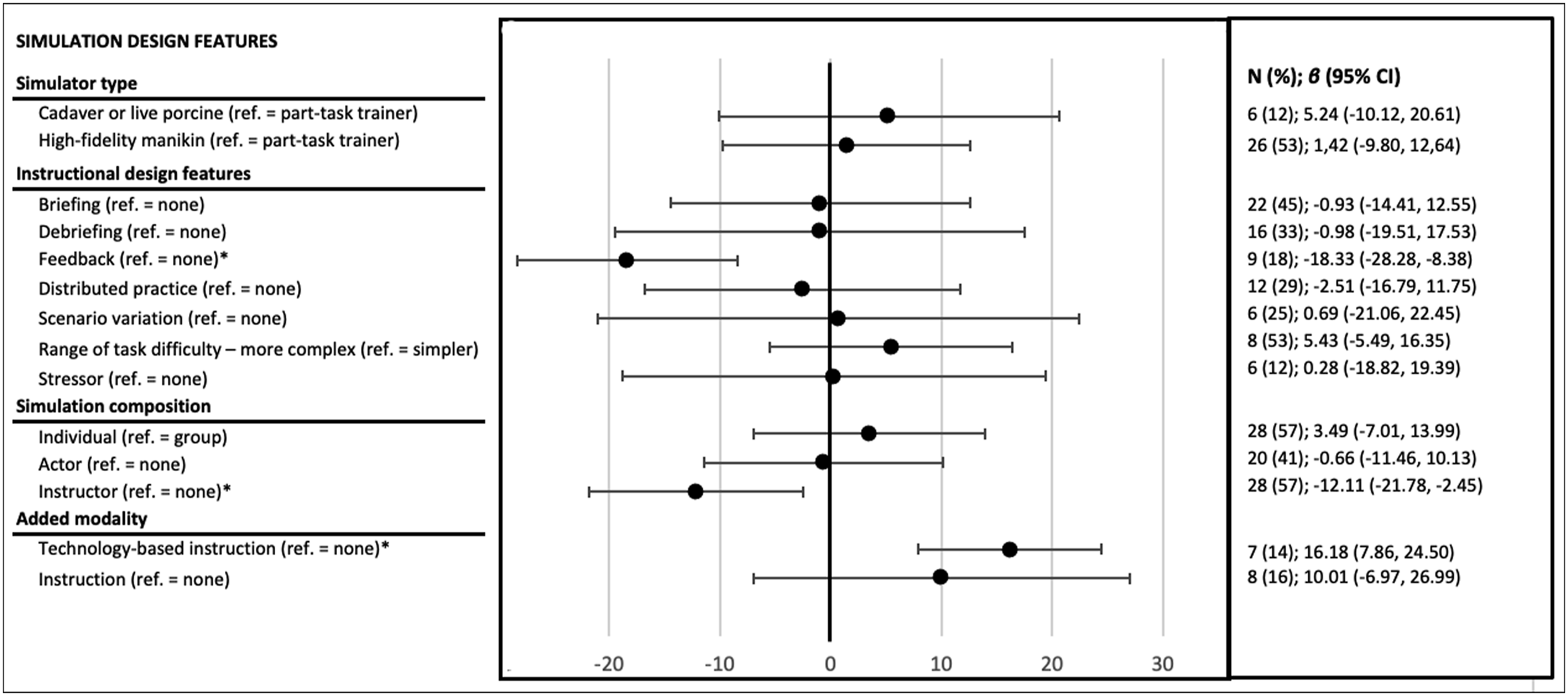

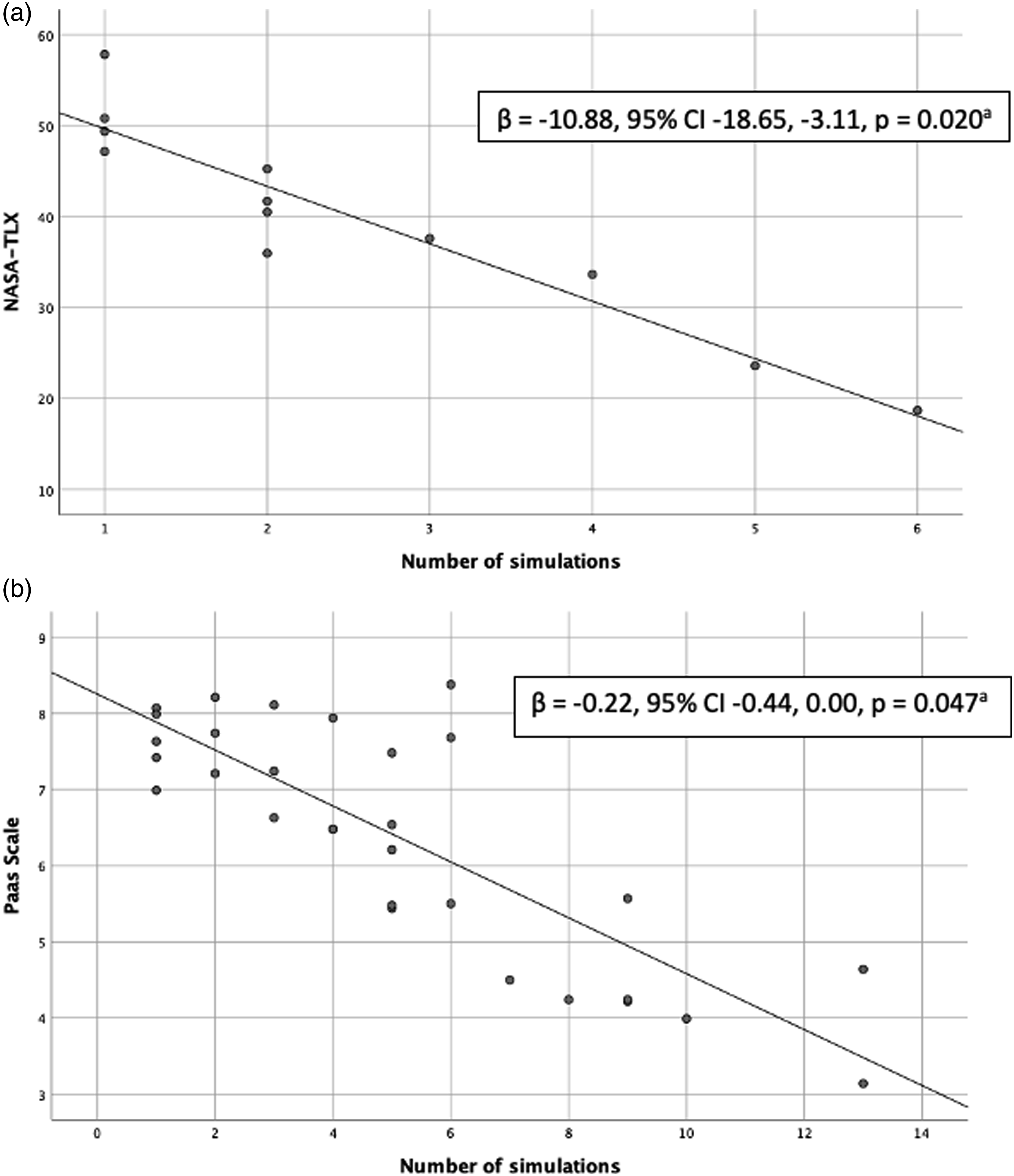

In the 49 observations using the NASA-TLX, the mean score was 53.19 with a standard deviation of 16.39 (range 0–100). As shown in Figures 2 and 3a, the univariate analyses revealed significant associations (P ≤ 0.05) between the NASA-TLX scores and four design features: 1) instructor or simulator feedback; 2) presence of an instructor; 3) technology-based instruction before or during the simulation activity; and 4) repetitive practice (i.e., opportunity to repeat a simulation with a similar scenario). Specifically, feedback during or after the simulation and the presence of an instructor were associated with lower CL (β = -18.33, 95% CI -28.28, -8.38, P = 0.001; β = -12.11, 95% CI -21.78, -2.45, P = 0.015). The presence of technology-based instruction was associated with higher CL (β = 16.18, 95% CI 7.86, 24.50, P = 0.001), whereas CL decreased linearly with each repetition of a simulation with the same scenario or task (β = -10.88, 95% CI -18.65, -3.11, P = 0.020; Figure 3a). Downward trends in CL were also observed with repeated exposure to simulation (i.e., two or more sequential simulation activities with different scenarios or tasks) and increasing time allowed (i.e., in 10-min increments), but they did not reach statistical significance (β = -8.01, 95% CI -18.14, 2.11, P = 0.115; β = -10.37, 95% CI -25.79, 5.06, P = 0.157). In the multivariate mixed model analysis, the presence of technology-based instruction (β = 7.13, 95% CI 3.69, 10.56, P < 0.001) and repetitive practice (β = -7.35, 95% CI -8.58, -5.11, P < 0.001) remained significant.

Association between simulation design features and NASA-TLX scores.

Association between repetitive practice and NASA-TLX/Paas Scale scores.
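When reading coefficients reported with 95% confidence intervals, a quick arithmetic check is that a symmetric interval should be centered on β, and its half-width gives a rough standard error. The Python sketch below illustrates this with the feedback estimate reported above (β = -18.33, 95% CI -28.28, -8.38). The normal critical value of 1.96 is an assumption made here; intervals from mixed models are often based on a t distribution, so the derived standard error is only approximate. The function names are hypothetical.

```python
def ci_summary(lower: float, upper: float, critical: float = 1.96) -> tuple[float, float, float]:
    """Return (midpoint, half-width, approximate SE) of a symmetric confidence interval."""
    midpoint = (lower + upper) / 2
    half_width = (upper - lower) / 2
    return midpoint, half_width, half_width / critical


def is_centered(beta: float, lower: float, upper: float, tol: float = 0.01) -> bool:
    """Check that beta sits at the center of the interval, within rounding tolerance."""
    midpoint, _, _ = ci_summary(lower, upper)
    return abs(midpoint - beta) <= tol


# Feedback estimate from the text: the interval is centered on the coefficient.
print(ci_summary(-28.28, -8.38), is_centered(-18.33, -28.28, -8.38))
```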

Paas Scale

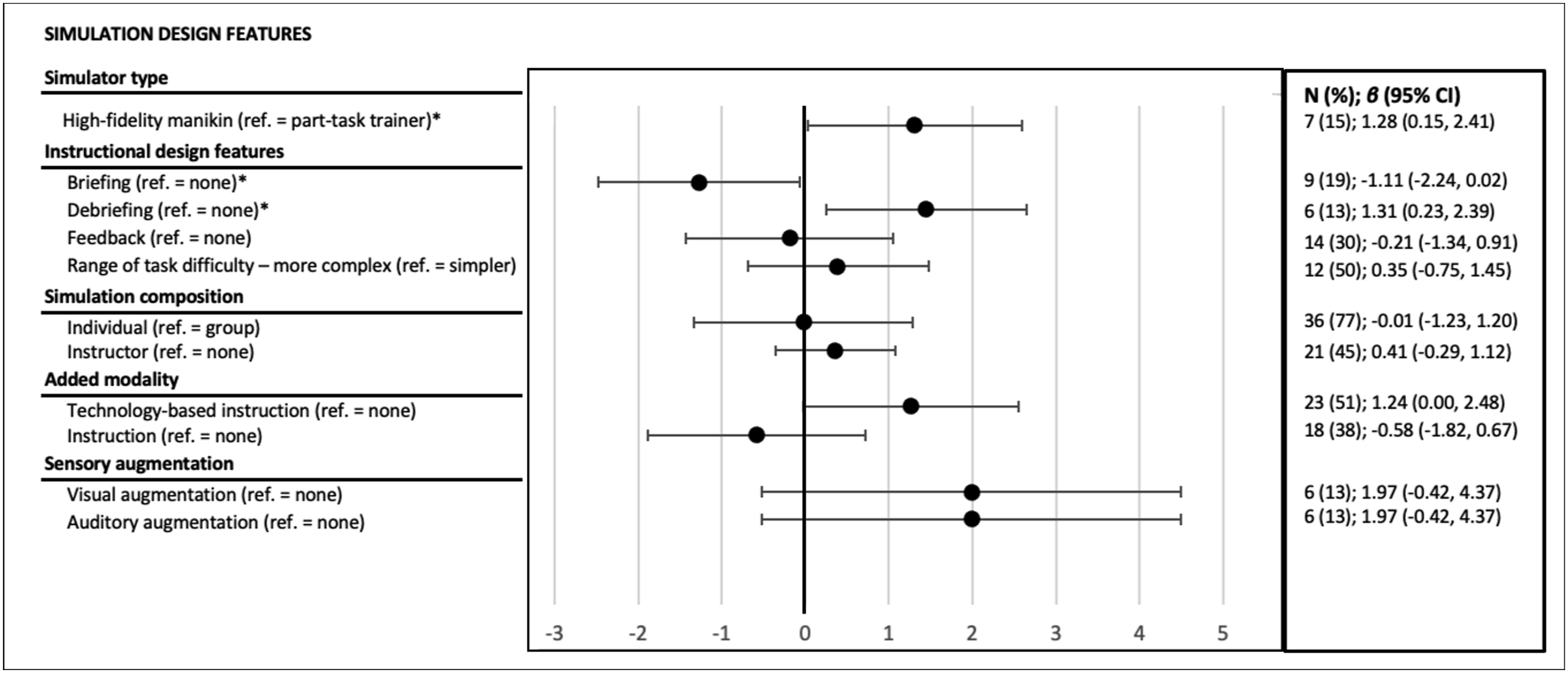

In the 46 observations using the Paas Scale, the mean score was 6.17 with a standard deviation of 1.43 (range 1–9). As shown in Figures 3b and 4, the univariate analyses revealed significant associations (P ≤ 0.05) between the Paas Scale scores and four design features: 1) simulator type (i.e., high-fidelity manikin compared to part-task trainer); 2) presence of a briefing; 3) presence of a debriefing; and 4) repetitive practice. Specifically, the use of a high-fidelity manikin compared to a part-task trainer and the presence of a debriefing were associated with higher CL (β = 1.31, 95% CI 0.03, 2.59, P = 0.046; β = 1.45, 95% CI 0.25, 2.65, P = 0.021). However, the presence of a briefing was associated with lower CL (β = -1.27, 95% CI -2.48, -0.06, P = 0.040). For repetitive practice, the results showed that with each opportunity to repeat a simulation with a similar scenario or task, CL decreased linearly (β = -0.22, 95% CI -0.45, -0.00, P = 0.047; Figure 3b). The presence of technology-based instruction was very close to being statistically significant and showed an increasing trend (β = 1.26, 95% CI -0.03, 2.55, P = 0.054). Repeated exposure could not be analyzed due to a lack of data. In the multivariate mixed model analysis, no variable remained significant, potentially due to a lack of power.

Association between simulation design features and Paas Scale scores.

Discussion

In this review, we explored the association between clinical simulation design features and subjective measures of CL. Simulation studies using objective measures of CL were insufficient in number for meta-analysis. Although the state of literature limits current results, there are essential points to discuss regarding design features and the measurement of CL in clinical simulation studies. The following discussion focuses on research gaps and recommendations for the field of clinical simulation design and beyond.

Simulation design features

Repetitive practice was the simulation design feature that appeared to be most strongly related to CL when measured by different self-reported questionnaires. Specifically, many studies have shown that repeating a simulation scenario decreases the perceived CL of novice healthcare professionals (Abe et al., 2019; Haji, Khan, et al., 2015; Haji, Rojas, et al., 2015; Hu et al., 2016; Jirapinyo et al., 2017; Yurko et al., 2010). This could be explained by novices’ increased familiarity with the scenario or their perception that their performance improves through repetition (Haji, Khan, et al., 2015; Young et al., 2014). This could also be explained by the fact that several task repetitions can lead to automaticity (i.e., the ability to execute a task with less effort and fewer mental resources; Paas & Van Merriënboer, 1994; Stefanidis et al., 2008). However, in this review, repeated exposure to simulation, regardless of the scenario or task, also tended to diminish CL even though this relationship did not reach statistical significance. This suggests that professionals were getting accustomed to the concept and functioning of simulation as an educational technique, which may also explain why their CL declined over time.

The presence of technology-based instruction (mainly video demonstrations before the clinical simulation) was associated with markedly higher self-reported CL on the NASA-TLX, and the association was very close to statistical significance for the Paas Scale. In recent years, educational technology has become more accessible and popular in healthcare professions education (Car et al., 2019; Grimwood & Snell, 2020; Kononowicz et al., 2019). Yet, we are not aware of any study that specifically examined the impact of educational technology before or during a clinical simulation on CL. However, there is an extensive literature on CL and technology-based education, such as multimedia learning environments (Kalyuga & Liu, 2015; Mutlu-Bayraktar et al., 2019; Sweller, 2020), or even digital simulations (Chang et al., 2021; Frederiksen et al., 2020), which were excluded from this review because of the significant difference between the training modalities. Still, an important message is that it is not the technology itself that matters, but how and when it is used to optimally engage the learners’ cognition (Kalyuga & Liu, 2015; Sweller, 2020). Our results highlight the importance of reflecting on the educational principles and value of such technological additions, which could be the object of a research question of its own.

The remaining design features were only significant in the univariate analyses. Feedback was associated with lower NASA-TLX scores. Feedback refers to the information on performance received from an instructor, peer, or computer during or after the simulation activity (Cook et al., 2013). Several studies included in this review have specifically focused on different aspects of instructor feedback, such as the use of video, positive vs. negative feedback, or its frequency (Abbott et al., 2017; Aljamal et al., 2019; Bosse et al., 2015; Byrne et al., 2002; Tofil et al., 2020). In these studies, CL seemed to be similar or to decrease across different feedback groups, which is consistent with our results. Indeed, feedback given by an instructor would help structure the simulated task so that it is better understood (Hatala et al., 2014), thus decreasing the intrinsic CL. In contrast, two studies evaluated the effects of simulator feedback (Brown et al., 2018; Hempel et al., 2019), while no studies evaluated feedback from peers. Interestingly, the specific results for simulator feedback showed the opposite effect on CL from that of instructor feedback. As suggested by Hatala et al. (2014), more theoretically based studies that include CL measures are still needed to better understand the benefits of this important design feature.

Instructor presence was also associated with lower NASA-TLX scores. In our opinion, these results are strongly associated with the presence of feedback, as an instructor is required to provide feedback. However, no study has specifically looked at the presence vs. the absence of an instructor or even at instructors’ characteristics or behaviors that might influence learners’ CL. As Frisby et al. (2018) did in a classroom context, it might be interesting to look at instructors’ behaviors that might influence the extraneous CL of novice healthcare professionals. Their study showed that behaviors such as speaking quickly or using offensive words could impact learners’ extraneous CL.

Regarding the Paas Scale, simulator type (i.e., high-fidelity manikin compared to part-task trainer) was associated with higher scores. This is consistent with the postulate that simulation fidelity is positively correlated with intrinsic CL (Reedy, 2015). In fact, it is increasingly recognized that, to better manage novices’ CL, training should start with lower fidelity and increase it over time (Brydges et al., 2010; Reedy, 2015). Indeed, the idea that an immersive, high-fidelity learning environment is ideal for preparing novice healthcare professionals for clinical practice is increasingly questioned (Reedy, 2015). However, since fidelity is a complex concept composed of several dimensions (i.e., physical, psychological, conceptual; Paige & Morin, 2013), these results do not allow us to differentiate the impact of the different dimensions of fidelity on CL.

The presence of a briefing was associated with lower Paas Scale scores. Best practices emphasize the importance of briefing participants before clinical simulations since it allows professionals to familiarize themselves with the simulation environment and better understand the activity’s objectives (INACSL Standards Committee, Decker, et al., 2021). Consequently, it could have an influence on the extraneous CL, as this practice allows presenting and clarifying the different elements that could potentially distract the learner from the learning task. In fact, these results align with the numerous studies that showed positive outcomes of briefing for healthcare professionals (Tyerman et al., 2019).

As opposed to a briefing, the presence of a debriefing was associated with higher Paas Scale scores. The only study that clearly aimed to examine the effect of debriefing on CL showed that medical students had higher CL scores after the debriefing than after the scenario (Fraser & McLaughlin, 2019). However, another study, using an objective measure, showed that surgical residents who were debriefed had a reduced median response time to a vibration stimulus compared to those who were not—suggesting reduced CL as a result of debriefing (Boet et al., 2017). This apparent contradiction could be explained by the difference in CL measurement (subjective vs. objective), the difference in expertise (medical students vs. residents), or the use of different debriefing methods (Promoting Excellence And Reflective Learning in Simulation [PEARLS] (Eppich & Cheng, 2015) vs. Debriefing with Good Judgment (Rudolph et al., 2007)), among other factors.

Although several associations did not reach statistical significance, some trends are worth noting. First, complex simulations led to higher CL compared to simpler simulations, although, surprisingly, this association did not reach statistical significance even though all studies followed the same trend (Haji, Khan, et al., 2015; Kataoka et al., 2011; Tremblay et al., 2019). Indeed, the idea that novice healthcare professionals should be confronted from the beginning with the full complexity of clinical situations to be well prepared for the clinical reality is now discouraged according to CL theory (Reedy, 2015). Second, sensory augmentation at the auditory and visual level also seemed to increase CL on the Paas Scale, which is consistent with findings that auditory interruptions (e.g., telephone calls, monitor alarms) increase the CL of novice healthcare professionals (Campoe & Giuliano, 2017; Weigl et al., 2016; Wucherer et al., 2015). Other studies have investigated the effect of several concurrent stimuli, making it difficult to isolate their influence on CL (Mills et al., 2016; Tremblay et al., 2017).

Measuring CL

Subjective CL measures were the most frequent in the included studies, possibly because they are easy to use and non-intrusive. Although the Paas Scale and NASA-TLX have been used interchangeably, they differ somewhat in form and conceptual foundations (Naismith et al., 2018). The Paas Scale is a single-item instrument that estimates mental effort on a 9-point scale, whereas the NASA-TLX consists of six subdomains scored on a 0–20 scale: mental demand, physical demand, temporal demand, effort, frustration, and performance (Hart & Staveland, 1988; Paas, 1992). A review by Naismith et al. (2015) found poor agreement between the two instruments in a simulation setting: the Paas Scale correlated only with the mental demand subdomain of the NASA-TLX. It is therefore not surprising that the results of the current meta-analyses differed between the two instruments.
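To make the structural difference between the two instruments concrete, the following minimal Python sketch computes an overall NASA-TLX score as the unweighted mean of the six subdomain ratings (often called the "raw" TLX) and rescales it to 0–100. This is an illustration only: the function name `raw_tlx`, the dictionary keys, and the assumption that all six ratings are oriented so that higher values indicate more load are ours, and the included studies may have scored the instrument differently (e.g., with the pairwise-comparison weights of the original procedure). A Paas Scale score, by contrast, is a single 1–9 rating and requires no aggregation.

```python
from statistics import mean

# The six NASA-TLX subdomains named in the text (key names are hypothetical).
SUBDOMAINS = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "effort",
    "frustration",
    "performance",
)


def raw_tlx(ratings, scale_max=20):
    """Return the unweighted mean of the six subdomain ratings on a 0-100 scale.

    Assumes each rating lies between 0 and scale_max and that higher values
    mean more load on every subdomain (an assumption of this sketch).
    """
    missing = [name for name in SUBDOMAINS if name not in ratings]
    if missing:
        raise ValueError(f"missing subdomain ratings: {missing}")
    for name in SUBDOMAINS:
        if not 0 <= ratings[name] <= scale_max:
            raise ValueError(f"{name} rating outside 0-{scale_max}")
    # Average the six ratings, then rescale from 0-scale_max to 0-100.
    return mean(ratings[name] for name in SUBDOMAINS) * 100 / scale_max


# Hypothetical participant: moderate load on every subdomain.
example = {name: 10 for name in SUBDOMAINS}
overall = raw_tlx(example)
```

Because the overall score averages across six subdomains, two participants with the same total can have very different mental demand ratings, which is one reason a single-item mental effort rating need not agree with it.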

Furthermore, an important limitation of these instruments is that they capture only overall CL and not the contribution of each CL type (Brünken et al., 2010; Leppink et al., 2014). This is problematic because overall CL is an imprecise measure: a high or low score does not reveal which type of CL (intrinsic or extraneous) is affected. To date, most instruments have been constructed to measure overall CL, and only a few measure intrinsic and extraneous loads separately (Klepsch et al., 2017; Leppink et al., 2013). In this review, only four studies measured the different CL types (Josephsen, 2018; Naismith et al., 2015; Tremblay et al., 2017; Tremblay et al., 2019). The difficulty of developing this kind of instrument might be explained by the ongoing debate about what composes CL, especially regarding the “germane” load (Leppink & van den Heuvel, 2015). Early versions of the theory identified the germane load as the construction and automation of mental schemas and their integration into long-term memory (Paas et al., 2003). However, because of theoretical inconsistencies, the germane load was recently reconceptualized as a subtype of the intrinsic load (Jiang & Kalyuga, 2020; Kalyuga, 2011; Leppink & van den Heuvel, 2015). For this reason, authors who aimed to measure different CL types had few options. In fact, they had to develop new instruments or adapt the rare existing ones, such as the Cognitive Load Questionnaire of Leppink et al. (2013), because these instruments were not designed for the simulation context. Therefore, it is understandable that many researchers have turned to instruments that are already validated and easy to use, such as the Paas Scale and the NASA-TLX.

It is also worth noting that few studies have used objective measures of CL. An advantage of objective measures is that they allow CL to be measured during the clinical simulation and temporal variations to be observed (Zheng & Greenberg, 2018). However, these measures have likely been used less because they may compromise the authenticity of a simulation or physically interfere with participants (e.g., having to react to a vibration stimulus during the simulation, wearing an eye-tracking device; Zheng & Greenberg, 2018). Unobtrusive methods, such as behavioral observations, are beginning to be developed and appear promising; however, research is still limited (Naismith et al., 2018).

Recommendations for educators and researchers

The results suggest that repeated exposure to simulations could be a successful strategy for educators who aim to reduce novices’ CL. In addition, we recommend that educators carefully reflect on the necessity of some design features before including them in simulations, particularly technologies (e.g., high-fidelity mannequins or videos). Are these technologies absolutely essential to achieve the learning objectives? If so, at what point during the simulated clinical experience should they be introduced? Finally, until proven otherwise, instructional strategies known to be central to simulations, such as briefing, feedback, and debriefing, should be used consistently, as per simulation best practices (INACSL Standards Committee, Watts, et al., 2021).

For researchers, we believe that one of the main needs in this field is to develop, adapt, and use an instrument suitable for assessing the different types of CL in the clinical simulation context. For example, Tremblay et al. (2019) have begun to adapt the Cognitive Load Questionnaire of Leppink et al. (2013), but its validation and wider dissemination remain to be done. Still in terms of measures, other non-subjective and less intrusive methods (such as behavioral observations) should be developed to address the specific context of clinical simulations and to allow for the use of diverse data collection methods, which would strengthen the internal validity of studies. We also believe that rigorous controlled studies evaluating the effect of major simulation design features, such as briefing, debriefing, and feedback, on CL are needed to better understand the association between these variables. To this end, it is essential to properly describe the simulation interventions being evaluated. The reporting guidelines for health care simulation research (Cheng et al., 2016) should be used to ensure consistency in the description of these complex educational interventions.

Strengths and limitations

This review has several strengths, including the implementation of a rigorous process based on the JBI methodology and the new PRISMA guidelines. The protocol was prospectively registered, which enhances transparency. Although we had initially planned to evaluate the impact of simulation design features on CL through studies in which different clinical simulations were compared head-to-head, the absence of such studies precluded us from doing so. Thus, we had to rely on findings from univariate linear mixed models, which may be subject to confounding. Another limitation of this approach is that the multiplicity of statistical tests may have increased the risk of type I error. Despite these limitations, we firmly believe that the results, even if exploratory in nature, advance knowledge and put forward several research avenues on a subject of utmost importance in novice healthcare professionals’ education. In addition, although two independent reviewers performed the coding with good agreement, the coding was based on the published descriptions of the clinical simulations, which could be subject to reporting bias. Finally, the results are based on studies that are generally of moderate methodological quality. For all these reasons, these results should be considered with caution.
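The concern about multiple testing raised above can be illustrated with a standard correction. The minimal Python sketch below applies the Holm–Bonferroni step-down procedure to a list of p-values; it is not the analysis performed in this review, and the function name `holm_bonferroni` and the example p-values are hypothetical.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Return a list of booleans, True where the null hypothesis is rejected.

    Holm's step-down procedure: sort the p-values in ascending order and
    compare the k-th smallest (k = 0, 1, ...) with alpha / (m - k); stop at
    the first p-value that fails its threshold.
    """
    m = len(p_values)
    # Indices of the p-values, ordered from smallest to largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, idx in enumerate(order):
        if p_values[idx] <= alpha / (m - rank):
            reject[idx] = True
        else:
            # Once one test fails, all larger p-values are also retained.
            break
    return reject


# Hypothetical p-values from four univariate tests (not data from this review).
example_p = [0.01, 0.04, 0.03, 0.005]
decisions = holm_bonferroni(example_p)
```

In this hypothetical example, all four tests would be significant at an uncorrected threshold of 0.05, but only the two smallest p-values survive the correction, which shows why uncorrected univariate findings are best read as exploratory.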

Conclusion

This review provides the first meta-analysis exploring the relationships between simulation design features and novice healthcare professionals’ CL. Overall, the results show significant associations between several simulation design features and novices’ CL, including instructor or simulator feedback, presence of an instructor, technology-based instruction, repetitive practice, simulator type, and debriefing. However, the results also highlight that many questions must still be answered to better understand these relationships, such as how to measure the different CL types appropriately. The lack of suitable instruments creates fundamental difficulties for the generation of new knowledge in this field. Ultimately, this line of work aims to better understand the relationships between simulation design features and CL and then to examine the impact of these relationships on the learning of novice healthcare professionals. Before that point is reached, much work remains to be done.

Supplemental Material

Supplemental Material for Association between Clinical Simulation Design Features and Novice Healthcare Professionals’ Cognitive Load: A Systematic Review and Meta-Analysis by Alexandra Lapierre, Caroline Arbour, Marc-André Maheu-Cadotte, Billy Vinette, Guillaume Fontaine, and Patrick Lavoie in Simulation & Gaming

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online

References
