Abstract

Collaboration is a construct comprising diverse definitions and frameworks. Additionally, because it is a latent variable and because of its complexity and interactive nature, collaboration is difficult to measure. Therefore, this systematic literature review was guided by two fundamental questions: what to measure and how to measure. Through the review and synthesis of 28 carefully selected studies, we derived an integrative framework displaying indicators for peer collaboration in higher education and beyond. Moreover, the results give insights into measurement approaches of collaboration, comprising information on data collection and analysis as well as contextual factors (e.g., task type, time).

In a digital era, humans not only need better technical preparation but also appropriate skills to adapt to the changing requirements at the workplace (Carnevale & Smith, 2013). The knowledge and skills that prepare individuals for lifelong learning in a complex and fast-changing world have been labeled 21st century competencies, including communication, collaboration, critical thinking, creativity, problem solving, and digital skills (Buckingham Shum & Crick, 2016; van Laar et al., 2017). Communication and collaboration skills in particular seem to play a crucial role, as a large body of literature highlights the importance of social interactions during knowledge building and skill acquisition (e.g., Ghazal et al., 2019; Martin et al., 2016). In fact, collaboration can contribute positively to the development of other skills like problem solving, creativity, and critical thinking (Ghitulescu, 2018; van Laar et al., 2019). Students transitioning from schools and universities into the workforce are expected to collaborate with others in order to solve complex problems and to create innovations (Y. Rosen & Mosharraf, 2016; Sartori et al., 2018). This is also why the Program for International Student Assessment (PISA) integrated collaborative problem solving as an additional assessment domain besides science, reading, mathematics, and financial literacy in 2015 (OECD, 2017). Beyond that, universities in particular are encouraged not only to transmit discipline-specific skills (often referred to as

Using a systematic literature review approach, the current study focuses on collaborative processes in adult peer learning, with two main goals. Firstly, it seeks to contribute to the conceptualization of collaboration as a complex, multifaceted, and context-dependent construct. Secondly, it provides an overview of best practices in measuring collaborative working and learning. Unlike previous reviews, which had a more generalized focus across various contexts and either considered conceptualization or measurement approaches, our review will adopt a context-specific approach and encompass both aspects simultaneously. Based on this synthesis, we present an integrative framework, as well as a roadmap (including what to measure, how to measure, and under which circumstances), for researchers and practitioners interested in collaborative processes. Although our findings are mainly tailored to the higher education context, they hold the potential to inspire researchers and practitioners of any other context in which collaboration is prevalent. In the following, we provide a summary of the theoretical foundation and the present research landscape, which encompasses prior reviews. This summary will then lead us to formulate our research questions and establish the significance of this study. Afterwards, we explain the systematic review procedure and present our findings. Building on this, we discuss strengths and limitations of this study and conclude with implications for research and practice.

Collaboration: Definition and Differentiation

“For a concept so widely used in everyday language there is a surprising lack of a clear understanding of what it is to collaborate” (Patel et al., 2012, p. 1). Indeed, Patel et al. (2012) emphasize that collaboration is a construct comprising diverse definitions and frameworks. Etymologically, the word

Collaboration can take place at different levels, such as within teams, across teams, or encompassing entire organizations. Moreover, depending on the discipline, the understanding of collaboration varies. Bedwell et al. (2012) conducted a multidisciplinary literature review, which highlights that, whereas collaboration is seen as a process in some fields of research (e.g., education), it is seen as a structure in others (e.g., management). In the majority of their reviewed literature, however, collaboration is conceptualized as a process (Bedwell et al., 2012). In school and university settings, the terms

As mentioned above, terms like coordination, cooperation, teamwork, and collaboration are often used interchangeably. However, collaboration is a higher-level process which is increasingly used in practice; “therefore, science needs to thoroughly understand what it is and what it is not in order to help practitioners maximize its effectiveness and usefulness” (Bedwell et al., 2012, p. 142). To this end, it is important to consider potential differences with respect to disciplines/domains, educational levels, and technologies used to support collaboration (Bedwell et al., 2012; Jeong et al., 2019). Moreover, especially in the education context, the question arises whether the focus lies on

Measurement of Collaboration

The measurement of collaborative learning processes is important because it helps researchers and practitioners to assess the knowledge and skill level of individuals and groups. This, in turn, allows them to distinguish between high and low performers (e.g., Schneider et al., 2020) and helps to understand which mechanisms lead to successful learning. Subsequently, they can guide, adapt, and improve learning experiences. Furthermore, assessing and reflecting on learning processes allows students or workers to monitor their own personal learning and skill development (Buckingham Shum & Crick, 2016). Additionally, measurement findings can be used for the development of group awareness tools, which help learners become more aware of their interaction partners and thus catalyze advantageous learning processes (Bodemer et al., 2018).

There are several issues associated with the assessment of collaboration. For example, a major challenge of measuring collaboration is deciding which aspects are to be assessed (Child & Shaw, 2019). Assessment criteria depend on the purpose of the assessment (Strijbos, 2011), which can be either summative (focus on the learning outcome) or formative (focus on the learning process). Therefore, it is crucial to identify appropriate operationalizations of collaboration processes and outcomes as well as the corresponding measurement methods (Strijbos, 2016). Besides process and outcome variables, input variables like participant background (e.g., personality) and tasks (e.g., well- vs. ill-defined tasks) should be considered when assessing collaboration (Kyllonen et al., 2017). A further question is whether the assessment should focus on the group or the individual level. With respect to summative assessments in educational settings, for example, it is common that a group product is evaluated or that the individual level of knowledge following the group work is assessed.

Another difficulty in measuring collaboration lies in the high number of interactions that take place during collaborative learning (Caballero-Hernández et al., 2020). The learning patterns that take place in collaborations are much more complex than those in individual learning settings (Cen et al., 2016), which is especially true for bigger groups and groups that work together over several episodes of time. Furthermore, it is crucial to recognize that what matters is not only the interactions among learners but also their use of the provided resources to address tasks and their engagement with the task environment, such as through different kinds of technologies (cf. Lämsä et al., 2021). Especially when learning groups can decide independently when and how to work together (e.g., when they work together in a hybrid setting over the course of a semester), measuring their collaboration becomes extremely challenging. Consequently, studying collaboration requires considering the circumstances in which it takes place.

Prior research reflects a variety of methods for measuring collaboration, such as questionnaires (e.g., Caniëls et al., 2019), diary studies (e.g., Shah & Leeder, 2016), social network analysis (e.g., Kent & Cukurova, 2020), and lag sequential analysis (e.g., Cheng & Chu, 2019). Whereas some rely on self-reports, others are observations of learners’ interactions and behaviors in the digital environment. Digital environments, learning analytics, and educational data mining are becoming prevalent, as they establish an ecosystem where collecting data continuously to assess learning processes is feasible and manageable (Aldowah et al., 2019). For example, researchers have presented innovative technological frameworks for automating collaboration analysis (e.g., Anaya & Boticario, 2013; Duque et al., 2011; Noel et al., 2018). However, these techniques are mostly algorithmic and rarely based on theories (Baker et al., 2021), hence lacking a pedagogical framework. In turn, a missing theoretical foundation leads to an inadequate operationalization of collaboration. For example, in some research, it is unclear how collaboration is operationalized, while in other research, collaboration is simply operationalized as interaction and participation rates. Yet, this does not reflect the main focus of collaboration, which lies in creating shared conceptions. To better understand learning processes, research utilizing learning analytics and educational data mining should establish meaningful links between the recorded data and the targeted construct (Wise et al., 2021). To build this bridge from “clicks to constructs” (Buckingham Shum & Crick, 2016, p. 16), a set of theoretically based descriptions is needed. As the aim of the current review is to shed light on collaborative processes more precisely, the results can serve as a solid theoretical basis for learning analytics and educational data mining approaches.

Prior Reviews and the Present One

Several review studies (e.g., Bedwell et al., 2012; Child & Shaw, 2019; D’Amour et al., 2005; Lai, 2011) have already been conducted to give an overview of collaboration processes and to propose theoretical and operational frameworks. Whereas reviews by Lai (2011) and Child and Shaw (2019) refer to school and university contexts, Bedwell et al. (2012) focused on collaboration at work and D’Amour et al. (2005) reviewed interprofessional collaboration in health fields. These reviews primarily contribute to the conceptualization of collaboration in terms of construct definition and differentiation from other constructs. Yet, they do not give detailed descriptions of the sub-facets of collaboration, provide a list of concrete behavioral indicators, or discuss appropriate measurement methods. Moreover, none of them was set up or executed as a

To extend the existing body of research, the current review uses a selective and purposeful approach (cf. Xiao & Watson, 2019) by identifying best practice studies and analyzing them in depth. Hence, this study is expected to extend previous reviews by (a) giving a holistic overview with detailed descriptions of sub-facets of collaboration, while focusing on a specific target group, namely adult peer learners, (b) depicting best practice approaches to measuring collaboration, and (c) linking the conceptualization and measurement of collaboration. Since measures need to be selected and formulated to effectively capture the theoretical content (M. A. Rosen et al., 2018), it is important to establish a connection between the conceptual and measurement method domains. Considering the challenges in analytics (e.g., to map digital traces to collaborative learning constructs, Wise et al., 2021), reviewing best practices can enrich future measurement approaches. Taken together, this systematic literature review was guided by the following research questions: What aspects are important to consider when conceptualizing collaboration (RQ 1)? Further, how can they be measured (RQ 2)? Answering these research questions enables us to make a valuable contribution to the conceptualization of collaboration as well as to derive recommendations for measurement approaches.

Method

The systematic literature review was conducted based on the guidelines by Siddaway et al. (2019) as well as Gough et al. (2017). These guidelines provide recommendations for planning (e.g., selection of databases, formulation of search terms, and inclusion/exclusion criteria), conducting (e.g., considering interrater reliability), and organizing the review process (e.g., references to helpful tools and applications), as well as the presentation of results (e.g., description of included studies). Accordingly, the review process consisted of the following main steps: (1) identification, (2) screening, and (3) analysis.

Identification: Literature Search Strategy

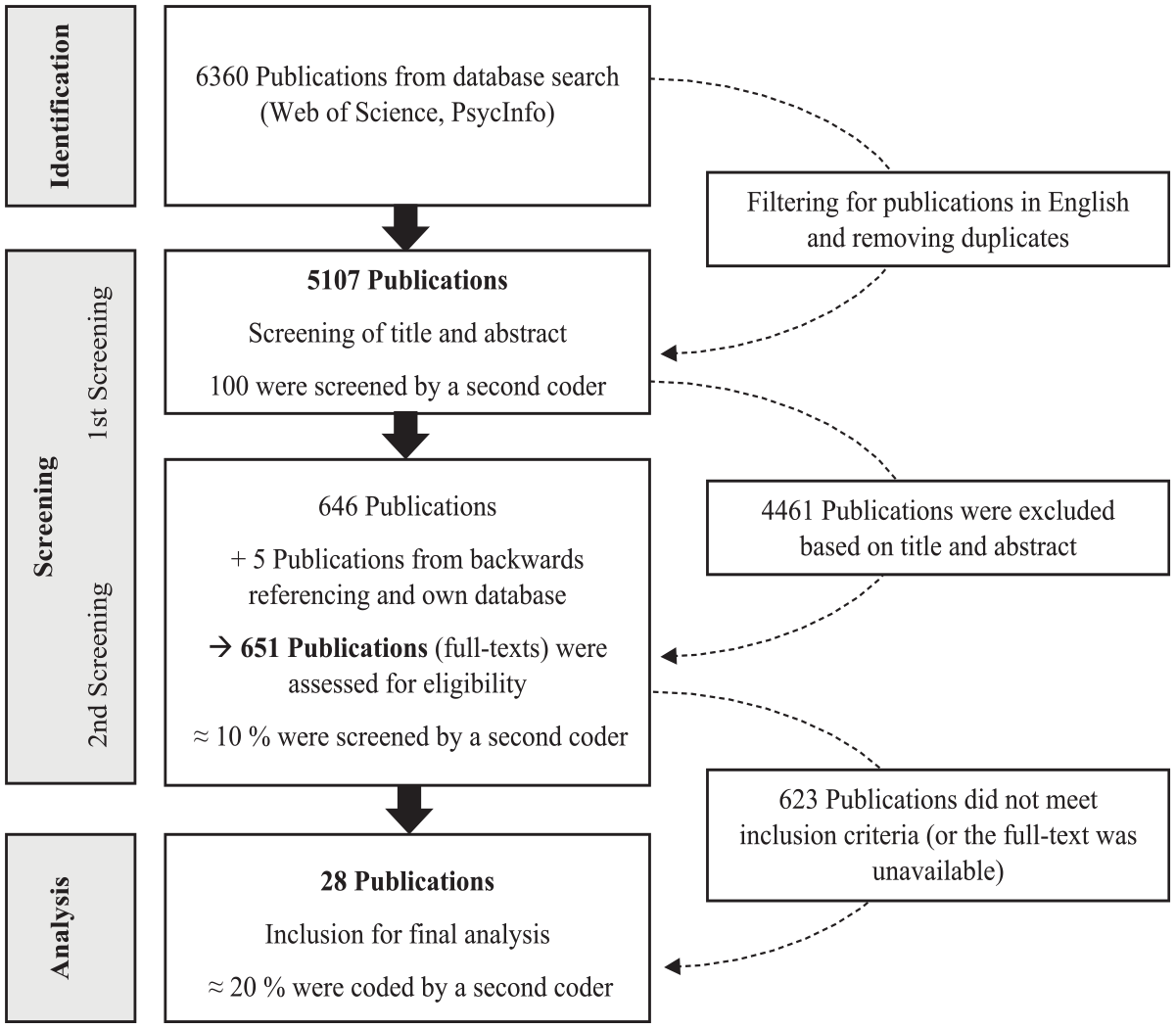

The literature search was conducted in September 2020. We consulted two important databases in the field of educational research (i.e., Web of Science, PsycInfo) to identify relevant studies. Our initial search that encompassed terms like teamwork and cooperation yielded over 20,000 results. To enhance precision, we fine-tuned the search criteria through consultations with colleagues. We further narrowed down the results using the Social Sciences Citation Index, concentrating on pertinent research domains such as Education, Psychology, Computer Science, and Business Economics. To maintain a manageable scope of literature, the decision was made to focus the search on articles with the concept of interest included in the title. The final search string used was: TITLE: (collaborat*) AND TOPIC: (skill* OR abilit* OR competenc* OR behav* OR learning) AND TOPIC: (concept* OR framework OR theor* OR assess* OR measur*). No restrictions with respect to the publication year were made. Further studies were gathered by employing literature snowballing techniques and reviewing the literature referenced in the authors’ previous research projects. After setting a language filter (English) and removing duplicates, the literature search strategy resulted in 5,107 publications.
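To make the Boolean logic of the search string concrete, the following minimal sketch applies it to a single record in Python. It is an illustration only and not the databases' own matching engine: the function names are hypothetical, the trailing `*` is treated as simple right-hand truncation, and the TOPIC field is approximated by whatever text (e.g., title plus abstract) is passed in as `topic_text`.

```python
import re

# Term groups copied from the search string reported above.
TITLE_TERMS = ["collaborat*"]
TOPIC_TERMS_A = ["skill*", "abilit*", "competenc*", "behav*", "learning"]
TOPIC_TERMS_B = ["concept*", "framework", "theor*", "assess*", "measur*"]


def term_to_regex(term: str) -> re.Pattern:
    """Turn a term with optional trailing '*' into a whole-word regex (assumed semantics)."""
    stem = re.escape(term.rstrip("*"))
    suffix = r"\w*" if term.endswith("*") else ""
    return re.compile(rf"\b{stem}{suffix}\b", re.IGNORECASE)


def matches_any(text: str, terms: list[str]) -> bool:
    """Return True if at least one term of an OR group occurs in the text."""
    return any(term_to_regex(term).search(text) for term in terms)


def matches_search_string(title: str, topic_text: str) -> bool:
    """Apply TITLE AND TOPIC(group A) AND TOPIC(group B) to one record."""
    return (
        matches_any(title, TITLE_TERMS)
        and matches_any(topic_text, TOPIC_TERMS_A)
        and matches_any(topic_text, TOPIC_TERMS_B)
    )


# Hypothetical records, for illustration only.
print(matches_search_string(
    "Measuring collaborative learning",
    "Measuring collaborative learning. We assess students' skills in groups.",
))
print(matches_search_string(
    "Teamwork in schools",
    "Teamwork in schools. We assess students' skills in groups.",
))
```

The second record is rejected because its title lacks the stem `collaborat`, which mirrors the decision to require the concept of interest in the title.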

Screening Process

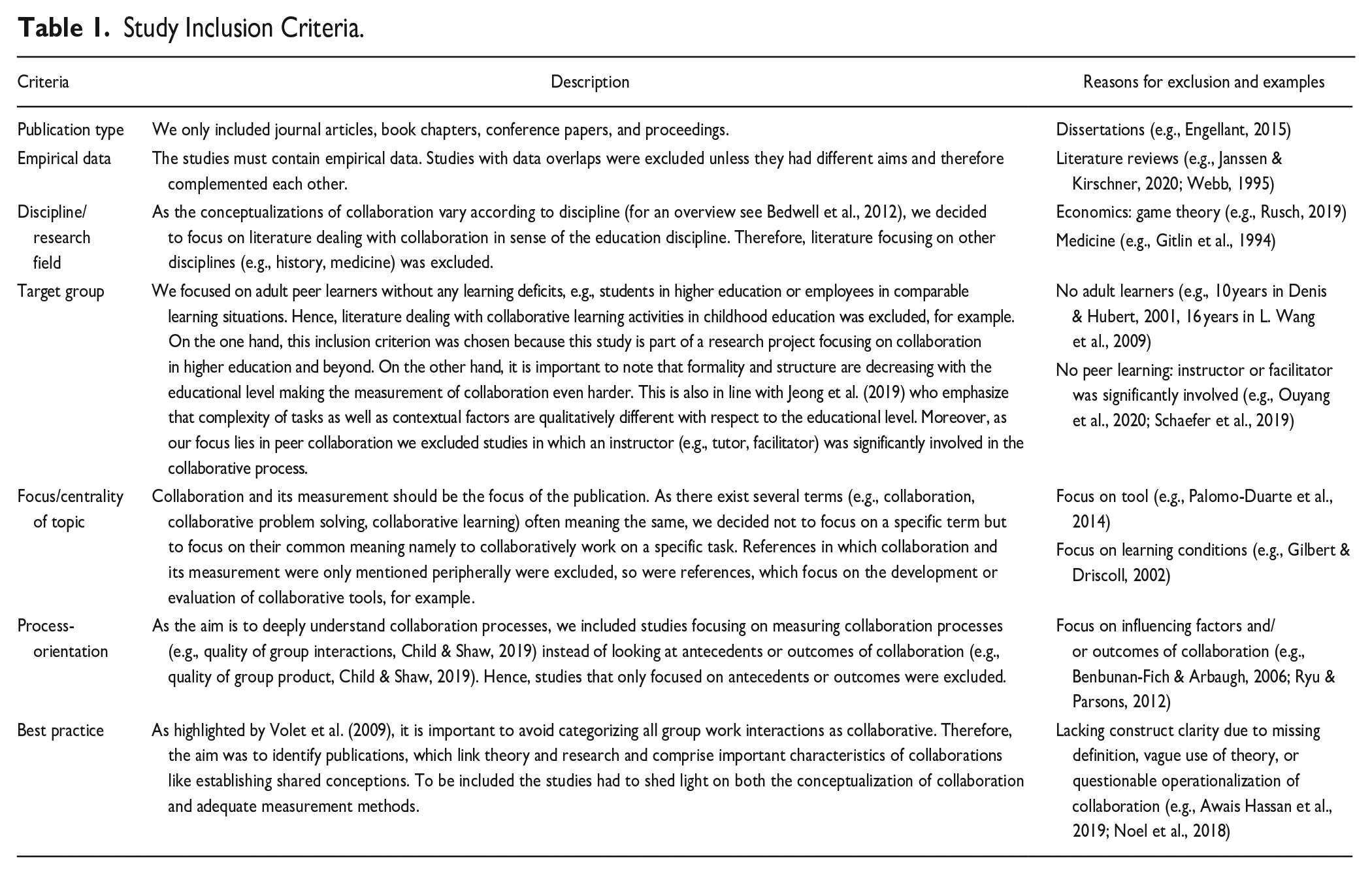

Taking into account the theoretical foundation outlined in the present study, the studies from our search results had to fulfill the inclusion criteria specified in Table 1 to be eligible for further analysis. The screening took place in two phases: an initial screening of titles and abstracts, and a screening of the full texts for eligibility. The screening procedure is summarized in Figure 1. In the initial phase, two coders independently rated the first 100 abstracts. The calculation of Cohen’s Kappa resulted in κ = 0.71, reflecting substantial agreement. Publications on which the coders did not agree were discussed until consensus was reached. Afterwards, the first author screened the remaining titles and abstracts using Rayyan, a tool for systematic reviews (Ouzzani et al., 2016). As recommended by Siddaway et al. (2019), the abstracts and titles were screened sensitively, resulting in 651 potentially relevant publications.
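For readers unfamiliar with Cohen’s Kappa, the statistic corrects the observed agreement between two coders, p_o, for the agreement expected by chance, p_e, as κ = (p_o − p_e) / (1 − p_e). The sketch below shows this computation in plain Python. The ratings are made-up illustration data, not the coding decisions from this review, and the function name is hypothetical.

```python
from collections import Counter


def cohens_kappa(ratings_a: list, ratings_b: list) -> float:
    """Cohen's kappa for two coders rating the same items with nominal codes."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both coders must rate the same non-empty set of items.")
    n = len(ratings_a)

    # Observed agreement: share of items that received identical codes.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Chance agreement: product of the coders' marginal proportions, summed over codes.
    freq_a = Counter(ratings_a)
    freq_b = Counter(ratings_b)
    categories = set(freq_a) | set(freq_b)
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)

    if expected == 1.0:
        raise ValueError("Kappa is undefined when both coders use a single identical code.")
    return (observed - expected) / (1 - expected)


# Hypothetical screening decisions for ten abstracts (1 = include, 0 = exclude).
coder_1 = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
coder_2 = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
print(round(cohens_kappa(coder_1, coder_2), 2))
```

In this made-up example, the coders agree on 8 of 10 abstracts (p_o = 0.80), whereas chance alone would yield p_e = 0.52, so kappa is noticeably lower than the raw agreement rate.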

Table 1. Study Inclusion Criteria.

Figure 1. Flow chart summarizing the screening procedure.

Next, we reviewed the full texts of the publications against the inclusion criteria. At this stage, the focus shifted from sensitivity to specificity (in accordance with Siddaway et al., 2019), resulting in the inclusion of 28 publications for the final analysis. In certain instances, a case could have been made for either inclusion or exclusion. These borderline cases were discussed with colleagues. Additionally, approximately 10% of the studies, constituting a sample of 65, were independently assessed by a second coder. This evaluation aimed to ascertain the clarity of the inclusion criteria and the potential reproducibility of the decision-making process. The calculated Kappa value of κ = 0.74 reflects substantial agreement and thus indicates interrater reliability. Publications on which the coders did not agree were discussed until consensus was reached.

Analysis of Included Literature

To analyze the literature, qualitative content analysis was conducted using MAXQDA 2020 (VERBI, n.d.-a). An inductive-deductive approach was employed and the analysis was led by the two fundamental questions of

Results

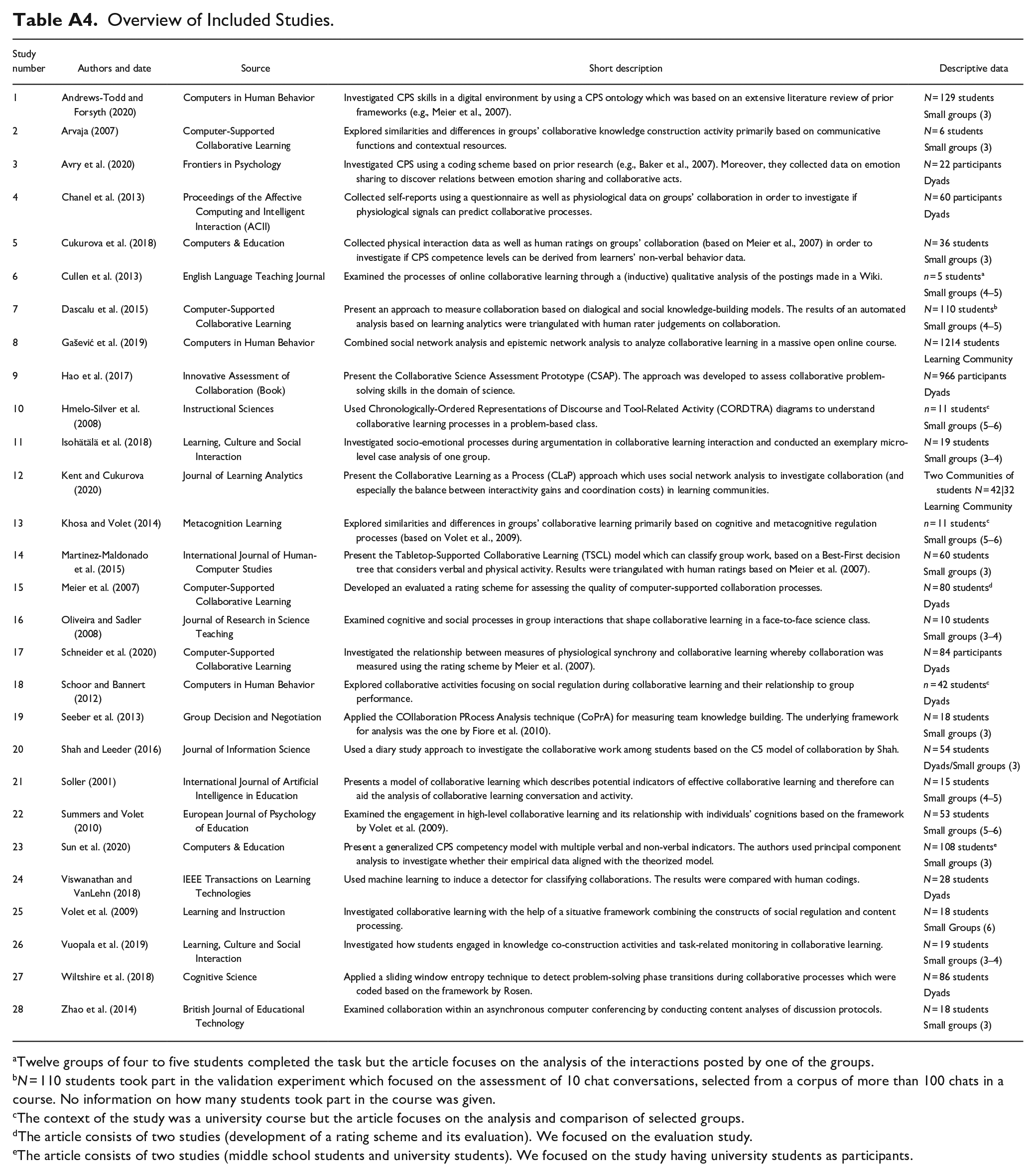

The final set of included studies (

Besides information on participants, we considered information about context and setting of the studies. In order for collaboration to occur and be measured, it is essential that there is an environment that enables participants to exhibit pertinent behaviors. Therefore, we categorized how the interaction between learners took place, what kind of problem-solving tasks were present, and whether they had to be solved in a short or long period of time. Furthermore, we investigated what kind of tools were used (for an overview, see Appendix A1). The included studies investigated collaborative problem-solving activities in face-to-face, online, or hybrid settings, whereby online settings were the most frequent (

What to Measure? Conceptualization of Peer Collaboration

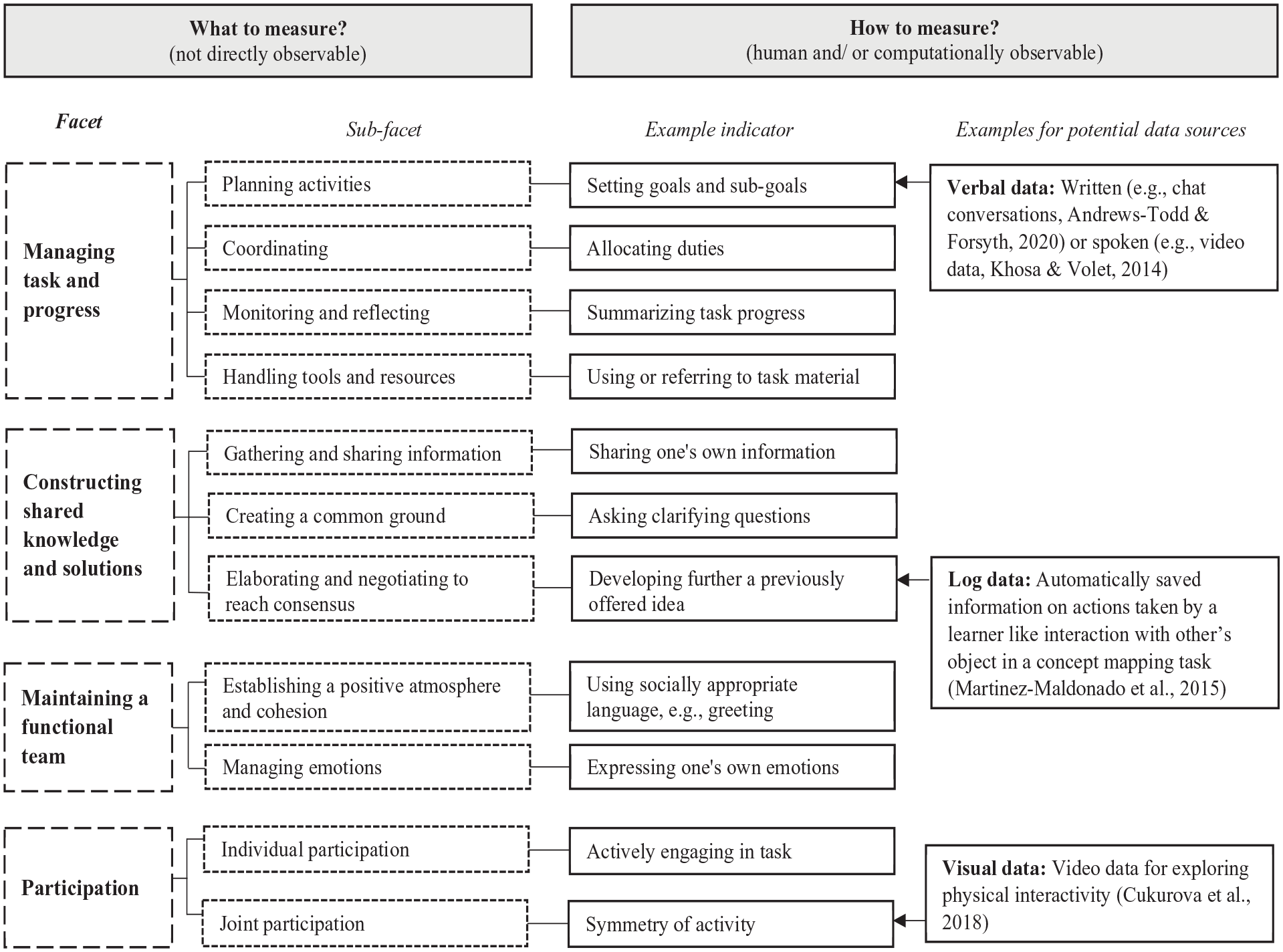

The inductive-deductive analysis approach led to an iterative formation of four broad categories that reflect the core facets of collaborative processes, including (1)

During analysis, it became clear that in most of the studies the frameworks presented were based on previous research and therefore served for a deductive approach (e.g., Sun et al., 2020; Wiltshire et al., 2018), while in some cases they were formed inductively from the study material (e.g., Cullen et al., 2013). Notably, two well-established frameworks were often referred to, namely the rating scheme by Meier et al. (2007, e.g., in Schneider et al., 2020) and the PISA framework (e.g., in Hao et al., 2017). Whereas the rating scheme by Meier et al. (2007) focuses on collaborative

Managing Task and Progress

This facet refers to actions and communications used to develop a plan and distribute roles. Furthermore, it includes observing and reflecting on the collaborative progress in order to make adjustments, as well as making use of tools and resources to solve the task.

Planning Activities

Planning activities encompasses a group’s involvement in gaining a comprehensive understanding of the task or issue at hand. It includes task clarification (Schoor & Bannert, 2012), managing the scope and content (Cullen et al., 2013; Shah & Leeder, 2016), determining goals and sub-goals (Andrews-Todd & Forsyth, 2020; Wiltshire et al., 2018), and developing a strategy of how the task will be approached (Shah & Leeder, 2016). This might be especially important in the beginning but remains relevant throughout the subsequent stages of collaboration, such as when planning the next steps to take (Hao et al., 2017) or deciding when to step forward (Seeber et al., 2013). Khosa and Volet (2014) further distinguish between high and low levels of planning, whereby a high level is not only proposing an approach on how to proceed but also involves conceptual justification for doing so.

Coordinating

Coordination encompasses a group’s action in dividing the work and arranging the task at hand. It includes task division (Meier et al., 2007; Schneider et al., 2020) in order to allocate subtasks, roles, and duties (Cullen et al., 2013; Schoor & Bannert, 2012). Moreover, the sub-facet also covers behaviors like accepting or refusing role distribution (Avry et al., 2020).

Monitoring and Reflecting

This sub-facet encompasses behaviors aimed at monitoring and assessing progress toward the goal, as well as reflecting on and adapting activities accordingly (e.g., Hmelo-Silver et al., 2008). This includes checking “what has been done, and what is still to be done” (Avry et al., 2020, p. 6) and therefore reflecting on what is required to solve the task (Khosa & Volet, 2014). To do so, the investigated groups in the included studies often summarized their current state (Hmelo-Silver et al., 2008; Vuopala et al., 2019). In addition, this sub-facet includes monitoring the remaining time for solving the task (Meier et al., 2007; Schneider et al., 2020). Some of the studies differentiated between individual and group monitoring (Hmelo-Silver et al., 2008; Schoor & Bannert, 2012).

Handling Tools and Resources

Handling tools and resources comprises behaviors related to technical skills as well as making use of resources to solve the problem, including how groups managed collaborative tool usage (Avry et al., 2020), whether groups used tools beneficially (Meier et al., 2007), and if they made use of helpful resources like course material (Arvaja, 2007).

Constructing Shared Knowledge and Solutions

Constructing shared knowledge and solutions refers to actions and communications used to pool all relevant information to solve the problem at hand and to ensure that all group members have a mutual understanding. Furthermore, it encompasses elaborating on each other’s ideas as well as challenging them through discussion.

Gathering and Sharing Information

This sub-facet entails behaviors aimed at acquiring all pertinent information necessary to address the task. It comprises sharing one’s own information and insights (e.g., Cullen et al., 2013; Hao et al., 2017; Soller, 2001) as well as requesting relevant information from others (e.g., Arvaja, 2007; Wiltshire et al., 2018; Zhao et al., 2014).

Creating a Common Ground

Creating a common ground refers to communicative actions that ensure “what has been said is understood” (Andrews-Todd & Forsyth, 2020, p. 6) which reflects a shared understanding. In line with Cukurova et al. (2018), the basis for having a common ground is the ability to understand the cognitions, behaviors, and attitudes of others. For example, in many of the included studies, this entails asking clarifying questions (e.g., Chanel et al., 2013; Hmelo-Silver et al., 2008) to verify the adequate understanding of others’ ideas (Andrews-Todd & Forsyth, 2020; Sun et al., 2020). This process is supported by explaining ideas in one’s own words (Volet et al., 2009) and using examples (Arvaja, 2007).

Elaborating and Negotiating to Reach Consensus

Elaborating and negotiating to reach consensus reflects behaviors aimed at finding a solution for the problem-solving task. Hence, it includes developing and proposing solutions (e.g., Seeber et al., 2013; Sun et al., 2020) as well as elaborating and negotiating. Elaboration involves the expansion of ideas and behaviors that signify co-construction, such as further developing previously offered information (Arvaja, 2007), deepening and broadening ideas (Chanel et al., 2013), and engaging with knowledge objects produced by others (Martinez-Maldonado et al., 2015). Negotiating becomes evident during an episode of incompatibility of ideas and opinions (Oliveira & Sadler, 2008). It is reflected by communications expressing agreement or disagreement with ideas (e.g., Andrews-Todd & Forsyth, 2020; Hao et al., 2017) and by arguing about them (e.g., Chanel et al., 2013; Soller, 2001), which often involves using justifications derived from one’s own conceptualizations or grounded beliefs (Arvaja, 2007; Hmelo-Silver et al., 2008). Elaboration and negotiation typically result in reaching consensus and making decisions (Khosa & Volet, 2014; Meier et al., 2007; Shah & Leeder, 2016).

Maintaining a Functional Team

Maintaining a functional team involves actions and communications reflecting a positive and respectful atmosphere during collaborative problem solving. Moreover, this facet encompasses behaviors related to managing motivation and emotion.

Establishing a Positive Atmosphere and Cohesion

In a positive atmosphere, “collaborative behaviour can flourish” (Cullen et al., 2013, p. 428). A positive atmosphere is established through socially appropriate language like greeting or apologizing for interruptions (e.g., Hao et al., 2017), listening actively (e.g., Isohätälä et al., 2018), showing awareness of the other group members (Cullen et al., 2013), acknowledgment (e.g., Oliveira & Sadler, 2008), expressing appreciation (e.g., Soller, 2001), and humor (e.g., Avry et al., 2020). In some of the included studies, different levels of acknowledgment were differentiated (Oliveira & Sadler, 2008), or reverse-coded indicators were proposed (“Makes fun of, criticizes, or is rude to others,” Sun et al., 2020, p. 4). Beyond that, this sub-facet includes showing and regulating motivation (Schoor & Bannert, 2012), which is related to group cohesion (Kent & Cukurova, 2020). In Zhao et al. (2014), cohesion was identified through the use of inclusive pronouns, for example.
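As a rough illustration of how a linguistic indicator such as inclusive pronoun use could be extracted from written group communication, the following sketch computes the share of inclusive pronouns among all words of a message. The pronoun list, the tokenization, and the function name are assumptions made for this example and do not reproduce the coding procedure of Zhao et al. (2014).

```python
import re

# Assumed list of English inclusive pronouns; a real coding scheme may differ.
INCLUSIVE_PRONOUNS = {"we", "us", "our", "ours", "ourselves"}


def inclusive_pronoun_rate(message: str) -> float:
    """Share of words in a message that are inclusive pronouns (0.0 for empty input)."""
    # Simple tokenization: lowercase runs of letters and apostrophes.
    tokens = re.findall(r"[a-z']+", message.lower())
    if not tokens:
        return 0.0
    hits = sum(token in INCLUSIVE_PRONOUNS for token in tokens)
    return hits / len(tokens)


# Hypothetical forum posts, for illustration only.
print(inclusive_pronoun_rate("We should share our notes"))
print(inclusive_pronoun_rate("I will do it"))
```

Such a surface count cannot distinguish, for example, the pronoun "us" from the abbreviation "US", so it would at most complement, not replace, human content coding.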

Managing Emotions

This sub-facet reflects communications and behaviors related to one’s own emotions as well as to the emotions of others. Emotion management comprises sharing one’s own emotions, including positive ones like enjoyment or satisfaction and negative ones like frustration (Hao et al., 2017; Shah & Leeder, 2016). It also encompasses the ability to perceive emotions in others and engage in communication about them (e.g., Chanel et al., 2013). In written communications like forum discussions, the use of emoticons can serve as an indicator for expressing emotions (Zhao et al., 2014).

Individual and Joint Participation

This facet reflects participation on the individual level (individual engagement) as well as the group level (equality) during the collaborative process. In collaborative problem solving, each group member should engage actively (Isohätälä et al., 2018; Meier et al., 2007), “making sure that they undertake their share of the work and feel personally responsible for the group’s success while others are also undertaking their share in completing the task” (Cukurova et al., 2018, p. 96). This, in turn, leads to joint participation, meaning the whole group is taking part in on-task behaviors (Isohätälä et al., 2018). It pertains to the presence of verbal contributions from multiple group members, contrasting with a predominant single speaker approach (Summers & Volet, 2010), or the absence of active involvement from a single learner (Martinez-Maldonado et al., 2015). Ideally, every group member should contribute equally, which is manifested in the symmetry of their engagement (Martinez-Maldonado et al., 2015; Meier et al., 2007).
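One simple way to quantify the symmetry of engagement is to compare each member’s share of contributions with an equal split. The sketch below uses normalized Shannon entropy for this purpose, which equals 1 when all members contribute equally and 0 when a single member contributes everything. This index is an assumption chosen for illustration; it is not the measure used in the cited studies, and the function name and counts are hypothetical.

```python
import math


def participation_symmetry(contributions: dict[str, int]) -> float:
    """Normalized Shannon entropy of contribution shares (1 = equal, 0 = one contributor)."""
    counts = list(contributions.values())
    if len(counts) < 2:
        raise ValueError("Symmetry requires at least two group members.")
    if any(count < 0 for count in counts):
        raise ValueError("Contribution counts must be non-negative.")
    total = sum(counts)
    if total == 0:
        raise ValueError("At least one contribution is required.")

    # Members without contributions add nothing to the entropy term.
    shares = [count / total for count in counts if count > 0]
    entropy = sum(share * math.log(1 / share) for share in shares)

    # Dividing by log(group size) scales the index to the range [0, 1].
    return entropy / math.log(len(counts))


# Hypothetical numbers of verbal contributions per group member.
balanced_group = {"A": 10, "B": 10, "C": 10}
dominated_group = {"A": 30, "B": 0, "C": 0}
print(participation_symmetry(balanced_group))
print(participation_symmetry(dominated_group))
```

Because the index only counts contributions, it captures the participation facet but says nothing about the content or quality of what is contributed.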

Further Insights Into the Collaborative Process

Some information on what was measured could not be assigned to the previous facets and was present in only a few studies. For example, Schoor and Bannert (2012) used the category appraisal of partner’s cognition, which is demonstrated by attempts to discern a group member’s thought process. Shah and Leeder (2016) addressed personal matters that could impact group work (e.g., p. 628, “A group member expresses having difficulty or fails to contribute because they are balancing other responsibilities or schedule conflicts”).

How to Measure? Measurement Approaches

With regard to the measurement approaches, we were interested in both how collaboration data were collected and how they were analyzed. We also checked what kind of additional data were collected in order to triangulate the results. For an overview of the whole category system created to answer RQ 2 (

Data Collection

With the exception of self-report data (diary study, questionnaire) in the studies by Shah and Leeder (2016) and Chanel et al. (2013), all measures of collaboration were based on observational data (visual, verbal, and log data). Eleven of the included studies captured visual data,

Data Analysis

Since most studies employed observational data, it is not surprising that the data have been analyzed primarily by content coding (

Several studies (

Synthesis: What, How, and Under Which Circumstances?

Based on the findings, previous frameworks (e.g., Meier et al., 2007; OECD, 2017) can be integrated into one. Given the review’s focus and our consideration of the studies’ context and settings, the definition of collaboration can be customized to align with the higher education context. Integrating previous definitions of collaborative learning, working, and problem solving (e.g., Bedwell et al., 2012; Patel et al., 2012; Roschelle & Teasley, 1995), we define collaboration in higher education as an evolving process whereby two or more students interact with each other as well as with tools and resources, within a single episode or series of episodes, working on a fixed task toward a shared goal. This process of co-elaboration is characterized by mutuality and equality of the students engaging in socio-cognitive (e.g., coordinating, creating a common ground) as well as socio-affective processes (e.g., establishing a positive atmosphere and cohesion). Ideally, the student group does not only find a joint solution for solving a problem, but each student profits through new knowledge and improved collaborative skills.

We used MAXQDA (VERBI, n.d.-a) to explore patterns and relations between RQ 1 (what) and RQ 2 (how). We also considered contexts and settings (e.g., task type, time). Notably, socio-affective aspects like

Taken together, Figure 2 serves as a compact overview of the derived integrative framework, including the proposed facets of collaboration as well as potential measurement approaches. Being a latent variable, collaboration and its facets are not directly observable (see Figure 2, illustrated by dashed lines) but must be made measurable by observable indicators (solid lines). Based on the reviewed literature, we derived examples of potential data sources for observing them. The figure is inspired by Wise et al. (2021), who discuss the main challenge of mapping (digital) traces to latent constructs in collaborative learning analytics. Since our included studies stemmed from various communities (such as CSCL and learning analytics), the integrative framework combines their respective strengths by being theoretically robust and presenting methodologically strong tools and techniques (cf. Wise et al., 2021). Given the selective and purposeful approach of our review, this framework represents best practice operationalizations for measuring collaboration.

Figure 2. Overview of the proposed integrative framework for measuring collaboration (inspired by Wise et al., 2021).

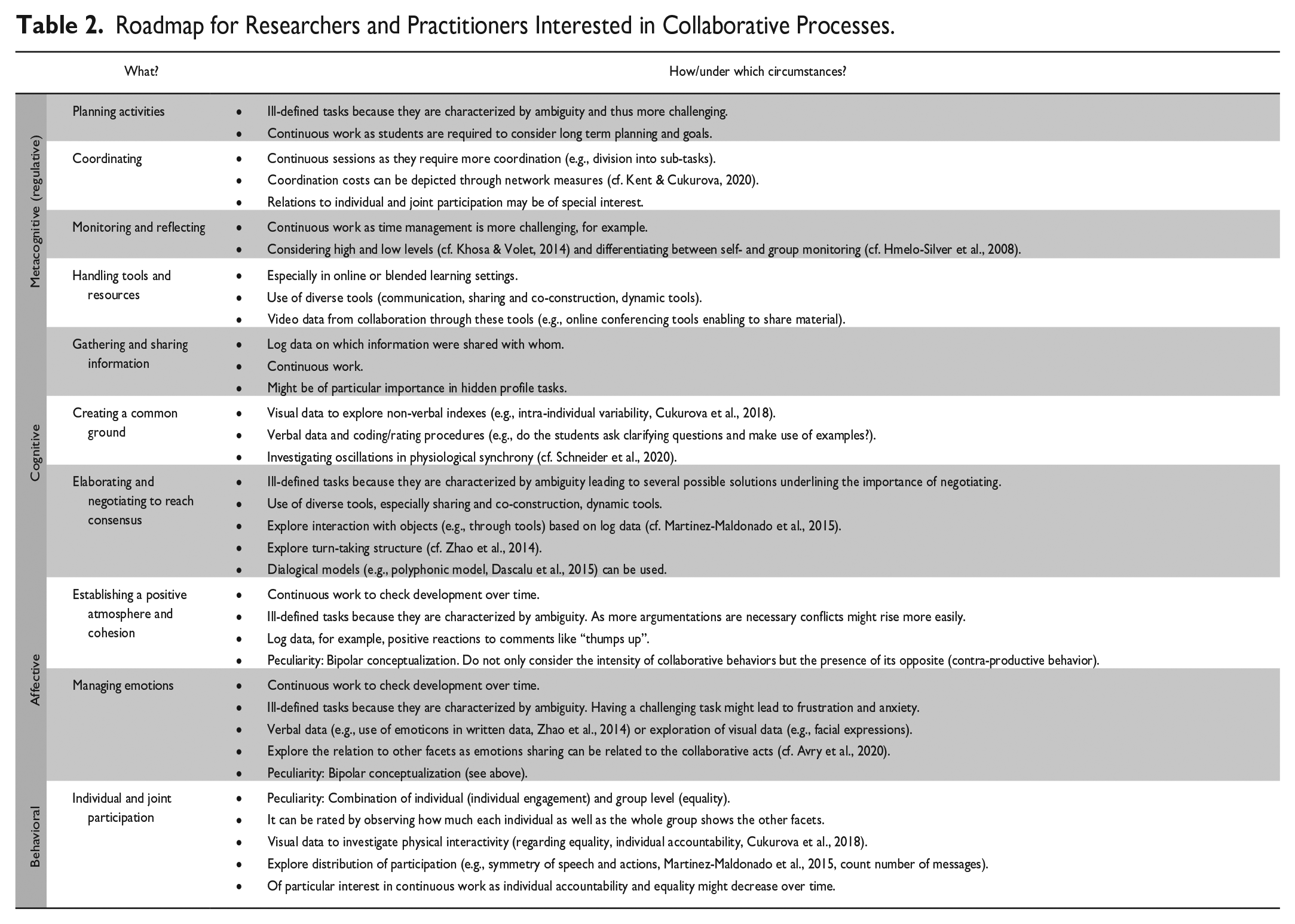

Additionally, detailed suggestions can be found in Table 2, which depicts a roadmap (including what to measure, how to measure, and under which circumstances) for researchers and practitioners interested in investigating collaborative processes. It includes recommendations on suitable tasks, tools, and techniques for each of the sub-facets of collaboration in higher education. This way, researchers and practitioners can design learning environments or study designs that fit the dimensions (metacognitive, cognitive, affective, behavioral) of collaboration in which they are interested. Conversely, the table also depicts which dimensions of collaboration can be appropriately addressed and measured when the context is predefined and not modifiable. For example, if interested in affective dimensions of collaboration, choosing continuous work as well as ill-defined tasks would be appropriate choices. First, having several work episodes allows monitoring the development of groups’ atmosphere and tone over time. Second, ill-defined tasks are characterized by ambiguity, creating a challenge, which might lead to emotional arousal. This way, researchers and practitioners could not only observe how groups’ atmosphere is established over time but also how emotions are handled. A peculiarity with the affective facets (e.g.,

Roadmap for Researchers and Practitioners Interested in Collaborative Processes.

Discussion

The results of this in-depth analysis of 28 systematically and carefully selected publications provide a detailed picture of both the conceptualization (RQ 1) and the measurement approaches (RQ 2) of collaboration. With regard to RQ 1, we iteratively formed four broad categories derived from the included publications that reflect facets of collaboration: (1)

Strengths and Limitations

This systematic literature review extends previous reviews by investigating and integrating two important domains of collaboration: conceptualization and measurement. It thereby sheds light on the two fundamental questions for researchers who are interested in collaborative processes (Meier et al., 2007). A notable strength of the present study is that the synthesis of several best practice approaches to measuring collaboration resulted in an integrative framework displaying descriptions of collaborative behaviors. To the best of our knowledge, this framework of collaborative indicators is the first that is based on a systematic literature review. It gives a holistic overview with detailed descriptions of sub-facets of collaboration, while focusing on a specific target group, namely adult peer learners. Additionally, it enriches prior work by selecting and depicting best practice approaches to measuring collaboration while linking the conceptualization and measurement of collaboration. Thus, it comprises helpful recommendations for researchers and practitioners interested in collaborative processes on what to measure, how to measure, and under which circumstances (cf. Table 2). With regard to the methodology, we followed the guidelines by Siddaway et al. (2019) as well as Gough et al. (2017). In particular, it is noteworthy that interrater reliability was assessed at each step of the screening process, including subsequent refinements.
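To illustrate what an interrater reliability check at a screening step can look like, the following is a minimal sketch that computes Cohen's kappa for two raters. It rests on assumptions: the review does not state here which reliability coefficient was used, Cohen's kappa is shown only because it is a common choice for two raters with categorical decisions, and the function name `cohens_kappa` as well as the include/exclude decisions are hypothetical illustration data, not data from the review.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: share of items on which both raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over labels.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        # Both raters used one identical label throughout; kappa is undefined,
        # so this sketch reports full agreement by convention.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical screening decisions for ten abstracts (illustration only).
rater_a = ["include", "include", "exclude", "exclude", "include",
           "exclude", "exclude", "exclude", "include", "exclude"]
rater_b = ["include", "exclude", "exclude", "exclude", "include",
           "exclude", "exclude", "include", "include", "exclude"]

kappa = cohens_kappa(rater_a, rater_b)
print(round(kappa, 3))  # 0.583
```

In this made-up example the raters agree on 8 of 10 abstracts (observed agreement 0.80), while agreement expected by chance is 0.52, which yields a kappa of about 0.58. Kappa therefore gives a more conservative picture than raw percentage agreement.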

Besides these strengths, the study also has some noteworthy limitations. First, the number of included studies (

Implications for Research and Practice

The integrative framework developed (see Figure 2) provides a solid basis but also has the potential to grow or be adapted through further research. As some of the categories show overlaps (e.g., using humor can be classified as

In line with Baker et al. (2013), we call for greater consideration of socio-affective processes during group work. These processes are important because research suggests that affective support (e.g., appreciation of behaviors, appeals of cheering) and affective tone (e.g., shared feelings of excitement) within teams have the potential to raise motivation (Hüffmeier & Hertel, 2011; Hüffmeier et al., 2014) and performance (Paulsen et al., 2016). Conversely, a negative climate might lead to negative emotions and lower satisfaction among group members (Bakhtiar et al., 2018). These findings also underline the importance of considering the bipolar conceptualization of the affective dimensions of collaboration (cf. Table 2). Furthermore, the study by Ollesch et al. (2020) investigating group awareness attributes showed that students weighted the visualization of friendliness higher than that of participation. This, in turn, raises the question of the influence of group familiarity. As this aspect was not covered in our study, it should be considered in future research. Beyond that, further factors that potentially influence collaborative processes, such as collective task value (S. L. Wang & Hong, 2018) or learner readiness (Xiong et al., 2015), could also be investigated. Since our findings suggest that some sub-facets are of particular importance in certain group phases (e.g.,

Our framework is context-specific (i.e., higher education) and particularly suited for researchers and practitioners interested in the collaborative processes of learning or student teams. How can our findings assist higher education professionals (e.g., professors, teaching assistants) in fostering collaboration among students? Since students often do not feel well prepared to work collaboratively (Wilson et al., 2018), higher education professionals are encouraged to integrate the detailed descriptions and context-related example indicators into their teaching to help students understand what collaboration means. For students, deeper insight into team dynamics and into how individual contributions shape a team's achievements is important not only because of the heightened demand for 21st-century skills in the job market, but also in light of the challenges and transformations of student teamwork during the COVID-19 pandemic, as outlined by Wildman et al. (2021). A well-differentiated rating scheme that allows a clear classification and interpretation of collaborative behavior would help students self-assess and reflect upon their collaboration. We believe that Behaviorally Anchored Rating Scales (BARS) might be a promising approach in this regard, as they provide numerous advantages and focus on the quality rather than the frequency of behavior (for an overview, see Georganta & Brodbeck, 2020). BARS, which include behavioral anchors representing different quality levels, have been found beneficial in similar areas of application (e.g., self- and peer-evaluation of team member effectiveness; Ohland et al., 2012). Considering that students at higher educational levels are often less supported and more expected to self-regulate their (collaborative) learning, integrating self- and peer-evaluation as well as reflections based on BARS could hold promise in fostering their collaboration.
Furthermore, we aim to heighten the awareness of higher education professionals regarding the selection of suitable tasks, tools, and time constraints during a collaborative process. Additionally, we seek to sensitize them to the value of providing feedback on students’ collaboration, wherever feasible. Multiple sources can be used to observe student collaboration; however, our findings underline the limitations of solely relying on quantitative data and the importance of using multi-layered measurement approaches.

Considering the trend toward self-organized, agile teams characterized by minimal or absent hierarchical structures, alongside the growing organizational emphasis on cultivating a culture of lifelong learning, our framework could prove valuable within the organizational context as well. Researchers and practitioners are encouraged to explore the suitability of these indicators for collaboration within professional working environments. Here, it is important to note that practitioners often need simpler and less obtrusive measures than researchers (Salas et al., 2017) and that implementation often depends on corporate policy and strategy. Considering the drive for higher education institutions to cultivate the socio-relational skills of the forthcoming workforce, the framework could hold particular significance for practitioners within human resources departments. It could potentially be integrated into processes such as personnel selection. Additionally, it could be used as a source for feedback and team trainings, as well as for implementing joint reflections in the field of personnel development.

Conclusion

This systematic literature review synthesized research focusing on best practices in measuring collaborative processes in higher education. An integrative framework containing diverse indicators of collaboration was deduced from the included articles. The insights of our study aim to enrich multidisciplinary research and therefore have significance for several communities, including CSCL, learning analytics, and educational data mining. Moreover, the findings support researchers and practitioners (e.g., higher education professionals) who want to understand, measure, and foster student collaboration. This review can serve as a guideline when defining

Supplemental Material

Supplemental material, sj-docx-1-sgr-10.1177_10464964231200191 for Conceptualization and Measurement of Peer Collaboration in Higher Education: A Systematic Review by Verena Schürmann, Nicki Marquardt and Daniel Bodemer in Small Group Research

Appendix

Overview of Included Studies.

| Study number | Authors and date | Source | Short description | Descriptive data |
|---|---|---|---|---|
| 1 | Andrews-Todd and Forsyth (2020) | Computers in Human Behavior | Investigated CPS skills in a digital environment by using a CPS ontology which was based on an extensive literature review of prior frameworks (e.g., Meier et al., 2007). | Small groups (3) |
| 2 | Arvaja (2007) | Computer-Supported Collaborative Learning | Explored similarities and differences in groups’ collaborative knowledge construction activity primarily based on communicative functions and contextual resources. | Small groups (3) |
| 3 | Avry et al. (2020) | Frontiers in Psychology | Investigated CPS using a coding scheme based on prior research (e.g., Baker et al., 2007). Moreover, they collected data on emotion sharing to discover relations between emotion sharing and collaborative acts. | Dyads |
| 4 | Chanel et al. (2013) | Proceedings of the Affective Computing and Intelligent Interaction (ACII) | Collected self-reports using a questionnaire as well as physiological data on groups’ collaboration in order to investigate if physiological signals can predict collaborative processes. | Dyads |
| 5 | Cukurova et al. (2018) | Computers & Education | Collected physical interaction data as well as human ratings on groups’ collaboration (based on Meier et al., 2007) in order to investigate if CPS competence levels can be derived from learners’ non-verbal behavior data. | Small groups (3) |
| 6 | Cullen et al. (2013) | English Language Teaching Journal | Examined the processes of online collaborative learning through an (inductive) qualitative analysis of the postings made in a Wiki. | Small groups (4–5) |
| 7 | Dascalu et al. (2015) | Computer-Supported Collaborative Learning | Present an approach to measure collaboration based on dialogical and social knowledge-building models. The results of an automated analysis based on learning analytics were triangulated with human rater judgements on collaboration. | Small groups (4–5) |
| 8 | Gašević et al. (2019) | Computers in Human Behavior | Combined social network analysis and epistemic network analysis to analyze collaborative learning in a massive open online course. | Learning Community |
| 9 | Hao et al. (2017) | Innovative Assessment of Collaboration (Book) | Present the Collaborative Science Assessment Prototype (CSAP). The approach was developed to assess collaborative problem-solving skills in the domain of science. | Dyads |
| 10 | Hmelo-Silver et al. (2008) | Instructional Sciences | Used Chronologically-Ordered Representations of Discourse and Tool-Related Activity (CORDTRA) diagrams to understand collaborative learning processes in a problem-based class. | Small groups (5–6) |
| 11 | Isohätälä et al. (2018) | Learning, Culture and Social Interaction | Investigated socio-emotional processes during argumentation in collaborative learning interaction and conducted an exemplary micro-level case analysis of one group. | Small groups (3–4) |
| 12 | Kent and Cukurova (2020) | Journal of Learning Analytics | Present the Collaborative Learning as a Process (CLaP) approach which uses social network analysis to investigate collaboration (and especially the balance between interactivity gains and coordination costs) in learning communities. | Two communities of students; Learning Community |
| 13 | Khosa and Volet (2014) | Metacognition Learning | Explored similarities and differences in groups’ collaborative learning primarily based on cognitive and metacognitive regulation processes (based on Volet et al., 2009). | Small groups (5–6) |
| 14 | Martinez-Maldonado et al. (2015) | International Journal of Human-Computer Studies | Present the Tabletop-Supported Collaborative Learning (TSCL) model which can classify group work, based on a Best-First decision tree that considers verbal and physical activity. Results were triangulated with human ratings based on Meier et al. (2007). | Small groups (3) |
| 15 | Meier et al. (2007) | Computer-Supported Collaborative Learning | Developed and evaluated a rating scheme for assessing the quality of computer-supported collaboration processes. | Dyads |
| 16 | Oliveira and Sadler (2008) | Journal of Research in Science Teaching | Examined cognitive and social processes in group interactions that shape collaborative learning in a face-to-face science class. | Small groups (3–4) |
| 17 | Schneider et al. (2020) | Computer-Supported Collaborative Learning | Investigated the relationship between measures of physiological synchrony and collaborative learning whereby collaboration was measured using the rating scheme by Meier et al. (2007). | Dyads |
| 18 | Schoor and Bannert (2012) | Computers in Human Behavior | Explored collaborative activities focusing on social regulation during collaborative learning and their relationship to group performance. | Dyads |
| 19 | Seeber et al. (2013) | Group Decision and Negotiation | Applied the COllaboration PRocess Analysis technique (CoPrA) for measuring team knowledge building. The underlying framework for analysis was the one by Fiore et al. (2010). | Small groups (3) |
| 20 | Shah and Leeder (2016) | Journal of Information Science | Used a diary study approach to investigate the collaborative work among students based on the C5 model of collaboration by Shah. | Dyads/Small groups (3) |
| 21 | Soller (2001) | International Journal of Artificial Intelligence in Education | Presents a model of collaborative learning which describes potential indicators of effective collaborative learning and therefore can aid the analysis of collaborative learning conversation and activity. | Small groups (4–5) |
| 22 | Summers and Volet (2010) | European Journal of Psychology of Education | Examined the engagement in high-level collaborative learning and its relationship with individuals’ cognitions based on the framework by Volet et al. (2009). | Small groups (5–6) |
| 23 | Sun et al. (2020) | Computers & Education | Present a generalized CPS competency model with multiple verbal and non-verbal indicators. The authors used principal component analysis to investigate whether their empirical data aligned with the theorized model. | Small groups (3) |
| 24 | Viswanathan and VanLehn (2018) | IEEE Transactions on Learning Technologies | Used machine learning to induce a detector for classifying collaborations. The results were compared with human codings. | Dyads |
| 25 | Volet et al. (2009) | Learning and Instruction | Investigated collaborative learning with the help of a situative framework combining the constructs of social regulation and content processing. | Small groups (6) |
| 26 | Vuopala et al. (2019) | Learning, Culture and Social Interaction | Investigated how students engaged in knowledge co-construction activities and task-related monitoring in collaborative learning. | Small groups (3–4) |
| 27 | Wiltshire et al. (2018) | Cognitive Science | Applied a sliding window entropy technique to detect problem-solving phase transitions during collaborative processes which were coded based on the framework by Rosen. | Dyads |
| 28 | Zhao et al. (2014) | British Journal of Educational Technology | Examined collaboration within asynchronous computer conferencing by conducting content analyses of discussion protocols. | Small groups (3) |

Twelve groups of four to five students completed the task but the article focuses on the analysis of the interactions posted by one of the groups.

The context of the study was a university course but the article focuses on the analysis and comparison of selected groups.

The article consists of two studies (development of a rating scheme and its evaluation). We focused on the evaluation study.

The article consists of two studies (middle school students and university students). We focused on the study having university students as participants.

Acknowledgements

We would like to thank Kira Wolff, Louise Küry, Simon Heintzen, and Ace Lat for their valuable support, especially when discussing study selection and assessing interrater reliability.

Author’s Note

Verena Schürmann is also affiliated with Research Methods in Psychology – Media Based Knowledge Construction, University of Duisburg-Essen, Duisburg, Germany.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

