Abstract

Feedback is a cornerstone of human development. Not surprisingly, it plays a vital role in team development. However, the literature examining the specific role of feedback in virtual team effectiveness remains scattered. To improve our understanding of feedback in virtual teams, we identified 59 studies that examine how different feedback characteristics (content, source, and level) impact virtual team effectiveness. Our findings suggest that virtual teams benefit particularly from feedback that (a) combines performance-related information with information on team processes and/or psychological states, (b) stems from an objective source, and (c) targets the team as a whole. By integrating the existing knowledge, we point researchers in the direction of the most pressing research needs, as well as the practices that are most likely to pay off when designing feedback interventions in virtual teams.

In the post-pandemic world, organizations and workers alike are grappling with the reality of collaborative forms of remote work and the question of how to work effectively in virtual teams (i.e., teams composed of individuals who work interdependently toward a common goal, sometimes under conditions of geographic dispersion, while strongly relying on electronic communication technologies; e.g., Gilson et al., 2015; Raghuram et al., 2019). Several polls indicate that approximately 60% to 70% of workers will prefer to remain virtual to some degree in their work arrangements post-pandemic (Brenan, 2020; IBM, 2020; Ozimek, 2020), and many organizations are planning to make extensive use of virtual teams (e.g., Dropbox Team, 2020; Hartmans, 2021). Therefore, a clear and comprehensive understanding of the factors that contribute to virtual team effectiveness is critical.

One particular challenge faced by virtual teams is the inherent lack of information, especially team feedback (McLarnon et al., 2019), which is defined as information provided to a team to guide its activities (Gabelica et al., 2012). Generally speaking, feedback allows team members to gain and maintain knowledge regarding current states and situations (Carter et al., 2019; DeShon et al., 2004; Geister et al., 2006), make adjustments for guiding future actions, and implement new strategies based on what was learned (e.g., Carter et al., 2019; Donia et al., 2018). Feedback is thus an important vehicle to promote regulatory activity in teams (e.g., Hackman, 1987; Parker & Grote, 2020). In virtual teams, feedback appears to be particularly relevant because observational opportunities are fewer than when teams work in a physically co-located and high-visibility workspace (e.g., Geister et al., 2006; McLarnon et al., 2019). Accordingly, feedback provides virtual teams with information which may otherwise not have been available to them (Handke et al., 2020). Therefore, the effective conveyance and use of feedback may be a critical lever for organizations, managers, and peers for improving virtual team effectiveness.

Not surprisingly, there is a substantial body of empirical studies investigating the effects of feedback in virtual teams. These studies suggest that feedback in virtual teams can foster team processes (e.g., team learning, Peñarroja et al., 2015), improve team cognition (e.g., shared mental models, Ellwart et al., 2015), and enhance team performance (Jung et al., 2010). What is still puzzling, though, is that the general feedback literature also suggests that under some conditions, feedback is less beneficial or even harmful (DeNisi & Kluger, 2000; Kluger & DeNisi, 1996). Therefore, uncovering feedback characteristics that work best and minimize harm is equally important. The general feedback literature has attempted to do so through various classifications regarding specific feedback characteristics (e.g., comparing feedback on team processes and states versus feedback on team outputs, individual versus team-level feedback, and subjective versus objective feedback; Gabelica et al., 2012, 2014; London & Sessa, 2006). However, despite the clear potential of feedback to serve as a critical source of information and self-regulation in virtual teams, an integration of this literature and, as a result, a classification of feedback characteristics relevant to virtual team effectiveness is still missing. Therefore, a literature review that consolidates the existing evidence on feedback in virtual teams may be beneficial.

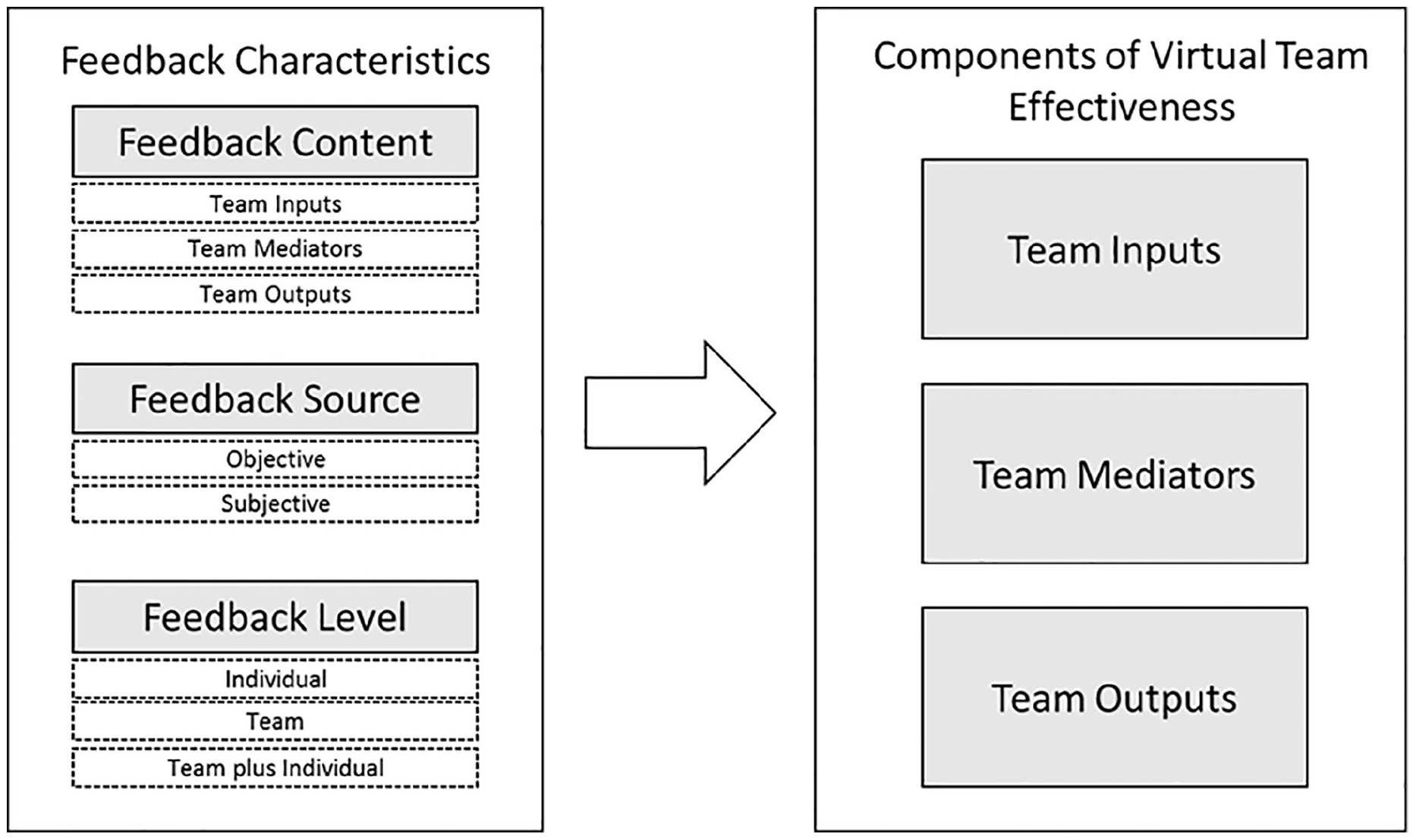

Here, we examine the existing literature on feedback in virtual teams with the goal of investigating whether and how feedback affects virtual team effectiveness as a function of specific feedback characteristics. To do so, we integrate existing feedback classifications (e.g., Alvero et al., 2001; Gabelica et al., 2012; London & Sessa, 2006) with input-mediator-output (IMO) models of team effectiveness (Campion et al., 1993; Ilgen et al., 2005; Marks et al., 2001; Mathieu et al., 2008, 2019). Specifically, we derive three feedback characteristics that we believe to offer the most parsimonious classification of feedback in virtual teams, using a combination of inductive and deductive approaches: (1) feedback content (i.e., giving feedback on team inputs, mediators, or outputs); (2) feedback source (i.e., feedback from subjective or objective sources); and (3) feedback level (i.e., individual level, team level, or as a combination). We use this classification to unpack the empirical effects of feedback on virtual team effectiveness (see Figure 1). Notably, we use the IMO framework not only to classify feedback content but also to disentangle different components of virtual team effectiveness. We believe this guiding framework will contribute to building a more comprehensive understanding of how feedback may be used to enhance virtual team effectiveness. Moreover, our consolidation of the extant literature promises to identify fruitful paths for future research and provide the most useful state of the science for practitioners in light of an increasingly virtual post-pandemic workforce.

Figure 1. Review framework.

In the remainder of this article, we first describe the literature search process, offer a brief definition of the focal constructs, and outline the framework for the synthesis of the key findings. We proceed to illustrate the form and effects of feedback by summarizing the reviewed studies by feedback characteristic. As team virtuality represents the embedding context in our framework, the findings we present are focused on the effects of feedback in virtual teams.

Review Methodology and Framework

Search Process

We conducted a two-step literature review (Arksey & O’Malley, 2005) integrating research on virtual teams and team feedback. In the first stage, the Scopus database was searched within social science, psychology, computer science, business, and multidisciplinary subject areas for the following keywords: team/group AND virtual/dispersion/remote/tele*/distance/media use/computer-mediated/computer-supported/distributed/online/electronic brainstorming/ICT AND feedback/awareness/peer assessment/debrief.
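As an illustration, the three keyword groups listed above can be combined into a single Boolean search string. The following is a minimal sketch only: the function names `quote` and `build_query` are hypothetical, the quoting of multi-word phrases is an assumed convention, and the exact Scopus field syntax and subject-area filters used in the review are not reported here and are therefore not reproduced.

```python
# Keyword groups as listed in the search description: terms within a group
# are alternatives (OR) and the three groups must co-occur (AND).
KEYWORD_GROUPS = [
    ["team", "group"],
    ["virtual", "dispersion", "remote", "tele*", "distance", "media use",
     "computer-mediated", "computer-supported", "distributed", "online",
     "electronic brainstorming", "ICT"],
    ["feedback", "awareness", "peer assessment", "debrief"],
]


def quote(term: str) -> str:
    """Wrap multi-word phrases in double quotes (an assumed convention)."""
    return f'"{term}"' if " " in term else term


def build_query(groups: list[list[str]]) -> str:
    """Join terms with OR inside each group and join the groups with AND."""
    clauses = ["(" + " OR ".join(quote(t) for t in group) + ")" for group in groups]
    return " AND ".join(clauses)


query = build_query(KEYWORD_GROUPS)
print(query)
```

Keeping the groups as data rather than as one hand-written string makes it easy to document or extend the feedback-related terms (e.g., awareness, peer assessment, debrief) without retyping the whole query.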

We expanded the keywords regarding team feedback to studies on (group) awareness, peer assessments, and debriefs, as these constitute related concepts that are widely studied. We directly excluded studies that were not based on adults. This was to ensure that our findings would be sufficiently distinct from other research domains, such as human-autonomy teams (HATs; i.e., teams of humans and intelligent, autonomous agents; O’Neill, McNeese et al., 2020). Additionally, the term team and/or group had to occur in the publication title (and not only in the abstract) to guarantee a strong group/team focus. In the second stage of the review process, we searched manually for articles that dealt with feedback in virtual teams but did not indicate this in the title and/or abstract.

This search strategy generated 1,338 returns in Stage 1. We screened articles based on the title and abstract for research articles meeting three criteria. First, we only included studies reporting on empirical research. Second, a study had to include topics mentioning both team virtuality and team feedback. Specifically, we looked for studies reporting on how variations in feedback were associated with variations in the respective dependent variables. Third, studies had to have a team-level focus, meaning that they did not necessarily have to perform their analyses on the team level, but that the focal outcome(s) were relevant to team functioning and effectiveness. For instance, factors at the individual level, such as an individual team member’s contribution to the team, their helping behaviors, or their performance toward the collective outcome were considered relevant to the team (see Mathieu et al., 2019).

Two of our authors double-coded a subsample of 200 of the 1,338 studies (i.e., ~15%). The inter-coder reliability was κ = .75, reflecting a high level of agreement regarding decisions to include an article or not (values of .61 ≤ κ ≤ .80 reflect substantial point-by-point agreement; Landis & Koch, 1977). Accordingly, the remaining 1,138 studies were coded independently for suitability by the first author. This initial screening process revealed 79 studies for further inspection.
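For readers less familiar with the statistic, Cohen's κ corrects the observed proportion of agreement for the agreement expected by chance, κ = (p_o − p_e) / (1 − p_e). The sketch below shows this computation together with the Landis and Koch (1977) verbal benchmarks. The function names and the ten include/exclude decisions are hypothetical illustrations, not the coding data from this review.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders rating the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must rate the same non-empty set of items.")
    n = len(coder_a)
    # Observed agreement: share of items on which both coders decide alike.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions per category.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    categories = set(freq_a) | set(freq_b)
    expected = sum((freq_a[c] / n) * (freq_b[c] / n) for c in categories)
    if expected == 1.0:
        raise ValueError("Kappa is undefined when chance agreement equals 1.")
    return (observed - expected) / (1 - expected)


def landis_koch_label(kappa):
    """Verbal benchmark for kappa following Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    for upper, label in [(0.20, "slight"), (0.40, "fair"),
                         (0.60, "moderate"), (0.80, "substantial")]:
        if kappa <= upper:
            return label
    return "almost perfect"


# Hypothetical screening decisions for ten articles (illustration only).
coder_a = ["include"] * 4 + ["exclude"] * 6
coder_b = ["include"] * 3 + ["exclude"] * 6 + ["include"]
toy_kappa = cohens_kappa(coder_a, coder_b)
print(round(toy_kappa, 2), landis_koch_label(toy_kappa))
print(landis_koch_label(0.75))  # the value reported for the double-coded subsample
```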

In a next step, we analyzed the full articles of the remaining 79 studies to ensure they still satisfied the inclusion criteria. This process revealed a further 24 studies that did not meet our criteria. For example, some did not occur in a collaborative setting (e.g., individuals playing first-person shooter games), and others constituted duplicate studies (e.g., conference proceedings). As a result, Stage 1 yielded 55 studies that we considered suitable for our review. In Stage 2, we conducted a further manual search to find studies that complied with our inclusion criteria by looking at (a) a review on work design in virtual teams (which included feedback as one of its core variables), and (b) literature on team feedback which had been conducted in a virtual context but did not explicitly mention this in title or abstract. Four additional studies were included when it became apparent, only by reading the full article, that they dealt with feedback in virtual teams and this could not have been picked up by our search query in Stage 1. Accordingly, Stage 2 resulted in a final list of 59 studies that formed the basis of our review (see Appendix).
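The counts reported across the two stages can be tallied as a simple consistency check. The variable names below are illustrative; the numbers are those stated in the text.

```python
# Stage 1: database search and title/abstract screening.
stage1_returns = 1338
double_coded = 200
single_coded = stage1_returns - double_coded  # coded by the first author alone

retained_after_screening = 79
excluded_after_full_text = 24
stage1_included = retained_after_screening - excluded_after_full_text

# Stage 2: manual search.
stage2_added = 4
final_sample = stage1_included + stage2_added

print(single_coded, stage1_included, final_sample)
```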

Sample overview

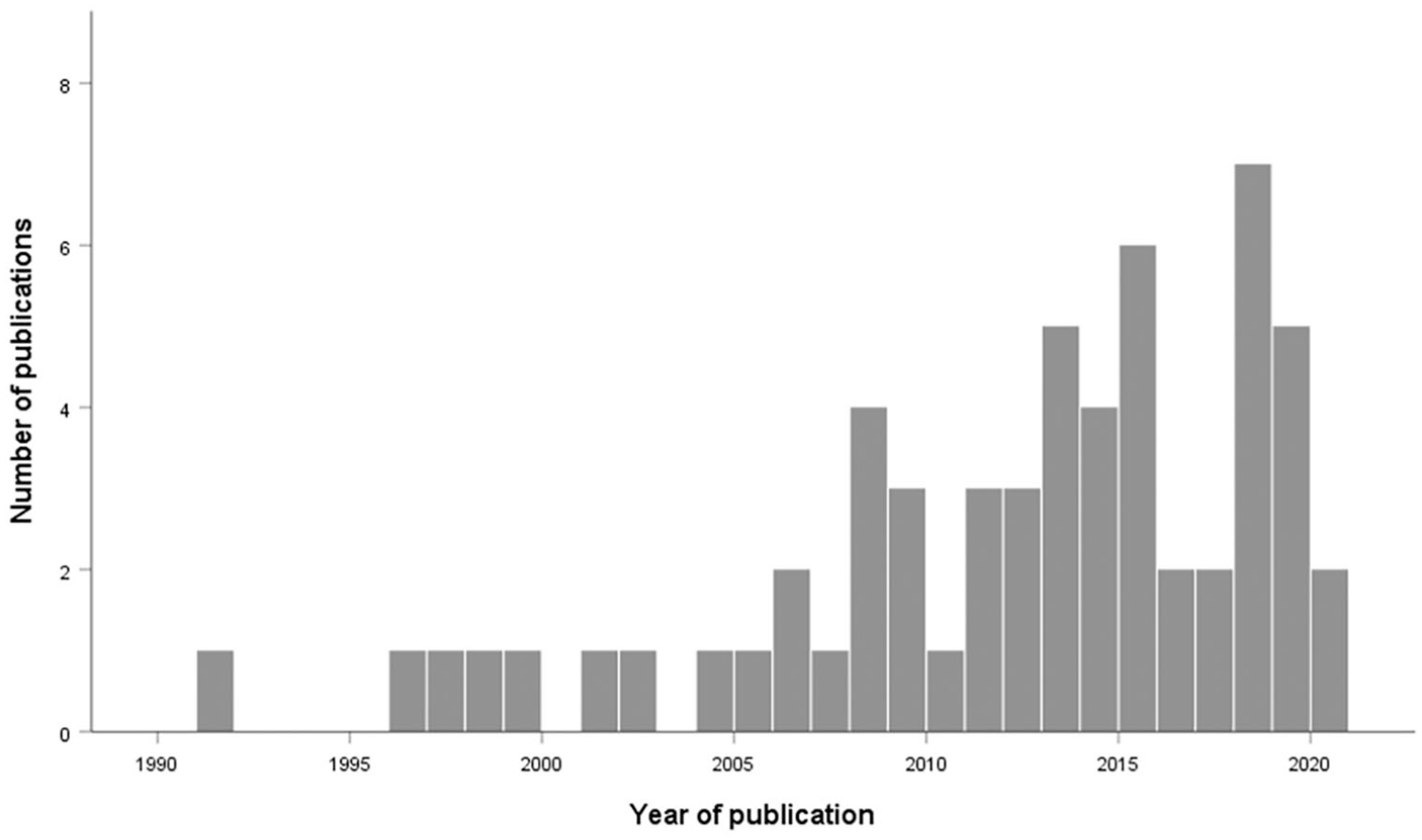

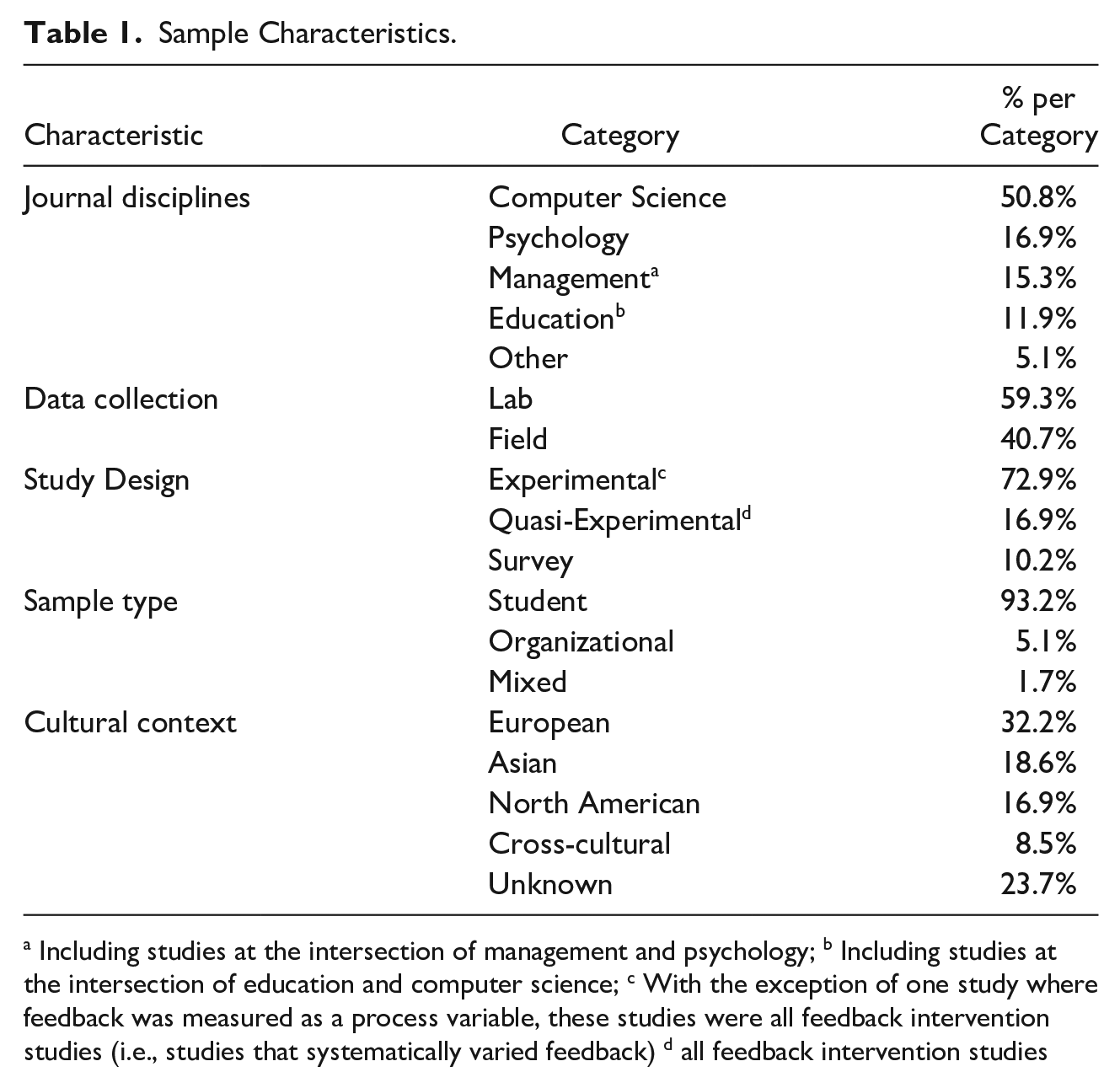

The 59 studies in our final review sample were published between 1991 and 2020. Figure 2 depicts a histogram of the reviewed publications sorted by year, showing an increasing trend over time. Leaning on Gibbs et al. (2017), we classified our studies based on the following sample characteristics: journal discipline, data collection (field vs. lab), study design (experimental vs. quasi-experimental vs. survey), sample type (organizational vs. student), and the geographic context within which these studies were conducted. See Table 1 for an overview of these sample characteristics.

Figure 2. Distribution of the reviewed feedback and team virtuality studies by year of publication between 1990 and 2020.

Table 1. Sample Characteristics.

Note. a Including studies at the intersection of management and psychology; b Including studies at the intersection of education and computer science; c With the exception of one study where feedback was measured as a process variable, these studies were all feedback intervention studies (i.e., studies that systematically varied feedback); d All feedback intervention studies.

The reviewed studies represented various disciplines, with the majority (k = 30 studies) originating in computer science. Of the 59 studies, 52 were feedback intervention studies (i.e., studies that manipulated feedback). The remaining seven studies (six field survey studies and one lab experiment) looked at the effects of feedback but did not systematically manipulate it. Of the intervention studies, 34 were lab studies and 18 were field-based quasi-experiments. We defined lab studies as experiments (i.e., independent variables are systematically manipulated; participants are randomly assigned to conditions) with ad-hoc groups working within a setting under the researchers’ control, often with the unintended consequence that the team/group activities are somewhat unrealistic (e.g., Podsakoff & Podsakoff, 2019; Purvanova, 2014). In contrast, field studies investigated target behaviors in the teams’ natural environment, with teams working on complex, cross-functional issues over longer periods (e.g., Alvero et al., 2001; Purvanova, 2014). Following Gibbs et al. (2017), we coded classroom studies (i.e., studies where students work on projects as part of regular, graded class activities) as field studies because these projects often lasted several weeks or months with teams engaging in a series of complex and meaningful tasks. Field studies could be non-experimental, experimental, or quasi-experimental (see Podsakoff & Podsakoff, 2019).

Only four of the reviewed studies drew on organizational samples (with one study consisting of both students and employees). Student teams are typically artificially composed and work on the task for course credit or in the context of class assignments. In contrast, organizational teams are teams of employees working on ongoing, paid work assignments (Gibbs et al., 2017; Purvanova, 2014). In our review sample, the organizational teams did not necessarily consist of members who usually worked together; some potentially came together from various organizations to participate in the study. For instance, in two of these studies, employees participated in the experiment because it was embedded into a developmental seminar (Hiltz et al., 1991; Michinov & Primois, 2005). With regard to the cultural context, the majority (k = 19) of the reviewed studies were conducted in European countries. Only five studies drew on cross-cultural samples, such as in the context of the X-Culture international consulting competition (McLarnon et al., 2019; Tavoletti et al., 2019).

Finally, the reviewed studies showed large variations regarding their sample sizes. On the team/group level, sizes ranged from only two teams to as many as 1,839 teams (M = 85.36; Md = 36; SD = 259.42). Only five studies analyzed interaction effects among different levels (or conditions) of virtuality and feedback. The remainder investigated feedback in virtual teams only. Team-level sample sizes, setting (i.e., virtual; virtual vs. face-to-face), study design, and data collection are noted for each study in the Appendix.

Key Definitions

Team virtuality

Even though team virtuality has been considered a multidimensional construct, involving dimensions such as technology use, geographic dispersion, and national diversity (e.g., Foster et al., 2015; Schulze & Krumm, 2017), the most common and distinct feature in our review is the extent to which teams have to rely on communication technologies (e.g., Ganesh & Gupta, 2010; Hoch & Kozlowski, 2014; Kirkman & Mathieu, 2005). Accordingly, technology reliance constitutes the conceptual foundation adopted in this review (i.e., virtual teams rely strongly or even exclusively on technologies to communicate and coordinate their actions, Dixon & Panteli, 2010; Kirkman & Mathieu, 2005). For the sake of simplicity, we contrast virtual teams against traditional or face-to-face teams, as this approach is consistent with the dichotomy that dominates the extant literature (see e.g., Handke et al., 2020; Schulze & Krumm, 2017).

Team feedback

Team feedback is commonly defined as information provided to a team to increase team performance (Geister et al., 2006; see also Earley et al., 1990; Kluger & DeNisi, 1996). Moreover, team feedback constitutes information that is given in a team setting (to team members individually or the team as a whole), as opposed to feedback considering individuals independently of the collaborative context (see also Gabelica et al., 2012). However, this widely applicable definition gives little indication as to what type of information constitutes feedback and how this may contribute to an improvement in team performance. As posited by self-regulation theory (Carver & Scheier, 1998), human behavior is guided by the desire to reduce discrepancies between current and desired states (i.e., goals). In this context, providing teams with feedback enhances a shared awareness of their current state, which is then compared to the team’s goals or desired states, in theory resulting in a coordinated effort to reduce discrepancies (Kozlowski et al., 1996; Park et al., 2013; Schmidt & DeShon, 2007; Vancouver et al., 2010).

To reduce these discrepancies, teams benefit from information not only about the outcomes of their activities but also about antecedent factors (i.e., input variables) that constrain or enable the team’s interactions, as well as about the processes and psychological states that convert inputs to outcomes (i.e., mediating variables; e.g., Mathieu et al., 2008, 2017, 2019). More specifically, we define team feedback as information provided to the team regarding events, features, processes, and psychological states relative to task completion or teamwork, as well as their resulting outcomes, to guide the team’s future activities (see also Gabelica et al., 2012; Nadler, 1979).

Team effectiveness

Team effectiveness is typically studied using IMO frameworks, which distinguish between team inputs, team mediators, and team outcomes (e.g., Ilgen et al., 2005; Mathieu et al., 2008; McGrath, 1984). Team inputs are defined as “antecedent factors that enable and constrain members’ interactions” (Mathieu et al., 2019, p. 18; e.g., team work design). Team mediators “explain why certain inputs affect team effectiveness” (Ilgen et al., 2005, p. 519) and pertain to team processes (i.e., team interactions, e.g., communication) as well as team emergent states (i.e., psychological states correlating with team interactions, e.g., cohesion). Team outputs are the results as well as the by-products of team activities, which can encompass performance as well as affective reactions (e.g., team viability; see Mathieu et al., 2008). The IMO model serves as guidance for developing our review framework, which we describe further below.

Review Framework

We organized our findings into an overall guiding framework. Figure 1 shows that team feedback affects virtual team effectiveness. Specifically, our framework allows us to classify the reviewed studies based on (1) their feedback characteristics, (2) an overall assessment of feedback effectiveness, and (3) their outcomes in terms of components of virtual team effectiveness. In the following, we present the different elements of our framework and explain our coding process.

Feedback characteristics

As feedback can vary in certain characteristics that can influence the effect feedback has on team effectiveness, studies were classified based on three characteristics identified in the feedback literature: feedback content, source, and level (e.g., Alvero et al., 2001; Balcazar et al., 1985; Gabelica et al., 2012; London & Sessa, 2006). We reviewed the literature to identify the most commonly distinguished feedback characteristics. At the same time, we considered which aspects would most likely offer a meaningful distinction among the reviewed studies. For instance, categories such as feedback medium (Alvero et al., 2001; labeled feedback mechanism by Balcazar et al., 1985) would have shown little variance in the reviewed studies, as almost all of them communicated feedback through some type of graph/visualization. Our objective was thus to identify feedback characteristics that would be both broad enough to be easily applied to the reviewed studies and narrow enough to retain as much specificity of findings as possible. The result of this combined approach was three distinct feedback characteristics, outlined in detail below. While further feedback characteristics (e.g., feedback valence, temporal delay between target behaviors/perceptions and feedback, expecting but not necessarily receiving feedback, feedback visualization design) appeared to influence the effect of feedback in some of the reviewed studies, these characteristics rarely varied, so that they would not have offered a meaningful distinction between the reviewed studies.

Feedback content

We extend previous classifications (which sometimes identify feedback type or feedback purpose, e.g., Gabelica et al., 2012; London & Sessa, 2006) by defining feedback content as the primary focus of feedback. The literature has traditionally distinguished two different content areas: task/outcome/performance feedback and process feedback (e.g., Gabelica et al., 2012; Nadler, 1979). While outcome feedback reflects information about task performance itself, process feedback reflects how the task was performed. To align our team feedback definition above with existing team effectiveness frameworks (IMO model, e.g., Ilgen et al., 2005; Mathieu et al., 2008), we propose that feedback can be given with regards to team inputs, mediators (i.e., team processes, emergent states) as well as outcomes. Accordingly, we coded feedback content based on the following classification: Team input feedback describes information given about antecedent features that enable or constrain team members’ interactions. Team mediator feedback reflects information about interdependent activities and psychological states relative to task completion or teamwork. Finally, team output feedback constitutes information concerning results and valued by-products of team activities.

Feedback source

Team feedback can originate from different sources (e.g., Carter et al., 2019; London & Sessa, 2006). At this stage, we note that the term feedback source is often confounded with the various agents that present feedback. However, especially in the virtual context, the source of information (e.g., a co-worker) is often not the same as the agent presenting it (e.g., a communication technology) or the mechanism by which it is presented (e.g., verbal, written, or visual). Thus, we define feedback source as the extent to which feedback originates from subjective perceptions, opinions, or judgments or, inversely, from more objective measures (see also Balcazar et al., 1985; London & Sessa, 2006; Rotem & Glasman, 1979). Accordingly, our coding scheme differentiated between two categories of feedback source: subjective and objective feedback. Subjective feedback constitutes information about perceptions, opinions, or judgments of features, processes, and psychological states relative to task completion or teamwork in order to guide the team’s future activities (see Morgeson & Humphrey, 2006). Objective feedback, on the other hand, describes information on task completion or teamwork stemming directly from actions and events in the team environment or team members’ behaviors, leaving the process of judgment either to the recipient or to a predefined algorithm. A predefined algorithm could be the calculation of participation equality based on team members’ contributions (number of keystrokes, number of messages), for instance, but it could also be the comparison of decisions based on predefined correct/optimal solutions.
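To make the notion of a predefined algorithm more concrete, the following sketch computes one possible participation equality index from raw contribution counts. The choice of normalized Shannon entropy is an assumption made purely for illustration (1 indicates perfectly equal participation, 0 indicates that a single member contributed everything); the reviewed studies may have used other indices, and the function name and message counts are hypothetical.

```python
import math


def participation_equality(contributions):
    """Normalized Shannon entropy of members' contribution shares (0 to 1).

    `contributions` holds one non-negative count per team member,
    e.g., the number of messages or keystrokes.
    """
    n = len(contributions)
    total = sum(contributions)
    if n < 2 or total <= 0 or any(c < 0 for c in contributions):
        raise ValueError("Need at least two members and a positive total count.")
    # Members with zero contributions add nothing to the entropy term.
    shares = [c / total for c in contributions if c > 0]
    entropy = -sum(p * math.log(p) for p in shares)
    return entropy / math.log(n)


# Hypothetical message counts for three-person teams (illustration only).
equal_team = participation_equality([10, 10, 10])
dominated_team = participation_equality([30, 0, 0])
uneven_team = participation_equality([12, 9, 3])
print(equal_team, dominated_team, round(uneven_team, 2))
```

Because the index is computed directly from logged behavior, no rater judgment enters the score, which is what distinguishes objective from subjective feedback in the coding scheme.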

Feedback level

Feedback in teams may also vary according to the level of the recipient (Gabelica et al., 2012; London & Sessa, 2006). In accordance with previous classifications (e.g., DeShon et al., 2004; Gabelica et al., 2012), we coded studies as either individual-level, team-level, or team- plus individual-level feedback. Individual-level feedback describes information that targets only individual team members, whereas team-level feedback targets the team as a whole. Team- plus individual-level feedback, in turn, targets both the team and its individuals simultaneously, which means that team members obtain information about themselves as well as how they relate to others at the same time. For example, team- plus individual-level feedback could be a bar graph where each team member’s rating (of, e.g., motivation) is represented by a separate bar, making it possible to compare oneself with all other team members. Alternatively, ratings by other team members could also be aggregated to one score, thereby enabling comparisons between oneself and the team as an entity.
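The three feedback characteristics and their categories can be summarized as a small coding record. This is a sketch of how such a scheme might be represented, not the coding instrument used in the review; the class names and the example entry are hypothetical, and the additional table columns for comparison studies and unclassifiable studies are left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class Content(Enum):
    INPUT = "team input feedback"
    MEDIATOR = "team mediator feedback"
    OUTPUT = "team output feedback"


class Source(Enum):
    SUBJECTIVE = "subjective"
    OBJECTIVE = "objective"


class Level(Enum):
    INDIVIDUAL = "individual-level"
    TEAM = "team-level"
    TEAM_PLUS_INDIVIDUAL = "team- plus individual-level"


@dataclass(frozen=True)
class CodedStudy:
    """One reviewed study coded on the three feedback characteristics."""
    label: str
    content: Content
    source: Source
    level: Level


# Hypothetical entry (illustration only, not a coding of a reviewed study).
example = CodedStudy(
    label="Hypothetical study",
    content=Content.MEDIATOR,
    source=Source.OBJECTIVE,
    level=Level.TEAM,
)
print(example.content.value, example.source.value, example.level.value)
```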

Overall assessment

To ease interpretation of feedback effects, we classified all studies based on their overall assessment. Leaning on Gabelica et al. (2012), we thus speak of (a) positive effects, when feedback was associated with uniformly positive effects on measured outcome variables (++); (b) partially positive effects, when feedback was associated with positive effects on some outcome variables and no effect on others (+); (c) mixed effects, when feedback was associated with positive effects on some dependent variables and negative effects on others (+/−); (d) partially negative effects, when feedback was associated with negative effects on some outcome variables and no effect on others (−); (e) negative effects, when feedback was associated with uniformly negative effects on measured outcome variables (−−); and (f) no effects, when feedback did not result in any changes with regard to the dependent variables (NE).
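The six categories above amount to a simple decision rule over the direction of the effect on each outcome variable. The sketch below encodes that rule under the assumption that each effect has already been reduced to +1 (positive), 0 (no effect), or −1 (negative); the function name is hypothetical, and plain ASCII hyphens stand in for the minus signs of the category codes.

```python
def overall_assessment(effects):
    """Map per-outcome effect directions (+1, 0, -1) to an overall code."""
    if not effects:
        raise ValueError("At least one outcome variable is required.")
    if any(e not in (-1, 0, 1) for e in effects):
        raise ValueError("Effects must be coded as +1, 0, or -1.")
    has_positive = 1 in effects
    has_negative = -1 in effects
    has_null = 0 in effects
    if has_positive and has_negative:
        return "+/-"                          # (c) mixed effects
    if has_positive:
        return "+" if has_null else "++"      # (b) partially positive, (a) positive
    if has_negative:
        return "-" if has_null else "--"      # (d) partially negative, (e) negative
    return "NE"                               # (f) no effects


# Hypothetical effect patterns (illustration only).
for pattern in ([1, 1], [1, 0], [1, -1], [-1, 0], [-1, -1], [0, 0]):
    print(pattern, overall_assessment(pattern))
```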

Components of virtual team effectiveness

In accordance with input-mediator-output (IMO) models of team effectiveness (Campion et al., 1993; Ilgen et al., 2005; Mathieu et al., 2008), we differentiated the measures of feedback effects employed in the reviewed publications into team inputs, team mediators, or team outputs. Examples of team inputs include work design (e.g., team members’ workload) or team members’ knowledge, skills, abilities, and other characteristics (KSAOs) relevant to task- or teamwork (e.g., team members’ preferences for a certain decision outcome). Examples of team mediators are team processes, such as communication or coordination, as well as team emergent states, such as motivation or team mental models. Team outputs typically encompassed team or individual performance (toward a team goal), such as the quantity/quality of generated ideas or task completion time.

Coding Process

To analyze the reviewed articles, the first author went through all the studies to extract information relevant to categorization (e.g., information on feedback operationalization, dependent variables; comparable to first-order analysis, attribute coding, and magnitude coding in qualitative research, e.g., Gioia et al., 2013; Saldana, 2013). Subsequently, both the first and second author went through the information to categorize the reviewed studies with regards to the three feedback characteristics (content, source, and level). Any discrepancies were discussed and consensus was achieved through jointly refining the definitions of the three feedback characteristics and their respective categories as detailed above (see also Gioia et al., 2013). Classification of the components of virtual team effectiveness and the overall assessment was performed jointly by the first and the second author.

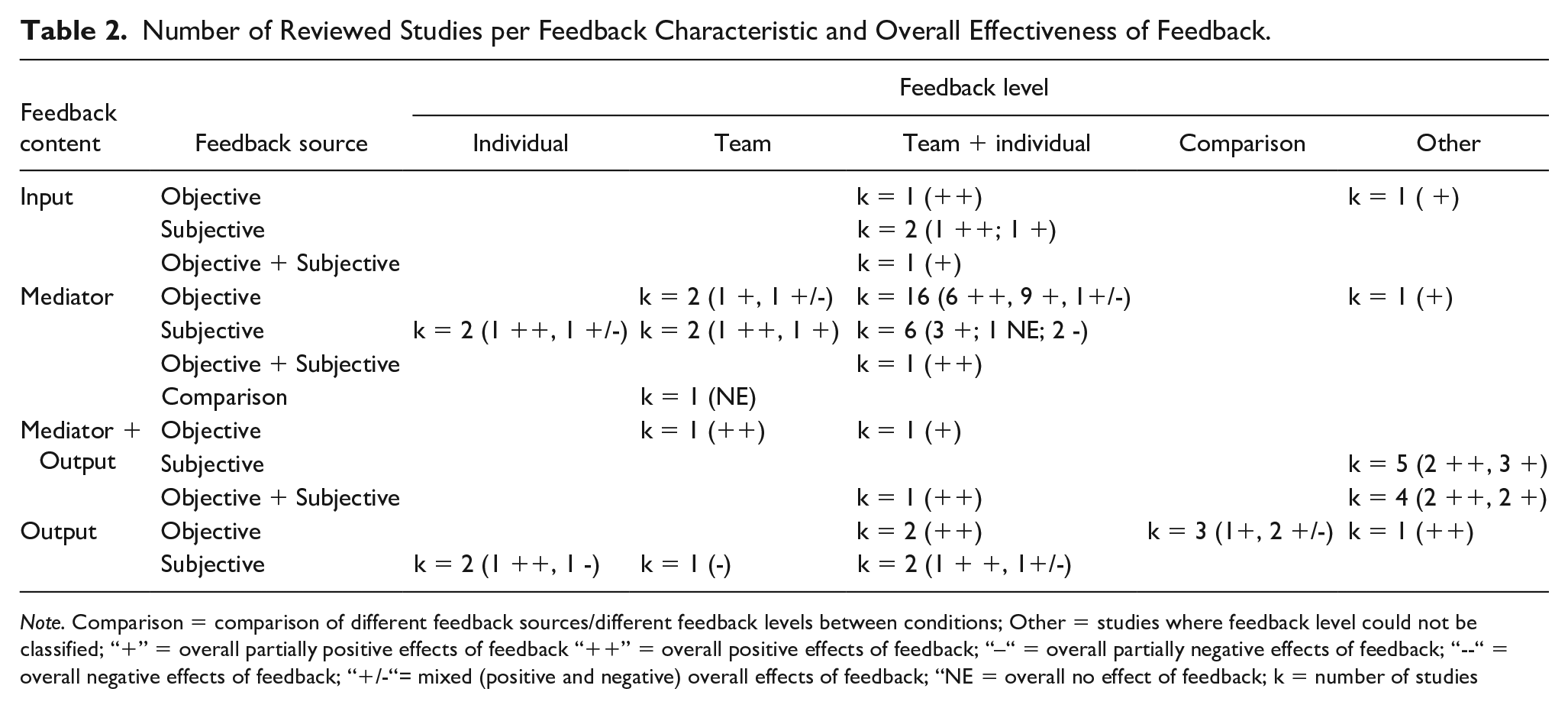

During the coding process, we also found studies that we could not classify within our coding scheme (see Table 2, column Other). Many of these were studies in which feedback levels differed depending on the type of information that was given. For instance, this could mean that team members were given team-level feedback with regard to one construct (e.g., team-level aggregates of team process perceptions) and team- plus individual-level feedback with regard to another construct (e.g., evaluations of all team members’ unique contributions presented in a joint document). Accordingly, the unique contributions of the different feedback levels to team outcomes could not be distinguished in these studies.

Table 2. Number of Reviewed Studies per Feedback Characteristic and Overall Effectiveness of Feedback.

Note. Comparison = comparison of different feedback sources/different feedback levels between conditions; Other = studies where feedback level could not be classified; “++” = overall positive effects of feedback; “+” = overall partially positive effects of feedback; “+/−” = mixed (positive and negative) overall effects of feedback; “−” = overall partially negative effects of feedback; “−−” = overall negative effects of feedback; “NE” = overall no effect of feedback; k = number of studies.

Results

We provide a structured overview of how team feedback affects team outcomes within the context of virtuality by using the review framework depicted in Figure 1 to unpack the findings in the reviewed studies. To do so, we consider each team feedback characteristic (beginning with feedback content) with its underlying categories separately. Specifically, we first give examples of how the respective feedback characteristics were operationalized in the reviewed studies and give some higher-level background information on study designs and/or team types commonly employed in these studies. Second, we present the overall assessment of feedback effectiveness reported in the reviewed studies. Third, we detail how feedback impacted different components of virtual team effectiveness. Table 2 gives an overview of the reviewed studies per feedback characteristic and their overall assessment.

Feedback Content

Team input feedback

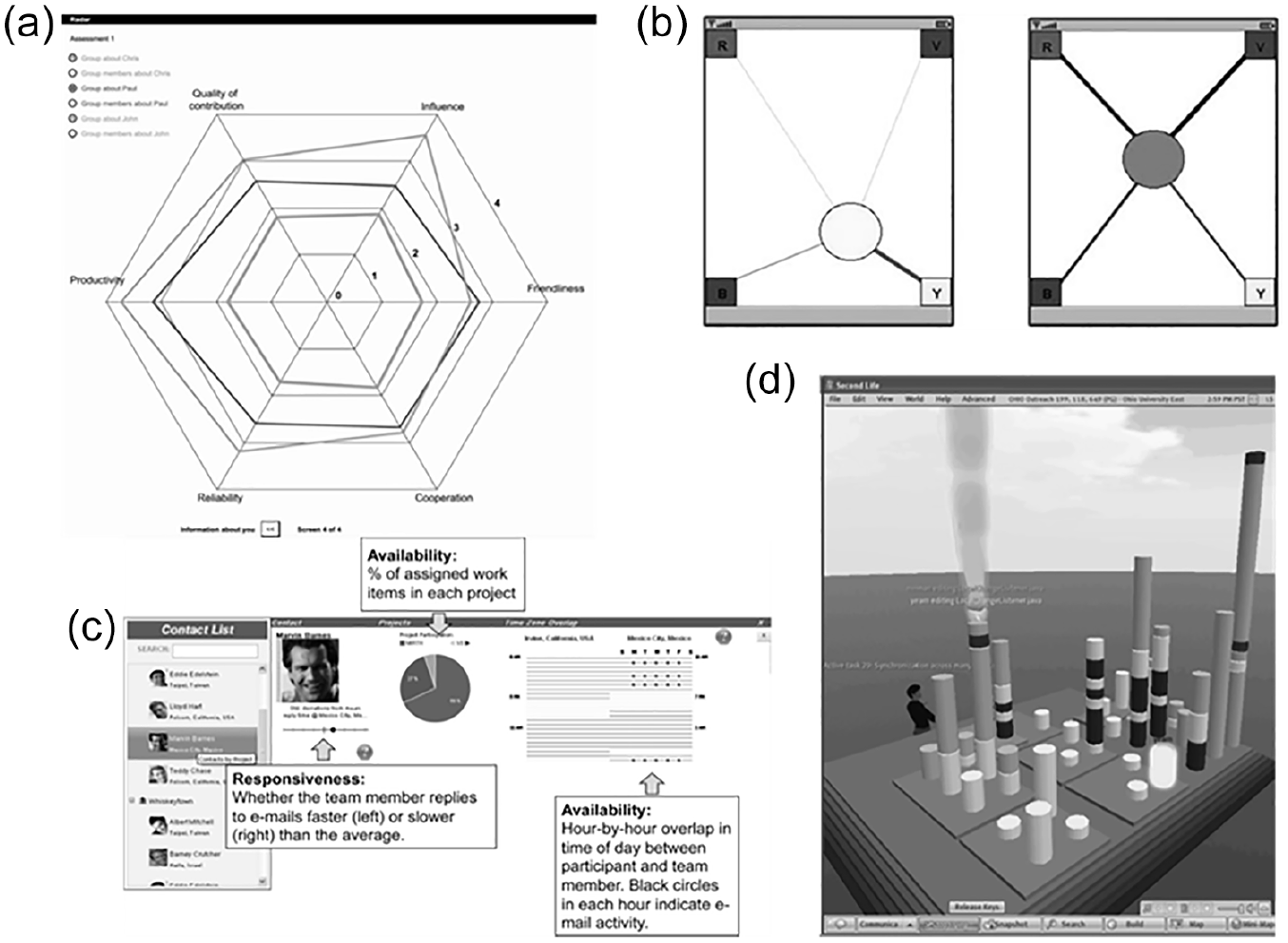

We found only five studies evaluating the effect of input feedback (i.e., Hong et al., 2018; Jongsawat & Premchaiswadi, 2014; Romero et al., 2009; Sonderegger et al., 2013; Trainer & Redmiles, 2018). These studies typically operationalized input feedback in the form of visualizations reflecting information such as team members’ work schedules (Romero et al., 2009; Trainer & Redmiles, 2018; see Figure 3c) or pre-discussion preferences (Hong et al., 2018). All five studies drew on feedback interventions (i.e., systematic variations of feedback), and four of these were lab studies.

Figure 3. Exemplary feedback operationalizations.

All studies showed (partially) positive overall effects (see Table 2; positive effects: k = 2, ++; partially positive effects: k = 3, +). Three studies showed that input feedback resulted in increased positive team emergent states (i.e., group awareness, which was broadly defined as consciousness and information of various aspects of the group and its members; Jongsawat & Premchaiswadi, 2014). One interesting finding is that input feedback could also result in improvements in factors that serve as inputs for future team performance episodes. Specifically, one study that varied both levels of team virtuality and levels of team feedback showed that feedback about inputs improved workload for virtual teams, but not for the non/low virtuality (i.e., face-to-face) teams (Sonderegger et al., 2013). That is, virtual teams that received input feedback (team members’ emotional states before collaboration) reported a lower workload in comparison to virtual teams that received no input feedback. In contrast, face-to-face teams that received input feedback reported a higher workload than face-to-face teams without feedback.

Team mediator feedback

We identified 31 studies evaluating the effects of feedback about team processes and psychological states (i.e., mediator feedback). Team mediator feedback was typically operationalized by graphs, which varied in size to reflect relative or absolute levels of team processes or states (e.g., Konradt et al., 2015; Leshed et al., 2009). The majority of these studies were lab experiments (k = 16), followed by field quasi-experiments (k = 7), field experiments (k = 7), and one survey study.

Most of the reviewed studies showed overall (partially) positive effects (positive effects: k = 9, ++; partially positive effects: k = 15, +) for mediator feedback. Typically, mediator feedback in virtual teams was shown to improve key team processes, such as team coordination (Meyer & Dibbern, 2012), team interactions and team learning (Lin & Tsai, 2016; Ma et al., 2020), cohesion (Lin & Tsai, 2016), cooperation (yet only for virtual, not face-to-face teams, Kim et al., 2012), or participation equality (Margaritis et al., 2006). Mediator feedback was also shown to improve psychological states, such as group awareness (Janssen et al., 2011; Meyer & Dibbern, 2012), cohesion (Kahai et al., 2012), and team mental models (Ellwart et al., 2015). We identified nine studies that hypothesized and confirmed positive effects for mediator feedback on team performance (e.g., Geister et al., 2006; Hollenbeck et al., 1998; Krancher et al., 2018; Liu et al., 2018). In some studies, mediator feedback enhanced positive associations between team processes (e.g., communication) and performance (e.g., Krancher et al., 2018; McLarnon et al., 2019).

Very few studies (mixed effects: k = 3, +/−; partially negative effects: k = 2, −) found negative effects of team feedback on the dependent variables (e.g., productivity/participation, Hiltz et al., 1991; Tavoletti et al., 2019; or team performance, Hiltz et al., 1991). However, some of these studies also suggest that the negative effects of feedback depend on how it was delivered. For instance, Kahai et al. (2012) found that feedback positivity (i.e., “positivity in the sentiments and attitudes expressed toward the input provided by group members,” p. 718) impeded team decision quality. Additionally, Glynn et al. (2001) found that team performance was impaired only when feedback was given with a temporal delay; without this delay, performance could actually be improved.

Team output feedback

We identified 11 studies evaluating the effects of output feedback (e.g., Jung et al., 2010; Wang et al., 2019). In terms of operationalization, team output feedback typically gave a count of correctly solved tasks (e.g., DeShon et al., 2004; Suleiman & Watson, 2008). Nine of these were lab studies, one was a field experiment, and one was a field quasi-experiment.

Overall, findings appear mixed. Six studies produced (partially) positive effects (positive effects: k = 5, ++; partially positive effects: k = 1, +), three produced mixed (k = 3, +/−) effects, and two studies produced partially negative effects (k = 2, −). Regarding the positive effects, four studies found positive associations between output feedback and team performance (i.e., DeShon et al., 2004; Jung et al., 2010; Roy et al., 1996; Suleiman & Watson, 2008). In addition, reviewed studies also showed positive effects for output feedback for improving team mediators, such as communication (Buder & Bodemer, 2011), higher engagement in social interactions (Wang et al., 2019), and motivation (Hertel et al., 2008). However, a marginally significant interaction effect in Hertel et al.’s (2008) study suggests that motivation gains were slightly higher for face-to-face compared to virtual teams.

Regarding negative effects, five studies reported negative relationships between output feedback and team trustworthiness/trust (Jaakson et al., 2019), workload (Ostrander et al., 2020), participation (Buder & Bodemer, 2011), social loafing (Suleiman & Watson, 2008), and team performance (Marler & Marett, 2013). In sum, the effects of output feedback appear inconsistent, suggesting that they may depend on other features, such as the context in which feedback is administered (e.g., whether feedback was used for a task in which team members focused on differences rather than similarities; Buder & Bodemer, 2011), how feedback was administered (e.g., whether feedback was delivered to the team or individuals, DeShon et al., 2004; Suleiman & Watson, 2008), and feedback valence (i.e., positive vs. negative feedback, Jaakson et al., 2019).

Combinations of feedback content

We identified twelve studies that combined feedback about processes (and/or psychological states) with feedback about team performance (e.g., Peñarroja et al., 2015, 2017; see Table 2, Column mediator + output). Combinations of process and performance feedback were often provided by giving participants the possibility to monitor what remote team members were doing, or measuring how much online group work was extensively shared and discussed (i.e., process information) in combination with information/feedback on how well everyone was performing (i.e., feedback on performance from a supervisor/instructor). Most of these were laboratory studies (k = 6), followed by correlative field studies (i.e., survey studies, k = 5) and one quasi-experimental study.

All of these studies showed (partially) positive effects of team feedback (positive: k = 6, ++; partially positive: k = 6, +). Positive associations were found for outcomes such as time management, emotion management, and motivation management, which are critical skills in collaborative online groupwork (Xu et al., 2013a, 2013b, 2014, 2017). Moreover, combined feedback was also shown to be positively associated with cohesion, information elaboration, team learning (Peñarroja et al., 2015, 2017), and functional conflict management (Martínez-Moreno et al., 2015). Our literature search also identified some contextual moderators that enhance the positive effect of process-and-performance feedback, such as a high level of trust (Peñarroja et al., 2015) or the team’s openness to new experiences (Sanchez et al., 2018). Interestingly, Sanchez et al. (2018) showed that virtual teams with members who are less open to novel experiences benefit more from combined feedback interventions through a positive effect on team cohesion. The authors argued that process-and-performance feedback in these “less open-minded” (p. 145) virtual teams could help team members to take on new perspectives and learn from their peers.

Feedback Source

Subjective feedback

From the 59 studies, we identified 22 studies providing a form of subjective feedback to the virtual teams (and their respective members). Studies drawing on subjective sources typically used visualizations, such as bar graphs (e.g., Geister et al., 2006; Hong et al., 2018), line graphs (Phielix et al., 2011; Schoor et al., 2014; see Figure 3a), or scatter plots (e.g., Buder & Bodemer, 2008; Puhl et al., 2015). Some studies drew on verbal feedback directly from other team members or the instructor (e.g., Marler & Marett, 2013; Xu & Du, 2013). These studies were distributed fairly evenly across the different research designs, with a little less than half of them conducted in a laboratory (k = 9), and the rest in a field setting (survey studies: k = 5; field quasi-experiments: k = 5; field experiments: k = 3).

Overall, most of these studies reported (partially) positive effects (positive: k = 7, ++; partially positive: k = 8, +). Regarding positive effects, the reviewed studies showed that subjective feedback was positively associated with reflection (Konradt et al., 2015), minority influence (Buder & Bodemer, 2008), participation (Puhl et al., 2015), coordination (McLarnon et al., 2019), and performance (Geister et al., 2006). Some studies showed interaction effects between subjective feedback and levels of virtuality. For example, Sonderegger et al. (2013) showed that subjective feedback (e.g., information about participants’ self-reported mood) was more conducive for virtual teams’ perceived workload and performance in comparison to non-virtual teams interacting in a face-to-face condition.

However, we also identified four studies that reported partially negative effects for subjective feedback on team mediators and team performance outcomes. Among these studies, Tavoletti et al. (2019) used a quasi-experimental design to evaluate the effect of peer evaluations in an impressive sample of 895 transnational global virtual teams throughout an entire 10-week project. Under the subjective feedback condition, virtual teams showed lower levels of average productivity and motivation, and no clear evidence of improved team performance. The authors discuss how subjective feedback (in particular when it originates from peers) can produce counter-productive team dynamics because “group members have an incentive to intentionally distort their evaluations of peers downward as a way of enhancing their own relative performance rating” (Tavoletti et al., 2019, p. 336).

Objective feedback

We found 29 studies drawing only on objective feedback sources. Objective feedback was presented in the form of (1) node graphs (typically employed in social network analysis to depict the structure of team interactions; e.g., Gutwin & Greenberg, 1999; Janssen et al., 2011); (2) icons giving information on team members’ attributes and behaviors, such as time allocation to the team project (Romero et al., 2009), responsiveness to emails (Trainer & Redmiles, 2018), and files currently worked on (Ye et al., 2018, see Figure 3d); or (3) displays of team members’ contributions (e.g., Michinov & Primois, 2005; Ostrander et al., 2020). Two-thirds of these studies were conducted in the lab (k = 20). The remainder were three field quasi-experiments, five field experiments, and one survey study.

Nearly all these studies reported partially positive (k = 14, +) or positive (k = 11, ++) effects on measured outcome variables. Many studies reported positive effects of objective feedback on team performance (such as the number and quality of generated ideas, e.g., Jung et al., 2010; Michinov & Primois, 2005), followed by positive effects for objective feedback on team processes (e.g., participation, Castro-Hernandez et al., 2014; Martino et al., 2009) or team emergent states, such as group awareness (Janssen et al., 2011; Ye et al., 2018).

Four studies (e.g., Kahai et al., 2012; Ostrander et al., 2020) reported mixed effects, meaning that some outcome variables were negatively associated with objective feedback. For instance, one study found negative effects of objective feedback on perceived workload (Ostrander et al., 2020). The authors evaluated the effects of objective feedback for 16 pairs of learners working on a collaborative problem-solving task in a virtual environment. Specifically, during collaboration, team members’ actions were automatically assessed as being above, at, or below expectations. The results of this study revealed a possible unintended consequence, with team-level objective feedback contributing to higher levels of perceived workload (self-reported frustration and task load) than in the control condition. These results suggest that processing feedback during taskwork could constitute an excessively high cognitive demand.

Finally, one quasi-experimental field study directly contrasted the effects of objective against subjective feedback but found no effects on team communication (Borge & Rosé, 2016).

Combinations of feedback sources

We found seven studies combining subjective and objective feedback. For example, in a series of publications using the same sample and experimental procedure (Martínez-Moreno et al., 2015; Peñarroja et al., 2015, 2017; Sanchez et al., 2018), participants were given combined objective-and-subjective feedback. Subjective feedback used team members’ perceptions of key team processes (e.g., planning, coordination), which were displayed graphically. For objective feedback, participants received a document that showed individual and team performance scores provided by experts. All of these studies combining subjective and objective feedback drew on quasi-experimental or experimental designs (lab experiments: k = 6; field quasi-experiment: k = 1).

All of these studies reported (partially) positive effects (positive: k = 4, ++; partially positive: k = 3, +). Specifically, the four related articles reported positive effects of feedback on different team mediators, namely team learning (Peñarroja et al., 2015), functional conflict management (Martínez-Moreno et al., 2015), cohesion (Sanchez et al., 2018), and reduced social loafing (Peñarroja et al., 2017). The other studies combining two feedback sources revealed further positive effects on team mediators (participation and team learning, Lin, 2018) and team performance (Hollenbeck et al., 1998; Jongsawat et al., 2014).

Feedback Level

Individual-level feedback

In four studies, feedback for virtual teams was exclusively provided at the individual level. In terms of feedback operationalization, individual-level feedback typically consisted of reports reflecting peer evaluations (e.g., McLarnon et al., 2019; Tavoletti et al., 2019). Three studies drew on peer assessments in field settings (i.e., field quasi-experiments) and one study was a laboratory study using a confederate to give feedback about individual contributions (Marler & Marett, 2013).

Overall, findings appear inconclusive (positive: k = 2, ++; mixed: k = 1, +/−; partially negative: k = 1, −). For instance, individual-level feedback appeared beneficial for team processes such as participation (Wang et al., 2019) and team effort (Tavoletti et al., 2019). Conversely, regarding team performance, individual-level feedback showed no (Tavoletti et al., 2019) or even negative (Marler & Marett, 2013) effects. A reason for this may be that individual-level feedback alone may not suffice in enhancing team performance but can make team communication more focused, thereby improving team coordination and performance. Specifically, McLarnon et al. (2019) found that individual-level feedback enhanced the effect of communication frequency on process coordination (i.e., the sequence, timing, and integration of individual members’ work) as well as the effect of process coordination on team performance.

Team-level feedback

Seven studies employed feedback only on the team level, drawing exclusively on quasi-experimental or experimental procedures with student samples that were evenly distributed among lab and field settings (lab experiment: k = 4; field experiment: k = 1; field experiment: k = 2). Team-level feedback was operationalized in various forms, such as graphs depicting team-level aggregates to survey responses (e.g., motivation, Geister et al., 2006, or team mental models, Konradt et al., 2015), or displays of team actions (Henning et al., 1997) and overall project goal attainment (Hsieh & O’Neil, 2002).

Most of these studies uncovered (partially) positive effects of team-level feedback (positive: k = 2, ++; partially positive: k = 2, +). Specifically, team-level feedback showed positive effects on team reflection (particularly when combined with guided team reflexivity, Konradt et al., 2015) and team performance (Geister et al., 2006; Henning et al., 1997; Hsieh & O’Neil, 2002), as well as conditional positive effects of team-level feedback of teams’ average motivation and satisfaction when teams already had high initial levels of motivation (Geister et al., 2006).

Finally, three studies contrasted the effects of individual- versus team-level feedback, showing more beneficial effects of team-level feedback on team performance (DeShon et al., 2004; Suleiman & Watson, 2008) and performance awareness (i.e., accuracy in assessing own performance; Ostrander et al., 2020). Specifically, DeShon et al. (2004) employed a PC-based simulation of a radar-tracking task where team members needed to attend to tasks specifically assigned to them (thereby also promoting individual goal attainment) and could also help out other team members to contribute toward the collective goal. However, the task was also designed to systematically overload all team members, so that eventually team members would have to prioritize whether to attend to their own goal attainment or that of the team. Results showed that participants receiving individual-level feedback displayed the highest levels of individual performance at the end of the experiment, whereas team members that received only team-level feedback showed the highest team-oriented performance. Interestingly, team-level feedback also appeared to be more beneficial for team performance than team plus individual-level feedback, leading to the conclusion that the best effect on performance occurs “when team members received a single, focused source of feedback” (DeShon et al., 2004, p. 1051).

Team- plus individual-level feedback

The majority of all reviewed studies (k = 33) employed feedback that targeted both the team and its individuals, thereby enabling individual team members to gain not only a representation of their own behavior, perceptions, and performance but also how these relate to other team members. Two-thirds of these (k = 21) were conducted in a laboratory setting. Apart from one survey study, the remaining field studies were distributed evenly over quasi-experimental (k = 6) and experimental (k = 5) procedures. Team- plus individual-level feedback was typically presented in the form of graphs where each team member (or their contribution) is represented by a symbol varying in color, size, or location, thereby indicating how they related to other team members’ relative contributions (e.g., Kim et al., 2012, see Figure 3b; Leshed et al., 2009; Lin, 2018) or self-reported psychological states (e.g., affect, Sonderegger et al., 2013; motivation, Schoor et al., 2014).

Nearly all of them showed partially positive (k = 15, +) or positive (k = 13, ++) effects of feedback. Most studies found positive effects on team mediators such as participation (e.g., Castro-Hernandez et al., 2014; Kim et al., 2012; Liu et al., 2018), group awareness (Janssen et al., 2011), coordination (Kim et al., 2008), team learning (Lin, 2018), team mental models (Ellwart et al., 2015), and team reflection (Leshed et al., 2009). Moreover, studies also found positive effects on team performance (e.g., Jongsawat & Premchaiswadi, 2014; Jung et al., 2010).

However, there were also studies reporting negative effects of team- plus individual-level feedback on participation (i.e., number of contributions, Buder & Bodemer, 2011; Chavez & Romero, 2014; Hiltz et al., 1991) and decision quality (Hiltz et al., 1991; Kahai et al., 2012). A possible explanation for the inconsistencies regarding the effect on participation (i.e., number of contributions) might be that all studies that found negative effects used subjective feedback, whereas positive effects were found for objective feedback. Subjective feedback requires team members to make assessments (e.g., about each other’s contribution quality), whereas objective feedback does not (as it relies on automated mechanisms). Accordingly, subjective feedback requires more time and effort, which could otherwise be allocated to actual taskwork. A further interesting finding emerged from Kimmerle et al. (2007), who further unpacked the best ways to provide team- plus individual-level feedback. In one condition, participants received individual feedback about their own contribution only in comparison to a team-level average (i.e., no information on the individual contributions of the other team members was available to them). In the other condition, individual contributions were shown separately for each team member. In the latter feedback condition (as opposed to the average team feedback condition), team members cooperated significantly more than in the control condition without any feedback. The authors conclude that “it is not feedback about cooperative co-workers per se that increases the willingness to cooperate; the possibility for self-presentation must also be available” (Kimmerle et al., 2007, p. 906). Accordingly, a particularly motivating aspect of team- plus individual-level feedback may be that it allows personal contributions to be visible, thereby enhancing accountability (O’Neill, Boyce et al., 2020).

Discussion

The purpose of this review was to understand whether and how team feedback affects virtual teams. To address this question, we reviewed 59 studies that analyzed the effects of team feedback for virtual teams, and differentiated between three team feedback characteristics (content, source, and level). Our findings contribute to the broader science of virtual teams, particularly given the COVID-19 pandemic and the likelihood that virtual work will persist post-pandemic. In the following, we first present our key findings and relate them to the general (team) feedback literature. The later sections are devoted to discussing both the theoretical and practical implications of our findings for virtual teams specifically as well as avenues for future research.

Overall, the current review uncovered a large number of (partially) positive effects of feedback. These findings are consistent with Gabelica et al.’s (2012) findings for teams in general, suggesting a similar overall trend for virtual as well as face-to-face teams. The majority of the studies we reviewed (k = 38) found positive effects particularly for team mediator outcomes, with the most prevalent positively affected variables being participation (e.g., number of contributions made to discussion or team project) and group awareness. The second largest outcome category in terms of positive effects was team outputs (k = 14, all of which referred to team performance in terms of, e.g., decision quality or error rate). However, contrary to Gabelica et al.’s (2012) review, there were also several negative effects, which is more consistent with mixed findings from the general feedback literature (e.g., DeNisi & Kluger, 2000; Kluger & DeNisi, 1996; Lurie & Swaminathan, 2009). Ten studies found negative effects on some dependent variables; most of these were team performance. In general, negative effects were attributed to members attending to feedback (and possibly also giving it) during task performance, which interfered with directing cognitive resources toward task completion (e.g., Marler & Marett, 2013; Ostrander et al., 2020). Therefore, the timeliness of feedback with respect to team phases (Marks et al., 2001) may be integral to its effectiveness in virtual teams, and likely all teams (O’Neill et al., 2018; O’Neill, Boyce et al., 2020).

Regarding feedback content, providing virtual teams with feedback on their processes and emergent states (mediator feedback) appears to be beneficial for team processes, team emergent states, and team performance. Conversely, output feedback may be relevant particularly to virtual team performance (provided that it is given on the team level, see the corresponding paragraph). Interestingly, as opposed to mediator or output feedback alone, the combination of mediator and output feedback yielded no negative effects and appeared particularly helpful for enhancing team processes, such as time management, conflict management, or team learning. These findings correspond to Nadler’s (1979) conclusion that while feedback on task performance (i.e., output feedback) is most likely to induce performance changes, process feedback (i.e., mediator feedback) is most effective when augmented with additional information on task performance (i.e., output feedback). Finally, very few studies employed input feedback; each of these revealed (partially) positive effects and appeared to be particularly promising for enhancing group awareness (i.e., the consciousness of various aspects of the group and its members).

Regarding feedback source, we identified differential effects for feedback containing information from objective versus subjective sources. Objective feedback, which stems directly from actions and events in the team environment or team members’ behaviors (leaving the process of judgment either to the recipient or a predefined algorithm), appeared to show the most consistently positive effects. Compared to subjective feedback, feedback from objective sources seemed particularly helpful not only for improving team processes and team psychological states but also for improving team performance. These findings align with theorizing from Kluger and DeNisi (1996), who suggest that feedback effectiveness decreases as the feedback recipient’s attention moves away from the task to the self. Specifically, when receiving feedback from other individuals (i.e., subjective feedback), recipients will focus their attention more toward nonfocal task processes, such as evaluating which implications it could have if they do not perform well or making judgments about the feedback giver. As a result, subjective feedback increases the salience of the feedback giver and may thus actually inhibit learning processes because recipients focus on receiving praise (or averting discouragement) or because they rely too strongly on guidance. Conversely, objective feedback could help recipients direct their attention toward the actual task and thereby enable learning through discovery (see also Parker et al., 2021).

Finally, regarding feedback level, we found more positive effects of team feedback when given on the team level, compared to the individual level. Similar to these findings for general (i.e., largely face-to-face) teams (e.g., Alvero et al., 2001; Hinsz et al., 1997), we presume that giving virtual team members team-level feedback helps them concentrate their efforts toward collective, rather than individual goals. This conclusion is supported by two of the reviewed studies that explicitly contrasted individual-level and team-level feedback within virtual teams (DeShon et al., 2004; Suleiman & Watson, 2008). However, our review also suggests that beneficial effects may be expected when individual- and team-level feedback are combined (i.e., team- plus individual-level feedback)—likely because it allows for self-presentation and social comparison—even if it is not yet clear whether this is significantly better than team-level feedback alone (DeShon et al., 2004).

Theoretical Implications

Our review suggests that team feedback is a crucial lever for virtual team success. Even though both team virtuality and feedback are considered relevant structural features for team effectiveness (e.g., Carter et al., 2019; Mathieu et al., 2008), no effort has yet been made to systematically integrate these two concepts. Moreover, even though extant literature has recognized that the effects of feedback vary between feedback type, source, and level, it has been unclear which approach may be particularly advantageous for virtual collaboration. Specifically, we found several important differences relative to reviews on general (team) feedback (Alvero et al., 2001; Balcazar et al., 1985; Gabelica et al., 2012; Nadler, 1979). As these prior reviews largely drew on individuals or teams working together under lower levels of virtuality than those in the reviewed studies, we believe that these differences are attributable to the virtual collaboration context.

First, mediator feedback was not only more frequently employed in the reviewed studies but also appeared to lead to relatively more positive effects than output feedback (cf. Gabelica et al., 2012; Nadler, 1979). We believe that a reason for these differences could be that mediator feedback is more important for virtual than for face-to-face teams because it gives them the information that they lack most. The lack of synchronicity, nonverbal communication cues, and shared context (e.g., due to time zone differences, high reliance on e-mails) contributes to an ambiguous work environment, making it particularly hard to grasp what team members are currently doing, thinking, and feeling (e.g., Geister et al., 2006; McLarnon et al., 2019). Moreover, communication in virtual teams is usually considered to be more task- than relationship-focused (Chidambaram, 1996), making it particularly helpful to obtain information about interpersonal processes and affective or motivational states. Accordingly, as shown by the consistently positive effects for feedback content combinations, supplementing output feedback with mediator feedback may be particularly relevant for virtual teams to effectively coordinate their efforts toward the team’s collective goal. This implication is further supported by two of the reviewed studies that specifically found that mediator feedback moderated the positive relationship between team processes, such as communication, and team performance (Krancher et al., 2018; McLarnon et al., 2019). These implications may also extend to input feedback, which has not been analyzed in prior research, but which is also likely to contain information that would be more easily observable in a face-to-face context (e.g., team members’ availabilities).

Second, as opposed to prior reviews on feedback in general (which speak of self-generated, mechanic, or computerized feedback; Alvero et al., 2001; Balcazar et al., 1985; Kluger & DeNisi, 1996), our review uncovered a high number of studies drawing on objective feedback sources. This may be because objective feedback is particularly easy to implement in the virtual team context, seeing as it can use data that stems directly from team members’ (inter)actions through technology (e.g., network graphs showing how contributions in the collaboration tool were distributed among team members) or is directly integrated into the collaboration environment (e.g., team members’ calendars). As elaborated earlier, objective feedback appears to be more beneficial in face-to-face environments as well, because it allows feedback recipients to concentrate their attention toward the task, rather than the self (Kluger & DeNisi, 1996). However, this effect could be even more relevant in virtual contexts, where individuals have been shown to compensate for the lack of social context cues (e.g., gestures, facial expressions) by attempting to extract as much relational information as they can from messages exchanged through technology (see social information processing theory, Walther, 1992). Accordingly, individuals may use subjective feedback to form impressions of the other team members, which is likely to be helpful at the beginning of virtual collaboration but which may shift the focus away from the focal task at crucial stages of task execution. Particularly when receiving output feedback, team members may thus be more likely to make judgments about the feedback giver, rather than exploring which strategies could contribute most toward improving team performance (see also Parker et al., 2021).

Third, our findings also differed from prior reviews concerning the level of feedback. Specifically, while prior reviews consistently found only a relatively small proportion of studies combining team and individual levels (Alvero et al., 2001; Balcazar et al., 1985; Gabelica et al., 2012), most of the studies we reviewed fell into this category (i.e., team- plus individual-level feedback). If feedback is integrated into the collaboration environment (i.e., presented by a communication technology), it can draw on predefined data aggregation algorithms and visualization templates. Accordingly, team- plus individual-level feedback may be particularly easy to implement within the virtual collaboration context because it does not require additional effort to give individual feedback in conjunction with team feedback. Similar to the face-to-face context, giving virtual teams team-level feedback is more beneficial for increasing team performance than if team members are given only individual-level feedback. Given the high uncertainty of what other team members are currently doing, thinking, and feeling in the virtual collaboration context, it seems likely that this effect will be even stronger for virtual teams, who have an even higher need to receive information on other team members (and not just themselves) compared to face-to-face teams. Moreover, receiving information on how one relates to others in terms of one’s perceptions, actions, and results should be particularly informative to ensure that future behaviors are well-coordinated within the team. This assumption is supported by studies that found (more) positive effects of team- plus individual-level feedback on team processes and performance (Kim et al., 2008, 2012; Sonderegger et al., 2013) for virtual compared to face-to-face teams.

Practical Implications

From a practical perspective, our review aimed to identify how feedback content, source, and level may differentially leverage the benefits of feedback for virtual teams. Specifically, we found that feedback may be particularly helpful for virtual teams when it combines performance-related information with information on team processes and/or psychological states, stems from an objective source, and targets the team as a whole (potentially including the possibility for individuals to identify their individual contributions).

Designing feedback to reflect these aspects not only allows team members to obtain information they would otherwise have had little or no access to but also harnesses the unique benefits provided by the virtual context. The fact that the majority of virtual teams’ interactions take place through communication technologies also means that feedback can be directly integrated into their collaboration environment. That is, communication technologies can gather, transform, and display information without requiring much or even any additional deliberation and effort from team members. For instance, team members can exchange information using a collaboration platform with an integrated feedback function drawing on automated assessments of team members’ contributions (e.g., number of contributions or themes extracted in these contributions using machine-based semantic analyses). Moreover, while objective feedback may be particularly easy to implement, these integrated feedback tools could also include possibilities for subjective feedback, such as peer assessments or short self-report surveys capturing team members’ affective or motivational states.

Finally, some of the reviewed studies also suggested that virtual teams require time and effort to adequately process feedback. Accordingly, even though feedback should be given at regular intervals and be temporally contingent on the team’s behavior and important events in their environment (i.e., feedback should be given without too much temporal delay, so team members know which behaviors or events are related to which consequences), it is also important not to overtax team members with feedback during taskwork. Therefore, practitioners may want to allocate time devoted specifically to receiving and processing feedback. Given that feedback appears to be particularly helpful when coupled with guided reflexivity (e.g., Gabelica et al., 2014; Konradt et al., 2015), virtual teams could benefit from team debriefs or “after-action reviews” (Tannenbaum & Cerasoli, 2013; see also sprint retrospectives, as implemented in scrum/agile project management, Schwaber & Sutherland, 2020), where they can collectively reflect upon feedback and discuss possibilities for improving future team- and taskwork.

Research Gaps and Avenues for Future Research

Our review on feedback in virtual teams uncovered several aspects which may help guide future research in this area. First, the majority of the reviewed studies drew on non-organizational samples. This raises the question of how findings on team feedback effects extend to teams that engage in multiple performance episodes, which are nested in projects that span several months or even years. Thus, we see a need for more research moving away from laboratory or classroom studies to a stronger focus on field (quasi-)experiments with organizational teams. This also pertains to the control that team members have over working in virtual teams: while the degree of virtuality in laboratory or even classroom studies constitutes part of the experimental manipulation, organizational teams may have a higher degree of discretion over their degree of virtuality. Given that many organizations are likely to adopt more hybrid working models in the future (i.e., more possibilities to work remotely, e.g., from home), it would be fruitful for future research to concentrate on the implications of mandatory versus voluntary participation in virtual teamwork (e.g., in the form of teamwork studies conducted during the COVID-19 pandemic, where the transition toward virtual teamwork was rather involuntary).

For instance, mandatory participation in virtual teams is likely to lead to more negative consequences in terms of perceived fairness, or a poor fit of personality and virtual work. These negative effects, in turn, may be more difficult to mitigate with team feedback than for teams who voluntarily engage in virtual work and can optimally capitalize on feedback. Conversely, it is also possible that team members who voluntarily work virtually are less dependent on feedback tools/interventions because they are more adept at compensating for the inherent lack of feedback in the virtual environment (e.g., because they possess more communicative skills to compensate for a lack of nonverbal information). Accordingly, we would encourage future research on feedback in organizational teams to also consider factors such as control over virtual work (which could be an aspect of job autonomy) as a potential moderator of feedback effects. Relatedly, another promising avenue for future research would be to investigate feedback seeking in the context of virtual teamwork. Feedback can not only be provided to a virtual team by other people or by a system; the virtual team itself can also actively solicit feedback on its team inputs, processes, psychological states, and outputs. Accordingly, it would be interesting to learn more about the effects of feedback seeking on team performance in situations in which no other feedback is readily available.

Second, even though the majority of the reviewed studies employed iterative feedback (i.e., feedback that was displayed multiple times or even continuously during team collaboration), the dependent variables were often measured only at task completion. Accordingly, we still lack a detailed picture of how exactly feedback translates into subsequent team- and taskwork episodes in virtual teamwork. We thus encourage future research focusing on the dynamics of team feedback in a virtual setting by capturing its effects over multiple time points during collaboration. For instance, studies using project management tools with integrated feedback functions could assess the impact of feedback on team members’ motivation and satisfaction (through the implementation of regular short surveys) as well as on performance criteria such as the task completion rate and speed.

Third, a further research gap we identified during our review process is the lack of studies that vary not only feedback but also team virtuality. Specifically, we found only five studies that varied the degree of virtuality. This methodological drawback limits our understanding of how (or even if) feedback is particularly beneficial at increased levels of team virtuality. Accordingly, to truly understand the moderating role of feedback on the (potentially detrimental) effects of team virtuality, we advise future studies to measure variations in both team virtuality and feedback—ideally in a setting that enables a range of different levels of these two constructs (instead of the typical dichotomies employed in current research). Given the already existing conceptualizations of team virtuality as a continuous phenomenon (e.g., Foster et al., 2015; Kirkman & Mathieu, 2005) and the fact that 60% to 70% of workers want to continue working remotely to some degree post-pandemic (Brenan, 2020; IBM, 2020; Ozimek, 2020), the question remains how many so-called traditional/non-virtual teams will even continue to exist in the future. Accordingly, longitudinal field studies that enable us to assess team virtuality from a more dynamic perspective (such as through changes in the technologies team members use to communicate or the extent to which they engage in telework) may help us identify at which levels of team virtuality feedback is most crucial and how this may interact with further elements such as team tenure.

Fourth, many studies that we reviewed drew on small sample sizes and combined several feedback characteristics. Accordingly, to derive sound implications on the nature and effects of feedback in virtual teams, we encourage future research with larger sample sizes that contrasts several distinct feedback conditions both with each other and with a control condition. For instance, we could not identify any studies that contrasted mediator and output feedback against each other, even though prior research suggests that feedback may be most effective when feedback content is aligned with the desired outcome (e.g., giving feedback on team processes when wishing to improve team processes versus giving feedback on team performance when wanting to improve team performance). As prior research has not uncovered many studies drawing on objective feedback sources (which may be unique to the virtual setting, as we described above), it would also be interesting both to contrast objective and subjective sources of feedback and to analyze their joint effect on team- and taskwork, in order to test whether objective feedback really is superior to subjective feedback.

Finally, future research could consider extensions of objective feedback that delve deeper into the algorithmic management, artificial intelligence, and human-autonomy team (HAT) domains. The objective feedback we found in the reviewed studies was mainly descriptive, not evaluative. That is, while certain forms of data aggregation may lead to certain judgments (e.g., being represented by a larger node in a network graph means that one talked a lot/more than the others), these judgments are made by participants themselves, based on their background/contextual knowledge, which will not be represented in the feedback itself (e.g., knowing that one did not participate as much in group interactions in a certain week because of other projects, vacation, etc.). Accordingly, in the reviewed studies, technology generally displayed information that the participants could use for evaluative purposes but did not make any judgments itself (except for very few studies on output feedback where participants’ performance was rated by an algorithm, e.g., Ostrander et al., 2020). However, given that in many areas (e.g., in the case of Uber drivers), job feedback is already influenced by systems that automatically track and assess information (see e.g., Parent-Rocheleau & Parker, 2021; Parker & Grote, 2020), objective feedback could become evaluative, with the evaluation being made by an autonomous agent rather than by (human) co-workers or supervisors.

For instance, the team’s interaction rate could be related to other time points or certain standards (set by the team itself or an external agent), and suggestions could be made to change current behaviors. In this case, the technology delivering feedback would still be considered a tool, rather than an autonomous agent, but further extensions could consider whether the feedback-giving technology could also execute certain actions (e.g., redirecting tasks from members with higher levels of workload to those with lower levels; for a detailed definition of the level of automation underlying HAT, see O’Neill, McNeese et al., 2020). As a result, objective feedback could lead to very different effects than those uncovered in the present review, requiring the consideration of a range of other factors that could influence the system’s acceptance and effectiveness, such as tangibility, transparency, and reliability (see e.g., Glikson & Woolley, 2020; Parent-Rocheleau & Parker, 2021).
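The step from descriptive to evaluative feedback described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function name, the idea of expressing the interaction rate as a single number, and the wording of the suggestion are all hypothetical and are not drawn from any of the reviewed studies.

```python
def interaction_feedback(current, previous=None, standard=None):
    """Relate the team's interaction rate to a reference point.

    `current`, `previous`, and `standard` are interaction rates in the same
    (hypothetical) unit, e.g., messages per day. `previous` is an earlier
    time point; `standard` is a target set by the team or an external agent.
    """
    # Comparison with an earlier time point (descriptive information).
    change = None if previous is None else current - previous
    # Comparison with a standard (evaluative judgment made by the system).
    below_standard = standard is not None and current < standard
    suggestion = (
        "Interaction is below the agreed standard; consider a short check-in."
        if below_standard
        else None
    )
    return {"change": change, "below_standard": below_standard, "suggestion": suggestion}


# Hypothetical values: 12 messages per day now, 18 before, standard of 15.
result = interaction_feedback(current=12, previous=18, standard=15)
```

In this sketch the technology remains a tool: it evaluates and suggests, but it does not execute any action on the team's behalf.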

Footnotes

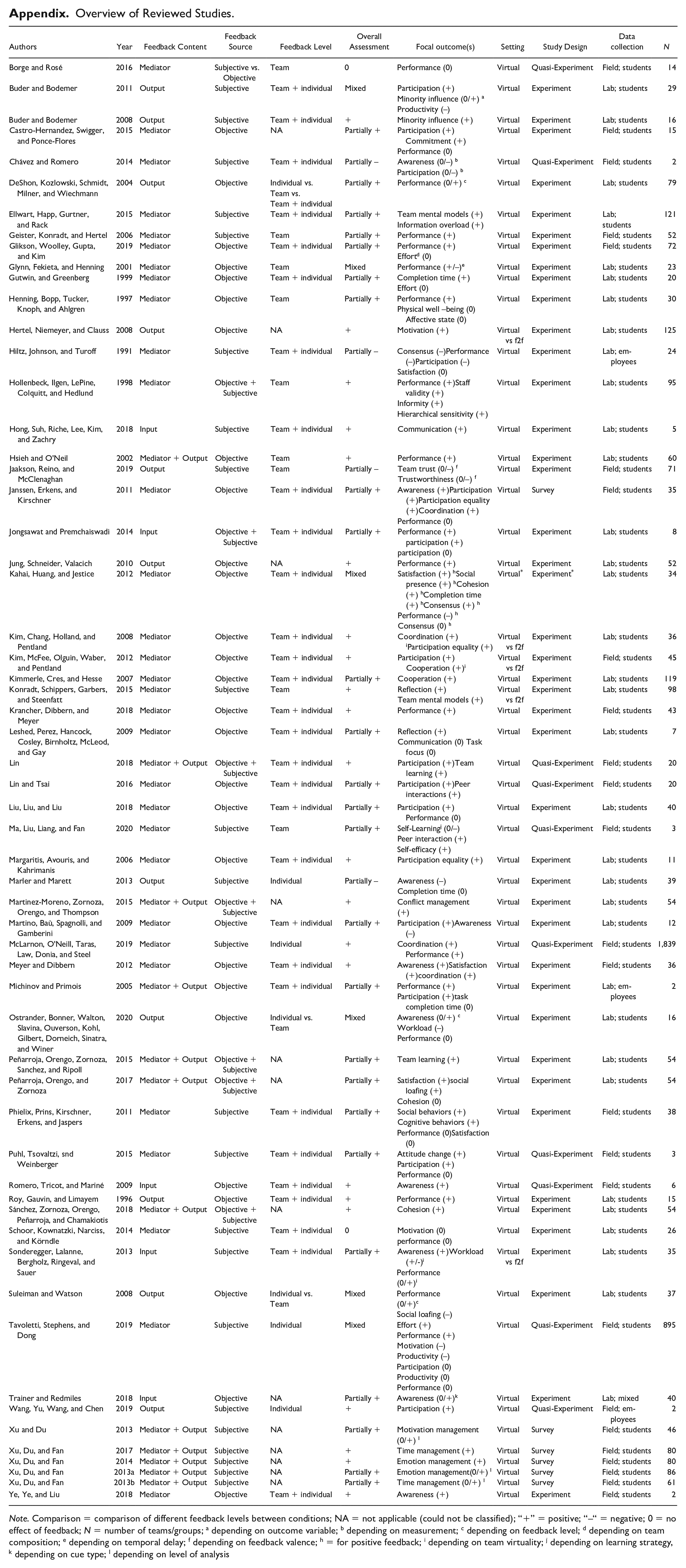

Appendix

Overview of Reviewed Studies.

| Authors | Year | Feedback Content | Feedback Source | Feedback Level | Overall Assessment | Focal Outcome(s) | Setting | Study Design | Data Collection | N |
|---|---|---|---|---|---|---|---|---|---|---|
| Borge and Rosé | 2016 | Mediator | Subjective vs. Objective | Team | 0 | Performance (0) | Virtual | Quasi-Experiment | Field; students | 14 |
| Buder and Bodemer | 2011 | Output | Subjective | Team + individual | Mixed | Participation (+); Minority influence (0/+)<sup>a</sup>; Productivity (–) | Virtual | Experiment | Lab; students | 29 |
| Buder and Bodemer | 2008 | Output | Subjective | Team + individual | + | Minority influence (+) | Virtual | Experiment | Lab; students | 16 |
| Castro-Hernandez, Swigger, and Ponce-Flores | 2015 | Mediator | Objective | NA | Partially + | Participation (+); Commitment (+); Performance (0) | Virtual | Experiment | Field; students | 15 |
| Chávez and Romero | 2014 | Mediator | Subjective | Team + individual | Partially – | Awareness (0/–)<sup>b</sup>; Participation (0/–)<sup>b</sup> | Virtual | Quasi-Experiment | Field; students | 2 |
| DeShon, Kozlowski, Schmidt, Milner, and Wiechmann | 2004 | Output | Objective | Individual vs. Team vs. Team + individual | Partially + | Performance (0/+)<sup>c</sup> | Virtual | Experiment | Lab; students | 79 |
| Ellwart, Happ, Gurtner, and Rack | 2015 | Mediator | Subjective | Team + individual | Partially + | Team mental models (+); Information overload (+) | Virtual | Experiment | Lab; students | 121 |
| Geister, Konradt, and Hertel | 2006 | Mediator | Subjective | Team | Partially + | Performance (+) | Virtual | Experiment | Field; students | 52 |
| Glikson, Woolley, Gupta, and Kim | 2019 | Mediator | Objective | Team + individual | Partially + | Performance (+); Effort<sup>d</sup> (0) | Virtual | Experiment | Field; students | 72 |
| Glynn, Fekieta, and Henning | 2001 | Mediator | Objective | Team | Mixed | Performance (+/–)<sup>e</sup> | Virtual | Experiment | Lab; students | 23 |
| Gutwin and Greenberg | 1999 | Mediator | Objective | Team + individual | Partially + | Completion time (+); Effort (0) | Virtual | Experiment | Lab; students | 20 |
| Henning, Bopp, Tucker, Knoph, and Ahlgren | 1997 | Mediator | Objective | Team | Partially + | Performance (+); Physical well-being (0); Affective state (0) | Virtual | Experiment | Lab; students | 30 |
| Hertel, Niemeyer, and Clauss | 2008 | Output | Objective | NA | + | Motivation (+) | Virtual vs. f2f | Experiment | Lab; students | 125 |
| Hiltz, Johnson, and Turoff | 1991 | Mediator | Subjective | Team + individual | Partially – | Consensus (–); Performance (–); Participation (–); Satisfaction (0) | Virtual | Experiment | Lab; employees | 24 |
| Hollenbeck, Ilgen, LePine, Colquitt, and Hedlund | 1998 | Mediator | Objective + Subjective | Team | + | Performance (+); Staff validity (+); Informity (+); Hierarchical sensitivity (+) | Virtual | Experiment | Lab; students | 95 |
| Hong, Suh, Riche, Lee, Kim, and Zachry | 2018 | Input | Subjective | Team + individual | + | Communication (+) | Virtual | Experiment | Lab; students | 5 |
| Hsieh and O’Neil | 2002 | Mediator + Output | Objective | Team | + | Performance (+) | Virtual | Experiment | Lab; students | 60 |
| Jaakson, Reino, and McClenaghan | 2019 | Output | Subjective | Team | Partially – | Team trust (0/–)<sup>f</sup>; Trustworthiness (0/–)<sup>f</sup> | Virtual | Experiment | Field; students | 71 |