Abstract

Kinship navigator programs (KNPs) are an important strategy to prevent overreliance on the use of foster care, but the evidence for their effectiveness is still emergent. One of the challenges in building the evidence of KNPs is the lack of culturally responsive measures to assess program-specific outcomes. Four new measures were codesigned with advisors to the Ohio Kinship and Adoption Navigator Program, including service providers and caregivers with lived expertise, to assess the most proximal outcomes of the program: satisfaction with services, self-identified family needs, caregiver resourcefulness to meet children’s needs, and accessibility of community resources. Psychometric testing with 194 kinship caregivers and adoptive parents shows promising evidence for the measures’ reliability and validity. The findings have implications for navigator programs aiming to specify and measure proximal outcomes that are tailored to their program’s design.

Kinship navigator programs (KNPs) are programs that offer an array of services to connect kinship families with services and supports in their communities, with the ultimate goal of supporting caregivers to promote child permanency and well-being. Services provided by KNPs vary in intensity but at minimum tend to include referrals and service navigation to help meet families’ needs. Some programs focus on specific child or adult interventions, but more common elements include service coordination, advocacy, peer support, mentoring, legal assistance, respite care, and crisis intervention (AdoptUSKids, 2015). While federal legislation supports KNPs (Family First Prevention Services Act [FFPSA], 2018) and studies show that kinship caregivers find them helpful (e.g., Rushovich et al., 2017), the state of the evidence for KNPs’ effectiveness is incipient, largely because studies of KNPs have lacked strong designs (Lin, 2014; Rushovich et al., 2021). The Title IV-E Prevention Services Clearinghouse (2021; hereafter “the Clearinghouse”) is responsible for reviewing and rating the evidence of programs eligible for federal reimbursement through FFPSA. As of September 2023, only four KNPs had been rated as “promising” or “supported.”

To build the evidence for KNPs, it is crucial that studies focus on relevant outcomes and assess them with appropriate measures (Rushovich et al., 2021). Existing studies of KNPs have used a wide array of indicators including self-report measures and administrative data to assess outcomes (see Lin, 2014, for a systematic review of measures used). The outcomes studied also range from proximal (e.g., access to resources) to distal (e.g., adult and child well-being and child permanency). The four Clearinghouse-approved KNPs received their ratings based on effects on child permanency outcomes for children and families with open child welfare cases, which were measured using child welfare administrative data (Forehand et al., 2022; Preston, 2021; Schmidt & Treinen, 2021; Wheeler et al., 2020).

Administrative data are an efficient source for certain outcomes (e.g., child permanency and safety) and are considered valid and reliable by the Clearinghouse (Wilson et al., 2019). However, administrative data center child welfare system outcomes and are limited in their ability to capture program participants’ perceptions, such as their experience of services, the specific family-identified needs that were met, and potential improvements related to well-being and family functioning. Moreover, administrative data often do not capture information for families that are not formally involved with the child welfare system, which may curtail the understanding of outcomes for a program’s full service population. For example, although the Arizona Kinship Support Services program (the only KNP currently rated as supported by the Clearinghouse) serves informal kinship families, its evaluation study excluded all informal kinship families from the analysis, because their information was not available in a standardized system (Schmidt & Treinen, 2021).

Self-report measures, on the other hand, are the primary source for understanding participants’ experiences with a program. These perspectives complement administrative data and can be especially necessary when attempting to understand how proximal family outcomes like caregiver well-being and feelings of support contribute to distal outcomes, such as placement stability. Limitations of self-report measures include vulnerability to measurement error, particularly when measures’ psychometric properties have not been studied. While studies of KNPs often use at least some research-validated self-report measures, especially to assess well-being outcomes, these measures are rarely validated specifically with kinship caregivers. The unique circumstances of kinship families raise the question of whether measures developed for the general population of parents are valid for capturing positive outcomes of KNPs (Lee et al., 2016; Lorthridge, Reyes, Rosman, & Kaye, in press). Moreover, even when measures demonstrate adequate properties based on traditional psychometric standards in relevant samples, the perspectives of program service providers and participants should also be assessed to ensure the multicultural validity of measures in the specific context in which the measures will be used. Ideally, measures for new programs would be selected through an in-depth conceptualization of the program’s theory of change, including the outcomes the program intends to impact, and then validated with the program’s service population. This article describes an evaluation team’s effort to collaboratively define outcomes and codesign survey measures in one KNP evaluation. The primary aim of this study is to complete the final step in the measure development process: psychometric testing of the newly developed measures.

Ohio Kinship and Adoption Navigator Program

The Ohio Kinship and Adoption Navigator Program (OhioKAN) is a new statewide program designed to assist kinship and adoptive families. The program is funded by the Ohio Department of Job and Family Services and administered by Kinnect, an Ohio-based nonprofit. OhioKAN is a voluntary program, and its service population is broader than that of many KNPs, as it includes adoptive families and an inclusive definition of kinship families regardless of whether their living arrangements are formal (i.e., facilitated by a child welfare agency) or informal (i.e., formed without child welfare system involvement). The program aims to use information, referrals, advocacy, and ongoing support to (a) enhance kinship and adoptive families’ access to suitable resources, and (b) increase caregivers’ awareness, skills, and confidence in navigating supports to (c) meet their family needs and provide a stable living situation for their children.

In preparation for a statewide cluster randomized effectiveness trial of OhioKAN, the evaluation team codesigned family survey measures based on input from families served and the program theory of change (see https://manual.ohiokan.org/ohiokan-program-manual/theory-change/overview). A complete list of the measures used in the evaluation is provided in the Supplemental Appendix. Consistent with principles of action research (Huang, 2010) and culturally responsive and equitable evaluation (Hood et al., 2015), the evaluation team partnered closely with program staff and advisors with lived experience as kinship caregivers and adoptive parents. Action research aims to generate knowledge about what works within a particular context of practice (Huang, 2010), with a core focus on respect for diversity, drawing on the strengths of communities, reflecting on cultural identities, power-sharing, and colearning (Minkler, 2000). Furthermore, culturally responsive and equitable evaluations reject the notion that evaluation is culture free, and they explicitly seek to use evaluation to advance equity (Hood et al., 2015). This approach to evaluation challenges power structures that privilege evaluators’ perspectives over those of program implementers and service recipients and specifically attends to historically marginalized groups (Hood et al., 2015). A key strategy for advancing equity in evaluation is to center the perspectives of service recipients when defining and measuring outcomes.

Together and grounded in these principles, the team of evaluators, advisors, and program staff developed new survey measures for the following constructs: (a) self-identified family needs, (b) caregiver resourcefulness to navigate the service system and meet their children’s needs, (c) accessibility of community resources, and (d) satisfaction with OhioKAN. The following section defines each construct and identifies how the newly developed surveys complement existing measures used in previous evaluations of KNPs.

Self-Identified Family Needs

The Brief Assessment and Screening to Inform, Connect, and Support (BASICS) was initially designed as a tool that navigators would use when they were first meeting a family to gather information about the family’s self-identified needs. This assessment was intended to be used by navigators in practice to guide decisions about appropriate service provision (e.g., which referrals or resources to provide). In collaboration with OhioKAN program advisors, the evaluation team later transformed the assessment into a retrospective self-report scale to be used in the program evaluation. Both versions of the BASICS assessment ask respondents to rate their need for services across 10 domains (e.g., basic needs, family functioning) that are defined with examples of common needs within that domain (e.g., housing, family communication). The version administered by navigators in practice focuses on families’ needs at the time they reach out to OhioKAN. The self-administered version of the BASICS included in the evaluation asks families to rate their need for service over the past month on a scale of 0 = “no additional support needed” to 4 = “we needed support right away.” A needs sum score ranging from 0 to 40 is generated by adding the responses across the 10 domains. In addition, a total number of needs score, ranging from 0 to 10, can be generated by counting the domains that were rated above 0.
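The two BASICS scores described above can be illustrated with a short sketch. This is only an illustration of the arithmetic, not the evaluation team's scoring code; the function name `basics_scores` and the example ratings are hypothetical.

```python
from typing import Sequence, Tuple

N_DOMAINS = 10                  # the BASICS covers 10 need domains
MIN_RATING, MAX_RATING = 0, 4   # 0 = no additional support needed, 4 = needed right away


def basics_scores(ratings: Sequence[int]) -> Tuple[int, int]:
    """Return (needs sum score, number of needs) for one respondent.

    Hypothetical helper: assumes one complete 0-4 rating per domain.
    """
    if len(ratings) != N_DOMAINS:
        raise ValueError(f"expected {N_DOMAINS} domain ratings")
    if any(not MIN_RATING <= r <= MAX_RATING for r in ratings):
        raise ValueError("ratings must be between 0 and 4")
    needs_sum = sum(ratings)                      # possible range 0-40
    n_needs = sum(1 for r in ratings if r > 0)    # possible range 0-10
    return needs_sum, n_needs


# Hypothetical respondent with needs in four domains.
example = [0, 2, 4, 0, 1, 0, 0, 3, 0, 0]
print(basics_scores(example))  # (10, 4)
```

The sum score weights domains by urgency, whereas the count treats any rating above 0 as the presence of a need.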

The BASICS was designed to fill a need for a brief scale that would capture kinship caregivers’ and adoptive parents’ perception of their family’s need for services. Existing validated scales (e.g., see Dunst, 2022) were found to be too long and lacked validation with kinship or adoptive families. The Family Needs Scale (Dunst et al., 1987) was the only identified measure that had some initial testing with kinship families (Lee et al., 2016), but its 41-item structure made it too burdensome for the type of participant engagement that OhioKAN intended to foster. In addition, existing scales were missing domains that advisors indicated were important to capture with the OhioKAN service population.

To address these gaps, the evaluation team generated an initial list of BASICS items by reviewing existing needs assessments and the literature on kinship and adoptive families’ needs. Additions unique to the BASICS included items to assess needs related to legal matters and the complex family dynamics that kinship caregivers describe as particularly taxing (Breman, 2014; Rubin et al., 2017; Smithgall et al., 2009). After initial items were proposed by the evaluation team, a team of nine program advisors with experience in multiple fields that serve kinship and adoptive families (e.g., child welfare, aging, formal and informal kinship, adoption and postadoption, implementation, and evaluation) individually reviewed the proposed domains. Advisors provided feedback regarding the face validity of domains (i.e., BASICS items) and their respective examples, the adequacy of the response scale, and whether the tool met the program’s data collection goals.

Caregiver Resourcefulness to Meet Children’s Needs

The Caregiver Resourcefulness Scale was developed to capture caregivers’ perceived ability to navigate the service system and meet their children’s needs. Items focus on caregivers’ knowledge of services (e.g., I knew where to find resources . . .), skills and confidence to advocate for services (e.g., I had the skills and confidence to share my opinions with professionals . . .), and perceived ability to meet their children’s needs (e.g., . . . I have felt that my support system and I were capable of taking good care of my child in the ways that are important to me). Items are rated on a 5-point Likert-type scale ranging from “strongly disagree” to “strongly agree.” In addition, four of the 20 items have a “does not apply to me” option, because they are only relevant for families that interacted with the service system in the past month. A resourcefulness mean score ranging from 1 to 5 is calculated by adding all applicable responses and dividing the sum by the number of applicable responses.
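The mean score with a “does not apply to me” option can be sketched as follows. This is a minimal illustration, assuming that inapplicable items are coded as `None`; that coding and the function name `mean_applicable` are assumptions, not details reported for the study.

```python
from typing import Optional, Sequence


def mean_applicable(responses: Sequence[Optional[int]]) -> Optional[float]:
    """Mean of 1-5 ratings, skipping items coded None ("does not apply to me").

    Returns None when no item applies, because a mean is then undefined.
    """
    applicable = [r for r in responses if r is not None]
    if not applicable:
        return None
    if any(not 1 <= r <= 5 for r in applicable):
        raise ValueError("ratings must be between 1 and 5")
    return sum(applicable) / len(applicable)


# Hypothetical respondent: 16 applicable items rated 4, four items not applicable.
print(mean_applicable([4] * 16 + [None] * 4))  # 4.0
```

Dividing by the number of applicable responses keeps scores on the 1 to 5 metric for families that did not interact with the service system in the past month.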

The scale was created to capture OhioKAN’s goal of increasing caregivers’ awareness, skills, and confidence in navigating resources and services to meet children’s physical and emotional needs. The importance of caregivers’ ability to navigate the service system to meet their children’s needs has been well established, although it is often captured within broader constructs such as empowerment (Early, 2001; Koren et al., 1992) and self-efficacy (Bandura, 1977). These conceptualizations were not ideal, because they often relied on individualistic standards of success that were problematic in terms of multicultural validity. For example, the widely used Family Empowerment Scale (FES; Koren et al., 1992), though previously validated with kinship families (Hayslip et al., 2017), includes items like “I believe I can solve problems with my child when they happen” and “When faced with a problem involving my child, I decide what to do and then do it.” Items such as these put the emphasis for solutions on the caregiver and disregard that families may make collaborative decisions (Lorthridge et al., in press). The measure developed in the current study addresses this shortcoming by using a more inclusive reference point for questions that ask about caregivers’ ability to take care of their children (i.e., my family/support system and I were capable of . . .).

More recent measures of resourcefulness based on emerging theories (e.g., Zauszniewski, Au, & Musil, 2012; Zauszniewski & Musil, 2013) focus on the ability to independently perform daily tasks and to seek help from others when unable to function independently. These conceptualizations offer a useful theoretical grounding for integrating proactive help-seeking behavior as an important protective factor in caregiving. The newly developed measure focused on a narrow set of service navigation activities that kinship caregivers and adoptive parents may need to perform to meet their children’s needs and provide a stable home. These service navigation activities are consistent with OhioKAN program theory about intended proximal outcomes and with the broader literature. Caregivers’ knowledge of and self-confidence in working with service providers and agencies, as well as caregivers’ efforts to advocate for and improve services for their children, have been linked to more positive outcomes in parents of children with emotional disturbance (Koren et al., 1992) and grandparents raising noncustodial children (Hayslip et al., 2017). The ability to navigate services may be especially important for kinship caregivers, as their families often face stigmatizing circumstances that keep caregivers from the support that their families need and may result in inequitable treatment by service providers (Hayslip & Kaminski, 2005).

Accessibility of Community Resources

The Accessibility of Community Resources Scale was designed to capture caregivers’ perceptions of the resources in their communities. Items focus on caregivers’ perceptions of access and availability of supports within their community and the extent to which supports are appropriate and effective in meeting kinship and adoptive families’ unique needs. Responses are rated on a 5-point Likert-type scale ranging from “strongly disagree” to “strongly agree.” Two of the eight items have a “does not apply to me” option, as they are only relevant for families that have sought community supports in the past month. A mean score ranging from 1 to 5 is calculated by dividing the sum of all responses by the number of applicable responses.

The scale was developed because the evaluation team did not find adequate tools that assessed kinship and adoptive caregivers’ perceptions of the accessibility of services in their communities. This construct is particularly important to OhioKAN and other navigator programs that rely heavily on accessible and appropriate community-based services to meet families’ needs. The OhioKAN program model and underlying theory of change include community-level activities designed to support community- and system-level changes that are intended to improve families’ access to relevant supports in their communities. Kinship and adoptive families also underscored the importance of understanding whether services are available when assessing whether families are able to access services.

Unlike existing validated tools that assess the helpfulness of potential sources of support more generally (e.g., the Family Supports Scale; Dunst et al., 1986), the newly developed items focus specifically on system-level supports such as community resources, programs, services, and service providers. The new scale also includes references to the appropriateness of services for kinship and adoptive families in particular. Some previous attempts to more specifically assess service receipt and helpfulness in the context of KNPs have relied on long lists of predetermined services that can be burdensome for respondents to complete. For example, the assessment presented by AdoptUSKids (2015) consists of two questions for each of 40 different service types (e.g., psychological assessment, mentor for child, respite care). This type of comprehensive inventory may be useful in supporting kinship navigators’ service coordination strategy, but it is less effective in capturing families’ overall perceptions of their community to measure differences among families or change across time, which were some of the goals of the newly developed scale. In addition, the new eight-item measure met the goal of being brief and not requiring intensive time and attention to complete. The new scale serves as a complement to the Caregiver Resourcefulness Scale, as caregivers’ ability to navigate the service system was expected to vary in relation to caregivers’ experiences with service systems in their communities.

Satisfaction With OhioKAN

Finally, to assess satisfaction with program services, the evaluation team developed the OhioKAN Satisfaction Survey. Families are asked to rate their agreement on 10 items using a 5-point Likert-type scale ranging from “strongly disagree” to “strongly agree.” Items focus on caregivers’ experience in interacting with the navigator (e.g., The OhioKAN navigator seemed to understand my needs.) as well as the quality of the help received (e.g., The resources that OhioKAN shared with me were relevant for my family’s needs and preferences.). The measure also includes an independent item focused on overall satisfaction (Overall, how satisfied were you with the help you received from OhioKAN?), which is rated on a 10-point scale ranging from “extremely dissatisfied” to “extremely satisfied.”

While there are various satisfaction surveys used in human services (e.g., Larsen et al., 1979; McMurtry & Hudson, 2000; Reid & Gundlach, 1984; Yatchmenoff, 2005), generic surveys were not able to adequately capture the specific approaches that OhioKAN has carefully defined and refined to align with its underlying program theory. The current measure was developed by reviewing the OhioKAN theory of change, statement of values, and principles of inclusion, diversity, equity, and accessibility. For instance, since OhioKAN emphasizes meaningful engagement and caregivers’ choice in services as indicators of high-quality services, the scale included items to assess these areas as part of caregivers’ overall satisfaction (e.g., In my interaction with OhioKAN, I felt like I could choose the next steps that were right for me and my family.). Moreover, existing satisfaction surveys have not traditionally integrated kinship and adoptive families’ perspectives about the experiences they would like to have in the program. Additional items in the current survey were identified based on results of qualitative interviews with families that participated in OhioKAN during its early implementation. For instance, since the adequacy of referrals provided was a major theme that emerged when families were asked if they would change anything about their experience with OhioKAN, a specific item related to the quality of referrals was added to the measure (i.e., Resources shared with me were relevant for my family’s needs and preferences).

Method

Approach to Measure Development

In selecting and developing measures, the evaluation team was guided by the goals that the final survey would include:

Items that were culturally appropriate for both kinship and adoptive families and consistent with outcomes identified by OhioKAN service recipients.

Constructs that were plausible, feasible, and specific to OhioKAN.

Strengths-based language that highlighted family resilience.

Brief instruments that did not require intensive time and attention to complete.

Items that were not overly intrusive into the child’s or family’s personal details.

Items that were worded in ways that families understood and were appropriate across different cultural worldviews.

Instruments with sufficient psychometric properties of reliability and validity.

Prioritizing these principles, the evaluation team engaged in the following steps (further detailed in Kaye, Lorthridge, Reyes & Rosman, in preparation) to generate the item pool for each measure and to pretest, revise, and refine items, ultimately arriving at the new measures to test in the current study:

Identifying outcomes of interest by crowdsourcing outcomes from families served in an online survey (Lorthridge et al., in press), prioritizing the program’s theory of change with feedback from OhioKAN service providers, and drawing from existing research on KNPs.

Pretesting identified survey items via cognitive interviews with families and a culturally responsive and equitable evaluation expert review (Kaye et al., in preparation).

Partnering with advisors who were kinship caregivers or adoptive parents to revise measures and draft items for constructs for which appropriate measures were not found.

After reaching consensus with advisors who were kinship caregivers and adoptive parents about the face and multicultural validity of items, the team compiled a survey that included the new measures as well as existing validated measures to use as reference criteria during psychometric testing. The evaluation was determined to be “Exempt” by Solutions IRB, LLC.

Participants

The sampling frame for the survey administration in this study included 696 kinship caregivers and adoptive parents served by OhioKAN between August 2021 and January 2022. Eligible caregivers received an email inviting them to participate in an online survey, as well as up to three automated reminder emails if they had not completed the survey within a week. Respondents were compensated with a gift card for completing the survey. A subsample of respondents that completed the survey was invited to complete a retest administration within 2 weeks of the initial survey.

Measures

Data about family characteristics were derived from program participant information entered by OhioKAN service providers into OhioKAN’s administrative database. Families provided consent to the use of their demographic information for evaluation purposes when they requested OhioKAN services. In addition to the four newly developed measures, the online survey included two subscales from the FES (Koren et al., 1992) to use as the reference in correlations to assess criterion-related validity. Scores on the FES were hypothesized to be positively correlated to scores on the Caregiver Resourcefulness Scale and negatively correlated to scores on the BASICS. The full FES consists of 34 items that developers frame as representing empowerment across three levels: the family, the service system, and the community/political system (Koren et al., 1992). Similar to other validation studies focused on kinship caregivers that excluded items that were not realistically expected to apply to kinship caregivers (Hayslip et al., 2017), the political engagement subscale was excluded, as its items were beyond the scope of this study’s conceptualization of resourcefulness to navigate the service system to meet children’s needs. The FES response options are on a 5-point Likert-type scale (ranging from “never” to “very often”) and the scoring procedure involves summing the items within each subscale and summing all the items for a total scale score. The FES has demonstrated adequate psychometric properties with internal consistency ranging from .87 to .88 across the three subscales and test–retest reliability correlations ranging from .77 to .85 (Huscroft-D’Angelo et al., 2018).

On average, respondents completed the full online survey in 26 (range, 8–59) min. The newly developed measures comprised slightly less than half of the full survey (four of 10 scales), and 70% of all surveys started were completed. The completion time and completeness observed were likely influenced by the additional scales included in this survey to assess criterion validity. When the new measures are used as part of evaluation studies, the authors expect that completion time would decrease and completeness would increase.

Data Analysis

Distributions of items within a scale and total scale scores were explored with frequencies and tests of skewness and kurtosis. Items with skew values above 3.0 are considered extremely skewed and items with kurtosis values above 10.0 can potentially be problematic for inclusion in factor analyses, as they can bias the parameters of the factor model (Kline, 1998). All items were examined to confirm that they garnered responses in each response category.
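The item screening described above can be sketched in a few lines. This is an illustrative sketch only: it uses moment-based skewness and excess kurtosis (normal distribution = 0) and compares absolute values with the thresholds, both of which are assumptions, since the source does not state which estimator or convention the statistical software applied. The function names and the example item are hypothetical.

```python
from typing import Dict, Iterable, Sequence, Tuple

SKEW_LIMIT = 3.0        # beyond this, an item is treated as extremely skewed
KURTOSIS_LIMIT = 10.0   # beyond this, an item may bias factor-model estimates


def skew_and_kurtosis(values: Sequence[float]) -> Tuple[float, float]:
    """Moment-based skewness and excess kurtosis (an assumed convention)."""
    n = len(values)
    if n == 0:
        raise ValueError("no responses")
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    if m2 == 0:
        raise ValueError("item has no variance")
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def screen_item(values: Sequence[int],
                categories: Iterable[int] = range(1, 6)) -> Dict[str, object]:
    """Flag extreme skew or kurtosis and check that every category was used."""
    skew, kurt = skew_and_kurtosis(values)
    return {
        "skew": skew,
        "kurtosis": kurt,
        "extreme_skew": abs(skew) > SKEW_LIMIT,
        "extreme_kurtosis": abs(kurt) > KURTOSIS_LIMIT,
        "all_categories_used": set(categories) <= set(values),
    }


# Hypothetical item on which 88% of respondents chose the top category.
item = [5] * 88 + [4] * 7 + [3] * 3 + [2] + [1]
print(screen_item(item))
```

An item flagged by this kind of screen is not necessarily dropped; it is a candidate for a sensitivity check, such as rerunning a factor analysis with and without it.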

Missing data were less than 5% for each item across all respondents. To calculate scores for each scale, respondents were included if they answered at least 70% of the items within the scale. Missing responses for included cases were replaced by the person-specific mean of answered items within the scale. Scores for scales with the response option “Not applicable” were calculated by dividing the total score by the number of scale items minus the number of items marked “Not applicable.” Total scores for other scales were calculated by summing the responses across all scale items.
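The inclusion and imputation rule above can be sketched as follows. This is a minimal sketch that assumes missing responses are coded as `None`; how “Not applicable” responses counted toward the 70% threshold is not stated in the source, so they are left out here. The function name and example data are hypothetical.

```python
from typing import List, Optional, Sequence

MIN_PERCENT_ANSWERED = 70  # respondents need at least 70% of a scale's items


def impute_person_mean(responses: Sequence[Optional[float]]) -> Optional[List[float]]:
    """Apply the 70% rule, then fill gaps with the respondent's own mean.

    Returns None when the respondent answered too few items to be scored.
    """
    answered = [r for r in responses if r is not None]
    # Integer comparison avoids floating-point edge cases at exactly 70%.
    if len(answered) * 100 < MIN_PERCENT_ANSWERED * len(responses):
        return None
    person_mean = sum(answered) / len(answered)
    return [person_mean if r is None else r for r in responses]


# Hypothetical 10-item scale: 8 of 10 answered, so the case is kept.
kept = impute_person_mean([3, 4, None, 2, 5, 3, None, 4, 4, 3])
print(kept, sum(kept))

# Only 6 of 10 answered, so the case is excluded from scoring.
print(impute_person_mean([3, None, None, 2, None, 3, None, 4, 4, 3]))  # None
```

Person-mean imputation leaves a respondent's scale mean unchanged while allowing sum scores to be computed on the full item count.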

Reliability, or the scale’s consistency and likelihood to yield stable results over time, was assessed in two ways. First, to assess the degree of interrelationship among items within a scale (the scale’s internal consistency), Cronbach’s alpha and McDonald’s omega total (McDonald, 1970, 1999) were calculated. Higher internal consistency provides stronger evidence that an instrument’s items measure the same underlying theoretical construct. While Cronbach’s alpha is the most commonly used reliability index, a number of recent critiques have shown that alternative methods, such as omega total, have more realistic assumptions and are thus more adequate than Cronbach’s alpha (McNeish, 2018; Sijtsma, 2009; Zinbarg et al., 2006). For example, Cronbach’s alpha assumes that each item on a scale contributes equally to the total scale score (i.e., tau equivalence), whereas omega is more appropriate when items vary in how strongly they are related to the construct being measured (McNeish, 2018). Omega total was calculated by first conducting a factor analysis in Stata (version 17) and then entering the standardized factor loadings and error variance estimates in the spreadsheet provided by McNeish (2018). Cronbach’s alpha was calculated with the alpha command in Stata. In line with conventional standards, an alpha and omega of at least .70 were expected to consider a scale as having acceptable internal consistency (Bandalos, 2018). Second, the test–retest reliability of each scale was assessed by calculating Pearson’s correlation between the scale scores at the initial and retest administration. Significant correlations greater than or equal to .70 were considered acceptable (Nunnally & Bernstein, 1994).
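To make the two internal consistency indices concrete, the sketch below computes Cronbach’s alpha from raw item responses and omega total from standardized loadings and error variances. It is an illustration in Python rather than the Stata and spreadsheet workflow used in the study, and the omega formula shown is the common single-factor form, (Σλ)² / ((Σλ)² + Σθ), which is assumed here rather than taken from the spreadsheet. The data and loadings are hypothetical.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha; each row holds one respondent's item responses."""
    k = len(rows[0])
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def omega_total(loadings: Sequence[float],
                error_variances: Sequence[float]) -> float:
    """Omega total for a single-factor model (assumed form).

    loadings: standardized factor loadings (lambda), one per item
    error_variances: unique variances (theta), one per item
    """
    common = sum(loadings) ** 2
    return common / (common + sum(error_variances))


# Hypothetical two-item scale answered by four respondents.
data = [[1, 2], [2, 1], [3, 4], [4, 3]]
print(round(cronbach_alpha(data), 2))  # 0.75

# Hypothetical loadings that differ across items (not tau-equivalent).
lam = [0.8, 0.7, 0.5]
theta = [1 - l ** 2 for l in lam]      # unique variance of a standardized item
print(round(omega_total(lam, theta), 2))
```

When all loadings are equal the two indices coincide; they diverge as loadings become more unequal, which is the situation in which omega is generally preferred.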

Validity, or the extent to which scales measure the intended latent dimension, was also assessed in several ways. First, criterion-related validity tests were conducted by calculating Pearson’s correlations between a criterion and the new scale. Stronger correlations between two constructs that are thought to be related indicate higher validity of the instrument being tested. Correlations between a test instrument and its criterion should be statistically significant and in the expected direction (Bandalos, 2018). The criterion used for the Caregiver Resourcefulness Scale and the BASICS was the FES. The criterion for the satisfaction scale was a one-item indicator of general satisfaction. The correlation between the Accessibility of Community Resources Scale and the Caregiver Resourcefulness Scale was also assessed. Factor analyses were conducted for the Caregiver Resourcefulness Scale to determine whether the proposed items loaded onto discrete factors. Principal component analysis was conducted utilizing promax rotation, because the factors were thought to be correlated. A scree plot and interpretability were used to help determine the most appropriate number of factors to retain.
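The correlation-based checks described above (criterion-related validity and, earlier, test–retest reliability) rest on Pearson’s r. The sketch below computes r and its t statistic and checks the direction of the association against a hypothesis; p values are omitted because they require a t distribution that the Python standard library does not provide. All names and data are hypothetical.

```python
from math import sqrt
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's product-moment correlation between two score lists."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two equally long lists with at least 3 scores")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def t_statistic(r: float, n: int) -> float:
    """t statistic for testing r against zero, with n - 2 degrees of freedom."""
    return r * sqrt((n - 2) / (1 - r * r))


def in_expected_direction(r: float, expected_sign: int) -> bool:
    """True when r has the hypothesized sign (+1 or -1)."""
    return r * expected_sign > 0


# Hypothetical scores: higher empowerment paired with lower need.
empowerment = [3.1, 3.8, 2.5, 4.2, 3.0, 4.5]
needs_sum = [18, 10, 25, 9, 15, 4]
r = pearson_r(empowerment, needs_sum)
print(round(r, 2), in_expected_direction(r, expected_sign=-1))
```

The same `pearson_r` function applies to test–retest reliability by passing the scale scores from the initial and retest administrations and comparing the result with the .70 benchmark.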

Following data analyses, the evaluation team shared results of the psychometric testing and factor analyses with advisors with lived expertise. Advisors confirmed the face validity of identified scales and subscales and helped to name each of the identified scales.

Results

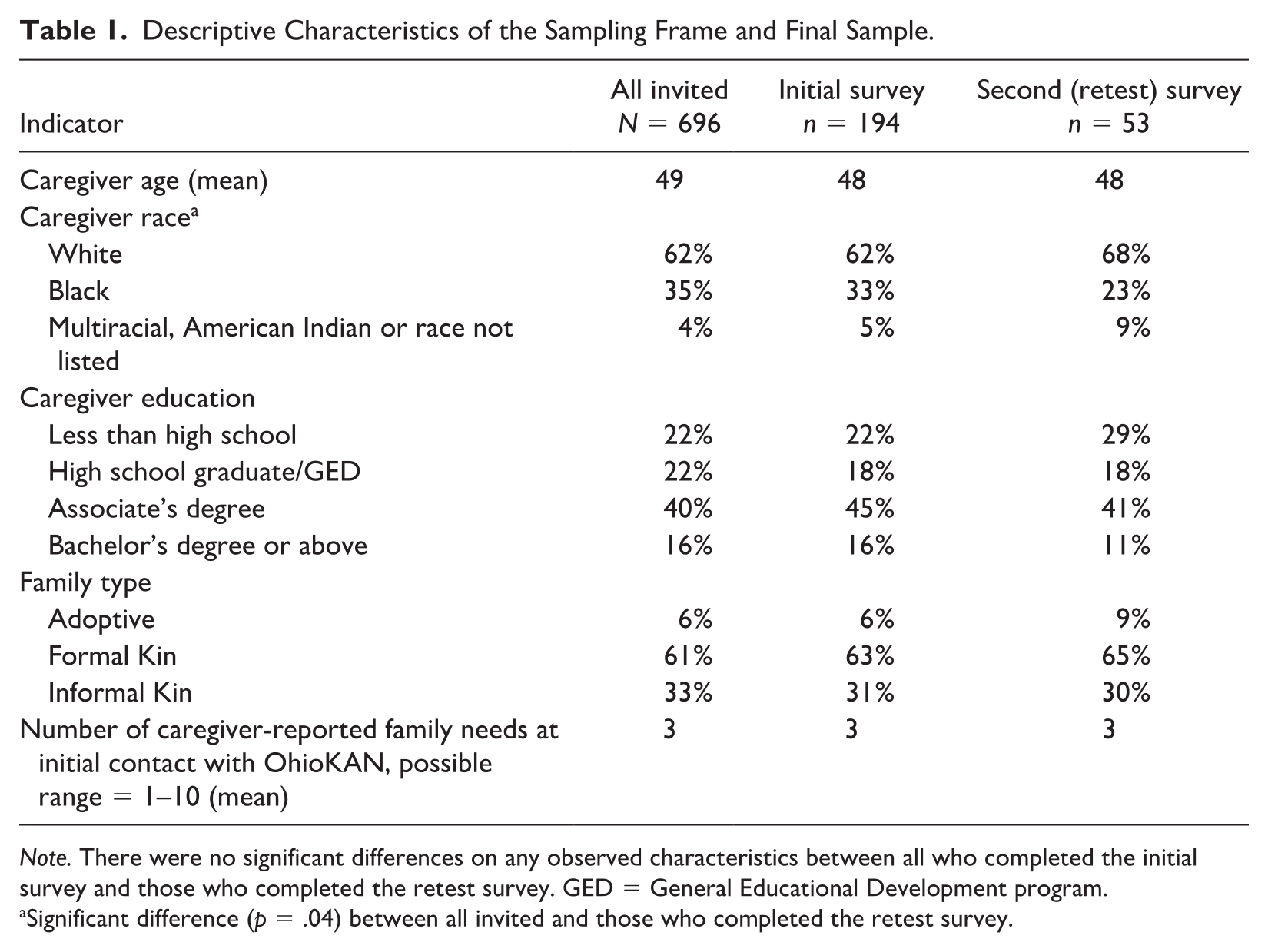

A total of 194 caregivers completed the initial survey. A subsample of 53 participants also completed the second administration. On average, there were 19 (range, 14–27) days between administrations. The initial survey response rate of 28% is reflective of the participant engagement challenges common to studies that rely on online surveys without additional active recruitment strategies (Fan & Yan, 2010). Despite the relatively low response rate, the families who responded to the survey were similar to families that did not respond to the survey in demographic characteristics. Descriptive characteristics of participants are shown in Table 1.

Table 1. Descriptive Characteristics of the Sampling Frame and Final Sample.

Note. There were no significant differences on any observed characteristics between all who completed the initial survey and those who completed the retest survey. GED = General Educational Development program.

Significant difference (p = .04) between all invited and those who completed the retest survey.

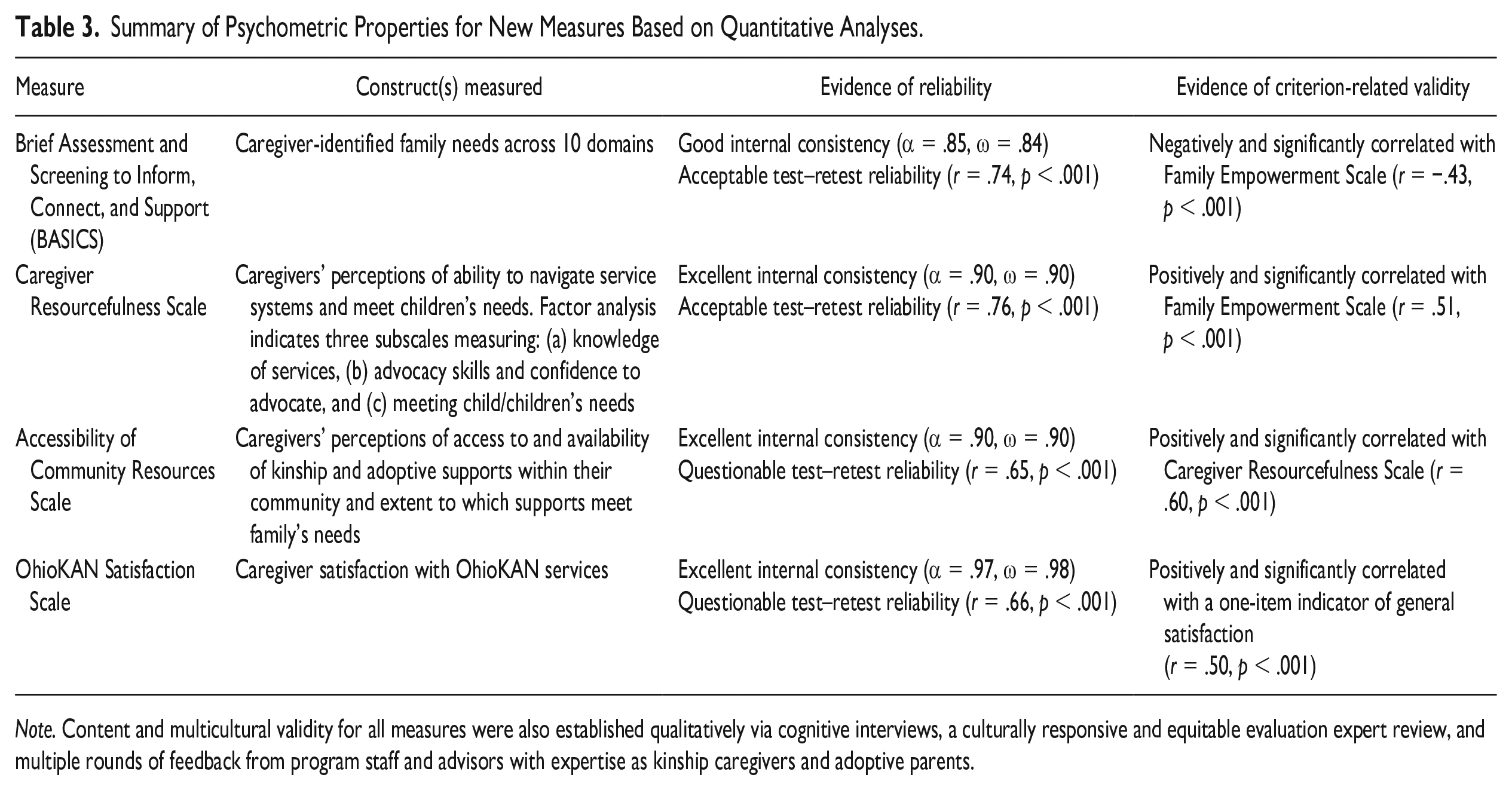

Brief Assessment and Screening to Inform, Connect, and Support

Normality analyses indicated that skewness and kurtosis were within acceptable ranges for all of the BASICS items. Test–retest reliability between sum scores at the first survey administration and the retest was acceptable (r = .74, p < .001). Interrelationships among items were explored with Cronbach’s alpha and McDonald’s omega total, and though they were not expected to be very high due to items representing different domains, results showed that the scale had good internal consistency (α = .85, ω = .84), meaning that needs were interrelated even across different domains. The sum score was significantly and negatively correlated to the average score of the FES (r = −.43, p < .001), indicating evidence of criterion-related validity.

The number of needs score (range, 0–10) showed a weak but statistically significant correlation between the first and second administration (r = .40, p < .001). Spearman’s correlations of all dichotomous indicators of the presence of a need within each domain at the first and second administration points were statistically significant, ranging from ρ = .38 to .61. As expected, the number of needs score was also significantly and negatively correlated to the average score of the FES (r = −.49, p < .001).
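
The two kinds of stability estimates described above can be sketched in a few lines of Python: a Pearson correlation between count scores at two administrations, and Spearman's correlation between dichotomous need indicators within a domain. All data and function names below are hypothetical, and only three domains are shown for brevity (the number of needs score in the study ranges from 0 to 10).

```python
def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))


# Hypothetical data: rows are caregivers, columns are need domains (1 = need present).
time1 = [[1, 0, 1], [0, 0, 0], [1, 1, 1], [0, 1, 0], [1, 1, 0]]
time2 = [[1, 0, 0], [0, 0, 1], [1, 1, 1], [0, 0, 0], [1, 1, 0]]

# Number-of-needs score at each administration, then its test-retest correlation.
count1 = [sum(row) for row in time1]
count2 = [sum(row) for row in time2]
print("count score r:", round(pearson_r(count1, count2), 2))

# Domain-level stability of the dichotomous indicators.
for d in range(3):
    rho = spearman_rho([row[d] for row in time1], [row[d] for row in time2])
    print("domain", d, "rho:", round(rho, 2))
```

For two dichotomous variables, Spearman's rho computed this way equals the phi coefficient, so the domain-level values can be read as agreement in the presence of a need across administrations.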

Caregiver Resourcefulness Scale

Normality analyses indicated that skewness and kurtosis were within acceptable ranges for all items except “Providing a safe and stable home.” Specifically, this item was highly negatively skewed, with 88% of respondents endorsing the maximum response option (i.e., “strongly agree”). Cronbach’s alpha and omega total based on the 20 items both indicated that the scale had excellent internal consistency (α = .90, ω = .90). Excluding any of the items did not increase the total alpha. Test–retest reliability between average scores at the first survey administration and the retest was acceptable (r = .76, p < .001). The average score was significantly correlated with the average score of the combined FES subscales (r = .51, p < .001), providing some evidence of criterion-related validity.
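
To illustrate why an item with most responses at the ceiling shows strong negative skew, the following Python sketch computes moment-based skewness and excess kurtosis. The 1–5 response coding, the made-up response counts, and the function names are assumptions for illustration only; the study's item-level data and its cutoffs for "acceptable" ranges are not reproduced here.

```python
def central_moment(xs, order):
    """Population central moment of the given order."""
    mean = sum(xs) / len(xs)
    return sum((x - mean) ** order for x in xs) / len(xs)


def skewness(xs):
    """Moment-based skewness: m3 / m2^1.5 (0 for a symmetric distribution)."""
    return central_moment(xs, 3) / central_moment(xs, 2) ** 1.5


def excess_kurtosis(xs):
    """Moment-based excess kurtosis: m4 / m2^2 - 3 (0 for a normal distribution)."""
    return central_moment(xs, 4) / central_moment(xs, 2) ** 2 - 3


# Hypothetical item coded 1-5 where 88 of 100 respondents choose the maximum.
ceiling_item = [5] * 88 + [4] * 8 + [3] * 4
# Hypothetical item with responses spread evenly across the scale.
even_item = [1, 2, 3, 4, 5] * 20

print("ceiling item skewness:", round(skewness(ceiling_item), 2))
print("even item skewness:", round(skewness(even_item), 2))
```

A long tail toward the lower response options pulls the third central moment negative, which is the pattern a ceiling effect produces.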

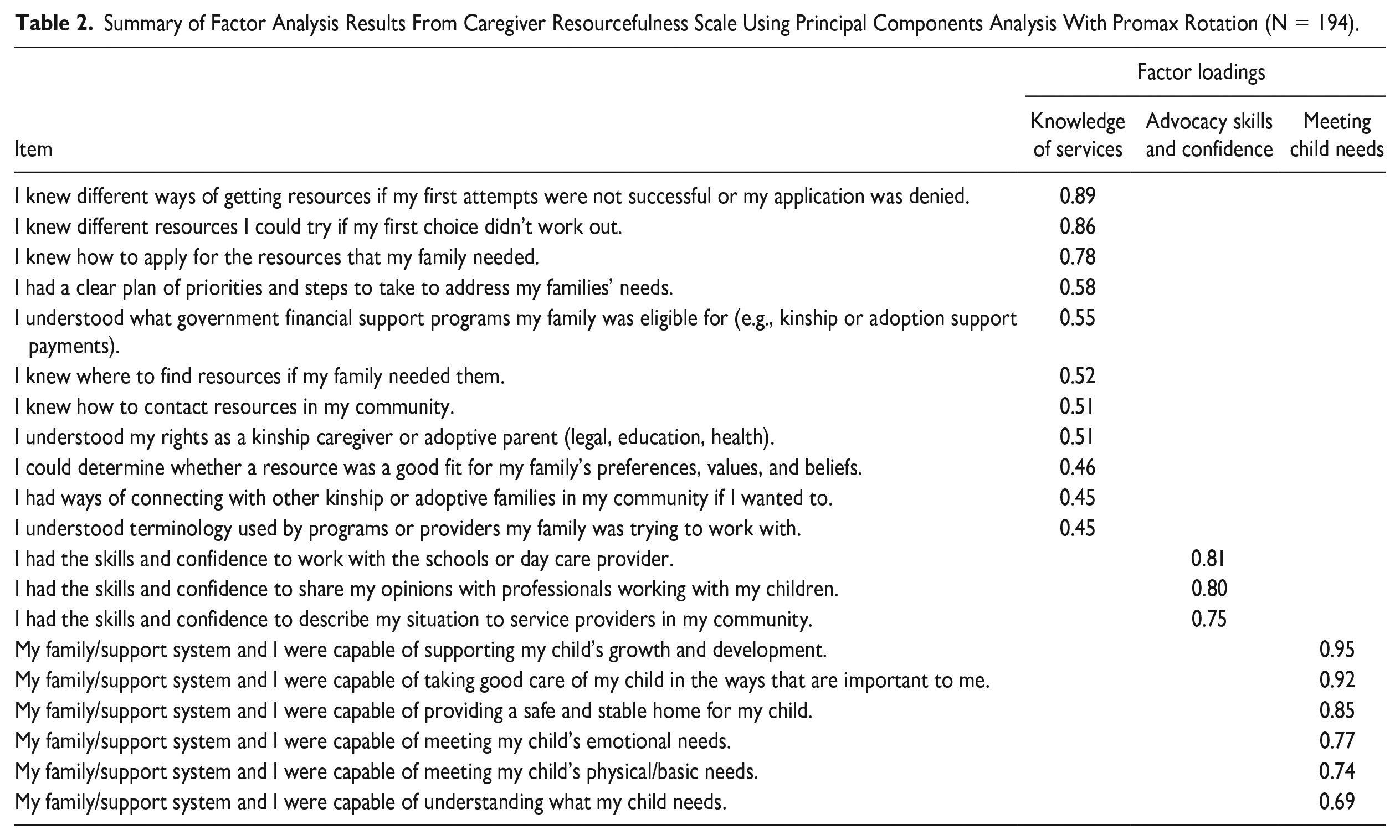

Results of exploratory factor analysis initially yielded 10 components. After examination of a scree plot, three factors with eigenvalues above 1 were retained, as these also aligned with the conceptual differentiation between subconstructs: (a) knowledge of services, (b) advocacy skills and confidence, and (c) meeting children’s needs. Subscales based on the three factors also had high internal consistency (α = .90 for knowledge of services; α = .78 for advocacy skills and confidence; and α = .92 for meeting children’s needs). To assess whether the item with high kurtosis was biasing factor analysis estimates, the analysis was conducted with and without that item. Since results were consistent in both iterations, the item was retained. Factor loadings on the promax rotated matrix from a three-factor analysis are shown in Table 2.
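
The eigenvalue-above-1 rule mentioned above can be sketched as follows. This is a minimal, dependency-free illustration, assuming a small hypothetical item correlation matrix; it is not the study's analysis, and it does not perform promax rotation. In practice a numerical library routine (for example, `numpy.linalg.eigvalsh`) would normally replace the hand-written Jacobi solver used here to keep the example self-contained.

```python
import math


def symmetric_eigenvalues(matrix, max_sweeps=50, tol=1e-12):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations, largest first."""
    a = [row[:] for row in matrix]
    n = len(a)
    for _ in range(max_sweeps):
        # Stop once the off-diagonal mass is negligible.
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                diff = a[q][q] - a[p][p]
                # Rotation angle that zeroes the (p, q) element.
                theta = math.pi / 4 if diff == 0 else 0.5 * math.atan(2 * a[p][q] / diff)
                c, s = math.cos(theta), math.sin(theta)
                for k in range(n):  # rotate columns p and q
                    akp, akq = a[k][p], a[k][q]
                    a[k][p] = c * akp - s * akq
                    a[k][q] = s * akp + c * akq
                for k in range(n):  # rotate rows p and q
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k] = c * apk - s * aqk
                    a[q][k] = s * apk + c * aqk
    return sorted((a[i][i] for i in range(n)), reverse=True)


# Hypothetical correlation matrix for four items forming two clusters.
corr = [
    [1.0, 0.6, 0.0, 0.0],
    [0.6, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.5],
    [0.0, 0.0, 0.5, 1.0],
]
eigenvalues = symmetric_eigenvalues(corr)
retained = [value for value in eigenvalues if value > 1.0]
print("components with eigenvalue above 1:", len(retained))
```

Because the eigenvalues of a correlation matrix sum to the number of items, a component with an eigenvalue above 1 accounts for more variance than any single standardized item, which is the rationale for the cutoff. The scree plot and conceptual fit described in the text serve as additional checks on that count.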

Table 2. Summary of Factor Analysis Results From Caregiver Resourcefulness Scale Using Principal Components Analysis With Promax Rotation (N = 194).

Accessibility of Community Resources Scale

Normality analyses indicated that skewness and kurtosis were within acceptable ranges for all of the Accessibility of Community Resources Scale items. Cronbach’s alpha and omega total based on the eight items also indicated that the scale had excellent internal consistency (α = .90, ω = .90). Excluding any of the items did not increase the total alpha. Test–retest reliability between the first survey and the retest was questionable (r = .65). The average score was positively and significantly correlated with the average score for the Caregiver Resourcefulness Scale, suggesting evidence of criterion-related validity (r = .60, p < .001).

OhioKAN Satisfaction Scale

Normality analyses indicated that skewness and kurtosis were within acceptable ranges for all of the satisfaction items. Test–retest reliability between the sum score at the first survey administration and the retest was questionable (r = .66, p < .001). Cronbach’s alpha and omega total based on the 10 items indicated that the scale had excellent internal consistency (α = .97, ω = .98). The sum score was significantly and positively correlated (r = .50, p < .001) with the face-valid, single-item indicator of overall satisfaction (“Overall, how satisfied were you with the help that you received from OhioKAN?”), which ranged from 1 (“extremely dissatisfied”) to 10 (“extremely satisfied”). A summary of findings for the new measures is shown in Table 3.

Table 3. Summary of Psychometric Properties for New Measures Based on Quantitative Analyses.

Note. Content and multicultural validity for all measures were also established qualitatively via cognitive interviews, a culturally responsive and equitable evaluation expert review, and multiple rounds of feedback from program staff and advisors with expertise as kinship caregivers and adoptive parents.

Discussion

The current study described the development and testing of four new measures to assess outcomes relevant to KNPs. Unlike measures used in prior KNP evaluations, which relied heavily on administrative data, the newly developed measures were specifically designed to reflect the priorities of a KNP and its service population. In addition to having strong validation from program advisors and families with lived expertise as kinship caregivers and adoptive parents, the results of the current study suggest that the new measures have adequate psychometric properties.

While all the measures demonstrated some initial evidence of reliability and validity, test–retest reliability coefficients for the Accessibility of Community Resources Scale and the OhioKAN Satisfaction Scale were below the most often cited standards for “acceptable” (Nunnally & Bernstein, 1994). Guidance from the field of psychometrics acknowledges that standards for judging the minimum acceptable value for a test–retest reliability estimate are limited (Crocker & Algina, 1986), and the coefficients for both measures were still above the Clearinghouse requirements (Wilson et al., 2019). Nonetheless, these two measures warrant continued exploration because they may be less stable across time or may need refinement.

Implications for Practice

This initial validation of new measures holds promise for advancing efforts to build the evidence of KNPs. Programs are better positioned to conduct rigorous evaluations when they utilize measures that are tailored to feasible outcomes based on the program design. The new measures presented in this study may serve as useful, time-saving resources for evaluators seeking to assess similar outcomes in other KNPs, especially if choosing tailored measures that also meet Clearinghouse’s standards for reliability and validity is a priority. Researchers and evaluators seeking to codesign new measures to assess different outcomes specific to another program may also benefit from considering the process used to develop the measures in the current study and further detailed in Kaye et al. (in preparation).

Data collected with the new measures have the potential to provide a more nuanced understanding of kinship and adoptive families’ experiences with KNPs and whether specific program components may drive observed benefits. By longitudinally assessing the full sequence of OhioKAN’s intended outcomes, the program will have access to a comprehensive picture of its impact in different areas of families’ lives across time. Likewise, by conceptualizing constructs as multifaceted (e.g., caregiver resourcefulness as consisting of knowledge as well as skills and confidence), results can more precisely explain if KNPs impact a particular facet of a construct more than others. Measures of interrelated constructs can be used to model a program’s theory by estimating multiple effects simultaneously in path or structural equation models that can account for bidirectionality, mediation, and moderation effects. These data can in turn inform resource allocation for specific program components and modifications for second-generation interventions (Hennessy & Greenberg, 1999).

Limitations and Future Directions

All samples have limitations that may introduce bias into the results. Although a strength of this study was that the sample was representative of the population served by OhioKAN on most of the characteristics measured (Table 1), a convenience sample was used and the response rate was low (28%). The possibility that nonrespondents differed systematically from respondents on unobserved characteristics cannot be ruled out. The convenience sample consisted of families at different points in their service experience with OhioKAN. It is possible that time since intake and the level of contact with OhioKAN influenced families’ responses. The sample was also smaller than ideal for a psychometric study of multiple measures. Future analyses that include larger samples should standardize time by assessing the psychometric properties of measures administered at consistent timeframes after intake.

Consistent with both the population of Ohio and OhioKAN’s service population, the vast majority of families identified as either white or Black/African American, and only 4% of families identified as part of a different racial group. The limited heterogeneity of the sample in terms of racial characteristics limits the generalizability of findings. Future studies that explore the reliability and validity of these measures with more diverse samples and disaggregate patterns by racial group and other meaningful characteristics are important next steps. In addition, the only validated criterion included in the current study was the FES. Correlating the new measures to a wider set of existing validated measures could better establish the validity of the new measures and differentiate their constructs. A larger sample and more validated criteria would also expand options for tests of discriminant validity and confirmatory factor analyses that could provide insights into the usefulness of subscales.

Conclusion

The BASICS, Caregiver Resourcefulness Scale, Accessibility of Community Resources Scale, and OhioKAN Satisfaction Scale are new measures, developed in close collaboration with program advisors and tailored specifically to assess outcomes relevant to KNPs. Initial evidence suggests these measures generally meet conventional standards for reliability and validity while employing survey development strategies that promote equity and cultural responsiveness. Additional testing is needed to better understand these measures’ use over time and with a broader population. The measures provide a relevant and accessible resource for teams invested in building the evidence for KNPs.

Supplemental Material

Supplemental material, sj-docx-1-fis-10.1177_10443894231208564 for Initial Evidence for the Reliability and Validity of New Survey Measures to Evaluate Family Outcomes of Kinship and Adoption Navigator Programs by Lucia M. Reyes, Sarah Kaye and Stephanie Hood in Families in Society

Footnotes

Acknowledgements

We, the authors, thank the Ohio Kinship and Adoption Navigator (OhioKAN) Program, Kinnect, and the Ohio Department of Job and Family Services (ODJFS) for their support and acknowledge that the findings and conclusions presented in this report are ours alone, and do not necessarily reflect the opinions of OhioKAN, Kinnect, or ODJFS.

We are grateful to the many OhioKAN advisors and program participants that provided feedback during the measure selection and development process. We especially thank Dr. Adriennie Hatten, Dr. Elisa Rosman, Dr. Emily Smith Goering, and Arlene Jones for participating in multiple rounds of careful review, feedback, and revisions while co-designing the measures in this study. We are also grateful to Dr. Jaymie Lorthridge for her culturally responsive and equitable evaluation (CREE) expert review and guidance.

Disposition editor: Cristina Mogro-Wilson

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This study was funded by the Ohio Department of Job and Family Services (ODJFS) through contract with Kinnect, as part of a larger evaluation of the Ohio Kinship and Adoption Navigator Program (OhioKAN).

Supplemental Material

Supplemental material for this article is available online.

References
