Abstract

The Australian government spends millions of dollars funding new programs every year. Taxpayers, policy makers, school leaders, teachers, and students need to know whether these programs are good. Legislation ensures they are evaluated, but do those evaluations report what good looks like, how good these programs are, and for whom? This research sought an answer to that question by analysing publicly available educational evaluations using a new conceptual framework that integrated the logic of evaluation and evaluative reasoning. Both are essential to making a credible, valid, and defensible claim about how good something is: the logic of evaluation makes the judgement legitimate, and evaluative reasoning justifies it. We examined 37 reports with our framework, using an adapted systematic quantitative analysis method. Only four provided a legitimate and justified evaluative judgement; the rest we categorised as research – not evaluation. Based on our findings, we propose an updated conceptual framework, which we call the ‘stairways to heaven’, that clarifies the steps of evaluation in contrast to those of research. The evaluation stairway clarifies the logic, its justifications, and their relationship, integrating current resources for evaluation practice. Evaluators, evaluation commissioners, and users can use it to clarify when evaluation is needed and to obtain genuinely evaluative evaluations that connect values and data to decision-making to drive positive social change.

What We Already Know

The difference between evaluation and research is centred on understanding context and ascribing value, merit, or worth to an evaluand or aspects of it. Theoretically, evaluations should work through a process of the logic of evaluation to make legitimate and justified arguments. Evaluative reasoning is necessary to surface arguments and ensure that evaluations are credible and defensible.

The Original Contribution the Article Makes to Theory and/or Practice

Surfaces the absence of evaluative reasoning in publicly available evaluations of Australian educational sector programs and problematises its impact. Uses a new integrated logic of evaluation and evaluative reasoning framework to visualise how they interact to build credible, valid, and defensible arguments. Proposes the ‘stairways to heaven’ conceptual model to support future evaluation practice.

Introduction

Education is a fundamental human right, essential for equitable participation in society, and a lever for reducing inequality (Mathison, 2010; United Nations, 1948, 2020). In Australia, the Federal Government spends billions annually on educational interventions such as the Better and Fairer Schools Initiative. The 2025–2026 budget provided $32.2 billion through this initiative to fund efforts across school sectors targeted at vulnerable students, including the Consent and Respectful Relationships Program ($20.4 M) and the National Student Wellbeing Program ($61.4 M) (Australian Government, 2025). Taxpayers, communities, students, teachers, and schools all have a right to know what good ‘looks like’ for education interventions like these, whether good is happening, and to what extent. Theoretically, evaluation can provide both learning and accountability to address this need (Mathison, 2010; Ni, 2010; OECD, 2013). The Australian government has both a stewardship responsibility and a legislative requirement (Australian Government, 2013; Australian Government The Treasury, n.d.) to evaluate these interventions.

Our study started within this context, wondering whether evaluation in Australia is answering the question ‘What does good education look like?’ In sectors such as education, where budgets, legislation, and stewardship make the stakes high, stakeholders need to be sure that judgements about goodness are credible, valid, and defensible. Within evaluation, following the process of evaluative reasoning results in judgements with those qualities. To answer our question, we needed a framework to recognise evaluative reasoning and a sample of evaluations to study. In this article, we describe and operationalise the concept of evaluative reasoning based on the literature and empirical research, report on our findings when we used it to analyse publicly available evaluation reports from 2014 to 2024 in the context of education in Australia, discuss the implications for public policy and practice, and propose a revised framework to facilitate evaluative reasoning in future practice.

Conceptual Framework for Evaluative Reasoning

Evaluation has often been defined as the process of generating judgements of merit, worth, and significance (Fitzpatrick et al., 2023; Owen, 2020; Patton, 2018a, 2020; Schwandt, 2008; Scriven, 1995; Stufflebeam & Coryn, 2014). In plain language, this means answering the question ‘what does good look like?’ for the item being evaluated (Gullickson, 2020). Evaluative reasoning (Davidson, 2014a; Gullickson, 2020; House, 1977; Hurteau et al., 2009; Meldrum, 2022; Nunns, 2016; Nunns et al., 2015) is necessary to make a valid claim about the goodness of something. It resides in the literature alongside two other concepts: evaluative attitude and evaluative thinking. Evaluative reasoning, attitude, and thinking all interact in the development of an evaluation and should be reflected in its ‘output’ (usually a report). We begin by clarifying the relationship among them to establish the space for our framework.

Davidson (2005) presented evaluative attitude as the personal orientation of the evaluator: ‘all serious criticism’ is valuable because it allows you to correct and/or clarify. Knowing this, and actively seeking out such criticism, is central to being a good evaluator. After all, useful criticism is what we sell, so seeking it out ourselves is ‘walking the talk’ (p. 35). This disposition is an essential first step to critical thinking in general, and so it is important for evaluators, commissioners, program staff, and others (Abrami et al., 2008). Evaluative attitude is particularly important for leaders, as their orientation to criticism influences the culture and practices throughout the organisation – regardless of official policy (Friedman, 2007; Gullickson, 2010; Schein, 2010).

Several authors have defined evaluative thinking as the application of critical and other types of thinking in an evaluative context (Archibald, 2024; Buckley et al., 2015; Cole, 2023; Paproth et al., 2023; Patton, 2018b; Vo et al., 2018). An evaluative context includes an organisation, team, or program; the needs and strengths of the contexts in which they are operating; the way they have framed the needs or problem they want to address in that context; the goals, policies, and programs that have been designed and created in response; and how those solutions are implemented and the results they produce. Across all these areas, answering the question of ‘what good looks like’ implies adding data and critical thinking, at the individual, team, and/or organisational level, to the prerequisite evaluative attitude. It also requires robust arguments about what good looks like, which requires evaluative reasoning.

Evaluative reasoning is at the centre of Clinton and Hattie’s (2021) discussion of evaluative thinking: ‘a higher order, cognitively complex notion, grounded in procedural logic combining relevant values with nonevaluative data to achieve the task of making an evaluative judgement’ (p. 102,006). Evaluative reasoning establishes the logic of evaluation as the procedural logic (Fournier, 1995b; Scriven, 1991, 1994, 2007) that is necessary to generate a value judgement. Doing evaluative reasoning requires the knowledge and skill to bring together relevant values with evidence in a series of connected arguments that together make a legitimate and defensible claim about the goodness of something. Evaluative reasoning is the focus of this article and thus the basis of our theoretical framework.

The definitions above have implications for skills, knowledge, and action related to how taxpayer dollars are spent in education. Those doing evaluation need to have an evaluative attitude and be able to recognise, do, and deliver quality evaluative thinking and evaluative reasoning. Potentially, those commissioning and using evaluation also need to have an evaluative attitude, recognise and do evaluative thinking, and recognise the presence or absence of evaluative reasoning and, ideally, its quality. In all cases, evaluative reasoning is fundamental. In the next sections, we further discuss evaluative reasoning: what it is, its history and development, and existing research on it.

What is Evaluative Reasoning?

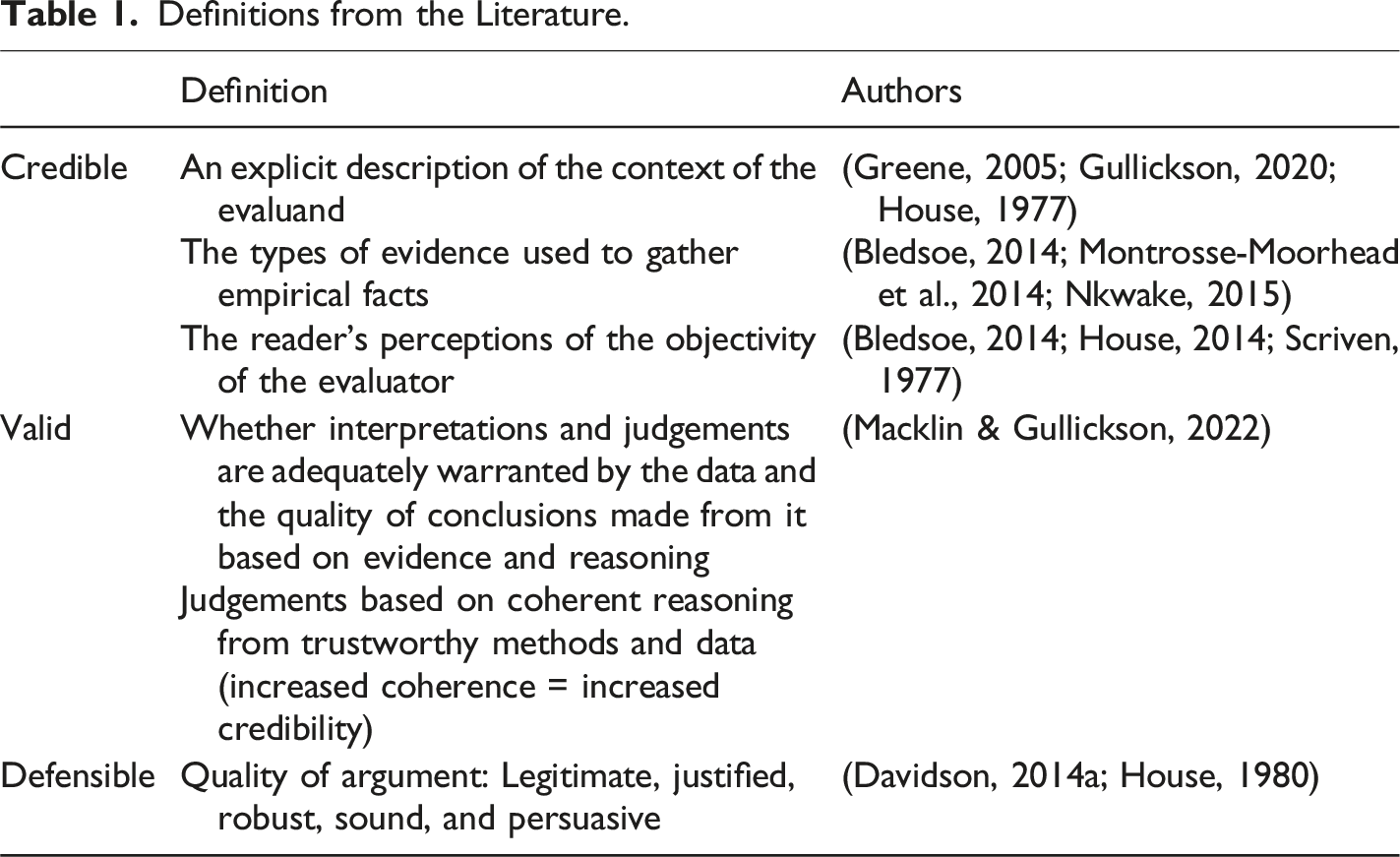

Evaluative reasoning involves using the logic of evaluation and supporting arguments to make a credible, defensible, and valid judgement about the goodness of something. Credible, defensible, and valid are terms from the literature (Table 1), and the text below provides plain language summaries of each:
• Credible – inspiring belief; believable in context, with conflicts and limitations declared; and clarity about how factual evidence is gathered, with limitations stated (Davidson, 2005; Fournier, 1995a; House, 1977, 1980; House & Howe, 1999; Hurteau et al., 2010; Hurteau & Williams, 2014; Nkwake, 2015; Nunns et al., 2015; Scriven, 1981).
• Defensible – supported by argument; well-reasoned (robust, sound, persuasive, legitimate, and justified) (Davidson, 2005; Fournier, 1995a; Fournier & Smith, 1993; Nunns et al., 2015; Scriven, 1981).
• Valid – extent to which appropriate conclusions are derived (data + argument) (Davidson, 2005, 2014b; House & Howe, 1999; Macklin & Gullickson, 2022; Nkwake, 2015).

Definitions from the Literature.

Credible and valid have both been discussed extensively in the literature, as the citations against the definitions above and in Table 1 show. Defensibility has received less attention, so we describe it in more depth.

Defensibility

Defensibility is defined as ‘able to be supported by argument’ (https://dictionary.cambridge.org/dictionary/english/defensible). In the case of evaluation, the arguments are practical rather than theoretical (House, 1980), and well-reasoned and well-evidenced (Davidson, 2014a). The evaluation literature describes five characteristics of defensibility:
• Robust (data + argument). Definition: Strongly formed (https://www.merriam-webster.com/dictionary/robust). Authors: Davidson (2014b); Nunns et al. (2015).
• Sound (data + argument). Definition: Free from error, fallacy, or misapprehension (https://www.merriam-webster.com/dictionary/sound). Authors: Arens (2005); Davidson (2014a, 2014b); Fournier and Smith (1993); Valovirta (2002).
• Persuasive (data + argument). Definition: Making you want to do or believe a particular thing (https://dictionary.cambridge.org/dictionary/english/persuasive). Authors: House and Howe (1999); House (1977, 1979); Valovirta (2002).
• Legitimate (logic). Definition: Able to be defended with logic or justification; valid (https://languages.oup.com/google-dictionary-en/). Authors: Fournier (1995a, 1995b); Fournier and Smith (1993); House and Howe (1999); Hurteau et al. (2009).
• Justified (argument). Definition: Having a good reason for something (https://dictionary.cambridge.org/dictionary/english/justified). Authors: Davidson (2014a); Fournier and Smith (1993); Gullickson (2020); Hurteau et al. (2009); Smith (1987).

We observed that often (i) these characteristics have been used without an associated definition or rationale and (ii) the definitions are not commensurate with each other. Consequently, we offer the following systematised and clarified language. To be defensible, the quality of the evidence and analysis, and the quality of the argument, need to be robust, persuasive, and sound. Defensible evidence and analysis must meet standards for validity (research) and credibility (context). A defensible argument must be legitimate and justified:
• To be legitimately categorised as evaluation, the logic used in the argument must be the whole logic of evaluation.
• To be justified, the steps in the logic must be warranted with good reasons.

As alluded to above, the concepts defensible, valid, and credible relate to each other. Credible and defensible overlap because context influences what counts as evidence and what logic must be applied to that evidence to make a justified argument. Defensible and valid overlap in relation to argument – its quality (defensibility) and its claims (validity). Valid and credible overlap because context influences what counts as a warrant (i.e. this is true because…). Compared with these concepts which have been frequently discussed in the evaluation literature, evaluative reasoning is comparatively thinly theorised. In the following section, we explore how the concept of evaluative reasoning has developed and operationalise it for research.

Operationalising Evaluative Reasoning

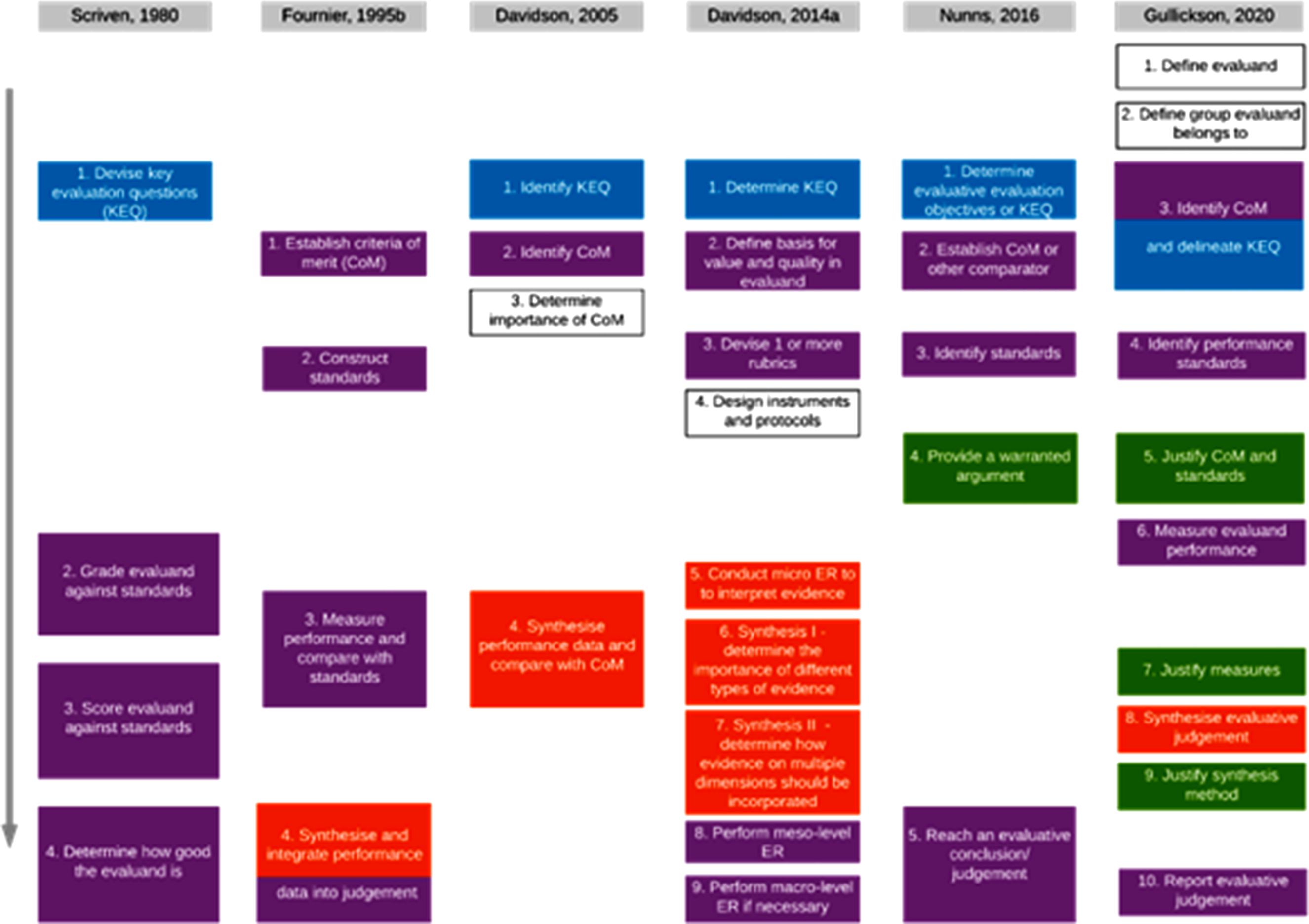

There has been significant expansion of Scriven’s (1981) original conceptualisation of the (general) logic of evaluation, with additional steps that now constitute evaluative reasoning. Fournier (1995b) was the first to integrate the general logic with what she termed ‘working’ logic. This was also the first time that synthesis was identified as part of what needed to be done to reach an evaluative judgement. Subsequently, Davidson (2005, 2014a) substantially operationalised synthesis by identifying three different levels: micro, meso, and macro. She also proposed an approach to conducting synthesis at the different levels and described their relative contribution to an evaluative judgement. Finally, Nunns (2016), Nunns et al. (2015), and Gullickson (2020) developed substantial arguments about the importance of warranting in an evaluation. Nunns et al. (2015) proposed different types of warrant (literature, cultural, methodological, expert, and authority) that could be used to substantiate the evidence in an evaluation. Gullickson (2020) then expanded warranting to include its role in fully describing and justifying all aspects of an evaluation.

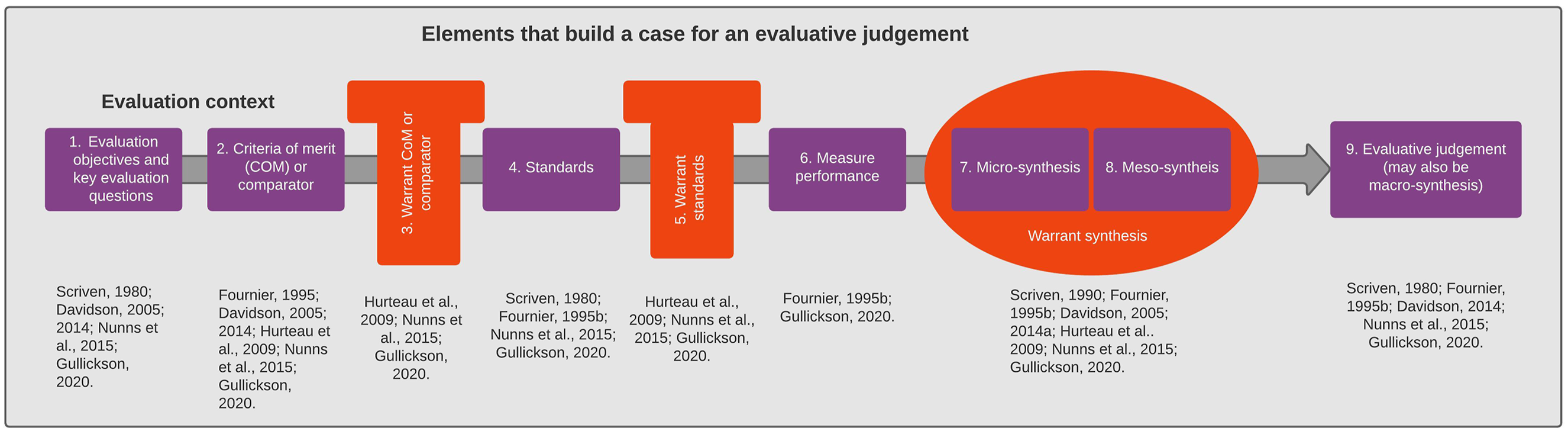

Figure 1 displays how evaluative reasoning interacts with the logic of evaluation in the literature. To differentiate between the logic and evaluative reasoning in Figure 1, the four-step logic, as it is most often cited, is highlighted in purple boxes. The theoretical development of synthesis is highlighted in orange boxes and evaluative reasoning elements in green. The blue boxes highlight the addition of evaluation questions, or key evaluative questions, which currently sit outside both the commonly cited logic and evaluative reasoning. A single directional arrow indicates that the elements build upon each other; however, several authors (Davidson, 2014a; Gullickson, 2020; Hurteau & Williams, 2014) have indicated that evaluative reasoning is an iterative process.

Literature support for the elements in the authors’ coding framework.

All these elements are necessary in an evaluation, because when evaluators use the logic of evaluation they provide a legitimate evaluative judgement. When they justify the logic, by providing warrants and their associated backings, they provide a defensible, credible, and valid argument. In doing so, evaluators justify both the criteria and standards and conduct a synthesis integrating data about evaluand performance with standards. However, fundamentally synthesis is where the logic of evaluation and evaluative reasoning come together. This is because the logical process required for evaluation asks for the facts about evaluand performance to be combined with the standards (Davidson, 2014; Fournier, 1995a; Gullickson, 2020; Scriven, 1981, 1991).

Existing Research on Evaluative Reasoning

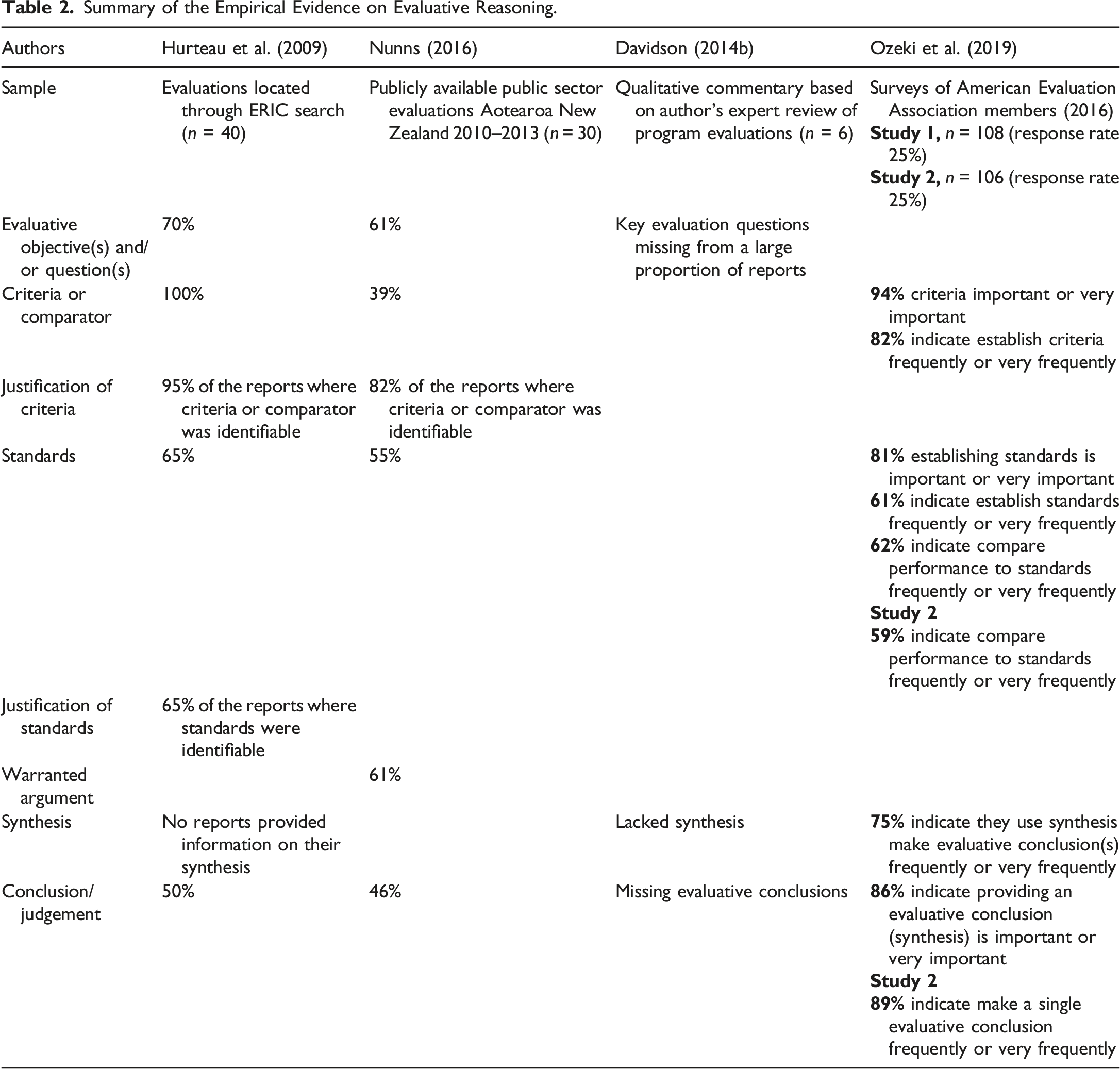

Summary of the Empirical Evidence on Evaluative Reasoning.

The existing empirical studies have contextual and theoretical limitations. Contextually, they cover a small sample of the global evaluator/evaluation community. Nunns and colleagues (2015) examined reports from Aotearoa New Zealand; Ozeki and colleagues (2019) had respondents from only one of the 21 global professional evaluation associations (Better Evaluation, 2018). Hurteau and colleagues (2009) drew their sample from the ERIC database, which means the reports could have come from anywhere in the world; they provided no demographic information. Davidson (2014b) did not divulge the context of the six reports that she reviewed. Theoretically, three of the studies had important limitations related to our inquiry, although they built on each other. Hurteau and colleagues (2009) did not identify warrants in their model. Nunns et al. (2015) included warrants but not evaluative synthesis. Ozeki et al. (2019) covered the logic of evaluation but asked respondents to quantify their knowledge and practice using self-assessment, which has known flaws (Wildschut et al., 2024), including a lack of evidence to support the claims.

Our Framework

Our study addresses gaps in previous evaluative reasoning research by providing a complete sampling frame (date range, sector, and context). The framework developed to analyse the data also addresses previous gaps in evaluative reasoning steps, specifically micro-, meso-, and macro-synthesis and warranting. The following paragraphs outline how we developed our framework.

We adapted Nunns and colleagues’ (2015) conceptual framework because it covered the most aspects of evaluative reasoning. We explicitly included the elements of the expanded logic of evaluation and evaluative reasoning. The expanded logic included evaluation questions or objectives, and additional steps for synthesis (micro-, meso-, and macro-synthesis), initially proposed by Scriven (1991) and expanded by Davidson (2014a). Warranting was added at several key places in the process where steps in the logic need to be justified. The resulting conceptual framework, which supports the provision of legitimate and justified evaluative judgements in evaluation reports, is illustrated in Figure 2 below. The following discussion outlines the conceptual framework and provides definitions for each element of it.

The integrated logic and evaluative reasoning conceptual framework.

The integrated logic and evaluative reasoning conceptual framework, a combination of Scriven’s (1981) logic of evaluation and evaluative reasoning steps, consists of nine steps. Elements constituting the logic of evaluation (elements 1, 2, 4, and 6–9) are outlined in purple. Micro- and meso-synthesis have been integrated into the framework as elements 7 and 8. Macro-synthesis is included in element 9, although, as Davidson (2014a) indicated, macro-synthesis rarely occurs. However, it is essential that all evaluations reach evaluative conclusion(s) and/or a judgement about evaluand performance. Elements constituting evaluative reasoning are outlined in orange. To justify is a synonym for to warrant, so in the context of this discussion, to justify an argument the evaluator needs to provide an appropriate warrant for it. Warranting an argument is represented as a T-shape (elements 3 and 5) and as an oval underneath the micro- and meso-synthesis elements. The warrant for synthesis has not been given a number because it needs to occur for both micro- and meso-synthesis, and allocating a number to it would prioritise the evaluative reasoning that needs to occur at this point, where inferences are being made.
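To make the element numbering concrete, the sketch below encodes the nine-step framework as Python sets. It then checks the two properties of a defensible argument defined earlier. An argument is legitimate when the whole logic of evaluation is present, and justified when every warranting point is supplied. This is an illustration under stated assumptions, not the authors' coding instrument. The names `CodedReport`, `is_legitimate`, `is_justified`, and `is_defensible_argument` are hypothetical. Allowing a single-dimension evaluation to skip meso-synthesis (element 8) is an assumption drawn from the later discussion of synthesis, not a rule stated in the framework.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Logic-of-evaluation elements (purple): 1, 2, 4 and 6-9, where
# 7 = micro-synthesis, 8 = meso-synthesis, 9 = evaluative conclusion/judgement
# (including any macro-synthesis).
LOGIC_ELEMENTS = frozenset({1, 2, 4, 6, 7, 8, 9})
MESO_SYNTHESIS = 8

# Warranting points (orange): elements 3 and 5, plus the unnumbered warrant
# that sits under the micro- and meso-synthesis elements.
WARRANT_POINTS = frozenset({"3", "5", "synthesis"})


@dataclass
class CodedReport:
    """Hypothetical record of which framework elements a report contains."""
    logic_found: set[int] = field(default_factory=set)
    warrants_found: set[str] = field(default_factory=set)
    # Assumption: an evaluation with one dimension may skip meso-synthesis.
    single_dimension: bool = False


def is_legitimate(report: CodedReport) -> bool:
    """Legitimate: the whole logic of evaluation is present."""
    required = set(LOGIC_ELEMENTS)
    if report.single_dimension:
        required.discard(MESO_SYNTHESIS)
    return required <= report.logic_found


def is_justified(report: CodedReport) -> bool:
    """Justified: every warranting point in the logic is supplied."""
    return WARRANT_POINTS <= report.warrants_found


def is_defensible_argument(report: CodedReport) -> bool:
    """A defensible argument must be both legitimate and justified."""
    return is_legitimate(report) and is_justified(report)


example = CodedReport(
    logic_found={1, 2, 4, 6, 7, 9},
    warrants_found={"3", "5", "synthesis"},
    single_dimension=True,
)
print(is_legitimate(example), is_justified(example))  # True True
```

Keeping the two checks separate mirrors the framework's distinction. A report can use the whole logic and still fail to justify it, or warrant some steps while leaving the logic incomplete.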

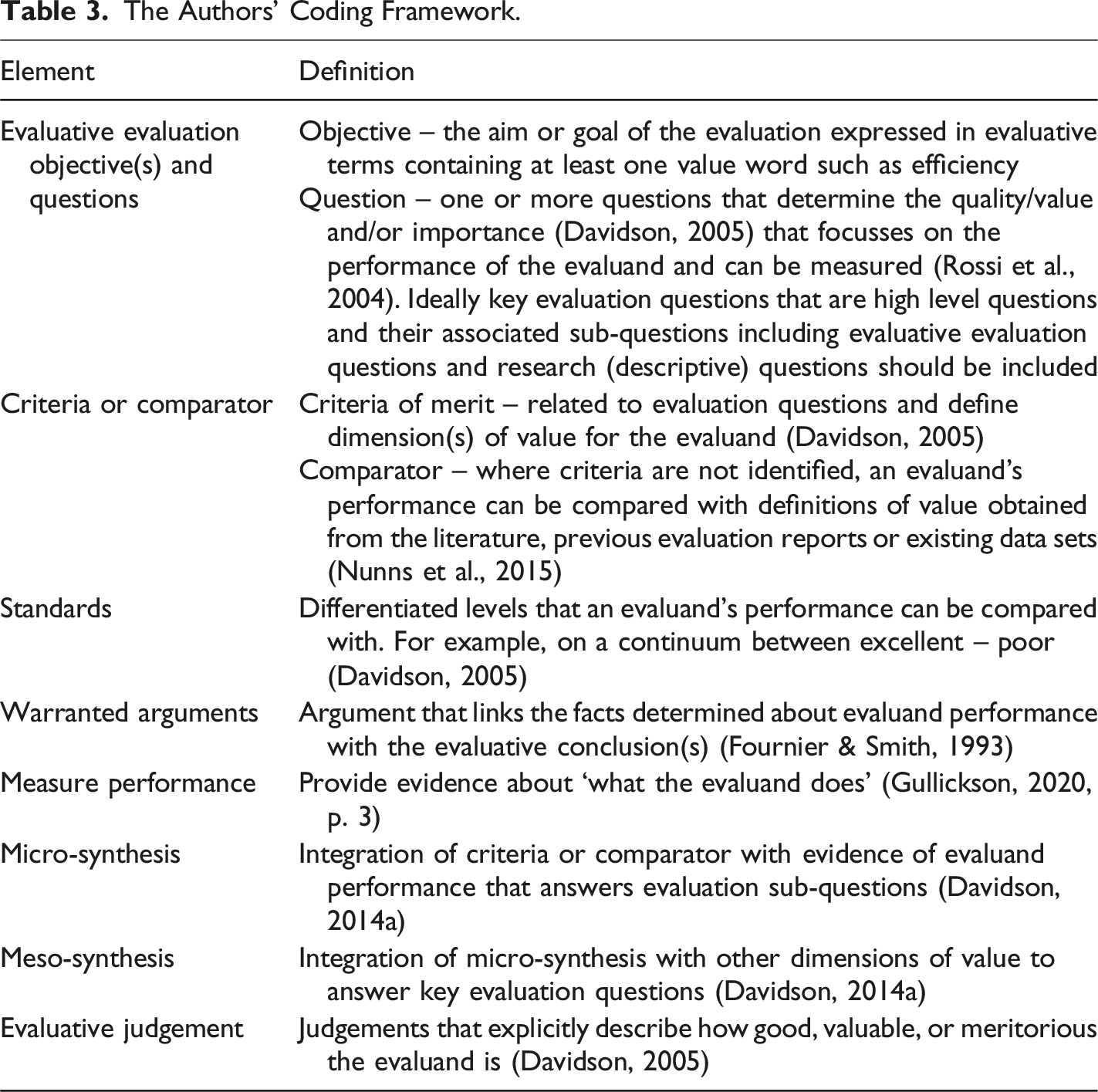

The Authors’ Coding Framework.

Context

We applied our coding framework to publicly available evaluation reports published about education-associated programs conducted in the Australian primary, secondary, and tertiary sectors. Our aim was to answer the research question: What quantitative evidence of evaluative reasoning can be found in education evaluation reports conducted in Australia between 2014 and 2024?

The need for this study arose from a series of questions that key stakeholders in the evaluation of educational interventions in Australia could justifiably ask. The first group is taxpayers (including parents/caregivers of children and young people participating in the interventions), who contribute to the $32.2 billion provided in the Australian Government’s 2025–2026 budget. Taxpayers have a right to ask: (1) What is the value of educational programs to society? (2) How justified is the spending?

The second group of key stakeholders in the evaluation of educational interventions in Australia is educators working, or conducting research, in the primary, secondary, and tertiary sectors. Educators could ask: (1) What educational interventions work for whom and why? (2) So what? (3) What makes one new innovative program better than another?

Finally, members of the evaluation discipline could ask: (1) How can we improve evaluation practice? (2) How does the logic of evaluation integrate with evaluative reasoning, and why is this important?

Understanding the presence of evaluative reasoning in evaluation reports from the education sector is fundamental to answering these questions from stakeholders.

Methods

We adapted Pickering and Byrne’s (2014) systematic quantitative analysis method to identify elements of reasoning evident in publicly available education evaluations. Pickering and Byrne (2014) proposed their systematic quantitative analysis method as a means of supporting postgraduate and early career researchers to write and publish literature reviews. The authors outlined a 15-step method for undertaking systematic and quantitative analysis of available literature to map the number of publications and associated findings related to a topic under investigation and its associated research questions.

Pickering and Byrne (2014) identified two strengths of the systematic quantitative analysis method. The first is that its systematic and quantitative approach may reduce bias in how a researcher selects literature for inclusion in a review. In this study, as in the two previous empirical studies (Hurteau et al., 2009; Nunns, 2016), we relied on publicly available (published) evaluation reports. An evaluation was included in the data set if it met the inclusion criteria discussed below.

The second strength of the systematic quantitative analysis method is that quantifying the literature produces a map of a research topic more easily and quickly than a narrative literature review. This strength applied equally to this study: the method clearly identified the presence of evaluative reasoning elements in the evaluations, and comparison between evaluations was fast and easy. The method was appropriate because it supported quantitative summing from text to identify the elements of evaluative reasoning we were investigating.

The systematic quantitative analysis method calls for 15 steps to analyse literature. We changed the object of analysis to an evaluation report and removed the steps associated with a literature review, reducing the steps to 10. In the sections below, we discuss the search terms, inclusion criteria, data collection, and analysis.

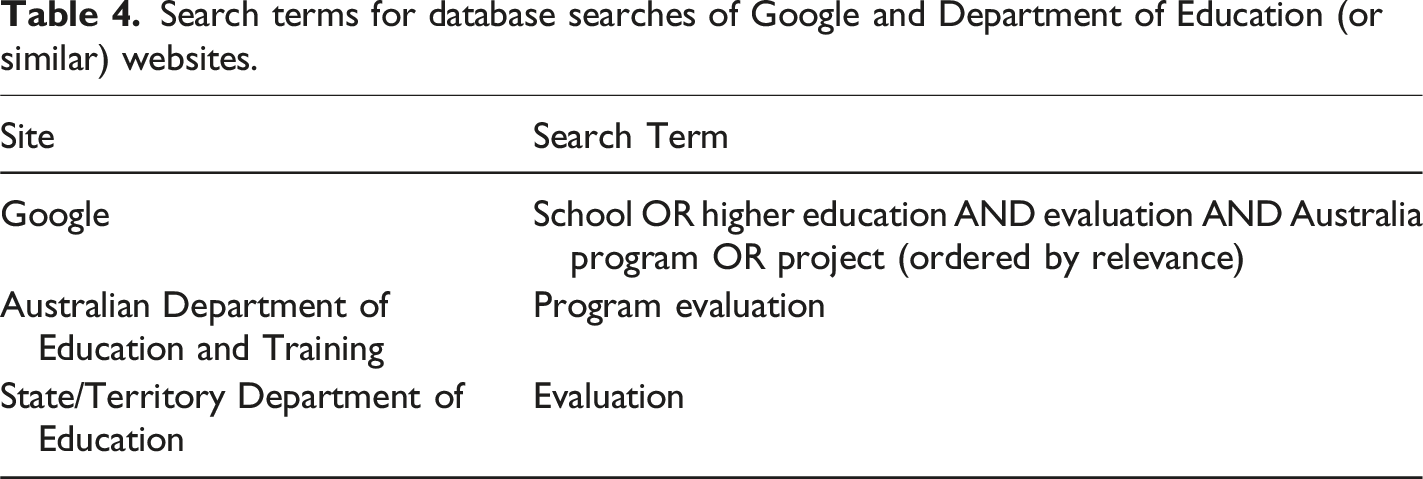

Search Terms and Searches

This study replicated and expanded Nunns et al.’s (2015) study in a different context: Nunns et al. studied the Aotearoa New Zealand Public Service, whereas this study examined the Australian primary, secondary, and tertiary education sectors. Consequently, we replicated Nunns et al.’s (2015) search strategy. We searched only Google, to identify publicly available evaluations that members of the public (taxpayers) could also access without specialist knowledge of, or access to, databases. Synonyms for the term evaluation were not used because we were only interested in reviewing evaluation reports.

Search terms for database searches of Google and Department of Education (or similar) websites.

Inclusion Criteria

To answer our research question in our chosen context, we chose inclusion criteria that enabled us to identify publicly available evaluation reports from the Australian primary, secondary, and tertiary education contexts:
(1) Evaluation reports conducted on an education program or project in the primary, secondary, or tertiary sector published between June 2014 and November 2024.
(2) Report was commissioned by a central, state, territory, or program/project funding agency.
(3) Report was written by Australian-based agencies or authors, whether they are external or internal to the agency.
(4) No author appeared more than twice in the sample.
(5) No agency appeared in the sample more than twice.
(6) The evaluation report was a complete report, not a summary.
(Adapted from Nunns et al., 2015, p. 147).
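The six inclusion criteria can be read as a screening filter. The following is a minimal sketch. It assumes each search result has already been turned into a hypothetical `Candidate` record. The field names, the inclusive whole-month reading of the date window, and the first-come order used to enforce the two-per-author and two-per-agency caps are all assumptions. The article does not say how those caps were applied when more than two reports qualified.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from datetime import date

SECTORS = frozenset({"primary", "secondary", "tertiary"})
# Assumption: "June 2014 to November 2024" read as whole months, inclusive.
WINDOW_START, WINDOW_END = date(2014, 6, 1), date(2024, 11, 30)
MAX_REPORTS = 2  # criteria 4 and 5: at most two reports per author/agency


@dataclass
class Candidate:
    """Hypothetical record for one retrieved report."""
    title: str
    sectors: frozenset[str]
    published: date
    agency: str
    authors: tuple[str, ...]
    commissioned_by_funder: bool = True  # central, state, territory or funder
    australian_authors: bool = True
    complete_report: bool = True         # False for summaries


def screen(candidates: list[Candidate]) -> list[Candidate]:
    """Apply criteria 1-6; earlier reports fill the author and agency
    quotas first (an assumed tie-break, not one reported in the study)."""
    included: list[Candidate] = []
    per_author: Counter[str] = Counter()
    per_agency: Counter[str] = Counter()
    for c in candidates:
        # Criterion 1: education sector in scope, published in the window.
        if not c.sectors or not c.sectors <= SECTORS:
            continue
        if not WINDOW_START <= c.published <= WINDOW_END:
            continue
        # Criteria 2, 3 and 6: commissioning, Australian authorship, full report.
        if not (c.commissioned_by_funder and c.australian_authors
                and c.complete_report):
            continue
        # Criteria 4 and 5: cap repeat authors and repeat agencies.
        if any(per_author[a] >= MAX_REPORTS for a in c.authors):
            continue
        if per_agency[c.agency] >= MAX_REPORTS:
            continue
        per_author.update(c.authors)
        per_agency[c.agency] += 1
        included.append(c)
    return included
```

In this sketch the caps depend on the order in which reports are screened. Sorting the candidates, for example by publication date, before calling `screen` would make that choice explicit.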

We used the first inclusion criterion to screen the records and excluded those that did not meet it. The first author (KM) then read each remaining evaluation report and assessed it against the rest of the inclusion criteria. This resulted in a dataset of 37 evaluations.

Data Collection and Analysis

We structured the database in Microsoft Excel™ using elements and their definitions from the study’s conceptual framework (Figure 2 above) as column headings, and used it to systematically review each evaluation report. Following the systematic quantitative analysis method, if an element was present in a report, we entered a 1 in its associated column. If an element was absent or only partially present, a 1 was recorded in the ‘none’ column. We did not consider evidence quality or appropriateness, as this was outside the bounds of the inquiry. We developed the database iteratively, adding some columns as reports were reviewed. For example, we added columns for demographic information provided by the report, such as the education sector, year, and whether state/territory or national datasets were included in the evaluation.

We reviewed reports independently. Author one (KM) reviewed 33 evaluation reports; Author two (AG) reviewed 7. We double-coded three reports and discussed discrepancies until consensus was reached. Our initial interrater reliability was 98%.
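The article does not name the statistic behind the 98% figure. Assuming it is simple percent agreement on element codes across the double-coded reports, it is the share of coding decisions on which both coders agree. The function name and the codes in the usage line below are hypothetical. A chance-corrected statistic such as Cohen's kappa would be a stricter alternative.

```python
from __future__ import annotations


def percent_agreement(coder_a: list[int], coder_b: list[int]) -> float:
    """Share (%) of coding decisions on which two coders agree.

    Each list holds one code per element per double-coded report, in the
    same order (1 = present, 0 = none).
    """
    if not coder_a or len(coder_a) != len(coder_b):
        raise ValueError("codings must be non-empty and equal in length")
    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * agreements / len(coder_a)


# Hypothetical codes for ten decisions; the coders disagree on one of them.
print(percent_agreement([1, 1, 0, 1, 0, 1, 1, 0, 1, 1],
                        [1, 1, 0, 1, 1, 1, 1, 0, 1, 1]))  # 90.0
```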

At the conclusion of data entry, we checked that each column only had one number (or no numbers) in it for each report. The database was also checked to make sure that each element was recorded for every report. Where there was a discrepancy, the report was retrieved and reviewed, and the error corrected. Subsequently, we created summary tables as different pages in the Excel spreadsheet and summed the elements within and between reports. We calculated descriptive statistics to quantify the presence of elements within and across reports. The full data set is available via the Open Science Framework (https://osf.io/8fbpw/?view_only=fb5de0c81eaf4d65824ccc12a6a86624).
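The coding, consistency checks, and summing described above can be sketched in code. This is a hypothetical stand-in for the Excel workbook, not the published dataset. Each row records a 1 under either ‘present’ or ‘none’ for every element. A check flags elements that are missing or not recorded exactly once. The summary then sums elements within reports (scores) and across reports (totals). The seven element names are those that appear in the results: evaluation objectives/questions, criteria or comparator, standards, measurement, warranted argument, synthesis, and judgement. Research questions are left out because they are not counted.

```python
from __future__ import annotations

# The seven coded elements as named in the results; research questions are
# recorded separately in the study and are not counted here.
ELEMENTS = ("EO/EQ", "criteria/comparator", "standards", "measurement",
            "warrant", "synthesis", "judgement")


def code_report(fully_present: set[str]) -> dict[str, dict[str, int]]:
    """One row: a 1 under 'present' or under 'none' for every element.

    Partially present elements are left out of `fully_present`, so they
    are recorded under 'none', following the coding rule above.
    """
    return {el: {"present": int(el in fully_present),
                 "none": int(el not in fully_present)}
            for el in ELEMENTS}


def find_errors(row: dict[str, dict[str, int]]) -> list[str]:
    """Elements that are missing from a row or not recorded exactly once."""
    return [el for el in ELEMENTS
            if el not in row or row[el]["present"] + row[el]["none"] != 1]


def summarise(rows: dict[str, dict[str, dict[str, int]]]
              ) -> tuple[dict[str, int], dict[str, int]]:
    """Sum elements within reports (scores) and across reports (totals)."""
    for report_id, row in rows.items():
        errors = find_errors(row)
        if errors:
            raise ValueError(f"re-check report {report_id}: {errors}")
    scores = {rid: sum(row[el]["present"] for el in ELEMENTS)
              for rid, row in rows.items()}
    totals = {el: sum(row[el]["present"] for row in rows.values())
              for el in ELEMENTS}
    return scores, totals


# Hypothetical reports: "A" has three elements, "B" has all seven.
rows = {"A": code_report({"EO/EQ", "measurement", "warrant"}),
        "B": code_report(set(ELEMENTS))}
scores, totals = summarise(rows)
print(scores)  # {'A': 3, 'B': 7}
```

Refusing to summarise until every row passes the check mirrors the procedure described above, where discrepancies were corrected by re-reviewing the report before the summary tables were built.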

Results

Below we present the findings of the analysis of 37 Australian education sector evaluation reports published between June 2014 and November 2024. We begin with the demographic characteristics of the data set. The systematic quantitative analysis findings follow.

Demographics

Of the 37 reports reviewed, agencies authored 62%, and individual consultancies or academics based at Australian tertiary institutions wrote 35%. The most reports were published in 2017 and 2018 (six each), and the fewest in 2024 (none), followed by 2023 (one). The largest group of reports (12, 32%) gathered data from evaluands located across the whole of Australia. The states of Victoria and New South Wales (NSW) were the next highest, gathering data from eight evaluands. Neither the Northern Territory nor Tasmania had any published reports. Data analysis focussed on evaluands in the primary, secondary, and tertiary sectors. The largest percentage (16, 43%) focussed on evaluands located in both the primary and secondary school sectors. These reports were distinct from those conducted in either the primary or the secondary sector, because the evaluand that was the focus of the report was active in both primary and secondary schools. Reports on evaluands located only in the primary sector were the fewest (6, 16%).

Systematic Quantitative Analysis of Evaluative Reasoning Elements

The aim of this study was to use systematic quantitative analysis to identify the presence of elements of the integrated logic of evaluation and evaluative reasoning conceptual framework (Figure 2) in the data set. The following sections analyse the findings of the systematic quantitative analysis. We first scored each report by the number of elements present. This enabled us to synthesise the overarching findings and to identify differing trends between reports that included four or more elements and those that contained three or fewer.
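The scoring step can be illustrated with a short sketch. It assumes each report already has a score out of the seven coded elements. The hypothetical helpers below place each score in the groupings used in the results: all seven elements, which the study counts as an evaluation; four to six; and three or fewer. They also convert counts to whole percentages of the data set. The function names are illustrative only.

```python
from __future__ import annotations

from collections import Counter

N_ELEMENTS = 7  # the seven coded elements used in the scoring


def band(score: int) -> str:
    """Place a report score in the groupings used in the results."""
    if not 0 <= score <= N_ELEMENTS:
        raise ValueError(f"score must be between 0 and {N_ELEMENTS}")
    if score == N_ELEMENTS:
        return "evaluation"  # all seven elements present
    return "4-6 elements" if score >= 4 else "3 or fewer"


def percent(part: int, whole: int) -> int:
    """Whole-number percentage; Python's round() sends exact halves to even."""
    return round(100 * part / whole)


def summarise_bands(scores: dict[str, int]) -> dict[str, tuple[int, int]]:
    """Count reports per band and express each count as a percentage."""
    counts = Counter(band(s) for s in scores.values())
    return {b: (k, percent(k, len(scores))) for b, k in counts.items()}


print(percent(4, 37), percent(13, 37), percent(24, 37))  # 11 35 65
```

Applied to the counts reported here, `percent(4, 37)` gives 11, `percent(13, 37)` gives 35, and `percent(24, 37)` gives 65, matching the percentages in the overarching findings. The "four or more" group in the text corresponds to the union of the first two bands.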

Overarching Findings

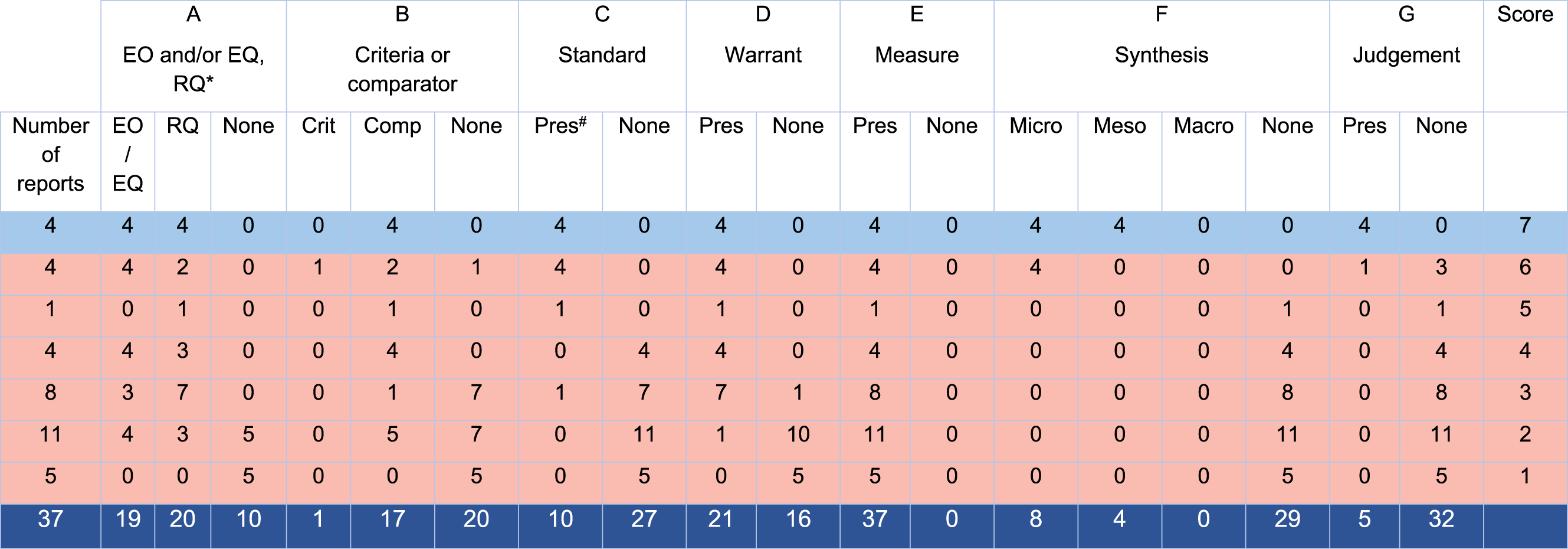

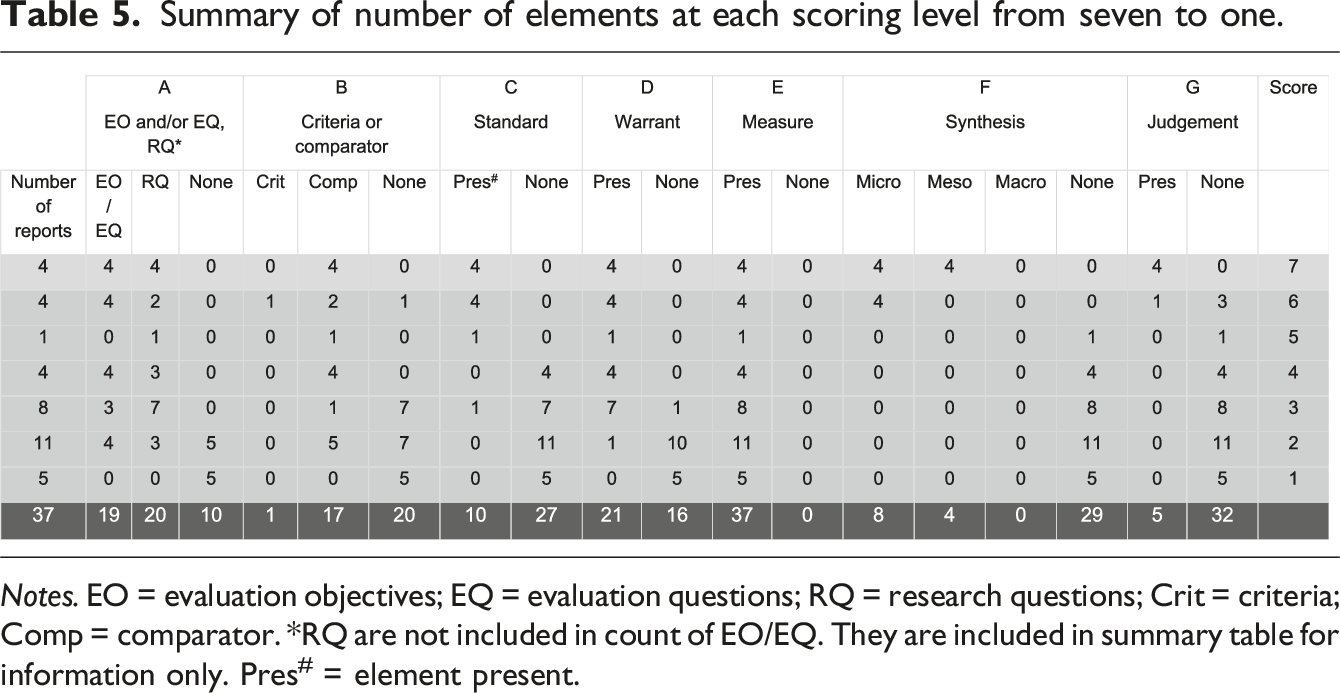

Summary of number of elements at each scoring level from seven to one.

Notes. EO = evaluation objectives; EQ = evaluation questions; RQ = research questions; Crit = criteria; Comp = comparator. *RQ are not included in count of EO/EQ. They are included in summary table for information only. Pres# = element present.

Report analysis provided four overarching findings. Firstly, and most significantly, only four reports (11%) of the 37 reviewed contained all seven elements. This means that only four reports constituted an evaluation according to the definition adopted for this study. This finding is significant because it indicates that, although their titles included the word ‘evaluation’, the other reports were not evaluations, primarily because the evaluators did not provide an evaluative judgement about the evaluand’s merit, worth, or significance. The reports that did not provide an evaluative judgement were categorised as research and are identified with red shading in Table 5 below and in Supplemental File 3.

Secondly, a general observation from the whole data set was that only one report (report 24) contained any identifiable criteria. These criteria, sustainability, effectiveness, and efficiency (economic benefit) were embedded in evaluation questions (report 24, p. 16). However, no specific definitions were provided for the criteria. In contrast, 17 reports contained comparators rather than criteria. Most comparators (12, 32%) were scholarly literature including other evaluations. This finding reflects that of Nunns et al. (2015) who also identified that more evaluations in their data set used literature as a comparator. While literature provides a broad context to situate the evaluand and its performance, it is an indirect comparator (Nunns et al., 2015). ‘Evaluative criteria specify the values that will be used in an evaluation’ (Peersman, 2014, p. 149) and provide the most explicit approach for comparison (Nunns et al., 2015). Consequently, an absence of explicit evaluative criteria undermines what it is to evaluate and fails to consider stakeholders’ values (Hurteau, 2008).

Thirdly, most of the data set (24, 65%) contained three elements or fewer. Therefore, only 13 (35%) reports scored four or more. Focusing on the reports containing between four and six evaluative reasoning elements, which are closer to being evaluations than those with three elements or fewer, yielded the following observations. Eight of the nine reports in this grouping did not provide a judgement about the evaluand. Report 28 provided a judgement, ‘all three programs have been successfully designed and implemented’ (p. 49), but made no explicit reference to any criteria in the report. The absence of this element meant that it was not classified as an evaluation according to the definition applied in this study.

In addition to a judgement, reports that scored five or four were also missing synthesis. Synthesis is critical to evaluations because it draws together the performance evidence gathered about the evaluand to support judgements against criteria or a comparator. The role of micro-synthesis in an evaluation is to integrate the criteria or comparator with evidence of evaluand performance to answer evaluation sub-questions (Davidson, 2014a). Each evaluation sub-question relates to one dimension of the evaluation. For example, if the evaluator wants to determine the effectiveness of a program, effectiveness constitutes one dimension. If the evaluation measures only one dimension, the evaluator does not need to conduct meso-synthesis, because micro-synthesis will already have answered the evaluation question. The evaluator should then proceed to the next step in the logic of evaluation and provide an evaluative judgement.
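The micro- and meso-synthesis steps just described can be sketched in code. Micro-synthesis rates one dimension by comparing performance evidence with standards. Meso-synthesis combines dimension ratings and is skipped when there is only one dimension. Everything concrete here is hypothetical: the four-level poor-to-excellent rubric, the numeric cut points, the dimension weights, and the function names. The weighted mean of ordinal levels is only one simple numeric rule, chosen for illustration. It is not the synthesis method of any report in the sample, and evaluators often use qualitative synthesis rules instead.

```python
from __future__ import annotations

import bisect

# Hypothetical standards: ordered levels on a poor-to-excellent continuum.
LEVELS = ("poor", "adequate", "good", "excellent")


def micro_synthesis(score: float, cut_points: tuple[float, float, float]) -> str:
    """Rate one dimension by comparing performance evidence with standards.

    cut_points are the ascending minimum scores for 'adequate', 'good' and
    'excellent'; a score equal to a cut point meets that standard.
    """
    return LEVELS[bisect.bisect_right(cut_points, score)]


def meso_synthesis(ratings: dict[str, str], weights: dict[str, float]) -> str:
    """Combine dimension ratings to answer an evaluation question."""
    if len(ratings) == 1:
        # One dimension only: micro-synthesis has already answered it.
        return next(iter(ratings.values()))
    total = sum(weights[d] for d in ratings)
    mean_level = sum(weights[d] * LEVELS.index(r)
                     for d, r in ratings.items()) / total
    return LEVELS[round(mean_level)]  # exact halves round to the even level


cuts = (50, 65, 80)  # hypothetical cut points on a 0-100 evidence scale
ratings = {"effectiveness": micro_synthesis(72, cuts),  # 'good'
           "efficiency": micro_synthesis(55, cuts)}     # 'adequate'
print(meso_synthesis(ratings, {"effectiveness": 2, "efficiency": 1}))  # good
```

The sketch also shows why the absence of standards matters. Without `cut_points`, or some other pre-determined standard, `micro_synthesis` has nothing to compare the evidence against, so no rating can be produced.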

Finally, standards and synthesis were missing from the four reports (11%) that scored four. In evaluations, standards identify differentiated levels of performance (for example, on a continuum from excellent to poor) against which an evaluand’s performance can be compared (Davidson, 2005). This finding is significant because standards are also critical to evaluation: they provide the comparator that enables value to be ascribed to the evaluand’s performance. The absence of standards indicates that the evaluator has not identified how, in the subsequent synthesis step, they will determine whether the evaluand’s performance was acceptable.

Reports Containing Three Elements or Fewer

Twenty-four reports (65%) in the data set contained three elements or fewer. The element consistently included across all reports was measurement of some aspect of the evaluand’s performance. Alongside measurement, most reports scoring three (7 out of 8) also included research questions. Beyond these, however, the elements included in reports scoring three were less consistent. For example, report 22 included research questions (‘can XX program be applied in government schools?’, p. 5), literature comparators, and standards (results were compared with state achievement standards, pp. 35–38), but did not provide a warranted argument, synthesis, or a judgement. By contrast, reports 16 and 23 contained only evaluation objectives/evaluation questions, research questions, and a warranted argument, in addition to measuring performance. Reports scoring two were mostly missing standards, warrants, synthesis, and a judgement, and inconsistently included objectives/evaluation questions, research questions, and a comparator.

Discussion

In this study, we answered the research question: What quantitative evidence of evaluative reasoning can be found in education evaluation reports conducted in Australia between 2014 and 2024? Our analysis found that only four (11%) of the 37 reports constituted an evaluation as defined by our conceptual framework (Figure 2). In this discussion, we compare previous empirical findings with those of this study, propose a new model for tracking and understanding evaluative reasoning, and consider implications for practice.

Comparing the Findings of this Study with Other Empirical Studies

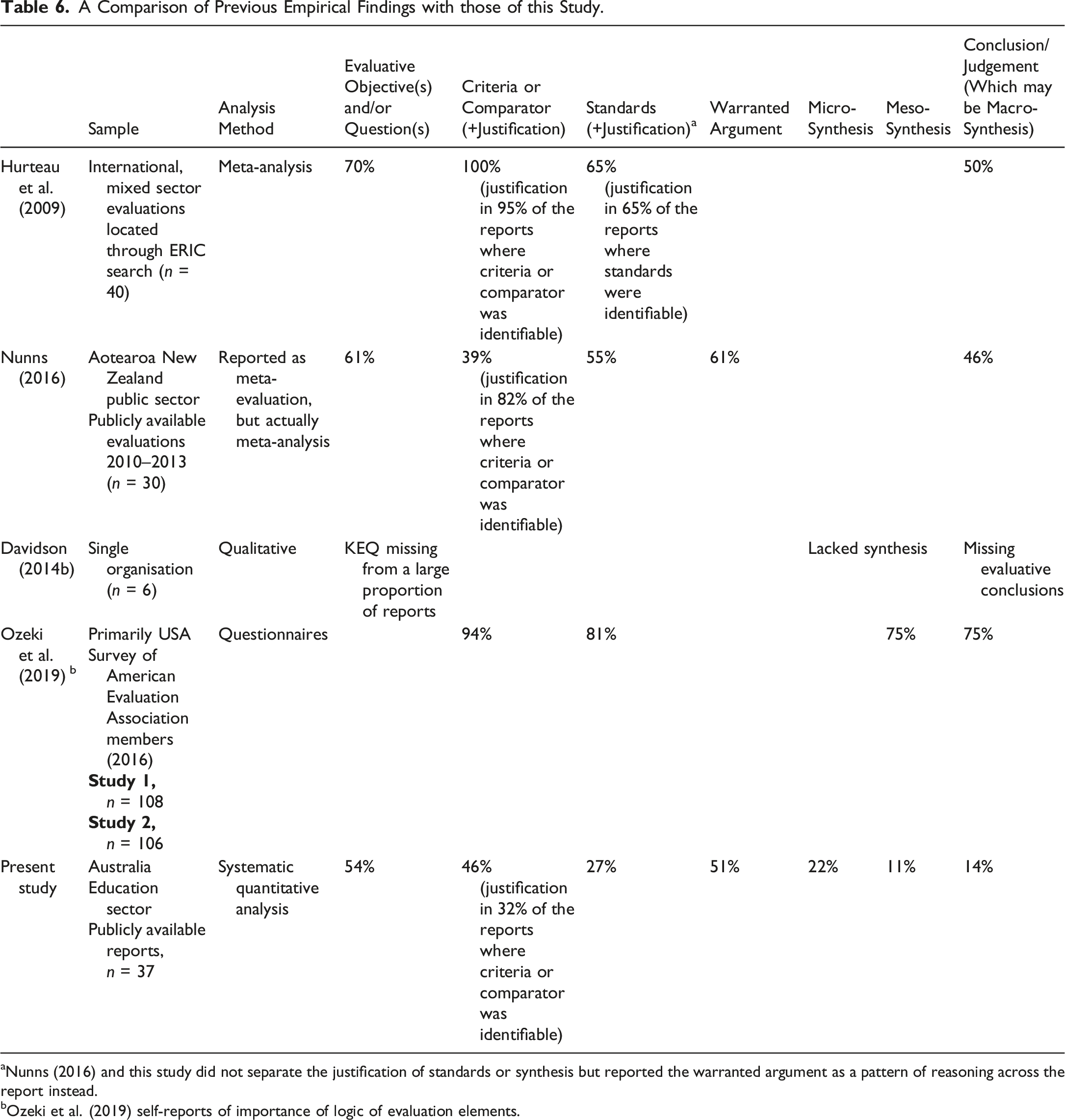

A Comparison of Previous Empirical Findings with those of this Study.

ᵃ Nunns (2016) and this study did not separate the justification of standards or synthesis but instead reported the warranted argument as a pattern of reasoning across the report.

ᵇ Ozeki et al. (2019) reported self-reports of the importance of logic of evaluation elements.

Overall, fewer evaluative reasoning elements were identified in this study than in any previously published study. The percentage differences are largest for the presence of standards, synthesis, and a judgement, and smallest for the presence of evaluative objectives and/or questions and a warranted argument.

Limitations of Previous Studies that our Study Addresses

Unlike this study, previous studies did not report evidence of all the elements of evaluative reasoning identified in our integrated logic of evaluation and evaluative reasoning framework. Two previous meta-analyses did not report the presence of warrants (Hurteau et al., 2009) or of synthesis (Nunns et al., 2015). Our theoretical framework development highlighted the importance of both elements for a legitimate and justified evaluative judgement. Using an integrated model to analyse evaluation reports therefore presents an opportunity to support future evaluation practice.

Implications of our Findings for the Education Sector

This is the first study we are aware of that has focussed specifically on evaluation in the education sector. Although the practice of evaluation emerged from the US education sector, it appears, consistent with previous empirical findings, that its practice is variable and that it has, in some cases, evolved into end-of-term feedback sheets (Guenther & Arnott, 2011). Evaluation was originally about amelioration but then became about accountability (Mathison, 2008; Shadish, 1991). However, our research suggests that using these evaluation reports for accountability may not be warranted, for reasons related to both research and evaluation.

Regarding research, firstly, although all reports measured at least one aspect of the evaluand, only 20 reports (54%) identified research question(s) to guide data collection and analysis, and a further 10 reports (27%) identified neither objectives nor research questions to direct their inquiry into the evaluand. This finding indicates that all reports are undertaking the research needed to gather facts about the evaluand, but more than a quarter of them are doing so without a specific focus. Secondly, warrants are missing from 16 reports (43%). The evaluative reasoning elements present in these reports are mainly evaluation objectives/evaluation questions/research questions and measurement. This means research is being conducted into evaluands, but the findings are not being justified by report authors.

Regarding evaluation, 33 of the reports analysed (89%) failed to provide the information required for a legitimate and justified evaluative judgement. Thus, the most significant finding of this study is that the value of the educational programs in the study sample is largely unknown. While all the reports provided a perspective on participants’ experience of the evaluand, which Mathison (2010) identified as amelioration, the lack of a judgement about the value of the evaluand means there is little understanding of whether the program was good (Ni, 2010; Schwandt, 2008) and why it was good (Ni, 2010). Additionally, and potentially more importantly, a lack of understanding about what a good educational program ‘looks like’ and what attributes make it good limits efforts to improve educational practices and support learning (OECD, 2013) in the Australian education sectors where the programs in the sample took place.

Limitations of our Study

Our study had three limitations. Firstly, it was limited to publicly available evaluation reports that could be located using the search strategy; the sample may therefore not include all education program evaluation reports generated between June 2014 and October 2024. Secondly, because the analysis was desk-based, we analysed only the information available in each report and were blind to the context of the evaluand and the evaluation. Time, budget, and decisions made by the commissioner are likely to have affected what was or was not included in these reports. Finally, the method necessitated counting the elements present in the conceptual framework. Therefore, this study makes no judgement about the quality of evaluative reasoning in these evaluations, nor of the evaluations themselves; we judged only the presence of reasoning elements in the data set, based on our definitions.

Within these limitations, our study provides a ‘snapshot’ of evaluative practice in the Australian education sector between 2014 and 2024. Overall, the findings suggest that the practice of evaluative reasoning is variable, in line with previous empirical research in other countries and contexts (Hurteau et al., 2009; Nunns et al., 2015). In this study, that variability is evident in the finding that only four of the 37 evaluation reports analysed contained all seven reasoning elements, while in 24 reports (65%) only three or fewer evaluative reasoning elements could be identified. As previously suggested, evaluative reasoning is a ‘building block of evaluation’ (Davidson, 2014a). Moreover, because ‘evaluation is an argument’ (Schwandt, 2008, p. 146) and the elements of evaluative reasoning provide a sound foundation for building credible, valid, and defensible arguments (Fournier, 1995a; House & Howe, 1999; Scriven, 1981), the variable practice found in this sample of reports is of particular concern for the Australian education sector.

A lack of understanding of what it means ‘to evaluate’ may have affected this study: although all the reports sourced contained the word ‘evaluation’ in the title, 33 reports exhibited the characteristics of research, namely research questions (in most), literature comparators, measurement, and warranted arguments (in some). This research framing is reinforced by the absence of explicitly evaluative elements such as evaluative objectives and/or evaluation questions, standards, synthesis at any level, and judgements about the value of the evaluand.

What Does This Mean for Practice?

The absence of evaluative reasoning in evaluation practice is not surprising. Only one evaluation textbook covers it explicitly (Davidson, 2005), and recent research shows that it is not taught in introductory subjects in university evaluation programs (LaVelle & Davies, 2021; LaVelle et al., 2023). While evaluation is often described as including determinations of merit, worth, and significance (Patton, 2008), the ‘how to’ of making these determinations, and research on it, has been dramatically underdeveloped compared with research and practice related to research methods, utilisation, and stakeholder engagement (e.g. participatory methods).

The evaluation field is like a bodybuilder who skips leg day: well developed on top (e.g. methods, measurement, and causality), but underdeveloped in its foundation (e.g. values and valuing; logic and reasoning). Recent research (Roorda et al., 2020; Roorda & Gullickson, 2019; Teasdale, 2022; Teasdale and Pitts et al., 2023; Teasdale and Strasser et al., 2023) has contributed to identifying criteria in evaluations in various contexts, but to make evaluative reasoning visible and actionable, the field needs a clearer and simpler way of understanding it and differentiating it from research.

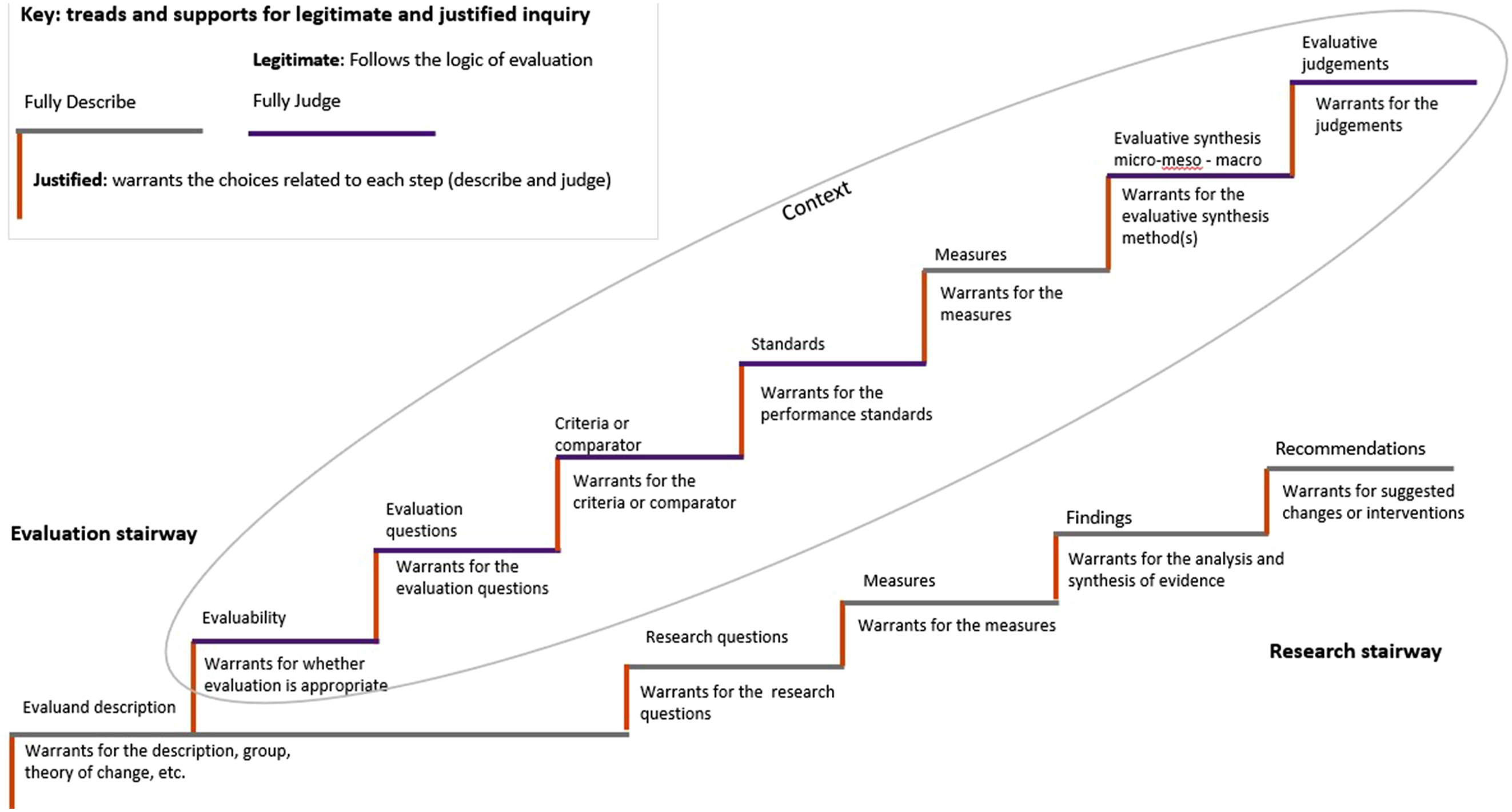

To that end, we propose Figure 3, which shows evaluation and research as separate stairways that start in the same place – describing the phenomenon – but lead to different destinations. Evaluation provides judgements about how good something is, based on facts and values in context. Research provides facts to inform the knowledge base and recommendations. The stairway metaphor shows how describing, judging, and warranting interact in both research and evaluation. Each step of describing and/or judging is a tread on the stairway; descriptive steps are lighter coloured. The risers are the warrants, showing how each step needs to be supported to justify the choices made. On either stairway, missing a step (such as criteria or standards for evaluation, or measures for both) means the argument has a leap in logic, and failing to provide a warrant means the argument has a step with no support. Missing any steps or supports undermines the validity, defensibility, and credibility of the claims. While following either stairway can lead to a helpful result, knowing which stairway you are on brings clarity (heavenly!) about the inquiry process and the desired result.

The two stairways can and should connect. Building on existing research can surface values, criteria, standards, measures, and insights, ensuring that past mistakes are not repeated and that evaluation does not add to the burden on systemically underserved and over-evaluated populations. Evaluative activity starts with explicit attention to the values of all stakeholders – not just evaluators, funders, or researchers – to ensure judgements are just. Identification of stakeholders, mapping of criteria, and participatory and reciprocal practices in evaluation can in turn inform research efforts.

The stairway of evaluation logic and reasoning © Authors CC-BY-4.0.

The evaluation stairway has some distinctive features. Firstly, it is surrounded by context, which is essential to credibility. Choices made in the evaluation must be warranted based on context, which includes the physical, cultural, legal, theoretical, and organisational context of the evaluand, and the relevant ethical, cultural safety, and professional evaluation standards. Secondly, the final two steps, synthesis and judgement, are a series of smaller steps and risers. Micro-synthesis generates judgements on data that provide the evidence for meso-synthesis; meso-synthesis generates judgements on criteria or questions that are the evidence for macro-synthesis, which generates an overall judgement. Davidson (2014a) argued that only the micro and meso steps are always needed. Thirdly, while we have depicted this as a linear pathway, in practice it is more like M.C. Escher’s Ascending and Descending: a continuous process. Decisions made throughout will be informed and updated by all the other steps and supports.
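To make the micro, meso, and macro synthesis steps more concrete, the Python sketch below shows one possible way rubric-based synthesis could be operationalised. It is an illustration under stated assumptions, not the authors’ method: the four rating levels, the numeric cut-points (THRESHOLDS), the scores, and both combining rules (the lower median at meso level and ‘no higher than the weakest criterion’ at macro level) are hypothetical. The criterion names echo those in report 24, but the scores are invented.

```python
from statistics import median_low

# Hypothetical four-level standards, ordered from lowest to highest.
# These labels are illustrative assumptions, not a rubric from the study.
LEVELS = ["poor", "adequate", "good", "excellent"]

# Hypothetical minimum scores for "adequate", "good" and "excellent".
THRESHOLDS = [50, 70, 85]


def micro_synthesis(score: float, thresholds: list[float]) -> str:
    """Judge one piece of performance evidence against the standards.

    The rating is the number of cut-points the score meets or exceeds,
    mapped onto LEVELS (0 -> "poor", 3 -> "excellent").
    """
    rank = sum(score >= cut for cut in thresholds)
    return LEVELS[rank]


def meso_synthesis(judgements: list[str]) -> str:
    """Combine micro-level judgements on one criterion.

    Assumed rule: take the lower median of the level ranks, a deliberately
    conservative choice that never rounds a mixed picture upwards.
    """
    ranks = [LEVELS.index(j) for j in judgements]
    return LEVELS[median_low(ranks)]


def macro_synthesis(criterion_judgements: dict[str, str]) -> str:
    """Combine criterion-level judgements into an overall judgement.

    Assumed rule: the evaluand is rated no higher than its weakest criterion.
    """
    return min(criterion_judgements.values(), key=LEVELS.index)


def synthesise(evidence: dict[str, list[float]],
               thresholds: list[float]) -> tuple[dict[str, str], str]:
    """Run micro -> meso -> macro synthesis over evidence grouped by criterion."""
    criterion_judgements = {
        criterion: meso_synthesis([micro_synthesis(s, thresholds) for s in scores])
        for criterion, scores in evidence.items()
    }
    return criterion_judgements, macro_synthesis(criterion_judgements)


# Invented scores for illustration only; not data from any report.
example_evidence = {
    "effectiveness": [72, 88],
    "efficiency": [55, 61],
    "sustainability": [80, 74],
}

by_criterion, overall = synthesise(example_evidence, THRESHOLDS)
print(by_criterion)  # criterion-level (meso) judgements
print(overall)       # overall (macro) judgement
```

With these invented scores, effectiveness and sustainability are rated ‘good’, efficiency ‘adequate’, and the overall judgement ‘adequate’. Replacing either combining rule (for instance, with a weighted average) could change the overall judgement; on the stairway, that choice is itself a step that needs a warrant.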

Being explicit about evaluative reasoning can:

- Provide direction for the sources, research, and reasoning needed to support the various steps (Al-Bayati et al., 2024).
- Reduce the amount of data required for collection (Vogels, 2025).
- Prioritise and integrate the voices of the most important stakeholders (Hall et al., 2012; House, 2006; House & Howe, 2003).
- Connect judgements about goodness directly to decision-making (Davidson et al., 2025).

These advantages demonstrate how evaluative reasoning can increase the quality, feasibility, and utility of evaluations. So how can this be achieved? The stairway offers some insights into actions we can take as a field, as practitioners, and as commissioners and users of evaluation.

Firstly, we can consider evaluative attitude as part of the context. If the first step, describing the phenomenon, is a landing, then evaluative attitude is a doorway on it, leading to the evaluation stairway. While standing on the landing, consider: are those commissioning the evaluation open to critical feedback about the evaluand? If not, the way is shut for explicit evaluative reasoning. Even then, a focus on good research (grey steps) can support future evaluative reasoning by (i) documenting the needs the evaluand is intended to address and how the problem has been framed, (ii) looking for relevant theories, (iii) being explicit about constructs (potential values), and (iv) summarising research and reported findings that could serve as benchmarks for setting standards. If the attitude door is ajar, it may be possible to use data to make the case for evaluation and increase evaluative attitude (Cousins et al., 2006). The steps explicitly related to valuing (purple) can help to identify whose voices are most important (Roorda & Gullickson, 2019) and integrate them in the ‘meaning making’ process across all the steps.

Using the stairway can help us think about warranting as an ongoing process throughout research and evaluation, one that can be underpinned by cultural knowledge bases, existing theories, the peer-reviewed and grey literature, and ongoing engagement with communities. Key resources from stakeholders and the knowledge bases can be used to warrant multiple steps. For instance, in a First Nations evaluation, the description of the evaluand may need to be done with systems mapping, the criteria may need to be based first on kinship and Country, and the ‘meaning making’ steps may need to be done in community rather than by the evaluators or the commissioners. For educational evaluations, theories of learning, behaviour change, and implementation (Funnell & Rogers, 2011) can direct evaluators to relevant knowledge bases that hold potential criteria, standards, and measures. Realist evaluations and realist reviews can help warrant causal arguments, and developmental evaluations can provide principles that serve as criteria. Evaluation approaches can be used in response to the various warrants, as ways to enact the choices that are appropriate for evaluating a particular evaluand in its context (Montrosse-Moorhead et al., 2024).

Conclusion

Pawson and Tilley (1997) suggested that: ‘Evaluation research has not exactly lived up to its promise: Its stock is not high. The initial expectation was clearly of a great society made greater by dint of research-driven policy making. Since all policies were capable of evaluation, those which failed could be weeded out, and those which worked could be further refined as part of an ongoing progressive research program. This brave scenario which (to put it kindly) has failed to come to pass’ (p. 13).

We propose that the promise of evaluation cannot be realised until evaluation practice consistently and effectively engages with evaluative reasoning. Taxpayers, policy makers, teachers, students, and all those who develop programs to influence education (and other sectors) need to understand whether their investments of time, energy, and money are worth it. Without explicit evaluative reasoning (logic and warrants), evaluation loses its power to help decision makers stop doing things that are harmful or wasteful, because judgements are either not made or, if made, are not valid, defensible, and credible.

Our research indicates there is work to be done: only four of the 37 evaluations analysed provided a legitimate and justified evaluative judgement about program value. The remaining 33 ‘evaluations’ are on the research stairway, providing descriptive facts about the evaluand without ascribing value to it (and, in some cases, without covering all the required steps). Using the stairways metaphor, together with the variety of resources available to support both the logic and the warrants, can help commissioners and users get clear about whether they need evaluation or research and what each requires. The steps on the evaluation stairway can help evaluators produce credible, valid, and defensible evaluations of educational programs that attend to values in context. The ‘stairways to heaven’ can help all of us work together to provide an evidence base for decision-making and to ensure that quality education is available to all members of society.

Supplemental Material

Supplemental Material for Stairways to Heaven? Seeking Evaluative Reasoning in Publicly Available Evaluation reports from the Australian Education Sector 2014–2024 by Kathryn Meldrum and Amy M. Gullickson in Evaluation Journal of Australasia.

Footnotes

Acknowledgements

The authors acknowledge Dr. Ghislain Arbour’s contributions as a co-supervisor on the Master of Philosophy study which was the first round of this research. Amy is grateful for the conversations with Dr. Jane Davidson, Dr. John Gargani, and the New South Wales Department of Education and Australian Evaluation Society Chapter about this research and its implications for practice.

Ethical Considerations

Evaluation reports constituting the data set for this study were retrieved from publicly available internet sources. Consequently, ethical approval was not necessary.

Funding

The authors received no funding to conduct the research.

Declaration of Conflicting Interests

The authors declare no potential conflict of interest with respect to the research, authorship, and/or publication of this article.

References
