Abstract

Integrating evaluation initiatives into routine organisational operations to support organisational learning and development can be difficult, and the extant literature lacks detail on the factors that enhance sustainability. This article presents research undertaken with evaluation advocates attempting to embed evaluation in their Australian non-profit organisations. The research involved interviews with seventeen participants, four of whom were also the focus of organisational case studies. The researchers used social interdependence theory to understand participants’ strategies for embedding evaluation and found that some elements of cooperative teamwork were more prominent than others. Participants in high hierarchical positions, or those who had influence, worked intentionally and incorporated strategies that aligned with all five elements. The examples of those strategies and their use in context presented herein may help leaders and internal and external evaluators increase the likelihood of embedding evaluation in organisational systems.

What we already know:

• Integrating evaluation initiatives into routine organisational operations to support organisational learning and development can be difficult.
• Social interdependence theory provides a recipe for positive group dynamics, which can result in productive group work and contribute to making evaluation meaningful.
• Evaluation advocates are non-evaluators working towards embedding evaluation in the organisational system.

The original contribution the article makes to theory and/or practice:

• Evaluation advocates in high hierarchical positions, or those who had influence, worked intentionally and incorporated strategies that aligned with all five elements of social interdependence theory to increase the likelihood of embedding evaluation in their organisational systems.
• Examples of those strategies and their use in context may help leaders and internal and external evaluators increase the likelihood of embedding evaluation in organisational systems.

Introduction

Evaluation has been connected to organisational learning; evaluative inquiry is a form of organisational learning that connects personal and team-level growth and development (Preskill & Torres, 1999). Although evaluation can be defined and undertaken in different ways, it is generally initiated for reporting and decision-making purposes, whether to support improvement or to assess quality and value (Davidson, 2005). This research used a broad definition of evaluation that encompasses the range of things evaluation in organisations could involve in a particular context: the evaluation of strategic goals, the evaluation of programs and services, and the development of systems for learning and improvement (Rogers & Williams, 2006). When evaluation is relevant, meaningful, and useful for people working in non-profit organisations, it can support improvement, assist with communicating effectiveness, serve as a source of information, and meet accountability requirements (Carman & Fredericks, 2010; Harman, 2019; Murray, 2010). Evaluation mainstreaming is possible when organisations systematically, fundamentally, and sustainably integrate evaluation into their culture and practices (Gullickson, 2010; Sanders, 2003). However, a recent integrative review of evaluation capacity building revealed that the factors that may affect the sustainability of effective evaluation practices in organisations are largely absent from the current literature (Bourgeois et al., 2023).

Embedding evaluation can be particularly challenging in organisations because of structural barriers, resource constraints, and interpersonal challenges (Bach-Mortensen et al., 2018; Campbell & Lambright, 2017; Gilchrist & Butcher, 2016; Norton et al., 2016). Once an organisation has advocates who have successfully addressed those challenges (Rogers et al., 2022; Rogers & Gullickson, 2018), embedding evaluation initiatives is the next challenge, because it is often those individuals who are driving the initiatives. Recent research on the sustainability of evaluation capacity building initiatives found that employees recognise how fragile and person-dependent these initiatives can be:

Turnover and potential turnover of key evaluation personnel was the main source of anxiety for organizations around sustainability… Although real turnover was an issue, the fear of potential turnover was most commonly related to the instability of evaluation within organizations (Wade & Kallemeyn, 2020, p. 5).

In the non-profit sector, the barriers to evaluation include minimal desire for change, reluctance to question assumptions, past negative experiences, difficult terminology, and a focus on individualism that places the individual above organisational goals (Chaudhary et al., 2020; Donaldson et al., 2002; Geva-May & Thorngate, 2003; Mason & Hunt, 2018; Newcomer & Brass, 2016). Progress toward embedding evaluation in these organisations is perhaps even more person-dependent, because interpersonal relationships are key and the steps in the transition from individual and collective skills and knowledge to systems that sustain evaluation are not clear (Grack Nelson et al., 2018).

The extant literature does, however, identify non-evaluators who advocate for and champion the uptake of evaluation among colleagues as enablers of sustained evaluation initiatives (Chaudhary et al., 2020; King & Volkov, 2005; Labin, 2014; Preskill & Boyle, 2008a, 2008b; Taylor-Powell & Boyd, 2008; Wade & Kallemeyn, 2020). Understanding how these individuals work towards embedding evaluation in the organisational system may help increase the likelihood that initiatives are sustainable (Rogers & Gullickson, 2018; Silliman et al., 2016a, 2016b).

The researchers used social interdependence theory to understand the interpersonal interactions that influenced how evaluation advocates and their colleagues embedded evaluation (Johnson & Johnson, 2002). The theory suggests that the way team members’ goals are related influences their expectations, interactions, and outcomes (Johnson, 2003). It is based on cooperation and competition: cooperative goals can lead to mutual success, whereas competitive goals can lead to opposition (Johnson, 2003). To operationalise the theory for use in practice, the theorists empirically validated five elements of cooperative teamwork (Johnson & Johnson, 2015). Research has shown that when these elements are considered in the design of teamwork and purposefully structured, they can lead to increased productivity, greater psychological wellbeing, and better-quality interpersonal relationships (Johnson & Johnson, 2002). Positive interdependence, which is essential for establishing cooperative relationships, exists when everyone needs each other to succeed. Individual accountability is about each person doing their fair share. Social skills involve communicating, resolving conflicts, and cultural competence. Group processing involves reflecting on how well the group is functioning, and promotive interaction is about providing encouragement (Johnson & Johnson, 2015). This theory provided a useful lens through which to understand the social processes of facilitating interactions and managing challenging dynamics while implementing evaluative initiatives (King & Stevahn, 2013; Stevahn & King, 2005, 2016).

In this article, the researchers present findings from a study with non-evaluators acting as evaluation advocates, demonstrating the ways in which, and the extent to which, they were able to support the embedding of evaluation within the organisational system. Rogers et al. (2022) define an evaluation advocate as ‘an individual who motivates others and provides energy, interest, and enthusiasm by connecting evaluation with colleagues’ personal aspirations and the organizational goals to make judgements about effectiveness’ (p. 86). The research question that this article attempts to answer is: ‘In what ways do evaluation advocates working in non-profit organisations support evaluation to be embedded within the organisational system?’ The article focuses on their strategies for sustaining evaluation initiatives. Because the findings are focused at the interpersonal level, they may be applicable in other types of organisations, and the theoretical applications may be useful for anyone working in a team (Rogers et al., 2022). The article first provides background on the method and then compares the strategies of participants with and without power and influence. The discussion explains these differences by drawing on social interdependence theory, and the article concludes with implications for the evaluation field.

Method

The researchers adopted a qualitative approach that involved co-constructing findings with participants, colleagues, and evaluators to collaboratively develop an understanding of the social interactions that were happening in practice (Denzin, 2001; Denzin & Lincoln, 2005). The focus was on program evaluation, and evaluation was understood to be the systematic process of seeking a judgement about the value of something, as defined in the Encyclopedia of Evaluation: ‘an applied inquiry process for collecting and synthesizing evidence that culminates in conclusions about the state of affairs, value, merit, worth, significance, or quality of a program, product, person, policy, proposal, or plan’ (Fournier, 2005). To include as many participants, types of initiatives, and uses of evaluation as possible, the researchers did not exclude any forms of or approaches to evaluation. Rather, a broad interpretation was adopted that included organisational self-assessment; evaluation of specific programs, services, and policies; and organisational learning and improvement systems (Rogers & Williams, 2006). As this research was part of a larger study, an in-depth description of the methods is included in Rogers et al. (2022). This section details the specific information about the participants, research design, data collection, and data analysis strategies that is relevant to understanding the findings discussed in this article.

Design

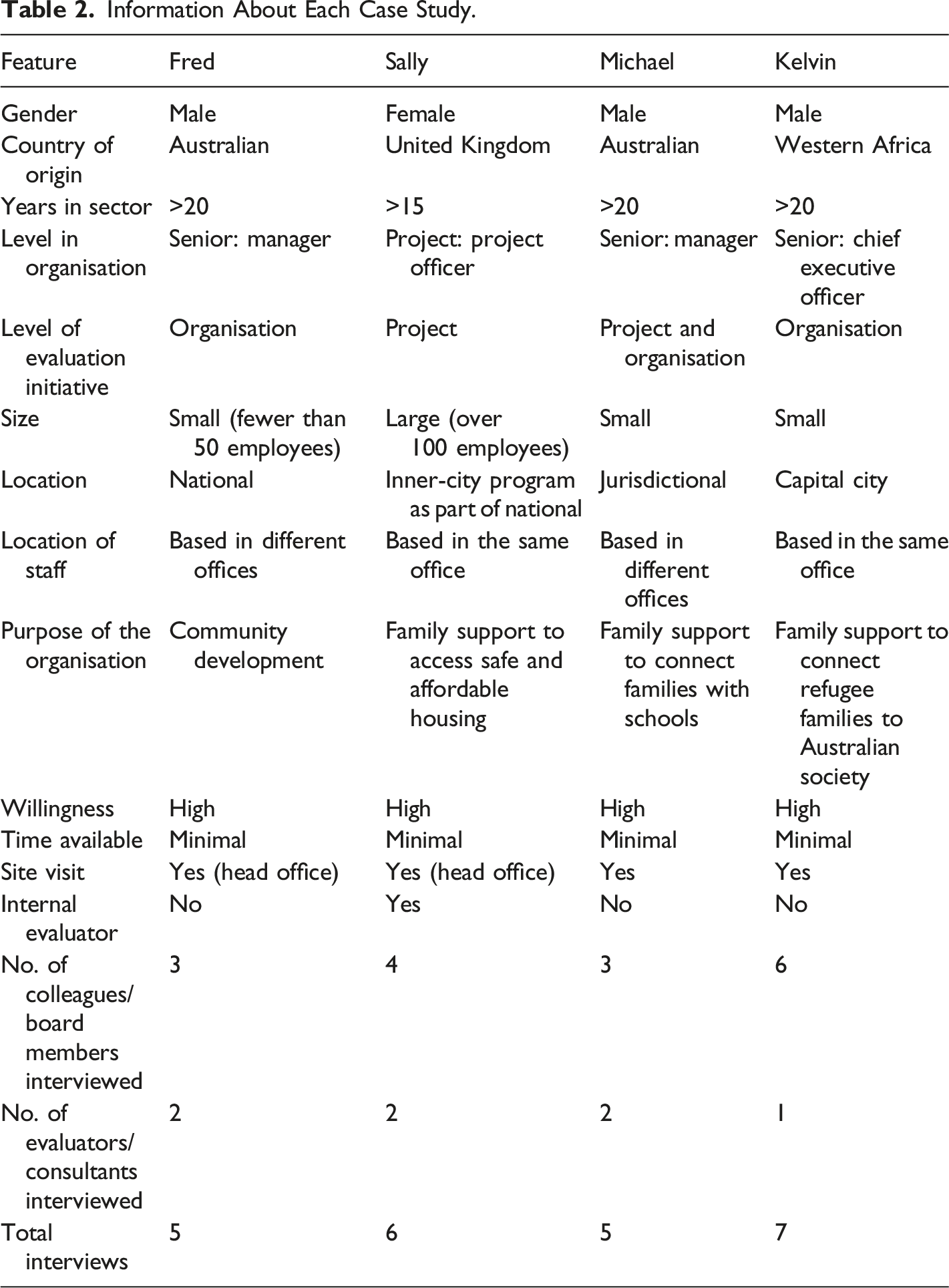

The individual was the unit of analysis in both parts of the qualitative research, which included seventeen semi-structured interviews (Patton, 2002) and four case studies (Stake, 2006). Qualitative methods were appropriate for emphasising the perspectives of participants and exploring their understanding. Formal theory helped determine whether the discoveries about interpersonal interactions fitted within a larger body of evidence (Gay & Weaver, 2011). The interviews focused on the individual level and provided the basis for selecting and conducting the case studies. Interviewing offered a way of drawing out the participants’ perspectives and eliciting information that could not be ascertained by observation (Patton, 2002). The multiple case studies examined the characteristics and behaviours of individuals in relation to their interactions with colleagues in an organisational context (Stake, 2006). These methods were appropriate because the research involved understanding the complex relationships between multiple systems (social, organisational, and inter-organisational). The study was conducted in accordance with the ethics approval granted by the University of Melbourne’s Human Research Ethics Committee (HREC: 1647875).

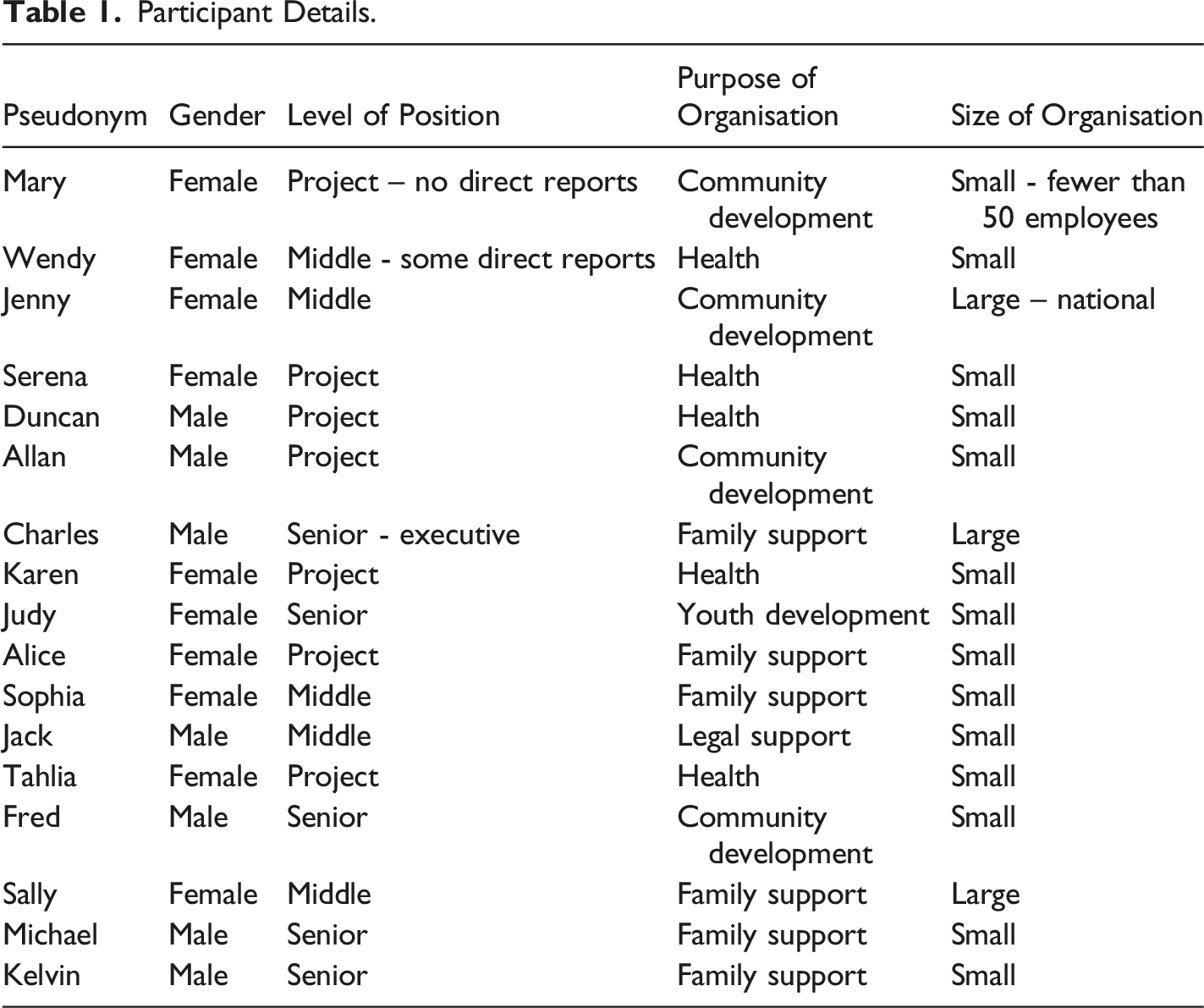

Sample

Participant Details.

Information About Each Case Study.

Data Collection and Analysis

Data collection occurred in 2017–2018. All participants were invited to review their transcripts and sense-check the findings. The interview schedule for the participants included the following questions:

• What do you think are the key factors required to embed and sustain evaluation in an organisation?
• Please give an example of what has worked for you.
• What challenges have you faced?

Data collection for the case studies involved semi-structured interviews with evaluators and colleagues working with the participant, a document analysis, and a site visit. The interview schedule for the participants’ colleagues and evaluators who had worked with the participants included these questions:

• What changes (positive and negative) have happened in the organisation in regards to evaluation?
• How sustainable will the changes be in the long-term?

Analysis of the qualitative data was undertaken simultaneously with data collection (Stake, 2006). As the data was collected, the constant comparative method was used in an iterative process to identify patterns and themes and code them under categories. A cross-case analysis was used to highlight similarities and differences between the case studies before synthesising them with the interview data and literature, which enabled the construction of characteristics of participants in different settings (Stake, 2006).

Findings

This section presents findings related to the strategies the participants used to facilitate group work around their evaluation initiatives and how these strategies contributed to embedding evaluation in the organisational system. The first half of the section examines participants’ responses to the question about strategies used to embed evaluation in the organisational system. It shows that, although participants actively promoted evaluation, they were not always able to ensure that their efforts were embedded in any sustainable way. The second half shows that only participants in senior positions, or those who had influence, worked intentionally and thought strategically about the group dynamics (how to hold people accountable and how to reflect on the way the group was working) that would eventually contribute to embedding evaluation.

Attempts at Embedding Evaluation

This section begins by highlighting how the participants attempted to embed evaluation. Their strategies included linking evaluation to the benefits the organisation was seeking, demonstrating how evaluation could be used for advocacy, and using evaluation recommendations for improvement. Participants suggested that the best way to embed evaluation was to help their co-workers understand its value and purpose. They did this by garnering support from different sections of the organisation, seeking expertise from their networks, and adapting tools. They opportunistically connected evaluation with other plans, kept evaluation on the agenda, and requested or allocated budgets for evaluation. Participants kept evaluation simple, manageable, useful, and appropriate to audience and context, and they used persuasive communication techniques.

This vignette from Judy illustrates how she was able to successfully incorporate evaluation into her small organisation of fewer than five people and influence other organisations they were working with:

As a result of being involved with an evaluation and interacting with an external evaluator, Judy recognised the power of evaluation for advocacy purposes. As the CEO of an organisation delivering a service to young people in marginalised communities from culturally and linguistically diverse backgrounds, she used her influence to guide her staff and the leaders of other organisations to support putting monitoring systems in place. She grappled with the need for independent evaluation in a context where building rapport and relationships of trust was essential to obtaining reliable and accurate information. However, she developed a critical-friend relationship with an evaluator to overcome some of these challenges. Judy said:

I think the big lesson for me is knowing that you can do some simple things in the evaluation space. That it doesn’t all have to be big, hard, long, challenging. That really, as long as you can think about coming back to that purpose, what do you need to learn from this? You can make it manageable…. So the great thing about my experience with [External Evaluator] has been working on those ways to make things happen simply and easily. And even if it is just a first step, that still has validity.

This vignette from Sophie demonstrates her awareness of the high levels of energy and enthusiasm required to support a team to embed evaluation into the way the staff did business:

In a management position, Sophie recognised the need to have leadership support if evaluation was to be embedded in a sustainable way across the entire organisation. However, she found it challenging to make other managers see the benefit of evaluation. Sophie therefore used engagement strategies that showed her co-workers how evaluation could help them contribute to the organisational goals, and she encouraged them to try innovative tools. Sophie said:

I’m a very positive sort of person. I have lots of energy and enthusiasm and interest. And I always see things as a challenge and as exciting and as interesting. But I think there’s a whole range of ways you could do it. But I think it’s about putting evaluation up as part of what we do and taking the fear out of it. Creating that energy around it and excitement around it. And saying to people, ‘Go and find some tools that you’d like – that you think could work’, like, ‘Let’s try that. Okay? You’re saying that’s a new tool. Let’s have a look at it. Let’s try it out, and if it doesn’t work, we can change’. So, I’m very much up for, ‘Let’s see how we go with it’.

Some participants considered embedding evaluative thinking a good approach and attempted to make asking evaluative questions part of the way the organisation did business. Another participant used an empowering process that enabled staff to access their own data and generated further demand for evaluative information. Some participants also gave examples of building evaluation systems and trying to embed evaluation into processes, policies, and procedures. Examples included incorporating evaluation into job descriptions and integrating evaluation into the project management cycle so that it became routine. Participants also attempted to embed evaluation into the system by incorporating opportunities for reflection into meetings, infusing logic models across the organisation, and routinely sharing evaluative information between different parts of the organisation.

Challenges with Embedding Evaluation

However, even though participants excelled and created impact through small wins that generated enthusiasm for the potential of evaluation, many believed that if they were not there advocating for evaluation, the initiatives and what they were working towards might collapse. This section shows that, ultimately, the participants believed their efforts would not be maintained beyond their personal involvement.

Judy was aware of how precarious the embedding of evaluation as a routine process was because, although she infused enthusiasm in her team, there was minimal capacity in the organisation for this work to continue if she was absent for any length of time. When she went on leave, she found: ‘That stuff instantly falls in a heap’. Jack made a similar observation: ‘Without having also an allocator, or a voluntary champion, or a few – then I think that also makes it hard to sustain… I fear that if I left them tomorrow, it might all stop, or it might not keep happening in the same way. Or it might be a shadow of its former self’. Sophie made this recommendation:

There’s only one thing that will work to keep it sustained – and that is commitment – total commitment from senior executives and the board. Unless it’s written into a strategic plan, and then down to the business units, or planning departments, or service areas, or whatever – unless it’s actually written there – it won’t happen. It will happen on an ad hoc basis, it will happen for particular programs, depending on funding, and depending on program constraints, and depending on who’s going to evaluate this. But if you really want a reflective, evaluative organisation, my belief is that there really does need to be part of who the organisation is from the top. I would prefer to have an organisation that was, ‘This is who we are. We are… an evaluative thinking organisation. We use evaluation’. It’s embedded in their strategic plan and their business plan and in everything they do.

Participants saw themselves as the people who kept the momentum going. Their prodding, suggesting, cajoling, promoting, prompting, and encouraging kept things rolling. For the most part, their co-workers were not self-driven to keep taking the time to undertake evaluation, and the busyness of everyday work soon took precedence if the participant was absent for any length of time.

In summary, participants expressed concern about how fragile and unsustainable the embedding of evaluation was without their direct input. A large proportion of their roles related to interpersonal interactions, and without continuity of those connections and efforts, evaluation could disappear. Interpersonal skills were where participants excelled: applying the right amount of influence to the right person, in the right way, at the right time, so that decisions were made and evaluation initiatives caught on.

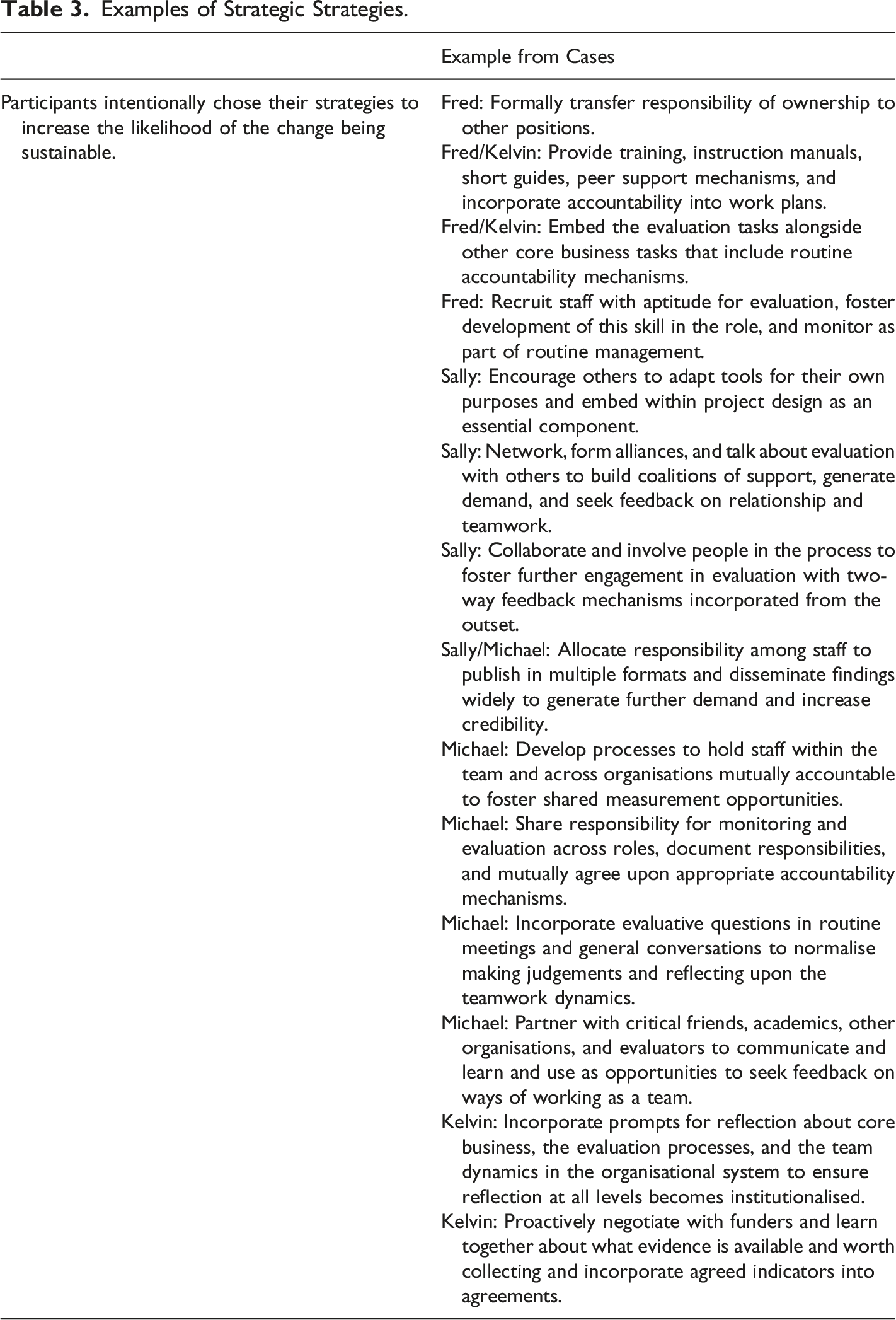

Strategies Used by Participants in Senior Positions or Positions of Influence

In contrast with many of the interview participants, whose strategies were unplanned and relied on seizing opportunities as they arose, the case study participants worked strategically and with intent. The strategies presented in this section extend beyond the examples documented above and include holding other people accountable and reflecting on group dynamics. While many interview participants felt that their efforts were not going to be embedded, the case study participants provide evidence that their planned approaches may have increased the likelihood of their changes being sustainable. This section therefore increases understanding of what participants had to do to embed evaluation into their organisational systems.

Individual Accountability: Holding People Accountable

Evaluators and colleagues recounted the strategies participants used to intentionally involve others, share responsibility, decrease the dependence on themselves to drive the initiative, hold other people accountable, and, ultimately, make sure team members contributed fairly. Fred was in a senior position with multiple accountability levers at his disposal. Kelvin was also able to use his seniority and authority to embed the evaluation tasks within the organisational processes. Sally’s case highlights how, from a project-level position, she had to generate demand for evaluation among people in senior positions so that they could instigate accountability mechanisms as part of routine operations. Michael, in a senior position, not only held his team accountable but also generated demand for evaluation at the sector level. The collaborating organisations in Michael’s case enacted relationships that incorporated mutual accountability mechanisms.

Fred used accountability levers to help ensure that the evaluation initiative in his organisation was not person-dependent. In 2015, an external evaluator discussed the risks of the initiative being person-dependent and proposed strategies for how to build in sustainability. One of the strategies Fred initiated was a change management process that transferred the responsibility of driving the initiative to other people in the organisation. His colleague said, ‘He really took a back seat at that point and started removing himself and released me to do more, with the understanding that I was trying to get out of there as well. It was like a progressive plan to build up capability within the team’.

At Fred’s organisation, individual accountability related to data systems was another strategy used to embed evaluation. The organisation created an internal monitoring and evaluation database and then provided training, instruction manuals, short guides, peer support, and accountability mechanisms in work plans so that using and updating it became part of people’s routine tasks. Fred’s colleague said:

That part is sustainable because our team understand the database. Not just how it works, but why we need to do it. And the second part is that now there is accountability processes in place so there is going to be six-month peer-to-peer audits of the database by the managers… They can use that to learn from each other and their teams, but also identify where there are gaps or weaknesses.

This approach supported the sustainability of the evaluation initiative because Fred connected it to the core business of the organisation and to individual position descriptions. The same colleague said, ‘The way that we’ve integrated that framework into the database makes it more sustainable… They are passionate about and very on board about that framework, so to have that there and the database… It is embedded, it is integrated’.

Fred’s organisation also actively recruited operational staff who had not only community development skills but also the cognitive skills needed to record monitoring information in the database. He encouraged the recruitment of staff with an aptitude for evaluation, fostered the development of these skills in their roles, and monitored that development as part of routine management. As challenging as this was, the colleagues interviewed were on board with using the database, as this Aboriginal community development officer shared: ‘I think it is just going to get more fine-tuned over the years as it goes’. Another officer said: ‘It is much more kind of manageable and easy and will support us to do the monitoring work and really capture the story of change that the groups are going through’.

In Kelvin’s case, he was a senior manager who could instigate and maintain accountability mechanisms for evaluation across the organisation. While the immediate focus may have been on the organisation’s survival, he was implementing a person-centred approach with an accompanying evaluation initiative to increase the likelihood of sustainability beyond the current government funding cycle. The dilemma the organisation faced before implementing the changes was captured by a board member:

You can’t just be a lovely well-meaning community support organisation any more. You need to be well organised and structured and have a good business model to support the sustainability of the organisation. And how do you do that but not lose the empathetic good people and still have your caring culture?

Kelvin’s first step was to hold staff accountable for their evaluation tasks. He focused on ensuring his team could provide funders with the data they needed, with a longer-term view that this would help ensure the organisation was not dependent on a restrictive grant cycle. Kelvin embedded within routine operations an evaluation system that supported the vision of the organisation, rather than the other way around. The systems were designed in collaboration with the staff who had to use them, so that staff could measure their own achievement and demonstrate effectiveness proactively. The external consultant said:

What comes first is an individualised outcomes-based focus for the person… it was the approach to service delivery that came first, then being able to massage that in framing that in the way that met government reporting requirements… It didn’t matter what the government was doing – [Kelvin] had to get the care for the clients right first.

Kelvin’s subsequent steps involved proactively collaborating with funders to learn together about what evidence was available and worth collecting. He then incorporated the mutually agreed indicators into contracts, which staff turned into work plans to hold themselves accountable. Proactively engaging and negotiating with funders to promote the way the organisation conducted business, conducted evaluation, and communicated changes was a key way in which Kelvin supported the sustainability of the organisation and of the evaluation systems being put in place. Board members who accompanied Kelvin to meetings with representatives from government departments recounted, ‘It was most definitely a negotiation… He was engaging with those services and explaining why things were changing’. This board member saw Kelvin’s ability to read the situation and communicate what was happening in a mutually beneficial way, to both his staff and funders, as important:

He has a particular skill set around evaluation of outcomes, and he is able to articulate that in the tender responses that then seems to align with what the Commonwealth [funder] is wanting… We’re actively applying for tenders, some of which we may not have applied for in the past, and we’re being successful in our applications.

Sally worked from a project-level position, so it was harder for her to hold colleagues accountable, as she did not have the delegations to assign evaluation tasks to people’s workloads. She was, however, able to generate demand for evaluation among senior leaders in the organisation who did have power. She committed to sharing information in multiple formats and created evaluation products that increased the credibility of her team. This was the catalyst for others in more senior positions to apply accountability mechanisms. For example, throughout the entire initiative, Sally was instrumental in making sure the findings were published and shared with a wide range of internal and external stakeholders. The evaluation was incorporated into the organisation’s strategic plan, and the pilot developed into a service model. Hence, by sharing the findings with multiple audiences across different platforms, not only were the program and the evaluation likely to have contributed to the broader non-profit sector, but this sharing also triggered senior management to demand more evaluation from middle managers.

There was evidence from one of the internal evaluators that the pilot alone had increased the profile of the evaluation unit: ‘I think there is a greater acceptance of the team and what we do’. The service design advisor was also highly positive about the evaluation and found the information useful for advocacy purposes. She said this evaluation had generated demand for more evaluative information:

I think it would be wonderful if all our programs could be evaluated… I think it gave us a wealth of information as a starting point, and it was certainly very useful… articulating what had worked well and hadn’t… We were really looking to build off that.

Sally and her team’s collaborative approach, combining program design and evaluation, also changed the way the internal evaluators worked with the project-level staff. The combination made such a valuable contribution on the first attempt that the evaluation unit increased their efforts to incorporate a similar collaborative approach into the design of other programs. The accountability processes and timelines of the evaluators changed as a result of Sally’s efforts; the evaluators henceforth committed to seeking engagement at an earlier stage of the process across all new initiatives. An evaluator said, ‘We recognised how important it was to have that close involvement… so more often now we try and get involved at an earlier stage so that we can have more of a role in developing the service model and the program logic together’.

Michael, working from a senior position, also ensured his staff understood that evaluation tasks were part of routine operations, and he held staff accountable by embedding responsibilities into role expectations. He also generated demand for evaluation among the organisations with which they were collaborating, with the aim of influencing the working environment at a sector level so that collaborations included mutual accountability mechanisms. For example, Michael formally requested that team members take every available opportunity to share how the program operated, its outcomes, and information from evaluations, in order to publicise the program widely. In the 10 years before this research was conducted, the organisation disseminated over 20 pieces of documentation in different formats, and team members were routinely required as part of their roles to discuss the program and evaluation in the public domain. Michael led by example by making evaluation reports and newsletters publicly available on the organisation’s website and giving presentations at conferences. He ensured that staff had time dedicated as part of their role to assist with producing these materials. This resulted in increased visibility at a local level and enabled the program to be referenced in publications developed by government departments and independent researchers. Importantly, these internal accountability mechanisms and subsequent interactions gave the organisation a platform to hold its funders to account without compromising the funding relationship, because it could challenge inappropriate ideas by referencing its published evaluation products.

The evaluators interviewed in relation to Michael’s case reported that he and his team took up shared measurement opportunities with other organisations and incorporated mutual accountability mechanisms. He saw developmental evaluation, collective impact projects, and shared value partnerships as ways of drawing the organisation away from output-style accountability. Other stakeholders in the community, potential funders, organisations undertaking similar or complementary programs, and external evaluators or researchers were all actively sought out and invited onto the team. The team also sought participation in other reference groups, collective impact structures, group training opportunities, and stakeholder meetings, treating these as opportunities for learning, relationship building, and enhancing ongoing collaboration. Representation on these groups was not left to Michael; the responsibility was shared across roles. The team documented responsibilities and mutually agreed upon appropriate accountability mechanisms, and all co-workers were encouraged to participate so that the potential opportunities were shared across the team. As a result, such participation continued to generate interest in evaluation not just at an intra-organisational level but also at an inter-organisational level.

Group Processing: Reflecting on Group Dynamics

Evaluators and colleagues pointed to examples indicating that the case study participants were thinking strategically about, and implementing, ways of reflecting on group dynamics. Participants found opportunities to seek feedback from others about their evaluation work. Although informal, these relationships incorporated a two-way feedback mechanism from the outset. During the pilot project, Sally used her social networks and took every opportunity to link with other sections of the organisation and seek constructive feedback on the initiative. She did so even when those sections were not directly involved, even after the project had finished, and even beyond her own organisation, involving representatives from other organisations and stakeholders. Sally formed networks, made alliances, connected with staff she had previously worked with, and instigated coalitions with other organisations to increase the profile of the project and the evaluation. She also communicated updates throughout implementation and sought feedback on the working relationship and teamwork.

Evaluators described how Michael had engaged with a variety of local independent researchers and evaluators over many years to seek assistance with demonstrating achievements, to learn from external expertise, and to seek feedback on the degree to which they were working as a team and with other organisations. For the organisation, the benefits of this ongoing, mutually beneficial engagement with external expertise included providing funders with credible information that was considered unbiased, obtaining additional in-kind support, filling an expertise gap in research and writing skills, and providing critical reflection in a culturally safe way that could foster further development of effective teamwork. While building the team's capacity to undertake monitoring, evaluation, and reflection was at the forefront of every interaction, staff also found an independent perspective useful for stimulating new ideas. Michael worked collaboratively with an external evaluator to find appropriate ways of undertaking evaluation with his team, to understand team members' preferred ways of working and learning styles, and to use the findings to improve the program, as this evaluator recalled: ‘He’s learnt a lot from working with us, and I would see that we were able to help. The work was complementary, because there’s the same worldview about evaluation… It was a beneficial relationship’.

Evaluators and colleagues indicated that both Michael and Kelvin incorporated evaluative questions into routine meetings and general conversations to normalise making judgements and reflecting on team dynamics. An Aboriginal colleague in Michael’s team valued and enjoyed the routine opportunities for reflection and saw them as a chance to seek support if she needed it: ‘We just all gather around. We connect with our team. It’s nice to hear what the other teams are doing… It’s nice to get together… And then Michael contacts the team later on, after the meeting if he needs to talk with any of us individually about any issues that we’re having’.

In Kelvin’s case, he built prompts for reflection about core business, the evaluation processes, and team dynamics into the organisational system so that reflection became part of how the organisation did business. Staff recognised that the increased level of accountability, combined with the new evaluation system and reflection mechanisms, was supporting the team’s aspirations to improve operations in ways that benefited their clients: ‘Previously, we couldn’t actually give any details on how dependent or independent a client was, and why or when they exited. And I don’t think it’s fair on the client. It’s not fair on the other service… Definitely that accountability is something very new… I think this is the best position that we’ve ever been in’.

Examples of Strategic Strategies.

Discussion

When teams work effectively and productively, they are more likely to be able to make evaluation meaningful and relevant for their colleagues. This section explains how, to varying degrees, participants combined the elements of cooperative teamwork identified in social interdependence theory to develop a culture of evaluative inquiry. Effective collaboration is a key part of integrating evaluation into the people, systems, and culture of an organisation and, ultimately, of sustaining evaluation within the organisation (Gullickson, 2010; Sanders, 2003). This section details how participants’ position in the hierarchy and their degree of influence determined the extent to which they could apply all elements of cooperative teamwork and, consequently, embed evaluation in routine operations. It explains why there were differences between project- and senior-level participants and concludes by articulating why a clear delineation based on hierarchical level is not advisable.

Elements of Cooperative Teamwork

While this research found that participants had similar motivations and similar aspirations for a culture of evaluative inquiry, they differed in the degree to which they were able to embed their evaluation initiatives. Project-level participants were less able to influence organisational processes, systems, and decision-making. They said their key strategies for embedding evaluation included keeping evaluation on the agenda, using evaluation for advocacy, and opportunistically connecting evaluation with other plans. Project-level participants saw themselves as people who could maintain momentum through influential communication and who excelled at generating enthusiasm for the potential of evaluation. Evidence from the case studies indicated that senior-level participants, and project-level participants with influence, were more likely to embed their evaluation initiatives into routine operations. The elements of cooperative teamwork can help explain these differences.

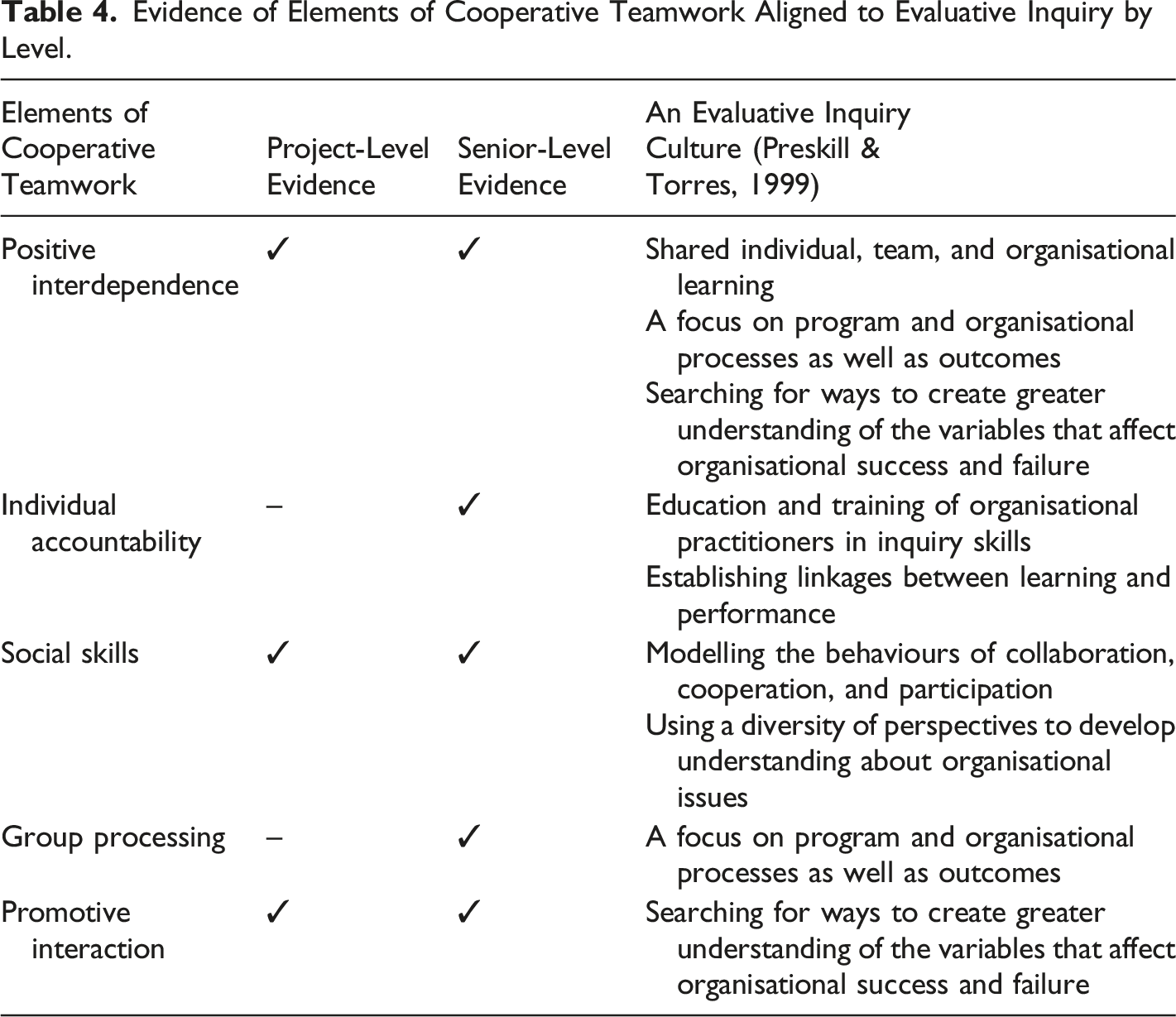

Of the large volume of strategies shared by all participants, most aligned with three of the five elements of cooperative teamwork: positive interdependence, promotive interaction, and social skills. Participants in low hierarchical positions used opportunistic strategies that aligned with these three elements. Participants with a higher degree of hierarchical and influential power supported structural changes, intentionally introduced processes and systems that helped evaluation become routine, developed capacity, and further contributed to building a culture of evaluative inquiry. These strategies aligned more closely with the other two elements of cooperative teamwork: individual accountability and group processing.

When social interdependence theory was first developed, the theorists identified only three basic elements of cooperation: positive interdependence, promotive interaction, and social skills (Johnson & Johnson, 1989). Subsequent research delineated two further elements, individual accountability and group processing, because of their importance (Johnson et al., 1998). This would suggest that the project-level participants were incorporating the essential elements of cooperative teamwork, whereas the more influential participants were able to extend their efforts with the additional elements.

The literature also supports the finding that only when participants were at a senior level in the organisational hierarchy, or in a position of influence over senior-level colleagues, did they have the capacity to make intentional plans and put in place the structural changes needed to develop the systems and processes required to embed a culture of evaluation (Bäck et al., 2020). For example, Fred, at a senior level, established peer support mechanisms and working groups. By contrast, project-level participants, who had less influence over other employees and so could not initiate formal strategies, had to rely on informal ways to connect, provide support, and have meaningful interactions. Therefore, individuals in senior-level positions, or who were centrally located in their network, were ideally placed to drive a strategic and comprehensive approach to evaluation that could support improvement and source the technical expertise required (Newcomer & Brass, 2016).

From a logistical standpoint, one explanation might relate to participants’ level in the organisation. This research suggests that there were limited examples of strategies relating to individual accountability and group processing because participants were in relatively low hierarchical positions that restricted their influence over the work tasks of their co-workers. Peers on the same level can only use positive reinforcement and social mechanisms to encourage colleagues to do their fair share. Line supervisors, with the delegations to enforce changes such as updating job descriptions or setting annual performance targets, have more formal individual accountability mechanisms at their disposal (Gullickson, 2010). Therefore, project-level employees may have difficulty establishing the complete conditions for cooperation to occur (Tjosvold et al., 2003b). They might not be in positions with the authority to hold individuals accountable or to ask peers to take time out to perform additional evaluation tasks. Whether their strategies involved encouraging peers on a horizontal level, managing up to influence decisions, or supporting on-the-ground staff, project-level employees would not be able to hold any of these co-workers to account. Similarly, regarding group processing, project-level participants might not have prioritised reflective pauses or time to review the team’s outcomes alongside other organisational, programming, and evaluation considerations. Promoting evaluation by finding shared goals, providing encouragement, and supporting inclusion might already extend beyond participants’ normal roles, leaving little time to incorporate intentional, strategic plans for change at a structural level.

Another reason for the minimal focus on individual accountability and group processing might be the sensitivity required to initiate conversations about evaluation. As outlined earlier, peers might have felt anxious and reluctant to become involved with evaluation, so participants might have been trying to establish an environment based on cooperation rather than competition (Deutsch, 2011). Where a situation of negative interdependence existed and participants also came from a low hierarchical position, they might have needed to emphasise the positive relational elements. Participants might have been reluctant to ask their co-workers to undertake accountability tasks because doing so did not align with their way of supporting peers. Strengths-based approaches, such as encouraging co-workers to continue doing good work or highlighting achievements to show others what might be possible, were more in keeping with the way they interacted on an interpersonal level. Focusing on creating shared goals, providing encouragement, and including diverse perspectives enabled participants to minimise feelings of anxiety while promoting empowering strategies. Existing research supports this way of working in competitive and confrontational organisational contexts (Deutsch, 2011; Liu et al., 2018; Tjosvold et al., 2014; Tjosvold et al., 2003a).

Creating a Culture of Evaluative Inquiry

All participants in this research were working to build a culture of evaluative inquiry. They were attempting to integrate evaluation into their colleagues’ everyday practice and make evaluative information available for improvement, for decision-making purposes, and for communicating to multiple audiences. These attempts closely align with the concept of evaluative inquiry; by asking questions, talking and sharing knowledge, values, beliefs, and assumptions, co-workers can learn from each other, support improvement, and develop positive change (Preskill & Torres, 1998, p. 191).

However, as detailed above, many project-level participants were not confident that their initiatives would continue beyond their involvement. When asked about sustainability, they said they had helped people to understand the value and purpose of evaluation, linked evaluation to the benefits the organisation was seeking, and garnered support from different sections of the organisation. They then said they believed that, without their presence advocating for evaluation, the initiatives would not be sustained. Only limited examples of their strategies could be aligned with individual accountability and group processing.

In contrast, this research found that senior-level participants had a greater scope of influence than project-level employees. In addition to encouraging evaluative thinking and appropriately promoting its benefits, they considered evaluation capacity, worked with intent, and took a more strategic approach to developing a culture of evaluative inquiry. The case studies provided examples of participants who were moving towards a sustainable approach to embedding evaluation. These participants were still promoting evaluation to co-workers by connecting individuals and their role in evaluation to the future vision of the organisation and, like the project-level participants, were using multi-pronged, individualised strategies to encourage and support teamwork in their diverse settings. However, while they remained focused on embedding evaluative thinking, they also developed systems to support it. These participants incorporated strategies that aligned more closely with all five elements of cooperative teamwork, including individual accountability and group processing.

Evidence of Elements of Cooperative Teamwork Aligned to Evaluative Inquiry by Level.

Delineation Is Not Clear Cut

The table above might give the impression of a clear-cut distinction between project-level and senior-level participants. However, the findings from this research indicate a progression from critical thinking in relation to evaluation, or evaluative thinking (Buckley et al., 2015), through to a culture of evaluative inquiry in which individuals and teams are connected to organisational learning (Preskill & Torres, 1999). Läubli Loud (2014) suggests, ‘Moving evaluative thinking from pockets of individuals and departments to the entire organization is preferable for sustainable, organizational learning and commitment to the principles and ideals of evaluation’ (p. 249). This statement supports the notion that a culture of evaluative inquiry may begin with individuals thinking evaluatively, providing encouragement, generating enthusiasm, and using other interpersonal strategies, and then progress to more sustainable structural strategies (Garcia-Iriarte et al., 2011).

However, implementation of these strategies does not rest solely on high or low hierarchical power. Employees in lower-level positions can have high potential for influence. Hyde (2018) provides evidence that this type of leadership can come from low-power actors, who are able to facilitate organisational change. Hyde (2018) defines low-power actors as ‘organizational members with relatively little formal authority but who nonetheless influence organizational processes and outcomes in ways disproportionate to their official role’ (p. 53). In this research, participants were leaders in evaluation regardless of hierarchical position; they paid attention to the needs of their colleagues and supported and coached their development in relation to evaluation (Hyde, 2018). Sally’s case study illustrates this point. Other project-level participants in this research also thought in a way that aligns with Weiss’ description of how people collaborate around evaluation: ‘Helping program people reflect on their practise, think critically, and ask questions about why the program operates as it does. They learn something of the evaluative cast of mind—the sceptical questioning point of view, the perspective of the reflective practitioner’ (Weiss, 1998).

Project-level participants exerted influence on their colleagues, which is congruent with Johnson and Johnson’s definition of leadership (Johnson & Johnson, 2014). The examples from this research, including evidence from the case studies, help explain how evaluation advocates promoted evaluation to their colleagues in practice, both from a low-power perspective and from more traditional leadership positions.

Hence, the findings from this research appear consistent with the idea that engaging individuals in organisations who think evaluatively is a first step, continuing until a tipping point is reached and evaluation becomes more firmly embedded (Archibald et al., 2012). This is a key reference in the literature about the role of non-evaluators in developing an evaluation culture. Buckley et al. (2015) state, ‘If Evaluative Thinking is promoted by an evaluation champion in a position of influence and is increasingly practiced by members of the organization as part of a learning community, an evaluation culture will follow’ (p. 384). This quotation supports the finding that the strategies deployed depended on the individual’s level in the organisational hierarchy, and that finding in turn adds weight to the phrase ‘position of influence’ (Buckley et al., 2015).

Limitations and Strengths

One limitation of this research relates to the lack of resources available for organisations to participate. Managers of project-level participants who were offered the opportunity to be involved did not give their approval, citing a lack of time and capacity. Willingness and enthusiasm were evident, but a lack of support from senior leaders with competing priorities meant that, apart from one case, the case study participants were males at senior leadership levels. Males in leadership positions became the dominant type of participant, as they were able to use their authority to give permission for an external researcher to access the organisation. It would have been ideal to undertake the case studies with females from diverse cultural backgrounds who were influencing their organisations from a low-power position, but unfortunately this was not possible. For this reason, further research with females in low-power positions is highly warranted; funding would be necessary to compensate these organisations and individuals for their time and expertise. Additional research expanding upon examples of successful influencing tactics, particularly in light of the leadership and accountability reforms in the sector arising from COVID-19, would enable the field of evaluation to broaden its focus on what is possible beyond individuals in senior positions in the organisational hierarchy. Otherwise, we may inadvertently reinforce hierarchical power dynamics in organisations, alienating individuals at the lower end of the power spectrum by not acknowledging them or supporting them to use their influence (Hyde, 2018; Peters, 2016).

Although the study design, combining single interviews and case studies, aimed to ensure dependability and trustworthiness, the researchers recognise that generalising from both sources in the findings is a limitation. Nevertheless, this research found examples of how some participants, regardless of their position in the hierarchy, were able to effect change. Most participants’ capacity to influence was at the interpersonal level, and they used a wide variety of small-scale strategies. However, although all participants were motivated to build a culture of evaluative inquiry and to influence their peers to engage with evaluation, only participants in senior-level positions and participants who influenced people in senior-level positions incorporated intentional strategies and strategic thinking that increased the likelihood of their efforts being sustained beyond their personal involvement. The findings indicated that, without a high hierarchical position or a degree of influence, participants were likely to find it difficult to implement strategies that could contribute to embedding evaluation.

Conclusion

Social interdependence theory provides a recipe for positive group dynamics that can result in productive group work. This article demonstrates that when the elements of cooperative teamwork are intentionally incorporated into teams working on evaluation projects, those teams are more likely to produce initiatives aligned with evaluative inquiry. The theory also helped summarise the differences between low-power participants and participants in positions of power and influence. Participants in low hierarchical positions used opportunistic strategies that aligned with the positive interdependence, promotive interaction, and social skills elements of cooperative teamwork. Participants with a higher degree of hierarchical and influential power supported structural changes, intentionally introduced processes and systems that helped evaluation become routine, developed capacity, and further contributed to building a culture of evaluative inquiry. The findings highlighted specific examples of how the case study participants’ strategies aligned with the two additional elements of cooperative teamwork: individual accountability and group processing.

In conclusion, this article argues that participants with strategies aligned with all five elements of cooperative teamwork increased the likelihood of embedding evaluation in their organisational systems. Any individual who wants to increase the likelihood of effective teamwork around evaluation could take a more intentional approach, using the examples provided in Table 3, and drawing upon all the elements of cooperative teamwork from social interdependence theory to structure interactions. To assist with increasing the likelihood of embedding evaluation in the organisational system and to find ways to work more strategically and with intent, professional development opportunities could encourage the incorporation of strategies across all five elements of cooperative teamwork. Tailored strategies and nuanced support could be particularly useful for people in lower hierarchical positions who want to extend their efforts to promote the use of evaluation and progress towards making their evaluation initiatives sustainable. Incorporating strategies that align with individual accountability and group processing to make their efforts less person-dependent could support individuals to amplify their impact and further harness their contribution.

Footnotes

Acknowledgements

This research presents the results of the first author’s doctoral thesis research. The second author served as a supervisor. Both would like to thank Professor Emerita Jean A. King, Professor Elizabeth McKinley and Professor Janet Clinton for their insightful expert counsel.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.