Abstract

In this article, we explore experiences and learnings from adapting to challenges encountered in implementing three Developmental Evaluations (DEs) in British Columbia, Canada, within the evolving context of the COVID-19 pandemic. We situate our DE projects within our approach to the DE life cycle and describe the challenges encountered and the adaptations required in each phase of the life cycle. Regarding foundational aspects of DEs, we experienced challenges with relationship building, assessing and responding to the context, and ensuring continuous learning. These challenges were related to suboptimal embeddedness of the evaluators within the evaluated projects. We adapted by leveraging online channels to maintain communications and by securing stakeholder engagement through assuming non-traditional DE roles, drawing on our knowledge of the context to support project goals. We also encountered challenges with mapping the rationale and goals of the projects, identifying domains for assessment, collecting data, making sense of the data and intervening. We adapted to these by facilitating online workshops and collecting data online and through proxy evaluators, while sharing methodological insights within the evaluation team. During evolving crises like the COVID-19 pandemic, evaluators must embrace flexibility, leverage and apply their knowledge of the evaluation context, lean on their strengths, and purposefully reflect and share knowledge to optimise their DEs.

Background

Due to the COVID-19 pandemic, organisations and programs have needed to continuously adapt to rapidly changing, unpredictable contexts. Likewise, evaluations have been required to adapt to evolving evaluation contexts and shifting organisational priorities (Gawaya et al., 2022). Developmental Evaluation (DE), with its focus on ‘inform[ing] adaptive development of change initiatives in complex dynamic environments’ (Dozois et al., 2010; Patton, 2011, 2021), has been suggested as a suitable evaluation approach to support the adaptation of initiatives and programs during crises like the COVID-19 pandemic (Karalis, 2020; Patton, 2021). Moreover, ‘adaptation to crisis’ has been recognised as an emergent purpose for DE (Patton, 2021). As the pandemic persists, practical learnings about applying DE during these extraordinary times must be shared.

Since March 2020, perspectives on applying DE during the pandemic have emerged across various fields, including education, social justice and healthcare. Parker et al. (2021) implemented a DE to understand how to support medical students and faculty in transforming their learning approaches during crisis. They identified the need for a systems-based, context-driven learning approach that embraces change, creates space for reflection and relies on real-time information. Using a DE allowed them to broaden and reframe education scholarship practice during the pandemic. Other articles describe DE’s suitability for programs implemented during COVID-19, highlighting the evolving circumstances to which initiatives must respond (Karalis, 2020; Muskopf et al., 2021).

Despite the surge in literature on this topic, little is known about the practical adaptations developmental evaluators have made to respond to the challenges of the pandemic, including restrictions on in-person gatherings, travel limitations and reduced access to project sites. Evaluators have also conducted DEs of initiatives while resources and attention were diverted towards pandemic response. While some of the literature describes practical steps for implementing DE during COVID-19, most of these accounts remain theoretical and do not reflect the real-life challenges faced by evaluators (Parker et al., 2021; Patton, 2021). Other evaluators describe practical challenges with conducting DE during COVID-19 but do not necessarily offer solutions to these challenges based on their experiences (Karalis, 2020; Muskopf et al., 2021; Wu et al., 2021).

In this article, we reflect on practical experiences and learnings from adapting DE processes during the COVID-19 pandemic (between March 2020 and June 2021). Our article is organised into three parts. First, we describe our approach to DE within a ‘DE life cycle,’ depicting core elements of our evaluative processes. Then, we describe three illustrative DE projects started before or during the COVID-19 pandemic and situate these within the DE life cycle. Next, we describe the challenges encountered and adaptations implemented, and reflect on emergent lessons gleaned over this period. Given that the ripple effects of the pandemic are expected to persist, evaluators will need to be nimble enough to respond to the evolving context. We share lessons that may be useful for other evaluators and their projects.

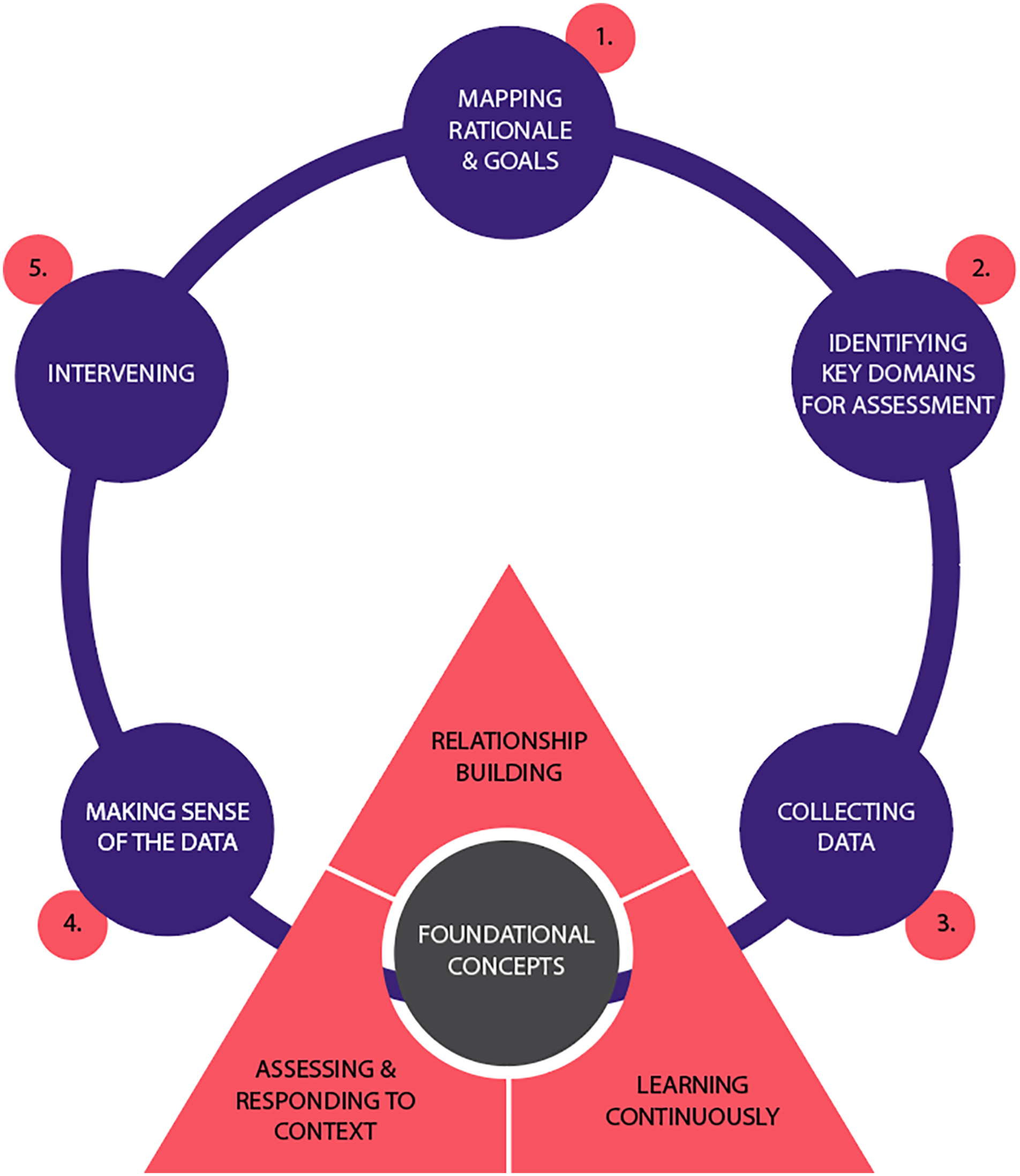

Developmental evaluation life cycle

As Patton (2011) describes, our DE practice is situated in programs, initiatives and strategies in their earliest stages of innovation, with the goal of supporting their adaptive development through evidence-informed decision-making. DEs are suitable for such complex and uncertain contexts (Patton et al., 2015; Rey et al., 2014). Figure 1 depicts our approach to DE. It is useful to distinguish elements foundational to successful implementation of all aspects of a DE (depicted in the triangle) from cyclical elements that recur in a responsive pattern at different times in a DE (represented by circles). While our interpretation of the DE life cycle has been influenced by the literature (Dozois et al., 2010; Patton, 2011), the content of this life cycle was developed through practice reflections among the evaluators. We posit that our interpretation may resonate with other evaluators, who may draw on its simplified depiction when developing their own DE projects.

Figure 1. The developmental evaluation life cycle.

Foundational components of developmental evaluation

Building relationships is the primary focus of initial stages of a DE (Dozois et al., 2010), when the evaluator and project team get to know one another and establish the terms of, and priorities for, their work together. However, our experience suggests building, maintaining and rebuilding relationships is a foundational aspect of DE present at all stages of this work. Not only must developmental evaluators create and nurture close connections to innovators to remain attentive to their concerns and priorities, developmental evaluators can also become facilitators of relationships among members of a project team, for example, by facilitating key discussions and information sharing.

Similarly, developmental evaluators remain aware of, and responsive to, the contexts in which innovation is taking place at all stages of the work. The conditions innovators encounter in complex and adaptive contexts are not fully known, change substantially over time and are not entirely within the innovator’s control. Given that DE is well suited to early-stage innovations in complex contexts, and that the shifting sands encountered in these contexts often mediate the outcomes being evaluated, we found that ‘defining the context’ of a DE is an iterative process, constantly examined and re-examined at every stage of a DE. Continuous learning is both a value demonstrated by the evaluator and an outcome achieved at each stage of a well-functioning DE. There is no single stage at which ‘the learning’ occurs. A healthy partnership among evaluators and innovators collaborating on a DE project seeks, welcomes and establishes learning frameworks and evidence-guided adaptation (Dozois et al., 2010).

Cyclical components of developmental evaluation

The utilisation focus of DE guides the evaluator to emphasise utility and relevance for intended users throughout the evaluative process (Patton, 2018). Typically, the first step is mapping the rationale and goals for change. These could be ‘big picture’ goals, such as transforming service delivery or re-envisioning collaborative processes for community members to address systemic concerns. They could also be more specific, process-oriented or ‘small steps’ goals, such as adjusting a specific process to improve how a particular component of a program functions. At this stage, evaluators and their innovation partners might be developing or refining a theory of change (formally or informally), initiating a process of outcome mapping (Earl et al., 2001) or identifying a goal for change in a less formal and more nimble way (e.g., observing that a process is not having the intended results and agreeing on efforts to fix it).

With a shared understanding of what needs to change, and a ‘theory’ (formally or loosely defined) articulated about how to address it, the evaluator proposes (or the team co-develops) key domains for assessment. Through these proposed domains, consensus is reached about how the evaluator will determine whether change has occurred, including the methods for data collection and the indicators to be tracked. Once domains for assessment have been selected, the evaluator proceeds to data collection. Following this, the evaluator facilitates analytic and interpretive processes to support making sense of the data. These are often iterative: data are analysed, and findings are presented to and discussed with innovators and other evaluation stakeholders to identify points for intervening. In our experience, these include instances where the evaluator intervenes to identify issues of critical importance for the project team (such as unintended or otherwise invisible consequences of their interventions) and instances where stakeholders collectively identify logical next steps or course corrections supported by analysis of recently collected data. Either way, this process of sense-making and intervention is critical for real-time feedback, a hallmark of DE (Patton, 2011). Thereafter, the evaluator and innovators convene to identify a renewed goal for change, initiating the DE cycle anew.

Going virtual in an uncertain time

At the time of the declaration of the COVID-19 pandemic in March 2020, we were involved in several DEs, including the Nation of Wellness initiative (NoW), Culture Change in Long-Term Care (LTC) and Providence Living Place, Together by the Sea (PLPTBS) redevelopment project, which were at different phases of the DE life cycle. As an evaluation team in a university research centre located in a large urban teaching hospital, we were required as part of the university and hospital pandemic response to abruptly shift from working fully on-site to working fully off-site. Our evaluation team immediately set up weekly virtual team meetings to facilitate knowledge-sharing about our different evaluation projects, obtain feedback and maintain our team connections. Over time, we found these meetings were essential to our practice. Specifically, the meetings enabled us to reflect on what was working and what was not working, why we were observing the challenges we encountered, and adaptations required in our DEs. Learnings discussed in this article are a byproduct of our team reflections and knowledge-sharing activities that occurred between March 2020 and June 2021, when our team was able to resume some aspects of on-site activity.

Overview of our projects

Nation of Wellness

NoW is a youth-led initiative hosted by the Matsqui-Abbotsford Impact Society (Impact). Through a trauma-informed lens, NoW supports and trusts youth and young adults (14–28 years) to build a culture where young people (especially those who experience marginalisation) are seen, heard, included and celebrated. NoW initially emerged from an ongoing collaboration between Impact and the local school district focused on vaping in schools. While work was paused due to the pandemic in early 2020, NoW re-emerged in the summer of 2020 to support youth-led learning journeys through the ‘learning journeys pilot project’.

Our team was invited to lead the DE of NoW in summer 2020 to document and clarify early learnings generated from this social innovation. After the pilot, the cohort focused on substance use and mental health. Activities included critical analyses of academic research on youth cannabis use and mental health, dialogic engagement with community members and institutions (e.g., school, police department), and funding proposals to scale up NoW. We continued to work alongside the initiative, documenting and assessing the innovation and applying early learnings to guide and support Impact, the NoW Stewarding Group (comprising and led by young people involved in NoW), and their partners.

Our work with NoW was conducted entirely during the pandemic. Therefore, many features of NoW and its DE were continuously adapted to the evolving reality. Regarding the DE life cycle, both foundational and cyclical aspects of our work were impacted by the pandemic. For example, our ability to integrate with the project team was limited given COVID-19 restrictions on in-person gatherings. Project team members were focused on sustaining the project during crisis, which often shifted focus away from evaluation. Throughout the evaluation, we were challenged to adapt and explore new methods to capture experiences of those organising the initiative, their processes and what they were learning. We explored unusual spaces for establishing program theory, utilised novel techniques for gathering data and explored unconventional roles for evaluators to build trust and relationships.

Culture change in long-term care and the Providence Living Place, Together by the Sea developmental evaluations

The Culture Change in long-term care (LTC) and PLPTBS redevelopment DE projects are distinct but inter-related projects implemented in LTC communities. The Culture Change in LTC project aims to facilitate a shift from the institutional model of care towards a social-relational model of care and has been described in detail elsewhere (Iyamu et al., 2021). The project was implemented in multiple iterations, enabling integration of learnings in successive scale ups across LTC homes. Our DE aims to optimise outcomes for residents, their families and staff in each iteration by supporting evidence-based action.

The PLPTBS redevelopment project was launched in 2019 and is a 5-year multicomponent project seeking to ensure residents live in joy and achieve a meaningful life. Based on the concept of ‘dementia villages’, derived from the De Hogeweyk Care Concept originating in the Netherlands (Glass, 2014; Harris et al., 2019), it involves two broad strategies. First, it involves developing a purpose-built physical environment to facilitate safe, easy access to the outdoors and living spaces that encourage social interactions. Second, it involves shifting the model of care towards a social-relational, person-centred model incorporated into a wider framework of quality care for residents. Our DE of this project leverages the Consolidated Framework for Implementation Research to contribute ongoing learnings toward improving the project (Damschroder et al., 2009) and seeks to address knowledge gaps on how the dementia village concept may improve outcomes for residents, families and staff (Harris et al., 2019).

Concerning the DE life cycle, the Culture Change in LTC project was in a unique situation when the pandemic hit in March 2020. While nearing the end of an implementation iteration (stage 5 of the DE life cycle, Figure 1) and preparing for its next iteration, the project experienced major changes in staffing, including departures of key project leaders. These changes affected foundational processes of our DE, particularly regarding relationship building. In contrast, the PLPTBS DE was just kicking off as the pandemic started. In both projects, members of the project teams grappled with their concurrent roles as frontline health workers supporting the COVID-19 response in LTC. Therefore, we experienced challenges with sustaining evaluation efforts during and after the most intense periods of the COVID-19 pandemic.

DE adaptations implemented during the COVID-19 pandemic

Foundational components of DE

Relationship building

DEs often begin with orienting the evaluator to the program, initiative or strategy, building the relationships necessary for a successful evaluation and establishing a framework for continuous learning among project stakeholders (Dozois et al., 2010; Patton, 2011). Given restrictions on in-person gatherings at the beginning of the pandemic, we modified foundational components of our work. For instance, in the Culture Change project, the onset of pandemic restrictions meant that most components of ongoing culture change work would be on hiatus while leaders and direct care staff focused on outbreak response. At the same time, we became aware that key members of the leadership team would be transitioning to new roles and would not be in a position to continue the culture change work they had been doing. In response, we focused on supporting the leadership transition by creating legacy documents that served as a repository of project knowledge. This process allowed us to form relationships with the new project leadership, albeit online. In contrast, the PLPTBS DE project involved building entirely new relationships through online channels, given the higher risk of COVID-19 infection among LTC residents and the resulting access restrictions in LTC homes. In both cases, opportunities for interaction and the intangible aspects of relationship building were limited online. We fostered trust and buy-in by leveraging our prolonged engagement with the LTC culture change context to highlight important information within legacy documents and additional insights from our DE, helping to orient new team members and engage them in the DE. This positioned the evaluators as trustworthy and credible partners who could support ongoing learning.

Conversely, the DE of NoW was initiated during summer 2020, when there were only minor restrictions on in-person gatherings. This allowed us to establish stronger foundational relationships with the project team by joining weekly outdoor in-person meetings during the project pilot. However, as the initiative and the pandemic progressed, it became increasingly challenging to maintain connections with the project team, since communication was mainly mediated through online channels. The project team and our evaluation team were located in two different jurisdictions and, for some time, non-essential travel between jurisdictions was restricted by provincial health orders. Under these restrictions, the project team (mainly composed of youth with a strong culture of in-person meetings) was able to meet mainly in person, while our evaluation team joined meetings virtually, as required by our organisation and provincial health orders, and in keeping with our ethical responsibility to do no harm (Santana et al., 2021). The different meeting spaces exacerbated feelings of disconnection from the project team and made it difficult to assess and respond to the physical context. To mitigate this disconnection, we relied on individual communication with project team members, frequent clarifying questions during team meetings and continued access to project team members’ internal communications (often over social media). These approaches offered additional insight and supported our understanding of context as well as continuous learning about the initiative.

Further, we assumed roles outside of traditional DE responsibilities to enhance our relationships with the project team and build trust while preserving our role as evaluators to the best of our ability. For the Culture Change and PLPTBS DEs, we shared our knowledge of the context through reports and additional insights from evaluation activities, to ensure the project team felt supported throughout the early phase of their engagement on the evaluation. For NoW, we facilitated virtual and hybrid meetings and developed communication materials for events hosted by the initiative. Part of our motivation for supporting the initiative in these ways was aligning our processes with each initiative’s organisational culture of ‘all-hands-on-deck’ in the face of the pandemic.

Assessing and responding to context

In assessing and responding to context, we needed to be attentive to the project teams’ feedback and open to adapting our methods to new and complex situations. For example, in NoW, hybrid meetings required additional skills, technology and expertise compared to entirely in-person or entirely virtual meetings. The importance of good facilitation for hybrid sessions was emphasised in GREENHOUSE, a project undertaken by NoW with many community partners, including researchers and theatre facilitators who joined NoW meetings virtually. Initially, GREENHOUSE meetings were hosted in a seminar style, with different meeting attendees (in-person and virtual) providing input when called upon. However, the youth involved in NoW reported difficulty staying engaged and alert during hybrid sessions, and noted that online and in-person participants were disconnected from one another. In response, GREENHOUSE meetings became more focused on interactive activities to promote connections among project team members and to accomplish meeting objectives. Activities were facilitated through interactive digital platforms like Google Jamboard and AhaSlides, as well as hybrid improvised games led by theatre facilitators. The PLPTBS project engagement sessions used similar tools, including Google Draw’s whiteboard function to simulate collaborative brainstorming.

Continuous learning

To facilitate continuous learning, we took advantage of the increased acceptability of online meetings during the pandemic. For the LTC projects, we set up formal and informal knowledge-sharing sessions using online channels to connect the project team with other LTC leaders and researchers, nationally and internationally, who were learning from similar initiatives. We formalised these sessions as a learning alliance in the PLPTBS DE to facilitate knowledge exchange and timely feedback on issues encountered by the team (Lundy et al., 2005). This allowed us to engage in ways that not only ensured shared learning and evidence-based practice, but also strengthened our fledgling relationships. In comparison, knowledge exchange within the NoW DE typically occurred in more informal spaces, like staff meetings, reflecting the culture, structure and size of the program.

Cyclical components of DE

Our description of the adaptations required to implement the cyclical components of DE during the pandemic roughly follows the sequence of the DE life cycle. However, given that the projects were at different stages when the pandemic started, we focus on the aspects of the life cycle pertinent to each project during the pandemic.

Mapping rationale and goals

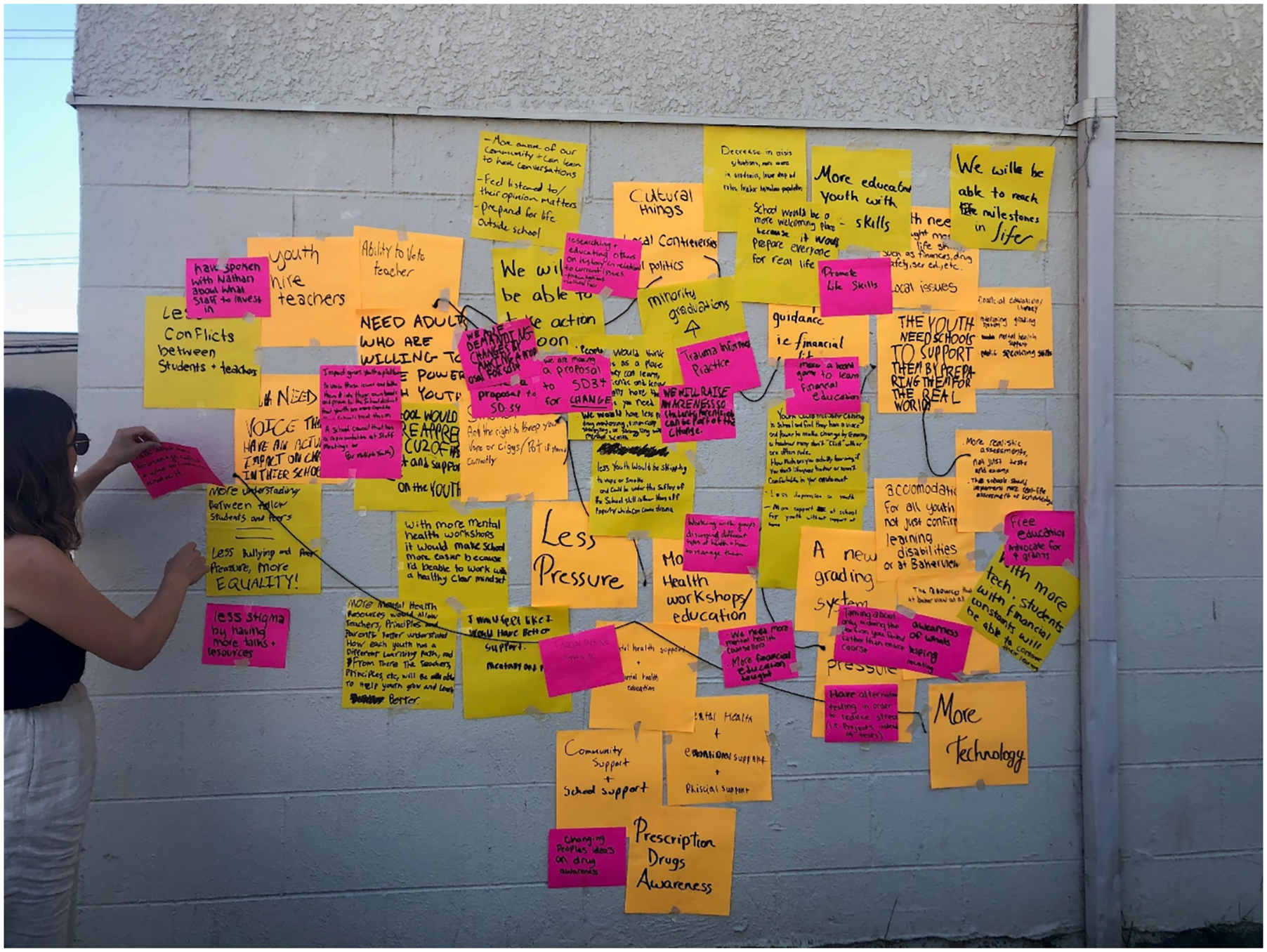

In mapping the goals and rationales of the initiatives, we made changes that ensured stakeholders could remain engaged despite restrictions. For instance, we hosted an outdoor workshop to map the theory of change of the NoW project. The outdoor workshop allowed for in-person interaction while maintaining the physical distancing required by regulations. The project team, including the youth, documented their responses to prepared questions about the theory of change on sticky notes, which were placed on an improvised whiteboard: the exterior wall of the building that housed their regular meetings (Figure 2). Upon reflection with the team, we found that the workshop’s physical interactivity and the novelty of the unconventional space made the activity engaging and helped the project team find their ‘why’. Therefore, we hosted subsequent workshops in a similar fashion, using outdoor spaces whenever possible.

Figure 2. Theory of change workshop held in an unconventional space.

In contrast, we mapped the goals and rationale for the PLPTBS project at the height of the pandemic. The widespread restrictions were further compounded by the risk of COVID-19 to older adults in LTC. Therefore, we hosted a theory of change workshop entirely online via Zoom. Unlike traditional sessions, which take at least 1–2 days, we held a 3-hour interactive event. This was appropriate considering the collective Zoom fatigue people were experiencing at the time (Santana et al., 2021). Prior to the workshop, we shared preparatory materials with participants and held informal discussions with various stakeholders to get a broad sense of the program, obviating the need for more prolonged discussion during the workshop.

Identifying key domains for assessment

When preparing domains of assessment, we maintained the trend towards remote collaboration. We shared workshop outputs with the project team and other stakeholders as electronic documents rather than in meetings. Through email discussions, draft domains of assessment were created based on the emergent theory. This process required patience and flexibility from both the project team and the evaluators. Overall, it was less engaging than it might have been had it been facilitated in person, particularly where healthcare workers and leaders had to grapple with conflicting priorities prompted by the pandemic. We supported the project teams through the process by sending intermittent prompts and reminders. Further, in the LTC context, all projects were required to include indicators related to COVID-19. For example, the Culture Change evaluation pivoted to helping health leaders understand the impact of the pandemic on health workers’ team dynamics and to assessing their readiness for culture change as the outlook of the pandemic in LTC became more favourable with access to vaccines.

Collecting data

Challenges and adjustments necessary to facilitate data collection, especially in remote situations, are similar to those encountered in research, which have been extensively described (Dodds & Hess, 2021; Lobe et al., 2020; Santana et al., 2021; Vindrola-Padros et al., 2020). Here, we describe adjustments unique to our context. For example, while initial data collection on the NoW project largely followed conventional ethnographic methods, we involved the youth as peer evaluators in later stages of the evaluation. This approach aligned with the initiative’s principle of being youth-led and helped to mitigate COVID-19 risk. Specifically, youth were trained to conduct peer interviews amongst themselves, reducing travel requirements while provincial health orders still restricted non-essential travel across jurisdictions. Further, youth who were already part of each other’s ‘social bubbles’ carried out face-to-face interviews, reducing the number of additional contacts each person would have. Additional interviews were conducted virtually or by telephone, while surveys continued to be administered online. These processes had been tested over time and were implemented seamlessly across the projects. However, we acknowledge that carrying out DEs without the in-depth integration within the evaluation context required by most ethnographic approaches limits the depth and rigour of data collection, especially regarding visibility of non-verbal cues in the context (United States Agency for International Development, 2021). Further, collecting data through online methods alone may have introduced some selection bias, as not all evaluation participants had seamless access to, or the ability to use, online technologies for interviews and other data collection activities. In our contexts, we found that offering multiple options for off-site data collection, including telephone calls, was an effective mitigating strategy.

Making sense of the data and intervening

Finally, in making sense of the data and intervening, the evaluation team constantly assessed the evolving context. Given that all our DEs were of initiatives conceived before the pandemic, a key challenge was distinguishing between aspects of the initiatives core to their replicability and those relevant only to the COVID-19 context. We found it useful to encourage reflection on the reasons for actions and decisions in each project, which helped to determine whether responding to the COVID-19 pandemic was a motivation for specific actions and strategies. However, we acknowledge that some of the projects have determined that building resilience to infectious disease outbreaks may be a useful strategy moving forward. Further, we had to adjust our timelines for making sense of the data with stakeholders, frequently relying on emergent opportunities. Whenever possible, we continued to support real-time evidence generation by creating short reports and steering conversations at team meetings to provide internal feedback. In some instances, more substantial reports (e.g., end-of-year or end-of-iteration reports) were delayed to allow better engagement with the information, which we hypothesised could be achieved only through in-person meetings. For example, for the NoW project, we waited until it was safe to meet for in-person interactive sense-making. Our experience suggests in-person activities offer levels of engagement (especially for youth) not attainable with hybrid or virtual meetings.

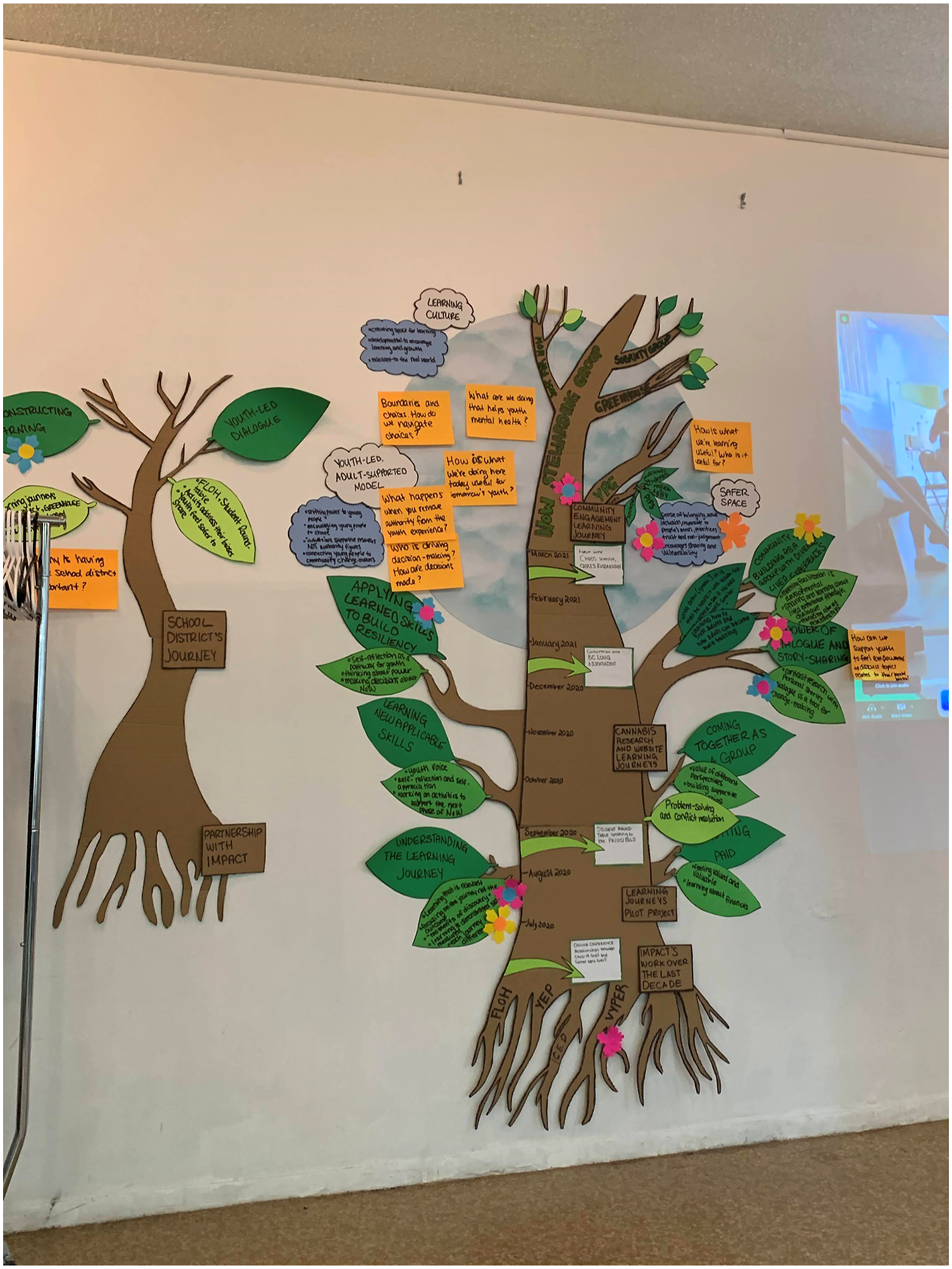

To share the final report, we created a visualisation of the findings that was reviewed at multiple participatory engagement sessions with the project team (Figure 3). Creating and sharing a physical visualisation of the report enabled deep reflection on the findings and increased engagement with content that might not have been accessible to young people or other participants in a long-form written format. The project team safely engaged with the visualisation by taking turns viewing the graphic and placing physical markers on items they felt were relevant and meaningful to them. This activity facilitated dialogue about the findings in subsequent sessions. Despite the success of the reflection, the decision to wait until restrictions loosened resulted in a month-and-a-half delay in getting feedback on the findings, followed by additional time to integrate that feedback into the report. This delay may have reduced the relevance of the final report’s content to the project team, as they were focused on new activities by the time the report was completed. The delays also affected the timeliness of the report for the funders. However, the funders were flexible with deliverables, timelines and project adaptations due to COVID-19.

Figure 3. Visualisation of report findings created for participatory engagement sessions.

Discussion

We described our interpretation of the DE life cycle and the adaptations made in the foundational and cyclical components of our DE projects’ life cycles in response to the COVID-19 pandemic. A common thread in our experiences was that we reoriented the DEs to remain relevant to stakeholders despite ever-shifting priorities and restrictions. Overall, we found DEs can support programs, initiatives and strategies to pivot their activities in response to the COVID-19 pandemic while sustaining momentum towards overarching goals (Gawaya et al., 2022; Patton, 2021). This article adds to the existing literature by providing in-depth descriptions of practical adaptations evaluators may consider when planning and implementing DEs during the COVID-19 pandemic and other crises. While other authors have described DE’s utility in this context and potential considerations, we focus on issues encountered while maintaining key aspects of DEs, including embeddedness, trust, engagement, data collection and utilisation focus (Thompson, 2021; United States Agency for International Development, 2021).

Like other evaluators (Erskine & Healey, 2022; Olson et al., 2021; Parker et al., 2021; UNODC Independent Evaluation Section, 2020), we learned that it is important for evaluators and their DEs to embrace flexibility. In all the projects, we experienced delays and complications that required a flexible response. Flexibility has been described as a hallmark of DE in responding to complexity, and we found this particularly applied to the roles we assumed and how they contributed to relationship building with stakeholders (Patton, 2011, 2021; United States Agency for International Development, 2021). We described the unconventional roles we undertook to make the evaluation relevant to stakeholders, including facilitating meetings and serving as information resources. These roles built trust with project teams, fostered embeddedness by ensuring our inclusion in team communications and created informal opportunities for communication that further supported our integration with the teams (United States Agency for International Development, 2021).

Flexibility also enabled us to explore creativity-enhancing opportunities for stakeholder engagement, including outdoor workshops and online whiteboards. These strategies were especially useful for inspiring engagement among the young people we worked with. While assuming unconventional roles as evaluators helped to ensure stakeholders' continued engagement in the DE, we were unable to attain the levels of embeddedness achieved in in-person DE projects. Specifically, we could not take part in many of the informal activities and processes that support relationship building and are characteristic of DEs, such as the impromptu discussions that occur when physically present with stakeholders (Patton, 2011). During the pandemic, other authors have recommended intentional communication to create informal interactions and safe spaces in which varied stakeholders can raise concerns, in a way that fosters embeddedness (United States Agency for International Development, 2021).

To further facilitate stakeholders' trust and engagement with the DE, it was crucial to leverage and apply our knowledge of the context. This aligns with maintaining a systems view and positioning the DE not only to evaluate but also to concurrently help achieve the overarching goals of the project being evaluated (Parker et al., 2021; Patton, 2021). For example, by adapting data collection to be led by the youth served by the project, we achieved our data collection goal while limiting contact and the potential spread of COVID-19. This approach aligned with the program's overarching principle of being youth-led, built evaluation capacity in the project team and demonstrated DE's value to stakeholders (Patton, 2021). Similarly, demonstrating our knowledge of the context and drawing on data collected before the pandemic provided further insight into issues encountered during the pandemic, demonstrated DE's value to the LTC Culture Change program and bolstered stakeholders' trust.

In collecting data on the evolving and unpredictable context, it was useful to lean on our strengths. Many of our adaptations supported stakeholders to make sense of their new reality and reorient their initiatives to the pandemic. For example, we used our connections with experts in the field to help LTC organisations understand how the pandemic was affecting health workers' mental health, which supported decision-making and priority-setting. Drawing on our experience with knowledge translation, we also contributed communication materials and visualisations that helped stakeholders engage with and share the evolving goals of their project. Other evaluators have similarly suggested that time and cost savings on existing evaluations during the pandemic freed up resources to draw on other strengths and capacities to demonstrate value, including supporting communications and creating infographics (Thompson, 2021).

Lastly, it was important to purposefully reflect and share knowledge. Other evaluators have described this in relation to reflections with project teams (Parker et al., 2021). We found that knowledge-sharing amongst evaluators helped us understand more deeply which aspects of our evaluation methods worked and which required adaptation. For instance, our team reflections enabled us to make further use of digital technologies that helped to foster collaboration among project teams. Continuous learning applies not only to the project but also to the evaluation process (Parker et al., 2021; Patton, 2021; United States Agency for International Development, 2019). We must note, however, that digital technologies may have unintended consequences. For example, hybrid meetings often resulted in asymmetrical discussions, limiting the ability of evaluators who joined online to contribute meaningfully to discussions among those who could meet in person. Such asymmetric discussions have been described as a challenge to overcome in our new hybrid reality (Saatçi et al., 2019).

Conclusion

DEs can be valuable in supporting programs, initiatives and strategies, especially in uncertain times such as the COVID-19 pandemic. Despite challenges to the foundational and cyclical aspects of DE, practical adaptations can be made that ensure DEs continue to contribute towards the overarching goals of the projects. Specifically, we added value by embracing flexibility, helping project teams make sense of changing contexts, leaning on our strengths and reflecting on our shared learnings. We hope these insights support other developmental evaluators to continue adapting and innovating during the pandemic and in other rapidly evolving contexts.

Acknowledgements

Our team gratefully acknowledges the support of Providence Living and St. Paul's Foundation for funding our evaluation work, as well as the Centre for Health Evaluation and Outcome Sciences (CHÉOS) for their ongoing support of our team.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.