Abstract

Children’s early experiences and environments profoundly impact their development; therefore, ensuring the well-being of children through effective supports and services is critical. Evaluation is a tool that can be used to understand the effectiveness of early childhood development (ECD) practices, programs, and policies. A deeper understanding of the evaluation landscape in the ECD field is needed at this time. The purpose of this scoping review was to explore the state of evaluation in the ECD field across four constructs: community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence. A comprehensive search of seven electronic databases, including Canadian and international literature published in English from 2000 to 2020, was conducted. A total of 30 articles met the inclusion criteria. Findings demonstrate that some studies include aspects of a community-engaged approach to evaluation; however, comprehensive approaches to community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use in the field of ECD are not commonly achieved. This review will inform strategies for bridging evaluation gaps in the ECD sector, ultimately equipping organisations with the evaluative tools to improve practices, programs, and policies that impact the children, families, and communities they serve.

Introduction

The early years constitute a critical period of development, with substantial effects on children’s long-term health and social outcomes (National Scientific Council on the Developing Child, 2020). Consequently, ensuring the well-being of children through effective early supports and services is critical. Evaluation is an integral part of this work, as it is a systematic approach for collecting and using information to understand the effectiveness of practices, programs, and policies (Nielsen et al., 2018). Yet, historically, evaluations in the context of early childhood development (ECD) programs have reflected a top-down approach, where the funder dictates the evaluative process (Bledsoe, 2014), failing to consider the context and histories of the communities they are working with (Bremner & Bowman-Farrell, 2020). A shift from evaluation-as-judgment to evaluation-as-learning is, therefore, necessary to transform evaluation into a tool for improving programs and developing ways to better serve children and families (Tribal Evaluation Workgroup, 2013). Empirical studies show that collaborative and participatory evaluation approaches increase evaluation use, promote capacity building at both individual and organisational levels (Acree, 2019), and encourage evaluation buy-in (Odera, 2021). There is a need to transform current evaluation approaches and methods, and foster practices that reflect the plurality of cultures and are actionable at the community level. By utilising community-driven and culturally responsive evaluation approaches, it is possible to design and improve early childhood practices, programs, and policies so that they better reflect community interests.

The Evaluation Capacity Network (ECN) at the University of Alberta formed in 2014 in response to the evaluation capacity needs of the early childhood sector within Alberta (Gokiert et al., 2017a) and has since broadened nationally and internationally in scope. As an interdisciplinary and intersectoral partnership, the ECN seeks to build evaluation capacity in the ECD field through engagement with early childhood stakeholders and a broader network of practitioners, researchers, funders, and evaluators. Through comprehensive community engagement consultations and surveys, the ECN identified gaps in our understanding of four evaluation constructs: community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence.

Definitions of evaluation constructs

As there are no global definitions of the four aforementioned constructs, for the purposes of this review, we interpreted them as follows. Community-driven evaluation is grounded in the active role of relevant stakeholders in shaping the research process (Janzen et al., 2017). There are various approaches to community-driven evaluation, including participatory evaluation (Chouinard & Milley, 2018), collaborative evaluation (Acree, 2019), empowerment evaluation (Fetterman, 2019), and utilisation-focused evaluation (Patton, 2012). Each of these approaches is characterised by the level of stakeholder participation, with “involvement” at one end, different degrees of collaboration in the middle, and empowerment at the other end (International Association for Public Participation, n.d.). Common to all of these approaches is the desire to meaningfully engage stakeholders in evaluation processes and to ensure that findings are useful and actionable for those involved (O’Sullivan, 2012). Stakeholders can be defined as individuals, groups, or organisations who are invested in the evaluation and its findings. Typically, they include recipients of program services, as well as staff and funders (Brandon & Fukunaga, 2014).

Culturally responsive evaluation is commonly defined as a “systematic, responsive inquiry that is actively cognisant, understanding, and appreciative of the cultural context in which the evaluation takes place” (SenGupta et al., 2004, p. 13). Culture is an integral aspect of evaluation as it informs not only the contexts in which programs are implemented, but also the methods undertaken by researchers. Here, culture refers to the “shared norms and underlying belief system of a group as … guided by its values, rituals, practices, [and] language” (Frey, 2018, p. 2). This approach aims to improve the conditions of individuals belonging to marginalised groups by conducting valid and ethical evaluations that bolster the validity and utilisation of findings (Clarke et al., 2021).

The most frequently cited definition of evaluation capacity building (ECB) is attributed to Stockdill et al. (2002), who describe it as “the intentional work to continuously create and sustain overall organisational processes that make quality evaluation and its uses routine” (p. 14). Thus, the goal of ECB is the production of high-quality program evaluations (Cousins et al., 2014). Enhancing evaluative capacity is also crucial in supporting the production of meaningful and actionable evaluations that meet external accountability requirements (Al Hudib & Cousins, 2021).

Evaluation use refers to the way “real people in the real world apply evaluation findings and experience” (Patton, 2008, p. 27), while evaluation influence is defined as the “consequences of evaluation that are indirect and unintentional” (Mark, 2017, p. 30). In other words, evaluation use is concerned with an organisation’s ability to understand findings, share them with relevant stakeholders, and integrate them into day-to-day operations. Given the time-consuming and costly nature of evaluation, it is important to explore how evaluation data can be better utilised (Peck & Gorzalski, 2009).

Study context and purpose

To investigate how evaluation can become a community-driven and culturally responsive mechanism for improving practices, programs, and policies in the ECD field, research teams at post-secondary institutions in Canada and the U.S. (Queen’s University, Lakehead University, University of Alberta, and Claremont Graduate University) formed a working group to conduct a series of five complementary scoping reviews. This group consisted of academics and student trainees with expertise in the fields of early childhood, evaluation, and community-based research. Four scoping reviews were dedicated to exploring each of the four aforementioned constructs within the broader context of the social sector and evaluation literature. A scoping review is a methodological process of systematically mapping literature to synthesise research evidence on a specific topic in order to identify gaps in the literature and inform strategies and decision-making (Arksey & O’Malley, 2005). The purpose of this review was to examine these four constructs specifically in the context of early childhood development and to address the following research questions: (1) What are the various approaches and underlying principles to community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence within the ECD context, as described in the literature? (2) What are the promising practices and identified challenges across these constructs, and their implications for evaluation capacity building efforts in the ECD field?

Methods

This scoping review was guided by the methodological framework developed by Arksey and O’Malley (2005), with enhancements as recommended by Levac et al. (2010), and reported in adherence to the PRISMA-ScR (Tricco et al., 2018). The team consisted of two graduate students, a postdoctoral fellow, a professor, and a project coordinator. A protocol containing the search strategy was established during the beginning stages of the study, and iteratively refined in collaboration with the larger working group. The review was conducted from May 2020 to February 2021, with the most recent search executed in November 2020. Preliminary findings were presented to a large stakeholder group over a 2-day strategic planning session in October 2020, to receive feedback on the findings and their interpretation and to generate strategies for mobilising the knowledge to the broader partnership.

Information sources

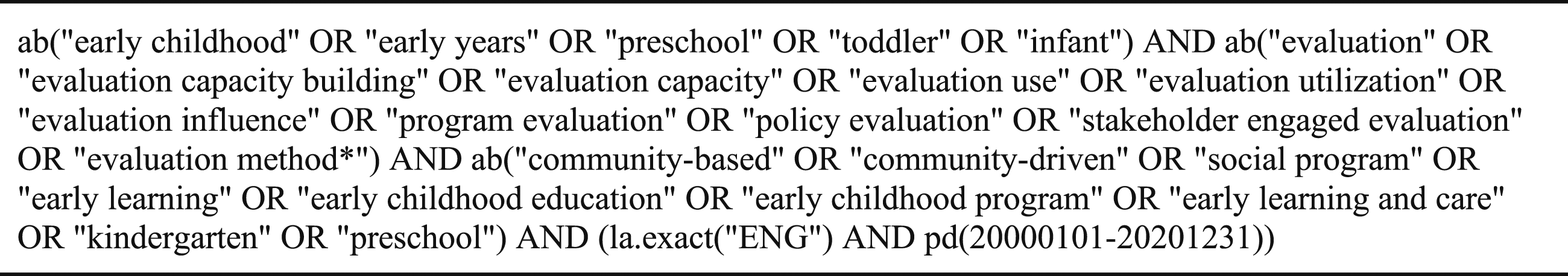

A robust search strategy was collaboratively developed by two members of the team (GP and SJ) and later executed by one reviewer (GP). A total of seven relevant electronic databases were searched: Academic Search Complete; Education Research Complete; PsycINFO; CBCA; ERIC; Education Database; and ProQuest Dissertations. We also manually searched the following nine evaluation journals: the American Journal of Evaluation; the Canadian Journal of Program Evaluation; New Directions for Evaluation; Evaluation; Evaluation and Program Planning; the Evaluation Journal of Australasia; Evaluation and the Health Professions; Journal of Multidisciplinary Evaluation; and Evaluation Matters. The other scoping review teams exclusively searched the evaluation journals, while our search expanded beyond these to capture as many relevant articles as possible. Literature published by partners of the ECN was also examined; these articles were subject to the inclusion and exclusion criteria reported below. While the other teams drew on the evaluation literature more broadly, this review focuses solely on evaluations conducted within the field of ECD. Only peer-reviewed studies discussing at least one of the four constructs were deemed eligible for inclusion. Figure 1 shows the keywords that were used to search the identified databases.

Search strategy.

Selection of sources of evidence

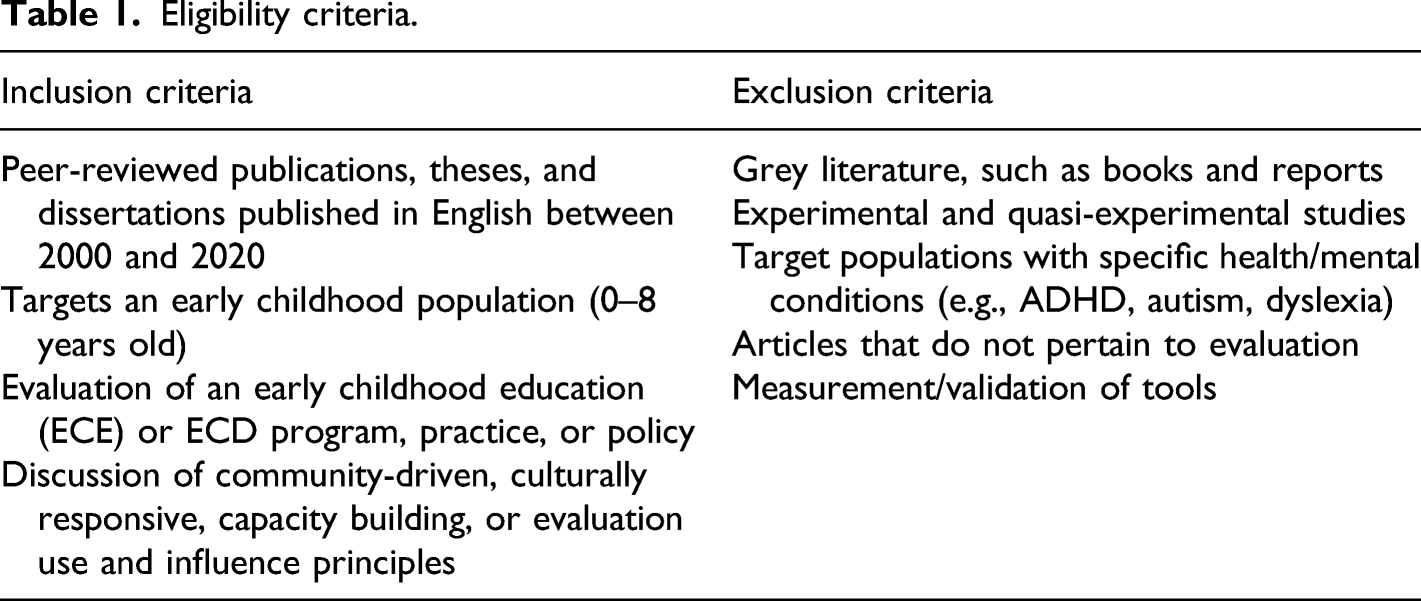

Eligibility criteria.

PRISMA flow diagram.

The most common reason for study exclusion was study purpose; many studies were experimental or quasi-experimental (Frey, 2018) and, consequently, did not meet our inclusion criteria. We excluded these studies as they focused on measuring causal impacts or the effectiveness of programs and interventions, without a clear evaluation purpose, process, or evaluative conclusions.

Charting and synthesis of data

The same independent reviewers (GP and MT) charted and entered data into a standardised Excel spreadsheet created by the team. The data charting form was revised throughout the extraction process to chart data relevant to our research questions. Study characteristics (i.e., authors, publication year, setting, methods, and population) were captured, and thematic analysis was conducted for the constructs of community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence.

Results

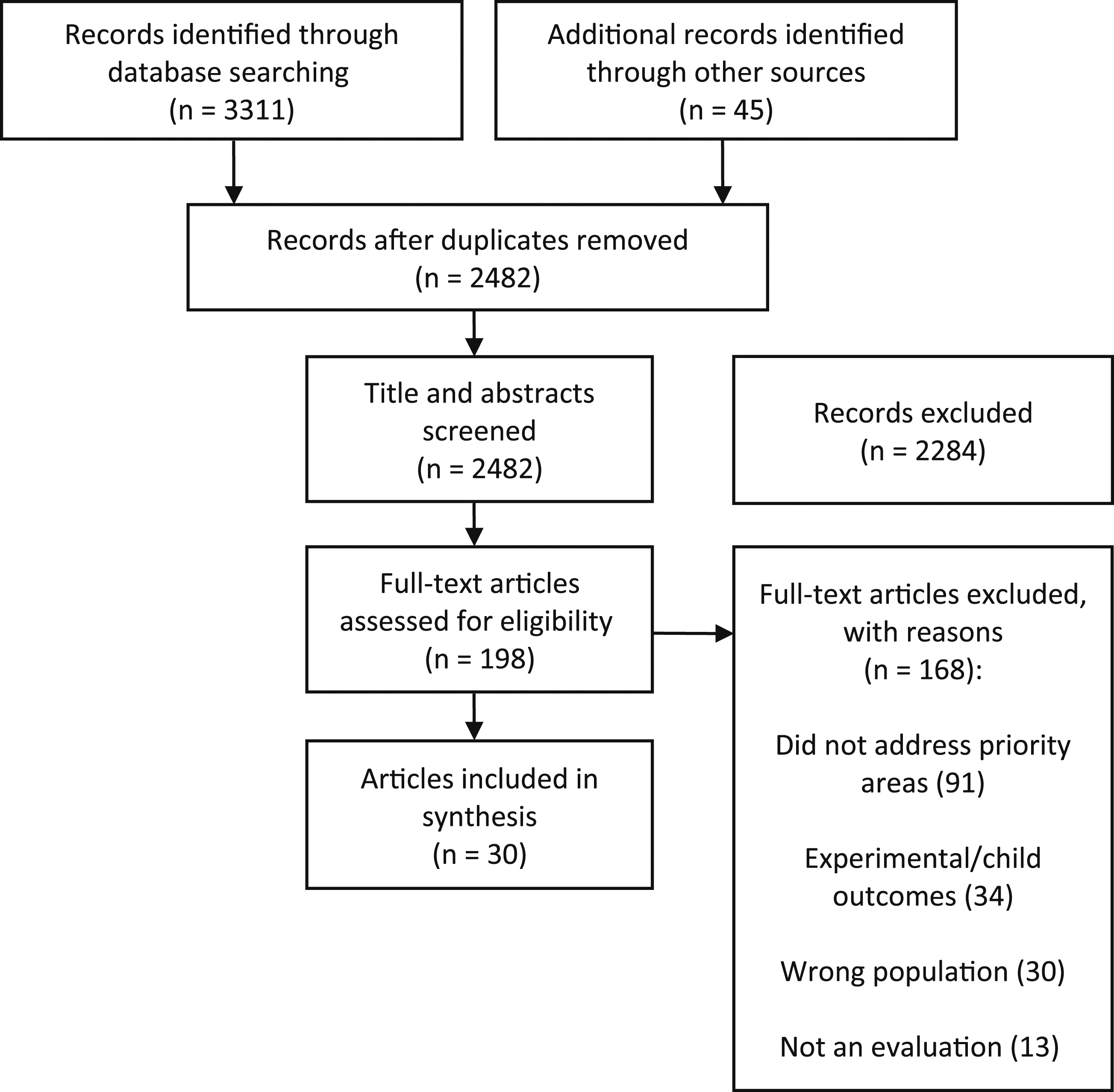

The database searches identified a total of 3,311 articles, and an additional 45 were identified through manual searches. After title, abstract, and full-text screening, 30 articles were included in the review. Figure 2 shows the PRISMA flow diagram, which maps out the number of studies identified, included and excluded, as well as the reasons for exclusion.

Results of individual sources of evidence

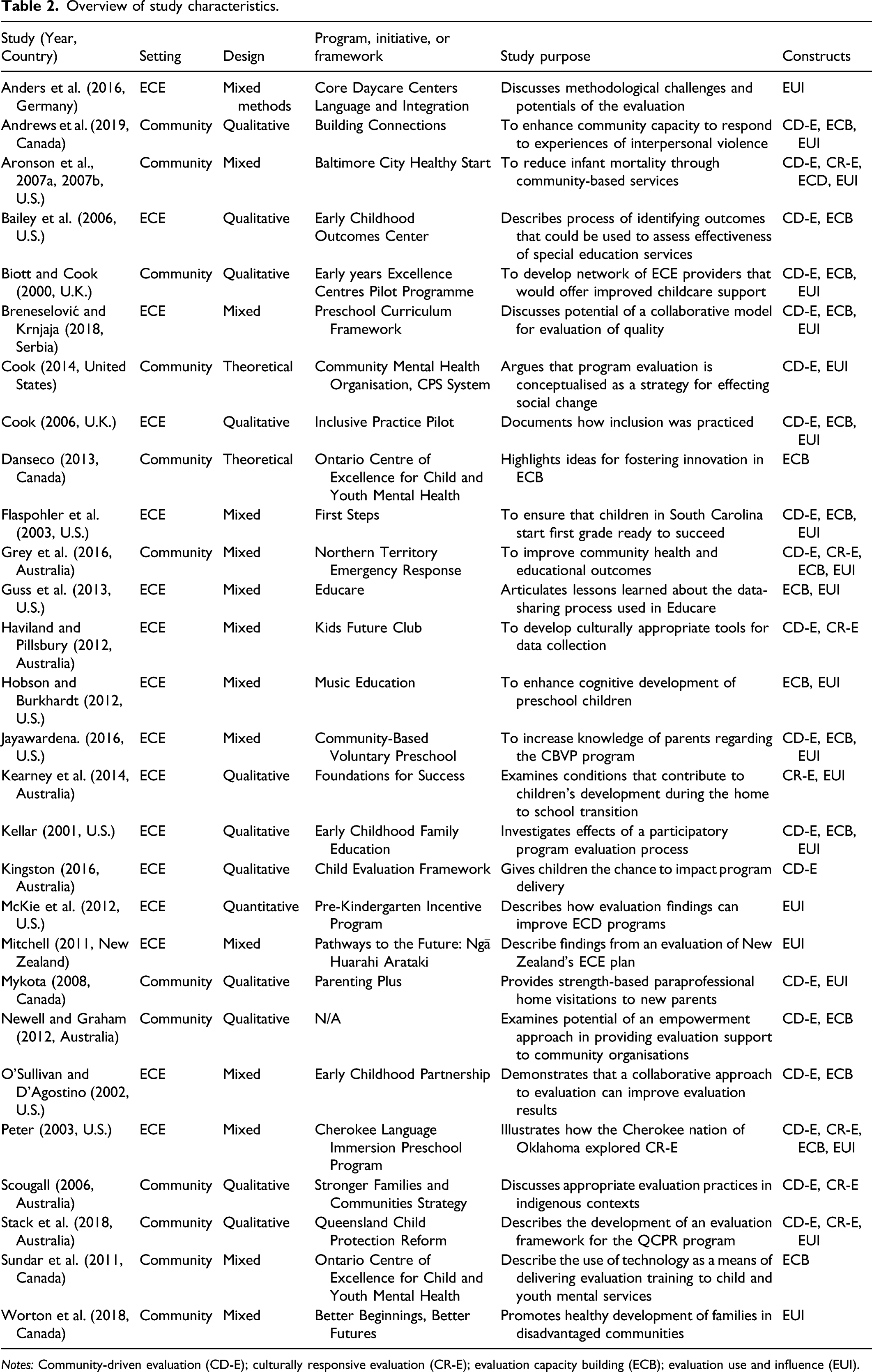

Overview of study characteristics.

Notes: Community-driven evaluation (CD-E); culturally responsive evaluation (CR-E); evaluation capacity building (ECB); evaluation use and influence (EUI).

Characteristics of sources of evidence

Synthesis of results

A thematic synthesis was conducted across the four constructs: community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence. These four constructs are not mutually exclusive and there is considerable overlap in practices and principles. Within this thematic analysis, we refer to the constructs as previously defined, as definitions are inconsistent in the ECD literature.

Community-driven evaluation

More than half of the included studies (n = 18) described using specific approaches to community-based evaluation. Community-based approaches to evaluation used in these studies included participatory evaluation (n = 6), empowerment evaluation (n = 4), collaborative evaluation (n = 3), developmental evaluation (n = 2), utilisation-focused evaluation (n = 2), and democratic evaluation processes (n = 1). Empowerment evaluation aims to provide communities with the knowledge and tools they require to conduct their own evaluations, while a collaborative approach emphasises collaboration between evaluators and stakeholders, resulting in more relevant designs, data collection methods, and findings (Frey, 2018). Similarly, the goal of participatory evaluation is to engage stakeholders in all aspects of an evaluation, including its development, implementation, and analysis, and to ensure that findings are meaningful and actionable (Brewington & Hall, 2018). According to the included studies, factors affecting the uptake of community-driven evaluation methods include time constraints, lack of resources, and skepticism regarding the value of evaluation.
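As a quick consistency check on the counts reported above, the tallies can be summed and expressed as a share of the 30 included articles. The following is a minimal Python sketch and is not part of the original review; the variable names are illustrative, and it assumes that each study was counted under exactly one approach, which is consistent with the reported total of 18.

```python
# Counts of community-based evaluation approaches, as reported in the text.
# Assumption: each study is counted under exactly one approach.
approach_counts = {
    "participatory": 6,
    "empowerment": 4,
    "collaborative": 3,
    "developmental": 2,
    "utilisation-focused": 2,
    "democratic": 1,
}
TOTAL_INCLUDED = 30  # articles included in the review

# Sum the per-approach counts and compare with the number of included articles.
studies_with_approach = sum(approach_counts.values())
share_of_review = studies_with_approach / TOTAL_INCLUDED

print(f"Studies naming an approach: {studies_with_approach}")
print(f"Share of included articles: {share_of_review:.0%}")

# List the approaches from most to least frequently reported.
for name, n in sorted(approach_counts.items(), key=lambda kv: -kv[1]):
    print(f"  {name}: {n}")
```

Under that assumption, the six counts sum to 18, or 60% of the included articles, which agrees with the statement that more than half of the studies described a specific approach.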

Ten of the studies incorporated working groups or stakeholder meetings, with select individuals representing a particular segment of the community (Aronson et al., 2007a, 2007b; Bailey et al., 2006; Biott & Cook, 2000; Jayawardena, 2016; Kellar, 2001; Kingston, 2016; Mykota, 2008; O’Sullivan & D’Agostino, 2002; Peter, 2003; Stack et al., 2018). Stakeholders were responsible for designing, implementing, and analysing evaluation plans, as well as developing data collection instruments and deliberating on results. In other words, these groups were used to leverage community experience and knowledge to contribute to decision-making. Seven studies did not include stakeholder committees but were characterised by collaboration between stakeholders and research staff to design an evaluation that was applicable and relevant to their specific context (Andrews et al., 2019; Cook, 2006; Flaspohler et al., 2003; Grey et al., 2016; Kearney et al., 2014; Newell & Graham, 2012; Scougall, 2006). These studies tried to include stakeholder insights by way of consultation and collaboration in the development of the evaluation. Specifically, two studies combined the experiences and skills of local residents with the expertise of professional evaluators (Aronson et al., 2007a, 2007b; Scougall, 2006). In Central Australia, this is known as the “malparrara” approach in which local Indigenous workers with contextual knowledge are paired with non-Indigenous professionals (Scougall, 2006, p. 51). This practice ensures that the research being conducted is both valid and meaningful.

Culturally responsive evaluation

Nine of the included studies had a cultural component; of these, six studies used a community-driven approach to enhance the cultural relevance and appropriateness of the evaluation (Aronson et al., 2007a, 2007b; Grey et al., 2016; Haviland & Pillsbury, 2012; Peter, 2003; Scougall, 2006; Stack et al., 2018). These studies engaged members of the target community during the early stages of the evaluation to design an evaluation plan that reflects community values and considers the culture of the community involved.

Five of these studies were situated within Indigenous communities (Grey et al., 2016; Haviland & Pillsbury, 2012; Kearney et al., 2014; Peter, 2003; Scougall, 2006). These studies made genuine efforts to include the community at nearly every stage of the evaluation process and were characterised by attempts to gain community consent, provide training to local researchers, and allow stakeholders the final word on all evaluation decisions (Grey et al., 2016; Scougall, 2006). It should be noted that only one of the 30 articles provided a definition of culturally responsive evaluation, stating that it is “respectful of the dignity, integrity, and privacy of the stakeholders in that it allows for their full participation” (Peter et al., 2003, p. 2). In this study, Cherokee peoples had complete control over the evaluation process. The Immersion Team, composed of the Deputy Chief of the Cherokee Nation, staff from the Cultural Resource Centre, preschool teachers, and advisors from Northern Arizona University, had a direct impact on the study and whether it “conformed to Cherokee values” (Peter, 2003, p. 101).

While culturally responsive evaluation tends to employ community-driven practices, it does not follow that community-driven evaluation is also culturally responsive.

Evaluation capacity building

Some studies used direct approaches to capacity building (e.g., coaching or mentoring), while others relied on indirect approaches (e.g., participating in a collaborative evaluation process). Still others used a combination of direct and indirect methods, such as providing training in research and evaluation methods and inviting stakeholders to participate in the evaluation. Fourteen studies reported transferring knowledge and tools directly to the community by educating stakeholders (primarily community residents and program staff) in the principles of evaluation while also providing training in basic research techniques (e.g., facilitating focus groups, collecting data). Of these, 29% (n = 4) engaged community members in data collection activities, such as conducting interviews and administering surveys, while the remainder (n = 10) coached stakeholders in the purposes of evaluation to enhance organisational capacity. Only three studies used indirect approaches to building evaluation capacity (Breneselović & Krnjaja, 2018; Jayawardena, 2016; Peter, 2003), while four others used a combination of these methods (Grey et al., 2016; Kellar, 2001; Newell & Graham, 2012; O’Sullivan & D’Agostino, 2002).
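The percentage reported for the direct approaches can be reproduced from the counts in the paragraph above. The following is a small illustrative sketch in Python; the helper name `percent_of` is hypothetical and is not drawn from the review.

```python
def percent_of(part: int, whole: int) -> int:
    """Return part/whole as a percentage rounded to the nearest integer."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return round(100 * part / whole)


# Counts taken from the text: 14 studies used direct approaches,
# 4 of which engaged community members in data collection.
direct_total = 14
engaged_in_data_collection = 4
coached_on_evaluation_purposes = direct_total - engaged_in_data_collection

# Share of direct-approach studies in each subgroup.
print(percent_of(engaged_in_data_collection, direct_total))
print(percent_of(coached_on_evaluation_purposes, direct_total))
```

Four of 14 rounds to 29%, matching the figure in the text, and the remaining 10 studies account for the other 71%.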

Both Sundar et al. (2011) and Danseco (2013) touched on the capacity building efforts of the Ontario Centre of Excellence for Child and Youth Mental Health. While the former advocated for the use of interactive technology as a means of delivering evaluation training, the latter described a few key ideas intended to foster innovation in evaluation capacity building. These articles highlight the importance of transferring evaluation knowledge to clients and describe ways that they have been able to enhance the resources and support they provide. For instance, the Centre uses a blended learning design to facilitate professional development opportunities; this includes in-person consultations coupled with online learning resources, such as webinars, to accommodate individuals living in rural communities (Sundar et al., 2011).

Evaluation use and influence

Across nine studies, evaluation findings were used to inform future programming (Andrews et al., 2019; Aronson et al., 2007a, 2007b; Cook, 2006; Flaspohler et al., 2003; Hobson & Burkhardt, 2012; Kellar, 2001; McKie et al., 2012; Peter, 2003; Sundar et al., 2011). Others assert that feedback from evaluators can assist early childhood practitioners with changing their classroom practices or support them in better understanding effective “strategies for improving student outcomes” (Flaspohler et al., 2003; McKie et al., 2012, p. 58). Secondary and unintended outcomes of conducting an evaluation include the development of a training program for early childhood staff working with children with special needs, as well as the recognition that fundamental program changes were needed to address quality issues (Cook, 2006; Kellar, 2001). Evaluation results were commonly disseminated through reports or stakeholder meetings (n = 5), although three studies described using findings to develop marketing tools, such as pamphlets or toolkits (Jayawardena, 2016; Newell & Graham, 2012; Worton et al., 2018). To ensure that evaluation findings would be useful to all stakeholders involved, two studies developed different evaluation reports for different audiences (Grey et al., 2016; Guss et al., 2013). Another innovative way of providing feedback to program staff was through report card meetings in which evaluators shared recommendations and strategies intended to improve classroom quality (McKie et al., 2012).

Jayawardena’s (2016) study relied on utilisation-focused evaluation (Patton, 2012), an approach which ensures that evaluation findings are useful to the community in question and can therefore be fully utilised. Similarly, Guss et al. (2013) discuss the use of feedback loops among evaluators and stakeholders to promote the utilisation of data. In this case, the evaluators’ responsibilities included coaching Educare staff in working with and applying data gathered from classroom observations, thus supporting the uptake of evidence-based practices.

Discussion

The goal of this scoping review was to explore community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence in the field of ECD. In doing so, we hoped to identify promising practices within the sector to inform our capacity building efforts. This section is organised according to the four constructs described above. As previously mentioned, these constructs are not mutually exclusive and there is considerable overlap in practices and principles.

Summary of evidence

Community-driven evaluation

In efforts to use a community-driven approach, stakeholder engagement in the ECD sector was commonly observed during the early stages of evaluation where insights and experiences informed the evaluation design. However, while stakeholders were commonly involved in data collection activities (e.g., facilitating discussion groups or conducting surveys), it was less common for stakeholder engagement to be sustained to the later stages of the evaluation process, such as knowledge mobilisation or application of the evaluation findings. Most of the studies that embraced community-based approaches to evaluation used participatory methods in which evaluators and participants shared joint control of the evaluation. Only four studies mentioned employing empowerment evaluation, an approach that shifts control to program staff and participants (Fetterman, 2019). Whereas the former emphasises shared control of the evaluation, the latter approach sees evaluators take on a facilitator role to coach community and staff members through the evaluation process. Springett (2017) notes that “simple participation does not ensure a truly democratic process”, and that deliberation and dialogue are of utmost importance in participatory evaluations (p. 565). These components deliberately empower marginalised voices and assure the development of credible findings (Abma et al., 2017; Chouinard & Milley, 2018). While several studies actively engaged stakeholders as members of the evaluation team, many others did not. Based on these findings, it appears that community-driven evaluation approaches in which local members and stakeholders have full power over the evaluation process are not frequently utilised in the ECD sector. In fact, the literature indicates that community-shaped and community-informed approaches, in which funders are responsible for community outcomes and solutions may be co-designed, are used more often (Attygalle, 2020).

Further, the range of individuals defined as stakeholders differed across studies. Stakeholder representation sometimes included a broad scope of invested individuals (e.g., service providers, funders, parents), and in other cases was limited to program staff or direct program users. The lack of a common definition and a consistent approach to stakeholder engagement in community-driven evaluation may lead to uncertainties in understanding what constitutes stakeholder engagement. In cases where not all stakeholder groups are meaningfully engaged, some relevant voices, such as those of children and parents, are excluded. While most studies engaged program staff or community residents in the evaluation process, Kingston (2016) developed an evaluation framework to be used with four- to six-year-old children. She adopted a deliberative democratic evaluation approach in conjunction with a mosaic framework, which combines traditional methods of data collection with participatory techniques suitable for younger audiences (e.g., drawing, journaling). According to Purdue and colleagues (2018), involving children and youth in evaluations can strengthen the relevance and legitimacy of programs, as their experiences often differ from those of adults. As a result, this approach rectifies power imbalances between children and adults and ensures that children’s voices are not only heard, but taken into consideration. However, few studies tried to engage children in the research process; this suggests that researchers, evaluators, and practitioners may be unaware of the benefits of this approach or may not have the necessary training or resources to implement it.

There is a clear gap in the peer-reviewed evidence, as the majority of this work is likely occurring in non-academic spaces. Evaluators and practitioners working in the ECD field can therefore draw from resources in the gray literature to inform their practices. Derrick-Mills (2021) touches on various examples from early care and education studies to show how stakeholders were engaged in research. The brief provides several pertinent tips, including: regularly meeting with stakeholders to build trust and better understand their perspectives; establishing local advisory committees to support researchers in understanding the local culture; hosting regular stakeholder meetings or brainstorming sessions to guide the research process; drawing on qualitative methods of data collection, such as interviews or focus groups, to allow for in-depth conversations with relevant stakeholders; and importantly, supporting fair and respectful participation by being transparent about the goals and purposes of the research. Additionally, many nonprofit and government agencies produce reports that share strategies for quality improvement in early childhood services (e.g., Education Review Office, 2021; Institute of Education Sciences, 2013), which may prove valuable for those working in the sector.

Culturally responsive evaluation

Most of the articles featuring culturally responsive evaluation approaches came from work undertaken in Indigenous communities. Culturally responsive evaluations usually focused on the local context in which the evaluation was being conducted, rather than generalised understandings of culture. As a result, culturally responsive evaluation uses a bottom-up approach with a greater degree of shared power between community members and researchers. This ensures that the evaluation is appropriate and useful, and that community residents are given the opportunity to strengthen their evaluation skills. However, for evaluation to be useful to the field of early childhood, researchers must critique the role that evaluation has historically played in the marginalisation of specific communities (Ball & Janyst, 2008; Chouinard, 2016; Drawson et al., 2017; Gokiert et al., 2017b). For instance, traditional worldviews have been subjugated by Western ways of knowing, and evaluation has been used as a tool against certain communities. The early childhood field therefore requires evaluation approaches that are tailored to varied cultural contexts, including honoring cultural values that inform child well-being (Kirova & Hennig, 2013; Tribal Evaluation Workgroup, 2013).

Although several promising practices were identified, few studies described using a culturally responsive process in detail, and culture was ill-defined in most of the studies. Across the review, different terms were used to describe evaluations that account for culture, including culturally responsive and culturally sensitive. A single, universally accepted term and definition of culturally responsive evaluation would establish a common foundation that could be more easily applied in practice. These findings indicate that conducting an evaluation based on a community’s knowledge and cultural context is not widely practiced in the ECD sector; this is particularly problematic given the ethnocultural diversity of countries like New Zealand, Australia, Canada and the United States. It is critical for evaluators to honor the cultural context in which the evaluation is being conducted to avoid creating superficial understandings of how people experience and make sense of the world. Chandna et al. (2019) suggest working closely with the partner community to determine ways to overcome such barriers. An important part of this engagement process relates to relationship building between stakeholders and evaluators. Collaboratively determining priorities is crucial to ensuring that the evaluation respects local values (Tribal Evaluation Workgroup, 2013). Ultimately, increasing researchers’ and early childhood practitioners’ knowledge and awareness of culturally responsive evaluation practices will support the development of contextually appropriate programs, thereby ensuring that marginalised communities receive meaningful supports.

Commonly used research frameworks, such as participatory action research (PAR) and community-based participatory research (CBPR), can also be applied to evaluation. Both approaches emphasise stakeholder engagement and are committed to addressing issues of power, equity, and justice (Baum et al., 2006; Tremblay et al., 2018). Collier et al. (2018) drew on the principles of CBPR to strengthen the development and evaluation of an obesity reduction program in Palau. An advisory council of local stakeholders, including health workers, counselors, and educators, was established in order to ensure the cultural relevance of the research. The authors add that developing a culturally responsive program requires commitment from all partners; an understanding of cultural differences; and onsite researchers to allow for effective collaboration with stakeholders. To this end, Collier et al. (2018) suggest building intercultural research teams to rapidly and sensitively address potential barriers. Employing PAR or CBPR principles has the potential to enhance the quality and relevance of an evaluation, ultimately facilitating the use of research findings.

Evaluation capacity building

Building evaluation capacity can develop the knowledge, skills, and motivation necessary to foster desired organisational changes that result in the regular production of informative evaluation insights (Bourgeois & Cousins, 2013; Bourgeois et al., 2016). In building evaluation capacity, it is crucial not only to consider how to produce evaluative insights, but also how the field of ECD will use the information. Thus, evaluation is not an end in itself but rather a process intended to produce meaningful evidence and turn it into action (Patton, 2012). Multiple studies showcased efforts to build the evaluative capacity of organisations and communities. Attempts to enhance evaluation knowledge and skills were said to improve stakeholders’ understanding of research methods and assist communities in meeting funding requirements, with the ultimate goal of promoting sustainable evaluation practice. However, direct approaches to building evaluation capacity (e.g., coaching, mentoring) appeared to be more common than indirect approaches (e.g., participating in a collaborative evaluation); this may be due to a lack of time and resources, or perhaps the difficulty of carrying out such evaluations. The depth of participation, lack of evaluation knowledge among participants, and power inequities between stakeholders and evaluators are cited as common challenges to successful collaborations (Chouinard & Milley, 2018). Thus, when conducting stakeholder-engaged work, it is important that researchers allocate sufficient time to adequately address these factors.

Further, the capacities that are built should foster evaluative practices that are community-driven and culturally responsive (Cram et al., 2018). These stakeholder-engaged approaches to evaluation support capacity building by advocating for the active participation of community members throughout all stages of an evaluation (Askew et al., 2012). Consequently, the linkage between ECB and collaborative evaluation approaches “derives from their shared interest in democratising and decentralising evaluation practice” (Hargraves et al., 2021, p. 99). This means that evaluative knowledge, skills, and attitudes are made accessible to all. Given the valuable insights and lived experiences of stakeholders, it is critical that evaluators engage them as equal partners. This is a crucial component of facilitating evaluation utilisation, as it ensures that findings are credible and also meaningful to those most impacted by the process (Askew et al., 2012). Moretti (2021) notes that purposeful conversations, coaching, and mentoring can be used to highlight the existing capacities of stakeholders so that they can more easily embrace the process and begin to view themselves as “natural evaluators” (p. 13). She adds that establishing a collaborative team environment and linking evaluation findings to positive outcomes for children can further motivate educators to undertake evaluation. By attending to these principles, evaluators can empower program staff, early childhood practitioners, and other stakeholders to conduct quality evaluations that provide proof of impact and can in turn help secure the funding and resources necessary to effect positive change in the ECD field.

Evaluation use and influence

Through the ECN’s engagement processes, we have learned that organisations in the early childhood field experience overwhelming pressure to collect data that demonstrate program success. However, many professionals lack the capacity to gather and use this information effectively, leading to the collection of data that is often not meaningful or actionable at the community level (Ontario Nonprofit Network, 2018). There is an urgent need to build individual and organisational capacity to ensure that evaluative insights are implemented in an appropriate and timely manner. Across the identified studies, evaluation findings were typically used to assess the programs’ impacts on the community, convey evidence of the impact of initiatives, and improve future efforts. Several studies described tangible ways that evaluation findings would be utilised, suggesting that researchers and evaluators are actively sharing results with stakeholders and other interested parties. While this is a promising trend, a gap in the literature relates to support with the mobilisation of findings. Without proper training in basic research and evaluation methods, early childhood organisations will continue to generate large amounts of uninformative data. Therefore, when building evaluation capacity, it is important to consider how ECD practitioners and other key stakeholders will use the resulting data. This complementary relationship between ECB and evaluation use has been highlighted across different studies (e.g., Cousins et al., 2014; Lopez, 2018; Olejniczak, 2017) but is most notably demonstrated in Patton’s (2012) utilisation-focused evaluation framework, which touches on the importance of training evaluation users in order to support evidence-based practices and decision-making. Consequently, evaluation capacity building activities should focus on developing the capacity to do evaluation, as well as use it (Cousins et al., 2014).

Recommendations

The findings from this scoping review indicate that there are three things that evaluators and practitioners working in the field of ECD should consider when carrying out community-based work:

1. Engaging stakeholders in intensive dialogue and encouraging their participation through direct or indirect approaches is crucial to conducting efficient, meaningful, and action-oriented evaluations that have the potential to effect change. This can be accomplished by collaboratively determining priorities, involving stakeholders in data collection, analysis, and knowledge translation, and having candid conversations about the purpose of the evaluation, ensuring that community members have opportunities to ask questions and provide feedback throughout the entire process (Tribal Evaluation Workgroup, 2013). The development of evaluation practices that are empowering, sustainable, and embedded in day-to-day routines has a strong potential to enhance the evaluative capacity of staff (Greenaway, 2013).

2. Those working with marginalised populations must carefully consider the issues of equity and social justice, and aim to minimise the unequal power relations present between evaluators and stakeholders (Thomas & Parsons, 2017). Culturally responsive evaluation should engage community members in a meaningful manner, and acknowledge the cultural context of the evaluation (Stickl Haugen & Chouinard, 2019). Most importantly, evaluators are encouraged to build trusting relationships with stakeholders and support their capacity to learn and understand the purpose and impacts of the work (Campbell & Wiebe, 2021). There is a plethora of resources around ethical practice and cultural safety that have emerged within Australia, providing practical guidance on what constitutes culturally responsive evaluation and issuing recommendations for undertaking evaluations with disadvantaged communities (e.g., Cargo et al., 2019; Gollan & Stacey, 2021; Kelaher et al., 2018; McDonald & Rosier, 2011; Muir & Dean, 2017).

3. Evaluation capacity building is key to enhancing stakeholders’ knowledge, skills, and confidence in conducting quality evaluations, as well as implementing and mobilising findings. Thus, community-driven, culturally responsive, and capacity building approaches to evaluation must be combined with effective action to respond to the needs of children and families. It is important that research partnerships are action-oriented and lead to practical results that resonate with community members.

Limitations

This scoping review is the first that we are aware of to explore community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use and influence in the field of ECD. While it uncovers what little is known about evaluation in the field of ECD, it is not without limitations. The exclusion of gray literature and studies published in non-English languages, as well as the lack of an updated literature search, may have resulted in the omission of relevant studies; however, these exclusions were necessary for the feasibility of the review. Moreover, we engaged key stakeholders from the ECN, members of the working group, and a steering committee in reviewing preliminary findings and providing feedback to ensure the relevance of findings. A deeper examination of gray literature is nevertheless a future direction for enhancing this review and contributing to promising practices in the field of ECD.

As these findings reflect the peer-reviewed literature, it will be critically important to understand what is happening at the community level and within ECD organisations. It is possible that many innovations and promising practices are occurring, but the findings are not being reported or published and are therefore not accessible. As such, the next step is to engage ECD stakeholders and organisations in conversations about evaluation gaps, needs, and innovations.

Conclusion

This review has revealed that comprehensively applying community-driven, culturally responsive, evaluation capacity building, and evaluation use principles and approaches to evaluation in the ECD sector is not consistently or commonly done. Numerous studies include some aspects of a community-engaged approach, such as stakeholder involvement, but do not attempt to conduct evaluations that fully embrace the aforementioned constructs. These findings suggest that community-driven evaluation, culturally responsive evaluation, evaluation capacity building, and evaluation use are not fully understood, and standard practices are not readily shared in the literature. The studies that did use a community-based approach to evaluation were mostly led by university-based evaluators or researchers in partnership with community members. Perhaps there is sufficient literature across each of these domains outside of the field of ECD that we can learn from and apply to the sector. There is still a need for further exploration of evaluation practices within the ECD field to determine areas of the most significant need for evaluation capacity building, as well as to identify areas where assets and innovations already exist.

The ECN’s current priority is to understand evaluation needs and assets so as to direct our capacity building efforts. Informed by these reviews and a forthcoming gray literature scan, we are developing engagement toolkits that draw on multiple engagement processes and tools (e.g., stimulus papers, interviews) to guide community dialogues and further explore emerging themes. Findings from this review will inform strategies for bridging evaluation gaps in the ECD sector, ultimately equipping organisations with the evaluative tools to improve practices, programs, and policies that impact the families and communities they serve.