Abstract

Dear Editor,

OpenAI’s conversational AI language model, ChatGPT, uses deep learning to produce human-like responses on a wide range of subjects. Within 2 months of its November 2022 launch, it had gained 100 million users. 1 Clinical decision-making and other medical domains could benefit from ChatGPT’s ability to generate coherent text, which it acquires through supervised and reinforcement learning. Its rapid worldwide adoption has given doctors a potentially powerful tool for evaluating medical data and improving clinical practice. However, it is essential to emphasise that ChatGPT should support healthcare professionals in their work, not replace them. 2

Despite its widespread adoption and popularity, the clinical application of ChatGPT raises ethical and practical concerns. Because ChatGPT does not have access to reliable sources or real-time medical databases, its ability to offer accurate and up-to-date medical advice is limited. The quality of the data it was trained on, which might not always reflect current medical guidelines, is another factor limiting its accuracy. ChatGPT can produce language that is realistic and human-like, which has attracted attention from all over the world, yet it remains difficult to ensure the authenticity and originality of its content. For instance, studies have shown that reviewers can occasionally be tricked into believing that its output is authentic. 3 The challenge of ensuring the integrity of the generated content therefore raises ethical questions about its use. At the same time, a recent study found that ChatGPT was able to provide high-quality, empathetic answers to queries from patients on a web-based forum. 3

Although ChatGPT’s responses are often considered empathetic and accurate, there is little empirical evidence to support these claims. The clinical efficacy of AI-generated content needs to be investigated, especially in high-stakes healthcare settings. Despite ChatGPT’s increasing popularity, the number of studies on its clinical uses remains limited. ChatGPT combines powerful features with possible risks, making it a double-edged sword. One area where AI integration could be especially valuable is in electronic health record systems, where it could improve diagnostic accuracy, treatment planning and patient outcomes. 2 Nevertheless, as healthcare practitioners explore these possibilities, it is critical to underline ChatGPT’s role as a supplement, not a substitute.

To illustrate ChatGPT’s potential applications for specific medical conditions, let us examine its role in the context of CAR-T cell therapy for systemic lupus erythematosus (SLE). SLE is a chronic autoimmune disease with a genetic component that mostly affects women of childbearing age and is characterised by the generation of autoantibodies and damage to organs. Because B cells are essential to the pathophysiology of SLE, immunotherapy is a potentially effective treatment option. CAR-T cell therapy, which redirects T lymphocytes to target particular antigens, has shown promise in the treatment of SLE, providing successful B-cell depletion with positive early outcomes. However, further research is needed to determine long-term efficacy and safety. 4

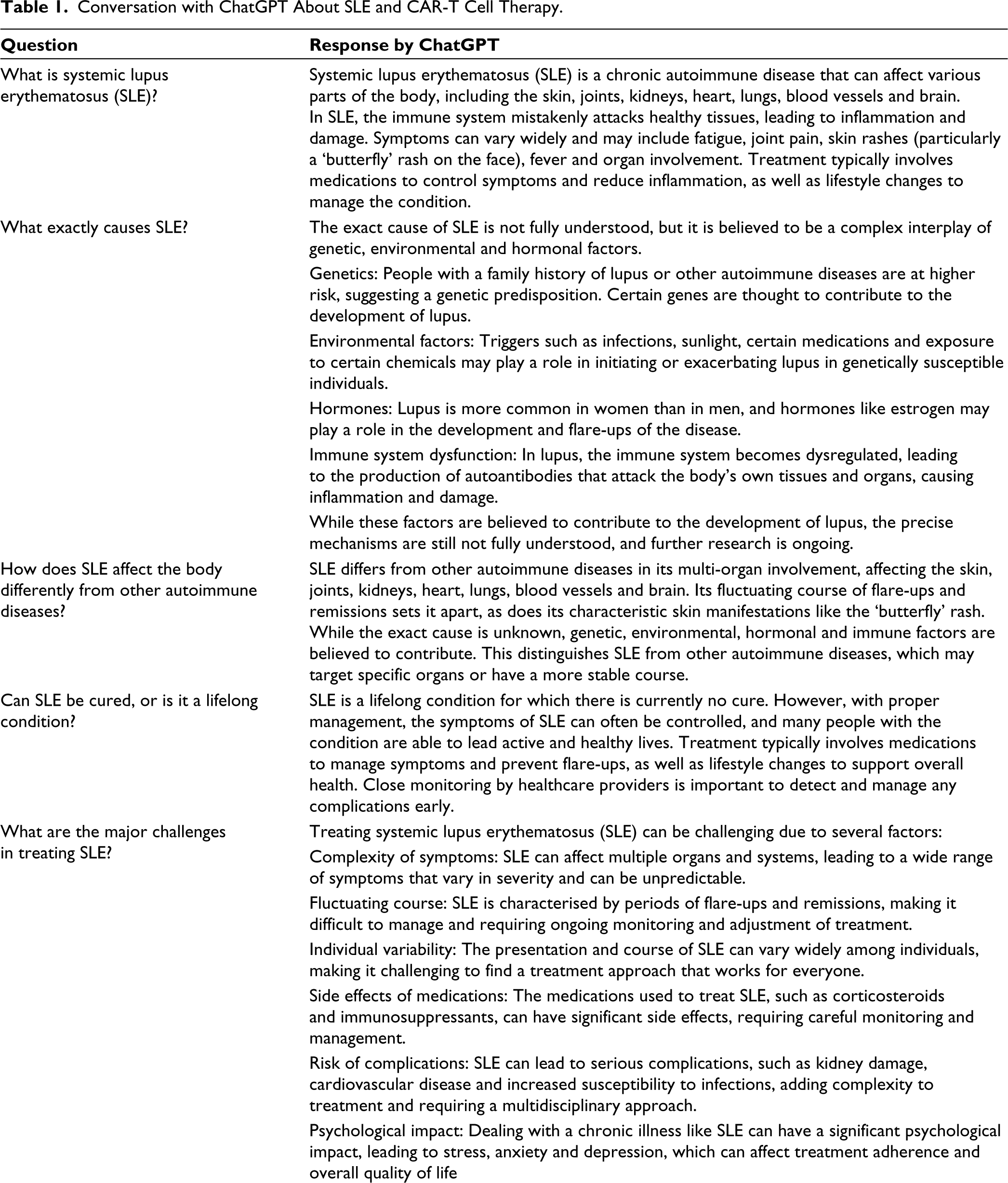

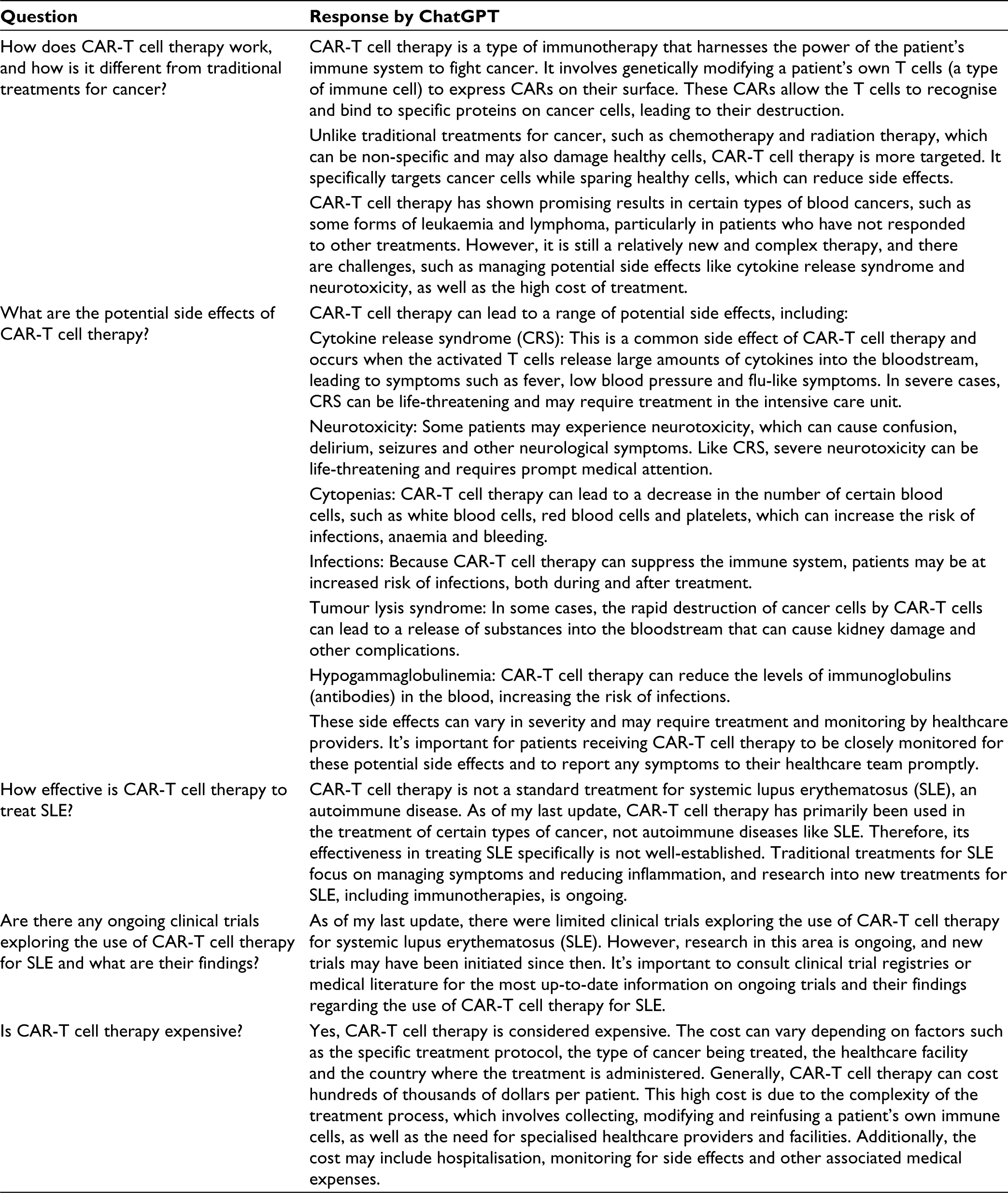

Table 1 presents a 10-question conversation between a layperson and ChatGPT about SLE and the impact of CAR-T cell therapy.

Table 1. Conversation with ChatGPT About SLE and CAR-T Cell Therapy.

ChatGPT responds to patients’ fears in a compassionate manner. It produces comprehensive responses containing mostly accurate information in simple terms, such as ‘In SLE, the immune system mistakenly attacks healthy tissues, leading to inflammation and damage’. It also sets realistic expectations, for example by stating that ‘SLE is a lifelong condition for which there is currently no cure’, and it directs users to primary sources with statements along the lines of ‘It’s important to consult clinical trial registries or medical literature for the most up-to-date information on ongoing trials and their findings regarding the use of CAR-T cell therapy for SLE’.

However, the unverified nature of some of these statements, particularly the lack of specific references, raises concerns about accuracy. ChatGPT and other AI technologies have the potential to improve clinical knowledge extraction; nevertheless, they are limited by their non-deterministic nature, as they frequently produce different results in response to small changes in the input. 3 Additionally, when incorporating AI into healthcare, ethical issues such as data privacy, the possibility of AI bias and responsibility for incorrect medical advice should be taken into consideration. It is critical for patient safety that AI systems adhere to strict standards of accuracy and ethics. Although ChatGPT has the potential to assist medical professionals in providing compassionate and high-quality information, particularly for conditions like SLE, ensuring accuracy and reliability should remain a priority.

In conclusion, as we investigate the role of AI in healthcare, it is critical to strike a balance between innovation, patient safety, ethical issues and economic concerns. Future research should concentrate on evaluating ChatGPT’s reliability and accuracy, especially for individuals with complex illnesses like SLE. Collaboration between artificial intelligence researchers and healthcare professionals will be crucial to creating AI systems that deliver reliable and accurate medical information.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.