Abstract

Dear Editor,

Writing research articles involves extensive literature review, data analysis and synthesis of findings. This can be time-consuming and labour-intensive, and many busy physicians may find it difficult to write a manuscript on their own. 1 Generative artificial intelligence (AI)-based chatbots can compose content according to user instructions. Many authors are both curious and apprehensive about utilising large language model (LLM)-based chatbots such as ChatGPT, Meta AI, Claude and Perplexity in academic writing. As these chatbots can generate an article within minutes, many authors already use them for various manuscript preparation tasks, yet many may still have questions and doubts. 2

LLM-based chatbots can be a valuable tool for authors in various aspects of writing a manuscript. They excel in generating initial drafts and in providing a coherent structure and flow to the content, which can save authors significant time. They can assist in brainstorming ideas and suggesting relevant literature, helping authors explore different perspectives and fill gaps in their knowledge. They can aid in refining the language and style of the manuscript, ensuring clarity and fluency, and making complex concepts more accessible. They can also help in formatting references and citations according to specific guidelines. Furthermore, ChatGPT can generate summaries, abstracts and concise explanations of key points, enhancing the overall readability of the manuscript. While human expertise remains crucial for critical analysis and interpretation, chatbots can efficiently handle many supportive tasks. 2

LLM-based chatbots, despite their capabilities, exhibit notable weaknesses when it comes to writing scientific manuscripts. One primary concern is the accuracy and precision of their content, as they may generate text that contains factual errors or misinterpreted data, compromising the scientific integrity of the manuscript. This is known as hallucination, where the chatbot writes content without any supporting evidence in the literature. Additionally, chatbots often fall short in providing appropriate citations and references, and can even generate fictitious references. Furthermore, chatbots may struggle with in-depth critical analysis and the formulation of novel hypotheses, as they rely heavily on existing data and patterns rather than producing original insights. 2,3 These limitations highlight the need for human oversight and expertise in the creation of scientific manuscripts to ensure their rigour and credibility.

Many chatbots have both a free version and a paid version. For example, ChatGPT3.5 is free whereas ChatGPT4 is a paid version. Free versions of LLM chatbots often have limited features, slower response times and restricted access to up-to-date data, while paid versions provide enhanced capabilities, faster response times, and access to the latest updates and advanced features. 4 Free versions may include usage restrictions, whereas paid versions typically offer a more seamless and uninterrupted user experience. Additionally, paid versions often come with customisation options for specific needs.

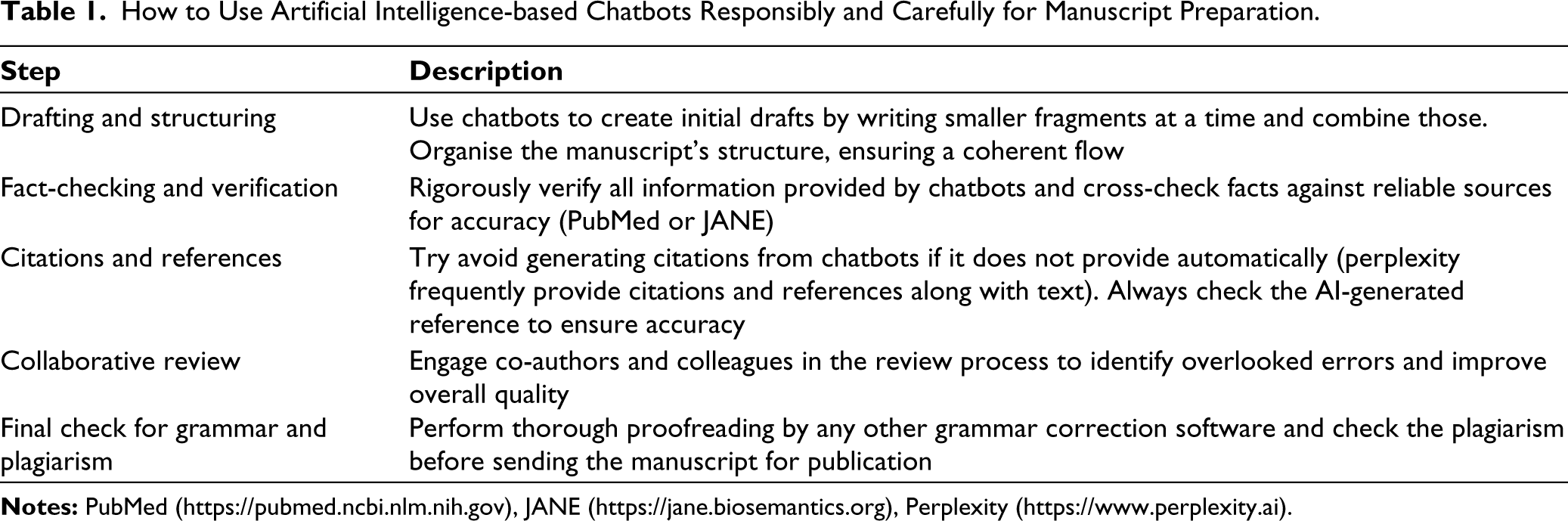

To overcome chatbots’ limitations in manuscript preparation, combine their use for drafting and structuring with rigorous human fact-checking and critical analysis. Table 1 shows brief steps for using chatbots responsibly and carefully for manuscript preparation. 5

Table 1. How to Use Artificial Intelligence-based Chatbots Responsibly and Carefully for Manuscript Preparation.

Text should always be checked for plagiarism using reliable software (e.g. iThenticate). However, AI detection may not be a necessary task in academia and publication, because detection tools have several limitations. These tools often struggle to identify AI-generated text accurately, as AI models are becoming more sophisticated and human-like in their writing. False positives can erroneously flag genuine work, while false negatives might miss AI-generated content. Additionally, over-reliance on detection tools can lead to a superficial approach to integrity. If a non-native speaker of English writes a draft and uses AI only to edit the language, the report would still show high AI content. 6

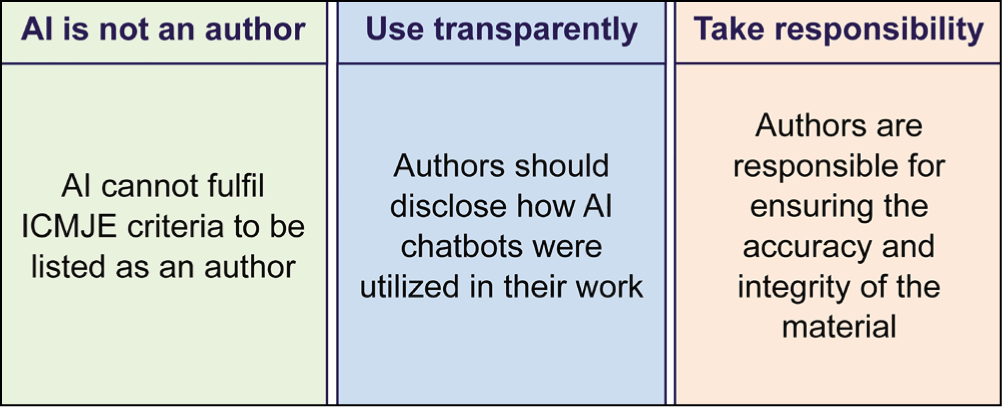

There is perhaps no prohibition from any segment of the scholarly community or from publishers on the use of generative AI in manuscript preparation. The World Association of Medical Editors ‘acknowledges that chatbots perform different functions in scholarly publications’. Their guidelines for authors are summarised in Figure 1. 7

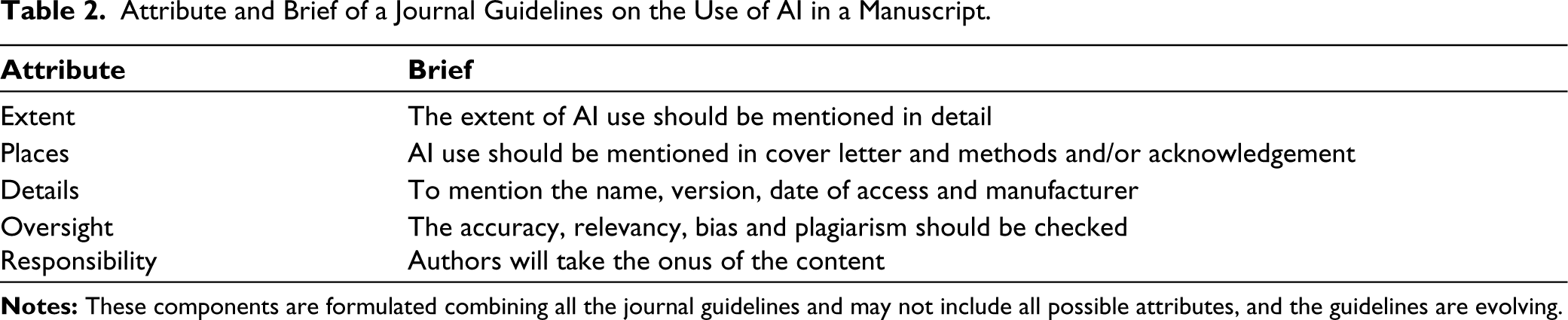

A chatbot cannot take responsibility for the content it generates. Hence, it cannot fulfil the International Committee of Medical Journal Editors criteria to become an author. 8 Authors are free to take writing assistance from any chatbot. However, the type and extent of use should be specified, and the authors should be responsible for the content they use in their manuscript. Finally, the use of the chatbot should be acknowledged for ethical publication. 9 The attributes of an ethical declaration of AI use in a manuscript are listed in Table 2.

Table 2. Attributes and Brief Descriptions of a Journal’s Guidelines on the Use of AI in a Manuscript.

In research writing and publishing, some agencies help busy authors with data analysis and manuscript writing. 10 Many even help revise manuscripts, reply to reviewers’ comments and write cover letters. This is well known; many reputed journals promote these services, and many have their own agencies. This practice is considered ethical. Hence, if the same help is taken from AI tools, it is also ethical. If authors get help from any human agency, they commonly acknowledge the nature and extent of that help. In this article, we provided guidelines on acknowledging generative AI in manuscript preparation. Hence, take help, take responsibility for the content, and acknowledge!

Footnotes

Acknowledgements

We thank Ahana Aarshi and Sarika Mondal for helping to generate the image for the figure used in the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.