Abstract

Background

It is estimated that 6%–7% of the population suffers from mental disorders. The WHO reported that one in four families is likely to have at least one member with a behavioural or mental disorder. Post-pandemic, the world has experienced a huge surge in mental health issues. Unfortunately, not everyone is able to access the available mental health services, owing to constraints such as lack of financial assistance, living in remote areas, fear of being stigmatised and lack of awareness. The emergence of online mental health services could solve some of these problems, as these services are accessible from anywhere, are cost effective and reduce the fear of being judged or labelled. Considerable effort is now being made to integrate artificial intelligence with traditional psychotherapy. Chatbots that deliver mental health services in the form of e-therapies have been found to be highly relevant and important.

Summary

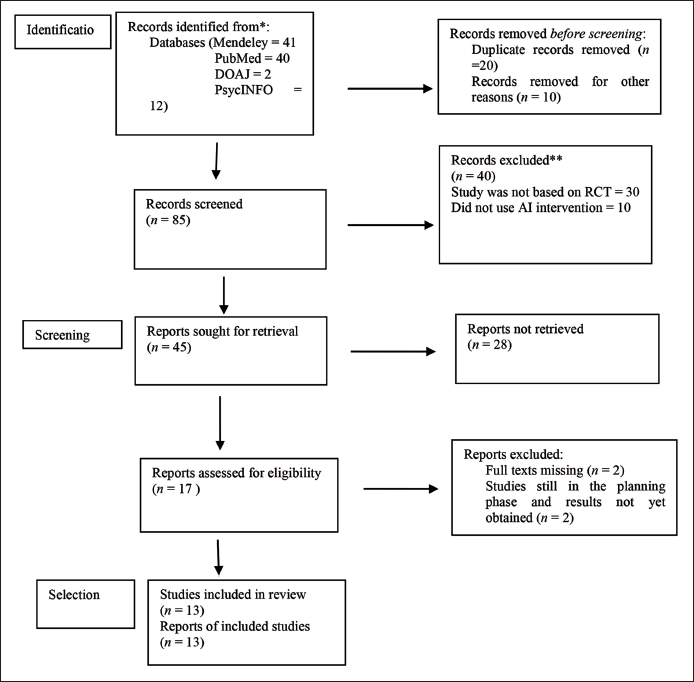

The present study aims to systematically review the evidence on the use of AI-based methods for treating mental health issues. Overall, 95 studies were retrieved from popular databases, namely Mendeley, PubMed, PsycINFO and DOAJ. The terms used in the search included ‘psychotherapy’, ‘online therapies’, ‘artificial intelligence’ and ‘online counselling’. After screening, 13 studies were selected based on the eligibility criteria. Most of these studies had employed conversational agents as an intervention. The results showed significant positive effects of using AI-based approaches in treating mental health issues.

Key Message

The study strongly suggests integrating AI with the traditional form of counselling.

Introduction

The COVID-19 pandemic shook the entire world, causing havoc and fear everywhere. It exposed our vulnerabilities, affecting us both physically and mentally. Although the acute phase of the pandemic has passed, the ambiguity and uncertainty of the current times have been accompanied by a rapid surge in mental health issues across different parts of the world. Even after surviving the pandemic, people continue to suffer from various mental health issues. According to WHO (2020), ‘mental disorders are one of the most significant public health challenges … they are the leading cause of overall disease burden. One of the most common mental illness worldwide is depression whereas two-thirds of population are left with unmet need’. 1 Unfortunately, we do not have the required number of mental health professionals to address these concerns. 2 The limited availability of specialist mental health human resources, including psychiatrists, clinical psychologists and psychiatric social workers, has been one of the barriers in providing essential mental healthcare to all. 3 As a result, many people are unable to get proper help and continue to suffer.

The pandemic has encouraged nations to build digital mental healthcare programmes. These include telemedicine, online healthcare, use of chatbots or conversational agents for providing counselling and support to people and virtual consultations, among many others. One of the major advantages of these systems is that they can be accessed from anywhere by people, especially during times of crisis. 4 These are also cost effective and affordable for people who have financial constraints. Also, they can be useful for the practitioners to closely monitor their clients in real time.

Effectiveness of Artificial Intelligence in Psychotherapy

Chatbots have made significant advances in simulating human conversation and have found application in various domains, including mental health. A chatbot is an application that uses AI to hold a conversation over a platform such as messaging or voice chat. Some chatbots are completely automated, while others require a human interface. 5 The first chatbot, ELIZA, was designed by Weizenbaum in 1966. It became very popular because it could perform pattern matching and phrase its responses based on decomposition rules. 5 Much later came general-purpose voice assistants, which are not mental health apps: Siri from Apple, Cortana from Microsoft, Assistant from Google and Alexa from Amazon. Woebot is a fully automated conversational agent that is used for treating depression and anxiety. It uses a digital version of cognitive behavioural therapy (CBT) to induce behaviour modification in clients. There are also chatbots like Ellie that can detect subtle changes in facial expressions, rate of speech or length of pauses. This information could be used to make diagnostic assessments or provide more personalised interactions.
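The pattern-matching idea behind ELIZA can be illustrated with a minimal sketch. The rules, the pronoun table and the function names below are hypothetical and were written for this illustration; they are not taken from Weizenbaum's original script, which was considerably richer.

```python
import re

# Hypothetical decomposition/reassembly rules, invented for illustration only;
# they are not taken from Weizenbaum's original ELIZA script.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Pronoun swaps applied to the captured fragment before it is echoed back.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}

DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(utterance: str) -> str:
    """Return the reply for the first rule whose pattern matches."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY


print(respond("I feel anxious about my exams."))
```

Each rule decomposes the input around a keyword and reassembles the captured fragment into a question, which is why such a program can appear attentive without modelling the meaning of what the user says.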

Methodology

The research method used in the present study is a systematic review. The review followed the PRISMA guidelines.

Selection of the Studies

To identify and select the related studies, electronic searches were conducted using Mendeley, PubMed, DOAJ and PsycINFO. The last search was done on 22 May 2023. The studies that were identified and retrieved were then screened by title and abstract. All studies that were not related to the research topic were excluded. For the final evaluation, full texts of the selected studies were retrieved. The details are given in the PRISMA flowchart in Figure 1.

Inclusion Criteria

The inclusion criteria for the current study were as follows:

An intervention method based on artificial intelligence was used, with or without the aid of a therapist. The sample had mild mental health issues such as stress, anxiety and depression, or no mental health issue. The main form of treatment given was psychological in nature. The study used a randomised controlled/clinical trial (RCT) or quasi-experimental methodology. The study was conducted between 2018 and 2023.

Exclusion Criteria

The exclusion criteria for the current study were as follows: Studies based on methods other than RCT/quasi-experimental methodology were not included. Studies in which AI was not used for treatment or counselling were not included. Studies conducted before 2018 were not included. Studies whose samples had severe mental health issues were not included.
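The inclusion and exclusion criteria can be read as a single eligibility check applied to each candidate study. The sketch below is only an illustration of that logic; the `Study` record, its field names and the category labels are assumptions introduced here, not part of the review's actual screening procedure.

```python
from dataclasses import dataclass

# Category labels are hypothetical encodings of the criteria in the text.
ELIGIBLE_DESIGNS = {"RCT", "quasi-experimental"}
ELIGIBLE_SEVERITY = {"none", "mild"}


@dataclass
class Study:
    uses_ai_intervention: bool        # AI used for treatment or counselling
    treatment_is_psychological: bool  # main treatment is psychological
    design: str                       # e.g. "RCT", "quasi-experimental"
    severity: str                     # "none", "mild" or "severe"
    year: int                         # year the study was conducted


def is_eligible(study: Study) -> bool:
    """Apply every inclusion criterion; failing any one excludes the study."""
    return (
        study.uses_ai_intervention
        and study.treatment_is_psychological
        and study.design in ELIGIBLE_DESIGNS
        and study.severity in ELIGIBLE_SEVERITY
        and 2018 <= study.year <= 2023
    )
```

Writing the criteria as one conjunction makes it explicit that the exclusion criteria are the negations of the inclusion criteria, so a study is kept only if it passes all of them.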

Search and Screening

An electronic search was conducted using variations of terms such as ‘online counselling’, ‘artificial intelligence’, ‘psychotherapy’ and ‘psychological issues’. These terms were used for searching articles and abstracts.

Data Extraction

For data extraction, a pre-designed sheet was used. The sheet included information such as the authors’ details, year of the study, sample size, research design, type of AI used and results.
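As an illustration of what such a sheet might look like in practice, the sketch below defines one row with the fields listed above and writes rows out as CSV. The class name, field names and CSV layout are assumptions made for this example; the source does not describe the sheet's actual format.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExtractionRow:
    """One row of a hypothetical extraction sheet; fields follow the text."""
    authors: str
    year: int
    sample_size: int
    research_design: str
    ai_type: str
    results: str


def to_csv(rows):
    """Serialise extraction rows to CSV text with a header line."""
    buffer = io.StringIO()
    names = [field.name for field in fields(ExtractionRow)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()
```

Fixing the columns before reading the full texts means the same items are recorded for every study, which keeps the later comparison across studies consistent.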

Risk of Bias Assessment

The selected studies were assessed for quality using a study quality assessment tool from the National Heart, Lung, and Blood Institute (NHLBI).

Selection of the Studies

Searching the four databases returned a total of 95 studies: Mendeley (41), PubMed (40), DOAJ (2) and PsycINFO (12). Duplicate studies were removed. The remaining 85 studies were then screened by title and abstract. Finally, based on the inclusion criteria, 13 studies were identified.
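The counts in this paragraph can be checked with a few lines of arithmetic. The sketch below uses only the figures reported above; the function and key names are illustrative, and the two derived values (duplicates removed, records excluded during screening) are computed here rather than reported in the text.

```python
# Counts reported in the text above.
RECORDS_BY_DATABASE = {"Mendeley": 41, "PubMed": 40, "DOAJ": 2, "PsycINFO": 12}
SCREENED = 85  # records left after duplicates were removed
INCLUDED = 13  # studies in the final review


def prisma_counts(records_by_database, screened, included):
    """Derive the intermediate flow counts from the reported totals."""
    identified = sum(records_by_database.values())
    if not identified >= screened >= included >= 0:
        raise ValueError("counts must not increase from one stage to the next")
    return {
        "identified": identified,
        "duplicates_removed": identified - screened,
        "screened": screened,
        "excluded_during_screening": screened - included,
        "included": included,
    }


counts = prisma_counts(RECORDS_BY_DATABASE, SCREENED, INCLUDED)
print(counts)
```

On these figures the four database totals sum to 95, which implies that 10 duplicates were removed and that 72 records were excluded across title, abstract and full-text screening combined.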

Details of the Included Studies

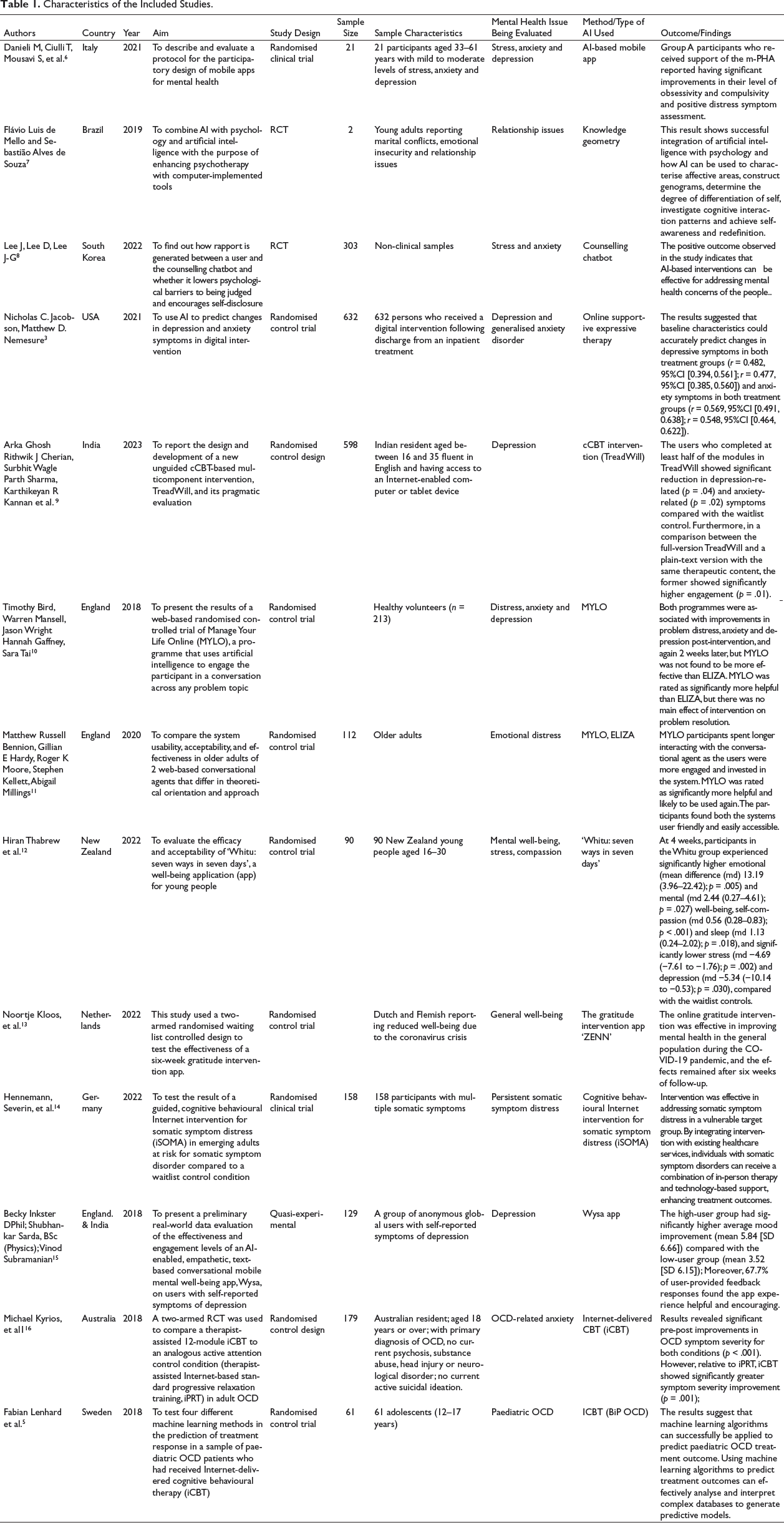

The details of the studies that were finally reviewed are given in Table 1.

Table 1. Characteristics of the Included Studies.

Countries Where the Study Was Conducted

The included studies were conducted in Italy, Brazil, the United States, South Korea, India, New Zealand, Germany, the Netherlands, Australia, Sweden and England.

Major Psychological/Mental Health Issues

Most of the studies focused on people with anxiety and depressive symptoms (S1, S4, S6, S11). Four studies addressed stress (S1, S3, S6, S8), two focused on OCD-related issues (S12, S13), one on relationship issues (S2), one on emotional distress (S7) and one on persistent somatic symptom distress (S10).

Research Design

The studies included in this review followed an RCT or quasi-experimental design.

Treatment/AI App Used

It was observed that most of the studies used a conversational chatbot. Unguided computerised CBT (cCBT) was used in four studies to provide therapy to the selected participants (S5, S10, S12, S13). The intervention used in S5 was a cCBT named TreadWill, which was fully automated, easy to use and easily accessible to the participants. This study evaluated how effective the cCBT was in reducing the symptoms of anxiety and depression. The intervention was delivered by software in two versions. One was an experimental version, in which participants received videos, conversations and slides rather than plain text alone. The other was the active control version, in which the participants received the same therapeutic content as plain text only. Google Sheets were used to embed the slides, and YouTube was used to embed the videos on the website. The participants were asked for their preferred login time, and reminders were sent to them 10 minutes before their scheduled time. S10 used Internet-based CBT for somatic symptom distress (iSOMA) in emerging adults. The app included seven consecutive modules and a short introduction. Participants were required to complete one module per week. The content of the modules was adapted from a validated face-to-face CBT programme for unexplained symptoms. The modules delivered psychoeducation, exercises and assignments via text, video or audio. By the time the participants reached the module, an app-based diary was introduced to them to monitor symptoms, perceived stress, illness anxiety and coping strategies. Within 48 hours of module completion, the participants received feedback through the internal messaging system of the intervention platform. This feedback was provided by trained psychologists. The participants could also contact their e-coaches through the same internal messaging system.

The iCBT app called BiP OCD was used for intervention in the S13 study. BiP OCD is a 12-week, web-based, parent-supported and therapist-guided CBT protocol. It is delivered through an online portal that patients can access using a personal username and password. The content is similar to that of a traditional face-to-face CBT intervention for OCD. The patients can access psychoeducational texts, videos, animations and exercises that they can do by themselves or with the help of their parents. In the S12 study, iCBT, a therapist-assisted 12-module app, was used with one group of participants. It included psychoeducation, mood and behavioural management, exposure and response prevention, cognitive therapy and relapse prevention. The study also used Internet-based standard progressive relaxation training (iPRT), which was provided to the second group. The two groups were compared in terms of the improvement in their existing conditions. The procedures for both groups were quite similar: participants received a weekly e-mail with a maximum of 15 minutes of preparation time from a remote therapist trained in e-therapy. The purpose of the e-mail was to monitor progress and provide support and encouragement for the treatment.

One of the studies used a mobile personal healthcare agent (mPHA), an AI-based mobile app, as a means of intervention (S1). Patients were engaged in short conversations that were not scripted but were based on the recognition of their emotional state. A web-based system called ‘Meet Yourself’ was used in another study, in which a total of 140 questions, along with the probable answers and scores, were provided by the psychotherapist (S2). These were grouped into five sub-areas: affective, productivity, sociocultural, organic and spirituality. Towards the end of the session, the AI generated advice for the affective relational, assertiveness, self-esteem and autonomy areas. S3 employed text-based psychotherapy through a fully automated, text-based programme that uses Google Dialogflow. This app is based on natural language processing technology, which enables a chatbot to give plausible responses to a wide range of user inputs. The dialogues are based on Rogerian client-centred therapy and were prepared under the supervision of professional counsellors. The participants could talk about any problem and were offered different problem-solving strategies. The participants were given a link through which they could connect with the chatbot.

In one study (S4), online supportive expressive therapy was combined with a machine learning model. AI was used to predict changes in the level of depression and symptoms of anxiety during the digital intervention. The predictors included responses to a range of self-reported questionnaires completed before the start of the treatment. Two groups were formed: one group received online supportive expressive therapy with feedback from a therapist, and the other group received information about stress and coping, but no online supportive expressive therapy. Another study used a self-help computer programme called Manage Your Life Online (MYLO). It is an automated programme that simulates a conversation between a client and a therapist. 6 It also tries to help the participants solve their problems by following the principles of Method of Levels (MOL) therapy. It analyses the inputs from the clients and looks for key terms and themes. It asks questions about the problem with the aim of increasing clients’ awareness of the conflict. The study also used another AI-based app, ELIZA, which is based on Rogerian therapy (S6). One more study used MYLO and ELIZA with older adults (S7) for problem distress and resolution.

AI-based mental health support was also used for promoting resilience and well-being (S11). The study used an empathy-driven conversational AI, Wysa, which is a text-based mobile well-being app. The app helps users develop positive self-expression. Its free version, which is available 24×7, was used. The user expresses emotions through written conversations, and the app responds with evidence-based self-help practices such as CBT, DBT, positive behaviour support, mindfulness and several other techniques intended to build emotional resilience. The app can be downloaded from the Google Play Store. It does not require signing in and does not ask for personal information. Another well-being app that was used was ‘Whitu’, seven ways in seven days (S8). It contains a total of seven modules to help young people: (a) recognise and rate emotions; (b) learn relaxation and mindfulness; (c) practise self-compassion; (d) practise gratitude; (e) connect with others; (f) care for their physical health; and (g) set goals. In this study, the intervention group had to download the app and use it for four weeks. The users could choose, from the given strategies, those that worked best for them.

One study used a gratitude intervention app called ‘ZENN’ (S9). The app could be accessed by the users on their smartphones, laptops and tablets. The content included an e-mail-delivered intervention. It had a total of six modules covering psychoeducation on different aspects of gratitude, such as looking at the positive side, appreciating daily activities, expressing gratitude and finding out the consequences of adversity. The modules had writing exercises on gratitude. One notable feature of the app was the presence of persuasive elements; for example, the homepage showed a sunflower picture that gradually changed colour according to the progress made by the user (self-monitoring and liking). There were also daily motivational quotes and reminders for completing the exercises. After the users completed these exercises, they were given automated feedback.

Results

As seen in Table 1, all 13 studies reported a positive impact of AI-based approaches in treating mental health issues. The system has also been found to be effective in enhancing resilience and well-being among the users (S11, S8). The findings from S1 show how machine language can be used to explain affective areas of the patients, construction of genograms, understanding and investigating the patterns of cognitive interactions and achieving self-awareness. AI-based tools can be used by therapists to enhance the quality and effectiveness of therapeutic work. S1 successfully highlights the integration between AI and psychology. Out of the two groups, group B, which received support through a mobile personal healthcare agent along with the training about stress management, reported greater improvements in OCD and positive distress symptom assessment. The psychotherapists were in favour of combining AI with their therapy practice.

One study aimed to design a chatbot that could encourage self-disclosure among the users using rapport-based dialogue such as small talk sessions and empathetic responses (S3). For this purpose, a fully automated text-based programme was implemented that was based on Rogerian client-centred therapy dialogue. The results showed that it was through the feeling of social presence that the self-disclosure among the users was moderated. Rapport had no direct influence on self-disclosure, but a feeling of rapport enhanced the users’ sense of social presence, which may have induced them to self-disclose more. These findings highlight an important aspect of AI as an aid in counselling, namely the potential for users to experience a sense of togetherness or connectedness with the machine. When the users perceive a social presence during interactions with the chatbot, they may be more inclined to participate in the sessions and share personal information.

The results from S4 indicated that machine learning algorithms make it possible to analyse various data inputs and identify patterns that can predict an individual’s responsiveness to digital treatments. This predictive capability can help practitioners determine the most appropriate treatment approach for each individual. For example, for individuals who are predicted to be highly responsive to digital interventions, standalone digital interventions may be sufficient and effective in addressing their needs. On the other hand, for individuals who are predicted to have a lower likelihood of responding to digital interventions alone, an integrated approach combining both AI and other methods can be used. The users in S5 who completed half of the modules in TreadWill showed a greater reduction in depression and anxiety symptoms than the control group. The full version of TreadWill had significantly higher engagement.
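The treatment-matching idea described for S4 can be sketched as a simple decision rule. This is only an illustration under stated assumptions: the function name, the 0 to 1 scale for predicted responsiveness and the 0.5 threshold are all hypothetical, and S4's actual model and cut-offs are not described in this review.

```python
def recommend_care(predicted_response: float, threshold: float = 0.5) -> str:
    """Map a predicted probability of responding to digital treatment
    onto a care recommendation. The threshold is a hypothetical default."""
    if not 0.0 <= predicted_response <= 1.0:
        raise ValueError("predicted_response must lie between 0 and 1")
    if predicted_response >= threshold:
        return "standalone digital intervention"
    return "integrated digital and therapist-led care"


print(recommend_care(0.8))
print(recommend_care(0.2))
```

In practice the threshold would need to be chosen and validated clinically; the sketch only shows how a prediction could feed into the choice between standalone and integrated care.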

Two of the studies used the mental health apps MYLO and ELIZA (S6, S7). These programmes use AI to engage participants in conversation on any problem topic. Engagement with both the conversational agents resulted in significant improvement in problem distress, anxiety and depression. Three studies had used Internet-based CBT (iCBT) as an intervention tool (S10, S13, S12). The results from these studies have also shown the positive impact of the AI-based approach on the improvement in the problem distress of the users. As stated in S10, iCBT for somatic symptom distress had a significant positive impact on somatic symptom distress. Similarly, Internet-delivered CBT showed greater improvement in symptom severity (S12). The results of S13 strongly suggest that machine learning algorithms can successfully be applied for predicting paediatric OCD treatment outcomes.

The results from the mental well-being app used in S8 also show a positive impact on the users. The participants using the ‘Whitu’ app reported better emotional and mental well-being, self-compassion and sleep. They also reported lower levels of stress and depression than the waitlist group. The online gratitude intervention app in S9 was found to be effective in improving the mental health of the users during the COVID-19 pandemic. The empathy-driven app Wysa resulted in significant mood improvement in the users (S11).

Risk of Bias Assessment

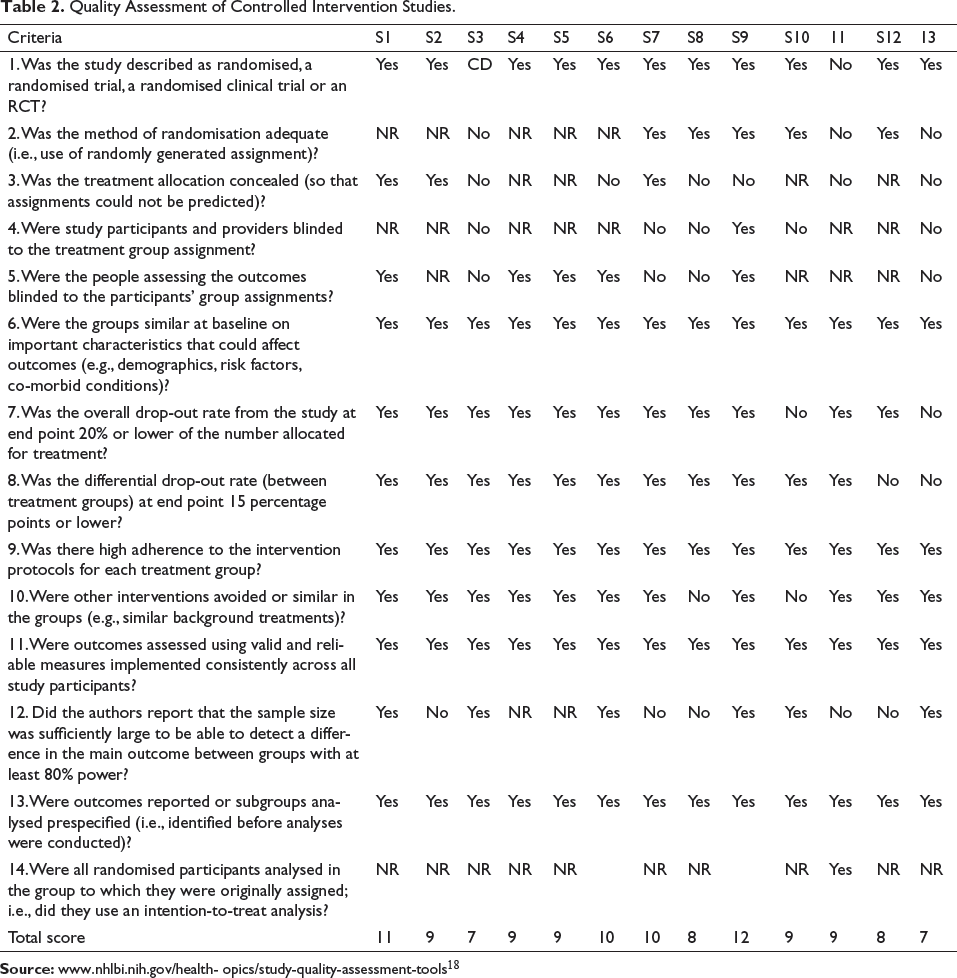

As mentioned earlier, the selected studies were assessed for quality using study quality assessment tools from the NHLBI.

It can be observed from Table 2 that, after the assessment, S2 and S9 were rated ‘good’, with overall scores of 11 and 12, respectively. The remaining studies were rated ‘fair’. A common gap was missing information about intention-to-treat analysis; only one study (S9) provided it. Many studies reported that the participants were not blinded to the treatment assigned; this could be because the researchers wanted to encourage maximum participation from the users.

Table 2. Quality Assessment of Controlled Intervention Studies.

Concerns When Using AI in Psychotherapy

The use of AI alone for treating various psychological problems is not very effective; however, it can work as an add-on resource for therapeutic work 6 and metaphors. It was seen in S1 that the mobile personal healthcare agent (mPHA) had limited dialogue capabilities. The app could not engage the participants in extended conversations. It was only with the help of the therapist that the dialogue capabilities could be increased. In one of the studies, 26% of the respondents reported technical issues during the study, and this may have reduced their motivation to work with the app. These technical issues included problems with logging in, uploading photos, app crashes and refreshing the app. 19

Another limitation of a web-based intervention system is that there is no therapist to explain the rationale for certain interventions. It is important that the chatbots used are clear and user friendly. If the system is confusing or frustrating to use, it is likely to be ineffective. 20 While using web-based interventions, people may lose interest or become bored after some time and drop out partway through. Confidentiality and privacy have been found to be major ethical concerns in using chatbots for mental health intervention. 21 Of the 32 mental health and prayer apps, such as Talkspace, Woebot and Calm, analysed by a tech non-profit, 28 were flagged for ‘strong concerns over user data management’ and 25 failed to meet security standards such as requiring strong passwords.

Conclusion

By incorporating AI technology into psychotherapy services, therapists can enhance their ability to reach and engage with patients. AI-based interventions can provide an accessible and convenient platform for individuals seeking mental health support, thus increasing the likelihood of attracting patients who may not otherwise have sought traditional therapy. This role of AI has now been widely accepted by therapists, who recognise that AI can provide valuable support in improving patient adherence to treatment recommendations. Results from studies have even suggested that Internet-mediated therapies can be effective in reducing anger problems as well. 22 Many people do not seek the help of mental health professionals because they fear being judged or labelled. With AI in place, this fear is reduced: people reveal more to a virtual human because they do not expect to be negatively evaluated by a machine. When users disclose their personal information to a chatbot, they do not experience the fear of being negatively judged by the chatbot. 8

Chatbot designers should look for ways to make users feel more comfortable while using chatbot systems. 10 It was also observed that rapport, which is an integral part of counselling, could be formed between the user and the chatbot. By carefully designing the conversation style and tone of voice, the chatbot can create a more inviting and empathetic environment for users. This can contribute to building rapport, which is characterised by a sense of mutual understanding, trust and connection between the user and the chatbot. The aim should be to design social chatbots that are both useful and empathetic. 6

AI can also guide the therapist's decision making, helping them decide on the appropriate level of digital or traditional care for a client before starting any form of treatment. This can save the time, energy and resources of both clients and therapists. Digital interventions are also less costly, which matters because many people cannot afford the high cost of traditional therapy. 18

Chatbots have been found to be useful in providing conversational support to older people. For psychological disorders involving a high level of shame and embarrassment, conversational agents may be useful. The chatbots can assist the traditional psychotherapists by reducing the number of sessions needed, thus enabling the therapist to focus more on challenging work during face-to-face treatment sessions. 20 The mental health app ‘Whitu’ used in S8 has highlighted the importance of certain features of the app that can make it more culture friendly and useful for the users. These include features like introducing a welcome song in the beginning, as stated by one participant: ‘I feel I should make a special mention of the karanga (welcome song) at the beginning of the app…as a young Maori women, being called into the app and have it welcome all my problems and grief instantly sparked a spiritual connection for me and I instantly felt at ease and felt safe enough to embark on my healing and well being journey’ (S8).

It was also suggested that finishing a greater number of modules on the app was weakly related to better outcomes. 18 Some of the users' suggestions included reducing the number of notifications and changing the videos and daily quotes, while others emphasised incorporating more persuasive elements, such as tailoring the notifications or including a form of praise or a social role in the intervention by using a supportive avatar. 22

Author’s Contribution

The author did all the work from the extraction of studies from databases to the final reporting of the findings.

Footnotes

Acknowledgement

The author acknowledges the support received by the Central Library of Amity University in completing the paper, including technical assistance and valuable insights provided by the faculty members.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author received no financial support for the research, authorship and/or publication of this article.

Statement of Ethics

Not required as it is a review paper.