Abstract

This study presents a cognitive trust model for travel chatbots that quantifies trust based on user perceptions of chatbot capability and intention. Analyzing feedback from 738 users of a major Indian travel platform, we find that trust formation varies significantly with interaction frequency and success rate. Users with fewer than 10 interactions, most of them successful, demonstrate the highest trust level (0.53), while unsuccessful interactions cause substantial trust erosion, particularly among new users. Perceived desire emerges as a critical driver of trust, while the capability dimension mediates the relationship between intention and overall trust. These findings advance our understanding of cognitive trust in travel chatbots and provide practical insights for designing trust-building mechanisms in conversational interfaces for online travel booking.

Introduction

According to the World Travel & Tourism Council (WTTC, 2025), the global travel and tourism sector contributed USD 10.9 trillion (10% of global GDP) in 2024 and is projected to reach USD 11.7 trillion in 2025, growing at an annual rate of approximately 3.5%. The online travel market accounts for 69% of the global travel and tourism industry, with more than 80% of millennials and Generation Z preferring to book entire trips online (Travelperk, 2024). With India having surpassed China in population, ranking as the fifth largest economy, and emerging as one of the key outbound tourist markets, its outbound travel has the potential to grow from 13 million trips in 2022 to 80 million in 2040 (Aggarwal et al., 2023). At the same time, India's population is still adopting and becoming acquainted with new technology across multiple domains, with Generation Z accounting for the largest share of online product users (Statista, 2023, 2024). India has already recovered 61% of its pre-COVID market, making it among the quickest to recover and the fastest growing (Aggarwal et al., 2023), and it is set to become the fourth largest spender globally on travel by 2030 (Verma, 2024). Online sales are expected to account for 60% of Indian travel sales by FY 2028, with package holidays as the preferred product booked through online travel websites. Although travelers typically spend an average of 19 days researching and planning before finalizing an online booking, they have little patience for poor user experiences on travel websites and apps, which leads to lower retention rates (Kleweno et al., 2019). Creating an effective user experience for online booking has therefore become a priority for industry leaders.
To create a cognitively effective user experience for online booking, industry leaders have focused on solving at least one of the three key challenges identified in a Bain and Google study: “identifying and providing on raw customer needs, de-averaging and personalizing marketing approach, and winning each traveler interaction” (Kleweno et al., 2019). Because this user base is time-sensitive and values speed, convenience, and choice, the adoption of artificial intelligence (AI) by travel booking companies has been suggested as a source of competitive advantage (Travelperk, 2024). Among various AI tools, conversational agents, or chatbots, are becoming the go-to technology for personalizing the online travel booking (OTB) experience.

In 2022, forecast chatbot usage in the travel and tourism industry stood at 53% across all hotels, 42% among branded hotels and 64% among independent hotels (Stayntouch, 2022). Chatbots are a popular technology with exponential projected growth across industries, with the market projected to reach $1,250 million in 2025 (Partners, 2019). In the tourism industry, chatbots are used for customer service and support (Morosan & Bowen, 2018). Trust is critical for users to interact with chatbots and accept their recommendations and responses to travel inquiries (Nordheim et al., 2019). Chatbot interactions are sometimes experienced as creepy owing to a lack of trust (Rajaobelina et al., 2021). Initial trust and adoption of chatbots therefore remain a major challenge for businesses that have invested heavily in technological advancement (Mogaji et al., 2021). Furthermore, the probability of chatbots achieving AI awareness by 2050 is estimated at only 50%, indicating that the technology is still evolving (Sidlauskiene et al., 2023). Moreover, research in OTB shows a gap in understanding how consumers perceive chatbot interactions and how these perceptions convert into trust, which researchers suggest requires further exploration (Chi et al., 2021; Jan et al., 2023).

Ryan (2020) evaluated three paradigms of trust toward AI: rational (cognitive), affective and normative. He claimed that trust placed in AI is only rational (cognitive) and should not be viewed on moral grounds. Cognitive trust is particularly significant for AI virtual agents (chatbots) with medium to low humanness, where users must form initial trust from their initial usage experience (Ameen et al., 2022). Literature reviews suggest that future research should explore cognitive trust in different domains (Glikson & Woolley, 2020; Saydam et al., 2022). Repeated interactions with chatbots that provide accurate and relevant responses increase cognitive trust, leading to repeat usage and satisfaction (Shahzad et al., 2024). User feedback has been an effective mechanism for capturing user perceptions of interactions with any online medium or technology (Bozkurt et al., 2024). Researchers have used online reviews (feedback) of chatbots to understand satisfaction (Yoganathan & Osburg, 2024), engagement (Bozkurt et al., 2024), positioning (Tripathi & Wasan, 2021) and the development of metacognitive reasoning (Ortega-Ochoa et al., 2024).

To understand cognitive trust in the initial adoption of travel chatbots, this study focuses on validating a cognition-based trust model (Dubey & Kumar, 2019). Our review of the literature revealed a significant gap: few studies use online user feedback to understand cognitive trust levels in chatbots, particularly for OTB, and we were unable to find any study that uses online user feedback as the organism for understanding the level of cognitive trust in chatbots. While research exists on trust in various digital contexts (Chawla & Joshi, 2019; Filieri et al., 2015), quantified trust models for travel chatbots remain underdeveloped (Wang, 2018), and Glikson and Woolley (2020) note the need to explore cognitive trust across different domains. Our study addresses this gap by applying and validating a trust model in the OTB context. User trust is formed from users' perception of the chatbot's ability to fulfill their requirements; this study therefore uses user feedback on chatbot usage to evaluate trust in chatbots on different parameters. How consumers perceive chatbot interactions and how these perceptions convert into trust remains insufficiently understood (Chi et al., 2021; Jan et al., 2023), and while studies have examined various aspects of human–chatbot interaction, the specific mechanisms of cognitive trust formation in travel contexts remain underexplored. This gap is particularly significant given the rapid adoption of chatbots in the travel industry and their critical role in shaping customer experience during the complex, high-involvement process of travel planning and booking. Furthermore, repeated interaction helps both the user and the chatbot improve usability.
Therefore, the frequency of successful interaction is critical to understanding the development of cognitive trust between the user and the chatbot. Based on the above discussion, this study is among the few to explore the dimensions of cognitive trust in OTB chatbots.

Based on the identified gaps in understanding cognitive trust in OTB chatbots, this study aims to quantify and analyze the dimensions of trust using a validated cognitive trust model. By examining how interaction frequency and success influence trust formation, we can provide both theoretical insights and practical guidance for improving chatbot design. To achieve these objectives, this study addresses the following research questions:

RQ1: How does the frequency of human–chatbot interactions (fewer than 10 vs. more than 10) affect cognitive trust dimensions in travel chatbots?
RQ2: How do successful versus unsuccessful human–chatbot interactions impact the development of cognitive trust in travel chatbots?
RQ3: What is the relative contribution of capability and intention dimensions to overall cognitive trust during human–chatbot interactions in travel contexts?

Theoretical Framework

Cognitive Trust Toward Chatbots

Trust is defined as a “psychological state” indicating the “willingness of a party” to depend or rely on others while accepting vulnerability, leading to belief in someone based on positive expectations of the intention or behavior of another (Rousseau et al., 1988; Schoorman et al., 2007; Thielmann & Hilbig, 2015). Multiple studies have explored trust in the tourism industry. Cheng et al. (2022) explored trust in the accommodation-sharing economy arising from collaboration with AI chatbots. Picard and Marriott (2021) integrated human–computer interaction theories with para-social relationship theory and found that social presence and cognition are unique precursors to the development of trust toward voice-based assistants (Alexa).

Furthermore, the trust placed in AI can more accurately be called “quasi-trust” or “virtual trust,” which, in the case of AI, is misplaced trust (Coeckelbergh, 2012). Regarding cognitive (rational) trust, researchers have stated that, by making logical choices based on the capacity (ability) and expertise (competence) of AI, the trustor predicts that the trustee will uphold the trust placed in it (Mollering, 2006; Nickel et al., 2010). Affective trust shares factors with cognitive trust, such as intimacy and passion toward an AI, and is influenced by performance efficacy (Song et al., 2022). Glikson and Woolley (2020) identified tangibility, transparency, reliability, task characteristics and immediacy behavior as factors influencing trust in AI. Chi et al. (2021) developed a scale to capture user trust in AI social robots for service delivery. Thus, we propose a cognitive trust model for initial trust in chatbots comprising competence (functionality), ability (immediacy and reliability), desire and commitment.

We adopt the cognitive trust model suggested for electronic marketplaces in business-to-business (B2B) contexts (Kumar & Mohite, 2015) and adapt it to the B2C travel context. This adaptation is appropriate because both contexts share key characteristics: information asymmetry, reliance on digital interfaces and the need to establish trust without physical presence. However, we adjust the interpretation of the dimensions to reflect B2C dynamics, particularly the more experience-focused nature of travel planning compared with B2B transactions. This model has also been adopted to study trust in robots (Dubey & Kumar, 2019). In this study, we apply it to quantify a cognitive trust index for travel chatbots through the lens of user feedback. The proposed research model for the travel chatbot is shown in Figure 1. Kumar et al. (2009) defined the trustworthiness of an information or technology system as users' confidence that it will deliver the expected output, with the ability and intention to match users' perceptions (Barber & Kim, 2001). The trust model has been widely applied to study trust in internet shopping (Lee & Turban, 2001), mobile wallets (Chawla & Joshi, 2019) and travel websites (Filieri et al., 2015). However, most studies have explored trust qualitatively, and few have tried to quantify it (Wang, 2018). To quantify trust, studies have used user ratings and reviews, which reflect positive or negative experiences that lead to recommendations (Bobadilla et al., 2013; Majumder et al., 2022; Son & Kim, 2023). This study focuses on quantifying trust in travel chatbots through user feedback or ratings.

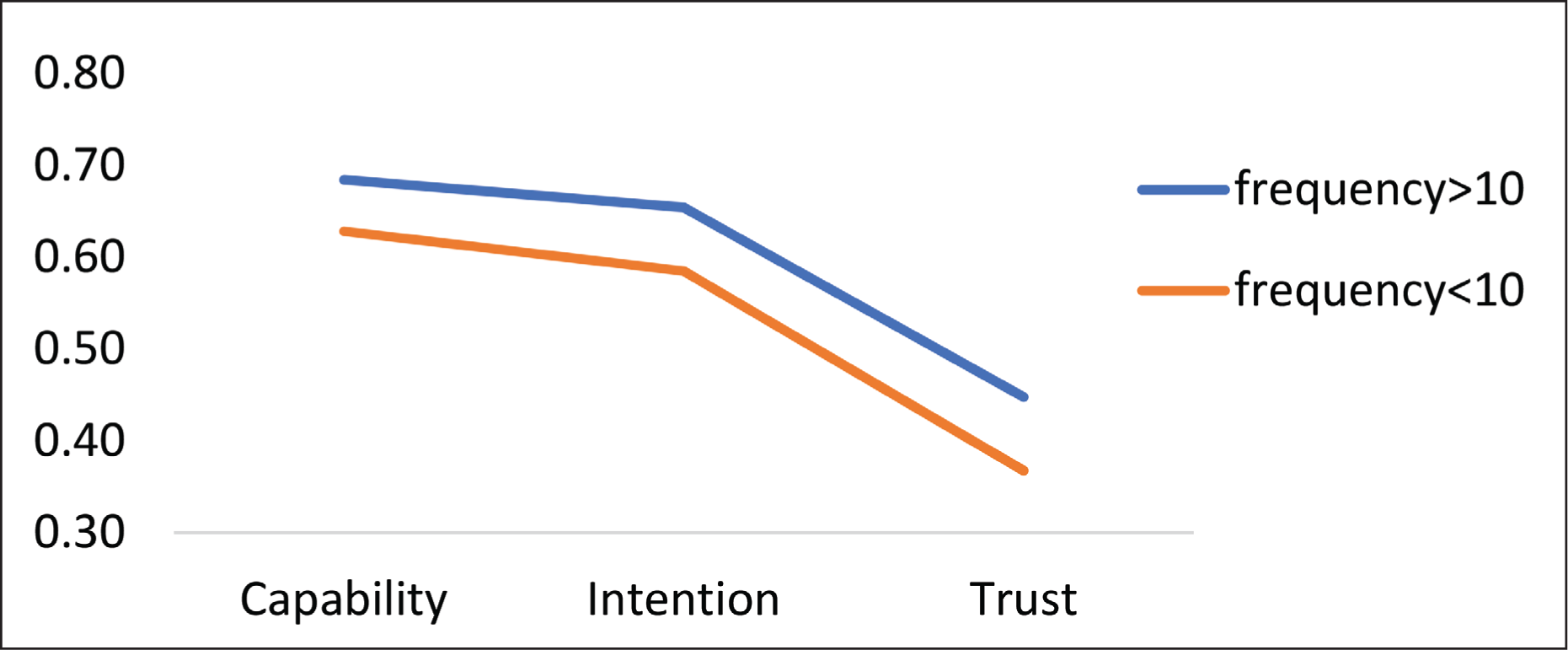

Three-way Interaction Between Capability, Intention, and Trust for Frequency > 10 and Frequency < 10.

Cognitive Trust Index dimensions

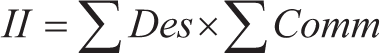

The trust index for a chatbot can be defined in terms of the capability and intention of the chatbot to address customers' requirements and resolve their queries. The capability of the chatbot measures its ability and competence, which are intrinsic measures (Kumar & Mohite, 2015; Wang, 2018). Wang (2018) defined ability as the perceived skills, competence and expertise of a system, reflected in its reasoning about users' responses and the output it produces. Michael et al. (1990) defined the intention of an agent as the goal it wants to achieve; intention guides which goals the agent pursues and involves self-commitment to attain them. The willingness of the chatbot to communicate effectively with customers is known as the intention of the chatbot. The intention (or perceived intention) of the chatbot is measured by its desire and commitment, which promise fulfillment of, and reliability toward, an action (Kumar & Mohite, 2015; Wang, 2018). Mathematically, the trust model can be expressed as follows:

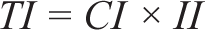

where 0 ≤ CI ≤ 1, 0 ≤ II ≤ 1

where CI is the capability index of the chatbot and II is the intention index of the chatbot. We adopt the multiplicative relationship (TI = CI × II) following established trust modeling approaches (Dubey & Kumar, 2019; Kumar & Mohite, 2015). This relationship better represents the interdependent nature of capability and intention in trust formation: both components must be present for meaningful trust to develop. A multiplicative model ensures that if either component approaches 0, the overall trust index decreases sharply, reflecting the real-world observation that a perceived deficiency in either capability or intention substantially undermines trust. This approach supports testing our hypotheses because it (a) preserves the interdependence between the capability and intention dimensions, (b) allows sensitive measurement of trust differences between user groups with varying interaction frequencies, and (c) provides a framework for comparing the relative contributions of the capability and intention dimensions to overall trust. The model's assumptions align with theoretical perspectives on trust as requiring perceptions of both ability and goodwill (Kumar & Mohite, 2015).
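The effect of the multiplicative form can be illustrated with a short Python sketch. The function names `trust_index` and `additive_index` are hypothetical, and the additive mean is included only as a contrast; it is not part of the model. When one index is close to 0, the product collapses while the mean stays moderate.

```python
def trust_index(ci: float, ii: float) -> float:
    """Trust index TI = CI x II, with both indices bounded in [0, 1]."""
    for name, value in (("CI", ci), ("II", ii)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {value}")
    return ci * ii


def additive_index(ci: float, ii: float) -> float:
    """Hypothetical additive alternative (simple mean), shown only for contrast."""
    return (ci + ii) / 2


# A chatbot perceived as highly capable but with almost no intention to help.
ci, ii = 0.9, 0.05
print(round(trust_index(ci, ii), 3))     # 0.045
print(round(additive_index(ci, ii), 3))  # 0.475
```

Under the additive mean, strong capability would mask a near-absent intention; the product keeps trust low unless both dimensions are present.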

Antecedents of Capability Index (Capacity and Expertise)

Jehangir et al. (2012) proposed a model that defines the ability index as the product of capacity and expertise, which Dubey and Kumar (2019) tested and validated for robots. Capacity is one of the most important factors affecting trust (Cook & Wall, 1980; Deutsch, 1960; Jones et al., 1975; Sitkin & Roth, 1993). Capacity is defined as the ability to understand user responses by analyzing an existing database of information and to function efficiently by minimizing resources and maximizing output (Martínez-Caro et al., 2020; Roberts et al., 2012). In more technical terms for information systems, capacity is the maximum rate of output of a process or system and determines its efficiency (Yeager et al., 2023). Capacity also encompasses the ability of artificial intelligence (AI) to modulate its response according to user emotions or situations (Lytridis et al., 2018). The capacity of an AI product directly affects its usability and trustworthiness, which generate its appeal to consumers (Jung, 2021). Negative or low feedback from users indicates mistrust of AI products, reducing acceptance of their responses within the user's social context (Glikson & Woolley, 2020). Therefore, for travel chatbots, the efficiency of responses coupled with the time taken can be measured as capacity, as perceived through user feedback. As stated by Marcolin et al. (2000), expertise is a world-class asset. A user's expertise reflects their ability to perform allotted tasks to the highest standards, thus creating extra value (Herling, 2000). Expertise is directly related to performing tasks and roles at expected standards (Eraut, 1998) and has been described as the “capability to perform effectively,” equating it with ability (Mulder, 2011). Song et al. (2023) analyzed how the anthropomorphism and expertise of robots in the service industry affect trust in their services.
A user's belief in the expertise of robots (or, for chatbots, in the expertise behind their responses) increases trust in them and their services. As with capacity, user feedback can indicate the level of trust in the expertise of the chatbot and its services (Glikson & Woolley, 2020). This was also tested successfully in the trust model for robots (Dubey & Kumar, 2019; Kumar et al., 2009). Expertise is therefore a crucial antecedent of ability and trust for chatbots and can be measured through user feedback or ratings of chatbot services and experience. In our study, the quality of a customer's chatbot conversation determines the chatbot's expertise. The capability index is a higher-order construct with capacity and expertise as formative components, and for this study we calculate the capability index of the chatbot in the same way as for robots. Communication with the chatbot can be rated in the Successful Feedback category only when the chatbot has the expertise to achieve it. Past performance can therefore be used to evaluate the capability of the chatbot, that is, how efficiently it resolved customers' queries so that they were categorized in the Successful Task category. The Capability Index is thus interpreted as follows:

CI = Capacity × Expertise

where 0 ≤ CI ≤ 1.

Antecedents of Intention Index (Desire and Commitment)

An agent's intention is confirmed when it follows established communication guidelines to fulfill the desired goals of its communication with users (Michael et al., 1990; Wang, 2021). Achieving intention efficiency requires the desire to perform the action or task to the highest standards and commitment to the quality of the output. Researchers have defined desire in multiple contexts. Desire is defined as “the motivational state of mind wherein appraisal and reasons to act are transformed into a motivation to do so” (Perugini & Bagozzi, 2001). Desire, as per Magill and Erden (2012), “is defined by its natural function concerning action and likewise distinguished from the role of belief concerning action.” In the case of AI, desire is the intention to achieve set goals concerning user responses according to the programming of the service or product (Wadsley & Ryan, 2013). It is measured through point-to-point communication links in human–machine interaction (Li et al., 2019). The desire of an agent directly influences user trust toward the agent and shapes users' perception of the agent's intention. Osakwe et al. (2022) highlighted this in their research on the use of drones for last-mile delivery, as did Chen (2023) in a study on tourism advertising through technological innovations. Piçarra and Giger (2018) studied intention toward social robots through desire and anticipated emotion, albeit qualitatively. We quantify the same construct using user feedback on travel chatbots to form an intention index.

Commitment, the attitudinal component of loyalty, is defined as “an enduring desire to maintain a valued relationship” (Moorman et al., 1992). Commitment is related to people's identification and sense of obligation, which leads to long-term relationships. A probabilistic commitment is met if the provider's efforts are likely to accomplish the intended outcome with the promised probability within the agreed-upon period, even if the precise outcome is not realized (Zhang, Durfee, & Singh, 2023). Zhang, Durfee, and Singh (2023) emphasized the provider's commitment to changing certain characteristics of the state, as desired by the recipient, within a specified probability and timeframe. This commitment rests on the agent's (chatbot's) intention to fulfill a given task or service, which moderates the user's trust. The commitment that the user perceives in the chatbot, in turn, shapes the feedback the user gives. User feedback therefore captures the perceived commitment of the chatbot and is used in this study to quantify it.

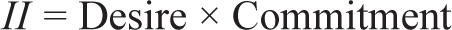

The Intention Index is defined as a measure of the desire and commitment of the chatbot. Desire refers to a state that is perceived as ideal; for an agent, desire is conceived of as the states the agent wishes to achieve. The desire of the chatbot explains its motivation to communicate with customers and characterizes the state that must be realized for better chatbot performance. The commitment of an agent can be defined as the efforts made to achieve that ideal state. The chatbot is expected not to give incompatible responses, that is, responses that are not in line with the customer's expectations; this conduct is defined as commitment. Capability and intention are thus treated as higher-order constructs, and the Intention Index is interpreted as follows:

where 0 ≤ II ≤ 1.

User Feedback (Review) of Travel Chatbot

Feedback plays an important role in the formation of cognitive trust toward any information system (IS). Positive feedback leads to stronger trust, while negative feedback leads to weaker trust in IS (Ba & Pavlou, 2002). Effective feedback mechanisms or review systems induce trust and play a vital role in moderating users' initial adoption of IS (Pavlou & Dimoka, 2006). The moderating effect of feedback (reviews) has been validated by multiple studies across domains. Dogra et al. (2023) found that customer reviews (feedback) had a significant interaction effect on trust in online food delivery services (OFD). Raza et al. (2023) found strong positive moderation by dispute resolution effectiveness and feedback on trust for OFDs. Similar results were obtained in travel and tourism research for hotel consumers' information adoption (Huiyue et al., 2022) and restaurant services (Park et al., 2021). It is therefore critical to study sensitivity to feedback (reviews) in travel and hospitality with respect to online information adoption, trust and purchase intention.

Based on the theoretical framework and research questions, we propose the following hypotheses:

H1: Users with more than 10 chatbot interactions will demonstrate higher overall cognitive trust than those with fewer than 10 interactions.
H2: Successful chatbot interactions will result in significantly higher cognitive trust indices compared to unsuccessful interactions.
H3: The intention dimension (desire and commitment) will have a stronger relationship with overall cognitive trust than the capability dimension (ability and competence) in travel chatbot interactions.

Methodology

Interaction with an AI agent (chatbot) is a technology-mediated online consumer service that requires the ability to use the technology and the capability to use online services effectively. MakeMyTrip.com and booking.com are among the preferred online travel websites through which users purchase online travel products (Statista, 2024). Therefore, this study uses MakeMyTrip.com as the platform from which data were obtained. To ensure measurement accuracy, we conducted a preliminary reliability analysis of our multi-item constructs, all of which showed acceptable internal consistency. The categorization approach was validated through pilot testing with a subset of 50 responses, which confirmed clear differentiation between the three feedback categories. This validation step ensured that our transformation preserved the essential variance in the original data while enabling application of the trust model.

Data Collection and Factors Studied

For this study, the leading travel website MakeMyTrip.com was selected to examine the use of chatbot services for OTB, as it holds a 60% share of the online travel agency market in India (Truyols, 2024). This increased the chances of obtaining an adequate number of responses. A self-administered questionnaire was used to collect data from the website's users. Data were collected in March and April 2023, which marks the start of the vacation period following the declared end of the season and the beginning of planning for summer vacations in June. The questionnaire had three sections: the first contained information about the study; the second captured demographic information and the frequency of chatbot usage for online travel booking; and the last comprised the factors under consideration in this study. Data were collected through a cross-sectional survey using convenience sampling, chosen because of the difficulty of approaching participants from such a large population (Stratton, 2021). Convenience sampling has been supported as a suitable method for participant selection in such scenarios (Shahzad et al., 2023, 2024). It is preferred in online surveys because of easy access to respondents and the relevance of the measurement items; therefore, it is not a concern for this study (Ittefaq et al., 2024).

Pre-screening questions were used to identify participants who had booked online travel products but were not familiar with online travel chatbots. Only participants who answered “No” to the question “Are you already familiar with using online travel chatbots?” were allowed to complete the remaining questions. This ensured that we captured first impressions and initial trust formation rather than pre-established trust relationships. We implemented procedural remedies to minimize common method bias, including anonymous data collection, temporal separation between demographic and trust-related questions, and varied response formats across sections. While these measures help reduce potential biases in our self-reported data, we acknowledge this limitation in our discussion of future research directions. Furthermore, only participants with a single online purchase of ₹2,500 or more were considered for the sample. According to Statista (2024b, 2024c), the average spending on a hotel room per night in India was around $37, approximately equivalent to ₹2,500. Accordingly, one selection criterion was a minimum spend of ₹2,500 per month on the tourism app.

A total of 1,100 questionnaires were sent to participants, of which 832 were returned. After screening for missing values, multivariate outliers and unengaged responses, 94 were found invalid, leaving 738 valid responses (a 67% response rate). Of the 738 participants, 405 were male and 333 were female, and most were aged 22–30 years. The survey divides participants into two major categories: those who have used chatbots fewer than 10 times and those who have used them more than 10 times. Each category was further subdivided according to whether participants had successfully resolved their queries using the chatbot.

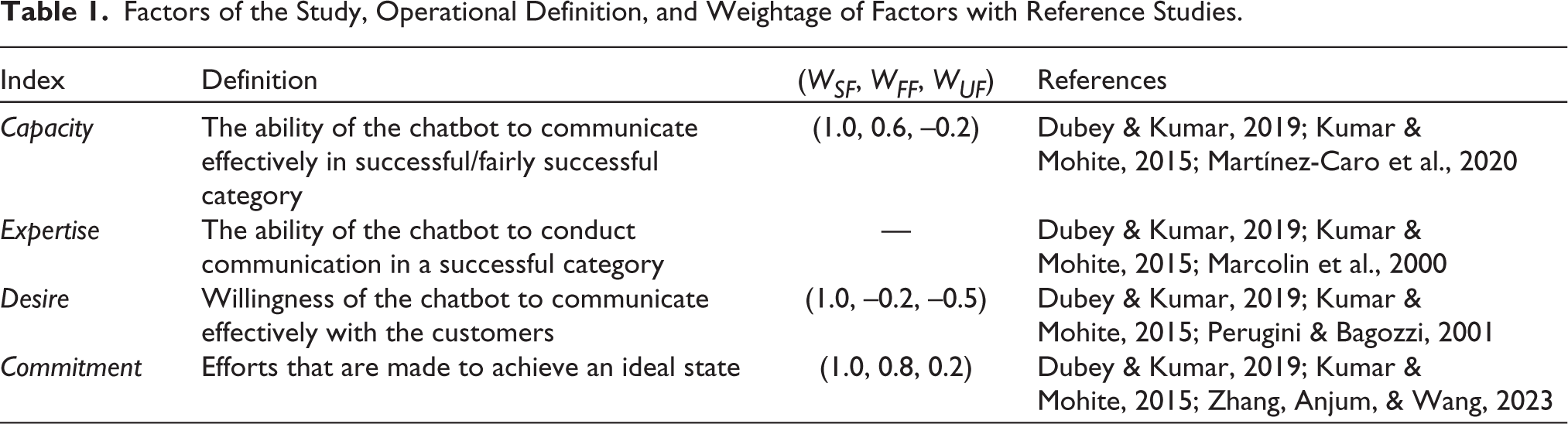

Table 1 lists the factors of the study with their operational definitions, the weights assigned for the analysis of responses, and the reference studies.

Factors of the Study, Operational Definition, and Weightage of Factors with Reference Studies.

Procedure

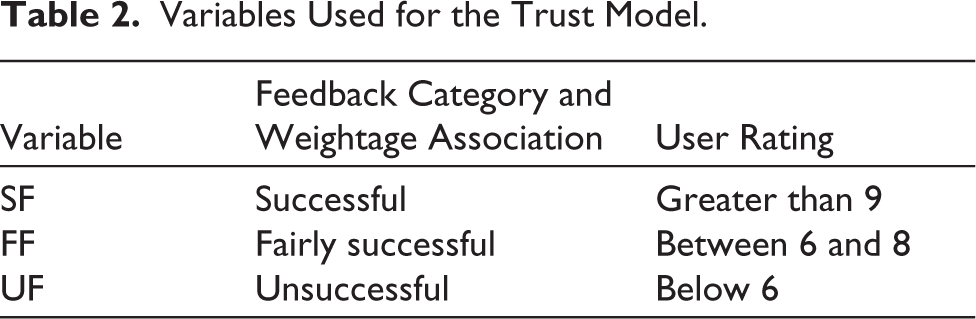

The Likert scale has been widely used to capture opinions and feedback on attributes in marketing studies (Li, 2013). Dubey and Kumar (2019) divided Likert-scale responses into successful, fairly successful, and unsuccessful subsets. Applying the same grouping reduces variance but enables us to validate the study model against the objectives considered. The transformation of Likert scales is suggested and explained in multiple studies (Chakrabartty, 2020; Heo et al., 2022). Responses on our 10-point scale were categorized as “unsuccessful” if below 6, “fairly successful” if between 6 and 8, and “successful” if 9–10. This categorization balances granularity with analytical clarity, allowing us to apply the trust model effectively while preserving meaningful distinctions in user feedback (see Table 2). To parameterize the proposed model for the chatbots, customer feedback is thus divided into these three categories.

Variables Used for the Trust Model.

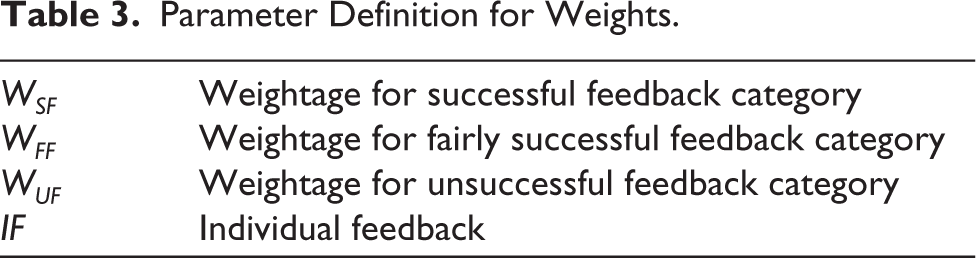

To evaluate the trust index, the categories have also been assigned weights. These weights will be different for each index and have been defined individually.

Responses were thus organized into three categories to evaluate the chatbot's performance: successful feedback, fairly successful feedback, and unsuccessful feedback. Classification was based on users' numerical ratings from 1 to 10: ratings of 9–10 were deemed successful, ratings of 6–8 fairly successful, and ratings below 6 unsuccessful. This categorization provided a structured way to interpret the chatbot's performance from the given ratings and supported a more nuanced analysis of the responses.
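A minimal Python sketch of the rating-to-category mapping described above, assuming integer ratings from 1 to 10. The function names and the `SF`/`FF`/`UF` dictionary keys are illustrative choices, not part of the original instrument.

```python
from collections.abc import Iterable


def categorize_rating(rating: int) -> str:
    """Map a 1-10 user rating to one of the three feedback categories."""
    if not 1 <= rating <= 10:
        raise ValueError(f"rating must be between 1 and 10, got {rating}")
    if rating >= 9:
        return "SF"  # successful feedback (9-10)
    if rating >= 6:
        return "FF"  # fairly successful feedback (6-8)
    return "UF"      # unsuccessful feedback (below 6)


def count_categories(ratings: Iterable[int]) -> dict:
    """Tally SF, FF and UF counts for a group of ratings."""
    counts = {"SF": 0, "FF": 0, "UF": 0}
    for r in ratings:
        counts[categorize_rating(r)] += 1
    return counts


# Hypothetical ratings from six interactions.
print(count_categories([10, 9, 8, 6, 5, 2]))  # {'SF': 2, 'FF': 2, 'UF': 2}
```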

Parameter Definition for Weights.

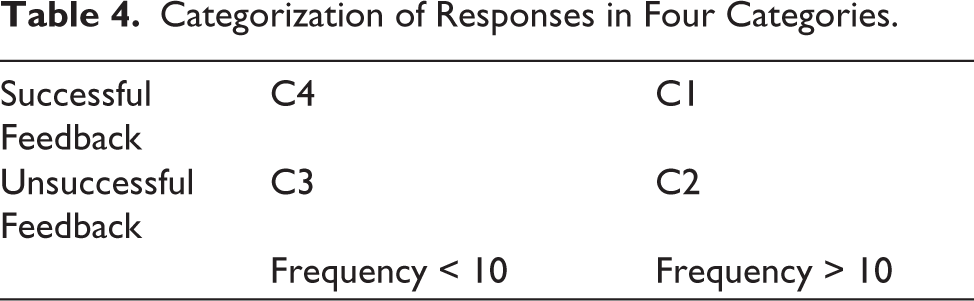

The users' responses were then categorized into four quadrants based on the frequency of interaction and the results of queries: C1 (frequency > 10, successful queries), C2 (frequency > 10, unsuccessful queries), C3 (frequency < 10, unsuccessful queries) and C4 (frequency < 10, successful queries). Table 3 shows the categorization of the responses according to the two parameters, feedback and frequency. All 738 users were categorized based on these conditions, and the resulting groups were used to evaluate the trust measures for the chatbot. Table 4 shows the categorization of responses into the four categories discussed above.
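The quadrant assignment can be sketched as a small Python function. The study does not state how a respondent with exactly 10 interactions is classified, nor how the three feedback categories collapse into a binary successful/unsuccessful outcome, so this hypothetical `assign_quadrant` helper takes the outcome as a boolean and rejects a frequency of exactly 10.

```python
def assign_quadrant(frequency: int, successful: bool) -> str:
    """Assign a respondent to segment C1-C4 (hypothetical helper)."""
    if frequency < 0:
        raise ValueError("frequency cannot be negative")
    if frequency == 10:
        # The study defines only "> 10" and "< 10"; exactly 10 is left unassigned here.
        raise ValueError("a frequency of exactly 10 is not covered by C1-C4")
    if frequency > 10:
        return "C1" if successful else "C2"
    return "C4" if successful else "C3"


print(assign_quadrant(12, True), assign_quadrant(4, False))  # C1 C3
```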

Categorization of Responses in Four Categories.

Analysis

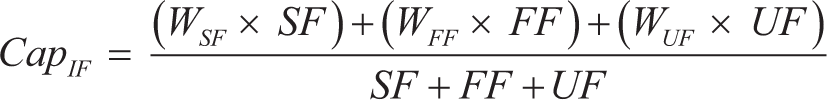

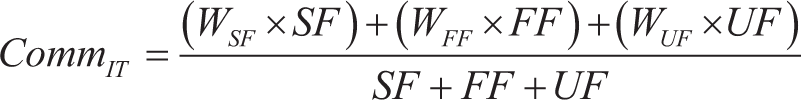

Capability Index

In this study, capacity refers to the chatbot's skill in executing communication that falls in either the successful or the fairly successful feedback category. Since unsuccessful feedback detracts from the ability of the chatbot, the unsuccessful task category is assigned a negative weightage (WUF = –0.2). For the successful and fairly successful feedback, the weightages WSF and WFF are 1.0 and 0.6, respectively. Capacity is calculated as follows:

This formula has been designed with the following assumptions:

0 ≤ CapIF ≤ 1.
WSF and WFF take values between 0 and 1.
WSF > WFF: the better the feedback on a conversation, the higher the weightage assigned to the task.
WUF < 0: the weightage for unsuccessful tasks is less than 0 because they negatively affect the ability of the chatbot.
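The capacity formula itself appears only as an image in the original. The sketch below assumes it is a weighted average of the three feedback counts using the weights stated above (WSF = 1.0, WFF = 0.6, WUF = –0.2). Because WUF is negative, the result is clamped to [0, 1] to honor the stated bound. Both the weighted-average form and the clamping are assumptions, and the counts in the example are hypothetical.

```python
def capacity_index(sf: int, ff: int, uf: int,
                   w_sf: float = 1.0, w_ff: float = 0.6, w_uf: float = -0.2) -> float:
    """Capacity (CapI_F) as an assumed weighted average of feedback counts.

    sf, ff, uf: counts of successful, fairly successful and unsuccessful feedback.
    Default weights follow the text: WSF = 1.0, WFF = 0.6, WUF = -0.2.
    """
    total = sf + ff + uf
    if total == 0:
        raise ValueError("SF + FF + UF must be non-zero")
    raw = (w_sf * sf + w_ff * ff + w_uf * uf) / total
    # Assumption: clamp so the negative WUF cannot push the index below 0.
    return max(0.0, min(1.0, raw))


# Hypothetical counts for one user segment.
print(capacity_index(60, 30, 10))
```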

Competence of the chatbot is calculated as the ratio of resolved queries to total queries. The total number of conversations/tasks performed equals the sum of the successful, fairly successful, and unsuccessful categories. Competence is defined as follows:

The relationship has been derived with the following assumptions:

0 ≤ ExpIF ≤ 1. SF > 0, the number of successful tasks is greater than 0. SF + FF + UF ≠ 0.
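A correspondingly hedged sketch of competence (ExpIF) follows. The text defines it as the proportion of resolved queries among all queries but does not state whether fairly successful tasks count as resolved; the sketch assumes only successful tasks (SF) do. It enforces the stated condition SF > 0, which also guarantees SF + FF + UF ≠ 0. The function name is hypothetical.

```python
def competence(sf: int, ff: int, uf: int) -> float:
    """Hypothetical competence factor (ExpIF): share of resolved queries.

    Assumption: only successful tasks (sf) count as resolved; fairly
    successful (ff) and unsuccessful (uf) tasks count only toward the total.
    """
    if sf <= 0:
        raise ValueError("the model assumes SF > 0")
    total = sf + ff + uf  # nonzero because sf > 0
    return sf / total     # lies in (0, 1], satisfying 0 <= ExpIF <= 1


print(competence(6, 3, 1))
```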

Using the capacity and competence formulas above, the Capability Index of the chatbot is measured as follows:

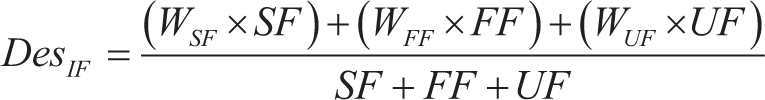

Intention Index

Customers' feedback on their conversations is a useful tool for judging the chatbot's desire. To deliver an excellent experience, the chatbot needs to complete all of its tasks in the successful category; hence, the successful category is given a weight of 1. Because the main purpose of the chatbot is to provide an excellent customer experience, fairly successful and unsuccessful tasks are given negative weights of –0.2 and –0.5, respectively. Desire is calculated as follows:

Commitment is calculated from the chatbot's past experiences, with weights WSF = 1.0, WFF = 0.8, and WUF = 0.2. The commitment of the chatbot is defined as follows:
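To illustrate how differently the two intention sub-components treat the same feedback, the sketch below applies the desire weights (1, –0.2, –0.5) and the commitment weights (1.0, 0.8, 0.2) stated above. Because the desire and commitment equations are not reproduced in this text, it assumes, hypothetically, that each is a weighted average of the feedback counts. The helper `weighted_feedback_score` and the example counts are illustrative only.

```python
# Weights for the two intention sub-components, as stated in the text.
DESIRE_WEIGHTS = {"SF": 1.0, "FF": -0.2, "UF": -0.5}
COMMITMENT_WEIGHTS = {"SF": 1.0, "FF": 0.8, "UF": 0.2}


def weighted_feedback_score(counts: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted average of feedback counts (hypothetical normalization).

    `counts` maps each feedback category (SF, FF, UF) to its number of tasks.
    """
    total = sum(counts[k] for k in weights)
    if total == 0:
        raise ValueError("no tasks recorded")
    return sum(weights[k] * counts[k] for k in weights) / total


counts = {"SF": 6, "FF": 3, "UF": 1}
desire = weighted_feedback_score(counts, DESIRE_WEIGHTS)
commitment = weighted_feedback_score(counts, COMMITMENT_WEIGHTS)
print(f"desire={desire:.2f}, commitment={commitment:.2f}")
```

For six successful, three fairly successful, and one unsuccessful task, this assumed form gives a desire score of 0.49 and a commitment score of 0.86. If every task were unsuccessful, desire would fall to –0.5 while commitment would stay at 0.2: penalizing imperfect outcomes makes desire far more sensitive to them than commitment, which assigns positive weight even to unsuccessful tasks.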

Combining the equations for commitment and desire, the Intention Index is derived as follows:

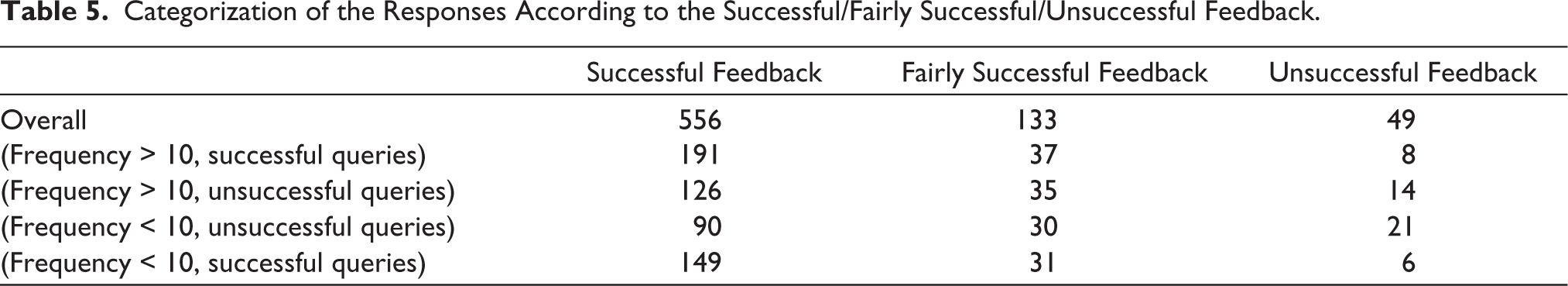

Categorization of the Responses According to the Successful/Fairly Successful/Unsuccessful Feedback.

Results and Findings

Table 5 presents the data obtained after the categorization. These data are then used to calculate the variables involved in measuring the chatbot's trust index.

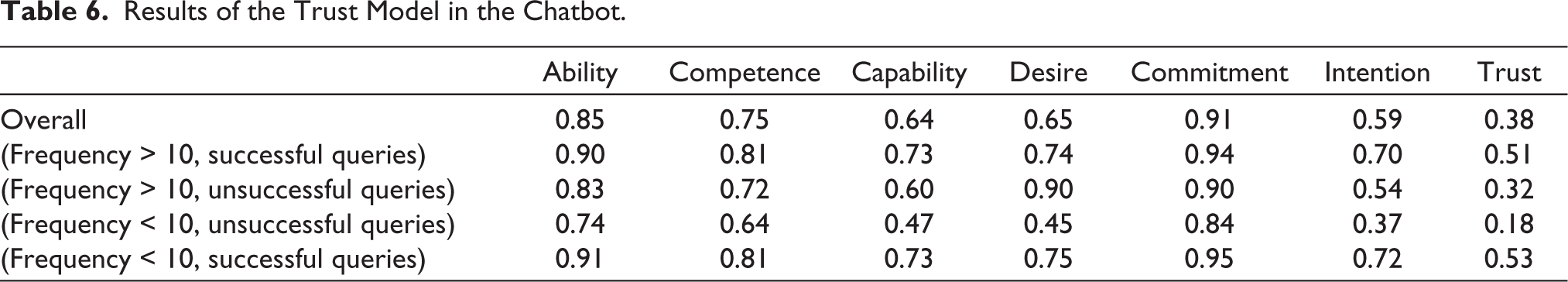

Results of the Trust Model in the Chatbot.

From the responses submitted by the app's users, the cognitive trust model was developed to measure the trust parameters of the app's chatbot. Table 6 presents the results of the calculated trust model. Trust in the chatbot was highest for customers who had interacted with it fewer than 10 times and whose issues had mostly been resolved successfully. The trust value for customers who had interacted with the chatbot more than 10 times and whose queries had mostly been resolved successfully is 0.51, slightly lower than that of customers in segment C4 but higher than all other categories.

Customers who interacted with the chatbot more than 10 times, but whose queries were mostly not resolved successfully, show a lower trust value of 0.32. Customers who interacted with the chatbot fewer than 10 times and had mostly unsuccessful queries had a very low trust value of 0.18. These results show that customers' trust in chatbots is highest when there have been fewer than 10 conversations and the queries are mostly resolved successfully, and lowest when there have been fewer than 10 conversations and the issues are often left unresolved.

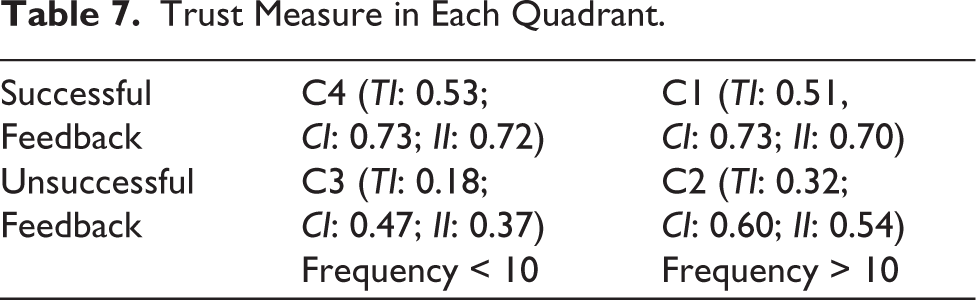

Trust Measure in Each Quadrant.

Table 7 shows the trust measure of the chatbot for each category (C1–C4). When the chatbot successfully resolves users' queries, the trust measure exceeds 0.50 (50%). However, when the chatbot is unable to resolve users' issues, the trust measure drops substantially. The trust measure also varies with interaction frequency. For users who interact fewer than 10 times, the gap between successful and unsuccessful feedback is considerably larger (trust drops from 53% to 18%), whereas for users who interact more often the variation is smaller (from 51% to 32%).
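The size of the two drops can be checked with a few lines of arithmetic using the quadrant values reported above (C1 = 0.51, C2 = 0.32, C3 = 0.18, C4 = 0.53). The snippet, whose names are illustrative, computes the absolute fall in trust from successful to unsuccessful feedback and the fall relative to the successful level.

```python
# Trust measure per quadrant, as reported in the text for Table 7.
TRUST = {"C1": 0.51, "C2": 0.32, "C3": 0.18, "C4": 0.53}


def trust_drop(successful: str, unsuccessful: str) -> tuple[float, float]:
    """Absolute and relative fall in trust between two quadrants."""
    absolute = TRUST[successful] - TRUST[unsuccessful]
    return absolute, absolute / TRUST[successful]


low_abs, low_rel = trust_drop("C4", "C3")    # fewer than 10 interactions
high_abs, high_rel = trust_drop("C1", "C2")  # more than 10 interactions
print(f"<10: {low_abs:.2f} ({low_rel:.0%}); >10: {high_abs:.2f} ({high_rel:.0%})")
```

It prints `<10: 0.35 (66%); >10: 0.19 (37%)`: users with fewer than 10 interactions lose roughly two-thirds of their trust after mostly unsuccessful interactions, compared with a little over a third for frequent users.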

As described in the trust model, the intention index is composed of the desire index and the commitment index and measures the chatbot's inclination to serve clients in the most effective manner. The desire index reflects the chatbot's motivation to complete a conversation with feedback of 9 or 10, that is, in the successful feedback category. In the results, the value of the intention index is low, which in turn lowers the overall trust value of the app's chatbot. These results align with Garvey et al. (2022), who suggested that AI agents lack intentions compared with human agents. Similarly, the Capability Index gives the greatest positive weight to successful feedback. Capability is directly related to successful tasks, so the chatbot's capability increases only when successful tasks clearly outnumber the other two categories.

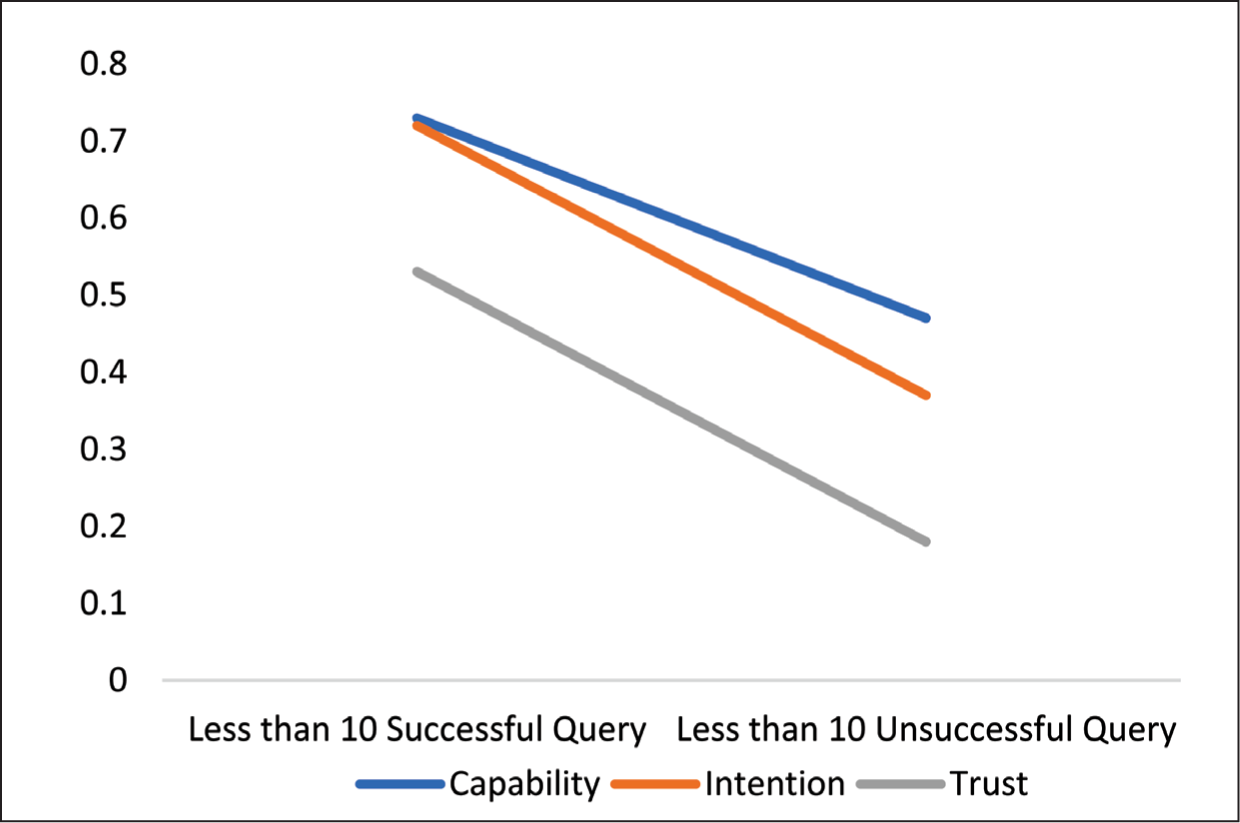

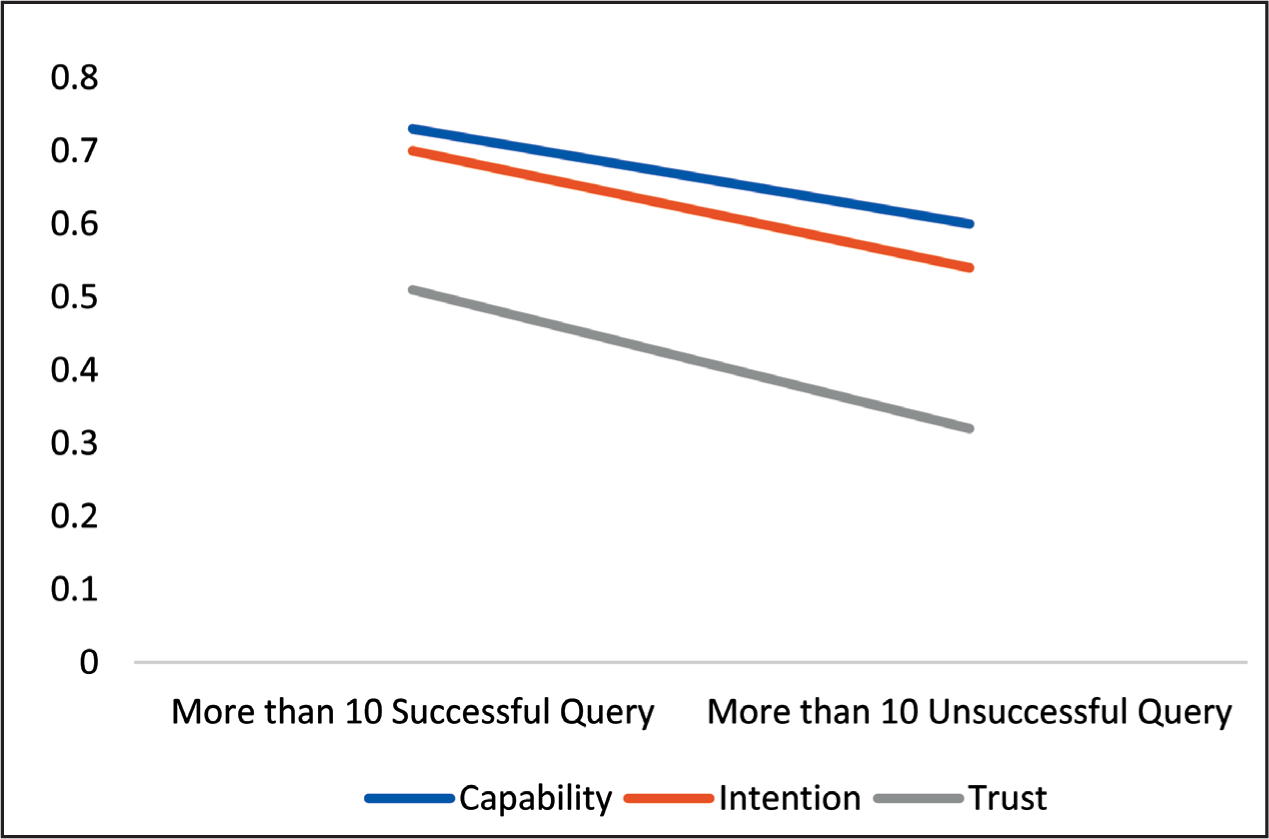

Figure 1 shows the capability, intention, and trust indexes by frequency of interaction. The interrelationship between capability and intention is shown in Figures 2 and 3. In Figure 2 (fewer than 10 queries), all three measures trend downward from successful to unsuccessful feedback, but the intention curve declines more steeply than the capability curve. The same trend appears for more than 10 conversations (Figure 3), although the declines are steeper in Figure 2 than in Figure 3. In both cases, intention declines more than capability, which suggests that capability mediates the relationship between intention and trust.

Three-way Interaction Between Capability, Intention, and Trust for Less Than 10 Queries.

Three-way Interaction Between Capability, Intention, and Trust for More Than 10 Queries.

Our analysis provides support for all three hypotheses. Regarding H1, trust differed by interaction frequency: among users whose queries were mostly unsuccessful, the trust index was 0.32 for those with more than 10 interactions versus 0.18 for those with fewer than 10, while among users with mostly successful queries the indexes were similar (0.51 vs. 0.53). This indicates that interaction frequency affects trust, particularly after unsuccessful interactions. For H2, the substantial difference in trust indices between successful and unsuccessful interactions (0.53 vs. 0.18 for fewer than 10 interactions; 0.51 vs. 0.32 for more than 10 interactions) strongly supports our hypothesis that interaction success has a major impact on trust formation. Finally, supporting H3, the intention dimension showed greater variance across user groups than the capability dimension, consistent with its stronger relationship with overall trust in this context.

Discussion and Implications

Discussion

Unlike general AI trust models, our cognitive trust model specifically captures the dynamics of travel planning, where users engage with chatbots in multi-step processes that require both information provision and decision support. Our findings extend service robot trust models (Chi et al., 2021) by highlighting the critical role of perceived desire in contexts where physical embodiment is absent. Similarly, our model advances the understanding of trust in e-commerce chatbots by showing how trust evolves differently depending on interaction frequency in the travel domain. In sum, this article has developed and explored a cognitive trust model for chatbots built from the core components of capability and intention, as detected from user feedback. By examining user interactions and perceptions, we uncovered the subtle interplay between a chatbot's demonstrated capabilities and its perceived intentions in creating cognitive trust.

Users with fewer than 10 interactions reported more trust when their interactions were successful than when they were unsuccessful (see Table 7). Examining this result revealed that, in both cases of fewer than 10 interactions (successful and unsuccessful), the perceived capability of the chatbot and its perceived intention toward the query varied. Among the sub-components of capability and intention, perceived desire emerged as a key outlier with a major impact on the cognitive trust index. This indicates that a small number of interactions with OTB chatbots is not an effective barometer for evaluating trust development. However, positive interactions with chatbots are associated with cognitive trust development and satisfaction (Shahzad et al., 2024). Businesses investing heavily in technologies for competitive advantage should focus on repeated successful interactions (Fang, 2019) to develop cognitive trust, which leads to purchase intention through technology usage (Mkedder et al., 2024). According to the push–pull mooring framework (Moon, 1995), successful early interactions are critical for discouraging users' switching behavior, because they strengthen pull factors by providing pertinent responses anywhere and anytime (Li & Zhang, 2023). This would increase initial cognitive trust, a known factor in automation acceptance (Huang et al., 2024), in human–chatbot interaction and lead to the development of behavioral intention to use the platform (Li & Zhang, 2023). Our findings both align with and extend existing HCI trust models. While our capability dimension parallels elements in previous frameworks, our intention dimension captures elements not fully addressed in traditional HCI approaches. Specifically, the pronounced variance between intention and capability indices in our results suggests that travel contexts may require greater emphasis on perceived intentions than general technological interactions, where capability often dominates trust formation (Glikson & Woolley, 2020).

For users with more than 10 interactions, similar results were obtained, with lower variance in capability, intention, and the trust index. After 10 interactions, the trust index declined more slowly when queries were unsuccessful (see Figures 2 and 3). Thus, once human–chatbot interaction crosses the threshold of 10 interactions, cognitive trust levels are less sensitive to fluctuations, and a few unsuccessful interactions are tolerated. A growing number of interactions shapes individual behavior and makes users more inclined to use chatbots (Li & Zhang, 2023). The results suggest that frequency of usage is associated with a more stable cognitive trust index, which may support repeat usage and lower switching behavior through the moderation of pull factors. This is in line with research on technology-mediated consumer services explaining why users prefer chatbot-based consumer service and opt for it over human agents (Abou-Foul et al., 2023; Li & Zhang, 2023; Rzepka et al., 2022).

The observed decline in trust after unsuccessful interactions aligns with expectation-confirmation theory, in which negative disconfirmation of expectations has a more pronounced effect than confirmation. In chatbot interactions, users likely begin with baseline expectations of competence; when unsuccessful interactions violate these expectations, trust erodes rapidly. This effect is particularly pronounced for users with fewer than 10 interactions because they lack the stabilizing influence of a longer interaction history.

Our findings align with Shahzad et al. (2024), who found that repeated interaction with chatbots providing accurate responses increases cognitive trust, leading to repeat usage. Similarly, our observation of trust resilience after multiple interactions echoes Mozafari et al.'s (2022) findings on trust stabilization patterns. However, our study extends these works by quantifying the specific impact of interaction frequency on trust dimensions within the travel context. The strong influence of perceived desire on the trust index suggests that chatbot personality design may be particularly important in travel contexts. Chatbots that demonstrate enthusiasm for helping with travel planning through appropriate language and tone may enhance perceived desire and thereby strengthen overall trust. Customization that aligns the chatbot's communication style with user preferences could further enhance the intention dimension, particularly for users with fewer than 10 interactions, for whom trust appears most malleable.

Implications

Theoretical Implications

The present study has proposed a cognitive trust model to calculate the trust value of chatbots through consumer feedback during online travel booking. This study adds to the literature in the domain of technology-mediated consumer services, chatbot interactions, and service quality (Choi & Zhou, 2023; Shahzad et al., 2024). Through this, the study offers several contributions to the literature on human–chatbot interaction.

First, the study's novelty lies in the developed model, which uses consumers' feedback on their interactions with chatbots to gauge their level of cognitive trust. Borghi and Mariani (2023) used customer feedback to understand the impact of service robots' performance on user satisfaction. Similarly, user feedback has helped in understanding satisfaction with mobile apps (Kumar et al., 2023). Research has also examined the importance of user feedback for information multidimensionality (Wang, Du, & Wang, 2023) and its relation to firm performance (Agag et al., 2023). However, based on a review of the literature and to the best of the authors' knowledge, no study has used consumer reviews to capture cognitive trust levels in chatbot interaction. This study seeks to fill this gap through the proposed cognitive trust model.

Second, the study responds to researchers' calls to understand the role of human–chatbot interaction in multidimensional trust development specifically (Behera et al., 2021; Li et al., 2023; Wang, Li, et al., 2023). This study extends and re-emphasizes the importance of initial customers in trust development toward an application or information system (Chao, 2019). The article contributes to the theoretical understanding of trust formation in the context of travel chatbots, shedding light on how users evaluate capabilities and intentions, which are fundamental elements in establishing trust (Zhang, Anjum, & Wang, 2023).

Third, the study extends cognitive trust models in human–computer interaction and offers insights into considerations unique to travel-oriented applications. Understanding how users develop trust in chatbots involves examining the cognitive processes underlying their decision-making (Dwivedi et al., 2023). Expanding the cognitive chatbot literature (Behera et al., 2021; Dubey & Kumar, 2019) through an exploration of capability and intention opens further avenues for understanding trust development in AI agents such as chatbots (Choung et al., 2023; Li et al., 2023).

Fourth, understanding the relationship between desire and intention through feedback on user–chatbot interactions adds to the nascent but growing domain of AI–human adoption, acceptance, and reuse intention. Desire, which has the strongest influence on consumer propensity (Wilkins et al., 2023), has been found to influence user trust in the acceptance and usage of technology (Teng et al., 2023). In addition, the emphasis on the critical role of feedback from the initial 10 interactions introduces a novel aspect of trust research. The article could prompt theoretical discussion of the importance of early user experiences in shaping the overall trustworthiness of a system (Choung et al., 2023). Successful chatbot interactions are driven by competence, reliability, and transparency and are captured through users' perceptions of chatbot service quality, trust, and overall experience (Shahzad et al., 2024). Researchers have widely used customer feedback metrics, such as net promoter score, customer effort score, and customer satisfaction, to monitor, evaluate, benchmark, and quantify firm or technology performance (Abdelmoety et al., 2022; Agag et al., 2023). Customer feedback is equally, if not more, important for the online travel industry, where it drives repeat visits and recommendations that increase average revenue per user through the development of cognitive trust (Tripathi & Wasan, 2021).

Lastly, in addition to the cognitive trust index, the study provides two further indexes, the capability index and the intention index, which add to the literature on both chatbots and cognitive trust theories. Cognitive trust models have been proposed for B2B e-marketplaces (Kumar & Mohite, 2015) and industrial cyberintelligence (Wang & Nazir, 2023), but not for online travel chatbots to capture consumers' propensity to use them. Studies have explored the impact of chatbot interaction with consumers from the viewpoints of customer experience (de Oliveira et al., 2023), the customer journey (Ameen et al., 2022), flow experience in e-tailing (Silva et al., 2022), and creepiness (Rajaobelina et al., 2021). Although interest in trust in human–chatbot interactions is growing, multiple studies call for further exploration of the trust factor in the tourism industry (Følstad et al., 2021; Hsiao & Chen, 2022; Mostafa & Kasamani, 2022). This study addresses this gap by exploring the factors of cognitive trust.

Managerial Implications

The findings of this study on the cognitive trust model for chatbots have important managerial implications for organizations and developers deploying and improving chatbot systems. Managers should prioritize improving chatbot capabilities and intentions throughout the initial exchanges. When organizations invest heavily in technology (Fang, 2019), chatbots with medium to low humanness require initial trust built on early usage experience (Ameen et al., 2022). Because initial trust and adoption of chatbots remain a major challenge (Mogaji et al., 2021), organizations should set up ways to track user feedback and iteratively improve chatbot skills and intentions to retain favorable user perceptions over time. This would discourage early switching behavior by strengthening pull factors through pertinent responses anywhere and anytime (Li & Zhang, 2023) and by increasing initial cognitive trust, a known factor in automation acceptance (Huang et al., 2024).

Based on our findings, we recommend specific design strategies for travel chatbot developers. First, since trust is highest during initial interactions (<10) with successful outcomes, developers should prioritize accuracy and relevance in these early exchanges by implementing comprehensive knowledge bases focused on common travel queries. Second, given the critical role of perceived desire in trust formation, chatbots should be programmed to explicitly communicate their commitment to helping users through phrases such as “I’m here to help you plan the perfect trip” or “Let me find the best options for you”. Third, to address the significant trust decline after unsuccessful interactions, especially among new users, chatbots should implement graceful failure handling mechanisms that maintain perceived intention even when capability falls short—for example, by acknowledging limitations while offering alternative solutions: “I don’t have information on that specific resort, but I can show you similar highly-rated options nearby.” Finally, our finding that trust stabilizes after 10 interactions suggests implementing progressive personalization features that visibly adapt to user preferences over time, reinforcing both capability and intention perceptions.

Furthermore, there is only a 50% probability that chatbots will achieve AI awareness by 2050, indicating that this technology is still evolving (Sidlauskiene et al., 2023). Repeated interaction increases cognitive trust and shapes individual behavior, making users more inclined to use chatbots (Li & Zhang, 2023) and leading to repeat usage and satisfaction (Shahzad et al., 2024) as well as purchase intention through technology usage (Mkedder et al., 2024). This would increase human–chatbot interaction and foster behavioral intention to use the platform (Li & Zhang, 2023). Therefore, businesses should develop engagement techniques that go beyond first contacts, emphasizing long-term positive experiences to build cognitive trust. Prioritizing user-centric design and maintaining transparency in chatbot operations can improve perceived capabilities and intentions. Users are more inclined to trust a chatbot that communicates clearly, offers relevant information, and performs activities consistent with their expectations.

The slower fall in the trust index after 10 contacts, even for unsuccessful queries, emphasizes the importance of ongoing monitoring and adaptation. Because perceived desire is a significant outlier influencing the trust index, managers should concentrate on understanding and addressing user perceptions of the chatbot's readiness to help. Managers should also invest in user education so that users understand the chatbot's capabilities and limits. Clearly communicating what users can expect from the chatbot, and moderating their expectations, can favorably affect perceived capability and intention, ultimately contributing to cognitive trust. The slower decline in trust after 10 contacts also suggests potential for long-term relationships with users.

Limitations

This study has several limitations. First, the cognitive trust model is domain specific, and its usefulness may differ depending on the type of interactions and user expectations in other settings. The focus on quantitative data, such as user feedback scores and interaction counts, limits our understanding of the qualitative aspects of trust development. Our reliance on self-reported data introduces potential biases, including social desirability bias and recall inaccuracies. While we implemented methodological controls such as anonymous response collection and a focus on recent interactions, future research could triangulate self-reported trust with behavioral measures to strengthen validity. Future studies can use qualitative approaches, such as interviews or focus groups, to gain more detailed insights into user opinions and experiences. Second, the study depends heavily on user feedback gathered at discrete times, which may neglect the changing nature of user perceptions. Future studies could investigate real-time feedback methods to capture changing user sentiment during ongoing interactions with chatbots. External factors influencing user trust, such as cultural variations or individual user characteristics, are not thoroughly investigated in this study; future research could investigate how these factors influence cognitive trust formation in chatbot encounters. Third, the study focuses on user trust within the first 10 contacts and does not examine in detail how trust dynamics evolve beyond them. Further research could focus on how cognitive trust develops and evolves over time. Fourth, the study examines only cognitive trust in chatbots. Future research can apply this model to other technology-mediated online consumer services to test its generalizability. Lastly, the study's sample is limited to millennials and generation Z in the Indian context. Future studies can apply this model to compare the demographic and psychographic profiles of chatbot users.

Future Research

Future research could develop dynamic trust models that respond to changing user interactions. Incorporating machine learning techniques to continuously refine the chatbot's skills and intentions in response to user feedback may improve the cognitive trust model's responsiveness. Complementing quantitative studies with qualitative methods, such as user interviews and sentiment analysis of open-ended comments, can provide a more nuanced picture of how users build trust and reveal insights that quantitative data alone may miss. Investigating how cultural differences influence perceived chatbot trustworthiness can help in developing culturally sensitive chatbot designs, and comparative studies across cultural contexts can provide insights into both universal and context-specific trust-building mechanisms. Understanding how user trust fluctuates beyond initial interactions, and identifying the variables that contribute to sustained or declining trust, can inform long-term engagement strategies. Incorporating explainable AI techniques can improve transparency in chatbot decision-making by helping users understand how the chatbot arrives at specific responses; this, in turn, can boost perceived capability and intention and thus cognitive trust. These considerations may also prompt further exploration of methodologies for early user testing and feedback collection in the development of chatbot applications. Finally, longitudinal studies tracking users over extended periods could examine the long-term evolution of trust beyond our observed 10-interaction threshold, revealing whether trust continues to stabilize or eventually reaches inflection points where different dynamics emerge. Such research would be particularly valuable for understanding how persistent trust can be maintained throughout the customer relationship life cycle.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.