Abstract

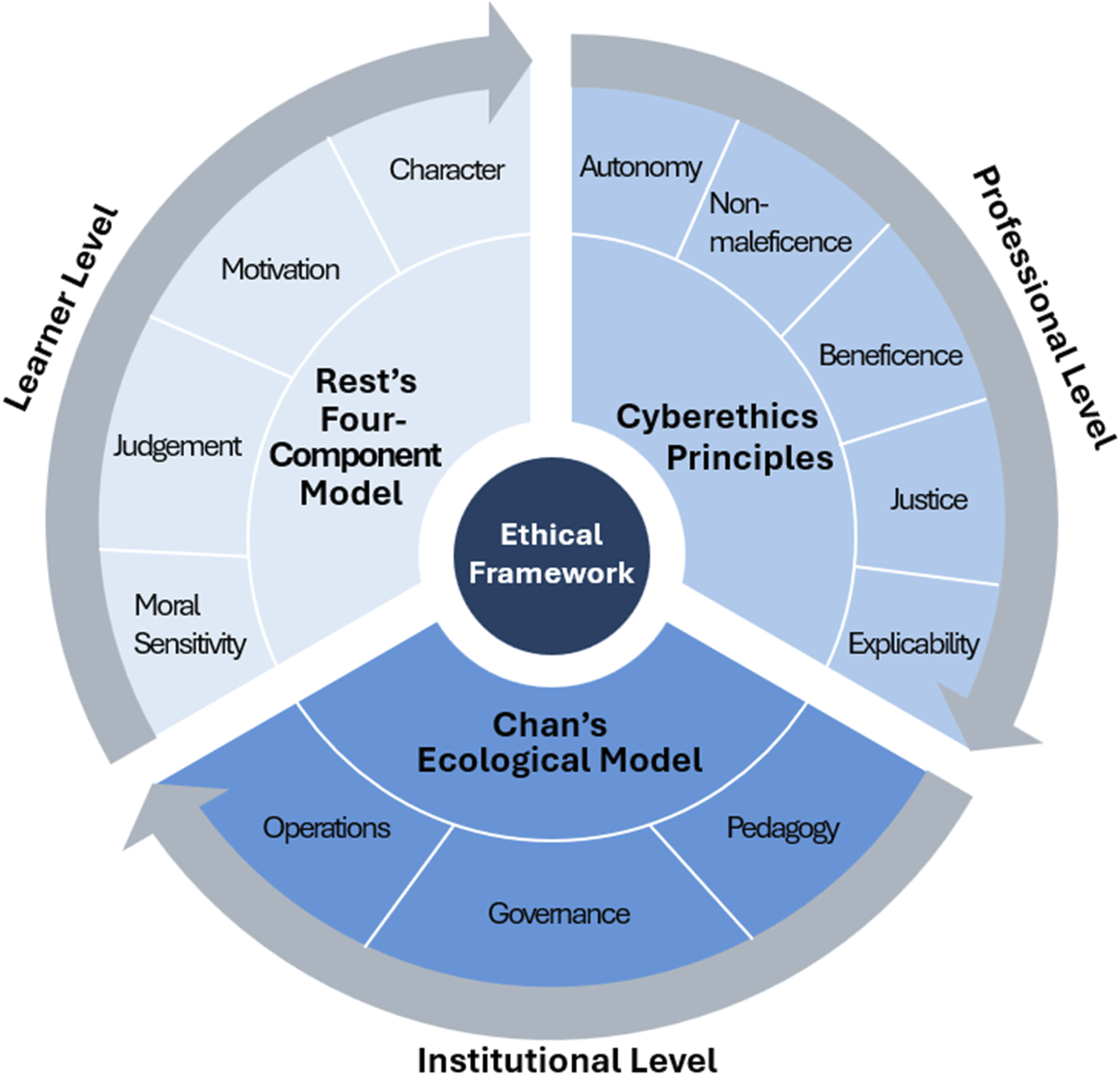

The rapid adoption of generative artificial intelligence (GenAI) in nursing education presents urgent ethical challenges, particularly as students employ these tools in high-stakes clinical prioritization tasks. Although general AI literacy initiatives exist, nursing-specific resources for addressing risks of bias, inequity, and misinformation in GenAI-mediated decision making remain limited. This study adapts an interdisciplinary, open-access AI ethics learning toolkit to develop a conceptual, nursing-focused mini-toolkit. The adaptation integrates three complementary ethical frameworks: Rest’s Four-Component Model at the learner level (moral sensitivity, judgment, motivation, and character), cyberethics principles at the professional level (autonomy, nonmaleficence, beneficence, justice, and explicability), and Chan’s ecological model at the institutional level (pedagogy, governance, and operations). Collectively, these frameworks scaffold ethical reasoning across individual, professional, and systemic domains. The resulting mini-toolkit includes case vignettes that simulate GenAI-supported prioritization scenarios, reflection prompts to cultivate bias recognition and accountability, and rubric criteria to guide faculty in assessing ethical reasoning and oversight. Rather than prescribing fixed answers, the toolkit creates structured opportunities for dialogue, inquiry, and professional judgment. By embedding ethical reasoning into prioritization pedagogy, the toolkit positions faculty and students as critical evaluators rather than passive users of GenAI, reinforcing nursing’s commitment to equity, justice, and patient-centered care. Situated within a broader global movement toward responsible and human-centered AI integration, this study contributes to nursing ethics by translating abstract principles into pedagogically actionable tools and modeling a methodology for adapting interdisciplinary frameworks into nursing-specific applications. 
Future work should pilot and evaluate the toolkit in authentic educational contexts, examining its impact on ethical reasoning, bias recognition, and collaborative decision making.

Introduction

Generative artificial intelligence (GenAI) is reshaping education and professional practice by providing immediate access to knowledge, language support, and adaptive learning experiences.1,2 Large language models (LLMs) such as ChatGPT offer students new ways to explore clinical reasoning, but outputs may lack contextual accuracy and contain bias.3–5 Reliance on unvalidated outputs may normalize bias, erode professional integrity, and weaken commitments to justice in patient care. These risks are especially consequential in clinical prioritization and triage, where decisions determine who receives care first under conditions of uncertainty. 6

Clinical prioritization is a recognized nursing competency that is often taught through multipatient simulation and structured frameworks. 7 Unlike procedural skills, it requires distributive justice and moral discernment. Because LLMs are not designed to support clinical reasoning or ethical triage, 8 nursing curricula must prepare students to engage with GenAI critically and ethically while recognizing both its risks and potential learning value.

A central challenge in nursing education is determining how ethical and pedagogical frameworks can guide student use of GenAI in clinical prioritization practice, given risks related to bias, accuracy limitations, and misuse. To address this challenge, this paper presents a conceptual adaptation of an interdisciplinary AI ethics learning toolkit into a nursing-focused resource for GenAI-mediated prioritization tasks. The adaptation is intended to foster critical appraisal, bias recognition, and human oversight, while supporting faculty in aligning prioritization pedagogy with nursing commitments to equity, justice, and patient-centered care.

Background

A growing body of literature identifies GenAI as both a disruptive force and a pedagogical opportunity in higher education. Chen et al. 1 found that students use ChatGPT to expand perspectives and support collaborative knowledge building, although awareness of bias and accuracy limitations remains limited. Ng et al. 9 noted that most AI literacy initiatives have targeted computer science learners, leaving nursing and other health professions underserved. Mao et al. 10 and Bozkurt et al. 11 further documented how GenAI challenges traditional assessment practices and compels institutions to rethink teaching and evaluation. In response, policy frameworks such as Chan’s ecological model 12 and Laine et al.’s expansion of ethical principles 13 highlight the importance of transparency, governance, and explicit ethical alignment. These calls for oversight are reinforced by empirical evidence that GenAI can reproduce existing inequities, underscoring the risks of uncritical adoption.

Algorithmic bias and equity

The persistence of algorithmic bias has been demonstrated across multiple domains. An et al. 14 showed that LLMs disadvantaged Black male applicants in resume evaluations, illustrating how inequities can persist in advanced systems. Jumreornvong et al. 15 synthesized evidence of sex, race, socioeconomic, and statistical biases in AI used for pain management and other clinical domains, demonstrating how flawed training data and constrained evaluation processes can perpetuate inequities in patient care. At a broader level, the 2025 AI Index Report documented record-setting adoption rates alongside increased reports of bias and safety incidents, underscoring the need for vigilant governance at scale. 16 UNESCO 17 similarly emphasized that life-and-death decisions in healthcare must remain under human oversight, framing justice as a non-negotiable principle in AI-mediated clinical contexts.

Prioritization as an ethical stress test

Clinical prioritization differs from other nursing tasks in terms of its ethical stakes. Whereas documentation, technical procedures, or medication calculation rely primarily on precision and technical accuracy, prioritization requires moral discernment about how limited time and resources should be allocated among multiple patients.18,19 Research on rationing and unfinished nursing care suggests that these decisions are often implicit and shaped by time scarcity and resource limitations. 18 Suhonen et al., 19 in a scoping review, further demonstrated that priority-setting is frequently value-laden and influenced by professional norms, patient characteristics, and organizational contexts. Bierer and Truog 6 emphasized that prioritization inherently involves distributive justice, as decisions about “who first” or “who waits” directly affect patient outcomes under conditions of scarcity. When GenAI is introduced into prioritization exercises, these ethical stakes become even more pronounced. Prioritization mediated by GenAI therefore functions as an ethical stress test in nursing education. Algorithmic bias and accuracy limitations can shape prioritization recommendations in ways that appear clinically reasonable but lead to inequitable decisions. These risks underscore the need for an ethics toolkit that helps students evaluate GenAI outputs with appropriate oversight.

Ethical engagement and faculty readiness

Scholars have moved beyond risk identification and are advancing structured approaches for ethical engagement with GenAI. Sengul et al., 5 drawing on Rest’s Four-Component Model, 20 describe how moral sensitivity, judgment, motivation, and character can guide responses to bias, privacy, and academic integrity concerns. De Gagne et al. 4 extend this work by articulating cyberethics principles (autonomy, nonmaleficence, beneficence, justice, explicability) as practical touchstones for responsible adoption and educator role modeling in digital environments. Cary et al. 3 integrate AI literacy, cultural humility, and cyberethics, positioning nurses as critical evaluators of GenAI and as advocates for equity when algorithmic systems risk amplifying health disparities. Together, these contributions underscore the need to embed ethical reasoning within pedagogical design as a core component of GenAI use. Empirical studies, however, show variability in faculty readiness for GenAI integration. Ehmke et al. 21 identified significant skills gaps among U.S. nursing faculty, highlighting the need for institutional investment in training and support. Summers and Lee 22 reported ongoing ambiguity in Australia about whether student use constitutes misconduct, reflecting inconsistent institutional policies. Saleh et al. 23 similarly found that faculty in Jordan and the United States recognized GenAI’s instructional potential but raised concerns about misinformation, reduced interaction, and ethical misuse. Studies in South Korea also described cautious optimism alongside persistent concerns about accuracy, fairness, and professionalism.24,25 Collectively, these findings suggest that supporting ethical student engagement with GenAI is a shared challenge and a global pedagogical priority.

The gap in existing toolkits

Despite the growing availability of general AI literacy resources and broad ethical guidelines, nursing-focused GenAI ethics toolkits that explicitly guide student use during clinical prioritization remain limited. Existing frameworks, such as the CHECK approach 26 and the Practical Intelligence Generative AI Toolkit for Nurse Educators, 27 provide valuable guidance for responsible integration of GenAI into curriculum design, assignment development, and scholarship. Vanderlaan et al.’s toolkit, 27 for example, emphasizes practical teaching applications such as course planning, lesson design, simulation co-creation, and student engagement, while promoting transparency, error tolerance, and ethical awareness. However, these resources do not provide context-specific guidance for student use of GenAI in high-stakes prioritization scenarios where distributive ethics and patient outcomes are directly implicated. As a result, learners may engage with GenAI in prioritization exercises without structured support for evaluating its recommendations when equity and fairness are central to decision making. This mini-toolkit fills a gap in existing nursing resources by translating broad ethics guidance into prioritization-specific teaching tools for structured student engagement with GenAI.

Methodology: Conceptual adaptation approach

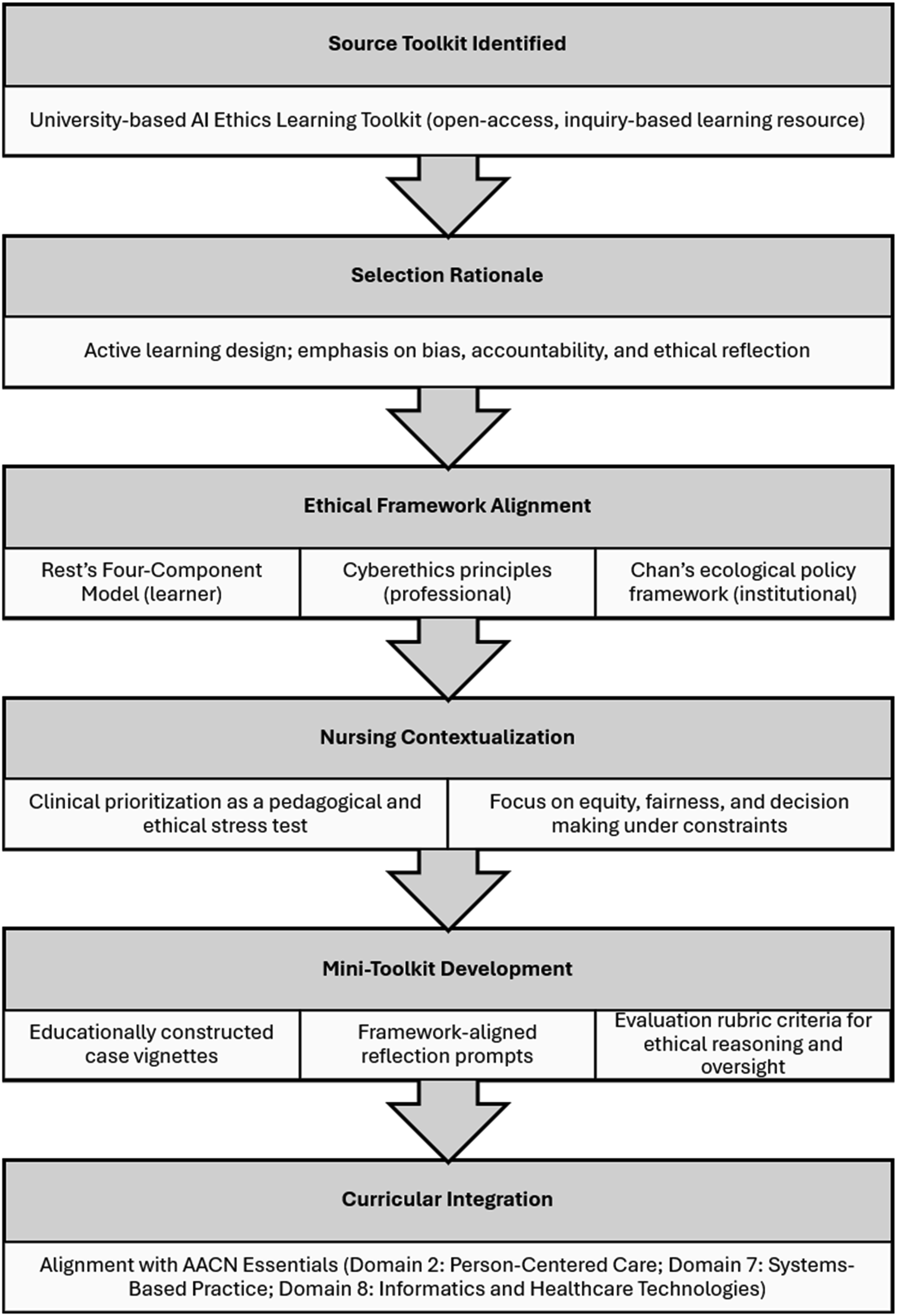

This study employs a conceptual adaptation method to examine how pedagogical and ethical frameworks can guide learner engagement with GenAI during clinical prioritization. Because the focus is on pedagogy, ethics, and curricular design, a conceptual approach is appropriate for adapting existing resources and developing context-specific educational applications. The mini-toolkit is intended primarily for pre-licensure (entry-to-practice) nursing students and can be adapted for advanced levels by increasing clinical complexity and the ethical demands of the scenarios. All clinical scenarios were educationally constructed for teaching purposes and were not derived from identifiable real patient cases. Figure 1 summarizes the conceptual adaptation process from toolkit selection to development of the nursing-focused mini-toolkit components.

Figure 1. Conceptual adaptation process for developing a nursing-focused GenAI ethics mini-toolkit for clinical prioritization.

Pedagogical foundation

The Duke AI Ethics Learning Toolkit 28 was selected as the pedagogical foundation because it is an active learning resource rather than a static policy guide. Organized around conversation prompts, guided inquiry, and applied activities, the Toolkit fosters critical engagement with AI systems and supports inquiry-based pedagogy. Although it was originally designed for interdisciplinary contexts, its emphasis on discussion of bias, accountability, and social impact aligns closely with clinical prioritization in nursing. Students are given scenarios in which they must not only determine an order of care but also consider the ethical implications of their decisions. GenAI outputs cannot be evaluated on technical accuracy alone; they must be appraised through the lenses of justice, equity, and professional responsibility. By embedding reflection and ethical reasoning into active learning, the Duke toolkit offers a flexible framework that can be meaningfully adapted to prepare nursing students for critical engagement with GenAI in prioritization practice.

Ethical framework foundations

The mini-toolkit was anchored in ethical frameworks that operate across learner, professional, and institutional levels, thus allowing broader ethical principles to be translated into actionable teaching strategies. Laine et al. 13 synthesized emerging concepts such as intellectual property, sociocultural responsibility, and human-centric design, offering an important overview of the ethical landscape of GenAI. For nursing education, however, more directly applicable models are required to guide teaching and learning. At the learner level, Rest’s Four-Component Model 20 informed the design of prompts that cultivate moral sensitivity, judgment, motivation, and character, particularly in evaluating fairness and bias in GenAI outputs. At the professional level, the cyberethics principles articulated by De Gagne et al. 4 grounded scenarios and rubrics in core nursing values of autonomy, nonmaleficence, beneficence, justice, and explicability. At the institutional level, Chan’s ecological policy framework 12 emphasized faculty responsibility, governance policies, and operational supports that sustain ethical practice. Collectively, these frameworks provided an integrated foundation that linked ethical reasoning with both classroom pedagogy and professional nursing practice. Figure 2 illustrates the integration of these three frameworks, depicting their alignment across learner, professional, and institutional levels within the nursing GenAI ethics context.

Figure 2. Multilevel ethical framework guiding the nursing GenAI ethics mini-toolkit.

Structure of the mini-toolkit

The mini-toolkit is structured around three instructional elements designed to promote critical engagement with GenAI during prioritization exercises. First, case-based vignettes present GenAI-generated prioritization orders, prompting students to evaluate the reasoning involved, identify potential bias, and, when appropriate, consider alternative decisions grounded in ethical reasoning. Second, reflection prompts are aligned with ethical frameworks to cultivate moral sensitivity, fairness, and professional accountability, with some prompts extending to systemic dimensions of equity and institutional responsibility. Third, evaluation rubrics provide faculty with criteria to assess student engagement by focusing on bias recognition, articulation of ethical reasoning, and evidence of human oversight in decision making. To enhance curricular relevance, the mini-toolkit is aligned with the American Association of Colleges of Nursing (AACN) Essentials, 29 which outline a competency-based model of nursing education; specifically, it addresses Domain 2 (Person-Centered Care), Domain 7 (Systems-Based Practice), and Domain 8 (Informatics and Healthcare Technologies).

Application: Nursing-focused mini-toolkit

For this initial version, three core topics were selected and adapted from the Duke AI Ethics Learning Toolkit: “Can we trust AI?”, “Is AI biased?”, and “Who benefits from AI?” 28 These topics were prioritized because they capture ethical concerns most relevant to clinical prioritization, including trust in decision outputs, recognition of bias, and questions of equity. Each retains the original overview from the Duke toolkit but has been reframed for nursing contexts and extended into case vignettes, reflection prompts, and sample rubric criteria. Collectively, they demonstrate how GenAI ethics can be translated into prioritization pedagogy. To illustrate the structure and pedagogical application, Topic A: “Can We Trust AI?” is presented below in full; the complete mini-toolkit, including Topics B (“Is AI Biased?”) and C (“Who Benefits from AI?”), is provided in Appendix I as supplementary material, with each topic functioning as a standalone teaching resource.

Implementation guidance

The nursing-focused mini-toolkit is designed for flexibility across curricular contexts. Faculty can integrate its topics and vignettes into multipatient simulations, use reflection prompts in pre-briefing or debriefing, or adapt materials for small-group classroom discussions and post-conference practicums. Rubric criteria may serve as guides for peer or instructor evaluation of student reasoning. To accommodate varying levels of faculty expertise, the toolkit offers multiple entry points: those new to GenAI may use prompts and rubrics without engaging AI tools directly; experienced users may incorporate live prompting into classroom or simulation activities. Across these applications, the emphasis remains on human oversight, ethical reasoning, and professional accountability. Embedding the mini-toolkit within established teaching practices allows programs to foster critical engagement with GenAI while supporting competency-based education outcomes outlined in the AACN Essentials.

Discussion

This discussion examines the pedagogical, ethical, and professional significance of the nursing-focused mini-toolkit and considers implications for curriculum design, faculty development, and interdisciplinary adaptation. Although this conceptual adaptation represents an initial pilot rather than a validated intervention, it demonstrates how ethical reasoning can be embedded into prioritization pedagogy to support critical appraisal of GenAI-supported decision making. The following sections synthesize the toolkit’s educational relevance, professional implications, global alignment, scalability, implementation considerations, and directions for future research.

Pedagogical integration and value

The nursing-focused mini-toolkit can complement existing teaching practices by embedding ethical reasoning into multipatient simulation and prioritization exercises. These activities often emphasize clinical judgment under conditions of scarcity. Integrating GenAI outputs adds structured reflection that prompts learners to examine trust, bias, and equity alongside clinical reasoning. For example, a GenAI-generated prioritization list may place a younger postoperative patient ahead of an older adult with comparable symptoms, reinforcing age-based bias if accepted without scrutiny. Discussing such cases helps learners recognize how value judgments can be embedded in algorithmic reasoning and strengthens their ability to justify counterarguments grounded in patient-centered care. A variety of strategies, including individual reflection, partner activities, and group decision making, can scaffold ethical reasoning while mirroring the collaborative demands of practice. This approach aligns with the NLN Vision Statement, Artificial Intelligence in Nursing Education, 30 which calls for curricula that foster AI literacy, ethical engagement, and faculty readiness. In this way, GenAI shifts from an unstructured risk to a structured opportunity for guided, values-based inquiry.

Ethical and professional engagement

Grounded in Rest’s Four-Component Model, 20 cyberethics principles, 4 Chan’s ecological policy framework, 12 and the AACN Essentials, 29 the mini-toolkit connects ethical reasoning with pedagogy across learner, professional, and institutional levels. In practice, these frameworks may generate productive tensions. For example, a survival-focused triage rationale may prioritize physiological stability (Chan’s operational dimension), while equity-driven reasoning foregrounds the needs of vulnerable populations (cyberethics principle of justice). By incorporating structured reflection prompts and rubric criteria, the toolkit supports learners in examining competing values and articulating ethical rationales. Faculty responsibility remains central; the toolkit supports professional accountability by emphasizing transparency, oversight, and responsible integration of GenAI into clinical education. These elements are especially important given concerns that AI systems can function as “black boxes,” limiting transparency and assigning undue authority to algorithmic recommendations rather than human judgment. 31

Global context and ethical imperatives

Global analyses suggest that challenges in nursing education reflect broader trends in rapid GenAI adoption. The AI Index Report 2025 documents record-setting growth of GenAI in education and healthcare alongside increased reporting of bias incidents and accountability failures. 16 These findings point to a widening gap between technological capacity and ethical preparedness, suggesting that adoption has outpaced discipline-specific safeguards. In nursing, the stakes are heightened because biased or flawed reasoning in AI-generated recommendations may influence patient outcomes. Ooi et al. 32 similarly frame GenAI as a cross-disciplinary innovation with substantial opportunity and risk, and they identify healthcare and education as particularly vulnerable to misuse and overreliance. They argue that ethical engagement must extend beyond theory to include applied strategies that strengthen critical evaluation and human oversight. This mini-toolkit advances this aim by translating ethical frameworks into pedagogically actionable tools that support moral discernment and reflective judgment in AI-mediated decision making. Positioned within broader calls for transparent and human-centered AI, it offers a practical model for operationalizing ethics in prioritization pedagogy.

Adaptability and scalability

The mini-toolkit’s modular design supports adaptation across educational contexts. Although developed for pre-licensure learners and diverse clinical prioritization situations, its components can be tailored for graduate and continuing education by increasing clinical complexity, contextual ambiguity, and the ethical demands of the scenarios. The toolkit can also extend to interprofessional learning, where students in health professions, social work, and public policy examine shared ethical challenges in AI-mediated decision making. This flexibility aligns with the NLN’s emphasis 30 on competency-based and context-responsive approaches to AI literacy. Scalability, however, depends on sustained implementation. Meaningful adoption requires faculty development, simulation infrastructure, and ongoing ethical dialogue to preserve conceptual integrity across settings. Accordingly, scalability is best viewed as an iterative contextual translation, supported by evaluation and collaboration across disciplines.

Faculty development and institutional integration

Gaps in faculty readiness remain a barrier to responsible GenAI integration. Studies report uncertainty about whether student use constitutes innovation or misconduct, with variation across regions and institutions.21–24 The mini-toolkit addresses this challenge by offering flexible entry points: faculty may begin with reflection prompts and rubrics without direct AI use, and those with greater expertise can embed live prompting exercises into simulation or classroom settings. Adoption may be strengthened through faculty workshops, peer review of AI-enhanced teaching materials, and integration into simulation labs or professional development portfolios. These strategies echo the NLN Vision Statement’s emphasis on credentialing, interdisciplinary collaboration, and curriculum redesign.

Limitations and future directions

This conceptual adaptation has several limitations. First, evidence on GenAI use in high-stakes contexts such as prioritization and triage remains limited, underscoring the need for framework-based guidance as empirical work develops. Second, GenAI systems evolve rapidly, so case vignettes may require periodic updates. To address this challenge, the mini-toolkit emphasizes enduring ethical principles such as equity, transparency, and accountability rather than tool-specific features. Third, variation in faculty readiness may constrain implementation. The toolkit addresses this barrier by offering entry points that can be scaled to instructor expertise and course context. Finally, this adaptation represents an initial conceptual pilot rather than an empirically validated toolkit. Future research should evaluate the mini-toolkit in authentic educational settings, such as multipatient simulation, and examine outcomes such as ethical reasoning, bias recognition, accountability in decision making, and teamwork during collaborative activities. Faculty perspectives will be essential for assessing feasibility and curricular integration. At a broader level, accreditation bodies and professional organizations may consider how AI ethics can be embedded into evolving educational standards, given the need for discipline-specific resources.

Conclusion

This study adapts an interdisciplinary, open-access AI ethics learning toolkit into a nursing-focused mini-toolkit for clinical prioritization pedagogy. By translating ethical frameworks into case vignettes, reflection prompts, and rubric criteria, the toolkit creates structured opportunities for students to practice critical appraisal, bias recognition, and human oversight in high-stakes decision making. For faculty, it offers a flexible resource that can be integrated into simulation, classroom, and clinical settings to support guided engagement with GenAI. As a conceptual pilot, the toolkit is not intended to provide definitive solutions but to open pathways for ethical inquiry, dialogue, and curricular innovation. Its contribution lies in showing how abstract ethical principles can be transformed into practical pedagogical tools that address the realities of AI in nursing practice and education. Future work should test its educational impact and expand its scope for nursing education, including use in interprofessional learning contexts.

Supplemental material

Supplemental material for Ethical engagement with generative AI in Nursing education: An AI ethics toolkit for clinical prioritization by Jennie C. De Gagne, David J. Carrejo, and Hyeyoung Hwang in Nursing Ethics.

Footnotes

Acknowledgment

The authors would like to thank Donnalee Frega, PhD, for her editorial consultation.

Ethical considerations

This article does not contain any studies involving human participants performed by any of the authors.

Author contributions

All authors contributed to the conceptualization, writing, editing, and reviewing of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.
