Abstract

The integration of artificial intelligence technologies is transforming healthcare and nursing education, offering significant benefits for patient care and professional development. However, as their use grows, it is crucial to address the ethical implications to ensure that fundamental principles like justice and integrity are maintained in both clinical and educational settings. The aim of this integrative review is to explore the ethical challenges, risks, and future perspectives of integrating artificial intelligence into nursing education. It examines how these tools influence ethical decision-making processes, identifies critical barriers, and proposes strategies for ethical implementation. Following an integrative review methodology, 184 articles were identified, with 124 remaining after duplicate removal. Fifteen peer-reviewed studies were analyzed. The methodological quality of the included studies was assessed using appropriate Joanna Briggs Institute Critical Appraisal Checklists based on study design. Searches were conducted in the PubMed, Cochrane Library, MEDLINE (Ovid), Scopus, Web of Science, CINAHL, and ScienceDirect databases from 2014 to September 2024. Data were analysed using constant comparative analysis. The review is registered in the PROSPERO database (CRD42024609440). Guided by Rest’s Four-Component Model of Moral Behavior, the synthesis was organized under four components. Moral Sensitivity included themes such as ethical and psychosocial effects, data privacy, equity in access, and cultural sensitivity. Moral Judgment covered ethical reasoning skills, AI accuracy, bias, and academic integrity. Moral Motivation addressed over-reliance on AI and the need for ethical frameworks. Moral Character highlighted educator roles and research priorities for ethical AI use. Artificial intelligence offers transformative opportunities for nursing education, but also presents significant ethical challenges. 
To ensure its responsible integration, nursing curricula must adopt clear ethical frameworks, equip educators with the skills to guide students, and address disparities in access.

Introduction

The rapid development of artificial intelligence (AI)-based technologies, including chatbots, robotics, virtual reality (VR), and augmented reality (AR), is reshaping the landscape of nursing education.1,2 Generative AI (Gen-AI), a subset of AI, refers to algorithms capable of producing new content such as text, images, or simulations based on training data. Once primarily applied in clinical settings, these technologies are now increasingly integrated into academic environments to enhance the preparation of future nurses. 3 Among generative AI tools, ChatGPT is currently the most widely used platform by nursing students and educators globally due to its accessibility and versatility. However, other generative AI tools such as Google’s Bard, Microsoft Bing’s AI chatbot, Meta’s LLaMA, and Stanford’s Alpaca model are also publicly available and gaining traction in post-secondary and nursing education settings.4,5 In recent years, the adoption of ChatGPT and similar generative AI tools has accelerated in post-secondary education, particularly in nursing, where educators and students alike explore their pedagogical utility. 6 Robotic systems integrated with AI facilitate more realistic interactions with nursing students compared to traditional high-fidelity mannequins, thereby enhancing the effectiveness of simulation-based nursing education. 7 These tools can support learning by generating personalized feedback, simulating clinical scenarios, and encouraging reflection on ethical dilemmas.6,8,9 Studies suggest that such applications may foster student engagement and cognitive development when integrated thoughtfully into curricula.8–10 As AI tools evolve, their role in shaping not only technical knowledge but also moral competencies becomes increasingly important.9,11 In nursing education, where the formation of ethical judgment is foundational, the use of generative AI tools must be approached with pedagogical intentionality and ethical sensitivity. 12

Background

Ethical decision-making is a fundamental component of professional nursing education. 10 The process involves evaluating alternatives through the lens of core ethical principles such as beneficence, non-maleficence, and justice, while considering academic responsibilities and learner-centred values. 10 In the context of AI utilisation within post-secondary educational settings, this process assumes an even greater significance. 2 Recent reviews indicate that while AI tools can enhance access to information, their utilisation may also compromise students' critical thinking, ethical reasoning, and academic integrity if not adequately regulated.6,11,12 Consequently, the integration of ethical decision-making frameworks into nursing education is imperative to ensure that the benefits of AI do not come at the expense of ethical and cognitive development.8,12 In light of the rapidly evolving digital learning landscape, nursing education assumes a pivotal role in preparing future nurses to address complex technological and moral challenges. 2 Within this context, nurse educators are expected to adopt AI-driven innovations and critically assess their pedagogical and ethical implications, thereby positioning themselves as leaders in the digital transformation of the discipline. 9

Despite the considerable opportunities presented by AI-supported technologies in education, there are also significant challenges that must be addressed, particularly in the context of preparing students for professional ethical standards.2,8 The increasing use of AI in educational environments has raised profound concerns, requiring vigilant pedagogical oversight to ensure that fundamental ethical principles are upheld.8,11 As the popularity of ChatGPT and similar generative AI tools continues to grow among students, educators, and researchers, there is a pressing need to ensure their use is grounded in sound ethical and educational principles. 1 The establishment of a robust ethical framework is crucial to harnessing the potential of AI while safeguarding learners’ cognitive integrity and professional development. 3 Ethical decision-making in nursing education is grounded in professional values such as beneficence, non-maleficence, justice, and respect for autonomy. 13 This normative framework guides learners not only in choosing morally appropriate actions but also in developing a sense of responsibility toward patients and communities. 14 Recent studies have emphasized that nursing students’ ethical reasoning is shaped by a combination of moral intentions, cognitive dispositions, and contextual factors, including the integration of emerging technologies such as AI and generative AI tools.14,15 Tools like ChatGPT have the potential to either support or disrupt students’ ethical awareness depending on how they are introduced and regulated within educational settings.14,15 For example, Sengul et al. 15 found that students who demonstrated higher levels of trust in AI tended to exhibit automation bias, relying heavily on AI outputs even in morally complex situations. Conversely, students who had more frequent exposure to ethical dilemmas in their training demonstrated lower bias levels and greater confidence in their moral reasoning. 15 These findings reinforce the idea that critical engagement with ethical scenarios enhances students’ resilience to technological overreliance. 14 Therefore, developing structured ethical frameworks in nursing education that integrate both normative principles and cognitive-behavioral predictors is essential for ensuring ethically responsible AI usage in academic and clinical practice.14,15

Recent reviews have highlighted the growing interest in integrating AI into nursing education.1,6,8 For instance, Lifshits and Rosenberg 11 conducted a scoping review analysing the incorporation of AI technologies into academic learning environments, emphasizing the ethical ambiguity surrounding their use in educational scenarios. In a similar context, the systematic review by De Gagne et al. 12 synthesized findings from studies, revealing a consistent gap in frameworks guiding the ethical use of generative AI in post-secondary nursing education. These studies emphasize the pressing need for the development of structured ethical guidelines.11,12 While a number of reviews have addressed AI’s use in healthcare more broadly, few have focused specifically on the unique ethical challenges posed by generative AI tools in nursing education.11,12 This review contributes to filling that gap by offering a focused ethical analysis situated within the academic preparation of future nurses.

Materials and methods

Aims

This integrative review aims to identify and analyze the ethical challenges that emerge from integrating AI-powered tools into nursing education, thereby contributing to the development of ethical guidelines for their use in this critical field.

Research questions

• How does the use of AI influence ethical decision-making processes in nursing education settings?
• What challenges and benefits do nursing students face when incorporating AI into the curriculum?
• How can the ethical use of AI be ensured in nursing education?

Design

To guide the ethical lens of this review, Rest’s Four-Component Model of Moral Behavior was adopted as the conceptual framework. 16 This model outlines four sequential components essential for ethical decision-making: moral sensitivity, moral judgment, moral motivation, and moral character. Its application enabled a structured examination of how generative AI tools, such as ChatGPT, may influence each phase of the ethical decision-making process in nursing education. 14, 16 The model provides a meaningful foundation to explore how AI integration may either enhance or hinder the ethical competencies of nursing students within evolving digital learning environments. 16

The integrative review is an important methodology for nursing and other disciplines to evaluate and synthesize data from various sources in order to answer research questions, generate new theories, and provide a comprehensive perspective on existing knowledge.17,18 This approach allows for the inclusion of diverse representations of the focal phenomena by authors employing various methodologies. The integrative review was conducted based on the methodological framework outlined by Whittemore and Knafl 17 and further supported by Toronto. 18 The process included five stages: problem identification, literature search, data evaluation, data analysis, and presentation of findings. To enhance transparency and rigor in reporting, the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 checklist 19 was used as a reporting tool (see Supplementary File 1). This review aimed to identify key ethical considerations, challenges, benefits, and strategies for using ChatGPT and other AI-assisted technologies in nursing curricula, with a focus on ethical decision-making processes and best practices in educational settings, as gleaned from existing published literature.

Search methods and selection criteria

The search strategy was developed in collaboration with an academic librarian at a higher education institution, who was also consulted to optimize the strategy for each database. The review is registered in PROSPERO, the International Prospective Register of Systematic Reviews (CRD42024609440). Articles were retrieved from seven databases: PubMed, Cochrane Library, MEDLINE (Ovid), Scopus, Web of Science, CINAHL, and ScienceDirect. Searches were conducted using a combination of the following keywords: “ChatGPT,” “ChatGPT-3,” “ChatGPT-3.5,” “ChatGPT-4,” “Chatbot,” “Artificial Intelligence,” “AI Tools,” “Machine Learning in Nursing Education,” “AI-driven Education,” “Ethical Issues,” “Ethical Decision-Making,” “AI and Ethics,” “Nursing Education,” “AI in Nursing Education,” “AI in Healthcare,” “Nurses,” “Nursing Students.” Additional searches were performed for key concepts in each database using relevant subject headings. The final search strategy combined individual search terms with the Boolean operators “AND” and “OR” to refine results. All records were imported into Covidence software for systematic screening and analysis.
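As an illustration of how keywords can be combined with Boolean operators, the following minimal Python sketch joins synonyms with OR inside each concept group and joins the groups with AND. The grouping into three concepts (AI, ethics, nursing) and the function name `build_query` are assumptions made for illustration; the review does not report the exact search strings, and each database has its own syntax and subject headings.

```python
def build_query(concept_groups):
    """Join terms with OR inside each group, then join the groups with AND."""
    clauses = []
    for terms in concept_groups:
        quoted = [f'"{term}"' for term in terms]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(clauses)


# Hypothetical grouping of a subset of the listed keywords (not from the review).
AI_TERMS = ["ChatGPT", "Chatbot", "Artificial Intelligence", "AI Tools"]
ETHICS_TERMS = ["Ethical Issues", "Ethical Decision-Making", "AI and Ethics"]
NURSING_TERMS = ["Nursing Education", "Nurses", "Nursing Students"]

query = build_query([AI_TERMS, ETHICS_TERMS, NURSING_TERMS])
print(query)
```

Grouping by concept keeps the query broad within a concept and narrow across concepts, which is the usual reason for mixing the two operators.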

Eligibility criteria

The review keywords were selected according to Medical Subject Headings (MeSH) and the PICOS framework. The eligibility criteria were defined according to the PICOT-SD (Patients, Interventions, Comparison, Outcome, Time, Study Design) framework and are listed below.

Inclusion and exclusion criteria

Inclusion criteria

Studies were included if they met the following conditions:
• Peer-reviewed empirical studies employing quantitative, qualitative, or mixed-methods research designs;
• Focused explicitly on ethical decision-making in relation to the integration of ChatGPT or other AI tools in nursing education;
• Involved nursing students or registered nurses as the primary study population;
• Published in English between January 1, 2014, and September 30, 2024;
• Reported outcomes directly related to ethical reasoning, ethical challenges, or ethical decision-making frameworks in educational settings.

Exclusion criteria

The following were excluded:
• Studies that discussed general AI use without emphasis on ethical decision-making in nursing education;
• Research that included participants not directly related to nursing education (e.g., medical students, general health sciences students not in nursing programs);
• Studies published in non-peer-reviewed journals, non-empirical articles, reviews without primary data, commentaries, opinion papers, or editorials;
• Studies that only discussed the clinical (not educational) applications of AI.
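The eligibility criteria above can be read as a single screening rule. The sketch below expresses them as a Python predicate under stated assumptions: the `Record` fields, the population labels, and the function name `is_eligible` are hypothetical and were not part of the review, where screening was carried out by reviewers in Covidence. It does not model judgment calls such as the cautious inclusion of mixed-population studies.

```python
from dataclasses import dataclass
from datetime import date

# Publication window stated in the inclusion criteria.
WINDOW_START = date(2014, 1, 1)
WINDOW_END = date(2024, 9, 30)

# Hypothetical labels for the eligible primary populations.
ELIGIBLE_POPULATIONS = {"nursing students", "registered nurses"}


@dataclass
class Record:
    """Hypothetical screening record; field names are illustrative only."""
    peer_reviewed: bool
    empirical: bool          # quantitative, qualitative, or mixed-methods
    language: str
    published: date
    population: str
    setting: str             # "education" or "clinical"
    addresses_ethics: bool   # ethical reasoning, challenges, or frameworks


def is_eligible(record: Record) -> bool:
    """Return True only if every inclusion criterion is satisfied."""
    return (
        record.peer_reviewed
        and record.empirical
        and record.language == "English"
        and WINDOW_START <= record.published <= WINDOW_END
        and record.population in ELIGIBLE_POPULATIONS
        and record.setting == "education"
        and record.addresses_ethics
    )


example = Record(True, True, "English", date(2023, 5, 1),
                 "nursing students", "education", True)
print(is_eligible(example))
```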

Study selection and data extraction

Table 1. Characteristics of included studies for systematic review (N = 15).

Search outcome

This search yielded 184 articles. After removing 60 duplicates, we evaluated the remaining 124 articles for inclusion. We screened the titles and abstracts of all papers and identified 46 articles as potentially relevant to ethical decision-making in integrating AI into nursing education (Figure 1). Finally, this review included 15 studies (in the English language), all of which met the full inclusion criteria. The title, authors (year), country, population, design, aim, AI type, and main findings of each study are summarized in Table 1. To ensure consistency with the review’s stated aim and eligibility criteria, the search strategy was designed to capture studies specifically focusing on nurses and nursing students. While the term “health sciences students” was not explicitly included in the search terms, two studies 20,21 involving mixed health-related populations were included with caution. Wang et al., 20 a quantitative study, presented subgroup-specific findings for nurses and nursing students, and only these data were extracted and synthesized. In contrast, the study by Güner et al. 21 was qualitative and involved academics from various health disciplines, including nursing faculty. While individual roles were not always delineated, several quotations and thematic findings clearly addressed ethical concerns within nursing education contexts. We therefore included only those insights attributed to nursing educators. These decisions were made with methodological rigor to ensure that the synthesis of ethical challenges remained accurate, credible, and aligned with the review’s targeted population.

Figure 1. PRISMA flow diagram.
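The record counts reported in this section can be checked with simple arithmetic. The sketch below is only a consistency check, not part of the review's method. The two exclusion counts are derived by subtraction and are not reported in the text, so they should be read as implied values under the assumption that no records entered or left the flow at other points.

```python
# Counts reported in the text.
identified = 184
duplicates_removed = 60
potentially_relevant = 46   # after title and abstract screening
included = 15

# Records remaining after duplicate removal.
screened = identified - duplicates_removed

# Derived values (assumption: implied by subtraction, not stated in the review).
excluded_at_screening = screened - potentially_relevant
excluded_after_assessment = potentially_relevant - included

print(f"screened: {screened}")
print(f"excluded at title/abstract screening: {excluded_at_screening}")
print(f"excluded after further assessment: {excluded_after_assessment}")
```

The first subtraction reproduces the reported figure of 124 records, which confirms that the stated counts are mutually consistent.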

Quality appraisal

Table 2. Descriptive and cross-sectional studies risk of bias assessment.

Q1: Inclusion criteria clearly defined? Q2: Were objective, standard criteria used for measurement of the condition? Q3: Exposure measured validly/reliably? Q4: Were the outcomes measured in a valid and reliable way? Q5: Confounders identified? Q6: Strategies to deal with confounders? Q7: Was appropriate statistical analysis used? Q8: Were the study subjects and the setting described in detail?

Table 3. Quasi-experimental studies risk of bias assessment.

Q1: Is it clear in the study what is the “cause” and what is the “effect”? Q2: Was there a control group? Q3: Were participants included in any comparisons similar? Q4: Did comparison groups receive similar care aside from the intervention? Q5: Were outcomes measured multiple times before and after the intervention? Q6: Were comparison group outcomes measured consistently? Q7: Were outcomes measured in a reliable way? Q8: Was follow-up complete, and were any group differences in follow-up addressed? Q9: Was appropriate statistical analysis used?

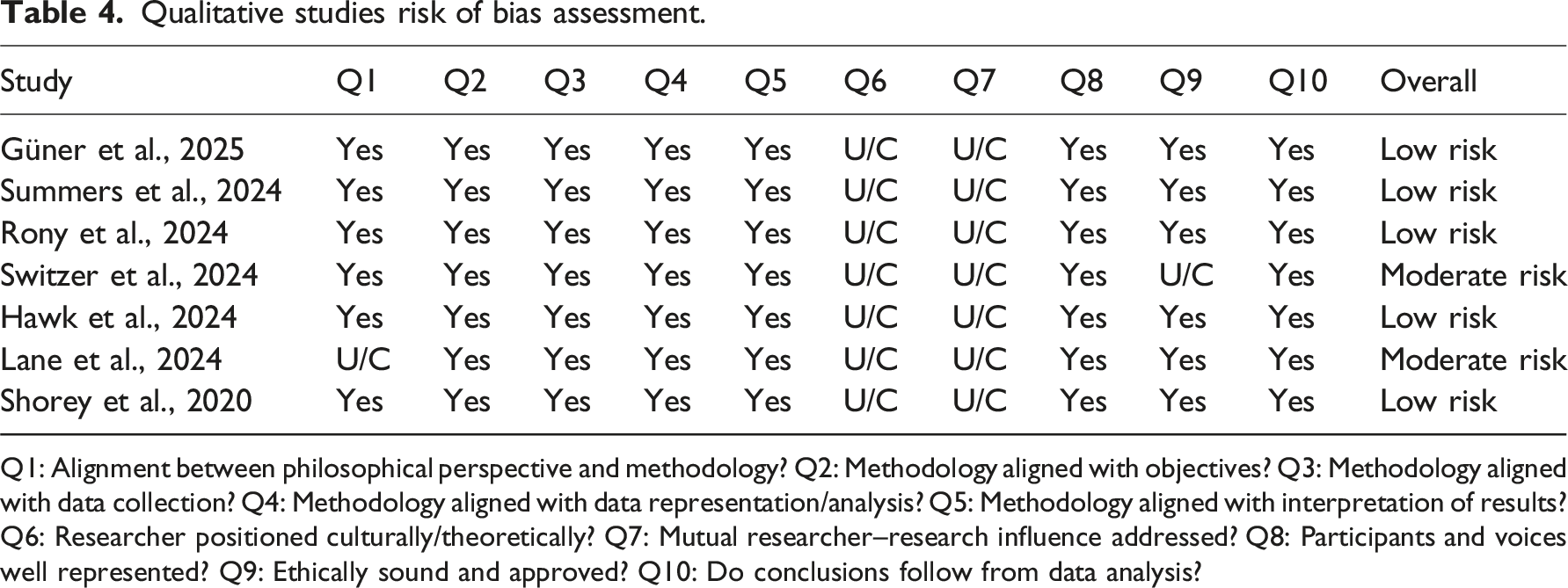

Table 4. Qualitative studies risk of bias assessment.

Q1: Alignment between philosophical perspective and methodology? Q2: Methodology aligned with objectives? Q3: Methodology aligned with data collection? Q4: Methodology aligned with data representation/analysis? Q5: Methodology aligned with interpretation of results? Q6: Researcher positioned culturally/theoretically? Q7: Mutual researcher–research influence addressed? Q8: Participants and voices well represented? Q9: Ethically sound and approved? Q10: Do conclusions follow from data analysis?

Data abstraction and synthesis

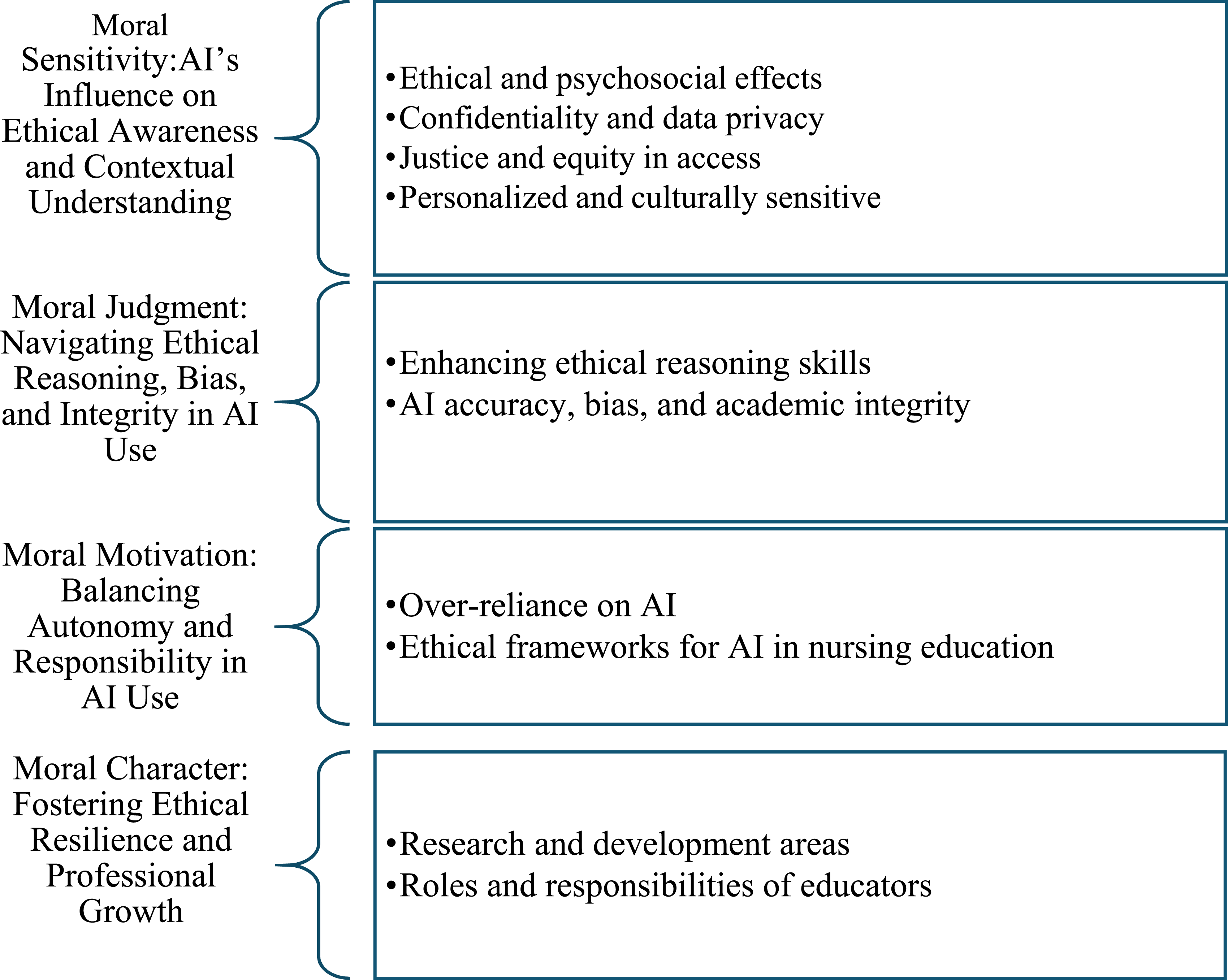

Identifying patterns and synthesizing findings is essential for data abstraction in integrative reviews. 17 To guide the ethical lens of this review, Rest’s Four-Component Model of Moral Behaviour, which includes moral sensitivity, moral judgment, moral motivation, and moral character, was adopted as the conceptual framework. 16 This model informed the data abstraction and synthesis process by offering a structured lens through which to interpret the ethical and psychosocial implications of AI integration in nursing education. In this review, data were analysed using constant comparative analysis, as recommended by Whittemore and Knafl 17 for integrative reviews. This method allows for continuous comparison of data across studies to identify consistencies, variations, and emerging patterns. Initially, the first author compiled extracted data into a structured summary table, which was subsequently reviewed by the entire research team. A process of data immersion followed, during which the first author read the included studies to capture salient features and conceptual similarities. Key concepts were colour-coded and grouped according to their alignment with the four components of Rest’s model. 16 The team collaboratively examined and refined these categories to ensure interpretive rigor and reduce the potential for individual bias. Through collaborative discussions and iterative analysis, subcategories were developed and refined under each major component, ensuring both theoretical alignment and interpretive rigor. The final synthesis was structured around the four components of Rest’s Four-Component Model of Moral Behavior. 16 Under Moral Sensitivity, the themes identified included ethical and psychosocial effects, confidentiality and data privacy, justice and equity in access, and personalized and culturally sensitive approaches. 
For Moral Judgment, the review synthesized studies addressing the enhancement of ethical reasoning skills and issues related to AI accuracy, bias, and academic integrity. Within Moral Motivation, two key topics were identified: over-reliance on AI and the development and implementation of ethical frameworks for AI in nursing education. Finally, Moral Character encompassed research and development areas as well as the roles and responsibilities of educators. This structured and iterative process is consistent with established approaches to data synthesis in integrative reviews. 25

Ethical approval

Ethical approval for this review was not required because it involved neither experimentation nor patient involvement in active data collection.

Findings

Characteristics of the included studies

The analysis incorporated a total of 15 studies conducted across eight different countries. Of these, eight studies used quantitative designs (descriptive or cross-sectional, and quasi-experimental), while the remaining seven employed qualitative designs, including exploratory, descriptive, and hermeneutic methodologies. Sample sizes ranged from 3 participants 26 to 1243 participants. 20 Studies were conducted in the United States of America (USA) (n = 5), Korea (n = 3), Singapore (n = 2), China (n = 1), Australia (n = 1), Türkiye (n = 1), Bangladesh (n = 1), and Morocco (n = 1).

Quality appraisal

All included studies were critically appraised using appropriate JBI checklists based on their methodological design. Among the descriptive and cross-sectional studies, three out of four 27–29 demonstrated low risk of bias, with clearly defined inclusion criteria, robust outcome measurement strategies, and appropriate statistical analyses. One study 20 was rated as moderate risk due to unclear reporting regarding exposure measurement and confounding strategies (Table 2). For quasi-experimental studies, all four 30–33 were categorized as moderate risk, primarily due to the absence of pre-intervention outcome measurement and incomplete follow-up data, although most maintained clarity in causal assumptions and used appropriate statistical techniques (Table 3). Regarding qualitative studies, the majority 21,34–37 were assessed as low risk, with strong alignment between methodology, data collection, and interpretation. However, two studies 26,38 exhibited moderate risk due to a lack of clarity in philosophical positioning, limited reporting of ethical approval, and limited reflexivity (Table 4). Overall, the quality of the included studies was deemed acceptable, with most meeting core methodological standards and contributing reliable insights to the synthesis.
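The appraisal results described above can be tallied to confirm that they account for all 15 included studies. The following Python sketch uses only the counts stated in this section. The dictionary layout and variable names are illustrative assumptions, not a tool used in the review.

```python
from collections import Counter

# Risk-of-bias ratings per design group, as stated in the text.
ratings = {
    "descriptive/cross-sectional": {"low": 3, "moderate": 1},
    "quasi-experimental": {"low": 0, "moderate": 4},
    "qualitative": {"low": 5, "moderate": 2},
}

# Sum the ratings across design groups.
totals = Counter()
for counts in ratings.values():
    totals.update(counts)

total_studies = sum(totals.values())
print(dict(totals), total_studies)
```

Under these counts, eight studies are rated low risk and seven moderate risk, which sums to the 15 studies included in the synthesis.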

Results of the integrative review

In this review, findings were organized according to Rest’s Four-Component Model of Moral Behavior. 16 Moral Sensitivity included themes such as ethical and psychosocial effects, confidentiality and data privacy, justice and equity in access, and personalized and culturally sensitive approaches. Moral Judgment covered the development of ethical reasoning skills and considerations related to AI accuracy, bias, and academic integrity. Moral Motivation addressed concerns about over-reliance on AI and the importance of establishing ethical frameworks in nursing education. Moral Character involved research and development areas, and the roles and responsibilities of educators in ensuring ethical integration of AI (Figure 2).

Figure 2. Themes and sub-themes.

Moral sensitivity: AI’s influence on ethical awareness and contextual understanding

A total of 14 studies addressed the theme of Moral Sensitivity: AI’s Influence on Ethical Awareness and Contextual Understanding, focusing on the recognition of ethical issues and the contextual impacts of AI on individuals and learning environments. Four sub-themes were identified under this category: Ethical and psychosocial effects, Confidentiality and data privacy, Justice and equity in access, and Personalized and culturally sensitive approaches.

Ethical and psychosocial effects

AI has notable psychosocial impacts on nursing students, influencing their ethical values, attitudes, and professional development.20,30,31,35 Some studies indicate that AI can shape students’ perceptions of professionalism and ethical responsibilities in healthcare, with both positive and negative implications.35,38 Ethical considerations, such as non-maleficence and beneficence, are central to these discussions.20,35 AI can enhance learning outcomes and contribute to professional growth.20,31,35 Some studies indicated that ChatGPT could be a valuable tool in nursing education by expanding educational resources and offering real-time responses to students’ inquiries, thereby supporting their ethical decision-making and clinical practice.31,35 However, Darnell et al. 33 identified various ethical concerns related to the use of e-learning and virtual patient technology in suicide safety planning education. Nurses expressed frustration with the responses provided by virtual patients, emphasizing the need for more realistic interactions to better prepare them for real-life scenarios. In addition, AI’s potential for harm, such as providing inaccurate or misleading information, poses risks to students’ learning and patient care.20,38

Confidentiality and data privacy

Studies highlight concerns regarding the privacy of nursing and patient data in the use of AI in nursing education.27,28,37 Ethical considerations surrounding the security of sensitive information emphasize the critical importance of safeguarding student and patient data.27,28 Rony et al. 35 emphasize the necessity of establishing robust legal and professional frameworks to guide the incorporation of AI into nursing education, ensuring adherence to privacy regulations and maintaining ethical standards. Cho and Seo 29 and Wang et al. 20 emphasize in their studies the necessity of thoroughly understanding the ethical and legal implications of integrating AI into nursing education curricula. Furthermore, ethical concerns related to data security, particularly protecting information collected via AI systems, are deemed crucial in nursing education. 27 While risks such as privacy breaches and data security threats remain significant, establishing ethical guidelines for data use can mitigate these risks.20,37

Justice and equity in access

Integrating AI in nursing education highlights issues of justice and equity in access, particularly affecting students in resource-limited settings.30,33 Access disparities create ethical challenges, as limited access to AI tools such as ChatGPT may prevent equal learning opportunities for all students.33,37 This lack of access can lead to feelings of exclusion or resistance toward AI, especially for students who face technological barriers.21,30 While AI promises to bridge educational gaps, particularly in underserved regions, ensuring equitable access remains a central ethical concern.30,33 Addressing these challenges requires proactive strategies to foster positive engagement with AI while carefully managing and mitigating disparities in access.29,32

Personalized and culturally sensitive approaches

Darnell et al. 33 and Shorey et al. 37 emphasized the importance of balancing cultural awareness with personalized learning in nursing education to create an inclusive and effective educational environment through AI. While addressing cultural diversity, AI systems must also focus on realistic and adaptive interactions, tailoring educational content to learners’ individual needs, preferences, and personality traits.21,30 By incorporating culturally relevant information alongside personalized simulations and feedback, AI might support students in developing critical thinking and decision-making skills essential for diverse healthcare settings. 21 Shorey et al. 32 highlighted the significance of cultural appropriateness in the design of AI-supported clinical scenarios in nursing education. Their qualitative study on virtual reality-based communication training revealed that students responded more positively and effectively when learning scenarios reflected culturally relevant patient interactions, such as appropriate communication styles, beliefs about health and illness, and context-specific expressions of empathy. The authors suggest that incorporating cultural nuances such as language use, non-verbal cues, and patient values can make AI-enhanced learning environments more relatable and realistic. 32 Such culturally grounded design fosters greater emotional engagement, improves students’ readiness for practice in multicultural settings, and supports the development of culturally competent care.

Moral judgment: Navigating ethical reasoning, bias, and integrity in AI use

All the studies (n = 15) addressed the theme of Moral Judgment: Navigating Ethical Reasoning, Bias, and Integrity in AI Use, focusing on how individuals evaluate and decide on the most ethical course of action in response to AI-related dilemmas. Two sub-themes were identified under this category: Enhancing ethical reasoning skills and AI accuracy, bias, and academic integrity.

Enhancing ethical reasoning skills

AI has implications for ethical decision-making in clinical education and nursing practice.29,31 While it might support students in developing ethical reasoning skills by simulating complex dilemmas and offering multiple perspectives, concerns exist about its potential to reduce critical thinking and foster over-reliance on technology. 36 Some studies suggest that AI can help students make informed decisions, yet it may also risk promoting dependency.21,30,35,36 In a quasi-experimental study, Shin et al. 30 examined the effects of AI-assisted learning on nursing students’ ethical decision-making and clinical reasoning abilities. The findings indicated that students in the control group, who engaged with traditional textbooks, demonstrated significantly greater proficiency in critical thinking and in formulating and applying ethical standards compared to their counterparts in the experimental group who utilized ChatGPT. 30

AI accuracy, bias, and academic integrity

AI-supported technologies in nursing education present significant concerns regarding accuracy, bias, and academic integrity.29,35,36 Inaccurate or biased AI-generated content can mislead students, particularly in clinical contexts where misinformation could lead to harmful outcomes.29,35,36 AI systems may reflect biases from their training data, affecting the fairness and reliability of information in healthcare education.29,35 Regular updates and validation of these tools are essential to mitigate these risks. 36 In addition, Lane et al. 38 and Summers et al. 34 highlight the risks associated with using AI in nursing education, noting that rapid content generation may lead nursing students to avoid traditional learning processes, thereby undermining critical thinking. They also address the potential for AI to increase the risk of plagiarism and unethical practices in academic work.

Moral motivation: Balancing autonomy and responsibility in AI use

A total of 10 studies addressed the theme of Moral Motivation: Balancing Autonomy and Responsibility in AI Use, which refers to the internal drive to act ethically by prioritizing moral values over personal gain or convenience. Two sub-themes were identified under this category: Over-reliance on AI and Ethical frameworks for AI in nursing education.

Over-reliance on AI

The use of AI in nursing education raises concerns about potential over-reliance on AI as an infallible source of knowledge.30,38 Ethical considerations revolve around the risk of students and educators becoming overly dependent on AI for decision-making, which could hinder the development of independent problem-solving skills essential in nursing practice.29,35 Studies suggest that excessive reliance on AI tools for answers may undermine students’ critical thinking abilities and clinical judgment, limiting their capacity to make autonomous decisions in clinical settings.21,29,34,35 Ethical strategies to prevent over-reliance on AI systems in students’ learning processes are therefore essential.21,38 Some studies indicate that integrating AI with traditional teaching methods can help mitigate these risks by promoting a balanced approach, ensuring that AI is a supportive tool rather than a replacement for independent critical reasoning and decision-making.20,29,35

Ethical frameworks for AI in nursing education

Studies have shown that AI technologies require the integration of ethical decision-making frameworks to ensure responsible usage.35,37,38 Clear ethical guidelines are essential to govern the application of AI tools, such as ChatGPT, within nursing curricula.28,29,36 These frameworks should address critical concerns, including data privacy, the potential for misinformation, and cultural sensitivity.27,28,32,33 It is crucial to prepare nursing students to engage with AI in an ethically responsible manner, ensuring they understand the implications of AI-assisted decision-making, particularly in contexts that may involve vulnerable populations.29,37,39 Güner et al. 21 and Summers et al. 34 found that these ethical guidelines would serve as a foundation for responsible AI use, promoting trust and safeguarding against misuse in educational settings.

Moral character: Fostering ethical resilience and professional growth

The theme of Moral Character: Fostering Ethical Resilience and Professional Growth was evident in 11 studies, focusing on the perseverance, integrity, and moral courage required to translate ethical decisions into consistent action. Two sub-themes were identified under this category: Research and development areas and Roles and responsibilities of educators.

Research and development areas

According to Hawk et al. 36 and Lane et al., 38 research on AI integration within nursing education should address the need to enhance both the educational benefits and the ethical standards of its application. Research should explore the development of AI tools that improve educational outcomes while addressing vital ethical concerns, such as data privacy, bias, and cultural sensitivity.31,38 Additionally, AI’s potential to promote critical thinking, support clinical decision-making, and foster cultural competence in nursing education should be further investigated.29,31 Some studies included in this review highlight that aligning AI tools with ethical frameworks will be essential in guiding their responsible use and maintaining trust in nursing education and practice.21,24,31,35

Roles and responsibilities of educators

Arcia 28 and Cho and Seo 29 emphasize in their studies that the role of educators in nursing education extends beyond teaching technical skills; they are crucial in guiding students through the ethical complexities of AI integration. Educators are responsible for providing clear ethical frameworks that govern AI usage in nursing, ensuring students understand the potential risks and benefits.27,33,35 Through AI integration, educators can foster the development of ethical decision-making skills, encouraging students to engage in ethical reasoning when interacting with AI technologies.27,29,33–35 Studies have shown that this includes promoting awareness of critical ethical considerations such as data privacy, misinformation, and cultural sensitivity.27,28,32,33 Educators must also ensure that students are prepared to recognize and mitigate the potential risks associated with AI tools.28,29 By creating an environment that supports ethical reflection, educators help students develop the necessary skills to make sound ethical decisions in healthcare, ultimately ensuring the responsible use of AI in clinical practice.28,29,31

Discussion

The findings of this integrative review offer a comprehensive ethical analysis of how AI-powered tools, such as ChatGPT, intersect with the moral decision-making processes in nursing education. Drawing upon the four components of Rest’s Four-Component Model of moral behavior (moral sensitivity, moral judgment, moral motivation, and moral character), this discussion explores how the reviewed studies reflect these core ethical dimensions. The thematic findings are interpreted through this model to better understand how generative AI tools may support or challenge the development of ethical competencies in nursing students.

Moral sensitivity: AI’s influence on ethical awareness and contextual understanding

Moral sensitivity involves recognizing how actions affect others, particularly in ethically ambiguous situations. Several studies emphasized how AI tools influence students’ emotional and ethical awareness (Ethical and psychosocial effects). For instance, AI was shown to impact students’ perceptions of professionalism and ethical responsibility by facilitating simulations that provoke ethical reflection and emotional engagement with patient-centered dilemmas.20,30,31,35 However, these effects varied; while some students experienced enhanced awareness, others reported disconnection or confusion due to depersonalized interactions with AI.35,38 Confidentiality and data privacy concerns further highlight the importance of moral sensitivity in recognizing the consequences of AI misuse. Studies documented ethical dilemmas surrounding the security of sensitive nursing and patient information, underscoring the necessity for students to be sensitized to privacy issues.27,28,32 Similarly, justice and equity in access emerged as a moral sensitivity issue. Limited or unequal access to AI tools among students from diverse socioeconomic backgrounds risked creating educational disparities.30,33 Personalized and culturally sensitive applications of AI were also viewed as essential for supporting ethical awareness, with culturally appropriate AI-enhanced scenarios fostering empathy and inclusion.21,32

Moral judgment: Navigating ethical reasoning, bias, and integrity in AI use

Moral judgment entails evaluating various actions and determining the most ethical choice. Studies reporting on the sub-theme Enhancing ethical reasoning skills suggested that AI might support moral judgment development by exposing students to multifaceted ethical scenarios.31,34,36 However, findings were mixed. Hawk et al. 36 suggested that AI tools can simulate ethical complexity, but Shin et al. 30 showed that traditional instruction better supported students’ critical ethical reasoning.30,34 In light of these contradictions, a more modest interpretation is warranted: AI might support ethical reasoning under the right conditions but cannot substitute for structured, guided ethical training.27,29 Concerns related to AI accuracy, bias, and academic integrity further underscore the challenges in ethical judgment. Studies noted that biased or misleading AI outputs could compromise students’ ability to make sound ethical decisions.29,35,36 Additionally, risks such as plagiarism and shortcut learning were reported, highlighting the need for nursing students to critically evaluate AI-generated information rather than accepting it at face value.34,38

Moral motivation: Balancing autonomy and responsibility in AI use

Moral motivation involves prioritizing ethical values over personal gain. The theme of Over-reliance on AI directly relates to this dimension. Several studies indicated that students might develop a dependency on AI tools for decision-making, which risks displacing intrinsic ethical motivation with technological shortcuts.21,35,38 Some participants reported a preference for AI-driven solutions, even when critical thinking would have yielded more robust answers.30,38 Educators must therefore cultivate moral motivation by framing AI as a complementary tool and reinforcing student responsibility. 29 Frameworks and guidelines for the ethical use of AI in nursing education also play a vital motivational role. Studies highlighted the absence of institutional ethical frameworks as a significant gap.28,37,38,40 These frameworks are essential to motivating students and educators to reflect on their ethical obligations when interacting with AI technologies.

Moral character: Fostering ethical resilience and professional growth

Moral character represents the perseverance to act ethically despite challenges. Studies aligned under the research and development areas identified a need for sustained inquiry into how AI affects long-term professional identity, ethical development, and decision-making resilience.29,36,38 For example, the qualitative study by Summers et al. 34 emphasized that self-reports often reflect confirmation bias, raising concerns about the durability of ethical behavior in AI-supported learning. Several studies proposed that AI tools could be purposefully designed to strengthen ethical resilience by adapting to learners’ developmental trajectories and incorporating feedback mechanisms that address cognitive distortions.21,31,35 The role of educators was also viewed as essential in reinforcing moral character.28,29,35 Through structured discussions, scenario-based learning, and guided reflection, educators can actively support the cultivation of moral courage and integrity among nursing students, ensuring that ethical reasoning is not only developed but also maintained in practice. Furthermore, as reported by Shorey et al., 32 students expressed concern that automated responses lacked human sensitivity and empathy, potentially distorting their understanding of professional communication. Conversely, Rony et al. 35 noted that exposure to AI-supported decision-making tools enhanced students’ awareness of accountability and beneficence. These findings illustrate how AI influences students’ understanding of professionalism and ethical responsibility, enhancing awareness of accountability on the one hand, while risking reduced empathy and overreliance on automation on the other.

Overall, interpreting the findings through Rest’s Four-Component Model 16 deepens our understanding of how AI impacts the ethical dimensions of nursing education. Moral sensitivity was influenced by psychosocial and contextual factors such as privacy and equity. Moral judgment was shaped by AI’s potential to both support and compromise ethical reasoning. Moral motivation was tested by the risk of over-reliance and a lack of structured guidance. Finally, moral character was associated with long-term ethical behavior and professional integrity. To ethically integrate AI into nursing curricula, educators and institutions must create learning environments that deliberately engage each component of ethical decision-making. This approach will better prepare nursing students to navigate the complex ethical terrain of digital health education with competence and integrity.

Limitations

This integrative review has several limitations that should be considered when interpreting the findings. First, only English-language, peer-reviewed studies were included, which may limit the generalizability of the findings to non-English-speaking and culturally diverse contexts. Second, the geographic concentration of studies from specific countries may not fully reflect variations in nursing curricula and AI integration across global educational systems. Third, the methodological diversity of the included studies, ranging from descriptive cross-sectional designs to quasi-experimental approaches, presented challenges in applying a uniform quality appraisal. To address this, each study was evaluated using the JBI critical appraisal checklist most appropriate for its design, ensuring methodological rigor and consistency across the review. Fourth, several studies relied heavily on self-reported perceptions and experiences regarding AI use, which introduces the possibility of reporting bias, confirmation bias, and social desirability bias. For example, in qualitative studies such as Summers et al., 34 the absence of objective performance measures limits the assessment of actual ethical behavior. Fifth, one included qualitative study 21 involved a mixed group of health sciences academics. Although only content relevant to nursing education was extracted, the inability to fully disaggregate the responses of nurse educators from other participants limits attribution of these findings solely to the nursing profession. Finally, the primary focus on ChatGPT may restrict the transferability of findings to other AI tools with differing capabilities or interfaces. These limitations underscore the need for future research that incorporates a broader linguistic, methodological, technological, and disciplinary scope to more comprehensively assess the ethical implications of AI integration in nursing education.

Implications for research and practice

This review highlights several critical implications for future research and practice integrating AI into nursing education. Future studies should prioritize developing comprehensive ethical frameworks that address key challenges such as data privacy, algorithmic transparency, and bias mitigation. These frameworks should ensure robust personal and clinical data protection while fostering trust in AI technologies. Longitudinal research is also necessary to evaluate the effects of AI on students’ ethical reasoning, critical thinking, and decision-making skills across diverse cultural and educational settings. Nursing education programs must equip educators with the skills to integrate AI tools into curricula effectively. Educators play a pivotal role in guiding students on the ethical use of AI, highlighting its benefits while mitigating risks such as over-reliance and misinformation. Furthermore, proactive strategies must address the risks of algorithmic bias, which can undermine trust and lead to inequities in educational outcomes. Ensuring equitable access to AI technologies, particularly in resource-limited regions, fosters inclusivity and reduces disparities.

Conclusion

This integrative review examines the impact of AI and its applications on ethical reasoning and decision-making processes within nursing education. While highlighting the potential of AI to enhance ethical reasoning skills, the review also underscores key ethical challenges, including data privacy, over-reliance on AI, algorithmic biases, academic integrity, and inequities in access. Notably, tools like ChatGPT are recognized for their ability to support learning by offering students diverse perspectives on complex ethical scenarios. However, the risks of misinformation and the potential erosion of independent thinking skills are also emphasized. The current review further highlights the importance of designing AI systems to enhance cultural sensitivity and ensure adaptability for effective use in diverse cultural contexts. Looking ahead, the review stresses the need for ethical frameworks to guide AI integration, the critical role of educators in providing ethical guidance, and the expansion of research and development efforts to address these ethical challenges. These findings indicate that AI in nursing education should be viewed not merely as a technological innovation but as a tool that supports ethical sensitivity and cultural competence. This review demonstrates the necessity of careful planning and implementation for the ethical integration of AI, ensuring that its adoption in nursing education aligns with both technological advancements and the core ethical values of the profession.

Supplemental Material

Supplemental Material for Ethical decision-making and artificial intelligence in nursing education: An integrative review by Tuba SENGUL, Seda SARIKÖSE, and Asiye GÜL in Nursing Ethics.

Footnotes

Acknowledgements

The authors thank the Head of Koç University Health Sciences Library (Ertaç Nebioğlu) for his support throughout the literature review.

Author contributions

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Data availability statement

Data sharing is not applicable to this article as no datasets were generated or analyzed during the current study.

Supplemental Material

Supplemental material for this article is available online.

References
