Abstract

Palliative care aims to improve the quality of life for seriously ill individuals and their caregivers by addressing their holistic care needs through a person- and family-centered approach. While there have been growing efforts to integrate Artificial Intelligence (AI) into palliative care practice and research, it remains unclear whether the use of AI can facilitate the goals of palliative care. In this paper, we present three hypothetical case examples of using AI in the palliative care context, covering machine learning algorithms that predict patient mortality, natural language processing models that detect psychological symptoms, and AI chatbots addressing caregivers’ unmet needs. Using these cases, we examine the ethical dimensions of utilizing AI in palliative care by applying five widely accepted moral principles that guide ethical deliberations in AI: beneficence, nonmaleficence, autonomy, justice, and explicability. We address key ethical questions arising from these five core moral principles and analyze the potential impact the use of AI can have on palliative care stakeholders. Applying a critical lens, we assess whether AI can facilitate the primary aim of palliative care to support seriously ill individuals and their families. We conclude by discussing the gaps that need to be further addressed in order to promote ethical and responsible AI usage in palliative care.

Introduction

The integration of artificial intelligence (AI) into healthcare has seen great advances, especially in supporting disease detection, clinical decision-making, health services management, and prognosis prediction. 1 Studies have shown that state-of-the-art AI may demonstrate comparable performance to clinicians in image recognition and even occasionally outperform medical students in open-ended medical examinations.2,3 Such advancements imply that AI has the potential to provoke revolutionary changes to the structure of how patient care is provided.

One healthcare field that has not yet been substantially affected by advances in AI is palliative care.4,5 Palliative care is specialized care that aims to reduce the suffering of patients with life-threatening illnesses and their families.6 It often focuses on symptom management but also addresses other aspects of person-centered care and supports decision-making, regardless of the diagnosis and disease stage.7 In this context, AI could be integrated into practice or research to promote timely palliative care delivery and personalize support to care recipients. For instance, providers could identify patients who would benefit from advance care planning (ACP) using algorithms that predict patients’ prognoses.8 AI can also be used to review clinical notes and detect signals of patients’ psychological distress or other symptoms.9,10 Furthermore, considering the current popularity and accessibility of generative AI (e.g., OpenAI’s ChatGPT), caregivers could utilize these tools to address their informational needs.

As AI is becoming increasingly pervasive within society, use of AI in palliative care is not simply a technical innovation but rather a socio-technological phenomenon, comprising both technological (e.g., information structure of the AI model) and sociological (e.g., how AI is comprehended by users and how it changes their workflow) integrations. 11 Therefore, an examination of its ethical dimensions is needed to understand how the use of AI may impact seriously ill individuals and other stakeholders, while also determining whether AI can strengthen the person-centered intent of palliative care. However, recent reviews exploring AI in palliative care have focused more on technical aspects, assessing the purpose for which the tool was developed, its training data, methodologies, and model performance.4,12,13 This leaves a crucial void surrounding whether the use of AI is ethically sound in the context of palliative care.

Therefore, in this paper, we present three case examples of AI application in the field of palliative care and analyze the ethical dimensions based on five moral principles that are widely accepted to guide ethical decision-making and are directly relevant to AI (i.e., beneficence, nonmaleficence, autonomy, justice, and explicability). 14 By addressing key questions based on these core moral principles, we identify opportunities and concerns associated with the use of AI in palliative care and introduce insights on how to develop and deploy AI in a way that facilitates the goal of palliative care.

What is Artificial Intelligence?

AI is a field of computer science that develops systems to imitate human-like behaviors, such as learning, reasoning, and self-correction. 15 Alan Turing’s work on the Turing Test laid the theoretical foundation of AI, considering a machine to be “intelligent” if a human judge cannot distinguish its responses from those of a human. 16

In this article, we specifically focus on machine learning and natural language processing, which are both computer-based technologies under the scope of AI. Machine learning is a set of techniques and tools used to classify, make predictions, or cluster groups based on similar characteristics, 17 enabling data-driven decisions or automations. On the other hand, natural language processing transforms unstructured data (e.g., human narrative) into structured data, allowing computers to derive meaning from human language. 18 This forms the basis for computers to perform various language-related tasks, including chatbot development, language translation, information extraction and summarization, and spam detection. 19

Principles for ethical AI

As the development and deployment of AI have had major impacts on society, there is a growing need to establish ethical principles for adopting AI in a way that can benefit society. Based on a review of high-profile initiatives, Floridi and Cowls put together a unified framework on what constitutes “ethical AI.”14 This framework, built upon principlism,20 includes core ethical principles widely accepted in bioethics: beneficence, nonmaleficence, autonomy, and justice. In the context of AI, Floridi and Cowls illustrated beneficence as underscoring the role of AI in promoting the well-being of people and nonmaleficence as focusing on preventing breaches of personal privacy and AI misuse. Autonomy emphasizes human agency in making one’s own decisions, while justice involves eliminating discrimination and unfairness and also preserving unity. However, recognizing that these four principles alone do not fully capture all ethical challenges associated with the use of AI, Floridi and Cowls also proposed a fifth principle: explicability. Explicability encompasses both the epistemological aspect of understanding how AI works and the ethical aspect of accountability. Together, these five principles encapsulate the guidance provided by expert-driven documents for adopting ethical and socially beneficial AI.14

Case examples of utilizing AI in palliative care

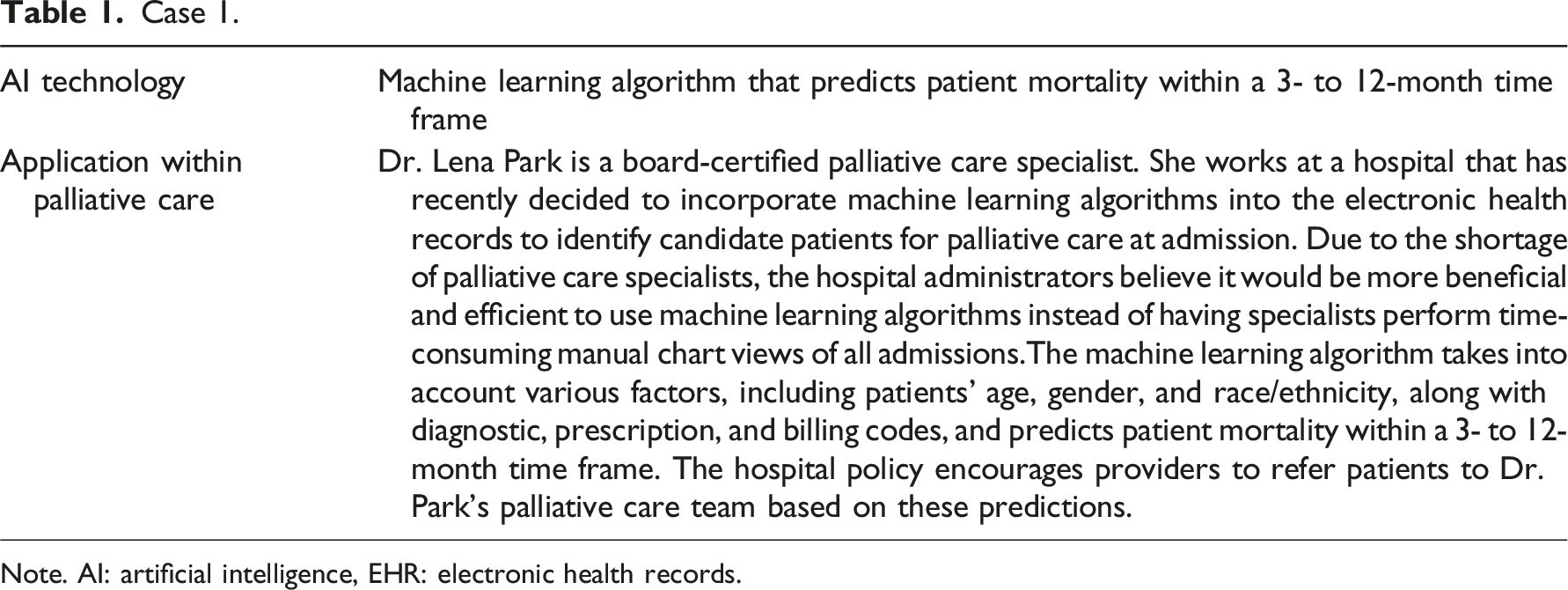

Case 1.

Note. AI: artificial intelligence; EHR: electronic health records.

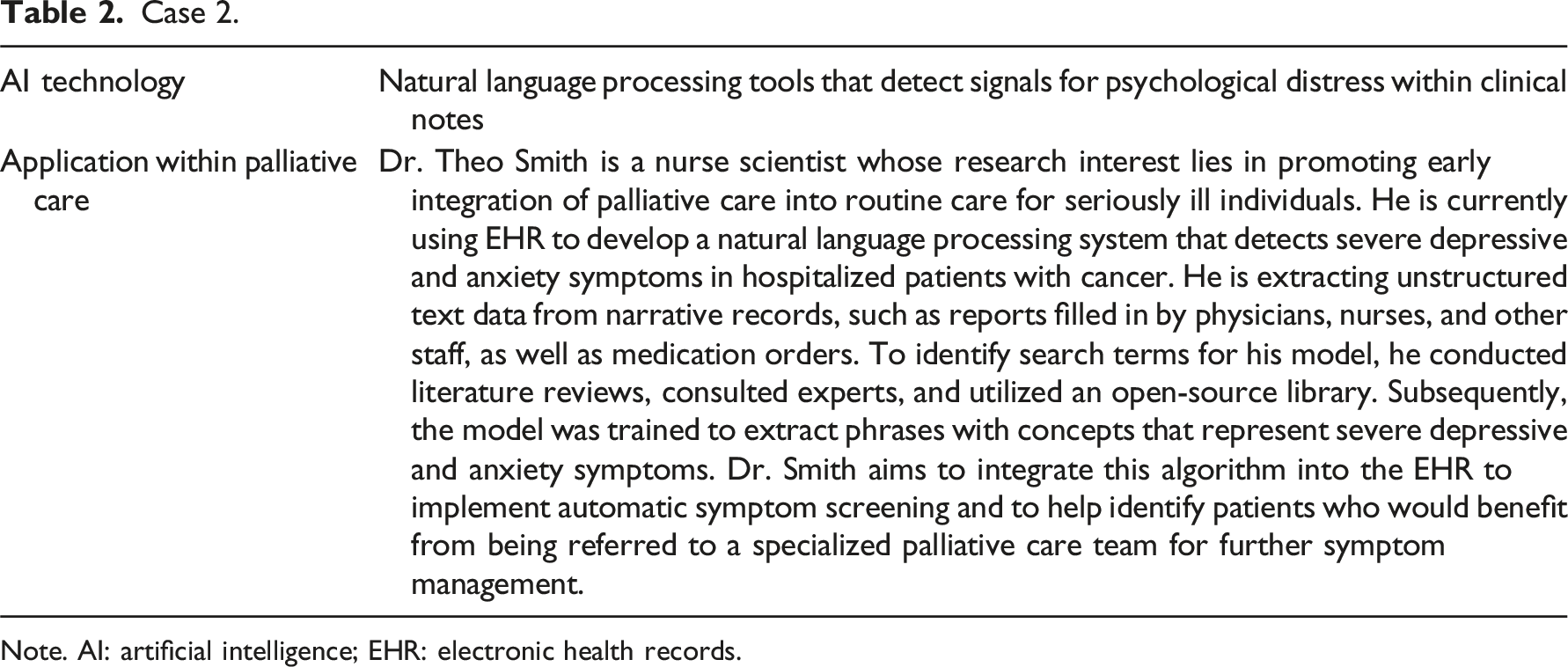

Case 2.

Note. AI: artificial intelligence; EHR: electronic health records.

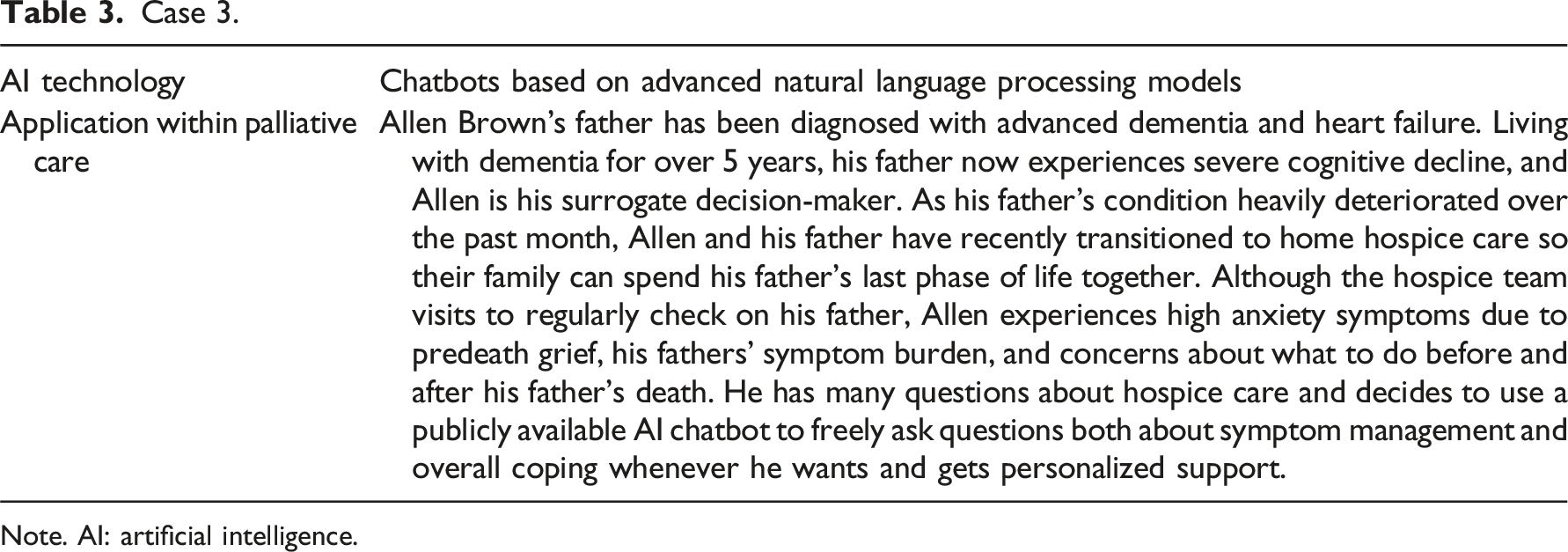

Case 3.

Note. AI: artificial intelligence.

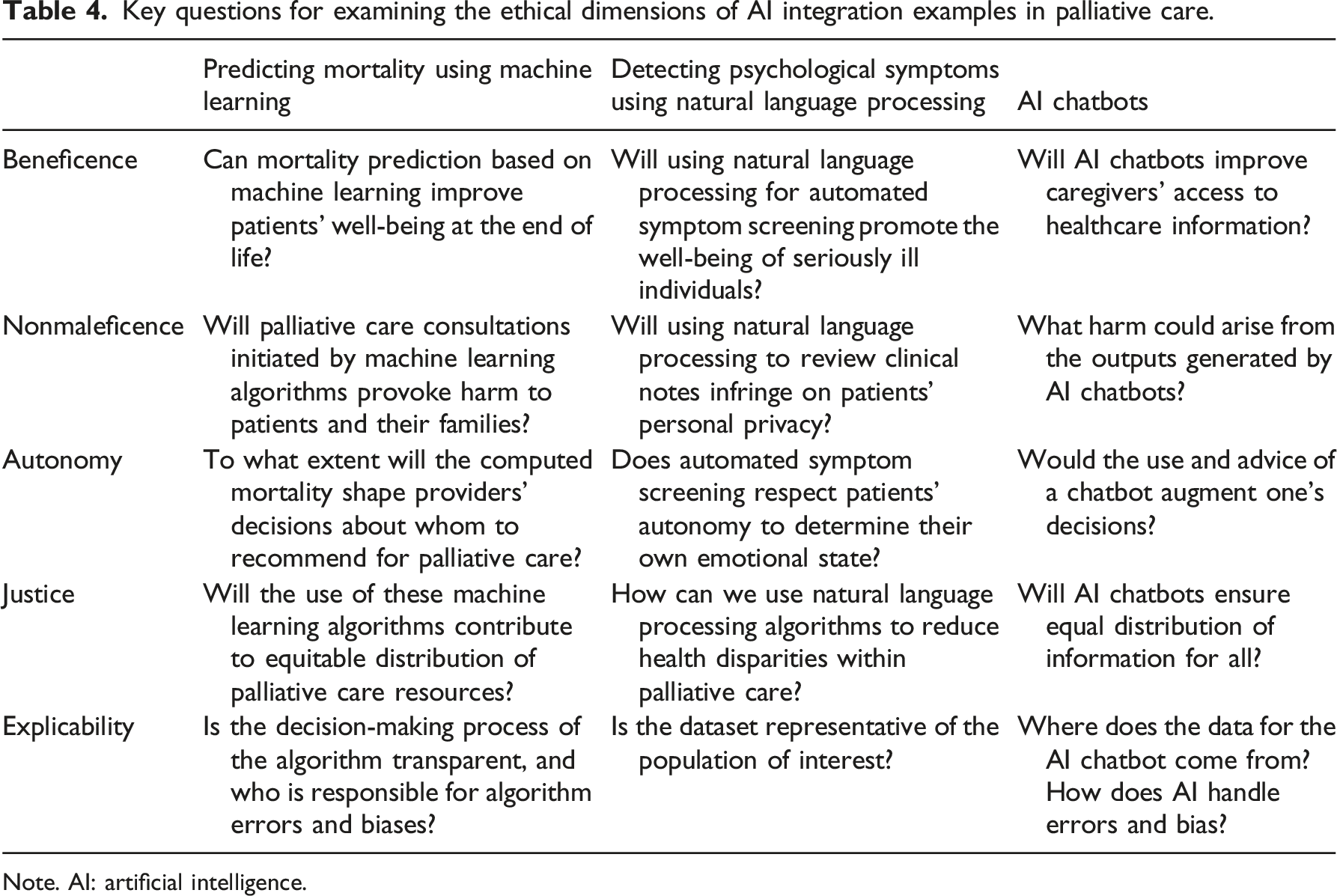

Key questions for examining the ethical dimensions of AI integration examples in palliative care.

Note. AI: artificial intelligence.

Case 1. Mortality-predicting machine learning algorithms used for palliative care referrals

To date, mortality prediction has been the most common purpose of applying AI in palliative care.4,12 Regarding beneficence, mortality-predicting algorithms may promote patient well-being by prompting opportunities for goals-of-care discussions and ACP. Previous research underscores the benefits of discussing goals of care and preferred treatment throughout the course of a serious illness. These include improved quality of life, reduced use of life-sustaining treatments, and increased utilization of hospice and palliative care.21,22 Nonetheless, providers find it difficult to initiate ACP in real-world practice, often due to time constraints, uncertain prognosis, patient and family disagreements, and lack of knowledge and skills in managing ACP.23,24 Case 1’s algorithm could act as a nudge to prompt ACP specifically tailored to the patients’ illness trajectory and ensure that no high-risk patients are being missed in the system. 25 This would be particularly beneficial for high-risk patients who lack access to or awareness of palliative care resources.

However, these AI applications are not free from potential patient harm, because computed predictions may cause distress to seriously ill individuals and families. Some patient-caregiver dyads may feel uneasy about palliative care consultations being initiated based on an algorithm rather than a provider’s assessment, especially if they struggle with accepting terminality. Discomfort caused by the perceived absence of personal interaction in the patient-provider relationship could later escalate to heightened perceptions of mistrust in the healthcare system. For historically marginalized populations, mistrust is already identified as a barrier to palliative care, 26 and when combined with a lack of confidence in AI, it may worsen their sense of institutional and provider-related biases. Conversely, there may be other cases where patients hear about or read the specific numbers produced from the AI model (e.g., 180-day mortality of 30%) and decide that it is too great a risk and refuse possibly beneficial treatments. While patients can, and sometimes do, also refuse clinical care without AI involvement, the influence of AI is unique and one where the ethical, technological, and pragmatic day-to-day challenges have not been fully addressed. The quality of the output depends on the quality of the data used to train and test the model. If the data itself represent certain biases, then these algorithms will reintroduce them. 27 Deploying a biased algorithm in practice may perpetuate harm, especially to the patients whose characteristics differ from the training data. False positives can unnecessarily lead to distress and/or impact care decisions, while false negatives contribute to a systematic oversight of high-risk patients.
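To make the false-positive and false-negative concern concrete, the following minimal Python sketch shows one way an evaluation team might compare error rates across patient subgroups before a mortality-predicting algorithm is deployed. It is illustrative only: the function name `error_rates_by_group`, the record layout, and the 0.3 risk threshold are assumptions introduced here and are not drawn from Case 1 or from any cited study.

```python
from collections import defaultdict


def error_rates_by_group(records, threshold=0.3):
    """Compute false-positive and false-negative rates per subgroup.

    records: iterable of (group, predicted_risk, died_within_window) tuples.
    threshold: hypothetical risk cutoff above which a patient is flagged.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "pos": 0, "neg": 0})
    for group, risk, died in records:
        flagged = risk >= threshold
        c = counts[group]
        if died:
            c["pos"] += 1
            if not flagged:
                c["fn"] += 1  # high-risk patient missed by the algorithm
        else:
            c["neg"] += 1
            if flagged:
                c["fp"] += 1  # patient flagged who did not die in the window

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            # None marks a rate that cannot be computed for this group
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else None,
            "false_negative_rate": c["fn"] / c["pos"] if c["pos"] else None,
        }
    return rates
```

In such a check, a markedly higher false-negative rate in one group would suggest that high-risk patients in that group are being systematically overlooked, whereas a higher false-positive rate would suggest that the group bears more unnecessary distress.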

Another crucial consideration is the extent to which these mortality-predicting algorithms can shape providers’ decision-making. The algorithms could complement providers’ prognostic intuition or offer additional insights.25,28 However, solely relying on this metric for identifying potential palliative care recipients can subtly sway providers’ focus toward patient survival as the primary outcome of interest. Palliative care is inherently holistic, taking into account the patients’ psychosocial well-being, spirituality, and culture, 6 and high mortality risk may not always correspond with palliative care needs. The diversion from the goal of palliative care can be more pronounced when involving automation bias (i.e., overreliance on automated context and ignoring other information, including one’s own judgment) or confirmation bias (i.e., tendency to accept information that aligns with one’s previous hypothesis or beliefs). These cognitive biases triggered by the use of AI can further interfere with providers’ agency in decision-making.

As palliative care is not solely for those facing imminent death, allocating palliative care resources primarily based on mortality jeopardizes its inclusive scope and raises ethical concerns on who should benefit from this resource and who makes that determination. For instance, the National Comprehensive Cancer Network identified several problems that might indicate a patient’s need for palliative care, including uncontrolled symptoms, patient or family distress related to the diagnosis and treatment, and challenges in goal-setting or decision-making. 29 Offering palliative care based on mortality and training algorithms accordingly can imply a prioritization of those at end of life and disregard others who may not be at end of life but nonetheless have greater needs for palliative care. Therefore, while integrating mortality-predicting algorithms may increase the volume of referrals to palliative care, it does not guarantee that patients with high palliative care needs will receive adequate access. Instead, given the great shortage of palliative care specialists, 30 without clear alternatives, these tools may inadvertently burden the few specialists available by directing a greater number of referrals for critically ill patients facing imminent death.

Although machine learning algorithms have become sophisticated in predicting outcomes, the major obstacle of deploying such tools in practice is that we cannot understand why the model made a certain prediction. This makes the algorithm seem like a “black box”—it is difficult to see what is happening inside. When providers cannot understand the logic behind the algorithm’s decision-making process, they are unable to judge whether or not the results are trustworthy. 31 This lack of transparency becomes especially problematic when end-users nevertheless over-rely on the technology. It leaves them vulnerable to the harm associated with potential algorithmic errors and biases, without any clear entity to take responsibility for these mistakes.

In summary, Case 1’s AI-based mortality prediction may help facilitate goals-of-care discussions and ACP for high-risk patients, personalized to their illness trajectory. Nonetheless, if mortality were to become the standard parameter for offering palliative care, it would conflict with the ethical principle of justice because of unbalanced resource allocation and inequitable treatment of patients. It may also increase the burden on the limited number of palliative care providers, create discomfort for patients and families due to AI-initiated referrals, and affect providers’ decision-making processes. Moreover, the potential lack of transparency in the algorithms’ decision-making process further exacerbates these concerns.

Case 2. Natural language processing systems detecting psychological distress

Symptom management is an essential component of palliative care. Automated symptom screening based on AI may, to some degree, correspond with the principle of beneficence by improving patient well-being through timely symptom management. Given the descriptive nature of affective symptoms, related data are often documented as free-text in the electronic health records (EHR). Even when patients do not directly report the presence of psychological distress, its indicators may be embedded in the text documented by the medical staff. Thus, Case 2’s tool can help capture signals of emotional distress and save time and costs by not having to manually review volumes of clinical notes accumulated throughout patient care. 18 However, the algorithm in Case 2 focuses only on a limited set of predefined symptoms. Palliative care addresses the holistic needs of patients (e.g., physical, emotional, financial, spiritual), 6 but the algorithm’s narrow focus might fail to capture the complex nature of patients’ experience with a serious illness. While the use of this algorithm may benefit certain patients with emotional distress, in the palliative care context, this approach does not fully support its comprehensive nature.

Case 2 also raises privacy concerns as it is unclear whether patients are sufficiently informed that third-party researchers can access their clinical notes. Although de-identified, narrative documentation reviewed for potential palliative care referrals may still include personal information about patients and their families, especially when discussing distressing life events or sharing raw emotions. Concerns about serious illness and one’s end of life are particularly sensitive, with expressions of fear, loneliness, grief, and insecurity about death.32 Despite this vulnerability, researchers working with secondary EHR data are typically not required by institutional review boards to re-contact patients for informed consent. Although patients have the right to opt out of having their data used for secondary purposes, there are also cases where patients are not aware of such options or that their inclusion in the EHR automatically makes them eligible for a future study, as described by Ulrich et al.33 Therefore, patients and families remain unaware of the specific research objectives and potential algorithms that could be built using their health information. These issues raise concerns about the original consent for the secondary use of health data, as patients may not fully comprehend the implications, let alone be aware of such use.

Regarding patient autonomy, automated symptom screening based on an AI algorithm may not fully embrace the individualized nature of psychological distress experienced by each person. Unlike structured questionnaires that allow respondents to choose the severity of their depressive or anxiety symptoms, natural language processing systems detect terms or context learned from the training data.10 This approach may not consider the subjective nature of certain symptoms, as patients may describe a stressful event without necessarily feeling that they are experiencing stress themselves. Each person possesses a unique threshold for resilience to distress, and thus retains the power to determine their own emotional state. Although some patients may indeed be distressed without realizing it, an algorithm making this determination on their behalf undermines their right to self-determination and conflicts with the person-centered approach of palliative care.

Nonetheless, natural language processing systems designed to detect symptoms can contribute to identifying systematic injustice in symptom management. Using these algorithms, researchers can more readily analyze symptom data to identify patient subgroups experiencing unequal access to symptom management and palliative care. These findings could then inform targeted interventions to address health disparities within the field. Striving for equitable distribution of palliative care resources and ensuring individuals, regardless of their background or demographics, receive the support they need aligns with the principle of justice.

However, it is important to note that if the data are not representative of the population of interest, employing these algorithms will not rectify health disparity in palliative care. It will rather exacerbate it. If the medical center in Case 2 primarily represents a specific demographic or socioeconomic group, the model’s prediction will be skewed toward that group. This unintended prioritization, combined with the black-box issue, can reinforce existing biases.
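As a hedged illustration of how representativeness could be examined before training, the sketch below compares each group’s share of a training dataset with its share of a reference population. The function name `representation_gap`, the group labels, and the reference shares are hypothetical and are not taken from Case 2; a real audit would need locally appropriate population data and more than one demographic dimension.

```python
from collections import Counter


def representation_gap(training_groups, reference_shares):
    """Return training share minus reference share for each group.

    training_groups: iterable of group labels, one per training record.
    reference_shares: dict mapping group label to its expected proportion
        in the population of interest (hypothetical values supplied by the user).
    """
    counts = Counter(training_groups)
    total = sum(counts.values())
    gaps = {}
    for group, ref_share in reference_shares.items():
        train_share = counts.get(group, 0) / total if total else 0.0
        # Positive gap: over-represented; negative gap: under-represented
        gaps[group] = train_share - ref_share
    return gaps
```

A strongly negative gap for a group indicates that the model has seen relatively few of that group’s clinical notes, which is one situation in which its predictions may be skewed toward better-represented patients.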

Case 2’s use of natural language processing to detect signals of anxiety and depression in clinical notes may contribute to timely management of these particular symptoms and help researchers identify health disparity gaps within palliative care. However, these benefits rely on the assumption that the data accurately represent the population of interest. Furthermore, the signals identified by these algorithms may not align with patients’ actual perceptions or experiences. To support the holistic and person-centered approach of palliative care, the full scope of one’s symptom experience has to be considered, while also safeguarding privacy concerning patient distress.

Case 3. AI chatbots addressing unmet information needs in palliative care

AI chatbots that generate human-like responses have been receiving great attention, especially after the public release of OpenAI’s ChatGPT. Chatbots such as ChatGPT not only respond to user queries at any time, but they also customize the responses based on user preferences and can perform tasks such as summarizing or comparing lengthy literature. Mirza et al.34 recently introduced the potential of utilizing ChatGPT to adjust the reading level of medical documentation, thereby improving readers’ comprehension. This additional layer of informational support is especially important in the field of palliative care, given the lack of awareness and knowledge regarding the specialty among the general public.35

However, the responses of AI chatbots are not always accurate, which may create more harm than benefit. For instance, Kim et al. asked ChatGPT, Microsoft Bing Chat, and Google Bard to differentiate palliative, supportive, and hospice care and provide references. Although the responses were generally accurate, some errors and omissions were still present, and unreliable references were provided. 36 The risk of inaccuracy, when combined with limited knowledge of palliative care and the lack of specialists to validate the chatbots’ responses, can amplify the misconceptions that already exist. This is also worrisome for those in hospice care as terminally ill patients may be less able to advocate for themselves due to their serious condition. 37

The more urgent concern is the possibility of users’ overreliance on these chatbots without exercising their autonomy in decision-making. Questions asked in palliative care often go beyond symptom management; they also address patient care issues intertwined with moral conflicts. Although the decision for comfort care is already made in Case 3, caregivers may second-guess this or have feelings of guilt. 38 Unlike conventional search engines that present a wide range of search results for users to choose from, AI chatbots provide direct and succinct answers to the user’s questions. While they may not offer explicit treatment recommendations for ethical issues, there is no guarantee that the scope of information in their succinct answer, or the way they frame it, is entirely value-neutral. Furthermore, evidence that users perceive AI as a superior form of knowledge is emerging 39 —users may accept AI-generated responses unquestioningly, especially if they lack confidence in their own judgment or are unaware of AI’s limitations. Given the lack of social support for hospice caregivers, 40 the chatbots’ perceived non-judgmental and attentive tone could also result in a positive bond, 41 which may add another reason for overreliance.

AI chatbots in palliative care may also worsen the already existing divide caused by unequal access to digital infrastructure. Even among persons with internet access, a new form of disparity may arise based on AI chatbot subscriptions, as users encounter varying information quality depending on whether they use the free or paid version.42 Moreover, the quality of a chatbot’s output can differ depending on the language in which it is used. These attributes can lead to unequal distribution of information, adding another layer of inequality to the conventional digital divide.

Explicability is an especially important principle to consider in Case 3. Currently, state-of-the-art AI chatbots are corporately owned and their training data are not publicly available.43 It is unclear whether or how companies have implemented strategies to mitigate biases within the large amount of data and ensure fairness, particularly for marginalized populations. In fact, Zack et al.44 demonstrated the potential for ChatGPT to perpetuate societal biases by overrepresenting racial stereotypes in diseases and offering different directions when the demographics in the prompts were modified. Compounding these issues of opacity, AI chatbots cannot be held accountable for their responses and the associated impact.

In summary, AI chatbots may benefit users by improving access to health information tailored to their needs. Yet, ethical concerns persist, including the potential for overriding users’ autonomy in decision-making and the risk of harm caused by inaccurate yet persuasive responses. These issues require extra attention in palliative care, where dilemmas often involve moral conflicts and there is a general lack of understanding about its services and concepts. Moreover, new disparities may emerge based on user subscriptions or language barriers. Finally, the fact that end-users are unaware of the strategies used to address potential bias in the data raises serious concerns, as does the unclear entity responsible for the chatbots’ impact.

Discussion

In this critical paper, we have explored whether AI utilization within palliative care aligns with the principles for developing ethical AI and can facilitate the overarching goal of supporting seriously ill individuals and their families. Indeed, AI offers many opportunities within this domain, such as prompting goals-of-care discussions and ACP and promoting timely management for symptom burden. AI can also generate health information for patient-caregiver dyads personalized to their inquiries. Nonetheless, we highlight several gaps that need further attention to ensure AI better supports palliative care.

The main concern is whether AI, in shaping or augmenting the decisions of patient-caregiver dyads and palliative care providers, can override their agency and autonomy. We pointed out cognitive biases that impact users’ decision-making across various AI applications. Tschandl et al. also demonstrated the possibility of AI misleading clinicians, even when it contradicts their initial impressions.45 This issue becomes more concerning given the ongoing uncertainty surrounding the extent to which end-users are accountable for the harm caused by decisions involving AI.46 The vulnerability of patients with life-limiting illnesses further amplifies the ethical concerns about autonomy and accountability in palliative care. Therefore, we strongly suggest that providers view AI tools as a supplement to their practice, rather than a substitute for human effort, at the individual, institutional, and policy levels. Also, before AI predictions are deployed within standard care, specific guidelines describing the extent and context in which providers can disregard these predictions must be established to protect providers’ own decision-making autonomy without fear of retaliation.

Another ethical concern is the possibility of AI perpetuating harm through biased data. Obermeyer et al.47 presented evidence for racial bias in a widely distributed algorithm, and Diaz et al.48 found both explicit and implicit age-associated bias within various natural language processing tools. To mitigate these potential harms, ethical concerns could be approached as computational issues that require further attention. For instance, bias could be reframed as an issue requiring focus on label noise (i.e., meaningless or corrupted data caused by mislabeling that does not adequately reflect the true attributes of the target variable) as well as feature noise (i.e., erroneous or missing data).49 Techniques such as fine-tuning (i.e., applying pretrained weights to a smaller, application-specific dataset50) have also been introduced to make algorithm outputs more representative. Other computational techniques that can potentially address ethical concerns regarding the data should be further identified. Moreover, beyond generating accurate outputs, emphasis should also be placed on AI’s ability to provide explanations for the derived outputs. This is particularly crucial for AI chatbots, which are prone to presenting erroneous outputs as if they were true. Providing explanations as to why such responses were generated can enhance trust and empower users to make a decision based on the rationale given.51 These efforts will not only safeguard end-users from potential harm but will also improve transparency, ultimately enhancing users’ informed AI usage and autonomy in decision-making.

We have also highlighted the potential distress that patients and caregivers may experience when utilizing AI in palliative care. Distressing emotions could result from depersonalization, data privacy concerns, potential interference with one’s autonomy, and the lack of access to digital infrastructure that supports AI usage. A recent Pew Research Center report also found that 52% of US adults expressed concern about the increased use of AI in their daily lives and 60% would be uncomfortable if their provider relied on AI for their care.52 Given this public uncertainty, if AI-based predictive analytics are to be deployed in standard palliative care practice, the extent to which AI has been utilized needs to be explicitly shared with the patient. Because some patients may not want to know their computed mortality risk, obtaining informed consent before disclosing such information could also be an option to ensure patient autonomy and respect for their preferences. Much remains to be learned about the use of AI within the clinical arena, especially as it relates to palliative care services. There is always an opportunity to improve communication between patients and their providers, and additional training in informed consent practices and disclosure of AI outputs would be beneficial. Referrals for psychological support may also be needed. If an output is later shown to be erroneous, providers should also know how to inform patients without causing further mental harm. Moreover, the possibility of secondary use of EHR data should be communicated more explicitly to patients and families at the system level to better safeguard data privacy.

Finally, equitable allocation of resources is crucial for palliative care, as the field emphasizes support for all seriously ill individuals, regardless of age, care setting, diagnosis, and prognosis.6 On one hand, AI could improve access for high-risk individuals who lack awareness of palliative care, and researchers could identify health disparities within the field using these algorithms. On the other hand, depending on the main outcome of interest and the quality of the data, AI may still inadvertently prioritize a certain group, which diverges from the original intent of palliative care. Further discussion is needed to agree upon which outcome should guide these algorithms to ensure equitable distribution of palliative care resources. Moreover, the costs and training required for advanced technologies introduce new disparities in access. AI developers targeting patient-caregiver dyads should consider how to make the technology accessible and user-friendly for marginalized populations. For example, utilizing a user-centered or participatory design approach during the development and testing of AI tools can help designers better understand the specific situation and needs of end-users.53

Conclusion

Palliative care is specialized care for seriously ill individuals and families, with the goal of improving their quality of life and alleviating suffering. Guided by principles for ethical AI, we examined the ethical dimensions of three cases using AI in the palliative care context. Despite the opportunities it can offer for the field, it remains unclear whether AI can ethically support the person-centered and holistic intent of palliative care. Further discussion is needed to identify outcomes future AI designers should focus on to best reflect the aim and scope of palliative care. The needs and situations of the palliative care population should be comprehensively reflected in these tools, with a specific focus on inclusivity for marginalized stakeholders. Before its integration into practice, additional training should be established for providers in effectively communicating with patients and caregivers regarding AI usage, as well as concrete guidelines to mitigate patient distress caused by its disclosure. More attention is required in addressing biases within the data and promoting explainability. These efforts will help strengthen the ethical and responsible use of AI in palliative care, ensuring that its use facilitates the goal of supporting seriously ill individuals and their families.

Footnotes

Acknowledgments

We would like to thank Dr. Sara F. Jacoby for her invaluable feedback and support.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.