Abstract

In today’s age of revanchist nationalism and xenophobia, ‘identity’ is commonly understood as a question of collective belonging. But another valence of identity is highly empirical, associated with the individual and constantly enforced by and beyond the state: ‘ID’, or ‘identity verification’. A focus on ID is novel because a new kind of speculative identity verification is advancing apace: digital identity. We argue that, distinct from biometrics and other forms of identity verification such as fingerprints that seek to tie an individual to their body, digital ID enables and heightens a search for intent that is social and speculative. This is a novel subject and space for borders and policing. States and the firms they hire to police their borders are increasingly concerned with digital identity as the means to know individuals, and especially individuals who are beyond their borders but whose intent they surmise is to cross and harm. We examine two disparate cases of digital identity and efforts to police them in order to conceptualize what digitalizing identity means for bordering specific individuals. This effort to identify via privately held data carries a focus on particularly vulnerable populations such as refugees and missing people, reinforcing collective identity and borders against these ‘strangers’, while tracing a trajectory further into the material world of digital capitalism.

Introduction

‘Identity’ is a term of resurgent importance. Its renewed significance owes to new iterations of nationalism, populism, and efforts to reinforce the relationship between race, patriotism, territory and nationality. And yet identity is vitally a question of the individual, and ‘questionable’ minorities, whose policing reiterates collective belonging. Long have states created and managed techniques to identify the suspect body with identity verification (ID) to collapse the possibility of collective identity being infiltrated by ‘strangers’ (Simmel, 1950) of unknown identity. The state’s own legitimacy and struggle to maintain order is materialized in ID documentation and techniques to know the individual with birth certificates, driving licences, marriage certificates, addresses, or even branding or tattoos. These serve to differentiate and identify identity at scales; to be available in event and interaction, such as when a border or policing agent asks: ‘Show me your ID’.

This is a global question. Scholars from across the social sciences are right to show how borders make the world (Walia, 2014; Walker, 2010), how practices of bordering separate the location from the space (Gazzotti, 2021), and that borders are about policing racial and class differentiation (Ali, 2020; Danewid, 2022). When it comes to identity, scholarly work points to states’ deepening use of biometrics for asserting domestic borders as a proxy for an international war on terror (Amoore, 2006). Where the territory of the state includes a wider project of bordering with biometric segregation, it creates an elsewhere frontier of ‘war-like architectures’ (Amoore, 2009). To identify individuals, biometrics are used as a means of ‘pre-emptive mobility governance’, to turn away unwanted arrivals far from the border while also ‘fast-tracking wanted travelers’ (Broeders and Hampshire, 2013).

Biometrics are a necessary concern for critical interrogation. Here, in addition, we argue for greater recognition of digitalization and its deployment to develop identity recognition and verification that thickens proactive methods of identifying and policing who people are, where they come from and what their intent may, potentially, be. These methods draw upon the digitalization of biometric techniques such as facial recognition, fingerprints and iris scans, and yet they cannot be reduced to these. This is because digital identity recognition and verification (digital ID) is also rooted in an expanding effort to digitalize, advanced foremost by capitalist firms. Whether derived from a social media handle, a device ID or the international mobile equipment identity (IMEI) of a telephone linked to bank or other accounts, the digital footprint of individuals is derivative of new spatiality and a constant search for a profit margin. This data, rooted in people’s digital social relations and digital history, now serves as a means to identify and police. Though created and curated by companies, the way of knowing and identifying ‘a body’ rests in its social relations.

Decoupling biometric identification and digital identification matters because the two have different moral underpinnings. Biometrics shrink behaviour and responsibility to the real and potential acts of a body. Digital identity, by contrast, is derived from relations between people, and, as such, offers much more to companies and governments who seek to speculate. Digital ID is a means to conjecture about what could be, based on who a person knows, what they do, where they have been, and what choices they have made with others. Unlike biometrics, digital identity is future-oriented and offers inference about intent and trajectory. This is an especially proactive and anticipatory practice that gathers vast but targeted data from before the border as a ‘30 second examination’ where ‘identity, documents and narrative must align’ (Salter, 2008: 376). Amidst new flows of human movement and ubiquitous computing, digital ID makes the border a speculative future being built around the digital social context of populations. In other words, if biometrics are about knowing individual bodies, digital identity is about placing those bodies within a web that reveals a larger arena of surplus, digitalization, the creation of digital social relations and, ultimately, of global inequality. While conceptually separate, these two are commonly elided in both practice and scholarly discussion.

Biometrics and digital ID must also be seen as connected in key ways. Contemporary forms of digital identity also use biometrics. Each is increasingly used to predict the other, such that the legitimacy of a digital ID is strengthened if it can be connected with a body via biometrics. A facial recognition technique to identify an individual uses selfies and photos from social media scraped by firms such as Clearview AI. Or it could use an individual’s iris scan to legitimate a search of a Facebook account to know if this iris has a criminal history, record, or intent. In a world of digital fakes and images that are mundanely digitally generated, biometrics need to be twinned with digital ID in order for a company or state to claim an individual actually exists. Moreover, making this connection bypasses a need for state-issued identity documents, while countervailing efforts to create digital forgeries. But whether orchestrated through Microsoft’s Azure or Sam Altman’s World ID, these efforts to combine biometrics and digital identity are derived from fear. To make their product, they intervene in populations who have real reasons to mobilize and seek a more just world. As we will show, the effort to identify seeks and finds a particular category of destitution, and uses it instrumentally. Refugee camps, the urban poor and family members of the disappeared have all become the target – the base matter for making money, and borders.

We argue, then, that the making and use of digital identification is about identifying and policing suspect populations before they are biometrically known and that this effort is rescaling the project of knowing individuals and their social relations. We emphasize how the bureaucratization of identity verification by states matters, and show how it works through a selective and speculative expansion of digital identity verification by states and their capitalist partners beyond the regimentation of their own populations and borders. Through a relationship with capitalist firms that escalates the connection between profit and digitization, states and companies espouse the ability to know and make claims about every aspect of those arriving or moving within their borders. This process depends on inferring identity based not on a shared kind of citizenship, or on the body, but on digitally generated metadata, relations with preconceived categories of marginality, including notions of race, class, deceptiveness, propensity to commit a crime, ‘ideology’, and so on (Benjamin, 2019; Broussard, 2018).

To demonstrate, we consider two apparently disparate cases of populations outside of states who are the subject of speculative digital ID efforts. First, in São Paulo, Brazil, where between 20,000 and 25,000 people are registered as missing per year, Microsoft gained access to a database of tens of thousands of images and digital identity data from a mothers’ group, Mães da Praça da Sé, made up of thousands of relatives of disappeared people. This data, meticulously collected and aggregated by the group on a case-by-case basis since the mid-1990s, has been a crucial way for people to find their missing loved ones, and to keep their memory alive. Second, on the edges of the European Union, migrants and potential asylum seekers are subject to efforts to gather individual-level data about their motives and morality. The EU invested €4.5 million in a phrenology-based ‘deception detector’ tool, iBorderCTRL, which prompts nationals from ‘third countries’ with a 3D interviewer, targets micro-expressions, and combines its facial analysis with other national and supranational law enforcement and migrant-related databases to establish whether someone might be ‘truthful’ enough to travel.

By placing these two cases alongside each other, we maintain that apparently discrete and disparate efforts to demystify the identity of a category of real or potentially transient individuals through digital means have a common focus: the curation of a globally distributed digital ID system attuned to imagined threats in waiting. To surmount the possibility of a crossing, these digital efforts make border regimes and the defence of collective identity elastic, seeking to foreclose any evasion long before an individual arrives at a material frontier – or perhaps even decides they want to.

Our contribution, then, is threefold. First, we refocus on the materiality of documentation and the making and equivocated use of ‘ID’ historically to show how digital ID is a new cog in bordering techniques, distinct from previous regimes centred on the use of biometrics to verify the ‘owners’ of paper-based documentation. Second, we reveal how digital identity making by states and firms beyond their borders is crucial to the rescaling of everyday border policing where the digital and biometric can go hand in hand. And, last, we suggest that these processes increasingly affix the border to particular and suspect bodies, even if these bodies are elsewhere, disappeared, or dead.

This work is based on a cumulative nine years of ethnographic and qualitative research carried out in Latin America, Europe and the United States. While not carried out discretely for this particular collaboration, the authors undertook this work in the same moment and while in constant dialogue, encompassing interviews and participant observation of police, prosecutors, state institutions and tech firms, as well as longstanding and ongoing engagement with family members of the disappeared in Brazil (Denyer Willis, 2021, 2022, 2023) and with refugees and their communities in Berlin, New York and elsewhere (Mahmoudi, 2025). These cases, then, emerged not from a positivist methodology of hypothesis generation and testing but from grounded recognition of cognate processes, patterns and categories emergent from an in-parallel epistemological attention to the digitization of life at the margins, and that is empirically rooted in interviews, participant observation, life histories and public documentation that attend particularly to questions of the contemporary disappeared and refugees in flight. These methods and their connection to each of the cases in question are discussed in much greater detail elsewhere, and both cases discussed here are a novel elaboration of earlier work for this entirely new contribution (Denyer Willis, 2022; Mahmoudi, 2025).

States and the selective identity regime

Whether explicitly or by innuendo, scholars have long held that the work of the state is to identify and order populations (Acemoglu et al., 2013; Vu, 2010). Some argue that this is a metric of the ‘quality of governance’ itself (Moore, 2004), and yet for others such dominance is an ongoing and always incomplete effort to make populations and space legible (Scott, 2020). Why states retain an uneven knowledge of their populations raises larger questions about the rationality of government, as especially germane to whom it protects by identity and whom it seeks to identify, and why. Explanations for uneven government of populations can be parsed along at least two lines: states lack capacity to govern all, or states lack the will. In the frame of identity, we see the latter. Selective or wilful inattention to verifying and caring for populations is not happenstance, but a consistent condition of states’ concern for enabling some lives over others, such that some must be known and cared for, while others should be left to ‘lesser knowledge’. This differentiated governance can even raise questions about how to assert that a citizen actually exists, since uneven attention to populations means some are never registered at birth (Hunter and Brill, 2016). In this way, the knowledge–power relationship can be seen as an ‘ignorance–power’ condition where the state takes on ‘split personalities’ that lead to divergent outcomes (Barnard, 2019). Differentiation in justice, life chances and ‘premature death’ is the point (Gilmore, 2007), such that the work of apparently universal institutions – police, law – has disaggregating and differentiating effects.

‘Identity’ thus requires special consideration, and in at least two processes. First, identity signifies a national project or projects of collective belonging, a purportedly shared understanding of common interest and benefit – what Singh (2011) calls ‘we-ness’. Such a shared understanding of identity could enable projects of mutual benefit, care and trust in redistribution, such that being a part of a common identity would mitigate suffering and inequality and enable participatory projects of inclusive governance (Brubaker, 2004). While certainly ‘imagined’ (Anderson, 1991) social and historical processes of community, these processes are nonetheless constantly curated and enforced by political interests, actors and violent relations. This kind of identity making is conservative and exclusive, ‘predicated on reproductive heteronormativity’ (Spivak, 2009: 75). Race, gender and class inequality have long been fundamental questions to the unequal making of both national and subnational identities that can be as sharp and local as ‘white domination and resistance by people of color’ (Omi and Winant, 2014: 3), or as global via colonizing legitimations and their visions of racial hierarchy in world making (Bell, 2016).

Second, differentiating populations means identity becomes a mundane street-level question of an almost binary sort: is an individual identifiable? A question of police and policing, requiring individuals to have and carry government-issued identity documents (ID) enables identity border-work for inclusive and exclusive filtering (Tawil-Souri, 2011), where ‘IDing’ particular individuals hinges on a concern for real or imagined threat, and a project to contain threats against an imagined identity population. Street-level identity verification – the process – uses ID – the material outcome of state bureaucratization of individuals – to recognize and police individual behaviour that does not align with an imagination of collective belonging. Whether used to clamp down on ‘anti-social behavior’, ‘loitering’ or ‘murder’, the use of ID to police individuals transcends law; a ‘stop and frisk’ is as much about imagined threat as it is about bodies out of place, such as in defence of ‘White space’ (Anderson, 2015). It is not happenstance that forms of ID are commonly rooted in identifiers of banal and heteronormative belonging – marital status, name of parents, race, fixed address – in addition to rudimentary biometric data – photograph, fingerprints – about who the individual is in body form (Abe, 2006).

This is not a linear or total project, of course; it is a regime of selective identity. ID cards and their uneven use are also highly performative of idea(ls) and imaginations of identity – nations produced or fractured by borders as colonial artefacts (Alimia, 2019). Moreover, some cities in the United States have sought to usurp the state’s sovereign command of ID as affixed to immigration status by providing local forms of documentation to undocumented individuals to allow them to access public services and benefits (De Graauw, 2014). Conversely, some states have opted for utilizing strategic ignorance in the interior (McGoey, 2012), while posturing more stringent recording policies (Kalir and Schendel, 2017), thus presenting identification as both a deterrent and, at the same time, an essential requirement for accessing e.g. state services. For these disparate reasons, a worry with whose identity and personal interests identity documents and verification serve makes odd political bedfellows, bringing together libertarians, socialists and minorities, among others, who recognize real and potential surveillance by states via verification of individuals (Lyon, 2009; Thierer, 2001).

Some techniques of bureaucratic identity making and identity verification are hegemonic. Birth certificates, driving licences and other government-issued ID hold widespread sway as authoritative means for identity and mobility to be regimented and policed within countries. Some countries, like Brazil, have national ID cards that include a fingerprint, photo, name of mother and father, place and date of birth and other characteristics. The advent of this kind of ID by the Brazilian government, required by law to be carried on the person, included the creation of an analogue database of simple biometrics. However, the making of this kind of ID was not happenstance; it was created by a Cold War regime intent on asserting order and identifying political subversives. Nevertheless, today millions live in Brazil as sub-registrados – undocumented by the state – and can never even be counted as murdered or disappeared as a result (Escóssia, 2021).

Long rooted in a governance preoccupation with the vagrant, traveller and unknown ‘stranger’, the ID logic of knowing and domesticating individuals remains a pressing concern today (Wilson, 2017). This ‘anti-nomadic ethics’ tethered to identity belonging in a circumscribed space betrays a fixation on people who do not belong, who cannot be domesticated, and on the likelihood that this mobility or unbelonging equates to evasion, ill will or criminality (Axster et al., 2021; Kabachnik, 2014). Or as Simmel (1950) once wrote, ‘the stranger’ presents a problem because they are not ‘radically committed to the unique ingredients and peculiar tendencies of the group’, being more likely to see these odd fixations, tropes and mores as dubious.

Under this light, the person whose ID can suggest bad intent must be proactively identified. ‘The criminal’ is more than a legal category; it is a modus for reasserting collective identity via that which threatens it. From the making of witches (Currie, 1968) to mass imprisonment of ‘super-predators’ and ‘drug dealers’ (Baker and Simmons, 2015) or the reification of the ‘terrorist’ (Tilley and Hopkins, 2008), the effect is to shore up a differentiation between who belongs and can benefit, and who does not and should not. Such striations of dis/belonging exist within states as much as they also become questions of border enforcement. Checkpoints, stop-and-frisk and ID requests are not instances, events or data points; they disaggregate identity as collective and identity as individual and verifiable in a constant effort to make nations (Bishara, 2015).

Supra-state forms of identification have a similar hegemony in materiality and process. The passport is the near unitary means for knowing individuals in the international order (Salter, 2008). Powerfully affixed to collective identity hierarchies on the world stage, a passport evokes privileges of particular modes of identity in a constant comparative exercise. Its use and legitimation by states has long served as the primary – and highly analogue – means of identity recognition and assertion beyond and between states. While the passport can be used to assert identity within states and has important symbolic value (Pogonyi, 2019), everyday intra-state kinds of ID lack legitimacy elsewhere. Under these conditions, the passport collapses both collective and individual processes of identity into a single kind of documentation, evidence of both national belonging (Bivand Erdal and Midtbøen, 2023) and the ability of a government to deny entry to an individual.

For all of the power of government-issued identity documents, and the means to verify individuals within borders, an empirical problem arises in a moment of ubiquitous computing and datafication: What is required for an individual who leaves their own country and does not have any of these forms of documentation, was never provided any, or could not afford the rising cost? And how can their actual reason for crossing be known?

Digitizing identity

Borders and biometric ID systems have captured much scholarly discussion (Amoore, 2006, 2009; Balzacq, 2007; Broeders, 2007; Dijstelbloem and Meijer, 2011; Dunleavy, 2006; Muller, 2010; Prins et al., 2011; Vaughan-Williams, 2010). For good reason, this literature has been chiefly concerned with border security and migrant interception, often taking refugee camps or borders as sites of analysis (Chouliaraki and Georgiou, 2022; Molnar, 2024; Villa-Nicholas, 2023). These works highlight the practice of border digitalization as an effort to ‘remote control’ prospective migrants stemming from the War on Terror (Broeders and Hampshire, 2013), as well as how border and, in particular, biometric technologies serve as symbolic fronts for the race to the bottom on stricter immigration policy in Europe (Dijstelbloem and Meijer, 2011). Others have interrogated the manifestations, expressions and experiences of the border beyond its physical demarcation (Bauder, 2011; De Genova, 2016; Mbembe, 2019; Paasi, 2012; Salter, 2012; Vaughan-Williams and Pisani, 2020). This renewed attention suggests there is a ‘death of the line’ and proposes an important shift to understanding the space as ‘the suture’ (Salter, 2012) where problems of violent differentiation are plastered over but never really mended. At the same time this ‘control of citizens, travellers, migrants and illegal aliens is coming closer to their bodies’, generating what Stenum (2017) refers to as the ‘body-border’. As critical race and migration scholars such as Maynard (2019) and Mezzadra (2020) have argued, borders expose a colonial continuity of the racializing objectives of the border; it promises ‘death, removal and containment’ at, between and beyond the border (Achiume, 2021; Maynard, 2019).
Walia’s invitation to reorient ourselves to the border as a deeper (and deepening) imperialism, catalysed by the dawn of racial capitalism (Robinson, 1983), allows us to conceive of the border as an increasingly abstract space, present wherever the possibility for the reassertion of state identity, capital extraction and threat neutralization arises.

The combination of preemptive mobility governance and new ways of measuring and intervening in the movement of suspect populations is a powerful rationale for using data to identify and divine intent. In an online sphere where users proliferate and speed of transactions matters, Silicon Valley companies work diligently to create a new regime of digital identity verification along these lines. Rooted in online histories, profiles and identities individuals have created online over the last 20 years, the push to digitally identify users is rapidly changing how people are digitally documented and regimented around the world. Taking identity verification far beyond what states can do, a specific new tranche of digital capitalism sets out to identify everyone on earth. Done especially to make digital capitalism inevitable, private sector solutions are ‘built upon large centralized silos that store identity data’ (Sedlmeir et al., 2021: 604) independent of user knowledge. Digital identity verification is ubiquitous for digital purchases and lending, online interactions, seamless log-ins, and is one of the primary means to keep platform capitalism safe and advancing at an ever faster pace (Denyer Willis, 2023). It does so with biometric data but not solely; data used to recognize and identify people draws deeply from socially produced digital contexts – online purchase histories, rate of scroll, interests and engagement, interactions with others on social media, the age and use of an email address, and so on – that provide a convincing depth of profile about individuals that can then be pinned down to device IDs, an identifier, locations, names and places (Pybus and Coté, 2022).

Along these lines, Zuboff (2019) describes the use of metadata by firms and states as a new era of surveillance capitalism, which ‘lays claim to private experience for translation into fungible commodities that are rapidly swept into the exhilarating life of the market’ (p. 10). This, she claims, is a fundamental shift in capitalism: ‘Under surveillance capitalism, behavioural data that were once discarded or ignored were rediscovered . . . as a means of generating revenue and ultimately turning investment into revenue. [Users] became a means to profit in new behavioural futures markets in which users are neither buyers nor sellers nor product’ (Zuboff, 2019).

Nevertheless, Zuboff’s approach is limited by its inattention to unevenness. Speculative forms of digital identification, which indeed surveil, are highly and deliberately partial. In addition, much of the behavioural metadata central to digital identification is based on conjecture about abstract racialized categorizations of identity, forged out of white imaginaries about the other (Brock, 2018). Surveillance capitalism is a helpful but limited frame that requires greater materiality and specificity.

To this end, we see this regime at the border and upon migration (Miller, 2019), where digital technologies increasingly determine fates (Molnar, 2019). Proactive practices of bordering increasingly converge with private efforts to digitally identify people via vast amounts of privately held data stored independent of state-issued documentation. Under these configurations, governments from privileged global regions turn to the private sector to make unknown people knowable. Still fixated on the ‘stranger’, digital identity at the border means finding and asserting knowledge about aspects of individuals who are not their own citizens and to demystify new ‘frontiers’ of appearance, behaviour, beliefs and intention. Digital identity is a primary means to set the standards for what is understood as a legible – or even good – global border subject (Schulze Wessel, 2016).

This includes techniques for individuals who may never have had a passport and who may not carry state-issued documents. To achieve this, technology companies are responding with digital fixes that seek a tether with biology (Madianou, 2019; Mirzoeff, 2020). This might enable the detection (via satellite imagery and remote biometric surveillance), categorization (via advanced computational classification and behavioural prediction systems), verification (contingent on a vast array of cloud computing and biometric recognition devices) and, ultimately, control of moving bodies. Digital and biometric forms of ID extend or deny forms of state-centred identity by ‘hard-coding’ parameters that determine an individual as threat, vagrant, or promise as a good citizen of capitalism (Axster et al., 2021; Stenum, 2017).

Such processes of border datafication (Villa-Nicholas, 2023), fortification, expansion and externalization push the border closer to bodies of those fleeing persecution, long before and after they reach the frontier of the European Union or the United States. This transgressive process makes a broader set of ‘risks’ available to the speculative calculus of a ‘good’ versus ‘bad’ binary, thus generating an ever-growing list of static variables and probabilistic conditions that flag particular individuals and communities for capture. The ever flexible and expanding instrumentalization of amorphous ‘risk’ helps bolster the border-surveillance industrial complex (Akkerman et al., 2021; Miller, 2019), guaranteeing turnover of capital from states and multilateral organizations to tech companies. Tech companies, in turn, promise to demystify, datify and remove the unknown threats to nations obscured within bodies, intent, likeness and potential. Instead, these become ways of knowing and intervening.

Digitizing the disappeared: São Paulo and Microsoft

The city of São Paulo might be one of the world’s most celebrated global cities. It is also, though, one of the world’s most unequal urban regions, made iconic in widespread images of luxury high-rises abutting informal settlements. Amidst this acute inequality is a surging kind of problem: between 20,000 and 25,000 people are reported missing each and every year by family members and loved ones. Where missing people go, and why, is a complex problem with manifold layers and pathways (Denyer Willis, 2022). Thousands of people die nameless on city streets every year, buried as indigents in city cemeteries. Others disappear into an expansive prison system that has grown by 575% since the 1980s, where two in every five inmates are held pre-trial. The city and state are also home to one of the most intransigent organized crime groups in Latin America: an organization that both attenuates violence by asserting rules and is behind a disturbing new pattern of mass graves – cemitérios clandestinos – that increasingly dot the city (Biondi, 2016; Denyer Willis, 2015; Denyer Willis and Durán-Martínez, 2024). Facing this violence, others flee at a moment’s notice from their homes and loved ones, seeking to hide from the organization in other cities, other neighbourhoods, or on the street. Yet others disappear because of a more long-standing problem: police violence and the terror it creates (Alves and Costa Vargas, 2017). Every year, police kill upwards of seven hundred people, some of whom are never found, or whose bodies disappear within the state’s medical institutions, never to be found by those who search (Gennari and Carneiro, 2016). All of this violence also creates a different kind of disappearance: those who flee.

Amidst these conditions, disappearance is more than an ancillary or happenstance problem. Multiple people vanish every day, leaving loved ones to search for answers, bodies and some kind of resolution. Like elsewhere in Latin America, the scale and scope of this problem seems unprecedented (Wright, 2017). New forms of social organization – incipient communities – attest to this reality. One of the most important is a group of mothers that now gathers regularly in the central square of the city, at the Praça da Sé, and which has become well known as the Mães da Praça da Sé – the Mothers of Cathedral Square. These mothers echo the gendered nature of disappearance, hearkening back to other well-known groups of people organized around searching and finding, such as Argentina’s Madres de la Plaza de Mayo, which have long been a crucial voice against totalitarian violence in the region (Cuchivague, 2012). But São Paulo’s mothers of the contemporary disappeared are also different from the madres in other terms; they are generally not White and they are markedly poor. They arrive at the square not by walking around the block, but by spending hours on multiple forms of public transit to arrive downtown.

Run as an NGO by a mother whose daughter vanished in the 1990s, the Mães da Praça da Sé does critical things for the growing population of people left behind. They provide resources and institutional knowledge, guide loved ones of the missing about how and where to search, and enable a kind of solidarity between people whose lives have fallen apart, and who find little respite or care from the state. Perhaps most crucially, for more than 30 years the organization has collected records of the missing: names, biographical information, photographs, basic life histories and related details about tens of thousands of people that cannot be found. Most of these people are men.

One of the most common sentiments in the community of people left behind by the missing is that people and institutions do not care about their problem. It is difficult to find concern, to attract attention, to drum up moral outrage that might culminate in search parties, the creation of a state-made DNA database, or a push for institutional reforms to deal with a rising tide of disappearances. As people struggle mightily to get the state to do something to help, manifestations of interest and all potential solutions are welcomed, in the hope that they lead to even the most modest answers to a problem defined by a shroud of questions. If even one person can be found, it helps.

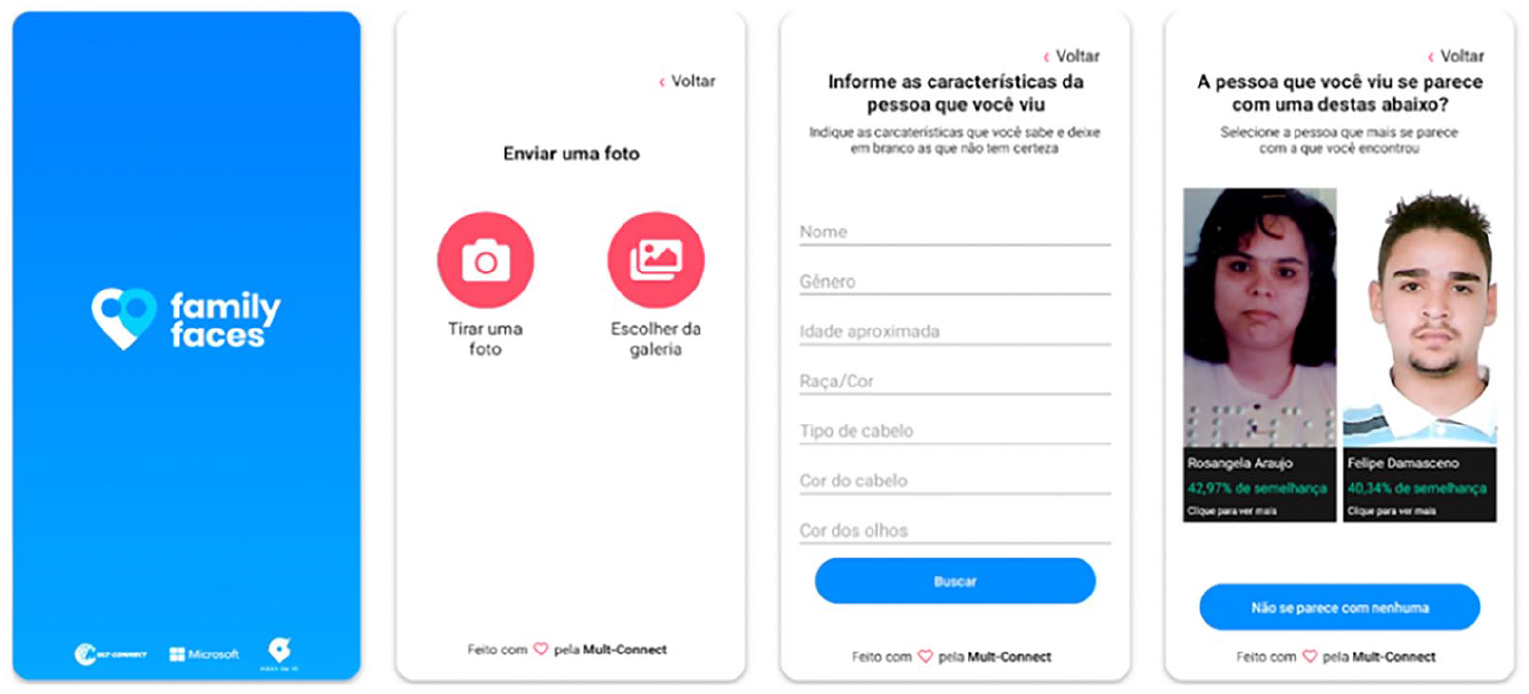

When Microsoft came calling, the mothers took notice – and for good reason. The company promised a novel and exciting solution to the vertiginous problem of finding the disappeared. As part of a programme that it calls ‘AI for Humanitarian Action’, Microsoft wanted the data from the more than 10,000 cases of missing men and women that the organization had collected since 1996. This data was full of potential for technological innovation, which could lead to new outcomes and, hopefully, found loved ones. In exchange for the data, Microsoft would create an app (Figure 1) that would enable anyone on a search for the missing to check whether any given person, on the street or otherwise, was recorded in the mothers’ database.

Figure 1. Family Faces.

As Microsoft put it in a press release at the time, the partnership with the Mães da Praça da Sé envisioned ‘the creation of an application that uses facial recognition . . . to strengthen the search for the missing and to ally with the work of searching by public entities in partnerships with police, hospitals, emergency rooms, shelters and other institutions’. It continues:

The platform uses cognitive tools, artificial intelligence and storage on Microsoft’s Azure cloud software. With it, it will be possible to identify a person suspected to be in a state of abandonment with facial recognition. A user will just have to take a picture of the person and compare their physiognomy with the organization’s database. The app will search and display if the characteristics are compatible with someone who is missing. It will also be possible to search for people by physical characteristics (skin, hair and eye color).

‘[This partnership] is a powerful example’, wrote Brad Smith, Microsoft’s president, ‘of how we can apply technology to help solve the greatest challenges in our society.’ Missing people, people on the run and the emerging mass graves are indeed among the great challenges of our time.

Microsoft’s real goal in temporarily intervening in the world of disappearance seems something quite different, however. This data is crucial for a different application, and one that is of another order of contemporary importance: for these images and data to be aggregated, digitized and incorporated into Microsoft’s Azure cloud infrastructure for use in practical applications and advanced computing, all of which is for sale. With its focus on the missing in São Paulo, Microsoft has taken data from a distinct population and added their characteristics to a database of images and data that the company has collected on what it calls ‘modern migrants’ – people outside the Global North, including refugees, displaced people and the missing. At best, this technological effort was a ‘light-touch’ solution to the actual problem of finding missing people. Instead, the data aggregation and technology made use of these absent and missing bodies because of their status as missing, and with the vestiges of their images that remain. Here, the concern is that these people must be identified – not because they are missing, nor as a means to search better, but, instead, because of where they might turn up if they are alive.

Images and data of missing people might seem discrete, but they are past, present and future. Their past is a history shrouded in doubt and suspicion about who they really were before they vanished. Their present is a condition of both hopefulness – for family – and threat – that they might re-emerge in another place, perhaps on the other side of a border. Their future is being laid down through the use of the vast database of their images and personal identifiers used by Microsoft, and others, to teach ‘intelligent’ machines about the ‘physiognomy’ of who is likely to disappear, to be disappeared, or to flee without documentation to somewhere they should not. Unlike data collected by Microsoft, the app is not available to users outside of Brazil. Moreover, users in Brazil describe it as ‘useless’ in their ratings; it was built by a third-party technology firm hired to do the task as a one-off solution, and not by Microsoft engineers engaged with this ‘greatest challenge’ in our society.

The company’s interest is elsewhere. One of Microsoft’s most notable clients, which uses the Azure cloud database, is Immigration and Customs Enforcement (ICE). With several contracts in place, totalling tens of millions of US dollars, Microsoft has written openly and prominently about providing its data and cloud services to ICE to enable it to ‘modernize’ its work, stating:

ICE’s decision to accelerate IT modernization using Azure Government will help them innovate faster while reducing the burden of legacy IT. The agency is currently implementing transformative technologies for homeland security and public safety, and we’re proud to support this work with our mission-critical cloud.

This selective attention to identity – not to knowing where people are or searching for them, but to placing a territorial concern on the meaning of that identity – underscores a project of global bordering. Their digital identity must be known because their status as ‘missing’ is itself suspicious. Microsoft and ICE know who they are already, with both biometrics and a digital identity brimming with social context and condition; it is that context and condition that feeds speculation that a border crossing is their future.

This proactive search for speculative data is part of two logics. The first is that one’s digital identity is the means of speculation. The second is that this speculation drives Microsoft’s quest for profit: the subject might cross the border. Microsoft supplies solutions for a demand that comes from ICE, which claims that 17,000 Brazilians were apprehended in one single US border sector, El Paso, Texas, in the fiscal year ending November 2019.

Biology and speculative intent: Automating xenophobia

Imagine narrowly escaping death, battling the tide, disinterested coastguard and drones across the Mediterranean. Even before you arrive, somewhere, on some anonymous server, your digital identity is flagged by an automated decision-making system (ADMS). At the border checkpoint, a massive database of pre-registered fingerprints confirms the biometrics of incoming migrants, yet border guards demand that every face be scanned for more ‘efficient’ and ‘accurate’ processing. Every processed face is piled together with other undesirables in line for human processing. A predictive algorithm processes your mobile phone – acquired while on the run – as you stand in line at the port of entry, warning the border guard not to trust you; that you are from a country that in reality you only transited through for a few hours. You are flagged for ‘emotion recognition’ and an extensive interrogation (Mahmoudi, 2025).

Between 2014 and 2020, Frontex – the European border and coastguard authority – invested €434 million in surveillance and IT infrastructure. For the years 2021–2027, the European Commission has earmarked some €34.9 billion for border control (Frontex, 2021). These investments have gone to further expand Frontex systems such as the EURODAC (European asylum dactyloscopy database) protocol, which, up until now, has been enabling multinational sharing and cross-referencing of fingerprint data with other national and supranational law enforcement and migration-related databases (Aizeki et al., 2023). The European Commission recently further extended the remit of EURODAC to include facial images and even reduced the legal age at which images may be collected from 14 years of age to 6. These capabilities emerge from a commitment by European institutions to increase the EU’s artificial intelligence capabilities at its borders. Even as the landmark AI Act is advancing as the most comprehensive attempt to regulate AI across the region, its margins are defined by the arrival of people on the move (AccessNow, 2023).

The EU’s research and innovation funding programme (formerly known as Horizon 2020, now Horizon Europe) has since 2014 funded several consortia in their pursuit of the production of speculative technologies for border control and migration management. Each Horizon-funded consortium, made up of a rainbow coalition of universities, law enforcement agencies, military contractors and start-ups, typically engages with an EU agency to which its work is especially relevant, in this case Frontex. The Horizon programmes fund a great many border security projects that enable Frontex to fulfil its strategic objectives to ‘reduce vulnerability of the external borders, guarantee safe, secure and well-functioning EU borders, and further enhance European Border and Coast Guard capabilities’ (Frontex, N.D.). These include capabilities the agency considers critical for its operations, such as ‘unmanned platforms, document fraud detection, situational awareness, biometrics, command and control, artificial intelligence, robotics, augmented reality, integrated systems and identification of illicit drugs’ (Frontex, N.D.). A memorandum between the European Commission’s department for migration and home affairs (DG-Home) and Frontex enables the agency to exploit and implement these projects and support their dissemination.

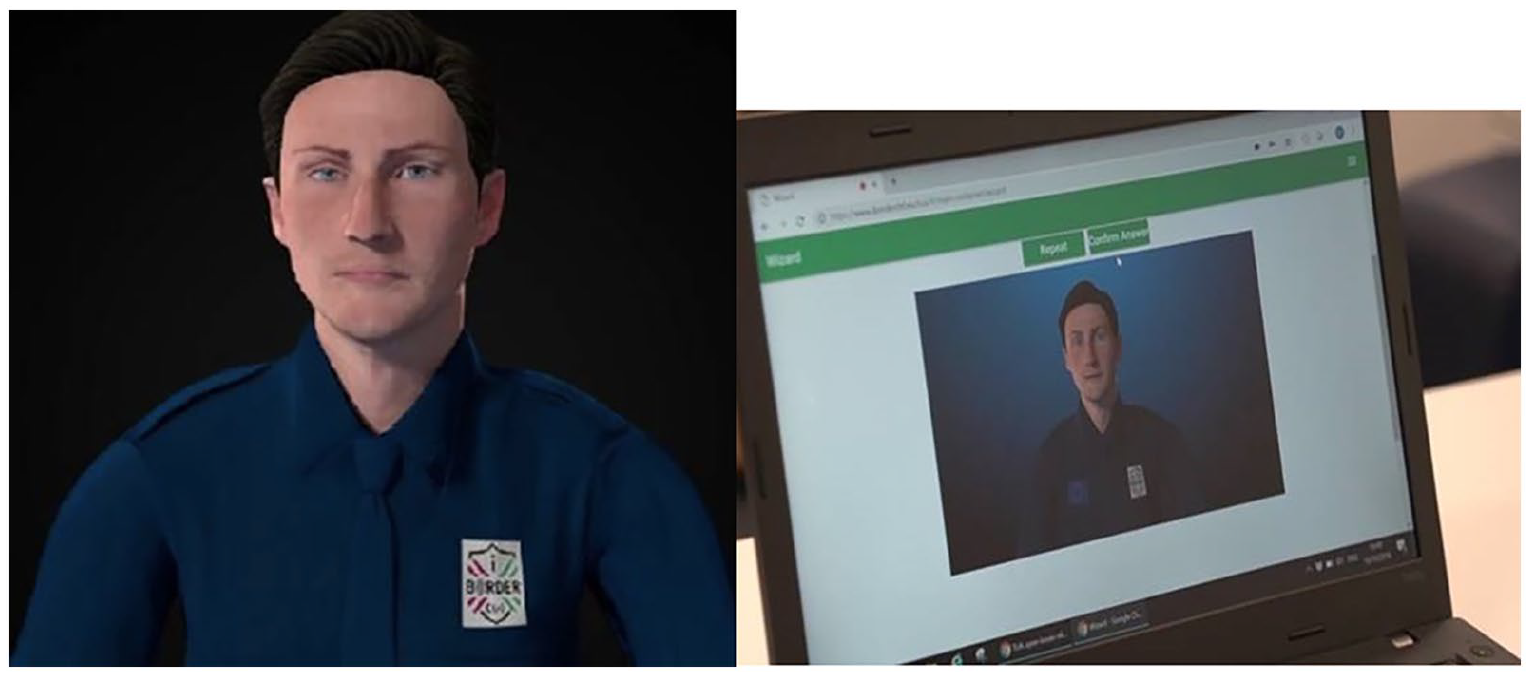

One such consortium is the Intelligent Portable Border Control System, or iBorderCTRL. iBorderCTRL is a system that combines elements of facial and emotion recognition – enduring techniques in spite of their basis in the eugenicist practices of phrenology and physiognomy – to determine the level of ‘deceptiveness’ in an individual looking to travel from a third country. This particular function of the project is known as the Automated Deception Detection System (ADDS). Prior to travel, individuals are invited to upload their passport photos and personal information to a web app using their own computer, which is subsequently cross-referenced with law enforcement databases, social media information and any other listings that might indicate whether the traveller poses a public security risk. Before checking in to travel, an on-screen 3D avatar prompts the traveller with a series of questions, using onboard cameras to capture, map and analyse their facial expressions as they respond (Gallagher and Jona, 2019). A QR code is generated, which travellers are required to bring with them to the border guard (this is also iBorderCTRL’s guarantee that there is a human in the loop); the code, which provides the guard with a score out of 100, informs them whether or not to trust the individual in question. No one is informed of their score, yet depending on where they fall on the scale, they may be classed as ‘high’ or ‘medium’ risk and stopped from completing their journey.

The system was initially tested on 39 volunteers, before being taken to fake borders to be used only among 30 further volunteers, in advance of rolling out the system for piloting in EU countries bordering non-EU countries (iBorderCTRL.no). For the first test population, the system – having been trained on majority male volunteers of European ethnic origin – reported greater accuracy (around 75%) for that particular grouping of individuals than others less represented (O’Shea et al., 2018). This is hardly surprising; in 2018, Joy Buolamwini and Timnit Gebru published a paper that tested facial recognition for bias, finding that these systems – even premier versions designed by, for example, IBM – returned false positives at a rate of anywhere between 60% and 90% depending on context. They found women of colour, in particular, were disproportionately misidentified, largely due to an overly white and male composition of training data. Similarly then, iBorderCTRL’s already abysmal accuracy might have looked very different, had the researchers behind it opted to also study the number of false positives generated by the system.

iBorderCTRL complicates the simple one-dimensional bias problem of facial recognition, as in addition to attempting to detect, identify and ‘decipher’ faces, it sets a generalized baseline for emotional expressiveness of faces, globally. The project helps amplify existing anxieties within European institutions and states that migrants – especially asylum seekers and refugees – are deceitful, using controversial and previously dismissed psychometric methods in combination with AI. In a paper reviewing the testing of iBorderCTRL, the authors lament that ‘there are no meaningful single non-verbal indicators of deception’ (O’Shea et al., 2018). Basing much of its rationale on Paul Ekman’s work on microexpressions, which attempted to claim universality behind the frequency of eye-blinking, direction of sight, movement of facial muscles and changes in tone of voice, iBorderCTRL seeks to encode these facial variations into categories of ‘deception detection’ (Sánchez-Monedero and Dencik, 2022).

The project team, nevertheless, is unapologetic in innovating the biometrics industry by introducing microexpression-based lie detection, describing their product as ‘deploy[ing] well established as well as novel technologies together to collect data that will move beyond biometrics and onto biomarkers of deceit’. These biomarkers are matched against a series of predefined standards for what might constitute a truthful interaction with the system (Thales Cogent Automated Biometric Identification System (CABIS), no date); these include the following:

All participants will use their true identities as recorded in their identification documents.

All participants will answer questions about a real relative or friend who is an EU/UK citizen (equivalent of a Sponsor in border questions asked by EU border guards).

All participants will pack a suitcase with harmless items typical of going on a holiday.

Participants will answer questions about identity, sponsor and suitcase contents.

All answers to questions can be answered truthfully (O’Shea et al., 2018).

Scenarios defined as deceitful include:

All participants given fake identities (male/female) and short life history.

All participants are given a short description of a fake relative from the EU. (O’Shea et al., 2018)

The assessment process also includes being exposed to imagery of various factors, biohazards and possession of illegal goods while interacting with the avatar, in order to test reactions elicited by travellers. These markers of truthfulness and deceit amidst ‘problems’ systematize a somewhat arbitrary and bounded notion of trust based on one’s ability to engage in invasive, and potentially life-endangering, disclosures. Those on the run from problems of persecution or hunger are now presented with a reality in which former strategies of survival are no longer available. The avatar greets them, for a moment generating hope and fear, while the border subject assesses what they might share with the disembodied figure dressed as a private border operator on their screen (Figure 2). But the system is not simply demanding ID disclosure; iBorderCTRL treats the body as an ID, extracting characteristics even from the handful of seconds an individual might spend wondering what information they are able to safely share. In this way, ‘Show your ID’ becomes merely a form of technical intimidation.

Figure 2. Automated Deception Detection on-screen avatar.

While the iBorderCTRL pilots reached the end of their project cycle, aspects of it continue to live on in systems being implemented across the EU and by Frontex. This includes, for example, the forthcoming European Travel Information and Authorisation System (ETIAS). ETIAS cross-references with open-source data online, including social media, medical information and more, to assert digital identity and determine the threat a traveller might pose to the security of Europe. At the state level, countries including Germany have been deploying such tools for years. Since 2017, the Federal Office for Migration and Refugees has been using tools such as the Dialect Identification Assistance System (DIAS), and phone extraction, to check whether the origin and identity of asylum seekers are consistent with their claims (AFAR report). Seemingly discrete experimental projects set up through multistakeholder consortia, such as those enabled by the Horizon programme, obfuscate the incremental adoption of speculative digital ID techniques by border enforcement agencies.

New digital forms of bordering, such as those proposed by iBorderCTRL, entangle their subjects with the border, by codifying, formalizing and distinguishing desirable physical attributes, behaviours and emotions from undesirable ones, and packaging them together with records and assumptions beyond biometrics (iBorderCtrl, 2019; iBorderCtrl project, 2018).

Digital identity and the re-scaling of border regimes

The digital solutions that states want, companies provide. Companies automate based on speculation, using biometrics – but not only so. That speculation comes from digital identity, which is the digital metadata about individuals, whom they are connected to, where they have been, what they have done. This expands the bounds of identity verification, and of borders, into the future.

We identify two conditions that matter. First, the two common understandings of identity – identity as belonging and identity as a means to police and exclude from belonging – are embroiled in change. Digital identity is an extension of the latter as an especially powerful means to police, attuned as it is to specific individuals, their social conditions, and their imagined status as vulnerable or threatening. Regions of acute capital and wealth like the United States, the EU and Australia are increasingly and selectively identifying individuals who are not their own citizens, and who may or may not even be seeking to enter, on the speculation that they could. Motivated by a future-oriented and proactive attention to the possibility they might, they develop paradigm-shaking means of extending digital identity into a global realm by speculative means.

This happens irrespective of the ability or interest of states elsewhere to make their own citizens legible. So far, digital identity is about data gathered and controlled by proprietary companies and some governments, and not by others. Digital identity, with biometrics, is the technological means to advance a border regime beyond material borders. It is precisely these kinds of populations – racialized, subjects of everyday and police violence, who seek escape from war, structural violence and slow or acute death – that are most compelled to leave without being documented by their own countries. Moreover, while a passport is a ‘tangible product of functioning citizenship’ (Kingston in Maitland, 2018: 37), governments surely see it as an insufficient means to parse the ‘goodness’ of an individual.

Digital ID developments in the United States and the EU help to demonstrate how contemporary migration – known, unknown or preemptible – feeds a growing demand to know and identify people. The question has been who and how. The answer is those people whose vulnerable condition might compel them at a moment’s notice. These people may not have a passport. But even if they do not, they almost certainly already have a digital footprint and a digital identity that can be found and regimented.

Second, conditions and patterns of mobility have also changed. In ‘sending countries’ especially, governance through selective attention to life, legality and violence – what Auyero and Sobering (2019) call the work of the ‘ambivalent state’ – is an increasingly global condition creating and maintaining acute citizen vulnerability within states. Deplorable life conditions produced by ambivalent governance – violence, organized crime, income-less existence, precariousness and hunger – are powerful motivators for individuals to travel. In this way, the undocumented are already produced by a mode of government. This ambivalence to human thriving collides with a stern xenophobia elsewhere, where ‘the terms of sovereignty, citizenship, community, belonging, justice, refuge and civil rights’ are remade with ‘race and nativist language to structure mobility, belonging, elimination, and extermination’ (Besteman, 2019).

The cases discussed in this paper reveal novel but speculative and flexible patterns to identify individuals on the move, and within these logics. First, efforts to digitize identity verification rest heavily on a notion that biometrics, combined with data about the online lives of individuals in question, are transferable or recognizable with digital methods. Transcending analogue types of biometrics such as inky fingerprints, dental records, statements of eye, skin colour or ‘ethnicity’ that states have used on ID in varied ways, new concern for digital biological identifiers is a crucial tether between digital lives online, lives unverified by their states, and material movements across borders. And yet these efforts have a precursor: data for digital identity is taken and gathered from populations already delineated as problematic, because their general category – refugee or disappeared – implies they could be a threat to the concentration of global capital and its social relations in the country they hope to enter.

Moreover, speculative identity verification is itself central for accumulation of technical and symbolic capital that allows the digital industry to further expand. Digital capture locks in hypothetical or ‘past’ individuals, who may be long dead, to predetermined characteristics and policies that are future-oriented. It fuses bodies and borders with technical capital and the austere politics of xenophobia, whether or not people travel. The disappeared in São Paulo are biometrically and digitally conscripted by virtue of their unknown location – which their own government demonstrates ambivalence to – and collated into a larger mode for identification, policing and prediction of speculative border crossings, in an attempt to foreclose the possibility that they might make it across – if they are even alive. In Europe, foreclosures on movement attempt to extract an individual’s propensity for deceptiveness in a mixture of biology, eugenics and digital surveillance. Here, technologists and technocrats make a science out of a supposed connection between facial, skeletal, muscular and phenotypical composition, gestures of individuals and digital presence into grounds for rejection at the border.

Second, these methods of preempting real or imagined threats, while speculative, are tethered to inequitable concerns for life. In these two cases, this speculation is trained on individuals in flight, and who are already pre-categorized as subjects of harm. And yet, by binding potential threats to these discrete populations and deploying policy along these same lines, digital identity takes on a life of its own. The life of digital identity is highly uneven, being motivated by assumed categories and populations of supposed threat, who must be identified and made knowable for what they could do. The dynamic emerging is not only one of taking the past into the future.

This form of technical development, through which emerging AI-driven technologies are either tested on ‘marginalized’ populations, or where advanced surveillance technologies are further expanded and trained through employment of marginalized bodies, reproduces global racial capitalism and violent bordering, together, premised on selective conjecture to resolve abiding contradictions.

Third, digital identity matters because it serves to rescale the claim that a technological product is a superior means of identifying individuals and parsing ‘good’ from ‘bad’. Disappearance and flight from war are not novel problems. Their framing as the ‘greatest challenges in our society’ by companies makes them problems for digital technology, independent of what we know about uses and abuses of identity verification that are already part of the story. Techniques of digital identity verification assert micro solutions to major and longstanding structural problems of inequality and governance. Technology firms use these problems of dramatic and ongoing human suffering to fortify collective identity against the unknown stranger and the need to identify them and their intent. Disappearance and flight from war are readily transformed into an ‘identity making machine’ that reifies collective identity against visions of those who might do it harm.

The real significance is not what digital identity solves but what it produces and makes real in the process. Identity belonging and identity denial are not just useful populist ploys for resurgent nationalists to fall back on to define their terms. The reassertion of identity belonging in populist terms is evidence of a crisis of the state for which the rise of global identity verification is not a by-product, but, rather, a palliative.

Conclusion

Identity belonging and identity verification are used to paper over a grand contradiction: the economic liberalism of capitalism seeks minimal governance and bounds, but the political liberalism of the state means it must secure, maintain and hold steady. This contradiction faces recurring crises, of which digital identity is just one supposed solution. Looking closely at identity making reveals this fallacy. Efforts to know and verify individuals and populations are neither complete nor total; they are always becoming and ever the subject of political will and fugitivity.

Similarly, digital identity is not total and will never become so. There are at least three reasons why this is the case. First, pillars of digital identity are constructed upon uneven foundations. Knowing who someone is, might be, or could become, hinges on who they have been known as – if they have been known – hitherto. While digital advocates argue their means can transcend such banal problems of ‘weak states’, new problems arise at scale. Where digital identity verification becomes a question of purely digital means of speculating on identity beyond biometrics – via digital profiles, selfie images, or online activity, which is to say that an identity or its verification has no analogue, material or tether – crucial new challenges emerge for knowing who exists and who has been generated by a machine. In an era of proliferating bots, digital profiles, and social media and email accounts that can be automated and created by AI-like systems, biometrics are digital identity’s link to actually existing people. In this way, they are distinct but necessary bedfellows in an age of capitalist digitalization and securitization of borders.

Second, digital identity techniques are being made with unequal attention to populations, and with a particular mode of inequitable global governance in mind. This selectiveness is motivated not by benevolence or care, but by logics of fear, and calls for security in regions with dramatic concentrations of global wealth. In other words, the work of digital identity verification is the novel work of a longstanding pattern and politics of global inequality – nations and communities of belonging and shared identity cast against identities that portend threat from elsewhere. This differentiation advances with a particular logic of everyday policing and order maintenance that is not circumscribed by states and borders.

Third, digital identity techniques are not being made to improve life. Instead, they code in the alphabet of fear. For Glendinning (1990), who writes of neo-Luddism, these efforts impose the worst qualities of technology, including ‘a rigid and irreversible imprint on their users’ that does not ‘promise political freedom, economic justice [nor] economic balance’. Digital identity work, of the advancing kind that we have examined, is bounded by discrete projects, events, or attention to a specific problem, such as the ‘greatest challenge’ of ‘modern migrants’. These efforts are part of a larger and ever more tightly knit fabric that seeks to learn from, and make claims about, its own data, including what it fabricates.

Building other means of technological solidarity and counter-practices of data justice is possible. Migrants’ rights organizers have, over recent years, taken direct action at the offices of technology giants such as Google, Amazon and Palantir, corresponding with tech workers to raise awareness about – and an imperative to refuse – the intimate relationship between their labour and the production of borders. They present a break with approaches seeking to improve the experience of marginalized populations with more technology, assuming the inevitability of datafication. Their refusals, whether in the form of concealment, destruction of digital identity assertion, use of alternative technology configurations, or direct physical tech-sabotage, are crucial kinds of neo-Luddite praxis. Through these efforts, the techno-solutionist insistence on a digitally perfectible world, with its inscrutably knowable identities, is exposed as cells, barbed wire, bank accounts, and walls written in ones and zeros.

Acknowledgements

The authors would like to thank their research collaborators, who shared generously from their experiences facing the exploitative nexus of technology and policing, and colleagues who offered advice and sounding boards along the way, including Duncan Bell in particular, who reviewed an early iteration of this paper.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.