Abstract

Many innovations have come from people working together as partners in thought. These partnerships, however, are not restricted to single encounters. Some of the most meaningful collaborations evolve over weeks, months, or even lifetimes. What are the core computations that enable long-term thought partnerships? Prior work in cognitive science has made initial progress by investigating how people construct mental models of their partners on the fly, establish common ground using language and other modalities, and generate joint plans that lead to successful outcomes. However, it remains unknown what cognitive mechanisms enable such interactions to evolve into genuine partnerships over longer timescales, especially under measures of success that extend beyond task performance. Theoretical and empirical progress on these issues could be instrumental for defining and designing AI systems that may even be capable of establishing long-term thought partnerships with humans. This article outlines several promising avenues for leveraging approaches from cognitive science and AI to study enriching intellectual partnerships.

We all have a choice: how to make use of our limited lifetime. Some may choose to spend their days caring for others, helping those who are sick; others may choose to pursue the adventures of science, navigating into the unknown or building something new. Many of these pursuits are not done in isolation. We regularly engage with others in the quest for our dreams, be it asking for advice on a business decision, consulting another doctor on a tricky case, or codesigning the next experiment with a fellow scientist. Our “thought partnering” (Collins et al., 2024; Yanai & Lercher, 2024) is not limited to a single instance, or even a few interactions. If we are lucky, we find and develop deep, long-term collaborations. Some of the best innovations in science and the arts have come from multiyear thought partnerships, from Amos Tversky and Daniel Kahneman, to Esther Duflo and Abhijit Banerjee, to Steven Spielberg and John Williams.

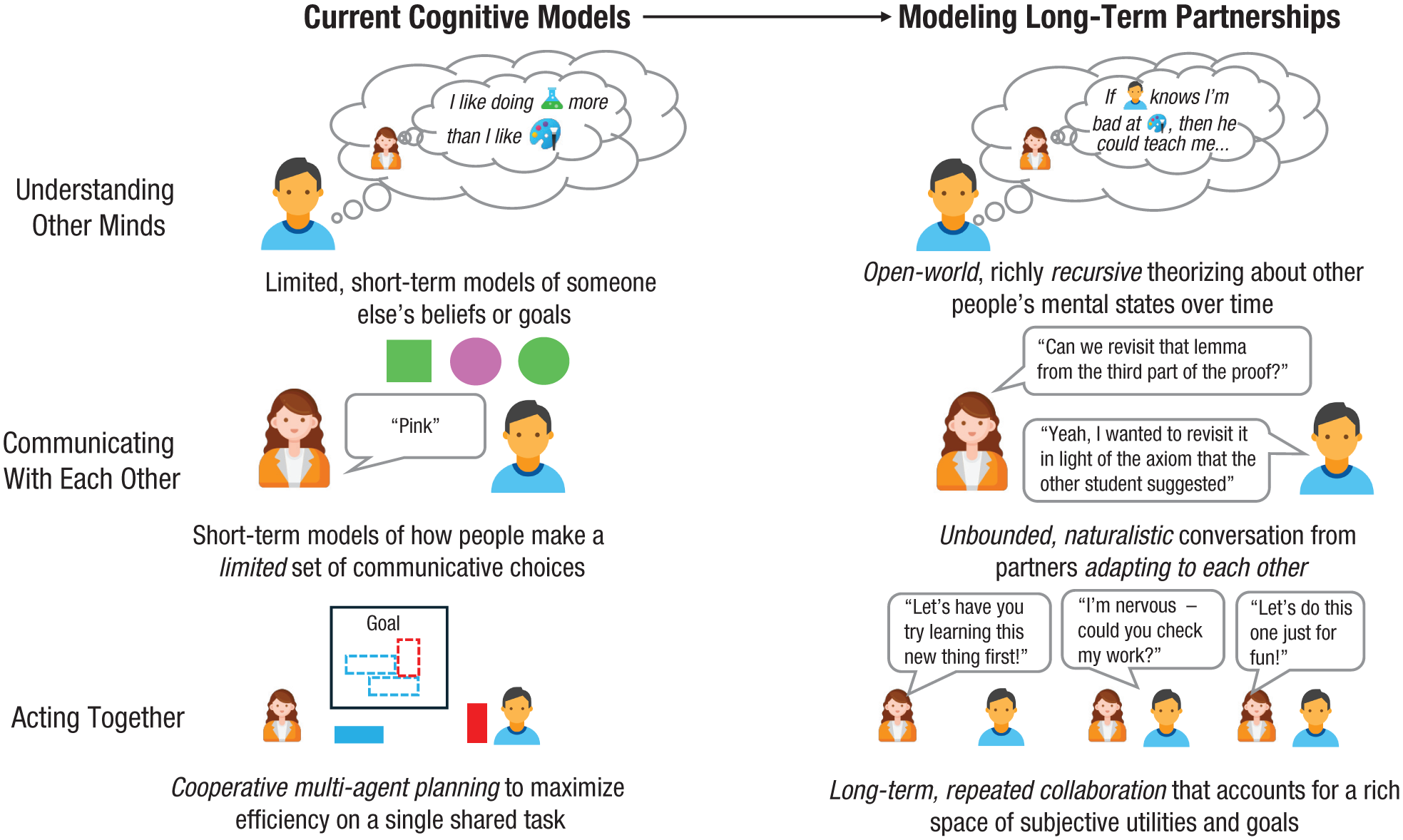

Cognitive science has made substantial progress in understanding how people collaborate with each other. This article reflects on this progress and asks how we may move beyond models of short-term collaboration toward models of long-term, meaningful thought partnership. We start by discussing three bodies of work: modeling each other, modeling communication, and modeling joint action (Fig. 1). Along the way, we discuss the challenges of capturing the core computations that empower collaboration over longer time horizons. We then turn to how, and whether, ideas from human-human partnerships might extend to meaningful long-term human-AI thought partnerships.

Fig. 1. Three foundational lines of work in cognitive science for modeling human collaboration. Moving from current cognitive models (left) toward models that capture long-term partnership (right) raises key open questions about how to scale models of how people understand other minds by reasoning about others’ mental states (top), how people communicate with each other by choosing language to convey information effectively to a partner (middle), and how people act together to jointly achieve shared goals (bottom).

Laying the Theoretical Foundations of Partnership: Understanding, Communicating, and Acting Together

We begin by reviewing three foundational lines of work that underlie our discussion of partnership: formal theories of how people understand other minds, how they communicate with each other, and, ultimately, what it means for them to act together to achieve a shared goal. Each line of work, however, faces significant challenges in scaling toward rich models of long-term partnership, and we highlight these open questions here.

Understanding other minds

When people enter into a collaboration, they generally understand that each person has a rich inner mind of their own, with individual beliefs, goals, and feelings. Potential collaborators can learn a lot about each other just from watching how a partner acts. Computational models of theory of mind formalize this knowledge of how other people’s mental states shape their actions (Baker et al., 2009; Jara-Ettinger et al., 2020). For example, the computational framework proposed by Baker et al. (2009) formalizes a simple set of intuitions that most people have about others: People expect that others are not merely acting at random; instead, others’ actions are likely driven by their goals and by their own beliefs about what is happening.
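To make the inverse-planning intuition concrete, the following Python sketch shows Bayesian goal inference under stated assumptions. The one-dimensional world, the two candidate goals, and the softmax rationality parameter `beta` are hypothetical choices for illustration; this is not the model of Baker et al. (2009), which used richer environments and full planning. It shows only the core inversion: An observer scores each candidate goal by how well it explains the observed actions of an agent assumed to act approximately rationally.

```python
import math

# Hypothetical toy world: positions 0..10 on a line, two candidate goal locations.
GOALS = {"left_goal": 0, "right_goal": 10}
ACTIONS = (-1, +1)  # step left or step right


def action_likelihood(pos, action, goal_pos, beta=2.0):
    """P(action | pos, goal) for a softmax (approximately rational) agent.

    An action's utility is the negative distance to the goal after taking it;
    beta (an assumed value) controls how reliably the agent picks the better action.
    """
    utilities = {a: -abs((pos + a) - goal_pos) for a in ACTIONS}
    z = sum(math.exp(beta * u) for u in utilities.values())
    return math.exp(beta * utilities[action]) / z


def infer_goal(start, actions, beta=2.0):
    """Posterior over goals given observed actions, starting from a uniform prior."""
    posterior = {g: 1.0 / len(GOALS) for g in GOALS}
    pos = start
    for a in actions:
        # Bayes' rule: multiply each goal's probability by the action's likelihood.
        for g, goal_pos in GOALS.items():
            posterior[g] *= action_likelihood(pos, a, goal_pos, beta)
        pos += a
    total = sum(posterior.values())
    return {g: p / total for g, p in posterior.items()}


posterior = infer_goal(start=5, actions=[+1, +1])
print(posterior)
```

Starting at position 5, two steps to the right make the right-hand goal far more probable than the left-hand one. Lowering `beta` models a noisier agent and yields a less confident posterior from the same observations.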

In practice, however, contemporary theory-of-mind models used in cognitive science face several fundamental challenges that arise especially in long-term collaboration. First, the ways that people reason about each other over the course of an extended collaboration are often nested. A mentor might want to help their junior colleague in the long run while also sparing the colleague any embarrassment about needing a hand. This kind of reasoning is often highly uncertain and recursive: It involves thinking about how another person might think about oneself, several layers deep. Second, and more generally, any extended collaboration involves navigating a changing landscape of new considerations that come up over time, often unexpectedly. Existing computational models do not easily explain how people can reason about an enormous and changing space of possible factors with the limited time and memory that human minds have at their disposal (Griffiths, 2020). How to scale formal theory-of-mind models toward longer time scales and more realistic settings, in which anything might come up at any time, remains an open question.

Communicating with each other

Human collaborators do not just try to read each other’s minds by watching each other’s actions, however, as in Baker et al. (2011). They can also communicate with each other. Successful collaboration relies on shared knowledge, yet potential collaborators may not start out knowing the same things or even agreeing on their shared goals. Communication lets collaborators close those gaps (Clark & Brennan, 1991). A collaborator can tell their partner key details necessary to work effectively on a task (e.g., how to use a tool) and information about themselves (e.g., what they prefer to work on, what they need to learn). Rational models of communication such as the rational speech act framework (Frank & Goodman, 2012) frame communication as a thoughtful, intentional action that requires reasoning about what a partner may already believe and how they are likely to interpret (or misinterpret) communicative choices.
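A minimal sketch of the rational speech act recursion may help. It assumes a toy reference game with three objects and four one-word utterances, a uniform prior over objects, no utterance costs, and a rationality parameter `alpha`; these choices are illustrative rather than taken from Frank and Goodman (2012). The literal listener interprets words by their literal meaning, the speaker chooses words that would lead the literal listener to the intended object, and the pragmatic listener reasons about that speaker.

```python
import math

# Hypothetical reference game: three objects, four one-word utterances.
OBJECTS = ["blue_square", "blue_circle", "green_square"]
UTTERANCES = ["blue", "green", "square", "circle"]


def meaning(utterance, obj):
    """Literal semantics: a word is true of an object if it names one of its features."""
    return utterance in obj.split("_")


def normalize(scores):
    total = sum(scores.values())
    return {k: v / total for k, v in scores.items()}


def literal_listener(u):
    # L0(o | u) is proportional to [[u]](o) * P(o), with a uniform prior P(o).
    return normalize({o: float(meaning(u, o)) for o in OBJECTS})


def speaker(o, alpha=1.0):
    # S1(u | o) is proportional to exp(alpha * log L0(o | u)); false utterances get 0.
    scores = {}
    for u in UTTERANCES:
        p = literal_listener(u)[o]
        scores[u] = math.exp(alpha * math.log(p)) if p > 0 else 0.0
    return normalize(scores)


def pragmatic_listener(u, alpha=1.0):
    # L1(o | u) is proportional to S1(u | o) * P(o), again with a uniform prior.
    return normalize({o: speaker(o, alpha)[u] for o in OBJECTS})


print(pragmatic_listener("blue"))
```

With `alpha = 1`, `pragmatic_listener("blue")` assigns probability 0.6 to the blue square and 0.4 to the blue circle: A speaker who meant the blue circle could have said the unambiguous “circle,” so hearing “blue” shifts belief toward the square. Note that the sketch enumerates a small, fixed set of utterances rather than open-ended natural language.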

However, rational models of communication face several computational challenges in explaining real communication between long-term intellectual partners. First, many rational models of communication are idealized models that cannot be directly applied to explain how people reason about unbounded, arbitrary natural language. Most cognitive models built on this framework operate over a predefined set of possible communicative actions, such as a set of phrases and their interpretations. Second, these models do not generally explain the dramatic ways in which collaborators can change their communicative channels over long periods of time. Close collaborators often develop specialized vocabularies to capitalize on their shared knowledge and to talk more precisely about key concepts in their domain (Hawkins et al., 2023). Modeling long-term collaboration requires scaling rational models of communication to account for the richness of real natural language and the ways in which partners change that language to suit their needs.

Acting together

What makes something a collaboration is ultimately the act of working jointly toward a shared goal, wherein partners act together in ways that complement and enrich each other, yielding something greater than the mere sum of two individuals working on their own. Theories of cooperative multiagent action and planning formalize how several people can work together to efficiently achieve a shared goal, often under uncertainty (Grosz & Kraus, 1996). Many of these theories build on notions of individual rational planning and goal-directed action that describe how any one agent should plan their actions to optimally achieve a goal. The Bayesian delegation model (Wu et al., 2021), for instance, uses this general idea to formalize a basic but fundamental aspect of cooperation. It describes how collaborators should reason about which small subtask their partners are currently on track to complete so that the team can avoid redundant effort and avoid accidentally slowing each other down (Mieczkowski et al., 2025).
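The following Python sketch illustrates the flavor of this idea under simplifying assumptions. The workspace layout, subtask names, softmax parameter `beta`, and the rule of taking the subtask the partner is least likely to be doing are all hypothetical; the actual Bayesian delegation model (Wu et al., 2021) infers over joint task allocations and plans in a richer environment. Here, an agent infers which subtask its partner is pursuing from the partner’s movements and then picks different work.

```python
import math

# Hypothetical shared workspace on a line: each subtask happens at one location.
SUBTASKS = {"chop_tomato": 2, "plate_dish": 5, "chop_lettuce": 8}
STEPS = (-1, 0, +1)  # move left, stay, move right


def step_likelihood(pos, step, target, beta=2.0):
    """P(step | pos, subtask) for a partner who softly prefers moving toward its target."""
    utilities = {s: -abs(pos + s - target) for s in STEPS}
    z = sum(math.exp(beta * u) for u in utilities.values())
    return math.exp(beta * utilities[step]) / z


def infer_partner_subtask(start, steps, beta=2.0):
    """Posterior over the partner's current subtask given its observed moves (uniform prior)."""
    posterior = {t: 1.0 for t in SUBTASKS}
    pos = start
    for s in steps:
        for t, loc in SUBTASKS.items():
            posterior[t] *= step_likelihood(pos, s, loc, beta)
        pos += s
    total = sum(posterior.values())
    return {t: p / total for t, p in posterior.items()}


def choose_own_subtask(partner_posterior):
    """Hypothetical rule: take the subtask the partner is least likely to be doing."""
    return min(partner_posterior, key=partner_posterior.get)


belief = infer_partner_subtask(start=5, steps=[+1, +1])
print(belief, choose_own_subtask(belief))
```

If the partner starts at position 5 and takes two steps to the right, the posterior concentrates on `chop_lettuce`, so the agent picks one of the other subtasks instead of duplicating the partner’s effort.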

As models of true long-term collaboration, however, these computational approaches raise several open questions. Long-term planning of any kind poses significant challenges for current cognitive theories. Much as with theory-of-mind models, existing theories of rational action often imply that planning and acting require much more time and computational effort than people have at their disposal. Moreover, existing theories of multiagent action usually focus narrowly on how two people might achieve a single goal as quickly as possible. Real collaborators take a much richer and wider set of considerations into account, both for themselves and for their partners: People who choose to collaborate over the long term might continue working together because the partnership feels fair, because they can always learn something new and surprising from the other person, because they find it rewarding to teach and mentor another person, or because the work itself and the act of working with their partner bring them meaning and joy. Real people, when collaborating well, strive to act toward each other with dignity, empathy, and mutual respect. All of these considerations go well beyond a simple notion of cooperative action and yet lie at the very heart of what it means to collaborate well.

Building Toward Theories of Meaningful, Long-Term Partnership

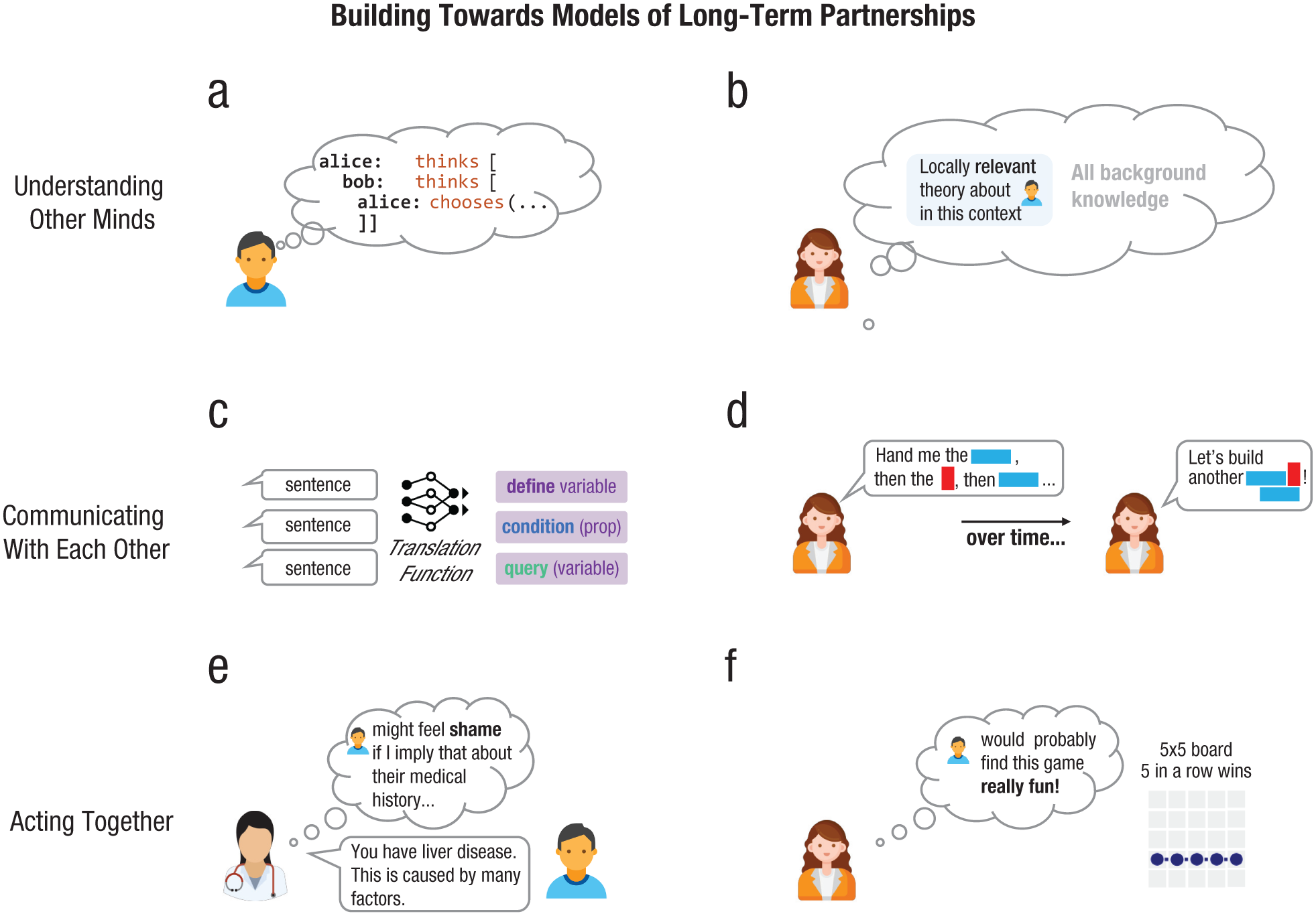

We next discuss a few recent works that have started to make progress toward understanding, formalizing, and empirically studying computations potentially underlying the cognitive mechanisms driving rich human-human long-term thought partnerships (Fig. 2).

Fig. 2. Examples of recent work that addresses key questions in modeling long-term partnership. To build toward long-term models of understanding other minds, the memo programming language (Chandra et al., 2025) formalizes (a) richly recursive models of mental states, and recent work by Wong et al. (2025) and Zhang et al. (2025) formalizes (b) how people might propose small, relevant theories of someone else’s mental states in a given context. To build toward long-term models of communicating with others, recent work by Loula et al. (2025) and Wong et al. (2023) formalizes (c) how people might turn naturalistic language into structured mental representations of conceptual content, and other work by Ellis et al. (2021) and O’Donnell (2015) formalizes (d) how people might adapt their vocabulary to talk about more complex, shared concepts over time. To build toward long-term models of acting together, recent work by Chen et al. (2024), Collins, Chandra, et al. (2025), and Houlihan et al. (2023) models how people might (e) act to take into account someone else’s emotions or (f) reason about subjective and experiential sources of reward, such as what someone else would find “fun” (Chu et al., 2025; Collins, Zhang, et al., 2025).

Modeling how people understand each other in real-world, long-term partnerships

How do collaborators make sense of the complexities of any real person, juggling what their partner might want from the task at hand alongside what that person might be thinking about them? And how do they even begin to know what the other person is thinking, even imperfectly, in the messy dynamics of a real partnership? Modeling any real long-term partnership will require scaling today’s limited theory-of-mind models to handle richly recursive reasoning about other minds and reasoning about sets of actions and mental states that cannot be known in advance. These two computational problems might be linked in a way that explains how we can reason about and understand each other without an inordinate amount of computational effort. For instance, collaborators might entertain richly recursive theories about what a partner has in mind while assuming that the partner’s own thinking is probably limited by their available memory and effort (Griffiths et al., 2015; Zhi-Xuan et al., 2020). More broadly, explaining real long-term human collaboration might require building on recent work suggesting that people reason in general, including about each other, by constantly coming up with and revising small but sensible theories that capture just enough detail to understand the situation at hand (Brooke-Wilson, 2023; Wong et al., 2025; Ying et al., 2025; Zhang et al., 2025). These small, approximate theories of another person, built to capture just what seems relevant and useful for making sense of what they are doing, might explain how people can understand and reason about each other in an ever-changing collaboration. New computational frameworks for modeling social agents may help advance this line of work (Chandra et al., 2025).

Modeling communication over real language and changing language

How do long-term collaborators reason about what to say out of the enormous space of things they could say? And how do they change the language they use over time to suit their needs, inventing the kinds of rich and specialized vocabularies used in real academic work and in extended collaborations? Two exciting research directions for modeling communication between long-term partners involve explaining how rational communication can integrate with general language models and redefining rational communication to account for how people invent new concepts and change their vocabularies accordingly. In the first direction, recent work that integrates structured rational models for inference and planning with large statistical language models (Lew et al., 2020; Loula et al., 2025; Wong et al., 2023) suggests one starting point for combining such language models with rational communicative frameworks such as the rational speech act framework (Frank & Goodman, 2012). These approaches suggest complementary directions for bringing together recent advances in statistical language modeling with existing cognitive models of human reasoning. The rational meaning construction framework (Wong et al., 2023) posits that people make sense of language because they can translate from natural language in general into structured mental “programming languages” for representing and reasoning about the world. The language model probabilistic programming framework (Lew et al., 2020; Loula et al., 2025) suggests that people might also have ways of defining general inference algorithms over small, composable primitive distributions learned from linguistic experience. Neither of these frameworks yet incorporates the rich mentalizing about other minds that characterizes real human communication. Future work could build on each approach toward rational communicative models that explain how agents reason about each other while operating over the domain of general natural language.
In the second direction, a growing body of work suggests that people might invent new conceptual abstractions that help them reason and plan, much like software engineers construct growing libraries of abstractions defined over the primitives of simpler programming languages (Ellis et al., 2021; O’Donnell, 2015). These frameworks propose how people might use and change the language they speak over time by coining new words that let them efficiently talk about these new conceptual abstractions. Work in this vein has been largely theoretical or confined to limited experimental settings; future work could build on these approaches to explain how people fundamentally change the communicative channels at their disposal to suit a deepening intellectual partnership.
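As a toy illustration of coining a word for a recurring chunk, the sketch below swaps in a simple greedy pair-merging heuristic (similar in spirit to byte-pair encoding); it is not the method of Ellis et al. (2021) or O’Donnell (2015). The corpus of block-building instructions and the coined word `plock` are hypothetical. Merging the most frequent adjacent pair into one new token shortens every description that uses it, which is one simple way to picture how a shared vocabulary can become more efficient.

```python
from collections import Counter


def most_frequent_pair(corpus):
    """Count adjacent token pairs across all descriptions; return (pair, count) or None."""
    counts = Counter()
    for seq in corpus:
        counts.update(zip(seq, seq[1:]))
    return counts.most_common(1)[0] if counts else None


def coin_abstraction(corpus, new_word):
    """Replace every occurrence of the most frequent pair with a single new token."""
    found = most_frequent_pair(corpus)
    if found is None:
        return corpus, None
    (a, b), _count = found
    rewritten = []
    for seq in corpus:
        out, i = [], 0
        while i < len(seq):
            if i + 1 < len(seq) and seq[i] == a and seq[i + 1] == b:
                out.append(new_word)  # the coined word stands in for the whole chunk
                i += 2
            else:
                out.append(seq[i])
                i += 1
        rewritten.append(out)
    return rewritten, (a, b)


# Hypothetical shared instructions for building towers.
corpus = [
    ["place", "block", "rotate", "place", "block"],
    ["place", "block", "stack"],
    ["rotate", "place", "block", "stack"],
]
new_corpus, merged = coin_abstraction(corpus, "plock")
print(merged, new_corpus)
```

On this example corpus, the pair `("place", "block")` occurs four times, and merging it shrinks the three descriptions from 12 tokens to 8.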

Modeling meaningful collaboration

Why do people choose to collaborate with each other at all? How do people try to work toward all of the things that make a real collaboration meaningful, or even begin to assess whether a collaboration has been valuable beyond the mere fact of whether any one job got done? These are deep questions beyond the reach of existing computational models, and perhaps often beyond the reach of people trying to get a would-be collaboration off the ground. Still, several intersecting lines of recent work suggest how we might begin to build these kinds of rich, subjective evaluative criteria into formal computational theories. One recent line of work (Houlihan et al., 2023) takes steps toward formalizing how people understand each other’s emotions. This work argues that even emotions as nuanced as pride, envy, joy, and respect are neither completely arbitrary nor ineffable and can be formally modeled in theory-of-mind frameworks. Other recent work takes steps to formalize how people evaluate what it felt like to work with another person, beyond any obvious external reward. Chu et al. (2025) and Collins, Zhang, et al. (2025), for instance, ask how people evaluate whether playing a game with someone else will actually be fun, separate from whether they think they will win or lose. They propose that these subjective evaluations of experience are rooted in the same underlying cognitive capacities that let us plan how to act in general and reason about other people’s mental states and actions, and they point to aspects of what makes an experience rewarding (such as whether it feels joyful) beyond simply achieving a task as efficiently as possible.
Other recent work examines how people might plan around the kinds of subjective and experiential goals that we mention here, modeling, for instance, how people might adjust their actions to be empathetic as well as efficient (Collins, Chandra, et al., 2025) or how people might act specifically to intervene on someone’s emotions to save them from embarrassment or cheer them up (Chen et al., 2024). Each of these models carves off only a handful of subjective considerations, such as empathy or embarrassment, and describes how people might plan around them with respect to a fairly small set of possible actions and outcomes. Real partnerships involve many of these considerations at once. Explaining how people come to grasp, in a general sense, what feels rewarding to them and to others, and how that shapes their plans and actions in the long term, requires answering many of the formal questions we raise throughout this section and breaking new ground.

Studying long-term partnership with new tasks and data sets

A cognitive science of long-term collaboration requires not only model developments but also new experimental methods and evaluative measures that actually probe the kinds of theoretical and computational questions we raise here. Work from McCarthy et al. (2025), in which two people cooperated on a realistic computer-aided design task, or Vélez et al. (2024), in which groups of people developed and passed on miniature technological innovations on a sped-up timescale, suggests the kinds of tasks we might use to study complex collaboration even in a controlled laboratory setting. Future work should also collect richer and more subjective evaluative measures within any of these tasks, such as whether people expected or found the act of working together to be respectful, dignified, or fair.

Imagining Meaningful Long-Term Human-AI Thought Partnerships

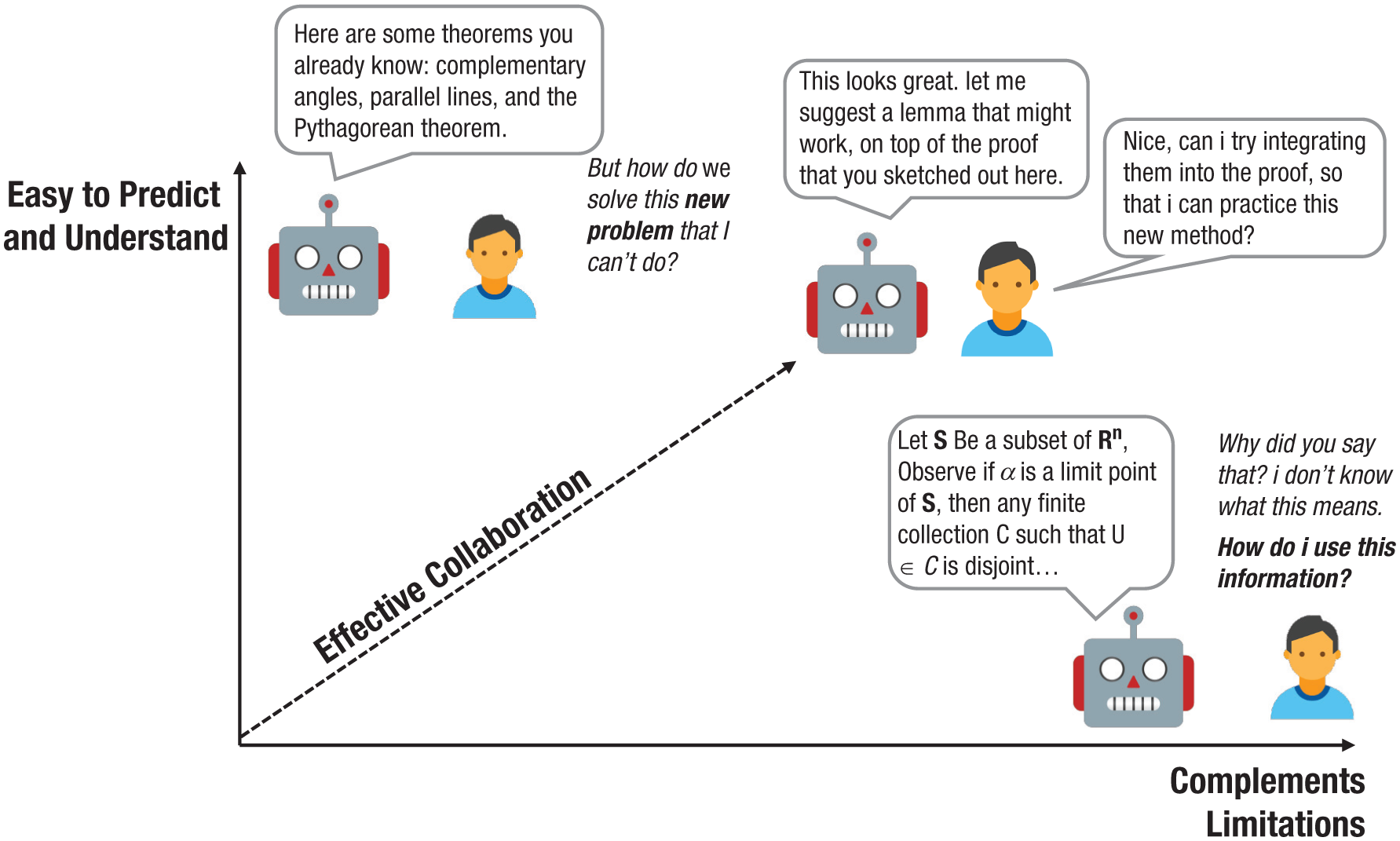

This article focuses on three core computations that may underlie long-term human thought partnerships: the ability to grow and change one’s understanding of other agents, to flexibly adapt communication between agents, and to form and evolve shared goals that incorporate subjective utilities, such as having fun. To what extent do these concepts extend, or break down, when applied to asymmetric human-AI interaction? Despite immense progress in AI, recent advances have often been decoupled from collaborative efficiency (Challapally et al., 2025); the most performant AI systems may not be the most effective collaborators (Bansal et al., 2021; Carroll et al., 2019). Indeed, many current systems sit at one of two unsatisfying ends of a collaborative spectrum (Fig. 3). An effective human-centric AI system may be one that meets our expectations yet is also sufficiently unpredictable, as many good human thought partners are. Although good collaborators often construct a common lexicon (as discussed above), they also teach and push one another, bringing to bear distinct knowledge bases. Here, AI systems offer tremendous potential as new kinds of collaborators that can shape new strategies for thinking, as illustrated by how AI gameplay has influenced human-human gameplay strategies (Shin et al., 2023).

Fig. 3. Several dimensions to inform AI thought partner design. Systems that meet our expectations (but do not complement our limitations) may be so simple or human-like that we gain no comparative benefit from engaging with AI. Conversely, systems that are optimized exclusively to complement people’s limitations may end up being so complex that people struggle to predict when and how they should be used. Human-centric AI systems may require a middle ground, ideally moving toward the upper right quadrant and potentially requiring a trade-off between the two axes. Yet whether balancing these two axes alone is sufficient for a system to warrant the label “thought partner” is an open question (Collins et al., 2024).

However, even if we build AI systems that meet our expectations and complement our limitations, does that mean that they warrant the label “thought partner” (Collins et al., 2024)? Or is there a tacit assumption of something else underlying a collaboration that makes it worthy of the label “partnership”? Perhaps the collaboration itself is not only an effective means to an end but also a relationship that grows in value as it develops precisely because of the assumed personhood of everyone involved. Something like personhood, and the belief that both partners have valuable, individual agency of their own and yet have nonetheless each chosen to renew the terms of the partnership over time, might well be fundamental. It may be this assumption of agency, in the fullest sense, that leads people to dramatically reshape their communication to reflect both parties or to bend the collaboration to each other’s needs and individual desires. Perhaps human partners seek to understand each other’s minds not only to better achieve their shared goals but also because they simply believe each partner has a mind worthy of being understood. Cognitive scientists are poised to help define, extend, and reshape the foundations of durable long-term thought partnerships and to inform how we design the next generation of human-facing AI systems. Theories about such partnerships (human-human and, potentially, human-AI) may in turn offer mirrors for what we truly expect and seek out in human partnerships with one another.

Conclusion

Some of the richest human experiences take place in the meeting of two minds. Lasting collaborations define many of the relationships that color our individual experience and the partnerships whose intellectual contributions shape modern society. In this article we reviewed several computational foundations that already exist to study long-term partnerships within cognitive science and outlined the horizons ahead if we hope to scale toward the complexity and timescales of real, extended collaborations. In doing so, we also hope to lay the groundwork for imagining what kind of AI systems may be worth the name “thought partner”: systems durable and meaningful enough that we would want to invite them into our lives over longer horizons, in collaborations that look beyond myopic productivity on short-term tasks toward truly complex partnerships that are fulfilling, dignified, and even fun.

Recommended Reading

Collins, K. M., Sucholutsky, I., Bhatt, U., Chandra, K., Wong, L., Lee, M., Zhang, C. E., Zhi-Xuan, T., Ho, M., Mansinghka, V., Weller, A., Tenenbaum, J. B., & Griffiths, T. L. (2024). (See References). Explores the idea of human-compatible AI thought partners, engaging deeply with cognitive science.

Hawkins, R. D., Franke, M., Frank, M. C., Goldberg, A. E., Smith, K., Griffiths, T. L., & Goodman, N. D. (2023). (See References). Studies computations underlying cooperative language change between partners over time.

McCarthy, W. P., Vaduguru, S., Willis, K. D., Matejka, J., Fan, J. E., Fried, D., & Pu, Y. (2025). (See References). Develops new tasks for studying collaboration in the context of computer-aided design.

Yanai, I., & Lercher, M. J. (2024). (See References). Discusses the value of specifically dyadic (two-person) collaborations in science.


Acknowledgements

We thank Kartik Chandra for many valuable comments on this manuscript; Ilia Sucholutsky, Tom Griffiths, Umang Bhatt, Adrian Weller, Valerie Chen, Lance Ying, Tan Zhi Xuan, Kerem Oktar, Alex Lew, Tyler Brooke-Wilson, Kaya Stechly, Elizabeth Mieczkowski, Junior Okoroafor, Max Kleiman-Weiner, Tim O’Donnell, Neil Lawrence, Eric Horvitz, and Ced Zhang for many conversations around thought partnerships that informed this work; and Robert L. Goldstone and our reviewers for invaluable comments and questions that fundamentally shaped our thinking around human-AI thought partners.

Transparency

Action Editor: Robert L. Goldstone

Editor: Robert L. Goldstone

Author Contributions

K. M. Collins and L. Wong contributed equally to this manuscript. All authors wrote and edited the manuscript. All of the authors approved the final manuscript for submission.