Abstract

Artificial intelligences (AIs), although often perceived as mere tools, have increasingly advanced cognitive and social capacities. In response, psychologists are studying people’s perceptions of AIs as moral agents (entities that can do right and wrong) and moral patients (entities that can be targets of right and wrong actions). This article reviews the extent to which people see AIs as moral agents and patients and how they feel about such AIs. We also examine how characteristics about ourselves and the AIs affect attributions of moral agency and patiency. We find multiple factors that contribute to attributions of moral agency and patiency in AIs, some of which overlap with attributions of morality to humans (e.g., mind perception) and some that are unique (e.g., sci-fi fan identity). We identify several future directions, including studying agency and patiency attributions to the latest generation of chatbots and to likely more advanced future AIs that are being rapidly developed.

Artificial intelligences (AIs), such as robots and chatbots, have increasingly advanced cognitive and social capacities. For example, the chatbot GPT-4 can write poetry, reason through mathematics problems, pass classic theory-of-mind tests, and communicate responsively in human language (Bubeck et al., 2023). The rapid advancement of AIs raises a host of important moral psychological questions. Do people see AIs as moral agents—entities that can do right and wrong and are morally responsible for their actions? Do they see them as moral patients—entities that can be targets of right and wrong actions and so deserve moral concern? How do people feel about such AIs, and what factors influence whether AIs are seen as moral agents and patients? This article reviews the growing literature on the moral psychology of AI and what it can tell us about human moral psychology.

Moral Agency: Holding AIs Morally Responsible

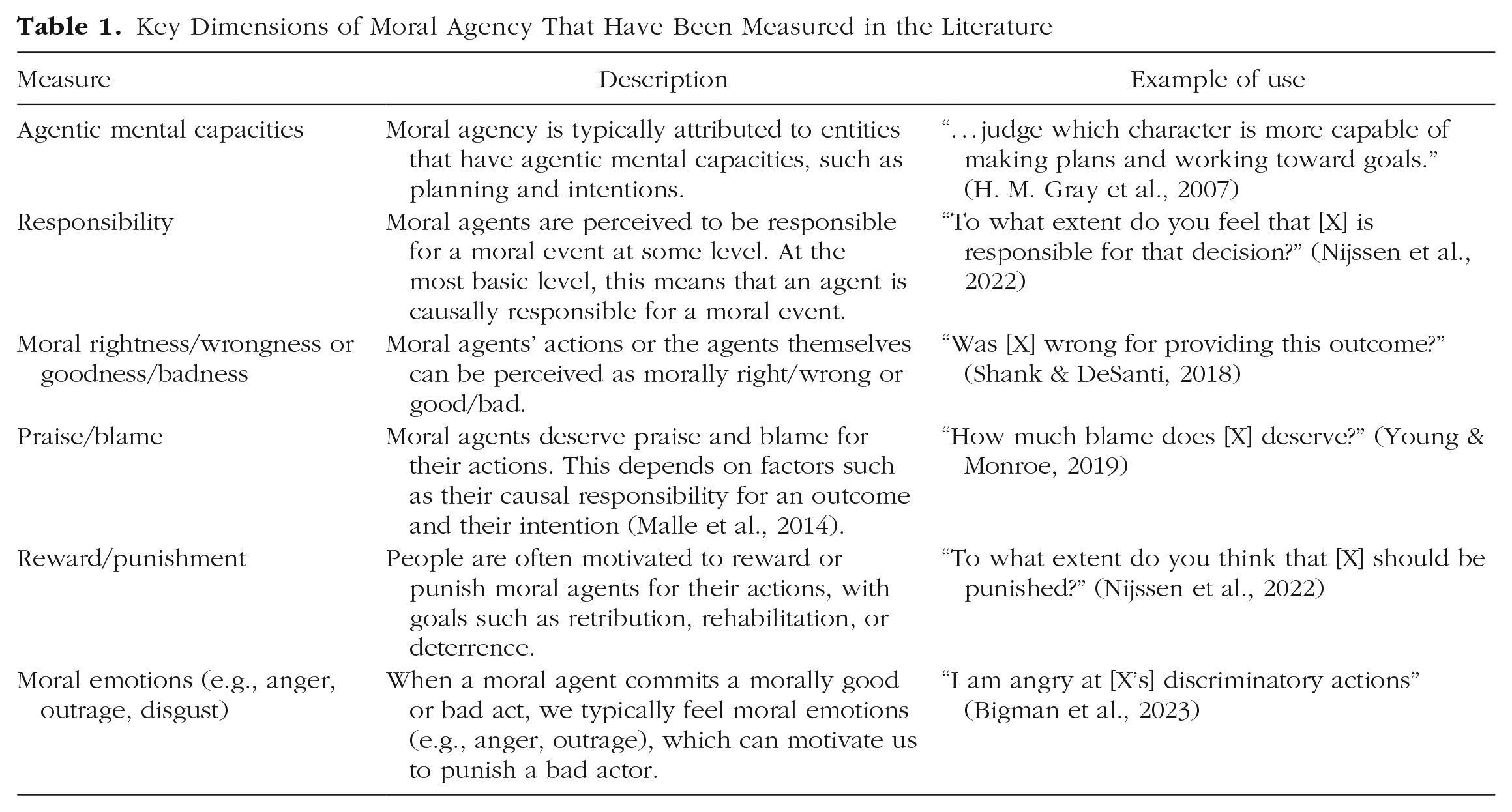

The concept of moral agency is multidimensional and has been measured in various ways in the literature (Table 1). Moral agents are morally responsible, which at the most basic level means being causally responsible for a moral event (Guglielmo, 2015). We can think of moral agents’ actions as morally right or wrong, or more strongly, we might consider moral agents themselves as morally good or bad, which can support the notion that they deserve praise or blame for their actions. Moral agency is typically attributed to entities that are perceived as having minds, particularly, agentic mental capacities reflecting the capacity to act intentionally (K. Gray et al., 2012; Malle et al., 2014). People are often motivated to reward or punish moral agents, with goals such as retribution, reform, and deterrence. Finally, people often experience emotions, such as anger and outrage, in response to moral agents’ actions (Bigman et al., 2023).

Table 1. Key Dimensions of Moral Agency That Have Been Measured in the Literature

Do people hold AIs morally responsible?

Research suggests that people view AIs as moral agents to some degree and think they have a moderate level of the capacities associated with moral agency. For example, H. M. Gray et al. (2007) found that people think robots have agentic mental capacities (e.g., memory, planning) comparable to those of a young child and greater than those of a chimpanzee. Shank and DeSanti (2018) found that people attributed some degree of moral wrongness and responsibility to AIs (e.g., decision-making algorithms, social media bots) for violating various moral norms, such as by making racist parole decisions. Similarly, people think sophisticated “AI-driven robots” deserve blame for causing environmental damage (Kneer & Stuart, 2021) and that “AI programs” and “robots” deserve punishment for medical and military harms (Lima et al., 2021). Beyond cognitive judgments, people experience moral emotions in response to harms caused by AIs. Bigman et al. (2023) found that people expressed some degree of outrage toward decision-making algorithms for sexist hiring decisions, though less than toward humans making the same decisions. In short, people attribute some degree of moral agency to AIs, reflecting that they perceive AIs to have some degree of agentic mental capacities.

How do people feel about AIs as moral agents?

Despite attributing moral agency to AIs, people are uncomfortable with AI moral agents. Bigman and Gray (2018) found that people are averse to AIs (e.g., autonomous computer programs) making moral decisions, such as whether to perform a risky surgery to save a child, partly due to AIs’ perceived lack of mental capacities. This aversion appears hard to reduce. Limiting AIs to advisory roles somewhat reduced discomfort, but increasing AIs’ perceived capacity for experience and expertise had limited effects (Bigman & Gray, 2018). This poses a difficult challenge, with AIs being increasingly used for decision-making in areas that have significant moral consequences, such as in criminal justice and human resources.

What kinds of AIs are held morally responsible?

Given that AIs are attributed some degree of moral agency, what factors influence the extent to which they are seen as moral agents? Theoretical models of moral agency emphasize internal factors, that is, characteristics of the agents themselves (e.g., Guglielmo, 2015). Theoretically, perceptions of agentic mental capacities should be important (K. Gray et al., 2012), and empirical research shows that such capacities do affect attributions of moral agency to AIs. For example, Yam et al. (2022) found that an anthropomorphic robot (i.e., with a human-like voice, face, and name) responsible for giving negative feedback in an experiment was perceived as having more agentic mental capacities (e.g., communication, thought) than a non-anthropomorphic one, and this resulted in it being punished more for its feedback. Monroe et al. (2014) found that the degree to which AIs (e.g., “AI in a human body,” “advanced robot”) were perceived to have agentic capacities, such as choice and intentions, predicted how much they were blamed for moral violations, such as attacking a stranger. Maninger and Shank (2022) also found that capacities such as choice and intentions predicted how much various AIs (e.g., smartphone apps, robots) were blamed for moral violations, though even after accounting for these capacities, they found that AIs were blamed less than humans.

One explanation for why AIs are still blamed less than humans is that they are perceived to lack some mental capacities that are required for human-level moral agency. Nijssen et al. (2022) found that robots described as having emotions were held more responsible and considered more deserving of punishment for committing moral violations than nonemotional robots. This suggests that for AIs to be attributed human-level moral agency, they must have, in addition to the agentic mental capacities emphasized by existing theory, experiential mental capacities reflecting the capacity to sense and feel (see K. Gray et al., 2012). Understanding the effects of experiential capacities on moral agency may be particularly important in the context of AIs, for whom experience and agency can come apart, unlike in human moral agents, for whom they typically come together.

External factors beyond the agent may also be an important yet neglected part of our understanding of the moral psychology of AIs. For example, the moral domain might be critical. Maninger and Shank (2022) found that AIs were blamed more for violating fairness norms (e.g., biased criminal judgment) and less for violating betrayal norms (e.g., denouncing one’s country). The type of decisions made can also have an effect. Malle et al. (2015) found that robots were blamed more for failing to act to sacrifice an individual for the greater good in a moral dilemma than for taking action, whereas the opposite held for humans. A possible explanation for the effects of the external factors is that they interact with the perceived internal capacities of AIs. For example, moral dilemmas like the one just described are difficult for humans partly because of the emotional cost of sacrificing an individual. But AIs are perceived as having little emotion (see later discussion on moral patiency), so the dilemmas are expected to involve less conflict for them, and they are expected to act to bring about the greater good.

Who holds AIs morally responsible?

There is evidence of developmental changes in attributions of moral agency to AIs. Flanagan et al. (2021) found that 5-to-7-year-olds were less likely than adults to think a humanoid robot would choose to play a game if doing so would hurt someone else’s feelings and were more likely than adults to hold the robot morally responsible. However, they still attributed less responsibility to the robot than to a human child. Little is otherwise known about individual differences in moral agency attributions to AIs. Theoretically, people more likely to anthropomorphize (i.e., attribute human-like characteristics to nonhumans) should be more likely to view AIs as moral agents, because they should grant AIs greater degrees of relevant mental capacities. Epley et al.’s (2007) three-factor model of anthropomorphism theorizes that we anthropomorphize when knowledge about humans is activated and applied to nonhumans, and this depends on cognitive factors (e.g., what is known about nonhuman entities) and motivational factors, in particular, the extent to which we are motivated to understand another entity and engage with it socially. There is evidence supporting this model in the context of AIs. For example, Eyssel and Reich (2013) found that people induced to feel lonely (and so feel more motivated to engage socially) attributed more mind to a robot than did those in a control condition. However, more research is needed to understand whether such effects in turn influence attributions of moral agency to AIs.

Moral Patiency: Granting AIs Moral Concern

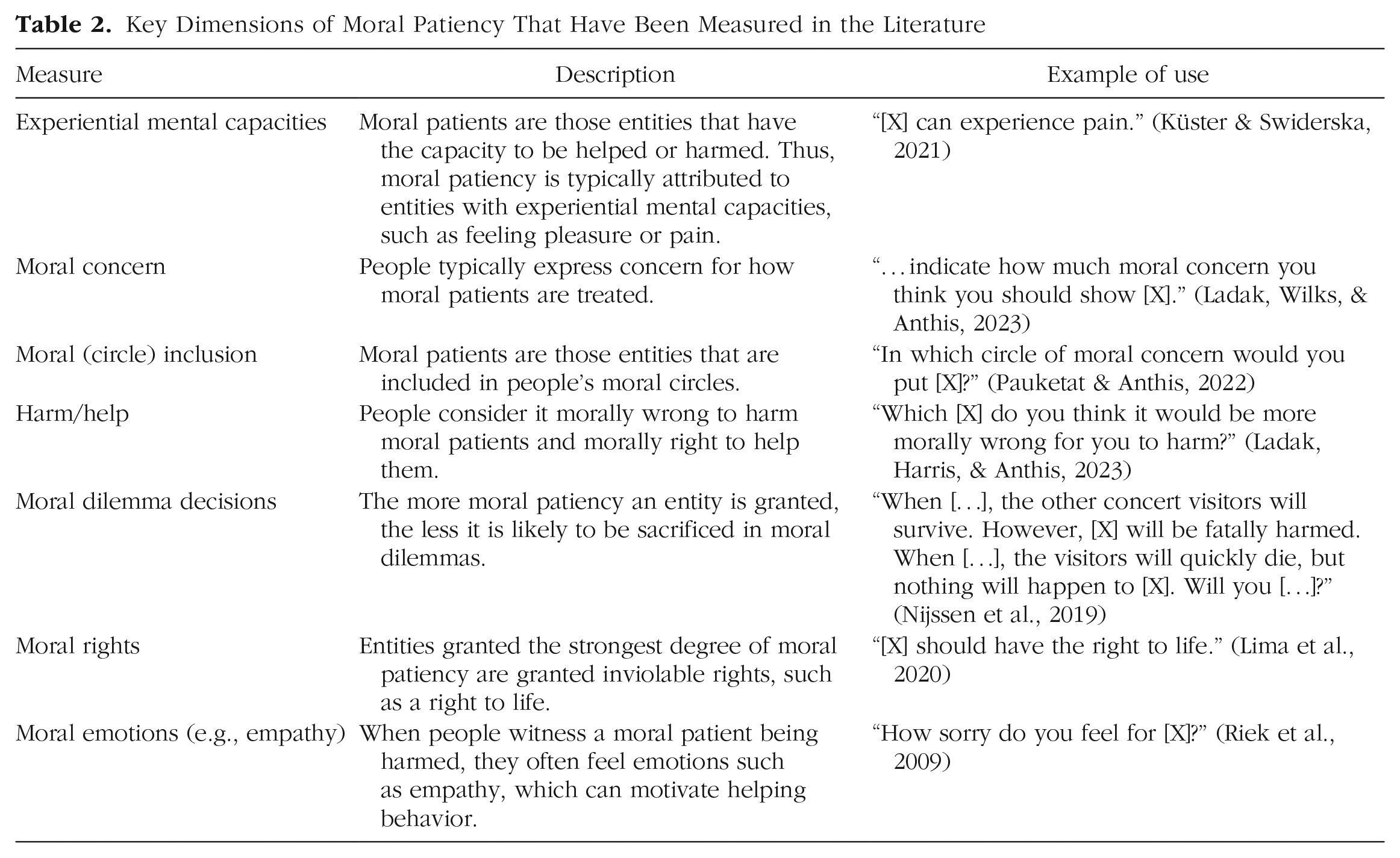

As with moral agency, moral patiency is multidimensional and has been studied with a variety of measures (Table 2). Fundamentally, moral patients are entities that can be helped or harmed, which requires experiential mental capacities, such as feeling pleasure or pain (K. Gray et al., 2012). People tend to express moral concern for how moral patients are treated, consider it morally wrong to harm moral patients, include them in their moral circles (i.e., the boundary that distinguishes entities that receive moral consideration from those that do not), and are less willing to sacrifice them in moral dilemmas. Moral patients may also qualify for moral rights, such as the right to have their lives protected. Finally, people may experience emotions, such as empathy, when they perceive moral patients being harmed, which can motivate helping behavior (Batson et al., 1997).

Table 2. Key Dimensions of Moral Patiency That Have Been Measured in the Literature

Do people grant AIs moral concern?

In contrast to moral agency, people generally do not see AIs as moral patients, nor do they think that AIs have capacities associated with moral patiency. For example, H. M. Gray et al. (2007) found that people think robots have experiential capacities (e.g., fear, pain) comparable to those of a dead person, and Haslam et al. (2008) found that people think “robots (machines)” have very little capacity for emotion. Pauketat and Anthis (2022) found that people place “robots” and “artificial intelligence” at the outskirts of their moral circles, further from the center than chickens, apple trees, and murderers. And Lima et al. (2020) found that people do not support granting 10 possible moral rights to “robots” and “AI programs” and are only (slightly) favorable to one (the right against cruel punishment and treatment).

However, although people do not explicitly attribute moral patiency to current AIs, they do feel emotions associated with patiency toward them. For example, Riek et al. (2009) found that people feel empathy for human-like robots in emotionally evocative film clips. Tan et al. (2018) found that people felt personal distress and often intervened to help a robot being abused, though they sometimes intervened for reasons other than the robot’s moral patiency, such as the financial cost of repairing the robot. Also, although Lima et al. (2020) found little support for moral rights, interventions such as reading about other nonhuman entities that have rights increased people’s level of support. Finally, evidence suggests that people think future AIs could be moral patients. Ladak, Harris, and Anthis (2023) found that people think it can be in principle morally wrong to harm an “artificial being,” and Pauketat and Anthis (2022) found that people are more willing to grant future “artificial beings” the capacity for emotion. Although people do not explicitly view current AIs as moral patients, such perceptions may change, particularly as AIs become more human-like and advanced.

How do people feel about AIs as moral patients?

Mirroring people’s aversion to AI moral agents, people express discomfort toward AIs with capacities associated with patiency. K. Gray and Wegner (2012) found that perceiving the capacity for experience in robots causes the “uncanny valley,” the creepy feeling some people report when interacting with robots that closely resemble humans. This discomfort can be reduced by stripping robots of their capacity for experience (Yam et al., 2021); however, because the capacity for experience is typically associated with moral patiency, this approach may result in the denial of patiency for AIs. Moreover, as noted earlier, the capacity for experience may be important for attributing human-level moral agency to AIs and for having AIs make decisions humans support. The interactions between perceptions of experience, discomfort, and moral agency and patiency should be further explored in future research.

What kinds of AIs are granted moral concern?

As with moral agency, internal and external factors can influence the extent to which AIs are attributed moral patiency. Theoretically, internal factors should reflect experiential mental capacities (K. Gray et al., 2012). Research suggests that these capacities do influence moral patiency attributions to AIs. For example, Nijssen et al. (2019) found that human-looking robots described in anthropomorphic language were less likely to be sacrificed in moral dilemmas than mechanical-looking robots described in mechanistic language, and this was due to their greater perceived capacity for experience. Similarly, robots with social rather than economic functions were attributed greater capacity for experiencing emotions and were less likely to be harmed (Wang & Krumhuber, 2018). Ladak, Harris, and Anthis (2023) found that both experiential and agentic capacities, including expressing and recognizing emotions, cooperating, and making moral judgments, predicted how morally wrong people think it is to harm AIs.

Turning to external factors, Eyssel and Kuchenbrandt (2012) found that an in-group robot was perceived as having greater capacity for experience than an out-group robot. Küster and Swiderska (2021) found that people attributed greater capacity for experience and for feeling pain to a robot observed being harmed than to one unharmed. And Tanibe et al. (2017) found that imagining oneself helping a robot led to greater perceptions of the robot’s capacity to feel pleasure, greater willingness to grant the robot rights, and lesser willingness to harm the robot. In short, external factors affect perceptions of the internal capacities of AIs, such as their capacity for experience, which can in turn affect the extent to which they are attributed moral patiency.

Who grants AIs moral concern?

Pauketat and Anthis (2022) tested a range of predictors and found that sci-fi fan identity (identification with the science fiction fan group), substratism (prejudice against AIs), techno-animism (attributing life to technological entities), and feeling positive emotions toward AIs most consistently predicted moral patiency attributions to AIs. There is also evidence of developmental changes in attributions of moral patiency to AIs. Reinecke et al. (2021) found that children granted robots greater capacity for experience, vulnerability to harm, and a claim to protection than did adults. Children, particularly younger ones, also distinguished less than adults did between a human child and a robot on these measures. An individual’s psychological state can also have an effect. Ladak, Wilks, and Anthis (2023) found that people who felt more empathy and closeness with a human-like AI worker expressed greater moral concern for AIs as a group.

Future Directions and Conclusions

People attribute some degree of moral agency to AIs, reflecting that AIs are perceived to have a moderate degree of agentic capacities (H. M. Gray et al., 2007). By contrast, people attribute little moral patiency to AIs, reflecting that AIs are generally not perceived to have experiential capacities (H. M. Gray et al., 2007). Why do people perceive agentic but not experiential capacities in AIs? The simplest explanation is that AIs are typically designed this way: They calculate, decide, and act without any feeling or emotion. However, as AIs become increasingly human-like and expressive, like the latest chatbots, people may increasingly perceive them as experiential and in turn attribute them moral patiency. These attributions are currently less well understood but, given the possible implications for human-AI interaction, should be a priority for future research. Additionally, research should aim to understand the effects of perceived patiency on attributions of moral agency (and vice versa). Such effects are potentially important in the context of AIs, for whom experiential and agentic capacities can come apart, unlike with humans, for whom they typically come together.

Although AIs are usually attributed less moral agency than humans (e.g., Kahn et al., 2012), people sometimes attribute them more moral agency than humans. For example, Hong et al. (2020) found that people blamed self-driving cars more than humans for causing accidents, and this was due to their perceived greater competency at driving. As AIs become increasingly advanced and competent, will they be attributed greater moral agency than humans in other contexts too? Or will the increasing human-likeness of contemporary AIs (e.g., communication style) limit their attributed moral agency to a closer-to-human level? Similarly, will people perceive superhuman levels of experience in future AIs, and will they attribute such AIs superhuman levels of moral patiency? These questions are important because if AIs continue to exceed humans in their capacities, they could challenge humans’ long-standing position at the top of the hierarchy of moral agency and patiency.

Some researchers argue that people’s desire to blame and punish AIs is misplaced because AIs do not have the capacities that would make them appropriate targets of such behaviors (e.g., Danaher, 2016). However, this desire may reflect an important aspect of human moral psychology: We intuitively seek a target to blame for a morally bad outcome, even if one does not exist. People may also have other reasons to punish AIs. Lima et al. (2021) found that people want to punish AIs because they think AIs can learn from their mistakes (and not for retribution or deterrence). This possibility raises several questions: What kinds of punishment do people think would enable AIs to learn? What kinds of punishment do people consider morally acceptable? And will punishment serve retributive and deterrent functions for future, more advanced AIs?

We must also acknowledge the broad scope of what “AI” means. Research has examined perceptions of human-like and mechanical robots, decision-making algorithms, and self-driving cars, among other entities. There are likely important similarities and differences in people’s moral judgments of these entities, such as human-like robots being perceived to have greater capacity for emotion than mechanical robots (Nijssen et al., 2019). However, such comparative research is sparse. A comprehensive comparative exploration of how people judge different types of AIs is an important next step for the field.

There are several challenges regarding the design of AIs. A first challenge concerns acceptability. People feel discomfort with AI moral agents and patients, despite their increasing prevalence in society. How could AIs be designed to reduce discomfort? A second challenge concerns transparency. Can AIs be designed so that their internal workings are sufficiently transparent that we can tell whether they are genuine moral agents and patients? How would such transparency affect attributions of moral agency and patiency to AIs? A third challenge concerns alignment. Can (and should) AIs be designed to make moral decisions in the ways that humans want them to? Does people’s expectation that AIs make different decisions than humans (e.g., Malle et al., 2015) merely reflect current AIs, or does it generalize to future, more advanced AIs? Addressing these challenges should be a focus for not only computer scientists and engineers but psychologists as well.

The fact that people attribute moral agency and (to a lesser extent) patiency to AIs via some of the same fundamental processes (e.g., mind perception, anthropomorphism) as they do to biological entities shows the generalizability of important aspects of human moral psychology. However, AIs are (and will likely continue to be) very different from biological entities. As such, other social identities and psychological processes (e.g., sci-fi fan identity, substratism) that do not operate in the context of biological entities also influence our moral judgments of AIs. Further, as AIs continue to (rapidly) improve their capabilities, arguably beyond human level on some tasks (e.g., driving), decades-old certainties about morality based largely on human psychology are being called into question. Given the rapid advancement of AI, it is critical for researchers to further identify and integrate these effects into psychological theory and the design and development of AI systems.

Recommended Reading

Bigman, Y. E., Waytz, A., Alterovitz, R., & Gray, K. (2019). Holding robots responsible: The elements of machine morality.

Gray, K., Young, L., & Waytz, A. (2012). (See References). A theoretical article that maps the two dimensions of mind perception theory (agency and experience) to moral agency and moral patiency.

Ladak, A., Wilks, M., & Anthis, J. R. (2023). (See References). An experiment testing the effects of encouraging perspective taking on moral attitudes toward artificial intelligences (AIs).

Nijssen, S. R. R., Müller, B. C. N., van Baaren, R. B., & Paulus, M. (2019). (See References). An experimental study testing the effects of anthropomorphism, agency, and experience on people’s willingness to sacrifice robots in moral dilemmas.

Pauketat, J. V. T., & Anthis, J. R. (2022). (See References). A study testing the effects of a range of predictors on moral patiency attributions to AIs.

Acknowledgements

Many thanks to Jacy Reese Anthis, Michael Dello-Iacovo, and Janet Pauketat for helpful comments on earlier versions of this article.

Transparency

S. Loughnan and M. Wilks contributed equally to the manuscript. All of the authors approved the final manuscript for submission.