Abstract

Delusions are distressing and disabling symptoms of various clinical disorders. Delusions are associated with an aberrant and apparently contradictory treatment of evidence, characterized by both excessive credulity (adopting unusual beliefs on minimal evidence) and excessive rigidity (holding steadfast to these beliefs in the face of strong counterevidence). Here we attempt to make sense of this contradiction by considering the literature on epistemic vigilance. Although there is little evolutionary advantage to scrutinizing the evidence our senses provide, it pays to be vigilant toward ostensive evidence—information communicated by others. This asymmetry is generally adaptive, but in deluded individuals the scales tip too far in the direction of the sensory and perceptual, producing an apparently paradoxical combination of credulity (with respect to one’s own perception) and skepticism (with respect to the testimony of others).

Delusions are unjustified and often bizarre beliefs that are symptomatic of numerous psychiatric and neurological disorders (Coltheart et al., 2011). Examples of delusions include the belief that one’s daily life is being recorded for national broadcast (Gold & Gold, 2012), that one’s genitals have been stolen and replaced with someone else’s (Connors & Lehmann-Waldau, 2018), and that the COVID-19 pandemic will precipitate a zombie apocalypse (Ovejero et al., 2020). Delusions are distressing to patients and their families and perplexing to clinicians and theoreticians. Among other puzzles is an apparent paradox concerning the way patients with delusions respond to evidence (Furl et al., in press): Deluded individuals seem to display both excessive credulity (being too willing to adopt unusual beliefs on minimal evidence) and excessive rigidity (being unwilling to relinquish them in the face of strong counterevidence). Here we attempt to illuminate this conundrum by drawing on the literature on epistemic vigilance.

Epistemic Vigilance

A set of putative cognitive mechanisms serves a function of epistemic vigilance: to evaluate communicated information so as to accept reliable information and reject unreliable information (Sperber et al., 2010). The existence of these mechanisms has been postulated on the basis of the theory of the evolution of communication (e.g., Maynard Smith & Harper, 2003; Scott-Phillips, 2008). For communication between any organisms to be stable, it must benefit both those who send the signals (who would otherwise refrain from sending them) and those who receive them (who would otherwise evolve to ignore them). However, senders often have incentives to send signals that benefit themselves but not the receivers. As a result, for communication to remain stable, there must exist some mechanism that keeps signals, on average, reliable. In some species, the signals are produced in such a way that it is simply impossible to send unreliable signals—for instance, if the signal can be produced only by large or fit individuals (see, e.g., Maynard Smith & Harper, 2003). In humans, however, essentially no communication has this property. It has been suggested instead that humans keep communication mostly reliable thanks to cognitive mechanisms that evaluate communicated information, rejecting unreliable signals and lowering our trust in their senders—mechanisms of epistemic vigilance.

To evaluate communicated information, mechanisms of epistemic vigilance process cues related to the content of the information (Is it plausible? Is it supported by good arguments?) and to its source (Are they honest? Are they competent?). A wealth of evidence shows that humans possess such well-functioning mechanisms (for review, see, e.g., Mercier, 2020), that they are early developing (being already present in infants or toddlers; see, e.g., Harris & Lane, 2014), and that they are plausibly universal among typically developing individuals. Crucially for the point at hand, these epistemic vigilance mechanisms are specific to communicated information. Our own perceptual mechanisms evolved to best serve our interests, and there are thus no grounds for subjecting their deliverances to the scrutiny that must be deployed for other individuals.

There is now a large amount of evidence that people systematically discount information communicated by others. This tendency has often been referred to as egocentric discounting (Yaniv & Kleinberger, 2000), and it has been observed in a wide variety of experimental settings (for a review, see Morin et al., 2021). For instance, in advice-taking experiments, participants are asked a factual question (e.g., What is the length of the Nile?), provided with someone else’s opinion, and given the opportunity to take this opinion into account in forming a final estimate. Overall, participants put approximately twice as much weight on their initial opinion as on the other participant’s opinion, even when they have no reason to believe the other participant less competent than themselves (Yaniv & Kleinberger, 2000).
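The weighting described above can be made concrete with a small sketch. It assumes, purely for illustration, that the final estimate is a weighted average of the participant's initial estimate and the advisor's estimate. The function names, the 2:1 weighting, and the numerical estimates are hypothetical and are not taken from Yaniv and Kleinberger (2000).

```python
def final_estimate(own, advice, weight_on_own=2 / 3):
    """Weighted average of one's own estimate and an advisor's estimate.

    weight_on_own = 2/3 encodes the illustrative assumption that the
    initial opinion counts twice as much as the advice.
    """
    return weight_on_own * own + (1 - weight_on_own) * advice


def weight_of_advice(own, advice, final):
    """Fraction of the distance from one's own estimate toward the advice
    that the final estimate covers (0 = advice ignored, 1 = advice adopted)."""
    if advice == own:
        raise ValueError("advice equals own estimate; the weight is undefined")
    return (final - own) / (advice - own)


# Hypothetical estimates, in kilometres, for the length of a river.
own_estimate = 6000.0
advisor_estimate = 6900.0

final = final_estimate(own_estimate, advisor_estimate)
woa = weight_of_advice(own_estimate, advisor_estimate, final)
print(f"final estimate: {final:.0f} km, weight of advice: {woa:.2f}")
```

Under these assumptions an unbiased averager would show a weight of advice of 0.5, so any value below that indicates discounting of the other person's opinion.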

The discounting of others’ opinions can be overcome if we have positive reasons to trust them or if they present good arguments—in particular, if our prior opinions are weak (see, e.g., Mercier & Sperber, 2017). However, in the absence of such positive reasons, discounting is a pervasive phenomenon. There is no such systematic equivalent when it comes to perception. Although in some cases we can or should learn to doubt what we perceive (e.g., when attending to the reminder that “objects in mirror are closer than they appear” while driving), this is typically an effortful process with uncertain outcomes. In visual perception, for example, models in which the observer behaves like an optimal Bayesian learner have proven very successful at explaining participants’ behavior (e.g., Geisler, 2011). Even if there are deviations from this optimal behavior (e.g., Stengård & van den Berg, 2019), they do not take the form of a systematic tendency to favor our priors over novel information.

There is thus converging evidence (a) that humans process communicated information differently than information they acquire entirely by their own means and (b) that the former is systematically discounted by default (i.e., in the absence of reasons to behave otherwise, such as reasons to believe the source particularly trustworthy or competent). This, however, leaves open significant questions of great relevance for the present argument. In particular, to what stimuli does epistemic vigilance apply? Presumably, epistemic vigilance evolved chiefly to process the main form of human communication: ostensive communication, which includes verbal communication but also many nonverbal signals (from pointing to frowning). Related mechanisms apply to other types of communication, such as emotional communication (Dezecache et al., 2013).

What of behaviors that have no ostensive function (e.g., eating an apple) or even aspects of our environment that might have been modified by others (e.g., a book found on the coffee table)? Although such stimuli should not trigger epistemic vigilance by default, they may under some circumstances. One might interpret a friend eating an apple as an indication that the friend has followed health advice to eat more fruit, or one could interpret one’s spouse’s placement of a book on a table as an invitation to read it—whether it was so intended or not. The behavior might then be discounted: We might suspect our friend of eating the apple only for our benefit while privately gorging on junk food.

Other cognitive mechanisms, more akin to strategic reasoning, but bound to overlap with epistemic vigilance, must process noncommunicative yet manipulative information (on the definition of communication vs. manipulation or coercion, see Scott-Phillips, 2008). A detective should be aware that some clues might have been placed by the criminal to mislead her. In some circumstances, therefore, epistemic vigilance and related mechanisms might apply even to our material environments, instead of applying only to straightforward cases of testimony. Still, epistemic vigilance should always apply to testimony, whereas it should apply to perception only under specific circumstances, such that the distinction between these two domains (testimony vs. perception) remains a useful heuristic.

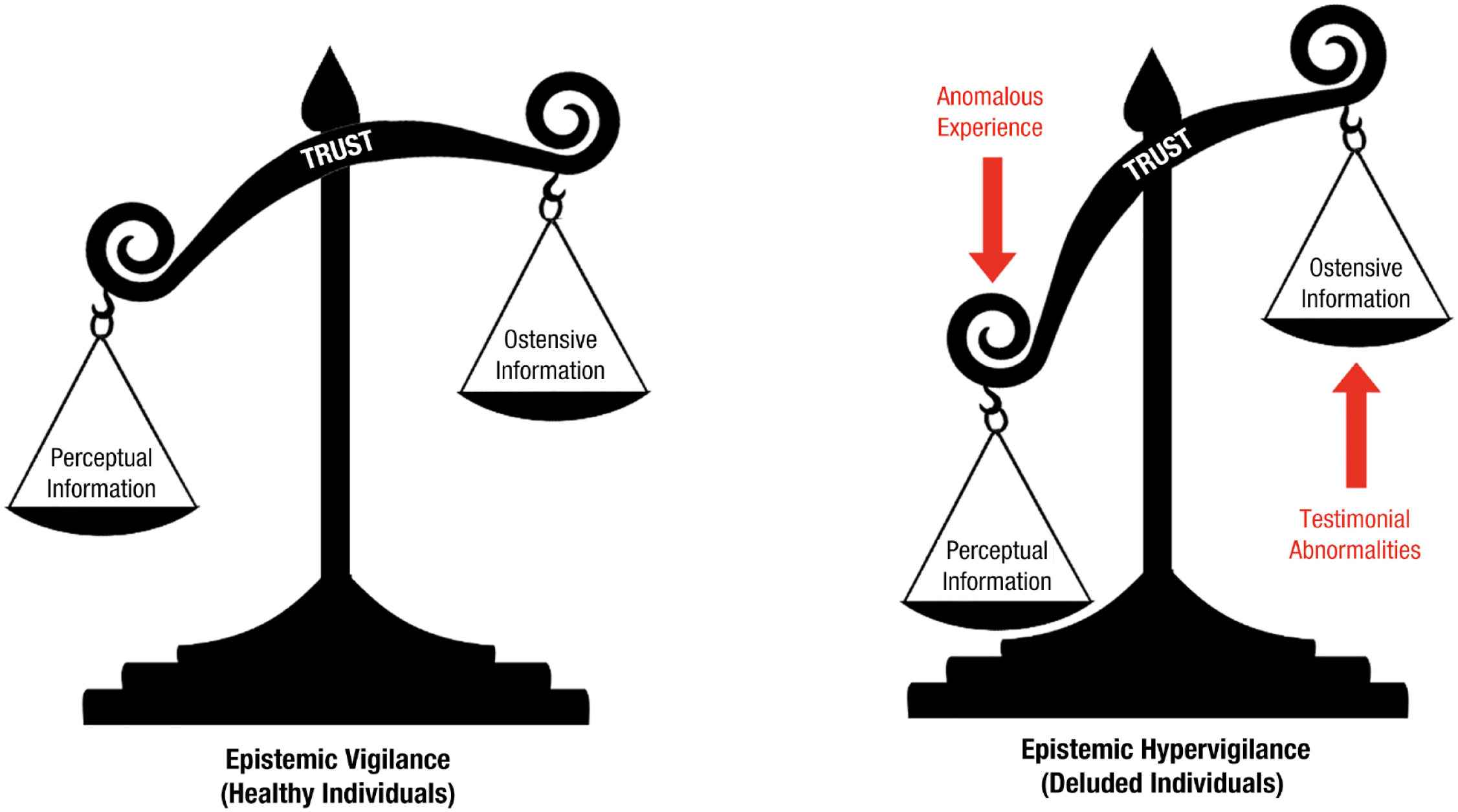

How might these considerations inform our understanding of delusions? Whereas in healthy individuals the scales are adaptively tipped in favor of trusting the perceptual over the ostensive, this imbalance may be maladaptively exacerbated in delusions (Fig. 1). This could be for at least two complementary reasons: Sensory or perceptual evidence may be overweighted, and testimonial evidence may be underweighted. We review each of these possibilities in turn.

Fig. 1. How are different sources of information weighed in belief formation? In healthy individuals (left panel), the scales are adaptively tipped in favor of trusting perceptual evidence over ostensive evidence. This imbalance may be maladaptively exacerbated in deluded individuals (right panel).
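The scales in Fig. 1 can be pictured with a toy calculation. The sketch below assumes that perceptual and testimonial evidence are each summarized by a single estimate with an associated precision (inverse variance) and that they are combined by precision weighting. This is an illustrative assumption, not a model the text commits to, and every name and number in it is hypothetical.

```python
def combine(perceptual, testimonial, perceptual_precision, testimonial_precision):
    """Precision-weighted average of a perceptual and a testimonial estimate.

    Returns the combined belief and the share of weight given to perception.
    """
    total = perceptual_precision + testimonial_precision
    weight_on_perception = perceptual_precision / total
    belief = (weight_on_perception * perceptual
              + (1 - weight_on_perception) * testimonial)
    return belief, weight_on_perception


# Hypothetical scale: 1.0 = "something is wrong", 0.0 = "nothing is wrong".
perceptual_evidence = 1.0   # what the anomalous experience suggests
testimonial_evidence = 0.0  # what friends, family, and clinicians say

# Left panel: perception is trusted somewhat more than testimony.
typical = combine(perceptual_evidence, testimonial_evidence,
                  perceptual_precision=2.0, testimonial_precision=1.0)

# Right panel: perception is overweighted and testimony is underweighted.
exaggerated = combine(perceptual_evidence, testimonial_evidence,
                      perceptual_precision=20.0, testimonial_precision=0.5)

print(f"typical weight on perception: {typical[1]:.2f}")
print(f"exaggerated weight on perception: {exaggerated[1]:.2f}")
```

Raising the perceptual precision and lowering the testimonial precision both push the combined belief toward what perception suggests, which is why the two possibilities reviewed in the following sections are complementary rather than competing.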

Anomalous Perceptions

The notion that delusions stem from errant percepts has a long history. A young female patient admitted to the State Lunatic Asylum at Danvers, Massachusetts, in 1879 complained of noises in her head and expressed the belief that she had live bees in her skull. According to Southard (1916), the woman had a medical condition (softening of the cranial bones) that produced misleading perceptual data, “poisoned at the sensory source” (p. 429). Later theorists have also linked delusions to experiential abnormalities. Maher, for example, saw delusions as efforts to make sense of striking and anomalous perceptual experiences: “The locus of the pathology is in the neuropsychology of experience” (Maher, 1999). Kapur (2003) suggested that the abnormally salient experiences that delusions putatively explain are occasioned by dysregulated dopamine transmission. Subsequent theoretical and empirical work suggests that dopamine dysregulation generates psychosis by impairing the brain’s capacity to weight the reliability, or precision, of sensory prediction errors (Adams et al., 2013; Haarsma et al., 2021).

One point of contention among those who implicate experiential abnormalities in the etiology of delusions is whether these abnormal experiences are sufficient for delusions to develop. Maher, for example, considered delusions to be essentially normal—even scientific—responses to deluded individuals’ unsettling experiences, implying that they do not unduly weight these experiences in forming beliefs. The corollary is that anyone with an experiential abnormality would become deluded (but see Noordhof & Sullivan-Bissett, 2021, for discussion). In contrast, advocates of the “two factor” theory of delusions (e.g., Coltheart et al., 2011; Coltheart & Davies, 2021; cf. Corlett, 2019) highlight dissociations between anomalous experiences and corresponding delusions.

The example most frequently discussed by two-factor theorists is Capgras delusion, wherein people come to believe that close friends or relatives have been replaced by physically identical impostors. Capgras patients are thought to have a disturbance in the brain regions or networks that generate the feelings of familiarity that attend conscious recognition of familiar faces (e.g., see Darby et al., 2017). The resulting phenomenology, a sense that something does not feel right about these visually familiar others, may make the impostor explanation appealing. Not all patients with this phenomenology, however, adopt the impostor delusion. M. Turner and Coltheart (2010) discuss a nondelusional patient who, after undergoing surgery for intractable epilepsy, reported that her mother felt different: “The first thing I noticed was Mum, when she walked in the room . . . It was like a picture of her, but it wasn’t her . . . just didn’t feel like her” (pp. 371–372).

A second example, also relating to the experience of familiarity (but in this case hyper- rather than hypo-familiarity), is a case of “déjà vécu” reported by M. S. Turner et al. (2017). Following a severe head injury, their patient came to believe that he had previously lived through live televised events (“Everyone says ‘you only think you’ve seen it before.’ But I’ll swear black and blue that I have seen it before”; M. S. Turner et al., 2017, p. 144; emphasis in original). These authors suggested that the patient’s injury had disrupted neural recognition systems, generating false feelings of familiarity. But, of course, false feelings of familiarity are very common among nondeluded people, and indeed, Kalra et al. (2007) have documented a nondelusional patient with medication-induced déjà vu who described the feeling—but not the belief—of having lived through live televised events (“I was a little freaked out when I watched TV as I felt I was watching repeats, although I knew I wasn’t”; p. 312).

For two-factor theorists, the existence of such patients points to the need for an additional explanatory factor (the eponymous second factor) to account for why experiential anomalies lead to delusions in some individuals but not others. One possibility is that deluded individuals place undue weight on these experiences when revising beliefs, perhaps because they overestimate their reliability (see McKay, 2012; Miyazono & McKay, 2019). Alternatively, critics of two-factor theory have suggested that deluded individuals simply have more intense anomalous experiences than nondeluded individuals (Corlett, 2019; Noordhof & Sullivan-Bissett, 2021; Sakakibara, 2019). In either case, deluded individuals favor perceptual evidence over ostensive evidence, to their detriment. Here, the ostensive evidence includes testimonials from friends, family, and clinicians who try to persuade them that their delusions are inaccurate. The opinions of these otherwise trustworthy and reliable people are often completely discounted by the delusional patients—a fact long taken for granted in theorizing about delusions but whose import has not always been fully appreciated.

Testimonial Abnormalities

According to Miyazono and Salice (2020), most previous theorizing about delusions—including that reviewed already—has implicated “individualistic sources of evidence” (e.g., perception, reasoning, memory), with less attention paid to how deluded individuals treat social sources (e.g., testimony). To remedy this, Miyazono and Salice advocate a “social epistemological turn” in the study of delusions (see also Bell et al., 2021). In particular, they suggest that testimonial abnormalities—including the active discounting of testimonial evidence (“testimonial discount”)—figure in the etiology of delusions.

The extent to which previous conceptions of delusion display an “individualistic bias” is perhaps open to debate. As Miyazono and Salice (2020) themselves note, delusions are defined by the American Psychiatric Association (2013, p. 819) as “firmly held despite what almost everyone else believes,” which implies a neglect of testimony. And other authors have suggested that a disconnect between a person’s beliefs and the beliefs of others in the same cultural context may be precisely what makes the former beliefs delusional (e.g., Bentall, 2018). In Miyazono and Salice’s account, however, “testimonial discount” is not just a matter of deluded individuals being insulated from the testimony of others (what they call “testimonial isolation”) or of the testimony of others being overridden by other (individualistic) sources of evidence but involves deluded individuals actively distrusting the sincerity and competence of their interlocutors. In other words, they are epistemically hypervigilant.

Of course, it is easy here to confuse cause and effect: Patients with persecutory delusions, who are convinced that others intend them harm, may doubt the benevolence of others because of their delusions. The question remains as to what generates the paranoid ideation in the first place. Alterations to coalitional cognition—processes involved in group affiliation and social perception—may play an important role here (Bell et al., 2021). Consistent with this idea, Miyazono and Salice (2020) suggest that a failure of group identification may erode or preclude the trust that is typically extended to in-group members. However, recent work by Corlett and colleagues (e.g., Reed et al., 2020; Suthaharan et al., 2021) suggests that paranoia originates not in excessive social concern but in a rigid belief that the world in general (i.e., not just the social world) is volatile.

Conclusion

Delusions are scientifically and clinically challenging symptoms. Deluded individuals are often thought to “jump to conclusions” (Garety & Freeman, 2013), a notion that itself encapsulates a central enigma of delusions: Those who jump to conclusions err both in that they reach a conclusion on minimal evidence (being too willing to accommodate evidence) and in that they reach a conclusion at all (displaying an apparent imperviousness to contradictory evidence; see Furl et al., in press). While acknowledging other important approaches to this enigma (e.g., those that focus on how the balance between prior expectations and sensory evidence alters over time, for instance, in the transition from prodromal to frank psychosis; Haarsma et al., 2020; see also Corlett & Fletcher, 2021), we argue that the apparent paradox can be partly dissolved by distinguishing two categories of evidence: the perceptual and the ostensive. Deluded individuals, we suggest, are overresponsive to the former and underresponsive to the latter.

Whereas there is little evolutionary benefit to scrutinizing the evidence our senses provide, evidence communicated by others warrants a healthy degree of skepticism. This asymmetry is adaptive, but in deluded individuals, the balance tips too far in the direction of the perceptual. This may be for several complementary reasons. First, deluded individuals may be subject to perceptual aberrations—unusually intense experiences that cry out for explanation (Maher, 1999; Sakakibara, 2019)—and may overestimate the reliability of these experiences (Adams et al., 2013; Kapur, 2003; McKay, 2012; Miyazono & McKay, 2019). Second, deluded individuals may have defective social epistemology—characterized both by reduced exposure to the testimony of others (“testimonial isolation”) and by the active disregard of that testimony (“testimonial discount”; Miyazono & Salice, 2020). These various factors may conspire to produce an apparently paradoxical admixture of credulity and rigidity.

Recommended Reading

Adams, R. A., Stephan, K. E., Brown, H. R., Frith, C. D. & Friston, K. J. (2013). (See References). Argues that delusions reflect an aberrant encoding of confidence or precision of beliefs about the world—specifically, a reduction in the precision of prior beliefs relative to sensory evidence.

Bell, V., Raihani, N. & Wilkinson, S. (2021). (See References). Spearheads a social turn in the study of delusions, arguing that delusions involve alterations to coalitional cognition—processes involved in affiliation, group perception, and the strategic management of relationships.

Bortolotti, L. (Ed.). (2018). Delusions in context. Palgrave Pivot. An accessible, open-access book that gathers together a variety of perspectives on the study of delusions from experts in different disciplinary areas (including clinical psychiatry, philosophy, clinical psychology, and cognitive neuroscience).

Furl, N., Coltheart, M. & McKay, R. (in press). (See References). A chapter that articulates and explores the paradoxical treatment of evidence that deluded individuals seem to display.

Sperber, D., Clément, F., Heintz, C., Mascaro, O., Mercier, H., Origgi, G. & Wilson, D. (2010). (See References). Lays out the view that humans should be equipped with cognitive mechanisms whose function is to evaluate information communicated by others.

Williams, D. (2018). Hierarchical Bayesian models of delusion. Consciousness and Cognition, 61, 129–147. An accessible critique of the predictive coding/computational psychiatry approach to delusions.