Abstract

The impact of the implementation of artificial intelligence (AI) on workers’ experiences remains underexamined. Although AI-enhanced processes can benefit workers (e.g., by assisting with exhausting or dangerous tasks), they can also elicit psychological harm (e.g., by causing job loss or degrading work quality). Given AI’s uniqueness among other technologies, resulting from its expanding capabilities and capacity for autonomous learning, we propose a functional-identity framework to examine AI’s effects on people’s work-related self-understandings and the social environment at work. We argue that the conditions for AI to either enhance or threaten workers’ sense of identity derived from their work depend on how the technology is functionally deployed (by complementing tasks, replacing tasks, and/or generating new tasks) and how it affects the social fabric of work. How AI is implemented and the broader social-validation context also play a role. We conclude by outlining future research directions and potential application of the proposed framework to organizational practice.

Imagine a loan consultant working in a large bank and proud of being responsible for complex loan decisions involving millions of euros. Then, over time, management replaces the previously human-only task of loan decision making with a faster, automated, more precise, but unintelligible algorithm. Now picture a surgeon, operating with real-time artificial intelligence (AI) analysis of operative videos. This technique reduces the duration of surgery and improves patients’ outcomes (examples adapted from Hashimoto et al., 2018; Strich et al., 2021). In both cases, the workers experience dramatic changes to core work tasks that challenge their understandings of their work and themselves in relation to their work—their identities (Endacott, 2021). Identities offer a system of self-reference for attitudes and behaviors and define an individual’s place in society (Tajfel & Turner, 1986). Understanding how workers react to AI-related changes requires examining how self-understandings are affected. In this article, we develop an integrative functional-identity framework to expand current understanding of AI’s effects on workers and enable a constructive implementation of AI at work.

This analysis is crucial given the rapid expansion of AI across business sectors, including health care (e.g., diagnostic scanning and analysis), operations and production management (e.g., resource optimization), retail (e.g., chatbots), defense and security (e.g., cybercrime detection), banking and finance (e.g., stock-market predictions), and human resource management (e.g., recruitment and selection). AI implementation is often guided by business priorities, such as enhanced efficiency, which have been criticized for placing too little importance on workers’ identity processes when AI supplements work (as in the example of the surgeon) or takes away work (as in the example of the loan consultant). At the same time, AI can also generate new tasks and create new roles. These changes are situated in a largely polarized discourse about AI, ranging from highly optimistic views about its benefits (e.g., freeing workers from laborious and repetitive tasks) to catastrophic predictions of human unemployment. In this review of AI’s impact on workers’ identities and their subsequent attitudes and behaviors, we begin by outlining the technology’s functionality.

What Is AI, and Why Is AI Different?

The term “AI” refers to “a collection of interrelated technologies used to solve problems that would otherwise require human cognition” (Walsh et al., 2019, p. 2). Advancements in AI are attributed to wider data access and collection (big data), greater computational power, and enhanced modeling approaches (e.g., neural networks). “AI” represents a range of technologies using a variety of computational methods, particularly machine learning, which involves computerized learning processes inspired by human intelligence (Walsh et al., 2019). Through simple (e.g., decision trees) or more complex (e.g., artificial neural networks, or deep learning) modeling methods, AI can analyze large data sets via learning processes that are supervised (i.e., learning guided by a human) or unsupervised (i.e., machine-autonomous learning from the data; Walsh et al., 2019). Common methods that are often referred to as AI also include natural-language processing (e.g., analysis and generation of text) and pattern recognition (e.g., identifying associations in data sets; Walsh et al., 2019).

All forms of current AI fall into the category of narrow AI. This means the technology can undertake a domain-specific task (e.g., assessing résumés) but, unlike humans, cannot translate its capabilities to new domains (e.g., driving a car after learning to assess résumés). Despite what the term “narrow” suggests, AI already outperforms humans in a range of functions through the speed, accuracy, and scale of its processing capabilities (Walsh et al., 2019, p. 34). Debate remains regarding when (and whether) AI will achieve human-equivalent, or general, intelligence. Nevertheless, there is widespread societal debate, often fearful, surrounding the rapid growth of AI and what that means for the future. This is reflected in, and largely influenced by, popular-culture depictions of advancing technologies and debates regarding the future of work (Cave et al., 2018).

Several aspects of AI differentiate it from prior technologies. The enhanced predictive and forecasting abilities of machine-learning algorithms, particularly through unsupervised learning, extend AI’s capabilities into tasks traditionally viewed as human cognitive work. As AI is trained on large data sets, the nature of these data (e.g., their uncertain representativeness across populations) and how they are accessed and secured also raise questions about increasing “datafication” of workplaces and the fairness of the outcomes that AI generates. Implementation of AI also has implications for workers’ privacy and autonomy. The use of neural network models means AI’s computations are often a black box, unknowable to AI designers and end users alike, which has implications for accountability and transparency when AI is used for decision making. AI’s objective technical capabilities also generate subjective perceptions of the technology. For example, perceptions of agency in AI processes, via its self-learning nature and autonomous deployment, can make it seem like a quasisocial actor that can act independently on behalf of a human, and this has implications for workers’ self-understandings (Brunn et al., 2020; Endacott, 2021).

A Functional-Identity Framework for AI

Examination of AI’s impact on workers should be grounded in its functional capacities and the way the technology affects specific work tasks (Brynjolfsson & Mitchell, 2017; Das et al., 2020). Functionally, AI can (a) complement and support existing human work tasks, (b) replace existing human work, and/or (c) create new human tasks and subsequently new work roles (Acemoglu & Restrepo, 2020; Brynjolfsson & Mitchell, 2017). Depending on the nature of the tasks involved (e.g., their structure, repetitiveness, and outcomes), but also economic and structural factors, different occupations will be differentially affected by AI-related changes. For example, occupations that rely more heavily on information-technology tasks will be more highly exposed, and exposed at an earlier time, to effects of task supplementation, task replacement, and new-task generation, which will lead to more fundamental changes in occupational structure (Das et al., 2020). Supplementation, replacement, and new-task generation may also happen simultaneously in different areas of an occupation (Brynjolfsson & Mitchell, 2017).

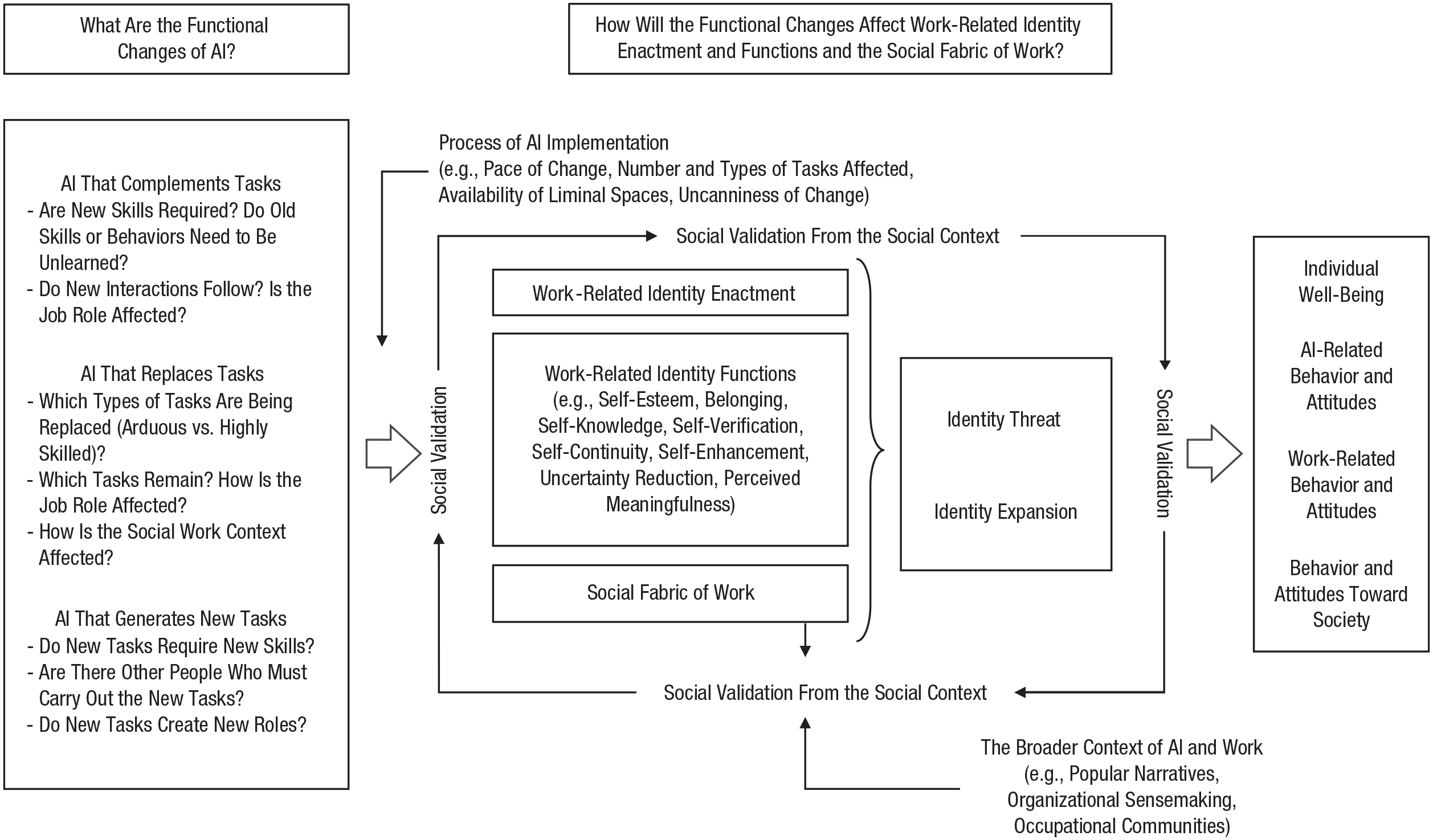

Figure 1 illustrates how changes and challenges associated with AI implementation can be understood using this functional-identity framework. The introduction (or anticipated introduction) of a nonhuman “intelligent” actor demands sensemaking, which will affect how workers think about themselves and experience their work—generating opportunities for both work-related identity threat and work-related identity enhancement, with subsequent effects on well-being, behavior, and attitudes. To understand which responses are the most likely ones, it is important to ask the following questions: (a) What are the functional work changes anticipated, and what challenges will arise from AI use in a specific work context? and (b) How will these changes and challenges affect the enactment of important work-related identities (e.g., occupational, role, and organizational identities), their functions (e.g., belonging, self-esteem, and self-enhancement), and the social fabric of work (e.g., team composition and organizational status hierarchies)? Furthermore, responses also depend on certain conditions. That is, the change process associated with AI implementation will shape employees’ responses. Relevant factors include the number and type of tasks affected, the pace of change, and the social context of the implementation, both within and outside work.

Figure 1. A framework of functional task changes related to implementation of artificial intelligence (AI), the effects of these changes on work-related identity, and individual, work-related, and societal outcomes. Functional task changes caused by AI can affect identity enactment, work-related identity functions, and/or the social fabric of work in various ways, which can lead to identity threat or enable identity expansion, depending on processes of implementation but also the broader context of work. These identity-change processes are embedded in a changing social context, which acts as a source of social validation and can support identity change.

Work-related identities reflect “who you are” and “what you do” regarding work. They are informed by the social groups people feel part of and by enactment of certain behaviors that are prototypical for those groups, and they offer important social recognition for those behaviors (Ashforth & Schinoff, 2016; Nelson & Irwin, 2014). Work offers plenty of opportunities for social self-categorization in that people can see themselves as part of an occupation, an organization, or a work team. People act according to social-group norms in their work and thereby gain social recognition. Furthermore, work-related identities fulfill multiple important identity functions. For example, they provide a sense of self-esteem and offer opportunities to experience meaning, a sense of belonging, and competence (see Ashforth & Schinoff, 2016). In addition, work contexts—especially teams, colleagues, and supervisors, along with their respective organizations and occupational communities—can offer social validation to ingrain those work-related identities and ensure that they fulfill their functions.

To make sense of dramatic AI-induced changes, workers will consider what these changes mean for their work-related identities, for their ability to meet identity functions (e.g., self-esteem), and for their enactment of identity-relevant behaviors through work. For this reason, consequences of AI-induced work changes depend on whether they generate threats or enhancements to identities and their functions. If identities and their functions are threatened, undermined, or lost, this not only is upsetting for the individual, whose well-being is affected, but also will result in a variety of identity-protection responses (Petriglieri, 2011). Conversely, if AI-induced change supports identity functions, and brings people closer to their ideal work selves, people can restructure, adapt, and expand their work identity (Endacott, 2021). Theoretically, all of this will have consequences for the individual as well as for the individual’s attitudes and behaviors toward AI, toward the changed workplace, and perhaps toward society at large (Craig et al., 2019; Nelson & Irwin, 2014; Petriglieri, 2011). Individuals may vary in their identity responses, and additional variation can result from the specific process by which AI is implemented, as noted earlier.

Sensemaking is not a singular process but rather happens in a social context that offers validation for new behaviors and new definitions of identity that will make identity changes stick or unstick (Ashforth & Schinoff, 2016). AI changes to key tasks can affect occupational boundaries and consequential team and organizational structures, thereby changing the social fabric of work (Craig et al., 2019). Moreover, the broader organizational, occupational, and societal context will matter for identity changes, as it provides a system of norms and expectations as reference points for sensemaking (Endacott, 2021). In the following sections, we leverage this framework to examine potential identity consequences associated with three main workplace functions of AI.

AI that complements and supports existing human tasks

AI offers new tools to complement and support existing work, such as through real-time monitoring or intervention in work environments (e.g., analyzing smartphone data to identify workplace hazards; Howard, 2019) or providing and structuring informational inputs (e.g., improving scheduling; Endacott, 2021). Workers using AI may need to acquire new skills (e.g., fluency in data analytics and evaluating data outputs) or unlearn old routines, as the demand for certain tasks in their jobs shifts (Lanzolla et al., 2020). Any resulting changes in identity functions (e.g., self-esteem, belonging), in turn, may affect work-related identities (Ashforth & Schinoff, 2016).

Task-related changes will also affect the social fabric of work. For example, some researchers propose that the usage of AI in psychiatry requires data-management skills and reliance on close collaborations with software engineers, thereby redefining organizational hierarchies and what it means to be a (competent) medical professional (Brunn et al., 2020). Interview studies indicate that imposed work changes in general are initially perceived as identity threats, but workers can gradually move toward acceptance if they manage to adapt to the changes and modify their identities (Chen & Reay, 2021). This outcome has been shown to depend on the manner in which AI is implemented, such as whether people have a voice in the implementation and whether there is a gradual experience of change. Another relevant factor is the availability of liminal, or transitional, safe spaces that allow for new learning and competency gain, which can facilitate adaptation toward new work-related self-understandings (e.g., seeing oneself as an information specialist rather than a radiologist; Jha & Topol, 2016). Also, if AI improves people’s ability to enact certain identity motives (e.g., to become better in their jobs and thereby gain self-enhancement), work-related identities can be extended, and “working with AI” can become a positive identity category (Endacott, 2021).

AI that replaces human tasks

AI-enhanced processes can also replace various cognitive and manual tasks previously done by humans, including (a) arduous and repetitive tasks (e.g., pattern recognition, stock refilling), (b) other routine tasks (e.g., scheduling, diagnostics, data search), and (c) more highly skilled tasks associated with complex decision making (e.g., AI-automated financial, legal, or policing decisions; customer service). Such replacement brings additional identity challenges, over and above those presented by AI that complements current tasks. When AI replaces tasks, workers are no longer able to enact task-related professional self-understandings. This can disrupt a sense of self-continuity and possibly frustrate the satisfaction of other related identity functions that carrying out the replaced tasks previously served (e.g., gaining self-esteem, certainty, meaning; Endacott, 2021). However, if the replacement of certain tasks by AI enables workers to get closer to their aspired identities (e.g., because it removes an obstacle to accessing identity-relevant functions by ameliorating a high failure rate or social stigma), workers will find it easier to change their identities, and the replacement will be more readily accepted (Endacott, 2021). The replaced tasks may also reshape the organization of remaining work. For example, interacting with or being managed by a self-learning, unintelligible algorithmic process that acts in a quasihuman way may feel uncanny (Schafheitle et al., 2020). Moreover, if decisions are perceived as being made without appropriate contextual information, or if they are perceived as incorrect or arbitrary, they may not be trusted (Raisch & Krakowski, 2021), which can result in feelings of alienation or dehumanization.

If the replacement of tasks is accompanied by the replacement of humans, this will also alter the social fabric of work, which, in turn, will affect how remaining workers can validate their existing work-related identities (Endacott, 2021). Workers who lose significant aspects of their jobs, or their job roles, will face the greatest identity challenge. How can they protect their self-esteem and achieve a sense of self-continuity and self-verification if the social self-categorizations enabling those functions no longer exist?

AI that generates new human work tasks

Although it creates opportunities for human replacement, the implementation of AI can also create new tasks and job roles. Various “algorithmic occupations” are emerging, focused on training AI (e.g., getting tasks ready for automatization, teaching the algorithm), explaining the changes to workers (e.g., convincing them to use algorithmic outputs), and sustaining the use of AI (e.g., considering its ongoing ethical implications; Wilson et al., 2017). Smaller-scale changes due to AI will also create new tasks for workers, which might demand new skills. These new tasks are likely to be met with a variety of reactions. For example, people have been found to mourn the loss of changed work, try to conserve existing professional identities, and avoid new tasks (Chen & Reay, 2021). However, if liminal spaces are created for people to engage in learning and in identity restructuring, then identity expansion and adjustment to the changes are more likely to happen.

Identity conditionality

Whether functional changes lead to identity threat or enhancement will depend not only on how they affect workers’ self-understanding and their ability to enact work-related identities and to enjoy their identity functions, but also on (a) how AI-related task changes are implemented (e.g., the pace or pervasiveness of change) and (b) the broader social-validation context. The social groups people feel part of provide a system of norms and values that guide how they make sense of AI interventions at work and how they behave in regard to AI implementation. For example, workers who feel that a new AI tool runs against professional norms may report frustration and show resistance (Chen & Reay, 2021; Strich et al., 2021). This sensemaking will happen in a changed work context, as the functional task changes might recompose teams and organizational hierarchies by creating new roles and replacing old ones. The functional change may also shift the norms of what constitutes esteemed, desirable, and knowledgeable behavior in the eyes of other people. This change will foster identities that have been expanded and changed and threaten identities that are no longer adaptive. The wider popular narrative surrounding AI technologies will also have an influence. Currently, popular opinions on AI tend to fall into two camps: those that foretell doom (i.e., opinions that are overly skeptical and distrusting toward AI) versus those that foretell utopia (i.e., opinions that are overly excited and overly trusting toward AI; Raisch & Krakowski, 2021). Both positions can be problematic (Craig et al., 2019), and whether workers are more likely to experience identity threat or identity expansion will depend on which position more closely resonates with them. Thus, organizational attempts at sensemaking can be helpful in solidifying the expansion of new identities (Ashforth & Schinoff, 2016). Also, occupational communities for new or changed occupations can assist with collective sensemaking and redefining professional roles that will enable gradual identity development (Chen & Reay, 2021).

A Way Forward: Recommendations for Future Research and Practice

Our framework shows the importance of identity for understanding workers’ reactions toward AI implementation and the outcomes of such implementation. If AI-related changes modify or remove work that reflects valued components of people’s identities or reduces the opportunity to enact these identities, AI implementation creates a greater risk of identity threat (Craig et al., 2019; Petriglieri, 2011). Conversely, if AI-related changes bring people closer to their ideal work selves or enable better job-related coping and positive self-definitions, then positive work-related identity change is more likely (Endacott, 2021).

Although we have identified several factors that influence workers’ reactions to AI implementation and the outcomes of such implementation, more research is needed to specify when, where, and by whom AI-related changes are assessed as irrelevant, supportive, or threatening for work-related identities and their functions. For example, workers who are entrenched in standard procedures are likely to experience threat after AI implementation (Nelson & Irwin, 2014), whereas those with a more playful frame of reference (e.g., high levels of openness to experience) are more likely to experience positive identity growth (Schneider & Sting, 2020). Initial evidence suggests that senior experts tend to experience greater identity threat from task replacement by AI than beginners do (Strich et al., 2021). More research is also needed to examine how workers dynamically respond to AI-induced demands to adjust and recraft their identities, by redefining what they do and who they are in relation to it (Strich et al., 2021), as well as to investigate the consequences of AI implementation for workers’ well-being, attitudes and behavior toward AI, and work-related outcomes (e.g., performance, commitment, engagement; Craig et al., 2019). Future research may also extend our framework to the team level to allow an examination of disruption and the process of recovery among teams during AI implementation.

Our proposed framework also offers several practical recommendations for organizations. Best practices in AI implementation often focus on identifying salient stakeholders, such as workers, and their expectations and needs (Wright & Schultz, 2018). If workers’ needs are to be taken seriously, and if identity threat is particularly likely to occur in situations of distrust, of “black-box-ism” (in which algorithmic decisions appear to be unintelligible), and of replacement, leaders should adopt approaches to AI implementation that identify, mitigate, and compensate for these issues. For example, research shows that employers can assist workers in forming new identities conducive to acceptance and mastery of AI by providing narratives that focus on sensemaking and identity development (e.g., “we are on the advanced side of technology”) and can help reduce workers’ fear of, or aversion to, AI (Tong et al., 2021). Employers must also appropriately retool, retrain, and reskill workers (Brunn et al., 2020), so that they can interact with AI in ways that get them closer to their ideal work selves (Endacott, 2021). Offering social validation and a safe liminal space to restructure and enact new identities can also help sustain these efforts (Chen & Reay, 2021). Organizational leaders also need to be mindful of social relationships at work and beyond, as these will shape how people see themselves and evaluate how AI may remove or reconfigure social connections (Endacott, 2021).

As for the pace of AI implementation, identity research suggests that workers would benefit from paced replacement that is limited to particular tasks (ideally those not relevant to identity), rather than radical changes that affect aspects central to the job (Ashforth & Schinoff, 2016). Replacement will be faster when new technology can simply be “plugged in” and slower when new technology would demand a redesign of the work environment (Brynjolfsson & Mitchell, 2017). More research is needed to systematically compare workers’ outcomes across different kinds of AI implementation.

In conclusion, AI-related changes to work affect workers’ understanding of work, of themselves in relation to work, and of their social environment. As the use and capabilities of AI expand, workers, organizations, and broader society must manage these changes to enable workers to grow and develop toward satisfying and meaningful work selves.

Recommended Reading

Ashforth, B. E., & Schinoff, B. S. (2016). (See References). A useful starting point on work and identity that offers insights into identity processes in organizations, particularly in times of change and sensemaking.

Jha, S., & Topol, E. J. (2016). (See References). A discussion of how two clinicians must focus on their core and inimitable skills and shape their understandings of self in relation to work when adapting to the use of artificial intelligence (AI) that replaces and complements human tasks.

Nelson, A. J., & Irwin, J. (2014). (See References). A context-specific account of the dynamics of identity change among librarians as a form of technology (not AI in this case) serves to replace, complement, and generate new work within their occupation.

Strich, F., Mayer, A. S., & Fiedler, M. (2021). (See References). An in-depth empirical examination of how workers experience the implementation of an AI system and the mechanisms through which they both protect and strengthen their professional identities.

Walsh, T., Levy, N., Bell, G., Elliott, A., Maclaurin, J., Mareels, I., & Wood, F. (2019). (See References). A thoughtful, extensive, and accessible overview of the nature of AI technologies, the sectors to which they are being applied, and their wider implications for individuals, society, and work.