Abstract

Gaming is a domain of profound skill development. Players’ digital traces create data that track the development of skill from novice to expert levels. We argue that existing work, although promising, has yet to take advantage of the potential of game data for understanding skill acquisition, and that to realize this potential, future studies can use the fit of formal learning curves to individual data as a theoretical anchor. Learning-curve analysis allows learning rate, initial performance, and asymptotic performance to be separated out, and so can serve as a tool for reconciling the multiple factors that may affect learning. We review existing research on skill development using data from digital games, showing how such work can confirm, challenge, and extend existing claims about the psychology of expertise. Learning-curve analysis provides the foundation for direct experiments on the factors that affect skill development, which are necessary for a cross-domain cognitive theory of skill. We conclude by making recommendations for, and noting obstacles to, experimental studies of skill development in digital games.

Gaming is not only an industry with greater revenue than the global music and film industries combined, but also is a domain of profound skill development. Gamers exist in the millions and invest significant time in competing and improving at their favorite games. Automatic tracking shows that the average player of a successful game may play hundreds or thousands of hours, and the most dedicated gamers clock more than 10,000 hr (e.g., https://wol.gg/, https://wof.gg/, https://wastedondestiny.com/leaderboard). This is all the more impressive given that such tracking represents only time actively playing the game, not peripheral aspects of practice such as time thinking about or discussing the game or time spent on out-of-game training of components of play. Data from game play can be very rich, going beyond mere records of match outcomes or scores and including records of every action taken by a player during a game, so they have great potential for scientific research. A key benefit is that every record of play is a record of skill practice for subsequent play.

Compared with novices, experts anticipate better, react faster, organize behavioral sequences and strategies differently (Ericsson et al., 2018), and even show different neural responses to problems in their domain of expertise (Bilalić, 2017). Comparing individuals of different skill levels has provided a good understanding of the underlying cognitive mechanisms that distinguish experts from novices. For example, de Groot’s classic work on chess (de Groot, 1965) demonstrated the differences in memory that distinguish expert chess performance. However, such cross-sectional analysis leaves a missing link: a full account of how expert behavior develops from initial practice, including which factors maximize final expert-level performance.

The Learning Curve

Practice is the fundamental factor determining skill and expertise. All studies of skill, regardless of whether they investigate alternative factors, must begin with an account of the effect of practice. There is a lawful relationship between the amount of practice and performance: Learning is initially rapid and slows as it progresses. This is called the learning curve. This canonical pattern of diminishing returns from practice holds for video games, as demonstrated by an analysis of the longitudinal performance measures of more than 45,000 players of Axon, a simple game that nonetheless requires core cognitive functions of rapid perception, decision making, and action implementation (Stafford & Dewar, 2014).

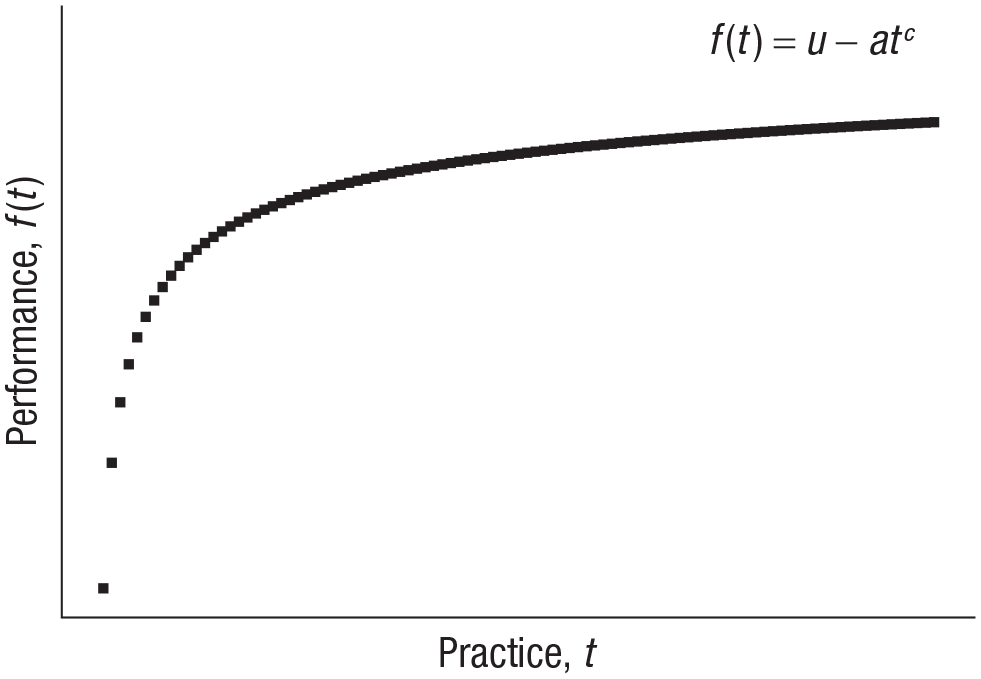

The relationship between practice and performance can be characterized mathematically. Figure 1 shows a power-law formulation, which has a long history in the study of skill, but the function that best characterizes this relationship has been contested, in debates over the number of free parameters and whether exponential or power-law functions provide the best fit (Evans et al., 2018; Heathcote et al., 2000; Steyvers & Benjamin, 2019). On OSF (https://osf.io/fvm8s/), we have provided code for implementing a simple learning curve and fitting it to observed data. Note that nonlinear curve fitting is an operation of some delicacy. In this article, we do not attempt to present a comprehensive treatment or best practice regarding learning curves (which can have multiple forms) or curve fitting. Instead, we wish to illustrate the in-principle use of a learning-curve function. Our claim here is merely that fitting a standard curve can serve as a valuable theoretical anchor: Because it allows extraction of separate parameters for the learning rate, initial performance, and asymptotic performance, curve fitting makes it possible to see the impact of different factors on different aspects of skill acquisition, and if done in multiple studies, it will enhance the comparability of results. Furthermore, digital games provide exactly the longitudinal, high-sample-rate data from a large and diverse sample population that can arbitrate questions about the best form of the learning curve (e.g., Steyvers & Benjamin’s, 2019, analysis was based on data from 54 million plays of a gamified brain-training platform).

Fig. 1. The learning curve as a theoretical anchor for studies of skill acquisition in games. This figure shows a simple, three-parameter, power-law learning curve: Performance, f(t), is a function of practice, t; an upper limit, u; the learning gain, a, which defines how far initial performance is from the upper limit; and the learning rate, c. The notation follows Steyvers and Benjamin (2019). Code for implementing this learning-curve function, and fitting it to data, is available on OSF, at https://osf.io/fvm8s/.
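As a rough illustration of how the three parameters can be separated, the sketch below fits a power-law curve to one simulated player. It is not the OSF code. The functional form f(t) = u - a * t^(-c) is an assumption chosen to match the verbal description of the curve (initial performance at t = 1 is u - a, and performance approaches u with practice), and the function names, the grid over c, and the simulated data are all hypothetical. Because the model is linear in u and a once c is fixed, the sketch solves for u and a in closed form and searches c over a grid, which sidesteps some of the delicacy of general nonlinear optimization at the cost of a coarse resolution for c.

```python
import random


def power_law(t, u, a, c):
    """Assumed curve: performance rises from u - a (at t = 1) toward u."""
    return u - a * t ** (-c)


def fit_power_law(scores, c_grid=None):
    """Least-squares fit of u, a, and c to scores from games 1..n.

    For each candidate c, x = t^(-c) makes the model a straight line in x,
    so u and a follow from ordinary least squares. The c with the smallest
    squared error is kept.
    """
    if c_grid is None:
        c_grid = [i / 100 for i in range(1, 301)]  # 0.01 to 3.00 (assumed range)
    n = len(scores)
    mean_y = sum(scores) / n
    best = None
    for c in c_grid:
        x = [t ** (-c) for t in range(1, n + 1)]
        mean_x = sum(x) / n
        sxx = sum((xi - mean_x) ** 2 for xi in x)
        sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, scores))
        slope = sxy / sxx              # slope of score on t^(-c), equal to -a
        u = mean_y - slope * mean_x    # intercept, equal to the upper limit u
        sse = sum((yi - (u + slope * xi)) ** 2 for xi, yi in zip(x, scores))
        if best is None or sse < best["sse"]:
            best = {"u": u, "a": -slope, "c": c, "sse": sse}
    return best


# Simulated (not real) player: 200 games, Gaussian noise around the curve.
rng = random.Random(1)
scores = [power_law(t, 100.0, 60.0, 0.5) + rng.gauss(0, 2) for t in range(1, 201)]
params = fit_power_law(scores)
print(params)
```

Running the same function once per player yields one learning rate, one learning gain, and one upper limit per individual, which can then be related to other factors.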

Understanding of practice will be enhanced by inspecting the influence of different styles of practice on the learning curve, as mere repetition is not sufficient to develop expertise. According to the deliberate-practice account, experts must engage in extensive practice, while focusing on skill subcomponents, escalating challenge, and using immediate and detailed performance feedback (Ericsson et al., 1993). Deliberate practice explains a large amount of individual variation in measures of performance but is not the only factor that influences skill acquisition (Macnamara & Maitra, 2019). This opens the question of how other aspects of practice can be related to skill acquisition, a question that game data are well positioned to help answer.

Intraindividual Factors: The Nature of Practice

For the individual player, a major question about factors affecting skill acquisition is how to maximize gains from practice: how to learn most quickly and how to reach the highest performance level.

Various practice behaviors have been shown to change the shape of the learning curve. These behaviors range from taking breaks, to exploring the environment, to playing the game with other people. One effect that has been robustly established in the lab is that a given amount of spaced practice, compared with the same amount of massed practice, generates superior retention and/or performance. Games have afforded the opportunity to confirm that this phenomenon holds true over longer time scales and with larger sample sizes than in most lab studies (Huang et al., 2017; Stafford & Dewar, 2014; Stafford et al., 2017; Stafford & Haasnoot, 2017).

Analysis of game data does not always confirm experimental findings, however. For example, a test of sleep consolidation (i.e., greater improvement in performance after a practice-test interval filled with sleep, compared with an equivalent interval without sleep) revealed no evidence for this effect in game data (Stafford & Haasnoot, 2017), although it is unclear if this was due to the relative simplicity of the game studied, to participants being able to self-pace their practice (e.g., to sleep only when they had reached the limit for performance gains for the day), or to other factors.

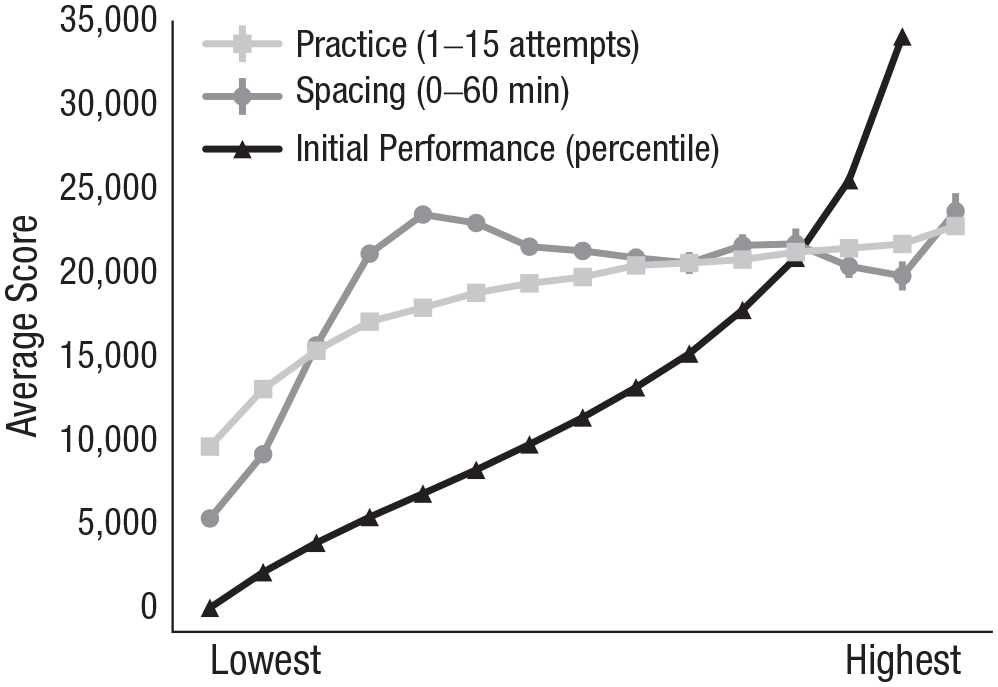

This study of sleep consolidation in game play also showcases another benefit of game data: High density of measurements per individual and a large sample size allow effects to be presented in terms of continuous parameters, rather than as binary comparisons, as Figure 2 illustrates.

Fig. 2. Parametric comparison of factors affecting performance. Average performance level is shown across the observed ranges of three factors: amount of practice, spacing between early and later games, and level of initial performance. Note that each factor has a different range, defined by observations in the sample population, so the x-axis is scaled from low to high. Each factor is positively related to performance, but the shapes of the parametric curves are very different. Adapted from “Testing Sleep Consolidation in Skill Learning: A Field Study Using an Online Game,” by T. Stafford and E. Haasnoot, 2017, Topics in Cognitive Science, 9(2), Fig. 4 (https://doi.org/10.1111/tops.12232). Copyright 2016 by the Cognitive Science Society.
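One simple way to produce a parametric curve of this kind is to sort players by a factor, split them into equal-sized groups from low to high, and average performance within each group. The sketch below illustrates that idea only. It is not the procedure used by Stafford and Haasnoot (2017), and the function name, the number of bins, and the example values are hypothetical.

```python
from statistics import mean


def binned_means(factor_values, performance, n_bins=5):
    """Average performance within equal-count bins of a factor.

    Returns a list of (mean factor value, mean performance) pairs ordered
    from the lowest bin to the highest, so different factors can be plotted
    on a common low-to-high axis.
    """
    pairs = sorted(zip(factor_values, performance))
    n = len(pairs)
    curve = []
    for b in range(n_bins):
        chunk = pairs[b * n // n_bins:(b + 1) * n // n_bins]
        if chunk:  # skip empty bins when there are fewer players than bins
            curve.append((mean([x for x, _ in chunk]),
                          mean([y for _, y in chunk])))
    return curve


# Made-up data: hours of practice and a performance score for eight players.
practice_hours = [2, 5, 9, 14, 20, 31, 45, 60]
score = [110, 150, 170, 210, 205, 260, 270, 300]
for factor_level, avg_score in binned_means(practice_hours, score, n_bins=4):
    print(factor_level, avg_score)
```

Equal-count bins keep the number of players per point constant, which is one reasonable choice when a factor is heavily skewed; equal-width bins would be an alternative.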

To maximize learning outcomes, players need to focus on actions that have been previously effective; however, to know which strategies and decisions are effective, they need to explore the environment. Thus, self-directed game play provides an example of the explore-exploit trade-off (i.e., exploitation of the action judged most immediately rewarding must be balanced against longer-term investment in investigating alternative actions; Mehlhorn et al., 2015). In one study (Stafford et al., 2017), players who explored more, as assessed by various measures of in-game behavior, had higher initial performance on average, but they did not have faster learning rates. Thus, this study did not confirm the prediction that early exploration affects longer-term performance. Exploring the space of possible social styles of play may be an exception to this pattern of a null effect on learning rate, as demonstrated by two studies that used different operationalizations of in-game social behavior. In one study (Landfried et al., 2019), more consistent teammate selection (low exploration) was associated with faster learning, and in the other (Stafford et al., 2017), higher assist rate (a measure of cooperative play within a selected team, and so higher exploration in social space) was associated with slower learning.

Observational studies allow analyses of rich behavioral data from large samples, covering timescales that are difficult to access in lab studies. They demonstrate the influence of learner-determined spaced practice, social play, and exploration in a high-motivation, nonarbitrary skill environment. However, because they do not use random assignment to test effects, they leave unanswered the question of whether forcing players to adopt a particular practice style would generate the same changes in skill acquisition.

Interindividual Factors

When one considers a population of players, analysis naturally turns to a wider space of factors that might underlie expertise, including factors that are fixed with respect to the individual but may vary between individuals.

“Talent” is commonly attributed to players who start at a relatively high level of performance and/or progress to high performance rapidly. This label obscures the underlying factors, which might include basic physiology, prior experience, superior transfer from one skill to another, or superior cognitive capacity to learn or generate insights.

Learning rate and initial ability are not independent factors. Players whose initial performance is higher may learn faster (Stafford & Dewar, 2014). Aung et al. (2018) analyzed data from 313,184 players of League of Legends and found that the learning rate on the first 10 games of 2016 predicted final performance a year (and at least 150 games) later.
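To make the logic of relating early learning to later performance concrete, the sketch below estimates a crude early learning rate for each player (the least-squares slope of score over the first games) and correlates it with a later performance score. This is an illustration only and not the analysis reported by Aung et al. (2018). The slope-based definition of learning rate, the function names, and the player records are all assumptions.

```python
def ols_slope(ys):
    """Least-squares slope of ys against game number 1..n."""
    n = len(ys)
    mean_x = (n + 1) / 2
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in range(1, n + 1))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(range(1, n + 1), ys))
    return sxy / sxx


def pearson_r(a, b):
    """Pearson correlation between two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


# Made-up records: scores on the first five games and a later score.
players = [
    {"early": [10, 12, 15, 17, 20], "later": 80},
    {"early": [11, 11, 12, 13, 13], "later": 55},
    {"early": [9, 13, 16, 20, 24], "later": 90},
    {"early": [12, 12, 13, 13, 14], "later": 60},
]
early_rates = [ols_slope(p["early"]) for p in players]
later_scores = [p["later"] for p in players]
print(pearson_r(early_rates, later_scores))
```

A linear slope over a handful of games is a noisy stand-in for the learning-rate parameter of a fitted curve, so with real data a curve fit per player would be the more principled estimate.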

Progress on understanding components of talent will come from out-of-game measures, such as independent measures of cognitive ability. Kokkinakis et al. (2017) showed that fluid intelligence (abstract-reasoning ability that is independent of general knowledge) correlates positively with rank in League of Legends, although Röhlcke et al. (2018) failed to find this relation using data from a similar game. Reasons for such divergent results could include different outcome measures, such as categorical rank versus a numerical measure of overall performance, as well as how complex analyses account for control variables.

Collecting additional measures, whether demographic or cognitive, has great potential to augment analysis of game data (but requires extra effort and entails additional concerns with respect to players’ consent and the risks associated with data storage).

An example is analysis of the relation between age and skill development. Players’ age has been shown to be a consistent predictor of performance in games; older players reach lower levels of performance (Kokkinakis et al., 2017; Röhlcke et al., 2018) and can be more likely than younger players to quit when experiencing difficulties (Steyvers & Benjamin, 2019).

Age not only influences the interplay between skill and practice but also can be used to investigate the development of expertise across the life span. Investigating how skill changes with age allows researchers to identify ranges of peak performance, declines that occur in later life, and factors that underlie changes in performance across a lifetime. For example, looking at age-related changes in a speed-based measure of performance playing StarCraft 2, a real-time strategy game, Thompson et al. (2014) showed that the peak of performance is around the age of 24. This peak is close to what has been found for speed and power sports, such as basketball (28 years; Vaci, Cocić, et al., 2019), and is in contrast with peaks identified in cognitive-based domains, such as chess (36 years; Strittmatter et al., 2020). After reaching the peak of gaming performance in the mid-20s, players’ skill declines, which is likely partly due to slowing in response times, yet this decline does not seem to be dependent on players’ level of knowledge (Thompson et al., 2014). However, knowledge and expertise do seem to alleviate age-related declines in the case of board games, for which performance depends more on strategic and tactical thinking (Vaci et al., 2015). Focus on age-related changes in gaming performance might unearth other factors relevant to the development of expertise, shining light on complex interactions of inter- and intraindividual factors. For example, the interaction between initial ability and practice changes throughout chess players’ careers; whereas the effect of practice is the strongest at the beginning of a career, initial ability has the strongest relation to performance at the peak and later stages of a career (Vaci, Edelsbrunner, et al., 2019).

Toward a Cognitive Account of Skill Acquisition

The study of skill in gamers has offered promising early results and exciting prospects for future work. However, tantalizing results do not add up to a comprehensive theoretical account. As one author observed, “Cognitive skill acquisition awaits its Newton” (Ohlsson, 2008, p. 388). Researchers gather observations on patterns of learning, but real progress will come with testing theories of the cognitive mechanisms that allow individuals to acquire skills. Gobet (2017) made the case that progress in this area will require computational accounts of all aspects of task performance. Although we cannot hope to even sketch such a comprehensive theory here, we believe that learning-curve analysis—including formal modeling of individual learning curves—is a necessary step and will allow research on digital games to contribute to the wider literature on skill development. We wish to highlight some theoretical and methodological challenges that will need to be overcome on the way to such a theory.

Although learning curves are typically portrayed as smooth, this is a simplification. In addition to extraneous noise, endogenous processes within skill development—for example, restructuring of a skill’s subcomponents—can interrupt smooth progression. Gray and Lindstedt (2017) have highlighted the importance of attending to plateaus, dips, and leaps in the learning curve, and have proposed a method for identifying these discontinuities (see also Donner & Hardy, 2015). Note that this framework puts attention on the progress of the individual learner, rather than utilizing the power of large samples to extract a stable average learning curve.
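A very simple way to start looking for such discontinuities in one player's record is to average scores over successive blocks of games and label the change from one block to the next. The sketch below does only that. It is not the method proposed by Gray and Lindstedt (2017), and the block size, the thresholds (in raw score units), and the labels are illustrative assumptions that would need calibrating for any real game.

```python
from statistics import mean


def label_segments(scores, block=10, flat=1.0, jump=5.0):
    """Label each block-to-block change in mean score.

    "leap":    mean rises by at least `jump`
    "dip":     mean falls by at least `flat`
    "plateau": mean changes by less than `flat` in either direction
    "gain":    any other (moderate) rise
    A trailing incomplete block is ignored.
    """
    starts = range(0, len(scores) - block + 1, block)
    block_means = [mean(scores[i:i + block]) for i in starts]
    labels = []
    for previous, current in zip(block_means, block_means[1:]):
        delta = current - previous
        if delta >= jump:
            labels.append("leap")
        elif delta <= -flat:
            labels.append("dip")
        elif abs(delta) < flat:
            labels.append("plateau")
        else:
            labels.append("gain")
    return labels


# Made-up record: flat start, a dip, then a sudden improvement.
record = [10] * 20 + [8] * 10 + [20] * 10 + [22] * 10
print(label_segments(record))
```

Fixed thresholds ignore noise in the block means, so a more careful treatment would compare each change with the variability of scores within blocks.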

The restructuring of component skills that occurs as part of skill development means that the factors that best predict superior performance may vary across levels of expertise (Thompson et al., 2013). As players’ expertise grows, they become more similar to one another in their skill components, and this consistency removes the variation that would allow researchers to identify the components’ importance to performance. This, again, underscores the importance of tracing the learning curves of individuals across their histories of skill acquisition.

Implications for the Study of Expertise in Games

We suggest that future research on skill acquisition will require attention to the details of individuals’ learning curves, not just large sample sizes. Fitting a learning curve to an individual’s data creates a simple summary statistic, the learning rate. This allows direct measurement of the rate of skill acquisition and analysis of how different factors affect it. In addition, learning curves typically involve a parameter for the asymptotic value, which can also be a key statistic for analysis, allowing the prediction of eventual level of skill from early performance.

Given that much of the work using games to study skill acquisition is inspired by experimental studies, it is perhaps surprising that it comprises so few direct experiments (but see Johanson et al., 2019; Piller et al., 2020). Part of the challenge of using real games in experiments is that one needs either to be a game designer, with the necessary technical and creative abilities, or to recruit the help of a game designer. Another challenge is that the decision to design an experimental game immediately raises the question of which game and which properties it should have. A game for investigating skill acquisition should have the following properties:

Entertainment value: Games offer the chance to study skill acquisition under conditions of intrinsic motivation (Baldassarre et al., 2014), but making enjoyable games is very difficult. By definition, if researchers could design games that people wanted to play, they could be game designers. The ideal game for research would make it possible to recruit participants easily because they would want to play (perhaps they would even pay the researchers!).

Challenge: Difficulty is a key game feature and a key variable for skill acquisition. The ideal game for research would have one or more mechanisms for adapting difficulty during training (as well as a standardized difficulty level for testing performance).

A single performance measure: Analysis of performance is best supported when there is a single scalar metric that defines level of performance and that players themselves are aiming to maximize. Many games have the advantage that they provide a final score or clear victory conditions (e.g., chess), but it is not certain that players universally play to maximize these. Complex games may have a variety of in-game outcomes that players may seek to balance (e.g., in League of Legends, players seek high rank, but may try to optimize intermediate measures such as kill-to-death ratio or gold per minute; see Vardal et al., 2022, for an account of why this matters).

A benchmarked performance measure: Measures of memory, for example, have floors (0% success) and ceilings (optimal performance, 100% success). Although many games have scores, only a subset have definitions of optimal performance or standards against which moves can be gauged. The ideal game for research would allow performance to be analyzed for how close to optimal it was, or how far from chance.
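When both a chance level and an optimal level are known, a raw score can be rescaled so that 0 means chance and 1 means optimal. The sketch below shows this linear rescaling. The function name and the example numbers are hypothetical, and the linear form is one common choice and not a prescription from the studies cited here.

```python
def benchmark_score(score, chance, optimal):
    """Rescale a raw score so that chance maps to 0 and optimal maps to 1.

    Values below 0 indicate worse-than-chance play; values above 1 should
    not occur if `optimal` is a true ceiling.
    """
    if optimal == chance:
        raise ValueError("optimal and chance must differ")
    return (score - chance) / (optimal - chance)


# Made-up example: 75% correct on a task where guessing gives 50%.
print(benchmark_score(0.75, chance=0.5, optimal=1.0))
```

Scores rescaled this way are comparable across games with different raw scales, which helps when results from several studies are set side by side.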

Identifiable and quantifiable cognitive components: The ideal game for research would be clearly characterized in terms of what component processes it relies on for performance, thereby allowing eventual expert performance to be analyzed for how and when the components are optimized. Identifiable components would need to be measured or ranked in terms of quality. Strittmatter et al. (2020) have provided a notable example; they used an engine with superhuman performance ability to provide a move-by-move analysis of chess performance. This approach converts chess, which has a clear overall performance measure (win, draw, or loss) into a game in which each move could be analyzed for optimality.

Inclusion of out-of-game measures: Availability of out-of-game measures is desirable in investigations of skill acquisition. Kokkinakis et al. (2017) provided a model for inclusion of such measures, showing how psychometric measures (e.g., of fluid intelligence) can contribute to the prediction of game performance.

Even when experiments are well designed and researchers take advantage of the principled foundation provided by fitting learning curves, the analysis of skill development in digital games presents numerous complexities and limitations. Players may come to games with different (and unclear) backgrounds, which affect transfer learning and create heterogeneity in players’ cognitive abilities and strategic approach to practice. Different players may have different motivations, which can affect their retention of learning and their rate of acquisition, as well as which aspects of game performance they are trying to maximize (e.g., some players may only want to win, whereas others may play to socialize and care less about winning). These heterogeneities will affect generalization of findings to non-game-playing populations and nongame domains.

So far, games research has taken inspiration from the psychological science of skill acquisition, offering promising confirmation, qualifications, and extensions of existing results. We have argued that the potential of games for understanding human skill acquisition cannot be met without more experimental studies, and studies that test multiple factors concurrently. We have suggested using the learning curve as an anchor for more theoretically comprehensive studies: It has a mathematical characterization, should be analyzed at the level of the individual, and can be a tool for examining different effects. We look forward to the time when inspiration flows both ways, and the study of skill acquisition in games inspires the wider psychological science of skill acquisition in all domains.

Recommended Reading

Gobet, F. (2017). (See References). Reflects on a special issue of Topics in Cognitive Science devoted to analysis of game data, evaluating contributions in light of Allen Newell’s program for progress in psychological theory.

Gray, W. D., & Lindstedt, J. K. (2017). (See References). Presents a framework for understanding the importance of discontinuities in the learning curve, showing how they can provide important insights into skill acquisition.

Stafford, T., & Haasnoot, E. (2017). (See References). Shows how game data can be used to compare multiple factors’ patterns of influence on performance.

Strittmatter, A., Sunde, U., & Zegners, D. (2020). (See References). Uses move-by-move data and a high-level chess engine to evaluate typical move quality in tournament chess, thereby creating a de facto optimality analysis of the game and showing an increase in move quality over historical time.

Vaci, N., Edelsbrunner, P., Stern, E., Neubauer, A., Bilalić, M., & Grabner, R. H. (2019). (See References). Shows how individual differences in numerical abilities change the relationship between practice and chess performance over the course of players’ careers.