Abstract

Humans tend to anticipate events when they synchronize their actions with sound (such as when they clap to music), which has puzzled scientists for decades. What accounts for this anticipation? We review two theoretical mechanisms for synchrony: predictive coding and dynamical systems. Both theories are grounded in neural activation patterns, but there are important distinctions. We contrast their assumptions, their computations, and their musical applications to anticipatory synchronization.

Some of the most intricate temporal sequences that humans produce are the sounds they use to communicate, including speech and music. People precisely coordinate their movements with sound; speakers prepare for their turn to talk, and musicians time their tones to synchronize or align with sound. Even young toddlers spontaneously drum or sway in response to auditory rhythms, which suggests that humans are predisposed to synchronize movement with sound. Because movement preparation is often slower (100–250 ms) than the rate of musical events (up to 8–10 tones/s), individuals must anticipate future musical events in order to prepare their movements. A striking feature of musical synchronization is the tendency people show to produce musical events prior to the sound with which they intend to synchronize; musicians produce tones about 30 to 50 ms sooner than a regular auditory beat, and nonmusicians anticipate even sooner (50–80 ms before the beat; Repp & Siu, 2015). This behavior is referred to as anticipatory synchronization, a cornerstone of human synchrony.

Several key variables influence individuals’ ability to synchronize actions with musical sound. One is musical training, which reduces the anticipatory synchrony, defined as negative mean asynchrony (when individuals’ produced actions precede stimulus onsets, such as when they clap to music and their claps precede the onsets of the musical tones). Another factor is sensory feedback; the more feedback available from self-generated and external auditory outcomes, the smaller the asynchrony (Repp & Siu, 2015). A third factor is the predictability of auditory sequences; the more regular a sequence, the smaller the observed variability in asynchrony. Measures of the mean and variance of asynchrony are used by synchronization models in different ways, as we discuss below.
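As a concrete illustration of these two measures, the following minimal sketch computes signed asynchronies (tap time minus stimulus onset time) and summarizes them by their mean and standard deviation. The tap and onset times are hypothetical values chosen for illustration, not data from any cited study, and the function name `asynchrony_stats` is our own.

```python
import statistics


def asynchrony_stats(tap_times_ms, onset_times_ms):
    """Return (mean, sd) of signed asynchronies, tap minus onset, in ms.

    A negative mean indicates that taps precede stimulus onsets on average
    (negative mean asynchrony); the sd indexes variability in asynchrony.
    """
    if len(tap_times_ms) != len(onset_times_ms):
        raise ValueError("each tap must be paired with one stimulus onset")
    asynchronies = [tap - onset for tap, onset in zip(tap_times_ms, onset_times_ms)]
    return statistics.mean(asynchronies), statistics.stdev(asynchronies)


# Hypothetical data: a 500-ms metronome and taps that anticipate each beat.
onsets = [0, 500, 1000, 1500, 2000]
taps = [-40, 455, 965, 1450, 1970]

mean_async, sd_async = asynchrony_stats(taps, onsets)
print(mean_async, round(sd_async, 2))  # -40 7.91
```

In this example the mean of -40 ms reflects anticipation, whereas the standard deviation of about 8 ms reflects how consistently the taps were placed relative to the beat.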

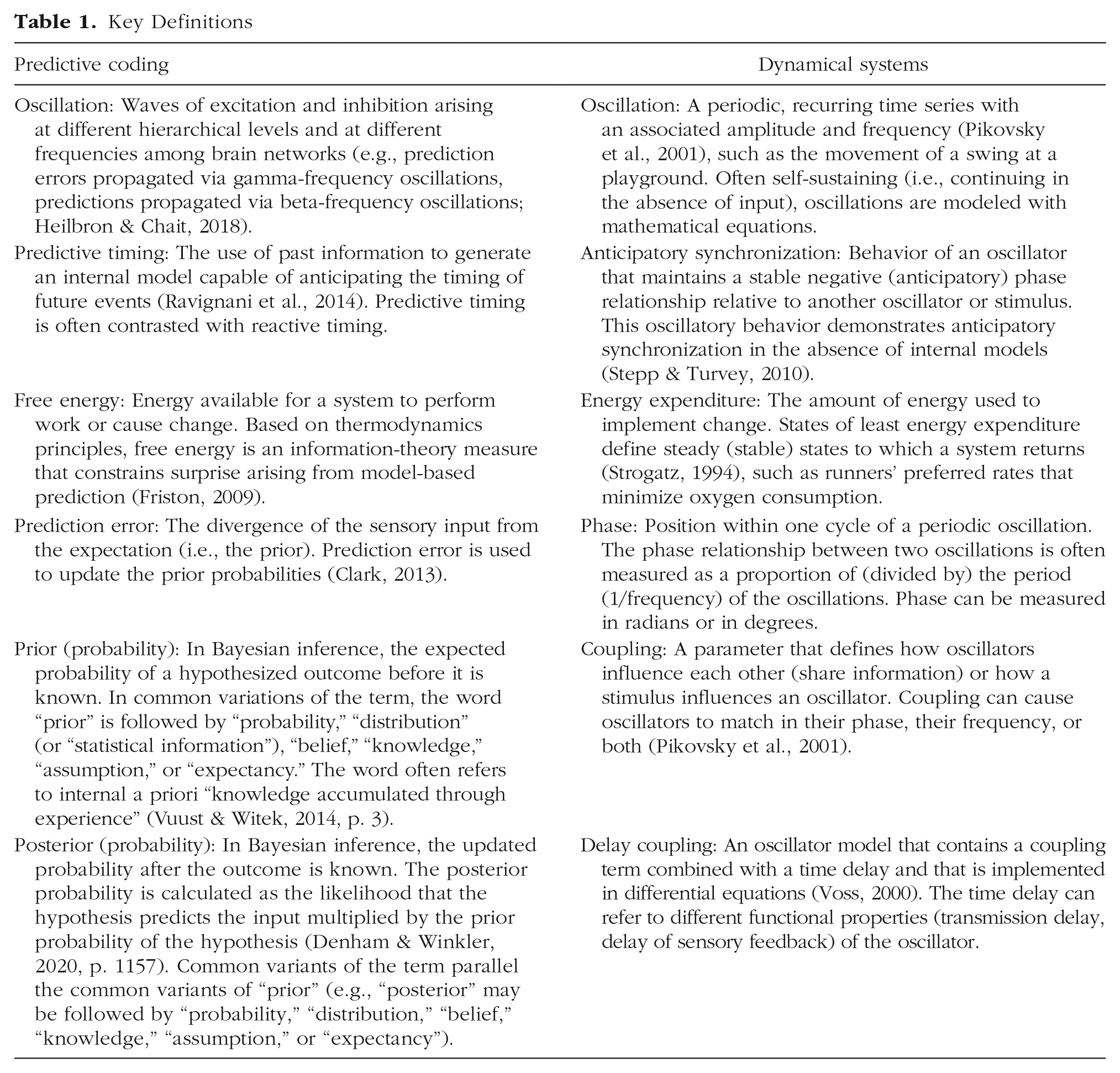

Two prominent theories offer relevant mechanisms for musical synchronization. One is predictive coding (PC), and the other is dynamical systems (DS). We compare these theories as applied to anticipatory synchronization and discuss their assumptions, computations, and limitations (see Table 1 for a summary of key definitions).

Key Definitions

Predictive Coding

Origins

Early origins of the concept of predictive coding have been traced back to Helmholtz, whose account of learning was based on hierarchical layers of representation (as described in Friston, 2009). More recent precursors to predictive coding include internal models of motor commands (Wolpert et al., 1995) and mental simulations of partners’ joint actions (Sebanz & Knoblich, 2009), according to which internal modeling of future events is based on representations of the mapping between one’s perceptions and actions (Clark, 2013). Internal models of motor control predict the sensory consequences of actions by simulating behavior and compensating for time delays in sensorimotor systems (Wolpert & Flanagan, 2001). Internal models have been applied to poor-pitch singing through comparison of emulations of vocal-fold tension with auditory feedback from resulting pitches (Pfordresher & Mantell, 2014). Extensions to interpersonal, or joint, action propose that a partner’s actions are simulated through the use of internal models to generate predictions for upcoming events (Sebanz & Knoblich, 2009). A musical application of joint action combines simulation of actions for oneself and for one’s partners, as well as imagining sounds produced by partners, with error correction (van der Steen & Keller, 2013).

Recent PC models compute the difference between an internal model and perceived events with a goal to minimize the entropy (the inverse of predictability) of predictions relative to future observed outcomes (Friston, 2009, 2018). Influenced by neurophysiological evidence of cascading processes among hierarchical levels in the visual system (Rao & Ballard, 1999), recent PC models rely on Bayesian inference to update internal models and have been applied to explain binocular rivalry (i.e., alternating perception of different images presented to the two eyes) and bistable visual percepts (i.e., percepts with two stable states; see Clark, 2013, for a review). Applications of PC models to auditory perception have recently been assessed (Denham & Winkler, 2020; Heilbron & Chait, 2018).

Assumptions

One key assumption of this approach is that an internal model is used to predict future behavior. Comparison of perceptual input with the prediction generates a difference (prediction error) that is used to adjust the internal model and then discarded. There is no need to store the original perceptual input or the prediction error, and thus the required representation is parsimonious. A second assumption is a hierarchical organization of brain networks in which lower areas receive sensory input that is projected to higher cortical areas. A hierarchy is formed by forward excitatory connections from sensory areas to association areas and by backward inhibitory connections downward, as well as by lateral interactions between units within layers. The hierarchical system permits the computation of prediction error at one level while the internal model is updated at another level; the computations occur in a cascaded fashion. Although the temporal properties of this cascade of forward, backward, and lateral connections are not well specified, the spatial projections are proposed to include the auditory network from brain stem to cortex (Koelsch et al., 2019).

Computations

To create accurate predictions of future states, PC models minimize prediction error over iterations, on the basis of Bayesian inference. The bottom layer of the hierarchy contains error units that sense the incoming information and compute the prediction error, which is sent to higher layers. The top layer encodes the prior probabilities, that is, the likelihoods that given sensory inputs correspond to predicted states. This is the internal model. State units in the top layer (actively) generate the prediction signal and send it downward to the error units. In addition, units within a level are connected laterally. Error units and state units have bidirectional signals: Forward connections convey prediction error to state units, and reciprocal backward connections send the predictions to error units. These bottom-up and top-down messages serve to minimize the use of free energy (Friston, 2009). Minimizing free energy corresponds to maximizing the probability that the sensory input matches the predicted outcome, given the internal model. Over successive iterations, the state units’ prior probabilities (existing beliefs before new evidence is introduced) become closer to the error units’ posterior probabilities (i.e., updated beliefs after new evidence is introduced). Eventually, when prior probabilities match posterior probabilities, the internal model is stable.
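The iterative logic can be sketched with a deliberately simplified, single-level example. It assumes Gaussian beliefs and is not the hierarchical free-energy scheme itself: a state unit holds a belief about the interval between tones, an error unit computes the prediction error for each new interval, and the belief is updated by Bayes' rule. All names and numerical values are hypothetical.

```python
def update_belief(prior_mean, prior_var, observation, obs_var):
    """One Gaussian Bayesian update of a predicted interval (ms).

    Returns the posterior mean, posterior variance, and the prediction error.
    """
    error = observation - prior_mean            # prediction error (error unit)
    gain = prior_var / (prior_var + obs_var)    # weight given to the error
    post_mean = prior_mean + gain * error       # updated prediction (state unit)
    post_var = prior_var * obs_var / (prior_var + obs_var)
    return post_mean, post_var, error


# Hypothetical prior: the listener expects 600-ms intervals (variance 400 ms^2).
mean, var = 600.0, 400.0
errors = []

# The stimulus actually presents 500-ms intervals (assumed sensory variance 100 ms^2).
for observed_interval in [500.0] * 10:
    mean, var, err = update_belief(mean, var, observed_interval, obs_var=100.0)
    errors.append(abs(err))

# The absolute prediction error shrinks on every iteration, and the belief
# settles near the true interval as prior and posterior converge.
print([round(e, 1) for e in errors[:3]], round(mean, 1))
```

Because each posterior serves as the prior for the next observation, successive updates change the belief less and less, which corresponds to the stable internal model described above.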

Applications to music

Vuust and Witek (2014) proposed a PC model to explain perception of musical syncopation. Syncopation, the occurrence of musical tones in unexpected metrical locations, is typical of groove music and often causes listeners to move, sway, or dance. Musical meter, a hierarchy of regular pulses that form patterns of strong and weak beats, provides the prior experience that tones will occur more often on strong beats. A listener’s internal model of meter generates predictions that include the movements needed to produce the syncopated rhythm, while suppressing the listener’s tendency toward overt action. This suppressed tendency to move arises from predictions of when the beat is expected to occur (see Koelsch et al., 2019, for related neurophysiological evidence). Prediction errors have two important parameters: a mean value and a variance associated with the mean. Vuust et al. (2018) observed that high amounts of syncopation are associated with high variance in prediction error (low precision). The more precise the prediction error, the more impact that error is expected to have. Only prediction errors associated with small variances cause the higher-level predictions to be adjusted; prediction errors with large variances are ignored (Vuust et al., 2018), a claim that is testable in synchronization tasks.
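The role of precision can be made explicit with a standard Gaussian simplification, which is an assumption of this sketch rather than the formulation used in the cited studies. Let x be an observed onset time, μ_prior the predicted onset time, and π = 1/σ² the precision (inverse variance) of each quantity; the symbols are ours.

```latex
\varepsilon = x - \mu_{\text{prior}}, \qquad
\mu_{\text{post}} = \mu_{\text{prior}}
  + \frac{\pi_{\varepsilon}}{\pi_{\varepsilon} + \pi_{\text{prior}}}\,\varepsilon, \qquad
\pi = \frac{1}{\sigma^{2}}
```

As the variance of the prediction error grows (π_ε approaches 0), the weight on the error approaches 0 and the prediction is left almost unchanged; as the variance shrinks, the weight approaches 1 and the prediction moves nearly all the way to the observation. Under this simplification the down-weighting of imprecise errors is graded rather than all-or-none.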

Two studies have tested PC models of anticipatory synchronization. The first study (Heggli, Konvalinka, et al., 2019) tested dyadic partners’ internal models as they synchronized with each other while they tapped identical duration patterns. Each partner heard over headphones a metrical context that was either the same one their partner heard (which created similar shared internal models with matched top-down predictions) or a different one (which created dissimilar internal models with unmatched top-down predictions). These matched and mismatched metrical contexts manipulated the top-down prior beliefs for when the partners’ taps should occur. Some of the contexts created polyrhythms (i.e., when participants tapped three times to every four context beats) that could be perceived as ambiguous. Small mean negative asynchronies were observed on average. Asynchronies became more variable when the two partners’ metrical contexts differed (although partners recovered quickly within trials), which was interpreted as evidence for prediction errors resulting from the discrepancy in the partners’ different internal models.

The second study that tested PC models of anticipatory synchronization (Elliott et al., 2014) involved individual participants who were presented with two auditory cues that created ambiguous percepts with which they tried to synchronize. The two stimulus cues were altered in their asynchrony (relative phase) and temporal regularity across trials. Participants’ large mean negative asynchronies decreased (i.e., moved toward 0) as the two cues’ relative phase increased (i.e., diverged from 0). Participants used different strategies depending on the cues’ relative phase: They synchronized to the integrated (combined) cues when the cues’ phase difference was small, but they synchronized with one cue or the other when the phase difference was large. Models fitted to the asynchrony variabilities provided support for a Bayesian causal inference model with four free parameters that captured prior probabilities associated with the auditory cues; prediction errors indicated that participants attempted to minimize the variance in asynchrony and extract a temporally regular beat.

Remaining issues

PC applications to musical synchrony to date have targeted ambiguous percepts in order to demonstrate the internal model’s impact. One open question is whether PC models can predict anticipatory synchrony (captured by mean behavior). Another is whether an internal model can change only in the presence of prediction error (when contextual information is available), or if it will also change in the absence of novel information. A final question is, what are the time costs of implementing a hierarchy that relies on several steps between layers for prediction error to be computed and sent upward and for prediction signals to be adjusted and sent downward?

Dynamical Systems

Origins

DS theories, which explain how systems change over time, have their origins in analysis of physical synchrony among pendulum clocks (Huygens, 1665, described in Pikovsky et al., 2001) as well as biological synchrony in circadian rhythms, cardiac rhythms, and motor coordination (Haken et al., 1985; Winfree, 1967). Synchronization arises when oscillators (alternating waveforms that repeat) that are self-sustaining (continue in the absence of external input) share information via coupling, which causes them to adapt to each other or to a stimulus (see Table 1). Their self-sustaining nature is supported by neural (magnetoencephalographic) representations of musical pulse that develop at a beat frequency despite the absence of stimulus energy at that frequency (Tal et al., 2017). When an oscillator is momentarily disturbed by input, it soon returns to its original frequency, its stable state of minimum energy expenditure. An oscillator will resonate (respond with increased amplitude) to a stimulus when its natural frequency is close to the stimulus frequency or when the coupling between oscillator and stimulus is high. Linear oscillators synchronize 1:1 with stimulus events; nonlinear oscillators additionally synchronize at higher resonances (e.g., 1:2; 1:3). The perception of hierarchical meter thus arises from higher-order resonances; internal models are not required (Large, 2008).

Anticipatory synchronization arises in delay-coupled systems when a driven oscillator (i.e., an oscillator affected by external input) compares its own time-delayed memory of a previous state with the current input (Voss, 2000). Delay coupling refers to coupling between two or more oscillators that is modulated by time delay from at least one oscillator. Stepp and Turvey (2010) described delay coupling as “strong” anticipation: Anticipated future states are based on present and past information already in the system without the need for internal models. Machado and Matias (2020) demonstrated the biological plausibility of delay-coupled models by simulating delay coupling in spiking neuronal populations that lead to bistable visual percepts. Time delays in DS models have been implemented to account for intrabrain synchronization dynamics (Deco et al., 2009, 2011) as well as interpersonal synchronization (Varlet et al., 2012).

Assumptions

DS theories of human synchrony assume that behavior arises in a system composed of coupled subsystems of oscillators whose emergent processes explain perception and cognition. Individual oscillations arise from the joint activity between coupled excitatory and inhibitory neurons and are modeled either at the biophysical level or, more commonly, by using oscillator models with simplifying assumptions. Oscillatory time series arise from neuronal interactions, as well as from neurons interacting with external quasiperiodic stimuli, such as musical sequences. Most DS theories assume continuous change over time, but they can also be modeled discretely, as in the case of musical pulse (Large, 2008). Time delay in DS models, often implemented as a constant for simplicity, is assumed to represent the synaptic transmission rate in a neural system (Machado & Matias, 2020).

Computations

Delay-coupled DS models have been proposed to account for anticipatory synchronization. Based on self-sustaining oscillators, the coupling term in these models is often adapted from the Kuramoto model (Strogatz, 2000), which assumes that each oscillator has an intrinsic frequency that can be tuned during development via Hebbian learning (strengthened connections between simultaneously firing neurons; Tichko et al., 2021). Oscillators adapt their phase on the basis of how much they differ from incoming stimulus onsets, modulated by the coupling strength; higher coupling means more phase correction and faster synchronization. Time delay compares the oscillator’s past (at a constant delay) with the current input (Voss, 2000), thus providing a type of self-feedback. Most critical is that the oscillator’s past incorporates the past of the stimulus; thus, the oscillator (passively) reacts to present events as a function of present and past states of both stimulus and oscillator.
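To illustrate how delayed self-feedback can yield anticipation, the following minimal sketch simulates one Kuramoto-style phase oscillator driven by a metronome. The oscillator compares the current stimulus phase with its own phase one delay earlier. The equation, parameter values, and function name are assumptions made for illustration; they are not the specific models fitted in the studies cited here. For simplicity, the oscillator's intrinsic frequency is set equal to the metronome frequency.

```python
import math


def simulate_delay_coupled(freq_hz=2.0, coupling=5.0, delay_s=0.05,
                           dt=0.001, duration_s=10.0):
    """Euler simulation of dphi/dt = omega + K * sin(stim(t) - phi(t - delay)).

    Returns the final asynchrony in seconds; negative values mean that the
    oscillator is ahead of (anticipates) the metronome.
    """
    omega = 2.0 * math.pi * freq_hz          # shared angular frequency (rad/s)
    delay_steps = round(delay_s / dt)
    n_steps = round(duration_s / dt)
    phase = [0.0] * (n_steps + 1)            # oscillator phase over time

    for n in range(n_steps):
        stim_phase = omega * n * dt          # metronome phase at the current time
        # Delayed self-feedback: the oscillator's own phase delay_s ago
        # (assumed to be 0 before the simulation starts).
        delayed = phase[n - delay_steps] if n >= delay_steps else 0.0
        dphi = omega + coupling * math.sin(stim_phase - delayed)
        phase[n + 1] = phase[n] + dt * dphi

    phase_lead = phase[-1] - omega * n_steps * dt
    return -phase_lead / omega               # convert phase lead to time


asynchrony_s = simulate_delay_coupled()
print(round(asynchrony_s * 1000))  # about -50 ms: the oscillator leads by the delay
```

In this sketch the locked state is reached when the stimulus phase matches the oscillator's delayed phase, which places the oscillator's current phase ahead of the stimulus by the delay. With no delay (`delay_s=0.0`) the asynchrony is approximately zero. The parameter values were chosen so that the product of coupling and delay is small (0.25 here), a regime in which this locked state is stable.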

Applications to music

Roman et al. (2019) tested synchronization patterns of individuals who tapped to a metronome, using a single Hopf oscillator (an oscillator whose intrinsic frequency can adapt to a stimulus frequency) with time delay to represent unidirectional coupling with the metronome. The model, designed to mimic neural oscillations and adapt its frequency, simulated the neural delays necessary to account for the observed tappers’ negative mean asynchrony. The model successfully predicted the difference in the degree of anticipatory synchronization between musicians and nonmusicians who tapped at a range of metronome rates.

Demos et al. (2019) applied a bidirectional delay-coupled model, adapted from the Kuramoto model, to the more complex case of asymmetric anticipatory synchronization between partners playing musical duets. Each partner was modeled as a simple oscillator with parameters for time delay, coupling, and intrinsic frequency. Each oscillator could be driven by or could drive the other oscillator, depending on whether one partner heard feedback, both partners heard feedback, or neither partner heard feedback. The model accounted for anticipatory synchrony under experimental conditions that manipulated auditory feedback to shift the oscillators from bidirectional to unidirectional information transmission. The use of delay-coupling terms successfully predicted the driven partner’s mean anticipatory synchronization (e.g., the driven partner performed earlier than the driver partner) when the driver partner could not hear the driven partner; this was the first delay-coupling implementation to extend beyond single-person models of musical synchrony to interpersonal interaction.
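The shift between unidirectional and bidirectional information transmission can be sketched with two delay-coupled phase oscillators whose coupling strengths are set independently. This is a hypothetical, simplified illustration (equal intrinsic frequencies, equal delays, arbitrary parameter values), not the model fitted by Demos et al. (2019); the function name `simulate_duet` is our own.

```python
import math


def simulate_duet(k_a, k_b, delay_s=0.03, freq_hz=2.0, dt=0.001,
                  duration_s=20.0, start_b=0.3):
    """Two phase oscillators, each comparing the partner's current phase
    with its own delayed phase.

    k_a: how strongly partner A adapts to B (0 if A cannot hear B)
    k_b: how strongly partner B adapts to A (0 if B cannot hear A)
    Returns B's final asynchrony relative to A in seconds, converted with the
    nominal frequency; negative values mean that B is ahead of A.
    """
    omega = 2.0 * math.pi * freq_hz
    delay_steps = round(delay_s / dt)
    n_steps = round(duration_s / dt)
    a = [0.0] * (n_steps + 1)
    b = [start_b] * (n_steps + 1)            # B starts slightly out of phase

    for n in range(n_steps):
        # Each oscillator's own past phase (held at its start value initially).
        a_past = a[n - delay_steps] if n >= delay_steps else a[0]
        b_past = b[n - delay_steps] if n >= delay_steps else b[0]
        a[n + 1] = a[n] + dt * (omega + k_a * math.sin(b[n] - a_past))
        b[n + 1] = b[n] + dt * (omega + k_b * math.sin(a[n] - b_past))

    return -(b[-1] - a[-1]) / omega


# Unidirectional: A (driver) cannot hear B; B (driven) hears A.
driven_async = simulate_duet(k_a=0.0, k_b=5.0)
# Bidirectional: both partners hear each other.
mutual_async = simulate_duet(k_a=5.0, k_b=5.0)
print(round(driven_async * 1000), round(mutual_async * 1000))
```

Under these assumptions the driven oscillator settles ahead of the driver by approximately the delay (about 30 ms here), whereas symmetric bidirectional coupling removes the asymmetry and the asynchrony between the two oscillators approaches zero.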

Heggli, Cabral, et al. (2019) examined how partners alter their synchronization in a tapping task, using bidirectional coupling but with no time delay. Two oscillators modeled each individual’s perception and action as coupled; in addition, bidirectional coupling linked each person’s action to the partner’s perception. The model captured different patterns of synchronization variability, such as when partners showed mutual adaptation (increased coupling) or when both partners tried to lead at the same time (reduced coupling). Similar coupled oscillator networks without time delay can produce anticipatory synchronization (Pyragiene & Pyragas, 2015).

Remaining issues

The delay-coupling term in DS models accounts for both individual and dyadic anticipatory synchronization. One question is how time delays map to neural features, such as synaptic transmission (Machado & Matias, 2020), and to functional properties of neural networks (Deco et al., 2011). Another question is whether the delay is constrained by the individual, task, or social context. Finally, how can these parameters be interpreted and connected back to behavior given the complexity of their nonlinear interactions?

Model Comparisons

Although assumptions and computations differ between PC and DS models, they have similarities, too. Both classes of models rely on interconnections between excitatory and inhibitory neurons. The primary differences concern the role of time and the organizational architecture: The hierarchical representations in PC models include several layers, more types of nodes than DS models (only PC models include modulatory nodes), and more connections than the bottom-up DS oscillations, which are based solely on excitatory-inhibitory interactions. These computational distinctions suggest that the models diverge in parsimony. These distinctions parallel differences in the synchrony behaviors accounted for: The PC models’ Bayesian properties account for variability in synchrony, whereas the DS models’ delay-coupled differential equations account for mean (directional) synchrony. Although these theories are not mutually exclusive, important paradigmatic and architectural differences prevent an easy merger of them: PC models, evolved from information theory and systems neuroscience, make top-down/bottom-up distinctions (such as in Heggli, Konvalinka, et al.’s, 2019, mismatched internal models of musical partners). In contrast, DS models, evolved from physics and mathematical biology, include interactions across multiple spatiotemporal scales (such as unidirectional and bidirectional coupling within and across ensemble musicians). In short, predictive coding explains synchrony by generating predictions based on prior learning; dynamical systems explain synchrony by coupling already existing oscillations without a need to generate predictions.

Conclusions

PC and DS models of musical synchronization share similarities, including the goal to minimize energy; reliance on differences between expected and observed outcomes; and grounding in neurophysiological models of excitatory and inhibitory activation. The models have important differences in how much they rely on prior knowledge about the resulting output, how previous adaptations to stimuli are retained, and whether they are intended to account for the mean or variability of synchrony. To date, only DS theories have successfully modeled anticipatory synchronization.

Future research directions include modeling noisy conditions; explaining roles of contextual learning, musical pleasure, and reward; and scaling up to larger groups. Advances are likely to be assisted by machine learning and other mathematical tools for capturing musical synchrony.

Recommended Reading

Balanov, A., Janson, N., Postnov, D., & Sosnovtseva, O. (2009). Synchronization: From simple to complex. Springer. An easy-to-read introduction to synchronization theory from a dynamical-systems perspective.

Bubic, A., von Cramon, D. Y., & Schubotz, R. I. (2010). Prediction, cognition and the brain. Frontiers in Human Neuroscience, 4, Article 25. https://doi.org/10.3389/fnhum.2010.00025. A comprehensive, accessible overview of predictive coding.

Keller, P. E., Novembre, G., & Loehr, J. (2016). Musical ensemble performance: Representing self, other, and joint action outcomes. In S. S. Obhi & E. S. Cross (Eds.), Shared representations: Sensorimotor foundations of social life (pp. 280–310). Cambridge University Press. A recent synthesis of predictive coding in musical joint action.

Kelso, J. A. S. (1995). Dynamic patterns: The self-organization of brain and behavior. MIT Press. A foundational overview of dynamic systems in human behavior.

Washburn, A., Kallen, R. W., Lamb, M., Stepp, N., Shockley, K., & Richardson, M. J. (2019). Feedback delays can enhance anticipatory synchronization in human-machine interaction. PLOS ONE, 14(8), Article e0221275. https://doi.org/10.1371/journal.pone.0221275. A recent extension of dynamical systems to human-machine interaction.

Acknowledgements

We thank Jon Cannon, Janeen Loehr, Kerry Marsh, Peter Pfordresher, Paula Silva, and Tim Sparer for discussions on the manuscript contents and Jocelyne Chan for assistance with manuscript preparation.

Transparency

Action Editor: Robert L. Goldstone

Editor: Robert L. Goldstone