Abstract

The involvement of top-down processes in perception and cognition is widely acknowledged by now. In fields of research from predictions to inhibition, and from attentional guidance to affect, a great deal has already been charted. Integrating this newer understanding with accumulated findings from the past has made it clear that human experience is determined by a combination of both bottom-up and top-down processes. It has been proposed that the ongoing balance between their relative contribution affects a person’s entire state of mind, an overarching framework that encompasses the breadth of mental activity. According to this proposal, state of mind, in which multiple facets of mind are clumped together functionally and dynamically, orients us to the optimal state for the given circumstances. These ideas are examined here by connecting a broad array of domains in which the balance between top-down and bottom-up processes is apparent. These domains range from object recognition to contextual associations, from pattern of thought to tolerance for uncertainty, and from the default-mode network to mood. From this synthesis emerge numerous hypotheses, implications, and directions for future research in cognitive psychology, psychiatry, and neuroscience.


For several decades, a major obstacle to progress in both neuroscience and cognitive psychology has been human intuition. Thinking of the brain as hierarchical and sequential seems much more natural than thinking of it as relying on interactions in multiple directions and on simultaneous propagation, and in earlier decades, we as researchers examined the brain in the way our minds could grasp most easily. What helped reinforce this misleading intuition was what was known at the time about the anatomy and physiology of the sensory cortex. The visual cortex, for example, is structured hierarchically: Cells in higher areas are sensitive to more complex features over increasingly large portions of the visual field. This organization lends itself to the belief that visual processing occurs serially, from the bottom up. Following those early years, however, anatomical evidence for reciprocal (feedback and feedforward) connections in the cortex gradually mounted, and physiological reports of the major involvement of top-down processing in many aspects of perception and cognition have been accumulating since (e.g., Bar, 2003; Engel et al., 2001; Gilbert & Li, 2013; Rao & Ballard, 1999).

The roles and participation of top-down processes are now widely accepted, which has gradually helped researchers acknowledge that perception and cognition are both combinations of bottom-up and top-down influences. In fact, the fundamental aspects of human mental life all seem to vary along a continuum of the extent to which they rely on top-down versus bottom-up information and processes. Examples include how much people rely on incoming sensory information compared with memory and expectations, or the extent to which people focus attention on externally determined cues compared with internal goals and guidance. Furthermore, how much people operate on the basis of top-down guidance versus bottom-up information varies dynamically according to circumstances such as context and overall state (Herz et al., 2020).

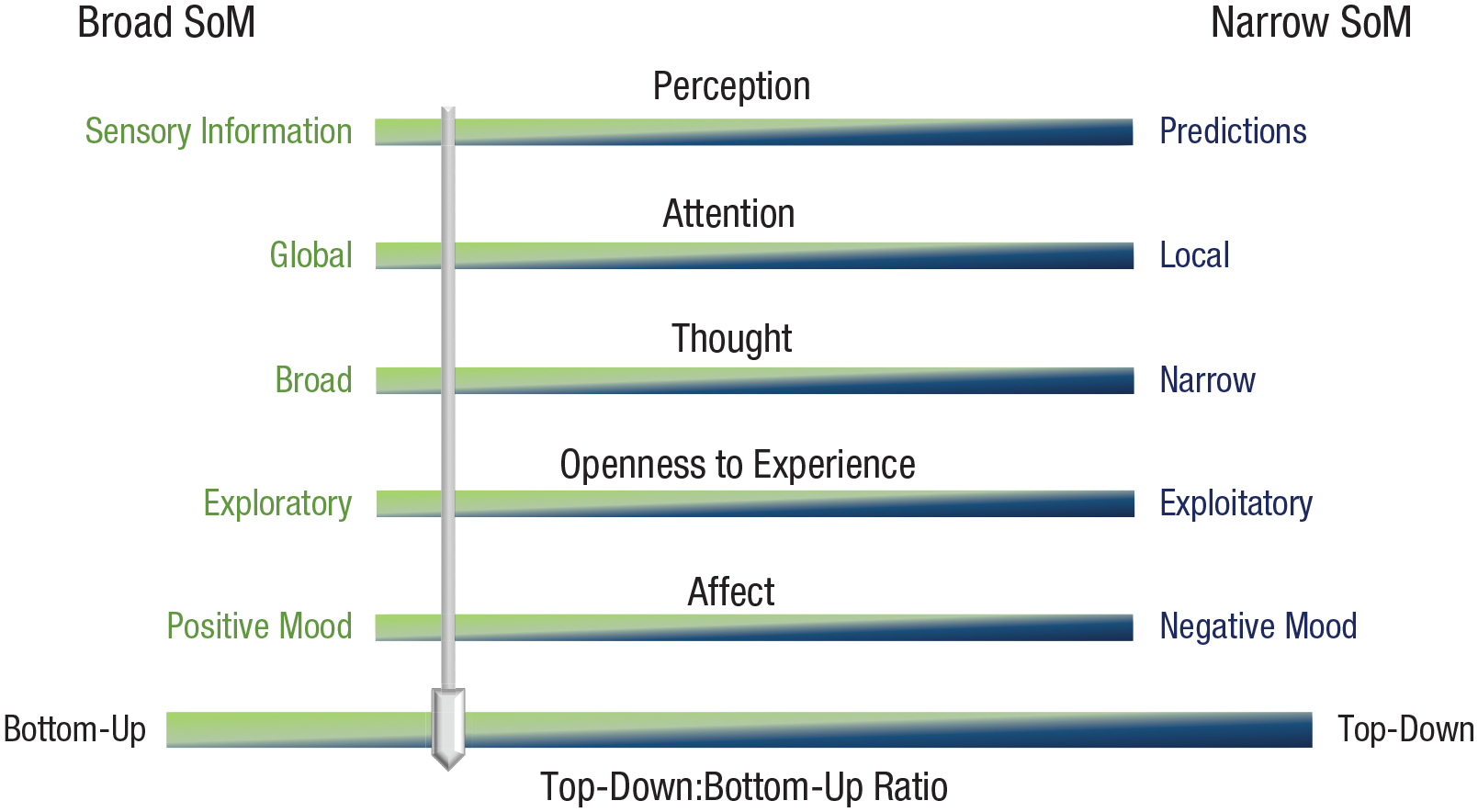

What my colleagues and I have noticed over the years is that people’s dispositions, biases, and tendencies in central domains such as perception, attention, thought, openness to experience, and mood not only vary along a continuum of how much they are driven internally or externally, but also vary along this continuum in tandem. Changes along this continuum happen in a synchronized manner, which is dictated by the individual’s overarching state of mind (SoM; Herz et al., 2020). In the following sections, I examine the involvement of top-down processes in multiple domains, elaborate on the concept of SoM, and propose novel extensions of this framework for the future.

Prediction in Recognition

Objects such as pens, trains, apples, surfboards, and binoculars make up the world. For people to understand where they are, what to do, and how, they first need to recognize the objects around them. Recognition is so central that people cannot look at an object without trying to recognize it, as is perhaps best exemplified by how frustrating it feels to see an object and be unable to assign it a meaning (Fig. 1). But how is it that people understand what they see? This question has been of interest for centuries, and in modern times has attracted an exceptional amount of research. I limit my discussion to objects perceived visually, both because they are the most extensively studied and because much of what I discuss here also applies to objects in other modalities, such as auditory objects.

Fig. 1. Illustration of the mandatory nature of object recognition. As you view this example of an apparently meaningless object (encountered in a Berlin train station), you can sense the brain’s persistent search for meaning.

Cognitive psychologists and neuroscientists want to know how objects are represented in memory, how they are recognized, how they are manipulated, and more. A great deal is already known, but it seems that an even greater deal is not.

Understanding of object representation proper, however, is lacking. In fact, it is still unclear exactly how even a letter such as “A” is represented by neurons. Part of this delay is due to some persistent debates (which were nevertheless important for eventual progress), such as the debate about whether objects are represented as collections of two-dimensional instances (e.g., Edelman & Bülthoff, 1992; Ullman, 1989) or as three-dimensional structural descriptions (Biederman, 1989; Vogels et al., 2001).

The second, even bigger source of the delay in understanding object representation is the artificial boundary between perception and cognition. Perception and cognition have traditionally been treated (and taught) as two separate domains, portrayed as operating at different times and on different inputs. According to this paradigm, which has been dominant for decades, perception first interprets the physical properties of the environment coming through the senses, and cognition comes into play only subsequently, once perception is completed, to deploy higher-level processes such as control and decision-making. Perception and cognition are certainly distinct to some extent, but they do work simultaneously and regularly help each other (though not necessarily in all domains; see Firestone & Scholl, 2015). Eliminating this arbitrary boundary was essential to allow the integration of more progressive ideas.

In my own lab, this integration started with a neuroimaging (functional MRI) experiment on object recognition (Bar et al., 2001). We were looking for the “Aha” moment, when participants became aware of what object had been presented to them. We found that the activity most diagnostic of successful recognition took place in the fusiform gyrus within the visual cortex. But what caught our attention beyond this somewhat intuitive result was that a region of the prefrontal cortex, specifically within the orbitofrontal cortex (OFC), was also active in proportion to recognition success. This was puzzling because up to that point, the prefrontal cortex was thought to mediate high-level functions exclusively. Why would it also be sensitive to performance in visual object recognition?

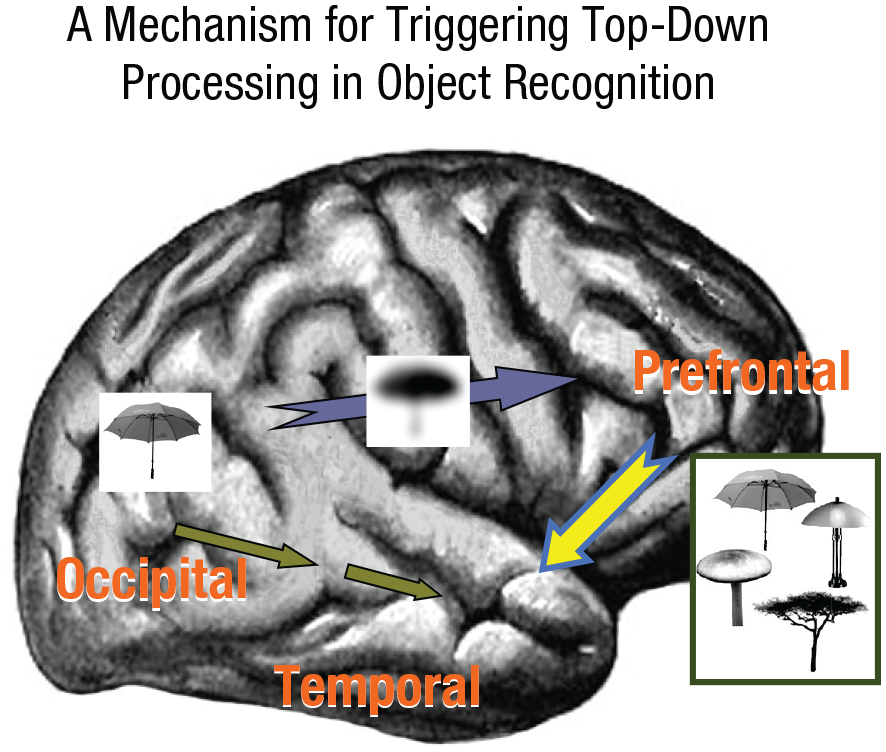

Two alternative accounts could explain the involvement of the OFC in recognition. The first, which is more in accordance with the older beliefs about bottom-up processing and the separation of perception from cognition, suggests that this OFC activation reflects a postrecognition process in which high-level operations are executed after the object’s identity is clear (e.g., “it is raining, so I must take an umbrella”). The alternative account is that this OFC activation reflects prerecognition feedback processes that facilitate recognition by projecting top-down “initial guesses” as predictions. Specifically, our idea was that in addition to the ventral pathway of visual recognition, there is a quick bypass projection from early visual areas directly to the OFC, where predictions about an object’s identity are activated (Bar, 2003). What might this quick, predictive information be? Low spatial frequencies (LSFs; Fig. 2) are extracted early (Merigan & Maunsell, 1993) and projected rapidly (Bullier & Nowak, 1995), and thus are optimal for generating rapid predictions about objects that share the same LSF signature (Fig. 3).

Fig. 2. Illustration of the different types of information conveyed by different spatial frequencies. Low spatial frequencies (right) maintain the global properties, blobs, and aspect ratios of the intact image (center). High spatial frequencies (left), on the other hand, accentuate the edges and fine details.

Fig. 3. A model for quick generation of top-down predictions that facilitate object recognition. The low-spatial-frequency (LSF) version of the input umbrella image is projected to the prefrontal cortex (more specifically, the orbitofrontal cortex) and triggers the activation of the most likely objects that fit that LSF image, thereby significantly facilitating recognition in the visual cortex (including the occipital and temporal cortices).
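The first step of this model, separating the coarse LSF content of an image from its fine high-spatial-frequency (HSF) detail, can be illustrated with a minimal sketch. It assumes a grayscale image stored as a list of rows of numbers and uses a simple box blur as the low-pass filter; both choices, and the function names, are hypothetical illustrations rather than the filtering procedure used in the cited studies.

```python
# Minimal sketch (assumption): a box blur stands in for the low-pass filter.
# The LSF image keeps blobs and aspect ratios; the HSF residual keeps edges.

def box_blur(image, radius=1):
    """Return the low-spatial-frequency (LSF) version of `image` (a list of rows)."""
    height, width = len(image), len(image[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            total, count = 0.0, 0
            # Average over the (2*radius+1) x (2*radius+1) neighborhood,
            # clipped at the image borders.
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < height and 0 <= nx < width:
                        total += image[ny][nx]
                        count += 1
            row.append(total / count)
        blurred.append(row)
    return blurred


def split_spatial_frequencies(image, radius=1):
    """Return (lsf, hsf): the blurred image and the residual fine detail."""
    lsf = box_blur(image, radius)
    hsf = [[pixel - low for pixel, low in zip(img_row, lsf_row)]
           for img_row, lsf_row in zip(image, lsf)]
    return lsf, hsf


# A sharp vertical edge: the LSF version ramps smoothly across it,
# while the HSF residual is nonzero only next to the edge.
edge = [[0, 0, 10, 10] for _ in range(4)]
lsf, hsf = split_spatial_frequencies(edge)
```

Adding the two components back together reconstructs the original image, and a larger `radius` discards more detail, leaving only the coarse layout that, according to the model, suffices for a first guess.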

To show that the OFC activation indeed generates LSF-based predictions, we first had to show that this site is more sensitive to low than to high spatial frequencies, which we did (Bar, Kassam, et al., 2006; Chaumon et al., 2013; Kveraga et al., 2007). More important, we also had to show that this OFC site is differentially activated before recognition is accomplished in the visual cortex; otherwise, its activation could not be predictive. To do that, we used magnetoencephalography, whose temporal resolution is far superior to that of functional MRI. This allowed us to ask whether OFC activation during recognition develops early enough to be predictive, and indeed it does (Bar, Kassam, et al., 2006).

Evidence that the brain quickly uses a rudimentary LSF image to generate predictions led us to suggest that rather than asking “what is this?” when encountering an object, the brain asks “what is this like?” (Bar, 2007). What may seem like a small semantic difference actually entails a radical change in how to view the mechanism through which people understand the world around them: Rather than constructing a representation based solely on the bottom-up sensory input, the brain compares rudimentary information in the input with representations in memory that resemble it. Once the brain makes the analogy between the new and the familiar, it gains access to the vast information it has already acquired about such objects during previous encounters. In many respects, a good part of what people perceive is memory, rather than sensory input proper. As I discuss later, the ratio between how much perception is based on input and how much it is based on memory varies and is determined by SoM.
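The shift from “what is this?” to “what is this like?” can be made concrete with a toy nearest-neighbor sketch: a coarse LSF signature of the input is compared with signatures stored in memory, and the closest matches are returned as candidate identities. The four-number signatures, the `MEMORY` table, and the use of cosine similarity are all hypothetical choices made for illustration; the text does not specify how resemblance is measured.

```python
import math

# Hypothetical coarse "LSF signatures" (e.g., blob layout, aspect ratio),
# invented for illustration only.
MEMORY = {
    "umbrella": [1.0, 1.0, 0.2, 0.2],
    "mushroom": [0.9, 0.9, 0.3, 0.3],
    "desk lamp": [0.8, 0.6, 0.4, 0.3],
    "pen": [0.1, 0.1, 0.1, 0.9],
}


def cosine_similarity(a, b):
    """Similarity of two signatures, ignoring their overall magnitude."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def what_is_this_like(lsf_signature, memory=MEMORY, k=2):
    """Return the k stored objects whose signatures best resemble the input."""
    ranked = sorted(memory,
                    key=lambda name: cosine_similarity(lsf_signature, memory[name]),
                    reverse=True)
    return ranked[:k]


# A blurry "dome on a stick" input: the top candidates act as initial guesses.
print(what_is_this_like([0.95, 0.95, 0.25, 0.25]))  # → ['umbrella', 'mushroom']
```

Because the stored objects share a coarse shape, the sketch returns a shortlist rather than a single answer; in the model described above, such a shortlist would then be refined by the detailed information that arrives later through the visual cortex.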

This dependence on past experience to understand the world seems to go far beyond the realm of objects. My colleagues and I have shown that it operates also in the formation of fast first impressions about other people, which also relies on LSF information (Bar, Neta, & Linz, 2006). Similarly, we found the same quick reliance on LSF information in an experiment in which we tested our hypothesis that preference for everyday objects is biased toward objects with smooth rather than sharp contours (Bar & Neta, 2006).

Taken together, this research suggests that quick, rudimentary LSF information seems to guide, or even determine, object recognition, scene recognition (Torralba & Oliva, 2003), first impressions of people, and object preference. From this consistent finding, we derived a more global hypothesis, the lasting-primacy hypothesis, stating that what the individual extracts from the environment very early has a lasting effect on perception and cognition.

According to this hypothesis, the brain typically makes the earliest possible connection between sensory input and memory and then settles on this analogy to prepare for the future (Bar, 2007). As I elaborate later, this is not always the case, and the extent to which primacy lasts depends on SoM. When people are more open to uncertainty for the sake of learning, for example, they seem to suspend predictions and lean more toward the bottom-up information streaming in through the senses. In other words, people weigh their predictions more heavily in some states than in others.

Contextual Associations

Objects in the world do not appear in isolation. Rather, they co-occur with other objects, embedded in complete and usually coherent scenes. What is interesting and extremely useful about ecological, real-world scenes is that the object associations and spatial relations between objects are typical and frequent: refrigerators and ovens together in kitchens, tennis balls and tennis racquets together on tennis courts, and so on. Such regular co-occurrences make it possible to estimate the probability of finding object x in context y and location z at time t. By storing the statistical regularities of the world in memory, consciously or not, one is better prepared for what is ahead (Bar, 2004).
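Storing such regularities can be illustrated by counting co-occurrences and converting the counts into conditional probabilities. The sketch below is a hypothetical illustration, not a model from the cited work: It estimates only the probability of object x given context y (location and time are omitted for brevity), and the scene list and function names are invented.

```python
from collections import Counter, defaultdict


def learn_context_statistics(scenes):
    """Count object occurrences per context. `scenes` is a list of (context, objects)."""
    counts = defaultdict(Counter)  # context -> Counter of objects
    totals = Counter()             # context -> number of scenes seen
    for context, objects in scenes:
        totals[context] += 1
        for obj in set(objects):   # count each object at most once per scene
            counts[context][obj] += 1
    return counts, totals


def p_object_given_context(obj, context, counts, totals):
    """Estimated probability that `obj` appears in a scene of type `context`."""
    if totals[context] == 0:       # unseen context: no evidence either way
        return 0.0
    return counts[context][obj] / totals[context]


# Invented example scenes, echoing the kitchen and tennis-court examples above.
scenes = [
    ("kitchen", ["refrigerator", "oven", "kettle"]),
    ("kitchen", ["refrigerator", "oven"]),
    ("kitchen", ["refrigerator", "sink"]),
    ("tennis court", ["tennis ball", "tennis racquet", "net"]),
    ("tennis court", ["tennis ball", "net"]),
]
counts, totals = learn_context_statistics(scenes)
print(p_object_given_context("oven", "kitchen", counts, totals))  # 2 of 3 kitchen scenes
```

A frequency count like this is the simplest possible estimator; it makes the point that expectations such as “an oven is likely here” can fall directly out of accumulated co-occurrence experience.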

Despite the omnipresence of scenes and object associations in the world, there was surprisingly little research on this topic for many years, with the exception of the pioneering work of Biederman (e.g., Biederman et al., 1982). Aminoff and I were curious about the brain mechanisms that mediate contextual associations, and we started our quest by looking for possible differences between the activation elicited by recognizing objects that are strongly associated with a certain context (e.g., a hard hat or a barn) and the activation elicited by recognizing objects that are equally frequent in the environment but are not associated with a particular context (e.g., a water bottle; Bar & Aminoff, 2003). The results pointed to two interesting and previously unexpected overlaps, each of which propelled a new research program.

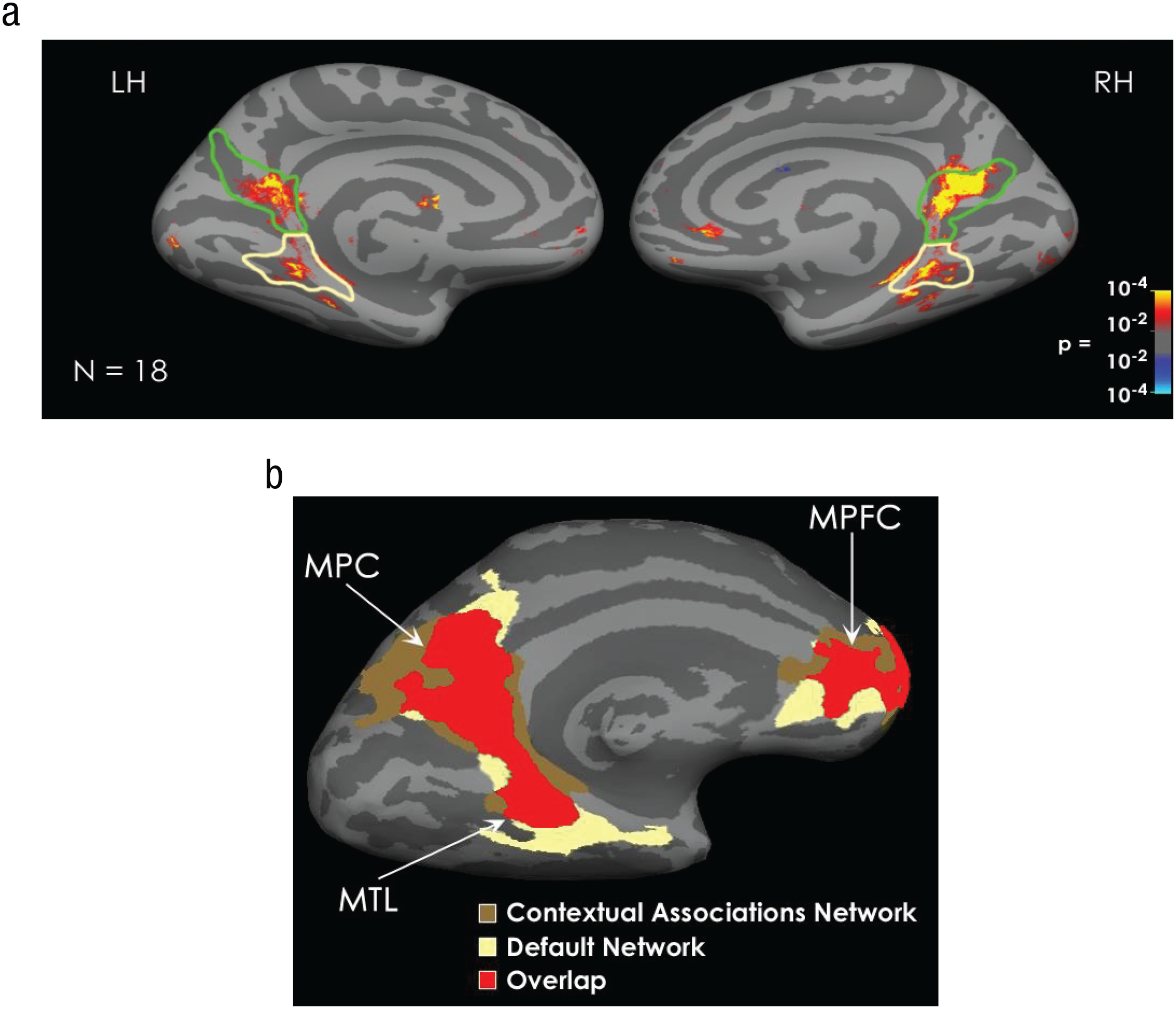

First, we found that part of the network that is activated by contextual associations (Fig. 4a) includes the parahippocampal cortex (PHC), and in particular, a region in it that had previously been linked with the perception of places and even dubbed the parahippocampal place area (Epstein et al., 1999). This ignited an interesting scientific debate about whether places are different from context or are simply themselves a type of context (Bar et al., 2008). The debate seems to have gradually settled on an organization whereby more spatial associations activate a more posterior part of the PHC, and associations that are more abstract and not tied to specific place information activate a more anterior part of the PHC (Bar & Aminoff, 2003).

Fig. 4. Illustration of the overlap between the contextual-associations network (Bar & Aminoff, 2003) and two other major networks. In (a), the functional MRI responses in the left hemisphere (LH) and right hemisphere (RH) represent contextual activity (statistical significance values are at the bottom right), and superimposed on them are the outlines of the activation pattern obtained for places via a standardized procedure of comparing activity in response to places with activity elicited by objects (what is referred to as the parahippocampal place area, or PPA, localizer). The yellow outlines are in the medial temporal lobe (MTL), and the green outlines are in the medial parietal cortex (MPC). Adapted from Bar et al. (2008, Fig. 3a). The image in (b) shows the overlap between the contextual-associations network and the default-mode network. The main regions of overlap include the MTL, the MPC, and the medial prefrontal cortex (MPFC; Bar et al., 2007).

Second, we found that the contextual-associations network overlaps with what is now known as the default-mode network (DMN), a massive network of regions that was originally defined as vigorously active when participants are not engaged in a task or when their mind wanders (Fig. 4b). I elaborate on this important overlap in the next sections, when discussing associations, predictions, thought, and SoM. As I explain, although associations provide the building blocks for much of mental activity, SoMs directly modulate their extent.

The Proactive Brain

The combined findings on context and associations led to a broader hypothesis according to which associations are the units of thought (Bar et al., 2007). They provide the foundation on which people can think, predict and anticipate, plan, entertain multiple possible futures, ruminate, worry, and fantasize.

It seems that the most efficient way for the brain to help people is by preparing them for what is coming, so it is not surprising that so much of what it does is thinking about the future (Bar, 2007). From deciding what to cook on the basis of what is in the refrigerator to deciding how to pack for a vacation, and from dodging a ball to preparing for an interview, the human brain is proactively and almost continuously considering the future. Foresight is not necessarily a conscious operation, but it is typically there to help.

To be able to imagine the future and to provide informed approximations of what might unfold, the brain uses experience, as stored in memory. One cannot predict how an encounter with an alien would unfold, but one can predict, in global but sufficient detail, how a visit to the mechanic or to a museum would likely go. This might be the reason people are so attracted to novelty: It adds new material to the ever-evolving repertoire of things they can anticipate. And so the main role of memory is revealed: serving the future (Bar et al., 2007).

In large part, the forward-looking processes—predicting, planning, simulating—take place in the DMN. The initial discovery of the DMN triggered a quest to understand what it is exactly that people do when not required to do anything. In addition to planning and predicting, the functions most predominantly attributed to the DMN are self-related thinking and theory of mind. Why would the DMN overlap so strikingly with the contextual-associations network (Fig. 4b)? It is because all those functions and processes in which the DMN has been implicated rely on associations at their core: what goes with what, what happens when, what to expect given personal history, and what future is most likely from among several alternatives. Associations are the elements of predictions, and therefore also of the increasingly complex processes that build upon them.

Being able to predict and anticipate, through associative memory, provides a sense of stability and familiarity in the world. An interesting question, then, is what happens to individuals who cannot activate the rich associations stored in memory in a natural and regular manner. This question became relevant when we at the lab started considering clinically depressed individuals. One hallmark of depression (and anxiety) is ruminative thinking, a cyclical thought pattern that circles repeatedly around a particular topic. Rather than thinking in a broadly associative manner, patients with depression remain within a narrow semantic loop of thoughts (Nolen-Hoeksema, 2000). This is interesting because people in a good mood think with broader associations (e.g., Isen et al., 1985), and it seems that thinking broadly may improve mood (Bar, 2009).

Mood and the Breadth of Thought

Given the links among ruminations, associations, and mood, I proposed the hypothesis that mood is directly linked to how associative or inhibited an individual’s mental processes are (Bar, 2009). This hypothesis has been supported by multiple studies since it was first proposed. For example, merely having people read lists of associated words that progress broadly from one word to the next (e.g., “pillow, feathers, goose, flying, clouds”) resulted in better mood than having them read lists of words that imitate rumination by remaining close to a narrow focus (e.g., “pillow, sheets, sleep, mattress, blanket”; Mason & Bar, 2012). Furthermore, another study showed a negative correlation between the extent to which patients with depression ruminate and the volume of structures known to be part of the contextual-associations network (Harel et al., 2016). According to this mechanistic account, abnormally increased top-down inhibition constricts these patients’ ability to expand their thinking, such that they do not exhibit the healthy, broadly and coherently associative thought pattern. Targeting this proposed mechanism by aiming to reduce inhibition and broaden thinking patterns is a promising approach for alleviating symptoms of depression, stress, anxiety, and other mental disorders in which rumination is a typical thinking style.
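The proposed mechanism, in which stronger top-down inhibition restricts how far thought spreads through associative memory, can be caricatured with a toy spreading-activation sketch. The association graph, its link strengths, the `spread` function, and the rule that a concept is reached only while its activation exceeds an inhibition threshold are all hypothetical, invented for illustration; they are not the model used in the studies cited above. The words simply echo the example lists from Mason and Bar (2012).

```python
# Hypothetical association strengths (0 to 1), invented for illustration only.
ASSOCIATIONS = {
    "pillow": {"sheets": 0.9, "sleep": 0.8, "feathers": 0.5},
    "sheets": {"mattress": 0.8, "blanket": 0.8},
    "sleep": {"mattress": 0.7, "blanket": 0.6},
    "feathers": {"goose": 0.6},
    "goose": {"flying": 0.6},
    "flying": {"clouds": 0.7},
}


def spread(seed, inhibition, associations=ASSOCIATIONS):
    """Return the set of concepts reached from `seed`.

    Activation starts at 1.0 and is multiplied by each link strength along a path.
    A concept is reached only if its activation exceeds the `inhibition` threshold.
    """
    best = {seed: 1.0}   # strongest activation found so far for each concept
    frontier = [seed]
    while frontier:
        node = frontier.pop()
        for neighbor, strength in associations.get(node, {}).items():
            activation = best[node] * strength
            # Keep spreading only above threshold, and only if this path is stronger.
            if activation > inhibition and activation > best.get(neighbor, 0.0):
                best[neighbor] = activation
                frontier.append(neighbor)
    return set(best)


print(sorted(spread("pillow", inhibition=0.55)))
# → ['blanket', 'mattress', 'pillow', 'sheets', 'sleep']
print(sorted(spread("pillow", inhibition=0.1)))
# → ['blanket', 'clouds', 'feathers', 'flying', 'goose', 'mattress', 'pillow', 'sheets', 'sleep']
```

With high inhibition, thought stays within the narrow, rumination-like cluster; lowering the threshold lets activation travel along weaker links to remote associations such as “clouds.”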

A link between how people think and how they feel points to fundamental cooperation and synchrony between faculties that have previously been taken as remote and perhaps even detached from one another. These are now linked via the construct of SoM.

States of Mind

The observed link between mood and breadth of thought added to a host of such connections researchers had noticed over the years between mental processes that were previously taken as independent and therefore studied in isolation. There is now ample evidence that perception, attention, thought, openness to experience, and mood are linked in multiple ways. Each of these facets of thought is characterized by a continuum. Perception can range from relying more on sensory input to relying more on top-down predictions and biases, attention can change from being global to being local in its scope, thought can be broader or narrower in its associations, one can change from being highly exploratory (willing to tolerate uncertainty for the sake of learning and experience) to being highly exploitatory (preferring familiarity), and affect can range from a positive to a negative mood.

Together, these observations gave rise to the concept of an overarching SoM (Herz et al., 2020). My colleagues and I proposed that these different facets of thought are tied together and change together along a broad-narrow continuum. SoM bundles this host of mental faculties to orient an individual’s dispositions, tendencies, and sensitivities to the demands of the specific circumstances in a synchronized manner (Fig. 5).

Fig. 5. State of mind (SoM) and its multiple dimensions of influence. The different dimensions are connected to each other, and they change in tandem according to SoM, as determined by the ratio between top-down and bottom-up processes. Adapted from Herz et al. (2020, Fig. 1A).

The synchrony among these diverse dimensions implies that at the broad end of the spectrum, one’s perception relies more on bottom-up information and less on predictions, one attends to the environment with a global “spotlight,” thinks in a broadly associative (and thus more creative) manner, is more exploratory (open to learning and experience even at the expense of reduced certainty), and is generally in a better mood. At the narrow end, by contrast, one leans more on memory and predictions for perception, surveys the environment with local attention to finer details, thinks more narrowly and perhaps even ruminates, prefers certainty and the familiar, and is generally in a more negative mood.

Several previous frameworks are relevant to the proposal of an overarching SoM. One example is the regulatory focus theory (RFT; Higgins, 1997). RFT is particularly related to one aspect of our multifaceted definition of SoM, that of openness to experience. According to RFT, there are prevention and promotion modes of decision making during goal pursuit, which differ primarily in their emphasis on safety and security compared with achievement and advancement. (See Herz et al., 2020, for other related theories.)

According to our proposal, the unifying mechanism that mediates the overarching SoM relies on the ratio between top-down processes and bottom-up processes. When more weight is given to bottom-up signals, one is in a broader SoM, and when the top-down signals are assigned larger weights, one is in a narrower SoM. The mind is not fixed, and tendencies and dispositions depend as a whole on context, dictated by SoM.
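One simple way to picture a single ratio governing perception is a weighted average of a top-down prediction and a bottom-up sensory estimate. This linear weighting is an assumption made for illustration (the proposal does not specify how the ratio is computed), and `perceive`, `top_down_ratio`, and `top_down_weight` are hypothetical names. Under the SoM proposal, the same weight would also shape attention, thought, exploration, and mood, which this sketch does not model.

```python
def perceive(sensory_input, prediction, top_down_weight):
    """Blend bottom-up input with a top-down prediction.

    top_down_weight lies in [0, 1]. Higher values stand in for a narrower SoM
    and lower values for a broader one (a hypothetical mapping).
    """
    if not 0.0 <= top_down_weight <= 1.0:
        raise ValueError("top_down_weight must be between 0 and 1")
    return top_down_weight * prediction + (1.0 - top_down_weight) * sensory_input


def top_down_ratio(top_down_weight):
    """Express the same weight as a top-down:bottom-up ratio."""
    if top_down_weight == 1.0:
        return float("inf")  # purely top-down: no weight left for the input
    return top_down_weight / (1.0 - top_down_weight)


# The input says 10, the prediction says 0.
print(round(perceive(10.0, 0.0, top_down_weight=0.2), 2))  # broad SoM  → 8.0
print(round(perceive(10.0, 0.0, top_down_weight=0.8), 2))  # narrow SoM → 2.0
```

In the broad setting the percept stays close to the input; in the narrow setting it is pulled toward what was expected, mirroring the idea that perception is partly memory.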

The proposed rationale for aligning the different dimensions of SoM is optimization. Optimizing the state of each mental facet to best meet the current circumstances presumably requires that all facets approach the present requirements with a similar perspective, or breadth of SoM. Nevertheless, exceptions are possible: Internal disturbances and conflicting external demands seem able to give rise to misalignments in certain cases. One can be in a great mood and highly exploratory, yet upon hearing a distant shriek, narrow one’s attention sharply. Similarly, in attention-deficit/hyperactivity disorder, thinking is associative and expansive, but occasional frustration from the reduced ability to focus might still result in a negative mood, so thinking and mood may not be aligned in those cases.

Neuronal synchrony has been discussed and characterized for decades, and it is considered by many researchers to be a global principle in the operation of the brain (Uhlhaas et al., 2009). Along with possible neuromodulatory mechanisms (Avery & Krichmar, 2017), neuronal synchrony might be the mechanism by which the top-down:bottom-up ratio is determined, to dictate a specific SoM, but how this is accomplished, and how this synchrony breaks down for certain dimensions, under certain conditions, will have to be characterized in the future. Furthermore, the unifying synchrony that is proposed as the main feature of SoM might be developed and honed through experience, and the development of optimally coordinated states is also a direction for future exploration.

On a final note, it is worth mentioning the presumed relation between DMN activity and SoM. DMN activity is not necessarily top-down. In other words, the fact that it is generated and occurs internally does not automatically make it a top-down process. When one is in a broad SoM, one’s perception is oriented more toward bottom-up, sensory information, and less weight is assigned to top-down predictions. Thought pattern is also more broadly associative in this state because there is less top-down inhibition to constrict associative breadth, so DMN activity can be broadly associative. In a narrow SoM, on the other hand, there is more top-down contribution to perception (i.e., more weight on predictions) and more top-down inhibition to limit the breadth of thought and DMN activity. Therefore, there are different forms of top-down involvement in the various dimensions of SoM (e.g., object predictions vs. inhibition signals), but all maintain the principle that top-down processes are weighted more heavily than bottom-up processes in narrow SoM and the reverse is true in broad SoM.

Conclusions

I have presented an account connecting objects, associations, context, predictions, and affect in a unifying framework describing human experience. The common thread is the involvement of both top-down and bottom-up influences that change dynamically with circumstances to affect diverse faculties, including perception, attention, thought, openness, and mood, in tandem. This new framework naturally suggests several directions for future research. In addition to those mentioned earlier, one important area for future investigation is whether there are additional facets of thought that are independent parts of SoM and cannot be constructed out of the dimensions already included in the current definition of SoM. These might include motivation, cognitive control (Dreisbach & Goschke, 2004), and rational thinking, to name a few. Realizing that dynamic yet unifying states of mind orchestrate much of one’s mental world to meet the needs of the moment has deep implications for understanding the brain and mind, for research practice, and for the clinical worlds of psychology and psychiatry.

Recommended Reading

Bar, M. (2009). (See References). The detailed hypothesis linking associative thinking and mood.

Bar, M., & Aminoff, E. (2003). (See References). Details of how the elements of the contextual-associations network were discovered.

Bar, M., Kassam, K. S., Ghuman, A. S., Boshyan, J., Schmid, A. M., Dale, A. M., Hämäläinen, M. S., Marinkovic, K., Schacter, D. L., Rosen, B. R., & Halgren, E. (2006). (See References). The first article to combine functional MRI and magnetoencephalography to examine top-down influences in object recognition.

Herz, N., Baror, S., & Bar, M. (2020). (See References). The original article in which we proposed and detailed the state-of-mind framework.

Trapp, S., & Bar, M. (2015). Prediction, context, and competition in visual recognition. Annals of the New York Academy of Sciences, 1339(1), 190–198. An account of how competition might underlie the role of context and predictions in recognition.