Abstract

Neuroscientific evidence identifies the brain networks and cognitive processes involved in people’s thoughts and feelings about their behavior. This helps individuals understand the judgments and decisions they make with regard to their own and others’ moral and immoral behavior. This article complements prior reviews by focusing on the social origins of everyday moral and immoral behavior and reviewing neuroscientific research findings related to social conformity, categorization, and identification to demonstrate (a) when people are motivated by social norms of others to follow particular moral guidelines, (b) what prevents people from considering the moral implications of their actions for others, and (c) how people process feedback they receive from others about the appropriateness of their behavior. Revealing the neural mechanisms involved in the social processes that influence the moral and immoral behaviors people display helps researchers understand why and when different types of interventions aiming to regulate moral behavior are likely to be successful or unsuccessful.

The desire to find explanations for everyday observations of corruption, fraud, or abuse of power has made moral behavior a hot topic in psychological research (Ellemers, van der Toorn, Paunov, & van Leeuwen, 2019). Even when it seems obvious that such behaviors are unethical, immoral, or even unthinkable, this does not preclude such problems from emerging time and again. How is this possible?

Moral convictions cause people to assume that everyone knows what is morally “right” as opposed to morally “wrong” (Skitka, 2010). Attempts to influence moral behavior accordingly focus on changing the cost-benefit ratio of undesired choices through regulation, sanctions, and incentives. Yet it is not self-evident that people agree about the moral course of action or that morally problematic behavior always results from deliberate choices. Especially when considering everyday behavior, rather than examining hypothetical responses to “raceless, genderless strangers” (Hester & Gray, 2020), one should not underestimate the influence of social-context factors on people’s decisions and actions. Indeed, such behaviors are often influenced by people’s concerns about moral critique from others and the fear of social exclusion (Ellemers et al., 2019). Examining the impact of the social context on people’s moral and immoral behavior is challenging because it may occur outside of their awareness or deliberate control. In the current review, we complement insights from self-reported concerns and behavioral observations by focusing on neuroscientific research. This can help researchers understand the cognitive and affective processes underlying the social origins of everyday moral and immoral behavior—elucidating how social norms, social categorization, and identification affect what people find morally acceptable or unacceptable.

Neuroscience of Morality

Self-reported judgments, preferences, and principles dominate research on moral psychology (Ellemers et al., 2019). Yet self-report measures rely on people’s ability and willingness to accurately reflect on and report what they did and why. Behavioral measures reveal automatic action tendencies (measured, e.g., by reaction times) and behavioral displays toward particular stimuli or other individuals. However, both offer only an indirect, and potentially inaccurate, view on people’s intrinsic motivations, automatic cognitive processing, or intuitive feelings that drive them. In other words, what these measures cannot reveal is how, when, and why people’s thoughts and actions come about. Furthermore, both self-report and behavioral outcomes are interpretable in different ways because the same answer or task response may come about for various reasons. Neuroscientific research methods can help to unravel these mechanisms—for instance, when they reveal whether the same behavior is associated with enhanced cognitive conflict (e.g., when publicly adjusting to a social norm even though it contrasts with one’s private opinion) or changes in perceptual attention (e.g., when public adjustment to a social norm is due to a shift in one’s personal point of view).

Neuroscientific research methods are especially valuable for research on everyday moral and immoral behavior. Such behavior occurs in social contexts in which shifting identities and group memberships affect self-views and determine which others seem self-relevant (Spears, 2020). Indeed, deviance in social interactions, transgression of group norms, and identification with shared interests are often seen as defining moral and immoral behavior (Moore & Gino, 2013). So what seems the moral thing to do may differ from one group or context to another (Ellemers & Van der Toorn, 2015). This explains why accumulated research reveals that people are highly motivated to do what is right and appear moral to themselves and others even though evidence of moral lapses and moral failures is widespread. In the moral domain, people are known to have difficulty reflecting on the true origins of their choices (including how these are influenced by others), which they tend to justify in retrospect (see Haidt, 2001). The more people are motivated to do what is right, the more vulnerable they are to having biased self-views in the moral domain, a phenomenon also referred to as the “paradox of morality” (Ellemers, 2017, p. 33). This limits the information value of self-report and behavioral measures, particularly when used to study human morality. Adding neuroscientific research methods can help to unravel when, why, or how justifications of immoral acts may come about.

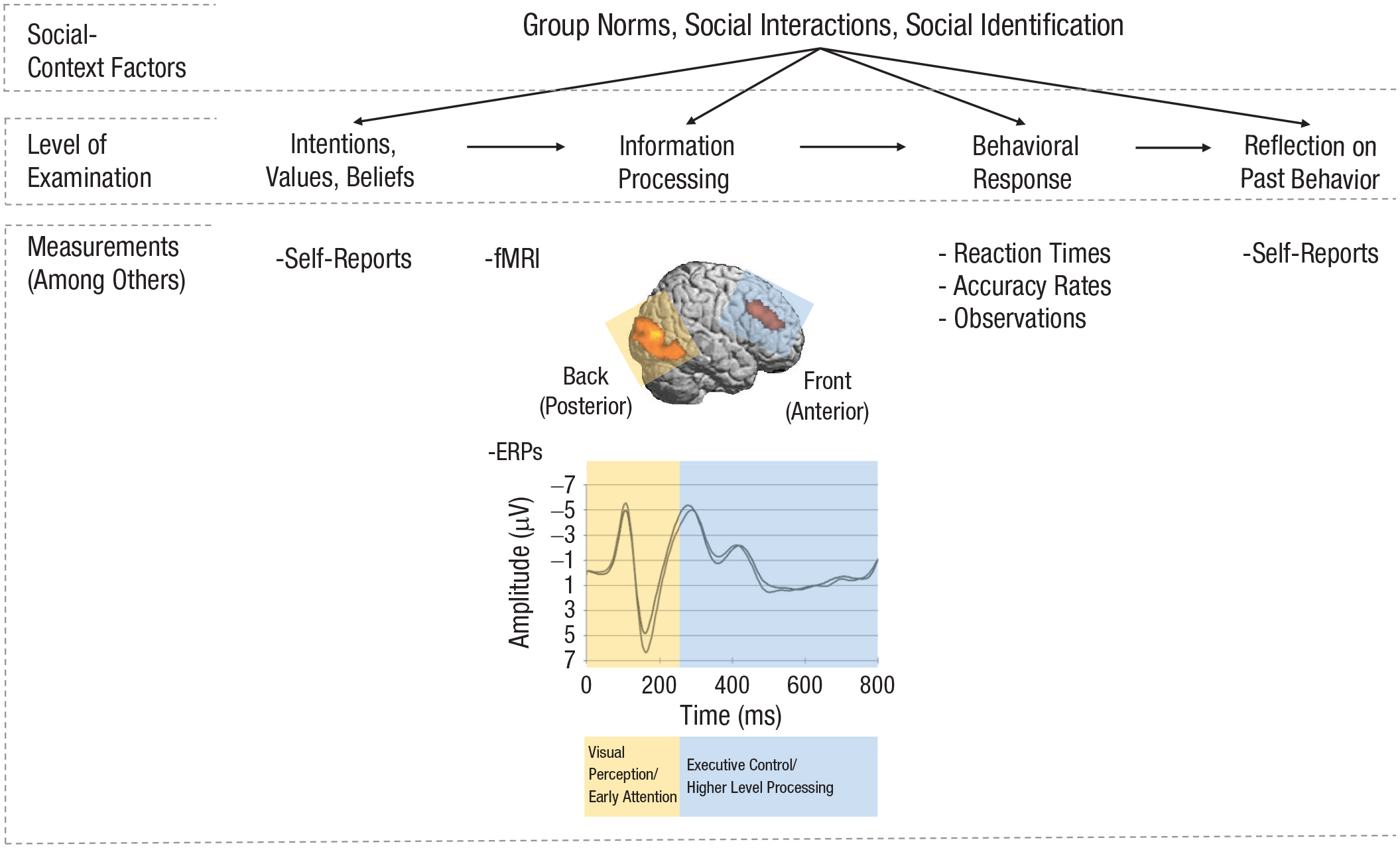

Prior reviews that covered the neuroscience of morality mostly revealed which brain networks are involved in abstract moral judgment and hypothetical decision-making (Greene, 2009; Lieberman, 2007; Moll, Zahn, de Oliveira-Souza, Krueger, & Grafman, 2005). Here, we focus on how neuroscientific research methods reveal the social origins of everyday moral and immoral behavior by examining conformity to group norms and the categorization of, and attention for, others—depending on one’s social identity. We include evidence from functional MRI (fMRI) techniques—revealing which brain regions and networks are involved when people perform a particular task—and event-related potentials (ERPs) derived from an electroencephalogram (EEG)—indicating how quickly the brain responds to particular events (e.g., the receipt of information or providing a response). The use of these methods sheds light on how the interpretation of social factors and contexts affects basic neural mechanisms such as visual perception and reward processing and how rapidly such effects take place (see also Fig. 1). Revealing the neural mechanisms that influence when and how people process and consider social information relevant to their moral choices at this fundamental level may thus help researchers to understand why and when different types of interventions aiming to regulate people’s moral behavior are likely to be successful or unsuccessful.

Fig. 1. Schematic illustration of the use of functional MRI (fMRI) and event-related potentials (ERPs), in addition to the self-reports and behavioral measures often used to examine the effects of social-context factors on behavior in general (and moral behavior in particular), to reveal the information-processing stage that research participants may be unable or unwilling to reflect on or report about. The fMRI and ERP measures can complement one another because of the high spatial resolution of fMRI, which enables researchers to localize brain activity when contrasting one experimental condition with another, and the high temporal resolution of ERPs, which reveals the rapid time course and differences in amplitudes of brain activity associated with experimental manipulations (e.g., viewing pictures of in-group vs. out-group members). The figure displays where (fMRI) and when (ERPs) early and later processes unfold (yellow and blue areas, respectively). However, it should be noted that these processes are not as clearly distinguishable as depicted in the figure, nor do they occur independently from one another. For instance, ERP modulations measured at posterior sites of the cortex can be caused by processes in the prefrontal cortex. The figure is meant to serve only as a guide to the comparison and compatibility of the additional use of fMRI and ERPs in the study of social origins of moral and immoral behavior. The fMRI image and ERP waveform are adapted from the authors’ own research findings (van Nunspeet, 2014; van Nunspeet, Ellemers, Derks, & Nieuwenhuis, 2014).

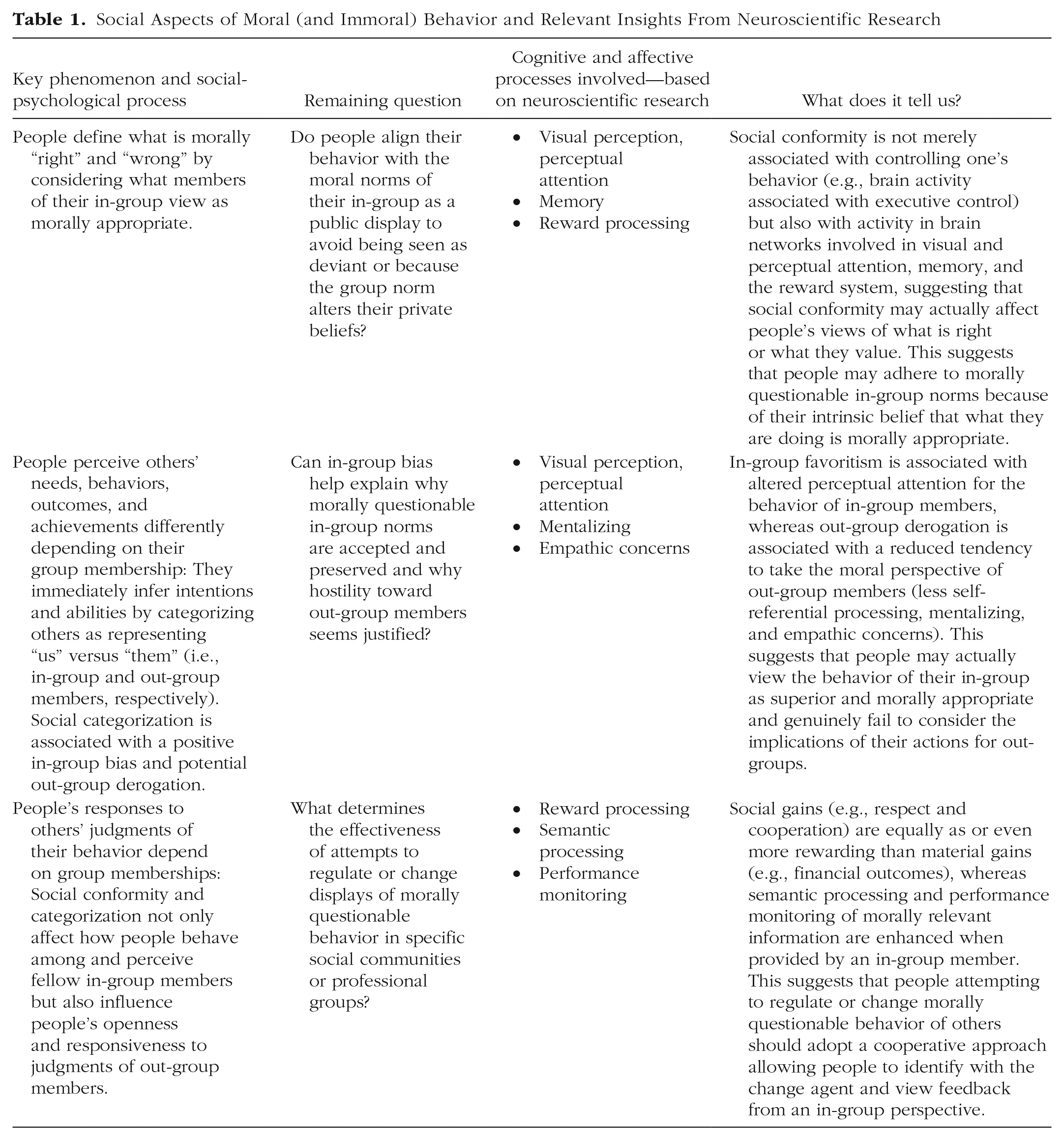

Below, we consider (a) how group norms guide the specific versions of general moral principles people develop, (b) how people limit the range of others they consider when evaluating the implications of their actions, and (c) why people may seem insensitive to moral feedback from others. We extend evidence from behavioral observations with neuroscientific evidence showing, for instance, that deviation from group norms enhances cognitive conflict and that social identification redirects people’s perception and attention in favor of people like them. We outline how this helps to answer remaining questions about the social origins of people’s moral and immoral behavior, which has practical implications for people with an interest in guiding moral behavior in everyday situations, including teachers, employers, compliance officers, or public authorities (see Table 1).

Table 1. Social Aspects of Moral (and Immoral) Behavior and Relevant Insights From Neuroscientific Research

People develop specific versions of general moral principles by conforming to the group

A shared understanding of morally “right” as opposed to morally “wrong” behaviors allows individuals to work together in organizations and live together in communities. Adherence to such guidelines secures social respect and inclusion; violation results in disrespect and social exclusion. In professional contexts or when people are confronted with specific dilemmas, general moral principles (“do no harm”) are not very helpful. This is why people tend to seek guidance from other group members (e.g., fellow professionals, supporters of the same political party, believers in the same religion) when deciding what is the right thing to do in a particular situation (Ellemers & Van der Toorn, 2015).

We know that the influence of others may lead people to behave in ways they would otherwise consider wrong. However, both fMRI and ERP research show that these effects may go beyond the adjustment of visible behaviors or expressed opinions to group norms. That is, information about what others in the group find appropriate may actually alter people’s personal beliefs or social perception (for an overview, see, e.g., Stallen & Sanfey, 2015). This was demonstrated, for instance, in one of the first fMRI studies on social conformity (Berns et al., 2005). People were found to conform to incorrect responses provided by others when performing a mental rotation task. The researchers examined whether this form of social conformity is related to brain activity associated with executive control or with the processing of the visual stimuli. During the task, research participants were exposed to external information consisting of incorrect responses from a socially relevant as opposed to a nonrelevant source (i.e., ostensible other participants or a computer). Despite identical response times, results revealed that, compared with individual responses, displays of social conformity were associated with decreased brain activity in the network needed for task performance. Additionally, the implications of being exposed to a socially relevant source were visible in brain activity that extended this network but was still located in the visual cortex. This activity was increased when participants conformed to incorrect responses ostensibly offered by other participants rather than a computer (Berns et al., 2005). Together, these fMRI results revealed that social conformity changes brain activity associated with visual perception rather than brain activity in networks associated with executive control. This suggests that people who conform to group norms not only adjust their explicit answers to those of others but also may actually perceive the task options differently.

EEG research on social conformity using a line-judgment task (inspired by the classic experiments of Asch) has also revealed that the greater the discrepancy between an individual’s and a group’s response (i.e., disagreement with two or more than two others in a group of five), the greater the ERP amplitudes associated with cognitive conflict and expectancy violation. Furthermore, the level of expectancy violation can predict a participant’s subsequent conformity (Chen, Wu, Tong, Guan, & Zhou, 2012). In other words, behavioral adjustments to social norms also depend on cognitive responses to disagreement within a group.

Knowledge of other people’s preferences may also change one’s own preferences and affect the things one values. This was revealed, for instance, in fMRI research showing that people who aligned their ratings of the attractiveness of faces to other people’s ratings showed increased brain activity in reward-related areas that are also active when anticipating and winning monetary prizes (Zaki, Schirmer, & Mitchell, 2011). Likewise, exposure to erroneous recollections of others in the group altered the neural representation of people’s individual accurate memories (Edelson, Sharot, Dolan, & Dudai, 2011). Importantly, however, people are not equally affected by any source of social influence. Instead, they specifically adjust their own responses to what is considered appropriate by in-group members but are less inclined to conform to out-group norms (e.g., Stallen, Smidts, & Sanfey, 2013).

Together, such research findings help illuminate how people can come to behave in ways that are considered morally wrong. The social rewards of conforming to in-group norms can cause people to actually perceive, value, and remember things differently. This explains how people can adjust their views of what is morally acceptable because of exposure to specific in-group norms while remaining convinced that they still adhere to the same general moral principles. Developers of interventions that aim to attenuate the social normalization of lying, cheating, or other forms of morally questionable behavior could benefit from this knowledge by testing ways to prevent social communities or organizations from developing practices that convey that morally questionable behaviors are normative for the group.

People limit the range of others they consider when evaluating the implications of their actions

The tendency to differentiate in-group from out-group members affects people’s moral and immoral behavior beyond the internalization of morally questionable group norms. That is, both fMRI and ERP research reveals that people can categorize the same individuals in different ways, depending on whether they consider them in-group members or out-group members. This, in turn, explains the emergence of different forms of in-group bias, including the tendency to favor in-group members over out-group members when evaluating individuals or allocating resources to them (e.g., Cikara & Van Bavel, 2014; Kawakami, Amodio, & Hugenberg, 2017).

People often tend to evaluate the performance of their own team members as superior even when the objective performance of different teams is the same. Additionally, fMRI data reveal that such a social bias can be related to patterns of brain activity relevant for perception-action coupling. In a study by Molenberghs, Halász, Mattingley, Vanman, and Cunnington (2013), only participants who displayed a positivity bias toward their own group on a behavioral level (i.e., by overestimating their fellow team members’ fast responding on a task) showed enhanced brain activity in an action-perception network when observing their own as opposed to the other team performing the task. This clarifies that explicit judgments can reflect altered perceptual attention.

Besides this general tendency to overestimate the abilities and achievements of the in-group, people are inclined to consider their in-group as more moral than other groups (Ellemers, Pagliaro, & Barreto, 2013). This, in turn, may cause morally questionable behavior of in-group members to remain unnoticed. For instance, neuroscientific evidence shows that people are less likely to process behavioral information about the moral character of others they trust. That is, once they had formed a positive impression of the trustworthiness of their interaction partner, people showed less brain activity associated with feedback processing that would allow them to learn about their partner’s moral behavior on a trial-and-error basis (Delgado, Frank, & Phelps, 2005). This suggests that once people have decided to trust someone to do the right thing, for instance because they belong to the same group, they are less inclined to reflect on the moral character of the behavior actually displayed.

The tendency to evaluate efforts, needs, and achievements of in-group members more positively than those of out-group members can also explain why individuals who show empathy and altruism for members of their own group may derogate or neglect others only because they view them as out-group members. Neuroscientific research has shown a reduced tendency to infer the mental states of other people who fall outside one’s circle of moral regard. That is, less activity is displayed in brain networks used to consider the mind-set of such out-group members (Harris & Fiske, 2009). Failing to take into account the thoughts and feelings of other people makes them seem lesser humans who are less worthy of moral consideration, empathy, or help and invites immoral behavior toward them. These tendencies can be intensified when members of different groups are seen to compete with each other, for instance to obtain jobs, housing, or other scarce resources. In fact, fMRI research has shown that when people perform in a group competing against another group, the salience of personal moral values is reduced (Cikara, Jenkins, Dufour, & Saxe, 2014). That is, the competitive context was found to diminish the self-referential processing of moral information, which increased the potential harm directed toward the out-group.

These neuroscientific findings indicate the deeply rooted and far-reaching implications of in-group versus out-group perceptions and judgments. People may be less critical of the moral choices of fellow group members because they fail to attend to the behavior displayed. Alternatively, they may actually view behavior that harms other people as morally appropriate because of the reduced tendency to mentalize and experience empathic concerns for out-group members. These common in-group versus out-group tendencies can contribute to the emergence and persistence of morally questionable behavior that favors in-group over out-group members.

People resist moral feedback from out-group members

The research reviewed so far demonstrates how social conformity, in-group versus out-group categorization, and in-group bias affect people’s convictions about morally appropriate as opposed to inappropriate behaviors. The tendency to trust and favor in-group members over out-group members and view the in-group as morally superior reduces concern for the thoughts and feelings of out-group members and invites suspicion of their motives. This is important information for people who attempt to influence and regulate others’ behavior by appealing to general principles of morally acceptable behaviors. If legislators, managers, compliance monitors, or regulators are seen as out-group members, these appeals are unlikely to be effective. Attempts to address and influence people’s behavior on moral grounds can even backfire when these raise defensive responses that prevent people from considering the moral appropriateness of their own behavior.

Neuroscientific studies have often compared the brain activity associated with the reward value of material as opposed to social gains. This work has consistently shown that social acceptance, reciprocity, and trust elicit patterns of activity in the neural reward system similar to those elicited by the provision of material gains (e.g., monetary incentives; for reviews, see, e.g., Bhanji & Delgado, 2014; Sanfey, 2007). However, it also matters who the “providers” of such social gains are: People seek moral approval of their decisions and behaviors from other members of their group (Ellemers et al., 2013), and they are suspicious of the motives that out-group members have in monitoring or evaluating their moral choices, which makes them less sensitive to these judgments (Hornsey & Imani, 2004).

The group membership of evaluators also affects neurocognitive indicators of motivation and cognitive engagement. For instance, in an fMRI study of leadership effectiveness, participants displayed increased brain activity indicating attention to the meaning of inspirational “we” statements (i.e., semantic processing) only when these were made by an in-group leader (Molenberghs, Prochilo, Steffens, Zacher, & Haslam, 2017). Furthermore, ERP research revealed that people are generally inclined to monitor their task performance when they think this indicates their morality (as shown in enhanced ERPs associated with error monitoring when the implications of a task were framed in terms of one’s morality vs. competence; van Nunspeet, Ellemers, Derks, & Nieuwenhuis, 2014). However, brain activity indicating enhanced monitoring of moral-task performance mainly emerges when people receive feedback from in-group members rather than out-group members (van Nunspeet, Derks, Ellemers, & Nieuwenhuis, 2015).

Together, these studies explain why communication of moral ideals and provision of moral feedback are likely to be less effective, or may even backfire, when the messengers are perceived as representing another group. This conclusion also has clear practical implications. Supervisors, legislators, or regulators who aim to influence the moral behavior of specific social communities or professional groups would do well to consider ways in which they can convey a sense of common identity and raise trust in their intentions.

Conclusion

In this review, we focused on the social origins of moral behavior to reveal the mechanisms that explain how people can come to endorse and persist in morally questionable behavior and may resist attempts to offer moral guidance. We have offered evidence of the basic neurocognitive mechanisms raised by social concerns and in-group versus out-group differences to illustrate why common efforts to regulate moral behavior may not be effective. The available evidence clarifies that people do not always deliberately consider the moral costs of their actions. Instead, social norms, in-group versus out-group categorizations, and social identification can cause biases that induce people to process information differently—at a very fundamental (neural) level. Future neuroscientific research could more specifically address the effects of social categorization and conformity on morally questionable behavior. Doing so and taking the insights from such social neuroscientific research into account may help us design more effective ways to guide and influence people’s moral behavior in everyday life.

Recommended Reading

Amodio, D. M., Bartholow, B. D., & Ito, T. A. (2014). Tracking the dynamics of the social brain: ERP approaches for social cognitive and affective neuroscience.

Ellemers, N. (2017). (See References). A complete and highly accessible overview of what is known about morality in groups.

Ellemers, N., van der Toorn, J., Paunov, Y., & van Leeuwen, T. (2019). (See References). A recent review of empirical studies addressing moral decision-making, judgments, emotions, behavior, and self-views on both the intra- and interpersonal as well as the intra- and intergroup levels.

Eres, R., Louis, W. R., & Molenberghs, P. (2018). Common and distinct neural networks involved in fMRI studies investigating morality: An ALE meta-analysis.

Robertson, D. C., Voegtlin, C., & Maak, T. (2017). Business ethics: The promise of neuroscience.