Abstract

The measurement of individual differences in cognitive ability has a long and important history in psychology, but it has been impeded by the proprietary nature of most assessment measures. With the development of validated open-source measures of ability (collected in the International Cognitive Ability Resource, or ICAR, available at ICAR-project.com), it is now possible for many researchers to assess ability in large surveys or small, lab-based studies without the expenses associated with proprietary measures. We review the history of ability measurement and discuss how the growing set of items included in ICAR allows ability assessments to be more generally available to all researchers.

Ever since antiquity, people have used measures of cognitive ability for selection and prediction. The story is told in the Hebrew Bible (Judges 7) of Gideon, who rejected potential soldiers for showing fear and not having battle wisdom. Plato, in

Although intelligence tests were initially designed to study “inferior states of intelligence” in children (Binet & Simon, 1916, p. 9), early test administrators began assessing “normal” children in terms of their mental age using test items ordered by average performance as a function of chronological age. This practice emerged from efforts to ensure that students received a level of education that was appropriate for their intellectual development (Binet, 1908; reprinted in Binet & Simon, 1916). The introduction of the “intelligence quotient” led to an explosion of research examining its validity. Terman (1916), for example, demonstrated that children who scored at levels typical of older children were also rated by teachers as more intelligent. A test that had been developed to assess low levels of ability thus became one that could assess the entire range of cognitive ability.

Early research on intelligence also contributed to advances in measurement and theory. While still a graduate student, Charles Spearman (1904) published a fundamentally important article establishing the tradition of measuring general intelligence (

There were several prominent applications of early intelligence research. For example, the notions of item difficulty and deviations from mean performance led to the creation of an index of competence used in the Army Alpha exam for placing U.S. Army recruits in World War I (Yoakum & Yerkes, 1920). In 1932, every 11-year-old school child in Scotland was assessed, laying the foundation for a remarkable follow-up study 69 years later showing the stability of ability measures (

Theories of Intelligence

Ever since Spearman’s (1904) work, it has been routinely noticed that all cognitive measures form a

However, it has been recognized for more than 100 years (e.g., Thomson, 1916) that the existence of such a positive manifold is a descriptive finding and should not be taken as having any necessary causal meaning, as there are several ways that such a positive manifold might be produced (Bartholomew, Deary, & Lawn, 2009; Kovacs & Conway, 2019). Sampling independent “bonds” (Bartholomew et al., 2009), dynamic mutualism (Van Der Maas et al., 2006), and overlapping processes (Kovacs & Conway, 2019) all result in the same set of positive correlations without a causal general factor. This can be seen via simulation of a genetic-factor model of independent genes with pleiotropic effects (simulated as cross loadings) that yields a positive manifold and a

By analogy, an equivalent positive manifold may be found in measures of body size. Whether measured by weight, height, chest circumference, or hundreds of more precise measures, adult humans differ in a general factor of size (e.g., see the U.S. Air Force, or USAF, data set in

Developmentally, cognitive ability can be thought of as a propensity to acquire new information and new reasoning skills. It is analogous to differences in stickiness as snowballs roll downhill. Just as sticky snowballs become larger than those that are less sticky, so do high-ability individuals acquire more information than low-ability individuals as they experience life.
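The point that a positive manifold need not imply a causal general factor can be illustrated with a small simulation in the spirit of the sampling ("bonds") account cited above. This is a minimal sketch under stated assumptions, not the simulation reported in this article: the function names, the number of bonds and tests, and the sampling probability are all hypothetical choices made for illustration.

```python
import random
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def simulate_bonds(n_people=1000, n_bonds=100, n_tests=6, p_sample=0.5, seed=1):
    """Return the test-by-test correlation matrix for a bonds-style model.

    Every person has n_bonds mutually independent standard-normal "bonds".
    Each test score is the sum of a random subset of those bonds, so no
    general factor is built into the model (all values here are
    hypothetical illustration parameters).
    """
    rng = random.Random(seed)
    # Each test draws on its own randomly chosen subset of bonds.
    test_bonds = [
        [b for b in range(n_bonds) if rng.random() < p_sample]
        for _ in range(n_tests)
    ]
    scores = [[] for _ in range(n_tests)]
    for _ in range(n_people):
        bonds = [rng.gauss(0.0, 1.0) for _ in range(n_bonds)]
        for t, subset in enumerate(test_bonds):
            scores[t].append(sum(bonds[b] for b in subset))
    return [
        [pearson(scores[i], scores[j]) for j in range(n_tests)]
        for i in range(n_tests)
    ]


corr = simulate_bonds()
off_diagonal = [corr[i][j] for i in range(6) for j in range(i + 1, 6)]
print(f"smallest correlation: {min(off_diagonal):.2f}")
print(f"largest correlation:  {max(off_diagonal):.2f}")
```

Because any two tests share some bonds by chance, every pair of test scores correlates positively even though the underlying bonds are independent of one another.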

Classic Longitudinal Studies

The question of causality does not diminish the usefulness of the general factor as a predictor of real-world outcomes. Terman and Oden (1959) reported on the lifetime accomplishments of 1,528 “termites”; these were very bright 3rd- to 8th-grade Californians with Stanford-Binet scores mainly above 140 (roughly, the top 1% of the student population). The participants were psychologically healthy and showed impressive levels of accomplishment over their lifetimes (see Lubinski, 2016), contrary to the prevalent hypothesis when the study began that high ability was related to psychological fragility. In a more recent longitudinal study based on the representative sample of 440,000 U.S. high school students in Project Talent, 50-year follow-ups of 1,952 9th to 12th graders demonstrated the predictive validity of cognitive performance tests. Ability measures taken 50 years earlier correlated at .50, .35, and .35, respectively, with (subsequent) educational attainment levels, occupational level, and estimated income (Spengler, Damian, & Roberts, 2018), and the effects remained robust even when analyses controlled for parental social status (partial correlations were .40, .29, and .28).
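For readers unfamiliar with partial correlations, the sketch below shows the standard first-order formula for removing one covariate from a correlation. It is an illustration only: the value .50 is the ability-education correlation reported above, but the two correlations involving parental social status are hypothetical placeholders (they are not given in this article), so the result is not expected to reproduce the reported partial correlations, which may also reflect more than one control variable.

```python
from math import sqrt


def partial_correlation(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y, controlling for z."""
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


r_ability_education = 0.50  # reported in the text
r_ability_status = 0.30     # hypothetical value, for illustration only
r_education_status = 0.35   # hypothetical value, for illustration only

adjusted = partial_correlation(
    r_ability_education, r_ability_status, r_education_status
)
print(f"partial correlation: {adjusted:.2f}")
```

With these placeholder inputs the adjusted correlation is somewhat smaller than the zero-order value, which is the same qualitative pattern as the drop from .50 to .40 described above.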

The often-stated claim that differences in ability do not make much difference for the outcomes of the top 1% to 2% in ability is contradicted by differences in the achievement of participants in another 50-year longitudinal study of mathematically precocious youth (Lubinski & Benbow, 2006). Even among students identified by their SAT scores at age 14 to be among the top 1%, those students in the top 0.01% had even more accomplishments in the next 35 to 50 years than did those who were “merely” exceptional. Lubinski reminds us that there are 6 standard deviations of ability above the mean level and that one third of the total range is observed within the top 1% (Lubinski, 2016; Lubinski & Benbow, 2006).
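To give a sense of how much room there is within the top 1%, the following sketch computes where the top 1% and the top 0.01% begin, in standard-deviation units. It assumes, purely for illustration, that ability scores follow a normal distribution; the helper name `cutoff_in_sd` is hypothetical.

```python
from statistics import NormalDist

# Assumption for illustration: ability scores are normally distributed.
standard_normal = NormalDist(mu=0.0, sigma=1.0)


def cutoff_in_sd(top_fraction):
    """Standard deviations above the mean at which the top fraction begins."""
    return standard_normal.inv_cdf(1.0 - top_fraction)


top_1_percent = cutoff_in_sd(0.01)
top_001_percent = cutoff_in_sd(0.0001)
print(f"top 1% begins at    {top_1_percent:.2f} SD above the mean")
print(f"top 0.01% begins at {top_001_percent:.2f} SD above the mean")
```

Under this assumption, the thresholds for the top 1% and the top 0.01% are separated by more than a full standard deviation, so the "merely" exceptional and the most exceptional students differ substantially in measured ability.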

Genetics of Cognitive Ability

Classic behavioral-genetics work comparing the similarities of identical twins with fraternal twins, as well as the lack of similarity of adopted siblings, shows that roughly 70% to 80% of the variance in ability as measured by conventional intelligence tests (among those siblings with a middle-class background) is under genetic influence (Bouchard, 2014). These findings show systematic increases with age. Sibling pairs, whether adopted, dizygotic, or monozygotic twins, are all very similar when 5 to 7 years old, but the adopted siblings become less similar, whereas the monozygotic twins become more similar as they age (Bouchard, 2014). Much lower estimates of heritability come from genome-wide-association studies, which examine common polymorphisms. Analyses of more than 1 million participants in the UK Biobank have shown that years of education (a proxy for cognitive ability and motivation) may be associated with 1,271 independent single-nucleotide polymorphisms (Lee et al., 2018). These findings imply that ability and subsequent outcomes are substantially heritable, but this does not mean that environmental influences are unimportant. They also underscore the fact that heritability is a hodgepodge ratio of genetic variance to total variance (genetic plus environmental) for a particular sample, leaving many unanswered questions about the extent to which changes in the environment can affect phenotypic scores. Psychological and physical differences can be highly heritable but also highly malleable by the environment (e.g., height). Furthermore, in the United States, heritability-of-ability estimates vary as a function of social class (Giangrande et al., 2019), but this effect is not observed in Europe or Australia, which may be taken as a sign of greater socioeconomic inequality in the United States (Tucker-Drob & Bates, 2016).
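Because heritability is a ratio of genetic variance to total variance, its value depends on how much environmental variance happens to be present in the sample at hand. The sketch below makes this concrete; the variance values are hypothetical and chosen only so that the arithmetic is easy to follow.

```python
def heritability(genetic_variance, environmental_variance):
    """Proportion of total (genetic plus environmental) variance that is genetic."""
    total_variance = genetic_variance + environmental_variance
    if total_variance <= 0:
        raise ValueError("total variance must be positive")
    return genetic_variance / total_variance


# Same genetic variance in both samples (hypothetical values);
# only the amount of environmental variance differs.
similar_environments = heritability(1.0, 0.25)
varied_environments = heritability(1.0, 1.0)
print(f"similar environments: {similar_environments:.2f}")
print(f"varied environments:  {varied_environments:.2f}")
```

The genetic variance is identical in the two cases, yet the ratio differs, which is why a heritability estimate describes a particular sample rather than a fixed property of the trait.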

Cognitive Ability and Cognitive Processes

Although research on

Measurement: The Development of the International Cognitive Ability Resource (ICAR)

Although it is clearly important, the study of individual differences in cognitive ability has been limited by several constraints, including the related issues of cost, sample size, and scalability. The high costs of ability testing stem from the field’s reliance mainly on proprietary licensed measures. The expense of licensing tends to severely constrain researchers’ budgets, leading to the collection of smaller sample sizes than might otherwise be possible. Even the Educational Testing Service “French Kit” (Ekstrom, French, Harman, & Derman, 1976) is $0.15 per copy for graduate students and is not suitable for Web-based administration. Moreover, the most widely used (“high stakes”) measures tend to require one-on-one or proctored, small-group administration. These problems are compounded by the tradition of relying on undergraduate samples, as this often leads to restriction of range and concerns about generalizability.

To alleviate these problems, we developed and validated an open-source ability test that is well suited for administration on the Web (the ICAR; Condon & Revelle, 2014; see Fig. 1). Although the original instrument had just 60 items spanning four constructs, with the help of an international consortium, we have expanded the total item pool to more than 1,000 items and 19 lower-level constructs. Additional measures are currently under development for an increasingly broad range of constructs. For the sake of cross validation against other ICAR measures, subsets of each type are administered to large online samples using a

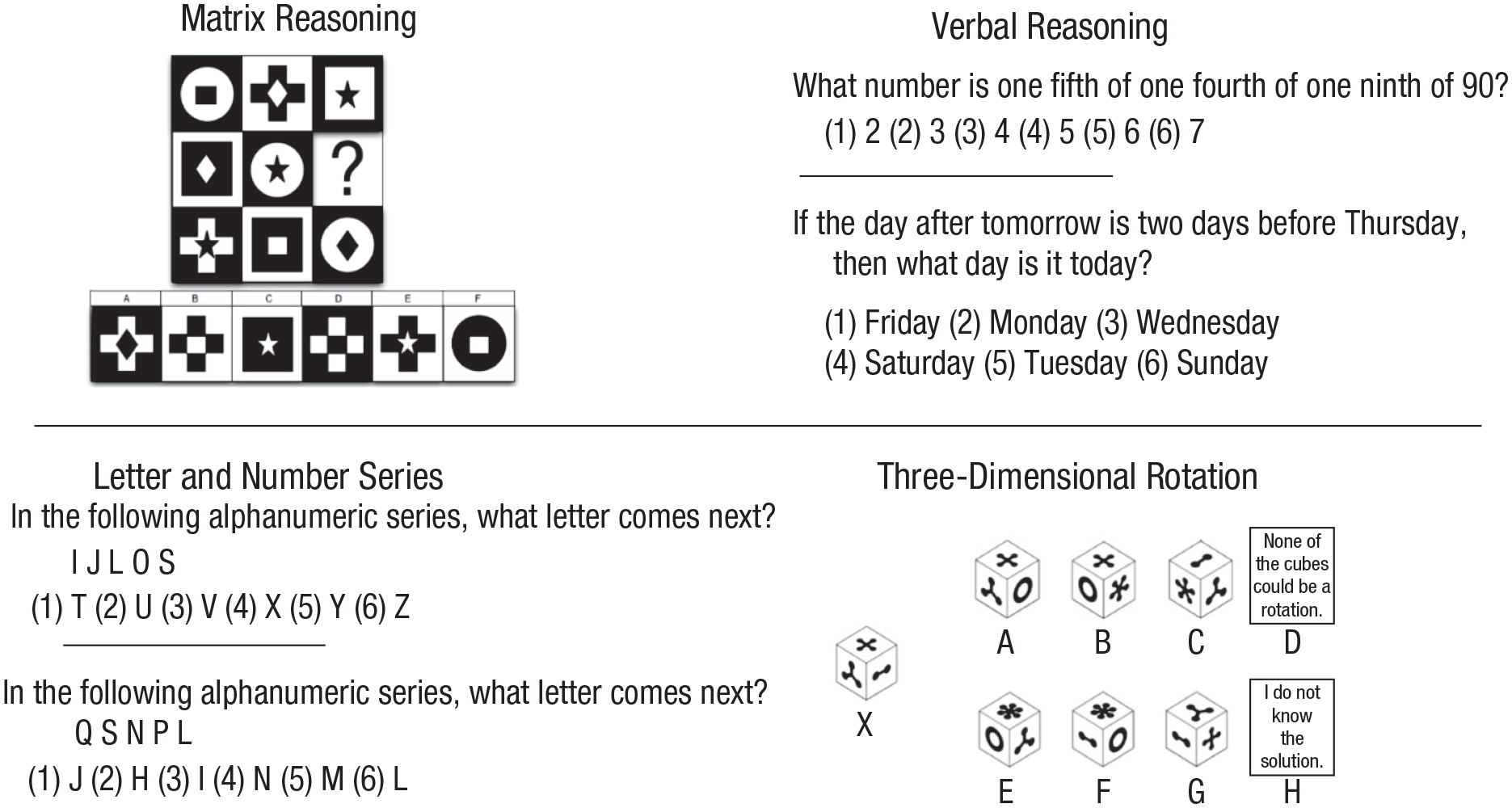

Fig. 1. The original 60-item International Cognitive Ability Resource (ICAR). The original ICAR was composed of four item types (examples of which are shown here) and had a clear hierarchical factor structure. See Condon and Revelle (2014) for more example items, and join the ICAR project at ICAR-Project.com for access to all of the items.

Applications of ICAR

Although one reviewer suggested that to compare the ICAR with the Stanford-Binet is analogous to comparing a cheap rip-off to a Versace handbag, we view the utility of ICAR in terms of the wide range of applications in just the past few years. ICAR measures of cognitive ability have already been used in many studies and publications, with various real-world criteria and different item types (e.g., the 79 studies reviewed by Dworak, Revelle, Doebler, & Condon, 2020). Such projects include an online survey that utilized 35 verbal-reasoning and three-dimensional-rotation items to provide participant feedback and evaluate individual differences in a nationwide sample (Van Der Krieke et al., 2016). Other studies assessed how 46 verbal-reasoning and matrix-reasoning items related to genetic scores of educational attainment and showed that large-scale genetic studies can rely on online collection of cognitive-ability measures (Liu et al., 2020). ICAR items have also been utilized with experience-sampling methods to test the relationship between cognitive ability and creativity; in that work, cognitive ability was found to moderate the relationship between everyday positive affect and everyday creativity (Karwowski, Lebuda, Szumski, & Firkowska-Mankiewicz, 2017). Using 16 items, one cross-sectional study found that higher cognitive ability was related to greater aptitude in discriminating between “pseudo-profound bullshit” and profound statements (Bainbridge, Quinlan, Mar, & Smillie, 2019). Research has used as few as 4 items to find that cognitive ability relates negatively to the political ideologies of right-wing authoritarianism, social-dominance orientation, and attitudes toward President Trump (Choma & Hanoch, 2017).

Future Directions

We have received requests for the use of ICAR items with younger subjects (under age 14) and as potential measures of cognitive decline in the elderly. The factor structure of the original 60 items of the ICAR was based on the responses of 96,958 participants with a median age of 22 but who ranged in age from 14 to 90 years. A subsequent validation against self-reported SAT and ACT scores was completed for those 34,229 participants between 18 and 22 years of age. Thus, there is a need to further validate the items with younger and older participants. Although some researchers have used as few as four items in their studies, and many have used just the 16 items from the sample test, we encourage users to go beyond these 16, and even the 60 described by Condon and Revelle (2014), and use items sampled from the larger (> 1,000) pool of items that are available at the ICAR project website.

Recommended Reading

Deary, I. J. (2000).

Deary, I. J. (2001).

Haier, R. J. (2016).

Lubinski, D. (2016). (See References). A very thoughtful review of intellectual precocity featuring the Terman and Stanley, Benbow, and Lubinski longitudinal studies.

Mackintosh, N. J. (2011).