Abstract

Admitting that one’s research findings are wrong involves admitting a potential instance of incompetence, which can keep scientists from engaging in wrongness admission. However, wrongness admission can yield favorable perceptions. In five experiments (N = 2420), we tested whether wrongness admission yields higher perceived trustworthiness in the scientist and trust in science and discipline-specific research as well as public funding support for the scientist, science, and discipline-specific research. Scientists engaging in wrongness admission (vs refusing or not commenting) were perceived as more trustworthy and received more support for federal funding for their own research. Moreover, wrongness admission yielded similar levels of science and discipline-specific public funding support. Wrongness admission not only facilitated higher scientist trustworthiness, but trustworthiness was, in turn, associated with greater trust in science and psychology, as well as scientist and psychology public funding support. This work highlights potential benefits of scientist wrongness admission amidst failed replications.

Intellectual humility is largely recognized as a virtue (Chancellor and Lyubomirsky, 2013; Krumrei-Mancuso, 2017). People have recently called for more intellectual humility among scientists in response to psychology’s replication crisis (Hoekstra and Vazire, 2021). Indeed, recognizing and acknowledging when one’s prior work is wrong should help science move forward. Yet, expressing intellectual humility by engaging in wrongness admission, that is, publicly stating that one has been wrong, carries reputational risk because it could be perceived as an admission of incompetence regarding the issue in question. People tend to manage their impressions by focusing on agentic traits, like competence (Abele and Wojciszke, 2007, 2014), which might be particularly true for scientists whose competence is tied to their professional identity (Carlone and Johnson, 2007). Therefore, scientists may fear that their professional reputation depends on how competent others perceive them to be, as reflected in their work (Fetterman and Sassenberg, 2015). However, prior work shows that scientists who engage in wrongness admission after a failed replication are rated as better scientists by their peers than those who refuse (Ebersole et al., 2016; Fetterman and Sassenberg, 2015). Nevertheless, it could be that the public sees wrongness-admitting scientists as less competent and that such admissions decrease trust in science. Based on prior work, however, we hypothesized that the opposite is true and tested this idea in the current studies.

Intellectual humility and wrongness admission

Intellectual humility is often conceptualized as acknowledging the shortcomings and limitations of one’s knowledge and recognizing one’s intellectual biases (Porter et al., 2022). People likely resist intellectual humility due to the well-established clash between impression formation processes and impression management motivations. When forming impressions of others, people tend to focus on communal traits (friendliness, trustworthiness, etc.), whereas they are motivated to display agentic traits (mainly competence) when managing their impressions (Abele and Wojciszke, 2007, 2014). Indeed, interpersonal factors can hinder people’s willingness to engage in intellectual humility, including the desire to maintain social status (Porter et al., 2022). Moreover, an abundance of evidence has linked perceptions of competence to social status (Brambilla et al., 2010; Fiske et al., 2002, 2007), so managing impressions of our competence may be intimately tied with the motive to refrain from engaging in intellectually humble behaviors.

According to Porter’s (2025) conceptual framework, intellectual humility can be experienced both internally (encompassing one’s own thoughts) and externally (overtly expressing one’s intellectual humility), from both a self-oriented (acknowledging one’s own intellectual limitations) and other-oriented (acknowledging others’ intellect) perspective. One self-oriented external manifestation of intellectual humility is wrongness admission (Fetterman et al., 2022). During wrongness admission, an individual publicly acknowledges that they were wrong about a factual argument or previously held belief. In doing so, they demonstrate that they are aware of their limitations regarding their knowledge of the topic or argument in question. While people are often hesitant to admit wrongness, likely for fear of negative perceptions of their competence, doing so actually facilitates more positive reputational consequences. For example, people who publicly engage in wrongness admission on social media are perceived as more communal and even more competent than those who refuse to do so (Fetterman et al., 2022). Moreover, regardless of their political ideologies, people tend to rate politicians who engage in wrongness admission as more communal and competent than those who refuse to admit (Henderson et al., 2025). Likewise, entrepreneurs who engage in wrongness admission during a pitch are also seen as more hirable and competent (John et al., 2019).

Overall, wrongness admission appears to have rather beneficial (vs damaging) reputational consequences. In fact, because people focus on communal traits when forming impressions (Abele and Wojciszke, 2007, 2014), wrongness admission appears to extend these benefits. Yet, wrongness admission, by definition, also signals learning, which should reflect competence as well. Ultimately, wrongness admission is good for one’s personal and professional reputation. However, it is unclear whether these effects translate to public perceptions of people who are assumed to be highly competent and whom the public trusts to get things right, such as scientists.

Wrongness admission and trust in scientists and scientific research

The utility of intellectual humility and wrongness admission in fostering favorable professional reputations might also extend to scientific research. This is especially crucial when the motives of the scientists are called into question, such as through their handling of data (Pickett and Roche, 2018) or simply the research questions they address (Benson-Greenwald et al., 2023). In these cases, people are less inclined to support public funding for these efforts. Public opinion, in turn, influences policymaking (Burnstein, 2003), which in this case would impact the allocation of research funds.

In recent years, psychological science has undergone scrutiny and distrust from the public in the wake of failed replications of some of its foundational early findings (Camerer et al., 2018; Maxwell et al., 2015; Open Science Collaboration, 2015). This “replication crisis” is certainly one contributing factor to a decrease in confidence in scientific findings from both the public and scientists themselves (Rutjens et al., 2018). Failed replications reduce the public’s trust in psychology (Wingen et al., 2020), while successful replications enhance scientist trustworthiness (Hendriks et al., 2020). Yet, psychological scientists may be able to restore some of this deteriorating public trust through their self-corrective actions.

There will always be a degree of uncertainty in the findings from any scientific research endeavor (Popper, 1959). Therefore, it has become crucial to understand how to foster trust in psychological science beyond successfully replicating research findings. One approach is improving educational practices to enhance the public’s understanding of the scientific method and research endeavors (Covitt and Anderson, 2022; Fensham, 2012; Zeyer, 2021). This educational approach can extend to informing the public of current practices that scientists are employing to improve confidence in their own findings. For example, explaining the efforts psychological scientists are making to ensure the public’s confidence and trust in their findings—such as adopting open science practices like making all materials, analysis code, and data publicly available—is linked to greater trust in science (Rosman et al., 2022). Moreover, understanding the potential reforms to address issues around failed replications of earlier findings (vs simply understanding the replication crisis and its causes) enhances trust in scientists and ongoing research in psychology (Methner et al., 2023).

The findings demonstrating the positive impact of understanding efforts that science as an institution is making to foster self-correction in the research process can also extend to individual scientists’ intellectual humility about their research findings. In other words, scientist wrongness admission should enhance perceived trustworthiness of the scientist. People who are more intellectually humble are receptive to alternative perspectives (Porter and Schumann, 2018), and consequently, tend to be more prosocial (Krumrei-Mancuso, 2017). Therefore, expressing intellectual humility through admitting wrongness should signal to others that they are concerned about the welfare of others and can be trusted. Indeed, empirical evidence supports this possibility. Wrongness admission contributes to enhanced perceptions of communion (Fetterman et al., 2022), which subsumes trustworthiness (Abele et al., 2016). When specifically considering perceptions of scientists, recent research further supports these posited associations: intellectually humble scientists are seen as more trustworthy (Koetke et al., 2024).

Prior work has also investigated how the public perceives scientists who perform self-corrective actions in response to criticisms of their work. For example, scientists who willingly correct prior misinterpretations of their research findings and highlight key limitations are perceived as more generally trustworthy in the eyes of the public (Hendriks et al., 2016). Building on these findings, Altenmüller et al. (2021) found that the public perceives scientists who are self-corrective about errors in their research as more trustworthy and credible. While these findings do not directly address what happens when a scientist gets something wrong and admits it, Fetterman and Sassenberg (2015) found that fellow scientists consider scientists who engage in wrongness admission as better scientists. However, the benefits of a scientist’s wrongness admission, as a specific intellectually humble self-corrective action, have yet to be applied to perceptions from the public. Therefore, it is crucial to test the extent to which the perceptions of trustworthiness of a wrongness-admitting scientist extend to the public in a similar way as the previously demonstrated self-corrective actions.

The potential reputational benefits of wrongness admission for scientists should also extend to trust in the scientist’s field of expertise and science in general. In other words, we expect that the enhanced trustworthiness of a wrongness-admitting scientist will, in turn, be associated with more favorable attitudes toward science. When people hold both positive and negative perceptions of outgroup members, they tend to generalize these attitudes to the groups themselves (Stark et al., 2013). This seems to also be the case for lay people’s perceptions of scientists. Not only are intellectually humble scientists seen as more trustworthy, but the perceived intellectual humility of the scientist is associated with people’s overall trust in science (Koetke et al., 2024). Moreover, perceived trustworthiness and morality of the scientist are directly linked to trust in science (Wintterlin et al., 2022) and support for the scientist to receive funding (Pickett and Roche, 2018). Therefore, we posit that perceived trustworthiness mediates the link between a scientist’s intellectual humility (signaled by their wrongness admission) and the public’s trust in and support for funding for scientific research.

Current investigation

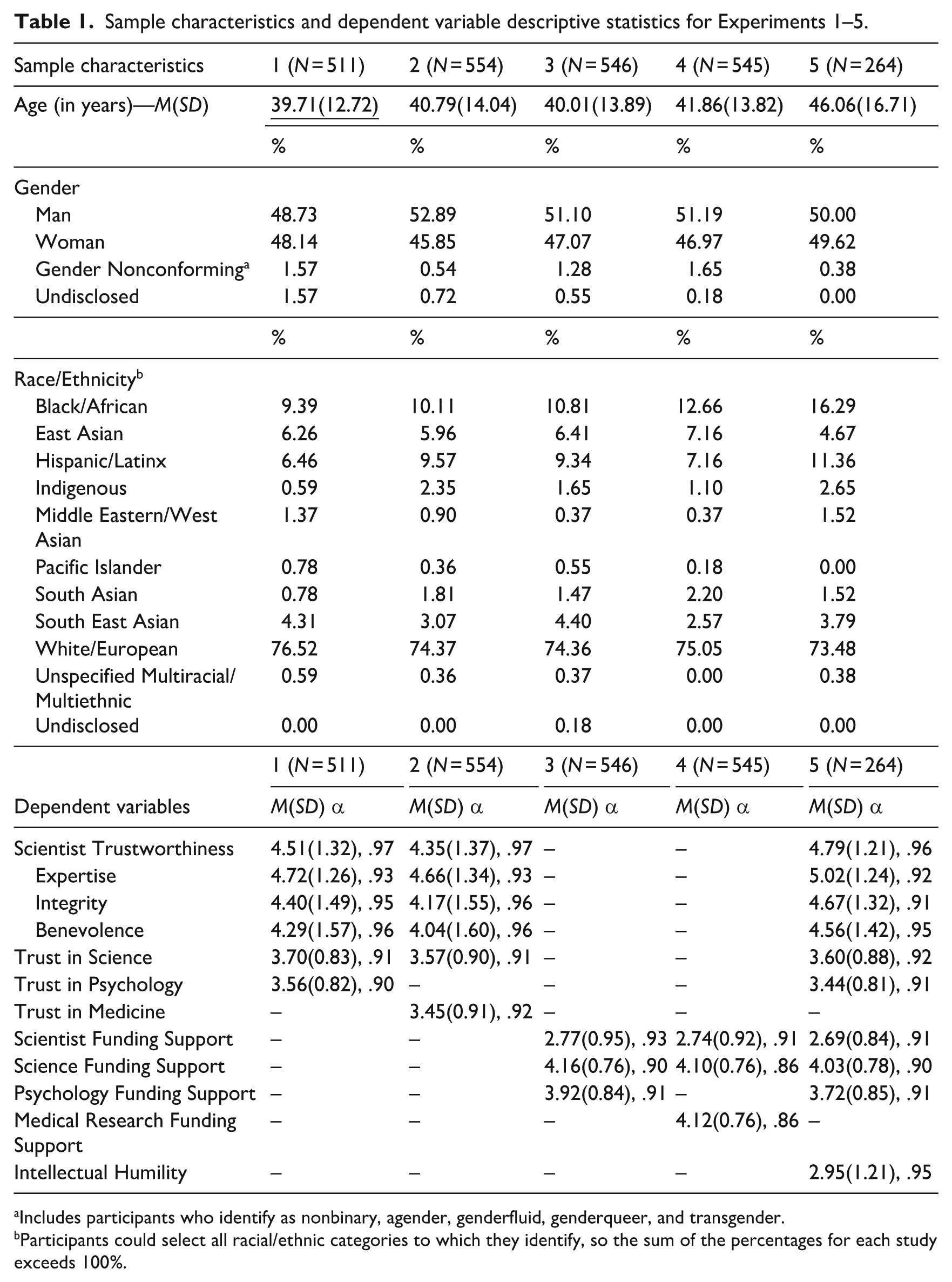

We sought to investigate the role that wrongness admission, as a behavioral manifestation of intellectual humility, plays in influencing perceptions of scientists’ trustworthiness from the public as well as the public’s support for federal funding for research initiatives. In five preregistered experiments, we tested whether scientists and scientific research are perceived more favorably when scientists engage in wrongness admission after a failed replication. We tested the effect of scientists’ wrongness admission on perceived trustworthiness in the scientist and trust in scientific, psychological, and medical research (Experiments 1, 2, and 5) as well as funding support for the scientist and scientific, psychological, and medical research (Experiments 3–5). Finally, we tested the extent to which perceived scientist trustworthiness mediates the effects of wrongness admission on trust in and funding support for scientific research (Experiment 5). In addition, in Experiment 5, we validated wrongness admission as an external expression of one’s intellectual humility. The preregistrations, data, and materials are available on the Open Science Framework (https://osf.io/mxrnb/). We report sample demographic characteristics and dependent variable descriptive statistics for all studies in Table 1.

Table 1. Sample characteristics and dependent variable descriptive statistics for Experiments 1–5.

Includes participants who identify as nonbinary, agender, genderfluid, genderqueer, and transgender.

Participants could select all racial/ethnic categories to which they identify, so the sum of the percentages for each study exceeds 100%.

Experiments 1 and 2

We first tested the effects of a scientist engaging in wrongness admission (vs refusal and no comment) about a finding that failed to replicate on the public’s perceptions of their trustworthiness and the public’s trust in science, psychology (Experiment 1), and medicine (Experiment 2). We hypothesized that a scientist engaging in wrongness admission (vs one who refuses or does not respond) regarding a finding that failed to replicate will be perceived as more trustworthy and will facilitate higher levels of trust in science, medicine, and psychology from the public.

Method

Participants

We conducted a power analysis (Faul et al., 2013) for Experiments 1 and 2 based on the smallest effect, Cohen’s f = .143, found by Fetterman and Sassenberg (2015) who employed a similar design. To detect this effect at 80% power for the main effect of a one-way between-subjects design with three levels, we needed a minimum sample of 477 participants. For pairwise comparisons, a power analysis for independent samples t-tests yielded a minimum sample of 193 per condition (579 participants total). To account for data loss, we recruited 600 participants in Experiment 1 and 599 participants in Experiment 2 from Prolific in exchange for US$1. All participants identified as United States nationals and had a minimum Prolific approval rating of 95%.
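The pairwise sample-size figure above can be roughly reproduced by hand. The sketch below is not the authors' procedure: it uses the normal approximation to a two-sided independent-samples t-test rather than the exact noncentral t computation that dedicated power software performs, and it assumes the conversion d = 2f (Cohen's conversion for two equally sized groups), which the text does not state. The function name is hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group_two_sample(d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided independent-samples t-test.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})^2 / d^2.
    It ignores the t distribution's heavier tails, so it tends to fall
    slightly below the exact answer.
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_power = standard_normal.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)


f_smallest = 0.143        # smallest effect (Cohen's f) reported in the text
d_assumed = 2 * f_smallest  # ASSUMPTION: d = 2f for two equal-sized groups

n_approx = n_per_group_two_sample(d_assumed)
print(f"Approximate n per condition: {n_approx}")
```

Under these assumptions the approximation lands within a participant or two of the 193 per condition reported above; the small gap is expected because the exact calculation accounts for the t distribution.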

According to our preregistration, we removed 89 participants in Experiment 1 and 45 in Experiment 2 for failing to meet at least one of the following criteria: (1) indicating that they commit to providing thoughtful responses, (2) responding correctly to a single-item attention check (see section “Procedure and Materials”), or (3) responding to all dependent variable items. After exclusions, we had analyzable samples of N = 511 (Experiment 1) and N = 554 (Experiment 2).

Procedure and materials

Participants completed both experiments in Qualtrics. After providing informed consent, participants responded to a commitment question (Geisen, 2022). We then told participants that we were investigating the impact of research findings disseminated via various media outlets on the public, and that they would read and rate one of (ostensibly) many articles.

Participants were randomly assigned to read one of three fabricated articles, ostensibly published by the Associated Press. In each article, participants read about a scientist, “Dr. [redacted],” whose prior research failed to replicate in an attempt by an independent scientist from a different university. In the Admission Condition (Experiment 1 n = 190, Experiment 2 n = 193), Dr. [redacted] responded, “In light of the evidence, it looks like I was wrong about the effect.” In the Refusal Condition (Experiment 1 n = 177, Experiment 2 n = 183), Dr. [redacted] responded, “In light of the evidence, I am not sure about the replication study. I still think the effect is real.” In the No Comment Condition (Experiment 1 n = 144, Experiment 2 n = 178), Dr. [redacted] responded, “I have no comment regarding the replication study.” In Experiment 1, Dr. [redacted] was described as a Professor of Psychology whose “novel effect in human behavior” was that they “had discovered a new factor that influenced people’s decision-making,” whereas in Experiment 2, Dr. [redacted] was described as a Professor of Internal Medicine whose “novel effect of a new treatment for a chronic illness” was that they “discovered a new groundbreaking treatment for a chronic autoimmune disease.”

After reading their assigned article for 1 minute, participants completed measures of scientist trustworthiness, trust in science, and trust in psychology (Experiment 1) or medicine (Experiment 2). Following the dependent measures, participants completed an attention check asking how Dr. [redacted] responded in the article they read. In Experiment 1, participants were presented the following options: “They admitted that they were wrong,” “They did not admit that they were wrong,” “They did not provide any response,” and “I don’t remember.”

Scientist trustworthiness

Participants rated the trustworthiness of Dr. [redacted] using the 14-item Muenster Epistemic Trustworthiness Inventory (METI; Hendriks et al., 2015). Using trait descriptive adjectives assessed along a 7-point scale, this measure captures three factors of trustworthiness: expertise (6 items; e.g. 1 = competent, 7 = incompetent), integrity (4 items; e.g. 1 = sincere, 7 = insincere), and benevolence (4 items; e.g. 1 = moral, 7 = immoral). After reverse-scoring all items, we computed composite scores for each factor and an overall trustworthiness score.
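The scoring steps described above can be sketched as follows. This is an illustration, not the authors' scoring script: the item order within the 14 responses is hypothetical, and computing the overall score as the mean of all 14 reverse-scored items (rather than, say, the mean of the three factor scores) is an assumption.

```python
from statistics import mean

SCALE_MIN, SCALE_MAX = 1, 7

# Item counts per factor come from the text; the item ORDER is hypothetical.
FACTOR_ITEMS = {
    "expertise": range(0, 6),      # 6 items
    "integrity": range(6, 10),     # 4 items
    "benevolence": range(10, 14),  # 4 items
}


def reverse_score(response):
    """Flip a response so that higher values mean more trustworthy."""
    return SCALE_MIN + SCALE_MAX - response


def score_meti(responses):
    """Return factor composites and an overall score for one participant."""
    if len(responses) != 14:
        raise ValueError("expected 14 item responses")
    flipped = [reverse_score(r) for r in responses]
    scores = {
        factor: mean(flipped[i] for i in items)
        for factor, items in FACTOR_ITEMS.items()
    }
    # ASSUMPTION: overall trustworthiness is the mean of all 14 items.
    scores["overall"] = mean(flipped)
    return scores


# Made-up responses for one participant (1 = competent ... 7 = incompetent).
example = [2] * 6 + [1] * 4 + [3] * 4
print(score_meti(example))
```

Because the adjective pairs are anchored with the favorable trait at 1, reverse-scoring every item makes higher composites indicate greater perceived trustworthiness.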

Trust in science

Participants rated their trust in the scientific community along a 5-point scale (1 = strongly disagree, 5 = strongly agree) using the 5-item Institutional Trust in the Scientific Community measure (e.g. “Information from the scientific community is trustworthy”; Nisbet et al., 2015). After reverse-scoring three negatively worded items, we averaged the five items to compute a composite trust in science score.

Trust in psychology and medicine

To measure trust in psychology for Experiment 1, we used the Wingen et al. (2020) adaptation of the Institutional Trust in the Scientific Community measure (Nisbet et al., 2015), changing “scientific community” to “psychological science community.” The measure of trust in medicine for Experiment 2 was adapted in a similar manner, with items referring to the “medical community.” After reverse-scoring three negatively worded items, we averaged across the five items of each measure to compute composite trust in psychology (M = 3.56, SD = 0.82, α = .90) and trust in medicine (M = 3.45, SD = 0.91, α = .92) scores.
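The α values reported above are presumably Cronbach's alpha, the usual internal-consistency index for multi-item composites; the text does not name the coefficient, so treat that as an assumption. A minimal sketch of the standard formula, using made-up responses rather than the study data:

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a participants-by-items table.

    rows: one list per participant, holding that participant's item
    responses AFTER any reverse-scoring has been applied.
    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score))
    """
    k = len(rows[0])
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical 5-item responses from four participants (1-5 scale).
made_up_responses = [
    [4, 5, 4, 4, 5],
    [2, 2, 3, 2, 2],
    [3, 3, 3, 4, 3],
    [5, 4, 5, 5, 4],
]
print(round(cronbach_alpha(made_up_responses), 2))
```

Items that move together across participants push the total-score variance well above the sum of the item variances, which drives alpha toward 1.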

Results

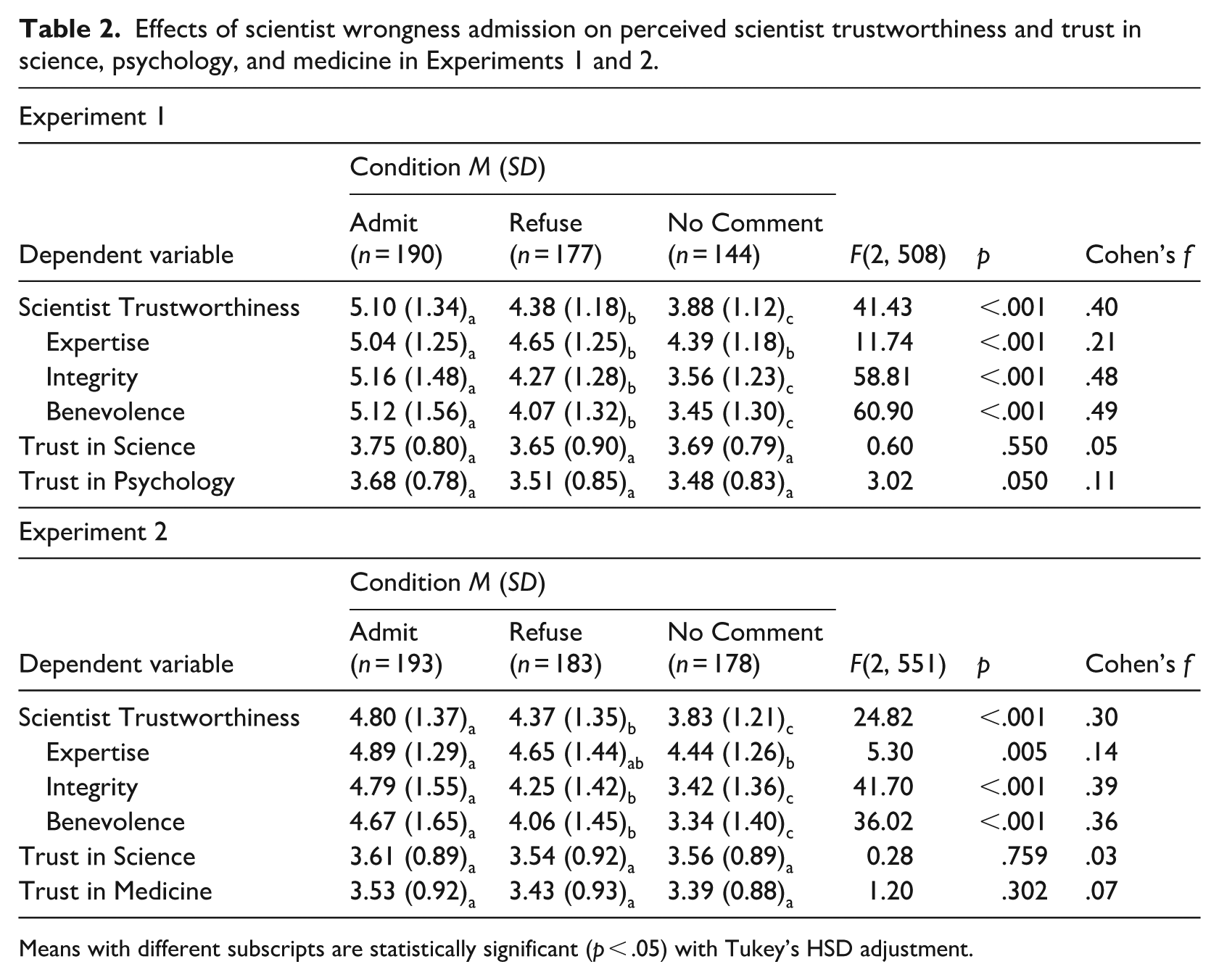

To test the effects of wrongness admission on perceptions of scientist trustworthiness and trust in science, psychology, and medicine, we submitted each dependent variable to a one-way ANOVA (Table 2). Scientists who engaged in wrongness admission (vs refused or did not comment) were rated as more trustworthy, as evinced by higher scores on expertise, integrity, and benevolence. In addition, we found a significant main effect of our wrongness admission manipulation on trust in psychology (Experiment 1). However, while the difference in means between conditions was in the predicted direction, post hoc analyses did not evince significant differences between the conditions. Similarly, scientists who engaged in wrongness admission did not yield significantly higher levels of trust in science or medicine compared with those who refused or did not comment.
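For readers less familiar with the analysis, the one-way ANOVA applied to each dependent variable can be sketched as below. The scores are made up for illustration and the function name is hypothetical; the sketch computes the F statistic and effect sizes only, leaving the p value and the Tukey HSD comparisons to a statistics package.

```python
import math
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a list of groups."""
    all_scores = [score for group in groups for score in group]
    grand_mean = mean(all_scores)
    group_means = [mean(group) for group in groups]

    # Variability of group means around the grand mean, weighted by group size.
    ss_between = sum(
        len(group) * (gm - grand_mean) ** 2
        for group, gm in zip(groups, group_means)
    )
    # Variability of individual scores around their own group mean.
    ss_within = sum(
        (score - gm) ** 2
        for group, gm in zip(groups, group_means)
        for score in group
    )
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared


# Made-up trustworthiness scores: admission, refusal, no comment.
example = [[5, 6, 7], [3, 4, 5], [2, 3, 4]]
f_stat, df_between, df_within, eta_sq = one_way_anova(example)
cohens_f = math.sqrt(eta_sq / (1 - eta_sq))  # effect size used in power analyses
print(f"F({df_between}, {df_within}) = {f_stat:.2f}, eta^2 = {eta_sq:.2f}")
```

Converting eta squared to Cohen's f puts the effect on the same metric as the power analyses reported in the Participants sections.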

Table 2. Effects of scientist wrongness admission on perceived scientist trustworthiness and trust in science, psychology, and medicine in Experiments 1 and 2.

Means with different subscripts differ significantly (p < .05) with Tukey’s HSD adjustment.

Exploratory analyses

While we did not preregister this hypothesis or analysis, we were also interested in exploring the extent to which perceived trustworthiness mediates the effect of wrongness admission on trust in science (both general and discipline-specific). Indeed, wrongness admission (vs refusal and no comment) indirectly facilitated higher levels of trust in science (Experiment 1: indirect effect = .253 [.161, .355], p < .001; Experiment 2: indirect effect = .210 [.134, .298], p < .001), psychology (indirect effect = .241 [.147, .349], p < .001), and medicine (indirect effect = .211 [.137, .297], p < .001), through scientist trustworthiness (for full model statistics, see Supplemental Material).

Discussion

Experiments 1 and 2 demonstrate the personal reputational benefits of scientists engaging in wrongness admission when their original findings fail to replicate. However, it seems that one instance of a scientist engaging in wrongness admission about a non-replicated finding may not be enough to foster trustworthiness in science as an institution. Even so, it is also important to point out that wrongness admission did not decrease judgments of trustworthiness in science. We, therefore, sought to address whether these findings extend to influencing the public’s attitudes toward supporting government funding for the scientist and general and discipline-specific (psychology and medicine) science.

Experiments 3 and 4

In Experiments 3 and 4, we investigated whether wrongness admission fosters positive attitudes toward funding the research of the wrongness-admitting scientist specifically, as well as research in psychology, medicine, and science more generally. We employed the same experimental manipulations used in Experiments 1 and 2. We hypothesized that a scientist who engages in wrongness admission (vs refuses or does not comment) regarding a failed replication will elicit more support from the public to fund their own research as well as research in science, psychology, and medicine.

Method

Participants

In line with our Experiments 1 and 2 sampling plans, we recruited 602 participants in Experiment 3 and 600 participants in Experiment 4 from Prolific in exchange for US$1. All participants identified as United States nationals and had a minimum Prolific approval rating of 95%. We removed 56 participants in Experiment 3 and 55 in Experiment 4 according to the same exclusion criteria described in Experiments 1 and 2. This resulted in analyzable samples of N = 546 (Experiment 3) and N = 545 (Experiment 4).

Procedure and materials

We employed the same experimental paradigm used in Experiments 1 and 2, with the only difference being the dependent variables assessed. After responding to the commitment question, participants were randomly assigned to one of the three fabricated article conditions described in Experiments 1 and 2: Admission (Experiment 3 n = 187, Experiment 4 n = 181), Refusal (Experiment 3 n = 182, Experiment 4 n = 191), or No Comment (Experiment 3 n = 177, Experiment 4 n = 173). Like the previous experiments, Experiment 3 described the scientist as a Professor of Psychology, whereas Experiment 4 described the scientist as a Professor of Internal Medicine.

After reading their assigned article for 1 minute, participants completed measures assessing attitudes toward government funding for the scientist, science, and psychology (Experiment 3) or medicine (Experiment 4). Following the dependent measures, participants completed an attention check asking how Dr. [redacted] responded in the article they read, followed by a brief demographic questionnaire.

Attitudes toward government funding

Participants responded to three measures, each comprising five items measured along a 5-point scale (1 = strongly disagree, 5 = strongly agree) assessing their attitudes toward government funding for (1) the scientist, (2) science, and (3) psychology (Experiment 3) or medicine (Experiment 4). Each item was worded similarly across measures. For example, participants responded to the item, “The government should support [the research of Dr. [redacted]/scientific research/research in psychology [medicine]] through providing grant funding.” After reverse-scoring two negatively worded items for each measure, we averaged the five items to compute composite scores for attitudes toward government funding for the scientist, scientific research, and research in psychology and medicine.

Results and discussion

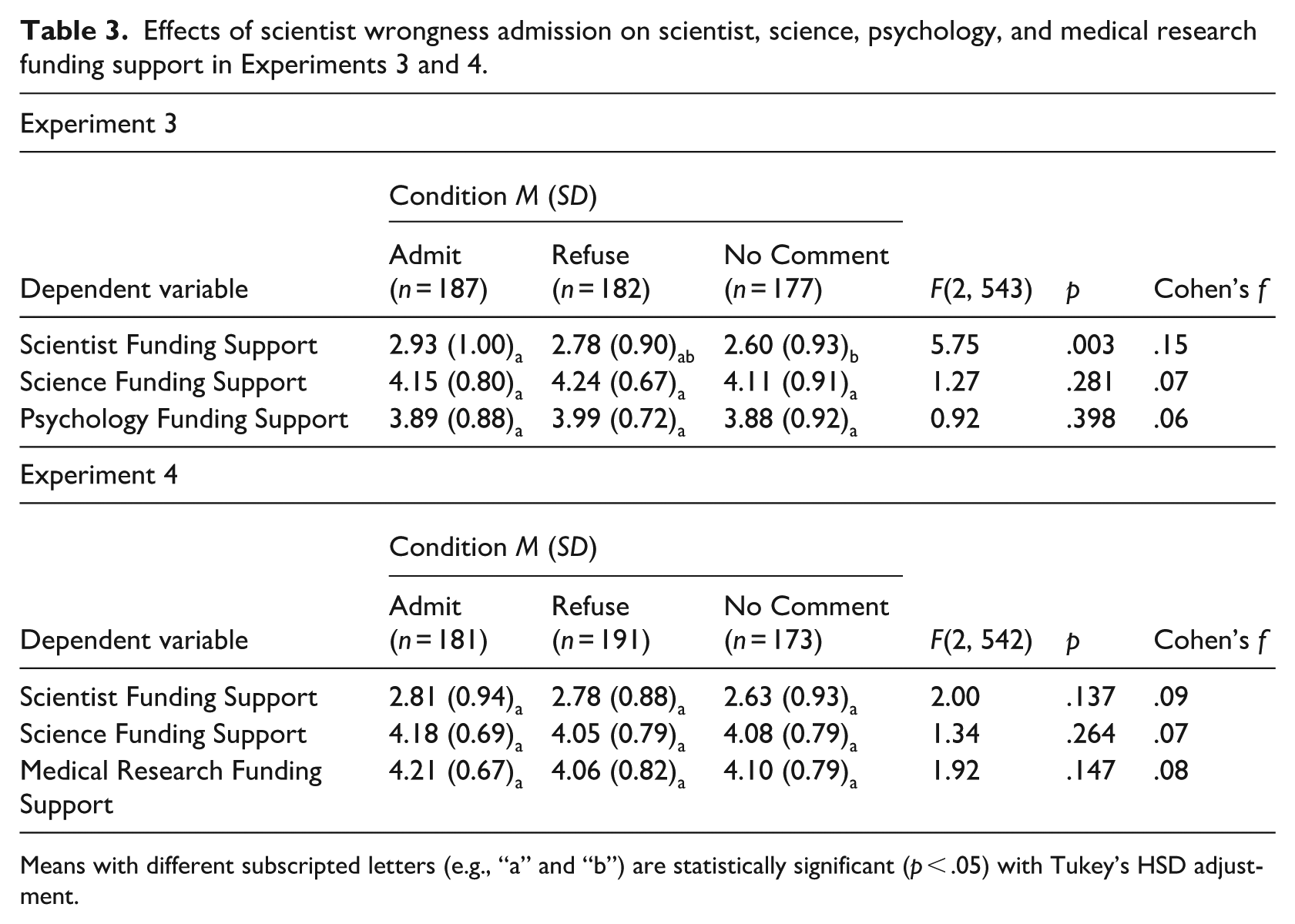

To test the effects of wrongness admission on supportive attitudes toward government funding for the scientist and general and discipline-specific science, we submitted each dependent variable to a one-way ANOVA (Table 3). In Experiment 3, the scientist who engaged in wrongness admission received greater support for their research than the scientist who did not provide a comment (there was no difference between admission and refusal). Beyond this, neither experiment demonstrated that engaging in wrongness admission about one’s finding confers higher levels of support for federal funding for science, psychology, or medicine. Nevertheless, we found no evidence that wrongness admission, on average, yields the negative consequences that scientists may worry about.

Table 3. Effects of scientist wrongness admission on scientist, science, psychology, and medical research funding support in Experiments 3 and 4.

Means with different subscripted letters (e.g., “a” and “b”) differ significantly (p < .05) with Tukey’s HSD adjustment.

Experiment 5

Experiment 5 addressed limitations in the experimental designs of the prior experiments. First, we modified the No Comment condition to reflect a true non-response from the hypothetical scientist in the fabricated news article (i.e. the participants read that the scientist was not contacted to respond). Second, to improve ecological validity of the article, we included a last name of the scientist and a corresponding affiliated university as well as a university affiliated with the research team who failed to replicate their finding. Third, to establish support for our assumption that wrongness admission is an intellectually humble behavior, we included participants’ perceptions of the scientist’s intellectual humility as a dependent variable. Finally, given that our exploratory analyses from Experiments 1 and 2 demonstrated that scientist trustworthiness mediated the effect of wrongness admission on trust in science (general and discipline-specific), we sought to replicate these findings and extend them to the public’s support for funding scientific research.

We first hypothesized that a scientist who engages in wrongness admission (vs refuses or does not respond) regarding a finding of theirs that failed to replicate will be perceived as more intellectually humble and trustworthy. Second, we hypothesized that a scientist engaging in wrongness admission (vs refusing or not responding) will yield greater public trust in psychology and science and a greater endorsement of government funding to support the scientist and research in psychology and science. Finally, we hypothesized that the effect of wrongness admission (vs refusal and no response) on trust in psychology and science and funding support for the scientist, psychology, and science will be mediated by perceived trustworthiness of the scientist.

Method

Participants

We conducted a power analysis (Faul et al., 2013) based on the smallest effect of the condition on scientist trustworthiness, our hypothesized mediator (Cohen’s f = .3), which we obtained in Experiment 2. To detect this effect size at 80% power (α = .05) for the main effect for a one-way between-subjects design with three levels, the minimum sample required was 111. However, for the pairwise comparisons, an additional power analysis (for an independent samples t-test) yielded a sample size of 45 participants per condition (135 total). To account for data loss, we recruited 300 Prolific participants from the United States in exchange for US$1.40. We removed 36 participants according to the same exclusion criteria outlined in the prior studies, resulting in an analyzable sample of N = 264.

Procedure and materials

We employed an experimental paradigm similar to the one used in Experiments 1 and 3. After responding to the commitment question, participants were randomly assigned to one of the three fabricated article conditions describing “Dr. Robinson,” Professor of Psychology at the University of Michigan who “had discovered a new factor that influenced people’s decision-making” (mirroring Experiments 1 and 3). The response from the scientist in the Admission (n = 86) and Refusal (n = 89) conditions mirrored the responses from Experiments 1 and 3. In the No Comment Condition (n = 89), participants read that the professor “was not contacted for a response to the new findings.” In other words, the modified No Comment condition reflects a true non-response, rather than indicating that the professor responds by stating that they have no comment (which is a comment in and of itself).

After reading their respective articles for 1 minute, participants then completed a measure assessing Dr. Robinson’s intellectual humility using an adapted version of the 5-item General Intellectual Humility Scale (e.g. “Dr. Robinson reconsiders their opinions when presented with new evidence”; Leary et al., 2017) along a 5-point scale (1 = Not at all like Dr. Robinson, 5 = Very much like Dr. Robinson). Participants then completed the same measures used in Experiments 1 and 3 assessing Dr. Robinson’s trustworthiness as well as their levels of trust in psychology and science and their attitudes toward government funding for Dr. Robinson, science, and psychology. Participants then completed the same attention check used in Experiments 2–4 followed by a brief demographic questionnaire.

Results and discussion

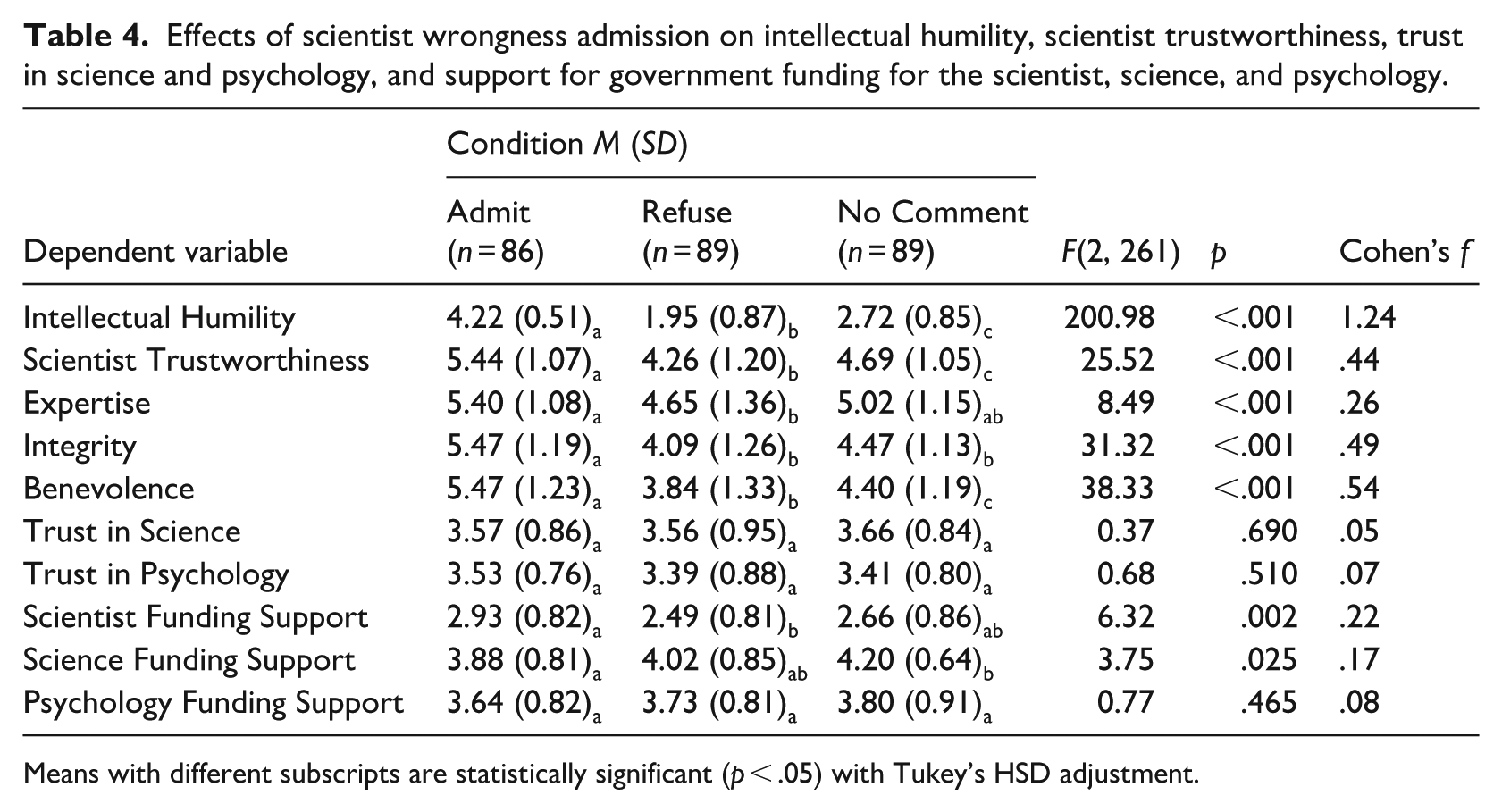

To test the reputational impact of wrongness admission, we first submitted each dependent variable to a one-way ANOVA (Table 4). First, in line with our prediction that engaging in wrongness admission is perceived as an intellectually humble behavior, participants in the wrongness admission (vs refusal and no comment) condition rated Dr. Robinson as more intellectually humble. Supporting our primary predictions, participants perceived the wrongness-admitting Dr. Robinson as more trustworthy, and they supported funding for them to a greater extent. When Dr. Robinson refused (vs provided no comment), participants perceived them as less trustworthy. However, wrongness admission again yielded neither higher levels of perceived trust in science and psychology nor funding support for science and psychology. Moreover, contrary to our predictions, wrongness admission (vs no comment) negatively impacted participants’ science funding support.

Table 4. Effects of scientist wrongness admission on intellectual humility, scientist trustworthiness, trust in science and psychology, and support for government funding for the scientist, science, and psychology.

Means with different subscripts differ significantly (p < .05) with Tukey’s HSD adjustment.

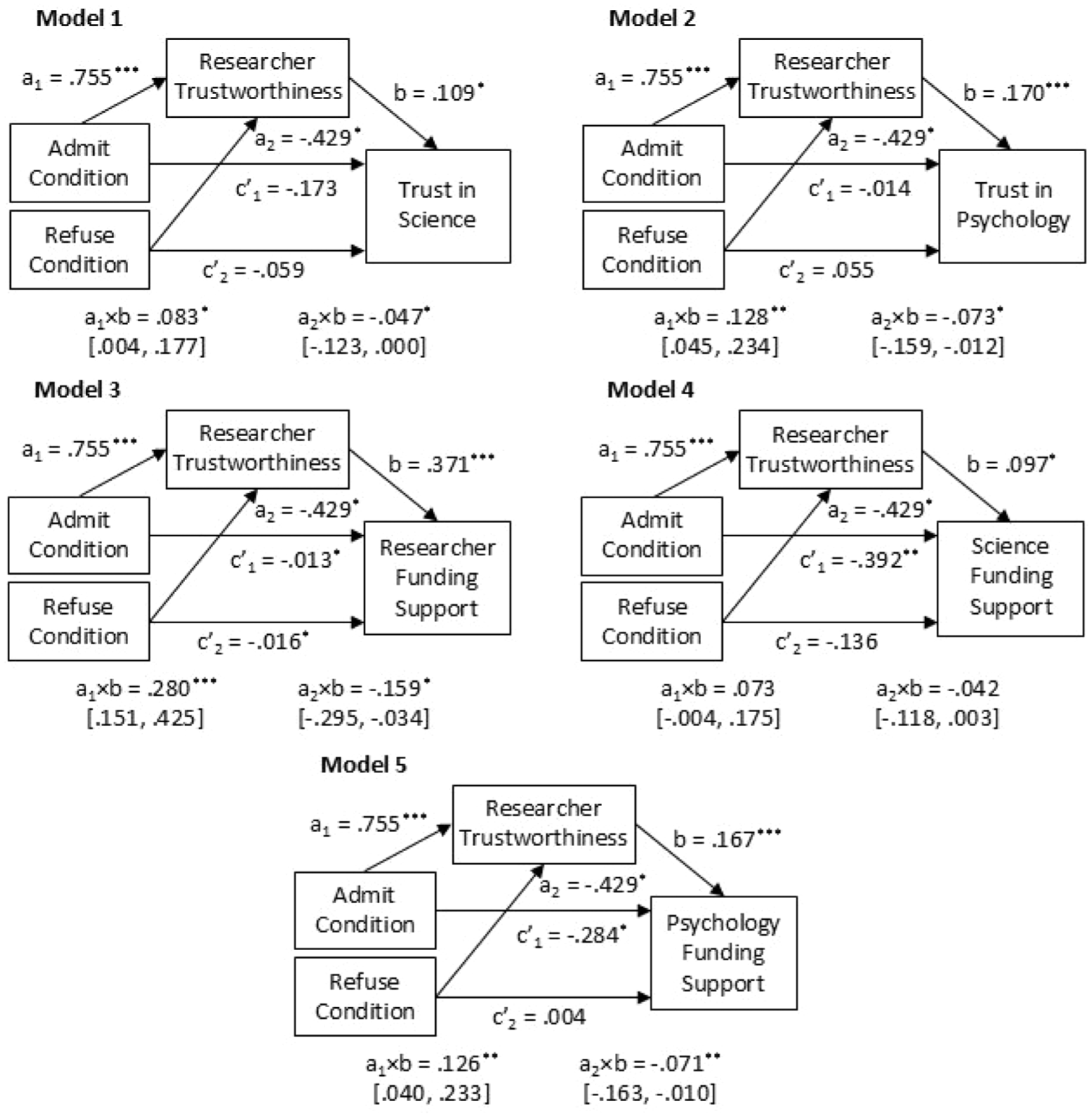

We also tested the extent to which scientist trustworthiness mediates the effect of wrongness admission on trust in science and psychology and funding support for Dr. Robinson, science, and psychology (Figure 1). We ran a series of mediation models, in which we included two dummy-coded treatment variables to reflect the experimental manipulation, with the No Comment condition treated as the referent condition: Admit Condition (1 = Admit, 0 = Refuse and No Comment), and Refuse Condition (1 = Refuse, 0 = Admit and No Comment). We obtained significant positive indirect effects of Admit Condition and significant negative indirect effects of Refuse Condition on all outcomes, except for science funding support. This largely supports our hypothesis that people perceive psychological scientists as more trustworthy when they engage in wrongness admission, which in turn, facilitates greater trust in science and psychology as well as support for the scientist and psychology. In addition, this experiment provided evidence for the negative impact of admission refusal. This underscores the point that while researchers may be motivated to refuse to admit they are wrong in order to protect their reputations, doing so may actually hurt their reputations.
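The dummy coding and the logic of the indirect effect described above can be sketched as follows. This is a simplified illustration with made-up data, not the authors' model: it estimates the indirect effect as the product of two ordinary least-squares coefficients (condition to mediator, mediator to outcome) and omits standard errors and confidence intervals. All function names are hypothetical.

```python
def ols(design, y):
    """Least-squares coefficients from the normal equations (X'X)b = X'y."""
    k = len(design[0])
    # Augmented matrix [X'X | X'y].
    aug = [
        [sum(row[i] * row[j] for row in design) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(design, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(k):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * p for a, p in zip(aug[r], aug[col])]
    return [aug[i][k] / aug[i][i] for i in range(k)]


def dummy_code(condition):
    """No Comment is the referent: it scores 0 on both dummies."""
    return (1 if condition == "admit" else 0, 1 if condition == "refuse" else 0)


def indirect_effects(conditions, mediator, outcome):
    dummies = [dummy_code(c) for c in conditions]
    # a paths: mediator ~ intercept + admit + refuse
    _, a_admit, a_refuse = ols([[1, ad, rf] for ad, rf in dummies], mediator)
    # b path: outcome ~ intercept + admit + refuse + mediator
    *_, b = ols([[1, ad, rf, m] for (ad, rf), m in zip(dummies, mediator)], outcome)
    return {"admit": a_admit * b, "refuse": a_refuse * b}


# Made-up data: trustworthiness (mediator) and trust in science (outcome).
conditions = ["admit"] * 3 + ["refuse"] * 3 + ["no_comment"] * 3
trustworthiness = [6, 7, 8, 2, 3, 4, 4, 5, 6]
trust_in_science = [1 + 0.5 * m for m in trustworthiness]
print(indirect_effects(conditions, trustworthiness, trust_in_science))
```

In this toy data set, admission raises the mediator and refusal lowers it relative to No Comment, so the two indirect effects carry opposite signs, mirroring the pattern reported above.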

Figure 1. Mediation models for wrongness admission predicting trust in science and psychology and researcher, science, and psychology funding support, through researcher trustworthiness.
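
The dummy coding and indirect effects described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' analysis: it assumes a product-of-coefficients approach with ordinary least squares, since this section does not state the estimation software or how significance was tested. The data are made-up toy values, and `dummy_code`, `ols`, and `indirect_effects` are hypothetical helper names.

```python
def dummy_code(condition):
    """Two dummies with No Comment as the referent (both zero)."""
    return (1.0 if condition == "admit" else 0.0,
            1.0 if condition == "refuse" else 0.0)


def ols(X, y):
    """Least-squares coefficients via the normal equations (Gauss-Jordan)."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        p = A[col][col]
        A[col] = [v / p for v in A[col]]
        for r in range(k):
            if r != col:
                f = A[r][col]
                A[r] = [vr - f * vc for vr, vc in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]


def indirect_effects(conditions, mediator, outcome):
    """Indirect effect of each dummy on the outcome through the mediator."""
    dummies = [dummy_code(c) for c in conditions]
    # a paths: mediator regressed on the two condition dummies.
    a = ols([[1.0, d_admit, d_refuse] for d_admit, d_refuse in dummies], mediator)
    # b path: outcome regressed on the dummies plus the mediator.
    b = ols([[1.0, d_admit, d_refuse, m]
             for (d_admit, d_refuse), m in zip(dummies, mediator)], outcome)
    return {"admit": a[1] * b[3], "refuse": a[2] * b[3]}


# Toy data: trustworthiness as mediator, funding support as outcome.
conditions = ["no_comment"] * 3 + ["admit"] * 3 + ["refuse"] * 3
trustworthiness = [3.0, 4.0, 5.0, 5.0, 6.0, 7.0, 1.0, 2.0, 3.0]
funding_support = [1.0 + 0.5 * m for m in trustworthiness]

effects = indirect_effects(conditions, trustworthiness, funding_support)
print(effects)
```

In practice, a confidence interval for each indirect effect is needed to judge significance (for example, by resampling participants); the sketch omits that step and returns point estimates only.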

General discussion

In five experiments, we tested the effects of a scientist’s wrongness admission about a finding that failed to replicate on perceptions of trust in, and support for federal funding for, the scientist, science, psychology, and medicine. In line with our hypotheses, scientists who engaged in wrongness admission in Experiments 1 and 2 were perceived as more trustworthy than those who did not respond to failed replications and those who refused to admit. While these direct effects did not extend to trust in science, psychology, or medicine, wrongness admission did foster higher trust in science, psychology, and medicine indirectly through perceived trustworthiness. This was replicated in Experiment 5.

Moreover, while Experiments 3 and 5 demonstrated that a wrongness-admitting scientist received more support for federal funding for their own research (compared with providing no comment), this finding did not replicate in Experiment 4, and it did not extend to support for funding for science, psychology, or medicine. In fact, according to Experiment 5, wrongness admission (vs not responding) seems to negatively impact people’s support for funding scientific research. This may be due to people’s hesitation to support potentially wasteful spending on research they see as already having a track record of being erroneous. However, this finding should be interpreted with caution. The main effect of admission on reduced support for science funding was rather small, and there were no significant differences between refusal and admission or between refusal and no comment. Moreover, this effect was not found for participants’ funding support for psychological research.

When we treated trustworthiness as a mediator in Experiment 5, wrongness admission indirectly increased scientist and psychology funding support (but not science funding support), and admission refusal indirectly decreased scientist and psychology funding support. Altogether, these findings demonstrate that scientists who admit wrongness about their findings in the wake of failed replications are no worse off than those who do not admit. In fact, they may be perceived more favorably by the public.

Theoretical contributions and implications

The current work builds on the existing literature investigating the reputational impact of scientists engaging in intellectually humble behaviors. While earlier work highlights the importance of self-corrective behaviors for facilitating the public’s trust in scientists and even science (e.g. Altenmüller et al., 2021; Hendriks et al., 2016), that work has not approached the question from an intellectual humility framework. Therefore, the current work not only builds on the established findings on self-correction but also extends the work demonstrating the reputational benefits of admitting wrongness (and the drawbacks of refusing to admit wrongness), especially when it comes to scientists (Fetterman and Sassenberg, 2015).

Moreover, this work examines more closely the unique role that wrongness admission plays in enhancing perceptions of a scientist’s trustworthiness, and how this trustworthiness has downstream links to trust in and support for scientific endeavors. For example, Anvari and Lakens (2018) found that informing the public of the reforms in place to combat the replication issues (i.e. to foster transparency in psychological science) did not increase support for public funding for psychological research. This demonstrates that understanding or perceiving transparency in psychological research may not be enough to influence the public’s support for government funding of psychological research. However, the public may perceive a scientist as more trustworthy when they display intellectual humility, discuss reforms to increase transparency, and take other corrective actions. This trustworthiness may be, in turn, linked to greater support for public funding.

This work also builds on a growing line of research investigating antecedents and consequences of distrust in science and how the scientific community can combat these issues. For example, recent work highlights some potential recommendations to enhance scientists’ intellectual humility in reporting their findings during the publication process (Hoekstra and Vazire, 2021). However, as the current work demonstrates, it may be just as important to express intellectual humility post-publication when those findings are called into question. Our findings thus demonstrate the utility of wrongness admission for fostering, and even potentially restoring, trust in scientists.

There are also implications for communicating research findings in the media and how failed replications are reported. Prior work has investigated how scientific research is reported to the public, especially the importance of accurately conveying the uncertainties of the research process when interpreting the findings reported (Friedman et al., 1999; Woloshin and Schwartz, 2006). Since we employed a news story experimental manipulation, this work may shed light on how people perceive research being reported in the media, particularly when reporting failed replications. Of course, it is certainly important for journalists and media outlets to be clear about caveats or uncertainties of the findings they are sharing. However, demonstrating the intellectual humility of the scientist themselves in light of failed replications can take this a step further. By showing that scientists are willing to admit that the effects of their findings may not be replicable, media outlets can further foster their readership’s trust in the scientific community. At the very least, it will not harm the community’s reputation.

Limitations and future directions

While our findings provide support for the positive reputational outcomes of scientist wrongness admission, several limitations should be considered and addressed in future research. First, for Experiments 1–4, after removing participants for failing the commitment and attention check items and for incomplete responses, our samples fell below their preregistered sample sizes. However, we were rather conservative in our preregistered desired effect size, relying on the smallest effect (rather than, for example, the average effect) observed by Fetterman and Sassenberg (2015). As a result, most of the primary models obtained effect sizes larger than our desired effect. Nevertheless, it is important to note that some of the other models (e.g. those of Experiments 3 and 4) may have been underpowered.

Future work should also take into consideration individual differences of the perceiver as well as situational characteristics that may impact the benefits of wrongness admission. Regarding individual differences of the perceiver, someone who is already low in trust in science, is less scientifically literate, or does not engage with science may see a wrongness admission as further evidence for distrust, and as such, may perceive a wrongness-admitting scientist more negatively. As for situational characteristics, wrongness admission may not be as beneficial when it comes to higher-stakes research. For example, scientists updating their projections regarding the impact of COVID-19 after considering new evidence seem to have contributed to decreased trust in science (Kreps and Kriner, 2020). Reduced trust in science, in turn, negatively impacted support for preventive behaviors to reduce the spread of COVID-19 (Sulik et al., 2021) and intentions to engage in such behaviors (Pagliaro et al., 2021; Plohl and Musil, 2021).

We created fabricated news articles that resemble an Associated Press online article to increase realism. However, we also needed to ensure that the articles were equivalent in the information they provided, so that they did not introduce potential confounding variables. Doing so compromised some level of ecological validity. For example, the article simply ends after the brief response from the scientist, whereas news articles reporting scientific research often provide additional information. In addition, scientists may not provide responses as brief as the ones we included in our stimuli (i.e. simply admitting or refusing to admit wrongness about the research findings). Rather, scientists may also discuss self-correction intentions, such as those tested by Altenmüller et al. (2021), or at the very least provide justification for their stance on the failed replication. Therefore, future work should employ a more ecologically valid news article manipulation.

In a similar vein, several factors may contribute to a study’s failure to replicate. Our fabricated news articles do not discuss any details regarding the methodology of either the original study or its replication but simply mention that the original study failed to replicate. It is therefore important to note that not all failed replications require wrongness admission, especially when there are valid caveats in the study design that may have influenced the results. However, when the scientist recognizes that their research results may be wrong or incorrect, our work demonstrates that the best route is to admit they were wrong. Future work on scientist wrongness admission and intellectual humility should also take into consideration the nuances of a study’s research design.

We wanted to collect data from a diverse sample of United States nationals, so we recruited participants through Prolific. However, online studies offer limited control over the environments in which participants choose to take part. This may be especially problematic when participants are asked to read news articles carefully. Although we used an attention check, we cannot guarantee that all participants completed the study in the same or even similar environments. In addition, recruiting participants from Prolific limits the generalizability of our findings. While crowdsourcing resources like Prolific offer opportunities to recruit participants with diverse demographic backgrounds (Gosling et al., 2010), most often the samples that scientists recruit from these sources are still Western, educated, industrialized, rich, and democratic (WEIRD; Medvedev et al., 2024; Stewart et al., 2017; Henrich et al., 2010). Therefore, we must keep these demographics in mind when interpreting and attempting to generalize our findings.

Finally, in Experiments 3–5, we developed our own measures of funding support for the scientist, science, psychology, and medicine. We did so because we were unable to find a viable measure of funding attitudes that could be applied to our experiments. While these measures exhibited acceptable internal consistency reliability and their items are arguably face valid, future work would benefit from further scrutinizing the validity and reliability of these measures.

Conclusion

Scientists may be hesitant to admit they are wrong about their research findings when those findings fail to replicate, because wrongness admission can seem like admitting a lack of competence. However, our work demonstrates that scientists are not perceived any more negatively when they engage in wrongness admission than when they refuse to admit or simply refuse to comment. Instead, our work demonstrates that scientists who engage in wrongness admission are perceived as more trustworthy, whereas scientists who refuse to engage in wrongness admission are perceived as less trustworthy. The enhanced trustworthiness that comes from wrongness admission translates to higher trust in and support for scientific research. Therefore, if we as scientists want to restore the public’s trust in our work and the work in our respective fields when our research findings are called into question, we may want to exhibit intellectual humility.

Supplemental Material

Supplemental material, sj-docx-1-pus-10.1177_09636625251372820 for Shedding light on public perceptions of scientists who engage in wrongness admission amidst a failed replication by Nicholas D. Evans and Adam K. Fetterman in Public Understanding of Science

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We would like to acknowledge the generous financial support of the John Templeton Foundation (Grant No. 62265), Applied Research on Intellectual Humility: A Request for Proposals.

Supplemental material

Supplemental material for this article is available online.
