Abstract

Lay readers’ trust in scientific texts can be shaped by perceived text easiness and scientificness. Both effects seem highly relevant at a time of rapid sharing of scientific information, yet they have so far been examined only separately. A preregistered online study was conducted to assess them jointly, to probe the overlap between author and text trustworthiness, and to investigate interindividual influences on the effects. A total of N = 1467 lay readers read four short research summaries in which easiness and scientificness (high vs low) were experimentally varied. A more scientific writing style led to higher perceived author and text trustworthiness. Higher personal justification belief, lower justification by multiple-sources belief, and lower need for cognitive closure attenuated the influence of scientificness on trustworthiness. However, text easiness showed no influence on trustworthiness and no interaction with text scientificness. Implications for future studies and suggestions for enhancing the perceived trustworthiness of research summaries are discussed.

Keywords

1. Introduction

Public use of online resources for seeking scientific information is on the rise. In a 2020 German general population survey, about 40% of respondents reported using the Internet often or very often to seek information on scientific developments (Wissenschaft im Dialog/Kantar, 2020). The scientific information available online is diverse and encompasses social media posts, news articles, science magazines, and open access journals. This easy access encourages public engagement with research findings and supports informed decision making. Yet it is not without caveats. Information disseminated online is typically subject to fewer checks and balances than information in other media (Martens et al., 2018, pp. 17–18), which can allow false news and misinformation to spread rapidly. For instance, in their study of Twitter data, Vosoughi et al. (2018) found that true news took about six times as long as false news to reach 1500 people. Furthermore, oversimplification or overgeneralization of scientific findings is not necessarily apparent to readers (Barzilai and Chinn, 2019), and directly gauging the veracity of a source’s content via “first-hand evaluations” (Bromme and Goldman, 2014) often requires extensive knowledge of a field. Cultivating such knowledge is time-consuming and typically happens in specialized educational settings, which makes first-hand evaluations difficult for laypeople.

Consequently, laypeople often shift to “second-hand evaluations” (Bromme and Goldman, 2014), that is, evaluations based on information surrounding a scientific output. For instance, a text may offer information on the author’s background, expertise, institutional affiliation, funding, or motives for publication. Using this information as a heuristic basis for trust allows laypeople to evaluate scientific works in a resource-efficient manner, often enabling them to distinguish between warranted and unwarranted claims (Cummings, 2014). Although these processes are important for written science communication, evidence on how specific textual features such as easiness or scientificness influence lay readers’ second-hand evaluations is limited. The present study therefore analyzes how these features and their interaction influence lay readers’ perceptions of text and author trustworthiness.

2. Trust, epistemic trust, and their assessment

Trust has become a crucial concept in science communication, spurred by phenomena such as “fake news” and conspiracy theories (Lewandowsky et al., 2017). Yet trust is by no means a new concept and has been studied in psychology since the 1950s (cf. McGuire, 1996). In an often-cited article, Mayer et al. (1995) define trust as the willingness of a party to be vulnerable to the actions of another party based on the expectation that the other will perform a particular action important to the trustor, irrespective of the ability to monitor or control that other party.

In science communication, which aims at knowledge and its acquisition, the term “epistemic trust” is often invoked (see Hardwig, 1991; Sperber et al., 2010). But which factors determine whether a lay reader grants epistemic trust? Following the dichotomy of the Elaboration Likelihood Model (ELM; Petty and Cacioppo, 1986), research can broadly be categorized along the lines of “central criteria” and “peripheral criteria.” Central criteria refer directly to source content and information embedded within a text and typically require effortful processing. Previous studies have varied these criteria in terms of argument strength or quality, for instance, by using statistics and data in arguments (Petty et al., 1981; Yi et al., 2013). In contrast, peripheral criteria refer to external information (e.g. about the author) and typically require less effortful processing. Such information can include author characteristics such as expertise (Petty et al., 1981; Yi et al., 2013) or public fame (Petty et al., 1983). Likewise, aspects such as in-text citations and a reference section (Zaboski and Therriault, 2020), scientific jargon (Thon and Jucks, 2017), or tone of language (König and Jucks, 2019) can fall into this category. Within this framework, two established effects can be classified as consequences of processing peripheral criteria: the “easiness effect” and the “scientificness effect.”

3. The easiness effect and the scientificness effect

The easiness effect, as reported by Scharrer et al. (2012), describes readers’ tendency to rate a text as more trustworthy and to agree more with it when it presents information in an accessible form. In two initial experiments with 88 undergraduate students each, Scharrer and colleagues presented participants with four scientific texts differing in argument strength and comprehensibility. The authors manipulated comprehensibility via the use of technical terms, the inclusion of unnecessary information, and repetitions. In both experiments, participants rated arguments from comprehensible texts as more convincing and agreed with them more strongly than with arguments from incomprehensible texts. Participants also showed greater confidence in their own decision and a lower desire to consult an expert before deciding on a claim. The effect has since been demonstrated in multiple follow-up studies (Scharrer et al., 2013, 2014, 2017, 2019). One possible explanation for its emergence draws on processing fluency and feeling-as-information theory (for a review, see Schwarz et al., 2021): texts using fewer technical terms and a clearer structure may generate more familiarity and positive affect. Readers might then use these positive feelings as a heuristic basis for trustworthiness or risk assessments. This position has received support from studies on metacognition: an increase in jargon not only decreases processing fluency but has also been linked to greater motivated resistance to persuasion and higher risk assessments (Bullock et al., 2019) as well as lower engagement with scientific content (Shulman et al., 2020). Previous research also suggests that higher text easiness may benefit readers with low, but not high, prior knowledge when answering knowledge questions (McNamara et al., 1996).

The perceived scientificness of texts also seems to influence trustworthiness: the scientificness effect refers to readers’ tendency to assign higher credibility to sources presented in a scientific style. Thomm and Bromme (2012) reported two experiments with 78 and 86 university students, respectively. In both experiments, students read two scientific texts. As a within-subject manipulation, one text was written in a scientific discourse style (containing citations, descriptions of methodology, and passive voice), while the other was written in a factual style (no citations, no mention of methodology, and active voice). Students rated the scientificness and credibility of the texts and their agreement with the texts’ claims. Texts written in scientific discourse style were perceived as more scientific and more credible and elicited higher claim agreement than texts written in factual style. Bromme and colleagues (2015) replicated the effect in two additional studies, and Winter et al. (2015) reported an experiment in which an assertive, one-sided text on the negative impact of computer games on aggressive behavior in children was paradoxically less effective in influencing readers’ attitudes than a more nuanced one-sided version. Drawing on the trust conceptualization by Mayer et al. (1995), readers may use scientificness to make inferences about researchers’ ability, adherence to scientific standards, and communication intentions. A higher level of scientificness may thus result in higher credibility judgments and an increased willingness to agree with researchers’ claims.

To summarize, both easiness and scientificness may influence lay readers’ credibility ratings and claim agreement. To our knowledge, previous studies have mostly examined the effects separately. Yet scientificness and easiness are often confounded (e.g. a text containing statistical language and jargon is often harder to read; Stricker et al., 2020). Efforts to increase text scientificness may thus reduce easiness effects, and efforts to increase text easiness may hamper scientificness effects. Furthermore, individual reader characteristics may influence these effects. It therefore appears crucial to investigate both effects in a combined experimental design. Assessing their potential interactions may also have practical benefits, for example, by informing authors of lay-friendly research summaries on how to maintain trustworthiness while keeping information density balanced.

A further issue concerns the definition of trust and trustworthiness itself. Previous literature has focused either on lay readers’ perceptions of text authors’ trustworthiness (Hendriks et al., 2015, 2016a, 2016b; König and Jucks, 2019; Merk and Rosman, 2019) or on text credibility (Bromme et al., 2015; Kerwer et al., 2021a; Scharrer et al., 2013, 2019; Thomm and Bromme, 2012; Winter et al., 2015). Although both concepts, author trustworthiness and text trustworthiness, capture trust, it is currently unclear whether they constitute a single dimension or two separate dimensions for lay readers.

4. The present study

Based on the aforementioned research gaps, we conducted an experimental online study that systematically varied the easiness and scientificness of four fictitious lay-friendly research summaries to determine influences on author and text trustworthiness. Using previous findings as a foundation, we preregistered 1 the following confirmatory hypotheses:

Hypothesis 1 (H1): There is a difference in participants’ ratings of author expertise (H1a), integrity (H1b), benevolence (H1c), and text trustworthiness (H1d) between different types of research summaries: expertise ratings, integrity ratings, benevolence ratings, and ratings of text trustworthiness are higher with regard to texts exhibiting high easiness compared to low easiness.

Hypothesis 2 (H2): There is a difference in participants’ ratings of author expertise (H2a), integrity (H2b), benevolence (H2c), and text trustworthiness (H2d) between different types of research summaries: expertise ratings, integrity ratings, benevolence ratings, and ratings of text trustworthiness are higher with regard to texts exhibiting high scientificness compared to low scientificness.

Furthermore, we investigated four exploratory research questions:

Research Question 1 (RQ1): Is there a relationship between participants’ ratings of text trustworthiness and their ratings of author trustworthiness (expertise, integrity, benevolence)?

This question addresses the issue of overlap between lay readers’ evaluations of author and text trustworthiness. Since individual beliefs on a subject are updated based on the assumed trustworthiness of a source and irrespective of claim veracity (Pilditch et al., 2020), a distinction between the two dimensions seems plausible.

Research Question 2 (RQ2): Is there an interaction effect between text easiness and text scientificness on participants’ ratings of author trustworthiness (expertise, integrity, benevolence, RQ2a) and ratings of text trustworthiness (RQ2b)?

It is currently unclear if the easiness and the scientificness effect interact to influence author and text trustworthiness. As we have outlined, increasing either easiness or scientificness could potentially reduce the impact of the other effect. We chose to examine the interaction between the effects separately for perceptions of author and text trustworthiness via an exploratory approach.

Research Question 3 (RQ3): Do individual epistemic justification beliefs influence the relationships reported in H1, H2, and RQ2?

As mentioned above, individual reader characteristics may influence the easiness and scientificness effect. In this context, it appears worthwhile to focus on readers’ epistemic justification beliefs. Based on previous literature, Ferguson et al. (2012, 2013) proposed three distinct belief dimensions: justification by authority (knowledge is justifiable based on expert accounts), personal justification (knowledge is justifiable based on personal experiences and opinions), and justification by multiple sources (knowledge is justifiable only by comparing and synthesizing sources). Taking these dimensions into account may help to clarify boundary conditions of the easiness and scientificness effect. For instance, lay readers with a stronger justification by authority belief may be more susceptible to markers of scientificness when it comes to trustworthiness. We again chose an exploratory approach for further examination.

Research Question 4 (RQ4): Does individual need for cognitive closure (NCC) influence the relationships specified in H1, H2, and RQ2?

Another reader characteristic that may interact with the text features of easiness and scientificness is NCC. NCC refers to the desire for a definite answer to a question, as opposed to an answer that leaves room for ambiguity (Kruglanski et al., 2010). A high NCC has been associated with a tendency to base judgments on easily obtainable information (Ford and Kruglanski, 1995) and with a desire to minimize group conflict (De Grada et al., 1999). An interaction of NCC with text easiness or scientificness therefore appears plausible: lay readers with a high NCC may be more inclined to rate a text as trustworthy if it presents information accessibly and less inclined to do so as features of scientific discourse increase. Because this relationship has not yet been probed, we again opted for exploratory analyses.

5. Materials and methods

Sample size calculations and power analysis

A priori sample size was estimated with G*Power 3.1 (Faul et al., 2009). For comparisons of interest, knowledge, and prior beliefs between the four summary conditions, as well as for exploratory examinations of the influence of epistemic justification beliefs and NCC, we selected “ANOVA: Fixed effects, special, main effects and interactions” as the test. For a small effect size (f = .10), α = .05, and a power of 1 − β = .90, this resulted in a recommended sample size of N = 1269. For a planned mixed-model analysis (random effect for participants) of the influence of text easiness, scientificness, and their interaction on author and text trustworthiness, we used an online tool by Westfall et al. (2014), specifying a counterbalanced design, an effect size of d = .5, four stimuli, a power of 1 − β = .90, a residual variance partitioning coefficient (VPC) of .4, a participant intercept VPC of .3, a stimulus intercept VPC of .1, a participant × stimulus VPC of .05, a participant slope VPC of .1, and a stimulus slope VPC of .05. The recommended sample size was N = 427.9. We selected a target sample size of Ntarget = 1272 to allow for a balanced participant distribution across the 12 quota and 24 experimental conditions.
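The choice of Ntarget = 1272 follows from simple arithmetic: the target must not fall below the ANOVA-based recommendation of N = 1269 and must split evenly into both the 12 quota cells and the 24 experimental conditions. The Python sketch below assumes that "balanced" means exactly equal cell sizes; the helper name `balanced_target` is hypothetical and not part of the authors' materials.

```python
import math


def balanced_target(recommended_n: int, *cell_counts: int) -> int:
    """Smallest N >= recommended_n that splits evenly into every cell count."""
    # Any N divisible by the least common multiple is divisible by each count.
    step = math.lcm(*cell_counts)
    return math.ceil(recommended_n / step) * step


quota_cells = 2 * 2 * 3          # gender x age group x educational attainment
experimental_conditions = 24
n_target = balanced_target(1269, quota_cells, experimental_conditions)
print(n_target)                              # 1272
print(n_target // quota_cells)               # 106 per quota cell
print(n_target // experimental_conditions)   # 53 per experimental condition
```

Because lcm(12, 24) = 24 and 24 × 52 = 1248 falls short of 1269, the next multiple, 24 × 53 = 1272, is the smallest balanced option.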

Sample

We conducted a within-subject experimental online study with a large German-speaking general population sample (Ntarget = 1272) recruited via the panel provider Respondi. Participants were invited by Respondi and received monetary compensation for completing the study. We specified the following quota conditions, aiming for an even participant distribution: gender (male vs female), age (18–44 vs 45 and older), and educational attainment (low = “Hauptschulabschluss” (lower secondary school certificate) vs medium = “Mittlere Reife” (intermediate secondary school certificate) vs high = “Abitur” (higher education entrance qualification)). This resulted in 2 × 2 × 3 = 12 quota conditions, with ntarget = 106 participants per condition. To be included in the study, participants had to (1) be at least 18 years old, (2) hold at least a secondary school degree, and (3) possess native-speaker proficiency in German.

The final dataset was slightly larger than originally intended for technical reasons. In total, N = 1709 participants began the study and N = 1467 participants completed it. For complete cases, the number of participants in the 12 quota conditions ranged from 119 to 131. On average, participants were Mage = 46.94 years old (Min = 18, Max = 88, Mdn = 45, SD = 15.19).

Design

The study used a within-subject repeated-measures design and varied three independent variables: research summary easiness (high vs low), scientificness (high vs low), and research summary topic (four different texts, see below). Participants read all summaries in a fully randomized order. The summaries were written by the study authors and presented the results of four journal articles from the fields of health psychology, work and organizational psychology, clinical psychology, and educational psychology. 2 Crossing the summaries with easiness and scientificness resulted in a 2 × 2 repeated-measures design with pseudo-randomized condition assignment (see Supplemental Material 1). Each participant’s condition assignment was determined at the beginning of the experiment.
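How the 24 experimental conditions were built is documented in Supplemental Material 1 rather than here. One construction that yields exactly 24 is to map the four easiness × scientificness cells onto the four summary topics in every possible way (4! = 24), so that each participant sees each cell exactly once. The Python sketch below assumes this reading; the topic labels, the `assign` helper, and its round-robin balancing rule are hypothetical illustrations, not the authors' procedure.

```python
import itertools
import random

# Illustrative topic labels (not the study's internal names)
TOPICS = ["health", "work_org", "clinical", "educational"]
# The four within-subject cells as (easiness, scientificness) pairs
CELLS = list(itertools.product(["low", "high"], repeat=2))
# Every one-to-one mapping of cells to topics: 4! = 24 candidate conditions
CONDITIONS = [dict(zip(TOPICS, perm)) for perm in itertools.permutations(CELLS)]


def assign(participant_index: int, rng: random.Random) -> list[tuple[str, tuple[str, str]]]:
    """Pick a condition and return (topic, cell) pairs in a random reading order."""
    # Hypothetical balancing rule: cycle through conditions so each gets equal numbers
    condition = CONDITIONS[participant_index % len(CONDITIONS)]
    order = list(condition.items())
    rng.shuffle(order)  # randomize the reading order of the four summaries
    return order


print(len(CONDITIONS))  # 24
print(assign(0, random.Random(1)))
```

Under this scheme every condition pairs each topic with a different cell, which keeps topic and manipulation unconfounded across participants.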

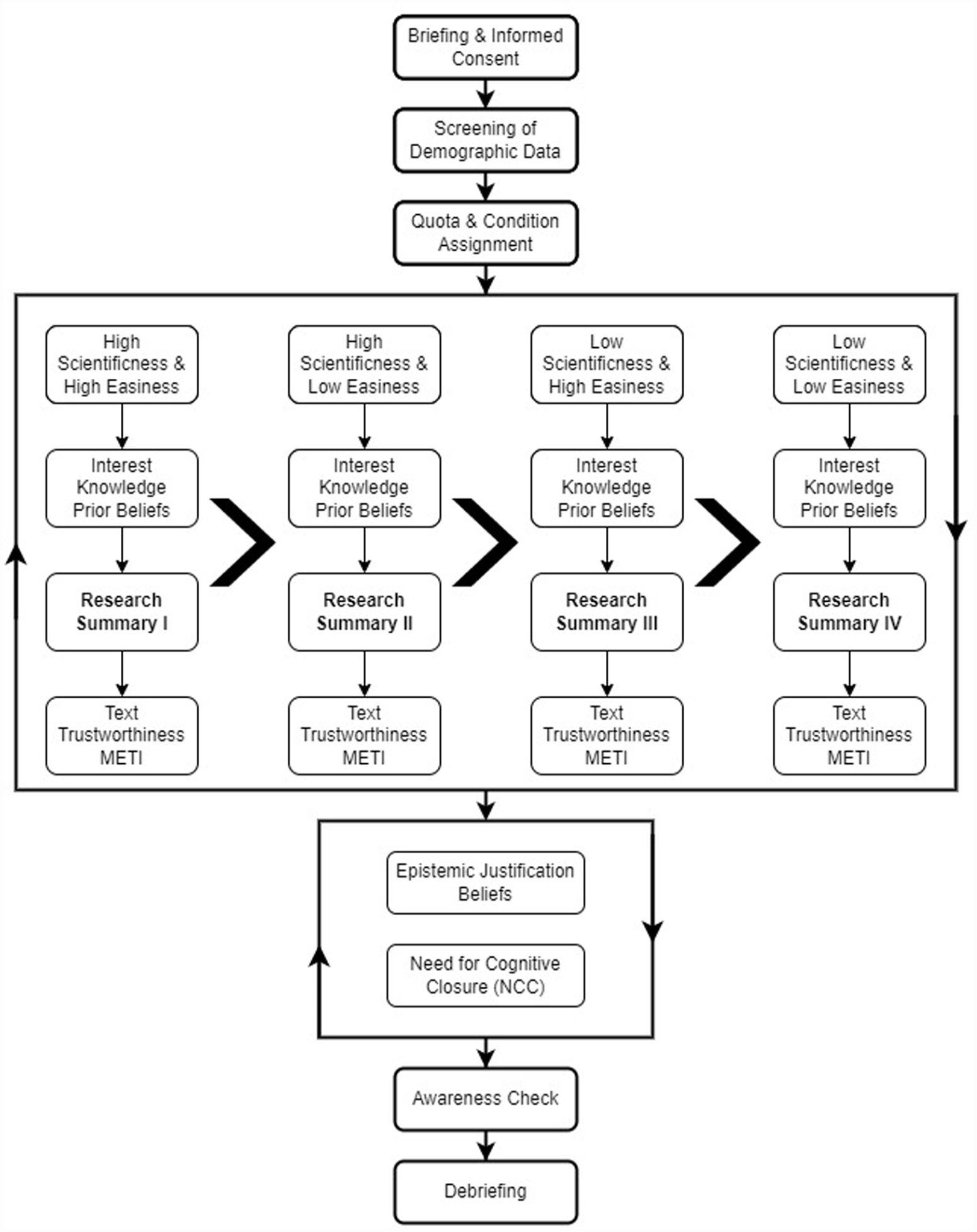

Procedure

The study was carried out online via the survey software Unipark. Participants were first given a cover story; our true goal of examining the easiness and scientificness effects was initially concealed to avoid demand characteristics and additional processing effects. We then obtained their informed consent, collected demographic information, and verified that all inclusion criteria were met. Next, participants were allocated to one of the 24 experimental conditions and read all four research summaries in a randomized order. For each summary, they were first asked about their interest in, knowledge of, and prior beliefs about the presented topic. They then read the summary for a minimum of 90 seconds, with textual features (text easiness and scientificness) determined by the experimental condition. Subsequently, participants rated text easiness, text scientificness, text trustworthiness, and author trustworthiness. After rating all summaries, participants completed a questionnaire on their epistemic justification beliefs, a short NCC scale, and an awareness check. Finally, they were debriefed, received contact information, and were redirected to Respondi for compensation. Figure 1 provides an overview of the experimental design. Mean completion time was approximately 23.5 minutes. The study was approved by the ethics committee of Trier University (EK Nr. 09/2022) and preregistered at PsychArchives before data collection: https://doi.org/10.23668/psycharchives.5382.

Figure 1. Illustration of the experimental procedure. Participants received summaries covering all four possible combinations of easiness and scientificness in a randomized order. After rating text and author trustworthiness for all summaries, data on participants’ epistemic justification beliefs and NCC were collected in a randomized order. The experiment ended with an awareness check and a debriefing.

6. Variables

Research summaries

The four research summaries were based on psychological journal articles and presented to participants as works by female psychological researchers. In reality, they had all been written by the study authors. All summaries varied in easiness and scientificness, each with two levels (low vs high). Summaries in the low-easiness condition employed long sentences, subordinate clauses, double negatives, and an unstructured presentation without paragraphs. In contrast, summaries in the high-easiness condition used short sentences, avoided subordinate clauses, replaced complicated words with shorter synonyms, and structured information using paragraphs and bullet points. To verify the intended readability differences, we applied two readability measures: the Flesch Reading Ease score (Flesch, 1948) and the Lix (Björnson, 1968). For low easiness, we aimed for a Flesch score between 30 and 50 and a Lix score greater than 60. For high easiness, we aimed for a Flesch score of 50 to 60 and a Lix score of 50 to 60.
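Both readability indices are simple functions of word, sentence, syllable, and long-word counts. The Python sketch below computes them from raw counts and checks a draft against the target ranges stated above. It uses the original English Flesch formula by default; because the summaries were German, an optional flag applies Amstad's German adaptation, and which variant the authors actually used is an assumption here. Syllable and long-word counting (Lix counts words longer than six letters) is left to the caller, and all counts in the usage lines are made up.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int, german: bool = False) -> float:
    """Flesch Reading Ease from raw counts; higher scores mean easier text."""
    asl = words / sentences   # average sentence length (words per sentence)
    asw = syllables / words   # average syllables per word
    if german:
        # Amstad's German adaptation; whether the study used it is an assumption
        return 180 - asl - 58.5 * asw
    return 206.835 - 1.015 * asl - 84.6 * asw  # Flesch (1948), English


def lix(words: int, sentences: int, long_words: int) -> float:
    """Lix: words per sentence plus the percentage of words longer than six letters."""
    return words / sentences + 100 * long_words / words


def easiness_band(flesch: float, lix_score: float) -> str:
    """Classify a draft against the target ranges given in the text."""
    if 30 <= flesch <= 50 and lix_score > 60:
        return "low easiness"
    if 50 <= flesch <= 60 and 50 <= lix_score <= 60:
        return "high easiness"
    return "outside both target ranges"


# Hypothetical counts for two drafts of the same summary
print(easiness_band(flesch_reading_ease(100, 4, 170), lix(100, 4, 40)))  # low easiness
print(easiness_band(flesch_reading_ease(100, 8, 160), lix(100, 8, 40)))  # high easiness
```

Checking both indices jointly, as the text describes, guards against a draft that passes one formula only because of an unusual syllable or long-word profile.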

For scientificness, summaries in the low condition used evaluative language (e.g. “This impressive study”), omitted references and research method descriptions, presented results descriptively, and used exclamations (e.g. “Don’t you think?!”). The high-scientificness condition was characterized by neutral language, references to other journal articles, more precise method descriptions, and presentation of results supported by statistics. The original German summaries and English translations are provided in Supplemental Material 2.

Trustworthiness

After each summary, participants rated perceived text trustworthiness (“For me, the summary of the topic XYZ seemed . . .”) on a Likert-type scale ranging from 1 (not credible at all) to 8 (very credible). Furthermore, we used the Muenster Epistemic Trustworthiness Inventory (METI; Hendriks et al., 2015) to obtain ratings of author trustworthiness. Asking participants for their assessment of the summary author (“With reference to her insights, the scientist whose summary on the topic XYZ I just read seems to be . . .”), the 14-item METI probes perceptions of expertise (6 items), integrity (4 items), and benevolence (4 items). Items use a semantic differential format, originally allowing for ratings between 1 and 7. However, participants in the present study only received a scale ranging from 1 (e.g. unintelligent) to 5 (e.g. intelligent) due to a programming error. 3 Implications of this are discussed in the Limitations section. Scale reliability determined by Cronbach’s alpha (α) was nevertheless excellent, with α = .95 for expertise, α = .93 for integrity, and αs = .91–.93 for benevolence across summaries. Confirmatory factor analysis (CFA) also confirmed the METI’s structure of three distinct trust dimensions. 4
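Cronbach's alpha, used for all reliability estimates in this section, is α = k/(k − 1) · (1 − Σσᵢ² / σ²ₓ), where k is the number of items, σᵢ² the variance of item i, and σ²ₓ the variance of respondents' sum scores. The minimal Python sketch below implements this formula with sample variances; it is illustrative only, as the authors' R code and packages are listed in Supplemental Material 3.

```python
from statistics import variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha; `items` holds one list of scores per item (same respondents, same order)."""
    k = len(items)
    item_var_sum = sum(variance(item) for item in items)  # sample variances (n - 1)
    totals = [sum(scores) for scores in zip(*items)]      # each respondent's sum score
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Two toy items answered by four respondents (made-up data)
print(round(cronbach_alpha([[1, 2, 3, 4], [2, 1, 4, 3]]), 3))  # 0.75
```

Perfectly redundant items drive alpha to 1, while items whose variances are large relative to the sum-score variance pull it down.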

Epistemic justification beliefs

Epistemic justification beliefs were assessed with a questionnaire developed by Klopp and Stark (2016). It consists of nine items, with three items each targeting justification by authority, personal justification, and justification by multiple sources. Answers were given on a Likert-type scale ranging from 1 (entirely false) to 6 (entirely correct). Reliability analyses yielded acceptable to good reliability, with α = .82 for justification by authority, α = .78 for personal justification, and α = .77 for justification by multiple sources. The results of CFA supported three separate justification belief dimensions (see Note 4).

Need for cognitive closure

Participants’ NCC was measured with a German short NCC scale (Schlink and Walther, 2007). It consists of 16 items such as “I do not like unforeseeable situations,” and participants provided answers on a Likert-type rating scale from 1 (completely disagree) to 6 (completely agree). A Cronbach’s alpha of α = .78 suggested adequate scale reliability.

Covariates and manipulation checks

Data on multiple covariates were collected. Participants’ prior interest, topic knowledge, and topic beliefs were measured before each summary. For prior interest, participants answered a single item (“I personally find the topic of XYZ interesting”) on a scale from 1 (not interesting at all) to 8 (very interesting). Similarly, one self-assessment item (“Concerning the topic XYZ, I have . . .”) assessed prior topic knowledge on a scale from 1 (no prior knowledge) to 8 (profound prior knowledge). In addition, we assessed readers’ subjective beliefs about each summary topic with two items, one of which was always reverse-worded. The items expressed strong attitudes regarding the presented topics (e.g. “I believe that exercise outside is one of the best methods to reduce stress”), with responses recorded on a Likert-type scale ranging from 1 (strongly disagree) to 10 (strongly agree).
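The text does not state how the reverse-worded belief item was scored. A common approach, assumed in this Python sketch, is to mirror the response on the 1 to 10 scale (new = min + max − old) and then average it with the regularly worded item; `reverse_score` and `prior_belief` are hypothetical helpers.

```python
def reverse_score(response: int, scale_min: int = 1, scale_max: int = 10) -> int:
    """Mirror a response on a bounded Likert-type scale (1 <-> 10, 2 <-> 9, ...)."""
    if not scale_min <= response <= scale_max:
        raise ValueError("response outside the scale")
    return scale_min + scale_max - response


def prior_belief(regular_item: int, inverted_item: int) -> float:
    """Average the two belief items after reverse-scoring the inverted one (assumed scoring)."""
    return (regular_item + reverse_score(inverted_item)) / 2


print(prior_belief(8, 3))  # 8.0: a 3 on the inverted item counts as an 8
```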

Finally, as a manipulation check, after reading each summary, participants provided their subjective ratings of text easiness (“I found the summary of the topic XYZ that I just read . . .”) on a scale from 1 (very difficult to read) to 8 (very easy to read). Subjective text scientificness was similarly assessed (“I found the summary of topic XYZ that I just read . . .”: 1 (very unscientific) to 8 (very scientific)).

Awareness check

We used an awareness check to confirm that participants worked through the study attentively. It was placed at the end of the study to minimize its impact on response behavior. Participants were presented with a short fictional story about their behavior during a famine (see Gamez-Djokic and Molden, 2016). Midway through, they read that the story was included solely to verify attention and that the following question should be left unanswered. Next, a question with nine response options on a Likert-type scale ranging from 1 (absolutely not) to 9 (absolutely) appeared. The awareness check was coded as passed when participants selected no answer and clicked continue; otherwise, it was coded as failed. In total, n = 1144 participants (77.98%) passed the awareness check.
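The pass/fail rule can be written down directly: any answer to the trap question counts as a failure. The Python sketch below encodes that rule and recomputes the pass rate from the reported counts (1144 of 1467 completers). The function names and the use of `None` for "no answer" are illustrative assumptions.

```python
from typing import Optional


def awareness_check(response: Optional[int]) -> str:
    """Pass only if the trap question (1-9 scale) was left unanswered."""
    return "pass" if response is None else "fail"


def pass_rate(responses: list[Optional[int]]) -> float:
    """Percentage of participants who passed."""
    passed = sum(awareness_check(r) == "pass" for r in responses)
    return 100 * passed / len(responses)


# 1144 unanswered trap questions, 323 answered ones (the answer value is arbitrary)
responses = [None] * 1144 + [5] * (1467 - 1144)
print(round(pass_rate(responses), 2))  # 77.98
```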

Statistical modeling

Data were analyzed with the statistical software R, version 4.2.1 (R Core Team, 2022). To test H1 and H2 and to address RQ2–RQ4, we computed mixed models. 5 Text easiness and scientificness were coded with the low conditions as reference categories, and we computed partial R² statistics for each model predictor. All statistical tests used a significance level of α = .05. All analyses and the packages used are reported in Supplemental Material 3.
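Coding the low conditions as reference categories (treatment or dummy coding) fixes how the coefficients read. With an interaction term in the model, the intercept corresponds to the low-easiness/low-scientificness cell, each main-effect coefficient is the high-vs-low difference while the other factor is at its low level, and the interaction coefficient is the additional change when both are high. The Python sketch below builds the fixed-effect columns for one rating before any standardization; `design_row` and the column names are hypothetical.

```python
def design_row(easiness: str, scientificness: str) -> dict[str, int]:
    """Fixed-effect columns with the low conditions as reference (treatment coding)."""
    e = 1 if easiness == "high" else 0
    s = 1 if scientificness == "high" else 0
    return {
        "intercept": 1,
        "easiness_high": e,
        "scientificness_high": s,
        "easiness_x_scientificness": e * s,  # interaction term (as explored in RQ2)
    }


for cell in [("low", "low"), ("high", "low"), ("low", "high"), ("high", "high")]:
    print(cell, design_row(*cell))
```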

7. Results

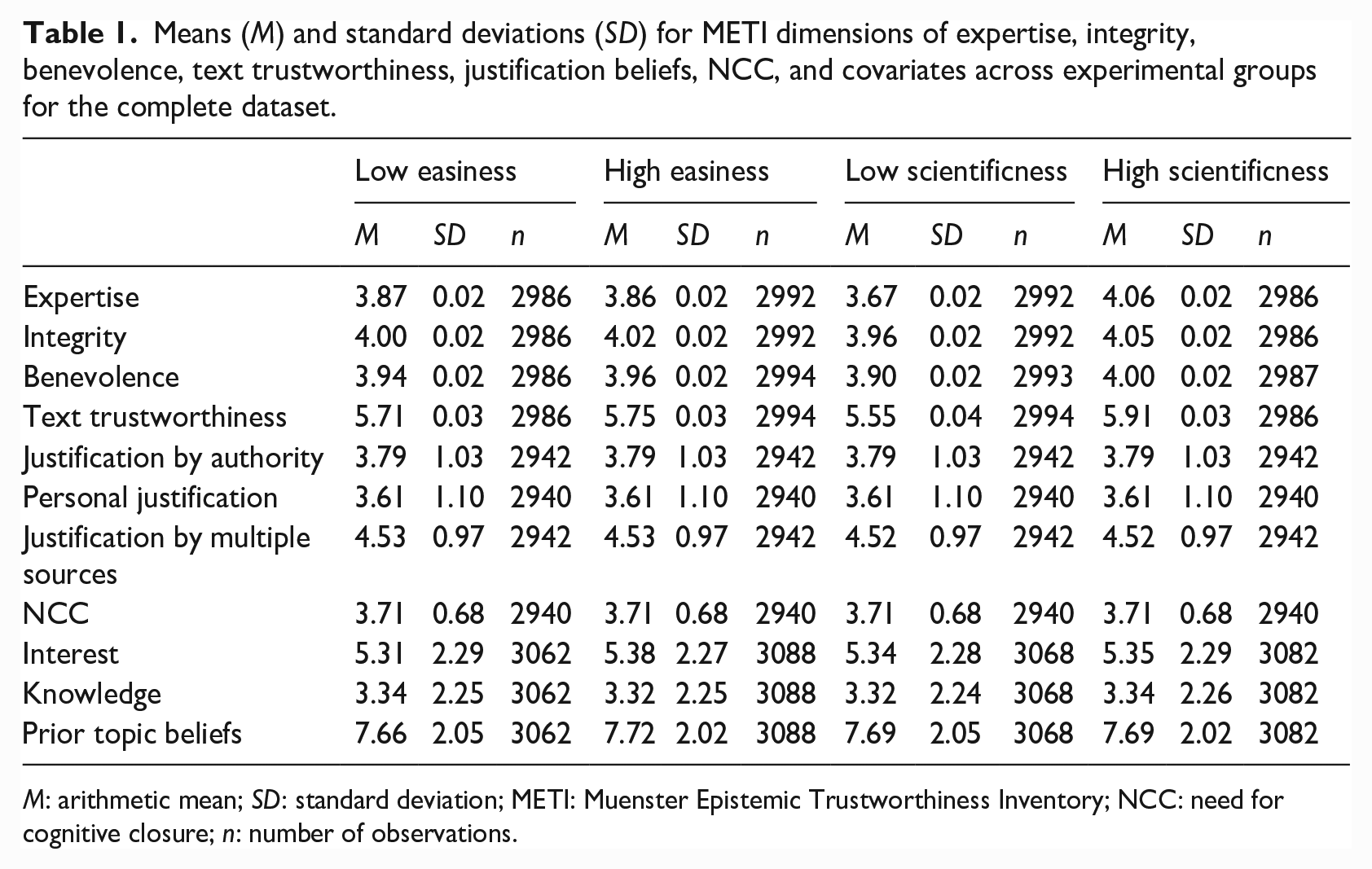

Descriptive statistics for author and text trustworthiness, epistemic justification beliefs, NCC, and covariates are provided in Table 1. Given the scope of our analyses, we report findings as follows: results of the analyses addressing H1 and H2 are presented in detail, whereas reports of the analyses addressing RQ1–RQ4 emphasize key findings only. For all analyses using complex mixed models (i.e. H1, H2, RQ2, RQ3, RQ4), model parameters were standardized using R’s scale function. We ran all analyses twice, first using data from all participants and then using data from those who passed the awareness check. The differences were minuscule; therefore, the following analyses are based on the subset of participants who passed the awareness check. Results for the complete dataset are available in Supplemental Material 3.

Table 1. Means (M) and standard deviations (SD) for METI dimensions of expertise, integrity, benevolence, text trustworthiness, justification beliefs, NCC, and covariates across experimental groups for the complete dataset.

M: arithmetic mean; SD: standard deviation; METI: Muenster Epistemic Trustworthiness Inventory; NCC: need for cognitive closure; n: number of observations.
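The standardization step described above relies on R's scale function, which by default centers each variable on its mean and divides by its sample standard deviation (n − 1 denominator). A minimal Python equivalent, shown only to make that transformation concrete, is:

```python
from statistics import mean, stdev


def scale(values: list[float]) -> list[float]:
    """z-standardize like R's scale() with defaults: center on the mean, divide by the sample SD."""
    m, sd = mean(values), stdev(values)  # stdev uses n - 1, as R's sd() does
    return [(v - m) / sd for v in values]


print(scale([1, 2, 3]))  # [-1.0, 0.0, 1.0]
```

After this transformation, a regression weight expresses the expected change in the outcome, in standard deviations, per standard deviation of the predictor.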

Manipulation check and covariates

For each manipulation check (with subjective easiness or subjective scientificness as the dependent variable), we defined three nested regression models. The first model served as a baseline. The second model added the experimental condition (either the easiness or the scientificness manipulation) and research summary type as fixed predictors and participant identifier (ID) as a random intercept. The third model additionally included the interaction between experimental condition and research summary type. For subjective easiness, the second model fit better than the baseline model, χ²(4) = 287.694, p < .001, while the third model provided no further improvement (p = .232). For subjective scientificness, the third model fit better than the second model, χ²(3) = 15.981, p = .001. The main effects of the easiness (b = .396, SE = .048, p < .001) and scientificness conditions (b = 1.168, SE = .101, p < .001) were significant. 6 Texts in the high-easiness condition (M = 5.963, SD = 1.960) were rated as easier overall than texts in the low-easiness condition (M = 5.571, SD = 2.076), and similar results emerged for scientificness (high scientificness: M = 5.975, SD = 1.630 vs low scientificness: M = 4.654, SD = 2.071). Thus, our manipulations of text easiness and scientificness were successful. The easiness and scientificness conditions did not differ regarding prior interest, knowledge, or topic beliefs (see Supplemental Material 3; ps > .152 for all model comparisons).
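The model comparisons reported above are likelihood ratio tests: twice the difference in log-likelihoods between two nested models is referred to a chi-square distribution whose degrees of freedom equal the number of added parameters (for example, 1 for the condition plus 3 for the four-level summary type gives χ²(4)). The dependency-free Python sketch below uses closed-form chi-square tail probabilities for integer df. It is illustrative only (the authors' R code is in Supplemental Material 3), and the log-likelihoods in the usage line are placeholders, not study results.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with integer df (closed form)."""
    if x <= 0:
        return 1.0
    half = x / 2
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{i < df/2} (x/2)^i / i!
        term, total = 1.0, 1.0
        for i in range(1, df // 2):
            term *= half / i
            total += term
        return math.exp(-half) * total
    # Odd df: erfc part plus sqrt(2/pi) * exp(-x/2) * sum_i x^((2i-1)/2) / (1*3*...*(2i-1))
    total = math.erfc(math.sqrt(half))
    term = math.sqrt(2 * x / math.pi) * math.exp(-half)  # i = 1 term
    for i in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * i + 1)
    return total


def lr_test(loglik_reduced: float, loglik_full: float, df_diff: int) -> tuple[float, float]:
    """Likelihood ratio statistic and its chi-square p value for nested models."""
    stat = 2 * (loglik_full - loglik_reduced)
    return stat, chi2_sf(stat, df_diff)


stat, p = lr_test(-1000.0, -990.0, 3)  # placeholder log-likelihoods
print(stat, p)  # stat is 20.0
```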

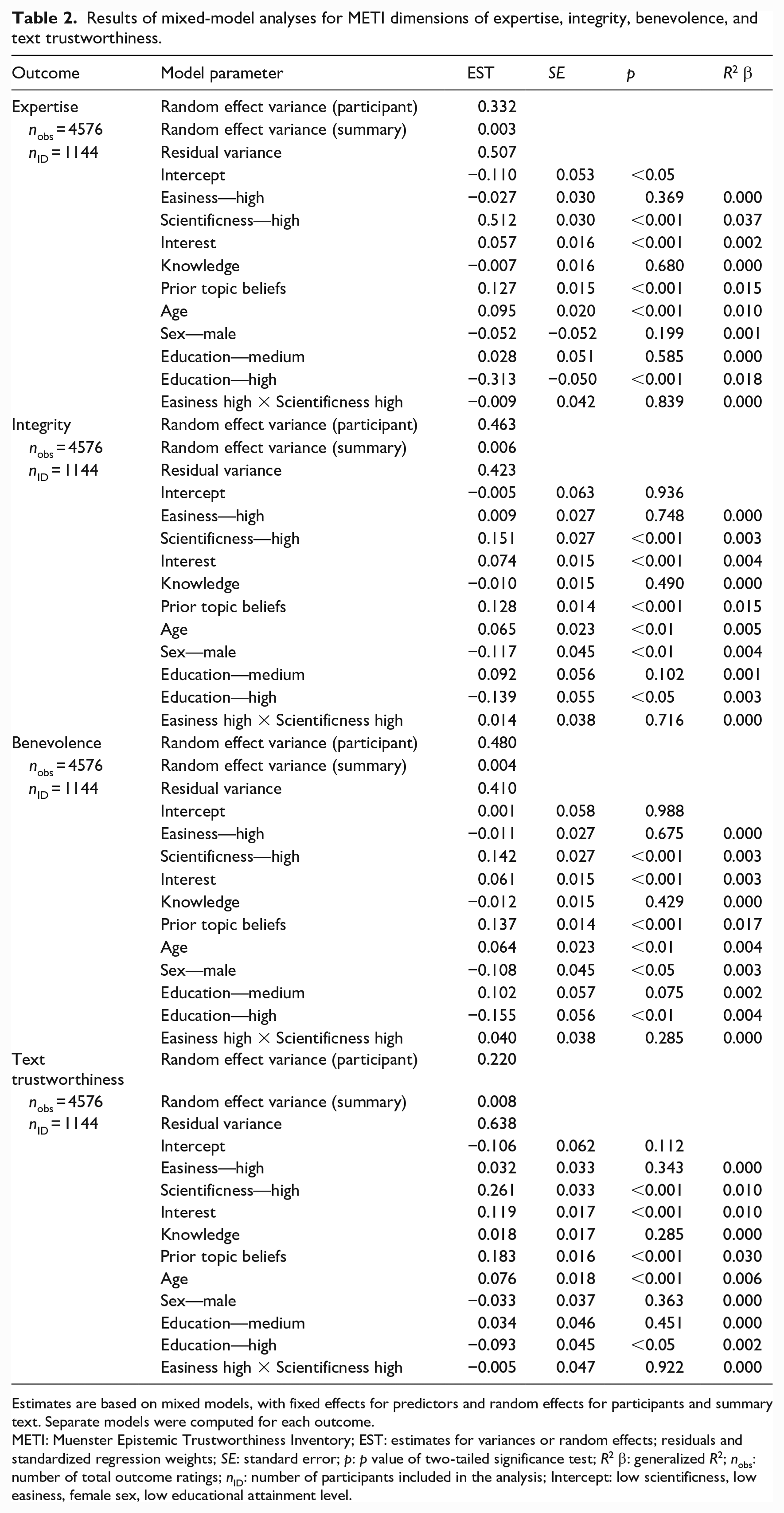

H1 and H2: Effect of easiness and scientificness on trustworthiness

Mixed-model analyses were conducted to determine the influence of text easiness, scientificness, and the covariates on the outcomes. Predictors were introduced stepwise. Results for the final mixed models are reported in Table 2. In the models for both author trustworthiness (i.e. expertise, integrity, benevolence) and text trustworthiness, summary easiness did not significantly predict higher trust (β = –.027 to .032, all ps > .343). In contrast, higher summary scientificness led to significant increases in ratings of METI Expertise (β = .512, SE = .030, p < .001, R² = .037), METI Integrity (β = .151, SE = .027, p < .001, R² = .003), METI Benevolence (β = .142, SE = .027, p < .001, R² = .003), and text trustworthiness (β = .261, SE = .033, p < .001, R² = .010). Shorter sentences, a simpler sentence structure, or structuring elements in the summary did not influence readers’ evaluations of trustworthiness. However, readers’ evaluations were positively influenced by summary features such as references, detailed research method descriptions, statistics, and a neutral tone. H1a–H1d are thus not supported, whereas H2a–H2d are supported.

Table 2. Results of mixed-model analyses for METI dimensions of expertise, integrity, benevolence, and text trustworthiness.

Estimates are based on mixed models, with fixed effects for predictors and random effects for participants and summary text. Separate models were computed for each outcome.

METI: Muenster Epistemic Trustworthiness Inventory; EST: estimates (variances of random effects and residuals; standardized regression weights for fixed effects); SE: standard error; p: p value of two-tailed significance test; R²β: generalized R²; nobs: number of total outcome ratings; nID: number of participants included in the analysis; Intercept: low scientificness, low easiness, female sex, low educational attainment level.

RQ1: Relationship between text trustworthiness and author trustworthiness

The overlaps between participants’ ratings of text and author trustworthiness were computed via Pearson’s product-moment correlations. For all summaries, the dimensions of expertise, r(1142) = .660–.733, p < .001; integrity, r(1142) = .591–.649, p < .001; and benevolence, r(1142) = .551–.643, p < .001, were significantly positively correlated with text trustworthiness. High ratings on the METI thus coincided with high text trustworthiness and low ratings on this measure coincided with low text trustworthiness.

RQ2: Interaction effect between easiness and scientificness

The models defined to test H1 and H2 were also used to explore potential interactions between text easiness and scientificness on the trustworthiness outcomes by including an additional interaction term (see Table 2). This interaction failed to reach significance in all models (β = –.009 to –.040, all ps > .285). Thus, we found no evidence that text easiness and scientificness jointly influenced perceptions of author or text trustworthiness.

RQ3: Individual epistemic justification beliefs

The impact of individual epistemic justification beliefs on text and author trustworthiness and their interaction with text easiness and scientificness were examined with mixed models.

Because only text scientificness emerged as a significant predictor of text and author trustworthiness, we focus on its interactions with justification beliefs in the following.

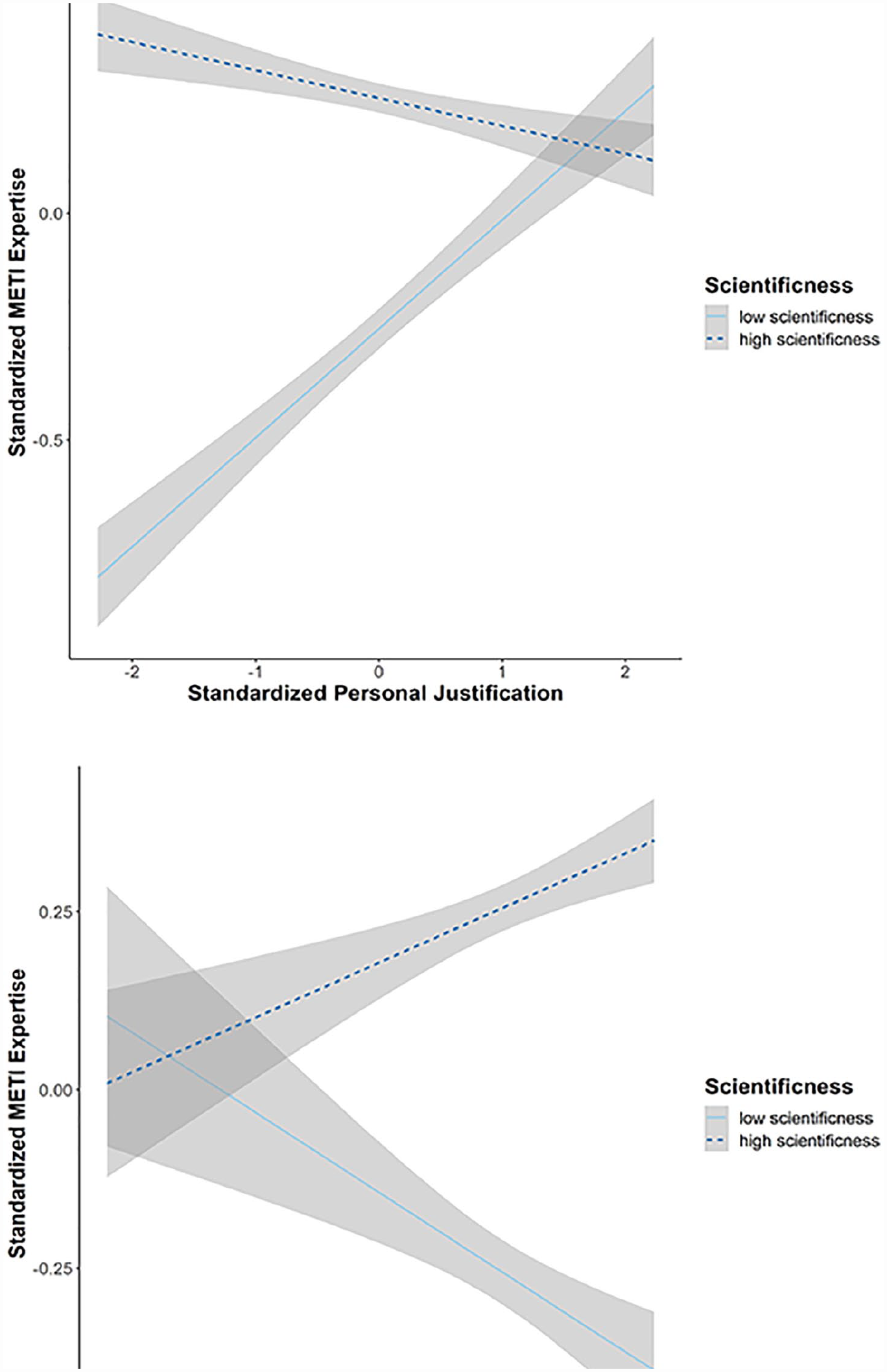

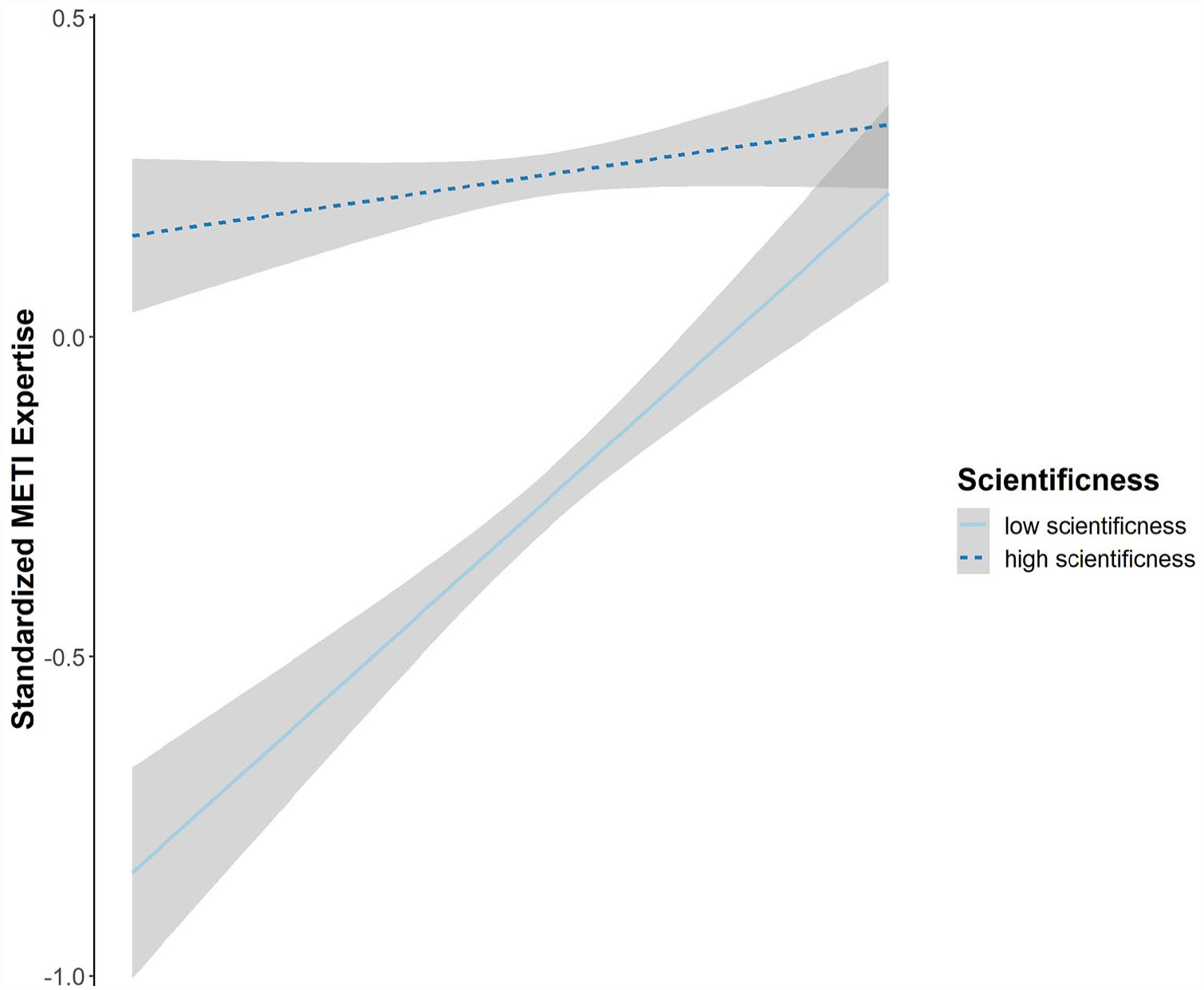

A significant interaction between justification by authority and text scientificness emerged only for METI Expertise (β = –.044, SE = .021, p < .05, R² < .001); for all other outcomes, this interaction was nonsignificant (β = –.019 to –.030, all ps > .115). For personal justification, significant interactions with text scientificness emerged for METI Expertise (β = –.305, SE = .020, p < .001, R² = .007), METI Integrity (β = –.160, SE = .019, p < .001, R² = .007), METI Benevolence (β = –.156, SE = .019, p < .001, R² = .007), and text trustworthiness (β = –.279, SE = .023, p < .001, R² = .022). Finally, justification by multiple sources interacted significantly with text scientificness for METI Expertise (β = .145, SE = .021, p < .001, R² = .006), METI Integrity (β = .079, SE = .019, p < .001, R² = .002), METI Benevolence (β = .085, SE = .019, p < .001, R² = .002), and text trustworthiness (β = .096, SE = .024, p < .001, R² = .003).

Figure 2 illustrates the interactions of text scientificness with personal justification beliefs and with justification by multiple sources for METI Expertise; similar patterns emerged for the other outcome dimensions (see Supplemental Material 3). In general, participants with low personal justification beliefs perceived both the summary author and the summary itself as more trustworthy when a more scientific writing style was used, but these differences diminished among participants with high personal justification beliefs. The opposite pattern emerged for justification by multiple sources: participants with a low belief in justification by multiple sources showed no trustworthiness differences between summaries of low and high scientificness, whereas participants with a high belief in justification by multiple sources rated authors and texts as more trustworthy in the high-scientificness condition.

Figure 2. Interactions of text scientificness with personal justification beliefs (upper panel) and with justification by multiple sources (lower panel) for METI Expertise. Scores on the x and y axes are standardized (M = 0, SD = 1). The gray area represents a 95% CI. With increasing personal justification beliefs, readers ascribed more expertise to researchers in the “low-scientificness” condition. With increasing justification by multiple-sources beliefs, readers rated researchers’ expertise as higher in the “high-scientificness” condition.
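The interaction coefficients can be read as changes in the scientificness effect along the standardized moderator. In a simplified model (covariates and random effects omitted; Sci assumed to be coded 0 = low and 1 = high; J a standardized justification belief score; symbols are ours), the predicted difference between high- and low-scientificness summaries at a given level of J is:

```latex
\hat{y} = b_0 + b_S\,\mathrm{Sci} + b_J\,J + b_{SJ}\,(\mathrm{Sci} \times J)
\quad\Longrightarrow\quad
\hat{y}\big|_{\mathrm{Sci}=1} - \hat{y}\big|_{\mathrm{Sci}=0} = b_S + b_{SJ}\,J
```

A negative b_SJ, as reported for personal justification (β = –.305 for METI Expertise), means that the gap between the two conditions shrinks as J increases, matching the converging lines in the upper panel of Figure 2. A positive b_SJ, as reported for justification by multiple sources (β = .145), means that the gap grows with J, as in the lower panel.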

RQ4: Need for cognitive closure

We conducted mixed-model analyses to investigate whether individual NCC interacted with text easiness or scientificness to influence author or text trustworthiness. As before, predictors were introduced stepwise. The interaction between individual NCC and text scientificness significantly predicted METI Expertise (β = –.135, SE = .021, p < .001, R² = .005), METI Integrity (β = –.088, SE = .019, p < .001, R² = .002), METI Benevolence (β = –.086, SE = .019, p < .001, R² = .002), and text trustworthiness (β = –.111, SE = .024, p < .001, R² = .004). Figure 3 depicts this interaction for METI Expertise; illustrations for the other outcome dimensions are provided in Supplemental Material 3. Participants with low NCC rated both the summary author and the summary as significantly more trustworthy when a highly scientific writing style was used. These differences became less pronounced with increasing NCC, primarily because trustworthiness ratings rose for summaries of low scientificness. For participants with high NCC, no trustworthiness differences emerged between the two scientificness conditions.

Figure 3. Interaction between scientificness and individual NCC for METI Expertise. Scores on the x and y axes are standardized (M = 0, SD = 1). The gray area represents a 95% CI. As readers’ NCC increases, they ascribe more expertise to researchers in the “low-scientificness” condition.
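The pattern described for NCC can be made concrete by computing predicted values in each scientificness condition at several moderator levels. In the sketch below, all coefficient values are hypothetical, chosen only to mimic the qualitative pattern in Figure 3 (a positive NCC slope in the low-scientificness condition combined with a negative interaction); they are not the paper’s estimates, and the class name `ModeratedModel` is ours. Covariates and random effects are omitted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeratedModel:
    """Fixed-effects part of a hypothetical moderation model:
    y = b0 + b_sci*Sci + b_mod*M + b_int*Sci*M, with Sci coded 0 = low, 1 = high."""
    b0: float
    b_sci: float
    b_mod: float
    b_int: float

    def predict(self, sci: int, moderator_z: float) -> float:
        """Predicted outcome for one condition at a standardized moderator level."""
        return (self.b0 + self.b_sci * sci + self.b_mod * moderator_z
                + self.b_int * sci * moderator_z)

    def simple_slope(self, moderator_z: float) -> float:
        """High-minus-low scientificness difference at a given moderator level."""
        return self.b_sci + self.b_int * moderator_z


# HYPOTHETICAL coefficients chosen only to mimic the qualitative pattern in Figure 3;
# they are not the estimates reported in the paper.
model = ModeratedModel(b0=0.0, b_sci=0.5, b_mod=0.1, b_int=-0.135)

for ncc in (-1.0, 0.0, 1.0):
    low = model.predict(0, ncc)
    high = model.predict(1, ncc)
    print(f"NCC z={ncc:+.0f}: low={low:+.3f} high={high:+.3f} gap={model.simple_slope(ncc):.3f}")
```

With these illustrative values, the high-minus-low gap shrinks from .635 at NCC = −1 SD to .365 at +1 SD, and most of that change comes from the low-scientificness predictions rising (from −.10 to +.10) rather than the high-scientificness predictions falling (from .535 to .465), which is the kind of pattern described above.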

Exploratory analysis

After testing H1, H2, and RQ2, we reran the same mixed models, replacing the categorical predictors of text easiness and scientificness with participants’ subjective easiness and scientificness ratings from the manipulation check.⁷ In these models, the effects of easiness (all βs > .081, ps < .001, R²s > .081), scientificness (all βs > .377, ps < .001, R²s > .166), and their interaction (all βs > .028, ps < .001, R²s > .002) all reached significance when predicting the METI dimensions and text trustworthiness. The implications of these findings are considered in more detail in the “Discussion” section.
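When the condition codes are replaced by continuous ratings, the easiness × scientificness interaction becomes the product of two continuous predictors. A common practice, assumed here because the text does not state how the product term was formed, is to standardize each rating before multiplying, so that each main effect refers to the average level of the other predictor and the product is less collinear with its components. The sketch uses grand-mean standardization across all ratings (person-mean centering would be an alternative for repeated measures), and the ratings are hypothetical.

```python
import statistics


def zscore(values):
    """Standardize values to M = 0 and SD = 1 (using the sample SD)."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


def interaction_predictors(easiness_ratings, scientificness_ratings):
    """Return (easiness_z, scientificness_z, easiness_z * scientificness_z) per rating pair."""
    if len(easiness_ratings) != len(scientificness_ratings):
        raise ValueError("ratings must be paired")
    ease_z = zscore(easiness_ratings)
    sci_z = zscore(scientificness_ratings)
    return [(e, s, e * s) for e, s in zip(ease_z, sci_z)]


# HYPOTHETICAL manipulation-check ratings for six reader-summary pairs (not study data)
rows = interaction_predictors([2, 3, 4, 5, 3, 4], [1, 2, 2, 4, 5, 3])
for ease, sci, product in rows:
    print(f"{ease:+.2f} {sci:+.2f} {product:+.2f}")
```

Each row would then enter the mixed model in place of the dummy-coded condition variables, with the third column serving as the interaction term.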

8. Discussion

The present study aimed to investigate the easiness and scientificness effects, as well as their potential interaction, on lay readers’ judgments of trustworthiness. In addition, we examined the overlap between author and text trustworthiness and whether individual epistemic justification beliefs and NCC moderate the easiness and scientificness effects.

Main findings

Overall, our findings support the scientificness effect (H2). In line with prior research (Bromme et al., 2015; Thomm and Bromme, 2012), this highlights the importance of a scientific communication style for building trustworthiness. Combined with the concept of “second-hand evaluations” (Bromme and Goldman, 2014), our findings suggest that lay readers use text scientificness as a cue for drawing conclusions about researchers’ qualifications and, ultimately, about their trustworthiness.

Crucially, our study did not replicate the easiness effect (H1) and yielded no evidence for an interaction between text easiness and scientificness on author and text trustworthiness (RQ2). How can these findings, which contrast with multiple previous studies (Scharrer et al., 2012, 2013, 2014, 2017, 2019), be explained? First, previous research primarily varied easiness through scientific jargon. While jargon considerably affects the easiness of text material, it could also be considered a feature of scientificness (Hirst, 2003) and thus a possible confound. Because we aimed to examine easiness and scientificness separately, we decided against using jargon to vary easiness. This decision may have resulted in smaller effect sizes than in previous studies and contributed to the nonsignificant easiness effect.

A second point concerns the easiness effect and the easiness × scientificness interaction that emerged in the exploratory analyses of participants’ subjective ratings. Findings from Tolochko et al. (2019) may help make sense of this: examining syntactic and semantic complexity simultaneously, they showed that only variations in semantic complexity significantly affected readers’ assessments of text complexity. Our study primarily varied easiness on a syntactic level (i.e. via readability and structuring), which may explain why we found no effect of text easiness on trustworthiness. Participants’ subjective appraisals of easiness likely go beyond syntactic features, and perceived semantic complexity may play a greater role for trustworthiness. Exploring interindividual factors in easiness perceptions may therefore be an interesting avenue for future research.

We additionally aimed to determine the overlap between author and text trustworthiness (RQ1). Overall, text trustworthiness was strongly and positively associated with all three METI author trustworthiness dimensions: when participants rated a text as highly trustworthy, they were also likely to ascribe high expertise, integrity, and benevolence to its author. Although large, the observed correlations were not perfect, suggesting that certain reader or text characteristics may affect text and author trustworthiness differently. Examining such differences further may be valuable for science communication.
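To put “large but not perfect” in numbers, squaring the range of correlations reported under RQ1 gives the proportion of variance that text trustworthiness shares with a given METI dimension (the lower bound, r = .551, was observed for benevolence; the upper bound, r = .733, for expertise):

```latex
r^{2}_{\text{low}} = .551^{2} \approx .30, \qquad r^{2}_{\text{high}} = .733^{2} \approx .54
```

Even for the strongest association, then, about 46% of the variance in text trustworthiness ratings is not shared with the author’s rated expertise, which leaves room for reader or text characteristics that affect the two judgments differently.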

In line with prior conceptualizations (cf. Ferguson et al., 2012, 2013), our findings also emphasize the role of epistemic justification beliefs in lay readers’ trust allocations (RQ3). If a reader takes a subjective stance and believes that all individual positions have merit, it may not matter much whether a scientific text uses references or neutral language. With a strong belief in justification by multiple sources, however, these features may become crucial, for example, because references link the present text to other studies and neutral language signals a desire to communicate impartially. Building trust via scientificness may thus be harder with lay readers who hold strong personal justification beliefs, whereas scientificness features seem particularly important when addressing readers who value justification by multiple sources.

Finally, we observed that individual NCC moderated the scientificness effect (RQ4). Given that a high NCC coincides with a preference for unambiguous information (Kruglanski et al., 2010) and a reduced likelihood of being persuaded in group formats (Kruglanski et al., 1993), participants with a high NCC in the present study may not have been strongly motivated to take the additional information in the high-scientificness summaries into account. Their trustworthiness assessments may have formed after the first few sentences and remained relatively stable. These results suggest that the scientificness effect depends on reader characteristics and that NCC should be considered when seeking to increase trust.

Strengths and limitations

Major strengths of our study are its elaborate design and its diverse sample in terms of gender, age, and educational background. Furthermore, we used short research summaries based on published research from four different areas of psychology. Both aspects increase the generalizability of our findings. In addition, text easiness and scientificness were varied simultaneously, whereas previous studies had focused on one or the other, which allowed for a direct examination of their interaction.

However, limitations remain. The METI programming error and the resulting use of a five-point instead of a seven-point scale may have affected our results. Specifically, the narrower scale range may have reduced the variability in participants’ trustworthiness judgments and contributed to the nonsignificant easiness effect. While this cannot be ruled out entirely, and future studies should use the correct scale, means and standard deviations are often comparable between five- and seven-point scales (Dawes, 2008). Moreover, while syntactic complexity has been shown to influence readers’ complexity ratings, this does not always translate into significant effects on knowledge or text comprehension (Hackemann et al., 2022). A similar pattern may apply to the impact of text easiness on trustworthiness judgments.
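Because the scale change is a recurring concern, it may help to see how scores from the two formats can be placed on a common metric before comparing means. The sketch below uses a simple linear transformation that maps the endpoints of one scale onto the endpoints of the other; it assumes equally spaced, numerically coded options (1–5 and 1–7), uses a hypothetical value, and is not part of the authors’ analysis.

```python
def rescale(score, old_min, old_max, new_min, new_max):
    """Linearly map a score from [old_min, old_max] onto [new_min, new_max]."""
    if old_max == old_min:
        raise ValueError("old scale must have distinct endpoints")
    proportion = (score - old_min) / (old_max - old_min)
    return new_min + proportion * (new_max - new_min)


def five_to_seven(score):
    """Map a rating on a 1-5 scale onto the 1-7 range.

    Assumes equally spaced, numerically coded response options."""
    return rescale(score, 1, 5, 1, 7)


# A hypothetical five-point mean of 3.8 maps to 1 + (2.8 / 4) * 6 = 5.2
print(round(five_to_seven(3.8), 2))
# Standard deviations scale by the ratio of ranges, (7 - 1) / (5 - 1) = 1.5
```

Such rescaling aligns the metric of the two formats, but it cannot restore the finer gradations that a seven-point format would have captured, which is why the variability concern raised above cannot be fully dismissed.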

Furthermore, all summaries were presented in German. Cultural influences on science perceptions (and thus on perceptions of scientificness and knowledge justification) cannot be ruled out. Science communication research has often focused on science perceptions in Western countries, and whether phenomena such as the easiness or scientificness effect apply cross-culturally requires further examination.

Another limitation arises from combining multiple text characteristics to create summaries of high easiness or scientificness. While combining text features likely increased our chances of detecting easiness or scientificness effects in the first place, it also prevented us from assessing the impact of individual text features (e.g. use of references) on these effects. Future research could thus compare the influence of single-feature variations on trustworthiness, providing insights into which features matter most for lay readers’ perceptions of easiness or scientificness.

9. Conclusion

By examining a diverse sample and four fictional research summaries, we demonstrated a positive influence of text features associated with scientificness on lay readers’ trustworthiness perceptions. Furthermore, we showed that individual epistemic justification beliefs and NCC affect the impact of scientificness on lay reader trust.

Some initial considerations for increasing the perceived trustworthiness of lay-friendly research summaries can be derived. First, it may be advantageous to include references and to describe research methods precisely. Second, a neutral style of language may be preferable to a personal, aggressive style. Third, reporting basic quantitative statistics instead of descriptive interpretations could be considered. Fourth, taking the target audience of research summaries into account could have positive outcomes. For instance, when addressing an audience with high NCC, features of scientificness could be introduced as early and as saliently as possible.

However, boosting scientificness solely because higher trustworthiness is desirable could be a double-edged sword. While additional details on the research methods used or on a study’s statistical results may increase trustworthiness, previous research has shown that such details reduce lay readers’ user experience (Kerwer et al., 2021b). Perhaps even more crucially, increased trustworthiness may make lay readers more likely to consume research superficially, which runs counter to science’s overall goal of encouraging critical thinking. Finding the middle ground between increasing trustworthiness and reporting findings objectively is a balancing act for science communication. Yet academic science increasingly has to compete with pseudoscience and fake news for public trust. It therefore seems crucial that researchers familiarize themselves with the factors that foster public trust in their research. Such knowledge may also help the public make informed decisions and distinguish science from pseudoscience. We hope that our study helps to provide a pathway toward these objectives.

Supplemental Material

sj-pdf-1-pus-10.1177_09636625231176377 – Supplemental material for Indicators of trustworthiness in lay-friendly research summaries: Scientificness surpasses easiness

Supplemental material, sj-pdf-1-pus-10.1177_09636625231176377 for Indicators of trustworthiness in lay-friendly research summaries: Scientificness surpasses easiness by Mark Jonas, Martin Kerwer, Anita Chasiotis and Tom Rosman in Public Understanding of Science

Footnotes

Acknowledgements

We would like to acknowledge Niclas Gobert for his support in testing the online study and in creating the English translations of the research summaries, as well as Lisa Trierweiler for proofreading the manuscript and language editing.

Data availability statement

Data and code for this publication are available at PsychArchives. Data: http://dx.doi.org/10.23668/psycharchives.12798.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by internal ZPID funds. The authors received no third-party funding.

Supplemental material

Supplemental material for this article is available online.

Notes

Author biographies

References
