Abstract

Scientists increasingly use Twitter for communication about science. The microblogging service has been heralded for its potential to foster public engagement with science; thus, measuring how engaging (that is, how dialogue-oriented) tweet content is has become a relevant object of research. Tweet content designed in an engaging, dialogue-oriented way is also supposed to link to user interaction (e.g. liking, retweeting). The present study analyzed content-related and functional indicators of engagement in scientists’ tweet content, applying content analysis to original tweets (n = 2884) of 212 communication scholars. Findings show that communication scholars tweet mostly about scientific topics, albeit with low levels of engagement. User interaction, nevertheless, correlated with content-related and functional indicators of engagement. The findings are discussed in light of their implications for public engagement with science.

Twitter has emerged as an important platform for science communication (e.g. Brossard and Scheufele, 2022; Guenther et al., 2021; Henning and Kohler, 2020). It is popular among scientists (e.g. Ke et al., 2017) and has been heralded for its potential to foster public engagement with science (e.g. Jia et al., 2017). In contrast to traditional, journalistic media, the facilitation of two-way interaction is seen as an enabling factor (e.g. Collins et al., 2016). This aligns with current approaches in science communication to dialogue and participation (e.g. American Association for the Advancement of Science (AAAS), n.d.; Irwin, 2008; Smith, 2015), for example, as part of the legitimization of science funding (e.g. Guenther and Joubert, 2021; Kahle et al., 2016). Although scientists have various motives to use Twitter, they can use the microblogging service to stimulate public engagement with science (e.g. Jünger and Fähnrich, 2020).

Such a motive suggests that scientists are communicating on Twitter in a way that is engaging public audiences (e.g. Côté and Darling, 2018; Darling et al., 2013). Public engagement with science—although there are many meanings and objectives (e.g. Weingart et al., 2021)—is about opportunities for dialogue, two-way interaction, mutual learning, and participation (e.g. AAAS, n.d.; Irwin, 2008; Jünger and Fähnrich, 2020). The underlying assumption of this article is that to foster public engagement with science, tweet content should be designed in a dialogue-oriented and thus engaging way. Thus, the present study applies indicators of engagement to measure how engaging tweet content is, that is, to what extent tweets potentially invite public audiences to interact and encourage dialogue. Tweet content designed in an engaging, dialogue-oriented way is also supposed to link to user interaction (e.g. retweeting; see also Della Guista et al., 2021), which further increases the visibility of content (e.g. Chan et al., 2022). Hence, the relationship between indicators of engagement and user interaction (i.e. likes, retweets) will also be examined. Empirically, this will be investigated for tweets of communication scholars. How scientists use Twitter seems to be dependent on their respective scientific field (e.g. Della Guista et al., 2021; Henning and Kohler, 2020; Holmberg and Thelwall, 2014). However, limiting this study to communication scholars is justified by the fact that social scientists are underrepresented in research on science communication (e.g. Jünger and Fähnrich, 2020; Rauchfleisch, 2015), although they are overrepresented on Twitter (e.g. Ke et al., 2017). Thus, the sample chosen for this study is used as a test case to investigate the proposed relationships (see also Chan et al., 2022).

1. Indicators of engagement in Tweet content and their links to user interaction

On Twitter, scientists enjoy independence from news media (e.g. Smith, 2015), staying up-to-date about current research trends (e.g. Côté and Darling, 2018; Veletsianos and Kimmons, 2016), and reaching global audiences (e.g. Thelwall et al., 2013). Most scientists state that they use Twitter to reach peers, with public audiences ranked second; however, what they like most about Twitter is its audience diversity (e.g. Collins et al., 2016). Twitter thus blurs the boundaries between external science communication to public audiences and internal, scholarly communication to peers (e.g. Jünger and Fähnrich, 2020), affecting communication behaviors (e.g. Jia et al., 2017), such as which topics are discussed and how.

Regarding the topics, scientists usually but not always tweet about science (e.g. Jünger and Fähnrich, 2020). They share publications (e.g. Schmitt and Jäschke, 2017), but also newspaper articles (e.g. Ke et al., 2017), and may disclose personal information (e.g. Sugimoto et al., 2017) or links to entertainment (e.g. Rauchfleisch, 2015). Since less is known about the intensity of Twitter use and the specific tweet content for the population investigated in this study, we ask this initial research question:

RQ1. What are the topics that communication scholars tweet about?

Regarding the how, scientists can use Twitter for many reasons, among them to foster public engagement with science (e.g. Jünger and Fähnrich, 2020). However, the presence of Twitter alone does not guarantee conversations about science (e.g. Sugimoto et al., 2017). Thus, for the how of communication, this study is interested in indicators to measure the degree to which tweet content of communication scholars can be described as engaging, that is, the degree to which this content invites a reader to interact and is open to dialogue (e.g. Yeo et al., 2020). This is not a full conceptualization of public engagement with science as a concept; we do not measure if tweet content leads to participation/involvement of publics (e.g. AAAS, n.d.; Weingart et al., 2021). Rather, levels of engagement will be considered, namely, how much tweet content potentially enables dialogue. Consequently, indicators of engagement are defined as characteristics of scientists’ tweet content that potentially enable dialogue with nonscientific publics (see also Weingart et al., 2021). We separate content-related from functional indicators of engagement.

This study proposes seven dialogue-oriented characteristics of tweet content as content-related indicators of engagement (see also Della Guista et al., 2021; Jünger and Fähnrich, 2020; Yeo et al., 2020): (1) references made (i.e. who is addressed), with references made to others seen as more engaging than references to oneself; (2) evaluations and (3) emotions, which can express opinions and trigger reactions; (4) humor, because of its conversational nature; and (5) elements of discussion (e.g. questions and answers), (6) socializing (e.g. congratulations), and (7) activation (e.g. requests), because of their dialogic nature. Jünger and Fähnrich (2020) describe such indicators as speech acts. Findings so far have shown that tweets of communication scholars are often scholarly communication, and tweets containing links to scholarly articles frequently only provide publication titles or short summaries (e.g. Thelwall et al., 2013); such tweets are thus not seen as engaging public audiences because they are not dialogue-oriented.

In addition, functional indicators of engagement refer to platform-specific affordances. On Twitter, these include (1) hashtags, which create connections; (2) mentions of other accounts; (3) audiovisual content, which can be stimulating; and (4) links. Such indicators can also foster interaction and dialogue (e.g. Della Guista et al., 2021). Scientists seem more prone to use links in their tweets than other users (Schmitt and Jäschke, 2017); they also often mention others and/or use hashtags (e.g. Veletsianos and Kimmons, 2016). We propose, therefore, a second research question:

RQ2. Applying content-related and functional indicators, how engaging and thus dialogue-oriented is the tweet content of communication scholars?

These indicators of engagement in tweet content are subsequently supposed to affect user interaction (e.g. Darling et al., 2013). User interaction, also referred to as social media engagement, audience engagement, or user behavior (Guenther et al., 2021; Kahle et al., 2016), usually includes the number of likes, retweets, and comments of a tweet, which research showed can increase reach and affect how audiences interpret content (e.g. Brossard, 2013; Yeo et al., 2020). User interaction can be categorized into consuming (e.g. viewing content), participating (e.g. one-click activities such as liking, sharing), and generating (e.g. creating own content, commenting) (see Taddicken and Krämer, 2021). Previous research has shown that functional indicators of engagement, such as mentions (e.g. Della Guista et al., 2021), can increase user interaction—the same may be true for content-related indicators of engagement (e.g. humor; Yeo et al., 2020). Indeed, many tweets of scientists contain hashtags, mentions, and links—sometimes above the Twitter user average—which can potentially increase conversation and collaboration (e.g. Holmberg and Thelwall, 2014). However, research on this is sparse; thus, we will explore this issue in this question:

RQ3. How do indicators of engagement relate to user interaction (i.e. numbers of likes and retweets)?

2. Method

Sample

To answer the RQs, the present study relies on a quantitative content analysis of tweets by communication scholars who are members of the German Communication Association (Deutsche Gesellschaft für Publizistik und Kommunikationswissenschaft, DGPuK). Members of DGPuK are usually affiliated with institutions in Germany, Austria, and Switzerland. A list of members was retrieved in mid-2020 (n = 1234). A student assistant helped to identify relevant members (i.e. active researchers affiliated with universities/research institutes; n = 676; 55%). Nearly half of the DGPuK members were excluded because not all members produce research, and therefore not all would be expected to communicate about their findings (see also Côté and Darling, 2018). For those classified as relevant, the search function on Twitter, follower lists, and institutional profiles were used to identify their respective Twitter handles. When a profile was identified, the student assistant noted the person’s gender (male or female) and career position (PhD student, post doc, or professor), and looked up the respective H-index on Google Scholar. In total, 308 accounts were identified. For reasons of practicality, we used the rtweet package (Kearney, 2019) to retrieve the last 100 tweets (at most) of each account in October 2020, resulting in a sample of n = 23,136 tweets.

Following Jünger and Fähnrich (2020), we only kept accounts that had more than 10 tweets and more than 10 followers (thus excluding 37 accounts). Furthermore, accounts had to be active, with at least one tweet in the month before data collection (excluding 54 accounts). This resulted in a sample of 217 accounts and n = 19,259 tweets. Since we were only interested in original posts (that is, content created by the individuals themselves), we excluded retweets, replies, and quotes, leading to a final sample of 212 accounts and n = 2884 tweets.
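The filtering steps described above can be sketched in a few lines. The authors worked in R with rtweet; the Python below is only an illustration, and the record types, field names, and the 30-day reading of "the month before data collection" are assumptions rather than details from the study.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Account:
    handle: str          # hypothetical field names, not taken from the study
    total_tweets: int
    followers: int


@dataclass
class Tweet:
    account: str
    created_at: datetime
    is_retweet: bool = False
    is_reply: bool = False
    is_quote: bool = False


def filter_sample(accounts, tweets, collected_at, active_days=30):
    """Apply the account criteria, then keep original tweets only."""
    cutoff = collected_at - timedelta(days=active_days)
    by_account = {}
    for tweet in tweets:
        by_account.setdefault(tweet.account, []).append(tweet)

    kept = set()
    for account in accounts:
        # Criterion 1: more than 10 tweets and more than 10 followers.
        if account.total_tweets <= 10 or account.followers <= 10:
            continue
        # Criterion 2: at least one tweet in the period before collection.
        timeline = by_account.get(account.handle, [])
        if not any(t.created_at >= cutoff for t in timeline):
            continue
        kept.add(account.handle)

    # Original posts only: drop retweets, replies, and quotes.
    originals = [
        t for t in tweets
        if t.account in kept
        and not (t.is_retweet or t.is_reply or t.is_quote)
    ]
    # Accounts without any original post drop out of the final sample.
    final_handles = {t.account for t in originals}
    return final_handles, originals


# Toy data for illustration only.
collected = datetime(2020, 10, 15)
accounts = [Account("a", 500, 300), Account("b", 8, 40), Account("c", 200, 150)]
tweets = [
    Tweet("a", datetime(2020, 10, 10)),
    Tweet("a", datetime(2020, 10, 9), is_retweet=True),
    Tweet("b", datetime(2020, 10, 12)),
    Tweet("c", datetime(2020, 7, 1)),
]
final_handles, originals = filter_sample(accounts, tweets, collected)
```

Deriving the final account set from the remaining original tweets mirrors the drop from 217 to 212 accounts reported in the text, where some accounts presumably had no original posts among the retrieved tweets.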

Systematic content analysis

The content analysis is based on formal and content-related categories. For the formal categories, the rtweet package contained information relevant for the analysis, namely, the number of likes and the number of retweets. Hence, in this study, we concentrated on user interaction as online participation (e.g. Taddicken and Krämer, 2021). In addition, coders had to assess the relevance of a tweet. Tweets that only contained one word, a link, or an emoji, and those written in any language other than German or English were excluded. Coders also assessed if the tweet was part of a thread or not.

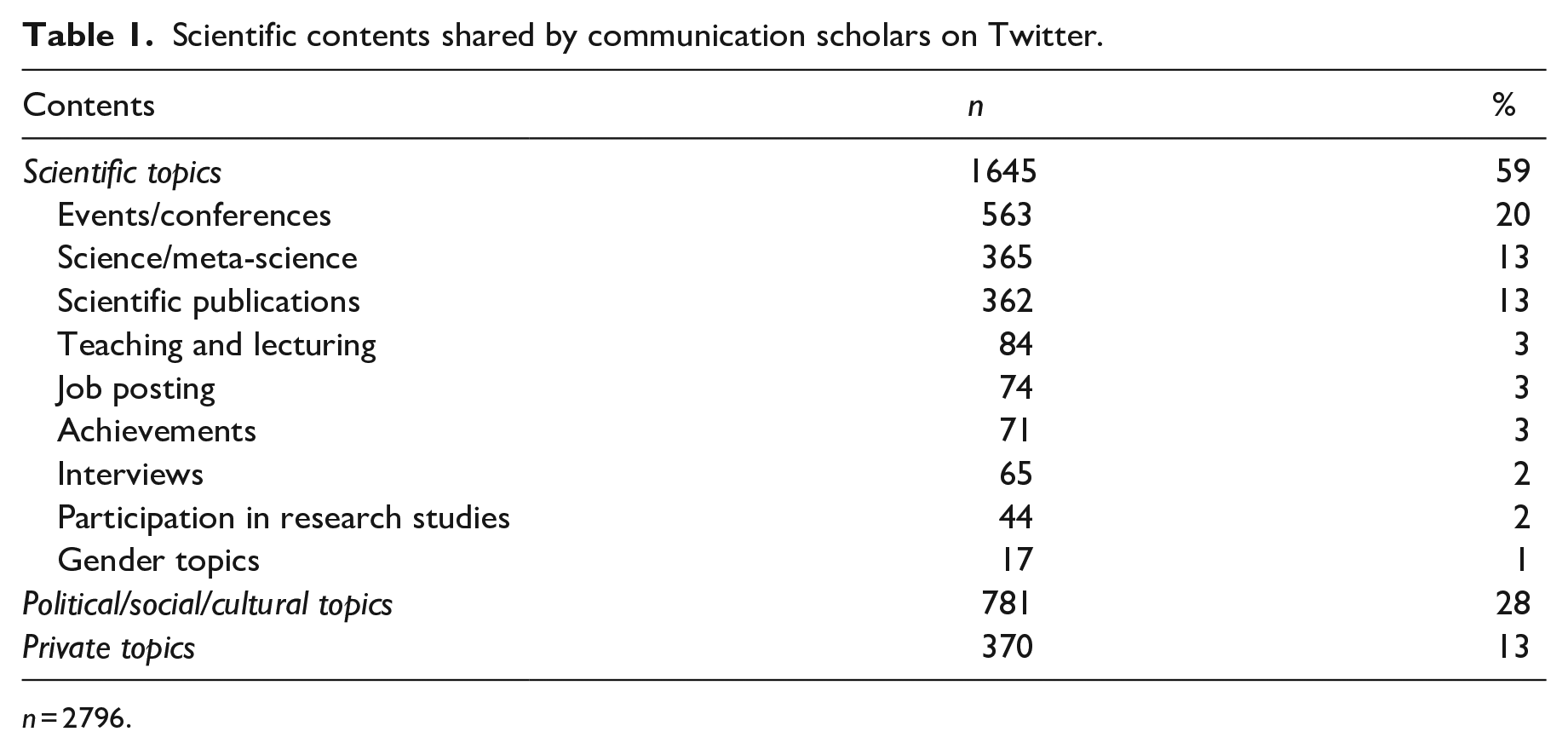

For the content-related categories, coders had to familiarize themselves with the actual tweet content, including any audiovisual elements and links, which had to be opened. They then coded the topic of the tweet (scientific, political/social/cultural, or private topics; see Table 1) based on similar categorizations (Holmberg and Thelwall, 2014; Jünger and Fähnrich, 2020). Coding stopped when a tweet’s content was not assessed as scientific, because in this study engagement refers to science communication. For those tweets that fell under one of the scientific topics, coders then coded categories with respect to content-related indicators of engagement (see Jünger and Fähnrich, 2020; Thelwall et al., 2013). They coded references made in tweet content and, for those tweets that referred to others, who these others were. Furthermore, they coded evaluations, emotions, humor, questions/answers, congratulations, and requests (most categories were coded as present or not present). In addition, the rtweet package allowed us to gather data on four functional indicators of engagement (Holmberg and Thelwall, 2014): use of hashtags, mentions, audiovisual content, and/or links, which were dichotomized (present or not present). The codebook is provided in the Supplemental material (see Table S1).
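As a rough illustration of the dichotomization of the four functional indicators, the sketch below maps tweet metadata to present/not-present values. It is written in Python rather than R, and the dictionary keys (`hashtags`, `mentions`, `media`, `urls`) are hypothetical names, not the column names of the rtweet output.

```python
# Hypothetical metadata keys; each is assumed to hold a list (possibly empty).
FUNCTIONAL_FIELDS = ("hashtags", "mentions", "media", "urls")


def functional_indicators(tweet: dict) -> dict:
    """Return 1 if an element is present in the tweet and 0 otherwise."""
    return {field: int(bool(tweet.get(field))) for field in FUNCTIONAL_FIELDS}


example = {
    "hashtags": ["scicomm"],
    "mentions": [],
    "urls": ["https://example.org/paper"],
}
coded = functional_indicators(example)
```

A missing key and an empty list are both treated as "not present", which is a design choice of this sketch and not something the codebook is known to specify.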

Table 1. Scientific contents shared by communication scholars on Twitter (n = 2796).

Two coders familiarized themselves with the codebook and the coding process in several training sessions. Intercoder reliability was assessed with two random samples of 200 tweets. Using Krippendorff’s alpha (and Holsti’s coefficient of reliability, CR, as a check), the coders reached satisfactory results, with average scores of α = .83 (CR = .99) for the formal categories and α = .77 (CR = .95) for the content-related categories. On this basis, the coders independently coded the total sample.
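For readers unfamiliar with the two reliability coefficients, the following Python sketch computes both for a single nominal category coded by two coders without missing values. It is a minimal illustration under those assumptions, not the authors' procedure, and the codings are invented toy data.

```python
from collections import Counter


def holsti_cr(coder_a, coder_b):
    """Holsti's coefficient: 2M / (N1 + N2), with M agreeing decisions."""
    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return 2 * agreements / (len(coder_a) + len(coder_b))


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for nominal data, two coders, no missing values."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must code the same units")
    # Coincidence matrix: each unit contributes both ordered value pairs.
    coincidences = Counter()
    for a, b in zip(coder_a, coder_b):
        coincidences[(a, b)] += 1
        coincidences[(b, a)] += 1
    n = 2 * len(coder_a)  # total number of pairable values
    totals = Counter()
    for (c, _k), count in coincidences.items():
        totals[c] += count
    observed = sum(v for (c, k), v in coincidences.items() if c != k) / n
    expected = sum(
        totals[c] * totals[k] for c in totals for k in totals if c != k
    ) / (n * (n - 1))
    if expected == 0:
        raise ValueError("alpha is undefined when only one category occurs")
    return 1 - observed / expected


# Toy codings (1 = present, 0 = not present); the coders disagree on one unit.
coder_1 = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
coder_2 = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]
cr = holsti_cr(coder_1, coder_2)
alpha = krippendorff_alpha_nominal(coder_1, coder_2)
```

In this toy case the simple agreement rate is higher than alpha because alpha corrects for the agreement expected by chance, which is consistent with the pattern of the reported values (CR above α).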

3. Results

From the 2884 tweets considered in this study, n = 2796 were deemed relevant for coding—those excluded were mostly written in languages other than English or German or only contained a link without additional content. Only 60 (2%) were (part of) threads. Regarding RQ1 (topics), communication scholars mostly tweeted about scientific topics, with a predominant focus on events/conferences, meta-scientific topics, and scientific publications (see Table 1). It was less common that communication scholars tweeted about political/social/cultural or private topics.

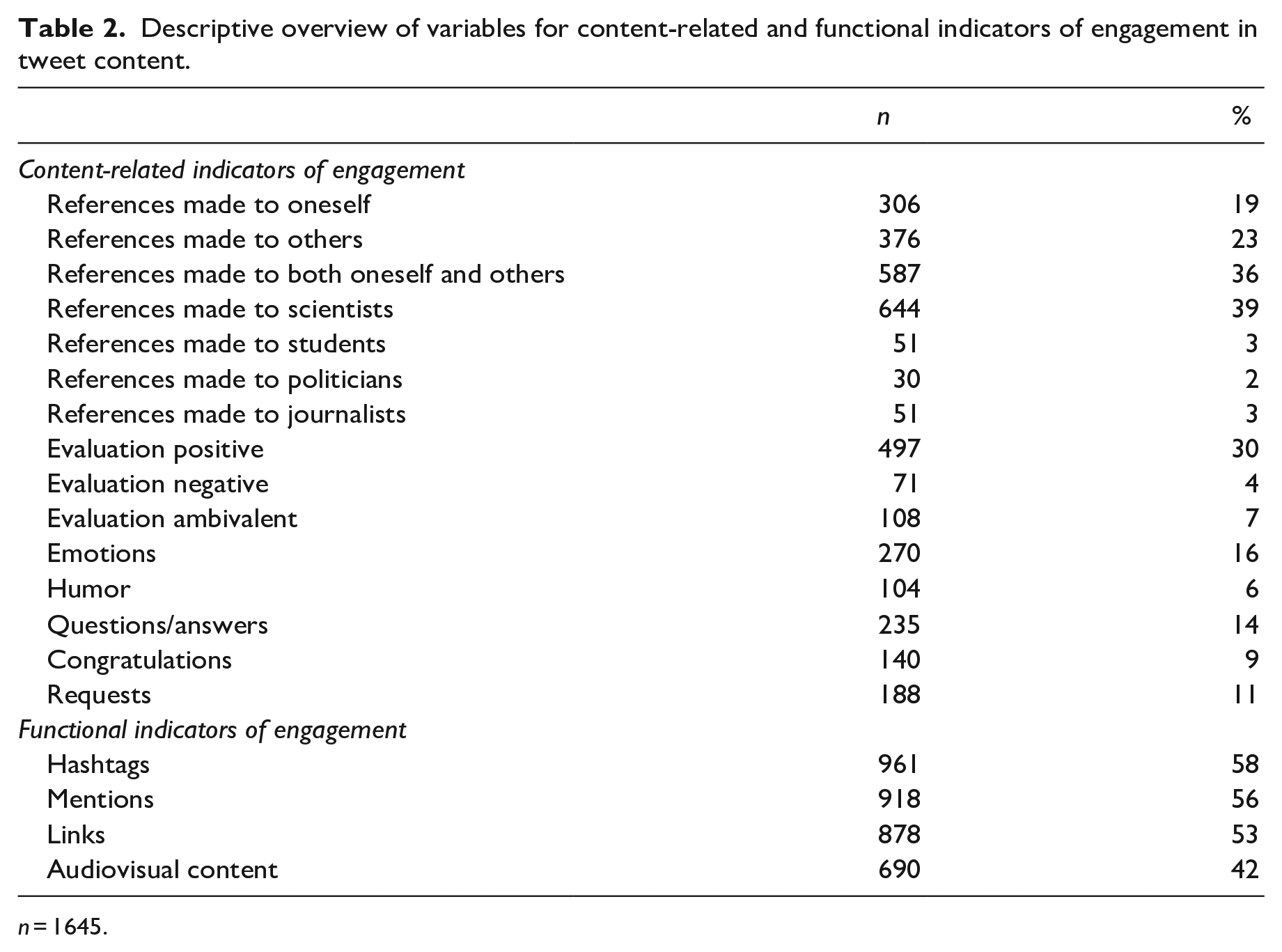

For RQ2 (engagement), we considered the 1645 tweets classified as science tweets (see also Table 2). For content-related indicators of engagement, references were predominantly made to both oneself and to others. Fewer tweets referred to others only, to oneself only, or did not make references at all. If references were made to others, then it was mainly to scientists, less often to students or journalists, and least of all to politicians. Evaluations were given in less than half of all tweets, and most of them were positive. Emotions were found more often than humor, and questions/answers were more often present than requests or congratulations. A sum index based on these seven indicators had a median of 1 (Mdn = 1), representing low content-related engagement.

Table 2. Descriptive overview of variables for content-related and functional indicators of engagement in tweet content (n = 1645).

For functional indicators of engagement, hashtags, mentions, links, and audiovisual content were present more frequently than the content-related indicators. Consequently, a sum index based on these four indicators had a median of 2 (Mdn = 2), showing moderate functional engagement. Tables S2 and S3 in the Supplemental material show how the categories related to the two indicators of engagement varied by gender, career status, H-index, and number of followers.
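The two sum indices can be illustrated with a short Python sketch: each tweet receives one point per indicator coded as present, and the median is taken across tweets. The indicator names and the dichotomous treatment of references (here: a reference to others counts as present) are assumptions made for illustration, and the three coded tweets are toy data.

```python
from statistics import median

# Hypothetical variable names for the seven content-related indicators.
CONTENT_INDICATORS = (
    "reference_to_others", "evaluation", "emotion", "humor",
    "discussion", "socializing", "activation",
)
# Hypothetical variable names for the four functional indicators.
FUNCTIONAL_INDICATORS = ("hashtags", "mentions", "media", "urls")


def sum_index(coded_tweet, indicators):
    """Count how many of the given indicators are coded as present (1)."""
    return sum(coded_tweet.get(name, 0) for name in indicators)


# Toy codings; indicators that are not listed count as not present (0).
toy_tweets = [
    {"reference_to_others": 1, "hashtags": 1, "mentions": 1},
    {"evaluation": 1, "emotion": 1, "hashtags": 1, "mentions": 1, "urls": 1},
    {"urls": 1},
]
content_scores = [sum_index(t, CONTENT_INDICATORS) for t in toy_tweets]
functional_scores = [sum_index(t, FUNCTIONAL_INDICATORS) for t in toy_tweets]
content_median = median(content_scores)
functional_median = median(functional_scores)
```

The content-related index ranges from 0 to 7 and the functional index from 0 to 4, so the two medians are read against different scales.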

For RQ3 (indicators of engagement and user interaction), the strongest correlation existed between number of likes and number of retweets (r = .630***). The number of likes showed positive correlations with both content-related (r = .184***) and functional indicators of engagement (r = .221***), whereas the number of retweets showed a stronger positive correlation with functional (r = .264***) than with content-related indicators of engagement (r = .073**).
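The text reports r without naming the coefficient. Assuming it refers to Pearson's product-moment correlation, the computation between an engagement index and an interaction count can be sketched as follows; the Python function and the toy values are illustrative only and do not reproduce the study's data.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return covariance / sqrt(var_x * var_y)


# Toy data: functional sum index (0 to 4) and retweet counts per tweet.
functional_index = [0, 1, 2, 2, 3, 4]
retweets = [0, 1, 0, 3, 2, 5]
r_functional_retweets = pearson_r(functional_index, retweets)
```

A significance test, as implied by the asterisks in the reported coefficients, would be a separate step and is not covered by this sketch.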

4. Discussion

This study analyzed how engaging communication scholars’ tweet content potentially is for public audiences. As expected, these scholars mostly tweeted about science (see also Jünger and Fähnrich, 2020). The findings regarding engagement showed that content-related indicators of engagement were not frequently used; if they were, then this was mainly because of references made to others (who were usually scientists) and (positive) evaluations (see also Della Guista et al., 2021; Thelwall et al., 2013). Functional indicators of engagement were more frequent, usually hashtags, mentions, and links (see also Schmitt and Jäschke, 2017). According to Büchi (2016), such structural attributes indicate that tweets are not meant to be self-contained. However, given the findings presented, tweets by communication scholars included indicators of engagement, but only on a low to moderate level. They are thus potentially not enabling much dialogue. In this context, Jia et al. (2017) concluded that scientists use social media to encounter but not to engage audiences, and Thelwall et al. (2013) also found that tweets provide little more than publicity. This could imply that communication scholars used Twitter predominantly for purposes other than enabling dialogue with audiences, at least regarding the indicators used in this study.

An important question addressed in this article was whether such content-related and functional indicators of engagement were associated with user interaction. The findings of the present study showed some support for this. Likes and retweets correlated positively with both types of engagement; retweets correlated more strongly with functional indicators of engagement. The reasons why likes correlated with both content-related and functional indicators, and retweets more with platform-specific affordances, should be addressed in studies to come; in the present study, coefficients are rather small. Future studies should consider additional factors (e.g. scientists’ gender, position, number of followers) and test causal relationships with a larger and more diverse sample. Furthermore, our data do not show who is responsible for the user interaction collected (e.g. other scholars or nonscientific audiences). User interaction could indicate scholarly reach, reach of nonscientific audiences, or both.

Naturally, the present study has further important limitations that future research should take into account. We focused only on communication scholars (of DGPuK), although the scientific fields that scientists associate with may affect their behavior on Twitter (e.g. Della Guista et al., 2021; Holmberg and Thelwall, 2014). Whether the findings presented here are similar for other scientific fields (e.g. natural sciences) needs to be explored further. In addition, to capture scientists’ own communication behavior, we analyzed only original tweets. The sample collection revealed that members of DGPuK more frequently retweet, reply, and quote than post themselves. The question of how engaging retweets, replies, and quotes are may be included in studies to come. Furthermore, we worked with a set of content-related and functional indicators of engagement; however, we do not propose that this list is complete, nor that it is feasible to use all engagement indicators in a single tweet. Since we were not able to work with the number of comments, future research should go beyond analyzing only online participation (e.g. Taddicken and Krämer, 2021). Other researchers may also use mixed methods, combining content analytical approaches with surveys and interviews, and look at other social media platforms, because it is likely that they promote different types of public engagement with science (Kahle et al., 2016). This is especially relevant as recent developments at Twitter’s headquarters may lead scientists to migrate to other microblogging services or platforms. How valid the findings of the present study are with respect to other social media platforms needs to be assessed in future studies.

In general, public engagement with science can be seen as a normative concept, and this has often been criticized (e.g. Jünger and Fähnrich, 2020). It may be true that if social media are used by scientists for publicity only (Thelwall et al., 2013), then this indicates a rather non-engaging, unidirectional mode of communication and may even remind scholars of old approaches in science communication. However, this has to be assessed differently if Twitter is used with an intention for scholarly communication. Scientists can, but do not have to, use Twitter for enabling dialogue with nonscientific audiences. Surveys show that most scientists use Twitter to reach their peers (e.g. Collins et al., 2016). In addition, scientists have often been described as ambivalent regarding public engagement activities, especially with respect to the blurred spaces between internal and external science communication (e.g. Peters, 2013), which also applies to Twitter. What this article adds to the discussion is a focus on the presumed prerequisite that to facilitate public engagement with science, tweet content should be designed in a dialogue-oriented way. The finding is that tweets are only moderately designed in this way, although there is some support that this is linked to user interaction. Future research may reconsider these relationships and also discuss them in the context of altmetrics (e.g. Chan et al., 2022; Sugimoto et al., 2017).

Supplemental Material

sj-docx-1-pus-10.1177_09636625231166552 – Supplemental material for Science communication on Twitter: Measuring indicators of engagement and their links to user interaction in communication scholars’ Tweet content

Supplemental material, sj-docx-1-pus-10.1177_09636625231166552 for Science communication on Twitter: Measuring indicators of engagement and their links to user interaction in communication scholars’ Tweet content by Lars Guenther, Claudia Wilhelm, Corinna Oschatz and Janise Brück in Public Understanding of Science

Footnotes

Acknowledgements

The authors thank Laura Gdowzok for assistance in classifying members of the German Communication Association (DGPuK). They also thank the editor and the two reviewers for their thoughtful input, as well as Marina Joubert who read and commented on an earlier version of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Parts of this research were supported through financial help to hire student assistants by the Department of Media and Communication at Ludwig Maximilian University of Munich.

Data availability statement

The data that support the findings of this study are available from the corresponding author (L.G.), upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

