Abstract

Public Understanding of Science is an interdisciplinary journal serving the scholarly community and practitioners. This article reports an analysis of the readability and jargon in articles published in Public Understanding of Science throughout its almost three decades of existence to examine trends in accessibility to diverse audiences. The accessibility of Public Understanding of Science articles published in 1999/2000 (47), 2009 (49) and 2019 (65) was assessed in terms of readability and use of jargon. Readability decreased and use of jargon increased between 1999/2000 and the two following decades, for both empirical and non-empirical papers and for all sections, including the abstracts. An analysis of rare words shows that most are not part of the general academic vocabulary or disciplinary jargon, but rather words that appeared in only one article. Public Understanding of Science has moved away from everyday language. This does not mean it is incomprehensible to its scholarly readership, but it may have consequences for other audiences, such as practitioners.

1. Rationale

According to its website, Public Understanding of Science (PUS) is an interdisciplinary journal whose mission is to serve scholars studying the interrelationship between science and the public in different cultures. It also addresses scientists from all disciplines, research managers and science communication practitioners who are interested in the public understanding of science and the manifold ways in which science and publics communicate, interact, and influence each other. A recent editorial emphasized, ‘we insist that submissions must account for the inter- and transdisciplinary character of PUS in terms of relevance, comprehensibility, and readability’ (Peters, 2020).

This article presents an empirical analysis of the readability and jargon in articles published in PUS throughout its almost three decades of existence to capture trends in its accessibility to these diverse audiences. We follow the example of an earlier study that explored trends in the focus of PUS publications over two decades and found topical changes in terms of specific emerging technologies and environmental concerns (Bauer and Howard, 2012). A lexicographic study of 465 abstracts published in PUS identified five classes of associated concepts in two periods, 1992–2001 and 2002–2010, and showed that the language shifted from ‘public understanding’ to ‘public engagement’ (Suerdem et al., 2013). Here, we focus on one aspect of academic writing in PUS papers: the choice of vocabulary.

Academic writing assumes that its readers have prior knowledge of the field and are familiar with the standard structure of a scientific article, with academic vocabulary (e.g. analyse, facilitate) and with jargon (e.g. ion, cytokine; Rakedzon et al., 2017). Merriam-Webster defines jargon both as ‘the technical terminology or characteristic idiom of a special activity or group’ and as ‘obscure and often pretentious language marked by circumlocutions and long words’.

Jargon is a sore spot in scientific communication in general. A comparison of 5000 pairs of lay summaries written for a general audience and their corresponding academic abstracts showed that scientists intuitively use less jargon when writing for the public, but that the reduction falls far short of what is needed to be understood (Rakedzon et al., 2017). This echoes earlier findings that scientists use less jargon when communicating with a general audience than with peers, but do not always choose less obscure terms (Sharon and Baram-Tsabari, 2014). A corpus of over 700,000 abstracts published between 1881 and 2015 in 123 scientific journals demonstrated that the readability of science is steadily decreasing, a trend that has been argued to be indicative of the growing use of general scientific jargon (Plavén-Sigray et al., 2017).

Studies describe how jargon may exclude non-scientists (e.g. Brooks, 2017; Halliday and Martin, 1993; Turney, 1994). Recently, Shulman et al. (2020) found that the presence of jargon (operationalized as scientific language) interferes with readers’ ability to process scientific information fluently, even when definitions of the terms are provided. They also found that jargon affects individuals’ social identification with the science community, which in turn affects self-reported scientific interest and perceived understanding. In an earlier study, Bullock et al. (2019) showed that the presence of jargon led to greater resistance to persuasion, increased risk perceptions and lower support for technology adoption. In a study of professionals’ use of water-related terminology, the professionals most likely to overestimate community understanding were those influenced by their own understanding rather than by their experience with communities (Dean et al., 2018).

PUS readership, however, is not the general public. It includes ‘scientists of all disciplines, research managers, and science communication practitioners’, who for the sake of clarity will be referred to from now on as ‘practitioners’. Research–practice partnerships between science communication scholars and practitioners may benefit practice by inspiring publics, promoting understanding of science and engaging publics more deliberatively in science based on the best available evidence (Riedlinger et al., 2019). They may also advance science communication research ‘through the development, use, and evaluation of evidence-based approaches to the practice of communicating with people about science’ (Standing Committee on Advancing Science Communication Research and Practice, 2020). These benefits might be hampered, however, if science communication practitioners do not see themselves as an audience for science communication scholarship.

A survey conducted at a 2007 UK science communication conference (40% response rate) found that 42% of the attendees read PUS and 36% read Science Communication at least occasionally. Around 8% read one or the other regularly, but 55% never read either journal (Miller, 2008). A 2011 survey of science communication practitioners in Australia found that those involved only in science communication practice (rather than in both research and practice) were more likely to seek professional advice through informal conversations with colleagues (82%) than to consult science communication books or papers (68%; Metcalfe and Gascoigne, 2012). In Germany, a representative survey showed that hardly any practitioners knew (let alone used) scholarly journals, and that they were rarely aware of specific theoretical or methodological schools of thought (Gerber et al., 2020). This may well be the case in other non-English-speaking countries too. ‘While having a “universal language of science” has allowed scientists to communicate ideas freely and gain access to global scientific literature, the primary use of a single language has created barriers for those who are non-native English speakers’ (Márquez and Porras, 2020, p.1), a group that includes many science communicators.

A series of interviews conducted in 2016–2017 with 34 science communication experts explored their views on issues related to science communication research. The experts were divided on the need to link research with practice. Some considered this an important challenge for all researchers, whereas others disagreed, saying it was not their role and that not all science communication research has practical implications. A minority felt that science communication researchers were already doing a good job of connecting research and practice (Gerber et al., 2020). The perceived lack of relevance of academic science communication scholarship to practice was attributed to the fact that journals are intended for an academic readership, which makes it harder to publish papers with a practical focus (Gerber et al., 2020).

Vocabulary use is surely not the only, or even the most important, factor in making science communication scholarship worth reading for practitioners. There are many such factors, from topic choice to implications. This analysis, however, addresses two fundamental aspects of the question, readability and jargon use, asking: how accessible are PUS papers to diverse audiences, including practitioners, and are they becoming less accessible over time?

2. Method

Data mining and cleaning

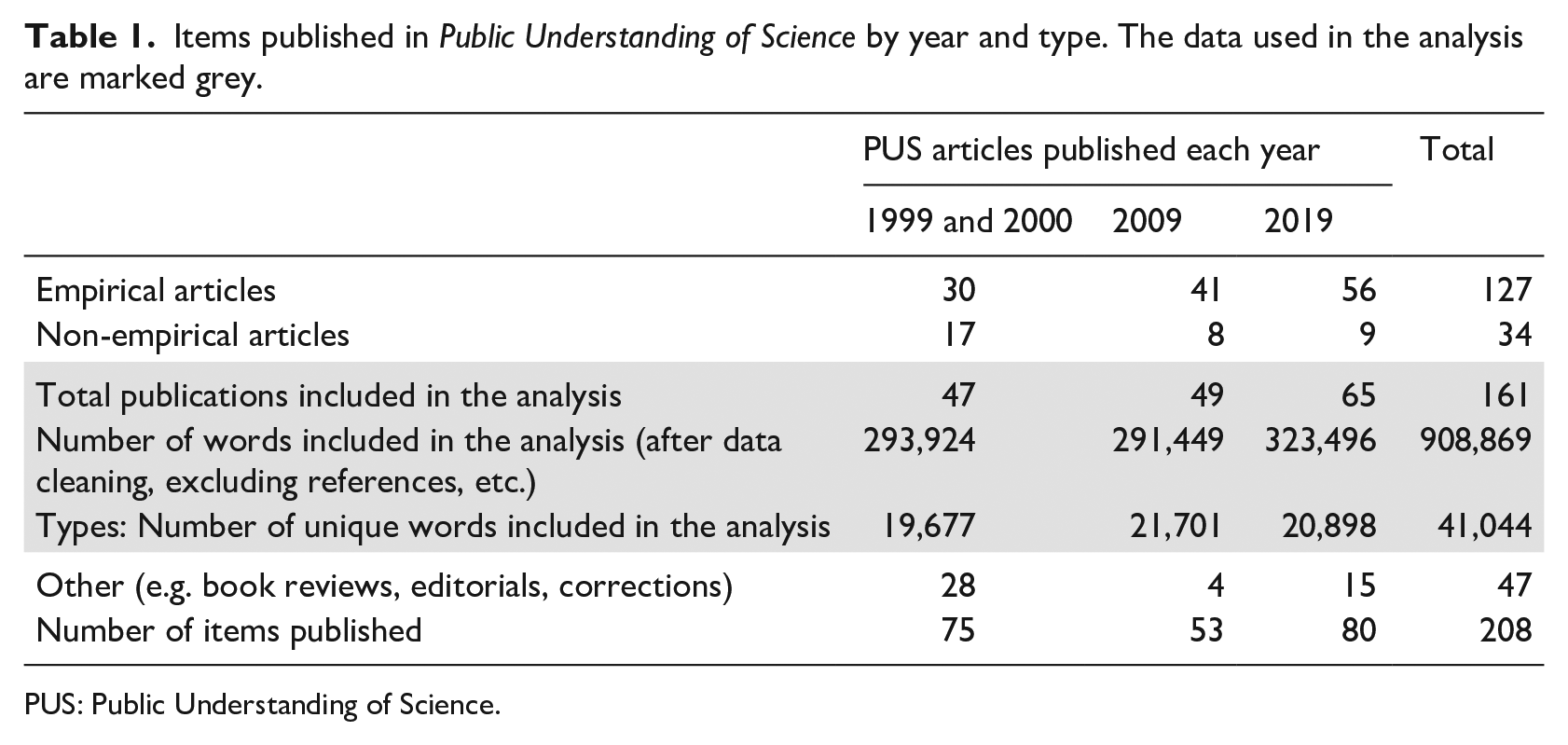

All PUS articles published in 1999/2000, 2009 and 2019 were downloaded at the beginning of 2020 and converted to Word (.docx) format. To analyse only the main text, we manually deleted the figures, tables, figure captions, funding disclosures, lists of authors and affiliations, appendices, keywords and bibliographies. This resulted in over 900,000 words (tokens) and over 40,000 unique words (types; Table 1).

Table 1. Items published in Public Understanding of Science by year and type. The data used in the analysis are marked grey.

PUS: Public Understanding of Science.

Data transformation

Manuscripts were manually classified as empirical papers, non-empirical papers or other publications (book reviews, editorials and corrections). Only empirical and non-empirical papers were included in the analysis, a total of 161 papers (Table 1). Empirical articles were further manually divided into the canonical IMRAD sections: abstract, introduction, method, results and analysis, and discussion.

Because few papers were published in 1999 (10 empirical and 12 non-empirical), we merged the 1999 data with the 2000 data (20 empirical and 5 non-empirical). No significant difference in jargon score was found between these two years.

Data analysis

General audience accessibility was assessed using the following two measures:

Jargon use. All texts were analysed with the De-Jargonizer automatic jargon identifier to obtain the jargon score and extract the rare words. The De-Jargonizer is a free, open-source service (scienceandpublic.com) that classifies each word as high, mid or rare frequency. High-frequency words (e.g. ‘questions’) are familiar to most readers; mid-frequency words (e.g. ‘questionnaires’) should be familiar to intermediate and advanced readers; and rare words (e.g. ‘qualitative’) include many words that are likely to be unfamiliar to non-experts. This classification is based on the frequencies of over 70 million words from roughly 135,000 articles published online on BBC channels (including Sport, Health, News, Future, etc.) from 2016 to 2019 (dictionaries based on earlier years are also available). Only editorial content was crawled; advertisements, reader comments, phone numbers, website URLs and email addresses were ignored. The tool, its development and its validation are described in detail in Rakedzon et al. (2017).
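The three-band classification can be illustrated with a short sketch. It is not the De-Jargonizer’s code: the reference counts, the frequency cut-offs (`high_min`, `mid_min`) and the score weights (`mid_weight`, `rare_weight`) are hypothetical placeholders chosen only to show how mid-frequency and rare words pull a text’s score below 100. The tool’s actual thresholds and scoring formula are those documented in Rakedzon et al. (2017).

```python
import re
from collections import Counter


def classify(word: str, corpus_counts: dict[str, int], high_min: int, mid_min: int) -> str:
    """Assign a word to a frequency band by its count in a reference corpus."""
    n = corpus_counts.get(word, 0)
    if n >= high_min:
        return "high"
    if n >= mid_min:
        return "mid"
    return "rare"  # also covers words missing from the corpus


def jargon_profile(text: str, corpus_counts: dict[str, int],
                   high_min: int = 1000, mid_min: int = 50,
                   mid_weight: float = 0.5, rare_weight: float = 1.0):
    """Return band percentages, a weighted score and the sorted rare words.

    high_min, mid_min, mid_weight and rare_weight are hypothetical placeholders,
    not the De-Jargonizer's published settings.
    """
    # Lowercase letter runs only, so dashes and apostrophes split words.
    tokens = re.findall(r"[a-z]+", text.lower())
    if not tokens:
        raise ValueError("text contains no words")
    labels = [classify(t, corpus_counts, high_min, mid_min) for t in tokens]
    counts = Counter(labels)
    pct = {band: 100 * counts[band] / len(tokens) for band in ("high", "mid", "rare")}
    # 100 means every word is high-frequency; mid and rare words lower the score.
    score = 100 - (mid_weight * pct["mid"] + rare_weight * pct["rare"])
    rare_words = sorted({t for t, lab in zip(tokens, labels) if lab == "rare"})
    return pct, score, rare_words


if __name__ == "__main__":
    toy_counts = {"the": 5000, "public": 3000, "questions": 2000, "questionnaires": 100}
    print(jargon_profile("The public questions the questionnaires qualitative", toy_counts))
```

Because the tokenizer splits on any non-letter, a dash inside a word produces two tokens, which is why line-wrap hyphenation matters for this kind of analysis.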

Updates to the algorithm included handling line wrapping. In the 2009 and 2019 papers, dashes were used to join the two parts of words split across adjacent lines, which caused words to be misidentified as jargon (e.g. ‘gov-ernment’ was counted as two unfamiliar words, ‘gov’ and ‘ernment’) in 2 of the 3 time periods. When a document is converted from PDF to Word format, the position of words at the end of a line is not preserved, so it is impossible to distinguish dashes inserted for line wrapping from dashes that form compound words. To eliminate this problem, all dashed words were concatenated, which creates the opposite problem: compound words are misidentified (e.g. ‘decision-making’ became ‘decisionmaking’). However, based on a random sample of dashed words, this error was estimated to affect only 0.7%–0.9% of the rare words, and its rate was constant across the 3 time periods, suggesting that it had only a minor effect on the results.
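The concatenation rule can be expressed as a single regular-expression substitution. This is a minimal sketch, assuming the converted text uses plain ASCII hyphens (en dashes and other dash characters are not handled); it is an illustration, not the De-Jargonizer’s actual implementation.

```python
import re


def join_dashed_words(text: str) -> str:
    """Delete every hyphen that sits directly between two letters.

    Repairs line-wrap splits ('gov-ernment' -> 'government') but, as a side
    effect, also merges genuine compounds ('decision-making' -> 'decisionmaking').
    Hyphens next to digits or spaces (e.g. '1999-2000') are left alone.
    """
    return re.sub(r"(?<=[A-Za-z])-(?=[A-Za-z])", "", text)


if __name__ == "__main__":
    print(join_dashed_words("gov-ernment and decision-making in 1999-2000"))
```

Restricting the match to hyphens flanked by letters keeps numeric ranges intact while applying the same rule to every dashed word, as described above.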

Rare words identified by the De-Jargonizer were further classified as general academic vocabulary if they appeared among the top 20,000 words of COCA-Academic, which includes the Academic Vocabulary List (Gardner and Davies, 2014: https://www.academicvocabulary.info). Words with plural or verb suffixes (e.g. -s, -es, -ed) were manually converted to their base form. Words with an -ing suffix were left unchanged, since they may be adjectives. Words that were still not on the list after these changes could be either specific academic vocabulary (e.g. ‘popularization’) or non-academic vocabulary.
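The lookup can be approximated in code. This is a rough, hypothetical stand-in for the manual normalization described above: rather than deriving a single base form, it tries the word as written and a few suffix-stripped variants against a word list, because plain stripping would turn ‘discourses’ into ‘discours’. The toy set `academic_vocab` stands in for the top 20,000 COCA-Academic words, and irregular plurals (e.g. ‘hypotheses’) would still need manual handling.

```python
from collections.abc import Iterator


def candidate_forms(word: str) -> Iterator[str]:
    """Yield the word itself, then crude base forms with -es, -s or -ed removed.

    -ing forms are yielded unchanged only, since they may be adjectives.
    Irregular forms (e.g. 'hypotheses' -> 'hypothesis') are not handled.
    """
    w = word.lower()
    yield w
    if w.endswith("ing"):
        return
    for suffix, replacement in (("es", ""), ("s", ""), ("ed", ""), ("ed", "e")):
        if w.endswith(suffix) and len(w) > len(suffix):
            yield w[: -len(suffix)] + replacement


def is_general_academic(word: str, academic_vocab: set[str]) -> bool:
    """True if any candidate form of the word is on the academic word list."""
    return any(form in academic_vocab for form in candidate_forms(word))


if __name__ == "__main__":
    academic_vocab = {"discourse", "analyse", "correlation", "empirical", "emerge"}  # toy list
    for w in ("discourses", "analysed", "correlations", "emerging", "technoscientific"):
        print(w, is_general_academic(w, academic_vocab))
```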

Readability. Readability measures the clarity and ease of reading a text, based on sentence length (words per sentence) and word length (syllables per word). In this study, we used two readability formulas: the Flesch Reading Ease formula and the Flesch–Kincaid Grade Level formula. The Flesch Reading Ease formula (Flesch, 1948) predicts reading ease on a scale of 1–100, with higher scores indicating more readable text. The Flesch–Kincaid formula reweights the same two inputs to express difficulty as the US school grade level required to read the text easily; here, higher scores indicate less readable text. The readability scores were collected semi-automatically using a Word macro and checked several times for consistency.
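Both formulas can be written out directly from word, sentence and syllable counts. The coefficients below are the standard published ones. The sentence splitter and syllable counter, however, are crude heuristics assumed for illustration; they will not reproduce Word’s counts, so scores from this sketch are not comparable to those reported in this study.

```python
import re


def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch (1948): higher scores indicate easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level: approximate US school grade needed to read the text."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def count_syllables(word: str) -> int:
    """Crude heuristic: vowel groups, minus one for a silent final 'e'."""
    w = word.lower()
    n = len(re.findall(r"[aeiouy]+", w))
    if w.endswith("e") and not w.endswith("le") and n > 1:
        n -= 1
    return max(n, 1)


def readability(text: str) -> tuple[float, float]:
    """Return (Flesch Reading Ease, Flesch-Kincaid Grade Level) for a text."""
    words = re.findall(r"[A-Za-z]+", text)
    if not words:
        raise ValueError("text contains no words")
    # Treat each run of . ! ? as a sentence boundary; count non-empty pieces.
    sentences = max(1, sum(1 for s in re.split(r"[.!?]+", text) if s.strip()))
    syllables = sum(count_syllables(w) for w in words)
    return (flesch_reading_ease(len(words), sentences, syllables),
            flesch_kincaid_grade(len(words), sentences, syllables))


if __name__ == "__main__":
    print(readability("The cat sat. The dog ran."))
```

Very short, monosyllabic text can score above 100 on Reading Ease and below zero on grade level, so the 1–100 range is a practical rather than a hard bound.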

All articles were scored using both formulas. The full dataset is provided as Supplemental material (Excel file).

Methodological limitations

Only publications from four years were selected for analysis. A larger sample covering more years could have revealed finer-grained patterns related to specific topics, sub-disciplines or editorial shifts. Although the publications were written in three different decades, the De-Jargonizer dictionary is based on 2016–2019 word frequencies, and the academic word list used was published in 2014 (Gardner and Davies, 2014). Since the vocabulary of the 1999/2000 articles aligns less well with the De-Jargonizer dictionary than that of the 2019 articles, the results for 1999/2000 may overestimate the percentage of rare words relative to 2019.

Statistical analysis

A two-tailed, two-sample t-test assuming unequal variances was used to test for significant changes in jargon scores for the four time periods, in the percentage of rare words over the years and between article sections, and in Flesch Reading Ease scores. To test for significant differences in readability scores between the full empirical articles and their abstracts, a paired two-sample t-test for means was conducted.
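The two test statistics can be sketched with the Python standard library. This minimal illustration computes only the t statistic and degrees of freedom; a two-tailed p-value then comes from the t distribution with those degrees of freedom. SciPy’s `scipy.stats.ttest_ind(a, b, equal_var=False)` and `scipy.stats.ttest_rel(a, b)` return the same statistics together with two-sided p-values. The example data are hypothetical.

```python
import math
import statistics
from collections.abc import Sequence


def welch_t(a: Sequence[float], b: Sequence[float]) -> tuple[float, float]:
    """Two-sample t statistic with unequal variances and Welch-Satterthwaite df."""
    va = statistics.variance(a) / len(a)  # squared standard error of mean(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def paired_t(a: Sequence[float], b: Sequence[float]) -> tuple[float, int]:
    """Paired t statistic on within-pair differences, with n - 1 degrees of freedom."""
    if len(a) != len(b):
        raise ValueError("paired samples must have the same length")
    d = [x - y for x, y in zip(a, b)]
    t = statistics.mean(d) / (statistics.stdev(d) / math.sqrt(len(d)))
    return t, len(d) - 1


if __name__ == "__main__":
    # Hypothetical scores, e.g. full paper (a) vs its own abstract (b).
    a, b = [1, 2, 3, 4, 5], [2, 4, 6, 8, 10]
    print(welch_t(a, b), paired_t(a, b))
```

The paired test fits the paper-versus-abstract comparison because each abstract belongs to a specific paper, so per-paper differences remove between-paper variation.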

3. Results

The general audience accessibility of publications appearing in PUS for 1999 and 2000 (n = 47), 2009 (n = 49) and 2019 (n = 65) was evaluated for their readability and use of jargon. Both showed a significant decrease between 1999/2000 and the two following decades.

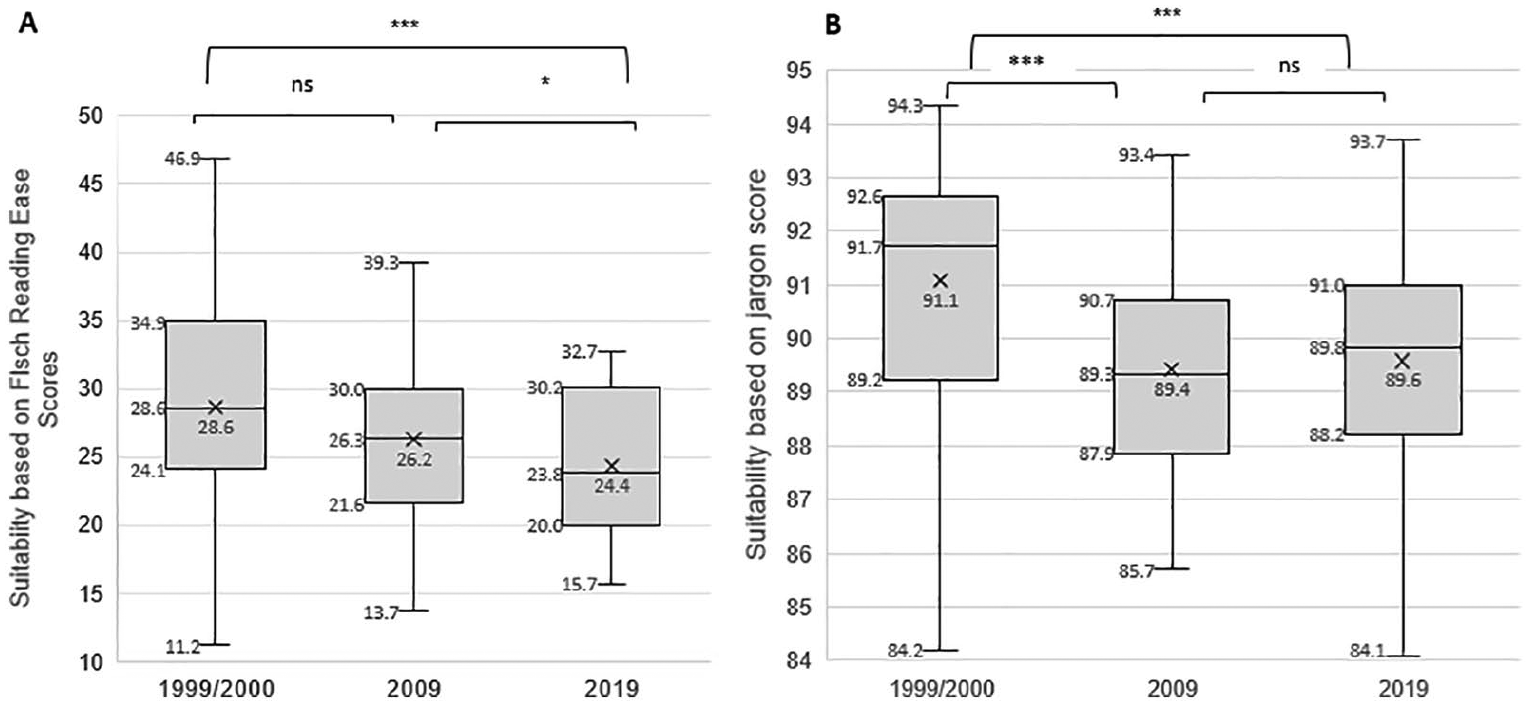

The average readability score of articles published in 1999/2000 was 31.5 ± 7.6, which represents a difficult style and corresponds to a grade 13–16 reading level (Dubay, 2004). In 2009 and 2019, the score dropped to 28.6 ± 8.0 and 25.7 ± 5.5, respectively, which represents a very difficult style and a college-graduate reading level (Figure 1, panel A). Based on Flesch–Kincaid Grade Level scores, empirical papers were slightly more readable than non-empirical articles in each of the years (1999/2000: 14.7 vs 15.3; 2009: 15 vs 15.4; 2019: 15.2 vs 16.2). This difference was significant (p < .01) in 2019, but not in 1999/2000 or 2009. The average jargon score in 1999/2000 was 91.1 ± 2.2; in 2009 and 2019, it dropped to 89.4 ± 1.9 and 89.6 ± 2.1, respectively (Figure 1, panel B).
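The style labels attached to these means follow Flesch’s conventional interpretation table. A small lookup makes the mapping explicit. The bands below are the commonly tabulated ones (e.g. in Dubay, 2004), and treating each lower bound as inclusive where adjacent bands meet is an assumption of this sketch.

```python
# Conventional Flesch Reading Ease bands (lower bound, style, reading level),
# as commonly tabulated (e.g. Dubay, 2004). Treating each lower bound as
# inclusive is an assumption where adjacent bands meet.
FLESCH_BANDS = [
    (90.0, "very easy", "5th grade"),
    (80.0, "easy", "6th grade"),
    (70.0, "fairly easy", "7th grade"),
    (60.0, "standard", "8th-9th grade"),
    (50.0, "fairly difficult", "10th-12th grade"),
    (30.0, "difficult", "13th-16th grade (college)"),
    (float("-inf"), "very difficult", "college graduate"),
]


def interpret_flesch(score: float) -> tuple[str, str]:
    """Map a Flesch Reading Ease score to (style, approximate reading level)."""
    for lower, style, level in FLESCH_BANDS:
        if score >= lower:
            return style, level
    raise ValueError(f"not a valid score: {score!r}")  # only reached for NaN


if __name__ == "__main__":
    for year, mean_score in (("1999/2000", 31.5), ("2009", 28.6), ("2019", 25.7)):
        print(year, interpret_flesch(mean_score))
```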

Figure 1. General audience accessibility of papers published in the journal Public Understanding of Science in the years 1999 and 2000 (n = 47), 2009 (n = 49) and 2019 (n = 65) based on (A) Flesch Reading Ease readability scores and (B) jargon scores.

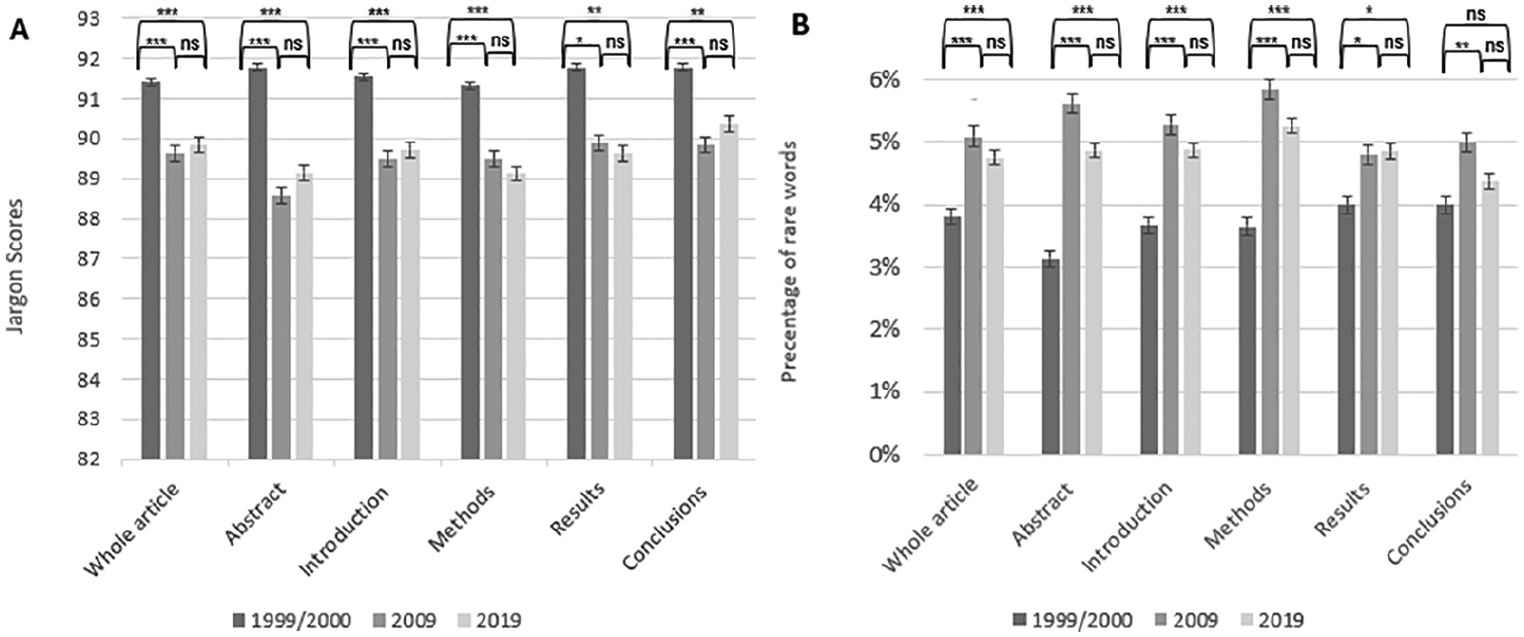

The general audience accessibility of all the empirical articles published in PUS in 1999/2000 (n = 30), 2009 (n = 41) and 2019 (n = 56) was assessed by section: Abstract, Introduction, Method, Results and Conclusion. In all years, the Flesch–Kincaid Grade Level scores of the abstracts were on average significantly higher (p < .001) than those of the full papers; in other words, the abstracts were harder to read than the full papers (full paper vs abstract, 1999/2000: 14.7 vs 16.4; 2009: 15 vs 17.1; 2019: 15.2 vs 17.3).

Empirical papers published in 1999/2000 used more common vocabulary than papers published in the next two decades. This was true (and significant) for each canonical section (Figure 2, panel A). The jargon score accounts for both mid-frequency and rare words. Whereas the percentage of rare words ranged from 3% to 4% across sections in 1999/2000, it ranged from 4% to 6% in 2009 and 2019 (Figure 2, panel B), with the Method section in particular containing more jargon. Hsueh-Chao and Nation (2000) found that familiarity with 98% of the vocabulary in a text is needed to comprehend the content accurately and unassisted when reading for pleasure. For a reader unfamiliar with the rare words, rare-word shares of 3%–6% alone limit vocabulary coverage to 94%–97%, below this threshold even before mid-frequency words are taken into account.

Figure 2. General audience accessibility of all the empirical papers published in the journal Public Understanding of Science in the years 1999 and 2000 (n = 30), 2009 (n = 41) and 2019 (n = 56), divided by section (Abstract, Introduction, Method, Results and Conclusion), based on (A) jargon score and (B) percentage of rare words.

Not all rare words can be considered equally difficult. Among the rare words that appeared most frequently in 1999/2000 were ‘empirical’, ‘hypotheses’, ‘biotechnology’, ‘understandings’, ‘qualitative’, ‘discourses’, ‘salient’, ‘implicitly’, ‘generalized’, ‘cloning’, ‘popularization’, ‘literate’, ‘correlations’, ‘discursive’ and ‘explanatory’ (appearing 11–148 times each). In 2009, there were numerous instances of ‘qualitative’, ‘empirical’, ‘publics’, ‘biotechnology’, ‘discourses’, ‘understandings’, ‘cloning’, ‘discursive’, ‘normative’, ‘dissemination’, ‘embryonic’, ‘twentieth’ and ‘nanotechnology’ (appearing 17–331 times each). The most frequent rare words in 2019 were ‘empirical’, ‘regression’, ‘variance’, ‘publics’, ‘qualitative’, ‘hypotheses’, ‘descriptive’, ‘communicators’, ‘normative’, ‘empirically’, ‘dissemination’, ‘predictors’, ‘methodological’, ‘heterogeneous’, ‘understandings’ and ‘evaluations’ (appearing 46–75 times each). Three of these words appeared at all three time points: ‘empirical’, ‘understandings’ (plural) and ‘qualitative’; many appeared at two of the time points, such as ‘hypotheses’, ‘discourses’ (plural), ‘discursive’ and ‘publics’ (plural). Words of a similar type were identified by Plavén-Sigray et al. (2017), who compiled a general scientific jargon list of about 2000 words, including ‘novel’, ‘robust’, ‘significant’, ‘distinct’, ‘moreover’, ‘therefore’, ‘primary’, ‘furthermore’, ‘influence’, ‘underlying’ and ‘suggesting’.

Specific emerging technologies are also worth mentioning: ‘biotechnology’ and ‘cloning’ were among the most frequent rare words in the earlier years, but not in 2019. ‘Biotechnology’ still appeared as a rare word in 2019, but less frequently than in earlier years. ‘Cloning’ appeared much less frequently in 2019 than in 1999/2000, whereas in 2009 it was one of the most frequent rare words. An earlier study comparing the focus of PUS papers over two decades found that genetics, genetically modified organisms and biotech were the focus of many more research papers in 2002–2010 than in 1992–2001 (Bauer and Howard, 2012). Notably, ‘correlations’ was one of the frequently used rare words in 1999/2000, whereas in 2019 ‘regression’, ‘variance’ and ‘predictors’ appeared in its place as rare but frequent methodological words.

This list of frequently used rare words consists mostly of general academic vocabulary, which PUS readers presumably would not find exclusionary. The rare words were therefore further classified as ‘general academic vocabulary’ or not, based on an established academic vocabulary list. Overall, 18.3% of the rare words identified by the De-Jargonizer (2589 of 14,149) were on the academic vocabulary list. The share differed between the three time points: general academic words made up 30.7% of the rare words in 1999/2000, but only 21.8% in 2009 and 23.6% in 2019.

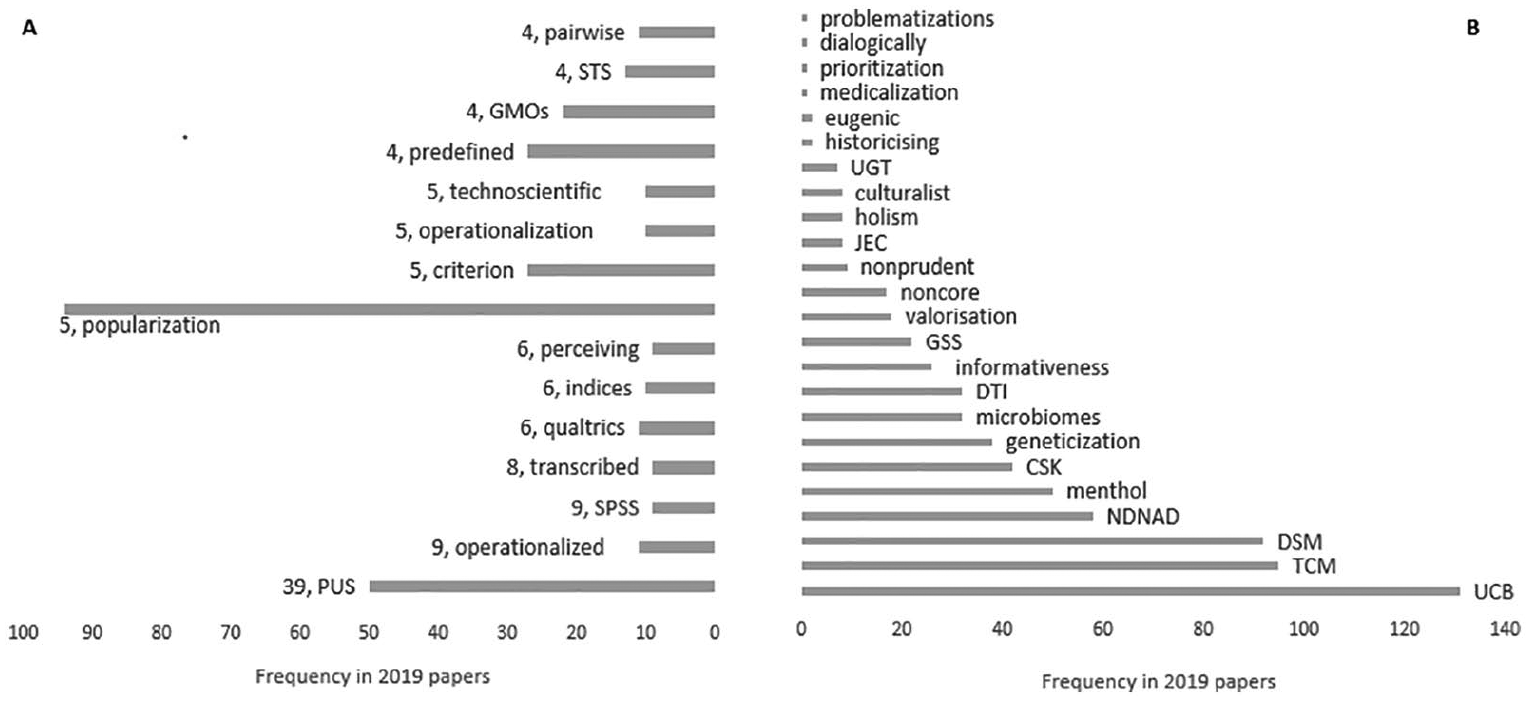

The next step was to examine how the remaining words (rare words that are not general academic vocabulary) were distributed across articles published in the same year, in order to identify shared disciplinary jargon. For this purpose, we used the rare words that were not on the academic list and appeared in articles published in 2019 (4262 words in 65 papers). On average, each of these words appeared in fewer than 1.4 articles (SD = 1.6), with a median of 1. Figure 3 lists examples of these frequent rare words that were not identified as general academic vocabulary. Panel A lists frequent rare words that appeared in several papers in the same year; these include some natural candidates for PUS in-group jargon, such as ‘popularization’ and ‘technoscientific’. Panel B lists examples of words that appeared many times, but only in one paper published that year. Some of these terms might be difficult for readers to understand.
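Whether a word is shared can be checked by counting, for each rare non-academic word, the number of distinct papers that contain it (its document frequency). The sketch below assumes each paper has already been reduced to the set of such words it uses; the paper names and the placeholder words ‘worda’ and ‘wordb’ are hypothetical toy data, not values from this study.

```python
import statistics
from collections import Counter


def document_frequency(articles: dict[str, set[str]]) -> Counter:
    """Count, for each word, the number of distinct articles that contain it."""
    df: Counter = Counter()
    for words in articles.values():
        df.update(set(words))  # each article counts at most once per word
    return df


def summarize(df: Counter) -> tuple[float, float, float]:
    """Mean, sample SD and median of the per-word article counts."""
    counts = list(df.values())
    return statistics.mean(counts), statistics.stdev(counts), statistics.median(counts)


def exclusive_words(df: Counter) -> list[str]:
    """Words found in exactly one article (cf. Figure 3, panel B)."""
    return sorted(w for w, n in df.items() if n == 1)


if __name__ == "__main__":
    toy_articles = {  # hypothetical papers and words
        "paper_1": {"popularization", "technoscientific", "worda"},
        "paper_2": {"popularization", "technoscientific"},
        "paper_3": {"popularization", "wordb"},
    }
    df = document_frequency(toy_articles)
    print(summarize(df), exclusive_words(df))
```

A median of 1, as found for 2019, means that at least half of these words occur in a single paper, which is what places them in panel B rather than panel A.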

Figure 3. Examples of words identified as ‘rare’ by the De-Jargonizer in papers published in 2019, after excluding general academic vocabulary identified by the new academic vocabulary list. (A) Frequent and shared by the community: examples of words appearing in more than one article, listed by the number of different articles (the number next to each term). (B) Frequent and exclusive: examples of words that appeared in only one article in 2019.

Finally, measuring the jargon score of this article itself was tricky, because the results contain long lists of rare words. Excluding all words appearing between single quotation marks, this article scored 89.6, exactly the average of the 2019 papers. The most frequent rare words in this article that were not identified as general academic vocabulary were ‘PUS’ (18), ‘readability’ (12), ‘empirical’ (9), ‘abstracts’ (plural) (8), ‘dejargonizer’ (8), ‘publics’ (plural) (4), ‘flesch’ (4), ‘fleschkincaid’ (4), ‘methodological’ (3), ‘nonempirical’ (3) and ‘readable’ (3).

4. Discussion

This exploration of the vocabulary of PUS papers over the journal’s almost three decades of existence was designed to characterize changes in its accessibility to wider audiences. The findings suggest a decline in readability and a shift in the language of PUS articles from everyday language towards rare words. This holds for both empirical and non-empirical papers, and for all sections of the empirical papers, including the abstracts.

While these findings suggest that wider audiences might find the articles less accessible, this is not necessarily bad. The change might result from the professionalization of science communication researchers, and hence indicate the consolidation of the discipline, which ultimately manifests itself in specialized vocabulary and more advanced methods (as evidenced, for example, by the relative frequency of ‘regression’, ‘variance’ and ‘predictors’ in 2019). A more in-depth analysis of the rare words identified by the De-Jargonizer showed that most were not part of the general academic vocabulary expected of scholars and graduate students. In fact, most of these non-academic rare words appeared in only one paper in a given year, which argues against their being shared in-group jargon.

The finding that PUS appears to be moving away from everyday language does not mean that it is incomprehensible to its scholarly readership, but rather that it may be hard for the general public to comprehend. However, PUS aims to serve not only science communication scholars, but also scientists from all disciplines, research managers and science communication practitioners. It is a ‘transdisciplinary journal’ in the words of the May 2020 editorial, ‘meaning that we want to be relevant for practical activities aiming at the science–public relationship’ (Peters, 2020). How does this vocabulary use align with that goal? Does it support evidence-based practice and research–practice collaborations? Should readers be satisfied with a journal in which social scientists talk to each other, instead of one in which researchers and practitioners actually communicate with each other?

What are the conclusions for authors? Avoid the avoidable complications. The De-Jargonizer can provide initial indications of problem areas. In particular, writers should consider the comprehensibility of their abstracts (Peters, 2020), which are more often than not the only part of the paper available to practitioners.

What are the conclusions for researchers as a community of practice? There is a practical contradiction in aims between writing for a large and diverse readership on the one hand and expecting disciplinary depth, which includes professional language as a shortcut, on the other. There is also a normative dilemma: what should the norms for jargon and exclusionary language be in this community? One potential solution would be to require a practitioner abstract for each accepted PUS article, published as a post on the PUS blog alongside posts by practitioners. This solution would serve only English readers, but it could create a shared space where scholars and practitioners could correspond with each other.

Supplemental Material

Results_by_article_name-1306 – Supplemental material for Jargon use in Public Understanding of Science papers over three decades

Supplemental material, Results_by_article_name-1306 for Jargon use in Public Understanding of Science papers over three decades by Ayelet Baram-Tsabari, Orli Wolfson, Roy Yosef, Noam Chapnik, Adi Brill and Elad Segev in Public Understanding of Science

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.


Author biographies

Baram-Tsabari’s research focuses on bridging science education and science communication scholarship, and she has published extensively in both disciplines.

References
