Abstract

In this paper we propose and examine randomization and allocation procedures for master protocol trials in which each subtrial is performed as a single-arm study, a setting motivated mainly by rare diseases. Each subtrial is analyzed by comparing its single-arm data to historical data or expert judgment and applying prespecified decision rules, without using data from any other study in the master protocol trial. The objective of this paper is to examine relevant options for participant allocation procedures under a variety of patterns in which subtrials enter the master protocol trial, such as umbrella, platform and perpetual patterns. Our simulation study shows that the proposed allocation procedures, which use the predictive probability of current study success, may bring substantial efficiency gains in the master protocol trial: by allocating in-trial participants so that study success is declared sooner, they benefit the out-of-trial population.

Keywords

Introduction

Master protocol trials, such as platform, umbrella and basket trials,1,2 are increasingly used for rare (a.k.a. orphan) diseases because they offer gains that may outweigh the methodological issues associated with them.3,4 The purpose of such trials is to provide a common infrastructure that makes it easy for sponsors to investigate an intervention for a rare disease in an intervention-specific subtrial, defined in the corresponding intervention-specific appendix to the master protocol. The gains often cited for master protocol trials over a series of separate trials include (i) statistical efficiencies achieved from the same number of trial participants by methods that take advantage of features such as sharing of control data, (ii) operational efficiencies, because trial design, site selection, staff training, database set-up and other infrastructure require fewer resources, and (iii) health benefit for trial participants, as the combination of suitably chosen subtrial-entry patterns, decision rules for early subtrial stopping, and allocation procedures leads to allocating more trial participants to better subtrials sooner. Together, the three types of gains can multiplicatively accelerate drug/intervention development and thus increase the overall health benefit for the target population.5

The procedure for allocating participants when several subtrials are open for enrollment is a key element of the design of a master protocol trial. This topic has attracted considerable research attention. For master protocols that include one or more control arms, one of the main questions is the allocation to the control(s), which can either be one common control whose data are shared by all subtrials for their individual analyses, or an intervention-matched control in every subtrial, or both.6,7 Moreover, allocation between the subtrials can be made unequal or even adaptive, using early subtrial stopping or response-adaptive randomization, both of which can be specified in a number of ways depending on the trial objectives (e.g. participant benefit or statistical power), see Thall and Wathen, 8 Wason and Trippa, 9 Wathen and Thall, 10 Viele et al. 11 The choice of the allocation procedure heavily affects the operating characteristics of each subtrial. 12 It may introduce or amplify a number of undesirable biases, which need to be properly investigated and evaluated by the trial designers, especially when the trial data are intended to be used for marketing authorization applications. 13

Practically all the research in this area has focused on master protocol trials that include one or more controls (shared or intervention-specific). However, in a trial for a rare disease, one may not always be able to include a control, for example because there is no approved intervention on the market, because it may be unethical to use a placebo control, or because the patient population is very small.14

Single-arm (a.k.a. non-randomized) studies may become an acceptable, preferred, or even the only option in these situations. Bell and Tudur Smith15 reported that single-arm studies compose a relevant proportion of clinical trials,

Master protocol trials consisting of single-arm subtrials have been proposed recently but, to the best of our knowledge, their performance has not yet been investigated. The EU-PEARL project published two master protocols: one for neurofibromatosis type 1 and one for neurofibromatosis type 2.16,17 These master protocols consist of single-arm subtrials for different manifestations of neurofibromatosis type 1 or 2. They allow for randomization between subtrials when several interventions are available at the same time.18

The European Medicines Agency19 reflection paper on single-arm trials also considers that these could be performed under a master protocol: An example for such a trial would be a particular kind of platform trial where several investigational treatment arms are included but which are not formally compared, and which can be viewed as a series of single-arm trials.

These examples can be viewed as master protocols that consist of a number of single-arm studies, run in series and/or in parallel. An allocation procedure needs to be employed across subtrials if several of these are open for enrollment at the same time. The purpose of using a response-adaptive allocation procedure in such a trial is to skew the enrollment of participants to subtrial(s) that are most likely to declare success, which brings health benefit to both in-trial participants and the out-of-trial patient population. This purpose differs from conventional trials with a prespecified overall trial sample size, where response-adaptive allocation procedures aim to increase the group size for specific intervention(s). In a master protocol with prespecified subtrial sample sizes, all subtrials will eventually get their planned allocation of trial participants. Because of this, the statistical operating characteristics like statistical power and type I error (derived under the assumption of no temporal trends) for each subtrial are not affected by using a response-adaptive allocation procedure. The above argument is stated assuming a fixed sample size for each single-arm subtrial, but applies analogously also to more complex trials with early subtrial stopping.

In this paper, we examine allocation procedures in a master protocol trial where all subtrials are single-arm studies. The research was motivated by the EU-PEARL master protocols for neurofibromatosis. The allocation procedures explored here are based on the concepts of frequentist predictive probability 20 and conditional probability of study success, which also lend themselves easily to defining response-adaptive allocation procedures. Our main objective is to explore the effects of response-adaptive allocation on the (non-statistical) operating characteristics of such a trial, more specifically, whether these procedures are capable of sooner completion of enrollment to the subtrials that are more likely to declare success at the final analysis. We present the results of an investigation of three allocation procedures over a number of different scenarios. The performance is evaluated using a newly developed and open-source clinical trial designer and simulator “Single-arm,” 21 building on SIMPLE, an R software for simulating clinical trials.22,23

The paper is organized as follows. In Section 2 we describe procedures for allocating participants to subtrials that we consider relevant for a master protocol trial of single-arm studies, proposing a family of allocation procedures using predictive probability of current study success as our main contribution. In Section 3 we describe the setup of our simulation study, including the definitions of the different subtrial-entry patterns to be considered, the simulated sets of assumptions about unknown truths, and the master protocol trial design variants to be compared. In Section 4, simulation results comparing the performance of design variants across a comprehensive set of scenarios are presented. In Section 5 we describe three individual simulations that are illustrative of how the allocation procedures behave. We discuss the strengths and limitations of our methodology as well as potential further research topics in Section 6.

Randomization and allocation procedures

EU-PEARL master protocols

In this subsection, we briefly review the approach to randomization taken in the EU-PEARL master protocols for neurofibromatosis type 1 (NF1) and 2 (NF2), which serve as the motivation for this paper. Each of these protocols includes several manifestations of the disease. Patients diagnosed with one of these manifestations are eligible to be enrolled to the corresponding trial. Both the NF1 and NF2 trials have been set up as platform-basket trials (including several manifestations under one master protocol), because patients may switch between manifestations over time. Technically, they are a collection of manifestation-specific platform trials. These in turn consist of many single-arm subtrials, which are defined by the intervention being applied and the manifestation being treated. In other words, if an intervention is tested for two different manifestations, then this defines two subtrials. If there are two interventions tested for one manifestation, then this also defines two subtrials.

The inclusion and exclusion criteria as well as the primary endpoint differ between manifestations. Within a manifestation, the same endpoint is used across all subtrials and the inclusion and exclusion criteria at the master protocol level are also the same. However, there may be subtrial-specific inclusion and exclusion criteria, which may be specified in the corresponding intervention-specific appendices to the master protocol. An example of a subtrial-specific exclusion criterion is that patients who have previously been treated with the intervention tested in the subtrial cannot be enrolled in that subtrial. Such pretreatment could have occurred outside the NF1 or NF2 trials, or within them when a different manifestation was treated (as patients can suffer from several manifestations of neurofibromatosis). A patient must meet the inclusion and exclusion criteria at the master protocol level and give consent to participate in the trial.

When there are several manifestation-specific subtrials that are open for enrollment at the same time, instead of being randomized directly to subtrials, participants are randomized to preference sequences in order to allow for non-eligibility. For each participant, the randomization procedure assigns an allocation preference sequence of the available subtrials. For example, if subtrials
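To make the preference-sequence idea concrete, here is a minimal Python sketch. It assumes that the preference sequence is a uniformly random permutation of the open subtrials and that the participant is allocated to the first subtrial in that sequence for which they are eligible. The function names `preference_sequence` and `allocate_by_preference` are hypothetical and are not taken from the EU-PEARL protocols or the "Single-arm" software.

```python
import random


def preference_sequence(open_subtrials, rng):
    """Uniformly random ordering of the open subtrials (assumed randomization scheme)."""
    seq = list(open_subtrials)
    rng.shuffle(seq)
    return seq


def allocate_by_preference(open_subtrials, is_eligible, rng):
    """Allocate to the first subtrial in the random preference sequence for which
    the participant is eligible; return None if eligible for none of them."""
    for subtrial in preference_sequence(open_subtrials, rng):
        if is_eligible(subtrial):
            return subtrial
    return None
```

Under this assumption, a participant who is excluded from one of three open subtrials (for example because of pretreatment with that intervention) is allocated to each of the two remaining subtrials with probability 1/2, so non-eligibility never blocks enrollment as long as at least one open subtrial is suitable.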

Proposed randomization and allocation procedures

In this subsection, we develop and describe a number of different procedures for allocating participants among subtrials at the master protocol level. The subsection is written without assuming a specific endpoint type, final analysis method or decision rule. More specific considerations are discussed in subsection 2.3.

The allocation procedures considered can be response-agnostic (ignoring all the accumulated endpoint observations) or response-adaptive, and for a given participant they can result in an allocation that is random or deterministic (conditional on available data). Note that if there is only one subtrial open for enrollment, all the allocation procedures result in a deterministic, unblinded allocation of the participant to that subtrial. In the rest of the paper, we assume that all participants share the same covariate value (such as the manifestation in the EU-PEARL trials). If there were several covariate values, the allocation procedure could be applied independently within each of them.

In a master protocol for a rare disease, stakeholders might be interested in the trial objective of allocating participants more quickly to those subtrials that are more likely to declare success, to make efficient use of the small patient population. Another objective could be to ensure fair or regular allocation of participants to subtrials that are open for enrollment. These different objectives are reflected in the participant allocation procedures that we evaluate in this paper.

To formally define different randomization and allocation procedures, we need to introduce some notation. Suppose that subtrials

When a newly enrolled participant

In order to allocate participant

Equal fixed randomization

The simplest allocation procedure is to just randomize participant
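As a hedged illustration, equal fixed randomization can be sketched as assigning probability 1/K to each of the K open subtrials, regardless of any endpoint data. The names `equal_randomization_probs` and `draw_subtrial` are hypothetical; `random.Random.choices` is the standard-library weighted sampler.

```python
import random


def equal_randomization_probs(open_subtrials):
    """ER: probability 1/K for each of the K open subtrials; endpoint data are ignored."""
    k = len(open_subtrials)
    return {s: 1.0 / k for s in open_subtrials}


def draw_subtrial(probs, rng):
    """Draw one subtrial according to the given allocation probabilities."""
    subtrials = list(probs)
    return rng.choices(subtrials, weights=[probs[s] for s in subtrials], k=1)[0]
```

With a single open subtrial the probability is 1, which reproduces the deterministic allocation noted above for that case.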

Oracle

The next allocation procedure is based on the conditional probability (the conditional power)

This allocation procedure, which we call Oracle (

Note that this procedure will typically allocate more participants than
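The Oracle procedure relies on the true (in practice unknown) response rates. The sketch below assumes a binary endpoint and a decision rule that declares success when at least `r` of the `n` participants respond, a simplified count cutoff standing in for the confidence-distribution analysis of the master protocols. It also assumes that Oracle allocates to the subtrial(s) with the highest conditional probability, with ties broken by equal randomization. All names, and the numbers used in the check, are hypothetical.

```python
from math import comb


def binom_tail(m, p, k):
    """P(X >= k) for X ~ Binomial(m, p)."""
    if k <= 0:
        return 1.0
    if k > m:
        return 0.0
    return sum(comb(m, j) * p**j * (1 - p) ** (m - j) for j in range(k, m + 1))


def conditional_probability(n, r, responses, observed, p_true):
    """P(final number of responses >= r | data so far, true rate p_true).

    All n - observed outstanding participants (allocated but unobserved, or not
    yet allocated) respond independently with probability p_true."""
    return binom_tail(n - observed, p_true, r - responses)


def oracle_choice(states, n, r):
    """states: {subtrial: (responses, observed, p_true)}.

    Returns the subtrial(s) with the highest conditional probability; the
    participant would then be randomized equally among them."""
    cp = {s: conditional_probability(n, r, x, m, p) for s, (x, m, p) in states.items()}
    best = max(cp.values())
    return sorted(s for s, v in cp.items() if abs(v - best) < 1e-12)
```

Before any data are observed, the conditional probability increases with the true response rate, so a subtrial with the highest rate is favored from the outset.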

The third allocation procedure, Predictive Probability (

These predictive probabilities can be calculated based only on observed endpoint data, with no unknown endpoint parameters needed. When there are no observed endpoint data available in subtrial

Such a predictive probability has to be obtained based on the analysis described in the master protocol or in the intervention-specific appendix for the subtrial. It depends upon the type of endpoint, the primary analysis method and the decision rules. We discuss this in more detail and describe the calculation of PrP in subsection 2.3, after introducing the randomized PrP procedure which also employs PrP values.
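The paper computes predictive probabilities from the frequentist confidence-distribution analysis described in Appendix A, which is not reproduced here. Purely as a stand-in, the sketch below uses a Bayesian beta-binomial predictive distribution with a uniform Beta(1, 1) prior and a hypothetical count cutoff `r`, so it will not reproduce the numbers in the worked examples. Two conventions follow the text: a subtrial without observed endpoint data gets predictive probability 1, and allocation is equal randomization among the subtrial(s) with the highest predictive probability. All function names are hypothetical.

```python
from math import comb, exp, lgamma


def log_beta(a, b):
    return lgamma(a) + lgamma(b) - lgamma(a + b)


def beta_binom_tail(m, a, b, k):
    """P(Y >= k) for Y ~ BetaBinomial(m, a, b)."""
    if k <= 0:
        return 1.0
    if k > m:
        return 0.0
    return sum(comb(m, y) * exp(log_beta(y + a, m - y + b) - log_beta(a, b))
               for y in range(k, m + 1))


def predictive_probability(n, r, responses, observed, a=1.0, b=1.0):
    """Stand-in PrP: chance of reaching r responses out of n, using only observed data."""
    if responses >= r:
        return 1.0  # success at the final analysis is already guaranteed
    if observed == 0:
        return 1.0  # convention from the text: no observations -> PrP = 1
    remaining = n - observed  # allocated-but-unobserved plus not-yet-allocated
    return beta_binom_tail(remaining, a + responses, b + observed - responses, r - responses)


def prp_probs(states, n, r):
    """states: {subtrial: (responses, observed)} -> allocation probabilities."""
    prp = {s: predictive_probability(n, r, x, m) for s, (x, m) in states.items()}
    best = max(prp.values())
    winners = [s for s, v in prp.items() if abs(v - best) < 1e-12]
    return {s: (1.0 / len(winners) if s in winners else 0.0) for s in prp}
```

With the state reached before period 7 in Example A (no observations on subtrials 1 to 3, one response and one non-response on subtrial 4), this stand-in likewise randomizes equally among subtrials 1, 2 and 3.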

Randomized predictive probability

Both these procedures (

In order to avoid this, we propose randomized procedures defined as a mixture of the
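Because the sentence defining the mixture is incomplete here, the following sketch assumes that a randomized PrP procedure mixes the PrP allocation probabilities with equal randomization, using a weight `weight_prp` in [0, 1] (a hypothetical parameter name). The function `mixture_probs` is likewise hypothetical.

```python
def mixture_probs(prp_alloc, weight_prp):
    """Mix PrP allocation probabilities with equal randomization (ER).

    prp_alloc: {subtrial: PrP allocation probability} over the open subtrials.
    weight_prp: weight on PrP; 1 gives pure PrP, 0 gives pure ER.
    """
    if not 0.0 <= weight_prp <= 1.0:
        raise ValueError("weight_prp must lie in [0, 1]")
    k = len(prp_alloc)
    return {s: weight_prp * p + (1.0 - weight_prp) / k for s, p in prp_alloc.items()}
```

Any weight below 1 keeps every open subtrial's allocation probability strictly positive. For example, with four open subtrials and weight 0.8, a subtrial that PrP alone would never select still receives probability 0.2/4 = 0.05.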

Discussion

The predictive probabilities that are required for the

Similarly, in Appendix A.3 we provide the formula for calculating the conditional probability for a binary endpoint that we use in our simulation study for

For our simulation study, all subtrials use the same endpoint, the same analysis and the same decision rules, motivated by the EU-PEARL NF trials. For more details see Heimann et al. 25 An advantage of using predictive probability as proposed here is that one can in principle apply the approach to master protocol trials when the decision rules, analysis, or even the endpoints differ across the subtrials. The common scale is the probability scale, and the common measure is the predictive probability to declare success at the end of the study given the data observed so far. How this predictive probability is calculated does not matter when defining the allocation procedures.

Both

The allocation procedures we have proposed are based on the predictive probability for a subtrial to declare success at the final analysis. They are not based on predicting the unknown endpoint parameter (e.g. response rate) of the intervention in the subtrials. These two concepts lead to the same ordering of subtrials if the subtrial definitions (sample size, endpoint characteristics, analysis and decision rules), the numbers of allocations and the numbers of observations are identical, but they may disagree in general. That the allocation procedure favors the subtrial that is more likely to declare success is desirable from the point of view of the sponsors, who may have defined the decision rules to declare success according to different objectives or research questions, answering which is the main objective of the master protocol trial. In certain situations, other reasons, such as a change in the standard of care or in the nature of the disease, may lead to some subtrials being stopped on ethical or scientific grounds for being obsolete and/or possibly harmful despite having a relatively high predictive probability.

Strictly speaking, the procedures defined in the previous paragraphs can only be applied when the inclusion and exclusion criteria of all subtrials are the same, so that every participant is eligible for every subtrial. This may not be the case, as discussed in subsection 2.1. This issue can be resolved by randomizing to allocation preference sequences of available interventions, as in the EU-PEARL NF trials. The

A reader familiar with Bayesian response-adaptive randomization (BRAR) (cf. Thall and Wathen8) might wonder whether

Simulation study

We have designed a simulation study which mimics the EU-PEARL trial for a rare disease such as neurofibromatosis described in Dhaenens et al. 27 or Heimann. 20 In the following subsections we provide more detail on the simulation study set-up.

Enrollment of trial participants

We consider a discrete-time simulation model, that is, one with regularly spaced time periods, where each period would, in the context of a rare disease, typically be interpreted as a month or a quarter. Participants enroll in the master protocol trial at a rate of one per period, so participant

Subtrial-entry patterns

One of the key features of master protocol trials is that they can prespecify that new subtrials may enter during the course of the trial as new interventions become available for investigation. Sometimes several interventions are available when the trial starts; at other times the trial may be set up without any intervention, and the times at which interventions become available are unknown. The effect of different timings of subtrial entry into master protocol trials is not well understood.28,29 When several subtrials are already open for enrollment, it is a strategic decision whether to allow additional subtrials to enter, which ones to allow, and when to let them enter the trial. Such a decision will impact the trial participants, the speed of completion of the currently enrolling subtrials, the attractiveness of the trial to potential future participants and its attractiveness to the sponsors of potential future interventions.

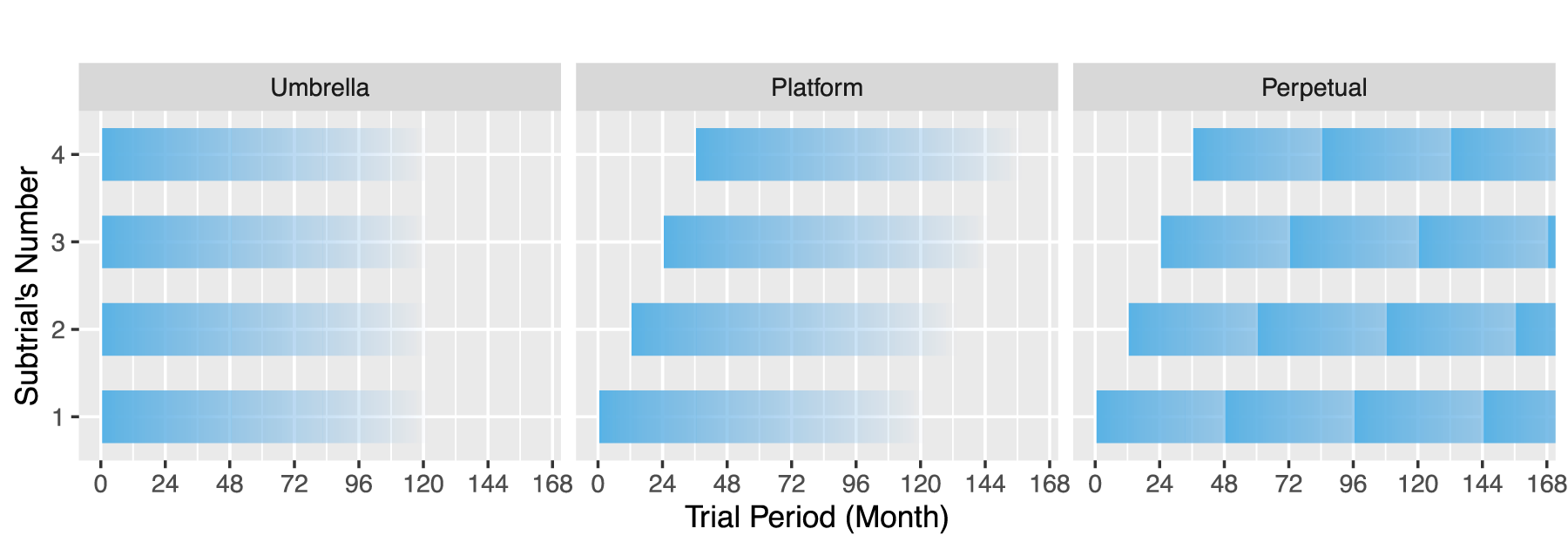

The simulation results are presented for three subtrial-entry patterns:

the umbrella pattern, which has a fixed number of subtrials, all entering from the beginning of the trial and concurrently open for enrollment (until completing their respective sample sizes), sometimes also called a multi-arm trial; the platform pattern, which has a fixed number of subtrials entering the trial over time in a staggered fashion, leading to a varying number of them concurrently open for enrollment; and the perpetual pattern, which is a variant of the platform pattern with an unbounded stream of subtrials entering the trial over time, but with a fixed limit on the number of subtrials concurrently open for enrollment.

These patterns are illustrated in Figure 1, where the darkest color highlights the time of entry into the master protocol trial, and fading illustrates the uncertainty of the enrollment completion time of a subtrial. In our simulation study, in the platform and perpetual pattern we assume that there is

An illustration of subtrial-entry patterns.

In our simulation study we limited the number of open subtrials to four, as in Figure 1. For master protocols with umbrella or platform subtrial-entry patterns, a subtrial that completes enrollment is not replaced. In the perpetual pattern, the number of open subtrials is limited to four, and once this number is reached, a new subtrial (once it is available) can only enter when another one completes enrollment.
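The perpetual entry rule can be sketched as two bookkeeping steps per period: close subtrials that have completed enrollment, then admit waiting subtrials while fewer than four are open. The first-come, first-served order of the waiting queue is our assumption, and the function names are hypothetical.

```python
from collections import deque

MAX_OPEN = 4  # limit on concurrently open subtrials in the simulation study


def close_completed(open_subtrials, enrolled, sample_size):
    """Drop subtrials that have reached their planned sample size."""
    return [s for s in open_subtrials if enrolled.get(s, 0) < sample_size]


def admit_waiting(open_subtrials, waiting, max_open=MAX_OPEN):
    """Perpetual pattern: admit available subtrials from the waiting queue
    (first come, first served) while fewer than max_open are open."""
    open_subtrials = list(open_subtrials)
    while waiting and len(open_subtrials) < max_open:
        open_subtrials.append(waiting.popleft())
    return open_subtrials
```

In the umbrella and platform patterns only the first step applies, because a subtrial that completes enrollment is not replaced.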

All three patterns may be applicable in different situations and are of interest in their own right, often without the option of choosing between them. The EU-PEARL master protocols discussed in subsection 2.1 fall into the perpetual pattern, with subtrials gradually entering whenever they become available. Neither the total number of subtrials nor the limit on the number of concurrently open subtrials is fixed in the protocol.

For each subtrial

Response rate vectors

In the simulation scenarios presented here, we consider

Subtrial analysis and decision rules

For each subtrial with its prespecified sample size, the decision of success declaration is taken as a result of the final analysis, which is performed at the end of the period during which the endpoint of the last enrolled participant of the subtrial is observed. There are no decisions taken at interim sample sizes.

The final analysis for each subtrial is as defined in the EU-PEARL master protocols20,25 based on confidence distributions, see Appendix A.1 for details. To define a decision rule for our simulation study, we chose the ineffective response rate to be

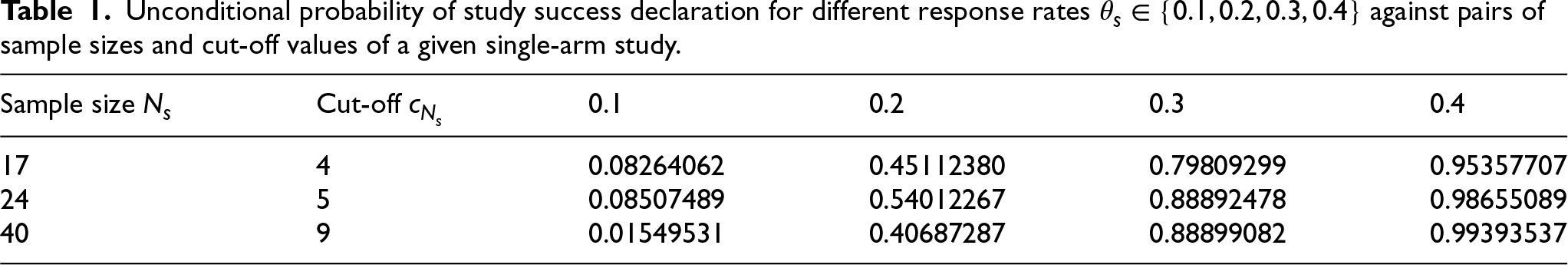

Unconditional probability of study success declaration for different response rates against pairs of sample sizes and cut-off values of a given single-arm study.

The decision rules regard an intervention of subtrial

A simulation scenario is defined jointly by the set of assumptions about unknown truths and by a master protocol trial design variant. The set of assumptions that were varied includes the subtrial-entry pattern and the vector of subtrial response rates, defined in the previous subsections. We did not vary the assumptions about the participants’ enrollment.

The design variant includes the choice of sample size

Overall, we present results of

It is important to realize that the ordering of the subtrials’ response rates does not matter in the umbrella pattern regardless of the allocation procedure, nor does it matter when the allocation procedure is

Simulation results

The simulation results presented in this section are averages obtained from 50,000 individual simulation runs of each simulation scenario. Taking the averages means that we are reporting expected values of the metrics that are obtained via Monte Carlo simulation.
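As a rough guide to the Monte Carlo precision of these averages (our addition, assuming independent simulation runs): the standard error of an estimated probability is sqrt(p(1 - p)/R) for R runs, which is largest at p = 0.5, and for a quantity bounded between 0 and K, Popoviciu's inequality bounds the standard deviation by K/2. The function names are hypothetical.

```python
from math import sqrt


def se_probability(p_hat, runs):
    """Monte Carlo standard error of an estimated probability from independent runs."""
    return sqrt(p_hat * (1.0 - p_hat) / runs)


def se_bound_bounded_count(max_count, runs):
    """Worst-case standard error of the mean of a quantity bounded in [0, max_count]
    (Popoviciu's inequality: sd <= max_count / 2)."""
    return max_count / (2.0 * sqrt(runs))
```

For R = 50,000 this gives at most about 0.0022 for an estimated probability and at most about 0.0089 for the expected number of correct success declarations among four subtrials (umbrella or platform trials); counts in perpetual trials are not bounded by four, so that bound does not apply there.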

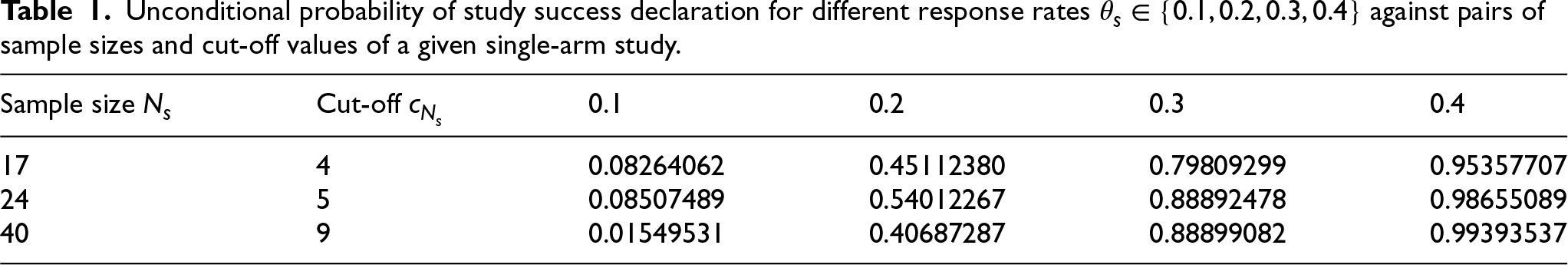

Number of correct success declarations

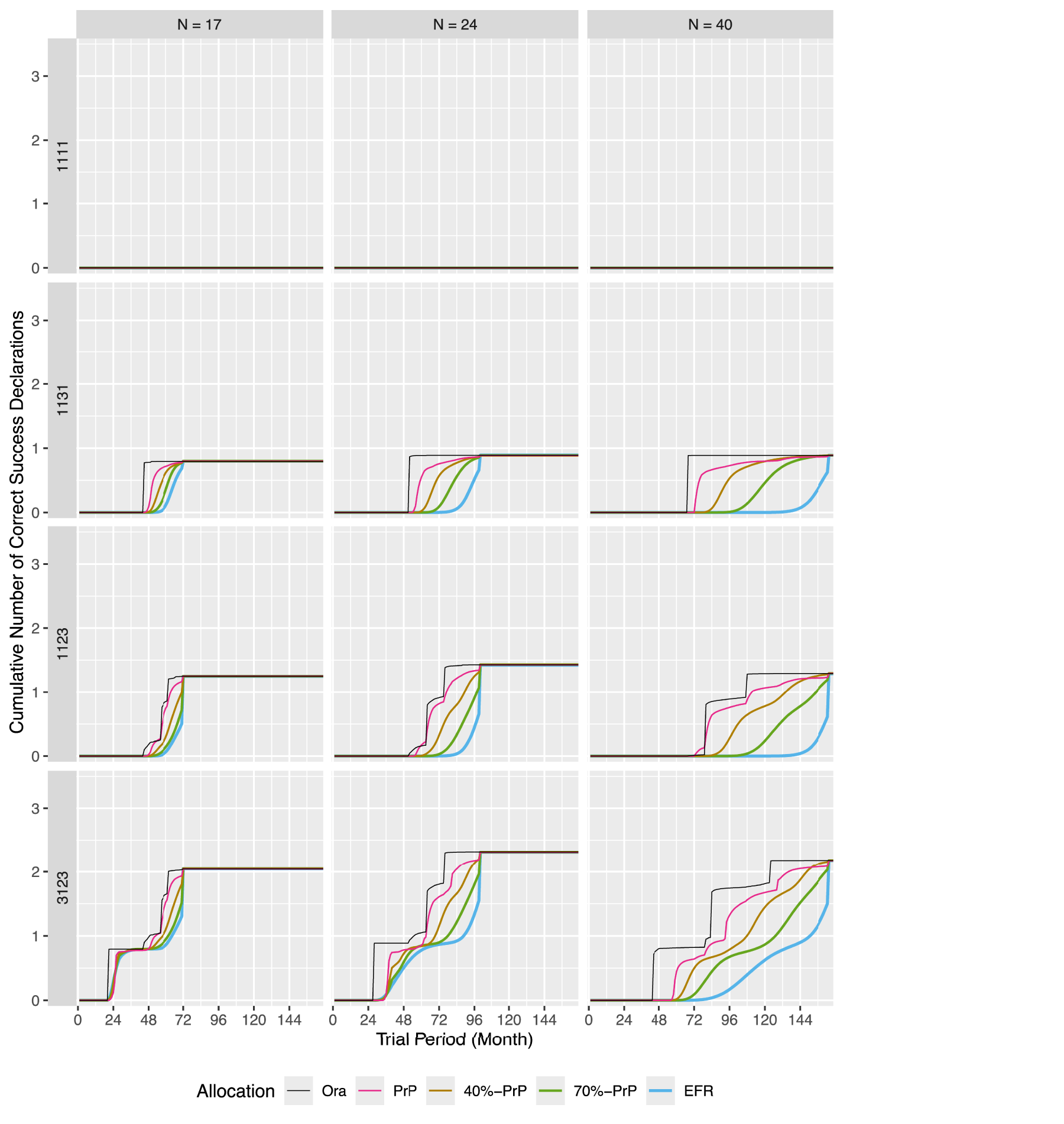

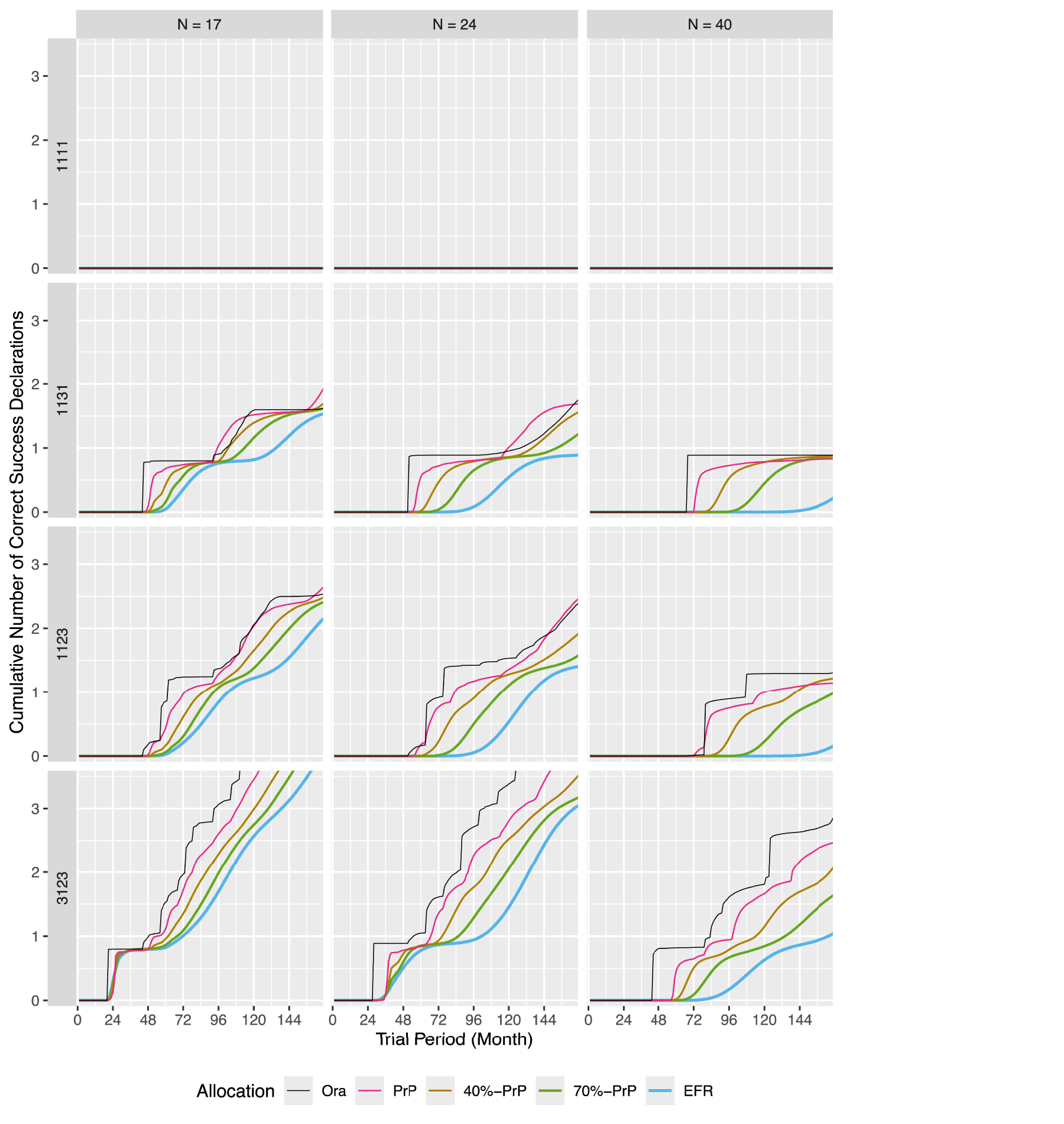

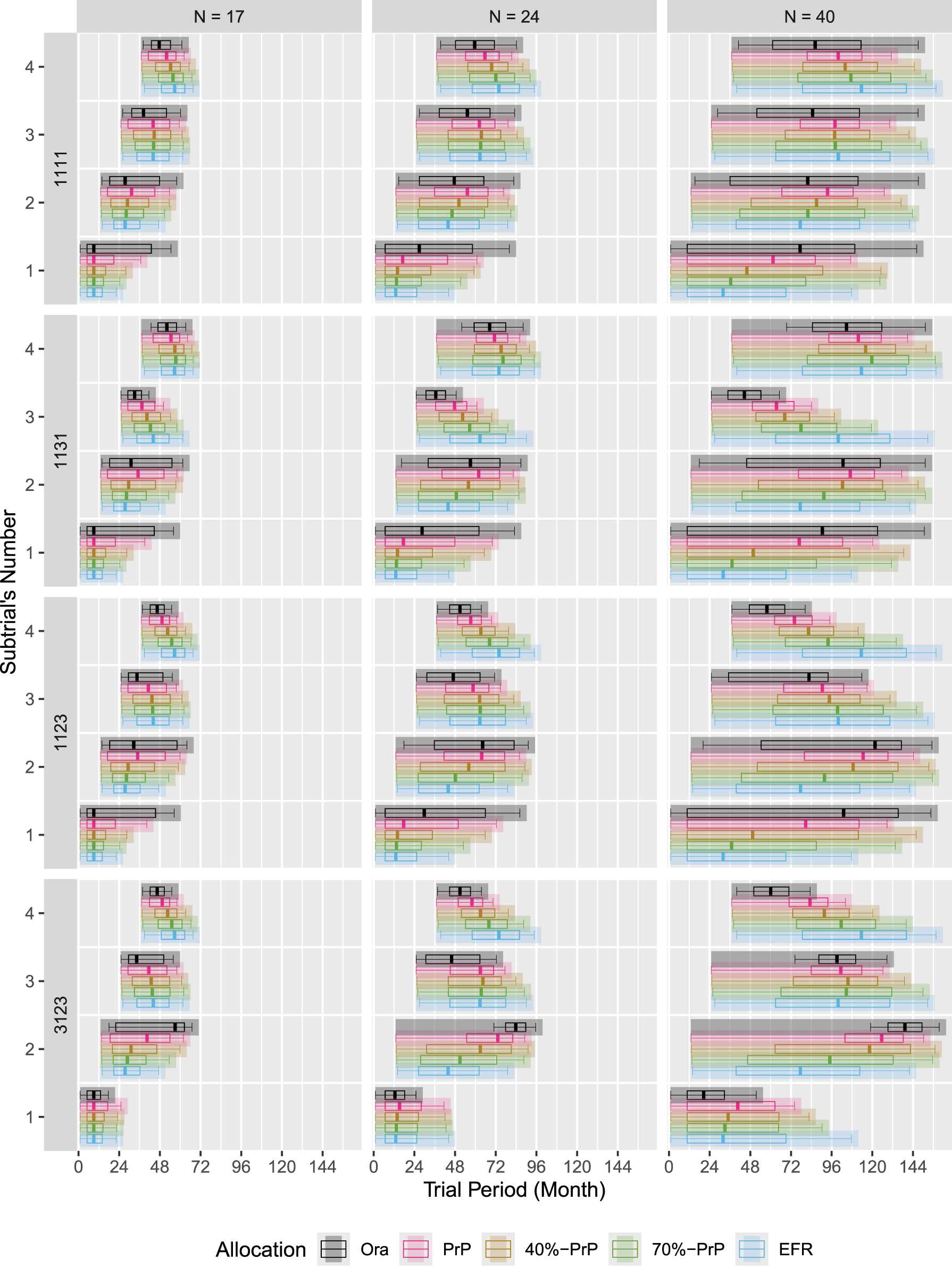

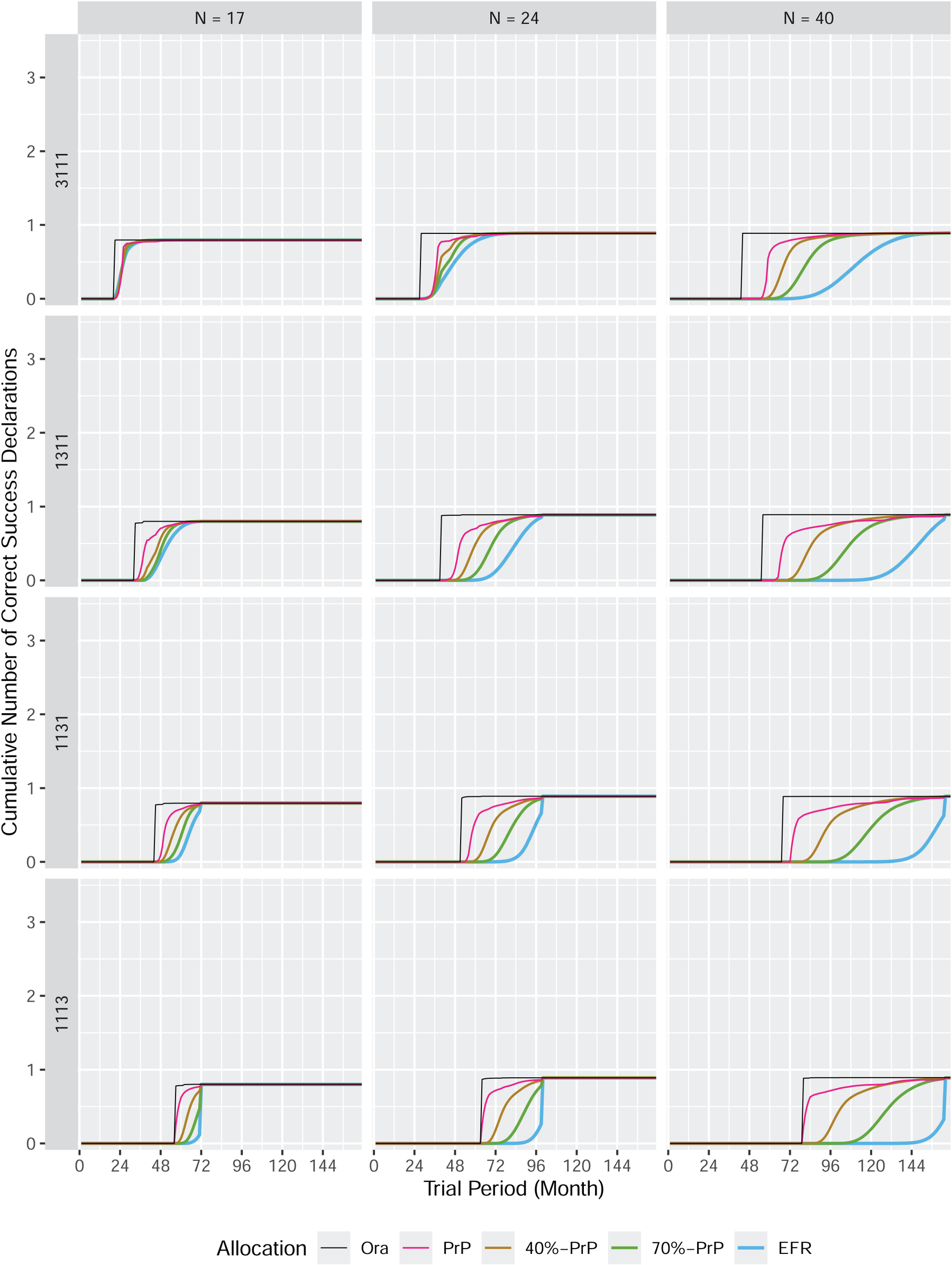

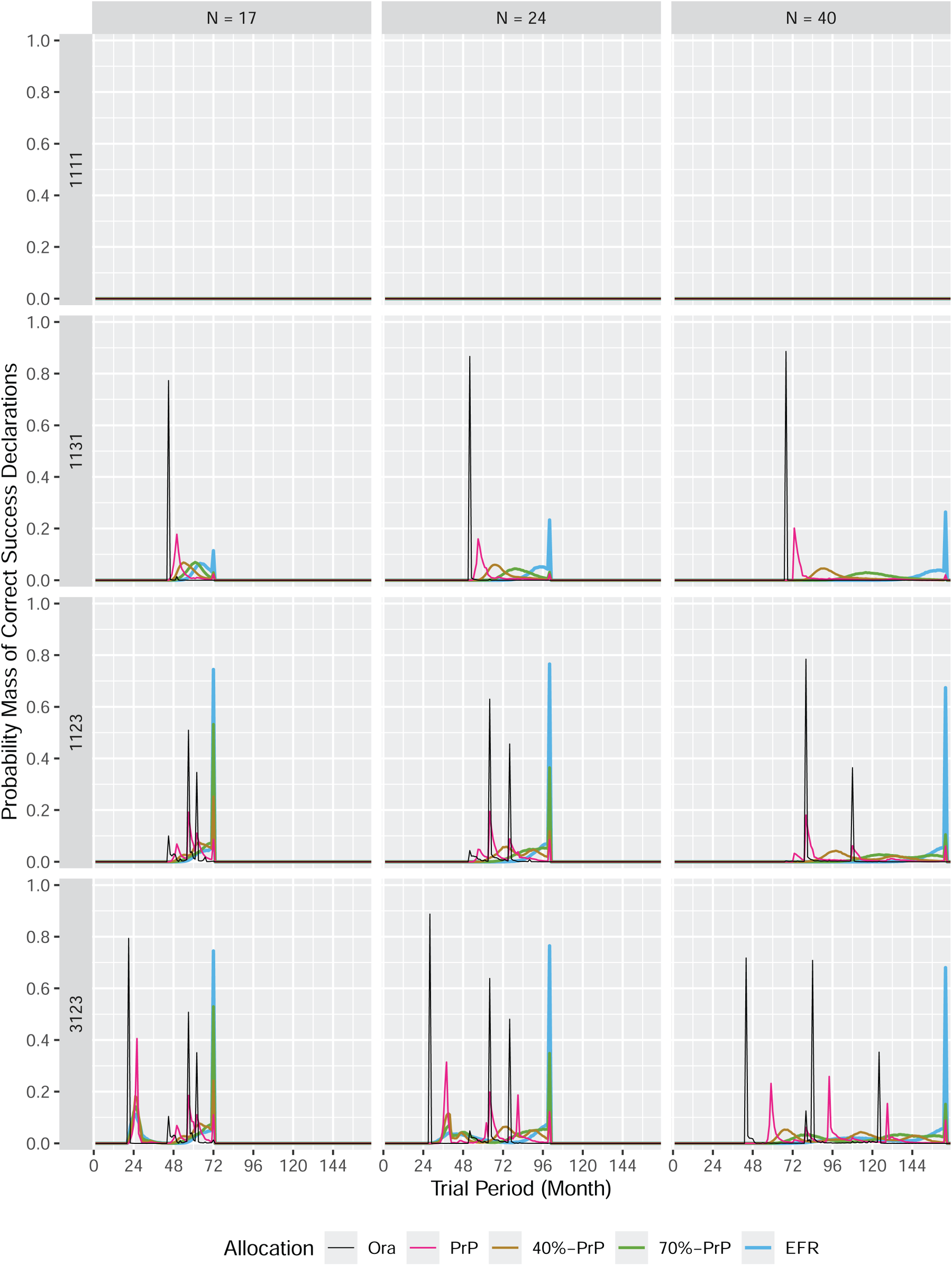

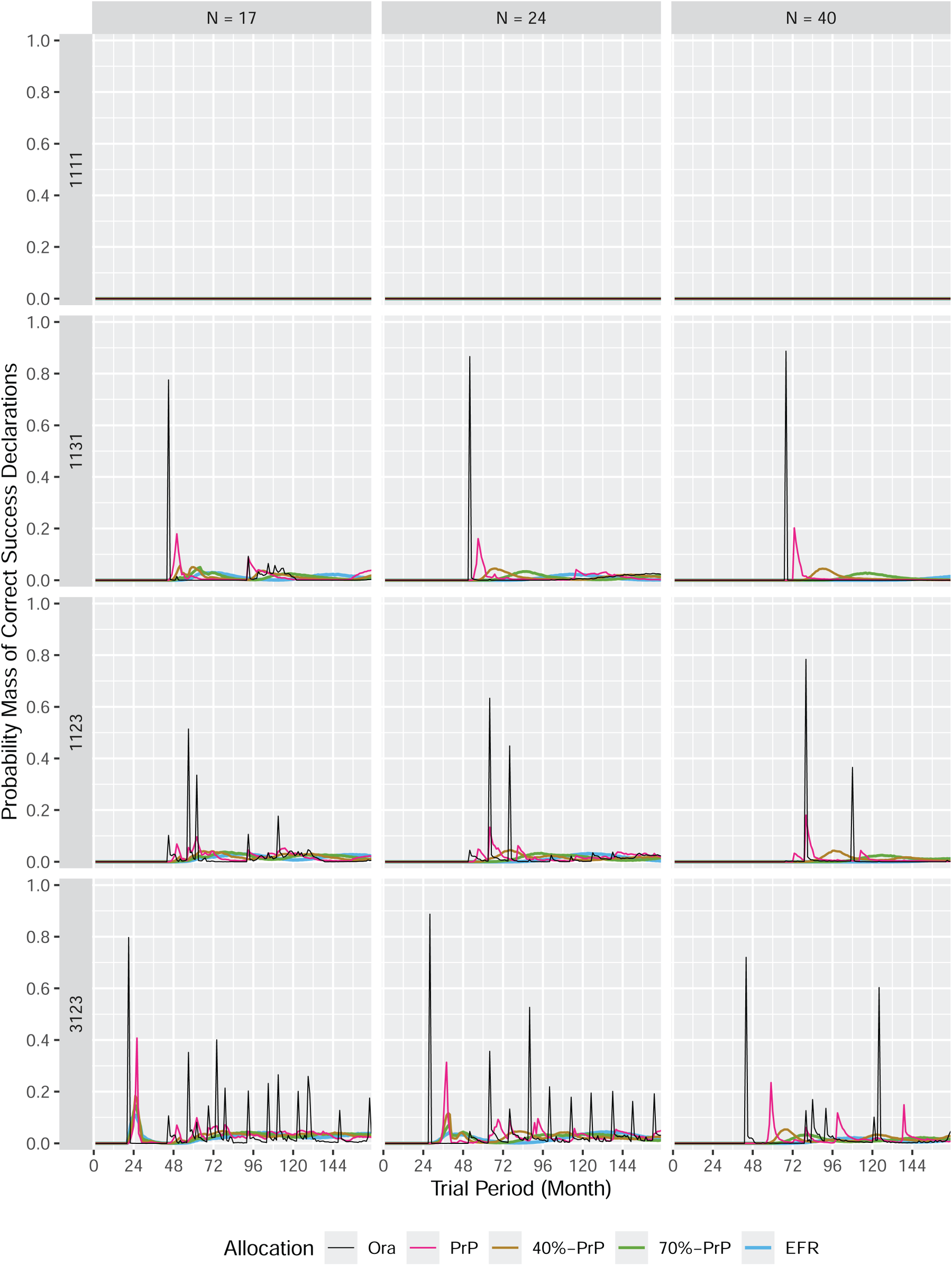

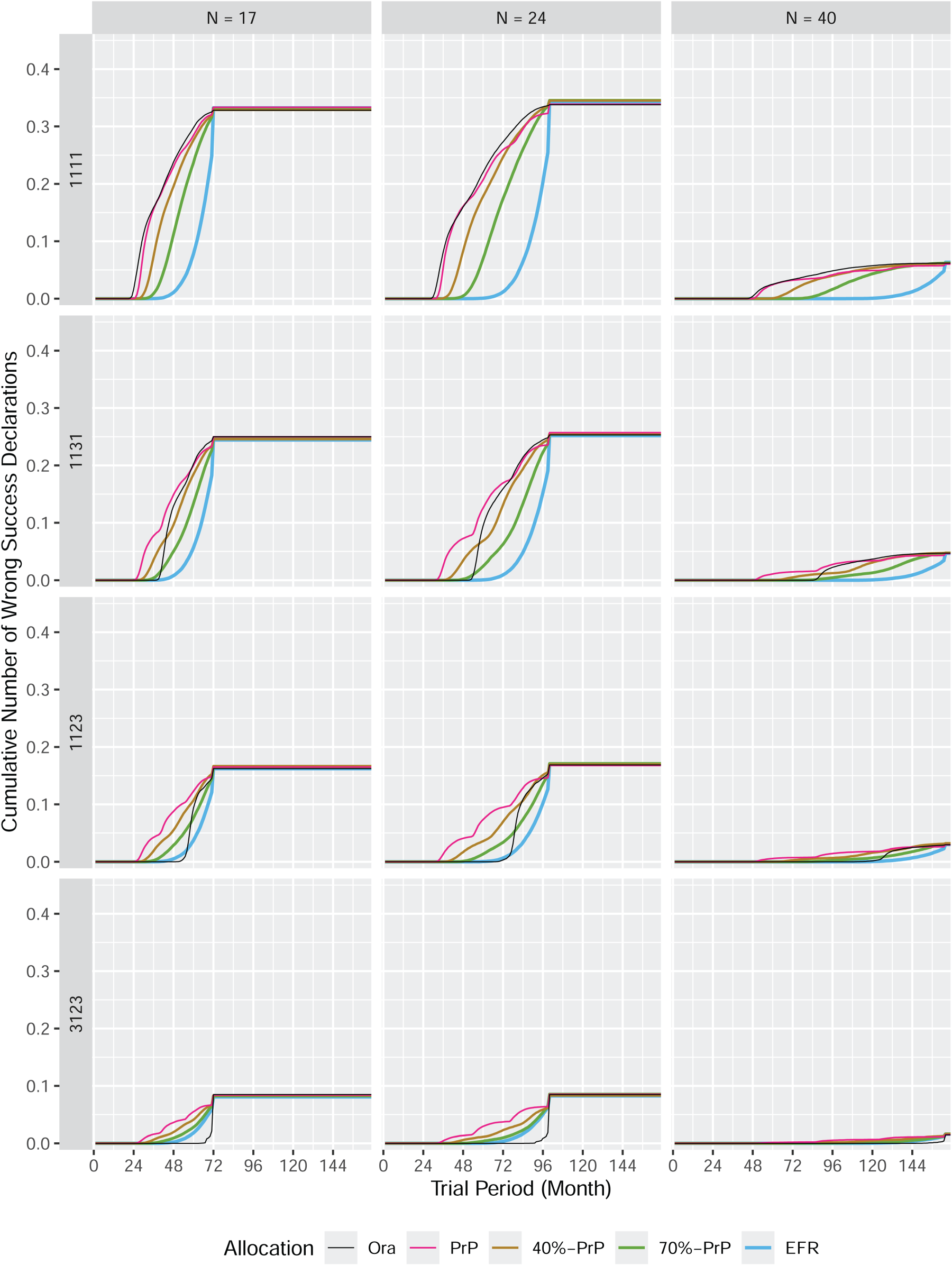

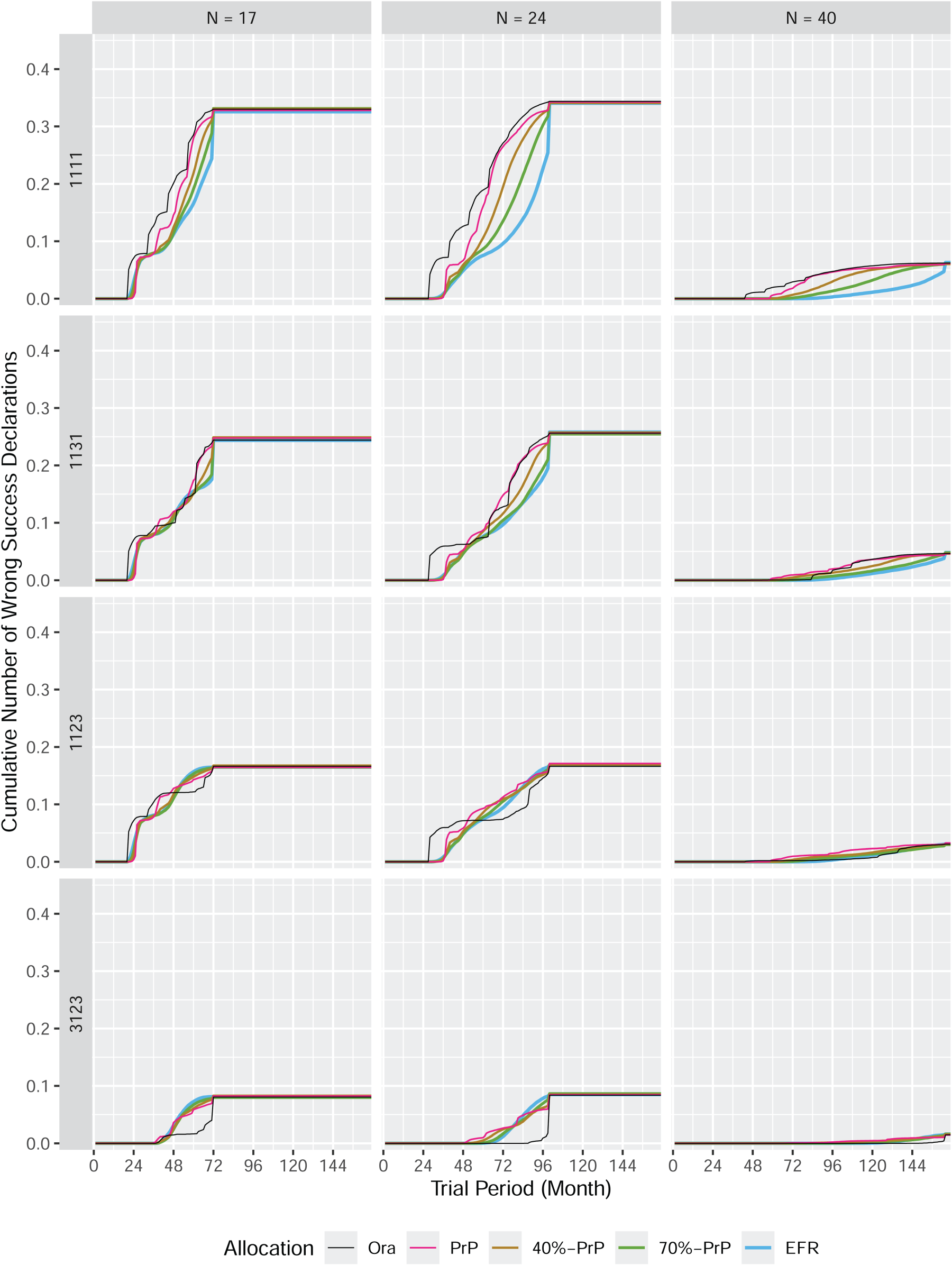

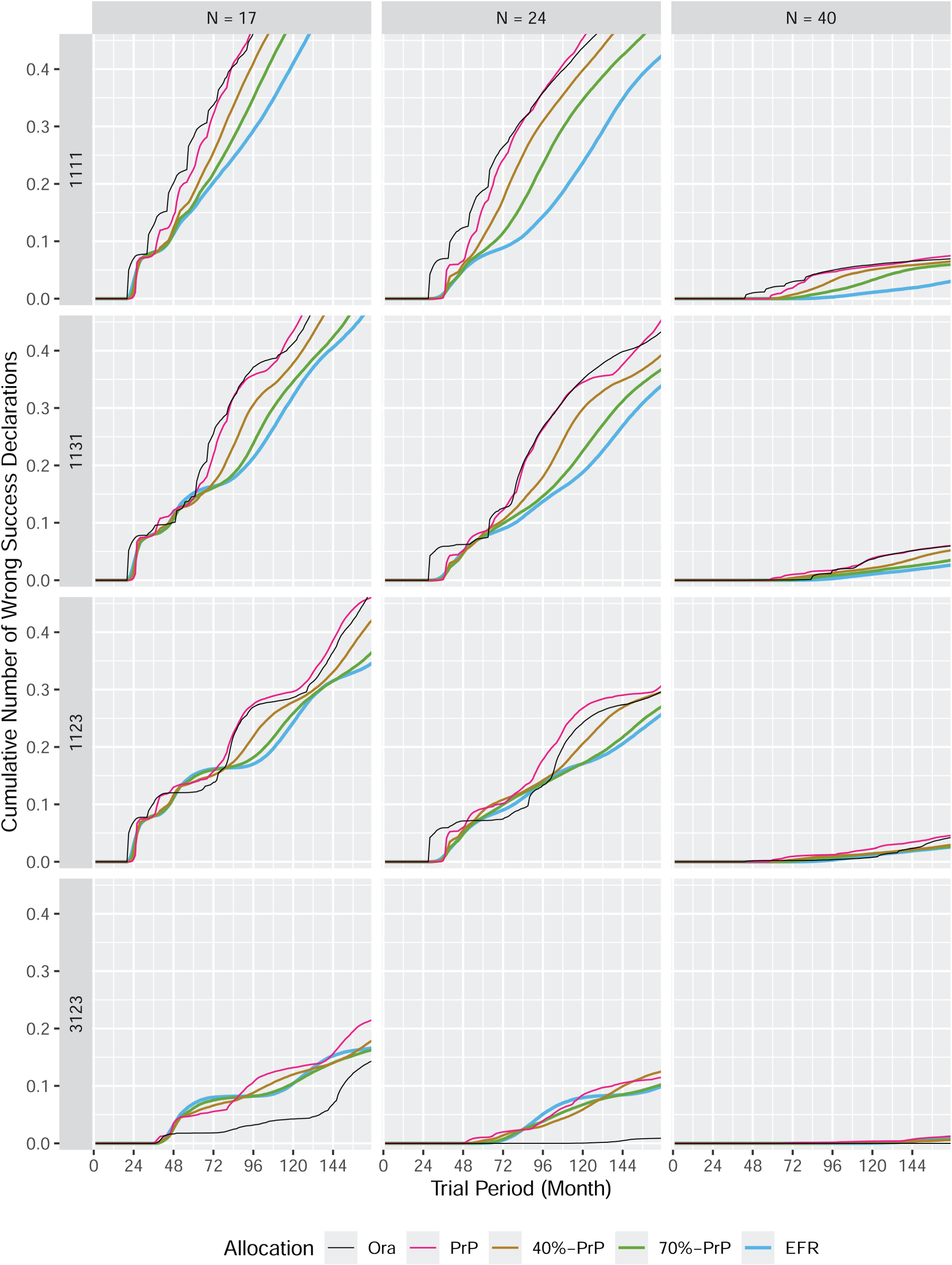

The figures in this section present the expected cumulative number of correct success declarations (vertical axis) as a function of time (horizontal axis) for the different response rate vectors (rows) and sample sizes (columns). There is a separate figure for each subtrial entry pattern (umbrella, platform, and perpetual). The different colors in these figures represent the different allocation procedures:

A correct success declaration is a success declaration for a subtrial with an underlying response rate

The master protocol trials with umbrella (called umbrella trial in what follows) and platform (called platform trial in what follows) subtrial-entry pattern include exactly four subtrials in our simulations. These master protocol trials end after

For the umbrella and platform trials, the overall expected cumulative number of correct success declarations at the end of the trial (i.e. the plateau) is predetermined by the scenario, and can be obtained from Table 1. This table provides the probability to declare success for a subtrial as a function of sample size (rows) and true response rate (columns). Therefore, in an example when just one subtrial has a response rate
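Because every subtrial in an umbrella or platform trial eventually completes its planned sample size, the plateau is the sum, over the subtrials whose true response rate exceeds the ineffective rate, of their unconditional success probabilities; the same sum over the null subtrials gives the expected number of wrong success declarations. The sketch assumes a count-cutoff rule (at least `r` responses out of `n`), as suggested by the pairs of sample sizes and cut-off values in Table 1. The names and the response rates in the check are hypothetical.

```python
from math import comb


def success_probability(n, r, p):
    """Unconditional P(at least r responses among n) when the true response rate is p."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(r, n + 1))


def expected_declarations(rates, n, r, p_null):
    """Expected numbers of (correct, wrong) success declarations once all subtrials
    have completed; subtrials with rate <= p_null are treated as null."""
    correct = sum(success_probability(n, r, p) for p in rates if p > p_null)
    wrong = sum(success_probability(n, r, p) for p in rates if p <= p_null)
    return correct, wrong
```

Allocation procedures cannot change these totals in umbrella and platform trials; they only change how quickly the totals are reached.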

The cumulative number of correct success declarations in an umbrella trial.

The cumulative number of correct success declarations in a platform trial.

For master protocol trials with a perpetual subtrial-entry pattern (called perpetual trials), the number of subtrials is not limited to four. Rather, there will always be at most four subtrials open for enrollment for any participant

The cumulative number of correct success declarations in a perpetual trial.

Figure 2 summarizes the results for umbrella trials. Since the overall expected cumulative number of correct success declarations is predetermined and does not require simulations, the information displayed lies in the time course, that is, in the trajectory by which this number is reached.

Not surprisingly, the slowest procedure is

In contrast, the

In the scenario 1123 (third row), the

Whatever the scenario, the

Figure 2 shows that the procedure based on predictive probabilities (

The randomized predictive probability procedures (

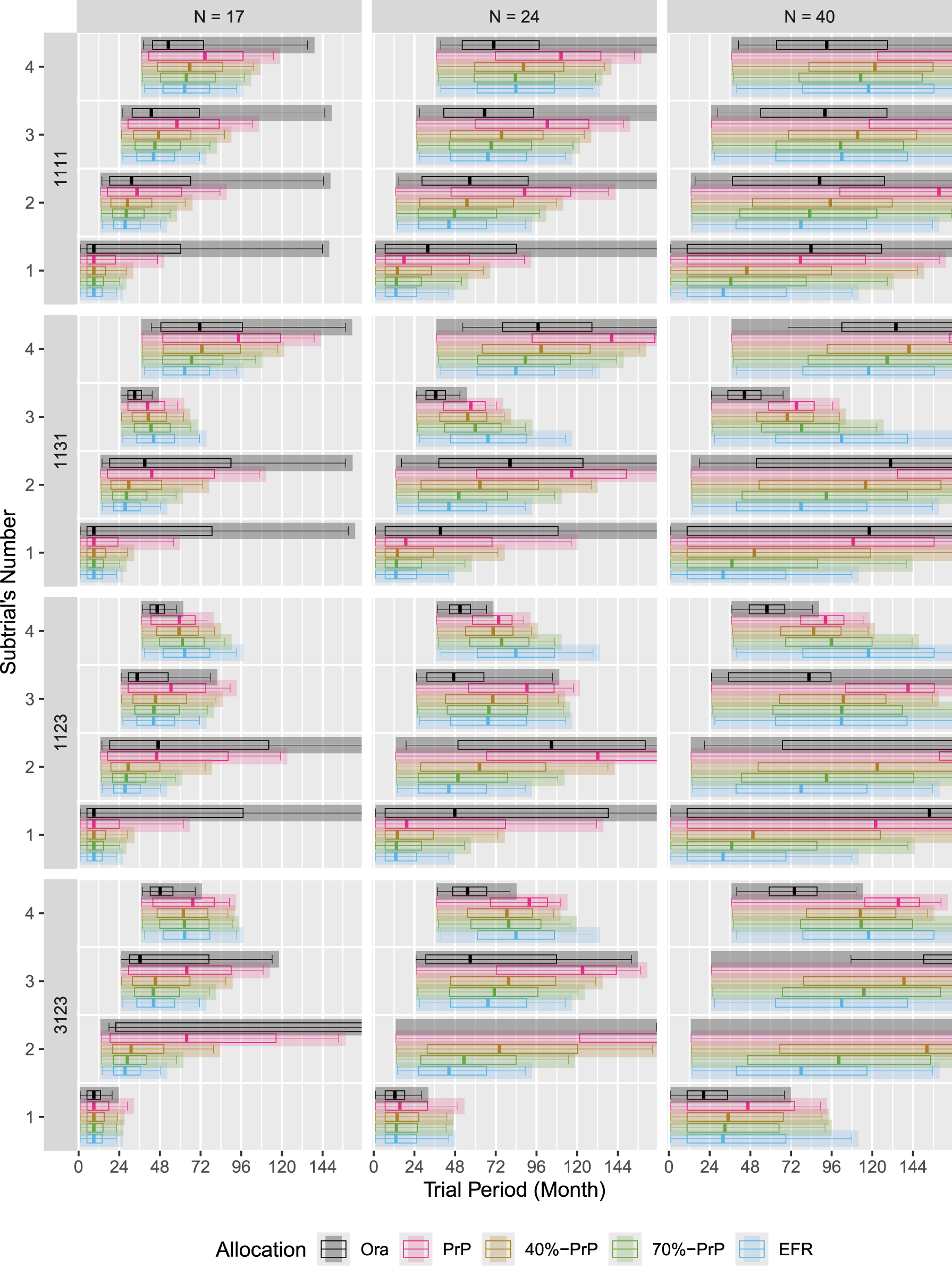

Platform trials

In principle, the situation is the same for platform trials, see Figure 3 for the cumulative distribution and Figure 10 in Appendix B for the probability mass. The difference from the umbrella trial is a smaller “frame” (the difference between the curves for the

In a platform trial, the

How the performance of the

Note that the results would change if the entry order of the subtrials were changed. In a scenario like 3111, one would probably see less difference between an umbrella and a platform trial. The difference between the two entry patterns becomes more pronounced the later the good subtrial enters the trial: for 3111 the two patterns are very similar, whereas for 1113 the difference is most visible, see Figure 8 in Appendix B.

Perpetual trials

The situation is different for master protocols with perpetual entry pattern, called perpetual trial, as shown in Figure 4 with the cumulative distribution and Figure 11 in Appendix B with the probability mass. The total number of correct success declarations within the first

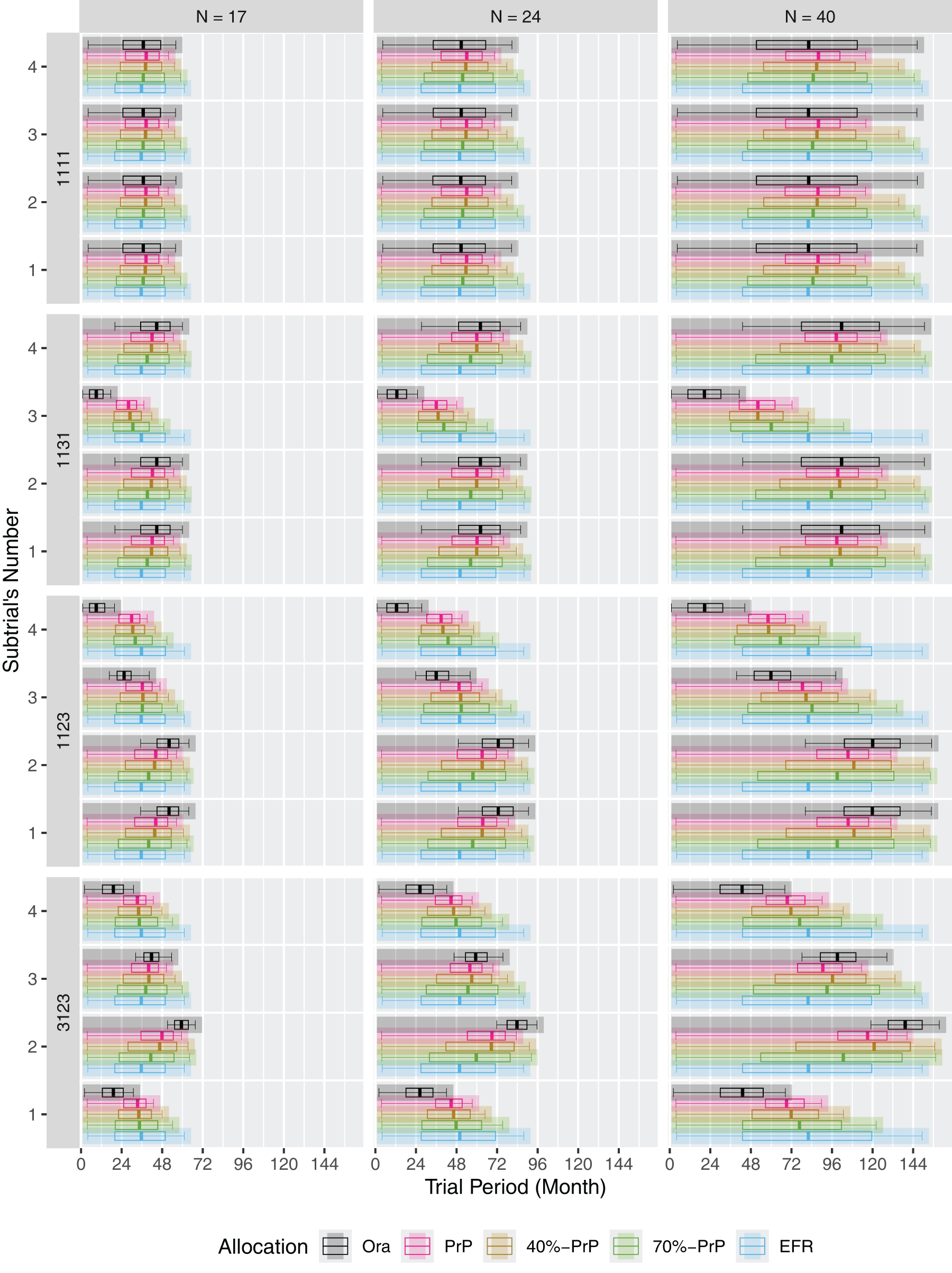

Subtrial duration and enrollment anatomy

The figures in this section present the expected enrollment to subtrials (vertical axis) under each allocation procedure as a function of time (horizontal axis). Each plot of the figure is determined by a response rate vector (rows) and sample size (columns). Within each plot of the figure, there is a row for each subtrial (the top row corresponds to subtrial

The shaded bar represents the expected duration when the corresponding subtrial is in the trial. The bar starts at the expected time of opening for enrollment and ends at the expected time of final analysis. The starting time is predetermined in our simulation results: for umbrella trials, all subtrials are open from period 1, for the platform and perpetual trials, subtrial

The boxplots exhibit the anatomy of enrollment of participants and their allocation to each subtrial. The left and right whiskers of the boxplots indicate the expected time when the first and the last participant are allocated to the respective subtrial, and the box itself represents the inter-quartile range and the median of the expected time when 25%, 50%, or 75% of the sample size was enrolled to that subtrial.
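Assuming one participant enrolled per period, and reading "x% of the sample size enrolled" as the period in which the ceil(x·n)-th participant was allocated to the subtrial (our interpretation), the per-run milestones behind each boxplot could be extracted as follows; averaging them over simulation runs gives the expected times shown in the figures. The function name is hypothetical.

```python
import math


def enrollment_milestones(allocations, subtrial, sample_size, fractions=(0.25, 0.5, 0.75)):
    """allocations[t - 1] is the subtrial that received the participant enrolled in period t.

    Returns (first period, {fraction: period}, last period) for the given subtrial."""
    periods = [t for t, s in enumerate(allocations, start=1) if s == subtrial]
    if len(periods) < sample_size:
        raise ValueError("subtrial has not completed enrollment")
    first, last = periods[0], periods[sample_size - 1]
    quantiles = {f: periods[math.ceil(f * sample_size) - 1] for f in fractions}
    return first, quantiles, last
```

The first and last periods correspond to the whiskers and the three fractions to the box and its median line.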

Umbrella trials

Figure 5 provides deeper insight into how the allocation procedures compare for umbrella trials. Within each scenario (defined by a response rate vector and a sample size), there is no difference in subtrial duration when using the

The expected subtrial duration in an umbrella trial.

As an example, take the second row, where subtrial 3 has a response rate

Similar findings apply to the third and fourth rows. In the third row, subtrial

In general, the

The

For example, in the second row, both procedures clearly favor subtrial

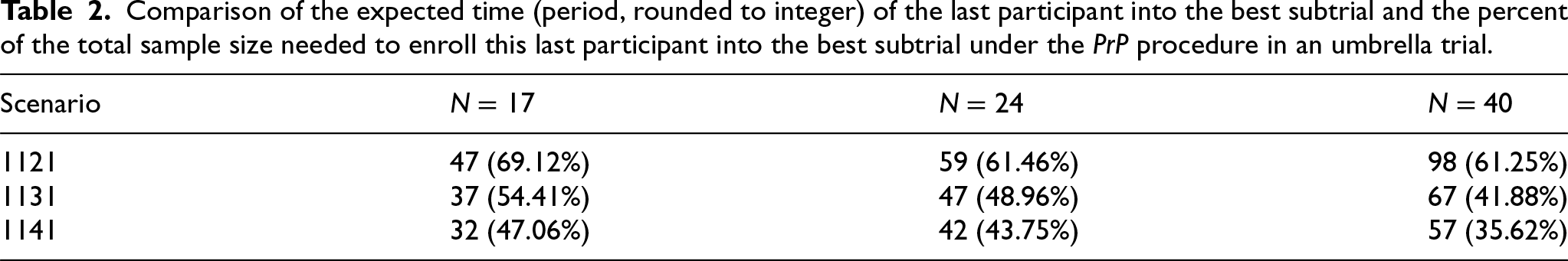

Table 2 shows that the performance of the

Comparison of the expected time (period, rounded to integer) of the last participant into the best subtrial and the percent of the total sample size needed to enroll this last participant into the best subtrial under the

Note that the corresponding values for

We can also see that the behavior of the randomized

The situation is more involved for platform trials with staggered trial entry, as presented in Figure 6. Under

The expected subtrial duration in a platform trial.

In contrast, the

One can explain this behavior of the

The

Figure 7 shows the expected subtrial durations for a perpetual trial. Only the first four subtrials to enter are displayed. In principle, we see similar patterns as for platform trials under the different allocation procedures. However, the expected subtrial duration for all the subtrials is considerably longer than that for the subtrials in a platform trial. This is because a subtrial that completes enrollment is replaced in a perpetual trial, increasing the competition for new participants. As a result, null subtrials tend to enroll more slowly (compared with a platform trial) and remain in the master protocol trial for longer.

The expected subtrial duration in a perpetual trial.

The cumulative number of correct success declarations in a platform trial: variants of scenario 1131.

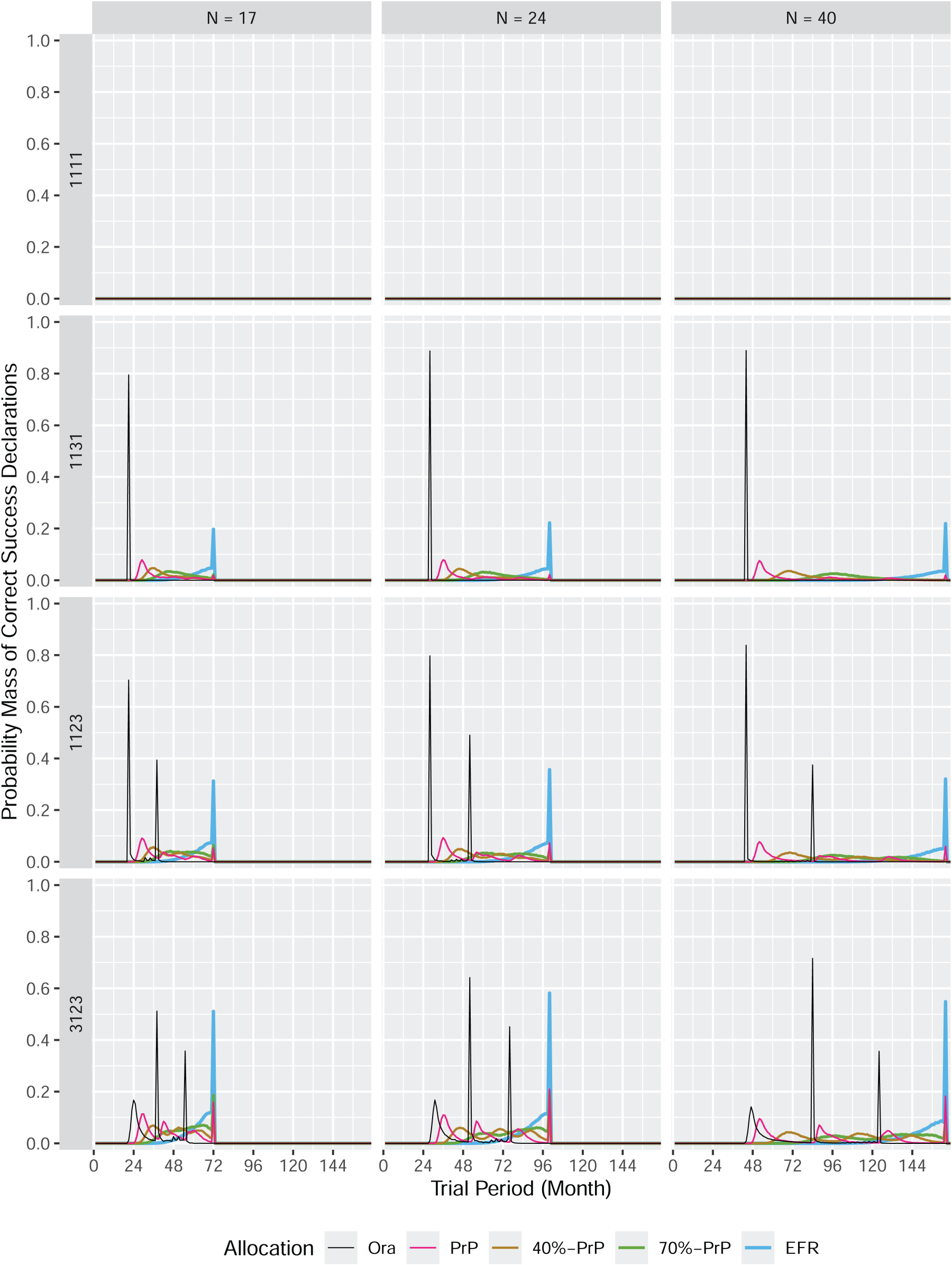

The probability mass of correct success declarations in an umbrella trial.

The probability mass of correct success declarations in a platform trial.

The probability mass of correct success declarations in a perpetual trial.

In subsections 4.1 and 4.2 we have discussed that the

This can be best explained by looking at the number of wrong success declarations as displayed in Figures 12–14 in Appendix B. These figures display similar information as those discussed in subsection 4.1, except that wrong success declarations are presented instead of correct success declarations. A wrong success declaration is a success declaration for a null subtrial (i.e. with response rate

The cumulative number of wrong success declarations in an umbrella trial.

The cumulative number of wrong success declarations in a platform trial.

The cumulative number of wrong success declarations in a perpetual trial.

As an example, take the first row of Figure 12 corresponding to the global null scenario 1111. All four subtrials are null, because their true response rate is

One could argue that this is not an issue in the global null scenario 1111, because it does not matter which of the four null subtrials finishes first, or whether they all finish around the same time. However, when looking at the second row of Figure 12 we observe the same behavior of the

In a scenario with both good and null subtrials, it is not a desired feature to complete a null subtrial first. This is the motivation to define randomized procedures, and one can see from the three figures that these procedures indeed reduce this undesired feature.

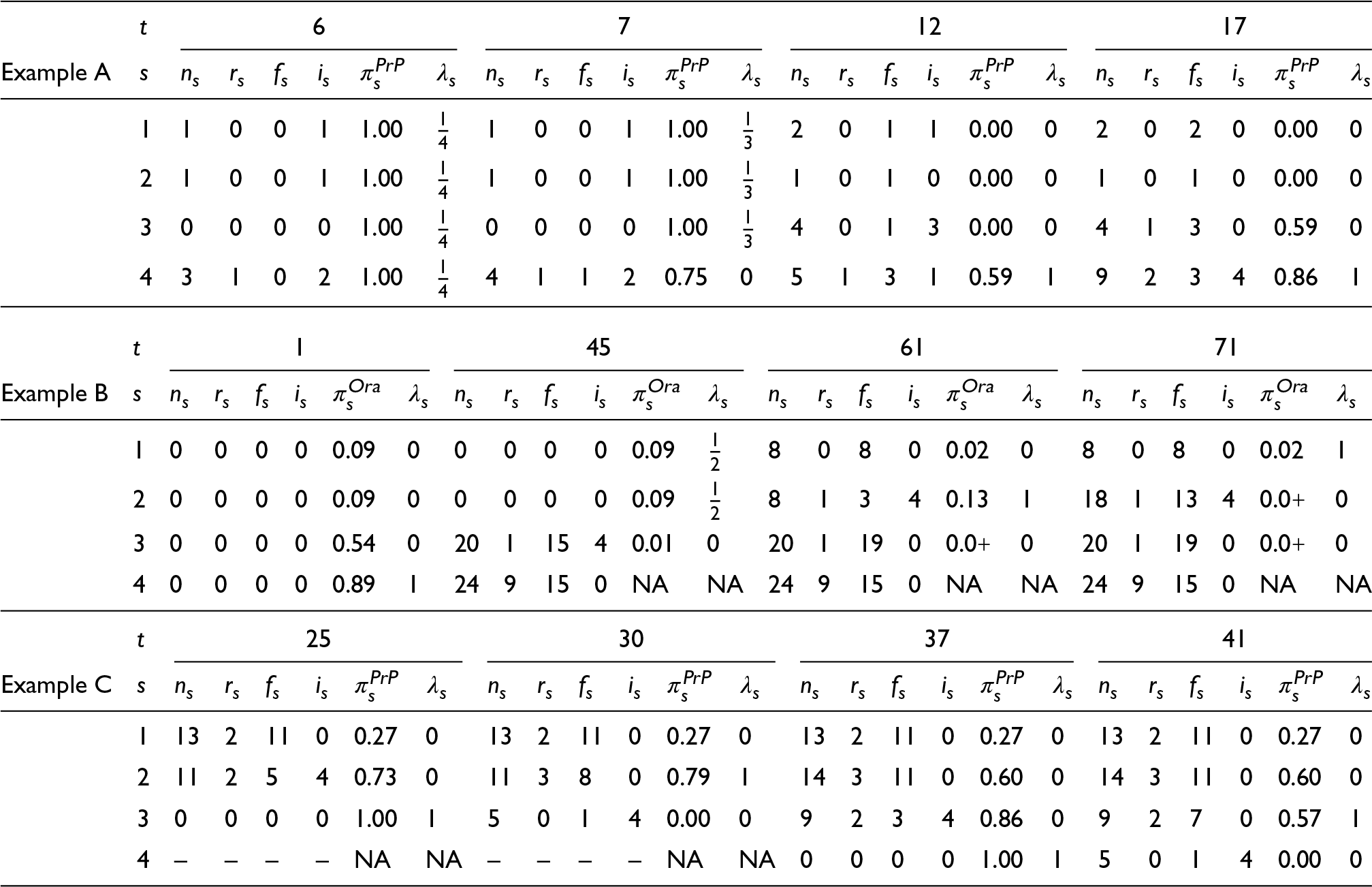

In this section, we present

As before, enrollment to the trial is simulated at a rate of one new participant per period, and each participant's endpoint is observed four periods after enrollment. In the simulation, participants are enrolled at the beginning of a period, and endpoints are observed and the analysis is done at the end of the period, which sets up the allocation/randomization for the next period (Table 3).
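These timing conventions can be sketched as a small discrete-time loop: a participant enrolled at the beginning of period t has the endpoint revealed at the end of period t + 4, so it first affects allocation at the start of period t + 5 (as in Example A, where the first participant's endpoint is observed in period 5 and used from period 6). The sketch assumes an umbrella pattern, uses equal randomization as a placeholder allocation rule, and draws each outcome at enrollment while hiding it until its observation period. `SubtrialState`, `equal_randomization` and `simulate` are hypothetical names, not the API of the "Single-arm" simulator.

```python
import random
from dataclasses import dataclass

DELAY = 4  # periods from enrollment to endpoint observation, as in the examples


@dataclass
class SubtrialState:
    allocated: int = 0
    responses: int = 0
    non_responses: int = 0

    @property
    def pending(self) -> int:
        # allocated participants whose endpoint has not been observed yet
        return self.allocated - self.responses - self.non_responses


def equal_randomization(states, open_ids, rng):
    # placeholder rule: pick one open subtrial uniformly at random
    return rng.choice(open_ids)


def simulate(rates, sample_size, periods, allocate, seed=0):
    """Umbrella pattern: all subtrials open from period 1, one participant per period.

    Returns one snapshot per period, taken at its start:
    {subtrial: (allocated, responses, non_responses, pending)}."""
    rng = random.Random(seed)
    states = {s: SubtrialState() for s in rates}
    in_flight = []  # (observation period, subtrial, is_response)
    snapshots = []
    for t in range(1, periods + 1):
        # start of period t: the state available to the allocation rule
        snapshots.append({s: (st.allocated, st.responses, st.non_responses, st.pending)
                          for s, st in states.items()})
        open_ids = [s for s, st in states.items() if st.allocated < sample_size]
        if open_ids:
            s = allocate(states, open_ids, rng)
            states[s].allocated += 1
            # outcome is drawn now but only revealed at the end of period t + DELAY
            in_flight.append((t + DELAY, s, rng.random() < rates[s]))
        # end of period t: reveal the endpoints scheduled for this period
        for obs_period, s, is_response in in_flight:
            if obs_period == t:
                if is_response:
                    states[s].responses += 1
                else:
                    states[s].non_responses += 1
        in_flight = [x for x in in_flight if x[0] > t]
    return snapshots
```

Each snapshot mirrors the per-subtrial columns of Table 3 (allocated, responses, non-responses, incomplete observations); a response-adaptive rule would replace `equal_randomization` and read the observed counts from `states`.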

Example A: An umbrella trial using PrP

In the umbrella trial all subtrials start at the outset. Initially, there is no information on any subtrial, so each is assigned a predictive probability of 1, with the result that the initial allocation is equal randomization across the four subtrials. The first participant’s endpoint is observed during period 5 and included in the update for the start of period 6. Up to this point, the random allocation sequence was subtrial 4, subtrial 4, subtrial 2, subtrial 4, subtrial 1. By chance, one participant each has been allocated to subtrials 1 and 2, and three participants to subtrial 4. The observation of the first participant on subtrial 4 is a response, and the state of the trial is as follows: subtrials 1, 2 and 3 have a predictive probability of success of 1 by virtue of having no observations, and subtrial 4 has a predictive probability of success of 1 because 1 response out of 1 observation has been observed.

So allocation in period 6 is equal randomization across all subtrials, and by chance participant 6 is allocated to subtrial 4. The second participant enrolled (also by chance on subtrial 4) is observed and is a non-response. Subtrials 1 through 3 still have no observations and so are still given a predictive probability of 1, but subtrial 4’s 1 response and 1 non-response give it a predictive probability of 0.75. The PrP procedure allocates only to the subtrial(s) with the highest predictive probability, so allocation in period 7 is equal randomization across subtrials 1, 2 and 3 (Table 3).

Allocations and observations data for example individual simulations of response rates scenario 1123, at the start of each period, for each subtrial: the number of allocated participants; the number of observed responses; the number of observed non-responses; the number of incomplete observations (unavailable due to endpoint delay); the probability of success declaration under the procedure (rounded, with a separate symbol marking strictly positive values that round to zero); and the resulting randomization probability.

Allocations and observations data for example individual simulations of response rates scenario 1123, at the start of period

Over the next 5 periods, non-responses come in for every subtrial, and by the start of period 12 every subtrial has at least one observation. The first participants on subtrials 1, 2 and 3 are all non-responses, so allocation becomes restricted to subtrial 4, with its 1 response and 3 non-responses. In period 16, the last incomplete participant on subtrial 3 is observed and is a response. For one period there is equal randomization between subtrials 3 and 4, both having 1 response and 3 non-responses. The participant arriving in period 17 is allocated to subtrial 4, and the observation of the participant enrolled in period 12 adds a response to subtrial 4.

From this point on, allocation is solely to subtrial 4 until it is fully enrolled in period 31. At this point it already has 3 responses; 5 responses are sufficient to guarantee success at the final analysis, and in this instance that occurs in period 22 (suggesting that early subtrial stopping for success could be useful in this setting). From then on, subtrial 4 has a predictive probability of 1.
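The statement that 5 responses guarantee success can be made concrete with the probability that a subtrial reaches a success threshold, given the responses already observed, the number of participants whose endpoint is still unknown (pending or not yet enrolled), and a response rate. This is a hedged sketch, not the frequentist predictive probability of Heimann et al. used in the paper: it assumes a subtrial size of 24 and that success is declared if and only if at least 5 responses are observed, and it takes the response rate as given (for example, a known true rate). `prob_reach_threshold` is a hypothetical name.

```python
from math import comb

SUBTRIAL_SIZE = 24     # assumed sample size per subtrial
SUCCESS_THRESHOLD = 5  # assumed: success iff at least 5 responses


def prob_reach_threshold(responses: int, unresolved: int, rate: float,
                         threshold: int = SUCCESS_THRESHOLD) -> float:
    """P(responses + Binomial(unresolved, rate) >= threshold)."""
    needed = threshold - responses
    if needed <= 0:
        return 1.0  # success already guaranteed
    if needed > unresolved:
        return 0.0  # success can no longer be reached
    return sum(comb(unresolved, j) * rate**j * (1.0 - rate)**(unresolved - j)
               for j in range(needed, unresolved + 1))


# Once 5 responses are observed, the probability is 1 whatever the rate.
print(prob_reach_threshold(responses=5, unresolved=7, rate=0.3))  # 1.0
# A subtrial with no data and a response rate of 0.1.
print(round(prob_reach_threshold(responses=0, unresolved=SUBTRIAL_SIZE, rate=0.1), 3))  # 0.085
```

Under these assumptions, fewer unresolved participants shrink the probability, which is how a subtrial without responses but with many participants still to come can overtake one with a single response and few remaining.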

Notice how the

The umbrella trial using the

Hence subtrial 4 receives all allocations from the outset. Enrollment to subtrial 4 completes in period 24, with the first 24 participants in the trial allocated to it. What happens next is less straightforward, though. Initially, of course, subtrial 3 receives the allocations, as the subtrial with the next highest response rate. However, only one response is observed among the first 16 participants on subtrial 3, and in period 45, even knowing that the true response rate for the remaining 8 participants on subtrial 3 (4 of whom have already been allocated to subtrial 3 but not yet observed) is

Allocation now moves to subtrials 1 and 2. As the observations of the participants already allocated to subtrial 3 arrive, they are all non-responses. Not surprisingly, since the simulated response rate is only 0.1, the observations for participants on subtrials 1 and 2 are also non-responses until period 61, when a response is observed on subtrial 2.

All allocation then goes to subtrial 2 until the accumulating non-responses, together with the shrinking number of possible future observations, mean that at the start of period 71 subtrial 1 takes over as the subtrial with the highest predictive probability, despite having no responses.

Allocation then goes to subtrial 1 for the next 10 periods, until in period 81 it too has a lower conditional probability than subtrial 3. Allocation then switches back to subtrial 3, which completes enrollment of 24 participants with one more response, but not enough for the subtrial to declare success. The trial finishes by allocating to subtrials 1 and 2, whose remaining observations are all non-responses.

Example C: A platform trial using PrP

The last individual simulation we present is in the same response scenario (1123), using

In this setting, only subtrial 1 is present at the outset, so all allocation is to subtrial 1 until subtrial 2 joins the trial at the start of period 13. Until it has observation data, subtrial 2 is given a predictive probability of 1 and so is allocated the next 4 participants. In period 17, the first participant on subtrial 2 is observed and is a non-response; meanwhile, 2 responses and 10 non-responses have been observed on subtrial 1. Allocation switches to subtrial 1 for 1 period, but the second observation on subtrial 2 is a response, and allocation returns to subtrial 2, where it remains until period 25, when subtrial 3 enters.
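In the platform pattern of Example C, subtrials enter at the start of periods 1, 13, 25 and 37, and a newly entered subtrial without observation data is given a predictive probability of 1, which places it among the leaders. A minimal sketch of that bookkeeping; the entry periods come from the example, while the function names, the dictionary representation and the predictive probabilities used in the usage line are hypothetical.

```python
from typing import Optional

# Entry period of each subtrial in Example C (subtrial -> period it joins).
ENTRY_PERIOD: dict[int, int] = {1: 1, 2: 13, 3: 25, 4: 37}


def open_subtrials(period: int, entry: dict[int, int] = ENTRY_PERIOD,
                   completed: frozenset[int] = frozenset()) -> list[int]:
    """Subtrials that have entered by the start of `period` and are still enrolling."""
    return [k for k, start in sorted(entry.items())
            if start <= period and k not in completed]


def current_pred_probs(period: int, estimates: dict[int, Optional[float]],
                       completed: frozenset[int] = frozenset()) -> dict[int, float]:
    """Predictive probabilities for open subtrials; no observation data (None) maps to 1.0."""
    probs = {}
    for k in open_subtrials(period, completed=completed):
        value = estimates.get(k)
        probs[k] = 1.0 if value is None else value
    return probs


# Start of period 25: subtrial 3 has just entered (values for subtrials 1 and 2 are made up).
print(current_pred_probs(25, {1: 0.2, 2: 0.6, 3: None}))  # {1: 0.2, 2: 0.6, 3: 1.0}
```

Feeding these probabilities to a rule that randomizes equally among the subtrial(s) with the highest value sends every participant to subtrial 3 until its first observation arrives, as in the example.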

From period 25, allocation is to subtrial 3, as it has no observation data and is given a predictive probability of 1. In period 30, the first observation on subtrial 3 becomes available and is a non-response, and allocation returns to subtrial 2. By the start of period 33, the first 4 participants on subtrial 3 have been observed, with 2 responses and 2 non-responses, and allocation switches back to subtrial 3 until period 37, when subtrial 4 enters.

Subtrial 4 starts with no observation data and is given a predictive probability of 1. In period 41, the first observation on subtrial 4 becomes available and is a non-response, so allocation switches back to subtrial 3. However, once the first 4 participants on subtrial 4 have been observed, there are 2 responses and 2 non-responses, and allocation returns to subtrial 4, where it remains until subtrial 4 completes enrollment. Allocation then returns to subtrial 3 until it too completes enrollment. Finally, allocation switches back and forth between subtrials 1 and 2 until both complete (and fail to declare success).

Discussion

We hope these accounts of individual simulations make clear how the allocation procedures behave. For trials with designs of some complexity, analyzing individual simulations is crucial for understanding how the adaptations perform in practice (and not just on average), for debugging the simulation code (looking at individual simulations exposed two bugs in our implementation, well after we were satisfied with the overall operating characteristics of the simulations), and for conveying the design to others.

A feature of the

Conclusion

In this paper we have introduced participant allocation and randomization procedures for master protocols that consist of single-arm subtrials. The objective of these procedures is to allocate participants sooner to those subtrials that are more likely to declare success. We have simulated the performance of these procedures under different patterns of subtrial entry to the master protocol, for different sample sizes, and for different scenarios of underlying response rates, and compared their performance to that of two reference procedures: equal fixed randomization across subtrials (which is a realistic alternative for such master protocols), and an allocation procedure that is based on knowledge of the true underlying response rates of the subtrials (which is not implementable in real life but serves as a reference for how soon the subtrials that are most likely to declare success could complete enrollment). To perform the simulations, we have developed an open-source clinical trial designer and simulator “Single-arm.” 21

Our simulations show that the procedure that allocates each participant to the subtrial with the highest predictive probability of current study success can considerably speed up enrollment to the subtrials that are most likely to declare success. The relative performance of this procedure improves with increasing sample size per subtrial and with an increasing response rate in the subtrial whose intervention has the highest response rate, ceteris paribus. It outperforms the randomized procedures that we tested in our simulation study. The

The randomized procedures that were tested are mixtures between the predictive probability procedure and equal randomization. They are not quite as efficient as the

Our simulation study was set up for small sample sizes, driven by one of our motivating examples, a rare disease with a binary endpoint. In such a situation, power does not increase monotonically with sample size when the underlying response rate is fixed; see Chernick and Liu. 30 The benefit of the procedure would be even more pronounced for larger sample sizes or for continuous endpoints. In any case, for binary endpoints the choice of sample size needs careful consideration.
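The non-monotonic power of binary-endpoint designs can be reproduced with a one-sided exact binomial test: as n grows, the critical number of responses occasionally jumps by one, and power drops at that point. The null rate 0.1, alternative rate 0.3 and one-sided level 0.05 below are illustrative assumptions, not the decision rule used in the paper.

```python
from math import comb


def binom_sf(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, j) * p**j * (1.0 - p)**(n - j) for j in range(max(k, 0), n + 1))


def critical_value(n: int, p0: float, alpha: float) -> int:
    """Smallest number of responses c with P(X >= c | p0) <= alpha."""
    for c in range(n + 2):  # c = n + 1 always satisfies the bound (empty sum)
        if binom_sf(c, n, p0) <= alpha:
            return c
    raise AssertionError("unreachable")


def power(n: int, p0: float, p1: float, alpha: float) -> float:
    """Probability of rejecting H0: p = p0 when the true response rate is p1."""
    return binom_sf(critical_value(n, p0, alpha), n, p1)


for n in range(18, 25):
    print(n, critical_value(n, 0.1, 0.05), round(power(n, 0.1, 0.3, 0.05), 3))
```

Going from n = 20 to n = 21, the critical value rises from 5 to 6 responses and power falls from roughly 0.76 to roughly 0.64, even though one more participant is enrolled.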

The procedures considered in our simulation study are based on the predictive probability of declaring success in the final analysis, given the data available when a new participant is to be randomized. Ultimately, it does not matter how this predictive probability is calculated, whether with a frequentist approach (as in this paper) or a Bayesian approach. 31 The type of endpoint does not matter either, and by using a neutral scale such as predictive probabilities one can even combine subtrials with different types of endpoints when applying the procedure. The event whose predictive probability is used (here, current study success) may also be adapted to other objectives of the master protocol trial, for instance, identifying the most efficacious intervention, or an intervention with the highest predicted probability of success of a future trial, of the whole development program, of adoption by the out-of-trial patient population, etc.

The family of allocation procedures offers potential for further investigation and improvement when considering additional metrics. For instance, ideas for allocation procedures from the literature on the multi-armed bandit problem 32 might be worth exploring and could be adapted to fit this particular setting. Furthermore, we have taken a very naïve approach to tie-breaking in

There are several directions in which the proposed allocation procedures or the setting of the simulation study could be extended, but we have kept the paper streamlined to focus on the foundational methodological ideas. There is therefore room for such extensions, either in future research or when employing these procedures in a particular disease context, where they would typically be motivated by practical considerations for the specific disease and would not all be considered at the same time. Such features include: allowing early subtrial stopping for success once the required number of responses is observed; allowing early subtrial stopping for futility (both of which could further accelerate screening for efficacious interventions and might mitigate some of the concerns about response-adaptive allocation); allowing early endpoint observations in a schedule of visits; employing randomization schemes other than the complete randomization we have used, in order to avoid extreme imbalance in participants’ allocations; and developing a distributed, multi-center implementation of the proposed allocation procedures, as master protocol trials for rare diseases will likely be run as multi-center trials.
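The first two extensions can be expressed as a per-subtrial check run after each analysis. A minimal sketch, assuming success is declared once the required number of responses has been observed and, as one possible futility rule (deterministic curtailment, an assumption of this sketch rather than a rule from the paper), that a subtrial stops for futility once the required number can no longer be reached even if every unresolved participant responds. `stopping_status` and `Status` are hypothetical names.

```python
from enum import Enum


class Status(Enum):
    CONTINUE = "continue"
    SUCCESS = "stop for success"
    FUTILITY = "stop for futility"


def stopping_status(responses: int, unresolved: int, required: int) -> Status:
    """Early-stopping check for one subtrial.

    responses: observed responses so far
    unresolved: participants whose endpoint is not yet known (pending or not yet enrolled)
    required: responses needed for a success declaration at the final analysis
    """
    if responses >= required:
        return Status.SUCCESS
    if responses + unresolved < required:  # even all-responses cannot reach the target
        return Status.FUTILITY
    return Status.CONTINUE


print(stopping_status(responses=5, unresolved=9, required=5))  # Status.SUCCESS
```

With 5 required responses, this check would have flagged subtrial 4 of Example A for success in period 22, when its fifth response was observed, instead of enrolling until period 31.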

Footnotes

Acknowledgments

The authors are grateful to Elias L. Meyer for his support and feedback on our coding of the clinical trial designer and simulator based on SIMPLE.

Ethical considerations

Not applicable.

Consent to participate

This article does not contain any studies with human or animal participants.

Consent for publication

Not applicable.

Declaration of conflicting interest

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Tom Parke is an employee and Peter Jacko was an employee of Berry Consultants, a consulting company that specializes in the design, conduct, oversight, and analysis of adaptive and platform clinical trials. Günter Heimann was an employee of Novartis Pharma AG when this work was mostly done and owns stocks of this company.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research originated in and was partly supported by the EU-PEARL project, which received funding from the Innovative Medicines Initiative 2 Joint Undertaking under grant agreement No 853966-2; this Joint Undertaking received support from the European Union’s Horizon 2020 research and innovation programme and EFPIA.

Data availability

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.

Methodology details

In this section we briefly describe how the predictive probability of success is obtained for our simulation study. The approach is based on the EU-PEARL trial and the methodology described in Heimann et al., 20 which we summarize here to keep this paper self-contained.

Additional simulation results

In this section we present additional simulation results discussed in Section 4.