Abstract

There are multiple possible cluster randomised trial designs that vary in when the clusters cross between control and intervention states, when observations are made within clusters, and how many observations are made at each time point. Identifying the most efficient study design is complex though, owing to the correlation between observations within clusters and over time. In this article, we present a review of statistical and computational methods for identifying optimal cluster randomised trial designs. We also adapt methods from the experimental design literature for experimental designs with correlated observations to the cluster trial context. We identify three broad classes of methods: using exact formulae for the treatment effect estimator variance for specific models to derive algorithms or weights for cluster sequences; generalised methods for estimating weights for experimental units; and, combinatorial optimisation algorithms to select an optimal subset of experimental units. We also discuss methods for rounding experimental weights, extensions to non-Gaussian models, and robust optimality. We present results from multiple cluster trial examples that compare the different methods, including determination of the optimal allocation of clusters across a set of cluster sequences and selecting the optimal number of single observations to make in each cluster-period for both Gaussian and non-Gaussian models, and including exchangeable and exponential decay covariance structures.

Introduction

The cluster randomised trial is an increasingly popular experimental study design. It is used to evaluate interventions applied to groups of people, such as classrooms, clinics, or villages, or when the outcome for one individual in the group depends on the outcomes of the others, as is the case with infectious diseases.1,2 The design of a cluster trial involves the specification of all aspects of the study, many of which are determined by practical, ethical, and contextual restrictions. However, several major aspects of cluster trial design can be resolved, or at least supported, with statistical analysis: the sample size of individuals and clusters, when observations are captured from the clusters and individuals, and when each cluster receives the intervention(s).

From both an ethical and practical standpoint, minimising the number of individuals, clusters, or observations required to achieve an inferential goal is highly desirable. For cluster trials, inferences are almost always based on the variance of the estimator of the treatment effect. Designs that minimise the variance of a specific parameter, or combination of parameters, are said to be ‘c-optimal’. However, for any particular design problem, enumerating all the possible designs and their associated variances to identify the c-optimal design is often impossible, given the number of possible variants. Therefore, we use algorithms that can quickly identify an efficient, or ‘optimal’, design. The correlation of outcomes within clusters, over time, and potentially within individuals over time makes analysing the efficiency of a study design more complex than for individual-level studies with independent observations.3–5

There have been recent methodological advances in the optimal experimental design literature to identify optimal designs in studies with correlated observations, as well as several recent studies that examine the problem specifically for certain types of cluster randomised trial. In this article, we review the literature on optimal cluster randomised trial designs and review and translate more general methods and algorithms from the broader literature to this context. We present results for different cluster trial design scenarios using a range of methods to illustrate the use of the different approaches and to identify optimal cluster trial designs for a range of contexts.

What is the optimal cluster trial problem?

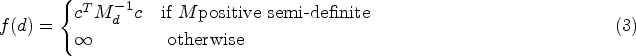

There are multiple types of optimality in the experimental design literature, which are referenced using an ‘alphabet’ system of letters.6,7 The primary objective of a cluster randomised trial is almost always to provide an estimate of the treatment effect of an intervention and an associated measure of uncertainty for one or more outcomes. Other parameters in the statistical model, such as the covariance parameters or intraclass correlation coefficient, are not of primary interest. For example, the predominant method used to justify the sample size within a particular study design is the power for a null hypothesis significance test of the treatment effect parameter.3,4 Thus, efficiency and optimality in this setting relate to minimising the variance of the treatment effect estimator, which is c-optimality.
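Because power depends on the design only through the variance of the treatment effect estimator, a lower variance translates directly into higher power. The following is a minimal sketch using the standard normal approximation for a two-sided test; the function name `power_from_variance` and the numerical values are illustrative assumptions, not taken from the studies cited.

```python
from statistics import NormalDist

def power_from_variance(delta, variance, alpha=0.05):
    """Approximate power of a two-sided z-test of a treatment effect `delta`
    when the estimator has the given variance (normal approximation)."""
    z = NormalDist()
    se = variance ** 0.5
    # Ignores the negligible probability of rejecting in the wrong direction.
    return z.cdf(abs(delta) / se - z.inv_cdf(1 - alpha / 2))

# Hypothetical comparison of two designs for the same effect size.
power_design_1 = power_from_variance(delta=0.3, variance=0.010)
power_design_2 = power_from_variance(delta=0.3, variance=0.015)
```

Under these assumed values the design with the smaller variance has the higher power, which is why minimising the variance (c-optimality) is the natural efficiency criterion here.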

We now introduce concepts and notation to describe the methods to identify c-optimal cluster trial designs. Unless otherwise stated, time is modelled discretely where there are repeated measures. Approximations can be made to a continuous time model by finely discretising time within this framework; using a regular grid over a continuous space is a common strategy in optimal design work. 8

We represent matrices using capital letters, for example

We assume there are

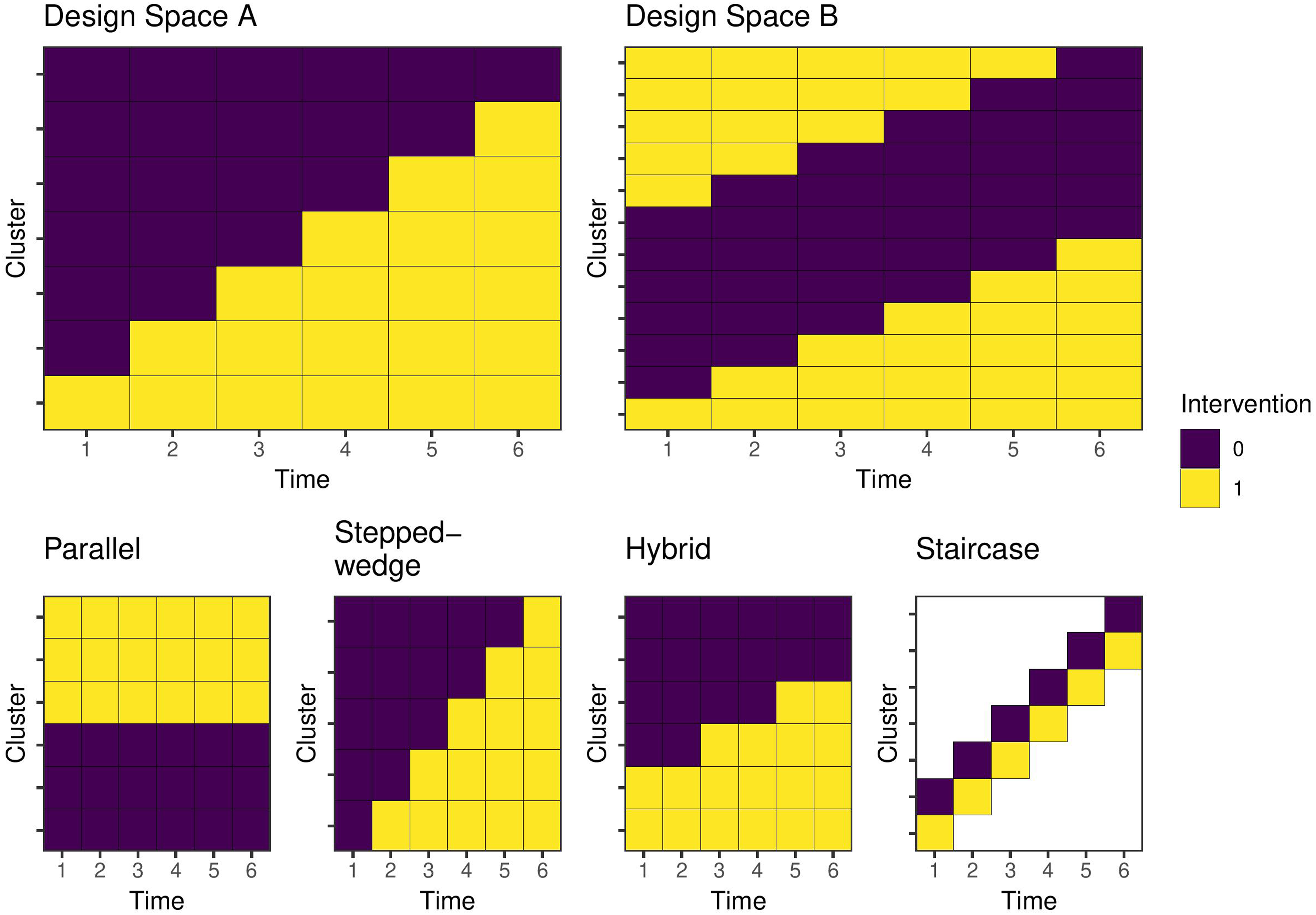

The top left panel of Figure 1 (Design Space A) represents a cluster randomised trial design space. This design space includes the following restrictions:

(i) no reversibility, that is, clusters can only cross from control to intervention states; and (ii) there must be a contemporaneous comparison in at least one time period, that is, a before-and-after design would not be permitted since it would not include a randomised comparison.

Each row indicates a cluster sequence, and each column a discrete time interval or period. Within each cell, there is a pre-specified number of observations. Each row in the diagram is illustrated only once, but there may be multiple repeats of the same row in the design space depending on the formulation of the problem. Where there are multiple instances of the same cluster sequence, the design space includes the most common types of cluster randomised trial design with repeated measures: a parallel design, in which a cluster receives the intervention in all periods or the control in all periods, and a stepped-wedge cluster randomised trial, in which the intervention roll-out is staggered such that all clusters start in the control condition and then one or more clusters receive the intervention in each time period until all clusters are in the intervention state. A ‘hybrid’ design consists of a mix of parallel and stepped-wedge cluster sequences, and a ‘staircase’ design includes only the cluster-periods on the diagonal. Figure 1 illustrates these designs. Given that this design space incorporates some of the most widely used cluster randomised trial designs, and that these restrictions reflect common real-world limitations, it is an obvious choice for many applications. However, more complex design spaces are required to allow for alternative designs like the cluster cross-over. Such a design space is illustrated in Figure 1 (Design Space B), which removes the no reversibility restriction. In these cases, the cross-over design is almost always the optimal design. 9

Examples of cluster trial design spaces and study designs for six time periods. Each row represents a cluster, or cluster sequence, and may be repeated more than once in the design space. Each cell is a cluster-period and contains one or more individual potential observations. Design Space A encodes a no reversibility assumption and includes contemporaneous comparisons. Design Space B allows for both addition and removal of the intervention over time. Parallel, stepped-wedge, hybrid, and staircase are all designs within both design spaces.
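The cluster sequences of Design Space A can be enumerated directly from the no reversibility restriction. The sketch below is illustrative only: the function names `design_space_a` and `has_contemporaneous_comparison` are hypothetical, and the 0/1 coding (0 = control, 1 = intervention) is an assumption.

```python
import numpy as np

def design_space_a(n_periods):
    """All no-reversibility sequences over n_periods (0 = control, 1 = intervention).
    Sequence j stays in control for its first j periods and then switches for good."""
    return np.array([[int(t >= j) for t in range(n_periods)]
                     for j in range(n_periods + 1)])

def has_contemporaneous_comparison(design):
    """True if at least one period contains both control and intervention clusters."""
    share_treated = np.asarray(design).mean(axis=0)
    return bool(np.any((share_treated > 0) & (share_treated < 1)))

seqs = design_space_a(6)        # seven unique sequences for six periods
parallel = seqs[[0, 6]]         # always intervention and always control
stepped_wedge = seqs[1:6]       # start in control, staggered switch, end in intervention
before_after = seqs[[3]]        # a single sequence: no randomised comparison
```

With six periods this gives the seven unique rows of the design space; a design is then a choice of how many clusters to allocate to each row, subject to the contemporaneous comparison check.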

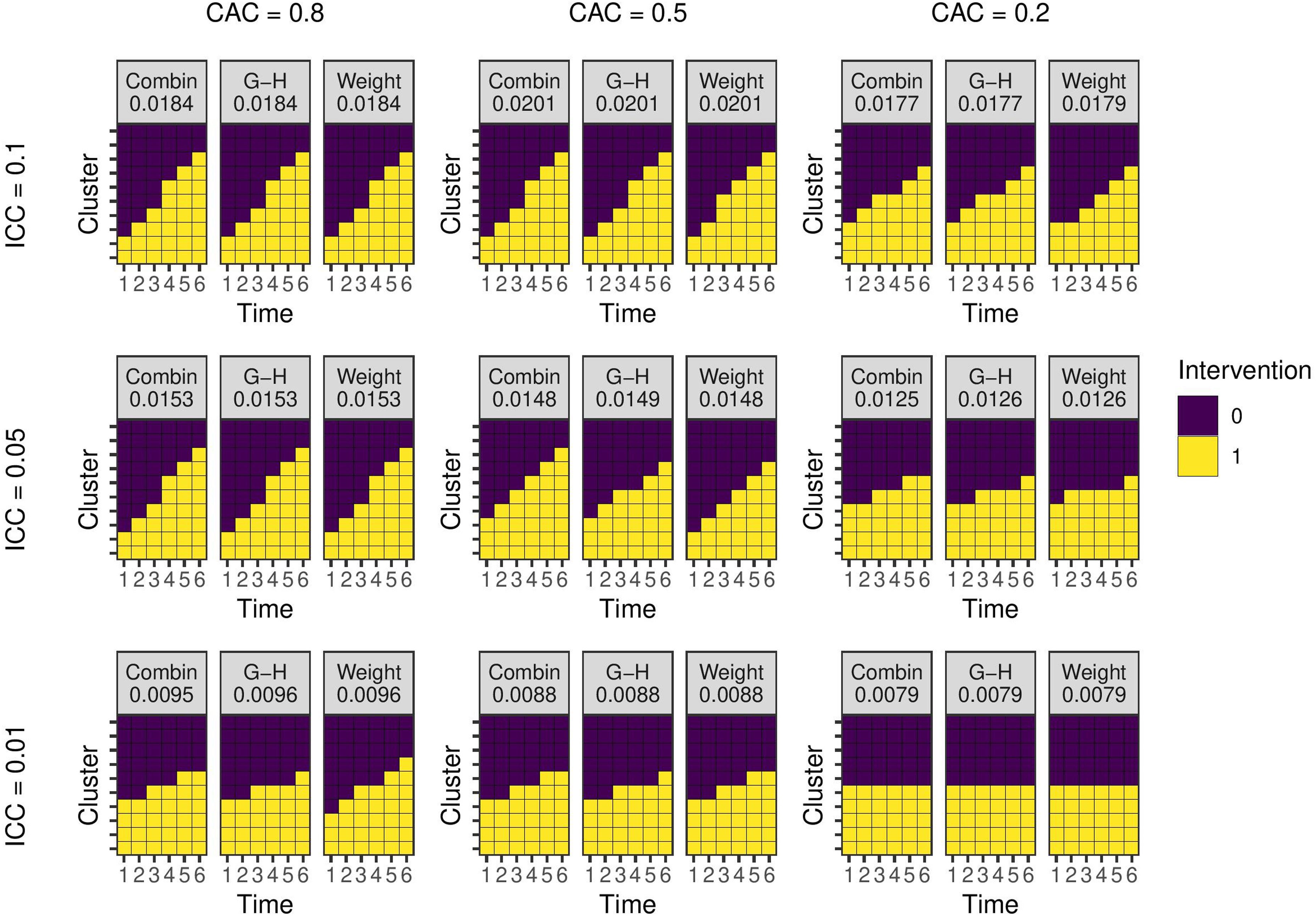

Optimal study designs with 10 clusters and six time periods for different values of the ICC and CAC using a linear mixed model with EXC2 covariance structure with

We base our analyses around a generalised linear mixed model (GLMM). For outcome vector

We assume that the matrices

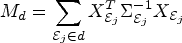

Most experimental design criteria are based on the Fisher information matrix. For the GLMM above, the information matrix for the generalised least squares estimator, the best linear unbiased estimator, for a particular design is:
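As a minimal sketch, writing X for the design matrix, Σ for the covariance matrix of the observations, and c for the vector that selects the treatment effect (notation assumed here), the generalised least squares information matrix is XᵀΣ⁻¹X and the c-optimality criterion is cᵀ(XᵀΣ⁻¹X)⁻¹c. The toy two-cluster example and its parameter values below are illustrative assumptions.

```python
import numpy as np

def treatment_effect_variance(X, Sigma, c):
    """Return c^T (X^T Sigma^{-1} X)^{-1} c for design matrix X and covariance Sigma."""
    M = X.T @ np.linalg.solve(Sigma, X)       # GLS information matrix
    return float(c @ np.linalg.solve(M, c))   # variance of the combination c^T beta

# Toy design: two clusters, two periods, one observation per cluster-period.
# Columns: period 1 indicator, period 2 indicator, treatment indicator.
X = np.array([
    [1, 0, 0],   # cluster 1, period 1, control
    [0, 1, 1],   # cluster 1, period 2, intervention
    [1, 0, 0],   # cluster 2, period 1, control
    [0, 1, 0],   # cluster 2, period 2, control
], dtype=float)

rho = 0.05                                       # assumed within-cluster correlation
block = rho * np.ones((2, 2)) + (1 - rho) * np.eye(2)
Sigma = np.kron(np.eye(2), block)                # clusters are independent

c = np.array([0.0, 0.0, 1.0])                    # picks out the treatment effect
var_correlated = treatment_effect_variance(X, Sigma, c)
var_independent = treatment_effect_variance(X, np.eye(4), c)
```

In this toy case the within-cluster correlation lets the baseline period contribute information, so the variance under correlation is slightly smaller than under independence.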

Without loss of generality, we focus on models for cluster trials where individuals are cross-sectionally sampled in each cluster-period. Where relevant and also without loss of generality, we use

Covariance function

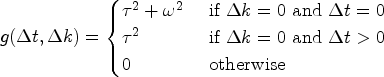

The observed outcome for an individual

Cluster Exchangeable

Nested Exchangeable

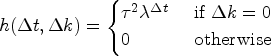

Auto-regressive or exponential decay

In the cluster and nested exchangeable functions above, the parameter

For Gaussian-identity models, we use

Intra-class correlation coefficient (ICC). Equal to

Cluster autocorrelation coefficient (CAC). Equal to

The

In this discussion, we also assume there is a single treatment that enters the model as a dichotomous treatment indicator. More complex cluster trial designs may feature multiple arms and treatments, 11 including continuous treatments representing dose. We do not consider these designs here; however, the optimal design methods below can be extended to these cases.
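The covariance functions named above can be sketched at the level of cluster-period means. The parameterisation below is an assumption made for illustration (total variance fixed at one, the ICC as the share of variance due to cluster-level effects, and the CAC or autoregressive parameter as the correlation between cluster-level effects in different periods); the function name `cluster_period_covariance` is hypothetical.

```python
import numpy as np

def cluster_period_covariance(n_periods, m, icc, cac=1.0, ar=None):
    """Covariance of the cluster-period means of one cluster (total variance 1).

    m   : individuals per cluster-period
    icc : share of variance due to cluster-level random effects
    cac : correlation of cluster-level effects between periods (exchangeable case)
    ar  : if given, use exponential decay ar**|t - s| instead of cac
    """
    if ar is not None:
        lags = np.abs(np.subtract.outer(np.arange(n_periods), np.arange(n_periods)))
        R = ar ** lags                            # auto-regressive / exponential decay
    else:
        # cac = 1 gives the cluster exchangeable function;
        # cac < 1 adds a cluster-period effect (nested exchangeable).
        R = cac * np.ones((n_periods, n_periods)) + (1 - cac) * np.eye(n_periods)
    residual = (1 - icc) / m                      # variance of a mean of m residuals
    return icc * R + residual * np.eye(n_periods)

S_nested = cluster_period_covariance(6, 10, icc=0.05, cac=0.8)
S_decay = cluster_period_covariance(6, 10, icc=0.05, ar=0.7)
```

Under this assumed parameterisation the two structures agree on the diagonal and differ only in how quickly the between-period covariance falls away.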

Methods and previous literature

We divide the currently available methods for the optimal cluster trial design problem into three categories: (i) derivation of exact formulae for the treatment effect variance or precision for specific models and design spaces; (ii) general ‘multiplicative’ methods that derive weights to place on each unique experimental unit; and (iii) general combinatorial optimisation algorithms designed to select the optimum

Exact formulae

For simpler models one can derive explicit formulae for

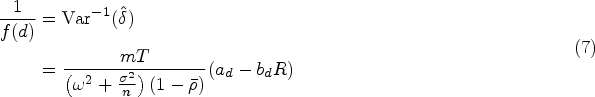

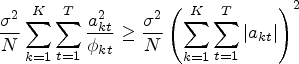

Girling and Hemming 9 provided perhaps the most notable study of this type for cluster trials. They derive a formula for the precision of the treatment effect estimator in a linear mixed model under covariance structure EXC2 along with individual-level cohort effects, although we drop the individual level cohort effects for this summary. They consider the problem of determining which periods to introduce the intervention into each of the

We can rewrite model (1) as a linear mixed model for individual

Girling and Hemming

9

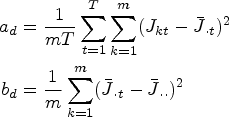

provided a method for using equation (7) to produce an optimal design under a no reversibility constraint. We assume the clusters are numbered such that a lower numbered cluster has greater than or equal number of intervention periods than any higher numbered cluster. We then map the cluster-period indexes to coordinates on a unit square

Lawrie et al. 13 derive explicit formulae for the optimal proportion of clusters to allocate to each sequence (row) in Design Space A to minimise

Zhan et al. 15 extended Lawrie et al.’s analyses using Girling and Hemming’s work to identify more general ‘optimal unidirectional switch designs’ by extending the probability weights (8) to a larger design space with sequences incorporating exclusively control or intervention conditions, and with EXC1 covariance functions. Here, unidirectional switching means no reversibility, giving, for example, Design Space A in Figure 1. The more general probability weights for the design space with

There are several other studies that derive expressions for the treatment effect variance to identify efficient study designs. Hooper and Copas 16 considered a linear mixed model with AR1 covariance for a cluster randomised trial with continuous recruitment. They consider a parallel study design with baseline measures and aim to identify when the intervention should be implemented in the intervention arm under different sample sizes and covariance parameters. They calculate the value of (3) for a large range of models and graphically compare the results. Copas and Hooper 17 take a similar approach with a linear mixed model with EXC1 covariance and a parallel trial design. They aim to identify optimal sample sizes and the proportion of data to collect in baseline and endline periods. Moerbeek 18 also uses an explicit criterion, although not strictly for c-optimality, as the aim is to identify an optimal sample size within treatment and control groups subject to a budget constraint. That analysis considers only a single time period, such that the treatment effect estimator is a difference in means. Lemme et al. also consider a similar cost–benefit optimisation approach for multicentre trials. 19

Deriving explicit formulae for the variance or precision is appealing due to its relative simplicity. Identifying a c-optimal design does not require specialist tools and can be done using spreadsheet software. However, these methods are typically limited to specific models and designs, such as exchangeable covariance structures, linear models, and equal cluster-period sizes. The mathematical approach used to derive the precision formula does not carry over to more complex covariance structures or design spaces, nor to problems where the experimental unit is an observation or cluster-period. One can calculate the value of the c-optimality criterion directly for any design, as Hooper and Copas 16 do. However, the number of designs one must calculate the variance for grows exponentially and prohibitively with the size of the design space. More general methods are required for these extended problems.
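To give a sense of how quickly exhaustive evaluation becomes infeasible, the short sketch below counts candidate designs for two problems of the size used in the examples later in the article. The function names are hypothetical, and the second count treats every potential observation as distinct, which is an assumption that ignores exchangeability within cluster-periods.

```python
from math import comb

def n_cluster_allocations(n_clusters, n_sequences):
    """Ways to allocate interchangeable clusters across sequences (multisets)."""
    return comb(n_clusters + n_sequences - 1, n_clusters)

def n_observation_subsets(n_available, n_selected):
    """Ways to pick a subset of labelled observations from the design space."""
    return comb(n_available, n_selected)

allocations = n_cluster_allocations(10, 7)        # 10 clusters over 7 sequences
subsets = n_observation_subsets(7 * 6 * 10, 80)   # 80 of 420 observations
```

The first problem is small enough to enumerate, whereas the second is far beyond exhaustive search, which is what motivates the weighting and combinatorial methods that follow.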

Determining probability weights for experimental units, as the studies cited above do explicitly,15,13 is a useful strategy to simplify the optimal design problem. One can generalise this approach to tackle more complex models and design spaces. We place a probability measure

Elfving’s theorem

Elfving’s theorem is a classic result in the theory of optimal designs. 20 The original formulation considered independent, identically distributed observations. Holland-Letz et al. 21 and Sagnol 22 generalised the theorem to the case where each experimental unit contains multiple, correlated observations, such as a cluster, but there is no correlation between experimental units, as there would be if the experimental unit were a cluster-period or observation. Elfving’s theorem provides a geometric characterisation of the c-optimal design problem. If the experimental units are uncorrelated, the information matrix in equation (2) can be rewritten as:

A ‘generalised Elfving set’ is:

A design

For a proof, see Holland-Letz et al. 21 and Sagnol. 22

Sagnol 22 shows how the generalised Elfving theorem can be used to define a second-order cone program, which is a type of conic optimisation problem that can be solved with interior point methods. This program returns the optimal values of

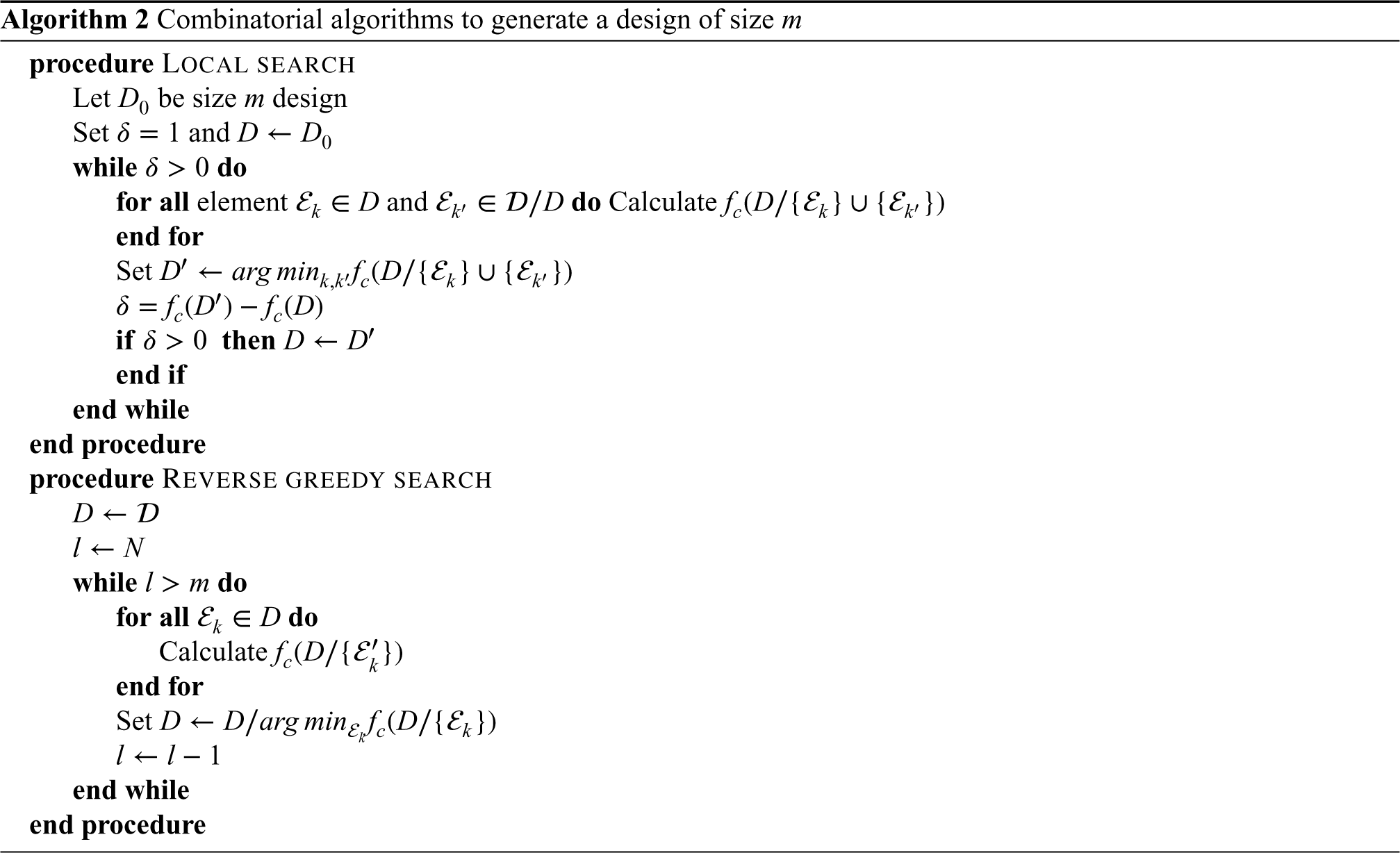

Mixed model weights

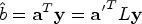

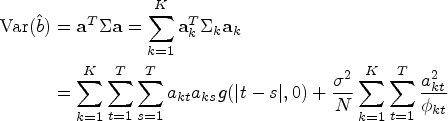

Girling (forthcoming) has proposed an algorithm for finding the optimum set of weights that can be applied to the case when experimental units are equivalent to cluster-periods. Since observations in this context are exchangeable within a cluster-period, rounding the weights to numbers of observations (see next section) gives a result equivalent to treating a single observation as the experimental unit. We consider the aggregated cluster-period model (6). The best linear unbiased estimator for the linear combination

Rounding proportions of experimental units

Where a method produces an optimal design in terms of the proportion of experimental units of each type to include, we must use a rounding procedure to translate it into exact numbers. There are several methods for converting proportions to integer counts that sum to a given total. The problem was famously identified when apportioning seats in the US House of Representatives among the states in proportion to their populations; the solutions are named after their proposers. 24

Pukelsheim and Rieder, 25 following others, 26 argued that the procedure of John Quincy Adams is the most efficient method of rounding to an exact design. As Pukelsheim and Rieder note though, the design weights do not contain enough information to exactly identify an experimental design, and so multiple designs may be generated. However, this procedure is based on the assumption that a ‘fair’ allocation includes at least one experimental unit of each type. For many cluster trial design problems we do not require this restriction; for example, a parallel trial is optimal in some cases. 9 In other cases though, there may be practical reasons to ensure staggering of the roll-out,10,27 in which case this rounding scheme would be the most efficient. Hamilton’s rounding procedure is an alternative method. We initially assign

While the solutions generated by different rounding schemes, and the algorithms discussed in the next section, may in fact be an exact optimal solution, they cannot guarantee such a result. In the results section we provide several examples where the results of the methods may disagree. The equivalence theorem 28 provides precise conditions to check whether a given design is indeed optimal. However, it requires knowledge of the optimal design. Girling and Hemming 9 use an approach of comparing the relative efficiency of the design to that of a cluster cross-over, which is the most efficient if it is within the design space. Not all design spaces include the cluster cross-over design, and so the optimal design may not be known. Holland-Letz et al. 23 derived a lower bound for the relative efficiency of a given design in the context of a pharmacokinetic study with correlated observations.
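Hamilton's procedure, in its usual textbook form (largest remainders), can be sketched as follows. The function name `hamilton_round` and the example weights are illustrative assumptions; the weights are not results from any of the cited studies.

```python
from math import floor

def hamilton_round(weights, n):
    """Round design weights to integer counts summing to n (largest remainders)."""
    total = sum(weights)
    quotas = [n * w / total for w in weights]     # exact, generally fractional, shares
    counts = [floor(q) for q in quotas]           # start from the lower quotas
    remaining = n - sum(counts)
    # Hand out the remaining units to the largest fractional remainders.
    order = sorted(range(len(weights)),
                   key=lambda i: quotas[i] - counts[i], reverse=True)
    for i in order[:remaining]:
        counts[i] += 1
    return counts

# Hypothetical weights over five cluster sequences, rounded to 10 clusters.
design = hamilton_round([0.42, 0.0, 0.16, 0.0, 0.42], 10)
```

Unlike a scheme that guarantees every type at least one unit, this procedure leaves zero-weight sequences empty, which matters when the optimal design omits some sequences entirely.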

Combinatorial optimisation algorithms

Watson and Pan 29 showed how the c-optimal design criterion in equation (3) is a ‘monotone supermodular function’, which means it is amenable to one of several combinatorial optimisation algorithms that are well-studied in the literature. A supermodular function is one for which, given a design

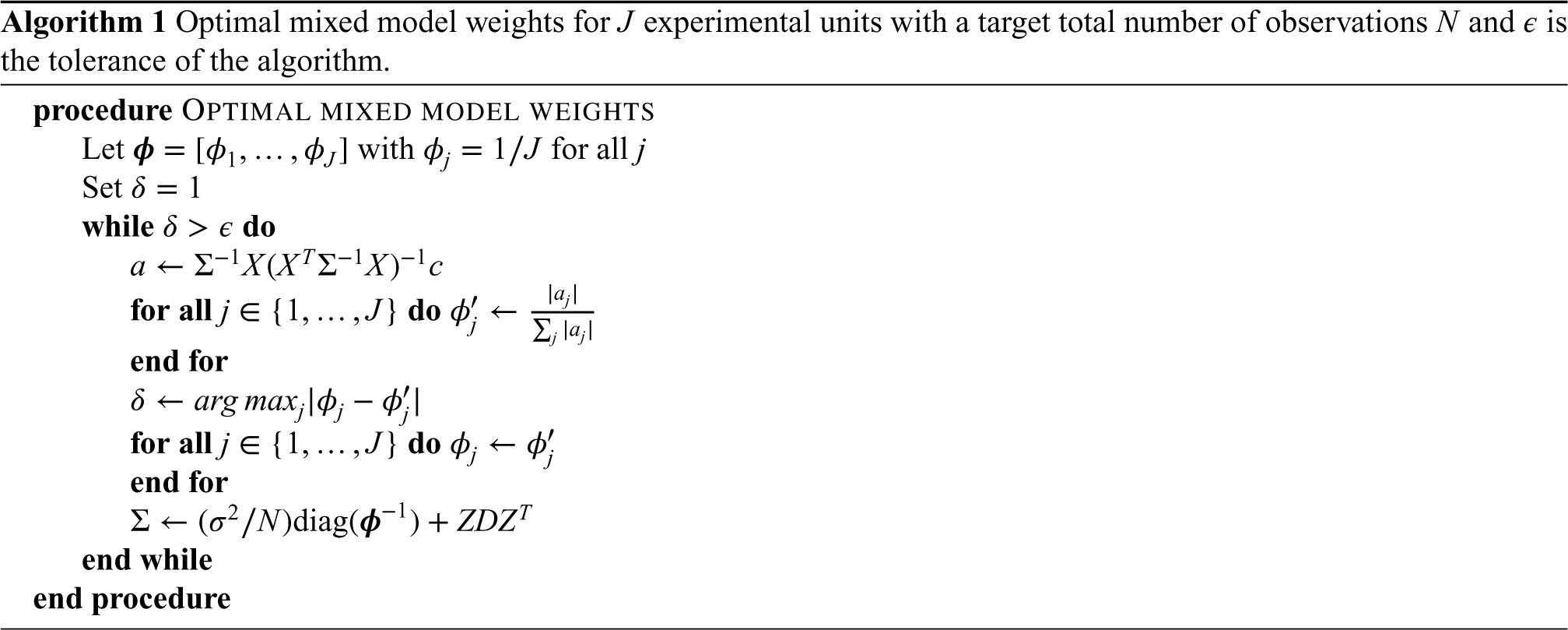

The three algorithms relevant to supermodular function minimisation are the local search, the greedy search, and the reverse greedy search algorithms.26,31–33 We exclude the greedy search algorithm here, as it starts from the empty set and successively adds observations. As we require a minimum of

Finding the subset of size

The local search algorithm starts from a design of the desired size

The reverse greedy algorithm starts from the complete design space and successively removes the experimental unit whose removal results in the smallest increase in variance. Proofs of the constant factor approximation for the reverse greedy algorithm depend on the ‘steepness’ or ‘curvature’ of

Watson and Pan 29 investigated these algorithms for a range of study designs, including cluster randomised trials. They find that empirically the reverse greedy and local search algorithms provide similar performance in terms of the variance of the resulting design. The reverse greedy search is deterministic, while the local search starts from a random design, so Watson and Pan run the local search multiple times and select the best design. They also suggest several approaches to improve the computational efficiency of these algorithms.
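The reverse greedy search can be sketched for the case where cluster sequences are the experimental units. This is a minimal illustration under stated assumptions, not the implementation used by Watson and Pan: the nested exchangeable parameterisation, the parameter values, and all function names are hypothetical.

```python
import numpy as np

T, m, icc, cac = 6, 10, 0.05, 0.8     # assumed illustrative values
seqs = np.array([[int(t >= j) for t in range(T)] for j in range(T + 1)])

# Assumed nested exchangeable covariance of the cluster-period means.
Sigma = icc * (cac * np.ones((T, T)) + (1 - cac) * np.eye(T)) + (1 - icc) / m * np.eye(T)

def sequence_information(seq):
    X = np.column_stack([np.eye(T), seq])          # period effects + treatment
    return X.T @ np.linalg.solve(Sigma, X)

info = [sequence_information(s) for s in seqs]     # clusters are independent,
c = np.zeros(T + 1)                                # so information is additive
c[-1] = 1.0

def variance(counts):
    """Treatment effect variance for a design with counts[j] clusters in sequence j."""
    M = sum(n * I for n, I in zip(counts, info))
    if np.linalg.matrix_rank(M) < T + 1:
        return np.inf                              # treatment effect not estimable
    return float(c @ np.linalg.solve(M, c))

def reverse_greedy(n_target, copies):
    counts = [copies] * len(seqs)                  # start from the full design space
    while sum(counts) > n_target:
        best_j, best_var = None, np.inf
        for j in range(len(seqs)):
            if counts[j] == 0:
                continue
            counts[j] -= 1                         # try removing one cluster
            v = variance(counts)
            counts[j] += 1
            if v < best_var:                       # smallest resulting variance
                best_j, best_var = j, v
        counts[best_j] -= 1
    return counts, variance(counts)

design, design_variance = reverse_greedy(n_target=10, copies=10)
```

Each pass evaluates every candidate removal once, so the cost grows with the size of the starting design space; the result is deterministic but is not guaranteed to be the exact optimum.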

Kasza and Forbes 36 used a reverse greedy approach to identify optimal designs. They describe the method as estimating the ‘information content’ of clusters or cluster-periods in a design space like Figure 1, where their measure of information is the marginal change in variance from removing the observations from the design. The results presented by Kasza and Forbes 36 are qualitatively similar to those using other methods and algorithms, such as those presented below.
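The idea of the marginal change in variance from removing a cluster-period can be sketched for a small stepped-wedge design. The code below is an illustration under assumed parameter values and an assumed nested exchangeable covariance; it reports the absolute increase in variance, which may differ from the exact measure Kasza and Forbes define.

```python
import numpy as np

T, m, icc, cac = 4, 10, 0.05, 0.8                  # assumed illustrative values
# Stepped-wedge sequences: start in control, switch at periods 2, 3, and 4.
seqs = np.array([[int(t >= j) for t in range(T)] for j in range(1, T)])
K = len(seqs)
Sigma = icc * (cac * np.ones((T, T)) + (1 - cac) * np.eye(T)) + (1 - icc) / m * np.eye(T)
c = np.zeros(T + 1)
c[-1] = 1.0

def variance(observed):
    """Treatment effect variance when only cells flagged in `observed` (K x T) are measured."""
    M = np.zeros((T + 1, T + 1))
    for k in range(K):
        idx = np.flatnonzero(observed[k])
        if idx.size == 0:
            continue
        X = np.column_stack([np.eye(T)[idx], seqs[k, idx]])
        M += X.T @ np.linalg.solve(Sigma[np.ix_(idx, idx)], X)
    if np.linalg.matrix_rank(M) < T + 1:
        return np.inf
    return float(c @ np.linalg.solve(M, c))

full = np.ones((K, T), dtype=bool)
base = variance(full)

# Increase in variance from dropping each cluster-period in turn.
info_content = np.zeros((K, T))
for k in range(K):
    for t in range(T):
        mask = full.copy()
        mask[k, t] = False
        info_content[k, t] = variance(mask) - base
```

Cells with the smallest values are the natural candidates for removal, which is the step the reverse greedy algorithm repeats.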

Hooper et al. 37 examined optimal cluster trial designs in the context of the linear mixed model with covariance function AR1. They consider a discrete approximation to a continuous time model with continuous recruitment and polynomial functions of time. The design space consists of individuals regularly spaced over a time interval within clusters; the individuals constitute the experimental unit. They aim to provide a set of illustrative optimal designs under different parameter values for the covariance function. The method used to identify these designs could also be described as a variant of the ‘reverse greedy’ algorithm. Each iteration of the algorithm is supplemented with a type of local search, although the swaps of experimental units that can be made are limited at each step to preserve a no reversibility restriction. The designs presented by Hooper and Eldridge 10 are often qualitatively different from those obtained here using other methods. However, the design space they use includes a wide range of other designs, and their specification of

Computational complexity

The computational complexity of the local and greedy searches scales as

The multiplicative weighting, optimal mixed model weights, and combinatorial methods all require calculation of the covariance matrix

Zeger et al. 41 suggested that when using the marginal quasilikelihood, approximations can be improved by ‘attenuating’ the linear predictor. For example, for the binomial-logit model one would use

Moerbeek and Maas 42 examined optimal designs for clustered studies with a binomial-logistic mixed model. They derive an approximation to the variance of the treatment effect parameter under the EXC1 covariance function using a linearisation approach with the marginal quasilikelihood. They specifically aim to identify the optimal number of individuals within a cluster in a cost–benefit framework.

The methods to generate an optimal design have so far assumed the model parameters are known. However, a well-known issue for optimal experimental design methodology is that a design that is optimal for one set of parameters or model specification may perform poorly for another. Robust designs that are efficient across a range of specifications are therefore desirable. There are multiple possible criteria for modifying the c-optimal design criterion to account for multiple designs. For example, Girling and Hemming 9 considered a minimax criterion in which they identify a design that maximises (minimises) the minimum (maximum) precision (variance) over all values of the correlation between cluster-period means. This results in a ‘hybrid’ trial design (see Figure 1). Van Breukelen and Candel 43 also considered a minimax criterion to identify a robust optimal cluster trial design when the ICC is unknown. Similarly to Moerbeek and Maas, 42 they use a cost–benefit framework and examine the optimal design under a fixed budget.

As a robust optimality criterion, the minimax function is not generally applicable. For the combinatorial methods, we require that the objective function is supermodular to fit within the framework discussed above, and the maximum of a set of supermodular functions is not necessarily supermodular. As an alternative, we can use a ‘weighted average’. In particular, we assume there is a set of

Dette 44 generalises the Elfving theorem for this robust criterion for models with uncorrelated observations. One can further generalise this theorem to the case where observations are correlated within experimental units following the results of Holland-Letz et al. 21 and Sagnol. 22 However, a specification for a program to solve this generalised problem using conic optimisation methods, extending the results of Sagnol in the single model case, is not currently available, and remains a topic for future research. An extension of the optimal mixed model weights method to robust optimal designs is similarly an open question.

Another robust c-optimality criterion is the weighted average:

We have provided code samples and examples using the

Results and examples

In this section, we provide a range of examples to illustrate the use of the methods and summarise results from several of the papers cited above. For the combinatorial algorithms, we use the reverse greedy algorithm. For multiplicative weighting methods, we select the best design from a variety of different rounding methods. Where applicable we also compare the results to those presented by Girling and Hemming. 9

Clusters as experimental units

For the first set of examples, we consider Design Space A in Figure 1 with seven unique cluster sequences and six time periods. Our goal is to identify a design of

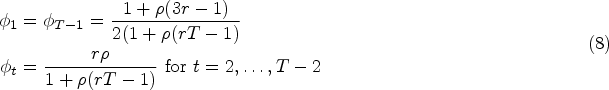

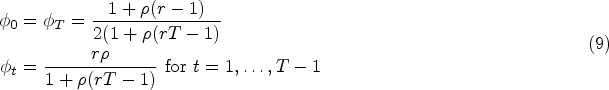

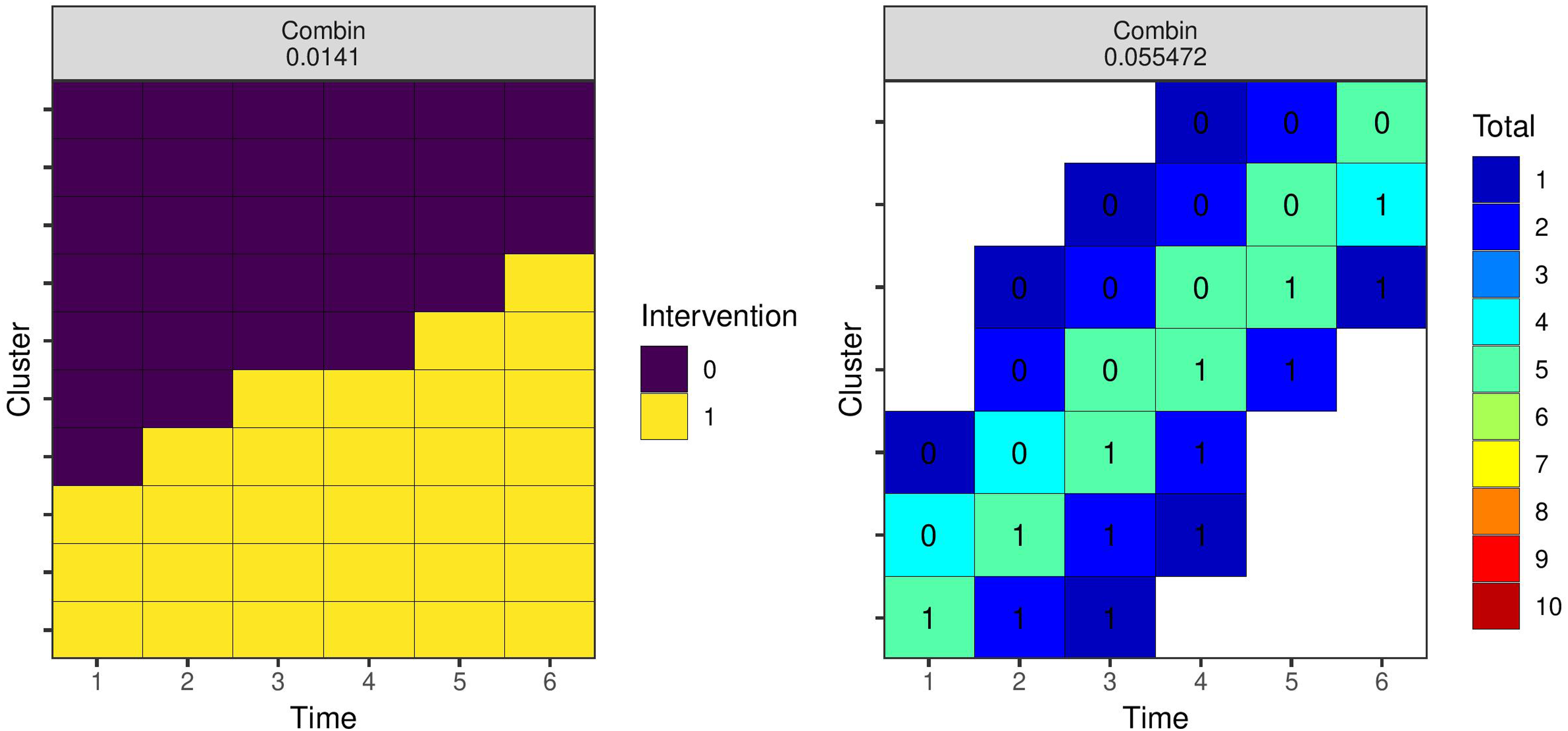

Figure 2 shows the results using the EXC2 covariance function with

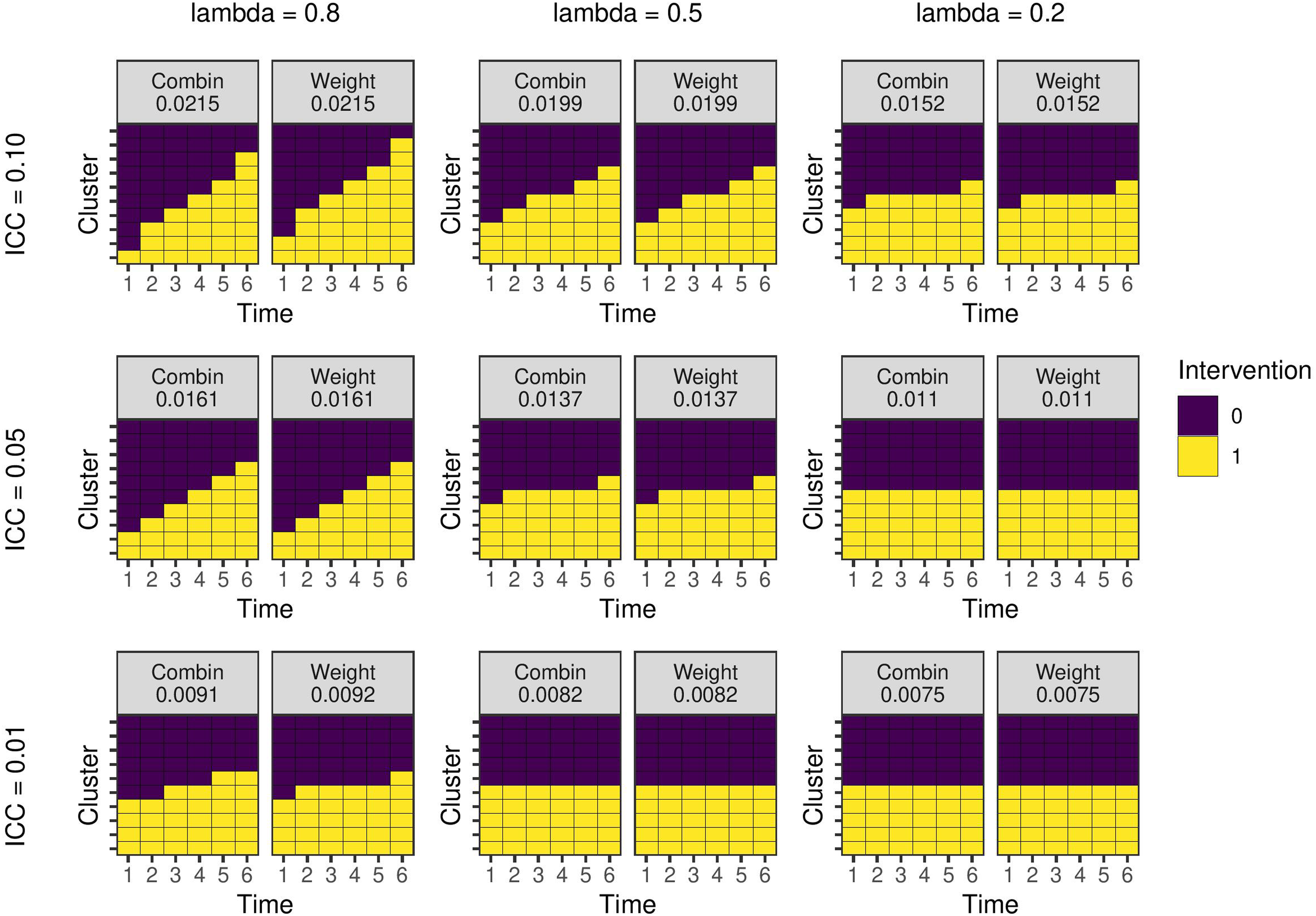

Optimal study designs with 10 clusters and six time periods for different values of the ICC and autoregressive parameter

The previous example assumes any design might be permissible within the design space. However, more restrictive design problems may be of interest given practical limitations on intervention roll out. As an example, we may require there to be only two trial arms within which all clusters receive the intervention at the same time. The question is then when each arm should receive the intervention (if at all). We can consider this problem as selecting two experimental units from Design Space A containing the seven experimental units in Figure 1, since the variance of this design is proportional to a design with

Optimal study designs of two cluster sequences and six time periods for different values of the covariance parameters with the EXC2 and AR1 covariance functions. C = combinatorial local search. W = experimental unit weights. The number on each panel is the treatment effect estimator variance for the design. The rows are different values of the ICC. (a) EXC2 covariance function; (b) AR1 covariance function. AR: auto-regressive; ICC: intra-cluster correlation coefficient.

Optimal study designs of 80 individuals with seven clusters and six time periods using a linear mixed model with EXC2 covariance structure with different values of the ICC (rows) and CAC (columns). Results from the combinatorial reverse greedy search (with up to 10 individuals per cluster-period) and optimal mixed model weights algorithms. The number for the left two columns is the estimator variance from the design. The number within each cell is the intervention status and the colour represents the number of observations (left two columns) or the weight (right two columns). ICC: intra-cluster correlation coefficient; CAC: cluster autocorrelation coefficient.

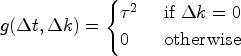

For the next examples, we specify a single observation as the experimental unit. The design space is as specified in Figure 1 with seven clusters and six time periods, and each cluster-period has 10 unique individuals who each contribute an observation. Using the combinatorial algorithms, our goal here is to select 80 observations of the 420 possible observations up to a maximum of 10 per cluster-period. The mixed model weights can also be calculated using Algorithm 1 for comparison. Figures 5 and 6 show the results for the EXC2 and AR1 covariance functions, respectively. In general, the levels of within cluster-period correlation (CAC or

Optimal study designs of 80 individuals for different values of the ICC and

For non-Gaussian models, we illustrate how the parameters

Figure 7 shows the optimal designs of 80 individuals for the binomial-logistic example using the combinatorial and optimal mixed model weight algorithms. When the base rate is low, the relative difference in individual-level variance between time periods is larger, and the resulting designs favour placing more observations in those later time periods. When the base rate is higher, the designs more closely resemble those from the linear model in Figures 5 and 6. The optimal weights suggest that when the base rate is low in this example, we should place all our efforts in the last periods; the combinatorial algorithms have specified a cap of 10 observations per cluster-period and so distribute the observations in the next-best cluster-periods.
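One way to see why the base rate matters is through the first-order linearisation commonly used with the marginal quasilikelihood, under which the individual-level variance on the logit scale is approximately 1/(p(1 − p)) for success probability p. The sketch below is illustrative only: the linear time trend, its slope, the base rates, and the function name `individual_variances` are all assumptions, not the values used for Figure 7.

```python
from math import exp, log

def individual_variances(base_rate, time_slope, n_periods):
    """Approximate individual-level variance on the logit scale in each period,
    assuming a linear time trend on the linear predictor (hypothetical model)."""
    intercept = log(base_rate / (1 - base_rate))
    variances = []
    for t in range(n_periods):
        p = 1 / (1 + exp(-(intercept + time_slope * t)))
        variances.append(1 / (p * (1 - p)))        # first-order linearisation
    return variances

low = individual_variances(base_rate=0.05, time_slope=0.2, n_periods=6)
high = individual_variances(base_rate=0.40, time_slope=0.2, n_periods=6)

# Relative spread of the individual-level variance across periods.
spread_low = max(low) / min(low)
spread_high = max(high) / min(high)
```

Under these assumed values the variance changes far more across periods when the base rate is low, and it is smallest in the last period, which is in line with the pattern of observations being concentrated in later periods.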

Optimal study designs of 80 individuals with 10 clusters and six time periods for different values of the base rate (rows) and intervention effect size (columns) with a binomial-logistic mixed model. Results from the combinatorial reverse greedy search (with up to 10 individuals per cluster-period) and optimal mixed model weights algorithms. The number for the left two columns is the estimator variance from the design. The number within each cell is the intervention status and the colour represents the number of observations (left two columns) or the weight (right two columns).

To illustrate robust optimal designs, we consider the 18 models and parameter values represented by the panels of Figures 2 and 3. We assume that there is no prior knowledge of the likely values of the covariance parameters, nor the covariance function, and so assign equal prior weights to all 18 designs. We use the weighted average robust criterion (16), and run the local search algorithm 100 times, selecting the lowest variance design. The left panel of Figure 8 shows the resulting optimal design with respect to the equal weighting prior. Similarly to Girling and Hemming, 9 the design is a ‘hybrid’ trial design with six of 10 clusters following a parallel trial design, and the remaining four a staggered implementation roll-out. We also identify a robust optimal design for individual experimental units with the 18 designs shown in Figures 5 and 6 using the same procedure. The resulting design is shown in the right-hand panel of Figure 8.
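The procedure described here can be sketched as a local search over cluster allocations with an equally weighted average of variances as the objective. This is a simplified illustration under stated assumptions: it uses nine nested exchangeable models rather than the 18 models of the article, takes the criterion to be the prior-weighted average of the treatment effect variances, accepts the first improving swap rather than the best one, and uses five random starts rather than 100. All names and parameter values are hypothetical.

```python
import numpy as np

T, m, n_clusters = 6, 10, 10      # periods, individuals per cell, clusters (assumed)
seqs = np.array([[int(t >= j) for t in range(T)] for j in range(T + 1)])
J = len(seqs)
c = np.zeros(T + 1)
c[-1] = 1.0                       # selects the treatment effect

def sequence_information(icc, cac):
    """Information contributed by one cluster in each sequence under one model."""
    Sigma = icc * (cac * np.ones((T, T)) + (1 - cac) * np.eye(T)) + (1 - icc) / m * np.eye(T)
    out = []
    for seq in seqs:
        X = np.column_stack([np.eye(T), seq])
        out.append(X.T @ np.linalg.solve(Sigma, X))
    return out

models = [sequence_information(icc, cac)
          for icc in (0.01, 0.05, 0.1) for cac in (0.5, 0.8, 1.0)]
prior = np.full(len(models), 1.0 / len(models))    # equal prior weights

def variance(counts, info):
    M = sum(n * I for n, I in zip(counts, info))
    if np.linalg.matrix_rank(M) < T + 1:
        return np.inf
    return float(c @ np.linalg.solve(M, c))

def robust_criterion(counts):
    return sum(w * variance(counts, info) for w, info in zip(prior, models))

def local_search(start):
    counts = [int(n) for n in start]
    current = robust_criterion(counts)
    improved = True
    while improved:                                # stop when no swap improves
        improved = False
        for a in range(J):
            for b in range(J):
                if a == b or counts[a] == 0:
                    continue
                counts[a] -= 1                     # move one cluster from a to b
                counts[b] += 1
                value = robust_criterion(counts)
                if value < current - 1e-12:
                    current, improved = value, True
                else:
                    counts[a] += 1                 # undo the swap
                    counts[b] -= 1
    return counts, current

rng = np.random.default_rng(2024)
runs = [local_search(rng.multinomial(n_clusters, np.full(J, 1.0 / J))) for _ in range(5)]
best_design, best_value = min(runs, key=lambda run: run[1])
```

Because each run stops at a design that no single swap can improve, different random starts can end at different local optima, which is why the best of several runs is kept.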

Robust optimal study designs of 80 individuals with 10 clusters and six time periods with respect to a prior that weights each possibility from earlier examples equally. Results from the combinatorial local search run 100 times and selecting the best design. The left panel is for a design space with clusters as experimental units, and the right panel where individuals are experimental units. The numbers in the cells on the right panel show the intervention status.

Comparison of algorithms

The correlation between observations in a cluster randomised trial setting complicates identification of optimal study designs. Indeed, there have been relatively few studies on the topic of optimal cluster trial designs, particularly when compared with individual-level randomised controlled trials. However, recent methodological advances provide several approaches for approximating c-optimal designs with correlated observations.

We have discussed three types of method within a general framework for cluster trials: using exact formulae for specific model specifications and design spaces, either within an algorithm or by enumerating and evaluating multiple relevant designs; determining weights to place on each experimental unit in a design space; and combinatorial algorithms for selecting an optimal subset of experimental units. These categories are not exhaustive, and new methods may be developed using novel approaches. Each of the three types of method has its advantages and disadvantages. Minimising exact functions for the estimator variance would be preferable, but explicit formulae are only available in the simpler cases. For example, many authors (e.g. Girling and Hemming, 9 Zhan et al., 15 and Lawrie et al. 13 ) consider the linear mixed model with cluster and cluster-period exchangeable random effects. The combinatorial algorithms produced the lowest variance design in all the examples we considered where we could compare methods, but were generally more computationally demanding, especially when one takes into account the suggestion to run the algorithm multiple times and select the best design. The optimal mixed model weights algorithm identifies the optimal weights for each cluster-period, although it may not produce an exact design when the totals are rounded. It is also much faster to run than the other generic algorithms: for the examples presented in Figures 5 and 6, the reverse greedy search took around 1 min, the local search 10 s, and the model weights 50 ms. In many circumstances, it is difficult or impractical to specify an exact number of individuals, and so weights would be sufficient, in which case the mixed model weights are likely the best choice given their efficiency. However, for more complex design problems, such as setting a maximum or minimum number of observations in different cluster-periods, the combinatorial approaches may be required.
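To illustrate why rounding weights need not give an exact design, the following minimal sketch converts a set of design weights into integer counts that sum to a fixed total. It uses the largest remainder (Hamilton) apportionment rule, which is only one of several possible rounding rules and is chosen here purely for illustration; the function name `round_weights` and the example weights are hypothetical.

```python
import numpy as np

def round_weights(weights, total):
    """Round design weights to integer counts summing to `total`.

    Illustrative largest remainder (Hamilton) rule; other rounding
    rules may give different, and possibly more efficient, designs.
    """
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()                       # normalise so the weights sum to one
    quotas = w * total                    # ideal, possibly fractional, allocation
    counts = np.floor(quotas).astype(int)
    leftover = total - counts.sum()       # units still unallocated after flooring
    # Give one extra unit to the cells with the largest fractional parts.
    order = np.argsort(-(quotas - counts), kind="stable")
    counts[order[:leftover]] += 1
    return counts

# Hypothetical weights for three cluster-periods and 10 observations in total.
example = round_weights([0.46, 0.33, 0.21], total=10)
print(example)
```

The rounded counts only approximate the optimal weights, so the variance of the rounded design can exceed that of the weighted design, particularly when the total is small relative to the number of cells.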

Small sample bias

A well recognised issue for cluster trials, and GLMMs in general, is that the generalised least squares estimator of the standard errors of

Usefulness of optimal designs

Optimal designs are not always practical. For example, many of the designs in Figures 5 to 8 where the experimental unit was the individual included cluster-periods with a single individual. It is very unlikely that this would ever be implementable in practice given the logistics of data collection within clusters such as hospitals, clinics, or schools. However, one can view these optimal designs as a benchmark against which to justify a chosen study design. Hooper 10 suggests that there is a common misconception among cluster trial practitioners that the stepped-wedge design is more efficient than a parallel trial. The results of Girling and Hemming, 9 which are replicated in Figure 2, and others show that this is not the case. The most efficient design depends on the covariance parameters and, in the case of a non-linear model, on the parameters in the linear predictor too. Indeed, a useful heuristic that emerges from these results is that the less variable the cluster means are over time, the more ‘variable’ the intervention should be (i.e. more staggered over time). Identifying an optimal design can help design a practicable trial that is more efficient than might otherwise be considered. Where individual-level experimental units are used, it can identify which cluster-periods to exclude entirely and which to place more effort into. Kasza et al. 36 proposed just such an approach based on a ‘reverse greedy’ type algorithm.
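The dependence of the most efficient design on the covariance parameters can be checked numerically with a minimal sketch such as the one below. It assumes a linear mixed model for cluster-period means with fixed period effects, a single treatment effect, an exchangeable cluster-level variance `tau2`, and a combined cluster-period and residual variance `period_var`; these names, the function `treatment_variance`, and the six-cluster, four-period designs are illustrative assumptions rather than the settings used in the figures.

```python
import numpy as np

def treatment_variance(sequences, counts, tau2, period_var):
    """Variance of the GLS treatment effect estimator for cluster-period means.

    sequences  : 0/1 treatment indicators, one row per cluster sequence
    counts     : number of clusters allocated to each sequence
    tau2       : between-cluster variance (exchangeable over time)
    period_var : cluster-period variance plus residual variance of the mean
    """
    sequences = np.asarray(sequences, dtype=float)
    n_periods = sequences.shape[1]
    # Covariance of the cluster-period means within a single cluster.
    V = tau2 * np.ones((n_periods, n_periods)) + period_var * np.eye(n_periods)
    V_inv = np.linalg.inv(V)
    p = n_periods + 1
    info = np.zeros((p, p))
    for seq, m in zip(sequences, counts):
        # Design matrix: period indicators followed by the treatment column.
        X = np.hstack([np.eye(n_periods), seq[:, None]])
        info += m * (X.T @ V_inv @ X)
    c = np.zeros(p)
    c[-1] = 1.0                           # picks out the treatment effect
    return float(c @ np.linalg.solve(info, c))

stepped_wedge = [[0, 1, 1, 1], [0, 0, 1, 1], [0, 0, 0, 1]]
parallel = [[1, 1, 1, 1], [0, 0, 0, 0]]

for tau2 in (0.0, 10.0):
    v_sw = treatment_variance(stepped_wedge, [2, 2, 2], tau2, 1.0)
    v_par = treatment_variance(parallel, [3, 3], tau2, 1.0)
    print(f"tau2={tau2}: stepped-wedge {v_sw:.3f}, parallel {v_par:.3f}")
```

Under these assumptions the parallel design has the smaller variance when there is no persistent cluster-level variation, and the stepped-wedge design has the smaller variance when that variation is large, which is consistent with the heuristic described above.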

The framework we use to present these methods requires enumeration of all the unique experimental units. For more complex design problems, the design space can then become very large. For example, Hooper et al. 16 used a discrete approximation to a continuous time model, and aimed to identify when a cluster should start and stop recruiting and when it should implement the intervention. There is a very large number of possible cluster sequences that would fit within this design space given the large number of time increments, even with the no-reversibility and symmetry restrictions they use. Enumerating the complete design space and subjecting it to one of the algorithms above would likely be highly computationally demanding. Indeed, this issue raises the question of how one might approach cluster trial optimal design questions with continuous time. Other examples in the literature in which a treatment variable is potentially continuous have relied on selecting a small number of discrete possible values; the finer the discretisation, the better the result. 8 Extending this to a larger number of possible conditions, or treating time as truly continuous, thus remains a topic for future research. One potentially useful approach for continuous time models may be ‘particle swarm’ optimisation and other ‘nature inspired’ methods. 51

Bayesian optimal designs

We have also not considered Bayesian optimal design. While Bayesian methods are relatively rarely used for the design and analysis of cluster randomised trials, there is a growing number of examples (e.g. at Work Wellbeing Programme Collaboration 52 ). Chaloner 53 provides a review of Bayesian optimal experimental design criteria. Bayesian optimal designs are based on maximising a utility function for the experiment. The resulting optimality criteria are highly similar to their frequentist counterparts, but they introduce the added complexity of needing to integrate over the prior distributions of the model parameters. There have been several methodological advances and new algorithms proposed for identifying Bayesian optimal experimental designs. For example, Overstall and Woods 54 provided perhaps the most general solution to this problem for non-linear Bayesian models. The algorithms in this article might also be used to find approximate solutions to Bayesian cluster trial design problems. For example, the robust criterion (16) could be translated to a Bayesian context where the weights are derived using a Riemann sum approximation to the integral over the prior distributions. 29 However, further research is required into Bayesian methods for the design and analysis of cluster randomised trials.

Conclusion

The final choice of study design for a cluster randomised trial results from the confluence of a range of practical, financial, and statistical considerations. However, there is an ethical obligation to try to minimise the sample size required to achieve a research objective. Methods to identify optimal or approximately optimal study designs therefore serve a useful purpose where there is flexibility in the roll-out of an intervention. We have identified several methods relevant to cluster randomised trials, which can be used on a standard computer in a short amount of time. We would therefore suggest that examining the optimal trial design should be a step in the design of every cluster randomised trial.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported with funding from the Medical Research Council MR/V038591/1.