Abstract

There is a dearth of robust methods to estimate the causal effects of multiple treatments when the outcome is binary. This paper uses two unique sets of simulations to propose and evaluate the use of Bayesian additive regression trees in such settings. First, we compare Bayesian additive regression trees to several approaches that have been proposed for continuous outcomes, including inverse probability of treatment weighting, targeted maximum likelihood estimator, vector matching, and regression adjustment. Results suggest that under conditions of non-linearity and non-additivity of both the treatment assignment and outcome generating mechanisms, Bayesian additive regression trees, targeted maximum likelihood estimator, and inverse probability of treatment weighting using generalized boosted models provide better bias reduction and smaller root mean squared error. Bayesian additive regression trees and targeted maximum likelihood estimator provide more consistent 95% confidence interval coverage and better large-sample convergence property. Second, we supply Bayesian additive regression trees with a strategy to identify a common support region for retaining inferential units and for avoiding extrapolating over areas of the covariate space where common support does not exist. Bayesian additive regression trees retain more inferential units than the generalized propensity score-based strategy, and shows lower bias, compared to targeted maximum likelihood estimator or generalized boosted model, in a variety of scenarios differing by the degree of covariate overlap. A case study examining the effects of three surgical approaches for non-small cell lung cancer demonstrates the methods.

Keywords

1 Introduction

1.1 Motivating research question

Lung cancer is the leading cause of cancer-related mortality worldwide and is estimated to have caused over 1.7 million deaths in 2018.1 The most common type of lung cancer is non-small cell lung cancer (NSCLC), accounting for approximately 85% of all lung cancer cases. 2 When feasible, NSCLC tumors are treated using surgical resection, which remains the most effective option for a cure. 3

Open thoracotomy long stood as the standard surgical procedure for stage I–IIIA NSCLC tumors. However, open thoracotomy is associated with considerable postoperative complications and mortality, especially in the elderly.4,5 Beginning in the late 1990s, two newer and less invasive techniques, video-assisted thoracic surgery (VATS) and, more recently, robotic-assisted surgery, were increasingly used.6,7 The adoption of VATS and robotic-assisted surgery seemed to signal that the newer procedures offer a clinical benefit relative to open resection.8,9 However, to our knowledge, no randomized controlled trials (RCTs) have been conducted to compare the effectiveness of these surgical procedures, in part due to difficulties in recruiting patients and high study costs. As a consequence, VATS and robotic-approaches were adopted into routine care without sufficient scrutiny.6,10

In place of RCTs, large-scale population-based databases, such as the Surveillance, Epidemiology, and End Results (SEER)-Medicare database, provide research opportunities for comparative studies. The SEER-Medicare database comprises a large sample of patients who received each of the three surgical procedures and reflects patient outcomes in the real world setting, containing demographic and clinical information for Medicare beneficiaries with cancer in various United States regions. 11 However, in contrast to RCTs, the real-world adoption pattern of the three surgical approaches largely depends on the patients’ sociodemographic and tumor characteristics, which may result in an unbalanced cohort with significant differences in the distributions of sociodemographic characteristics, comorbidities, cancer characteristics, and diagnostic information across treatment groups. 12

The research question poses several challenges for statistical analyses. First, in practice, statistical methods designed for a binary treatment are often used to account for underlying differences in patient characteristics to compare each pair of surgical procedures.6,10,13 Unfortunately, applications of these methods can lead to the comparisons of disparate patient subgroups, which may increase bias in treatment effect estimates. 14 Second, common measures for comparative effectiveness are postoperative complications, which are binary outcomes. Thus, the treatment effects are typically based on the risk difference (RD), odds ratio (OD), or relative risk (RR), 15 all of which make it less straightforward to obtain inference, relative to continuous outcomes.16–18 Third, the robotic-assisted surgery is a new advanced technology that was just adopted into practice in recent years. As a result, the number of patients who are operated via this approach is smaller compared to the other two approaches, yielding unequal sample sizes across the treatment groups. Appropriate causal inference methods that can address these challenges are needed.

1.2 Overview of methods for causal inference with multiple treatments

Recent years have seen a growing interest in the development of causal inference methods with multiple treatments using observational data. The theoretical work of Imbens 19 and Imai and Van Dyk 20 extended the propensity score framework in the setting with a binary treatment 21 to the general treatment setting. Subsequently, methods designed for a binary treatment have been reformulated to accommodate multiple treatments, including regression adjustment (RA), 22 inverse probability of treatment weighting (IPTW),23,24 and vector matching (VM). 14 Lopez and Gutman 14 provide a comprehensive review of current methods for multiple treatments. These methods focus on continuous outcomes.

RA,22,25,26 also known as model-based imputation, 27 uses a regression model to impute missing outcomes, estimating what would have happened to a specific unit had this unit received the treatment to which it was not exposed. The causal estimand of interest can be estimated by contrasting the imputed potential outcomes between treatment groups. The critical part of this method is the specification of the functional form of the regression model. With a low-dimensional set of pre-treatment covariates, it is relatively easy to specify a flexible functional form for the regression model. If there are many pre-treatment covariates, however, such a specification is more difficult, and possible misspecification of the regression model could bias the estimate of treatment effects. RA also heavily relies on extrapolation for estimation when the covariate distributions between treatment groups are far apart. 27

IPTW19,23,24 methods attempt to obtain an unbiased estimator for treatment effects in a way akin to how weighting by the inverse of the selection probability adjusts for unbalances in sampling pools, introduced by Horvitz and Thompson 28 in survey research. A challenge with IPTW is that treated units with low generalized propensity scores (GPSs) that are close to zero can result in extreme weights, which may yield erratic causal estimates with large sample variances.29,30 This issue is increasingly likely as the number of treatments increases. 14 An alternative method is to use trimmed or truncated weights, in which weights that exceed a specified threshold are each set to that threshold.31,32 The threshold is often based on quantiles of the distribution of the weights (e.g. the 1st and 99th percentiles).

Alternatives to estimate GPSs in the IPTW framework include generalized boosted models 24 (GBMs) and Super Learner33,34 (SL). GBMs grow multiple regression trees to capture complex and nonlinear relationships between treatment assignment and pre-treatment variables. The estimation procedure can be tuned to find the GPS model producing the best covariate balance between treatment groups. This feature of GBMs should help alleviate extreme weights and improve the estimation of causal effects. 24 However, the algorithm can be computationally intensive, and the robust procedure for estimating the variances of the effect estimates is not guaranteed to result in proper confidence intervals. SL uses ensemble of machine learning approaches including regression, ridge regression, and classification trees, to estimate a weight for each treatment. There is no guarantee that these probabilities sum to 1, and it is common to normalize weights accordingly. To limit extreme weights, Rose and Normand 34 use a lower bound of 0.025 for each probability.

Targeted maximum likelihood estimation34,35 (TMLE) is a doubly robust approach that combines outcome estimation, IPTW estimation, and a targeting step to optimize the parameter of interest with respect to bias/variance. Rose and Normand 34 implement TMLE by estimating both GPSs and a binary outcome using SL. For obtaining variance estimates, Rose and Normand 34 use influence curves, though bootstrapping is also suggested. The use of TMLE has, to the best of our knowledge, not been deeply vetted for multiple treatment options by using simulations.

Lopez and Gutman

14

proposed the VM algorithm, which can match units with similar vector of GPSs. VM is designed to replicate a multi-arm randomized trial by generating sets of units that are roughly equivalent on measured pre-treatment covariates. VM obtains matched sets using a combination of

Before describing Bayesian additive regression trees (BART)—one tool that we think is equipped to handle the complexity of causal inference with multiple treatments—it is worth explicating on why one approach that, although intuitive and easy to perform, is not recommended: a series of binary comparisons (SBCs). To wit, grouping subjects into separate sub-populations, each with two treatments, and then using approaches designed for binary treatment is an approach often used in practice. 14 However, SBCs can (i) lead to non-transitive causal estimates, (ii) increase bias, and (iii) leave it unclear which treatment is optimal, all of which make it inappropriate for causal inference when there are more than two treatments. 14

1.3 BART for causal inference

While the advanced regression and propensity score-based techniques described above were created for causal inference with multiple treatments, these methods were developed with continuous outcomes in mind, and they have been less studied in the context of both a binary outcome and multiple treatments.

In recent years, BART,36,37 a nonparametric modeling tool, has become more popular in causal settings. Hill 38 proposed the use of BART for causal inference with a binary treatment and a continuous outcome. Hill 38 and Hill and Su 39 used simulations to show that, in scenarios where there are non-linearities in the response surface and the treatment assignment mechanism, BART generates more accurate estimates of average treatment effects (ATEs) compared to various matching and weighting techniques, and comparable estimates in linear settings.

BART boasts several advantages for causal inference with a binary treatment.38,40 First, BART allows for an extremely flexible functional form. Second, BART avoids ambiguity with respect to covariate balance diagnostics required by propensity score-based approaches. Third, BART generates coherent uncertainty intervals for treatment effect estimates from the posterior samples in contrast to propensity score matching and subclassification, for which there is lack of agreement regarding appropriate interval estimation.20,38 Finally, BART is easy to implement and requires less researcher programming expertise. However, like any methods that do not first discard units that fall out of areas of the covariate space where common support does not exist, one vulnerability of BART is that there is no mechanism to prevent it from extrapolating over these areas.

We surmise that the strengths of BART are transferable to the multiple treatment setting. In the sections that follow, we conduct two sets of simulations to investigate the operating characteristics of BART for estimating the causal effects of multiple treatments on a binary outcome, and compare BART to the existing methods discussed previously. We further supply BART with a strategy to identify a common support region and compare it to the propensity score-based strategy with respect to the proportion of units retained for inference and the accuracy of treatment effect estimates based on the retained inferential units. We subsequently apply the methods examined to analyze a large dataset on stage I–IIIA NSCLC patients, drawn from the SEER-Medicare registry, and estimate the comparative effect of robotic-assisted surgery versus VATS and open thoracotomy on postoperative outcomes.

2 Potential outcomes framework for multiple treatments

2.1 Notation and assumptions

Our notation is based on the potential outcomes framework, which was originally proposed by Neyman 41 in the context of randomization-based inference in experiments. Potential outcomes were generalized to observational studies and Bayesian analysis by Rubin,42–44 in what is now known as the Rubin causal model. 45

Consider a sample of

In general, causal effects are not identifiable without further assumptions because only one of the potential outcomes is observed for every unit. We make the following identifying assumptions:

The stable unit treatment value assumption,

46

that is no interference between units and no different versions of a treatment. The positivity or sufficient overlap assumption, that is The treatment assignment is unconfounded, that is

Under the unconfoundedness assumption, for any treatment

2.2 Definition of causal effects

Causal effects are summarized by estimands, which are functions of the unit-level potential outcomes on a common set of units.42,44 For dichotomous outcomes, causal estimands can be the RD, OD, or RR. For purposes of illustration, we use RD in this paper.

Following Lopez and Gutman,

14

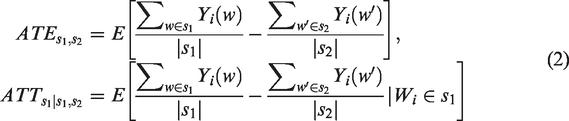

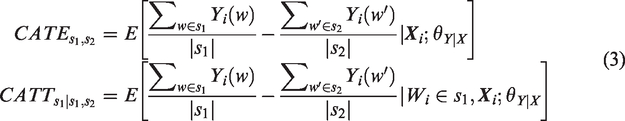

we provide a broad definition of the causal RD that may be of interest with multiple treatments. Define

In equation (2), the expectation is over all units,

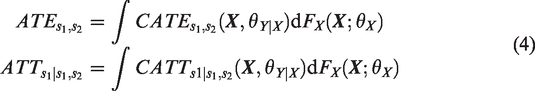

Causal inference methods via modeling the response surfaces (e.g. BART and RA) arrive at the population or sample marginal treatment effects by integrating the conditional effects over the distribution of

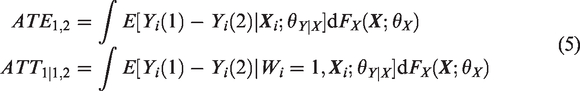

In our motivating example, one of the research questions of interest is to compare the effectiveness of a newer minimally invasive procedure (i.e. robotic-assisted surgery) versus the existing surgical procedures (e.g. VATS) in the overall population, or among those patients who received robotic-assisted surgery. The corresponding target causal estimands are defined as

2.3 Treatment effects using BART

Under the identifying assumptions, treatment effects such as

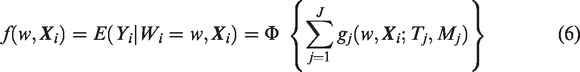

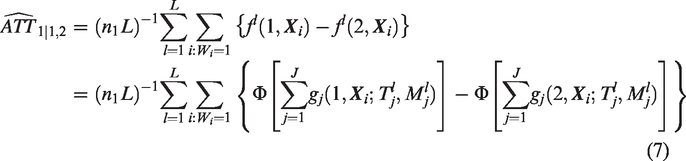

BART is a Bayesian ensemble method that models the mean outcome given predictors by a sum of trees. For a binary outcome, the BART model can be expressed using the probit model setup as

2.4 Common support

Because problems can arise when drawing inference to regions of the covariate space where there are insufficient number of units in all treatment groups, propensity score-based methods are typically equipped with strategies for defining a common support region. For BART, there is no such a mechanism to prevent it from extrapolating over areas where a common support does not exist.

For a binary treatment, one strategy is to discard units that fall beyond the range of the propensity score.51,52 Hill and Su 39 argue that these strategies typically ignore the information embedded in the response variable and propose alternative discarding rules. Illustrative examples with one or two predictors were used to compare the two types of discarding strategies and their implications on estimation of the causal effects and the proportion of inferential units retained. Advantages of BART over the propensity score approach manifest in examples where there is lack of common support for variables only predictive of treatment but not of the outcome or the treatment mechanism is more difficult to model. However, in practice, identifying common support is often required for a high-dimensional covariate space. In addition, the two types of strategies have not been compared in the multiple treatment setting.

To address these limitations, we propose a strategy for BART to define both a common support region and the corresponding discarding rules. Whereas Hill and Su

39

use a common support for binary treatment using the

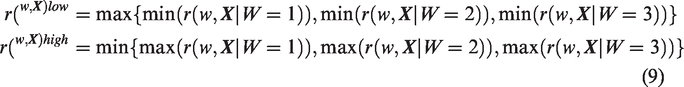

For multiple treatments with

When estimating the ATEs, we apply the discarding rule in equation (8) to each treatment group.

There is likewise a lack of consensus for defining a common support region with GPS-based approaches. For matching using the GPS, Lopez and Gutman

14

propose a rectangular support region. Let

For weighting methods, techniques such as trimming 32 or stabilizing (more useful for time-varying confounding, see Hu et al., 53 Hu and Hogan, 54 and Hernán and Robins 55 ) are frequently used in place of a common support. However, the lack of common support in the covariate space may lead to extreme weights and unstable IPTW estimators. In this article, we used fixed quantile-based trimming. More recently, Ju et al. 56 developed an adaptive truncation approach based on the collaborative TMLE methodology and showed their estimators achieved the best performance for both point estimation and confidence interval coverage among all propensity score truncation-based estimators.

3 Simulation studies

3.1 Design and implementation

We conduct expansive simulations in order to better understand how BART will work in complex causal settings. Our first set of simulations, Simulation 1, contrasts BART with other approaches, while our second set, Simulation 2, looks into the role that covariate overlap plays in inferences with multiple treatments.

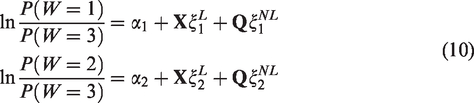

The design of both simulations mimics the range of scenarios that are representative of the data structure in the SEER-Medicare registry. Three treatment levels (

We implemented the methods as follows. For RA, we first fit a Bayesian logistic regression model with main effects of all confounders using the bayesglm() function in the arm package in R. We then drew a total of 1000 MCMC samples of regression coefficients from their posterior distributions and predicted the potential outcomes for each unit and relevant treatment group. When implementing IPTW, we estimated GPSs by including each confounder additively to a multinomial logistic regression model, a GBM, and a SL model, respectively. The stopping rule for the optimal iteration of GBM was based on maximum of absolute standardized bias, which compares the distributions of the covariates between treatment groups.

24

We implemented SL using the weightit() function in the R package WeightIt for multinomial treatment and included three algorithms: main terms regression, generalized additive model, and support vector. The treatment probabilities are normalized to sum to one. The weights—inverse of the GPSs—were then trimmed at 5 and 95% to generate trimmed IPTW estimators. GPSs for VM were estimated using multinomial logistic regression with main effects of all confounders. We used a combination of

To judge the appropriateness of each technique, we use mean absolute bias (MAB), root mean squared error (RMSE), and coverage probability (CP). In addition, we examine the large-sample convergence property of each method.

3.1.1 Simulation 1: Which causal approach yields the lowest bias and RMSE?

We compare each of the 10 approaches across a combination of two design factors: the study sample size (i.e. the total number of units) and the ratio of units in the treatment groups. We varied the two factors in three scenarios: (1) 1200 with a 1:1:1 ratio, (2) 4000 with a 1:5:4 ratio, and (3) 11,600 with a 1:15:13 ratio to represent equal, moderately unequal, and highly unequal sample sizes across treatment groups. The relatively small sample size (400) in the first group—which will be used as the reference group of the ATT effects—and the scenario of highly unequal sample sizes mimic the SEER-Medicare data in the motivating study.

We considered 10 confounders with five continuous variables and five categorical variables. We assumed that both the treatment assignment mechanism and the response surfaces are nonlinear models of the confounders, as a realistic representation of the application data. Specifically, the treatment assignment follows a multinomial logistic regression model

3.1.2 Simulation 2: How do levels of covariate overlap impact causal estimates?

Only BART, TMLE, and IPTW-GBM, the top performing methods in Simulation 1, are used in Simulation 2, which more deeply examines the impact of covariate overlap.

We generated datasets following the simulation configuration of scenario 3 in Simulation 1, including the total sample size, the ratio of units, the number of continuous and categorical confounders, and the response surface models, to mimic the SEER-Medicare dataset. To create varying covariate overlaps that are “measurable” in degrees, we generate the treatment variable and covariate distribution as follows.

Three levels of covariate overlap were designed: (1)

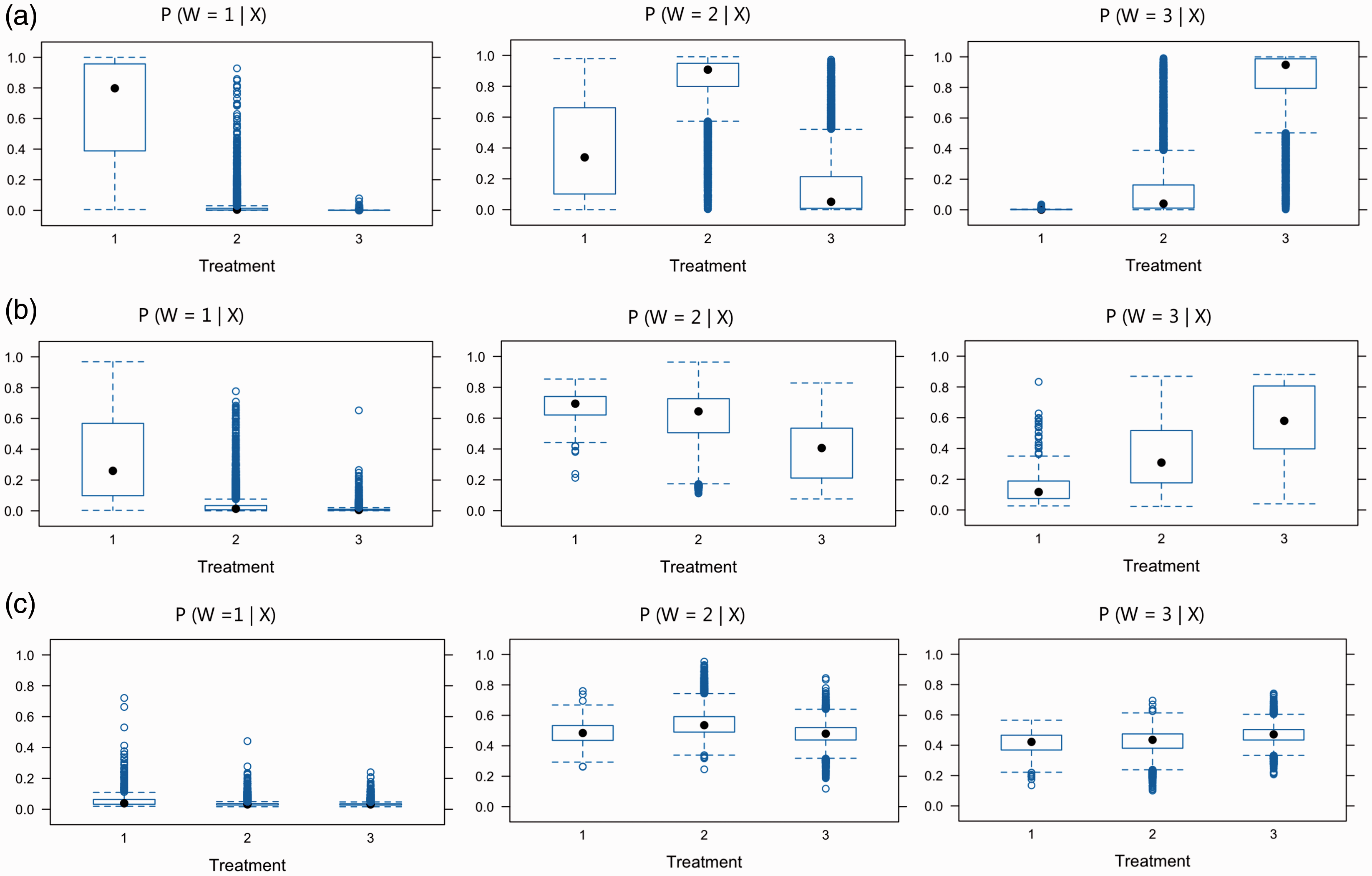

Distributions of estimated GPSs across the treatments are compared using boxplots. For each overlap scenario, we estimated the GPS for each unit in the sample using GBMs, and plotted the distributions of estimated GPSs using a separate boxplot for the unit receiving each type of treatment (Figure 1). Substantial overlap in boxplots is presented in the strong overlap scenario, while the weak overlap scenario highlights the different distributions of GPSs.

Overlap assessment for the scenarios of (a) weak, (b) moderate, and (c) strong covariate overlap. Each panel presents boxplots by treatment group of the estimated GPSs for one of the treatments,

3.2 Simulation results

3.2.1 Simulation 1

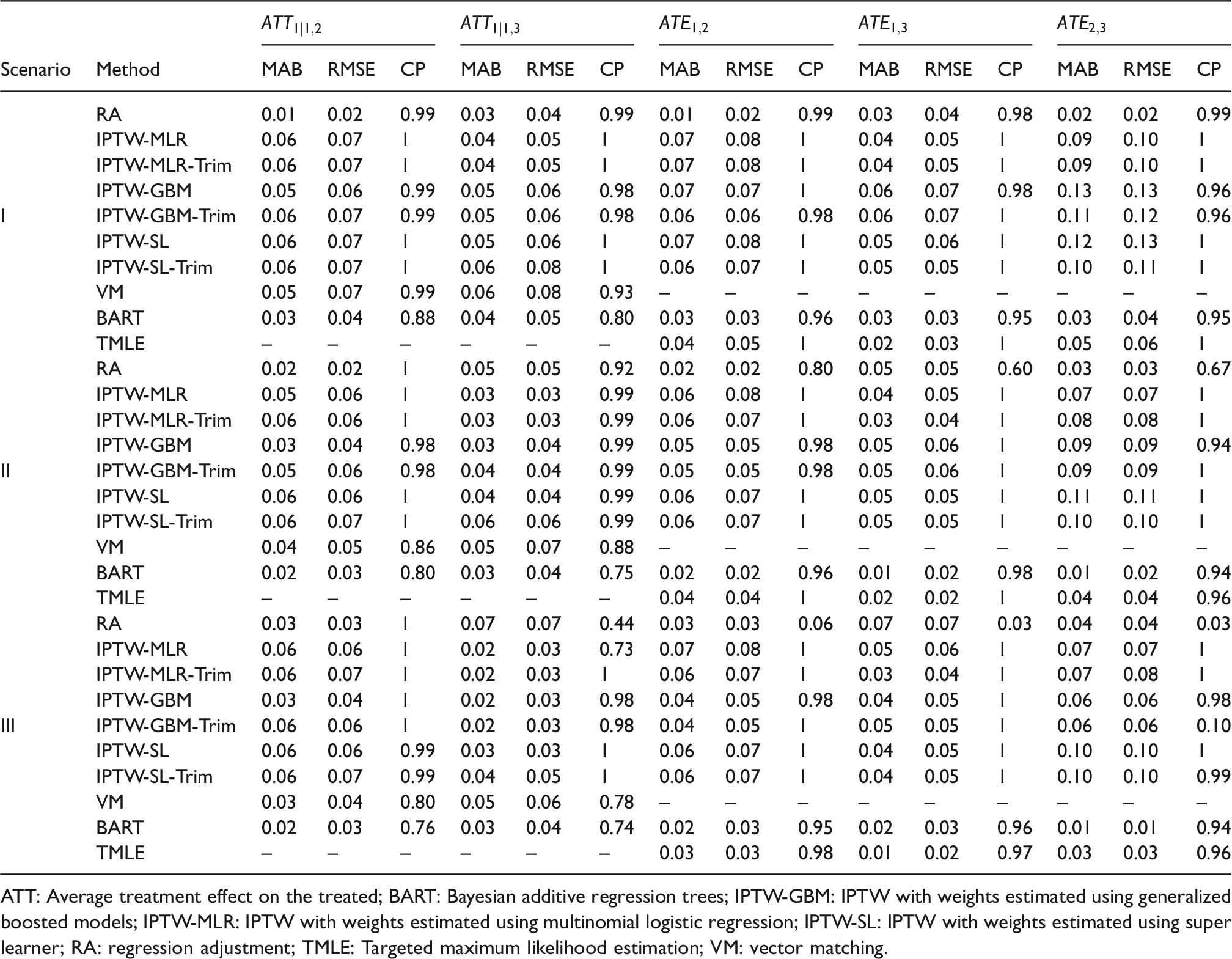

Table 1 presents the MAB, RMSE, and CP of the estimates of two ATT effects

Comparison of the estimated average treatment effects on the treated in terms of mean absolute bias (MAB), root mean square error (RMSE), and coverage probability (CP) across 200 replications in Simulation 1. The causal estimand is based on risk difference.

ATT: Average treatment effect on the treated; BART: Bayesian additive regression trees; IPTW-GBM: IPTW with weights estimated using generalized boosted models; IPTW-MLR: IPTW with weights estimated using multinomial logistic regression; IPTW-SL: IPTW with weights estimated using super learner; RA: regression adjustment; TMLE: Targeted maximum likelihood estimation; VM: vector matching.

No single method trumped others in estimating both

For the ATE estimates, BART consistently provided lower MAB and RMSE followed by TMLE, across all three scenarios with different ratio of units. BART had nominal CP across all three scenarios. IPTW methods and TMLE yielded conservative intervals and greater than the nominal CP. RA was sensitive to the ratio of units. In the scenario with highly unequal sample sizes across treatment groups, RA had subpar performance. The intervals produced by RA rarely covered the true effects, resulting in a low CP. Altogether, BART and TMLE provided the best performances across settings for the ATE estimates. Boxplots of biases from 200 replications in pairwise ATT and ATE estimates appear in Figures S1 and S2 in Supplemental Materials.

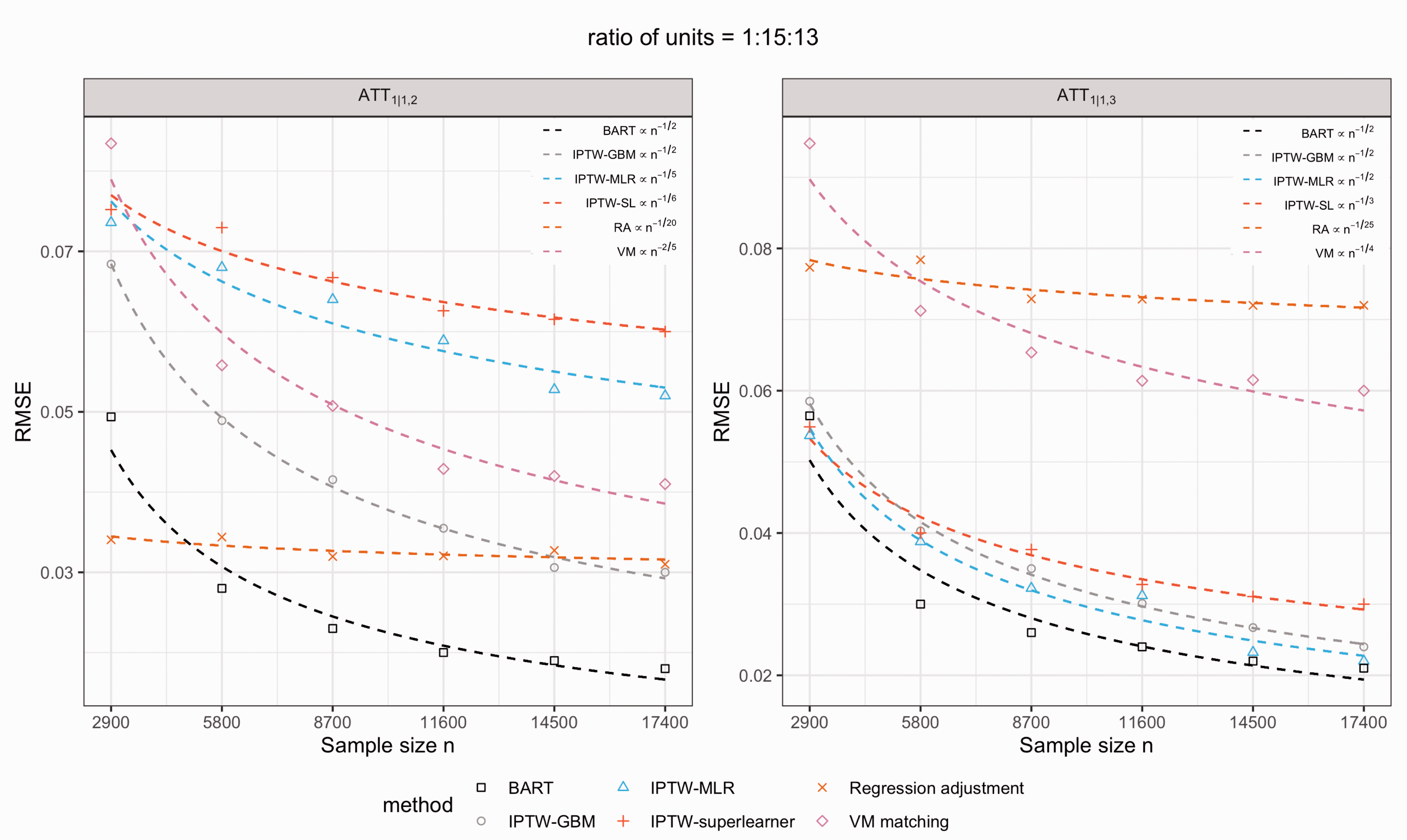

In Figure 2, we examined the large-sample convergence property of each of six methods. We considered only the scenario with the ratio of units = 1:15:13, which is the most representative of the SEER-Medicare registry. We simulated the data with increasing sample sizes of

The large-sample convergence rate of each of six methods for the estimates of two treatment effects,

3.2.2 Simulation 2

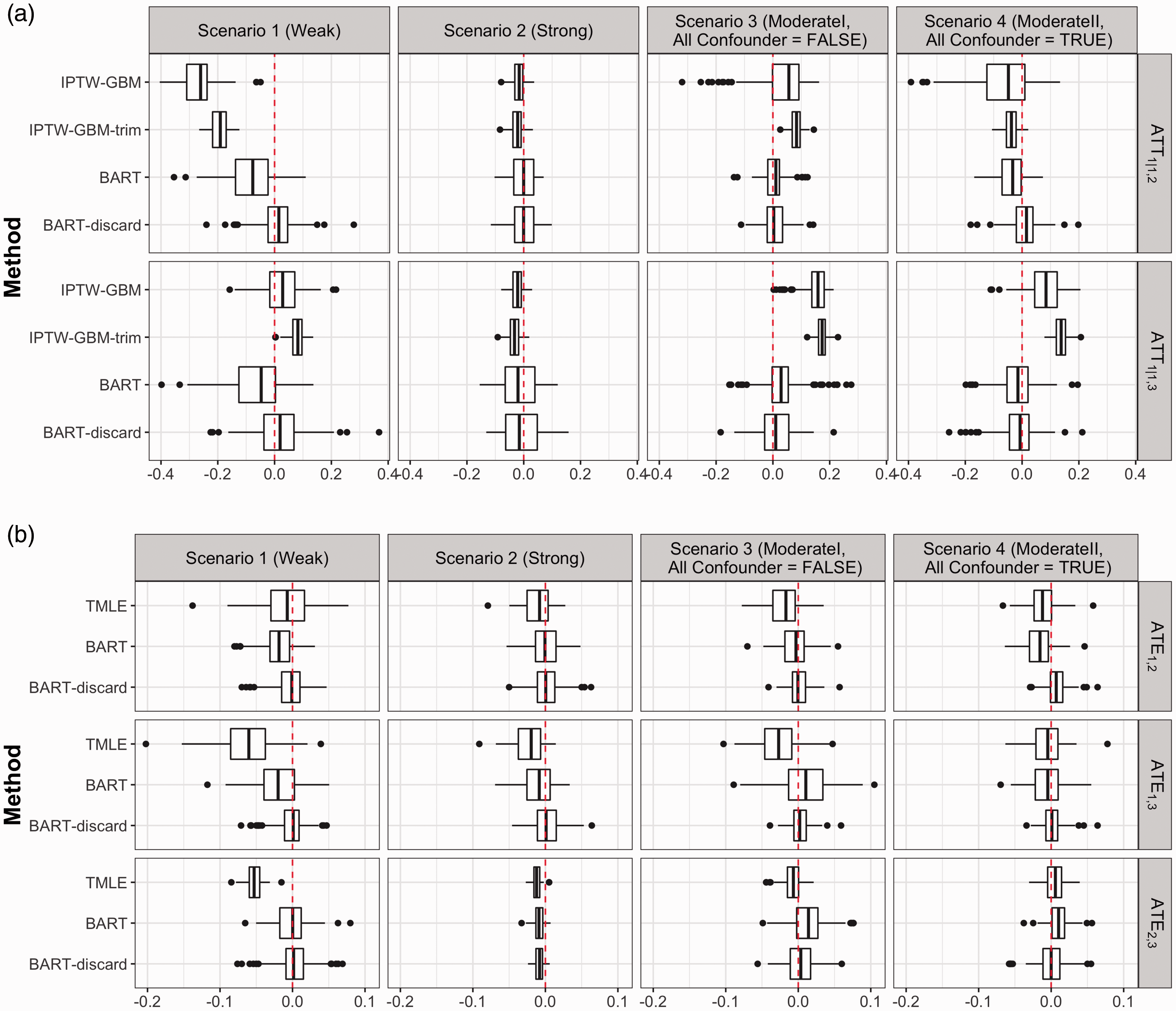

Figure 3 displays boxplots of biases of

Biases among 200 replications under scenarios of differing covariate overlap for IPTW-GBM versus BART and two treatment effects

BART boasted smaller bias under nearly all levels of overlap compared to TMLE and IPTW-GBM. The advantage is more evident when there is more lack of covariate overlap. The larger biases and RMSEs (see Table S2 and Table S3 in Supplemental Materials) in the IPTW-GBM estimates under the

Our BART discarding rule (8) considerably reduced the biases in the estimates of both ATE and ATT effects in the

4 Application to SEER-Medicare data on NSCLC

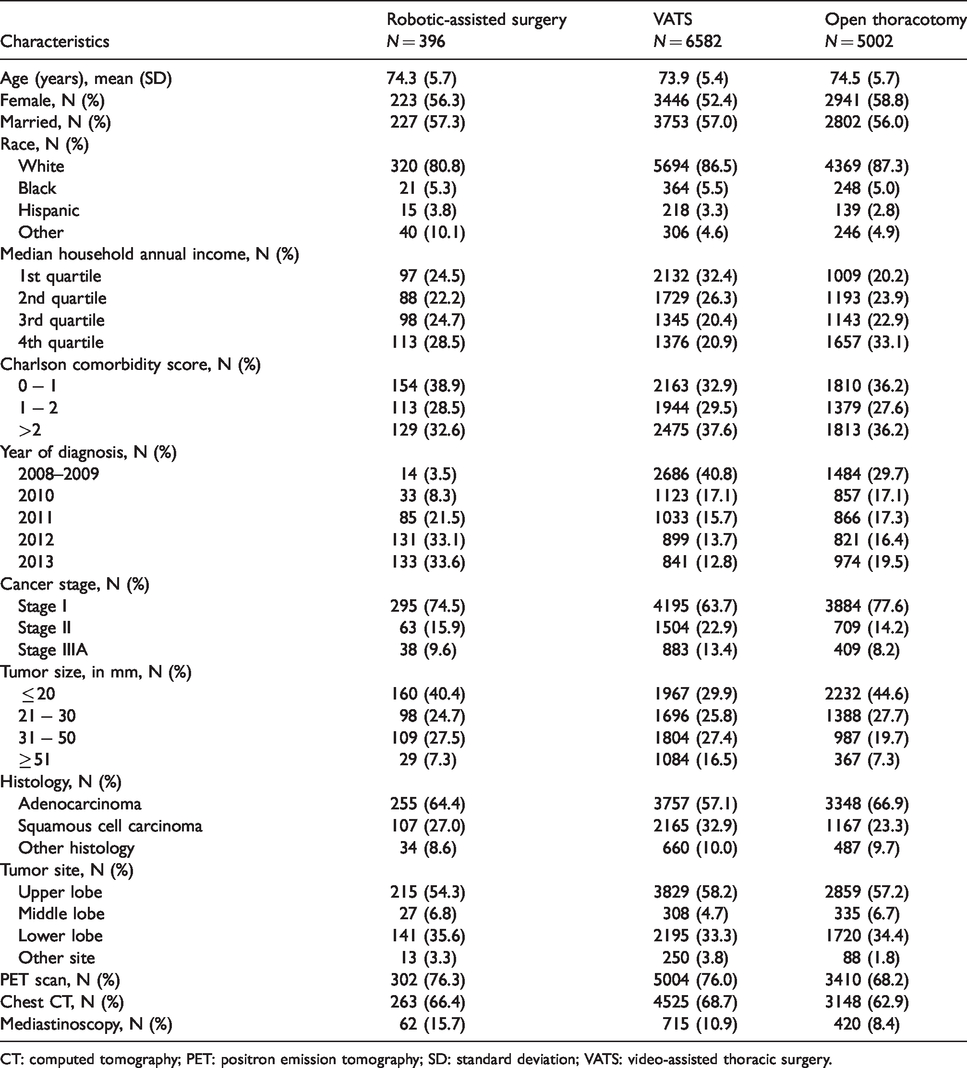

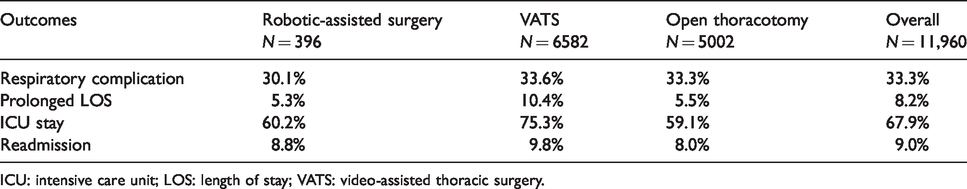

Clinical encounter and Medicare claims data on 11,980 patients with stage I–IIIA NSCLC were drawn from the latest SEER-Medicare database. These patients were above 65 years of age, diagnosed between 2008 (first year patients in the registry underwent robotic-assisted surgery) and 2013, and underwent surgical resection via one of the three approaches, including robotic-assisted surgery, VATS, or open thoracotomy. The dataset contains individual-level information at baseline on the following variables: age, gender, marital status, race, ethnicity, income level, comorbidities, cancer stage, tumor size, tumor site, cancer histology, and whether they underwent positron emission tomography, chest computed tomography, or mediastinoscopy. Table 2 summarizes these variables for each surgical approach. We compared the effectiveness of the three surgical approaches in terms of four outcomes: the presence of respiratory complication within 30 days of surgery or during the hospitalization in which the primary surgical procedure was performed, prolonged length of stay (LOS) (i.e. > 14 days), intensive care unit (ICU) stay following surgery, and readmission within 30 days of surgery. Table 3 presents the outcome rates in the three surgical groups.

Baseline characteristics of patients in three surgical groups in SEER-Medicare data.

CT: computed tomography; PET: positron emission tomography; SD: standard deviation; VATS: video-assisted thoracic surgery.

The outcome rates in three surgical groups: robotic-assisted surgery, VATS, and open thoracotomy.

ICU: intensive care unit; LOS: length of stay; VATS: video-assisted thoracic surgery.

Among the 11,980 patients, 396 (3.3%) received robotic-assisted surgery, 6582 (54.9%) underwent VATS, and 5002 (41.8%) were operated via open thoracotomy. We estimated the causal effects of robotic-assisted surgery versus VATS or open thoracotomy among patients who underwent robotic-assisted surgery (i.e.

Table S4 in Supplemental Materials presents the point and interval estimates of

To highlight the importance of simultaneous comparisons of multiple treatments, we implemented each method using SBCs to show how such inappropriate practices can result in different and confusing estimates of treatment effects. Table S4 also includes the estimates of

We further explored the sensitivity of BART for binary outcomes to the choice of end-node prior, specifically via the hyperparameter

5 Summary and discussion

Our paper makes two primary contributions to the causal inference literature. First, we extend BART to the multiple treatment and binary outcome setting, highlighting that the strengths of BART for binary treatment also manifest with multiple treatments. Second, we propose a common support rule for BART, and find that BART consistently shows superior performance over alternative approaches in various scenarios with differing levels of covariate overlap.

In addition to the primary findings in our simulations corresponding to bias, RMSE, CP, and large-sample convergence property, BART boasts a few additional advantages that make it a unique tool for the multiple treatment setting. As one example, BART is computationally efficient. All simulations were run in R on an iMAC with a 4 GHz Intel Core i7 processor. On a dataset of size

We apply the methods examined to 11,980 stage I–IIIA NSCLC patients, drawn from the latest SEER-Medicare linkage. Results suggest that robotic-assisted surgery may be preferred in terms of prolonged LOS and ICU stay, among those who were operated via the robotic-assisted technology, relative to open thoracotomy or VATS. Different choice of methods, or inappropriate practice such as implementing SBCs for pairwise ATT effects, may lead to different conclusions about the treatment effects, explicating the importance of appropriate methods and practice for causal inference with multiple treatments.

The promising performance of BART in the complex multiple treatment setting will lay groundwork for several future research avenues. First, the flexibility offered by nonparametric modeling of BART can be leveraged to model regression relationships in survival data. Second, individual treatment effects that are easily obtained from BART provide a building block for estimating the heterogeneous treatment effect. Finally, we have made a significant untestable assumption related to unmeasured confounding. Developing sensitivity analyses under this complex multiple treatments setting leveraging BART would also be a worthwhile and important contribution.

Supplemental Material

sj-pdf-1-smm-10.1177_0962280220921909 - Supplemental material for Estimation of causal effects of multiple treatments in observational studies with a binary outcome

Supplemental material, sj-pdf-1-smm-10.1177_0962280220921909 for Estimation of causal effects of multiple treatments in observational studies with a binary outcome by Liangyuan Hu, Chenyang Gu, Michael Lopez, Jiayi Ji and Juan Wisnivesky in Statistical Methods in Medical Research

Footnotes

Acknowledgements

This study used the linked SEER-Medicare database. The interpretation and reporting of these data are the sole responsibility of the authors. The authors acknowledge the efforts of the National Cancer Institute; the Office of Research, Development and Information, CMS; Information Management Services (IMS), Inc.; and the SEER Program tumor registries in the creation of the SEER-Medicare database.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by award ME_2017C3_9041 from the Patient-Centered Outcomes Research Institute and by grant P30CA196521-01 from the National Cancer Institute.

Supplemental material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.