Abstract

Blinded sample size reassessment is a popular means to control the power in clinical trials if no reliable information on nuisance parameters is available in the planning phase. We investigate how sample size reassessment based on blinded interim data affects the properties of point estimates and confidence intervals for parallel group superiority trials comparing the means of a normal endpoint. We evaluate the properties of two standard reassessment rules that are based on the sample size formula of the

1 Introduction

In clinical trials for the comparison of means of normally distributed observations, the sample size to achieve a specific target power depends on the true effect size and variance. For the purpose of sample size planning, the effect size is usually assumed to be equal to a minimally clinically relevant effect while the variance is often estimated from historical data. For situations where only little prior knowledge on the variance is available, clinical trial designs with sample size reassessment based on interim estimates of this nuisance parameter have been proposed. Stein 1 developed a two-stage procedure, where the second stage sample size is calculated based on a first stage variance estimate aiming to achieve a pre-specified target power. In the context of clinical trials, several extensions of Stein's two-stage procedure have been considered,2–8 all of which require unblinding of the interim data for the computation of the variance estimate. However, regulatory agencies generally prefer blinded interim analyses as they entail less potential for bias.9–13
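As a rough illustration of how the planned sample size depends on the assumed effect size and variance, the following Python sketch evaluates the standard normal-approximation formula for a one-sided two-sample comparison of means. The function name, the significance level of 0.025 and the target power of 0.8 are assumptions made for this example and are not taken from the trial designs discussed here.

```python
import math
from statistics import NormalDist


def per_group_sample_size(sigma, delta, alpha=0.025, power=0.8):
    """Per group sample size from the normal-approximation formula
    n = 2 * (z_{1-alpha} + z_{power})^2 * sigma^2 / delta^2,
    rounded up to the next integer.

    alpha and power defaults are illustrative assumptions.
    """
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha)  # quantile for the one-sided level
    z_beta = std_normal.inv_cdf(power)       # quantile for the target power
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / delta ** 2)


if __name__ == "__main__":
    # Same assumed effect, two different planning standard deviations.
    for sigma in (1.0, 2.0):
        print(sigma, per_group_sample_size(sigma=sigma, delta=0.5))
```

Because the required sample size is proportional to the variance, doubling the assumed standard deviation roughly quadruples the per group sample size, which is why an unreliable planning variance can leave a trial substantially under- or overpowered.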

Gould and Shih 14 proposed instead to estimate the variance from the blinded interim data by computing the variance of the total sample (pooling the observations from both groups). Although this interim estimate is not consistent 15 and has a positive bias if the alternative hypothesis holds, the bias is negligible for effect sizes typically observed in clinical trials. 16 Furthermore, sample size reassessment based on the total variance has no relevant impact on the type I error rate in parallel group superiority trials 17 (see also the literature18–21) and achieves the target power well. Similar results on sample size reassessment based on blinded nuisance parameter estimates were obtained for binary data, 22 count data,23–25 longitudinal data, 26 and for fully sequential sample size reassessment. 27 For non-inferiority trials with normal endpoints, a minor inflation of the type I error rate for small sample sizes has been observed.28,29 However, if the sample size reassessment rule is not only based on the primary endpoint but also on blinded secondary or safety endpoint data, the type I error rate for the test of the primary endpoint may be substantially inflated. 30
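The positive bias of the blinded (total) variance estimate can be made explicit for equal group sizes. With n observations per group, true mean difference delta and common variance sigma^2, the expectation of the one-sample variance of the pooled data is sigma^2 + n * delta^2 / (2 * (2n - 1)), which approaches sigma^2 + delta^2 / 4 for large n. The following Python sketch checks this expression by simulation; the function names, the seed and the number of replications are hypothetical choices made for illustration.

```python
import random
from statistics import fmean, variance


def blinded_variance(x_treat, x_ctrl):
    """One-sample variance of the pooled data, ignoring group labels."""
    return variance(list(x_treat) + list(x_ctrl))


def expected_blinded_variance(sigma, delta, n):
    """Expectation of the blinded variance for n observations per group."""
    return sigma ** 2 + n * delta ** 2 / (2 * (2 * n - 1))


def simulate_mean_blinded_variance(sigma, delta, n, reps=20000, seed=1):
    """Average blinded variance over simulated first stages (illustrative)."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(reps):
        treat = [rng.gauss(delta, sigma) for _ in range(n)]
        ctrl = [rng.gauss(0.0, sigma) for _ in range(n)]
        estimates.append(blinded_variance(treat, ctrl))
    return fmean(estimates)


if __name__ == "__main__":
    sigma, delta, n = 1.0, 1.0, 10
    print("simulated:", simulate_mean_blinded_variance(sigma, delta, n))
    print("expected: ", expected_blinded_variance(sigma, delta, n))
```

Under the null hypothesis (delta = 0) the expression reduces to sigma^2, so the blinded estimate is unbiased there; the bias grows with the square of the true mean difference.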

For adaptive clinical trials with sample size reassessment based on the unblinded interim treatment effect estimate, it is well known that unadjusted point estimates of the effect size and confidence intervals may be biased.31,32 In contrast, the properties of point estimates and confidence intervals computed at the end of an adaptive clinical trial with blinded sample size reassessment have received less attention. In this paper, we investigate the bias and standard error of the treatment effect point estimate and compare the absolute mean bias due to blinded sample size reassessment to upper bounds derived for adaptive designs with unblinded sample size reassessment. 33 We also investigate the bias of the final variance estimate under sample size reassessment rules that are based on the blinded interim variance estimate. Previously, this bias had been investigated for a corresponding unblinded reassessment rule (which had no upper sample size bound): sharp bounds for the bias had been derived and an additive bias correction had been suggested. 8 Here, we derive corresponding bounds for the bias of the final variance estimate in the blinded case. Furthermore, we assess the coverage probability of one- and two-sided confidence intervals.

In Section 2, we introduce adaptive designs with blinded sample size reassessment. Theoretical results on the bias of estimates are presented in Section 3. In Section 4, we report a simulation study quantifying the bias and coverage probabilities in a variety of scenarios. The impact of the results is discussed in the context of a case study in Section 5. Section 6 concludes the paper with a discussion and recommendations. Technical proofs are given in Appendix 1.

2 Blinded sample size reassessment

Consider a parallel group comparison of the means

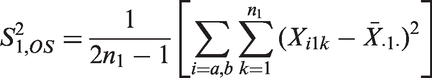

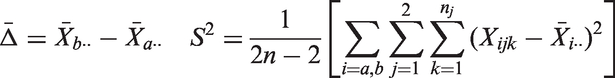

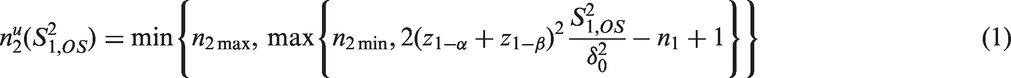

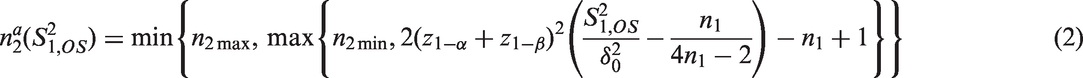

As an example, consider a sample size reassessment rule aiming to control the power under a pre-specified absolute treatment effect. It is derived from the standard sample size formula for the comparison of means of normally distributed observations with a one-sided

3 Theoretical results on the bias of the final mean and variance estimators

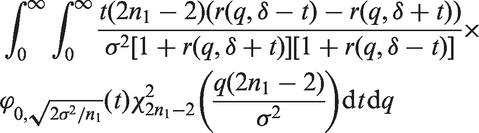

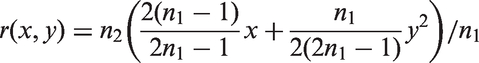

In this section, we consider adaptive two-stage designs with general sample size reassessment rules where the second stage sample size is given as some non-constant function

Theorem 1

Consider an adaptive two-stage design with a general sample size reassessment rule The bias where

Under the null hypothesis

If

If the sample size reassessment rule is increasing and

Note that the sample size functions

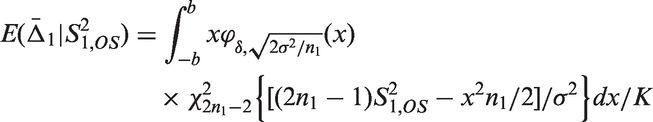

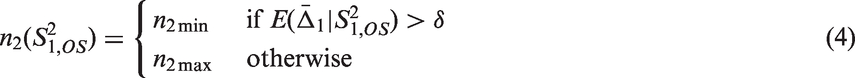

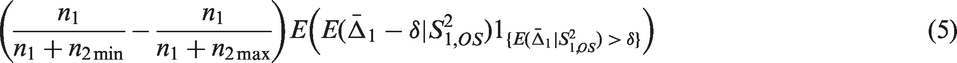

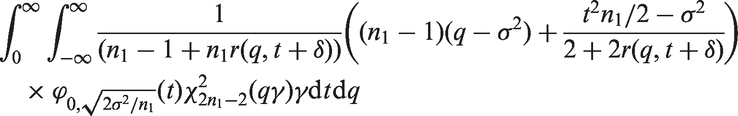

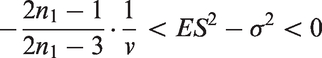

In Theorem 2, we give an upper bound for the bias of the mean in adaptive two-stage designs with a general sample size reassessment rule

Theorem 2

Consider an adaptive two-stage design with a general sample size reassessment rule the expected first stage treatment effect estimate conditional on the variance estimate where the bias of the maximum bias of For

Note that, by symmetry, the

In Theorem 3, we derive the bias of the variance estimator computed at the end of the trial for general sample size reassessment rules

Theorem 3

Consider an adaptive two-stage design with a general sample size reassessment rule the bias of the variance estimator where the bias of if

In Theorem 4, we derive a lower bound for the bias of the variance estimator

Theorem 4

Consider a design with blinded sample size reassessment based on

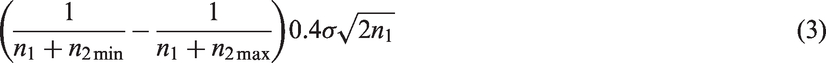

Note that this lower bound has a similar form as the bound derived for the bias in adaptive two-stage designs with sample size reassessment based on the unblinded variance estimate.8 In the latter setting, the bound is given by

4 Simulation study of the properties of point estimates and confidence intervals

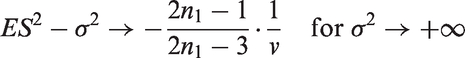

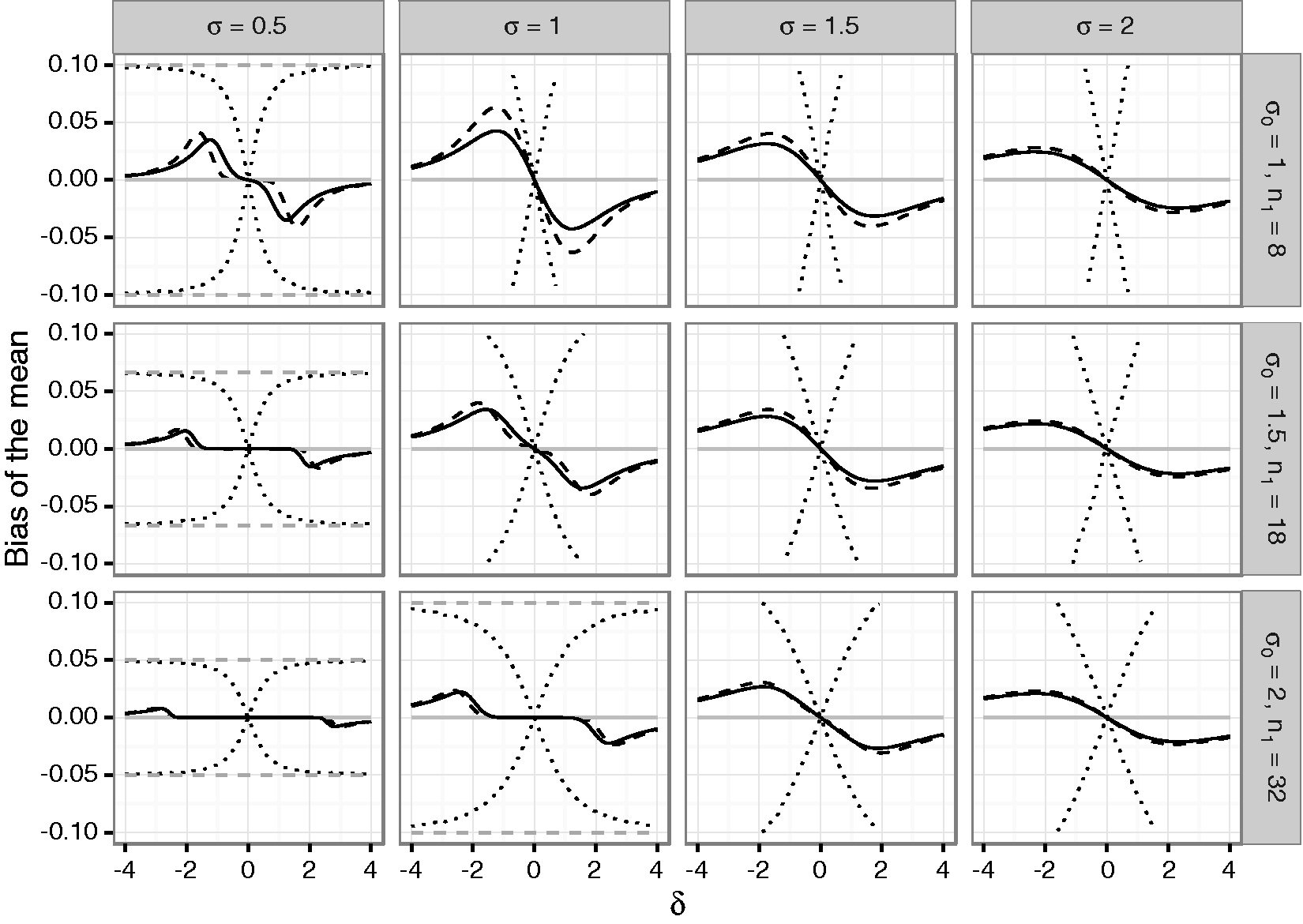

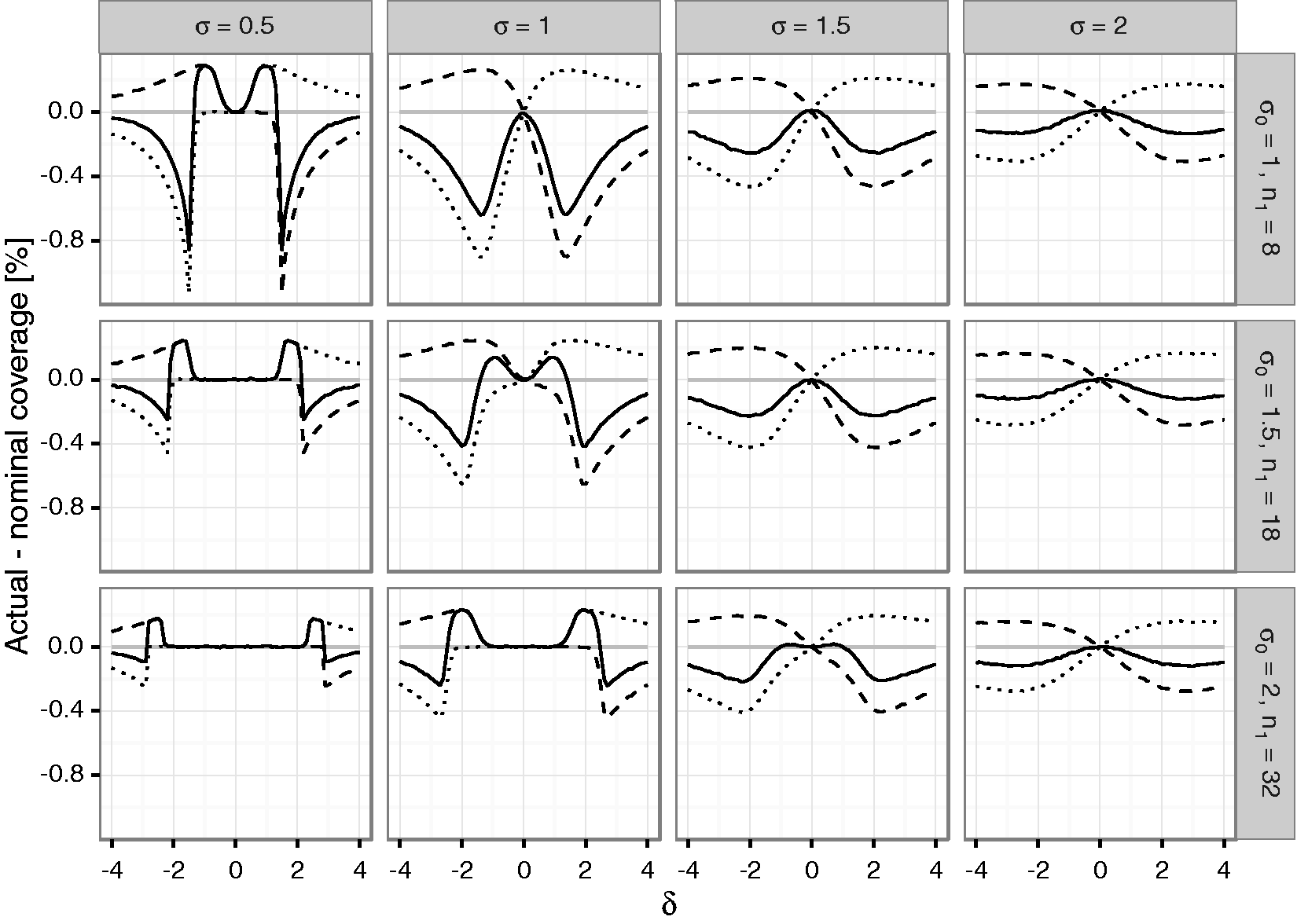

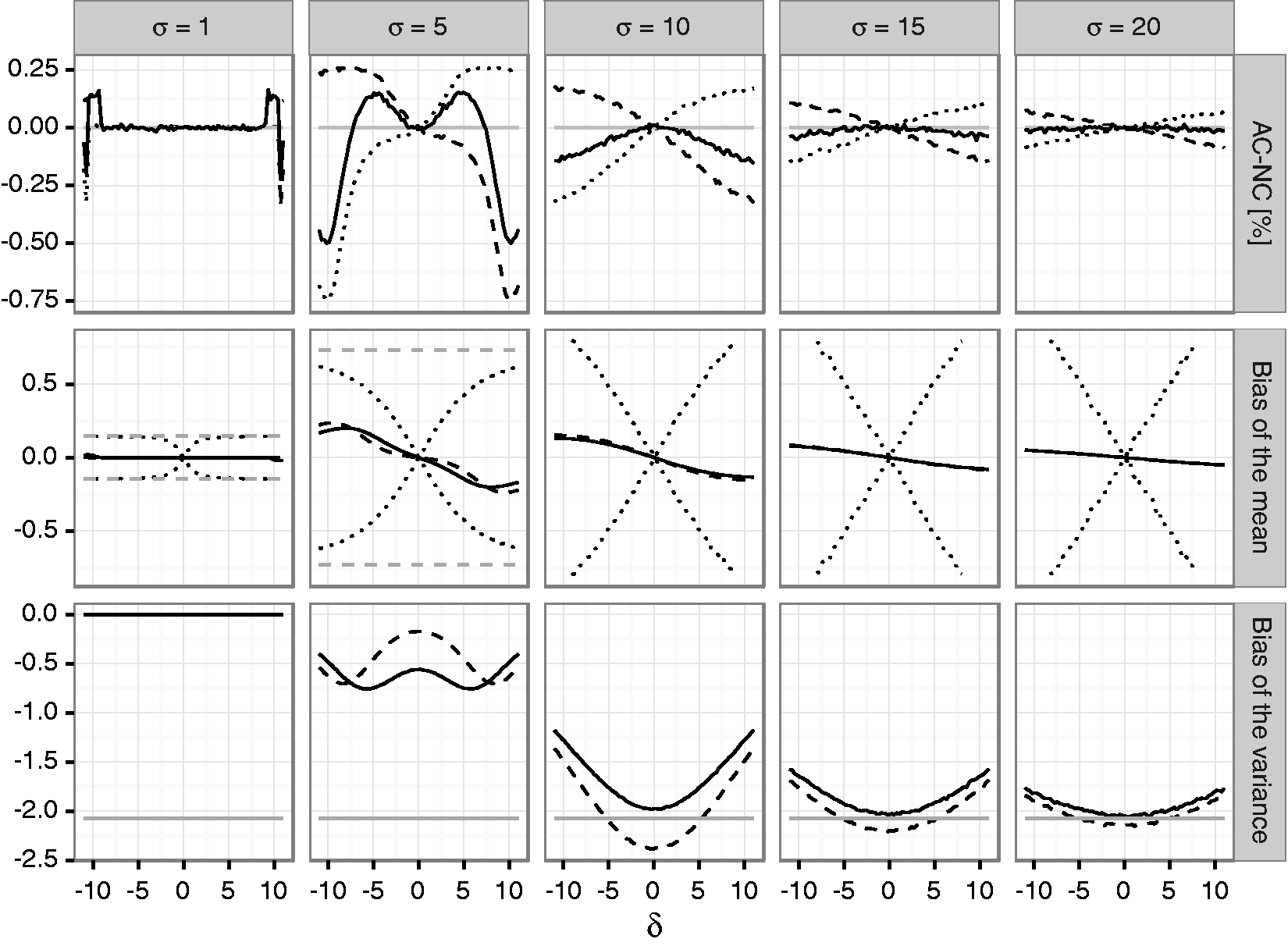

To quantify the bias of mean and variance estimates as well as the actual coverage of corresponding one- and two-sided confidence intervals in adaptive two-stage designs with blinded sample size reassessment, a simulation study was performed. We assumed that a trial is planned for an effect size

Figure 1. Bias of the mean under blinded sample size reassessment using the unadjusted (solid line) and adjusted (dashed line) interim variance estimate. The dotted lines show the maximum negative and positive bias that can be attained under any blinded sample size reassessment rule according to Theorem 2. The dashed gray line shows the maximum bias that can be attained under any (unblinded) sample size reassessment rule. The treatment effect used for planning is set to

Figure 2. Bias of the variance under blinded sample size reassessment using the unadjusted (solid line) and adjusted (dashed line) interim variance estimate. The gray line gives the lower bound from Theorem 4 for the bias under sample size reassessment based on the unadjusted variance estimate. The treatment effect used for planning is set to

For both sample size reassessment rules, the bias of the mean is zero under the null hypothesis and otherwise has the opposite sign to the true effect size (in accordance with Theorem 1). For very large positive and negative effect sizes, the bias is close to zero. This is because a large effect size results in a large positive bias of the blinded interim variance estimates, which in turn results in very large second stage sample sizes. As a consequence, the overall estimates are essentially equal to the (unbiased) second stage estimates, and the bias becomes negligible. In the considered scenarios, the absolute bias decreases with the first stage sample size. This also holds for the upper bound of the bias for general sample size reassessment rules. However, while the bias for the adjusted and unadjusted reassessment rules is small for larger sample sizes, the upper bound for general sample size reassessment rules still exceeds 10% in the considered scenarios even in the case where

The variance estimate is negatively biased for both considered sample size reassessment rules. For increasing variances, the absolute bias under the null hypothesis

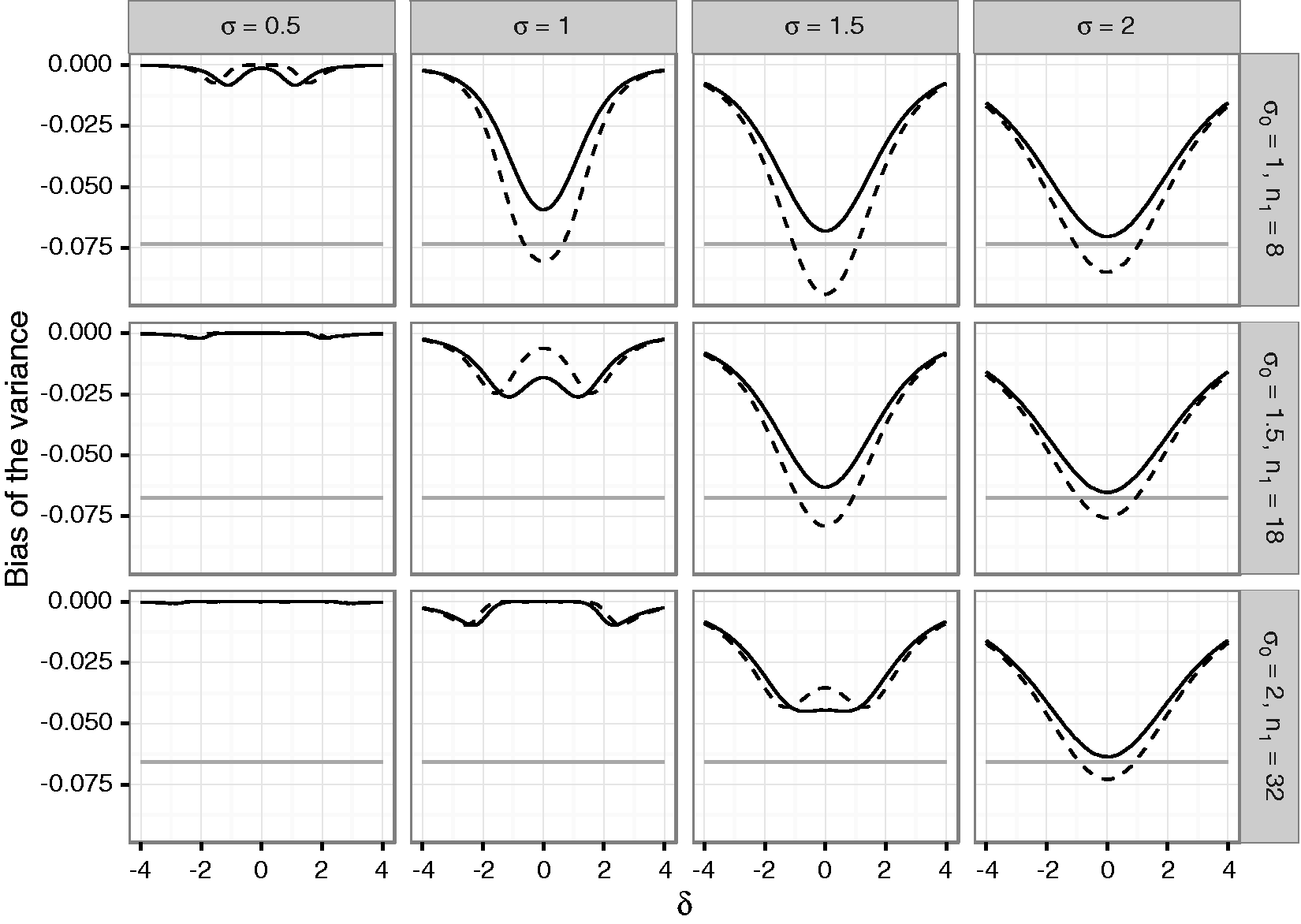

The difference of the coverage probabilities of the confidence intervals to the nominal coverage probabilities (i.e., 0.975 for the one-sided, 0.95 for the two-sided confidence interval) is shown in Figure 3 for the unadjusted sample size reassessment rule (see Figure 1 of the Supplementary Material for corresponding results for the adjusted sample size reassessment rule). For positive

Figure 3. Difference between actual and nominal coverage probabilities in percentage points under blinded sample size reassessment using the

The two-sided coverage probability (which is given by one minus the sum of the non-coverage probabilities of the lower and upper bounds) is not controlled over a large range of

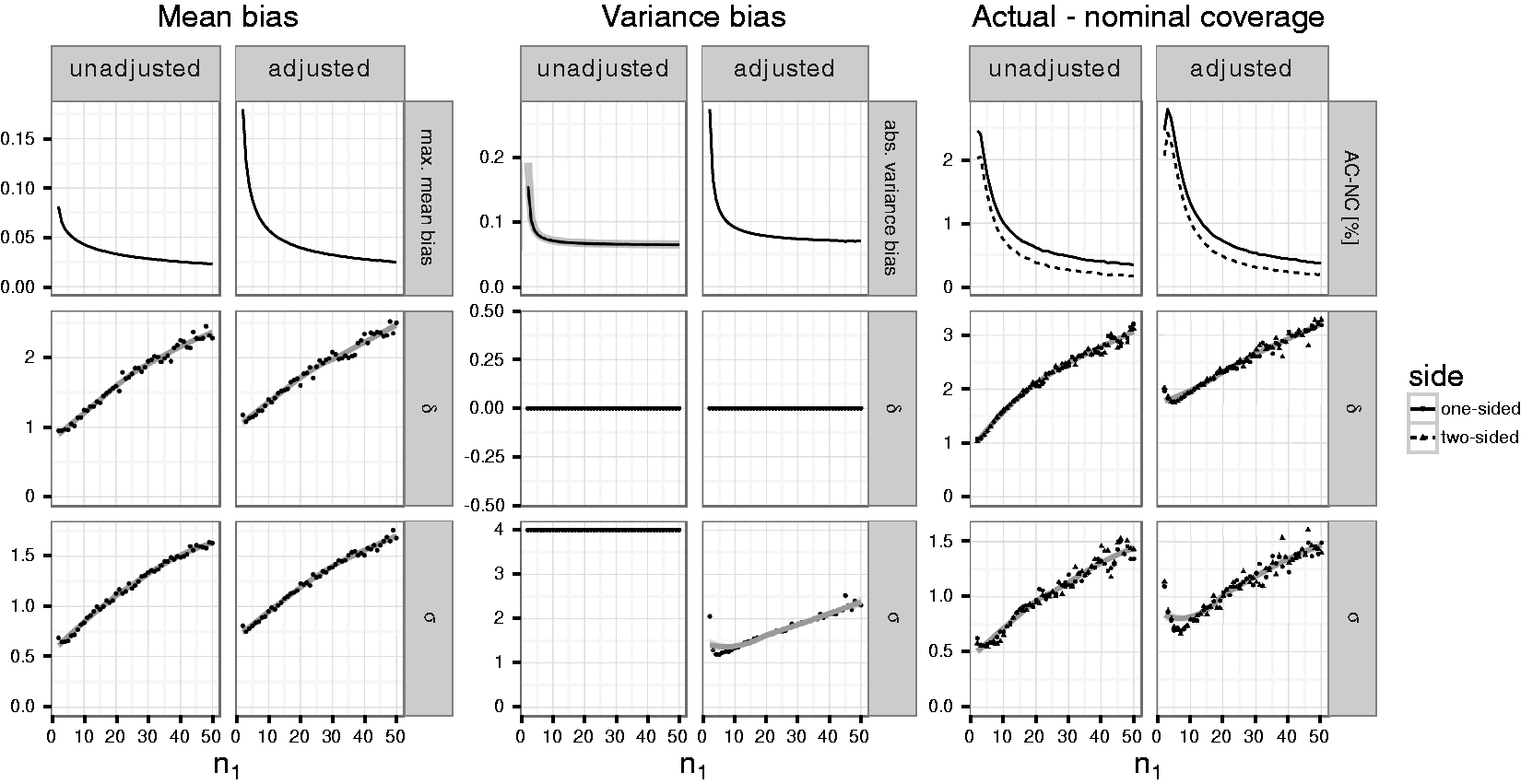

While Figures 1 to 3 demonstrate the dependence of the bias and coverage probabilities on the true mean and standard deviation, in an actual trial these parameters are typically unknown. Therefore, we computed the maximum absolute bias of the mean, variance and the maximum difference between nominal and actual coverage probabilities for fixed first stage sample sizes over a range of values for the true mean and variance (see Figure 4). The optimization was performed in several steps based on simulations across an increasingly finer grid of true mean differences

Figure 4. Maximum absolute mean and variance bias as well as maximum negative difference between actual and nominal coverage probabilities (in percentage points) for given per group first stage sample sizes

Overall, the maximum bias of the mean and variance estimates as well as the maximum difference between nominal and actual coverage probabilities using the adjusted sample size reassessment rule exceed those of the unadjusted rule. The maximum absolute bias of the mean (Columns 1 and 2 of Figure 4) drops from 0.18 (0.08) for trials with a per group first stage sample size of

For the unadjusted rule, the simulations suggest that the absolute variance bias is maximized for

The maximum negative difference between actual and nominal coverage probabilities (“AC-NC,” in Columns 5 and 6 of Figure 4) of the one-sided confidence intervals ranges from about 2.8 (2.4) to 0.4 (0.3) percentage points if the adjusted (unadjusted) variance estimate is used to reassess the sample size. For the two-sided confidence intervals, differences between actual and nominal coverage are slightly lower ranging from 2.4 (2) to 0.2 (0.2) percentage points, respectively. For increasing first stage sample sizes, the maximum is attained for increasing values of both

In Section 4 of the Supplementary Material, the corresponding results are given for restricted sample size rules that limit the maximum second stage sample size to twice the preplanned second stage sample size. We observe that for scenarios where

5 Case study

We illustrate the estimation of treatment effects with a randomized placebo controlled trial to demonstrate the efficacy of the kava-kava special extract “WS® 1490” for the treatment of anxiety35 that was used to illustrate procedures for blinded sample size adjustment.17

The primary endpoint of the study was the change in the Hamilton Anxiety Scale (HAMA) between baseline and end of treatment. Assuming a mean difference between treatment and control of

As in the previous case study,17 we assume that sample size reassessment based on blinded data is performed after 15 patients per group, that the sample size reassessment rules were applied with

To estimate the bias of the mean and variance estimate as well as the coverage probabilities of confidence intervals, we performed a simulation study for true effect sizes

Figure. Coverage probabilities and bias of mean and variance estimates for the case study. The first row of panels shows actual-nominal coverage probabilities (AC-NC) for the 97.5% upper (dashed line), lower (dotted line) and the 95% two-sided confidence intervals, for the unadjusted reassessment rule

5.1 Unadjusted sample size rule

For fixed

5.2 Adjusted sample size rule

If the sample size reassessment is based on the adjusted variance estimate, the absolute bias of the variance and mean estimate will be even larger taking values up to 2.49 for the variance and up to 0.25 for the mean, respectively. The inflation of the non-coverage probabilities goes up to 0.9 percentage points for the one-sided confidence intervals and 0.6 percentage points for the two-sided intervals.

6 Discussion

We investigated the properties of point estimates and confidence intervals in adaptive two-stage clinical trials where the sample size is reassessed based on blinded interim variance estimates. Such adaptive designs are in accordance with current regulatory guidance that proposes blinded sample size reassessment procedures based on aggregated interim data.9–11 We showed that such blinded sample size reassessment may lead to biased effect size estimates, biased variance estimates and may have an impact on the coverage probability of confidence intervals. The extent of the biases depends on the specific sample size reassessment rule, the first stage sample size, the true effect size and the variance.
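The type of simulation underlying these findings can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the simulation code used for the results reported here: the reassessment rule is taken to be the normal-approximation sample size formula evaluated at the blinded first stage variance, the second stage comprises at least n2_min patients per group, and the final estimates are the unadjusted mean difference and the pooled variance over both stages. The function names, the default significance level and power, n2_min, the seed and the number of replications are all hypothetical choices.

```python
import math
import random
from statistics import NormalDist, fmean, variance


def second_stage_size(s2_blinded, n1, delta0, alpha=0.025, power=0.8, n2_min=1):
    """Per group second stage size from the blinded variance (assumed rule)."""
    std_normal = NormalDist()
    v = 2 * (std_normal.inv_cdf(1 - alpha) + std_normal.inv_cdf(power)) ** 2
    n_total = math.ceil(v * s2_blinded / delta0 ** 2)  # reassessed total per group
    return max(n2_min, n_total - n1)


def simulate_bias(delta, sigma, n1, delta0, reps=5000, seed=1):
    """Return (bias of mean difference, bias of pooled variance estimate)."""
    rng = random.Random(seed)
    mean_estimates, var_estimates = [], []
    for _ in range(reps):
        # First stage: n1 observations per group.
        treat = [rng.gauss(delta, sigma) for _ in range(n1)]
        ctrl = [rng.gauss(0.0, sigma) for _ in range(n1)]
        # Blinded interim look: total variance ignoring group labels.
        n2 = second_stage_size(variance(treat + ctrl), n1, delta0)
        # Second stage: n2 further observations per group.
        treat += [rng.gauss(delta, sigma) for _ in range(n2)]
        ctrl += [rng.gauss(0.0, sigma) for _ in range(n2)]
        # Final unadjusted estimates over both stages (equal group sizes).
        mean_estimates.append(fmean(treat) - fmean(ctrl))
        var_estimates.append((variance(treat) + variance(ctrl)) / 2)
    return fmean(mean_estimates) - delta, fmean(var_estimates) - sigma ** 2


if __name__ == "__main__":
    print(simulate_bias(delta=0.0, sigma=1.0, n1=5, delta0=1.0))
```

Under the null hypothesis, the simulated bias of the mean should be close to zero up to Monte Carlo error, while the simulated bias of the variance estimate should be negative for small first stage sample sizes.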

We showed that for the unadjusted and the adjusted sample size reassessment rules that aim to control the power at the pre-specified level, the bias of confidence intervals may be large for very small first stage sample sizes but is small otherwise. Under the null hypothesis, even for first stage sample sizes as low as 16, the confidence intervals do not exhibit a relevant inflation of the non-coverage probability. For positive effect sizes, inflations (even though minor) are observed also for somewhat larger sample sizes. This corresponds to previous findings that the type I error rate of superiority as well as non-inferiority tests is hardly affected by blinded sample size reassessment.17,28 In addition, we show that for positive treatment effects the lower confidence bound (which is often the most relevant because it gives a lower bound of the treatment effect) is strictly conservative in all considered simulation scenarios. The upper bound, in contrast, shows an inflation of the non-coverage probability, which, however, is of relevant size only if sample sizes are small.

An approach to obtain conservative confidence intervals even for trials with small first stage sample sizes is to apply methods proposed for flexible designs with unblinded interim analyses32,33,36–39 which are based on combination tests or the conditional error rate principle. Such approaches allow the construction of confidence intervals that control the coverage probabilities. However, they are based on test statistics that do not equally weight outcomes from patients recruited in the first and second stage. A hybrid approach that strictly controls the coverage probability could be based on confidence intervals that are the union of the fixed sample confidence interval and the confidence interval obtained from the flexible design methodology. Similar approaches have been proposed for hypothesis testing in adaptive designs.40,41

We demonstrated that the treatment effect estimates are negatively (positively) biased under the adjusted and unadjusted sample size reassessment rules for all positive (negative) effect sizes. The bias decreases with the first stage sample size, but for worst case parameter constellations it remains noticeable also for larger sample sizes. In addition, for the worst case sample size reassessment rule based on blinded variance estimates, the bias may be substantial even for large first stage sample sizes: as the effect size increases, the maximum bias of the blinded sample size reassessment rule approaches the bias of unblinded sample size reassessment. As a consequence, to maintain the integrity of confirmatory clinical trials with blinded sample size reassessment, binding sample size adaptation rules must be pre-specified, and it is important to verify on a case-by-case basis that the reassessment rules used do not have a substantial impact on the properties of estimators.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Martin Posch and Franz Koenig were supported by Austrian Science Fund Grant P23167. Florian Klinglmueller was supported by Austrian Science Fund Grants Number P23167 and J3721.

Supplementary material

Supplementary figures and additional details on the simulation study are presented in an additional document, which is distributed with the paper as Supplementary Material.


References
